A central venous catheter is, in many ways, an amazing medical innovation, but it took insights from the social sciences, not the medical sciences, to make it safe to use. The catheter, known informally as a central line, is a narrow tube inserted into a large vein, which allows doctors to deliver drugs and fluids rapidly to a patient in the intensive care unit, where every minute counts. But central lines can get infected, and when that happens, they can be conduits for bacteria or fungi to go straight into the bloodstream, causing sepsis, organ failure, and even death.

Physicians have long considered lethal infections to be an inevitable risk of central lines. But these are not small risks. According to surgeon and author Atul Gawande, about 4 percent of central venous catheters become infected every year, involving about 80,000 patients. These infections, he writes, are expensive to treat and are fatal up to 28 percent of the time, “depending on how sick one is at the start.” If an innovation could be found to lower those rates, the impact on both quality of life and health care costs would be huge. It would also represent a significant step in medical progress—and, just possibly, in non-medical settings as well.

Peter Pronovost, a critical-care specialist at the Johns Hopkins University School of Medicine in Baltimore, thought of central line infections not as an inevitable risk but as a problem to be solved. In the early 2000s it occurred to him that if just a few steps were followed every time a central line was inserted, the prevailing narrative about central line infections could be changed from “these infections are inevitable” to “they can and must be prevented.”

Changing the prevailing narrative, though, would mean sparking a cultural shift in the ICU. And to do that, Pronovost needed to understand how major cultural shifts happen in the first place. This was a puzzle that could only be pieced together with input from social scientists—representing sociology, organizational psychology, and group dynamics—whose insights could explain the relevant aspects of human behavior.

In his book, The Checklist Manifesto: How to Get Things Right, Gawande drew an analogy to another high-stakes situation: the introduction of the massive Boeing B-17 bomber shortly before World War II, a machine so complex it was nearly shelved as “too much airplane for one man to fly.” It was indeed a lot of airplane; flying it involved so many steps and maneuvers that it was easy for pilots to forget something important, with possibly devastating consequences. But after test pilots developed a simple checklist to itemize each step needed for taxiing, takeoff, flight, and landing, the prevailing narrative about the B-17 changed, and it came to be viewed as practically crash-proof.

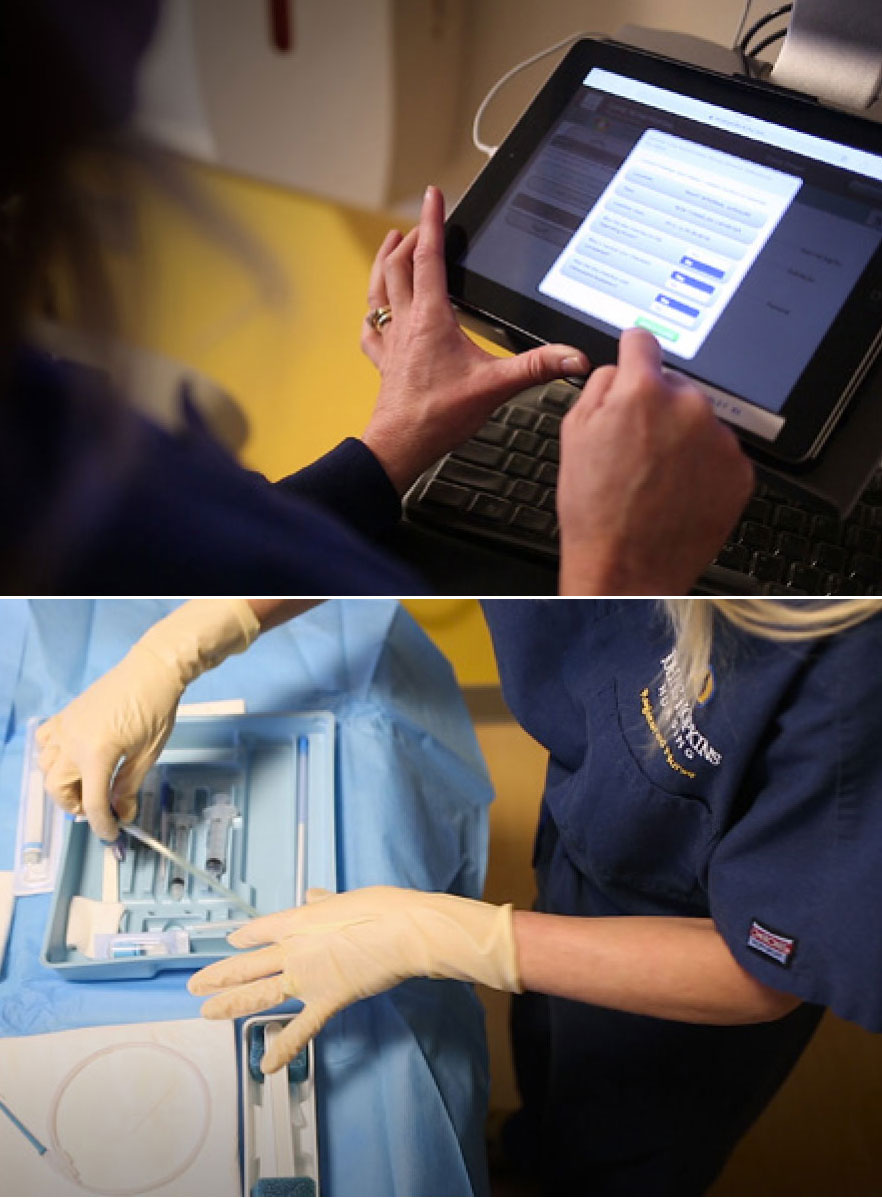

Could something similar work for central lines? Pronovost thought it might. He worked up a checklist of five safety routines to be run through every time a catheter was inserted: wash your hands with soap; clean the patient’s skin with a specific antiseptic; put sterile drapes over the entire patient (not just the immediate area around the catheter); wear sterile gear (mask, hat, gown, gloves); and put a sterile dressing over the catheter site after the line is inserted.

It might have seemed almost redundant to make a checklist of these steps, which providers already knew were an essential part of high-quality medical care. But knowing something was important and actually doing it turned out to be two different things. When Pronovost asked the ICU nurses at Hopkins to observe central line insertion carefully, it turned out that in one case out of three, the doctor skipped at least one of these essential steps.
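For readers who think in code, the nurses' observation exercise can be pictured as a simple audit: compare what was observed at each insertion against the five steps, then count how often anything was missed. The sketch below is only an illustration. The step wording is paraphrased from the list above, and the names `CENTRAL_LINE_STEPS`, `missing_steps`, and `skip_rate`, along with the sample observations, are hypothetical and not part of the Hopkins program.

```python
# Five steps paraphrased from the article; the identifiers are hypothetical.
CENTRAL_LINE_STEPS = (
    "wash hands with soap",
    "clean the patient's skin with antiseptic",
    "cover the entire patient with sterile drapes",
    "wear sterile mask, hat, gown, and gloves",
    "apply a sterile dressing over the catheter site",
)


def missing_steps(observed):
    """Return the checklist steps that were not observed, in checklist order."""
    seen = set(observed)
    return [step for step in CENTRAL_LINE_STEPS if step not in seen]


def skip_rate(observations):
    """Return the fraction of insertions in which at least one step was skipped."""
    if not observations:
        raise ValueError("need at least one observed insertion")
    skipped = sum(1 for observed in observations if missing_steps(observed))
    return skipped / len(observations)


if __name__ == "__main__":
    # Made-up observations for illustration only: two complete insertions
    # and one in which the final step (the sterile dressing) was not seen.
    observations = [
        list(CENTRAL_LINE_STEPS),
        list(CENTRAL_LINE_STEPS),
        list(CENTRAL_LINE_STEPS[:4]),
    ]
    for number, observed in enumerate(observations, start=1):
        print(number, missing_steps(observed))
    print(skip_rate(observations))
```

The audit treats an insertion as compliant only when every step was seen, which mirrors how the article counts a case as a miss when the doctor skipped at least one step.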

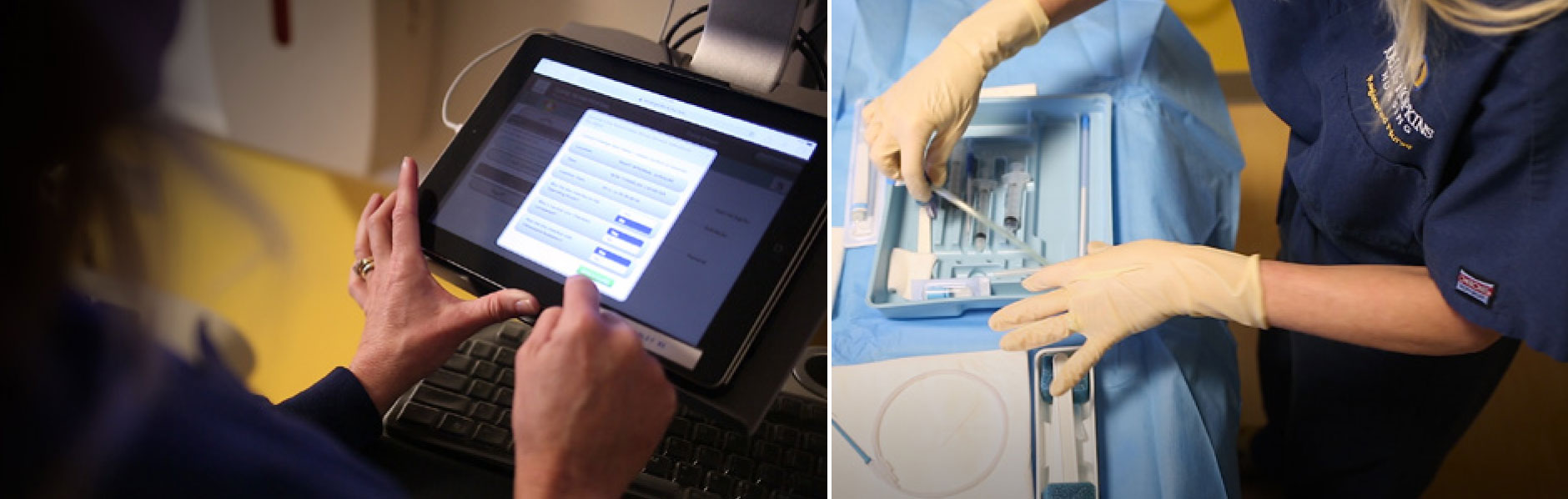

The challenge, then, was to get doctors to take the simple precautions they already knew they should be taking. To Pronovost, an innovation like the checklist was important, but he knew that whether it led to progress in the ICU depended on how it was introduced and implemented. He gave the ICU nurses instructions to run through the checklist before each central line insertion and to insist doctors follow each step. Next—and this part was crucial—he got hospital administrators to back up the nurses if the doctors balked at this disruption of the traditional medical hierarchy. He also employed some basic time management strategies, making sure that all the supplies needed for safe central line insertion—the full-body drapes, the sterile gowns and masks, the chlorhexidine soap—were assembled in one place in a central venous catheter kit.

One year later—checklists distributed, kits in place, hospital administration offering back-up support—the central line infection rate at Hopkins dropped from 11 percent to zero. It was almost too good to be true. So Pronovost continued the experiment for another 15 months, just to be sure. The results held.

In a way, the checklist seemed “like Harry Potter’s wand,” said Pronovost: you just wave it at a problem and something you thought was inevitable seems to disappear. But it wasn’t magic, and it was important to figure out what was really happening so the innovation could be replicated elsewhere. When Pronovost and his team were asked to create a checklist program for the state of Michigan in 2003, they knew this could ultimately help them find out just how the checklist worked. They were pretty confident it would turn out that more was involved than just handing out a list with boxes to tick off.

The Michigan experiment, which involved 103 ICUs in 67 hospitals, led to improved outcomes as dramatic as those seen at Hopkins: After 18 months, central line infection rates dropped 66 percent state-wide, $175 million in health care costs were slashed, and an estimated 1,500 lives were saved.

Were these changes all because of the checklist? Not exactly. The changes were real, but they were probably linked to something more than the checklist itself. Figuring out what explained the power of this medical innovation was the next part of this puzzle to solve.

Social scientists were in a unique position to piece it together, explaining how and why the intervention worked and providing insights “that may have been obscure to its developers and implementers,” as Pronovost and University of Leicester medical sociologist Mary Dixon-Woods wrote in 2011. Social scientists can “help hunches mature into theories and can challenge assumptions.”

When Dixon-Woods and other social scientists took a serious look at the Michigan checklist experience, they saw that what really made the difference in infection rates was not just ticking off items on a checklist, but changing the culture. One of the ways this happened was through “isomorphism,” the tendency of organizations in the same field to resemble one another. Pronovost used more specific language for it—“creating clinical communities and influencing peer norms”—and he said it happened in Michigan by gathering together ICU staff from different hospitals and encouraging them to share what they were doing and what seemed to work.

As a tool, then, the checklist was important, but cultural changes were even more so. Just how important? That’s the latest puzzle piece now moving into place. Recently, Pronovost collaborated with Hopkins colleagues to evaluate the Hopkins checklist program. They found that nearly 60 percent of the program’s impact could be ascribed to a change in the “safety culture” in the ICU: that is, a change in attitude toward the inevitability of central line infections and other hazards of high-tech medicine, and toward the importance of adhering to checklists in the first place.

The single most important indicator of this change? Widespread adoption and acceptance of the policy that error reporting is rewarded in the ICU.

Like other innovations in the field of “quality improvement,” Pronovost points out, checklist programs have to address both technical factors—the details of medical and surgical care—and adaptive ones, which go beyond simple adoption of techniques and address broader questions such as context setting, human psychology, and sociological issues. A common mistake “is to treat an adaptive problem as a technical one.” But in truth, it took both aspects of puzzle solving—the technical (medical science) and the adaptive (social science)—to make checklists work. “The science must be robust,” he wrote, “yet it must address values, beliefs, and attributes of the group involved.”

There is some urgency to figuring out this puzzle because of the recent groundswell of interest in applying the checklist tool more broadly—in other hospitals, for other hazards, and in some cases in completely different social and cultural contexts, such as childbirth centers throughout Africa. The World Health Organization is designing its own checklists and is seeking to use them in a variety of settings. But scaling things up without really understanding their mechanisms—even something as innocuous as a checklist—can be a tricky proposition.

The success of checklists in a variety of international settings has been mixed. In 2015, for instance, a team of social scientists from Imperial College, London, reported on how checklists have been received in Great Britain, where the National Health Service now requires them for various intensive care and surgical procedures. After conducting phone interviews with 119 operating room workers at 10 NHS hospitals, they found the surgical safety checklist was often rushed through, or completed only partially, with key players sometimes inattentive, annoyed, or often (around half the time) not even physically present while the items were being checked off. And the biggest reason for its lack of acceptance was a social one: hospital workers saw it as just another piece of government-mandated paperwork.

This was an adaptive problem, then, not a technical one. When the checklist was “introduced without any program or support,” one of the Imperial College, London, investigators, psychologist Stephanie Russ, told Nature, “it was just impossible, I think, for teams to buy into it.”

In the United States, realizing the importance of cultural and social factors has led to progress in the hospitals where checklists are used. The checklist idea has spread slowly, state by state, with each hospital sending in a team of ICU personnel to draw up a checklist of its own, based on its own needs. Each list must include the original five items from the first Hopkins checklist, but the customization—changing the order of the questions, say, or adding an item such as double-checking the patient’s name bracelet—has been crucial in getting each hospital to embrace the concept. The lists are probably about 95 percent the same from one hospital to another, according to Pronovost. But the 5 percent of each checklist that is unique is the “special sauce,” the final element that makes each hospital think its checklist is the best one yet.

This article was written by Robin Marantz Henig for From Research to Reward, a series produced by the National Academy of Sciences. This and other articles in the series can be found at www.nasonline.org/r2r. The Academy, located in Washington, DC, is a society of distinguished scholars dedicated to the use of science and technology for the public welfare. For more than 150 years, it has provided independent, objective scientific advice to the nation.

Please direct comments or questions about this series to Cortney Sloan at csloan@nas.edu.

Photo and Illustration Credits:

B-17 pilots: Military Images/Alamy Stock Photo • Data terminal: Johns Hopkins Medicine • Central venous catheter kit: Johns Hopkins Medicine • Peter Pronovost: Johns Hopkins Medicine • Labor Ward staff: WHO-Nigeria/R. Ogu • Background Photos: • Hand and clipboard: shutterstock/Aaron Amat • Air Force pilot: ClassicStock/Alamy

© by the National Academy of Sciences. These materials may be reposted and reproduced solely for non-commercial educational use without the written permission of the National Academy of Sciences.
