2

The Scientific Merit of the Science to Achieve Results Program

The committee assessed the scientific merit of the Science to Achieve Results (STAR) program by evaluating whether the program had appropriate procedures in place to produce high-quality research. As described in the section on the committee’s approach in Chapter 1, it conducted its evaluation by comparing the STAR program’s procedures with procedures from selected other extramural research programs. The committee also read the requests for applications (RFAs) put out by the STAR program and it looked at the Environmental Protection Agency (EPA) grantee-project results database.

A number of public and private organizations support research on human health and the environment. The committee obtained relevant information about other extramural research programs through a combination of methods, including review of procedures posted on research-program Web sites, study of presentations provided to the committee, and communication via e-mail with program administrators. Table 2-1 shows the research programs selected for comparison with STAR, including brief descriptions of the research fields covered and the budgets. The committee chose the programs listed in Table 2-1 because they support research on topics somewhat similar to those supported by STAR. The committee also wanted to include a few grants programs administered by federal agencies with regulatory authority, because grants are for the benefit of the nation and not the sponsoring agency (Federal Grants and Cooperative Agreement Act of 1977). The programs vary in size, scope, and purpose. The California Air Resources Board (ARB) program was created to provide science-informed air-pollution policies and regulations. The Health Effects Institute (HEI) is an independent research organization that was created to provide high-quality, impartial, and relevant science on the health effects of air pollution; it is funded through grants from EPA and through funding from the automobile industry. Two other programs, the National Oceanic and Atmospheric Administration (NOAA) National Centers for Coastal Ocean Science (NCCOS) and the US Department of Agriculture (USDA) National Institute of Food and Agriculture (NIFA), are administered by federal agencies to support science related to their missions, and two, the National Science Foundation (NSF) Division of Earth Sciences and the National Institute of Environmental Health Sciences (NIEHS) Division of Extramural Research and Training (DERT), have a principal mission of supporting basic research and the progress of science itself.

TABLE 2-1 US Extramural Research Programs Selected for Comparison with STAR

| Research Program | Program Description | Approximate 2016 Annual Budget | Parent Agency or Department Has Regulatory Authority |
|---|---|---|---|
| EPA STAR | The underlying scientific and engineering knowledge needed to address environmental and human health issues and improve decision-making, problem detection, and problem-solving. | $39 million | Yes |
| California Air Resources Board | Research to support regulations related to air quality and climate change | $4-8 million | Yes |
| Health Effects Institute | Health effects of air pollution | Total: $10-12 million; $5 million from EPA | No |
| National Institute of Environmental Health Sciences Division of Extramural Research and Training | Basic and translational research to understand how the environment influences human health and disease | About $400 million, including Superfund Research Program | No |
| National Oceanic and Atmospheric Administration National Centers for Coastal Ocean Science | Research on coastal science | $9 million | Yes |
| National Science Foundation Division of Earth Sciences | Research geared toward improving understanding of the structure, composition, and evolution of Earth, the life that it supports, and the processes that govern the formation and behavior of its materials (NSF 2017) | $181 million | No |
| US Department of Agriculture National Institute of Food and Agriculture | Research to support investment in and advancement of agricultural research, education, and extension to address societal challenges | $1.5 billion | Yes |

PRIORITY-SETTING

Priorities for STAR are set through 4-year strategic research action plans (StRAPs) for the national programs: Air, Climate, and Energy; Chemical Safety for Sustainability; Safe and Sustainable Water Resources; and Sustainable and Healthy Communities. EPA develops the StRAPs through a comprehensive 2-year planning process. The StRAPs are reviewed before implementation and again in the first year of implementation by two external scientific bodies: the Science Advisory Board, which comments on EPA’s strategic directions, and the Board of Scientific Counselors (BOSC), which evaluates the quality of the science delivered. The process begins again 2 years into the implementation of the plans. The Office of Research and Development (ORD) has established standing subcommittees of the BOSC for each of the national programs.

Each national research program is led by a national program director (NPD) who identifies the high-priority research topics, key science questions, and the times when the outputs are needed. The research is then implemented by EPA’s laboratories and centers which determine the research team and the science needed to address the priorities. The NPDs work with other staff in ORD to ensure the resources are appropriately allocated to support the approved projects to be implemented by the laboratories and centers. STAR projects are integrated into this planning process.

Other regulatory agencies that have research programs use slightly different planning mechanisms. The California ARB includes an open call to the public for research ideas. The research ideas are ranked by program needs and available funding and coordinated with other funding organizations to avoid duplication and to leverage funds. The California ARB Research Screening Committee reviews an annual research plan, which is also open for public comment. The Research Screening Committee consists of external scientists, engineers, and others who are knowledgeable, technically qualified, and experienced in air-pollution and climate-change problems. The annual research plan is then adopted by the ARB at a public hearing.

HEI defines its research agenda as it develops its strategic plan. The strategic plan sets the funding priorities for the next 5 years, and HEI’s board approves the strategic plan. HEI coordinates research topics with EPA to avoid duplicate funding.

NOAA’s NCCOS looks for opportunities that have the greatest chance of achieving useful outcomes for coastal management. NCCOS coordinates with other NOAA National Ocean Service offices, the coastal-management community, and other federal agencies and stakeholders. It develops prospectuses that articulate coastal-management research needs, who will use the information, and the pathways to application, outputs, and outcomes. The prospectuses are vetted within NOAA by leaders and other partners that have overlapping interests or expertise to ensure that the chosen research priorities are clearly and strategically targeted to achieve management outcomes.

USDA NIFA develops research priorities through its 58 national program leaders and 4-year strategic plans. The program leaders consider inputs from numerous parties, including commodity groups, industry, interagency federal work groups, the National Academies, nongovernment organizations, scientific societies, and university partners (NRC 2014).

At NIEHS, DERT develops broad program priorities and recommends funding levels to ensure maximal use of available resources to attain institute objectives. Through cooperative relationships within the National Institutes of Health (NIH) and with public and private institutions and organizations, the division aims to maintain an awareness of national research efforts and assesses the need for research and research training in environmental health (NIEHS 2015).

NSF priorities appear to be organized around broad topics that are highlighted in the agency’s 4-year strategic plan (NSF 2014a). In the divisions, the priorities typically cover a mix of projects that focus on basic-science concepts that can include environmental issues addressed by individual scientists or engineers or through multidisciplinary approaches. Ideas have a variety of sources, such as conferences and workshops, National Academies’ studies, national mandates, congressional budgetary guidance, the findings of other scientific research studies, and NSF program officers and directors.

None of the research programs uses exactly the same mechanism for priority-setting. The EPA process for STAR leads to a research program that is arguably more defined than that of a nonregulatory agency, such as NSF or NIEHS, and it appears to be more coordinated with the agency’s own research program than is USDA’s NIFA or NOAA’s NCCOS. In contrast, such narrowly focused programs as those of the California ARB, HEI, and NCCOS have agendas more defined than that of STAR.

FUNDING ANNOUNCEMENTS

The STAR program has a defined process for developing RFAs and advertises funding announcements broadly. The program takes a year or less to develop an individual funding announcement. The process begins with a meeting to discuss the ideas for the announcement; EPA then assembles a writing team that writes the RFA, which is reviewed by EPA management before being opened to receive applications. During that time, there may be discussions with other federal agencies to combine funds when agencies share an interest. That step includes coordination with the EPA NPDs and other ORD program managers who establish the priorities for funding grants. Other research programs that STAR has partnered with include NSF, NIOSH, NIEHS, DOE, the Department of Homeland Security, USDA, and the UK Environmental Nanoscience Initiative.

EPA advertises funding announcements on its Web site and in the Federal Register. They are also disseminated at professional meetings, distributed on various e-mail lists, and advertised on such social-media outlets as Twitter and Facebook.

Other research programs develop their RFAs or funding announcements in their own ways. HEI’s Research Committee develops RFAs on the basis of input gathered from intensive expert workshops and from sponsors. HEI also accepts investigator-initiated proposals on the broad topic of air pollution from mobile sources. Similarly, the California ARB Research Screening Committee reviews and approves RFA objectives. The California ARB and HEI post announcements on their Web sites and e-mail services.

In NIEHS, each branch has a different process for the development of RFAs, but most branches involve consultation among the extramural staff, management-level approval, and concept approval by an external advisory committee. NIEHS allows both solicited applications, such as responses to RFAs as described above, and unsolicited (investigator-initiated) applications that are submitted to request funding for projects of interest to the submitting researcher, which may be related to any research topic within the mission of NIH (NIEHS 2015). NIEHS funding announcements are widely distributed, including posting on the agency’s Web site (grants.nih.gov), on e-mail lists, and on social media.

NCCOS’s staff officers develop funding announcements to provide detailed information for proposers and meet the criteria established in NOAA’s grants manual. NCCOS releases funding opportunities on a federal Web site (grants.gov) and on its own Web site.

It is standard in NSF to receive unsolicited applications that are submitted to request funding for projects of interest to the submitting researcher. Funding announcements are becoming more common. NSF program officials develop broadly framed funding announcements on the basis of a process that involves consultation with other programs in NSF, coordination with other stakeholders, and approval by managers. NSF funding opportunities are also announced widely on government Web sites, e-mail lists, and social media.

USDA RFAs are prepared by the RFA writing group, which comprises national program leaders and program specialists. The approval chain consists of the leaders of the relevant institute, senior executives (the Science Leadership Council), the Policy Office, and the Office of the Chief Scientist in NIFA (NRC 2014).

Like the other grant programs considered, EPA STAR has a well-established and responsive internal process for developing funding announcements. STAR also coordinates well with other agencies to leverage funds and avoid unnecessary duplication. STAR differs from NSF, NIEHS, the California ARB, and HEI in that it appears to have no mechanisms for submission of unsolicited proposals or ideas for research.

PROPOSAL REVIEW AND AWARDING OF GRANTS

After RFAs are developed and released, STAR provides academic investigators about 2 months to submit proposals, longer for some large center RFAs. Once received, proposals undergo several types of review: administrative review to verify that the application meets the requirements described in the RFA, peer-review for scientific merit, past-performance history review, and relevance review. Peer-review of STAR grants is performed by ad hoc external review panels. The panels include academic, government, or private sector scientists who are knowledgeable about the scientific subject of the RFA and meet the conflict-of-interest (COI) requirements.

At several points throughout the peer-review process, COI issues are checked and documented. In the inquiry e-mail, interested expert reviewers are asked to report any potential COI concerns. For example, potential peer-reviewers are asked whether they will be part of a team submitting an application in response to the RFA and whether an application will be submitted by their own institution. EPA scientific-review officers evaluate reviewers’ CVs and professional Web pages before assigning applications to reviewers. Once applications are available to reviewers, reviewers are required to check their assigned applications and to give notice immediately if there are unforeseen COI concerns.

External peer-reviewers are named as primary or secondary reviewers, receive copies of the proposals, and meet to discuss and assign a score to each proposal. Highly scored proposals are reviewed further for past performance and relevance. In December 2016 (subsequent to the committee’s final meeting), EPA finalized a new procedure for relevance review. The relevance review is completed within 4 weeks of the peer-review meeting. Much like peer-reviewers, relevance-review panelists are chosen on the basis of expertise in the scientific fields of the applications being reviewed. However, relevance-review panels consist only of EPA staff and include cross-agency representation (regional offices, ORD, and non-ORD program offices are all represented). Like peer-reviewers, relevance reviewers are screened for potential COI. Each application is to be reviewed by at least three reviewers, sometimes five for larger centers; one reviewer serves as a rapporteur and is responsible for the discussion of the RFA at the panel meeting. The criteria for the reviews are those listed in the RFA. After the panel meeting, the application scores are recorded and provided to program officers.

The research programs of the California ARB and HEI have processes that use steps similar to those of STAR: an internal administrative review, a peer-review, and a programmatic review. There are some differences in the peer-review process. In the California ARB, program staff and interagency project teams recommend proposals for funding, and peer-review oversight is completed by the Research Screening Committee. HEI proposals are reviewed by a special review panel that includes the Research Committee and external subject-matter experts who are not affiliated with the applicants. Those experts score according to scientific merit, qualifications, and responsiveness to the RFA. Successful applications are then subjected to a programmatic review by the Research Committee, which makes the final award decisions. In both those research programs, the programmatic review includes an evaluation of past performance of the grantee and of the relevance of the proposed research to the program’s mission.

All NOAA NCCOS grant applications are reviewed by an ad hoc committee of reviewers selected on the basis of their qualifications and expertise in the topic; the reviewers are screened for COI in accordance with criteria established in NOAA’s grants manual. After review and scoring of applications, the NOAA program officer has discretion as to which applications to award.

The NIEHS peer-review process is different from that used by STAR and involves two steps. The first review is by the study section, which is a standing committee composed of external scientific reviewers who meet to review the scientific and technical merit of applications. The second includes the National Advisory Environmental Health Sciences (NAEHS) Council, which comprises appointed external expert scientists and internal NIEHS staff. Only applications that are recommended by both the study section and the NAEHS Council may be recommended for funding (NIEHS 2015).

The NSF proposal review and award procedures include peer-review by a committee of external scientists. NSF differs slightly in that proposals are reviewed for broader impacts in addition to intellectual merit. In some cases, other criteria are considered. The external reviewers’ analyses of the proposals are provided to the program officer, who makes recommendations to the division director; applications that are successfully reviewed by the division director are forwarded for a business review and then final decision on an award.

USDA’s NIFA uses a peer-review process in which panel managers and national program leaders assigned to each program area are responsible for review of proposals. Panel managers are part-time, temporary USDA employees who are recruited for the sole purpose of managing proposal review, whereas national program leaders are full-time, permanent USDA employees. The USDA program differs from the other programs included here in that its peer-review process is the only criterion that the program uses to make funding decisions (NRC 2014).

All the agencies reviewed use a competitive peer-review process, although there are differences in the peer-review procedures, such as the use of ad hoc peer-review committees (in EPA, NSF, and NCCOS) vs standing committees (in HEI, ARB, and NIEHS) or the inclusion of agency staff in the process (in USDA). Many agencies have a review step following peer-review. EPA’s STAR was the only research program in this comparison that had a relevance review decoupled from other aspects of the programmatic review.

GRANT AWARD RATE

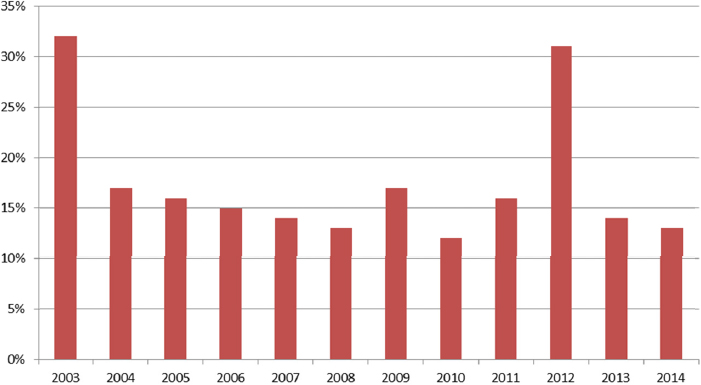

Figure 2-1 shows the percentage of STAR applications that were awarded in 2003-2014, as defined by the number of awardees per year divided by the number of applications received per year. The median rate over the last 13 years was 16% (in 2005), the lowest 12% (in 2010), and the highest 32% (in 2003). NIEHS had an award rate of 14.7% in 2015 (NIH 2015), NSF 23% in 2014 (NSF 2014b), and USDA 14.8% in 2014 (USDA NIFA 2014).
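The definition of the award rate used above can be written compactly. The symbols are introduced here only for illustration and do not appear in the original report: $A_y$ is the number of awardees in year $y$, and $N_y$ is the number of applications received in year $y$.

$$
\text{award rate}_y = \frac{A_y}{N_y} \times 100\%
$$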

MANAGEMENT

In the STAR program, each awarded grant is assigned to a project officer, who tracks the progress of the project. Regular annual progress reports and a final report of both the scientific and financial aspects of the project are tracked by the project officer. EPA collects lists of all publications that result from each project. The STAR program also maintains a Grantee Research Projects Results Web page (https://cfpub.epa.gov/ncer_abstracts/index.cfm/fuseaction/search.welcome) in which it lists STAR research grants awarded in a particular research field and reports research outputs and project results.

STAR grant investigators for a specific RFA topic typically meet annually to circulate new information among the investigators and EPA scientists. For larger center programs, the STAR grantees hold public webinars in which investigators present research findings to the public and other stakeholders. In some cases, discussions are held between EPA scientists and grant awardees to determine whether additional collaborations would be beneficial in increasing information transfer and facilitating the research.

HEI and the California ARB issue contracts, not grants, and are more involved in the research process. ARB has quarterly progress meetings, review of draft manuscripts, and Research Screening Committee peer-review of draft final reports. In HEI, investigators conduct research with direct committee oversight; comprehensive reports include all results, both positive and negative.

Management of grantees by NIEHS is overseen by the grants management staff to ensure adherence to all applicable NIH and other federal government rules and policies. Grantees typically submit progress reports annually, and scientific progress must be determined to be satisfactory by program administrators before additional funds can be awarded for continuation of a project. For some projects and programs, there are periodic meetings and public webinars to facilitate collaborations and information transfer to the public.

NSF also requires annual reporting by grantees and a final report when a grant is completed. As with NIH, failure to submit timely reports will delay processing of additional funding. NSF also requires a project outcomes report for the general public that must be submitted within 90 days after expiration of the grant. That report serves as a brief summary, prepared specifically for the public, of the nature and outcomes of the project.

The management procedures used by EPA appear very similar to those of other research programs that issue grants. There seems to be a trend among grant funding agencies to have meetings, workshops, and webinars to facilitate collaboration.

COMMITTEE’S EVALUATION

The committee’s review of the STAR RFAs released by EPA from 2003 to 2015 revealed that the RFAs generally have well-described goals (90%) and explicit review criteria (90%). The research topics are as broad as EPA’s mission and include topics as varied as computational toxicology, microbial risk assessment, mitigation of oil spills, and creation of children’s environmental-health centers (Appendix C lists RFA titles). Most of the requests are in fairly specific focused research fields (over 80%), although the program has put forward some more general requests. The goals of the research effort also vary widely, for example, developing new technologies, developing research centers, and advancing knowledge and tools. As a result, the stakeholders vary widely—policy-makers and risk assessors at the local, state, and federal levels; the manufacturing industry; and the environmental research community.

The committee also reviewed a list of the scientists who had served on the STAR peer-review committees and their affiliations. To protect their confidentiality, EPA could not disclose the specific RFA peer-review committee on which each scientist served. In reviewing the names and affiliations of the reviewers, however, the committee was favorably impressed by the expertise represented. It was unclear whether investigators received scores or feedback from relevancy review.

The STAR grant-proposal award rate in recent years has been notable for its competitiveness, which may signify that the program sponsors research in areas that have a high demand, and is a measure of the vitality of a sponsored-research program (Cushman et al. 2015; von Hippel and von Hippel 2015). However, given the long-term trend of declining grant-award rates in most US federal research programs, the low probability of award is now the concern most noted by those who study the US research enterprise (Rockey 2014; Cushman et al. 2015; Noailly 2016). A number of adverse effects have been attributed to overcompetitive research funding, which can foster proposals, reviews, and publication practices that discourage the most innovative science (Berezin 2001; Stephan 2012; Edwards and Roy 2017). Interdisciplinary research of the type needed to address many environmental issues and yield high-impact products (Chen et al. 2015) is especially vulnerable to reduction in funding and award rates (Lyall et al. 2013; Bromham et al. 2016). Award rates that are too low can both discourage the broader participation of researchers in the field and decrease incentives for innovation.

What is an appropriate target for an acceptable grant proposal-award rate to maintain an innovative environmental research program that is responsive to critical knowledge needs? Cushman et al. (2015) summarized recent studies and concluded that a 30-35% grant award rate is ideal, but programs can sustain reductions down to 20% before researchers, especially those new to the field (often those most innovative), are discouraged from participating. Many US research programs, including STAR, are now below 20%. Cushman et al. further suggested that a rate of 6% is essentially an absolute minimum, at which the chance of success of a submission no longer justifies the time needed to develop and submit a responsive proposal. The committee speculates that one reason STAR has not become overcompetitive is that the RFAs released are specific, so fewer researchers could develop a grant proposal to respond to a given RFA.
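As a rough illustration of how the thresholds attributed to Cushman et al. (2015) partition award rates, the following minimal sketch classifies the rates quoted earlier in this chapter. The function name, the band labels, and the treatment of the exact boundary values are assumptions made for this example; they are not part of the report or of the cited study.

```python
# Illustrative sketch only: band labels and boundary handling are assumptions,
# based on the thresholds quoted in the text (30-35% ideal, 20% sustainable
# floor, 6% practical minimum).

def classify_award_rate(rate_percent: float) -> str:
    """Place an award rate (in percent) into one of four hypothetical bands."""
    if not 0.0 <= rate_percent <= 100.0:
        raise ValueError("award rate must be between 0 and 100 percent")
    if rate_percent >= 30.0:
        return "ideal or higher"
    if rate_percent >= 20.0:
        return "sustainable"
    if rate_percent > 6.0:
        return "discouraging"
    return "at or below practical minimum"


# Award rates as reported in the Grant Award Rate section of this chapter.
REPORTED_RATES = {
    "STAR (median, 2003-2014)": 16.0,
    "NIEHS (2015)": 14.7,
    "NSF (2014)": 23.0,
    "USDA NIFA (2014)": 14.8,
}

if __name__ == "__main__":
    for program, rate in REPORTED_RATES.items():
        print(f"{program}: {rate}% -> {classify_award_rate(rate)}")
```

Under these assumed bands, only the NSF rate quoted in this chapter falls at or above the 20% level; the STAR median, NIEHS, and USDA rates fall between the 6% and 20% thresholds.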

The committee reviewed the STAR grantee project results on the aforementioned Web site. It found that the data were incomplete. There were many examples of individual principal-investigator grants long completed or at least in operation for a number of years on which annual or final reports are unavailable. It is unclear why the project Web site is not updated.

CONCLUSIONS

The committee found that the STAR program’s procedures contain elements similar to those of comparable research programs that the committee chose to examine. It is notable that STAR is one of the few programs that do not allow unsolicited proposals or the inclusion of RFA topic ideas from external stakeholders and the public. The committee acknowledges that EPA may have chosen to rely on focused research questions through RFAs because doing so makes it easier to integrate STAR’s priorities within its own intramural research program. The committee also thought it was appropriate that EPA integrated the priority-setting procedures for STAR within four of ORD’s national programs; this allows STAR to remain flexible in light of what EPA sees as the nation’s needs and avoids concerns of STAR being duplicative of EPA’s internal research. The committee noted that STAR was the only program that did not allow the general public to submit research topic ideas, or unsolicited proposals, which may limit the creativity of the program. Another adverse implication of the priority-setting process is that as the budget for ORD has declined (see Figure 1-1), the budget for STAR has declined even faster; this may be due to the defining of STAR’s budget according to the four national programs instead of directly for STAR itself.

The STAR program puts out RFAs that are generally of good quality and in a wide variety of topics. The peer-review process used by STAR is rigorous and conducted by qualified scientists. The committee thinks it may be worthwhile for the applicants to receive feedback on the relevancy reviews. The committee noted that many project reports were missing from the grantee project results Web site, which concerned some committee members. EPA should fix this Web site.

REFERENCES

Berezin, A.A. 2001. Discouragement of innovation by overcompetitive research funding. Interdiscipl. Sci. Rev. 26(2):97-102.

Bromham, L., R. Dinnage, and X. Hua. 2016. Interdisciplinary research has consistently lower funding success. Nature 534(7609):684-687.

Chen, S., C. Arsenault, and V. Larivière. 2015. Are top-cited papers more interdisciplinary? J. Informetr. 9(4):1034-1046.

Cushman, P., J.T. Hoeksema, C. Kouveliotou, J. Lowenthal, B. Peterson, K.G. Stassun, and T. von Hippel. 2015. Impact of declining proposal success rates on scientific productivity. arXiv preprint arXiv:1510.01647.

Edwards, M.A., and S. Roy. 2017. Academic research in the 21st century: Maintaining scientific integrity in a climate of perverse incentives and hypercompetition. Environ. Eng. Sci. 34(1):51-61.

Lyall, C., A. Bruce, W. Marsden, and L. Meagher. 2013. The role of funding agencies in creating interdisciplinary knowledge. Sci. Public Policy 40(1):62-71.

NIEHS (National Institute of Environmental Health Sciences). 2015. Division of Extramural Research and Training (DERT) Overview and Highlights [online]. Available: https://www.niehs.nih.gov/research/supported/dert/index.cfm [accessed January 18, 2017].

NIH (National Institutes of Health). 2015. Table 205. Research Project Grants and Other Mechanisms Success Rates [online]. Available: http://report.nih.gov/success_rates.

Noailly, J. 2016. Research funding: Patience is a virtue. Nat. Energy 1(4):16038.

NRC (National Research Council). 2014. Spurring Innovation in Food and Agriculture: A Review of the USDA Agriculture and Food Research Initiative Program. Washington, DC: The National Academies Press.

NSF (National Science Foundation). 2014a. Investing in Science, Engineering, and Education for the Nation’s Future: Strategic Plan for 2014-2018 [online]. Available: https://www.nsf.gov/pubs/2014/nsf14043/nsf14043.pdf [accessed May 17, 2018].

NSF (National Science Foundation). 2014b. Report to the National Science Board on the National Science Foundation’s Merit Review Process-Fiscal Year 2013. May 2014 [online]. Available: https://www.nsf.gov/pubs/2014/nsb1432/nsb1432.pdf [accessed January 18, 2017].

NSF. 2017. About Earth Science [online]. Available: https://www.nsf.gov/geo/ear/about.jsp [accessed May 17, 2017].

Rockey, S. 2014. Comparing success rates, award rates and funding rates. RockTalk, NIH Extramural Nexus, March 5, 2014 [online]. Available: https://nexus.od.nih.gov/all/2014/03/05/comparing-success-award-funding-rates/ [accessed January 18, 2017].

Stephan, P. 2012. Research efficiency: Perverse incentives. Nature 484(7392):29-31.

USDA NIFA (U.S. Department of Agriculture, National Institute of Food and Agriculture). 2014. AFRI 2014 Synopsis Data [online]. Available: https://nifa.usda.gov/afri-2014-synopsis-data [accessed January 18, 2017].

von Hippel, T., and C. von Hippel. 2015. To apply or not to apply: A survey analysis of grant writing costs and benefits. PLoS ONE 10(3):e0118494.