CHAPTER 8

Decision Consequences and Trade-Offs

Following the development of a suite of alternatives, it becomes necessary for decision participants to develop a complete understanding of the impacts of those alternatives and then to begin the process of determining which set of alternatives provides the best solution that is supportable at some level by all decision participants. This chapter begins with a discussion of understanding consequences, capturing uncertainties, avoiding over-conservatism, and using a tool called a consequence table. The second half of the chapter discusses how the alternatives can be negotiated through, for example, identifying those that best meet stated objectives, refining metrics to better understand consequences, and clarifying the rules of decision making. By the end of the chapter, the reader will be at least familiar with the analytical-deliberative process. The chapter concludes with a brief discussion of PrOACT-like (Problem, Objectives, Alternatives, Consequences, Trade-offs) processes as compared to existing agency processes.

WHAT ARE THE CONSEQUENCES?

Consequences are the impacts of alternatives as measured by performance metrics. Each measurement reflects a with/without assessment (the value of each performance metric with the alternative, minus its value in the absence of the alternative). In many cases, the performance metric is defined such that it has a zero value when the alternative is not implemented.

Care is needed in eliciting information and opinions regarding relationships between underlying physical processes and performance of different policy alternatives. Relationships may be misstated due to individual perceptions and potential mistrust among agencies and interested and affected parties. There may be a wide range of beliefs concerning a number of key underlying physical processes such as

- limiting factors for fish abundance, including

  - the ability of fish to migrate past the sediment retention structure (SRS),

  - the ability of fish to pass through the sediment field above the SRS, and

  - the impacts of dredging on aquatic habitat and fish migration;

- limiting factors for increased dredging capabilities, including

  - where spoils can be put,

  - acceptance by local residents of the placement of spoils,

  - impact of dredging on listed species migration, and

  - the ability to secure environmental permits in a timely manner;

- long-term performance of the tunnel, including

  - seismic withstand, and

  - the ability of the tunnel to perform given the underlying geologic composition and dynamism of the surrounding rock;

- operational risk arising from tunnel repair closures;

- volume of material transfer from the debris field, including whether the long-term trend of sediment migration is constant or declining;

- susceptibility of an open channel solution to downcutting from erosion;

- calculations of a Probable Maximum Flood (PMF); and

- magnitude of the 100-year flood.

Resolving the list of uncertainties in an environment where some participants cite low trust in other participants is daunting. Nevertheless, scientific complexity is not a barrier to a successful participatory process. Increasing the participatory nature of processes adds to the quality of the analysis (NRC, 2008).

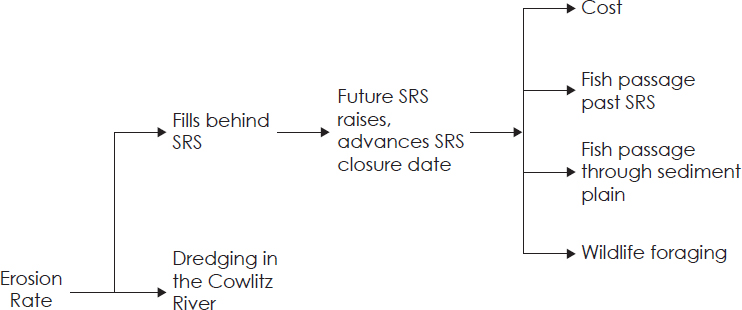

Influence diagrams, developed through discussion with decision participants, can clarify cause-and-effect relationships among the issues being considered. An influence diagram lays out how the system works. For example, the U.S. Army Corps of Engineers (USACE) highlighted “erosion” as a planning objective (USACE, 1983). Figure 8.1 is a simple influence diagram that shows how the rate of sediment production might be linked to other factors. An influence diagram is an outline of the “physics” of the system that clarifies the cause-and-effect pathways.

Capturing Uncertainty

Alternatives identified on the left-hand side of the influence diagram in Figure 8.1 cascade to a spectrum of potential outcomes or consequences on the right-hand side. To be useful in decision making, the consequences on the right-hand side must be measured along the scales of the metrics associated with the chosen objectives. The predictions of these consequences within the context of the influence diagram are usually uncertain; therefore, they are expressed as probability distributions. For each of the decision alternatives there is a set of probabilistic forecasts expressed over each of the metrics associated with each of the objectives. These uncertain but quantitative forecasts of consequences related to each of the many objectives form the basis for decision.

A standard approach for capturing uncertainty (Morgan and Henrion, 1990) involves

- Modeling consequences using midpoint or best estimates to start;

- Identifying parameters (or hypotheses) that drive uncertainty and varying these in a sensitivity analysis to understand which uncertainties may significantly affect the decision. This will highlight where work to reconcile conflicting expert opinions is needed (Gregory et al., 2012);

- Testing the impact of key uncertainties, perhaps through analysis of scenarios created jointly with participants, so that the range of uncertainties is captured. These might include expected conditions, a 100-year inflow, a PMF, a moderate seismic event, and a moderate volcanic event; and

- Developing probability distributions for a manageable number of key uncertainties through historical data, modeling, or expert elicitation (Burgman, 2015), and building these into a model to show the relevant distribution of possible outcomes. This will highlight the key uncertainties and decision drivers.
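The first two steps in the list above (start from best estimates, then vary the uncertain parameters to see which ones matter) can be illustrated with a one-at-a-time sensitivity analysis. The sketch below is only an illustration: the toy model `sediment_delivery`, its parameter names, and all numerical ranges are hypothetical placeholders and are not taken from any study of the Spirit Lake and Toutle River system.

```python
# Hypothetical toy model: every name and number here is an illustrative assumption.

def sediment_delivery(params):
    """Toy consequence model: sediment delivered downstream (arbitrary units)."""
    return (params["erosion_rate"]
            * (1.0 - params["trap_efficiency"])
            * params["flow_factor"])

# (low, best estimate, high) for each uncertain parameter.
RANGES = {
    "erosion_rate": (2.0, 4.0, 6.0),
    "trap_efficiency": (0.6, 0.7, 0.8),
    "flow_factor": (0.9, 1.0, 1.2),
}

def one_at_a_time(model, ranges):
    """Vary one parameter from low to high while holding the others at best estimates.

    Returns (parameter, swing) pairs sorted so the largest swing comes first.
    """
    base = {name: best for name, (_, best, _) in ranges.items()}
    swings = {}
    for name, (low, _, high) in ranges.items():
        outcomes = [model({**base, name: value}) for value in (low, high)]
        swings[name] = max(outcomes) - min(outcomes)
    return sorted(swings.items(), key=lambda item: item[1], reverse=True)

ranking = one_at_a_time(sediment_delivery, RANGES)
for name, swing in ranking:
    print(f"{name}: swing = {swing:.2f}")
```

Parameters at the top of the resulting list are the candidates for scenario testing and for the more costly development of probability distributions; those at the bottom may not justify further effort.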

Table 7.1 is an example of the first steps of this process where the robustness of alternatives is explored qualitatively against individual hazards (Grant, personal communication, November 1, 2016).

Where deviation from the expected case is large enough to rearrange the ranking of alternatives, additional work is needed to model the uncertainty and to characterize it in ways that assist the participants in understanding trade-offs. In particular, Table 7.1 indicates that additional work is needed to aggregate different sources of risk into one overall measure that captures overall risk of a Spirit Lake breakout. The general public, decision participants, and policy makers will not have the technical background to combine these different sources of risk. Yet this information is critical to developing an understanding of how the different alternatives perform in preventing a catastrophic breakout. It is up to technical specialists working with the decision participants to finish this task.

Avoiding Over-Conservative Assumptions

It is a common mistake to build an element of risk aversion into analyses by using “conservative” modeling assumptions. Such assumptions may take the form of using the worst plausible case for negative outcomes, as has been done where the USACE assumes a “no decay” time path of sediment erosion, the highest rate of erosion among several possible hypotheses (Britton et al., 2016b). Others may advocate the use of “safety margins” or engineering judgment to exaggerate possible negative consequences. The use of conservative modeling assumptions should be avoided because

- Attitudes toward risk vary among individuals, and building risk aversion into the modeling step requires value judgments to be made on behalf of other participants. These may not be transparent or appropriate for all interested and affected parties.

- Attitudes toward risk can be thought of as the willingness to trade off more certainty for less certainty. Because of this, these attitudes are best captured in assessing trade-offs.

- Being “conservative” along one dimension (e.g., a more robust infrastructure design to improve safety) often means being profligate along another dimension (e.g., higher cost). Again, this trade-off needs to be highlighted and examined, not made implicitly and invisibly during the modeling process.

Using Consequence Tables

In an iterative, collaborative, and deliberative process, it is useful to list and organize each management alternative and each decision objective in a manner that allows the performance of each alternative against each objective to be seen. A tabular display of this relationship is called a consequence table. Table 8.1 is an example of such a table, adapted from a water management study in British Columbia, Canada (Gregory et al., 2012). In the illustrated case, decision participants sought a solution for conflicts among decision objectives related to generating power, avoiding floods, and enhancing ecological values. This table is likely to be more compact than a consequence table developed for the Spirit Lake region for comparing system-wide alternatives for managing water and sediment.

Development of a consequence table is a key milestone in the decision process. Because it is built jointly through deliberation among decision participants (who are themselves interested and affected parties), it becomes an important element of a valuation framework. Building a consequence table is an iterative process, and early versions may require heavy revisions to capture information properly. The ultimate goal is a table that is noncontroversial—that is, participants should see their decision objectives, measured in ways that are both rigorous and meaningful to them and, perhaps, used to evaluate alternatives they helped to develop. The process of developing a consequence table helps the group create legitimacy of subsequent decisions among themselves and with a broad group of interested and affected parties. Box 8.1 provides examples of past communication of comparison of management alternatives for the Spirit Lake and Toutle River region. The methods used may not always have been conducive to public support for management decisions.

Comparison of alternatives remains difficult, however. Developing a colored, interactive consequence table can be helpful. Important to this is the concept of Minimum Significant Increment of Change (MSIC) (Gregory et al., 2012). MSIC refers to a pragmatic estimation of the precision of each measurement approach used to populate a consequence table. For example, if the precision of financial models does not allow distinguishing among estimates that are within $1 million, then the MSIC for those financial measures will be $1 million. Alternatives within that range will be considered as equivalent.

The color scheme in Table 8.1 is not a simple high-medium-low risk ranking; rather, the color indicates a relative measure of the different alternatives with respect to the alternative being used as a point of comparison. The point of comparison is indicated in blue. Red cells in a particular row indicate alternatives with measures that are worse than the blue point-of-comparison cell; conversely, green cells indicate alternatives with measures that are preferable. A cell with no color indicates those alternatives with measures that are roughly equivalent (within the MSIC) to the blue cell. An “H” in the “Direction” column indicates that higher numbers (in this particular case) are preferable for a particular measure. “L” indicates lower numbers are preferable. (An interactive spreadsheet allows this basis of comparison to be changed during discussion.) This interactive visual tool is an excellent aid for exploring how management alternatives align with the decision objectives of the participants. This information may be used to rule out alternatives and criteria that are not key to the decision so that the group can focus on the main decision drivers.
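The coloring rule described above can be sketched in a few lines. The example uses the Fish Index row for Carpenter Reservoir from Table 8.1, with Alt 1 as the point of comparison. The MSIC value of 5 index points is an assumption made purely for illustration, since the MSIC values used in the original study are not given here, and the function name `classify` is likewise hypothetical.

```python
def classify(value, reference, direction, msic):
    """Label a cell relative to the point-of-comparison cell.

    direction: "H" if higher numbers are preferable, "L" if lower numbers are.
    msic: minimum significant increment of change for this measure.
    """
    diff = value - reference
    if abs(diff) <= msic:
        return "none"  # roughly equivalent to the reference: no color
    better = diff > 0 if direction == "H" else diff < 0
    return "green" if better else "red"

# Fish Index @Carpenter Reservoir (direction H), values from Table 8.1.
fish_carpenter = {"Alt 1": 69, "Alt 2": 70, "Alt 3": 41,
                  "Alt 4": 41, "Alt 5": 29, "Alt 8": 29}
reference_alt = "Alt 1"
ASSUMED_MSIC = 5  # hypothetical precision, in index points

colors = {}
for alt, value in fish_carpenter.items():
    if alt == reference_alt:
        colors[alt] = "blue"  # the point of comparison
    else:
        colors[alt] = classify(value, fish_carpenter[reference_alt], "H", ASSUMED_MSIC)

print(colors)
```

Changing `reference_alt` and recomputing mirrors what the interactive spreadsheet does when the basis of comparison is changed during discussion.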

It is important to have a technical discussion about the precision of data being used early in the decision process. The discussion is meant to focus participants on data that potentially drive decisions rather than on data differences that are inconsequential from a technical point of view. The discussion should be neither a value discussion nor a judgment on the importance of the decision objective to which the data relate. The relevance of this discussion becomes clearer later in the decision process, when metrics (and decision objectives) that have similar measurements (i.e., within the MSIC) across alternatives can be set aside because they do not help to distinguish among alternatives. This early technical discussion can help to discourage later value-laden, heated discussions over differences that are inconsequential from a technical point of view. It also provides a solid basis of understanding for discussions regarding the trade-offs across consequences among wider groups of interested and affected parties.

TABLE 8.1 An Example Consequence Table for Comparing Options^a

| Objective | Performance Measure | Units | Dir | Alt 1 | Alt 2 | Alt 3 | Alt 4 | Alt 5 | Alt 8 |
|---|---|---|---|---|---|---|---|---|---|
| Minimize Flooding | | | | | | | | | |
| @Lower Bridge River | Flood Frequency | # days/yr | L^b | 1 | 1 | 0 | 0 | 0 | 0 |
| @Seton Reservoir | Flood Frequency | # days/yr | L | 6 | 6 | 6 | 6 | 6 | 6 |
| Maximize Fish Abundance | | | | | | | | | |
| @Carpenter Reservoir | Fish Index | 1-100 | H^c | 69 | 70 | 41 | 41 | 29 | 29 |
| @Downton Reservoir | Fish Index | 1-100 | H | 42 | 71 | 48 | 69 | 65 | 69 |
| @Lower Bridge River | Fish Index | 1-100 | H | 100 | 100 | 100 | 90 | 25 | 10 |
| @Seton Reservoir | Fish Index | 1-100 | H | 66 | 66 | 66 | 66 | 33 | 10 |
| Maximize Water Quality | | | | | | | | | |
| @Seton Reservoir | Water Suspended Solids | Tonnes/yr | L | 94 | 89 | 77 | 84 | 108 | 78 |
| Maximize Vegetated Area | | | | | | | | | |
| @Downton Reservoir | Weighted Area | Hectares | H | 223 | 231 | 322 | 313 | 295 | 300 |
| @Carpenter Reservoir | Weighted Area | Hectares | H | 759 | 522 | 758 | 520 | 602 | 600 |
| Maximize Power Benefits | | | | | | | | | |
| Maximize Power Revenues | Revenues | Levelized $M/yr | H | 141 | 145 | 146 | 149 | 144 | 145 |

^a Blue represents the point of comparison; red indicates alternative measures significantly worse than blue; green indicates alternative measures significantly better; no color indicates no significant difference.

^b L indicates lower numbers are preferable.

^c H indicates higher numbers are preferable.

SOURCE: Modified from Gregory et al., 2012.

Representing Uncertainty in Consequence Tables

Decision analysis practitioners have not developed a comprehensive approach to representing uncertainty in a consequence table (Gregory and Keeney, 2017). If a key trade-off decision hinges on a risk-return consideration, a quick shortcut is to use the expected outcomes (e.g., the 50th percentile measure of a forecast impact) and some statistic of a downside outcome (e.g., the 10th percentile statistic of a forecast impact) as different line items in a consequence table. In the hypothetical example shown as Table 8.2, alternative 2 appears to deliver a much larger amount of habitat, on average, than does alternative 1. According to the chosen statistic, however, alternative 2 also delivers much less spawning habitat once every 10 years. Choosing between alternatives 1 and 2 requires considering the relative importance of average versus downside impacts. Presenting this type of trade-off and talking about sensitivity to downside risk could be an important line of inquiry in a deliberative process. This discussion can improve understanding about why different parties hold different views on the alternatives; about how susceptible the decision objective in question is to the occasionally poor outcome; and, perhaps, about how to identify ways to modify the otherwise preferred alternative to mitigate these rare occurrences.

TABLE 8.2 A Hypothetical Example of Higher Cost Versus Higher Certainty Trade-off

| Objective | Sub-Objective | Metric | Alternative 1 | Alternative 2 |
|---|---|---|---|---|
| Maximize Fish Abundance | Maximize expected usable spawning habitat | Ha^a (P50)^b | 100 | 200 |
| | Maximize spawning habitat in low water years | Ha (P10) | 50 | 20 |

^a Ha = hectare.

^b P is the annual probability.
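The two line items in Table 8.2 are percentiles of a forecast distribution. As a minimal sketch of how such entries might be derived, the code below draws hypothetical simulated annual habitat values and reads off the 50th and 10th percentiles with a simple nearest-rank rule. The normal distribution, its parameters, and the helper name `percentile` are assumptions for illustration; actual forecasts would come from the models and elicitation described earlier.

```python
import math
import random

def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p percent of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Hypothetical simulated annual spawning habitat in hectares (not study data).
simulated_ha = [max(0.0, rng.gauss(100, 40)) for _ in range(1000)]

p50 = percentile(simulated_ha, 50)  # central outcome
p10 = percentile(simulated_ha, 10)  # downside outcome, roughly a 1-in-10-year low
print(f"P50 = {p50:.0f} ha, P10 = {p10:.0f} ha")
```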

This approach is a practical substitute for a fuller inquiry, but it does not fully represent uncertainty because it looks at only two points of a broader distribution. Alternative 2 may also have a large but unlikely upside potential that is ignored by this shortcut. Gregory and Keeney (2017) correct this theoretical gap by developing, for each decision participant, a single certainty equivalent that reflects that individual’s risk preference. The certainty equivalent is the guaranteed amount that an individual would consider equivalent to a given distribution of uncertain amounts. The certainty equivalent differs from the expected value of the distribution according to the risk preference of the individual. A risk-seeking person would have a certainty equivalent greater than the expected value, while a risk-averse person would have a lower certainty equivalent (Raiffa, 1997).

Participants develop the certainty equivalent through a structured discussion. Clemen and Reilly (2014) take this a step further by estimating, through a structured gamble exercise, a risk-aversion parameter for each individual so that uncertain outcomes can be translated mathematically into a certainty equivalent. These more theoretically consistent approaches suffer a similar drawback, however. They greatly increase the process burden, as each decision participant must develop a unique consequence table tailored to reflect his or her unique attitude toward risk. Whether or not this incremental level of effort is warranted depends on the situation.
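To make the idea of translating an uncertain outcome into a certainty equivalent concrete, the sketch below assumes an exponential utility function with a single risk-tolerance parameter `R`. This functional form is one common textbook choice and is an assumption here, not necessarily the form used in the cited works. The lottery over hectares of habitat is hypothetical.

```python
import math

def expected_value(outcomes):
    """Probability-weighted mean of (amount, probability) pairs."""
    return sum(p * x for x, p in outcomes)

def certainty_equivalent(outcomes, risk_tolerance):
    """Certainty equivalent under the assumed utility u(x) = 1 - exp(-x / R).

    A positive R models a risk-averse individual; the larger R is,
    the closer the result is to the expected value.
    """
    term = sum(p * math.exp(-x / risk_tolerance) for x, p in outcomes)
    return -risk_tolerance * math.log(term)

# Hypothetical distribution of habitat outcomes: (hectares, probability).
lottery = [(20.0, 0.1), (200.0, 0.8), (300.0, 0.1)]

ev = expected_value(lottery)
ce = certainty_equivalent(lottery, risk_tolerance=100.0)
print(f"expected value = {ev:.1f} ha, certainty equivalent = {ce:.1f} ha")
```

For a risk-averse participant the certainty equivalent falls below the expected value, and the gap narrows as the assumed risk tolerance grows. Because `R` differs by individual, each participant would see different entries, which is the source of the process burden noted above.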

Helping decision participants collaboratively explore multiple alternatives when there is significant uncertainty across multiple objectives is inherently challenging. Attempts to fully incorporate uncertainty can easily lead to an unwieldy and contentious set of deliberations. Nevertheless, upfront transparency regarding the available tools, coupled with an explicit effort to match the techniques to the needs of the participants, can only add legitimacy to the process.

WHAT ARE THE TRADE-OFFS?

Identifying and closely considering trade-offs is the last step of the decision process. It is useful at this point to recall that the overall purpose of the process is not to find some objectively defined optimal solution, but to find the best solution that is supportable at some level by all the decision participants. In most cases, this support hinges on participants’ awareness and acceptance of various trade-offs. Box 8.2 provides illustrative examples of some trade-offs that might be considered in the Spirit Lake and Toutle River region. As noted earlier, a common mistake in collaborative decision making is to prioritize objectives too early in the process (Keeney, 2002). Attempts to do so before decision objectives and their metrics are clarified and consequences are calculated will result in discussion at a level that is too high to uncover and resolve differences. For example, asking a participant in the Bridge River water management process highlighted in Table 8.1 to prioritize too early among flooding, water quality, and wildlife habitat on the Carpenter Reservoir would have generated an answer, but one that was not grounded in any substantive knowledge of those issues in that particular decision context.

An effective decision process is based on the recognition that people develop their decision objectives and priorities as they deliberate and learn in a complicated, novel context (Slovic, 1995). People do not know at the outset how their values interact with what can be changed in the system, nor how changing the system leads to intended and unintended impacts on the things they care about. The trade-off step is about exploring that decision space; looking for insights into how values are affected by the way in which the system reacts to changes; and looking for mutually advantageous solutions or, at least, solutions where important gains for some decision participants can be found without too much sacrifice of the interests of other participants.

Developing consequence tables was discussed in the previous sections. The next sections describe practical steps through which decision participants may be led to ultimately highlight the important trade-offs among a small number of alternatives so that a decision may ultimately be made.

Eliminating Dominated Alternatives

Pairwise comparisons of all the alternatives can show how they perform against each other. If an alternative is dominated (that is, some other alternative performs at least as well with respect to every metric and better with respect to at least one metric), it can be eliminated from further consideration. The application of this principle highlights the importance of including all relevant and significant metrics in the consequence table, lest an alternative be dropped prematurely.
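A pairwise dominance screen of this kind is straightforward to sketch. In the example below, the three alternatives, their metric values, and the function names are hypothetical and chosen only to show the mechanics; measures for which lower numbers are preferable are sign-flipped so that higher is always better.

```python
def to_scores(values, directions):
    """Flip 'L' (lower is preferable) metrics so that higher is always better."""
    return [v if d == "H" else -v for v, d in zip(values, directions)]

def dominates(a, b):
    """True if a is at least as good as b on every metric and strictly better on one."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))

def non_dominated(alternatives, directions):
    """Return the names of alternatives that no other alternative dominates."""
    scores = {name: to_scores(vals, directions) for name, vals in alternatives.items()}
    return [name for name in scores
            if not any(dominates(scores[other], scores[name])
                       for other in scores if other != name)]

# Hypothetical metrics: flood days/yr (L), fish index (H), revenue in $M/yr (H).
directions = ["L", "H", "H"]
alternatives = {
    "A": [1, 70, 145],
    "B": [0, 70, 146],  # at least as good as A everywhere, better on two metrics
    "C": [0, 40, 149],  # worse than B on fish, better on revenue
}

remaining = non_dominated(alternatives, directions)
print(remaining)
```

Here alternative A is screened out because B matches or beats it on every metric, while B and C both remain because choosing between them requires a genuine trade-off. Adding or removing a metric can change the result, which is why an incomplete consequence table risks dropping an alternative prematurely.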

Refining Consequence Metrics

Entries in a consequence table should reflect the best available knowledge and science. An early consideration of trade-offs in an iterative deliberative process, however, can focus attention on the greatest additional data needs.

Early in the process, before substantial investigation into all the performance metrics and consequences, some metrics will likely be based on judgment. These might have been represented in the consequence table with “yes-no” or “high-low” entries. If there are comparisons between alternatives where most consequences point to a dominant relationship except for one or a few judgmental entries, then the possibility of refining those entries must be considered. Even a modest refinement, such as replacing a “high-low” scale with “high-medium-low,” may allow the alternatives to be ranked. If so, there may be no reason to seek further refinement of the ordinal scale. Otherwise, the process must iterate back to the development of more substantive and quantified metrics to better inform what is being gained or given up in the trade-off. Even well-researched and quantified metrics may need to be refined if deeper questions regarding those metrics surface during trade-off discussions. Again, this would lead the group to iterate back to refining the metrics and reestimating the consequences.

It is important that any revision of metrics and consequences be entirely transparent and based on either newly available data or a more intensive analysis of existing data. Revision of prior judgmental metrics is generally to be avoided, as it may be viewed as self-serving or strategic on the part of the agency or the individual responsible for the judgment. This can quickly lead to an erosion of trust among the participants.

Eliminating Uninfluential Criteria

Some consequences brought forward by the decision participants may turn out to be of little importance for the decision at hand. The MSIC value (defined earlier) may be an indication of whether a particular metric can be ignored in decision making. Note the important process implications here: a decision objective and metric were put into the consequence table because one or more of the decision participants believed they were important factors when comparing alternatives. A careful consideration of the underlying impacts, however, could reveal that those factors, while still important to some participants in a general way, can be set aside because they no longer help distinguish among alternatives. An example of this is in the colored consequence table (see Table 8.1). Because the consequences for power generation are roughly equivalent across all alternatives in that table, the group can conclude that this factor is not relevant to the decision. It must be emphasized that this is not a value judgment, but rather a technical one. Yet this technical judgment can reframe the problem in a powerful way, perhaps even helping to unlock a potentially deadlocked set of deliberations.
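The screening described above amounts to checking whether the spread of a metric across all alternatives falls within its MSIC. The sketch below applies that check to three rows of Table 8.1. The MSIC values are hypothetical and were chosen for illustration only, because the values used in the original study are not reported here.

```python
def uninfluential(rows, msic):
    """Return the metrics whose spread across alternatives is within their MSIC."""
    return [name for name, values in rows.items()
            if max(values) - min(values) <= msic[name]]

# Values for Alt 1, 2, 3, 4, 5, and 8, taken from Table 8.1.
rows = {
    "Flood frequency @Seton Reservoir": [6, 6, 6, 6, 6, 6],
    "Fish index @Carpenter Reservoir": [69, 70, 41, 41, 29, 29],
    "Power revenues": [141, 145, 146, 149, 144, 145],
}

# Hypothetical MSIC values, in the units of each metric.
ASSUMED_MSIC = {
    "Flood frequency @Seton Reservoir": 1,
    "Fish index @Carpenter Reservoir": 5,
    "Power revenues": 10,
}

set_aside = uninfluential(rows, ASSUMED_MSIC)
print(set_aside)
```

Under these assumed precisions, flood frequency at Seton Reservoir and power revenues do not distinguish among the alternatives, while the fish index at Carpenter Reservoir does. The result depends entirely on the agreed MSIC values, which is why the precision discussion needs to happen early and on technical grounds.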

There is also an important communication element here. If this issue was important to the decision participants, then it is likely that it will be important to those outside the process as well. Communications regarding the process and its conclusions will need to include a careful justification for excluding the metric: explanation as to why it was initially included and what changes occurred that led to its later removal.

Monetizing Metrics

If after eliminating alternatives through the steps above it is still not possible to unambiguously rank alternatives, the next step could be to monetize certain impacts. In the example consequence table (see Table 8.1), only the power benefits are defined in monetary units. Some metrics in the table may not be amenable to monetization, but this is not always the case. For example, there are well-established methods for monetizing flood damage or recreation experience, and doing so may be a helpful way to simplify the consequence table and to clarify the comparison of alternatives to allow ranking.

Monetization methods include market-based valuation (e.g., travel cost analysis, hedonic price analysis), nonmarket valuation (e.g., contingent valuation, conjoint analysis), and benefit transfers. Yet, any valuation of this type should be performed carefully using the best available methods and data. Valuation techniques inject uncertainty into a process that is already characterized by considerable uncertainty. The analyst must be aware of the trade-off between additional information and increased uncertainty.

The move to monetize some impacts is suggested late in the decision process. Retaining the consequences in their natural units as long as possible is important in a decision process such as PrOACT for two reasons. First, monetization of consequences can be controversial, and doing so before mutual trust has developed may undermine the legitimacy of the decision process. Second, leaving consequences in their natural units until consideration of trade-offs increases the opportunity to align understanding of consequences and participants’ decision objectives. This can lead to the development of new and creative alternatives. Aggregating consequences into a monetized sum of costs or benefits too early may derail this learning opportunity (Gregory et al., 2012).

Comparing and Combining Objectives

Recall that the goal of this exercise is not to find a single best solution but a solution that is widely acceptable to the decision participants. A collaborative participatory process that includes building a consequence table and applies a structured approach to explore value trade-offs can often identify such a solution. Some have likened this final step to multiparty negotiation (Bourget, 2011). New insights may be used to refine alternatives and generate large gains to one set of interests with only small losses to other interests. Presumably, participants will agree to an outcome if there are greater benefits to doing so than not agreeing. Successful application of these ranking and weighting methods can occur only when a high level of trust exists—whether present at the outset of the process or as a result of the process.

In certain cases, it is possible that a thorough qualitative examination of trade-offs does not generate an obvious mutually acceptable solution. There still may remain a large number of alternatives or trade-offs across a large number of objectives with no single dominant alternative. In these cases, a more structured method may allow participants to think more rigorously about the relationships between their objectives and the alternatives. A structured elicitation and application of decision weights built on multiple attribute utility theory (MAUT), for example, swing weighting,^1 has been used in other applications to break a deliberation impasse and reach a mutually agreeable solution (Keeney, 1988). While the swing weighting approach is built on and consistent with the theory of consumer choice as found in any microeconomics textbook, it is not an optimization process that generates the best answer based on objectively defined decision weights. Rather, it is a values-insight exercise that elicits decision “weights” from each individual. There is no need to reach agreement on these decision weights; each individual’s weights give rise to an individual’s rank ordering of the remaining alternatives. Based on these, the search for a commonly supported alternative can continue. Table 8.3 presents the same information shown in Table 8.1, but it highlights the range (from worst to best) across which the decision objectives are affected by the alternatives under consideration. Presented with the information in this way, decision participants may move away from a positional approach for comparing alternatives and instead focus on their underlying interests. As an example, the participants can see that the effect of the various alternatives is that flooding in the lower Bridge River may range from zero to one day per year. This information could make it easier to prioritize among their decision objectives.

^1 Swing weighting is one of several methods for deriving weights for objectives, allowing performance against multiple objectives to be aggregated and management alternatives to be ranked. Weights for each objective are derived based on the range of worst to best outcome (the “swing”) across the alternatives. Following a process of normalization and determination of the relative value of the swings, weights are assigned to the objectives, where the objective with the potential to produce the greatest increase in overall value receives the largest weight (Belton and Stewart, 2002).

Individuals’ decision weights are tied to their personal values. As such, there is no “correct” answer, and weights are likely to vary from person to person. Guidance can help people construct their values around these difficult and novel considerations. For instance, when considering how to trade off X million dollars against some benefit (say, reduction of flood risk, or improvement of riparian habitat), a useful threshold question to ask is whether similar benefits can be achieved at a lesser cost through action in other parts of the system. A useful comparison question to ask is how other people value similar trade-offs. A useful substitute question to ask might be regarding other benefits that could be “purchased” with that same amount of money, either through different management options within the Toutle River system or through considering hypothetical options across society writ large.
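The mechanics of swing weighting for a single participant can be sketched as follows. The consequence values and worst-to-best ranges are taken from Tables 8.1 and 8.3 for three of the metrics; the swing ratings are hypothetical, and the linear normalization and additive scoring are simplifying assumptions rather than a description of how the original study was conducted.

```python
def normalize(value, worst, best):
    """Map a raw consequence onto 0 (worst) to 1 (best); works for H and L metrics."""
    return (value - worst) / (best - worst)

def swing_weights(ratings):
    """Convert a participant's swing ratings into weights that sum to 1."""
    total = sum(ratings.values())
    return {metric: rating / total for metric, rating in ratings.items()}

# (worst, best) from Table 8.3. Metrics with no swing (worst equals best)
# are left out because they cannot distinguish among alternatives.
ranges = {"fish_lbr": (10, 100), "solids_seton": (108, 77), "power": (141, 149)}

# Consequences from Table 8.1.
table = {
    "Alt 1": {"fish_lbr": 100, "solids_seton": 94, "power": 141},
    "Alt 2": {"fish_lbr": 100, "solids_seton": 89, "power": 145},
    "Alt 3": {"fish_lbr": 100, "solids_seton": 77, "power": 146},
    "Alt 4": {"fish_lbr": 90, "solids_seton": 84, "power": 149},
    "Alt 5": {"fish_lbr": 25, "solids_seton": 108, "power": 144},
    "Alt 8": {"fish_lbr": 10, "solids_seton": 78, "power": 145},
}

# Hypothetical ratings from one participant: the most valued swing gets 100.
ratings = {"fish_lbr": 100, "solids_seton": 50, "power": 50}
weights = swing_weights(ratings)

scores = {
    alt: sum(weights[m] * normalize(v, *ranges[m]) for m, v in row.items())
    for alt, row in table.items()
}
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)
```

A different participant would supply different ratings and might obtain a different ranking. Comparing those individual rankings, rather than forcing agreement on the weights, is what allows the search for a commonly supported alternative to continue.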

If uncertainty is an important component of understanding trade-offs, it will also need to be addressed in this step. This could be done by translating uncertain consequences into their certainty equivalents: for example, by using approaches described by Gregory and Keeney (2017), Clemen (1996), or Runge and others (2015). A unique set of consequence tables like Table 8.1 would be developed for each decision participant, and the swing weighting could be carried out for each individual to help rank alternatives. As with all these tools, it is necessary to maintain communications with all decision participants to explicitly test whether the extra process burden is worth the insights it could reveal.

It is important for participants to know that these valuation tools do not supplant decision making; rather, they help participants gain additional insights into their decision objectives that otherwise might be obscured by the multiple-objective decision making in a complex management system. Hobbs and Horn (1997) demonstrated how a divergence between “gut” level choices and those derived through swing weighting can be a source of additional value-insight. Building this exploratory step into the process could enhance the legitimacy of surprising swing weighting outcomes should they occur.

While the decision framework is described in a linear way, it can be iterative when insights gained at one step lead to revisiting previous steps. The need to revisit previous steps might become apparent when participants recognize data gaps as they try to balance consequences across management alternatives, when they find that certain metrics need to be refined before consequences can be prioritized, or when they conclude that more creative thinking needs to be put into developing alternatives. Being able to answer the questions found in Box 8.3 positively is a good indication that the process is sufficiently mature. Given that decision processes have to be completed in a finite time, however, it is important to have a common understanding of when deliberation can continue and when a final drive for a solution should be made. It is at this point that decision rules developed early in the decision process become important.

TABLE 8.3 Consequences, Swing Weighted from Worst to Best

| Objective | Sub-Objective | Performance Measure | Units | Dir | Worst | Best | Rank | Weight |
|---|---|---|---|---|---|---|---|---|
| Minimize Flooding | @Lower Bridge River | Flood frequency | #days/yr | L | 1 | 0 | | |
| Minimize Flooding | @Seton Reservoir | Flood frequency | #days/yr | L | 6 | 6 | | |
| Maximize Fish Abundance | @Carpenter Reservoir | Fish index | 1-100 | H | 29 | 70 | | |
| Maximize Fish Abundance | @Downton Reservoir | Fish index | 1-100 | H | 42 | 71 | | |
| Maximize Fish Abundance | @Lower Bridge River | Fish index | 1-100 | H | 10 | 100 | | |
| Maximize Fish Abundance | @Seton Reservoir | Fish index | 1-100 | H | 10 | 66 | | |
| Maximize Water Quality | @Seton Reservoir | Water suspended solids | Tonnes/yr | L | 108 | 77 | | |
| Maximize Vegetated Area | @Downton Reservoir | Weighted area | Hectares | H | 223 | 322 | | |
| Maximize Vegetated Area | @Carpenter Reservoir | Weighted area | Hectares | H | 520 | 759 | | |
| Maximize Power Benefits | Maximize Power Revenues | Revenues | Levelized $M/yr | H | 141 | 149 | | |

NOTE: Dir = preferred direction of the performance measure (H = higher is better; L = lower is better).

SOURCE: Gregory et al., 2012.
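The arithmetic behind swing weighting can be sketched in a few lines of Python. The worst and best values below are taken from four rows of Table 8.3; the swing points a participant assigns and the two alternatives being scored are hypothetical and serve only to illustrate the normalization and weighting steps. The flood frequency measure at Seton Reservoir is omitted because its worst and best values are identical, so it has no swing and cannot help distinguish alternatives.

```python
# (worst, best) ranges from Table 8.3. All other numbers are HYPOTHETICAL.
RANGES = {
    "flood_days_lower_bridge": (1.0, 0.0),      # lower is better
    "fish_index_lower_bridge": (10.0, 100.0),   # higher is better
    "suspended_solids_seton": (108.0, 77.0),    # lower is better
    "power_revenue": (141.0, 149.0),            # higher is better
}

# Hypothetical swing ratings from one participant: the swing judged most
# valuable gets 100 points and the others are rated relative to it.
SWING_POINTS = {
    "fish_index_lower_bridge": 100,
    "suspended_solids_seton": 50,
    "flood_days_lower_bridge": 30,
    "power_revenue": 20,
}


def swing_weights(points):
    """Normalize swing points so the weights sum to 1."""
    total = sum(points.values())
    return {name: pts / total for name, pts in points.items()}


def normalize(value, worst, best):
    """Map a raw value to 0 (worst) .. 1 (best); works for either direction."""
    if best == worst:
        raise ValueError("measure has no swing across the alternatives")
    return (value - worst) / (best - worst)


def weighted_score(alternative, weights):
    """Weighted sum of normalized performance across all measures."""
    return sum(
        weights[name] * normalize(alternative[name], *RANGES[name])
        for name in weights
    )


weights = swing_weights(SWING_POINTS)

# Two hypothetical alternatives described on the same four measures.
alt_a = {"flood_days_lower_bridge": 0.0, "fish_index_lower_bridge": 55.0,
         "suspended_solids_seton": 92.5, "power_revenue": 149.0}
alt_b = {"flood_days_lower_bridge": 1.0, "fish_index_lower_bridge": 100.0,
         "suspended_solids_seton": 77.0, "power_revenue": 141.0}

score_a = weighted_score(alt_a, weights)
score_b = weighted_score(alt_b, weights)
print(f"A: {score_a:.3f}  B: {score_b:.3f}")
```

Repeating the calculation with each participant's own swing points gives a per-participant ranking that can be compared with that participant's intuitive choice.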

Clarifying Rules of Decision Making

Clarifying the rules of decision making in advance is an important early process design step (NRC, 2008). Deliberation until consensus is reached is sometimes stated as a decision rule, but this may give too much sway to holdouts or extreme views as discussions move toward closure. A decision rule might be adopted that does not allow a single dissenting view to block closure but does allow two or more to do so. An alternative approach is to have consensus as a goal, but not a requirement. In the absence of consensus, participants (agencies and nonagency participants) can fall back to smaller coalitions (or to themselves in the most extreme example) and the statutory powers they hold. Failing and others (2012) have found that this decision rule can be useful for contentious, multiparty water management issues. In their case, participants were asked to rate the final alternatives via the following scale:

- Endorse (participant fully supports the alternative)

- Accept (the alternative meets participant’s minimum needs, but with the following reservations…)

- Block (the alternative does not meet the participant’s minimum needs)

Failing and others defined “consensus” as an alternative that was not blocked by any participant. Agencies, in particular, may still block an alternative as required by their individual regulatory or legislated roles. Short of blocking an alternative, however, this format allows participants to state their concerns and reservations without standing in the way of reaching an agreed-upon outcome. In fact, these formally stated reservations may form the basis of discussion regarding monitoring, adaptive management triggers, and the conditions of future reviews.
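The rating scale and the two decision rules discussed above can be expressed as a short Python sketch. The participant names and their ratings are hypothetical, and the `blocks_to_stop` parameter is an illustrative device: a value of 1 reproduces the definition of consensus as an alternative blocked by no participant, while a value of 2 represents a rule under which a single dissenting view cannot block closure.

```python
from enum import Enum


class Rating(Enum):
    ENDORSE = "endorse"  # participant fully supports the alternative
    ACCEPT = "accept"    # meets minimum needs, with stated reservations
    BLOCK = "block"      # does not meet the participant's minimum needs


def count_blocks(ratings):
    """Number of participants who blocked the alternative."""
    return sum(1 for rating in ratings.values() if rating is Rating.BLOCK)


def has_consensus(ratings, blocks_to_stop=1):
    """True if fewer than `blocks_to_stop` participants blocked."""
    return count_blocks(ratings) < blocks_to_stop


def with_reservations(ratings):
    """Participants who accepted with reservations, in alphabetical order."""
    return sorted(name for name, r in ratings.items() if r is Rating.ACCEPT)


# Hypothetical ratings for one final alternative.
ratings = {
    "agency_a": Rating.ENDORSE,
    "agency_b": Rating.ACCEPT,
    "community_group": Rating.ACCEPT,
    "utility": Rating.BLOCK,
}

print(has_consensus(ratings))                    # no-block rule
print(has_consensus(ratings, blocks_to_stop=2))  # single dissent cannot block
print(with_reservations(ratings))
```

The list of participants who accepted with reservations is the natural starting point for the discussion of monitoring, adaptive management triggers, and review conditions.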

COMPATIBILITY OF A PROACT-LIKE PROCESS WITH AGENCY PROCESSES

Determining whether the decision framework recommended in this report is better than another framework, or no framework at all, requires that the framework be decomposed into its overarching elements of organized participation and the integration of science. An examination of public participation in environmental assessment and decision making (NRC, 2008) found empirical support for application of certain basic principles. With respect to organizing participation, inclusiveness of participation, collaborative problem formulation and problem design, transparency of processes, and good-faith communication were all found to be important. Whereas the committee recommends more inclusiveness in participation than has occurred in the past in this region, it also suggests there is a practical upper limit to inclusiveness. This suggestion expands beyond the principles as described by the NRC (2008). With respect to integrating science into the process, the NRC (2008) found support for iteration between analysis and broadly based deliberation dependent on the availability of decision-relevant information; explicit attention to facts and values; explicitness about analytic assumptions and uncertainties; independent review; and reconsideration of past conclusions. The committee’s recommendations are consistent with these principles.

Whether a process similar to PrOACT is better than some alternative for structuring the analytic content of public participation is difficult to assess. The decision process described in this report draws on real-world experience as well as current understanding of how people make decisions in complex and unfamiliar situations. It is designed to avoid common errors in decision making such as overlooking relevant facts, failing to properly communicate analytical results to all participants, or failing to account for the role of participants’ values in interpreting analytical results. A PrOACT-like process recognizes the potential for such errors and provides ways to avoid them.

A second line of evidence could be whether a PrOACT-like process outperforms other types of decision frameworks. There is some experimental evidence to show that a value-focused approach for managing environmental risks (in this case, water management decisions) leads to better outcomes than do approaches that simply compare alternatives without a PrOACT-like framework (Arvai et al., 2001).

There is broad alignment among the steps laid out in a PrOACT-like process, those in a USACE planning process, and those followed in preparing a document to comply with the National Environmental Policy Act, with perhaps a bit more granularity given by the five steps of the PrOACT framework. Given these similarities, following the recommended decision framework will not preclude also satisfying individual agency planning processes. Moreover, the information generated through the early steps of a PrOACT-like process can be used to support very dissimilar approaches. For instance, the information contained in a consequence table would also be collected at an interim step through a traditional benefit-cost analysis, where the impacts of the different alternatives would be tracked to the end points of interest.
