3

Systems and Collaborations

In his opening remarks for the workshop (see Chapter 1), Ted Clark, CENTRA Technology, Inc., described the Intelligence Community (IC) as part of a broad system in which analysts gather, manage, and analyze massive amounts of data for decision making; collaborate and communicate with colleagues; and interact with technology in many ways. The presentations in session 2 of the workshop, moderated by Jonathan Moreno, University of Pennsylvania, considered the state of the science on current challenges and opportunities, as well as research needed, for the IC to build high-functioning systems and teams, use technology to augment human capabilities effectively, and ensure successful communication.

INTEGRATING THE WORKFORCE INTO THE NATIONAL SECURITY SYSTEM

Nancy Cooke, Arizona State University, began by defining human–systems integration as “an approach in which human capabilities and limitations across a variety of dimensions are considered in the context of a very dynamical system of people, technology, environments, and tasks, and with the ultimate goal of achieving system resilience.” She identified human elements in this large system as including training, personnel, selection, survivability, human factors, manpower, and the interconnections among those elements. She explained that the human–systems approach differs from approaches that consider human factors, such as human–machine interactions, because it considers human factors to be only one dimension of a broader system. She added that the tools used to measure human factors are more suited to smaller systems, whereas a broader array of tools is needed to understand large sociotechnical systems integration.

To illustrate these differences, Cooke explained that human factors might examine a single human–machine interface, but one could also examine a system of many humans and many machines, or a system of systems as might be found in a nuclear control room or shipboard command and control. Similarly, she said, examining the interface with a single medical device differs from examining the coordination of patient care in a hospital.

Human–systems integration emphasizes multidisciplinary and team research, Cooke continued, including such disciplines as cognitive psychology, industrial engineering, systems engineering, and other fields, depending on the particular setting. Most important, she said, experts are brought together with the users in the system to learn from them. Together, experts and users seek to understand the entire system of interest.

Failure to consider whole systems can lead to unintended consequences, noted Cooke, a risk that increases as the complexity of the system increases. For example, she said, suppose that one person controls an unmanned aerial vehicle collecting sensor data. That system could be analyzed to determine how this person could control several unmanned aerial systems, and how these data could be fed into a central point where others were examining the sensor data for changes (e.g., looking for a car to move on a screen). Increasing the load on these central analysts could mean that they were being tasked with examining more data than they were able to process. As another example, Cooke cited systems she has seen that enabled observation of potential threats but failed to consider how to communicate those threats to people in harm’s way. She then used the Sago mine disaster to illustrate a case in which disaster might have been avoided had even one of the human–systems integration issues been resolved. She described how the miners followed their training and instructions for how to be located in an emergency. However, the procedures they followed were designed to trigger a seismic location device. Unfortunately, Cooke explained, they were trained in and implemented procedures that assumed such a device had been employed, but the device was found to be unwieldy, cumbersome, and time consuming to deploy. Therefore, she said, although the miners had been trained in a protocol that involved relying on the device, no one ever really intended that the device would be available, which led to tragic results.

Cooke continued by arguing that human–systems integration is useful not only for avoiding negative consequences but also for making systems more effective and efficient. Considering the system early in the process can save money, she suggested. As an example, she described an airport incident command center on which she was asked to consult because responders were receiving poor scores on mass casualty exercises from the Federal Aviation Administration and the National Transportation Safety Board. Cooke and her colleagues identified a number of issues related to unnecessary equipment and communication. She observed that if not well-suited to the task that needs to be accomplished, costly equipment can be a hindrance rather than a help. Focusing on improving the technology can be the wrong solution, she added, if the best solution does not involve the technology at all.

Cooke suggested that applying human–systems integration approaches to meeting the needs of the IC will require additional collaboration with those who are working in this field. Based on her current understanding, however, there is a need to integrate selection, training, and work requirements across the whole enterprise instead of considering each element in isolation. She observed further that technology often is imposed on users without sufficient consideration of whether it will be used or useful. She added that tools may be tailored for individual analysts, but the tools’ effectiveness or appropriateness in working together in a larger system may not receive sufficient scrutiny. She suggested, for example, that automation can be seen as a way to overcome human limitations in analysis, but “can actually make the task much worse. It can lead to automation complacency, misuse, and distrust of the automation. . . . It has to be very carefully integrated into the system with all of the human capabilities and limitations in mind.”

Cooke also sees multidisciplinary teaming and communication as important to intelligence analysis. She argued that collaboration tools, especially those that help break down stovepipes, could help meet these needs. She added that further consideration of the classification of information will be necessary. A human–systems integration approach, she suggested, could also address questions about how the selection of candidates interacts with training and the technologies analysts use, or how teamwork interacts with issues related to classification, workload, and technologies. According to Cooke, a systems approach in this context would bring together engineers, human–systems integration specialists, and users—what could be termed a “golden triad” of design.

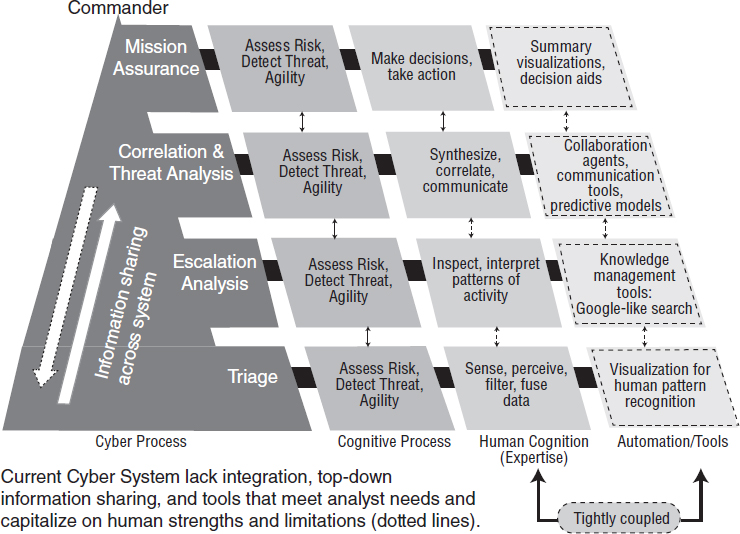

Cooke illustrated this approach with an example from her work on cybersecurity analysis for the Army Research Office. She explained that the work involved a series of steps toward understanding the system that was in place, beginning with a cognitive task analysis that involved interviews, observations, surveys, and the development of models. Next, after better understanding the system, she and her team tested empirically the hypotheses they had developed.1 They saw cybersecurity as a sociotechnical system comprising many people and a large amount of technology, as

___________________

1 Rajivan, P., and Cooke, N.J. (in press). Information Pooling Bias in Collaborative Security Incident Correlation Analysis. E-pub in advance of print. Available: http://journals.sagepub.com/doi/pdf/10.1177/0018720818769249 [April 2018].

NOTE: Figure is unpublished.

SOURCE: Presented by Nancy Cooke at the workshop.

shown in Figure 3-1. Human operators on the defense side had different roles and levels in a hierarchy, and varied in terms of the information, skills, and knowledge they possessed. The tools and algorithms they had varied as well, based on the analyses and visualizations needed for different levels of decision making, said Cooke.

Ideally, Cooke continued, processes are carried out by humans or tools based on which can perform them more effectively. However, she stated, this often does not occur. Instead, she said, in this instance, “the cyber analysts that we talked to said stop it with the tools, stop giving me the tools, I cannot use them, they get in the way, they just take more time for me to learn, I don’t want the tools.” Her team’s analyses also revealed that little information was being transferred from the top of the hierarchy to the bottom; rather, analysts pored over an enormous amount of information without any contextual guidance from leadership.

Cooke explained further that representing the system as a social network or as a network of tasks helped represent complexity and the ways different parts of the system impacted other parts. She added that industry tends to be more heterogeneous and appear more team-like relative to the military, which has a more hierarchical structure.

Cooke also described how empirical studies in simulated environments could be useful for the IC. She has used this approach, for example, to compare working as a team and working as a group. She explained that a group may simply be people placed in a setting together to do a job, whereas a team is a special kind of group in which each member brings different knowledge, skills, and abilities to the work. Furthermore, she observed, team members work interdependently and communicate with each other deliberately.

Cooke went on to explain that the cognitive task analysis in the cybersecurity setting revealed little collaboration and minimal role differentiation. In addition, there was a bottom-up flow of information that appeared to emerge from the in-place reward structures for possessing interesting information. As a result, Cooke and her colleagues tested hypotheses about reward structures that are conducive to better interactions and teamwork and ultimately to higher signal detection. They created a simulation in which half of the groups were encouraged to work as a team after receiving training in various roles, and the other half were not. The two sets of groups worked side by side and could talk if they wanted to. The groups completed triage analysis, examining alerts and determining which alerts were real. The groups also competed with each other to earn bags of candy. Group members could earn candy as individuals by finding the most alerts. According to Cooke, the results of this simulation showed that working as a team when alerts were novel was important. Groups that worked as teams detected more true alerts than groups that did not, she reported. In addition, she and her colleagues found that groups that shared less information perceived more difficulty and time pressure with the task relative to high sharers.

Cooke asserted that if human–systems integration factors are considered early in the design of a system, the benefits to the IC can be substantial. The approach, she elaborated, can take interdependencies within the system into account and align technology with the IC’s needs and capabilities. Ultimately, she argued, this approach can improve effectiveness and national security.

During the discussion following Cooke’s presentation, several participants noted the need to help analysts identify those with whom they need to connect to do their work and how they can obtain specific information they need “just in time.” Kara Hall, National Cancer Institute, suggested that recommender systems or other tools from research, such as Harvard Catalyst, offer ways to identify networks of different researchers who are working on a topic. Such tools, she added, are more effective the more people participate. Another participant suggested that artificial intelligence may offer greater ability to analyze text and ultimately to connect people working on similar topics. Stephen Fiore, University of Central Florida, stated that this would be especially helpful for “connecting the dots” and crossing disciplinary boundaries. Another participant observed that such tools could also help the IC make use of information gathered in the past that is currently difficult to access.

COLLABORATIVE KNOWLEDGE BUILDING: CYBERNETIC TEAM COGNITION

In his presentation, Fiore presented his views on team cognition, including the problem with human cognition and building knowledge from multiple information sources, the theory behind team cognition, and a roadmap for a solution to the problem as it relates to intelligence analysis. He argued that an unnecessary and problematic divide exists between scientists and engineers, and that this relationship will need to improve if the technologies that will be useful to the IC are to be developed. These technologies are going to be necessary, he explained, because of the central paradox of human cognition and building knowledge: that humanity is building knowledge at an exponential rate, and the rapid pace of technology development is providing people with ubiquitous access to information and knowledge,2,3 but human cognitive capabilities are evolving at a much slower rate. Therefore, he asserted, human ability to make use of knowledge and technology is significantly limited. He described a “burden of knowledge”—too much knowledge within particular disciplines—that makes it impossible for a single person to understand and use all the knowledge available across many areas. He believes these human limitations can be alleviated through technological or biological enhancements or cybernetics.

Fiore explained that although cybernetics is a term that “means different things to different people,” he would focus in his presentation on a particular adaptation of cybernetics: the symbiotic blend of humans and technology, such as brain implants, brain–computer interfaces, brain stimulation, and chemical enhancements. “The only way to accelerate the biology of human evolution,” he asserted, “is to merge man and machine. . . . This is how we [can better] address the challenges that we are facing in the 21st century.” In his view, this is the only way humans will be able to make use of all the knowledge being generated. He stressed, however, that as with the human–systems integration approach, the development of technology needs to be theoretically grounded in an understanding of cognition and collaboration and the way they take place in the broader work environment. He regards this as a way to help prevent the common occurrence of the introduction of new technologies for intelligence analysis that fail to be adopted.

___________________

2 Jones, B.F. (2009). The burden of knowledge and the “death of the renaissance man”: Is innovation getting harder? The Review of Economic Studies, 76(1), 283–317.

3 Jones, O.D., Wagner, A.D., Faigman, D.L., and Raichle, M.E. (2013). Neuroscientists in court. Nature Reviews Neuroscience, 14(10), 730–736.

Fiore went on to describe a need for technoscience, or the convergence of technology development and science, where engineers and scientists work in increasingly indistinguishable ways and in an iterative fashion to learn from and inform one another. He added that cognitive scientists now study humans working with technology in new fields, using such methods as cognitive work analysis and cognitive systems engineering, enabling an examination of how cognition changes with the use of machines. He suggested that research and development adopting these approaches is more likely to produce effective technologies for intelligence analysis.

Fiore then turned to macrocognition in teams, which he identified as one approach for bringing scientists, engineers, and users together to develop these human-enhancing technologies for increasing the use of knowledge. He noted that key dimensions of macrocognition were outlined more than a decade ago by Erik Hollnagel (2002)4 and further described by Morrow and Fiore (2013)5:

- Across natural and artificial cognitive systems, the process and product of cognition will be distributed.

- Cognition is not self-contained and finite, but a continuance of activity.

- Cognition is contextually embedded within a social environment.

- Cognitive activity is not stagnant, but dynamic.

- Artifacts aid in nearly every cognitive action.

Fiore explained that the model of macrocognition in teams builds on this understanding to focus on cognition in the face of complexity in a team context. His research has been aimed at understanding how teams build knowledge in the service of solving complex problems. When a team works together to solve a problem, he elaborated, each member contributes his or her own thinking, and the members then work together to discuss their ideas, moving from individual to group cognition.

___________________

4 Hollnagel, E. (2002). Cognition as control: A pragmatic approach to the modeling of joint cognitive systems. Theoretical Issues in Ergonomics Science, 2, 309–315. doi: 10.1080/14639220110104934.

5 Morrow, P.B., and Fiore, S.M. (2013). Team cognition: Coordination across individuals and machines. In J.D. Lee and A. Kirlik (Eds.), The Oxford Handbook of Cognitive Engineering (p. 206). New York: Oxford University Press.

Through an interdependent and iterative process, stated Fiore, the group also moves from having data to having information to having knowledge, a process that involves individual processes and collaboration and communication among the members. He clarified that knowledge building involves organizing and synthesizing information in a particular context. Context is essential to understanding complex problems, he emphasized, and inextricably linked to transforming data to knowledge. He explained that synthesis can include creating artifacts, such as graphs; tables that aggregate or organize data; or integration of information to create a broader understanding. This synthesis produces knowledge, he said, or a solution when applied to a particular problem-solving context.

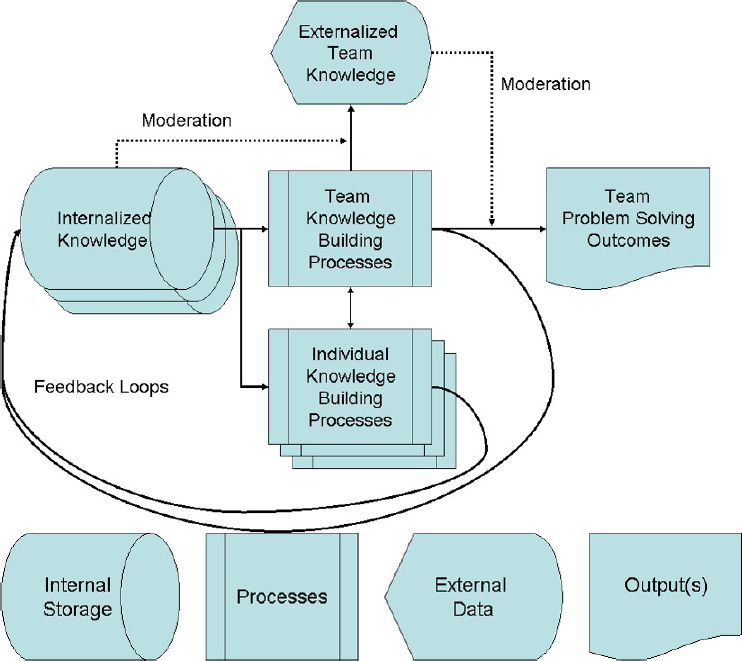

Fiore then showed a model of macrocognition in teams that he and his colleagues developed (see Figure 3-2).6,7 This model, he explained, incorporates multiple levels of individual cognitive processes, including those that involve interactions with technology; group cognitive processes and communication; and how aspects of how these processes move through different problem-solving phases over time. He argued that each of the elements in this model can become a leverage point for technology development. Uniting these elements, then, can provide a roadmap for cybernetics research and development, enabling the development of hybrid human–machine technologies to support team tasks and complex collaborative problem solving.

In Fiore’s view, macrocognitive cybernetics can provide “a way forward for intelligence analysts” because it can provide guidance on how to work more effectively with the vast amounts of data and information analysts must understand and apply, including through human–machine teams. Both he and Clark emphasized that increasing amounts of data from satellites, sensors, and social media pose a challenge for analysts to manage, validate, and weigh. Fiore stated that in the next 5 years, advances in research and development on extending team cognitive processes with machine artifacts will be ready for application. In the next 10 years, he believes that augmented cognition through improved collaboration with machines will become possible through advances in artificial intelligence and computational linguistics.

Fiore then described several facets of a research roadmap for the use of cybernetics to augment team cognition in intelligence analysis. He asserted that technologies that can help analysts offload information and free up

___________________

6 Fiore, S.M., Rosen, M.A., Smith-Jentsch, K.A., Salas, E., Letsky, M., and Warner, N. (2010). Toward an understanding of macrocognition in teams: Predicting processes in complex collaborative contexts. Human Factors, 52(2), 203–224.

7 Fiore, S.M., Smith-Jentsch, K.A., Salas, E., Warner, N., and Letsky, M. (2010). Toward an understanding of macrocognition in teams: Developing and defining complex collaborative processes and products. Theoretical Issues in Ergonomic Science, 11(4), 250–271.

SOURCE: Fiore et al. (2010, Fig. 1). Presented by Stephen Fiore at the workshop.

analysts’ cognitive resources for knowledge building are on the short-term horizon, citing the example of the construction of visualizations of data and other artifacts (e.g., cognitive artifacts, distributed cognition, external representations) to improve the ability to express and represent cognition outside of oneself. Such approaches, he argued, could enhance the effectiveness of intelligence analysis. “These are important collaborative processes,” he stated. “If you have ever been in a good meeting with smart people and you are engaged and you are curious, invariably, someone goes to the whiteboard. That is where the rubber hits the road. That is where knowledge co-construction truly begins to emerge in a team. That is what we need to take seriously, and study, if we want to create technologies that can augment team cognition and create a cybernetic future. We need a seamless blend between human and machine.”

Fiore noted that new technology, such as NetDraw,8 helps teams develop visualization tools to overcome their biases and represent which of their members possess needed knowledge, thereby improving decision making.9 He cited other advances that help with argument representation, representing intellectual conflict, interactions, and deliberation. He also noted that other technologies can serve as memory aids by facilitating the offloading of information and freeing up cognitive resources for other tasks.

According to Fiore, a third area that should be pursued for cybernetic teams is the distinction between taskwork and teamwork. He pointed out that traditionally, the knowledge, skills, and abilities needed to complete tasks are considered separately from the attitudes and skills needed to operate in a team, such as managing conflict or interpersonal skills. He suggested that both taskwork and teamwork should be studied to develop and integrate technologies that can augment these parts of team cognition. Tools exist, he observed, to help with such tasks as analyzing data, interpreting information, solving problems, and making decisions in support of the analyst’s task. What is needed, he argued, is integration of these tools with technologies that can also help support teamwork by providing a way to understand workflow, dynamic planning, and team member roles. He cited Base Camp as an example of a tool that attempts to attend to both teams and tasks.

Fiore noted that he and other researchers in this domain have examined and identified ways to quantify the use and transmission of internalized team knowledge, individual knowledge gathering and building, knowledge sharing, and information exchange across teams to evaluate alternatives.10,11 He described as an example studies of National Aeronautics and Space Administration (NASA) mission control teams that identified the qualitative problem-solving processes involved in effective collaborative problem solving,12 as well as the quantitative problem-solving processes in which teams engage when solving complex problems.13 In his view, new research is needed to connect concepts in team research emerging from the organizational sciences with work in other disciplines, such as cognitive and computer sciences, to pursue the creation of cybernetic teams capable of the kind of complex cognition found in intelligence analysis.

___________________

8 Available: https://sites.google.com/site/netdrawsoftware/home [March 2018].

9 Balakrishnan, A.D., Fussell, S.R., and Kiesler, S. (2008, April). Do visualizations improve synchronous remote collaboration? Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, 1227–1236. Available: http://sfussell.hci.cornell.edu/pubs/Manuscripts/Balakrishnan-CHI08.pdf [March 2018].

10 Kitchin, J., and Baber, C. (2017). The dynamics of distributed situation awareness. In Proceedings of the Human Factors and Ergonomics Society Annual Meeting (vol. 61, pp. 277–281). Los Angeles, CA: SAGE.

11 Seeber, I., Maier, R., and Weber, B. (2013). Macrocognition in collaboration: Analyzing processes of team knowledge building with CoPrA. Group Decision and Negotiation, 1–28.

12 Fiore, S.M., Wiltshire, T.J., Oglesby, J.M., O’Keefe, W.S., and Salas, E. (2014). Complex collaborative problem solving in mission control. Aviation, Space, and Environmental Medicine, 85(4), 456–461.

BUILDING A TEAM: THE SCIENCE OF TEAM SCIENCE

Hall began by explaining that her work has focused on helping to investigate the effectiveness of team science across all areas of science, with a particular emphasis on advancing cancer research. She noted that despite decades of research on teams (e.g., in the military or industry), by the early 2000s there was still little research examining the work of science teams specifically. She suggested that her work with colleagues in launching the Science of Team Science (SciTS) conference at the National Institutes of Health in 2006 could have useful application to the IC. Specifically, she described SciTS’s aim as developing an evidence base of team characteristics and processes and the institutional, funding, and other conditions that influence the effectiveness of collaboration in science, often with a focus on cross-disciplinary teams.14

Hall suggested that there are similarities, but also important differences, between the science and intelligence communities. She noted that scientists, like intelligence analysts, work in the knowledge space, and their work is shaped by multiple levels of factors, adding that the two communities also develop similar products, including publications, briefs, and presentations, and provide expert input. She observed that although academic researchers may have more autonomy as to the research they pursue and the members of their teams, their work is often shaped by the tenure and promotion process. As another distinction, she pointed to the fact that intelligence analysts work in environments where they or others may have received training in leadership, whereas academics infrequently receive leadership training. She added that power dynamics between the two communities also exist because of different levels of clearance or decision-making power.

Hall then presented Table 3-1, drawn from a 2015 National Academies report on team science, which shows that teams vary on a variety of dimensions. She stated that differences in these dimensions can greatly affect the nature of the work. She cited the amount of disciplinary integration as an especially important factor. This factor, she elaborated, can range from individuals from a single discipline working together (unidisciplinary) to transdisciplinary, which she characterized as involving “trying to understand the perspective of others to develop new ways of thinking about things, and new ways of doing things in a more highly synthetic and transcendent way.” She drew a distinction between 2 chemists located at the same university and 20 researchers from different disciplines and countries working together.

TABLE 3-1 Dimensions of Team Science

| Dimension | Range (from) | Range (to) |
|---|---|---|
| Diversity | Homogeneous | Heterogeneous |
| Integration | Unidisciplinary | Transdisciplinary |
| Size | Small (2) | Mega (1000s) |
| Proximity | Collocated | Globally distributed |
| Goal Alignment | Aligned | Divergent or misaligned |
| Boundaries | Stable | Fluid |
| Task Interdependence | Low | High |

SOURCE: Adapted from National Research Council. (2015, Tbl. 1-1). Enhancing the Effectiveness of Team Science. N.J. Cooke and M.L. Hilton (Eds.), Washington, DC: The National Academies Press. Presented by Kara Hall at the workshop.

___________________

13 Wiltshire, T.J., Butner, J.E., and Fiore, S.M. (2018). Problem-solving phase transitions during team collaboration. Cognitive Science, 42(1), 129–167. doi: 10.1111/cogs.12482.

14 Stokols, D., Hall, K.L., Taylor, B.K., and Moser, R.P. (2008). The science of team science: Overview of the field and introduction to the supplement. American Journal of Preventive Medicine, 35(2 Suppl.), S77–S89.

Hall then listed the factors that can affect teamwork and make collaboration complex:

- intrapersonal factors (e.g., attitudes toward collaboration or inclusive leadership styles);

- aspects of the physical environment (e.g., spatial proximity or comfortable meeting spaces);

- interpersonal factors (e.g., member flexibility, familiarity with teammates, communication);

- technological factors (e.g., infrastructure, preparation to use technology, provisions for security and privacy);

- organizational factors (e.g., incentives for teamwork, opportunities for frequent communication, climate, broad disciplinary perspectives); and

- societal or political factors (e.g., crises that prompt the need for teaming, cooperative international policies).

Together, she said, these factors influence the collaborative effectiveness of teams generally, and transdisciplinary science initiatives in particular.

Summarizing a forthcoming review paper on the effectiveness of collaborations in science and noting exceptions to the findings,15 Hall stated that collaborations that spanned organizational and contextual boundaries were found to have enhanced the impact of the research and increased innovation and productivity. Furthermore, she reported, greater diversity of disciplines in the collaboration was found to lead to broader dissemination of results and greater identification of previously unexplored scientific areas. The size and composition of scientific teams also affected their outcomes, she noted. Whereas research has shown that smaller teams are more likely to develop new, disruptive ideas (e.g., patents, Nobel Prize–winning breakthroughs), she elaborated, larger teams appear to be better able to build on understanding and move it forward in a more translational way. She added that academic rank and professional roles affect collaboration, such that teams composed of junior members tend to have higher levels of breakthrough publications. On the other hand, she noted, teams with at least one full professor or a senior first author appear to be associated with publication in high-impact journals. She also pointed out that teams with moderate levels of cultural and ethnic diversity tend to have better outcomes in terms of publication impact and productivity relative to those with low or very high levels of diversity. Similarly, she said, gender diversity can enhance outcomes (e.g., having more citations, winning grant proposals), in part because women are engaging more than men in interdisciplinary collaboration.

Hall stated that coordination mechanisms are critical to the success of teams, and more so the more geographically dispersed and complex they are, adding that complexity can increase as a function of their size, the number of disciplines represented by their members, their distribution, team dynamics, or other organizational or bureaucratic dynamics. During the discussion following her presentation, Fiore noted that coordination mechanisms can include regular meetings or technology that supports coordination. He suggested that it would be important for the IC to examine its current coordination mechanisms and the degree to which they have helped people develop shared understandings.

Hall continued her presentation by suggesting that a key aspect of understanding how research on scientific teams might offer lessons for intelligence teams is gaining a sense of how outcomes that are valued and measured affect what is known to contribute to team success. She noted that decades of research have highlighted key principles (e.g., cognition, conflict, coordination, cooperation, context, composition, culture, communication, coaching) important to understanding how and why teams function as they do. However, she observed, academic researchers tend to focus on publication impact. “Basically, we get what we measure,” she stated. “So, when we think about the IC and the criteria that we are thinking about for selection and review, what are the things that we are promoting as a culture in the IC community? How is that driving some of the behaviors that are happening?”

___________________

15 Hall, K.L., Fiore, S.M., Vogel, A.L., Huang, G.C., Serrano, K.J., Rice, E.L., and Tsakraklides, S. (in press). The science of team science: A review of the empirical evidence and research gaps on collaboration in science. American Psychologist.

Hall went on to point out that the early process of collaboration, which may lead to team formation, can begin with identifying the problem or the goals the team will address, which helps shape the process for identifying the members of the team. Many groups that work on common issues are not teams, she observed, but may be networks, working groups, or advisory groups, for example. To collaborate successfully, she explained, groups generate a shared mission and goals, develop an understanding of the strengths and weaknesses of different disciplines, cultivate a group environment of psychological safety, and encourage information sharing and knowledge creation. As the team matures, she added, its members develop shared language and ways of conceptualizing the problem and a greater sense of commitment to working together, and as its work progresses, the team develops sets of roles and processes for communicating and accomplishing the work. Teams can also develop a mechanism for reflecting on and evaluating their own effectiveness and adapt as needed, she said.

Hall explained that the team context affects areas addressed by other presenters at the workshop—selection and recruitment, training, leadership, and motivation, for example. She added that the needs of the team can affect who is selected and recruited and the training new employees receive so they can function as team members. She noted that training may also be needed to address the collaborative competencies required to work in a team.

Hall closed by stating that future research on teams should occur more often outside of laboratory settings through natural experiments, quasi-experimental designs, or simulation models. She suggested that this type of research would help in understanding the real-world complexity of teams and how various factors that affect team functioning interact. She suggested further that the collaborative platforms that could help teams function could also be used for research on teams to understand how they work together, as well as provide an opportunity for obtaining real-time feedback. She stressed that more needs to be known about the processes that lead to success or struggle in collaborative environments to advance understanding of team functioning and opportunities to improve training for working in a team.

THE CHALLENGE OF COMMUNICATION

Eric Eisenberg, University of South Florida, began by observing that having high intelligence and superior cognitive ability is no guarantee of having strong communication skills. This observation is important, he suggested, because research indicates that successful intelligence analysts need expertise in their subject matter, in analysis, and in communication.16 “While academics really want to focus on helping people to develop the complex skills associated with leadership,” he said, “the fact of the matter is that the key differentiators are people’s ability to regularly perform the simple, basic skills of fundamental interpersonal communication.” He added that these skills can include many essential, basic elements of communication, such as the ability to be present, listen, or make eye contact, upon which more complex skills can be built. He cited the most essential communication skills for leaders—and where efforts should focus—as self-awareness and the ability to communicate with curiosity with others who have a different viewpoint.

Eisenberg then pointed to several problematic assumptions about communication. First, he noted, people often assume that communication has occurred when there may have been only an attempt at communication. He likened this to handing someone a hot cup of coffee and not checking to make sure that the person is holding it securely before letting go. Instead of asking such common questions as “Do you understand?” and “Do you have any questions?” he recommended eliciting information to check for understanding.

Eisenberg cited as a second problematic assumption that knowing the facts is enough, especially given the abundance of data to which intelligence analysts have access. He stressed that, in addition to the facts, analysts need frameworks and ways of making sense of the facts so they can use the facts effectively.

Third, Eisenberg observed that many people wrongly assume that those with whom they communicate share their worldview and vocabulary. The different ways people use and interpret language, he explained, can lead to misunderstanding and result in poor communication.

Eisenberg then identified three universal communication challenges:

- Managing massive amounts of data in the information age.

- Collaborating as a team across function, specialty, and agency.

- Making regular and disciplined use of iterative feedback in sensemaking.

___________________

16 Pidgeon, N., and Fischhoff, B. (2011). The role of social and decision science in communicating uncertain climate risks. Nature Climate Change, 1, 35–41. doi: 10.1038/nclimate1080.

In Eisenberg’s view, these challenges can offer important opportunities. For example, he observed, new types of analyses and predictions are possible with the use of large datasets. In addition, he said, having conversations in which people offer different ideas or provide feedback on others’ ideas can be challenging, but cultivating an environment of psychological safety in which different perspectives and ideas can be shared and built upon can lead to better sensemaking.

Eisenberg asserted that applying key principles and best practices in communication can improve the effectiveness of intelligence analysts. He quoted from a report reviewing the communication failures surrounding Iraq’s supposed possession of weapons of mass destruction: “The lesson is that it is vital to maintain analyst/customer relationships characterized by a slightly skeptical, probing sort of substantive engagement. This requires effort and understanding from both sides” (p. 3).17 According to Eisenberg, this statement underscores the importance of analysts being able to communicate and stand their ground while remaining open to the ideas of others, who should feel empowered to ask difficult questions and push back.

Communication can also be constrained by what people believe can be done with the information or interpretations of information they provide, Eisenberg continued. He illustrated this phenomenon with an observation based on his research in the context of emergency medicine, which showed that if an emergency room was connected to a psychiatric hospital, health care workers observed psychiatric symptoms in patients much more often than did health care workers in hospitals without readily available psychiatric care. He suggested that future research could examine whether similar constraining phenomena take place in the IC.

Eisenberg also offered another lesson from communication—that data clearinghouses do not work. Although people have a need to store and draw on collections of information, he said, “people don’t want to put lessons in them. People don’t want to search to get lessons out of them.” He added that future systems could improve on past storage attempts, but people currently prefer “just-in-time” information (e.g., restaurant reviews when they visit a new city).

Eisenberg went on to point out that analysts also need effective means of talking about uncertainty in ways that are understandable and that minimize people’s discomfort with it. Approaches to this end, he explained, include using certain terms to indicate uncertainty, as well as visualization tools that allow others to see the range of possibilities and confidence levels associated with the information, such as the pathways used by weather forecasters to plot possible courses of hurricanes. Workshop participants from the IC commented that presenting information about uncertainty remains important but challenging. Fiore noted that both cognitive and interpersonal skills are needed to convey this type of information effectively.

___________________

17 Ford, C.A. (2009). Relations Between Intelligence Analysts and Policymakers: Lessons of Iraq. Washington, DC: Center for the Study of Intelligence. Available: https://www.hudson.org/content/researchattachments/attachment/697/ford(lessons_of_irag)_final_lo-res.pdf [March 2018].

In Eisenberg’s view, one of the most critical lessons about communication that analysts should apply is the need to make their thinking visible to others. The best communicators, he observed, do not simply communicate their conclusions; rather, they share the logical reasoning that led to their conclusions and meet the ideas and questions of others with curiosity and openness. He argued that this way of communicating, hearing, and incorporating multiple perspectives improves the accuracy of assessments.

In the discussion following Eisenberg’s presentation, Clark explained that analysts currently engage in many of these communication practices, using whiteboards to display their ideas and engaging in productive argumentation. Another participant seconded Eisenberg’s observation that in addition to being able to present one’s own viewpoint, it is necessary to remain open to the ideas of others.
