Proceedings of a Workshop

IN BRIEF

August 2021

SECTION 230 PROTECTIONS: CAN LEGAL REVISIONS OR NOVEL TECHNOLOGIES LIMIT ONLINE MISINFORMATION AND ABUSE?

INTRODUCTION

On April 22 and 27, 2021, the Committee on Science, Technology, and Law of the National Academies of Sciences, Engineering, and Medicine (the National Academies) convened a virtual workshop titled Section 230 Protections: Can Legal Revisions or Novel Technologies Limit Online Misinformation and Abuse? Provisions of Section 230 of the Communications Act of 1934, as enacted as part of the Communications Decency Act of 1996, are the subject of debate by a range of stakeholders.

Congress enacted Section 230 to foster the growth of the internet by providing certain immunities for internet-based technology companies. Section 230 contains two key immunity provisions. The first1 specifies that a provider of an interactive computer service shall not “be treated as the publisher or speaker of any information provided by another information content provider,” effectively exempting internet social media and networking services from liability under laws that apply to publishers, authors, and speakers. The second2 provides “good Samaritan” protection for providers who, in good faith, remove or moderate content that is obscene, lewd, lascivious, filthy, excessively violent, harassing, or otherwise objectionable.3

While Section 230 has played an important role in development of the internet as a platform for the global exchange of information and ideas, the internet has evolved in unanticipated ways since 1996. Today, a small number of large companies operate social media platforms that millions use for information and public discourse. Concentration of power, disinformation (including sophisticated disinformation campaigns), abuse on social media (hate speech, harassment, bullying, and discriminatory practices), use of algorithms to amplify and target content and advertising, and lack of transparency in content moderation have become issues of increasing concern. There are many opinions regarding potential solutions, including about whether (or by what means) Section 230 should be revised.

In welcoming participants, workshop planning committee chair Judith Miller noted that the workshop’s purpose was to address legal, policy, and technological aspects of Section 230 and its relationship with such critical issues as free speech, privacy, and civil rights and to examine approaches to address concerns about internet immunity protections while preserving free speech and democratic norms.

OVERVIEW OF SECTION 230

Section 230 has served as a “lightning rod for many concerns surrounding the impact of social media and the effect of online communications on our society, refracted by the prism of polarization,” noted Cameron Kerry (Brookings Institution). Section 230 was intended to protect the internet in its early years and to encourage innovation and uptake, he said. In so doing, it differentiates internet-based information services from broadcasting and telecommunications. A hands-off approach to government oversight of the internet has guided U.S. policy, even with the recognition that the growth of the internet has had dramatic ramifications with regard to privacy, cybersecurity, and intellectual property. Internet growth in terms of volume and velocity was unforeseen, he said.

__________________

1 47 USC Section 230(c)(1).

2 47 USC Section 230(c)(2).

3 These provisions do not grant total immunity; they do not apply to violations of criminal, intellectual property, or sex trafficking laws.

In considering future action to address issues of concern, Kerry issued two cautions: Do no harm, and be humble. Many proposals to change Section 230 could have unintended consequences, such as diminishing freedom of expression, he said. He cautioned that mandated content moderation (the screening, flagging, or removal of user-generated content by the platforms hosting the content) could raise constitutional issues. He suggested looking beyond revising or repealing Section 230 to issues related to competition enforcement or privacy. He also stressed that the issue of content moderation is a global one and that, as a result, “America should not act alone.”

Jeff Kosseff (U.S. Naval Academy) echoed the need for informed debate, rather than relying on “anecdotes, half-truths, and sound bites.” He harkened back to the 1950s to explain Section 230’s underpinnings. A 1959 Supreme Court decision rejected as unconstitutional the application of a Los Angeles ordinance under which the city held a bookseller liable for distributing obscene materials when the seller (distributor) had no knowledge of the book’s illegal content.4 This reasoning was applied to subsequent cases, holding that distributors of third-party content, such as newsstands, can be held liable for that content only if they knew or had reason to know that it was defamatory or illegal. In 1991, the U.S. District Court for the Southern District of New York held that the electronic bulletin board CompuServe, which had a hands-off policy about moderating content, should be treated like an electronic newsstand (distributor) and thus not face liability when sued for defamatory content.5 In contrast, a New York State judge treated its competitor Prodigy, which attempted to moderate content, as a publisher with legal responsibility for defamatory content rather than as a distributor.6 The Prodigy decision acted as a disincentive for platforms to do any moderating, Kosseff explained, since a platform that engaged in content moderation would be subject to a higher standard of liability as a publisher than as a distributor.

Congress was in the process of overhauling telecommunications laws when it enacted Section 230 to enable platforms to moderate content without being exposed to liability as if they were publishers. The provision received little attention, according to Kosseff, until a lawyer defending America Online invoked Section 230 in 1997.7 The courts ruled in favor of America Online, deciding that unless a statutory exception applies (e.g., federal criminal law and intellectual property infringements), a platform is not liable for hosting content fully created by another party, regardless of the platform’s state of mind (e.g., whether the platform knew or should have known that the content is defamatory). This broad judicial interpretation of Section 230 has prevailed, allowing companies to base their business models around user-generated content.

PANEL 1: USE OF TECHNOLOGY IN CONTENT MODERATION

The panel moderator and workshop planning committee member Edward Felten (Princeton University) observed that, while content moderation technology continues to advance, humans will always play a key role. Humans, he said, must set criteria and provide examples to train machine-learning models to make predictive decisions to allow or block new content at scale, based on the likelihood that the content violates a platform’s content policies. Human policy teams determine the decision threshold for acceptable reliability regarding decisions made by learning algorithms as to the appropriateness of content. If the threshold between allowing and blocking content is set too low, the model over-blocks content and stifles free expression; if it is set too high, the model under-blocks and potentially leads to disinformation, harassment, or other negative situations.
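The threshold trade-off Felten describes can be illustrated with a minimal Python sketch. Everything in it is hypothetical: the `Post` type, the example scores, and the `moderate` function are assumptions made for illustration, not any platform's actual system. It assumes a trained model has already assigned each post an estimated probability of violating policy, and that posts scoring at or above the threshold are blocked.

```python
from dataclasses import dataclass


@dataclass
class Post:
    text: str
    violation_score: float  # hypothetical model output in [0, 1]


def moderate(posts, threshold):
    """Split posts into (allowed, blocked) using a score threshold.

    A post is blocked when its estimated violation score is at or
    above the threshold chosen by the human policy team.
    """
    allowed = [p for p in posts if p.violation_score < threshold]
    blocked = [p for p in posts if p.violation_score >= threshold]
    return allowed, blocked


# Made-up examples; real scores would come from a trained classifier.
posts = [
    Post("vacation photos", 0.05),
    Post("heated political argument", 0.40),
    Post("borderline insult", 0.65),
    Post("explicit threat", 0.95),
]

for threshold in (0.30, 0.60, 0.99):
    allowed, blocked = moderate(posts, threshold)
    print(f"threshold={threshold:.2f} blocked={[p.text for p in blocked]}")
```

With these made-up scores, a threshold of 0.30 blocks the political argument along with the threat, while a threshold of 0.99 blocks nothing, which mirrors the over-blocking and under-blocking pattern described above.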

Charlotte Willner (Trust & Safety Professional Association) described her experience as a content moderator and the complex requirements in the emerging trust and safety field.8 When asked about Section 230 reform, she responded that “Trust and safety professionals know the capacity for unintended consequences is almost infinite.” She added that her perspective as a “relative outsider” to debates about Section 230 points to a larger problem: “Why are trust and safety professionals relative outsiders to the conversation?” she asked. “My primary recommendation [with regard to Section 230] is to get the direct perspective from the practitioners whose work will be most impacted by changes to Section 230.”

__________________

4 See Smith v. California, 361 U.S. 147 (1959). The Court found that imposing liability on a distributor of third-party speech without knowledge of the illegal material could chill legal free speech under the First Amendment.

5 See Cubby, Inc. v. CompuServe, Inc., 776 F. Supp. 135, 140–41 (S.D.N.Y. 1991).

6 See Stratton Oakmont, Inc. v. Prodigy Servs. Co., No. 31063/94, 1995 WL 323710 (N.Y. Sup. Ct. May 24, 1995).

7 The case was Zeran v. America Online, Inc., 129 F.3d 327 (4th Cir. 1997), cert. denied, 524 U.S. 937 (1998). For background, see Kosseff, J. 2020. The lawsuit against America Online that set up today’s internet battles. Slate. https://slate.com/technology/2020/07/section-230-america-online-ken-zeran.html.

8 This field seeks to establish business practices whereby online platforms reduce the risk that users will be exposed to harm, fraud, or other behaviors that are outside community guidelines.

Sarah T. Roberts (University of California, Los Angeles) said she began research in the field when she learned how low-status, low-paid people in content-moderation call centers make mission-critical decisions central to companies’ brand management. Echoing Felten, she noted, “There have been great advances in the field of computational mechanisms by which content moderation can occur, but contrary to many early predictions, they do not eliminate the need for human intervention.” Human intervention remains necessary at critical points in the production chain, she said, and legal and policy professionals often have a blind spot about the implementation and operationalization of content moderation policy. “Content moderation in implementation is policy operationalized,” she stressed. “Any change to policy begets consequences for workers and others engaged in the process.”

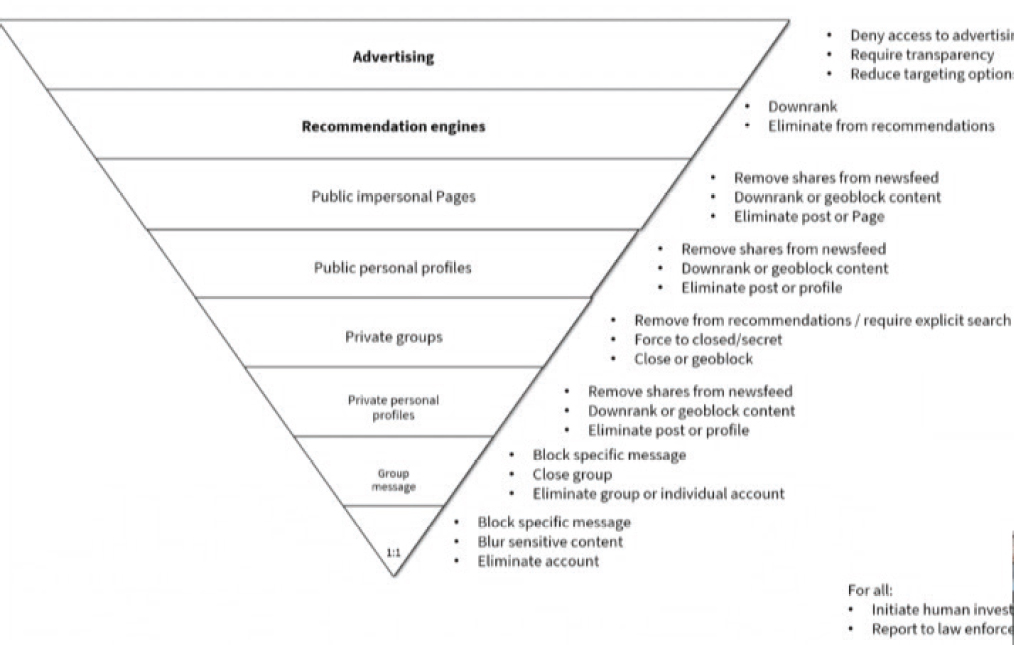

Alex Stamos (Stanford University) likened the power of machine learning in content moderation to “a billion first-graders”: good for simple tasks at scale but not for tasks that require complex thinking. While a small percentage of content-moderation decisions capture public attention, he stressed that the vast majority are not controversial. For example, Facebook reported taking down 6.4 million pieces of “organized hate” in the fourth quarter of 2020 compared to 1 billion pieces of spam. He urged teaching trust and safety issues to computer science students, just as cybersecurity was added to the curriculum in the early 2000s. Drawing from his own class on the subject, he pointed to privacy-safety trade-offs at different levels of amplification, from one-to-one communications, to small group conversations, to recommendation engines, to paid advertising. Stamos noted that as services provide more amplification, there are correspondingly fewer free expression rights and diminished expectations of privacy. While most attention centers on content takedowns, he noted that other options allow for free expression but also limit amplification (see Figure 1). A future issue looms, he warned: End-to-end encryption in products such as WhatsApp, which are widely used in many countries, renders user content invisible to content moderators.

SOURCE: Alex Stamos, Workshop Presentation, April 22, 2021.

The Content Moderation Workforce

Willner described how content moderators use rules and criteria to make thousands of decisions a day to host, take down, or flag user content on social media platforms. Machines help, but each situation is unique and sometimes rules do not clearly apply. Roberts added that the commercial content moderation industry is global and growing. Working conditions vary greatly and the psychological impact can be immense, with frequent burnout and turnover. One reason for poor working conditions, she said, is that firms consider moderation a cost center, not a revenue generator. The biggest firms subcontract their operations and many are secretive about how their content moderation workforce fits within their business models. Roberts is hopeful that more public scrutiny will improve the situation. She stressed that content moderation is a nascent industry and that these conditions are not pre-ordained.

Setting and Enforcing Policy

Willner said policies must be enforceable, which calls for an overlap between those who set and those who enforce policy. Problems often occur when the two functions are divorced, especially as platforms increase their scale. Stamos noted platforms often post sweeping statements (e.g., “We do not tolerate hate speech”), but that fractalization occurs when a moderator has to decide on a specific piece of content. Content moderation may inevitably involve political choices, especially in countries in which a company’s government affairs team is close to the ruling party or dominant ethnic group, he warned.

Dealing with Change and Breadth

Felten noted that some actors try to circumvent content moderation. Willner said that a huge portion of content moderation is about change management, in which rules are updated to reflect real-time developments. Stamos urged continuous assessment using technology. Companies invest heavily in metrics related to revenue, but not in metrics related to abuse, he said.

Willner explained that the fundamental structure for content moderation is the same across companies. More formalized structures are implemented as a company’s size and/or external scrutiny grows. Trust and safety teams are situated within different company units, however, including online operations, legal, public policy, and public relations. Stamos added that each company can decide whether to use community-based content moderation or narrow the core goal of the platform to simplify its content moderation policy (e.g., Etsy does not need to moderate political content).

In response to the question whether content moderation systems at certain companies could be characterized as a private analogue to a governmental function, Roberts said she finds it worrisome when firms create institutional mechanisms to circumvent or lessen government oversight or regulation. Stamos pointed out that, in some countries, the government is the problem, as when regimes attempt to control citizens’ use of platforms.

PANEL 2: DISINFORMATION TO INFLUENCE AND UNDERMINE U.S. AND GLOBAL DEMOCRATIC PROCESSES

Panel moderator and workshop planning committee member Martha Minow (Harvard Law School) asked panelists to provide their perspectives on defining, preventing, and responding to disinformation through revisions to Section 230 or other reforms.9 Joan Donovan (Harvard Kennedy School) said that she and her team look at media manipulation and disinformation, including how groups use technology to hide their identity or intentions. A team directed by Karen Kornbluh (German Marshall Fund) looks at sources of disinformation and their impact on democracy, especially when false information is laundered to appear to come from reputable sources. Zeynep Tufekci (University of North Carolina, Chapel Hill) suggested that, instead of focusing on definitions of disinformation, we should operationalize what constitutes disinformation by examining how content, policies, and incentives can contribute to societal goals. Attorney and technologist Alex Macgillivray stressed that because disinformation usually centers on adversarial issues, the public and private sectors, including academics, advocacy organizations, and political parties, all play a role in addressing it.

__________________

9 Misinformation generally refers to false information presented as fact regardless of the intent to deceive, while disinformation generally refers to a subset of misinformation that is intentionally false and intended to deceive and mislead, hiding the interest and identity of the user. See https://www.businessinsider.com/misinformation-vs-disinformation. Panelists noted that defining disinformation is not always straightforward.

An Old Problem in a New Format

Lies and propaganda predate the internet, Minow observed, and she asked what is different in the online context. Donovan noted that social media frequently share links from cable news. “We have to face this is a media ecosystem, and everything is connected,” she said. What makes social media different is responsiveness, she said. Rather than traditional media’s “one-to-many” approach, the audience becomes the distribution mechanism.

Macgillivray noted that online gatekeepers and influencers interact with mainstream media, and focusing on platforms, mainstream media, or government alone will not prevent disinformation. Tufekci added that parts of the media ecosystem both interconnect and compete with each other. Additionally, while platforms are engagement-driven, she said, people in many countries view mainstream media as a problem that social media circumvents. Conspiracy theories are amplified through social media, she acknowledged, as happened during the COVID-19 pandemic. However, she pointed out that health institutions failed in some cases and lost public trust and said that it is important to find mechanisms that differentiate between health disinformation and legitimate intra-science disagreements.

Minow asked how platforms might separate fair debate from disinformation. Kornbluh referred to NewsGuard, a service that rates outlets that publish false information or disguise themselves as legitimate.10 She also pointed to the speed with which algorithms amplify salacious content. Even when content is taken down, she noted, it has already spread. She suggested that “there needs to be some way, like a circuit breaker, to not keep amplifying content while it is being evaluated.” Legitimate sources, she said, must learn to invite openness and strive to build scientific literacy.

Reforms Beyond Section 230

Donovan suggested that machine learning techniques similar to those used to detect copyright infringement might be useful in removing disinformation, but Macgillivray argued that such techniques would not work for this purpose. Instead, he urged specificity about the harm one seeks to prevent, and then targeting that harm, when considering ways to address disinformation. One problem identified in the 2016 election was false information spread by nation-states, Macgillivray said. Development of international norms could address that harm, he said. Kornbluh suggested going after “low-hanging fruit” to address online harms, e.g., updating existing laws and regulations to fit the online environment in areas such as registration of foreign agents, consumer protection, civil rights, and voter suppression. “A lot of enforcement or updating of offline laws for the online environment would not impose First Amendment11 challenges,” she said.

Harm Versus Intent

Donovan said the legal system is set up to address individual, not collective, harms. Tufekci suggested learning from environmental and food safety regulations, where harm is defined as a public goods problem. Kornbluh cautioned that, unlike in the case of these regulatory regimes, regulation of social media poses First Amendment issues. She supported the development of codes of conduct enforced by the Federal Trade Commission (FTC), international monitoring, and more transparent terms of service. Minow commented that an additional harm is erosion of trust in the watchdog process and asked how to achieve transparency. Kornbluh said the internet grew up in an era when it was believed the market would suffice, that the internet would be pro-democratizing, and that regulations were not needed.

PANEL 3: HARMS TO INDIVIDUALS: HATE SPEECH, VIOLENCE, HARASSMENT AND BULLYING

In introducing a panel on online harms to individuals and ways to address them, panel moderator and workshop planning committee member Susan Silbey (Massachusetts Institute of Technology) said that recent research has found that one in five U.S. adults has experienced online harassment.

Assessment of Online Hate

Online hate and extremism have grown exponentially in the past 25 years through viral sharing and algorithmic targeting, explained Christopher Wolf (Hogan Lovells). A turning point occurred when Facebook was launched in 2004 and everyone, including hate groups, became a publisher. There are now 4.2 billion social media users globally. Collectively, they post 1,000 hours of video on YouTube and tweet 350,000 times every minute. If only 1 percent of tweets contained hate speech, 5 million such messages would circulate daily, he said. Globally, violent actors are inspired by online hate, as shown in a Council on Foreign Relations report,12 he said.

__________________

10 See https://www.newsguardtech.com.

11 The First Amendment to the U.S. Constitution states, in relevant part, that: “Congress shall make no law...abridging the freedom of speech.”

Further, hate speech is widespread and destructive.13 Wolf also called attention to harassment perpetrated through online gaming.
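Wolf's back-of-the-envelope estimate of hateful tweets can be reproduced in a few lines of Python. This is only a sketch: it assumes a rate of roughly 350,000 tweets per minute, which is the rate at which a 1 percent share works out to about 5 million messages a day, and the function name is hypothetical.

```python
MINUTES_PER_DAY = 24 * 60  # 1,440 minutes


def hateful_tweets_per_day(tweets_per_minute: int, hate_percent: int) -> int:
    """Estimate daily tweets containing hate speech from a per-minute rate.

    Integer arithmetic is used so the result is exact.
    """
    tweets_per_day = tweets_per_minute * MINUTES_PER_DAY
    return tweets_per_day * hate_percent // 100


# Assumed inputs: 350,000 tweets per minute, 1 percent containing hate speech.
estimate = hateful_tweets_per_day(tweets_per_minute=350_000, hate_percent=1)
print(f"{estimate:,} tweets per day")
```

Under these assumptions the total is about 504 million tweets a day, so a 1 percent share is roughly 5 million.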

The False Narrative of Free Speech

Mary Anne Franks (University of Miami Law School) noted that the hate and harassment described by Wolf are often dismissed as “the cost of free speech.” She referred to this as a false narrative. “Costs are unevenly distributed,” she said. “The same people who are most silenced offline are those who are silenced online.” Social media, she said, aggregates harms while disaggregating responsibility. She suggested that Section 230 reinforces the notion that everything online relates to speech and that every attempt to regulate it is censorship—but online activity goes beyond communication to commerce, etc. She said that Section 230 should be rewritten to clarify that it only relates to speech. She urged scrutiny to see whether what is acceptable online would be acceptable offline.

Policy and Law

Olivier Sylvain (Fordham University School of Law) said laws can engender good behavior and redress harms, but Section 230 has precluded the application of law to private internet platforms as intermediaries. Such “intermediaries are not a reflection of the polity but of their own commercial and pecuniary interests,” he said. Much of what is online is not speech as such but commercial activity, including advertising and amplification of content.

For example, Facebook’s Ad Manager generated categories for advertisers that violated civil rights law. When this was revealed, civil rights groups filed a case and Facebook invoked Section 230 as a defense. Sylvain noted that the 2019 settlement of that case short-circuited the opportunity for public scrutiny. “We never get to discover how implicated the intermediary is in the distribution of harmful and unlawful content,” he pointed out. Citing Judge Katzmann’s partial dissent in Force v. Facebook, where Katzmann opined that a claim based upon connections Facebook’s algorithms make between individuals is not barred by Section 230, Sylvain said that a turning point may be approaching.14

Preventing Overreach

Genevieve Lakier (University of Chicago Law School) acknowledged a shift in political and public attitudes around the regulation of the digital environment. She called for supporting the interests of all parties, including the marginalized, but cautioned against limited immunity carve-outs, such as creating carve-outs for harassing, hateful, or discriminatory speech. Lakier said this can lead to over-enforcement, especially when the carve-out category is vague. She noted that limited carve-outs do not address the risk of wrongful takedown of speech. As an example, when FOSTA-SESTA was enacted to curb sex trafficking, platforms took down a broad array of discussion about prostitution, including conversations by and about sex workers.15

Lakier made two suggestions. First, in identifying carve-outs, lawmakers should be specific in order to avoid chilling protected free speech (it is challenging to regulate speech because meaning depends on context, she said). Second, reforms should allow for take-down and keep-up decisions and include transparency requirements and due process rights for users. “We are in the early days of internet regulation and we should have a dynamic, rather than static, regulatory regime,” she said.

__________________

12 See Zachary Laub, Hate Speech on Social Media: Global Comparisons, Council on Foreign Relations, June 7, 2019, available at: https://www.cfr.org/backgrounder/hate-speech-social-media-global-comparisons.

13 See Online Hate and Harassment: The American Experience 2021, Anti-Defamation League, available at: https://www.adl.org/online-hate-2021.

14 See Force v. Facebook, Inc., 934 F.3d 53 (2d Cir. 2019).

15 The Fight Online Sex Trafficking Act (FOSTA) and Stop Enabling Sex Traffickers Act (SESTA) were signed into law in April 2018.

Countering Hate

Wolf noted that one tool against harmful speech is counter-speech, but that most people cannot (or do not want to) engage with aggressors online. Franks noted the chilling effect of hateful speech on victims and those who fear being a future victim. Sylvain said that companies have not borne any social costs.

Online and Offline Norms

Silbey commented that, from the perspective of a social scientist, the internet presents a challenge because it lacks the type of interactions that have traditionally been a hallmark of communities. Social communities historically monitor behavior through face-to-face interactions and community control, she said. On the internet, in the absence of these fundamental features, we look to digital, legal, and technical controls to create norms. She questioned how to align online and offline norms for acceptable behavior. Wolf said that he finds the lack of cyberliteracy education perplexing and suggested research on interactions in anonymous online environments. Sylvain noted that some norms have perpetuated, not minimized, harms, such as GamerGate’s proliferation of misogyny in 2014.16 In dealing with social norms online, he concluded, civil society has a large role to play.

PANEL 4: COMMERCIAL PRACTICES AND IMPACTS ON PRIVACY AND CIVIL RIGHTS

Panel moderator and workshop planning committee member David Vladeck (Georgetown Law School) asked the next panel about the impact of platforms’ commercial practices, such as hypertargeting and advertising, on privacy and civil rights.

Business Models and Section 230

To researcher and technologist Ashkan Soltani, Section 230 disrupts effective consumer protections. He noted that the business model in the 1990s was structured around limited online access for users. He contrasted this with today’s advertising-supported internet, which is structured to maximize time spent online. Platforms selectively decide which content to show, and they benefit from harmful content that drives engagement.

Dina Srinivasan (Yale University) described platforms’ online engagement cycles by drawing on her research on how Facebook gained users’ consent to share personal data across the internet. When Facebook entered the market in 2004, the company said it would not track users. When it became the dominant social media platform in 2014, Facebook started requiring users to permit access to their data across the internet. Srinivasan termed this requirement “a quintessential monopoly rent” because lack of competition contributed to it.17 Comprehensive privacy legislation is needed, she said, along with tools that provide more interoperability across platforms.

According to Deirdre Mulligan (University of California, Berkeley), law professor Joel Reidenberg foresaw many current issues in what he called Lex Informatica.18 “In the age of platforms, public governance plays a circumscribed role,” she said. “Much of the action is delegated and enacted directly and indirectly by private actors.” Content moderation comprises defining, identifying, locating, and moderating functions, she noted. The platforms determine how automation and humans execute these functions within various statutory and technological constraints. She suggested that a functional analysis can determine priorities related to values, procedural checks, and investments, and help in developing shared infrastructures, realizing the benefits of technology, and addressing systemic (and not just individual) harms.

Conduct, Not Content

Mulligan characterized ad-targeting algorithms as “conduct, not content.” Soltani suggested an approach that attributes portions of responsibility to software and content. Vladeck acknowledged that there are speech protections for those who place content on a platform, however hateful, but said hypertargeting is problematic. Vladeck asked whether “do-not-track” options protect users. Mulligan said that, while it is useful for individuals to have more privacy control, many concerns relate to surveillance. Soltani noted that he, Felten, and others were involved in earlier “do-not-track” efforts that industry lobbied against. The California Consumer Privacy Act offers a second chance, he said, although it is restricted to advertising.19 Srinivasan stressed the need for ease of use, so “opting out” is as easy as “opting in.”

__________________

16 GamerGate was an internet culture and harassment campaign centered on issues of sexism and misogyny in the gaming world. See Caitlin Dewey, The Only Guide to Gamergate That You Will Ever Need to Read, The Washington Post, October 14, 2014, https://www.washingtonpost.com/news/the-intersect/wp/2014/10/14/the-only-guide-to-gamergate-you-will-ever-need-to-read/.

17 See https://ag.ny.gov/sites/default/files/facebook_complaint_12.9.2020.pdf.

18 Reidenberg, J. 1997. Lex Informatica: The Formulation of Information Policy Rules through Technology, available at: https://ir.lawnet.fordham.edu/faculty_scholarship/42/?_ga=2.215656339.500372520.1620995667-1394756176.1620995667.

Vladeck said that Section 230 hampers FTC enforcement of platform practices that would be illegal offline. Mulligan suggested that technical and policy collaborations around COVID-19 fraud and misinformation may provide lessons. Srinivasan called for “fixing the incentives problem. The reality is these companies make money,” she said, “whether it’s share of revenue or advertising of products that create problems.” Platforms’ scale and speed mean thousands of people have suffered by the time a regulator flags a problem, Soltani said. Either liability or incentives are needed for platforms to change, he said. Mulligan suggested that platforms be required to perform due diligence around systemic risks before they can benefit from safe harbor protections.

PANEL 5: INTERNATIONAL APPROACHES TO CONTENT MODERATION

In looking at alternatives to Section 230, examples from other countries are useful, suggested panel moderator and workshop planning committee member Daphne Keller (Stanford University). In the United States, she commented, Section 230 and the Digital Millennium Copyright Act (DMCA) have “occupied the space for so long” that case law is not well developed. Other countries have experimented with and learned from alternatives, she said, including in Europe and Latin America. Europe is in the process of debating the Digital Services Act (DSA), which Keller referred to as a once-in-a-generation overhaul to create more detailed platform rules and regulatory structures. DSA, she said, will affect global platforms whether or not the United States undertakes its own reforms and could provide a possible framework for U.S. activity.

Joris van Hoboken (Vrije Universiteit Brussel and University of Amsterdam) explained the European approach to intermediary liability. The Electronic Commerce Directive established the country-of-origin principle for information services and provides for harmonized, horizontal, and conditional safe harbors for “mere conduit, caching, and hosting activities.”20 The directive is based on the DMCA (although with less detail), he said. It provides no explicit safe harbor for search engines. Safe harbor is conditional: platforms with knowledge of illegal activity or information lose immunity if they fail to remove the content. He said that the current environment is characterized by fragmentation, an increasing amount of national legislation, and a lack of clarity about some services. The proposal for the new DSA provides additional clarity and processes and creates a new oversight structure.21 A tiered approach would apply to different service responsibilities and include a Good Samaritan defense when removing content. He said that the DSA has the potential for Europe to set a real standard in this area.

Informed by her experience as a member of the European Parliament, Marietje Schaake (Stanford University) noted that three branches of European lawmaking—the European Council, European Commission, and European Parliament—have made efforts to protect fundamental rights in digital and other realms. Protection of privacy is enshrined in the European Union (EU) Charter of Fundamental Rights, she said.

Across the EU, the identification of mechanisms to moderate content has moved from inaction, to self-regulation, to hard law and harmonization, Schaake said. EU-level efforts have addressed illegal and harmful-but-legal content. Legislation that impacts technology companies includes the General Data Protection Regulation (GDPR), antitrust requirements, and the Electronic Commerce Directive described above. She said that her concern is how these pieces interrelate to prevent unintended loopholes or outcomes. Politically, she said, frustration has grown with reliance on private sector codes of practice and conduct. Some EU member states, however, have opted not to wait for an EU response and have instituted national regulations.

__________________

19 For the text of the California Consumer Privacy Act, see https://oag.ca.gov/privacy/ccpa.

20 For the full text of the directive, see https://eur-lex.europa.eu/legal-content/EN/ALL/?uri=CELEX%3A32000L0031.

21 For the proposed DSA language, see https://digital-strategy.ec.europa.eu/en/policies/digital-services-act-package.

David Kaye (University of California, Irvine School of Law) addressed the relevance of human rights law in the context of online speech. He began by reviewing the roles of companies, governments, and users in addressing content. Kaye suggested that human rights law can guide the discussion, particularly Article 19 in the International Covenant on Civil and Political Rights (ICCPR). Many countries, including the United States, are bound by its language (see Box 1).

Kaye said that Article 19 applies to speakers and audiences. Section 3 states that freedom of expression may be subject to restrictions. “In other words, human rights law imagines that content moderation is not only about freedom of expression. It may also involve the ‘rights and reputations of others,’ such as the right to privacy,” he said. Additionally, ICCPR’s Article 20 prohibits advocacy of national, racial, or religious hatred that constitutes incitement to discrimination, hostility, or violence. Although the United States “placed a reservation” on this provision because it regulates speech protected under the First Amendment, the provision provides a focus for thinking internationally about content, Kaye said.22

Kaye highlighted three examples where human rights law principles relate to internet content. Brazil’s Marco Civil da Internet gives courts a central role in determining whether a particular content action meets principles of due process and legal thresholds drawn from human rights law. The legislation involved a lengthy, seven-year process with significant stakeholder involvement, he pointed out. The Indian Constitution restates human rights law in its own Article 19, and India’s Supreme Court decisions have bolstered freedom of expression. There has, however, been a surge in problematic national laws written under the guise of blocking misinformation or online harm, he said. These laws, when enforced, often serve to suppress freedom of expression.23

Evelyn Douek (Harvard University) characterized the Global Internet Forum to Counter Terrorism (GIFCT) as “the most important database you’ve never heard of.” GIFCT is a self-regulating hash-sharing consortium set up in 2017 by four large companies. Terrorism images and videos receive a “unique digital fingerprint” or hash that can prevent the content from being uploaded to a participating platform. The technology is able to counter cross-platform threats faster and more comprehensively than individual companies can.
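The hash-sharing idea described above can be illustrated with a minimal sketch. It is not GIFCT's implementation: the class `SharedHashDatabase`, the function `screen_upload`, and the sample byte strings are hypothetical names chosen for illustration, and the sketch assumes an exact cryptographic hash (SHA-256), whereas production systems generally rely on perceptual hashes that tolerate small edits.

```python
import hashlib


class SharedHashDatabase:
    """Hypothetical stand-in for a cross-platform hash-sharing database."""

    def __init__(self):
        # Maps a fingerprint to the label supplied by the contributing platform.
        self._hashes = {}

    @staticmethod
    def fingerprint(content: bytes) -> str:
        # Assumption: an exact SHA-256 digest serves as the "digital fingerprint".
        return hashlib.sha256(content).hexdigest()

    def contribute(self, content: bytes, label: str) -> str:
        # A participating platform shares the hash, not the content itself.
        digest = self.fingerprint(content)
        self._hashes[digest] = label
        return digest

    def is_flagged(self, content: bytes) -> bool:
        return self.fingerprint(content) in self._hashes


def screen_upload(db: SharedHashDatabase, content: bytes) -> str:
    # Any participating platform can check an upload against the shared hashes.
    return "blocked" if db.is_flagged(content) else "accepted"


db = SharedHashDatabase()
db.contribute(b"bytes of a flagged video", label="flagged by platform A")

result_same = screen_upload(db, b"bytes of a flagged video")
result_edited = screen_upload(db, b"bytes of a flagged video, re-encoded")
```

In this sketch the identical file is blocked, while the altered copy is accepted because an exact hash matches only identical bytes. In both cases the lookup compares fingerprints only and carries no information about the context in which the content appears.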

GIFCT’s AI tools cannot distinguish context, Douek said. Definitions of “terrorism” and “glorification of terrorism” are highly politicized, she added. For example, Islamic (but not right-wing) terrorism is the main target, and what constitutes glorification of terrorism is not clear. There are also no remedial cross-platform mechanisms. Douek noted that the centralized database concept arose in the context of child abuse materials and that there are calls to use it to combat coordinated inauthentic behavior. “As we go up the chain with what I call content cartel creep, the category of content gets more nebulous and harder to define,” she said.

Laws and Customs Across Countries

Keller asked how internet companies deal with the diversity of laws and customs among countries. Kaye offered two perspectives. First, even when companies can regulate without government interference, their self-governance should consider the rights of people. Second, when governments make demands inconsistent with human rights, companies’ reliance on terms of service may be a weak response. Framing responses in human rights terms may be more effective. Douek urged attention to India, where the government is serving platforms with takedown orders. The platforms are complying, in large part, to protect their employees from facing severe repercussions.

Schaake observed that authoritarian regimes do not want to cede control. Balkanization cannot be prevented, she said, so the main question is how democratic countries should respond. “The main question now is what democratic countries are going to do, and are they going to be able to work together at sufficient scale.”

__________________

22 For further discussion, see Kaye, D. 2013. State Execution of the International Covenant on Civil and Political Rights, 3 U.C. Irvine L. Rev. 95.

23 Kaye cited Singapore’s Protection from Online Falsehoods and Manipulation Act, a disinformation law that gives the government power to take down online content without significant legal constraints.

Remedies and Predictions

Workshop planning committee chair Miller observed that some regimes call for court orders to address content issues, but that adjudicating through the legal system takes a long time. Von Hoboken said that European courts play a role as backstop, but notice-and-action procedures are the first line of action. Keller added that some countries are more amenable to relying on regulators than courts. No country requires court orders for everything, especially in cases of particularly dangerous and obviously illegal content, but more difficult cases go to court.

Workshop planning committee member Minow asked about potential future actions globally. Schaake said she sees growing recognition that the free market cannot sort things out on its own. “I am hopeful with the right kind of cooperation among democratic governments, there will be emphasis on protecting public interest and democracy,” she said. “There is not much time, and there is a huge job to be done.”

PANEL 6: CONGRESSIONAL APPROACHES TO SECTION 230 AND RELATED LEGISLATION

Congressional proposals and other legislation related to Section 230 were considered in a discussion between Vladeck and two members of the Congressional Research Service (CRS): Jason Gallo (Science and Technology Policy Section) and Valerie Brannon (American Law Division).24

Gallo noted that discussions about Section 230 relate to types of content, algorithmic amplification, moderation practices, and large companies’ potential monopoly powers. When contemplating legislative action, he explained, Congress considers: (1) Is action necessary to address the perceived problem?; (2) How is the problem defined?; (3) Who should bear responsibility?; (4) What alternative mechanisms are available?; and (5) What are potential unintended consequences of action or inaction?

The almost 40 bills proposed to amend or repeal Section 230 during the 116th and 117th Congresses reflect diverse goals. One category would amend Section 230 by adding new exceptions to immunity. A second would shift to notice-based liability regimes. A third would impose conditions or obligations on platforms’ immunity. A fourth would otherwise limit takedown immunity by, for instance, specifying what content is subject to takedown. Finally, some proposals would repeal Section 230.

First Amendment Considerations

In response to a question from Vladeck regarding First Amendment issues arising from proposed revisions to Section 230, Brannon said that the Supreme Court has recognized that private entities that provide forums for private speech have some First Amendment rights with regard to decisions on what they transmit. Lower courts extended this principle to websites and search engines. Section 230 does not prohibit or require certain speech or content, she clarified; it is an immunity provision with some conditions. A tricky question relates to whether a specific Congressional proposal conditions the benefits of Section 230 on constitutionally protected speech or on conduct. If on speech, Brannon said, a further question is whether the proposal is content-neutral, noting that the Supreme Court has ruled against “viewpoint discrimination.” Several proposals condition immunity on viewpoint-neutral content, but not all define what “viewpoint-neutral” means. If a bill were enacted and challenged in court, courts would probably turn to First Amendment case law, but Brannon noted that a lack of case law around private actors makes predictions about a proposal’s constitutionality difficult.

Technology in Legislative Proposals

Vladeck asked about treatment of algorithmic amplification in legislative proposals. Gallo pointed to definitional difficulties: Where do you draw the line, for example, when algorithms range from user-selected preferences to complex combinations of technologies and user input?

Treating Platforms Like Other Media

Vladeck asked about treating platforms as common carriers. Brannon explained that broadcast media are treated differently than online media under Federal Communications Commission (FCC) regulations. This is based on the view that broadcast is a scarce, government-allocated resource. Supreme Court decisions have adopted this perspective, including the 1997 Reno v. ACLU decision that stated the internet is unlike broadcast media.25 In a more recent case in the District of Columbia Circuit Court,26 FCC’s adoption of net neutrality regulations for broadband providers was challenged on First Amendment grounds. The providers argued that the regulations compelled them to transmit speech. The court rejected the First Amendment challenge on the grounds that the broadband providers were mere conduits of the speech of others, and not engaging in editorial discretion. The court noted that the free speech analysis might be different if editorial discretion were implicated. Gallo suggested that treating platforms as common carriers could require platforms to host any content that is legal and neuter their ability to moderate content.

__________________

24 For further background, see two recent CRS reports: Social Media: Misinformation and Content Moderation Issues for Congress (Report R46662) and Section 230: An Overview (R46751), both available at https://crsreports.congress.gov.

Other suggestions relate to altering Section 230 for only larger companies, Vladeck observed. Gallo pointed to definitional and measurement issues: for example, is size determined by number and/or locations of users, IP addresses, revenues, employees, or other measures, and who would determine the size? Also, if a post goes viral, a platform could grow much larger temporarily. Brannon noted the size issue has triggered heightened judicial scrutiny in the courts, but case law on the issue is currently unsettled.

Gallo and Brannon agreed the amount of legislative activity in the 116th and 117th Congresses points to a groundswell of interest, even if the bills do not share the same goals or scope.

PANEL 7: PROPOSALS TO REPEAL, RETAIN, AND MODIFY SECTION 230

The final workshop panel, moderated by workshop planning committee member Benjamin Wittes (The Brookings Institution), considered a range of potential responses to the issues raised by Section 230.

Emma Llansó (Center for Democracy and Technology) pointed to scope creep and other issues with FOSTA-SESTA legislation to illustrate why Section 230 should remain as is. Eric Goldman (Santa Clara University School of Law) called for a better understanding of problems that reform efforts aim to solve. Until then, he said, Section 230 represents the best option for balancing competing interests. Matthew Perault (Duke University) argued for reforms that: (1) modernize federal criminal law for the digital age; (2) hold content creators accountable; (3) stimulate the design of products to improve reporting flows and responses; and (4) provide more transparency to understand the overall ecosystem. Kate Sheerin (Google) said that the debate tends to focus on the latest controversy and not on broad issues. She warned about unintended consequences and maintained that Section 230 allows for creativity by lowering the bar to entry. Andy O’Connell (Facebook) said that it is time to update laws that govern the internet, with requirements for how companies deal with unlawful and harmful content proportional to their size and independent oversight by a multi-stakeholder body. Alex Feerst (Neuralink) favored changes that support dynamic voluntary frameworks and incentivize transparency. Proportionality principles that allow for investment and innovation are also important, he said. He also noted the need to distinguish between accuracy and agreement with one’s own views in content judgments. Ellen Goodman (Rutgers University Law School) noted that platforms have roles not contemplated under Section 230. The courts have been generous to platforms, she said, and self-regulation has not worked to address harms. In her view, Section 230 has established a “culture of immunity.” She supported an expanded role for the courts, more transparency and access to data for researchers and auditors, harms-based approaches for advertising and micro-targeting, and state-level redress bodies.

Problems Within and Beyond Section 230

Goldman noted that many objections are levied against Section 230, but that it is not clear how reforms would solve problems attributed to the provision. Llansó underscored Section 230’s focus on intermediary liability, noting that the objectionable content may still be lawful speech. That foundational principle often gets glossed over, she said. To combat online harassment, she called for victims to have better access to the justice system. Perault pointed out that harassment, terrorism, and disinformation occur elsewhere (e.g., in telecommunication). A distinction with internet-based technology, Goodman said, is that harassment is networked. Access to justice against an individual harasser is insufficient, she said, and platform cooperation is essential. She expressed hope that well-crafted reforms could create a “culture of moral hazards” among the platforms that would motivate them to invest in addressing problematic issues. Feerst cautioned against reforms that lead to overzealous removal of content. Instead, he said, greater transparency can improve understanding and increase trust.

Offline Analogues to Social Media Platforms

Panelists did not favor treating social media sites as common carriers. Perault said that doing so would discourage innovation. Goldman suggested that advertisers would abandon the current business model and that this would result in subscription-only access or other reconfigurations. Llansó and Goodman commented that the common carrier concept conflates concerns about social media platforms with a desire for increased competition. When Wittes noted that Google and some other platforms operate at multiple levels, including taking on common carrier functions such as providing “last-mile access” for internet service delivery, Sheerin said that Section 230 allows flexibility across different activities.

Panelists observed that the absence of a comparable analogy highlights the need for care when considering reforms. Llansó called for more work on best practices for technology companies. It is important to understand the realm of technological possibility, she added, noting that some arguments falsely assume a technology exists to solve a particular problem. Feerst noted the value of consulting with younger technologists, lawyers, and others who grew up with the technology and have a “native sense” of the ways in which today’s platforms do not conform to prior communication models.

Impact of Section 230 Repeal

Wittes asked whether repealing Section 230 would lead to more developed common law that would produce workable policies or end the internet as it exists today. O’Connell suggested that Facebook would enforce policies but that there would be more litigation and less competition. Sheerin noted that large companies could probably handle the repeal, but small and mid-sized businesses might not. Feerst predicted that a repeal would lead to over-removal of content because of the threat of lawsuits and that the less empowered would have less of a voice. Goldman said that services would exit the industry or shift from user-generated content to professionally produced content.

When asked about preferred areas of reform, if not Section 230, Llansó supported baseline consumer privacy legislation like that in Europe and other countries. Perault proposed reforming federal criminal laws related to problematic speech. Sheerin called for greater transparency measures, and Goodman suggested that advertisers be empowered so that they know what they are getting from advertising dollars.

CONCLUDING REMARKS FROM THE WORKSHOP PLANNING COMMITTEE

In the final session, workshop planning committee members shared their takeaways from the workshop.

Felten emphasized that the variety of content and harms means that there is no one-size-fits-all solution to address the issues raised during the workshop. He noted that presenters described how company content policies are made internally, but that most companies outsource their execution. Overly focusing on the few “hard cases” could interfere with a system that works for most other cases, he said. He suggested applying lessons learned in the privacy field to the trust-and-safety profession. Felten said that automated approaches to content moderation work fairly well, but that a complex symbiosis between humans and machines is necessary at scale.

Minow reflected on the “do-no-harm” messages expressed by many speakers. She identified several approaches to Section 230: non-regulatory industry activities; regulation, enforcement, and incentives unrelated to Section 230; and Section 230 modification. She suggested that doing nothing did not seem to be a viable option, but that singling out Section 230 does not get at the real problem or a solution. She shared two additional takeaways: (1) platforms responded to COVID-19 misinformation proactively and lessons learned could be applied to other problems; and (2) the United States must cooperate with other countries to deal with the issue of content moderation. “If we haven’t figured out a way as the United States to cooperate with other nations,” she said, “we will be regulated by other nations.”

The diversity of harms and tools to address them struck Silbey as noteworthy. She referred to recent social science literature suggesting that too much focus on outliers results in incorrectly declaring an entire apparatus a failure. She raised what may be an unpopular idea: a new independent regulatory agency for the internet. She observed that regulation in a centralized agency has helped make other industries safe and that issues related to internet scale and speed could be addressed through coordinated effort. Finally, she emphasized the need to create new norms of behavior and practices.

To Vladeck, “The immunity bargain in Section 230 was the original sin, and we are paying for it.” He suggested that common law might have been more effective in dealing with the industry, rather than freezing regulation in time. He agreed that a diversity of harms makes finding solutions difficult. He acknowledged criticism of the FTC for not “taming the beast,” but noted that the FTC has limited resources to enforce a multitude of laws. He agreed that many serious problems posed by Section 230 could be addressed with collateral legislation, such as privacy laws. He suggested that pressure be exerted to make industry step up in meaningful ways and reflected on the diverse legislative approaches that have been proposed in light of concerns about Section 230. While there is no gravitational center now, Vladeck said that continued concern about Section 230 suggests that there will be legislation in the future.

Keller said that international examples provide models from which to learn. These include clear rules for notice-and-takedown systems. “Pseudo law” developed by companies with questionable public oversight, such as the GIFCT, has proliferated, but legislative change takes time (as was seen in the seven-year process involved in the development of legislation in Brazil). She noted that the First Amendment means that some solutions developed elsewhere would not work in the United States.

Wittes said that Section 230 legislation is unlikely in the short term. A uniting theme is discontent, but “the twain will not meet” between differences in content moderation and other alternatives. To reach a consensus, he said, it is important to navigate the space between those who legitimately want the law to remain as it is and those who want change.

DISCLAIMER: This Proceedings of a Workshop—in Brief has been prepared by Paula Whitacre and Anita Eisenstadt as a factual summary of what occurred at the workshop. The committee’s role was limited to planning the event. The statements made are those of the individual workshop participants and do not necessarily represent the views of all participants, the planning committee, the Committee on Science, Technology, and Law, or the National Academies.

REVIEWERS: To ensure that it meets institutional standards for quality and objectivity, this Proceedings of a Workshop—in Brief was reviewed by SINAN ARAL, Massachusetts Institute of Technology; DAPHNE KELLER, Stanford University; and MARTHA MINOW, Harvard University. MARILYN BAKER, National Academies, served as the review coordinator.

Planning Committee: JUDITH A. MILLER (Chair) (Independent consultant); EDWARD W. FELTEN (NAE) (Princeton University); DAPHNE KELLER (Stanford University); MARTHA MINOW (Harvard Law School); SUSAN S. SILBEY (Massachusetts Institute of Technology); DAVID C. VLADECK (Georgetown University Law Center); BENJAMIN WITTES (The Brookings Institution).

National Academies of Sciences, Engineering, and Medicine Staff: ANNE-MARIE MAZZA, Senior Director; ANITA EISENSTADT, Responsible Staff Officer; STEVEN KENDALL, Program Officer; SOPHIE BILLINGE, Senior Program Assistant; and DOMINIC LOBUGLIO, Senior Program Assistant.

SPONSORS: Support for this activity was provided by the John S. and James L. Knight Foundation and the Gordon and Betty Moore Foundation.

For additional information about the Planning Committee for Section 230 Protections: Can Legal Revisions or Novel Technologies Limit Online Misinformation and Abuse?, visit https://www.nationalacademies.org/our-work/section-230-protections-can-legal-revisions-or-novel-technologies-limit-online-misinformation-and-abuse-a-workshop.

Suggested citation: National Academies of Sciences, Engineering, and Medicine. 2021. Section 230 Protections: Can Legal Revisions or Novel Technologies Limit Online Misinformation and Abuse?: Proceedings of a Workshop—in Brief. Washington, DC: The National Academies Press. https://doi.org/10.17226/26280.

Policy and Global Affairs

Copyright 2021 by the National Academy of Sciences. All rights reserved.