have increased overall safety and system efficiency (FAA, 1996). Reviews of cockpit automation such as those appearing in Aviation Week and Space Technology in the fall of 1995 note problems or "glitches" in the human interface, but none of the parties involved in the flight deck dialog (e.g., pilots, airlines, airframe manufacturers, or regulatory bodies) suggests that these glitches necessitate a return to conventional technology. The belief is that digital technology, despite problems, is often beneficial, and, with evaluation and modification, will continue to improve.

ANALYSIS

Current Situation

In many respects, the discussion in NUREG-0711 (USNRC, 1994) summarizes the current situation quite well. Consider the following excerpts:

While the use of advanced technology is generally considered to enhance system performance, computer-based operator interfaces also have the potential to negatively affect human performance, spawn new types of human error, and reduce human reliability. … Despite the rapidly increasing utilization of advanced HSI technology in complex high-reliability systems such as NPP [nuclear power plants] and civilian aircraft, there is a broad consensus that the knowledge base for understanding the effects of this technology on human performance and system safety is in need of further research. … In the past, the [USNRC] staff has relied heavily on the use of HFE [human factors engineering] guidelines to support the identification of potential safety issues. … For conventional plants, the NRC HSI [human-system interaction] reviews rest heavily on an evaluation of the physical aspect of the HSI using HFE guidelines such as NUREG-0700. … Relative to the guidelines available for traditional hardware interfaces, the guidelines available for software based interfaces have a considerably weaker research base and have not been well tested and validated through many years of design application. … [B]ecause of the nature of advanced human-system interfaces, a good system cannot be designed by guidelines alone. … Reviews of HSIs should extend beyond HFE guideline evaluations and should include a variety of assessment techniques, such as validations of the fully integrated systems under realistic, dynamic conditions using experienced, trained operators performing the type of tasks the HSI has been designed for. [Pp. 1-2–1-4]

Currently, and for the foreseeable future, ensuring effective design with respect to human factors of digital I&C applications cannot rest on guidelines. Guidelines are frequently well meaning but vague, e.g., "do not overload the operator with too much data." Owing to rapidly emerging computer technologies and newly conducted studies, information in guidelines is sometimes dated or obsolete. Finally, and perhaps most importantly, guidelines typically give little definitive guidance on the more serious human factors problems, e.g., cognitive workload, interacting factors in a dynamic application (O'Hara, 1994), or classic human factors issues common to many computer applications in safety-critical systems, e.g., mode error, information overload, and the keyhole effect (Woods, 1992).

In some applications (e.g., analog controls and displays), adherence to standards specified in guidelines often defines acceptance criteria for a design (O'Hara, 1994). For digital applications, however, hard, generally applicable, criteria will be a long time in coming, if they come at all. It is important to note that NUREG-0700 Rev. 1 (USNRC, 1995) does not prescribe a set of sufficient criteria for operator interfaces using advanced human-computer interaction technologies. Moreover, no other safety-critical industry has adopted well-defined or crisp criteria. Representatives of the Electric Power Research Institute told the committee, for example, that their organization had no plans to more completely formalize human factors acceptance criteria for advanced technology control rooms.

Thus, design should adhere to guidelines, where trusted guidance is available. It is necessary to go beyond guidelines, however, to ensure a safe design.

The Limits of Guidelines

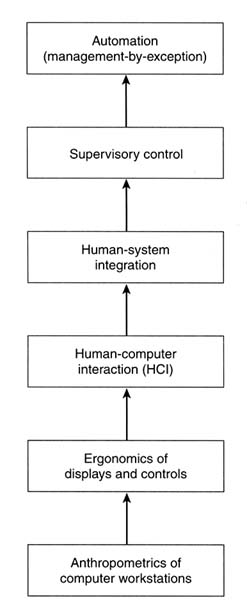

As indicated in the discussion of guidelines in NUREG-0711, there are many more issues than answers in the design of computer-based operator interfaces for complex dynamic systems. Figure 7-2 depicts a hierarchy of issues related to the human factors of advanced technologies for operators of nuclear power plants. The amount of existing knowledge is inversely related to the levels of the hierarchy. Thus, the most abundant, most generally accepted, and most widely available design knowledge is for lower-level issues. Moving up the hierarchy, design knowledge is less detailed and more conceptual, and design experience is not necessarily applicable across a variety of applications (e.g., office automation to control rooms).

Human Factor Issues

Anthropometrics of Computer Workstations. At the lowest level, anthropometry (the science of establishing the proper sizes of equipment and space), there is a good deal of knowledge. As with guidelines for conventional displays and controls, guidance on the hardware associated with computer-based workstations is not subject to widespread debate. There are standards and recommendations for computer-based workstations that specify working levels, desk height, foot rests, document holders, and viewing distances (e.g., Cakir et al., 1980; Cardosi and Murphy, 1995). NUREG-0700 Rev. 1 (USNRC, 1995) includes many of these standards in its extensive section on workplace design.

Ergonomics of Displays and Controls. At the next level are issues that specify the characteristics of computer-based controls and displays. Issues include font size, use of color, input devices, and types of displays (e.g., visual, audio). Knowledge here blends commonly accepted guidelines with emerging research results that are often task- and/or user-dependent. For example, despite much dispute when first introduced in the late 1970s, a computer input device, called a mouse, was empirically shown to produce performance superior to available alternatives for pointing tasks. Today, mice are routinely packaged with computer workstation hardware. On the other hand, the number of buttons on a mouse still varies from one to three. Recommendations for the "best" design vary depending on user, task, and designer preference.

FIGURE 7-2 Human factors issues in the control of safety critical systems.

The issue of the ideal or best number of buttons on a mouse illustrates the state of a great deal of human factors engineering knowledge: there is no single, definitive best answer. In some cases, within some range, the characteristics do not make a difference and users can readily adapt to the characteristics. In other situations, an acceptable solution is task- and user-dependent. Such techniques as trial-and-error evaluations or mock-ups are needed to evaluate proposed designs. NUREG-0700 Rev. 1 contains most standard guidelines in this area.

Human-Computer Interaction. The third level, human-computer interaction, is the area to which the most study has been devoted. This area receives widespread academic and industry attention. Most guidelines address this level of human factors consideration. Issues include style of windows, windows management, dialog types, and menu styles. The guidelines address application-free, or generic, characteristics of human-computer interfaces. Most guidelines for human-computer interaction specify attributes that are likely to be desired, that may be desired, or that must be evaluated in the context of the application (see Cardosi and Murphy, 1995). Even at this level, there is a wide range of acceptable characteristics and no indication that a single, best design strategy is emerging. Following routine human-computer interaction style guidelines, this level of human factors issues can be adequately, though not optimally, addressed.

Combined, NUREG-0711 and NUREG-0700 Rev. 1 summarize most conventional wisdom in this area. NUREG-0711 makes a particularly important contribution with its discussion of the limitations of current guidance and state-of-the-art of design knowledge.

Human-System Integration. The transition to the fourth level of consideration, human-system integration, marks the point where many serious issues concerning human capabilities and limitations and the attributes of computer-based workstations arise. This is also the level where there are many more questions than definitive answers. At this time, the majority of issues arise, and must be addressed, in the context of the application-specific tasks for which the computer interface will be used.

Early issues, still not adequately resolved, include "getting lost" and the keyhole effect (Woods, 1984), gulfs of evaluation and execution (Hutchins et al., 1986), and the inability of designers to aggregate and abstract information meaningful to operator decision making from the vast amount of data available from computer-based control systems. Essentially, issues at this level concern the semantics of the computer interface: how to design information displays and system controls that enhance human capabilities and compensate for human limitations (Rasmussen and Goodstein, 1988).

Getting lost describes the phenomenon in which a user, or operator, becomes lost in a wide and deep forest of display pages (Woods, 1984). Empirical research shows that some operators use information suboptimally in order to reduce the number of transitions among display pages (Mitchell and Miller, 1986). When issues of across-display information processing are ignored, the computer screen becomes a serial data presentation medium in which the user has a keyhole through which data are observed. The limitations on short-term memory suggest that a keyhole view can severely limit information processing and increase cognitive workload, especially in comparison to the parallel displays common in control rooms using conventional analog technology.

Gulfs of evaluation and execution describe the conceptual distance between decisions or actions that an operator must undertake and the features of the interface that are available to carry them out. The greater the distance, the less desirable the interface (Hutchins et al., 1986; Norman, 1988). The gulfs describe attributes of a design that affect cognitive workload. The gulf of evaluation characterizes the difficulty with a particular design as a user goes from perceiving data about the system to making a situation assessment or a decision to make a change to the system. The gulf of execution characterizes the difficulty with a particular design as a user goes from forming an intention to make a change to the system to actually executing the change. Display characteristics such as data displayed at too low a level or decisions that require the operator to access several display pages sequentially, extracting and integrating data along the way, are likely to create a large gulf of evaluation. Likewise, control procedures that are sequential, complex, or require a large amount of low-level input from the operator are likely to create a large gulf of execution.

Finally, and particularly true of control rooms in which literally thousands of data items are potentially available, the issue of defining information—that is, the useful part of data—is a serious concern. The keyhole effect and getting lost are due to the vast number of display pages that result when each sensed datum is presented on one or more display pages. Rasmussen (1986) characterizes many computer-based displays provided in control rooms as representative of one-sensor/one-display design. Reminiscent of analog displays, and because displays may be used for many different purposes, data are presented at the lowest level of detail possible—typically the sensor level (Rasmussen, 1986). There is rarely an effort to analyze the information and control needs for particular operator tasks or to display information at an appropriate level of aggregation and abstraction given the current system state. Research has shown that displays tailored to operator activities based on models of the operator can significantly enhance operator performance when compared to conventional designs (e.g., Mitchell and Saisi, 1987; Thurman and Mitchell, 1995). There is no consensus, however, on the best model; see, for example, Vicente and Rasmussen (1992), who propose ecological interface design based on Rasmussen's abstraction hierarchy as an alternative to Mitchell's operator function model.

A good deal of conventional wisdom characterizing good human-system integration is available with the goal of minimizing the cognitive load associated with information extraction, decision making, and command execution in complex dynamic systems. Woods et al. (1994), for example, propose the concept of visual momentum to improve human-computer integration. Hutchins et al. (1986) use the concept of directness to bridge the gulfs of evaluation and execution, e.g., direct manipulation to support display and control. Others propose system and task models to organize, group, and integrate data items and sets of display pages (e.g., Kirlik et al., 1994; Mitchell, 1996; Vicente and Rasmussen, 1992).

Such concepts are well understood with broad agreement at the highest levels. This knowledge, however, does not, at this time, translate to definitive design guidelines or acceptance criteria. For example, there is common agreement that computer-based displays should not raise the level of required problem-solving behavior as defined by Rasmussen's SRK (skills-rules-knowledge) problem-solving paradigm (Rasmussen, 1986), yet agreement for how to design such displays does not exist. Thus, in part, design of operator workstations is an art requiring the use of current knowledge in conjunction with rigorous evaluation involving representative users and tasks.

Supervisory Control. Introduced by Sheridan (1976), the term "supervisory control" characterizes the change in an operator's role from manual controller to monitor, supervising one or more computer-based control systems. The advent of supervisory control raises many concerns about human performance. Changing the operator's role to that of a predominantly passive monitor carrying out occasional interventions is likely to tax human capabilities in an area where they are already quite weak (Wickens, 1984). Specific issues include automation complacency, out-of-the-loop familiarity, and a loss of situation awareness.

Keeping the operator in the loop has been addressed successfully by some researchers, using, for example, human-computer interaction technology to re-engage the operator in the predominantly passive monitoring and situation assessment tasks (Thurman, 1995). Most operational designs, however, address the out-of-the-loop issue by periodically requiring the operator to acknowledge the correctness of the computer's proposed solution path, despite research wisdom to the contrary (e.g., Roth et al., 1987). This design feature is similar in principle to a software-based deadman's switch: it guarantees that the operator is alive but not necessarily cognizant.

Concern about automation complacency is widespread in aviation applications where the ability of pilots to quickly detect and correct problems with computer-based navigation systems is essential for aircraft safety (Wiener, 1989). Yet, to date, there are no agreed-upon design methods to ensure that operators maintain effective vigilance over the automation or computer-based controls for which they are responsible. As with the concepts of visual momentum and direct engagement, there is widespread agreement that keeping the operator in the loop and watchful of computer-based operations is an important goal (Sheridan, 1992), but there is currently no consensus as to how to achieve it.

Over the last 20 years, as supervisory control has become the dominant paradigm, computer-based workstations have begun to incorporate a variety of operator aids, including intelligent displays, electronic checklists, and knowledge-based advisory systems. Maintaining a stable, up-to-date knowledge base about nominal and off-nominal operations to support operator decision making and problem solving is very appealing. To date, however, research has not produced designs or design methodologies that consistently live up to promised potential. For example, although some research has shown that some intelligent display designs enhance operator performance (e.g., Mitchell and Saisi, 1987; Thurman and Mitchell, 1994), studies of other designs that sought to facilitate performance with direct perception (Kirlik et al., 1994) or direct engagement (Benson et al., 1992; Pawlowski, 1990) found that, although the design helped during training, it did not enhance the performance of a trained operator.

The design of fault-tolerant systems is a comparable issue. A fault- or error-tolerant system is a system in which a computer-based aid compensates for human error (Hollnagel and Woods, 1993; Morris and Rouse, 1985; Uhrig and Carter, 1993). As with displays, there are mixed results concerning the effectiveness of specific designs. When empirically evaluated, some aids had no positive effect (e.g., Knaeuper and Morris, 1984; Zinser and Henneman, 1988), whereas some designs for operator assistants resulted in human-computer teams that were as effective as teams of two human operators (Bushman et al., 1993).

Electronic checklists or procedures for operators are another popular concept. Such checklists or procedures are technically easy to implement and reduce the overhead associated with maintaining up-to-date paper versions of procedures and checklists. The Boeing 777 flight deck includes electronic checklists and several European nuclear plants are evaluating them (Turinsky et al., 1991).

Two recent studies demonstrate the mixed results often associated with this concept. In a full motion flight simulator at NASA's Ames Research Center, a study showed that pilots made more mistakes with computer-based checklists and "smart" checklists than with conventional paper versions (Palmer and Degani, 1991). A study in a nuclear power plant control room context also had mixed results. The experiment consisted of eight teams of two licensed reactor operators (one person in each team was a senior operator) who controlled a part-task simulator called the Pressurized Water Research Facility in North Carolina State University's Department of Nuclear Engineering. The data showed that, during accident scenarios, while computer-based procedures resulted in fewer errors, time to initiate a response was significantly longer with the computer-based as compared to traditional paper-based procedures (Converse, 1995).

NUREG-0700 Rev. 1 (USNRC, 1995) devotes a section of its guidelines to analysis and decision aids. Reflecting the content of other guidelines, advice is sometimes limited or vague. For example, Guideline 5.1-6 recommends that "user-KBS [knowledge-based system] dialog should be flexible in terms of the type and sequencing of user input [p. 5-1]." Acknowledging the importance of the more general, but difficult to specify, issues, NUREG-0700 Rev. 1 includes as an appendix a list and discussion of 18 design principles. One of these general principles states that the "operator's role should consist of purposeful and meaningful tasks that enable personnel to maintain familiarity with the plant and maintain a level of workload that is not so high as to negatively affect performance, but sufficient to maintain vigilance [p. A-2]." The document notes that these principles provide the underpinning for many of the more specific guidelines contained in the body of the report.

Automation (Management-by-Exception). In the continuum from manual control to full automation, the human operator is increasingly removed from system control, and in-the-loop familiarity fades. In some systems, control will be fully automatic; anomalies will cause the system to fail safe, and a human will be notified and eventually repair the automation or mitigate the problems with the controlled system. "Lights out" automation in factories and ongoing experiments in aerospace systems are current examples (Brann et al., 1996). The Airbus A320, in which an electronic envelope overrides pilot inputs, is a step in this direction.

There are numerous human performance issues associated with fully automatic control systems in which the operator is no longer in the control loop. The current debate typically centers on how to define and design automation either for a supervisory controller or for an automation manager. Can automation in which the human is a periodic manager ever be considered human-centered automation? If so, what design characteristics must it have? Billings (1991), for example, suggests that the design must explicitly support mutual intent inferencing by both computer and human agents in order to maintain understanding on the part of the human. Or, does the design facilitate system recovery by a human engaged in fault management rather than control? NUREG-0711 acknowledges both the possibility of all of these roles for human operators in advanced control rooms and the lack of any consensus on if or how to design human interfaces to effectively support them.

Reviewing Systems for Effective Human-System Interaction

Human-system interaction reviews should proceed carefully and in a series of steps. First, guidelines, where applicable, should be consulted. As noted by NUREG-0711 (USNRC, 1994), however, many of the most important human performance issues associated with advanced interface technologies are not adequately covered by current guidance.

Yet to wait for the research community to derive definitive guidance would forfeit many of the advantages of emerging digital technology, both for the overall system and for the human operator. An alternative, and one pursued in almost all other industries, is to design, prototype, and evaluate candidate applications.

A review should ensure that a design is based on a detailed specification of the role and activities of the human operators. At the beginning and throughout the design process, a detailed specification of the functions of the human operator will help to increase confidence that the design process produces a successful product. Given the importance of the operator to system safety and effectiveness, operator functions should be as well and as rigorously specified as the hardware and software functions of the system. Cognitive models of operator functions and system representations offer one way to gather the information essential to create a design that effectively anticipates operator requirements, capabilities, and limitations (Hollnagel and Woods, 1983; Mitchell, 1996; Rasmussen et al., 1994). Designs based on models of human-system interaction have been empirically shown to enhance performance and reduce errors (e.g., Mitchell and Saisi, 1987; Thurman, 1995; Vicente et al., 1995).

In conjunction with cognitive models of operator activities, designers need to intermittently assess proposed features of the human-system interface with respect to the set of classic design deficiencies. For example, if modes are used, does the interface give appropriate feedback to allow the operator to rapidly understand which mode is currently active? How many displayed items and separate display pages must be called and integrated to make an assessment? Is visual momentum lost? Does the organization and access to different display pages provide a keyhole through which the operator sees only part of the system, potentially overlooking an important state, state change, or trend?
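One of the review questions above, how many separate display pages must be called and integrated to make an assessment, can be estimated from a design specification before any prototype exists. The following is a minimal sketch in Python, not a method prescribed by NUREG-0711 or NUREG-0700 Rev. 1. The function name, the data item names, and the page names are all hypothetical, and the sketch assumes that each data item appears on exactly one display page.

```python
from typing import Dict, Iterable, Set


def pages_to_integrate(task_items: Iterable[str],
                       item_to_page: Dict[str, str]) -> Set[str]:
    """Return the distinct display pages an operator must call up
    to see every data item needed for one assessment task.

    Assumes (for illustration) that each item lives on exactly one page.
    """
    items = list(task_items)
    missing = [item for item in items if item not in item_to_page]
    if missing:
        # A task that needs data shown on no page is itself a design gap.
        raise KeyError(f"items not shown on any page: {missing}")
    return {item_to_page[item] for item in items}


# Hypothetical assessment task: judge whether a steam generator is being
# fed adequately. All item and page names below are invented.
task = ["sg_level", "feedwater_flow", "steam_flow", "rcs_pressure"]

# One-sensor/one-display style layout: items scattered by subsystem.
sensor_layout = {
    "sg_level": "SG-1",
    "feedwater_flow": "FW-2",
    "steam_flow": "MS-3",
    "rcs_pressure": "RCS-1",
}

# Task-oriented layout: the same items grouped on one overview page.
task_layout = {item: "SG-FEED-OVERVIEW" for item in task}

sensor_pages = pages_to_integrate(task, sensor_layout)
task_pages = pages_to_integrate(task, task_layout)
print(len(sensor_pages), "pages vs", len(task_pages), "page")
```

A count of this kind only flags candidate keyhole problems. It says nothing about whether operators actually perform better with the grouped layout, which remains a question for performance-based evaluation.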

Finally, proposed designs must be evaluated in a performance-based manner. Performance-based evaluations should include a realistic task environment, statistically testable performance data, and subjects who are actual users.

The decreasing cost of emerging digital technologies allows the use of part-task simulators in which high-fidelity dynamic mock-ups of a proposed design can be implemented and rigorously evaluated. Other industries make extensive use of workstation-based part-task simulators (e.g., aviation); results are found to scale quite well to the systems as a whole (e.g., Gopher et al., 1994).

The prevalence of concepts such as user-centered design (Norman, 1988) typically means that all designers know that they must involve users early in the design process. Designers often report that users are consulted at every step. Indeed, the committee heard of design evaluations in which nuclear power plant operators joined the design team to tailor display attributes for operator consoles in advanced reactors. While user input, preference, and acceptance are important issues, they do not take the place of rigorous performance-based evaluation. Empirical evaluations demonstrate repeatedly that well-intended designs and/or user preferences sometimes fail to have the anticipated beneficial effects (Andre and Wickens, 1995). Particularly in areas that are changing as rapidly as that of human-computer interaction technologies, rigorous, statistical evaluations, over and above surveys of user preferences, are essential to ensure that the desired effect is in fact achieved.
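As an illustration of what "statistically testable performance data" can look like, the sketch below computes a paired t statistic for a within-subjects comparison in which the same operator teams use two interface designs. It is a minimal example under stated assumptions, not the analysis used in any study cited here. The response times are invented for illustration, the function name is hypothetical, and a real evaluation would also need to address counterbalancing, scenario selection, and error measures.

```python
import math
import statistics
from typing import Sequence, Tuple


def paired_t(baseline: Sequence[float],
             candidate: Sequence[float]) -> Tuple[float, int]:
    """Paired t statistic and degrees of freedom for candidate - baseline.

    Each position in the two sequences must refer to the same team.
    """
    if len(baseline) != len(candidate) or len(baseline) < 2:
        raise ValueError("need two equal-length samples with n >= 2")
    diffs = [c - b for b, c in zip(baseline, candidate)]
    sd = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = statistics.mean(diffs) / (sd / math.sqrt(len(diffs)))
    return t, len(diffs) - 1


# Invented data: seconds to initiate a response, eight hypothetical teams.
paper_procedures = [42, 38, 51, 45, 40, 47, 39, 44]
computer_procedures = [48, 41, 55, 52, 43, 50, 46, 47]

t_stat, df = paired_t(paper_procedures, computer_procedures)

# Two-tailed critical value for alpha = 0.05 and df = 7, from a standard
# t table; valid only for this sample size.
T_CRIT_DF7 = 2.365
significant = abs(t_stat) > T_CRIT_DF7
print(f"t({df}) = {t_stat:.2f}, significant at 0.05: {significant}")
```

The point of the sketch is the contrast with a preference survey: the conclusion rests on measured performance from the same operators under both designs, so a design that users prefer but that slows their response would be detected.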

The term "performance-based evaluation" is chosen to distinguish between studies of usability and studies of utility. Usability studies are often not rigorous enough to generate behavioral data that can be analyzed statistically. Usability studies are conducted intermittently through various phases of the design process, iterating through the "design-evaluate-design loop until the planned levels [of usability] are achieved" (Preece, 1994). Usability studies are also a mechanism for soliciting user input and advice. Typically such studies are somewhat informal, and their purpose is to ensure that the interactions the designer intended can be carried out by users. Thus, usability studies attempt to answer the question: Is the design "usable" in the ways expected during the design specification? Such studies, however, do not necessarily ensure that a design is useful, i.e., an improvement over what it replaces. Particularly with new technology and new strategies for design, usability studies, as normally conducted, do not go far enough. They fail to evaluate the utility of the output of the design process (the product) to ensure, via measurable human performance, that the results make a value-added contribution to the operator interface.

Moreover, it is essential to conduct evaluations with actual users and representative tasks. Much of the knowledge in human factors is known to be applicable to only certain classes of tasks and users (Cardosi and Murphy, 1995). NUREG-0711 notes that one weakness of guideline-based design is with interacting guidelines. The only way to ensure the effectiveness of the final product is to test it for both usability and utility with actual users and in the context of realistic task demands.

An approach based on a combination of judicious use of guidelines, a principled design process, realistic prototypes, and performance-based evaluation is likely to produce a design product that enhances operator effectiveness and guards against common design deficiencies in computer-based interfaces.

CONCLUSIONS AND RECOMMENDATIONS

Conclusions

Conclusion 1. Digital technology offers the potential to enhance the human-machine interface and thus overall operator performance. Human factors and human-machine interfaces are well enough understood that they do not represent a major barrier to the use of digital I&C systems in nuclear power plants.

Conclusion 2. The methodology and approach adopted by the USNRC for reviewing human factors and human-machine interfaces provide an acceptable first step in a review. As described in NUREG-0700 Rev. 1 and NUREG-0711, existing USNRC procedures, for both the design product and process, are consistent with those of other industries. The guidelines are based on many already available in the literature or developed by specific industries. The methodology for reviewing the design process is based on sound system engineering principles consistent with the validation and verification of effective human factors.

Conclusion 3. Adequate design must go beyond guidelines. The discussion in NUREG-0711 on advanced technology and human performance and the design principles set out in Appendix A of NUREG-0700 Rev. 1 provide a framework within which the nuclear industry can specify, prototype, and empirically evaluate a proposed design. Demonstration that a design adheres to general principles of good human-system integration and takes into account known characteristics of human performance provides a viable framework in which implementation of somewhat intangible, but important, concepts can be assessed.

Conclusion 4. There is a wide range in the type and magnitude of the digital upgrades that can be made to safety and safety-related systems. It is important for the magnitude of the human factors review and evaluation to be commensurate with the magnitude of the change. Any change, however, that affects what information the operator sees or the system's response to a control input must be empirically evaluated to ensure that the new design does not compromise human-system interaction effectiveness.

Conclusion 5. The USNRC is not sufficiently active in the public human factors forum. For example, proposed human factors procedures and policies or sponsored research, such as NUREG-0700 Rev. 1, are not regularly presented and reviewed by the more general national and international human factors communities, including such organizations as the U.S. Human Factors and Ergonomics Society, IEEE Society on Systems, Man, and Cybernetics, and the Association for Computing Machinery Special Interest Group on Computer-Human Interaction. European nuclear human factors researchers have used nuclear power plant human factors research to further a better understanding of human performance issues in both nuclear power plants and other safety-critical industries. Other safety-critical U.S. industries, such as space, aviation, and defense, participate actively, benefiting from the review and experience of others.

Recommendations

Recommendation 1. The USNRC should continue to use, where appropriate, review guidelines for both the design product and process. Care should be taken to update these instruments as knowledge and conventional wisdom evolve—in both nuclear and nonnuclear applications.

Recommendation 2. The USNRC should assure that its reviews are not limited to guidelines or checklists. Designs should be assessed with respect to (a) the operator models that underlie them, (b) ways in which the designs address classic human-system interaction design problems, and (c) performance-based evaluations. Moreover, evaluations must use representative tasks, actual system dynamics, and real operators.

Recommendation 3. The USNRC should expand its review criteria to include a catalog or listing of classic human-machine interaction deficiencies that recur in many safety-critical applications. Understanding the problems and proposed solutions in other industries is a cost-effective way to avoid repeating the mistakes of others as digital technology is introduced into safety and safety-related nuclear systems.

Recommendation 4. Complementing Recommendation 2, although human factors reviews should be undertaken seriously, e.g., in a performance-based manner with realistic conditions and operators, the magnitude and range of the review should be commensurate with the nature and magnitude of the digital change.

Recommendation 5. The USNRC and the nuclear industry at large should regularly participate in the public forum. As noted in NUREG-0711, advanced human interface technologies potentially introduce many new, and as yet unresolved, human factors issues. It is crucial that the USNRC stay abreast of current research and best practices in other industries, and contribute findings from its own applications to the research and practitioner communities at large—for both review and education. (See also Technical Infrastructure chapter for additional discussion.)

Recommendation 6. The USNRC should encourage researchers with the Halden Reactor Project to actively participate in the international research forum to both share their results and learn from the efforts of others.

Recommendation 7. As funds are available, the USNRC's Office of Nuclear Regulatory Research should support research exploring higher-level issues of human-system integration, control, and automation. Such research should include exploration, specifically for nuclear power plant applications, of design methods, such as operator models, for more effectively specifying a design. Moreover, extensive field studies should be conducted to identify nuclear-specific technology problems and to compare and contrast the experiences in nuclear application with those of other safety-critical industries. Such research will add to the catalog of recurring deficiencies and potentially link them to proposed solutions.

Recommendation 8. Complementing its own research projects, the USNRC should consider coordinating a facility, perhaps with the U.S. Department of Energy, in which U.S. nuclear industries can prototype and empirically evaluate proposed designs. Inexpensive workstation technologies permit the development of high-fidelity workstation-based simulators of significant portions of control rooms. Other industries make extensive use of workstation-based part-task simulators (e.g., aviation); results are found to scale quite well to the systems as a whole.

REFERENCES

Andre, A.D., and C.D. Wickens. 1995. When Users Want What's NOT Best for Them. Ergonomics in Design, October.

Benson, C.R., T. Govindaraj, C.M. Mitchell, and S.M. Krosner. 1992. Effectiveness of direct manipulation interaction in the supervisory control of flexible manufacturing systems. Information and Decision Technologies 18:33–53.

Billings, C.E. 1991. Human-Centered Aircraft Automation: A Concept and Guidelines. Technical Memorandum No. 103885. Moffett Field, Calif.: NASA Ames Research Center.

Brann, D.M., D.A. Thurman, and C.M. Mitchell. 1996. Human interaction with lights-out automation: A field study. Pp. 276–283 in Proceedings of the 1996 3rd Symposium on Human Interaction in Complex Systems, Dayton, Ohio, August 25–28, 1996. Los Alamitos, Calif.: IEEE.

Bushman, J.B., C.M. Mitchell, P.M. Jones, and K.S. Rubin. 1993. ALLY: An operator's associate for cooperative supervisory control systems. IEEE Transactions on Systems, Man, and Cybernetics 23(1):111–128.

Cakir, A., D.J. Hart, and T.F.M. Stewart. 1980. Visual Display Terminals: A Manual Covering Ergonomics, Workplace Design, Health and Safety, Task Organization. New York: John Wiley and Sons.

Cardosi, K.M., and E.D. Murphy (eds.). 1995. Human Factors in the Design and Evaluation of Air Traffic Control Systems. Springfield, Va.: National Technical Information Service.

Casey, S. 1993. Set Phasers on Stun and Other True Tales of Design, Technology, and Human Error. Santa Barbara, Calif.: Aegean Publishing.

Converse, S.A. 1995. Evaluation of the Computerized Procedures Manual II (COMPMA II). NUREG/CR-6398. Washington, D.C.: U.S. Nuclear Regulatory Commission.

FAA (Federal Aviation Administration). 1996. The Interfaces Between Flightcrews and Modern Flight Deck Systems. Washington, D.C.

Gopher, D., M. Weil, and T. Bareket. 1994. Transfer of skill from a computer game trainer to flight. Human Factors 36(3):387–405.

Hollnagel, E., and D.D. Woods. 1983. Cognitive systems engineering: New wine in new bottles. International Journal of Man-Machine Studies 18:583–600.

Hutchins, E.L., J.D. Hollan, and D.A. Norman. 1986. Direct manipulation interfaces. Pp. 87–124 in User Centered System Design, D.A. Norman and S.W. Draper (eds.). Hillsdale, N.J.: Lawrence Erlbaum Associates.

IAEA (International Atomic Energy Agency). 1988. Basic Safety Principles for Nuclear Power Plants. Safety Series No. 75-INSAG-3. Vienna, Austria: IAEA.

Kirlik, A., M.F. Kossack, and R.J. Shively. 1994. Ecological Task Analysis: Supporting Skill Acquisition in Dynamic Interaction. Unpublished manuscript. Center for Human-Machine Systems Research, School of Industrial and Systems Engineering, Georgia Institute of Technology, Atlanta, Ga.

Knaeuper, A., and N.M. Morris. 1984. A model-based approach for on-line aiding and training in process control. Pp. 173–177 in Proceedings of the 1984 IEEE International Conference on Systems, Man, and Cybernetics, Halifax, Nova Scotia, October 10, 1984. New York: IEEE.

Lee, E.J. 1994. Computer-Based Digital System Failures. Technical Review Report AEOD/T94-03. Washington, D.C.: USNRC. July.

Mitchell, C.M. 1987. GT-MSOCC: A domain for modeling human-computer interaction and aiding decision making in supervisory control systems. IEEE Transactions on Systems, Man, and Cybernetics 17(4):553–572.

Mitchell, C.M. 1996. GT-MSOCC: Operator models, model-based displays, and intelligent aiding. Pp. 233–293 in Human-Technology Interaction in Complex Systems, W.B. Rouse (ed.). Greenwich, Conn.: JAI Press Inc.

Mitchell, C.M., and D.L. Saisi. 1987. Use of model-based qualitative icons and adaptive windows in workstations for supervisory control systems. IEEE Transactions on Systems, Man, and Cybernetics 17(4):573–593.

Mitchell, C.M., and R.A. Miller. 1986. A discrete model of operator function: A methodology for information display design. IEEE Transactions on Systems, Man, and Cybernetics 16(3):343–357.

Moray, N., and B. Huey. 1988. Human Factors and Nuclear Safety. Washington, D.C.: National Academy Press.

Morris, N.M., and W.B. Rouse. 1985. The effects of type of knowledge upon human problem solving in a process control task. IEEE Transactions on Systems, Man, and Cybernetics 15(6):698–707.

NASA (National Aeronautics and Space Administration). 1988. Space Station Freedom Human-Computer Interface Guidelines. NASA USE-100. Washington, D.C.: NASA.

NASA. 1996. User Interface Guidelines for NASA Goddard Space Flight Center. NASA DSTL-95-033. Greenbelt, Md.: NASA.

Norman, D.A. 1988. The Psychology of Everyday Things. New York: Basic Books.

O'Hara, J.M. 1994. Advanced Human-System Interface Design Review Guideline. NUREG/CR-5908. Washington, D.C.: U.S. Nuclear Regulatory Commission.

Palmer, E.A., and A. Degani. 1991. Electronic checklists: Evaluation of two levels of automation. Pp. 178–183 (Volume 1) in Proceedings of the Sixth International Symposium on Aviation Psychology, Columbus, Ohio, April 29–May 2, 1991. Columbus, Ohio: Ohio State University.

Pawlowski, T.J. 1990. Design of Operator Interfaces to Support Effective Supervisory Control and to Facilitate Intent Inferencing by a Computer-based Operator's Associate. Ph.D. dissertation, School of Industrial and Systems Engineering, Georgia Institute of Technology, Atlanta.

Preece, J. 1994. Human-Computer Interaction. New York: Addison-Wesley.

Ragheb, H. 1996. Operating and Maintenance Experience with Computer-Based Systems in Nuclear Power Plants. Presentation at International Workshop on Technical Support for Licensing of Computer-Based Systems Important to Safety, Munich, Germany. March.

Rasmussen, J. 1986. Information Processing and Human-Machine Interaction: An Approach to Cognitive Engineering. New York: North-Holland.