5

Improving Operational Test Planning and Design

The operational testing of systems under development is a common industrial activity, in which the assessment of system performance is needed for a wide variety of intended environments.1 The statistical field of experimental design has provided the basis, through theoretical and applied advances over the last 80 years, for important improvements over direct but naive methods in test planning and test design. Methods now exist to guide the planning and design of efficient, informative tests for a wide variety of circumstances, constraints, and complications that can arise in actual use. As stated in Chapter 3, we recognize that there is a danger in drawing analogies between the problem of developing new products in industrial and other private- and public-sector areas and the development of new defense systems. Although defense systems have unique aspects, there are also substantial similarities in the operational testing of a defense system and the testing of a new industrial product. Given the very high stakes involved in the testing of defense systems that are candidates for full-rate production, it is extremely important that the officials in charge of designing and carrying out operational tests make use of the full range of techniques available so that operational tests are as informative as possible for the least cost possible.

The panel examined plans for the operational tests of several defense systems, including the Longbow Apache helicopter, the Common Missile Warning System, and the ATACMS/BAT system, as well as components of plans for

several other systems. The panel identified two broad problems from this examination of test designs. First, there is no evidence of a methodical approach to test planning, which is an important requisite to successful test design in industrial applications. Second, although we found many examples of the proper use of specific techniques of experimental design—including simple ideas, such as the benefits of randomization and control, and some more sophisticated designs, such as fractional factorial designs—there were also test designs that were clearly not representative of the state of the art. Our assessment is that the current level of test planning and experimental design for operational testing in the Department of Defense (DoD) is neither representative of best industrial practice, nor takes full advantage of the relevant experimental design literature. Yet with fairly modest effort and minor modifications in the process of operational test design, the quality of operational test design could be substantially improved, resulting in improved decisions about moving to full-rate production, as well as reduced costs for testing.2

This chapter identifies the primary ways in which operational test planning and test design can be improved through use of state-of-the-art methods. We first outline some general test principles, including the important role of test planning as a requisite to efficient and effective test design. We then present a discussion of some of the methods of statistical experimental design that should be examined for applicability to the design of operational testing. Fries (1994a) provides supporting material for much of that discussion. Finally, we address the key question of how to determine the appropriate size of an operational test.

GENERAL PRINCIPLES OF TEST DESIGN

Systematic Test Planning

As stated in Chapter 4, test planning is the collection of specific information about various characteristics of a system and the test environment, and the recognition of the implications of these characteristics for test design. Because a single, unanticipated restriction can render worthless an otherwise well considered design, test planning is extremely important as an input to test design. Without repeating the discussion in Chapter 4, we outline here the components of operational test planning and add some technical details. Again, much of this discussion is taken from Hahn (1977) and Coleman and Montgomery (1993).

The components of test planning include:

-

defining the purpose of the test;

-

identifying methods for handling test factors;

-

defining the test environment and specification of constraints;

-

using previous information to compare variation within and across environments, and to understand system performance as a function of test factors;

-

establishing standardized, consistent data recording processes; and

-

use of small-scale screening or guiding tests for collecting information on test planning.

Defining the Purpose of the Test

It is important to keep the decision context in mind when designing an operational test. The decision that a military service faces is whether or not to pass a system on to full-rate production. Therefore, those in charge of test design must remember that the test needs to provide as much useful information as possible, at minimal cost or at a given cost, for that decision. More specifically, an operational test is currently used to satisfy two broad objectives: (1) to help certify, through significance testing, that a system's performance satisfies its requirements as specified in the Operational Requirements Document (ORD) and related documents and (2) to identify any serious deficiencies in the system design that need correction before full-rate production. The panel recommends (see Chapter 3) that objective (2) receive greater emphasis in operational testing.

The choice of test objective has important implications for test design. One issue is how close should a system be tested to the limit of normal stress with respect to various factors (at times referred to as how close to the "edge of the envelope" to test). For example, if the requirement is that the system be able to deliver a payload of 20 tons, should it be tested at 25 tons? Testing at higher payloads has the advantage of providing information on system performance for higher payloads, with the possible disadvantage that somewhat less information (but very possibly acceptably less for the given purpose) is obtained on the performance for 20 tons. This might be a desirable trade off, especially if the test objective is (2) above, since, although present demands might make 20 tons seem like a reasonable upper bound for typical use, it is difficult to anticipate future needs and demands.

A related question is the following: Should one allocate a substantial fraction of test runs to a high probability scenario(s) (as specified in the Mission Profiles and Operational Mode Summary) thereby obtaining high-quality understanding of system performance in that scenario(s), or should one test using a wide variety of scenarios that represent potentially very different stresses to the system? The tradeoff is understanding system performance for a specific requirement across a more limited number of stresses, with little understanding of system performance for some lower probability environments, versus understanding system performance when used in a wide variety of environments that represent a broader array of stresses to the system, with possibly reduced understanding of

system performance for the most likely environments.3 Again, the answer lies in recognizing the goal of the test.4

Identifying Methods for Handling Test Factors

Test factors can be influential or not influential, and they can be under the control of the experimenter or not controllable. Influential factors are factors for which system performance varies substantially with changes in the level of the factor. Clearly, misdiagnosis of which factors are and are not influential is a serious problem—often leading to wasted test resources. Therefore, the use of results from developmental tests and tests of related systems, as well as early operational assessments, if they are available, are helpful for avoiding this misdiagnosis.

Factors that are under the tester's control include testing in day or night, choice of test terrain, and choice of test tactics. Examples of factors that are not under the tester's control are unknown differences in system prototypes and weather changes during the course of the test. Obviously, factors that are not under the tester's control are not considered in test design, except for the important recognition that a baseline system may be extremely useful as a control. (In this case one is either interested in estimating the differences in performance between the two systems to find out the amount of improvement represented by the new system but not specifically the level of performance of the new system, or one is mainly interested in how good the new system is and one has excellent information on the performance of the old system.) If factors that are not controllable are influential, it is crucial during test planning that the presence of these factors be recognized so that information about their levels during testing can be collected and their contribution to test outcomes can later be accounted for through the use of statistical modeling. Failure to take the variability of these uncontrolled factors into account at the evaluation stage would likely result in substantially biased estimates.

|

3 |

In stating the choice in this way, we are ignoring the fact that one can sometimes gain considerable information about performance in one scenario from its observed performance in others through statistical modeling; accelerated testing is one example. |

|

4 |

Operational test requirements, while typically unspecific about how to treat performance across scenarios, are often analyzed from the perspective that the requirement is to estimate the average performance across test scenarios. So. if a missile is to have a hit rate of 0.80, that hit rate could be measured as a weighted average of hit rates in individual scenarios, weighted by their frequency and military importance. Even though the objective of operational testing is often to determine whether this average is consistent with the system requirement, testers are also understandably very interested in examining performance in individual scenarios because system deficiencies might only be sensitive to some test scenarios. However. DoD typically cannot use such separate measurement as a test objective because of test size limitations. (See related discussion in Chapter 2.) |

Furthermore, when using a baseline system to account for uncontrollable factors, and when it is not possible to test the system of interest and the baseline system under identical circumstances, any remaining choices in test design could be decided either through the use of randomization, or preferably, through a "balanced" experimental design. For example, when it is not possible to test the systems with the same users, it is good to design the selection of users to systems, since if one group of users is either more adept or learns more quickly than the other group, the resulting test result can be misleading. Randomization or balanced designs can be used to select users to systems, where the balancing made use of the performance of users on a pretest to select them to systems. These methods protect against (even an unconscious) selection of more expert users to either the control system or the system of interest.

Another example occurs if there are several comparisons of the new and the baseline systems in several environments or scenarios, where one system's test has to precede the other test. The decision of which system to test first in each of these several scenarios could either be made through use of randomization, or again through use of a balanced design which would mean, in this case, that each system is tested first an equal number of times. Randomization or use of a balanced design in this case helps to account for factors such as changes in the weather between tests, that might affect either system's performance. The panel's understanding is that such uncontrolled effects are not always taken into consideration in operational test planning and test design.

In addition to understanding which factors influence system performance, it is also important to understand how test factors affect system performance, i.e., it is important to understand the variability of system performance resulting from changes in the levels of various test factors. (Of course this understanding is likely to be fairly incomplete at the test planning stage.) For example, the shape of the function relating the probability of hit of a missile system to the distance to target is likely to be a non-linear curve, where for small distances the hit probability is quite close to 1, the hit probability decreases quickly as distances increase, and then for great distances the hit probability slowly approaches 0. Many test factors that can be considered to be stresses on a system have a non-linear impact on the level of performance, e.g., temperature or degree of obscurance. It is useful to understand where the active part of the non-linear functions are since it is important to test more where system performance is more variable. Therefore, collecting information that is relevant to understanding how system performance relates to the levels of test factors is an important input to test design, in that it is used to determine what levels of each factor to test at, and how many replications should be taken at each level for each test factor.

Related to the above, it is also important to compare the variability of system performance within a scenario to the variability of system performance between scenarios. Within-scenario variability can be due to such effects as idiosyncratic differences in user performance across tests, changes in the weather, or random

differences in system prototypes. Information on the relative size of between-and within-scenario variabilities helps to answer important questions concerning how many different scenarios one can include in the test and the number of replications needed for each scenario. We do not provide details as to how to do this, but it is obvious that with less within-scenario variability in each replication one obtains more information on system performance for a given scenario. Therefore, small-within-scenario variability would make it feasible to have either fewer trials or more test scenarios. Obviously, it is important to decide such key issues on the basis of as much information as possible.

Use of Small-Scale Screening or Guiding Tests

Proper decisions about (optimal) operational test design and test size require information that is often system specific, especially with respect to operational characteristics. This information often cannot be fully gained through examination of related systems or the developmental test results for the system in question. The information needed includes many of the factors listed above concerning test constraints, test factors, and the relative size of within- and between-scenario variability, but it also includes additional data needed to ascertain the proper operational test sample size (discussed below), which is often not available through examination of other systems or developmental test results.

Instead of relying on this possibly limited, perhaps somewhat irrelevant information to decide on test design, small-scale screening or guiding tests—preliminary tests with operational realism—can be used to collect information concerning system performance. The information includes various parameter estimates, including estimates of within- and between-scenario variation, and logistics information, Other information that could be collected includes: information as to any potential flaws in the system under development; the expected difference in performance between a system under development and any control or baseline system; information on the plausibility of various assumptions; and information that might be helpful in determining the usefulness of modeling and simulation to supplement results from operational testing.

The information from these screening tests can be used to estimate costs on the-basis of the number of test runs, the standard error of prediction for estimates of system performance for individual scenarios and for average system performance across scenarios, and the statistical power if significance testing is used. In addition, other advantages and disadvantages of various plans (such as the reduction in opportunity for player learning) could also be considered, and data collection can be tested. Issues can also arise in these screening tests that are often difficult to anticipate, such as difficulties in measuring various aspects of system performance. As a result, information from the screening test can be used to facilitate measurement.

Screening or guiding tests performed in a relatively inexpensive way, can

help to ensure that operational tests are as informative, effective, and efficient as possible. Testers can use these tests to understand how to anticipate unusual circumstances that might arise when first conducting operational tests on a defense system. The notion of screening tests is related to the Army concept of a force development test and experimentation, but it is broader in that the collection of other information concerning test design and test conduct (besides force development) is the objective. Additional benefits of this idea are briefly discussed in Chapter 3, where the notion of a more continuous assessment of operational system performance is advanced.5 Certainly, there are aspects of operational testing that do not scale down to small tests easily, for example, the number of users and systems needed for a test of a theatre radar system. As was mentioned in Chapter 3, if these screening or guiding tests are not feasible, there will often be some real benefit from taking existing developmental testing and modifying it to have operational aspects where practicable. However, we are convinced that the use of screening tests has not been adequately explored, especially given their potential value.

Test planning needs to become a regular, early step in the design of operational tests. Recommendation 4.1 is an important requisite to the improvement of operational test design. We repeat that recommendation here:

Recommendation 4.1: Comprehensive operational test planning should be required of all ACAT I operational tests, and the results should be appropriately summarized in the Test and Evaluation Master Plan. The following information should be included: (1) the purpose and scope of the test, (2) explicit identification of the test factors and methods for handling them, (3) definition of the test environment and specification of constraints, (4) comparison of variation within and across test scenarios, and (5) specified, consistent data recording procedures. All of these steps should be documented in a set of standardized formats that are consistent across the military services. The elements of each set of formats would be designed for a specific type of system. The feasibility of preliminary testing should be fully explored by service test agencies as part of operational test planning.

To assist in the implementation of this recommendation, we strongly suggest the development and use of templates for test planning. Fairly straightforward modifications of the templates that have appeared in the literature (see, e.g., Coleman and Montgomery, 1993) should be applicable for operational testing of defense systems. The development and use of these templates should help raise the quality of operational test design, especially over time, since improvements in the template will have associated benefits in test design.

Linking Design and Analysis

In current practice, the focus of the quantitative evaluation of operational tests involves calculation of means and percentages computed across environments, followed by a direct comparison of these summary statistics with requirements, which is sometimes accompanied by simple significance tests comparing these summary statistics either with required levels or with baseline systems. However, various characteristics of the individual test environments undoubtedly affect system performance, and these effects are hidden when reporting (and subsequent decision making) focuses only on the summary statistics. Given the small number of test runs typical of operational tests, the benefit from more disaggregate analysis or statistical modeling may be limited. However, some information on questions such as whether the system’s performance in one scenario is significantly lower than in another, and which factor used to define test scenarios is most related to a decrease in system performance, are important to answer, even in a very approximate manner. (These types of questions are closely related to the panel’s recommended modified objective of operational test; see Chapter 3.) These questions require various kinds of statistical analysis to answer, possibly including analysis of variance or logistic or multiple regression. To make these analyses as effective as possible (given the test sample size and other competing test goals) the test design must take these intended analyses into consideration.

The selection of scenarios to test in, the number of test replications in a given test scenario, and the selection of levels of test factors in a test scenario, can be very inefficient and costly if determined without recognition of the intended analysis. As an example, if the difference in performance across two specific test scenarios is key to the evaluation of a system, it is crucial that there be enough information in the test to evaluate that difference. Also, if multiple regression is used to identify which test factors have the most important effects on system performance, it is useful to select factor levels so that each test factor is relatively uncorrelated with the remaining test factors. It is important to recognize that test design and the subsequent evaluation are closely linked: the Test and Evaluation Master Plan and subsequent test planning documents should include not only the test plan, but also an outline of the anticipated analysis and how the test design accommodates the evaluation. If averages and percentages remain the primary analysis associated with an operational test, this approach is much less important. But, if more thorough analysis becomes more typical, as we recommend, this approach is more important.

Objective, Informative Measures

Test design and successful testing depends on objective, informative measures. In the operational testing of defense systems, objective measurement is

even more important since, as described in Chapter 2, the participants have different incentives. Thus, any ambiguity in the definition of measures could be the source of disputes. Objective definitions—e.g., in scoring rules, to determine what constitutes an engagement opportunity, a successful engagement, a valid trial, a ''no-test," or various types of failures—are important to agree on in advance. Other examples of measures that require objective definitions include: what constitutes time on test for assessments of system suitability; a rule for deciding how and whether the data generated before a problem occurs in an operational test should be used (for example, when a force-on-force trial is aborted while in progress due to an accident, weather, or instrumentation failure); and, a rule for determining how to handle data contaminated by failure of test instrumentation or test personnel. (It should be pointed out that complete objectivity may be difficult to achieve in some of these cases.) Objective scoring rules reduce the possibility of the effect of individual incentives in this important decision-making process. Deciding how to enhance objectivity should be done in consultation with representatives from the Office of the Director, Operational Test and Evaluation (DOT&E).

Clearly defined measures also facilitate test design. Precise definitions allow one to link information from developmental tests and testing on related systems to questions concerning operational test design.

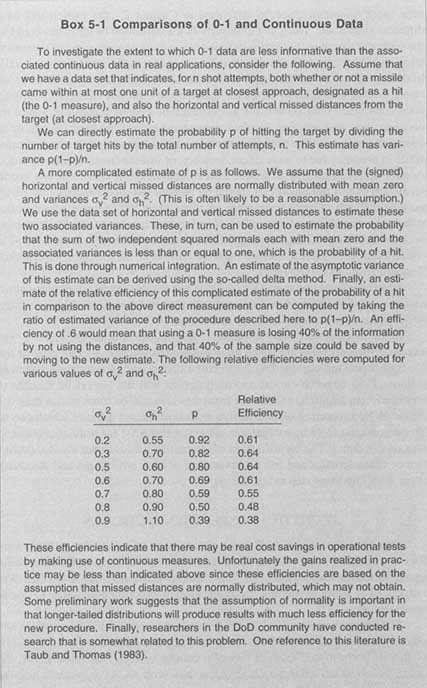

Some measures are more informative in a statistical sense than others. For example, measures that are based on 0-1 outcomes, such as a target miss or hit, generally speaking provide less information than continuous measures, such as distance from target at closest approach (which will not always be feasible to measure). (In addition, continuous measures also can be more useful than 0-1 measures by supporting more effective models of missed distance which can be useful in diagnosing why a target was missed; see Box 5-1 for additional information on 0-1 data.) The identification of alternative measures for various performance characteristics and full understanding of their advantages and disadvantages is an important step in test design.

STATISTICAL EXPERIMENTAL DESIGN

This section considers both some broad issues related to the methods used for experimental design of operational tests of defense systems and some specific issues raised in association with four "special problems" that the panel was introduced to while examining the application of experimental design to operational testing.

The operational test of a defense system is an experiment, one of whose key objectives is to determine whether the system satisfies performance requirements, or that its performance exceeds that of a control system. As noted above, the stakes involved are extremely high, especially with ACAT I systems, and the

decision whether to pass a system to full-rate production is of great consequence. Operational testing itself of ACAT I systems involves a considerable amount of money. Therefore, it is vital that operational tests be designed so that they are as informative and efficient as possible, and that they produce results that permit the best decisions to be made with respect to proceeding to full-rate production given the level of test funding.

Experimental Design for Operational Testing

Experimental design is concerned with how to make tests more effective. Design includes how to choose the number of test scenarios, the factors used to define each test scenario, at what level the factors are tested, the allocation of prototypes to scenarios, and the time sequence of testing. The field of experimental design has developed substantially since the work of Fisher, Box, Finney, Bose, Kiefer, Wolfowitz, their collaborators, and others (see, e.g., Steinberg and Hunter, 1984). In the twentieth century, industrial, agricultural, and military experimentation have raised a wide variety of problems, such as: how to accommodate various test constraints; how to accommodate trend effects; how to understand how a relatively large number of test factors affect system performance when one is limited to a relatively small number of test scenarios (e.g., fractional factorial and Latin Hypercube designs); how to learn about the effects of individual test factors and estimate performance well in a key scenario; and how to efficiently test to simultaneously inform about several measures. Methods that have been developed to address these and other problems have resulted in a rich technical literature. Technometrics is one of several journals that devote a substantial percentage of their pages to methods of optimal experimental design and the practice of experimental design in applied settings. Some of this literature is devoted to precisely the issue of how to design a test in a variety of scenarios to evaluate a system under development.

Many problems in experimental design are solved by identifying designs that are optimal for a stated criterion. Much of the classical theory of optimal design for a single measure yields asymptotically optimal designs for estimating a single unknown parameter. This theory for a single measure has limited application for several reasons: it is a large-sample theory, with unknown applicability for very small samples; the optimal designs often depend on the value of parameters (relating performance to test factors) that are costly or otherwise difficult to estimate; the optimality depends on the assumed model for the response being (approximately) correct; and most systems are judged using multiple measures, and an optimal design for one measure might not be the same as an optimal design for another.6 However, even with limited direct applicability, the theory of optimal design yields insights into good designs for non-standard problems that have features that approximate those for standard problems. So, although stan

dard techniques will often be inadequate for very complicated systems, the principles developed from answers to related, simple problems can be helpful for suggesting approaches to the more complicated problems.7 For example, one such principle is to test more where variation is greatest.8

As in industrial applications of experimental design for product testing, circumstances can conspire either to cause some test runs to abort or to require the operational test designer to choose alternative test events that were not planned. These unanticipated complications can affect the utility of a test design (e.g., by compromising features such as the orthogonality of a design, and thereby reducing the efficiency of a test; see discussion of these issues in Hahn, 1984). Again, techniques have been developed both to minimize the problems that result from aborting or altering of a test run and to adjust test designs when this occurs.

Unfortunately, the current practice of experimental design in the operational testing of defense systems does not demonstrate great fluency with this variety of powerful techniques that comprise the state of the art of applied experimental design, though there are exceptions. The result is inefficient test designs, wasted resources, and less effective decision making concerning the test and acquisition of defense systems. The knowledge of the existence of these techniques and this literature needs to be more widespread in the test and acquisition community. When it is appropriate, expert help will then be sought so that these techniques can be used to greater advantage in operational test design. We repeat Recommendation 4.4 from Chapter 4:

Recommendation 4.4: The service test agencies should examine the applicability of state-of-the-art experimental design techniques and principles and, as appropriate, make greater use of them in the design of operational tests.

|

6 |

A more recent Bayesian approach to the question of optimal design is, in contrast, a small-sample theory. |

|

7 |

An example is for a test design in which the response is assumed to be the slope of a linear function of a single input. The optimal design is to test only at both extreme values for this input (though one would generally include tests in between the extremes to investigate model misspecification). Now, if one is faced with a problem in which the response is a linear function of multiple inputs, and one is interested in estimating all regression coefficients, in a multiple regression test design problem one could use the principle from the case with a single factor to infer that it would be reasonable to oversample points with extreme values for one or more of the inputs. (Optimal theory can be applied if a criterion to be optimized is selected.) |

|

8 |

Understanding how to apply optimal design theory for non-standard problems requires relatively advanced understanding of the field of experimental design; see recommendations in Chapter 10 regarding the level of statistical expertise needed in the DoD test community. |

Experimental Design for Operational Testing: Special Problems

The remainder of this section describes some special problems raised in the application of experimental design to the design of operational testing of defense systems and the statistical techniques that have application to them.

Problem 1: Dubin's Challenge

Dubin's challenge9 is how to select a very small number of test scenarios and then allocate a possibly moderately larger number of test events to these test scenarios in order to understand system performance in a larger number of scenarios.10 That is, how should one allocate a given number of tests, t, to m different scenarios of interest, with t substantially smaller than m, both to best understand average system performance across the m scenarios and performance in the individual scenarios?

As a start, we examine a related problem. Considering the different scenarios as strata in a population, one knows from the literature on sample surveys that the best allocation scheme depends on the variability of the measure of interest, Y, within each scenario (σs2), and the cost of testing in each scenario (see, e.g., Cochran, 1977:Ch. 5). Assuming first that the goal is to estimate the average performance across all scenarios of interest, possibly weighted by some probabilities of occurrence or importance, one can work out an optimal allocation scheme as a function of the costs, variances (which would have to be estimated, possibly through the use of small-scale testing), and weights. If one assumes that the strata are not related and that no borrowing of information across strata would be possible, this approach requires that t is at least as large as m. For a simple example, if the importance weights, variances (σ2), and costs happen to be equal for all scenarios of interest, then equal allocation is optimal, each scenario receives t/m test units (assuming this is an integer), and the variance of the sample mean is equal to σ2/t. However, one cannot estimate Y well in each individual scenario as the variance of those estimates is m(σ2/t).

If t is smaller than m, the only way to proceed is to assume that the test scenarios have features in common and use statistical modeling.11 Modeling has

TABLE 5-1 Missed Distance at Eight Test Scenarios for Simple Example

|

|

Distance (in miles) |

|||

|

Obscurants |

1 |

2 |

3 |

4 |

|

0 |

6 |

10 |

20 |

32 |

|

1 |

15 |

23 |

32 |

40 |

the advantage of relating system performance across different scenarios, but there is the need to validate the assumptions used by a model.

We digress to demonstrate the advantages and disadvantages to be gained by combining information across scenarios for a related problem. Suppose one is interested in the performance of a missile system for four different distances (1, 2, 3, and 4 miles), and for two different levels of obscurants (denoted 0 and 1 for off and on). One performs one test for each of the eight scenarios represented by the crossing of these two factors. The (fictitious) missed distance for each of these eight tests is provided in Table 5-1.

We will discuss two models. The first model is less stringent than the second. The experimental design, assuming the models are correct, can be shown to reduce the variance of estimated performance by a substantial amount. However, this advantage comes at a potential cost, since there is a possible specification bias if the models are wrong. First, a two-way analysis of variance model that can be used to describe the data is

Yij= μ + αi+ βj+ eij

where Yij is the missed distance (the response), μ is the overall mean, αi (i =1, . . . , 4) is the effect for being distance i from the target (where it is assumed that the sum of these equals 0), βj (j =0, 1) is the effect from obscurants being at level 0 or 1 (again summing to 0), and eij represents an error term with unknown variance σ2. (A model similar to this might have been useful for the design and evaluation of the Common Missile Warning System; see Appendix B.) This model assumes that each distance and the level of obscurance has an additive impact on the response. However, nothing else is assumed about how Yij changes

as a function of the continuous variable distance (e.g., linear or quadratic). This model has six parameters (μ, α1, α2, α3, β1, and σ2). The estimates from this model for the mean performance in an individual scenario have variance of 0.625σ2.

Second, one can also assume, in addition, that mean performance is a linear function of distance, which results in the regression model:

Yij = α + βd (distance) + βo (indicator for whether obscurant used) + eij

where βd is the impact on expected missed distance of a 1-mile increase in the distance from the target, and βo represents the impact of the use of obscurants. This model makes a more restrictive assumption about the impact of distance from target on mean performance, but in return there are only four parameters to estimate ( α βd, βo, and σ2), producing estimates of the mean of Yij in an individual scenario that have variance of either 0.475σ2 or 0.275σ2, depending on the tested scenario. (There are similar gains in predicting mean performance for some untested scenarios.) Recall that if one assumes nothing, including additivity, about the relationship between Yij and the scenario characteristics, the result is model predictions for Yij in individual scenarios that have variance σ2. Therefore, these two models result in important decreases in the variance of estimates of mean performance. It is important to point out that instead of reducing the variance of the estimates of performance, one could instead have maintained the variance of the estimates of performance and used correspondingly smaller test sizes, with the associated costs savings. For instance, for analogous situations in which there are variance reductions proportionate to the drop from σ2 to 0.475σ2, the sample size could have been approximately halved, while maintaining the same quality estimates. (Of course, this assumes that a halved sample size was still a feasible test design.)

Although it is not always easy to determine whether the additional assumptions are supported, especially given the small sample sizes of operational tests and the limited information about a system's operational performance prior to operational testing, having a test data archive (as recommended in Chapter 3) would often provide much of the needed information to support these types of models. Returning to the example, Table 5-1 shows that scenarios with a distance of 2 miles have roughly an average missed distance of 6 more than scenarios with a distance of 1, 16 for distance 3, and 25 for distance 4. These values of 6, 16, and 25 seem to suggest that distance from target has a linear impact on missed distance. Of course, there are risks in making this assumption if it turns out not to be true, and therefore all additional information that can address the validity of this assumption needs to be used to reduce this risk.12 There is an extensive

literature on examining the validity of assumptions used in models that can be used to help reduce the possibility of choosing an inappropriate model.

We have shown that there are large potential gains in estimating performance by relating performance in different scenarios using statistical models, assuming the models are approximately true. Returning to Dubin's challenge, two specific approaches to solving it have been suggested by the panel which use statistical models to blend information across scenarios. One approach, making use of multidimensional scaling, is described in the panel's interim report (National Research Council, 1995). A second approach involves use of Bayesian optimal experimental design theory (Pollock, 1997). Both approaches need to be tried out on real data possibly with other alternative approaches and compared for situations where the answers are known.

Problem 2: Multiple Measures

Since there are often several dozen measures of performance or effectiveness of interest, especially for ACAT I systems, an operational test needs to be effective for estimating each of them. It is clear that interest in different measures has design implications since, for example, different environments stress different system components in different ways. However, producing a test design that can accommodate the needs of several measures is difficult.

As an initial step, one can reduce the number of measures by either setting priorities for the measures or combining them. (There is already a weak prioritization of the measures implicit or explicit in the analysis of alternatives—formerly cost and operational effectiveness analysis—and in the Test Evaluation Plan.) We proceed under the assumption that prioritization and combination still results in several measures of interest.

The theory of optimal experimental design for multiple measures is well established in some specific situations, but it is difficult to apply in many applied settings for the same reasons that it is difficult to apply optimal design for single measures. In addition, the breadth of application for multiple measures in terms of the problems that are solved is much more limited. Absent examination of individual applications and recommendation of specific techniques, there is a possible omnibus technique that should have utility. For each performance measure of interest, xi, identify two quantities: the minimum operationally meaningful difference in a measure, ∆xi, and a priority weight, wi. For example, suppose that one is estimating the hit rate of a missile, which is expected to be around 0.8. Assume that knowing the hit rate to within 0.05 is sufficient for deciding to proceed to full-rate production. Also suppose that the hit rate is the primary measure of interest in the system (system reliability might be another measure of interest). As a result, one wants the measure of the hit rate to represent 80 percent of the weight of the operational test. Then letting var(xi) denote the estimated variance of the mean of xi (which is a function of the experimental design), we

propose use of the following criterion: choose a design that minimizes Σwi [var(xi)/∆xi2] over each possible design.13

This approach is sensible since one is keeping each variance small relative to the square of its associated meaningful difference and these are then combined using the priority weights. Yet it might be difficult to apply this criterion in practice since linking the var(xi) to a specific experimental design may not be straightforward. Furthermore, var(xi) would often need to be based on information from developmental testing, test results, or modeling and simulation for similar systems, which argues again for both a test archive and for the use of screening or guiding tests.

Problem 3: Sequential Experimentation and Other Sequence and Time Issues

The accommodation of time and sequence considerations is another set of technical issues raised by the operational testing of defense systems. Time and sequence issues arise in several different ways. First, players involved in an operational test often "learn" how to be successful in the test as certain aspects of the test environment and the system become more familiar to them. Second, as mentioned above, as a test proceeds, various characteristics of the test environment will change, such as the weather, the degree of tiredness of the players, or the wear on the system. Test designs need to ensure that these time trends do not compromise the comparison of systems under test. Finally, sequential testing methods, not commonly used in the operational test of defense systems, might have wider applicability than current use would imply. We discuss each of these issues separately.

Player Learning and Other Time Trends The increased familiarity over time of users with the systems under test and the test environment is often referred to as player learning.14 Player learning can create design problems, since,

e.g., it can complicate comparisons of system performance between scenarios if the scenarios are tested at such different times that users have developed increased comfort with the system or the test environment between test events. Also, if a baseline system is included in the test as a control, the relative comfort and training of users on the baseline system compared with the new system raises some difficult design questions, since it is not clear what is meant by equal training or experience on the two systems, given some initial familiarity with the baseline system. Failure to address this question could provide an advantage to either system. The statistical principle is to make the comparison between the two systems, or two test scenarios, as unaffected by additional test factors as possible, but this can be difficult.

There are other trends besides player learning, especially environmental factors, that can change with time, and operational tests must also take these changes into account. Otherwise the comparison of the two systems may be affected (confounded) by a change in environmental factors, such as temperature or light, rather than real differences in the systems themselves. While there are often substantial logistic benefits to successive testing, the possibility of confounding is real. To address this type of problem, there are test designs that are balanced with respect to time trends; some examples are Daniel (1976) and Hill (1960).

Sequential Tests Sequential tests are tests that rely on results from previous trials to decide whether to continue testing. Sequential significance tests have the property that at a given confidence level and power, the expected test size will be smaller than the comparable fixed sample size test. In the application of operational testing, wide use of sequential testing could result in substantial savings of test dollars and a decrease in test time.

Unfortunately, such practical considerations as the need to obtain expedited analysis of test results and the scheduling of soldiers and test facilities make sequential designs difficult to apply in some circumstances. Acknowledging that there are practical constraints, the panel believes that these methods should be examined for more widespread use. The panel is unfamiliar with the extent to which these designs are used in developmental testing, but the above practical limitations should be less common in developmental tests. The panel is concerned that the technical demands of these tests contributes to their infrequent use. This would be unfortunate given their advantages in reducing test costs.

Problem 4: Different Systems, Different Tests

There are an extraordinarily wide variety of systems that can begin operational testing in any year. They include payroll management systems, which are entirely software; attack helicopters; airport surface repavers, and electronic warfare systems. To say that these systems need different tests is to state the obvious. How, then, can successful experience in test design and test evaluation be used on subsequent tests? The panel believes that a taxonomy or some taxonomic structure of defense systems with respect to their operational test properties would be useful. An attempt at creating a taxonomy of defense systems for operational test design is presented in Appendix C. When this initial attempt is refined, successful case studies for each cell in the finished taxonomy could be collected to help guide the major in charge of test design and evaluation by indicating techniques and tests that have worked previously. Of course, given the dynamic nature of defense systems and the understandable focus on the use of new technologies, any taxonomy will have to be flexible and change over time; it would need to be formally reconsidered on a regular basis. The taxonomy provided in Appendix C attempts to take this into account.

DETERMINATION OF SAMPLE SIZE USING DECISION THEORY

This section concerns the determination of the sample size of operational tests. Sample size is generally considered a component of test design, but sample size calculations are often ignored in current operational test design practice.

We start with the description of the justification for the test sample size contained in the Test and Evaluation Plan for the Longbow Apache (Longbow) Initial Operational Test and Evaluation (IOT&E) for the force-on-force phase of the operational test for this system. Here a replication is an opportunity to engage, i.e., a replication is an individual opportunity to fire at an ''enemy" within a test event (in which there will be multiple opportunities). (We are discussing this as an example that is consistent with best current practice in the service test agencies. We are not commenting on its appropriateness for the specific application.) The sample size for the Longbow force-on-force test was justified by the following.

Consider the two hypotheses:

Ho: p1≤pb

H1: p1> pb + δ,

where:

p1 = the probability of hit by the Longbow team against the Red forces;

pb= the probability of hit by the Apache (the baseline system) team against the Red forces; and

δ = the absolute magnitude of the difference between p1 and pb that it is considered important to detect.

The argument assumes that a t-test for the equality of two proportions-i.e., the standardized difference (to have mean zero and variance one under Ho) between the observed probability of hit by the Longbow and the Apache, which is a function of the test sample size n—is used to measure the statistical significance of the improvement represented by the Longbow over the Apache. Let Zc be the c percentile point on the standard normal distribution (that is, the area under the normal curve to the left of Zc is c). We choose the critical value Z1−α so that when P1 = Pb this t-test has significance level α; i.e., the probability of rejecting Ho when it is true is α. We would like to choose the sample size, n, so that the probability of rejecting Ho at the alternative p1 = pb + δ is 1−β for some error rate β

The required sample size n is then determined through use of the following formula (which makes use of the normal approximation to the binomial distribution):

n = 2*[Z1−α + Z1−β )/d]2 ,

Where

d = (2 arcsin √(0.5 + δ ) − 2 arcsin √0.5) ≤ (2 arcsin √p1 − 2 arcsin √pb).

For a = .10, β = .10, and δ = 0.10, n = 325, which was roughly the sample size used in the test for both the control and the new system. Finally, in the computations above, 0.5 was substituted as a conservative estimate of pb, and therefore p1 = pb + δ = 0.6, which gave an upper bound to the sample size to satisfy the above test criteria.

We note that in the above:

-

the conclusions associated with the hypotheses are not important compared with the action to be taken based on the conclusion; and

-

more generally, the power function is the probability of concluding in favor of H1 for various values of p1 and pb, which is primarily dependent on the sample size n and the difference p1 − pb, (Making the difference p1 − pb unrealistically small will require a very large n to achieve high probability of rejecting Ho in favor of H1.)

As a way of determining the sample size of operational tests, analytical procedures of this type have some advantages. The use of significance testing

provides an objective means of determining sample size, which, given the various incentives of participants in the test process, can be very useful. The test explicitly presents the improvement needed to justify acquisition, which is when the difference δ between the baseline and the new system is 0.10. Also, the test is designed so that a system with a 10 percentage point improvement over the baseline system will be identified correctly (Ho will be rejected) with a probability of 90 percent. Finally, the binomial percentages are transformed using the arcsine square root transformation, which is useful since it helps to symmetrize or normalize the binomial distribution and stabilize the variance.

The argument presented above is typical of current best practice in the service test agencies. If the practice results in a test that would cost less than the funds earmarked for operational testing by the program manager, the test will go forward. However, as is more likely for ACAT I systems, if the test budget of the program manager cannot support this sample size, negotiations between the program manager, the service test agency, and DOT&E take place, with the program manager's test budget limit possibly modestly augmented, but very likely resulting in an operational test size that is substantially reduced from that based on this approach.

Some appreciation of the difficulty of achieving even modest goals in inferring, for example, reliability with limited sample sizes using binomial data, may be derived from the following example. Suppose that it is desired to be able to state with confidence at least 0.8 (which we call approving the system) that the reliability of a system is at least r0= 0.81, based on n trials. To achieve this result

TABLE 5-2 True Reliability r* Needed to Have Probability 0.79 of Deciding that the System Has Reliability of at Least 81% with Confidence of at Least 80%

|

n |

S |

s = S/n |

r* |

p |

|

8 |

8 |

1.00 |

0.971 |

0.533 |

|

10 |

10 |

1.00 |

0.977 |

0.407 |

|

25 |

23 |

0.92 |

0.905 |

0.273 |

|

50 |

44 |

0.88 |

0.885 |

0.241 |

|

100 |

85 |

0.85 |

0.862 |

0.267 |

|

400 |

332 |

0.83 |

0.841 |

0.205 |

|

S = Number of successes required to certify at least 81% reliability with confidence at least 80% r* = True reliability required to assure probability of at least 79% of certification p = Probability of certification when true reliability is 81% |

||||

we need to have greater than or equal to S successes out of n trials, where S depends on n and is given in Table 5-2.

Now let us ask how reliable (denoted r*) the system must be so that we have probability at least p0 = 0.79, that the data will lead to approving the system. Finally, what is the probability p of certifying the system when the reliability is r0 = 0.81? Table 5-2 presents S, s = S/n, r* and p for various values of n.

From Table 5-2 it is clear that for small sample sizes, the true r* has to be very high for us to be moderately likely to approve the system. In particular, for n = 50, the true reliability has to be at least 88.5 percent for us to be only 79 percent sure of approving the system as having reliability of at least 81 percent, when approval requires only 80 percent confidence. In short, we need either large samples or modest goals in inference or both.

The above approach to determining test size ignores the costs associated with the decision at hand (as further discussed in Chapter 6). The program manager and test manager should address the question about setting test sample size by explicitly considering the costs associated with the decision as to whether to pass a system to full-rate production and the consequences of that decision. Two kinds of errors can be made: (1) passing a system to full-rate production when it was not (yet) satisfactorily effective and suitable (which would involve a broad spectrum of performances classified as unsatisfactory), and (2) returning a system for further tests or development when it was satisfactorily effective and suitable (which would again involve a broad spectrum of performances classified as satisfactory). The costs associated with these two kinds of errors can be dramatically different. Since the probabilities of these errors occurring are a function of the test sample size, one can justify test sample size by balancing the costs of additional testing against the reduction in the risk (probability of error multiplied by cost of error) achieved through additional testing. The principle is that the marginal cost of additional testing should be less than or equal to the marginal benefit gained through use of the information collected in the additional testing.

DoD needs to examine the feasibility of taking such a decision-theoretic approach to the question of "how much testing is enough." Although a cost-benefit comparison is at times difficult to make, the acquisition process will be improved through the attempt at explicitly making it. Other approaches to determining the size of operational tests, such as allocating a fixed percentage of program dollars to operational testing, do not use test dollars effectively.

Unfortunately, the decision-theoretic approach is difficult to apply since the costs associated with the wrong decisions are difficult to quantify and must be based, at least in part, on subjective judgments, which ought to be made explicit. For example, if one includes the decreased safety of military personnel as a cost either through an increased probability of a failure of the system itself or through a decreased probability of surviving a conflict given an ineffective system, the life of a soldier (or other service person) would have to be compared with test

dollars. Also, the entire defense procurement budget is essentially fixed at the level of this argument. Thus, an increase in the test budget for one system would cause a decrease in another system's test budget. So a full decision-theoretic approach would require measuring the value of additional runs of any system currently in operational testing. However, it is typical in problems such as this that precise cost estimates are not very important since good procedures are relatively insensitive to variations in the costs. Another complication is that the accounting procedures used to track costs in test and acquisition make it difficult to determine how much was spent in developmental and operational tests (see Rolph and Steffey, 1994). This difficulty is partially understandable since costs of soldiers, etc., are difficult to attribute. However, in order to undertake any cost-benefit comparison, the costs of testing must be available for analysis. Therefore accounting and documentation of costs must be adjusted to enable these types of analyses to be carried out.

Some issues that could ultimately be addressed by the comparison of the marginal benefits and marginal costs of test events are:

-

When is it preferable to use a sequential method of testing, rather than a fixed sample size test? For example, should one use 80 test events in one set of tests, or instead use 40 test events, evaluate and possibly decide to stop testing, or decide to conduct 40 more test events?

-

When is it preferable to allocate test units to a variety of environments of interest, and when is it better to concentrate the test units in a single environment—possibly the most stressful environment? For example, should one use two test replications in the desert, two in the tropics, two in the Arctic, and two in mountainous terrain, or should one use five events in the desert and one in each of the remaining environments?

-

Similarly, when is it preferable to test two factors considered related to system performance, leaving the third factor constant over tests, and when is it preferable to also vary the third factor, thereby gaining understanding of the third factor's effect on system performance but reducing the understanding of the effect of the first two factors?

These are hard problems that will require experience to address. However, there will be a number of important ACAT I systems where a cost-benefit comparison will either clearly support, or argue strongly against, the budget provided by the program manager.

Finally, if the objective of operational testing is viewed as not only a statistical certification that requirements have been met, but also to gain the most information about operational system performance and identification of deficiencies in system design, the assessment of the benefit of additional testing will involve broader considerations, such as:

-

identification of problems in operational use;

-

improvement of tactics and doctrine;

-

understanding of heterogeneity of units and performance of units in heterogeneous situations, including environment, tactical situations, etc.:

-

relating of deficiencies in performance to operational conditions;

-

understanding of training issues; and

-

generally, improving the understanding of whether the basic design is flawed, even for focused applications, thereby saving money required for retrofitting after full-rate production has begun.

When the decision rule encompasses all of these considerations, the decision-theory argument suggested here is even further complicated, since the decision rule is more difficult to analyze. To better understand how to implement the comparison of marginal cost with the value of the information gained through additional testing, a feedback system is needed, in which the service test agencies maintain records of the costs of testing and the costs of any retrofitting, along with the precise test events that were conducted and the reason why retrofitting was required. Discovered defects or system limitations might then be traced back to inadequate testing. In this way, the process of comparing costs and benefits can be improved so that similar problems do not recur. This approach should be systematically investigated.