1

Creating a Coordinated System of Education Indicators

Summary Conclusion 1. The current NAEP assessment has served as an important but limited monitor of academic performance in U.S. schools. Neither NAEP nor any other large-scale assessment can adequately measure all aspects of student achievement. Furthermore, measures of student achievement alone cannot meet the many and varied needs for information about the progress of American education.

Summary Recommendation 1. The nation's educational progress should be portrayed by a broad array of education indicators that includes but goes beyond NAEP's achievement results. The U.S. Department of Education should integrate and supplement the current collections of data about education inputs, practices, and outcomes to provide a more comprehensive picture of education in America. In this system, the measurement of student achievement should be reconfigured so that large-scale surveys are but one of several methods used to collect information about student achievement.

INTRODUCTION

NAEP has chronicled academic achievement for over a quarter of a century. It has been a valued source of information about the academic proficiency of students in the United States, providing among the best available trend data on the academic performance of elementary, middle, and secondary students in key subject areas. The program has set an innovative agenda for conventional and performance-based testing and, in doing so, has become a leader in American achievement testing.

NAEP's prominence, however, has made it a victim of its own success. In the introductory chapter, we reviewed the demographic and sociopolitical conditions that have pushed the NAEP program in varied and, in some cases, conflicting directions. Recent demands for accountability at many levels of the educational system, the increasing diversity of America's school-age population, policy concerns about equal educational opportunity, and the emergence of standards-based reform have had demonstrable effects on the program. Policy makers, educators, researchers, and others with legitimate interest in the status of U.S. education have asked NAEP to do more and more beyond its central purpose (National Assessment Governing Board, 1996). Without any change to NAEP's basic design, structural features have been added and others altered in response to the growing constituency for assessment in schools. The state testing program, the introduction of performance standards, and the increased numbers of hands-on and other open-response tasks have made NAEP exceedingly complex (National Research Council, 1996).

In this chapter, we advance the following arguments:

- NAEP cannot and should not attempt to meet all the diverse needs of the program's multiple constituencies. However, a key need that should be addressed by the U.S. Department of Education is providing an interpretive context for NAEP results: helping policy makers, educators, and the public better understand student performance on NAEP and better investigate the policy implications of the results.

- The nation needs a new definition of educational progress, one that goes beyond NAEP's student achievement results and provides a more comprehensive picture of education in America. NAEP should be only one component of a more comprehensive integrated system on teaching and learning in America's schools. Data on curriculum and instructional practice, academic standards, technology use, financial allocations, and other indicators of educational inputs, practices, and outcomes should be included in a coordinated system.

- Educational performance and progress should be portrayed by a coordinated and comprehensive system of education indicators. The U.S. Department of Education should exploit synergies among its existing data collections and add components as necessary to build a coordinated system. The system should include a broad array of data collected using appropriate methods.

- Measures of student achievement necessarily remain important components of the assessment of educational progress. However, the current NAEP achievement surveys fail to capitalize on contemporary research, theory, and practice in the disciplines in ways that support in-depth interpretations of student knowledge and understanding. The surveys should be structured to support analyses within and across NAEP items and tasks to better portray students' strengths and weaknesses.

- NAEP's student achievement measures should reach beyond the capacities of large-scale survey methods. The current assessments do not test portions of the current NAEP frameworks well and are ill-suited to conceptions of achievement that address more complex skills. Student achievement should be more broadly defined by NAEP frameworks and measured using methods that are matched to the subjects, skills, and populations of interest.

We begin our discussion in this chapter by reviewing information that led to our conclusion that the U.S. Department of Education must address educators' and policy makers' key need for an interpretive context for NAEP's results. We provide examples of the types of inferences (some supportable and some not) that NAEP's constituents draw from the results. We describe the purposes these interpretations suggest, noting those that are poorly served by the current program. To better serve the needs suggested by these data interpretations, we build the case for better measures of student achievement in NAEP and for the development of a broader indicator system on American education. In doing so, we describe the characteristics of successful social indicator systems and discuss possible features of a coordinated system of indicators for assessing educational progress.

NAEP'S CURRENT MISSION

In the most recent reauthorization of the NAEP program (P.L. 103-382, Improving America's Schools Act of 1994), Congress mandated that NAEP should:

provide a fair and accurate presentation of educational achievement in reading, writing, and other subjects included in the third National Education Goal, regarding student achievement and citizenship.

To implement this charge, the National Assessment Governing Board (NAGB) adopted three objectives for NAEP (National Assessment Governing Board, 1996:3):

- To measure national and state progress toward the third National Education Goal and provide timely, fair, and accurate data about student achievement at the national level, among states, and in comparison to other nations;

- To develop, through a national consensus, sound assessments to measure what students know and can do as well as what they should know and be able to do; and

- To help states and others link their assessments to the National Assessment and use National Assessment data to improve education performance.

NAGB's three objectives call for the collection of data that support descriptions of student achievement, evaluation of student performance levels, and the use of NAEP results in educational improvement. These policy goals presage our discussion of the diverse needs of NAEP's users. This ambitious agenda for NAEP is the crux of the problem we described in 1996 (National Research Council, 1996), that we address in this report, and on which others have commented (National Academy of Education, 1996, 1997; KPMG Peat Marwick LLP, and Mathtech, Inc., 1996; Forsyth et al., 1996; National Assessment Governing Board, 1996). Indeed, the National Academy of Education panel that authored Assessment in Transition: Monitoring the Nation's Educational Progress (National Academy of Education, 1997) began its report by affirming that, since its beginning, NAEP has accurately and usefully monitored and described the achievement of the nation's youth. However, the panel stated (pp. vi-vii):

[I]n less than 10 years, NAEP has expanded the number of assessed students approximately four-fold; has undergone substantial changes in content, design, and administration; and has drawn to itself veritable legions of stakeholders and observers. Taken singly, each of these changes represents a notable advancement for NAEP. Taken together, however, they have produced conflicting demands, strained resources, and technical complexities that potentially threaten the long-term viability of the entire program. … NAEP is at a point where critical choices must be made about its future.

VIEWS OF NAEP'S PURPOSE AND USE FROM PRIOR EVALUATORS

As stated in the introductory chapter, recent education summits, national and local reform efforts, the inception of state NAEP, and the introduction of performance standards have taken NAEP from a simple monitor of student achievement trends—free from political influence and notice—into the public spotlight. The speeches of President Clinton, the nation's governors, and state superintendents are punctuated with data on educational outcomes, much of which relies on NAEP results. Newspapers, news magazines, education weeklies, and education journals often carry data collected by NAEP.

In 1994 the Panel on the Evaluation of the NAEP Trial State Assessment of the National Academy of Education (NAE) identified criteria for the successful reporting of NAEP results (National Academy of Education, 1996). Among them was the likelihood that results would be interpreted correctly by NAEP's users. The panel applied this criterion to an examination of the interpretation of NAEP results by policy makers and the press after the 1990, 1992, and 1994 NAEP administrations. In their most recent review, the NAE panel examined NAEP-related articles in 50 high-volume newspapers across the United States.

The panel reported significant coverage of NAEP results, particularly for states that performed poorly in comparison to other states or in relation to past results. In poorly performing states, discussion emphasized the lackluster performance of students and schools and often went well beyond the capabilities of the data and NAEP's design to attribute blame to various school, home, and demographic variables. The panel's review revealed that many commentators drew unwarranted inferences from the data in attempts to explain the achievement results. For example, in California, where student performance was particularly disappointing, policy makers and reporters claimed that results were evidence of a myriad of sins, including overcrowded classrooms and the state's whole-language reading curriculum. When scores were released, the governor's education adviser declared (National Academy of Education, 1996:120):

California made a horrendous mistake in taking out the phonics and the basic decoding skills from our reading programs, and when you do that, kids aren't going to learn to read anywhere well enough, if at all.

South Carolina's poor showing was attributed to low expectations for student performance; local newspapers reported (National Academy of Education, 1996:122):

South Carolina students ranked near the bottom in a national test of reading skills, as more than half failed to achieve even basic reading levels. … State education superintendent Barbara Nielsen said that the scores come as no surprise. "I know we need to raise our standards, and we will raise our scores," she said. "When children are expected to do more, they will do more."

Elsewhere, poor performance was ascribed to large proportions of English-language learners and transient students, too much television, meager education funding, parents' lack of involvement in children's education, and parents' inability or unwillingness to read to their sons and daughters. In their analysis, the NAE panel observed that some of the variables targeted as sources of poor student performance were absent from the variables measured by NAEP. In other cases, interpretations went beyond the data to bolster commentators' preconceived notions or already established political agendas.

The NAE panel noted that many accounts of the data included suggestions for educational improvement. From the 1994 NAEP data, reporters and policy makers made a variety of suggestions (National Academy of Education, 1996; Hartka and Stancavage, 1997; Barron and Koretz, in press). They called for ambitious education reform, more rigorous teacher training and certification, more stringent academic standards, increased education funding, better identification of student weaknesses, increased resources for early education, and the use of alternative assessments, all inferences that exceed NAEP's design. The NAE panel concluded their analysis by commenting on users' clear need to identify the reasons for both good and poor achievement and to try to use this information in the service of educational improvement.

FINDINGS FROM OUR EVALUATION: USES OF NAEP

Building on this work, we examined the use of NAEP data following the 1996 release of the mathematics and science assessments. Our analysis relied on reports in the popular and professional press, NAEP publications, and various letters, memoranda, and other unpublished documents gathered during the course of the evaluation. We sought to determine and document the kinds of arguments users made with the most recent NAEP results.

Our analysis of the large body of reports on the 1996 mathematics and science assessments revealed that NAEP data were used by varied audiences to make descriptive statements, to serve evaluative purposes, and to meet interpretive ends. Our observations about these numerous uses of NAEP results parallel those of McDonnell (1994) in her research on state policy makers' use of assessment results, and of Barron and Koretz (in press) in their recent examination of the interpretation of state NAEP results. Specifically, we saw that 1996 results were used to:

- describe the status of the education system,

- describe the performance of students in different demographic groups,

- identify the knowledge and skills over which students have (or do not have) mastery,

- support judgments about the adequacy of observed performance,

- argue the success or failure of instructional content and strategies,

- discuss relationships among achievement and school and family variables,

- reinforce the call for high academic standards and education reform, and

- argue for system and school accountability.

Table 1-1 illustrates our findings. These illustrations come from the popular press, but they exemplify uses made of NAEP data in the varied publications we examined. Although the source data for some of the statements in Table 1-1 are unclear, what is clear is that the 1996 data were used to support descriptive, evaluative, and interpretive statements about student achievement in mathematics and science. The first column of the table identifies the sources of the reports. The second column includes statements that describe how well American students, subgroups of students, or states performed on 1996 NAEP. In describing the results, column 2 shows that users often drew comparisons (to the past, across states, across population groups) to bring more meaning to the data. The descriptive statements in these and the other reports we reviewed were generally consistent with NAEP's design. The third column gives examples of evaluative statements about student performance in 1996 that primarily relied on NAEP achievement-level results. These accounts speak to the adequacy of students' performance in 1996. (We discuss the validity and utility of the achievement levels in Chapter 5.) The final column of Table 1-1 shows users' attempts to provide an interpretive context for NAEP data. These statements illustrate the need for clearer explication of the data and for possible explanations of the results. The statements in column 4 generally reach beyond the data and the design used to generate them to identify sources of good or poor performance.

TABLE 1-1 Excerpts from Newspaper Reports on 1996 Main NAEP Science and Mathematics Results

| Newspaper, Date | Descriptive | Evaluative | Interpretive |
| --- | --- | --- | --- |
| Los Angeles Times, 2/28/97 | "The nation's schools received an upbeat report card in mathematics, but the bad news continued for California as its fourth-graders lagged behind their peers in 40 states and came out ahead of only those in Mississippi. California eighth-graders performed somewhat better but still ranged behind students in 32 states in the 1996 NAEP. … California's poor students in the fourth grade ranked last compared to similar pupils elsewhere, the state's higher-income students, represented by those whose parents graduated from college, did slightly better but were still 35th out of 43 states." | "[O]nly one in 10 of the state's fourth-graders and one in six of the older students are considered 'proficient' in math, a skill level higher than merely measuring the basics. … Governor Pete Wilson (said) the results were deplorable and intolerable." | "The test found that 54% of California's fourth-graders are not mastering essential basic skills such as measuring something longer than a ruler. … Those findings are likely to fuel criticism that math 'reform' efforts of recent years have not produced gains. … Governor Pete Wilson … said results … point up the need now more than ever to teach basic computational math skills in the classroom'. … State Superintendent Delaine Eastin responded to the poor showing by renewing her call for academic standards that would be both demanding and mandatory, as well as statewide tests to monitor student performance on a system of rewards and sanctions." |
| San Francisco Chronicle, 2/28/97 | "Nationally, scores rose four points on a 500-point scale for fourth-graders and 12th-graders, and five points for eighth graders. … California math scores improved over 1992 for fourth-graders by one point. … Eighth-graders improved by two points, beating out eight states. … In the 1994 reading test, California fourth-graders tied with Louisiana for worst state but did outperform Guam." | "The good news is California has stopped the downward spiral, (Superintendent) Eastin said. The bad news is we stopped it in the basement." | "The fact that our students are the worst in the nation on average mathematics proficiency should be a wake-up call for every educator, parent, and elected official in our state that the math programs we are currently using just aren't working." |
| Washington Post, 10/22/97 | "Maryland and Virginia finished slightly above the national average … while the District was much lower." | "A rigorous new test of what American students know in science has revealed that many of them are not demonstrating even basic competence in the subject in certain grades. More than 40 percent of high school seniors across the country who took the science exam, and more than one-third of fourth- and eighth-graders, could not meet the minimum academic expectations set." | "Education officials said the latest test results presented stark new evidence of a problem in how science is being taught. Too many schools, they contend, still emphasize rote memorization of facts instead of creative exercises that would arouse more curiosity in science and make the subject more relevant to students." |
| Washington Times, 2/28/97 | "Commenting on the 'intriguing patterns' in the test results, William T. Randall, chairman of the National Assessment Governing Board, observed, 'The public schools in D.C. have the lowest-scoring group of black students in the nation and the highest-scoring group of whites.'" | "[N]early 40 percent of eighth graders still can't perform at a good, solid proficient standard of achievement." | "[The report is] more troubling for what it says about the condition of urban schools … [in that] four out of five eighth graders … performed worse than six years ago on the 1996 NAEP test of mathematical ability. … Eighth graders who can't function at the basic level have difficulty with whole numbers, decimals, fractions, percentages, diagrams, graphs, charts and fundamental algebraic and geometric concepts. … The states with the biggest gains all participated in an initiative funded by the National Science Foundation that allowed them, among other things, to focus on the math curriculum and push algebra for all eighth graders." |

In our view, the excerpts in Table 1-1 demonstrate how users hope and try to use NAEP results to inform thinking about the performance of the education system, schools, and student groups. As was observed for earlier administrations, some NAEP users accorded more meaning to the data than was warranted in laying out reasons for strong and weak performance. Others sought to better understand strengths and weaknesses in students' knowledge and skills.

To be sure, it is difficult to gauge and document the impact of statistical data on political and public discussion of education issues. We believe they can play an important role in stimulating and informing debate. Boruch and Boe (1994) argue that estimates of reliance on social science data in political discussion and decision making are biased downward. They say that normal filtering systems contribute to underestimates of the value and impact of statistical data in the policy arena. They explain (p. 27):

National Longitudinal Studies and High School and Beyond data have been used in academic reports by manpower experts. … Those reports have been augmented through further data analysis by the Congressional Budget Office. The results of the Congressional Budget Office reports, in turn, are filtered and given serious attention that leads to decisions and perhaps recommendations by the National Academy of Sciences Committee on Youth Employment Programs (National Research Council, 1985). These recommendations may then lead to changes in law, agency regulations, or policy.

Even NAEP's stewards seek to use NAEP results to more tangible ends. During the May 1998 meeting of the National Assessment Governing Board, board members discussed the inadequacy of some of the current data presentations. NAGB asked NAEP's technical staff from the National Center for Education Statistics (NCES) and the Educational Testing Service to explore options for exploiting the policy relevance of NAEP's findings. At the meeting, members noted that current presentations of NAEP results by grade, demographic group, state, and a small number of additional variables do not point policy makers and educators to possible sources of disappointing or promising performance or to their possible policy implications.

To illustrate their concern, board members brought up the oft-cited finding that fourth graders who received more hours of direct reading instruction per week did less well on the 1992 NAEP reading assessment than students who spent fewer hours in direct reading instruction (Mullis et al., 1993:126-127). An analogous relationship was reported for fourth and eighth graders on the 1994 U.S. history assessment: students in classrooms with greater access to technology scored less well than students in classes with fewer computers (Beatty et al., 1996:47).

It probably is not the case that reading instruction depresses reading performance or that technology use depresses history knowledge. NAEP test takers with more hours of reading instruction may have received extra remedial instructional services, and students with more computers in the classroom may have attended schools in economically depressed areas where funding for technology may be easier to secure (and where, on average, students score less well on standardized tests). Board members pointed out that current data presentations may prompt faulty interpretations of results, in that the associations suggested by the paired-variable tables (e.g., summaries of NAEP scores by population group or by hours of instruction) may misrepresent complex relationships among related and, in many cases, unmeasured variables. Discussion of the reading and history results, for example, may have been informed by data on types of instructional services provided or the uses made of computers in the classroom. Although, on their own, survey data of the type NAEP collects cannot be used to test hypotheses and offer definitive statements about the relationships among teaching, learning, and achievement, they can fuel intelligent discussion of possible relationships, particularly in combination with corroborating evidence from other datasets. Most important, they can suggest hypotheses to be tested by research models that help reveal cause and effect relationships.
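The kind of reversal the board members describe can be made concrete with a small arithmetic sketch. The counts and mean scores below are invented for illustration only (they are not NAEP data), and the flag for remedial services is an assumed stand-in for the unmeasured variables discussed above. Under these assumptions, students with more hours of instruction score higher within each group, yet the paired-variable summary shows them scoring lower overall, because most of the students receiving more hours are also those receiving remedial services.

```python
# Hypothetical cells, NOT actual NAEP results.
# Each tuple: (receives_remedial_services, hours_of_instruction, n_students, mean_score)
CELLS = [
    (True, "more", 80, 200.0),
    (True, "fewer", 20, 195.0),
    (False, "more", 20, 230.0),
    (False, "fewer", 80, 225.0),
]


def weighted_mean(cells):
    """Mean score across cells, weighted by the number of students in each."""
    total_n = sum(n for _, _, n, _ in cells)
    return sum(n * score for _, _, n, score in cells) / total_n


def mean_by_hours(cells, hours):
    """Mean score for all students in the given hours-of-instruction category."""
    return weighted_mean([c for c in cells if c[1] == hours])


# Paired-variable view: score by hours of instruction only.
paired = {h: mean_by_hours(CELLS, h) for h in ("more", "fewer")}

# Same comparison, made separately for each remedial-services group.
within = {
    remedial: {
        h: mean_by_hours([c for c in CELLS if c[0] == remedial], h)
        for h in ("more", "fewer")
    }
    for remedial in (True, False)
}

print("All students:", paired)
print("Receiving remedial services:", within[True])
print("Not receiving remedial services:", within[False])
```

In this invented example the overall means are 206 for students with more hours and 219 for students with fewer, even though more hours goes with a five-point advantage inside each group. A table that reports only the first comparison would invite exactly the faulty inference described above.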

To reiterate, our analysis of press reports, NAEP publications, and other published and unpublished documents suggests that NAEP's constituents want the program to:

- Provide descriptive information. NAEP has served and continues to serve as an important and useful monitor of American students' academic performance and progress. NAEP is a useful barometer of student achievement.

- Serve an evaluative function. In this role, NAEP serves as an alarm bell for American schools. The establishment of performance levels for NAEP potentially allows policy makers and others to judge whether results are satisfactory or cause for alarm. They are meant to support inferences about the relationships among observed performance and externally defined performance goals.

- Provide interpretive information to help them better understand student achievement results and begin to investigate their policy implications. Policy makers and educators need an interpretive context for NAEP to support in-depth understanding of student achievement and to intelligently investigate the policy implications of NAEP results, particularly if performance is disappointing. In fact, as shown in Table 1-1 and elsewhere, in the absence of contextual data, some educators and policy makers go beyond the data and NAEP's design to lend their own interpretations to NAEP results.

Examination of the current NAEP program indicates that the program does a good job of meeting the descriptive needs of its users; NAEP performs the "barometer" function well. Currently, however, the evaluative and interpretive purposes are not well achieved by NAEP.

In speaking of the evaluative role for NAEP in 1989, past NAGB chairman Chester Finn (1989) explained that "NAEP has long had the potential not only to be descriptive but to say how good is good enough." Finn asserted that NAEP can serve an evaluative purpose. From their introduction, however, NAEP's standard-setting methods and results were roundly criticized (Stufflebeam et al., 1991; U.S. General Accounting Office, 1993; Koretz and Deibert, 1995/1996; Burstein et al., 1996; National Academy of Education, 1996; Linn, 1998). For a variety of reasons, evaluators have characterized NAEP standards as seriously flawed. Despite this, we note that the popularity of performance levels, and of the evaluative judgments they support, is undeniable. Many policy makers and educators remain hopeful that NAEP standards will provide a useful external referent for observed student performance and signal the need to celebrate or revamp educational efforts. As others have, we encourage NAGB to continue improving its recently assumed evaluative activities, so that NAEP can make reasonable and useful statements about the adequacy of U.S. students' performance. In Chapter 5, we discuss the evaluative function in detail and make recommendations for improving the way that NAEP performance standards are set.

Also not well met are the interpretive functions users ascribe to NAEP. Improvement of NAEP's interpretive uses can occur at two levels, one internal to NAEP's assessments of achievement and the other external to the overall NAEP program:

- Interpretive information about strengths and weaknesses in the knowledge and skills tested by NAEP can be obtained from more in-depth analyses of student responses within and across NAEP items and tasks than presently occurs. There appears to be considerable room for improvement in NAEP in supporting this level of interpretive activity. In Chapter 4 we discuss ways that framework and assessment development and reporting can evolve to provide interpretive information that supports better understanding of student achievement.

- Interpretive information about the system-, school-, and student-level factors that relate to student achievement can be provided by including NAEP in a broader, well-integrated system of education data collections. Within the context of NCES' data collections, there appears to be considerable need for improvement in data coordination to support this level of interpretive activity.

We devote the remainder of this chapter to a proposal for building and using a broader system of indicators to address many of the interpretive needs of NAEP's users. We argue for the availability of contextual data to help users better understand NAEP results and focus their thinking about potentially useful or informative next steps. Historically, NAEP has attempted to fulfill this need for contextual information by collecting data using student, teacher, and school background questionnaires on factors thought to be related to student achievement. However, as we have already discussed, these data generally are presented in paired-variable tables. In recent years, the length of the background questionnaires gradually has been reduced, in part because they have failed to capture policy makers' and educators' attention. We contend that the current NAEP student, teacher, and school background questionnaire results should not be the principal source of data to meet NAEP users' interpretive needs. We seek, therefore, to accomplish this second type of interpretive function without further burdening NAEP.

To this end, we next develop a conceptual and structural basis for a coordinated system of indicators for assessing educational progress, housed within NCES and including NAEP and other currently discrete, large-scale data collections. We argue for a system that (1) expands the conception of educational progress to include educational outcomes that go beyond academic achievement, (2) informs educational debate by raising awareness of the complexity of the educational system, and (3) provides a basis for hypothesis generation about the relationships among academic achievement and school, demographic, and family variables that can be tested by appropriate research models. For ease of reference in this chapter and throughout the report, we call the proposed system CSEI (the coordinated system of education indicators). We are not recommending this nomenclature for operational use by NCES but adopt it here for clarity and to streamline the text.

We foreshadow our discussion of the system by noting that much of the data we seek on student characteristics, teaching, learning, and assessment already reside at the U.S. Department of Education. The feasibility of the effort we propose relies on the department's ability to capitalize on potentially powerful synergies among current efforts in ways that enhance the usefulness of NAEP results and contribute to the knowledge base about American educational progress. Several of the current data collections could serve as important sources of contextual information about student achievement and signify educational progress in their own right. Among them are NCES's Schools and Staffing Survey, the Common Core of Data, the National Education Longitudinal Study, and upcoming longitudinal studies. Collectively, these surveys gather a wide range of data on students and schools, including demographic characteristics, enrollments, staffing levels, school revenues and expenditures, school organization and management, teacher preparation and qualifications, working conditions, teacher satisfaction, teacher quality, instructional practice, curriculum and instruction, parental involvement, and school safety.

Each year NCES compiles recent data on many of these variables from across several separate surveys and publishes them in a compendium, The Condition of Education (e.g., Smith et al., 1997). This volume serves as a valued source of information on education indicators (with approximately 60 indicators selected for inclusion in each volume). Our recommendation for creating a coordinated system of indicators was instigated in part by imagining the enhanced value of these indicators if the data collections from which they were drawn were coordinated so that cross-connections between datasets could be realized. For example, if in CSEI, collection of data on public elementary and secondary expenditures (currently collected in the Common Core of Data) and high school course-taking patterns (currently collected in High School Transcript Studies) were coordinated with collections of data on student achievement (such as NAEP), relationships among these (and other) variables could be explored and presented in future reports. A more comprehensive view of the inputs, processes, and outputs of American education would be the result.

Table 1-2 shows many of the current NCES data collections and notes their elements. The table suggests important commonality among the datasets; these correspondences among data elements, units of observation, and populations of inference should facilitate CSEI's development. We return to the discussion of these specific data collections later in the chapter.
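The correspondences among units of observation and populations of inference can be made concrete with a short sketch. The Python fragment below transcribes only those two rows of Table 1-2 and lists the collections that share a given code. The dictionary layout and the function name `collections_sharing` are illustrative assumptions and are not part of any NCES system.

```python
# Two design-element rows transcribed from Table 1-2. The data structure is
# an illustrative assumption. Note that the table reuses the code "S":
# students under "units", state under "inference".
TABLE_1_2 = {
    "NAEP":  {"units": {"S", "T", "A"},      "inference": {"N", "S", "G"}},
    "NELS":  {"units": {"S", "T", "A"},      "inference": {"N", "G"}},
    "ELS":   {"units": {"S", "A", "P"},      "inference": {"N", "G"}},
    "ECLS":  {"units": {"S", "T", "A", "P"}, "inference": {"N", "G"}},
    "TIMSS": {"units": {"S", "T", "A", "P"}, "inference": {"N"}},
    "CCD":   {"units": {"SC", "D", "ST"},    "inference": {"N", "S", "G"}},
    "PSUS":  {"units": {"SC"},               "inference": {"N"}},
    "SASS":  {"units": {"T", "A", "SC"},     "inference": {"N", "S", "G"}},
    "NHES":  {"units": {"H"},                "inference": {"N", "G"}},
}


def collections_sharing(field, code):
    """Return the collections whose `field` set contains `code`, sorted by name."""
    return sorted(name for name, row in TABLE_1_2.items() if code in row[field])


# Collections that support state-level inference (code "S" under "inference").
state_level = collections_sharing("inference", "S")
# Collections that observe teachers (code "T" under "units").
teacher_level = collections_sharing("units", "T")

print(state_level)
print(teacher_level)
```

A listing of this kind identifies only where coordination might begin; it says nothing about whether the samples, instruments, or schedules of the collections are compatible.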

PURPOSE AND USE OF INDICATOR SYSTEMS

It is difficult to conceive of a system of education indicators that does not assign a key role to measures of student achievement in informing the public about how well schools are fulfilling their role in a democratic society. NAEP must serve as a key indicator in the coordinated system of education indicators that we are recommending. In fact, much of this report is devoted to commentary on aspects of NAEP that are important to its becoming integral to a larger system of indicators of progress in American education.

Bryk and Hermanson (1993:455) warn, however, of the danger of focusing exclusively on "academic achievement and the processes instrumentally linked to it—while ignoring everything else." They say that such thinking implies unrealistic "segmentation in the organization of schools, their operations and their effects." As we do, they advocate for an indicator system that reflects the different, interrelated aims of schooling.

In 1988 the Hawkins-Stafford Elementary and Secondary School Improvement Amendment (P.L. 100-297) authorized the U.S. Department of Education, through NCES, to establish the Special Study Panel on Education Indicators. The panel called for the development of a system of education indicators that "respect[s] the complexity of the educational process and the internal operations of schools" (Special Study Panel on Education Indicators, 1991:21). During the course of its work, the panel proposed such a system; it included academic achievement and other learner outcomes, the quality of educational opportunity, and support for learning variables. At the culmination of its work, the group issued a report entitled Education Counts (Special Study Panel on Education Indicators, 1991). This report documents the panel's thinking about the development of an education indicator system and makes recommendations for improved federal collection of education data. In the report, the panel provided a conceptual framework for a system that includes, but goes beyond, student achievement data; identified relevant extant data sources; and cited gaps in currently available data and information. Their work provides important grounding for the efforts we propose here.

TABLE 1-2 Overview of Current NCES Data Collections

| Data and Design Elements | NAEP | NELS | ELS | ECLS | TIMSS | CCD | PSUS | SASS | NHES |
|---|---|---|---|---|---|---|---|---|---|
| Data Elements | | | | | | | | | |
| Student achievement | | | | | | | | | |
| Student background characteristics | | | | | | | | | |
| Home and community support for learning | | | | | | | | | |
| Standards and curricula | | | | | | | | | |
| Instructional practice and learning resources | | | | | | | | | |
| School organization/governance | | | | | | | | | |
| Teacher education and professional development | | | | | | | | | |
| Financial resources | | | | | | | | | |
| School climate | | | | | | | | | |
| Design Elements | | | | | | | | | |
| Type of design (CS=cross-sectional; L=longitudinal) | CS, L | L | L | L | CS | L | L | CS, L | CS |
| Periodicity (TBD=to be determined) | 2, 4, or 6 yrs. | 2-6 yrs. | TBD | TBD | TBD | Annual | Biennial | 2-5 yrs. | 2-3 yrs. |
| Unit of observation (S=students, T=teachers, A=administrators, P=parents, SC=schools, D=district, ST=states, H=households) | S, T, A | S, T, A | S, A, P | S, T, A, P | S, T, A, P | SC, D, ST | SC | T, A, SC | H |
| Data collection method (S=survey, R=record analysis, I=interview, V=video, C=case study, O=other) | S | S, R | S, O | S, O | S, R, V, C | S, R | S | S | I |
| Population of inference (N=national, S=state, G=demographic group) | N, S, G | N, G | N, G | N, G | N | N, S, G | N | N, S, G | N, G |

NOTE: NELS = National Education Longitudinal Study of 1988; ELS = Educational Longitudinal Study of 2002; ECLS = Early Childhood Longitudinal Study; TIMSS = Third International Mathematics and Science Study; CCD = Common Core of Data; PSUS = Private School Universe Survey; SASS = Schools and Staffing Survey; NHES = National Household Education Survey.

Functions of Social Indicator Systems

In an essay called "Historical and Political Considerations in Developing a National Indicator System," Shavelson (1987) explains that, in their typical conceptions, indicator systems chart the degree to which a system is meeting its goals; they are generally structured to be policy relevant and problem oriented. Shavelson observes that indicator systems historically have been heralded as a cure for many ills. Social indicator systems have been variously proposed as vehicles for setting goals and priorities (Council of Chief State School Officers, 1989), for evaluating educational initiatives (Porter, 1991), for developing balance sheets to gauge the cost-effectiveness of educational programs (Rivlin, 1973), for managing and holding schools accountable (Richards, 1988), and for suggesting policy levers that decision makers "can pull in order to improve student performance" (Odden, 1990:24).

Shavelson goes on to describe how enthusiasm for indicator systems has waxed and waned over time. Linn and Baker (1998) recently wrote about renewed attention to educational indicators and described how interest in their uses is rising. They explain that current proposals describe indicator systems as vehicles for communicating to parents, students, teachers, policy makers, and the public about the course of educational progress in hopes that the educational community can "work together to improve the impact of educational services for our students" (p. 1).

Shavelson (1987) and others remind us to be cautious, however. Shavelson asserts that social indicator systems are properly used to (1) provide a broad picture of the health of a system, (2) improve public policy making by giving social problems visibility and by making informed judgments possible, and (3) provide insight into changes in outcomes over time and possibly suggest policy options. Sheldon and Parke (1975) argue that social indicators can best be used to improve "our ability to state problems in a productive fashion, obtain clues as to promising lines of endeavor, and ask good questions." They and de Neufville (1975) state that social indicators contribute to policy making by providing decision makers with information about the inputs, processes, and outputs of the system and raising awareness of the complexity of the system and the interrelationships among components.

The earlier-mentioned Special Study Panel on Education Indicators (1991:13) observed that "indicators cannot, by themselves, identify causes or solutions and should not be used to draw conclusions without other evidence." That panel and others contend that indicator systems can help identify school outcomes, student groups, and relationships among achievement and other variables that deserve closer attention and stimulate initial discussions about possible solutions (Bryk and Hermanson, 1993; Burstein, 1980; National Research Council, 1993).

We agree. We seek a system that suggests relationships among student, school, and achievement variables and that stimulates democratic discussion and debate about American education. We believe that NAEP is currently too "decoupled from important research and policy issues" (Bohrnstedt, 1997:2) and that, housed in a broader system of education indicators, NAEP results can help drive and increase NAEP's relevance to policy research. Like Bohrnstedt (1997:10), we believe that a coordinated system will "provide a very fertile basis for hypothesis generation and the preliminary exploration of ideas about what works and doesn't in American education." It is our position that providing associative data about issues of concern to educators, policy makers, and the public will prompt more deliberate exploration and explanation of the interrelationships among achievement and educational variables. It is our hope that the system's products would be used to pose hypotheses about student achievement and test them, moving beyond observational to experimental research methods and using longitudinal designs.

We began this chapter by discussing interpretations of NAEP results by policy makers and others that exceed NAEP's data and the design used to generate them. We recognize that the system and products we propose here are likely to meet with similar treatment. We predict that some policy makers will use associative data from CSEI to tout their own initiatives, to argue for new educational practice, and to develop education policy. Our CSEI proposal is not intended as an argument for weak social science research or unwarranted inference. However, we start from the position that education policy based on imperfect empirical data is better than education policy with no empirical base. We believe that the benefits of documenting interrelationships among achievement and educational variables in ways that respect the complexity of the educational enterprise will outweigh its disadvantages.

Example Conceptions of Integrated Data Collections

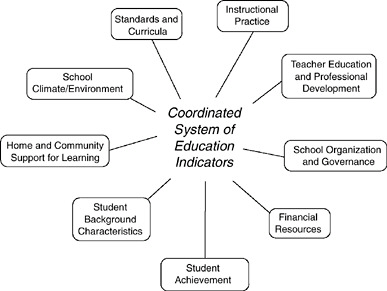

It is beyond our purview to recommend a conceptual model for CSEI, but Figure 1-1 shows a set of possible indicators that might be included within such

FIGURE 1-1 Possible elements in the proposed coordinated system of education indicators.

a system. The indicators are motivated by previous and current research and draw on the work of the Third International Mathematics and Science Study (Peak, 1996), the Reform Up Close project (Porter et al., 1993), RAND/UCLA's Validating National Curriculum Indicators project (Burstein et al., 1995), and the Council of Chief State School Officers' State Collaboratives on Assessment and Standards Project, as well as from the earlier-discussed Special Study Panel on Education Indicators (1991). The National Research Council and Institute of Medicine's work on Integrating Federal Statistics on Children (1995) and NCES's From Data to Information (Hoachlander et al., 1996) project also help suggest a framework for CSEI.

For example, in his ongoing examination of schooling, Porter (1996) is studying learning and its correlates by focusing on achievement measures, teacher background variables, student background variables, instructional practice indicators, and school climate variables. Porter and his colleagues are finding positive relationships between reform-relevant instructional practice and student achievement. Porter's earlier work with the Reform Up Close project (Porter et al., 1993) examined student work in relation to curriculum, instructional practices, and learning resources (technology, text, manipulatives, and other instructional equipment) and found that educational input and process indicators provided a useful context for understanding student achievement and helped to support policy-relevant statements about schooling.

As earlier noted, the NCES Special Study Panel on Education Indicators proposed an indicator system that focuses on issues of "enduring educational importance" (1991:9). They described a system that includes learner outcomes, including academic achievement measurable by traditional and alternative measures, attitudes, and dispositions; the quality of educational opportunity, including learning opportunities, teacher preparedness, school organization and governance, and other school resources; and support for learning variables, including family support, community support, and financial investments.

In 1995 NCES convened a workshop and later published a proceedings volume entitled From Data to Information (Hoachlander et al., 1996). The volume title serves as a mantra for NCES's long-range planning. Conference organizers sought to (p. 3):

stimulate dialogue about future developments in the fields of education, statistical methodology, and technology, as well as to explore the implications of such developments for the nation's education statistics program … and continue as a key player in providing information to the American public, policy makers, education researchers, and educators nationwide.

Participants provided suggestions for tracking educational reform to the year 2010; measuring opportunity to learn, teacher education, and staff development; enhancing survey and experimental designs to include video and other qualitative designs; and effecting linkages to administrative records for research. The work of NCES staff and workshop participants provides important leads for designing CSEI.

Currently, within NCES itself as part of the Schools and Staffing Survey Program, researchers propose to track what is happening in the nation's schools around issues of school reform by collecting information on teacher capacity, school capacity, and system supports (National Center for Education Statistics, 1997). They will examine teacher capacity by documenting teacher quality, teacher career paths, teacher professional development, and teacher instructional practices. They will address school capacity by examining school organization and management, curriculum, and instruction—to include data on course offerings, instructional support, instructional organization and practices, school resources, parental involvement, and school safety and discipline. At this writing, NCES staff are considering the inclusion of student achievement data in the system, thus creating an initial version of a coordinated system of indicators, one function of which would be to better understand factors that influence patterns of student achievement.

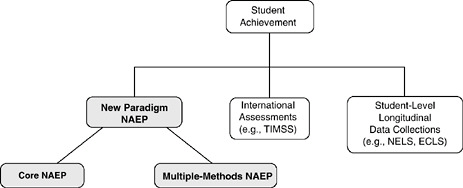

With these efforts as a guide and to illustrate, but not prescribe, a conceptual model for CSEI, we refer to Figure 1-2, which shows possible elements of the system and shows the role of student achievement measures in CSEI. Figure 1-2 suggests the types and range of indicators that might be included in a coordinated system.

FIGURE 1-2 Measures of student achievement within the proposed coordinated system.

NOTE: TIMSS = Third International Mathematics and Science Study; NELS = National Education Longitudinal Study; ECLS = Early Childhood Longitudinal Study.

POTENTIAL VALUE OF A COORDINATED SYSTEM OF INDICATORS

Two studies illustrate the value of embedding measures of student achievement within a broader range of educational measures: the Third International Mathematics and Science Study (TIMSS; Peak, 1996) and the secondary data analysis of David Grissmer and Ann Flanagan (1997).

TIMSS

TIMSS is one example of a data system that provides information on student achievement and educational variables (Peak, 1996). It was designed to describe student performance in mathematics and science and to promote understanding of the educational context in which learning and achievement take place.

The TIMSS dataset for grade 8 includes a wide variety of data about student achievement, curriculum and instruction, education policy, and teachers' and students' lives. The grade 8 study examined multiple levels of the education system using mixed methods of data collection. TIMSS researchers collected and analyzed data from student tests, student and teacher questionnaires, curriculum and textbook analyses, videotapes of classroom instruction, and case studies on policy topics.

TIMSS researchers posed a series of questions and then designed data collections to obtain the information needed to help answer those questions. TIMSS researchers designed the study to learn:

- how well students in the United States perform in mathematics and science,

- how U.S. curricula and expectations for student learning compare with those of other nations,

- how the quality of classroom instruction in the United States compares with that in other countries,

- how the level of support for U.S. teachers' efforts compares with that received by their colleagues in other nations, and

- how students in the United States approach their studies as compared with their international counterparts.

TIMSS results indicated that the United States is not among the top-performing nations of the world in mathematics and science (Peak, 1996). At the eighth-grade level, the U.S. performance was somewhat below the international average in mathematics and slightly above it in science. Furthermore, there appeared to be little improvement in U.S. students' international standing in mathematics and science over the past 30 years.

Classroom-, school-, and system-level data collections enabled TIMSS researchers to suggest a number of factors potentially associated with American students' lackluster performance that bear further investigation. The content of U.S. eighth-grade mathematics classes was found to be less challenging than that of other countries. Topic coverage was found to be less focused in U.S. eighth-grade mathematics classes than in classrooms of other nations. Although most U.S. teachers report familiarity with recommendations for reform of the discipline, only a few apply the key tenets in their teaching.

The TIMSS research examined education policy and practice broadly and used this information to describe American education and students' achievement and to frame hypotheses about strong and weak academic performance. This work has important implications for NAEP and CSEI, since it illustrates how different and complementary research methods and data can be brought to bear on important education policy questions.

Recent heated debate about the meaning of the disappointing performance of high school students on TIMSS stands in contrast to earlier discussion of the eighth-grade data. Many explanations have been offered for the poor showing of American twelfth graders: some analysts have attributed results to shallow and variable curricula, others to inadequate teacher education and professional development, others to students' insufficient motivation to perform, and still others to the large numbers of nonnative English-speaking students in America's schools. In the absence of the data collected for the eighth-grade study, users have few leads about the meaning of the high school data and face few constraints in assigning blame for disappointing results. The eighth-grade study provides an important precedent for the design, development, and operation of a coordinated system, albeit a smaller-scale system than the one we envision.

Linkages of NAEP and Other Data Sources

Another study that suggests the value of coordinated data systems was conducted by David Grissmer and Ann Flanagan (1997) at RAND. They combined information from several federal databases to help explore student performance data, define problem areas for closer examination, and stimulate discussion of possible solutions.

Grissmer and Flanagan probed oft-cited NAEP data about the improved academic performance of U.S. minority students from 1971 through 1990. Data from trend NAEP showed that achievement gains for black and Hispanic students were greater than those for non-Hispanic white students through the 1970s and 1980s. Policy makers, the press, and researchers attributed these gains to various sources, including expansion of social welfare programs, increased public investment in education, increased allocations to schools in economically disadvantaged communities, and changes in family characteristics (e.g., poverty levels, number of adults in the home, employment status of mothers, family size, language dominance).

Grissmer and Flanagan investigated potential sources of improved performance by combining NAEP information with census data and with information from the National Longitudinal Survey of Youth and the National Education Longitudinal Study. They studied academic gains in relation to data on changing family characteristics, changed education and social policies, and increased investment. Like other analysts, Grissmer and Flanagan found a strong relationship between family variables and academic performance. Most important, however, they found that class size and student/teacher ratio variables bore a lesser but still strong relationship to academic performance, a finding that ran counter to earlier, much publicized research. The smaller class sizes were funded by compensatory education monies available to minority students and schools during the time periods studied.

By creating links between NAEP and other data sources, Grissmer and Flanagan were able to suggest issues that need to be further probed by researchers, perhaps through natural experiments or randomized studies. Findings from research on possible manipulable sources of differences in achievement on NAEP (i.e., class size) would make an important contribution to education policy.
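The mechanics of linking separate collections can be illustrated with a minimal sketch. It assumes that each source can be reduced to records that share identifying fields (here a hypothetical `state` and `year`). The function name `link`, the field names, and every value shown are placeholders invented for illustration; none is a NAEP, census, or survey result, and the sketch does not represent the method used by Grissmer and Flanagan.

```python
# Hypothetical, made-up records standing in for three separate collections.
achievement = [
    {"state": "A", "year": 1990, "mean_score": 250.0},
    {"state": "B", "year": 1990, "mean_score": 262.0},
]
family = [
    {"state": "A", "year": 1990, "pct_poverty": 21.0},
    {"state": "B", "year": 1990, "pct_poverty": 12.0},
    {"state": "C", "year": 1990, "pct_poverty": 15.0},
]
schooling = [
    {"state": "A", "year": 1990, "pupil_teacher_ratio": 19.5},
    {"state": "B", "year": 1990, "pupil_teacher_ratio": 16.0},
]


def link(key_fields, *sources):
    """Inner-join lists of dict records on the shared key fields."""
    merged = None
    for records in sources:
        # Index this source by its key tuple, e.g. ("A", 1990).
        index = {tuple(rec[k] for k in key_fields): rec for rec in records}
        if merged is None:
            merged = {key: dict(rec) for key, rec in index.items()}
        else:
            # Keep only keys present in every source seen so far.
            merged = {key: {**merged[key], **index[key]}
                      for key in merged if key in index}
    merged = merged or {}
    return [merged[key] for key in sorted(merged)]


linked = link(("state", "year"), achievement, family, schooling)
for row in linked:
    print(row)
```

Because the join keeps only units present in every source, state "C" drops out of the linked file. A linked file of this kind yields associative data only; it can suggest relationships for closer study but cannot by itself establish cause and effect.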

DESIGNING AND SUPPORTING A COORDINATED SYSTEM

The pressures to satisfy many audiences and many purposes are not unique to NAEP but characterize the work of the U.S. Department of Education and, in particular, the National Center for Education Statistics. Over the last 15 years, the department and NCES have responded positively and aggressively to requests for better information about the condition of education. NCES has designed and conducted data collections on curriculum and instruction, school organization and governance, student achievement, school finance, and other aspects of schooling.

Historically and currently, however, the data collections exist as discrete entities, ignoring opportunities to reduce resource and response burden and enhance value and usefulness through coordinated sampling, instrumentation, database development, and analysis. In fact, these data collections could serve as important sources of contextual and associative data for NAEP and provide more telling information about educational performance. CSEI, the coordinated system of education indicators we envision, should:

- address multiple levels of the education system, with data collected at the system, school and student levels,

- include measures of student achievement as a critical component,

- rely on mixed methods, including surveys, interviews, observations, logs, and samples of teacher and student work, and

- forge links between existing data collections to increase efficiency.

We discuss each of these features in turn.

Addressing Multiple Levels of the Education System

CSEI should include sampling, data collection, analysis, and reporting for multiple levels of the education system, including:

- System level. We envision a data system that produces national and state profiles of student achievement and other educational inputs, practices, and outcomes on a trend and cross-sectional basis. Data on school finance and governance, academic standards and curriculum, learning resources, enrollment and inclusion patterns, teacher professional development, and other schooling and student population variables would help describe American education and reveal general trends and associations. Many of these data are already resident at NCES.

- School level. We envision a system that supports closer examination of the school and classroom practices that covary with learning or signify educational progress in their own right. This should be a multifaceted program that can take advantage of ideas and instrumentation already developed by NCES and the Office of Educational Research and Improvement. Work in this area would range from existing NCES data collections to more innovative data collections that use emerging technologies to describe schools and classrooms dynamically. Components of TIMSS and of several earlier-mentioned initiatives (e.g., the RAND/UCLA Validating National Curriculum Indicators project, Burstein et al., 1995; Council of Chief State School Officers' SCASS project, Porter, 1996), including video samples and analyses of data extracted from naturally occurring student and teacher work, also suggest possibilities for this investigation.

- Student level. We envision a program of longitudinal data collection efforts that support inferences about developmental patterns of achievement and learning variables. Examples of current NCES efforts include the High School and Beyond Survey and the National Education Longitudinal Study. NCES recently explored links between NAEP and the longitudinal studies, but links are not yet in place. The longitudinal designs provide better support for inferences about sources of achievement gains and decrements. Because longitudinal designs follow individual students over time, they provide a better, although not unambiguous, basis for tracing cause and effect.

Integrating Measures of Student Achievement

Measures of student achievement should be a critical and integral component of CSEI. As we view the system, the measures of student achievement should include:

- International assessments, such as TIMSS. These capture natural variation in schooling practices and support for learning outside the school, thus providing a comparative base for U.S. performance and making the associative data more informative.

- Longitudinal measures, such as the National Education Longitudinal Study and the new Early Childhood Longitudinal Study. These collect information from individual students over time and, again, support stronger inferences about the relationship between education and achievement.

- New paradigm NAEP. In CSEI, we conceptualize NAEP as a suite of national and state-level student achievement measures. We propose that NAEP's student achievement measures be reconfigured in some significant ways. In our view, the NAEP process of assessment development should be based on a new paradigm, one that does not assume that all (or most) of the measures of achievement should be configured as large-scale assessments and in which the assessment method is selected to match both the knowledge and skills of interest and the testing purpose. In this new paradigm NAEP, we envision assessment frameworks that define broader achievement domains than those described in the current main NAEP frameworks. This broader conceptualization of achievement would include the kinds of disciplinary and cross-disciplinary knowledge, skills, and understanding that are increasingly expected to be outcomes of schooling, such as solving complex problems in a subject area, using technological tools to solve a multidisciplinary problem, and planning and carrying out tasks in a group situation. We discuss this conceptualization of student achievement in more detail in Chapter 4.

This new paradigm NAEP would continue to rely, in part, on large-scale survey assessments for the subjects assessed frequently enough to establish trend lines. Throughout this report we refer to this trend component of new paradigm NAEP as core NAEP. Our conception of core NAEP in CSEI is consistent with many features of the current main NAEP program—based on subject-area frameworks, measured by large-scale survey methods, and reported in relation to performance standards. We discuss core NAEP more fully in Chapter 2 and elsewhere in the report.

New paradigm NAEP would also address other aspects of achievement, using assessment methods tightly matched to assessment purpose. For example, in order to measure achievement in NAEP subjects for which only some students have had instruction, such as economics, smaller-scale surveys targeted only to those students who have had specified levels of instruction would be administered. For assessing aspects of the subject-area frameworks that are not well assessed by large-scale survey assessments, such as performing investigations in science and assessing the broader, cross-disciplinary aspects of achievement described above, collections of students' classroom work or videotapes of students' performances could be analyzed. Throughout this report, we refer to NAEP designs that go beyond large-scale survey assessments to targeted samples and differing methods as multiple-methods NAEP. Multiple-methods NAEP is described in more detail in Chapter 4.

The major components of the measures of student achievement within CSEI are presented in Figure 1-3. The rationale and more detailed descriptions of new paradigm NAEP (both core NAEP and multiple-methods NAEP) are addressed in subsequent chapters of this report.

Relying on Mixed Methods of Data Collection

Student achievement measures should rely on survey and other measurement methods; the same is true for schooling variables. There are important system-, school-, and classroom-level factors not measurable by large-scale survey methods.

FIGURE 1-3 Measures of student achievement, including new paradigm NAEP. NOTE: TIMSS = Third International Mathematics and Science Study; NELS = National Education Longitudinal Study; ECLS = Early Childhood Longitudinal Study.

Like the TIMSS researchers, we contend that the methods used to generate data for CSEI should be well suited to what one intends to measure and to the units of observation; in addition to survey data collections, the system's architecture should rely on interviews, videotaped observations, logs, and other samples of teacher and student work. If used to examine student performance and to document school and classroom practices, we believe nonsurvey methods can help illuminate student achievement results and portray education more comprehensively. Next, we provide examples of relevant variables and varied methods to help illustrate our view.

Teacher logs, checklists, or weekly reports, for instance, might be used to gather data on the knowledge and skills that are presented to students in the classroom. These methods could be used to examine topic coverage, the time and emphasis given to individual topics, targeted mastery levels, prior knowledge, and teacher expertise. Logs also could be used to document instructional practices and learning resources. Information about presentation formats, student activities, homework, and the use of technology and other instructional aids could be gathered and used to describe classroom practice as an end in itself and to better explore student performance results.

Observations, whether recorded by primary observation reports or on videotape, also could provide important information about school and classroom practice. These could be used to examine many of the same areas addressed by teacher logs. The resulting data would differ in that they would represent actual practice, rather than teachers' perceptions of it. As intimated earlier, videotaped observation methods were successfully used in TIMSS (Peak, 1996). TIMSS researchers developed schema for coding and analyzing observational data (Stigler and Hiebert, 1997). These strategies provide important models for CSEI.

Samples of student and teacher work or classroom artifacts could also be collected to examine and enrich the portrayal of lesson content and student activity. Analyses of textbooks, curriculum guides, lesson plans, assignments, tests, and student projects and portfolios could be conducted to cross-check content and obtain a clearer picture of students' classroom experiences. Sampling intervals would need to be determined; coding schemes would be needed. Classroom artifacts might be collected in conjunction with observations or interviews.

Interviews of administrators, teachers, and students could be used to collect information about teaching and learning and improve understanding of student achievement results. Again, interviews could be used to gather data about curriculum, instructional practice, educational resources, school organization and governance, teacher professional development, and student performance. Interviews are helpful in learning about topics for which terminology differs widely, and in probing ideas further and gathering illustrations in ways not possible using forced-choice formats.

In the volume of research papers that accompanies this report, James Stigler and Michelle Perry (1999) discuss these methods and describe their advantages and disadvantages, logistical hurdles and costs, and strategies for integrating nonsurvey-based methods into a coordinated indicator system.

Making System Design Efficient

As we noted earlier, the design of an efficient and informative system of indicators will rely on the U.S. Department of Education's ability to capitalize on potentially powerful synergies among its discrete data collection efforts. Several recent NCES initiatives provide a good foundation for the coordinated system. Some were previously mentioned, including the appointment of the NCES Special Study Panel on Education Indicators (1991), work on the Third International Mathematics and Science Study (1995-1998), and the NCES-sponsored workshop entitled From Data to Information (Hoachlander et al., 1996).

From Data to Information provides important direction for the system we propose. Conference organizers sought to "consider more careful strategies that will permit integrated analysis of the interrelationships among education inputs, processes, and outcomes" (Hoachlander et al., 1996:1-3). They noted that, although existing surveys do an admirable job of providing nationally representative data on important education issues, they do not support analyses that "might increase knowledge about what works and why" (p. 1-3). Participants laid out three objectives; they said NCES should "(1) expand the amount and type of data it collects, (2) adopt a wider range of data collection and analytic methods, and (3) function within the tight resource constraints that are certain to affect almost all federal agencies" (p. 1-3). During the conference, speakers and NCES staff provided suggestions for tracking educational reform to the year 2010; measuring opportunity to learn, teacher education, and staff development; enhancing survey and experimental design to include video and other qualitative designs; and making linkages to administrative records for research. They challenged NCES to transform "quantitative facts about education into knowledge useful to policy makers, researchers, practitioners, and the general public" (p. 1-24).

Building on this and other NCES efforts, several analysts have discussed the integration of education datasets and documented factors that will help or inhibit data combination in the U.S. Department of Education; here, we describe the work of Hilton (1992) and Boruch and Terhanian (1999). These analysts detailed the purposes of varied data collections, the methods employed, sampling strategies used, the units of observation and data collection, the levels of analysis and inference supported by the datasets, the time of data collections during the school year, the periodicity of the efforts, and types of designs (cross-sectional or longitudinal) employed.

In 1992 Hilton conducted research for the National Science Foundation that examined the feasibility of combining different sources of statistical information to produce a "comprehensive, unified database" of science indicators for the United States. His goal was to capitalize on extant data on the education of scientists, mathematicians, and engineers. Hilton reviewed 24 education statistics databases. Early in his work, he determined that eight databases potentially could be combined to characterize the nature and quality of scientific training; these included NCES's National Education Longitudinal Study (NELS) of 1972 and 1988, the Equality of Opportunity Surveys, cross-sectional data on tests like the SAT, and NAEP.

Hilton eventually decided that links could not be forged to create a comprehensive science database. Factors preventing data combination, he stated, were a paucity of common variables across datasets; differences between surveys in the way variables like socioeconomic status and race and ethnicity are operationally defined; differences in sampling designs, measurement methods, and survey administration procedures; and inattention to the use of comparable conventions across datasets to enable linking.

Starting with Hilton's analyses, Robert Boruch and George Terhanian examined past linking efforts and suggested a hierarchical model for effecting future links. Their paper, "Putting Datasets Together: Linking NCES Surveys to One Another and to Data Sets from Other Sources" (Boruch and Terhanian, 1999), appears in the volume that accompanies this report. They laid out a conceptual framework for data combination that calls for designating a primary dataset and making intended links by augmenting the primary data.

Boruch and Terhanian prompt designers to consider respondents from the primary data collection to be the primary sample and to consider samples from the same population, but from other data collections, as augmentations to the main sample. Similarly, they suggest that variables from secondary datasets should augment those from the primary data collection; they cite information derived from students' high school transcripts as an example of augmentation data. They discuss appending additional time panels for the primary sample, adding data from relatives of primary sample members, adding data from different levels (aggregate or nested) of the education system, enriching the primary dataset with data from different measurement modes (video surveys, for example), adding a new population to the dataset, and replicating the primary sample dataset with a sample of different or the same population using identical measures.
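The augmentation idea can be illustrated with a small sketch. It is only an illustration under stated assumptions, not the procedure Boruch and Terhanian propose: the records, identifiers, and field names (`student_id`, `school_id`, `math_credits`, `enrollment`) are hypothetical, and the helper `augment` is an invented name. It keeps every member of the primary sample and attaches variables from a student-level secondary source (transcript-like data) and from a school-level source.

```python
# Hypothetical records; all identifiers and field names are illustrative only.
primary = [
    {"student_id": "S1", "school_id": "A", "math_score": 281},
    {"student_id": "S2", "school_id": "A", "math_score": 265},
    {"student_id": "S3", "school_id": "B", "math_score": 240},
]

# Secondary source at the student level (e.g., transcript-derived variables).
transcripts = {
    "S1": {"math_credits": 4.0},
    "S3": {"math_credits": 2.5},
}

# Secondary source at a higher (nested) level of the system: the school.
schools = {
    "A": {"enrollment": 850},
    "B": {"enrollment": 420},
}


def augment(primary_rows, student_level, school_level):
    """Attach secondary variables to the primary sample (a left join).

    Every primary record is kept. A variable that a secondary source does
    not supply for a record is set to None, so coverage gaps stay visible.
    """
    student_vars = sorted({k for v in student_level.values() for k in v})
    school_vars = sorted({k for v in school_level.values() for k in v})
    augmented_rows = []
    for row in primary_rows:
        merged = dict(row)  # copy so the primary data are left unchanged
        from_student = student_level.get(row["student_id"], {})
        from_school = school_level.get(row["school_id"], {})
        for name in student_vars:
            merged[name] = from_student.get(name)
        for name in school_vars:
            merged[name] = from_school.get(name)
        augmented_rows.append(merged)
    return augmented_rows


augmented = augment(primary, transcripts, schools)
```

Treating the primary sample as fixed and filling unmatched values with `None` is one design choice among several; it makes the problem of missing data across datasets explicit rather than silently dropping cases.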

The authors also discuss four NCES datasets that are likely candidates for linkage: the Schools and Staffing Survey (SASS), the National Education Longitudinal Study of 1988 (NELS:88), the Common Core of Data (CCD), and NAEP. They begin by stating that SASS, NELS:88, CCD, and NAEP should be linkable at the district level; and that SASS, NELS:88, and CCD are linkable at the school level. They conclude their examination, however, by stating that, although not incompatible, the surveys do not fit together nicely like pieces of an interesting "education puzzle." They state that, in some cases, for example, elements from one dataset have to be combined to match elements in another; in others, missing data in one dataset limit the number of cases matching to a second. They also cite as an impediment to linking the length of time taken to compile and make available individual NCES datasets.

Boruch and Terhanian suggest that a mapping of variables (and operational definitions) be developed across datasets to make possible linkages obvious and to suggest potentially useful standardization for future data collections. They suggest that NCES adopt a survey design strategy that fosters linkages, and they call for pilot studies to test useful strategies.
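A mapping of this kind might look roughly like the following sketch. Everything in it is assumed for illustration: the dataset labels (`SURVEY_A`, `SURVEY_B`), the variable names, and the definitions are invented and do not describe any actual NCES file, and `linkage_report` is a hypothetical helper. The sketch records each construct's variable name and operational definition per dataset, then sorts constructs into those that appear directly linkable, those that would need harmonization, and those missing from one dataset.

```python
# Hypothetical crosswalk:
# construct -> {dataset label: (variable name, operational definition)}
crosswalk = {
    "school_id": {
        "SURVEY_A": ("SCHID", "state-assigned school code"),
        "SURVEY_B": ("school_code", "state-assigned school code"),
    },
    "socioeconomic_status": {
        "SURVEY_A": ("SES", "composite of parent education, occupation, income"),
        "SURVEY_B": ("lunch_elig", "free or reduced-price lunch eligibility"),
    },
    "teacher_experience": {
        "SURVEY_A": ("YRSTEACH", "years of full-time teaching"),
    },
}


def linkage_report(mapping, left, right):
    """Classify each construct by how readily two datasets can be linked on it."""
    result = {"linkable": [], "needs_harmonization": [], "missing": []}
    for construct, entries in sorted(mapping.items()):
        if left not in entries or right not in entries:
            # One of the datasets does not measure this construct at all.
            result["missing"].append(construct)
        elif entries[left][1] == entries[right][1]:
            # Same operational definition, even if the variable names differ.
            result["linkable"].append(construct)
        else:
            # Both measure the construct, but under different definitions.
            result["needs_harmonization"].append(construct)
    return result


report = linkage_report(crosswalk, "SURVEY_A", "SURVEY_B")
```

Comparing definitions by exact string match is a deliberate simplification; in practice, judging whether two operational definitions are equivalent would require expert review.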

We extend their call for pilot studies by suggesting that studies focus on the ways that the sampling schemes, construct definitions, instrumentation, database structures, and reporting mechanisms of individual databases can capitalize on the strengths of the other data collections. Once developed, CSEI should change so that its structure, links, and components are informed and improved by experience and the system's findings over time. Better understanding and analysis of the data that are collected also should lead to improved measurement and database design. Domain and construct definitions, sampling constraints, data collection designs, instrumentation, accommodations, analysis procedures, and reporting models also should improve as information from the field and from large-scale survey data collections suggest questions of interest for small-scale observational or experimental studies; knowledge derived from the smaller studies should be funneled back into the large-scale data collections.

PLANNING AND MANAGING THE SYSTEM

The development and implementation of CSEI call for careful planning and consideration of alternatives. At a minimum, development of the system's conceptual and structural framework calls for:

- the conduct of a feasibility study to determine likely costs for development, implementation, maintenance, and management of the coordinated system,
- development of a conceptual framework for the system that delineates issue areas and specifies the levels of the education system and educational performance that should be characterized,
- specification of key variables that should be tracked,
- identification of data elements already resident at NCES and the U.S. Department of Education,
- for these elements, analysis of factors that aid or complicate linking, including similarities and differences in data collection objectives, sample definition, variable definitions, measurement methods, periodicity, confidentiality conventions, and design,
- identification of data elements not collected by NCES or the U.S. Department of Education and determination of other data sources (other government statistical agencies or new data collections) with development of domain definitions for relevant variables not already measured,
- specification of (and, if necessary, research about) measurement methods for the new schooling variables,
- development of a plan for effecting the links,
- development of a plan for organizing and housing the data,
- specification of timelines for data linking and data availability,
- development of a plan for reporting the data, and
- design of mechanisms for revisiting, reviewing, and strengthening the system.

The organizational responsibility for planning and conducting this work should be accorded to NCES. This effort is consistent with the agency's congressional charter, which calls for the collection and reporting of "statistics and information showing the condition and progress of education in the United States and other nations in order to promote and accelerate the improvement of American education" (National Education Statistics Act of 1994, 20 U.S.C. 9001 Section 402b).

In conducting this work, NCES should seek advice from several research and practitioner groups, including the:

- Institute on Student Achievement, Curriculum, and Assessment,
- National Center for Research on Evaluation, Standards, and Student Testing,
- National Center for Improving Student Learning and Achievement in Mathematics and Science,
- National Center for the Improvement of Early Reading Achievement,
- National Research and Development Center on English Learning and Achievement,
- National Center for History in the Schools,
- national disciplinary organizations, such as the National Council of Teachers of Mathematics, the International Reading Association, the National Science Teachers Association, the National Council of Teachers of English, and the National Council for the Social Studies,
- Institute on Educational Governance, Finance, Policy Making, and Management,
- National Center for Research on the Organization and Restructuring of Schools,
- National Center for the Study of Teaching and Policy,
- National Center on Increasing the Effectiveness of State and Local Education Reform Efforts,
- National Association of State Test Directors, the Council of Chief State School Officers, and the Council of the Great City Schools, and
- NAEP's subject-area standing committees and NCES's Advisory Council on Education Statistics.

The development and implementation of CSEI will be challenging. These research and practitioner groups can help expand deliberation about the conceptual and structural framework of the system. They can help evaluate and refine system plans and operations.