The Potential Role of GPS/MET Observations in Operational Numerical Weather Prediction

Ronald McPherson, Eugenia Kalnay, Stephen Lord

National Centers for Environmental Prediction, National Weather Service

INTRODUCTION

Operational numerical weather prediction (NWP) applies the laws of physics, which govern the behavior of the atmosphere, to the practical problem of weather prediction. In mathematical terms NWP is an initial value problem, in that the physical laws are used to calculate the temporal evolution of the physical state of the atmosphere from an estimate of the initial atmospheric conditions.

Determining this “initial state” of the atmosphere is one of the three central problems in operational NWP. It requires observations of wind, temperature, pressure and humidity through the depth of the atmosphere, plus observations of some characteristics of the earth's surface such as snow cover, wetness, vegetation, and sea-surface temperature. These observations are presently obtained by a mixture of observing techniques that have evolved in a largely unplanned manner over the last five or six decades. For forecast projections longer than three or four days the complete global atmosphere must be sampled, and this has led to a considerable emphasis on space-based remote sensing techniques. The second section of this essay describes briefly the current observing system.

A second requirement for determining the initial state of the atmosphere is a system for assimilating disparate observations from this mixture of observing systems into a coherent, dynamically consistent, digital description of the atmosphere. Originally concerned merely with spatial interpolation of radiosonde data to a grid of regularly spaced points, modern four-dimensional data assimilation (4DDA) systems now seek to blend observations of many quantities from observing systems with widely differing error characteristics, with a highly accurate background (or “first guess”) estimate of the state of the atmosphere. Importantly, modern 4DDA systems are capable of ingesting observed quantities such as radiances or radar backscatter cross-sections rather than converting these quantities to more familiar meteorological variables such as temperature and wind. The third section of this paper discusses characteristics of 4DDA systems that are relevant for the use of GPS/MET data.

From time to time, new observing technologies appear, offering either new data (to fill gaps), or better data (more accurate), or cheaper data. Several such possibilities are now, or soon will be, available. Governments that operate the existing, composite observing system are under enormous financial pressure to reduce the costs of observing the atmosphere. Therefore, the U.S. has recently undertaken a systematic redesign of the North American Observing System (NAOS), with the intent of better observing at less cost. Several new technologies will be considered in this redesign effort, which will last for several years. One of those new technologies, using radio occultation techniques in connection with the Global Positioning System, is the subject of this essay. The last section of this paper addresses the potential usefulness of atmospheric refractivity inferred from these techniques in operational numerical weather prediction.

Vertical profile observations of the mass field (i.e., temperature) are obtained from two principal sources: balloon-borne radiosondes flown twice daily from about 600 stations world-wide, and passive radiometric measurements from satellite platforms. The former are quite accurate, with standard errors of 0.5-0.8 °C, have excellent vertical resolution, and have for many years been the backbone of the global observing system. On the other hand, radiosonde stations are mostly located on northern hemisphere continents, which provides very uneven spatial coverage, and are expensive to operate. Satellite temperature observations are less accurate, with standard errors of 2 °C, and have poorer vertical resolution, but offer excellent spatial coverage. Current satellite systems are also extremely expensive.

Wind profiles are available from radiosondes, from ground-based radar wind profilers, Doppler weather-surveillance radars, and increasingly from wide-bodied jet aircraft on ascent and descent near airports. Single-level wind observations are obtained from aircraft, and by tracking cloud and moisture patterns in geostationary satellite imagery. With the exception of the satellite-derived winds, these observations are accurate to within about 2 m/s; satellite-derived winds are accurate to about 4-5 m/s. Importantly, essentially no wind profile information is available over the world's oceans. This is the greatest single deficiency in the present global observing system for operational NWP.

Moisture profiles are available from radiosondes, and vertically integrated moisture measurements are available from satellites. New technology may soon make moisture profiles available from aircraft on ascent and descent, but these profiles and radiosonde humidity measurements are, again, restricted to land areas. Thus, the second most serious deficiency in the current observing system is the absence of moisture profiles over the oceans and over land with sufficient horizontal, vertical, and temporal resolution. This is especially important, indeed crucial, for short-period precipitation forecasting.

Until October 1995, data assimilation systems in use at operational NWP centers around the world required that satellite measurements of atmospheric radiance be converted to temperature profiles before they could be ingested. This process, called a “retrieval”, introduces errors and uncertainties into the retrieved profile. These errors tend to be spatially correlated, which greatly reduces their utility in operational NWP.

The U.S. National Centers for Environmental Prediction (NCEP) introduced a new data assimilation system in October 1995 that is based on a three-dimensional variational technique for satellite radiances. This new formulation permits radiance measurements to be ingested directly into the data assimilation, thereby bypassing the retrieval process. Other operational centers are developing similar formulations.

In previous assimilation methods, as in the present one, the analysis was obtained by minimizing its distance to both the first guess (background field) and to the observations. However, if the observations (e.g., satellite radiances) were different from the model variables (e.g., temperature and moisture), the observations were first converted into model variables through a “retrieval process”. Since satellite observations are not sufficient to determine a unique atmospheric profile, this is an ill-posed problem which requires additional information such as a background field (e.g., climatology). The accuracy of the retrievals is compromised by these additional assumptions, the error characteristics are less clearly defined, and quality control is less effective.

Within the 3-D variational analysis, in which the observed radiances are compared with those that would be observed from a model atmosphere, we do not need to introduce any additional assumptions. It is a process similar to performing 3-D retrievals of all satellite data instead of the normal 1-D (column-wise) retrievals. Furthermore, it takes full advantage of the accurate model-generated first guess and all additional observations (e.g. radiosondes) simultaneously. The 3-D variational analysis with radiances produced improvements in five-day forecasts for the Northern Hemisphere equivalent to 40% of the total improvement of NCEP from 1984 to 1995. The improvement in Southern Hemisphere forecasts is even greater.
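As a minimal illustration of the variational idea described above, the sketch below analyzes a single scalar (for instance a temperature) from one background value and one direct observation. The quadratic cost function, the function names `cost` and `analysis_scalar`, and the numbers used are illustrative assumptions in the usual textbook form; they are not taken from the NCEP system.

```python
def cost(x, xb, y, sigma_b, sigma_o):
    """Squared distance of x to the background xb and to the observation y,
    each scaled by its assumed error standard deviation."""
    return ((x - xb) / sigma_b) ** 2 + ((x - y) / sigma_o) ** 2


def analysis_scalar(xb, y, sigma_b, sigma_o):
    """Value of x that minimizes cost(): the inverse-variance weighted mean."""
    wb = 1.0 / sigma_b ** 2
    wo = 1.0 / sigma_o ** 2
    return (wb * xb + wo * y) / (wb + wo)


# Hypothetical numbers: background 250.0 K (error 1.0 K), observation 252.0 K (error 2.0 K).
xa = analysis_scalar(250.0, 252.0, 1.0, 2.0)
```

Because the background is assumed to be more accurate than the observation in this example, the analysis stays closer to the background. A full three-dimensional analysis replaces the two scalar weights with error covariance matrices and the direct comparison with a forward model.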

The framework established by the three-dimensional variational data assimilation system is applicable to many geophysical measurements relevant to the atmosphere. This requires the development of a forward model to go from model variables to observed variables (bending angles or index of refraction in the case of the radio-occultation GPS data). In addition, we need to create the linear tangent model for the forward model (i.e., a perturbation model that indicates how much the observed variables will change if a small change is introduced in the model variables), and the adjoint of the linear tangent model, which transforms observed perturbations to model perturbations. The forward model and its linear tangent and adjoint should be accurate and, if possible, computationally efficient.
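The three ingredients named above can be sketched for a single level of refractivity. The example assumes the widely used two-term refractivity formula with constants 77.6 and 3.73 × 10^5 (pressure and vapor pressure in hPa, temperature in K); the function names are hypothetical, and an operational forward model would also handle vertical interpolation and, for bending angles, ray geometry.

```python
K1 = 77.6     # K/hPa, dry-term constant (assumed standard value)
K2 = 3.73e5   # K^2/hPa, moist-term constant (assumed standard value)


def forward(p, t, e):
    """Forward model: refractivity from pressure p (hPa), temperature t (K)
    and water vapor pressure e (hPa)."""
    return K1 * p / t + K2 * e / t ** 2


def _jacobian(p, t, e):
    """Partial derivatives of refractivity with respect to t and e (p held fixed)."""
    dn_dt = -K1 * p / t ** 2 - 2.0 * K2 * e / t ** 3
    dn_de = K2 / t ** 2
    return dn_dt, dn_de


def tangent_linear(p, t, e, dt, de):
    """Linearized change in refractivity for small perturbations dt and de."""
    dn_dt, dn_de = _jacobian(p, t, e)
    return dn_dt * dt + dn_de * de


def adjoint(p, t, e, dn_hat):
    """Transpose of tangent_linear: maps a refractivity-space value back to (t, e) space."""
    dn_dt, dn_de = _jacobian(p, t, e)
    return dn_dt * dn_hat, dn_de * dn_hat
```

Two standard checks apply to code of this kind: the tangent linear output should match a finite difference of the forward model for small perturbations, and the inner product of `tangent_linear(dx)` with any `dn_hat` should equal the inner product of `dx` with `adjoint(dn_hat)`.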

In the case of the radio-occultation technique using GPS, the measurements are actually of the signal delay due to the refraction of the atmosphere in the transmission of a radio signal from a GPS satellite to some point at a known distance from the satellite. By geometric considerations, this delay can be transformed to a “bending angle”, which is proportional to the refractivity of the atmosphere. In turn, the refractivity is a function of temperature, humidity, and, at a given altitude, pressure. Pressure can be determined hydrostatically, so given some external knowledge of the moisture distribution one can calculate temperature as a function of pressure; or, given some external knowledge of temperature, the moisture distribution can be determined from refractivity measurements.

However, it is extremely important to note that in modern data assimilation systems, the refractivity may be used directly without decomposition into temperature and moisture distributions. A very suitable framework thus exists to use refractivity information from the radio occultation technique.

Similarly, rather than assimilating precipitable water vapor estimates from delays observed in surface receiving stations, it would be preferable to assimilate the observed time delays themselves. This would make maximum use of the GPS data by improving upon an already accurate model first guess of temperature and moisture.

The most important deficiency of the present observing system is most probably not the accuracy of the mass field, but rather the absence of wind profiles over the oceans. GPS/MET data will influence that only indirectly in the extratropics, and not at all in the tropics.

It does appear possible that GPS/MET observations based on radio occultation techniques can improve the description of the distribution of moisture. NCEP modelers are eager to acquire the “forward model” to convert temperature and moisture profiles to refractivity from colleagues at NCAR, and to begin experimenting with the GPS/MET data in the operational data assimilation system.

There is considerable evidence that the mass distribution in the atmosphere is fairly well measured and the recent advances in data assimilation noted above are making better use of that information. There is, therefore, limited room for GPS/MET observations to improve the current description of the atmospheric mass (temperature) field. GPS/MET observations may have precision, but experience clearly suggests that the addition of GPS/MET data is not likely to have a major impact on forecasting four or five days in advance.

However, if GPS/MET can provide as good a description of the temperature distribution as current systems, but at a significantly lower cost, then this would be an extremely valuable contribution to the North American Observing System.

Richard Anthes and William Schreiner

University Corporation for Atmospheric Research

Michael Exner, Douglas Hunt, Randolph Ware

University NAVSTAR Consortium

Ying-Hwa Kuo and Xiaolei Zou

National Center for Atmospheric Research

Sergey Sokolovskiy

Russian Institute of Atmospheric Physics

INTRODUCTION

On 3 April 1995, a Pegasus rocket carried aloft by an aircraft from Vandenberg Air Force Base launched a small satellite (MicroLab 1) into a circular orbit of about 750-km altitude and 70° inclination. The disk-shaped satellite, which circles Earth every 100 minutes, carried a laptop-sized Global Positioning System (GPS) receiver to demonstrate sensing of the terrestrial atmosphere by the radio occultation, or limb-sounding, technique. This proof-of-concept experiment is called GPS/Meteorology (GPS/MET). Since the 3 April launch, many thousands of atmospheric soundings of refractivity, temperature, pressure and water vapor have been retrieved. Some of the early results of the GPS/MET experiment are described by Ware et al. (1996) and Kursinski et al. (1996). This paper summarizes recent progress toward obtaining accurate atmospheric soundings of temperature and water vapor and the potential uses of GPS/MET data in atmospheric and climate research and weather prediction.

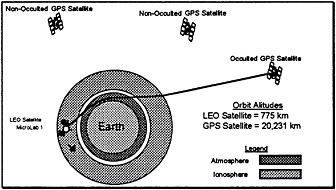

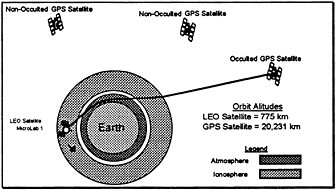

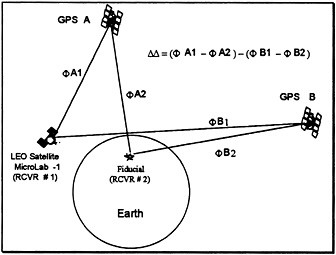

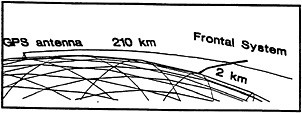

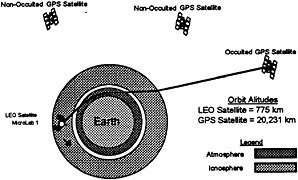

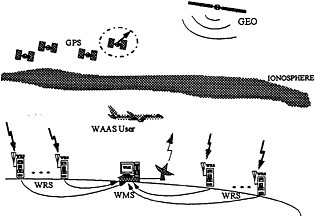

The radio occultation method used in GPS/MET was developed by scientists at the Jet Propulsion Laboratory (JPL) and used by scientists at Stanford University to measure the structure of planetary atmospheres (please see detailed references in Ware et al., 1996). In the GPS limb sounding method (Fig. 1), atmospheric soundings are retrieved from observations obtained when the radio path between a GPS satellite and a GPS receiver in low-Earth orbit (LEO) traverse's Earth's atmosphere. When the path of the GPS signal begins to transect the mesopause at about 85-km altitude, it is sufficiently

FIGURE 1 Schematic of radio occultation technique (not to scale)

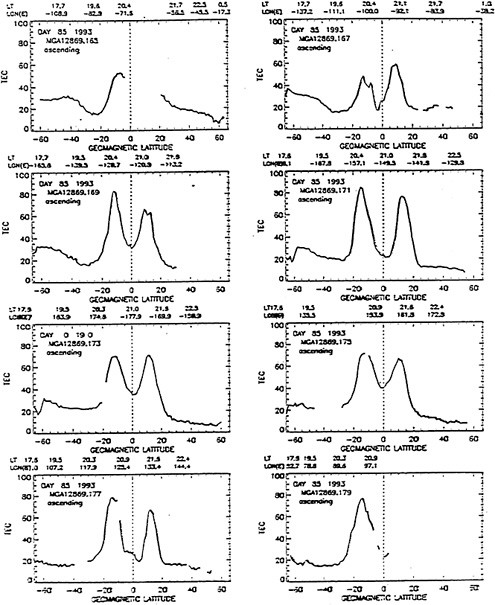

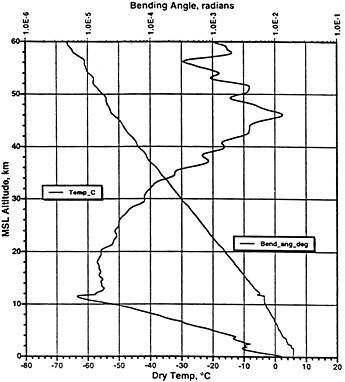

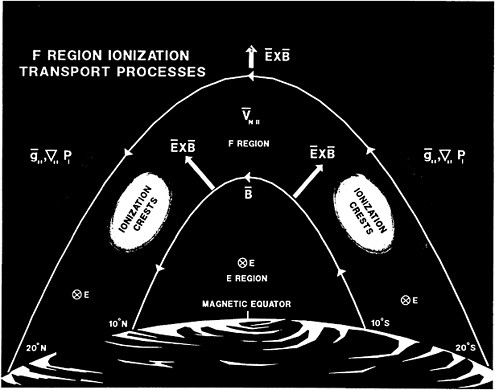

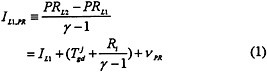

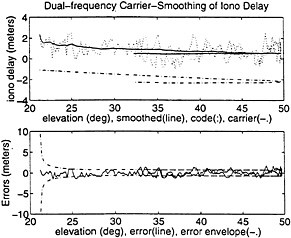

retarded by the atmosphere that a detectable delay in the dual-frequency carrier phase is observed by the LEO GPS receiver. As the radio waves are slowed by the atmosphere, they bend by a small but observable angle, which reaches a maximum value of between 0.02 and 0.03 radians near the Earth's surface (Fig. 2). Vertical profiles of atmospheric refractivity can be computed from the bending angle. The atmospheric refractivity, N, depends on pressure (P), temperature (T) and water vapor pressure (e) according to

(1)

Pressure is related hydrostatically to density, which is a function of temperature and water vapor. Thus refractivity is essentially a function of temperature and water vapor. Without knowing either temperature or water vapor, neither can be determined from refractivity alone in the general case. However, in regions of the atmosphere in which water vapor content is small and its contribution to refractivity negligible compared to that of temperature, accurate temperature profiles can be determined by assuming e=0 in (1). This approximation holds well in the upper troposphere, stratosphere, polar regions, and anywhere else where the temperatures are lower than 250 K.
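The dry retrieval described above can be sketched as a downward hydrostatic integration. The example assumes the standard refractivity constant 77.6 K/hPa, the ideal gas law for dry air, constant gravity, and a known pressure at the top level; the function name `dry_temperature_profile` and the synthetic isothermal profile are hypothetical and serve only as a self-check, not as the GPS/MET processing algorithm.

```python
import math

K1 = 77.6        # K/hPa, dry refractivity constant (assumed standard value)
R_DRY = 287.05   # J/(kg K), gas constant of dry air
G = 9.80665      # m/s^2, gravity treated as constant (simplification)


def dry_temperature_profile(z, n, p_top):
    """Dry temperature (K) at heights z (m, ascending) from refractivity n,
    given the pressure p_top (hPa) at the highest level."""
    # With e = 0, N = K1*P/T; combined with the gas law this gives
    # density rho = 100*N/(K1*R_DRY) in kg/m^3 (factor 100 converts hPa to Pa).
    rho = [100.0 * ni / (K1 * R_DRY) for ni in n]
    p = [0.0] * len(z)
    p[-1] = p_top
    # Integrate dP/dz = -g*rho downward with the trapezoidal rule.
    for i in range(len(z) - 2, -1, -1):
        dp_pa = G * 0.5 * (rho[i] + rho[i + 1]) * (z[i + 1] - z[i])
        p[i] = p[i + 1] + dp_pa / 100.0
    return [K1 * pi / ni for pi, ni in zip(p, n)]


# Synthetic self-check: an isothermal 220 K layer between 10 and 30 km (hypothetical values).
T0, P0, Z0 = 220.0, 300.0, 10000.0
H = R_DRY * T0 / G  # pressure scale height in metres
z = [Z0 + 100.0 * k for k in range(201)]
p_true = [P0 * math.exp(-(zi - Z0) / H) for zi in z]
n = [K1 * pi / T0 for pi in p_true]
t_dry = dry_temperature_profile(z, n, p_true[-1])
```

In this dry synthetic case the recovered temperatures should stay close to the 220 K used to build the profile; where water vapor is present, the same procedure yields the cold-biased “dry temperature”.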

FIGURE 2 Bending angle and temperature profiles for GPS/MET retrieval at 17:24 UTC 21 October 1995. The location is 47°S 59°W.

In the general case, if either temperature or water vapor is known from independent measurements or analyses (such as the global analyses prepared daily by the operational weather centers of the world), the other variable can be obtained from the refractivity. Thus, if water vapor pressure is known independently, temperature can be computed from

$$T = \frac{77.6\,P + \sqrt{(77.6\,P)^{2} + 4N\,(3.73\times10^{5})\,e}}{2N} \qquad (2)$$

or, if temperature is known, water vapor pressure may be computed from

$$e = \frac{N\,T^{2} - 77.6\,P\,T}{3.73\times10^{5}} \qquad (3)$$
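A short numerical sketch of the two inversions follows. It assumes the standard two-term refractivity formula with constants 77.6 and 3.73 × 10^5 (pressure and vapor pressure in hPa, temperature in K); the function names and the sample values are hypothetical.

```python
import math

K1 = 77.6     # K/hPa (assumed standard value)
K2 = 3.73e5   # K^2/hPa (assumed standard value)


def refractivity(p, t, e):
    """Refractivity from pressure (hPa), temperature (K) and vapor pressure (hPa)."""
    return K1 * p / t + K2 * e / t ** 2


def temperature_from_refractivity(n, p, e):
    """Temperature given refractivity, pressure and an independent vapor pressure:
    the positive root of n*T^2 - K1*p*T - K2*e = 0."""
    b = K1 * p
    return (b + math.sqrt(b * b + 4.0 * n * K2 * e)) / (2.0 * n)


def vapor_pressure_from_refractivity(n, p, t):
    """Vapor pressure given refractivity, pressure and an independent temperature."""
    return (n * t * t - K1 * p * t) / K2


# Hypothetical moist lower-tropospheric point.
p, t_true, e_true = 850.0, 285.0, 10.0
n = refractivity(p, t_true, e_true)
t_wet = temperature_from_refractivity(n, p, e_true)    # uses the known vapor pressure
e_ret = vapor_pressure_from_refractivity(n, p, t_true)  # uses the known temperature
t_dry = temperature_from_refractivity(n, p, 0.0)        # ignores water vapor
```

In this example `t_dry` comes out well below `t_true`, which is the cold bias of the “dry temperature” in moist air, while `t_wet` and `e_ret` recover the values used to build the refractivity.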

It is very important to note that for several important applications of GPS/MET, it is not necessary, or perhaps even desirable, to try to separate the temperature and water vapor effects. For example, trends of globally or regionally averaged atmospheric refractivity would be a good measure of global or regional climate change (Yuan et al., 1993). For operational numerical weather prediction, it is possible to assimilate refractivity measurements directly into the model. The assimilation of refractivity causes the model fields of temperature, pressure and winds to adjust in a dynamically and thermodynamically consistent way (Zou et al., 1995; Kuo et al., 1996).

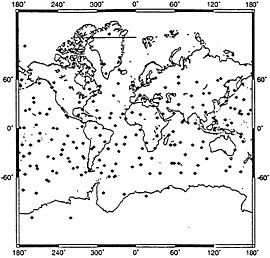

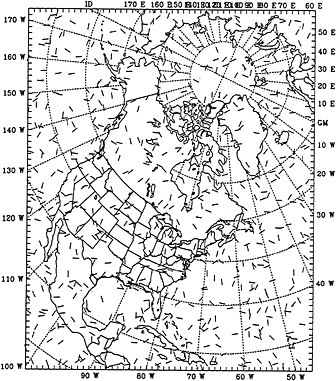

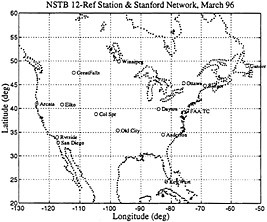

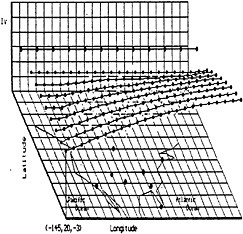

GPS/MET observations provide essentially global coverage with random spacing in the horizontal. A single GPS/MET satellite could theoretically produce approximately 500 soundings per day. Fig. 3 shows the soundings obtained on 21 October 1995 by the GPS/MET experiment; the number is significantly less than 500 because only setting occultations are obtained. With 12 (50) LEO satellites in orbit simultaneously, global atmospheric refractivity soundings at a horizontal resolution of approximately 400 km (200 km) can be expected every 12 hours.
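The quoted resolutions can be checked with a rough calculation. The sketch assumes that each satellite delivers the theoretical 500 soundings per day, that soundings are spread uniformly over the globe, and that the mean spacing is the square root of the area per sounding; these assumptions and the function name are illustrative and are not stated in the text.

```python
import math

EARTH_RADIUS_KM = 6371.0
SOUNDINGS_PER_SAT_PER_DAY = 500  # theoretical figure quoted in the text


def mean_spacing_km(n_satellites, window_hours=12.0):
    """Approximate mean horizontal spacing of soundings collected in one time window."""
    soundings = n_satellites * SOUNDINGS_PER_SAT_PER_DAY * window_hours / 24.0
    area_km2 = 4.0 * math.pi * EARTH_RADIUS_KM ** 2
    return math.sqrt(area_km2 / soundings)


spacing_12 = mean_spacing_km(12)
spacing_50 = mean_spacing_km(50)
```

Under these assumptions the spacings come out near 400 km for 12 satellites and near 200 km for 50 satellites, in line with the figures given above.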

FIGURE 3 Distribution of GPS/MET soundings on 21 October 1995.

Fig. 2 shows the retrieval of atmospheric bending angle and the temperature, derived with the assumption that water vapor is zero in (2). We call this the “dry temperature.” Because water vapor is a positive contribution to the computed temperature in (2), the “dry temperatures” generally show a significant cold bias in the lower troposphere. Fig. 2 indicates that the bending angle varies by more than three orders of magnitude from 60 km altitude to the surface. The temperature profile shows several interesting characteristics. The very sharp tropopause at around 12 km is characteristic of many GPS/MET temperature retrievals. It is in a region of the atmosphere where water vapor effects are negligible and the theoretical accuracy of the GPS/MET radio occultation method is highest (better than 1 K). Thus the high vertical resolution and accuracy of the temperature in this region suggest that GPS/MET observations will be very useful in upper-tropospheric and lower-stratospheric research, including monitoring of climate change. Models predict a strong atmospheric response (cooling) in this region due to the enhanced greenhouse effect, and GPS/MET observations should be very useful in detecting any global or regional trends in this sensitive part of the atmosphere.

The vertical wave structure in the temperature profile of Fig. 2 is present in most of the GPS/MET temperature retrievals. In the lower stratosphere the features are almost certainly real, and associated with gravity waves. In the upper stratosphere (above 40 km), both errors in the retrieval and real atmospheric variability likely contribute to the wave structure. It is very difficult to verify the waves shown in Fig. 2 because other remote sensing systems in the stratosphere, such as HALOE (Halogen Occultation Experiment) and MLS (Microwave Limb Sounder) have inherently much lower vertical resolution than the GPS/MET technique. However, we know from rocket soundings and the LIMS (Limb Infrared Monitor of the Stratosphere) experiment that wavelike features with characteristics similar to those shown in Fig. 2 are ubiquitous in the stratosphere. For example, Fetzer and Gille (1994) state “The LIMS data are characterized by high vertical resolution, and often contain small scale peak-to-peak amplitudes as large as 40 K. These signals have dominant vertical wavelengths of about 10 km and horizontal wavelengths of about 1000 km.”

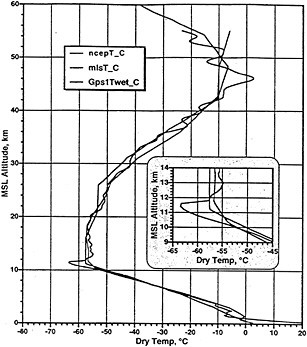

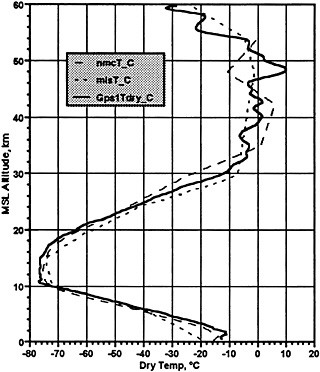

Fig. 4 shows a comparison of a GPS/MET temperature retrieval with a retrieval from the MLS and the global analysis of temperature from the National Centers for Environmental Prediction (NCEP). Both the MLS and NCEP soundings have much coarser vertical resolution in the upper troposphere and stratosphere, so they do not show the sharp tropopause feature or the vertical waves that are observed in the GPS/MET sounding. However, the large-scale characteristics of the three soundings are similar, even in the upper part of the stratosphere (40-55 km).

FIGURE 4 Same temperature sounding shown in Fig. 2 compared to MLS and NCEP temperature soundings.

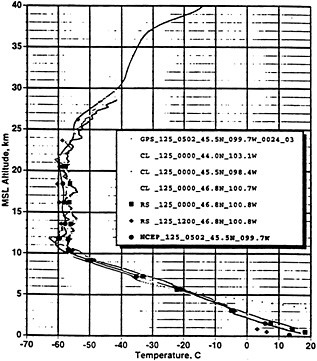

We have compared many GPS/MET temperature retrievals with nearby radiosondes. Fig. 5 shows a typical example of a dry temperature retrieval, from 5 May 1995. The high vertical resolution and the accuracy of the GPS/MET temperature profile in the layer from about 5 km to 35 km are confirmed by the nearby radiosonde measurements.

FIGURE 5 GPS/MET “dry” temperature sounding at 0502 UTC 5 May 1995 at 45.5°N 99.7°W compared to nearby balloon soundings.

The lower portion of the GPS/MET temperature sounding in Fig. 5, indicated by the dotted line beginning at about 6.5 km and extending toward higher temperatures to about 3.5 km, is in error and represents typical behavior of the temperature retrievals in the lower several kilometers of the atmosphere. Decreasing signal-to-noise ratio, the presence of increasing amounts of water vapor, and possible strong low-level temperature gradients cause multi-path effects and other errors. The manifestation of these errors is usually a sudden increase in the retrieved temperatures with decreasing elevation, which is easily recognized as an erroneous result (a fact useful in quality control of the data).
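One way the sudden warming toward the surface could be used in quality control is sketched below. The function name and the threshold of 15 K per km are hypothetical choices made for illustration (the threshold simply exceeds the dry adiabatic lapse rate of roughly 10 K per km); this is not the check used in the GPS/MET processing.

```python
def lowest_good_height(z, t, max_lapse_k_per_km=15.0):
    """Scan a retrieved profile downward and return the height (m) of the lowest
    level reached before temperature rises toward the surface faster than
    max_lapse_k_per_km. z must be ascending (m); t is temperature (K)."""
    for i in range(len(z) - 1, 0, -1):
        dz_km = (z[i] - z[i - 1]) / 1000.0
        lapse = (t[i - 1] - t[i]) / dz_km  # positive when the lower level is warmer
        if lapse > max_lapse_k_per_km:
            return z[i]
    return z[0]


# Synthetic profile (hypothetical): 6.5 K/km above 4 km, erroneous 30 K/km warming below.
z = [500.0 * k for k in range(21)]  # 0 to 10 km
t = [
    250.0 + 6.5 * (8.0 - zi / 1000.0) if zi >= 4000.0
    else 276.0 + 30.0 * (4.0 - zi / 1000.0)
    for zi in z
]
cutoff = lowest_good_height(z, t)
```

For this synthetic profile the scan stops at the 4-km level, where the unrealistic warming begins. Stratospheric inversions are not flagged, because there the temperature decreases toward the surface.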

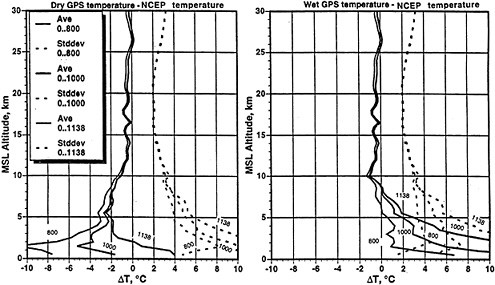

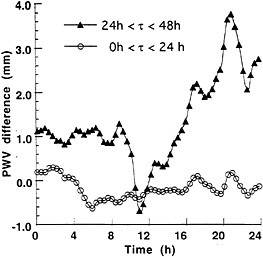

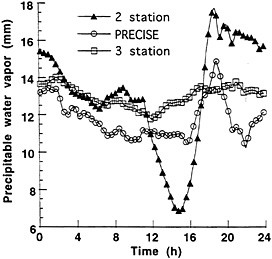

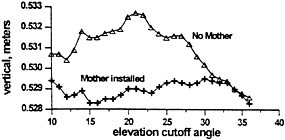

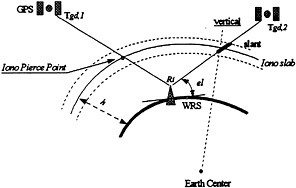

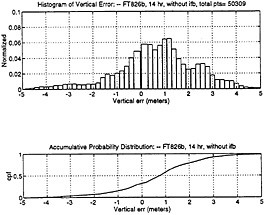

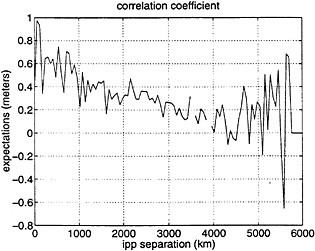

Fig. 6 shows a comparison of “dry” and “wet” GPS/MET temperature retrievals with the NCEP analyzed temperature for the complete set of 1138 soundings and for subsets of 1000 and 800 soundings. The “wet” temperature retrievals refer to temperatures computed from (2) with water vapor pressure obtained from the NCEP analysis. The sets of soundings are determined as follows. The set containing 1138 soundings represents the total number of soundings processed during the period 10-25 October 1995. The 1000- and 800-sounding sets represent the subsets of soundings which give the smallest mean and standard deviation differences from the NCEP data. In other words, the set of 1000 soundings was obtained by eliminating the “worst” 138 retrieved soundings out of the total set.
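The subsetting described above can be sketched as a ranking of soundings by their departure from the analysis. The scoring rule used here (absolute mean difference plus standard deviation of the differences) is an assumption, since the text does not say how the two statistics were combined; the function names and sample data are hypothetical.

```python
import statistics


def sounding_score(diffs):
    """Score one sounding from its retrieved-minus-analysis temperature differences (K).
    Assumed rule: absolute mean difference plus standard deviation."""
    return abs(statistics.fmean(diffs)) + statistics.pstdev(diffs)


def keep_best(soundings, n_keep):
    """Return the ids of the n_keep soundings with the lowest scores.
    soundings maps a sounding id to its list of differences."""
    ranked = sorted(soundings, key=lambda sid: sounding_score(soundings[sid]))
    return ranked[:n_keep]


# Hypothetical differences for three soundings; "b" shows a large warm bias.
sample = {
    "a": [0.1, -0.2, 0.3],
    "b": [5.0, 6.0, 7.0],
    "c": [0.5, 0.4, 0.6],
}
best_two = keep_best(sample, 2)
```

Applied to the full data set, `keep_best(all_soundings, 1000)` would drop the 138 soundings with the largest scores under this assumed rule.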

The profiles in Fig. 6 demonstrate the ensemble effect of the “warm bias” errors in the lower troposphere that was seen in the single example of Fig. 5. Elimination of the “worst” retrievals removes those soundings which develop the warm bias error at the highest elevations. Thus the subset of the 800 “best” cases shows the ensemble warm bias beginning at a level around 5 km while the total set shows the bias beginning around 9 km. It is noteworthy that there is no significant difference between the three sets above 10 km, indicating that the retrieved soundings are very robust and stable in this region.

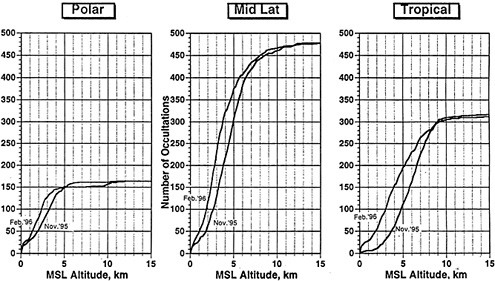

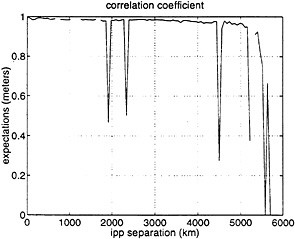

Fig. 7 shows a comparison of 1000 “dry” and “wet” temperature retrievals with NCEP analyzed temperatures, categorized by polar, middle latitude and tropical regions. The “good” soundings reach closest to the surface in the polar regions (about 2-5 km), while the “good” soundings in the tropics typically reach to only about 9 km, a reflection of the fact that the lower troposphere in the tropics contains much more moisture than in the middle latitudes or polar regions.

Fig. 8 quantifies the number of retrieved “good” soundings which reach specified altitudes, again grouped into polar, middle latitude and tropical regions. The results from two retrieval algorithms are shown, the original algorithm and an improved algorithm. In tropical regions, the number of “good” soundings starts decreasing rapidly at the 9 km level, while for the middle latitude and polar regions the levels at which the number of “good” soundings begin to decrease rapidly are approximately 8 and 5 km respectively. The increase in the number of low-level “good” soundings due to the improved algorithm is apparent; for example, the number reaching the 5-km level approximately doubles from 100 to 200 in the tropical regions. This indicates that with adjustments in the instrumentation, antenna, and other aspects of the hardware, together with further improvement in the software, a significant improvement in the capability of GPS/MET to successfully sound the lower part of the atmosphere is possible. As will be shown later, it is very important in numerical weather prediction to obtain accurate refractivity profiles as close to the surface as possible.

FIGURE 8 Number of GPS/MET retrievals reaching a given altitude using old (November 1995) and improved (February 1996) retrieval algorithms.

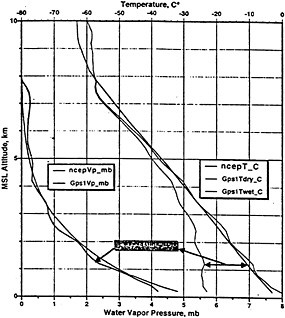

Fig. 9 illustrates the ability to use the measured refractivity to compute temperature given an independent estimate of water vapor using (2) or, alternatively, to compute water vapor pressure given an independent estimate of temperature from (3). In this example the independent estimates of temperature and water vapor are obtained from the NCEP analyses. We note that because the analysis and short-term global forecasts of temperatures are much more accurate than those of water vapor, it is likely that it will be more useful to derive water vapor from refractivity and an independent estimate of temperature than vice versa. It is also noteworthy that water vapor retrievals of the accuracy shown in Fig. 9 on a global basis would be extremely useful for research and operational purposes.

FIGURE 9 Example of temperature and water vapor retrieval using observed refractivity and either temperature or water vapor from the NCEP analysis as additional data.

One of the greatest potential applications of GPS/MET data is in operational numerical weather prediction. Advantages of GPS/MET data for this purpose include global coverage in all weather (GPS/MET retrievals are not affected by clouds), high accuracy and high vertical resolution. Because GPS/MET data will occur at different spatial locations and at different times over the Earth, the best way to use the data will be through four-dimensional variational data assimilation (4DVAR). The 4DVAR technique is described by Zou et al. (1995). In this section we present a brief summary of the potential impact of assimilating GPS/MET data in a numerical model (Kuo et al., 1996).

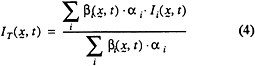

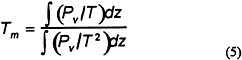

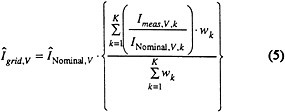

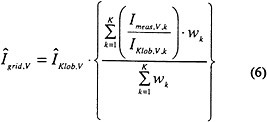

In the 4DVAR technique, simulated atmospheric refractivity data are assimilated into the model during a six-hour period using an iterative process. The objective of the process is to minimize a “cost function” defined by

where x represents the model-predicted variables (in this case temperature, pressure and water vapor), N(x) is the model's value of refractivity and $N_o$ is the observed value. Starting from an initial guess field $x_0^{(0)}$, the minimization iteratively finds a better initial condition $x_0^{(k)}$ which satisfies

$$J\left(x_0^{(k)}\right) \le J\left(x_0^{(k-1)}\right), \qquad (5)$$

where k is the iteration number. During the iteration process all variables in the model, including pressure and winds, adjust in response to the changing temperature and water vapor fields.
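The monotone decrease required by (5) can be illustrated with a toy minimization at a single point. The sketch assumes a cost function equal to the squared refractivity misfit, the standard two-term refractivity formula, and plain gradient descent with step halving; the real 4DVAR system uses the full model, its adjoint, and a more capable minimizer, and all names and values here are hypothetical.

```python
K1 = 77.6     # K/hPa (assumed standard value)
K2 = 3.73e5   # K^2/hPa (assumed standard value)


def forward(p, t, e):
    """Refractivity from pressure (hPa), temperature (K), vapor pressure (hPa)."""
    return K1 * p / t + K2 * e / t ** 2


def cost(p, t, e, n_obs):
    """Assumed cost function: squared misfit between modeled and observed refractivity."""
    return (forward(p, t, e) - n_obs) ** 2


def gradient(p, t, e, n_obs):
    """Gradient of cost() with respect to (t, e): the adjoint applied to the misfit."""
    r = forward(p, t, e) - n_obs
    dn_dt = -K1 * p / t ** 2 - 2.0 * K2 * e / t ** 3
    dn_de = K2 / t ** 2
    return 2.0 * r * dn_dt, 2.0 * r * dn_de


def minimize(p, n_obs, t0, e0, n_iter=50):
    """Gradient descent with step halving so that the cost never increases."""
    t, e = t0, e0
    history = [cost(p, t, e, n_obs)]
    step = 1.0
    for _ in range(n_iter):
        gt, ge = gradient(p, t, e, n_obs)
        while step > 1e-12:
            t_new, e_new = t - step * gt, e - step * ge
            j_new = cost(p, t_new, e_new, n_obs)
            if j_new <= history[-1]:
                break
            step *= 0.5
        else:
            break  # no acceptable step was found
        t, e = t_new, e_new
        history.append(j_new)
    return t, e, history


# Hypothetical "truth" (270 K, 4 hPa) observed at 700 hPa; first guess (268 K, 3 hPa).
n_obs = forward(700.0, 270.0, 4.0)
t_a, e_a, history = minimize(700.0, n_obs, 268.0, 3.0)
```

The cost history is non-increasing, as in (5). The fit to the observation becomes very close, yet a single refractivity value cannot fix temperature and water vapor separately; in the full system the model dynamics and the many observations in the assimilation window supply the additional constraints.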

The case selected for the 4DVAR study was one of intense cyclogenesis over the Northwestern Atlantic Ocean on 4-5 January 1989. This storm was the most intense storm ever observed in this region, with an estimated pressure of 936 mb at 0000 UTC 5 January. The storm started as a 996-mb low off Cape Hatteras, NC, embedded within a broad baroclinic zone with moderate thermal gradient. During the following 24 hours, with the approach of an intense upper-level trough, the storm intensified rapidly over the warm Gulf Stream.

In order to simulate refractivity observations, we first conducted a control simulation with a version of the Penn State/NCAR mesoscale model version 5 (MM5). The horizontal resolution of this model was 90 km and there were 20 levels in the vertical. This simulation was initialized at 0000 UTC 3 January 1989 (defined as t = −12 h) and was integrated for 60 hours. It covered the northern hemisphere with a mesh of 197 × 197 × 20. The initial conditions were obtained from conventional observations using the NCEP global analysis as the first guess. The lateral boundary conditions were obtained by linear interpolation of these analyses over 12-h intervals.

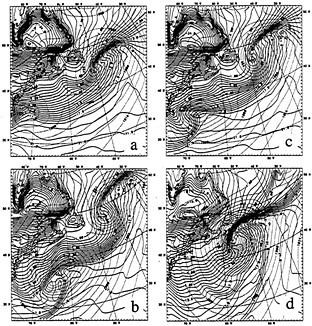

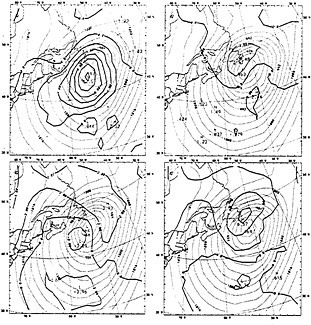

FIGURE 10 Sea-level pressure (4 mb contour interval) and surface temperature (1°C contour interval) for control simulation

The control simulation reproduced the observed storm quite well (Kuo et al., 1996). Fig. 10 shows the control model's simulation of sea-level pressure and surface temperature for four time periods beginning with 0600 UTC 4 January to 1200 UTC 5 January, which are 30-h, 36-h, 42-h and 60-h forecasts respectively. During this time period the model storm deepened from a central pressure of 987 mb to an intense storm with central pressure of 938 mb, which compared very well with the observed minimum pressure of 936 mb. Other features of the simulation were realistic as well, and thus we felt confident in extracting model data from the control simulation and constructing simulated refractivity data from these model data for use in subsequent numerical experiments.

To investigate the potential impact of refractivity data on subsequent model forecasts, we degraded the control model data at 1200 UTC 3 January 1989 (t=0) and then assimilated simulated refractivity data from the control simulation over a 6-h period from 1200 to 1800 UTC 3 January over the region shown in Fig. 11. We tested the impact of the simulated refractivity observations by running five 48-h simulations beginning at 1200 UTC 3 January and ending 1200 UTC 5 January 1989. To help avoid the “identical twin” problem, in which overly optimistic results are obtained when the identical model is used for both generating the observations and running subsequent forecasts to test the impact of the observations, we used a degraded version of MM5 in the assimilation experiments. In this version the horizontal resolution was 180 km, the number of vertical layers was 10 rather than 20, and the model domain consisted of the region shown in Fig. 11 rather than the full Northern Hemisphere domain of the control simulation.

In Experiment 1, no refractivity data are used; the model is initialized with the degraded initial conditions at t=0. In Exp. 2, refractivity data are assumed available during the 6-h assimilation period at evenly spaced gridpoints 180 km apart. In Experiments 3 and 4, the refractivity data were assumed to be spaced in a random fashion with mean separations of 180 km and 360 km. Fig. 11 shows the location and orientation of the 360 km spaced observations. In Exps. 2-4, refractivity data were assumed available at all model levels, including the lower troposphere. Because obtaining accurate refractivity observations in the lower troposphere on a regular basis is still problematic, as discussed above, we performed Exp. 5 in which refractivity data are assumed available only above 3 km.

FIGURE 11 Randomly distributed and oriented simulated observations of refractivity with a mean spacing of 360 km.

The results show that the assimilation of refractivity data over the six-hour period improves the forecasts in all cases. Fig. 12 shows the 48-h forecasts of sea-level pressure for Exps. 1-4. Also shown are the errors in the sea-level pressure. Although all four simulations show significant errors compared to the control simulation (because of the degraded model used to make the forecasts), the forecasts which assimilate refractivity data are clearly better forecasts. Other aspects of the forecast also show improvement in the experiments which use the refractivity data (Kuo et al., 1996).

It is noteworthy that the forecast which assimilates refractivity observations on the regular 180-km grid and the one that assimilates observations in a random fashion show quite similar improvements; thus it is not necessary that refractivity observations be co-located with the model's grid points in order to have a significant positive impact. It is also important to note that eliminating the refractivity observations below 3 km has a significant negative impact.

FIGURE 12 48-h forecasts of sea-level pressure (dotted lines, contour interval 4 mb) and errors in SLP (solid lines, contour interval 2 mb) for Exp. 1 (upper left), Exp. 2 (upper right), Exp. 3 (lower left) and Exp. 4 (lower right).

By analyzing the results of these experiments, Kuo et al. (1996) found that the assimilation of refractivity observations during the six-hour period causes the initial values of temperature, water vapor, winds and pressure to adjust in a dynamically consistent way to give an improved forecast. In particular, the definition of the initial upper-level disturbance that triggered the low-level development off Cape Hatteras, and of the low-level thermal field off the North Carolina coast, was improved by the assimilation of refractivity data. The importance of having observations of refractivity in the lowest 3 kilometers of the atmosphere was shown by a significant degradation of the forecast when these data were withheld.

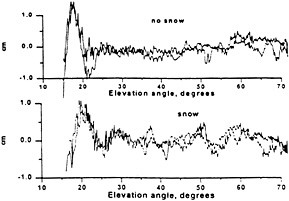

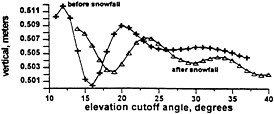

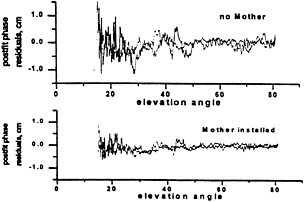

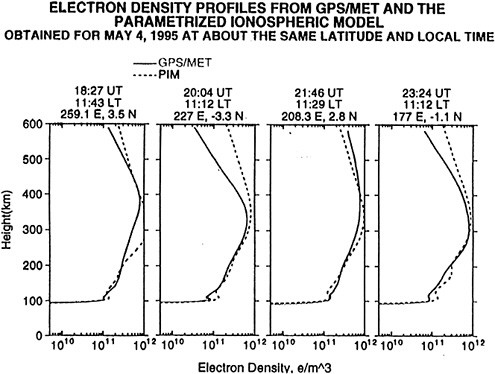

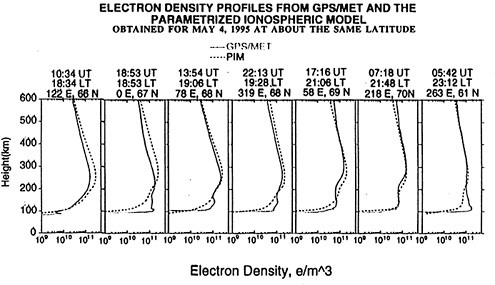

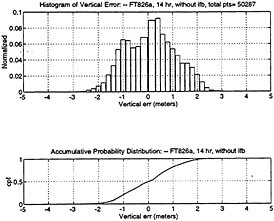

Active limb sounding of the atmosphere using Global Positioning System (GPS) radio signals received in low Earth orbit has been demonstrated by the GPS Meteorology (GPS/MET) instrument on the MicroLab-1 satellite launched in April 1995. In this paper the latest, improved temperature, water vapor and refractivity profiles obtained from GPS/MET are compared with radiosonde data, operational gridded analyses from the National Centers for Environmental Prediction (NCEP) and other satellite data. Both individual soundings and statistics for more than 1000 soundings distributed globally are presented.

Accurate vertical profiles of refractivity are consistently obtained from approximately 30 km altitude to approximately 7 km altitude. Below 7 km, where multi-path effects and other sources of error are large, there are increasing difficulties in obtaining accurate profiles of refractivity. These difficulties are not thought to be fundamental to the technique, and efforts are underway to address them. Recent improvements in data processing have resulted in profiles up to 60 km which appear realistic when compared to HALOE and MLS data from the UARS.

Refractivity is a function of both temperature and water vapor. The GPS/MET temperature soundings agree closely with independent sources of data from approximately 30 km to 7 km, where water vapor has a negligible effect. In this layer the mean differences between the GPS/MET temperatures and the other sources of data are approximately 1°C. The standard deviation of temperature differences in this layer ranges from 2°C to 3°C. The GPS/MET temperature profiles show vertical resolutions of about 1 km and resolve the location and minimum temperature of the tropopause very well.

Below 7 km, various sources of error produce an increasing number of erroneous soundings. However, under ideal conditions, accurate soundings have been obtained down to the surface. Also, under ideal conditions, it has been possible to calculate the atmospheric water vapor pressure from the GPS/MET refractivity and an independent estimate of temperature.
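The dependence of refractivity on temperature and water vapor described above can be sketched numerically. The example below is a minimal illustration, not the GPS/MET retrieval itself: it assumes the widely used two-term Smith-Weintraub form, N = 77.6 P/T + 3.73e5 e/T², whose coefficients are not given in this paper, and it treats pressure as known, which is a simplifying assumption. The function names are hypothetical.

```python
# Assumed constants: Smith-Weintraub coefficients (not stated in the text).
K1 = 77.6      # K / hPa, dry term
K2 = 3.73e5    # K^2 / hPa, water vapor term


def refractivity(pressure_hpa, temperature_k, vapor_pressure_hpa=0.0):
    """Refractivity N (N-units) from pressure, temperature and vapor pressure."""
    dry = K1 * pressure_hpa / temperature_k
    wet = K2 * vapor_pressure_hpa / temperature_k ** 2
    return dry + wet


def vapor_pressure_from_refractivity(n_units, pressure_hpa, temperature_k):
    """Invert the relation for water vapor pressure (hPa), given an
    independent temperature estimate (and, by assumption here, pressure)."""
    dry = K1 * pressure_hpa / temperature_k
    return (n_units - dry) * temperature_k ** 2 / K2


def dry_temperature(n_units, pressure_hpa):
    """Temperature (K) where water vapor is negligible, so the wet term is ~0."""
    return K1 * pressure_hpa / n_units
```

In the dry layer only `dry_temperature` is needed, which mirrors why the temperature soundings are most reliable where water vapor is negligible; lower down, the same refractivity value is ambiguous without independent information about one of the two variables.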

In observing system simulation experiments with a high-resolution numerical model, we showed that the four-dimensional assimilation of refractivity data over a six-hour period produced a significant improvement in the subsequent forecast of a case of intense cyclogenesis. It is not necessary to separate out the effects of water vapor and temperature in order to use GPS/MET data in numerical weather prediction models. Nor is it necessary that the observations of refractivity be on a regular grid. Data assimilation studies in which randomly distributed refractivity data are assimilated directly into a model provide valuable information on both global and regional scales. Assimilating refractivity data caused dynamically consistent adjustments in the temperature, moisture and wind fields in the model's initial state.

The GPS/MET observations show strong potential for contributing to atmospheric research and weather prediction. Accurate temperature profiles in the upper troposphere and lower stratosphere distributed uniformly over the Earth would be useful in operational numerical weather prediction, global and regional climate change studies and in studies of atmospheric dynamics and chemistry.

ACKNOWLEDGMENTS

We thank Bob Corell, Dick Greenfield, Jay Fein and Mike Mayhew of the National Science Foundation for their support of the GPS/MET project.

Fetzer, E. J. and J.C. Gille, 1994: Gravity wave variance in LIMS temperature. Part I: Variability and comparison with background winds. J. Atmos. Sci., 51, 2461-2483.

Kuo, Y.-H., X. Zou and W. Huang, 1996: The impact of GPS data on the prediction of an extratropical cyclone: an observing system simulation experiment. J. Dyn. Atmos. Ocean. (submitted).

Kursinski, E.R., G.A. Hajj, W.I. Bertiger, S.S. Leroy, T.K. Meehan, L.J. Romans, J.T. Schofield, D.J. McCleese, W.G. Melbourne, C.L. Thornton, T.P. Yunck, J.R. Eyre and R.N. Nagatani, 1996: Initial results of radio occultation observations of Earth's Atmosphere Using the Global Positioning System. Science, 271, 1107-1110.

Ware, R., M. Exner, D. Feng, M. Gorbunov, K. Hardy, B. Herman, Y. Kuo, T. Meehan, W. Melbourne, C. Rocken, W. Schreiner, S. Sokolovskiy, F. Solheim, X. Zou, R. Anthes, S. Businger and K. Trenberth, 1996: GPS sounding of the atmosphere from low Earth orbit: preliminary results. Bull. Amer. Met. Soc., 77, 19-40.

Yuan, L.R., R.A. Anthes, R.H. Ware, C. Rocken, W. Bonner, M. Bevis and S. Businger, 1993: Sensing climate change using the global positioning system. J. Geophys. Res., 98(D8), 14,925-14,937.

Zou, X., Y.-H. Kuo and Y.-R. Guo, 1995: Assimilation of atmospheric refractivity using a nonhydrostatic adjoint model. Mon. Wea. Rev., 123, 2229-2249.

Michael Exner

GPS/MET Program, University Corporation for Atmospheric Research

INTRODUCTION

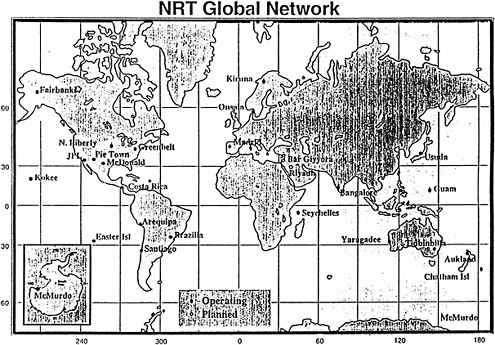

Over the last year, a new atmospheric remote sensing technology based on observations of GPS signals from space has been demonstrated by the GPS/MET Program.1 Based on preliminary results from this proof of concept program (Ware et al., 1996), it appears likely that an operational GPS/MET system may be constructed in the near future. If such a system is constructed, it will require the use of real-time “fiducial data” and precise GPS orbit solutions derived from a network of globally distributed GPS ground fiducial sites. This paper provides a brief introduction to the GPS/MET technology and early results, and a projection of the data and ground network requirements for an operational GPS/MET observing system.

Less than 2 years after program start, the GPS/MET experiment got underway on April 3, 1995, when a small research satellite, MicroLab-1 (ML-1), was launched into a circular orbit of about 750 km altitude and 70 degrees inclination.2 The disk-shaped 76 kg satellite, which circles the Earth every 100 minutes, carries an instrument which is based on a precision dual frequency GPS receiver.3 This instrument is being used to demonstrate for the first time, using GPS signals, sensing of the terrestrial atmosphere by the radio occultation method.

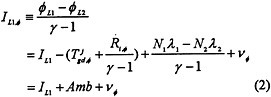

In the radio occultation method (Figure 1), atmospheric soundings are retrieved from observations obtained when the radio path between a GPS satellite and a GPS receiver in Low Earth Orbit (LEO) traverses the Earth's atmosphere (Ware et al., 1996). When the path of the GPS signal begins to transect the mesopause at about 85 km altitude, it is bent sufficiently by refraction that a detectable delay (1 mm) in the dual-frequency carrier phase observations is obtained by the LEO GPS receiver. As the occulted GPS satellite sets below the horizon as viewed from the LEO satellite, the signal path descends through successively denser layers of the atmosphere, and the delay increases to approximately 1 km at the Earth's surface. Thus, the atmosphere creates a unique signal with about 6 orders of magnitude in dynamic range. This “delay signal” can be inverted to obtain a profile of atmospheric refractivity vs. altitude, from which density, pressure, temperature, and moisture profiles can be computed under various conditions.

FIGURE 1 Radio occultation geometry.

1. The GPS/MET Program is managed by the University Corporation for Atmospheric Research (UCAR). Primary sponsorship has been provided by the National Science Foundation (NSF), with additional funding and support provided by the National Oceanic and Atmospheric Administration (NOAA), the Federal Aviation Administration (FAA) and the National Aeronautics and Space Administration (NASA).

2. ML-1 is owned and operated by Orbital Sciences Corporation (OSC). Under contract with UCAR, OSC integrated the GPS/MET payload on ML-1 and delivers GPS/MET data to UCAR's Payload Operations Control Center (POCC) via Internet.

3. The GPS/MET instrument was manufactured by Allen Osborne and Associates. The Jet Propulsion Laboratory (JPL) provided the special flight code used in the instrument and other engineering support for the program.

A single LEO GPS/MET instrument could observe more than 500 such occultation events per day (250 rising occultations and 250 setting occultations). With an appropriate LEO orbit altitude and inclination, global coverage can be obtained with roughly uniform spatial sampling density. A constellation of 20 GPS/MET microsats would be capable of providing 10,000 soundings per day distributed globally. This would give global coverage with roughly the same spatial and temporal sampling density as the US radiosonde network provides today.
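The sounding counts quoted above follow from simple arithmetic, sketched below. The per-satellite rate of 500 occultations per day comes from the text; the Earth surface area and the idea of expressing density as a mean horizontal spacing are assumptions added here for illustration, and the function names are hypothetical.

```python
import math

OCCULTATIONS_PER_SATELLITE_PER_DAY = 500  # from the text: 250 rising + 250 setting
EARTH_SURFACE_AREA_KM2 = 5.1e8            # approximate value, an assumption


def daily_soundings(n_satellites, per_satellite=OCCULTATIONS_PER_SATELLITE_PER_DAY):
    """Total soundings per day for a constellation of identical satellites."""
    return n_satellites * per_satellite


def mean_spacing_km(n_soundings, area_km2=EARTH_SURFACE_AREA_KM2):
    """Mean horizontal spacing if the soundings were spread evenly over the globe."""
    return math.sqrt(area_km2 / n_soundings)


if __name__ == "__main__":
    total = daily_soundings(20)
    print(total, "soundings per day")
    print(round(mean_spacing_km(total)), "km mean spacing over one day")
```

The spacing figure is only a rough indicator, since real occultations are not evenly distributed and the value depends on the time window over which soundings are accumulated.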

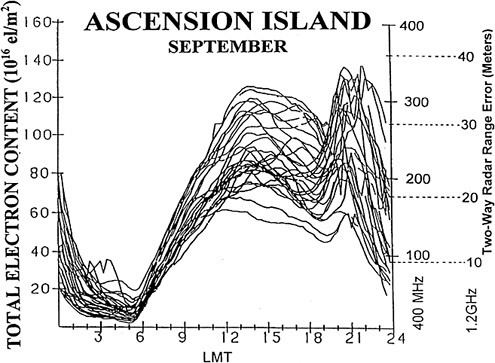

An operational GPS/MET observing system could significantly improve weather forecasts and provide valuable new data to support research on global and regional climate change (Kuo et al., 1996). The technology promises to provide valuable measurements of three important neutral atmospheric variables: temperature, pressure and water vapor. In addition to neutral atmospheric observations, the GPS/MET Program has shown that accurate, useful profiles of electron density and Total Electron Content (TEC) can be retrieved from GPS/MET observations through the ionosphere. These observations show promise for operational use within the new National Space Weather Program.4

Temperature profiles retrieved from several thousand GPS/MET “soundings” have been compared to radiosonde data, profiles from other satellite remote sensing instruments and analyses obtained from operational weather prediction centers, such as the National Centers for Environmental Prediction (NCEP). These comparisons have generally shown that GPS/MET temperature profiles agree within 1-2 K from about 5 to 40 km altitude. Additional development is underway to improve retrieval accuracy at altitudes below 5 km and above 40 km. Figure 2 gives an example of how a GPS/MET temperature profile compares to other data sources. Additional examples are given in the companion paper included in these NRC Proceedings, “GPS Sounding of the Atmosphere from Low Earth Orbit: Preliminary Results and Potential Impact on Numerical Weather Prediction”, by R. Anthes et al.

FIGURE 2 A GPS retrieved temperature profile compared to temperatures interpolated from NCEP 2.5 degree gridded analysis and the MLS instrument on the UARS. Temperature profile comparison from occ_0022, 0215UTC, October 21, 1995.

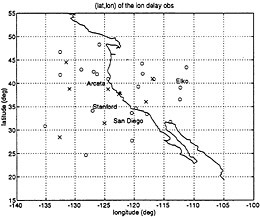

Using a variant of traditional “Differential GPS”, LEO satellite orbit and instrument clock errors are minimized by double differencing LEO GPS observations with ground-based observations collected from a network of fiducial sites (Figure 3). For the current GPS/MET proof of concept experiment, fiducial data is being provided by the International GPS Service (IGS) and NASA/JPL, typically with a 24-48 hour delay after the observations. For an operational GPS/MET observing system, fiducial data will be required in near real-time.
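The way double differencing removes clock errors can be illustrated with a small simulation. This is a simplified sketch, not the GPS/MET processing code: it assumes four links (LEO and ground receiver, each tracking the occulted GPS satellite and a reference GPS satellite), models each observation as geometric range plus receiver and transmitter clock offsets, and places an extra atmospheric delay only on the LEO-to-occulted-satellite link. All ranges, clock offsets and names are hypothetical.

```python
import random

C = 299_792_458.0  # speed of light, m/s


def observed_phase(geometric_range_m, rx_clock_s, tx_clock_s, atmos_delay_m=0.0):
    """Carrier-phase range (m): geometry plus clock offsets plus extra delay."""
    return geometric_range_m + C * (rx_clock_s - tx_clock_s) + atmos_delay_m


def double_difference(leo_occ, leo_ref, gnd_occ, gnd_ref):
    """Difference between receivers of the difference between transmitters."""
    return (leo_occ - leo_ref) - (gnd_occ - gnd_ref)


def simulate(atmos_delay_m=12.5, seed=0):
    """Return the delay recovered after double differencing and removing geometry."""
    rng = random.Random(seed)
    # Hypothetical geometric ranges (m) for the four links, assumed known
    # from precise orbits and fiducial site coordinates.
    ranges = {"leo_occ": 2.6e7, "leo_ref": 2.0e7, "gnd_occ": 2.2e7, "gnd_ref": 2.1e7}
    # Unknown clock offsets (s) for the two receivers and two transmitters.
    clk = {name: rng.uniform(-1e-4, 1e-4) for name in ("leo", "gnd", "occ", "ref")}
    obs = {
        "leo_occ": observed_phase(ranges["leo_occ"], clk["leo"], clk["occ"], atmos_delay_m),
        "leo_ref": observed_phase(ranges["leo_ref"], clk["leo"], clk["ref"]),
        "gnd_occ": observed_phase(ranges["gnd_occ"], clk["gnd"], clk["occ"]),
        "gnd_ref": observed_phase(ranges["gnd_ref"], clk["gnd"], clk["ref"]),
    }
    # Every clock term appears once with each sign, so all four cancel.
    return double_difference(**obs) - double_difference(**ranges)
```

Although the simulated clock offsets correspond to range errors of tens of kilometers, the value returned by `simulate` matches the injected atmospheric delay to within floating-point rounding, which is the property the fiducial network is there to provide.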

For many differential GPS applications, it is sufficient to collect fiducial data at modest sample rates. For example, the fiducial data available from IGS typically consists of 30-second samples. For other applications, such as precision air navigation, higher sample rates are required to track the dynamics of the platform.

4. National Space Weather Program: Strategic Plan, August 1995, Mr. Julian M. Wright, Jr., Chairman.

FIGURE 3 Double difference geometry depicting one path (B2) transecting the atmosphere.

For GPS/MET, fiducial data is required for two independent purposes: (1) determination of precise orbits and (2) double difference processing of the occultation data. To compute precise orbits for LEO satellites, low rate (30 second) fiducial observations are adequate. Experience to date suggests that data from 20-30 such fiducial sites will be needed to obtain orbits with the required precision.5

To capture the high frequency dynamics of the signal as the ray path scans the neutral atmosphere, and to obtain high vertical sampling resolution, the sample rate on all links illustrated in Figure 3 above must be increased to 50 Hz or more for the 1 minute duration of each occultation.6 We refer to this fiducial data as “high rate fiducial data”. Simulations show global coverage could be provided from as few as 8 high rate fiducial sites. However, due to geographical limitations and the requirement for some redundancy, an operational system will require approximately 12-15 high rate fiducial sites.

To use GPS/MET soundings for weather prediction, the space and ground-based GPS/MET observations must be collected and processed at a central site in near real-time. High level meteorological data products then must be transmitted to the operational weather prediction centers where they will be assimilated along with other data into advanced 4DDA (four dimensional data assimilation) numerical models.

For the limited purpose of describing data and network requirements, the central site processing steps can be summarized as follows7:

1. Compute precise orbits for all GPS satellites using low rate observations (30 second data typical) from fiducial sites.

2. Compute the precise orbit of the LEO satellite(s) using the low rate observations from the LEO GPS/MET instrument, plus the low rate observations from fiducial sites and the GPS orbits computed in step 1 above.

3. Combine the precise GPS orbits, precise LEO orbits, low rate fiducial observations, high rate LEO and high rate fiducial observations to form high level meteorological data products.

To be competitive with other observing systems, the maximum latency (time from occultation event to data delivery at the operational centers) should be no more than 2 hours. To minimize latency, it is essential to identify the critical paths related to data collection and processing. With dedicated communications links, all high and low rate fiducial data could be collected in virtually real-time. However, data from the LEO satellites must be stored on the spacecraft until it is “in view” of a GPS/MET Earthstation. Once in view, the LEO data can be downloaded in 1-2 minutes. With judicious selection of LEO orbits and Earthstation sites, it will be possible to economically retrieve all LEO data with an average delay of about 50 minutes (100 minutes worst case).8 Once on the ground, the LEO data can be relayed to the central site in 1-2 minutes.

5. The number of fiducial sites needed varies depending on how well the sites are distributed globally.

6. Provided that the fiducial site is equipped with a sufficiently stable local oscillator, such as a hydrogen maser, then the sample rate on links A2 and B2 can be reduced to approximately 1 Hz and interpolated to 50 Hz at the central data processing center.

7. The specific real-time data required from a ground network supporting an operational system will depend on various architectural design tradeoffs. The processing steps assumed here are those for a system based on the architecture of the GPS/MET proof of concept system.

8. With the addition of a data relay satellite, similar to TDRSS in functionality, it would be possible to reduce this delay to a few minutes. However, the added expense and complexity of this approach makes it unrealistic for a first generation system.

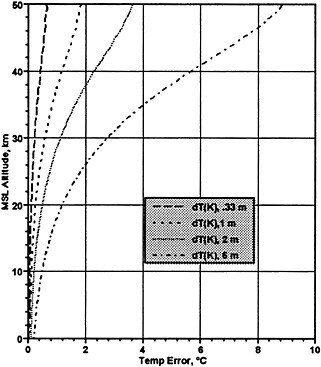

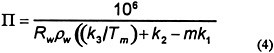

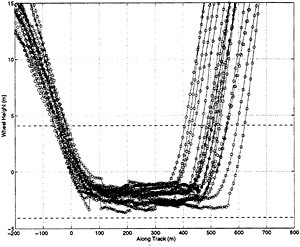

FIGURE 4 Simulated temperature error resulting from LEO & GPS orbit position errors of: 0.3, 1, 2, and 5 m RMS.

The processing time for Step 1 above is relatively lengthy, ordinarily taking on the order of several hours on a fast workstation. Fortunately, recent studies indicate GPS orbits may be propagated ahead on the order of 1 day without incurring orbit errors in excess of 0.5 m.9 Step 2 goes much faster, requiring on the order of 15 minutes. As with the GPS orbits, the LEO orbits can be propagated ahead. However, due to the lower altitude of the orbit, unmodelled atmospheric drag causes the predicted LEO orbit error to grow more rapidly with time. In addition, the retrieved meteorological data are more sensitive to errors in the LEO orbits than to errors in GPS orbits.10 Figure 4 above shows the results of a simulation conducted by UCAR and the University of Arizona prior to the launch of ML-1 illustrating the sensitivity to orbit errors. These simulations suggest that GPS orbit error should be no more than approximately 0.5 m to prevent orbit error from becoming a dominant source of temperature error at higher altitudes.

There are many system architectures which could be envisioned for an operational GPS/MET observing system. All involve trade-offs among cost, accuracy and latency. However, if it is assumed that meteorological data must be delivered no later than 2 hours after the observation, and the LEO observations can take up to 100 minutes to flow through the LEO-to-central store-and-forward communications network, then it follows that predicted orbits, propagated from the most recent observations practical, will be required for both the GPS and LEO satellites.

Since GPS orbits require relatively more computing time, but degrade more slowly, the GPS orbits could be re-computed on a 12 hour cycle and propagated ahead roughly 24-36 hours from the end of the data arc. This should provide ample time for communication and Step 1 processing so as to begin using new GPS orbits in Step 2 at a point when they are roughly 12 hours old (past the end of the data arc). By the time the next GPS orbit solution becomes available 12 hours later, the previous solution will be only 24 hours old.

As noted above, LEO orbits degrade more rapidly but take less time to compute. Since the LEO orbits rely on data from the LEO satellite itself, new LEO satellite orbits could be computed once per LEO satellite orbit and propagated ahead one orbit period (approximately 100 minutes).

Using predicted orbits for both the GPS and LEO satellites, the computing time available for Step 3 processing is tight, but adequate. Allowing 3 minutes for LEO to central site communications, and 2 minutes for communication of the results to the operational prediction centers, up to 15 minutes will be available for Step 3 processing. Based on current Step 3 algorithm run times, this should be adequate.
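The latency budget in this paragraph can be checked with a few lines of arithmetic. The sketch below uses only figures quoted in the text (2-hour requirement, 100-minute worst-case and 50-minute average LEO downlink delay, 3 and 2 minutes of communications); the constant and function names are hypothetical.

```python
MAX_LATENCY_MIN = 120          # delivery requirement: 2 hours after the occultation
LEO_DOWNLINK_WORST_MIN = 100   # worst-case wait before the LEO data reach the ground
LEO_DOWNLINK_AVERAGE_MIN = 50  # average wait quoted in the text
LEO_TO_CENTRAL_MIN = 3         # allowance for LEO-to-central-site communications
CENTRAL_TO_CENTERS_MIN = 2     # allowance for delivery to the prediction centers


def step3_budget_min(downlink_wait_min,
                     max_latency_min=MAX_LATENCY_MIN,
                     to_central_min=LEO_TO_CENTRAL_MIN,
                     to_centers_min=CENTRAL_TO_CENTERS_MIN):
    """Minutes left for Step 3 processing once communications are accounted for."""
    return max_latency_min - downlink_wait_min - to_central_min - to_centers_min


if __name__ == "__main__":
    print("worst case:", step3_budget_min(LEO_DOWNLINK_WORST_MIN), "min")
    print("average case:", step3_budget_min(LEO_DOWNLINK_AVERAGE_MIN), "min")
```

The worst case leaves 15 minutes, matching the figure above, and it shows why neither orbit computation (hours for Step 1, about 15 minutes for Step 2) can sit on the critical path.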

It should be emphasized that there are many alternative system architectures and strategies capable of delivering products within 2 hours of the observations. For example, if all LEO satellite data were collected via real-time data relay satellites (e.g., TDRSS), and state-of-the-art, massively parallel supercomputers were used, it would be theoretically possible to avoid the need for predicted GPS or LEO orbits. However, the cost would be significantly higher, without providing any significant advantage for weather prediction. Thus, GPS predicted orbits will be a practical necessity for any operational GPS/MET observing system.

9. See, for example, “Scripps Orbit and Permanent Array Center (SOPAC) and Southern California Precision GPS Geodetic Array (PGGA)”, J. Behr and Y. Bock, appearing in these NRC Proceedings.

10. A GPS or LEO orbit error on the order of a few meters does not result in significant temperature error per se. However, the associated velocity error manifests as Doppler error, which in turn manifests as bending angle error, and thus refractivity and temperature error. LEO satellites orbit with about 7 times the angular velocity of GPS satellites (100 minutes vs. 720 minutes per orbit). Thus, for a given position error, the GPS Doppler error is about an order of magnitude lower than for a LEO satellite with the same position error.

It appears likely that an operational GPS/MET observing system will be constructed in the near future to provide near-real-time data to weather prediction and space weather operational centers. A practical system will require real-time 30 second fiducial data from 20-30 fiducial sites, plus “high rate fiducial data” from approximately 15 select sites. In addition, predicted GPS and LEO satellite orbit solutions will be needed, updated at frequencies sufficient to keep overall orbit error below approximately 0.5 m at the time of use.

ACKNOWLEDGMENTS

Thanks to Bob Corell, Dick Greenfield, Jay Fein and Mike Mayhew of the National Science Foundation for their support of the GPS/MET project.

Kuo, Y.-H., X. Zou and W. Huang, 1996: The impact of GPS data on the prediction of an extratropical cyclone: an observing system simulation experiment. J. Dyn. Atmos. Ocean. (submitted).

Ware, R., M. Exner, D. Feng, M. Gorbunov, K. Hardy, B. Herman, Y. Kuo, T. Meehan, W. Melbourne, C. Rocken, W. Schreiner, S. Sokolovskiy, F. Solheim, X. Zou, R. Anthes, S. Businger and K. Trenberth, 1996: GPS sounding of the atmosphere from low Earth orbit: preliminary results. Bull. Amer. Met. Soc., 77, 19-40.

Judith Curry and Peter Webster

Program in Atmospheric and Oceanic Sciences, Department of Aerospace Engineering Sciences

University of Colorado-Boulder

INTRODUCTION

Unlike numerical weather prediction models, climate models “spin up” their own water vapor fields after a short time, and thus initialization is not important. The primary application of water vapor data in climate modelling is to evaluate model performance. Additionally, water vapor data is needed to improve parameterizations for climate models. Accurate water vapor data is needed at certain “baseline” stations to test our understanding of atmospheric radiative transfer. Accurate water vapor amounts are also needed to improve cloud parameterization. Climate diagnostic studies use water vapor information to improve our understanding of the role of the atmospheric hydrological cycle in climate dynamics.

Water vapor feedback is believed to be among the chief mechanisms that would amplify the global climate response to increased concentrations of greenhouse gases. The positive feedback between surface temperature, water vapor and the greenhouse effect is referred to as the water vapor feedback. According to climate models, of the 4.2°C warming that results from a doubling of atmospheric CO2, ~1.7°C is contributed by increased water vapor. It is frequently assumed that global warming will result in an increase in atmospheric water vapor such that the atmospheric relative humidity will remain nearly constant, although there has been controversy surrounding this topic.

Two elements of the U.S. Global Change Research Program (USGCRP) and the World Climate Research Programme (WCRP) are addressing water vapor in the context of climate. One of the components of the Global Energy and Water Cycle Experiment (GEWEX) is the GEWEX Water Vapor Programme (GVaP). GEWEX objectives in water vapor research are to:

- determine the influence of water vapor on the Earth's radiation budget;
- determine the processes that control the distribution of water vapor in the atmosphere;
- improve the retrieval of water vapor from satellites;
- improve the in-situ measurement of water vapor;
- provide a global climatology of water vapor.

The programmatic goals of GVaP are:

- assessment of global water vapor retrievals from satellites;
- operation of a water vapor reference station that includes Raman lidar;
- intercomparison of water vapor sensing instruments;
- research and development to improve radiosonde humidity data.

GVaP progress to date includes:

- The NASA Global Water Vapor Data Set Project (NVAP) has blended TOVS, SSM/I and radiosonde data into a five-year (1988-1992) climatology of total and 3-layer water vapor values on a 1° x 1° grid for daily, pentad and monthly averages (http://wwwdaac.msfc.nasa.gov).
- The GVaP Validation Experiment (GVEX) was conducted in fall 1995 at Wallops Island, Virginia, coordinated with WMO's upper-air balloon-sonde intercomparison campaign. The DOE-ARM CART site will be used in future campaigns.
- An upper tropospheric/lower stratospheric water vapor workshop is slated for mid-1996.
- The US NRC Panel on GEWEX is considering the US contribution to a broader international GEWEX initiative.

The second program that is addressing water vapor measurements for climate purposes is the Department of Energy Atmospheric Radiation Measurement (ARM) Program. ARM is a major program of atmospheric measurement and modeling intended to improve understanding of the processes and properties that affect atmospheric radiation, with a particular focus on the influence of clouds and the role of the cloud radiative feedback (Stokes and Schwarz, 1994). Measurements of water vapor play a major role in the integrated radiative flux experiments and single-column model experiments. The ARM program is sponsoring extensive observational facilities for a period of 10 years at 3 sites: the Southern Great Plains (centered at Lamont, OK), the tropical western Pacific Ocean (centered at Nauru), and the North Slope of Alaska (centered at Barrow). GPS receivers have been installed at the Southern Great Plains site.

In the context of understanding the role of water vapor in climate, precipitable water data from GPS (and eventually some information on water vapor profiles) will be useful mainly in those regions of the globe where radiosondes are unavailable and/or where SSM/I and TOVS retrievals have large errors.

GVaP is relying on passive microwave observations of column water amount over oceans. This poses some problems in the tropics. SSM/I has a local coverage of twice per day in the tropics, and SSM/I precipitable water retrievals are unavailable under precipitating conditions. Sheu and Liu (1996) performed an intercomparison of 6 different SSM/I precipitable water algorithms against precipitable water derived from ship-borne radiosondes during TOGA COARE. The algorithms showed 10-15% errors when compared with the radiosondes. It is not clear at present whether SSM/I precipitable water retrievals in this environment can be improved to a more acceptable level of 5%. GPS may provide improved accuracy in this environment.

The importance of the diurnal cycle in tropical air-sea interaction is being increasingly appreciated (e.g. Webster et al., 1996). It has been hypothesized that the diurnal cycle may influence lower frequency variations. GPS may provide the only method to observe the diurnal cycle in precipitable water.

Numerous islands in the equatorial oceans could in principle be used as locations for GPS receivers. Additionally, the TAO buoy array in the equatorial Pacific Ocean could be explored as a potential platform for GPS receivers.

Another oceanic region where current satellite observations of precipitable water are inadequate is the Arctic Ocean. Simulation of clouds by climate and numerical weather prediction models is poor in the Arctic because of poor data bases and lack of understanding of the physical processes that determine water vapor amount. Water vapor in the Arctic is of particular importance because of the hypothesized “hyper” water vapor feedback in the Arctic (Curry et al., 1995). At present, there are no radiosondes in the Arctic Ocean, and passive microwave techniques to retrieve precipitable water do not work over ice. TOVS retrievals of water vapor at high latitudes are also problematic. Since precipitable water values are commonly less than 1 g cm−2 in the Arctic, a considerable challenge is posed to any observing system.

Improved measurements of atmospheric water vapor would be useful for a variety of climate applications. The WCRP GEWEX GVaP program is the major national/international forum for addressing water vapor issues. The DOE ARM program is cooperating with GVaP to evaluate and improve techniques to determine water vapor amount. GPS technology has made some minor inroads into these programs, but there is some skepticism of the contribution to be made from GPS, beyond the observing network that is already in place.

GPS has the greatest potential for contributing to the global water vapor data base in remote land areas where there are no radiosondes, in the moist equatorial oceanic regions where passive microwave algorithms do not presently perform very well, and in the very dry polar regions.

Curry, J.A., J.L. Schramm, M.C. Serreze, and E.E. Ebert, 1995: Water vapor feedback over the Arctic Ocean. J. Geophys. Res., 100, 14,223-14,229.

Sheu, R.-S. and G. Liu, 1995: Atmospheric humidity variations associated with westerly wind bursts during TOGA COARE. J. Geophys. Res., in press.

Stokes and Schwarz, 1994: The Atmospheric Radiation Measurement Program. Bull. Amer. Meteorol. Soc., 1201-1221.

Webster, P.J., C.A. Clayson, and J.A. Curry, 1995: Clouds, radiation, and the diurnal cycle of sea surface temperature in the tropical western Pacific. J. Clim., in press.

Larry Cornman, Jothiram Vivekanandan, Richard Wagoner

Research Applications Program, National Center for Atmospheric Research

INTRODUCTION

In order to provide operationally useful hazardous weather information to both meteorologists and non-meteorologists, a robust method for synthesizing all available data is required. This methodology should include: data quality controls, algorithm modules, data and product integration, and concise, user-friendly outputs. In the following, a brief description of such a system is presented.

The strength of the system described below lies in the use of multiple data sources and multiple detection, diagnostic, and forecast algorithms. The synthesis of the disparate data and algorithm output is implemented via a fuzzy logic algorithm. As the use of fuzzy logic algorithms has been limited in the atmospheric sciences, a detailed introduction is given below.

Because GPS is a novel source of data, a brief outline of possible applications of GPS data in a real-time weather system is presented.

The following list indicates a few potential applications of GPS data in a hazardous weather detection and forecasting system.

- Hydrology
  - Watershed management.
  - Flash-flood monitoring.
  - Calibration of radar-based rainfall estimation techniques (Z/R relations).
- Meso- and Storm Scale
  - Convective initiation.
  - Hazardous convective weather.
- Icing and Winter Storms
  - Cloud liquid water content.
  - Snowfall rate estimation (calibration of radar-based Z/S relations).
- Aviation-Specific Applications
  - Flight-level temperature.
  - Tropopause height.

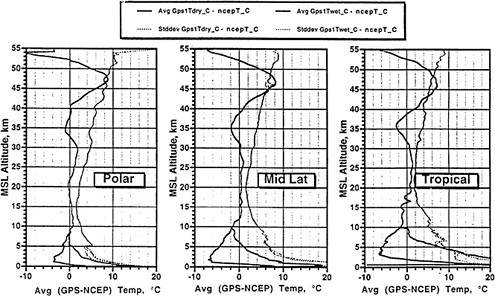

Figure 1 below illustrates the logical structure of the real-time system. At the top level are the data ingest and quality control algorithms. The outputs of these modules then feed into a suite of detection, diagnostic, and model-based algorithms.

Each algorithm module will perform its own quality control functions. These tasks will include the detection of time gaps or intermittence in the data stream and outlier detection from a meteorological perspective. Pertinent information about the data quality from a given algorithm module will be transmitted to the Integration Module (discussed below) via a set of confidence values.
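The quality control tasks named above (time-gap detection, outlier detection, and a confidence value passed downstream) might be sketched as follows. This is an illustrative stand-in rather than the system's actual method: the median-absolute-deviation test is a generic statistical check substituted for a meteorological one, and the thresholds, the gap penalty and all function names are assumptions.

```python
from statistics import median


def find_time_gaps(timestamps_s, expected_interval_s, tolerance=1.5):
    """Return (start, end) pairs where consecutive samples are too far apart."""
    limit = expected_interval_s * tolerance
    return [(a, b) for a, b in zip(timestamps_s, timestamps_s[1:]) if b - a > limit]


def flag_outliers(values, threshold=3.5):
    """Flag values far from the median, scaled by the median absolute deviation."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # No spread to scale by; this simple sketch then flags nothing.
        return [False] * len(values)
    return [abs(v - med) / (1.4826 * mad) > threshold for v in values]


def confidence(timestamps_s, values, expected_interval_s):
    """Confidence in [0, 1]: fraction of non-outlier samples, reduced per time gap."""
    outliers = flag_outliers(values)
    good_fraction = 1.0 - sum(outliers) / len(values)
    gaps = find_time_gaps(timestamps_s, expected_interval_s)
    gap_penalty = 0.5 ** len(gaps)  # hypothetical rule: halve confidence per gap
    return good_fraction * gap_penalty
```

For a 30-second data stream with one missing stretch and one spurious value, `confidence` returns a value well below 1, which an integration step could then use to down-weight that module's output.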

In order to efficiently combine all the detection, diagnostic, and forecast information from the individual algorithm modules it is desirable to map all of this information onto a common spatial grid. The use of a common grid, the so-called analysis grid, will also facilitate the functions of the Alert Generation Module.

Along with the gridded detection and diagnostic information, each algorithm will produce a confidence value at each grid point. The idea behind the confidence value concept is twofold: producing a real-time quality control metric and enabling an adaptive weighting of the algorithm outputs when they are combined.

In order to minimize false alarms, it is important to quality control the output of the individual algorithms. This is distinct from the input quality control procedures described above, although filtering the raw input data will have an effect on the algorithm output data.

In the overall system, a number of individual detection, diagnostic, and forecast algorithms will be implemented. Each of these algorithms will generate information on the location and strength of hazardous weather. The purpose of the Integration Algorithm is to synthesize all of this disparate information in a cohesive spatial and temporal fashion such that accurate and reliable alerts are produced.

The disparate outputs from the individual algorithm modules, suitably mapped to their own analysis grids, must be systematically combined to produce the desired alerts. A simple and robust technique for performing this synthesis task is a fuzzy logic algorithm. The Integration Algorithm will ingest the various detection, diagnosis, forecast, and confidence grids from the algorithm modules and, via the fuzzy logic machinery, produce composite gridded values for each type of hazardous weather phenomenon.

In the last few years, fuzzy logic algorithms have evolved into a very useful tool in the scientist's and engineer's arsenal for solving complex, real-world problems. Fuzzy logic is well suited to applications in linear and nonlinear control systems, signal and image processing, and data analysis (Klir and Folger, 1988). The strength of these algorithms lies in their ability to systematically address the natural ambiguities in measurement data, classification, and pattern recognition. Typical, non-fuzzy applications require a rigid bifurcation into “true” or “false” -- nothing can lie in-between. Standard probability theory merely quantifies the likelihood that the outcome of a given process or experiment is true or false. Expert systems or neural network algorithms tend to be quite complicated and convoluted. It is usually quite difficult to add or subtract algorithm modules from these methods. Fuzzy logic allows for a more direct, intuitive and flexible methodology to deal with the vagaries of the real world.

While fuzzy logic algorithms have been widely and successfully applied in the engineering sciences, the use of these techniques in the atmospheric sciences has been somewhat limited, though highly effective. Due to the inherent ambiguity in many aspects of atmospheric data measurement, analysis, and numerical modeling, fuzzy logic should be a very useful tool in this field.

In general, there are four main steps in the construction of fuzzy logic algorithms: interest mapping, inference, composition and quantification.

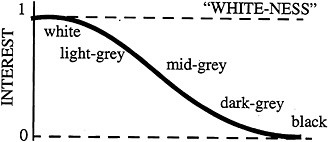

The first step converts measurement data into scaled, unitless numbers which indicate the correspondence or “interest level” of the data to the desired result. This correspondence is quantified by the application of a prescribed functional relation, or “interest map,” between the data and the interest level. (In the fuzzy logic literature, the terms “degree of truth” and “membership function” are used for “interest level” and “interest map,” respectively.) As an example, consider a number of balls which have been painted various shades of gray, where “white” and “black” are the two extremes. In Boolean logic, the question “is this ball white?” can only have one of two answers, “yes” or “no.” Hence, the answer to this question for a white or a black ball is simple. However, for a gray ball which is “mostly white,” Boolean logic forces a “rounding-off,” i.e., the mostly-white ball is categorized as “white.” In fuzzy logic, an interest map which takes into account the shades of gray is constructed, as illustrated in Figure 2.

FIGURE 2 Interest map for “white-ness.”
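The interest mapping step can be sketched as a piecewise-linear function. The example below is an illustration only: the break points of the “white-ness” map, the brightness scale from 0 (black) to 1 (white), and the function name are assumptions and are not taken from Figure 2.

```python
def interest_map(value, points):
    """Piecewise-linear interest map.

    points: list of (data_value, interest) pairs sorted by data_value.
    Values outside the covered span take the nearest end-point interest.
    """
    if value <= points[0][0]:
        return points[0][1]
    if value >= points[-1][0]:
        return points[-1][1]
    # Find the segment containing the value and interpolate linearly.
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= value <= x1:
            return y0 + (y1 - y0) * (value - x0) / (x1 - x0)


# Hypothetical "white-ness" map: brightness 0 = black, 1 = white.
WHITENESS = [(0.0, 0.0), (0.2, 0.0), (0.8, 1.0), (1.0, 1.0)]

mid_gray = interest_map(0.5, WHITENESS)      # partly white
mostly_white = interest_map(0.7, WHITENESS)  # no forced rounding to "white"
```

Unlike the Boolean answer, the mostly-white ball receives an intermediate interest value rather than being rounded to “white.”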

The second step, inference, allows for the construction of logical rule expressions. In Boolean logic, such a rule might take the following form:

if (A = true and B = true) then (C = true) (1)

whereas in fuzzy logic such a rule might appear as:

if (A is 0.7 true and B is 0.3 true) then (C is 0.4 true) (2)

where a value of 0.7 true would be the resultant interest value after applying an interest map to the data A. For the “white-ness” example above, a ball that is light-gray may have an interest value greater than or equal to 0.7. It is important to note that in this example a maximum truth value of 1.0 was assumed. While this is enforced in Boolean logic, it is not necessary in fuzzy logic. That is, if it is appropriate for the given problem, a maximum truth value of 2.8 could be used, so that 0.7 true would result in an interest value of 0.7 × 2.8 = 1.96. Inference rules are not used in the current application; the synthesis of different data types is handled through the next step, composition.

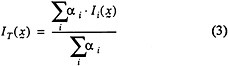

The third step in building fuzzy logic algorithms is composition wherein the interest values from a number of different data types are combined in a systematic fashion. This process can result in a new, higher-level logical rule or in a precise value. For the current application the interest values at a given analysis grid point are combined into a “total” interest value (IT), a precise, unique number for that point by using a weighted linear combination. The linear combination of the individual interest values is computed with coefficients (ai) chosen to maximize a given performance measure such as a statistical skill level. This can be done once and for all by various optimization routines or from empirical analysis. Mathematically, the total interest field at the coordinate location x is given by the simple formula,

where the sum is taken over all interest fields. It is important to note that all of the individual interest maps must have the same range, i.e., all 0 to 1 or all -1 to 1, etc. The normalization factor in equation (3) ensures that the total interest values will lie in the same range as the interest maps. Another, more general application of equation (3) employs adaptive weighting,

where the β(x,t), with values between 0 and 1, can be considered as “confidence” values computed for each space-time point. The use of the confidence values as in equation (4) is a simple way to employ adaptive weighting in the composition process. For example, the signal-to-noise ratio (SNR) measured at a given location by a weather radar could be used to modulate the weights, with low SNR leading to lower values of β and higher SNR to higher values. Another use of the confidence values might be to lower the weight for a diagnostic algorithm if there is a quality control problem with the data from a given sensor. For example, with an algorithm that utilizes multiple sensor inputs, missing data from an individual sensor might degrade the quality of the overall result, so that a lower confidence in the output would be expected.
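The displayed forms of equations (3) and (4) do not appear in this text. One form consistent with the description (a weighted linear combination of the interest values, normalized so that the total interest stays within the range of the individual interest maps, with the confidence values modulating the weights) would be the following. The index i over interest fields and the subscripted symbols are assumptions made for this sketch.

```latex
\begin{align}
I_T(x) &= \frac{\sum_i a_i \, I_i(x)}{\sum_i a_i} \tag{3} \\
I_T(x,t) &= \frac{\sum_i \beta_i(x,t)\, a_i \, I_i(x,t)}{\sum_i \beta_i(x,t)\, a_i} \tag{4}
\end{align}
```

With all confidence values equal to 1, the second form reduces to the first.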

A problem with equation (4) occurs when there is a limited number of data sources giving valid information for a given grid point. Consider the extreme case of only one valid source of information “k” at a grid point (e.g., only one non-zero confidence value), whereby equation (4) reduces to,

That is, the use of the weighting functions is in effect negated. This can be problematic when the confidence value for the remaining data source is very low.
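The composition step and the single-source problem can be illustrated with a short Python sketch. It assumes that the total interest is the confidence-weighted, normalized sum of the individual interest values; the function name and the sample numbers are hypothetical.

```python
def total_interest(interests, weights, confidences):
    """Confidence-weighted, normalized combination at one grid point.

    interests: interest values, all on the same range
    weights: static coefficients a_i
    confidences: adaptive confidence values in [0, 1]
    Returns None when no source carries any confidence.
    """
    num = sum(b * a * i for i, a, b in zip(interests, weights, confidences))
    den = sum(b * a for a, b in zip(weights, confidences))
    return num / den if den > 0 else None


interests = [0.9, 0.4, 0.6]
weights = [2.0, 1.0, 1.0]

# Three sources, all fully trusted: an ordinary weighted average.
all_trusted = total_interest(interests, weights, [1.0, 1.0, 1.0])

# Only the first source is valid, and only barely. Its weight and its
# low confidence cancel in the ratio, so the result is simply that
# source's own interest value.
single_source = total_interest(interests, weights, [0.05, 0.0, 0.0])
```

In the second call the very low confidence of 0.05 has no effect on the result, which is the difficulty described above.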

One way to combat this potential difficulty is via the introduction of a total confidence metric and dynamic thresholding. Equation (6) defines the total confidence value,

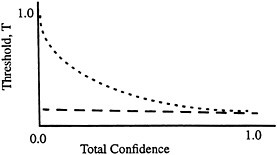

which gives information on the available confidence at a given point relative to the total possible. With this quantity, a dynamic threshold which is a function of the total confidence can then be assigned at a given point. This threshold value would vary between 1.0 at zero total confidence and a nominal value for a total confidence of 1. Figure 3 illustrates a dynamic threshold which uses an exponential decay.

With the total confidence and dynamic threshold, grid points which have very low total confidence would be required to have a total interest value close to 1.0 to be “valid.” Quantitatively, a valid point would be required to satisfy IT(x,t) > T[x,t; CT(x,t)].

FIGURE 3 Dynamic threshold using exponential decay.

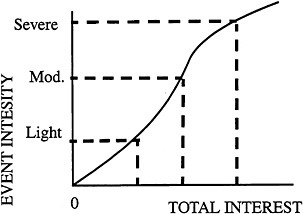
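A minimal Python sketch of the total confidence and dynamic threshold follows. Several choices here are assumptions: the total confidence is taken as the confidence-weighted sum of the coefficients divided by the sum of the coefficients, the exponential is rescaled so that the threshold equals exactly 1.0 at zero total confidence and the nominal value at a total confidence of 1, and the nominal value (0.5) and decay rate (4.0) are arbitrary.

```python
import math


def total_confidence(weights, confidences):
    """Available confidence relative to the total possible (0 to 1)."""
    return sum(b * a for a, b in zip(weights, confidences)) / sum(weights)


def dynamic_threshold(c_total, nominal=0.5, decay=4.0):
    """Threshold falling from 1.0 at c_total = 0 to `nominal` at c_total = 1.

    The exponential is rescaled so that both end points are met exactly.
    """
    shape = (math.exp(-decay * c_total) - math.exp(-decay)) / (1.0 - math.exp(-decay))
    return nominal + (1.0 - nominal) * shape


def is_valid(i_total, weights, confidences):
    """A point is valid when its total interest exceeds the threshold."""
    return i_total > dynamic_threshold(total_confidence(weights, confidences))


weights = [2.0, 1.0, 1.0]
# One barely trusted source with high interest: rejected.
low_conf_point = is_valid(0.9, weights, [0.05, 0.0, 0.0])
# The same interest backed by full confidence: accepted.
high_conf_point = is_valid(0.9, weights, [1.0, 1.0, 1.0])
```

With these settings, a point supported by a single low-confidence source must have a total interest close to 1.0 before it is accepted.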

The final (optional) step, quantification, takes the result of the composition step -- if it had generated a composite fuzzy rule -- and produces a precise number. The fuzzy logic methods described above are quite powerful tools for event detection, i.e., for answering the question, “is something important happening at this location?” Once a given point is determined to satisfy this condition, a quantitative indicator of the magnitude of the event is required.

There are a few options for dealing with this issue: empirical testing, parallel magnitude grids, or inverse interest mapping. The empirical testing method would determine a suitable mapping between the total interest values and a measure of “truth.” The truth values can be derived from simulated or real data. This method would most likely be used (in this context) to set a small number of threshold values, for example two interest values which define “moderate” and “severe” turbulence, respectively. If finer resolution is required, the third method, described below, would be used.

The parallel magnitude grid method would separate the event detection and magnitude estimation tasks. That is, the fuzzy logic machinery is used to find the location of the events. A set of analysis grids which are not “fuzzified” (i.e., contain actual magnitudes) is then used to determine the magnitude of the events.

In the last method, inverse interest mapping, a special interest map would be generated that reflects a relationship between total interest values (via equation (4)) and event magnitudes. This map would then be used to produce a magnitude value at each grid point from the total interest value at that point, hence the term inverse interest mapping. Figure 4 illustrates what an inverse interest map may look like. This map can be constructed via the empirical method described above (which would actually obviate the need for that method), or by an amalgam of the individual interest maps (e.g., centroid or average).

FIGURE 4 Example of an inverse interest map.

These quantification methods would be used to set appropriate alert thresholds for total interest values or to assign specific magnitudes at the analysis grid points.
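Two of these options can be sketched in a few lines of Python: a small set of empirically chosen thresholds that turn a total interest value into a category, and an inverse interest map that turns it into a magnitude by linear interpolation. The threshold values, the category labels, and the lookup table are hypothetical and would in practice come from verification against simulated or real data.

```python
from bisect import bisect_right

# Hypothetical category thresholds, as might come from empirical testing.
THRESHOLDS = [0.6, 0.8]
LABELS = ["none", "moderate", "severe"]

# Hypothetical inverse interest map: (total interest, event magnitude).
TABLE = [(0.5, 0.0), (0.75, 3.0), (1.0, 10.0)]


def categorize(i_total):
    """Return the label of the band that contains i_total."""
    return LABELS[bisect_right(THRESHOLDS, i_total)]


def inverse_interest_map(i_total, table=TABLE):
    """Convert a total interest value to an event magnitude."""
    if i_total <= table[0][0]:
        return table[0][1]
    if i_total >= table[-1][0]:
        return table[-1][1]
    # Interpolate linearly within the segment containing i_total.
    for (x0, m0), (x1, m1) in zip(table, table[1:]):
        if x0 <= i_total <= x1:
            return m0 + (m1 - m0) * (i_total - x0) / (x1 - x0)


category = categorize(0.7)
magnitude = inverse_interest_map(0.875)
```

The threshold lookup suits a small number of alert levels, while the interpolated map gives the finer resolution mentioned above.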

The output of the Decision Module is a set of analysis grids which hold, at each point, the total interest, magnitude, and dynamic threshold values. The purpose of the Alert Generation Module is to process these grids in order to determine the locations of the hazardous weather events and produce event-specific alerts.

The analysis grids do not give any spatial information, per se, only point-by-point values. In order to remove spurious grid points (i.e., outlier magnitudes or spatially isolated points), it is useful to build global features out of the local grid point data. An appropriate technique for this task exists (Dixon and Wiener, 1993).

This clumping algorithm will identify regions of the total interest grid which correspond to individual events.

In this step, events (i.e., clumped regions) which do not satisfy certain a priori criteria are discarded. These criteria may deal with spatial extent, temporal continuity, low confidence values, etc.
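The clumping and filtering steps can be illustrated with a generic connected-region search. This sketch is not the technique of Dixon and Wiener (1993); it is a plain breadth-first flood fill over 4-connected neighbors, and the minimum-size criterion stands in for whatever a priori criteria are actually chosen.

```python
from collections import deque


def clump(valid, min_points=3):
    """Group valid grid points into 4-connected regions.

    valid: 2-D list, truthy where the point passed its threshold
    min_points: regions smaller than this are discarded as spurious
    Returns a list of regions, each a list of (row, col) tuples.
    """
    rows, cols = len(valid), len(valid[0])
    seen = [[False] * cols for _ in range(rows)]
    regions = []
    for r in range(rows):
        for c in range(cols):
            if not valid[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill from this unvisited valid point.
            region, queue = [], deque([(r, c)])
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                region.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and valid[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            # Discard regions that fail the (assumed) spatial-extent test.
            if len(region) >= min_points:
                regions.append(region)
    return regions


grid = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 0, 1],
    [0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
]
events = clump(grid)
```

Here the two isolated points are dropped and only the block of four adjacent points survives as an event.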

The alert magnitude is generated using the quantification analysis grid point values (from interest values or the parallel magnitude grid values) that are associated with the given event(s). The simplest method is to take a certain percentile of the grid point magnitudes within a given event. A median value (50th percentile) would prevent overwarning, though it might fail to provide critical hazard information. A higher value, for example the 85th percentile, could be a good compromise.
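The percentile choice can be made concrete with a short Python sketch. The percentile is computed here by linear interpolation between sorted samples, which is one common convention among several; the magnitudes are invented for illustration.

```python
def percentile(values, pct):
    """Percentile by linear interpolation between sorted samples."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("no grid points in event")
    rank = (len(ordered) - 1) * pct / 100.0
    lower = int(rank)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (rank - lower)


# Hypothetical grid point magnitudes within one event.
magnitudes = [2.0, 3.0, 3.5, 4.0, 9.0]
median_alert = percentile(magnitudes, 50)
high_alert = percentile(magnitudes, 85)
```

For this sample the median ignores the single large value, while the 85th percentile moves the alert magnitude toward it without reporting the maximum itself.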

The general structure of a sensor-based system which can provide operational warnings of hazardous weather has been described. It is clear that the use of GPS data can enhance the quality of such a system. This warning system consists of a number of detection, diagnostic, and model-based algorithms. The outputs from the individual algorithm modules are synthesized using a fuzzy logic algorithm. Fuzzy logic algorithms are well suited to this type of problem, wherein a number of disparate data sources must be combined in a simple and efficient manner. A number of practical issues regarding the generation of warnings have also been discussed.

Dixon, M. and G. Wiener, 1993: TITAN: Thunderstorm identification, tracking, analysis, and nowcasting -- a radar-based methodology, Journal of Atmospheric and Oceanic Technology, 10, 785-797.

Klir, G. J. and T. A. Folger, 1988: Fuzzy sets, uncertainty and information, Prentice-Hall, New Jersey.

Thomas Runge, Yoaz Bar-Sever, Garth Franklin, Peter Kroger, Ulf Lindqwister

Tracking Systems and Applications Section, Jet Propulsion Laboratory

INTRODUCTION

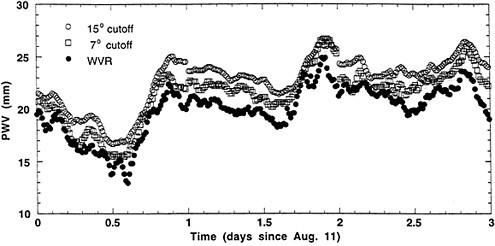

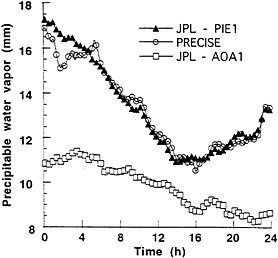

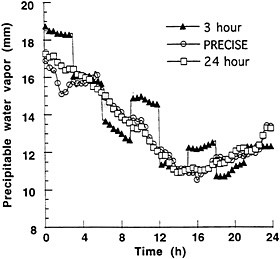

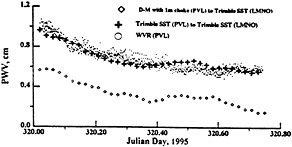

The size and scope of permanent arrays of continuously operating GPS receivers will soon rival the current worldwide network of approximately 600 radiosonde launch sites. The accuracy of ground-based GPS estimates of precipitable water vapor (PWV) has already been demonstrated through a number of direct comparisons with simultaneous radiosonde and water vapor radiometer (WVR) measurements of this quantity (NOAA, 1995). A GPS-based system for determination of PWV offers the added benefits of more frequent estimates of this quantity and the potential for near real time availability. Incorporating additional PWV estimates into numerical weather models could significantly improve the accuracy of weather forecasts.

We describe here the components of a GPS-based system that is capable of providing near real time estimates of PWV. These include:

- A surface meteorological instrument package capable of providing accurate measurements of barometric pressure and surface temperature. Ideally, this instrument package should be interfaced directly to a GPS receiver, and incorporate the pressure and temperature data directly into the GPS data stream.
- A means of transferring both the GPS and surface meteorological data to a central processing facility in near real time.
- A source of, or a means of computing, GPS orbits of sufficient accuracy whenever new data arrive at the central processing facility.
- An automated data handling and analysis system that can produce estimates of PWV from the GPS and surface meteorological data and GPS orbits whenever new data from a remote site arrive at the central processing facility.
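To indicate why surface pressure and temperature are needed alongside the GPS data, the following Python sketch converts a zenith total delay into PWV. It is a sketch under stated assumptions and not the processing described in this paper: it uses a commonly quoted Saastamoinen-type expression for the hydrostatic delay, an empirical linear relation between surface temperature and the weighted mean temperature of the atmosphere, and refractivity constants from the general GPS meteorology literature. All function names are hypothetical.

```python
import math

RHO_W = 1000.0     # density of liquid water, kg m^-3
R_V = 461.5        # specific gas constant of water vapor, J kg^-1 K^-1
K2_PRIME = 0.221   # assumed refractivity constant, K Pa^-1 (22.1 K/hPa)
K3 = 3739.0        # assumed refractivity constant, K^2 Pa^-1 (3.739e5 K^2/hPa)


def zenith_hydrostatic_delay(pressure_hpa, lat_deg, height_km):
    """Saastamoinen-type hydrostatic delay in metres from surface pressure."""
    f = 1.0 - 0.00266 * math.cos(2.0 * math.radians(lat_deg)) - 0.00028 * height_km
    return 0.0022768 * pressure_hpa / f


def pwv_from_delay(total_delay_m, pressure_hpa, temp_k, lat_deg, height_km):
    """Precipitable water vapor (metres) from a GPS zenith total delay."""
    # The pressure removes the hydrostatic part, leaving the wet delay.
    wet_delay = total_delay_m - zenith_hydrostatic_delay(pressure_hpa, lat_deg, height_km)
    # The surface temperature gives an empirical mean temperature (assumed relation).
    t_mean = 70.2 + 0.72 * temp_k
    factor = 1.0e6 / (RHO_W * R_V * (K3 / t_mean + K2_PRIME))
    return factor * wet_delay


zhd = zenith_hydrostatic_delay(1013.25, 45.0, 0.0)
pwv = pwv_from_delay(zhd + 0.15, 1013.25, 288.15, 45.0, 0.0)
```

In this form the pressure measurement controls the hydrostatic correction and the temperature measurement controls the conversion factor, which is roughly 0.15 to 0.16 for typical surface temperatures.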