2

Complex Systems

Life, our environment, and the materials all around us are complex systems of interacting parts. Often these parts are themselves complex. Take the human body. At a very small scale it is just electrons and nuclei in atoms. Some of these are organized into larger molecules whose interactions produce still larger-scale body functions such as growth, movement, and reasoning. The study of complex systems presents daunting challenges, but rapid progress is now being made, bringing with it enormous benefits. Who can deny, for instance, the desirability of understanding crack formation in structural materials, forecasting catastrophes such as earthquakes, or treating plaque deposition in arteries?

A traditional approach in physics when trying to understand a system made up of many interacting parts is to break the system into smaller parts and study the behavior of each. While this has worked at times, it often fails because the interactions of the parts are far more complicated and important than the behavior of an individual part. An earthquake is a good example. At the microscopic level an earthquake is the breaking of bonds between molecules in a rock as it cracks. Theoretical and computational models of earthquake dynamics have shown that earthquake behavior is characterized by the interaction of many faults (cracks), resulting in avalanches of slippage. These slippages are largely insensitive to the details of the actual molecular bonds.

In the study of complex systems, physicists are expanding the boundaries of their discipline: They are joining forces with biologists to understand life, with geologists to explore Earth and the planets, and with engineers to study crack propagation. Much progress is being made in applying the quantitative methods and modeling techniques of physics to complex systems, and instruments developed by physicists are being used to better measure the behavior of those systems. Advances in computing are responsible for much of the progress. They enable large amounts of data to be collected, stored, analyzed, and visualized, and they have made possible numerical simulations using very sophisticated models.

In this chapter, the committee describes five areas of complex system research: the nonequilibrium behavior of matter; turbulence in fluids, plasmas, and gases; high-energy-density systems; physics in biology; and Earth and its surroundings.

NONEQUILIBRIUM BEHAVIOR OF MATTER

The most successful theory of complex systems is equilibrium statistical mechanics—the description of the state that many systems reach after waiting a long enough time. About a hundred years ago, the great American theoretical physicist Josiah Willard Gibbs formulated the first general statement on statistical mechanics. Embodied in his approach was the idea that sufficiently chaotic motions at the microscopic scale make the large-scale behavior of the system at, or even near, equilibrium particularly simple. Full-scale nonequilibrium physics, by contrast, is the study of general complex systems—systems that are drastically changed as they are squeezed, heated, or otherwise moved from their state of repose. In some of these nonequilibrium systems even the notion of temperature is not useful. Although no similarly general theory of nonequilibrium systems exists, recent research has shown that classes of such systems exhibit patterns of common (“universal”) behavior, much as do equilibrium systems. These new theories are again finding simplicity in complexity.

Everyday matter is made of vast numbers of atoms, and its complexity is compounded for materials that are not in thermal equilibrium. The properties of a material depend, then, on its history as well as current conditions. Although materials in thermal equilibrium can display formidable complexity, nonequilibrium systems can behave in ways fundamentally different from equilibrium ones. Nature is filled with nonequilibrium systems: Looking out a window reveals much that is not in thermal equilibrium, including all living things. The glass itself has key properties, such as transparency and strength, that are very different from those of the same material (SiO2) in the crystalline state. Essentially all processes for manufacturing industrial materials involve nonequilibrium phenomena.

A few of the many examples of matter away from equilibrium are described below.

Granular Materials

Granular materials are large conglomerations of distinct macroscopic particles. They are everywhere in our daily lives, from cement to cat food to chalk, and are very important industrially; in the chemical industry approximately one-half of the products and at least three-quarters of the raw materials are in granular form. Despite their seeming simplicity, granular materials are poorly understood. They have some properties in common with solids, liquids, and gases, yet they can behave differently from these familiar forms of matter. They can support weight but they also flow like liquids—sand on a beach is a good example. A granular material can behave in a way reminiscent of gases in that it allows other gases to pass through it, such as in fluidized beds used industrially for catalysis. The wealth of phenomena that granular materials display cannot easily be understood in terms of the properties of the grains of which they are composed. The presence of friction combines with very-short-range intergrain forces to give rise to their distinctive properties.

Glasses: Nonequilibrium Solids

When a liquid is cooled slowly enough, it crystallizes. However, most liquids, if cooled quickly, will form a glass. The glass phase has a disordered structure superficially similar to a liquid, but unlike the liquid phase, its molecules are frozen in place. Glasses have important properties that can be quite different from those of a crystal made of the same molecules—properties, for example, that are vital to their use as optical fibers. Understanding the relationship between the properties of a glass, the atoms of which it is composed, and the means by which it was prepared is key for the development and exploitation of glasses with useful properties. Understanding the nature of the glass transition (whether it is a true phase transition or a mere slowing down) is of fundamental importance and will yield insight into other disordered systems such as neural networks.

Failure Modes: Fracture and Crack Propagation

Understanding the initiation and propagation of cracks is extremely important in contexts ranging from aerospace and architecture to earthquakes. The problem is very challenging because cracking involves the interplay of many length scales. A crack forms in response to a large-scale strain, and yet the crack initiation event itself occurs at very short distances.

In many crystals the stress needed to initiate a crack drops substantially if there is a single individual defect in the crystal lattice. This is one example of the general tendency of nonequilibrium systems to focus energy input from large scales onto small, sometimes even atomic scales. Other examples include turbulence, adhesion, and cavitation. Depending on material properties that are not well understood, a small crack can grow to a long crack, or it can blunt and stop growing. Recent advances in computation and visualization techniques are now providing important new insight into this interplay through numerical simulation.

Foams and Emulsions

Foams and emulsions are mixtures of two different phases, often with a surfactant (for instance, a layer of soap) at the boundary of the phases. A foam can be thought of as a network of bubbles: It is mostly air and contains a relatively small amount of liquid. Foams are used in many applications, from firefighting to cooking to shaving to insulating. The properties of foams are quite different from those of either air or the liquid; in fact, foams are often stiffer than any of their constituents. Emulsions are more nearly equal combinations of two immiscible phases such as oil and water. For example, mayonnaise is an emulsion. The properties of mayonnaise are quite different from those of either the oil or the water of which it is almost entirely composed. With the widespread use of foams and emulsions, it is important to design such materials so as to optimize them for particular applications. Further progress will require a better understanding of the relationship between the properties of foams and emulsions and the properties of their constituents and more knowledge of other factors that determine the behavior of these complex materials.

Colloids

Colloids are formed when small particles are suspended in a liquid. The particles do not dissolve but are small enough for thermal motions to keep them suspended. Colloids are common and important in our lives: paint, milk, and ink are just three examples. In addition, colloids are pervasive in biological systems; blood, for instance, is a colloid.

A colloid combines the ease of using a liquid with the special properties of the solid suspended in it. Although it is often desirable to have a large number of particles in the suspension, particles in highly concentrated suspensions have a tendency to agglomerate and settle out of the suspension.

The interactions between the particles are complex because the motions of the particles and the fluid are coupled. When the colloidal particles themselves are very small, quantum effects become crucial in determining their properties, as in quantum dots or nanocrystals.

New x-ray- and neutron-scattering facilities and techniques are enabling the study of the relation between structure and properties of very complex systems on very small scales. Sophisticated light-scattering techniques enable new insight into the dynamics of flow and fluctuations at micron distances. Improved computational capabilities enable not just theoretical modeling of unprecedented faithfulness, complexity, and size, but also the analysis of images made by video microscopy, yielding new insight into the motion and interactions of colloidal particles.

The combination of advanced experimental probes, powerful computational resources, and new theoretical concepts is leading to a new level of understanding of nonequilibrium materials. This progress will continue and is enabling the development and design of new materials with exotic and useful properties.

TURBULENCE IN FLUIDS, PLASMAS, AND GASES

The intricate swirls of the flow of a stream or the bubbling surface of a pan of boiling water are familiar examples of turbulence, a challenging problem in modern physics. Despite their familiarity, many aspects of these phenomena remain unexplained. Turbulence is not limited to such everyday examples; it is present in many terrestrial and extraterrestrial systems, from the disks of matter spiraling into black holes to flow around turbine components and heart valves. Turbulence can occur in all kinds of matter, for example, molten rock, liquids, plasmas, and gases.

The understanding and control of turbulence can also have significant economic impact. For instance, the elimination or reduction of turbulence in flow around cars reduces fuel consumption, and the elimination of turbulence from fusion plasmas reduces the cost of future reactors (see sidebar “Suppressing Turbulence to Improve Fusion”). Perhaps the most dramatic phenomena involving turbulence are those with catastrophic dynamics, such as tornadoes in the atmosphere, substorms in the magnetosphere, and solar flares in the solar corona.

Although turbulence is everywhere in nature, it is hard to quantify and predict. There are four important reasons for this. First, turbulence is a complex interplay between order and chaos. For example, coherent eddies in a turbulent stream often persist for surprisingly long times. Second, turbu-

Page 42

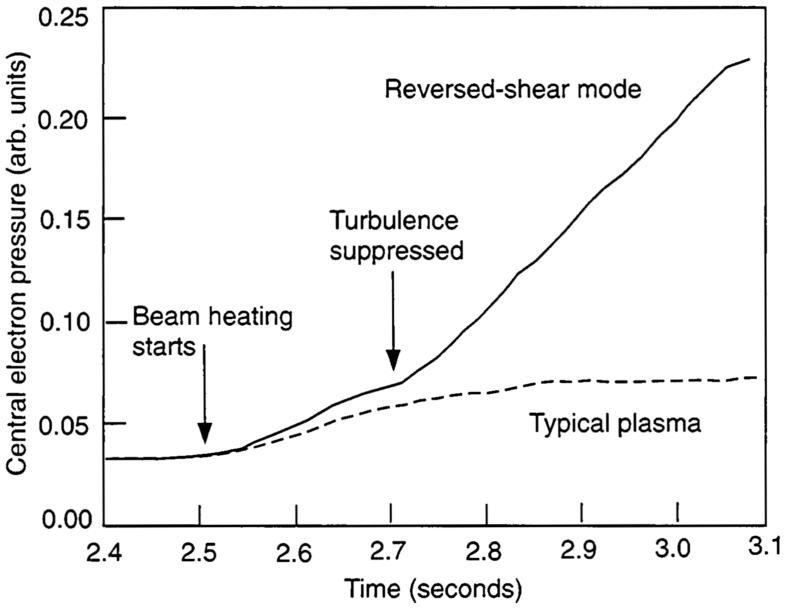

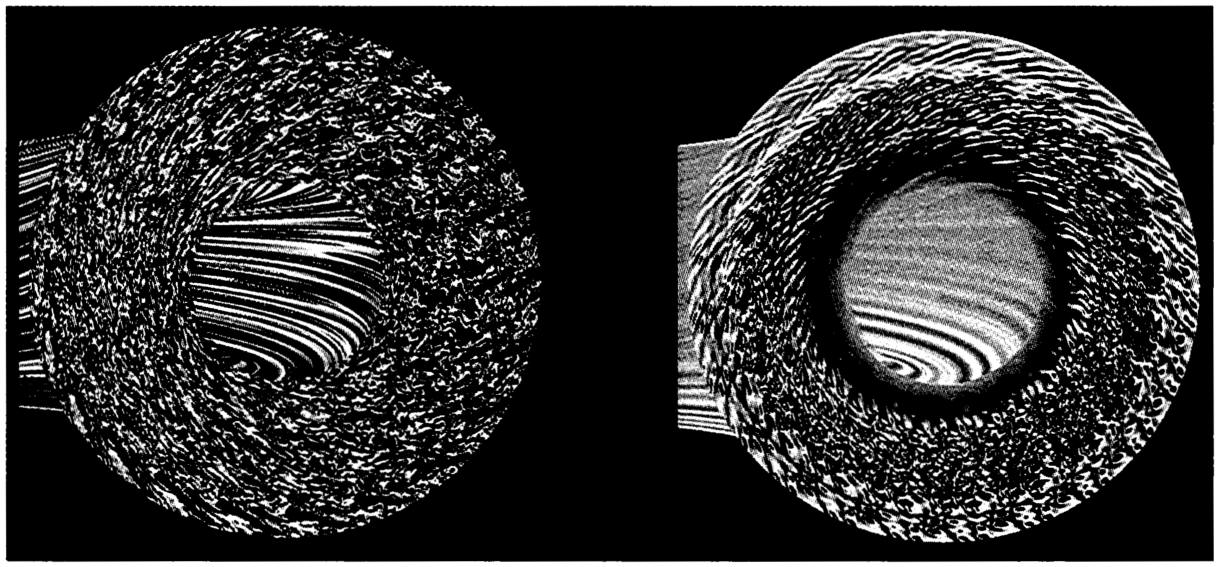

SUPPRESSING TURBULENCE TO IMPROVE FUSION

To produce a practical fusion energy source on Earth, plasmas with temperatures hotter than the core of the Sun must be confined efficiently in a magnetic trap. In the 1990s, new methods of plugging the turbulent holes in these magnetic cages were discovered. The figure below shows two computer simulations. The leftmost simulation shows turbulent pressure fluctuations in a cross section of a tokamak—a popular type of magnetic trap. These turbulent eddies are like small whirlpools, carrying high-temperature plasma from the center toward the lowertemperature edge. The rightmost simulation shows that adding sheared rotational flows can suppress the turbulence by stretching and shearing apart the eddies, analogous to keeping honey on a fork by twirling the fork. Several experimental fusion devices have demonstrated turbulence suppression by sheared rotational flows. This graph of data from the Tokamak Fusion Test Reactor at the Princeton Plasma Physics Laboratory, which achieved 10 MW of fusion power in 1994, shows how suppressing the turbulence can significantly boost the central plasma pressure and thus the fusion reaction rate.

~ enlarge ~

~ enlarge ~ |

lence often takes place over a vast range of distances. Third, the traditional mathematical techniques of physics are inappropriate for the highly nonlinear equations of turbulence. And fourth, turbulence is hard to measure experimentally.

Despite these formidable difficulties, research over the past two decades has made much progress on all four fronts: For example, ordered structures have been identified within chaotic turbulent systems. Such structures are often very specific to a given system. Turbulence in the solar corona, for example, appears to form extremely thin sheets of electric current, which may heat the solar corona. In fusion devices, plasma turbulence has been found theoretically and experimentally to generate large-scale sheared flows, which suppress turbulence and decrease thermal losses from the plasma. The patchy, intermittent nature of turbulence so evident in flowing streams is being understood in terms of fractal models that quantify patchiness. The universe itself is a turbulent medium; much of modern cosmological simulation seeks to understand how the observed structure arises from fluctuations in the early universe.

The recognition that turbulent activity takes place over a range of vastly different length scales is ancient. Leonardo da Vinci's drawings of turbulence in a stream show small eddies within big ones. In theories of fluid turbulence, the passing of energy from large to small scales explains how small scales arise when turbulence is excited by large-scale stirring, like a teaspoon stirring coffee in a cup. Small-scale activity can also arise from the formation of isolated singular structures: shock waves, vortex sheets, or current sheets.

The difficulty of measuring turbulence is due partly to the enormous range of length scales and the need to make measurements without disturbing the system. However, the structure and dynamics of many turbulent systems have been measured with greatly improved accuracy using new diagnostic tools. Light scattering is being used to probe turbulence in gases and liquids. Plasma turbulence is being measured by scattering microwaves off the turbulence and by measuring light emission from probing particles. Soft x-ray imaging of the Sun by satellites is revealing detailed structure in the corona. Measurements of the seismic oscillations of the Sun are probing its turbulent convection zone. Advances in computing hardware and software have made it possible to acquire, process, and visualize vast turbulence data sets (see sidebar “Earth's Dynamo”).

HIGH-ENERGY-DENSITY SYSTEMS

When stars explode, a burst of light suddenly appears in the sky. In a matter of minutes, the star has collapsed and released huge quantities of energy. These supernova explosions are examples of high-energy-density phenomena, an immense quantity of energy confined to a small space. Our

Page 44

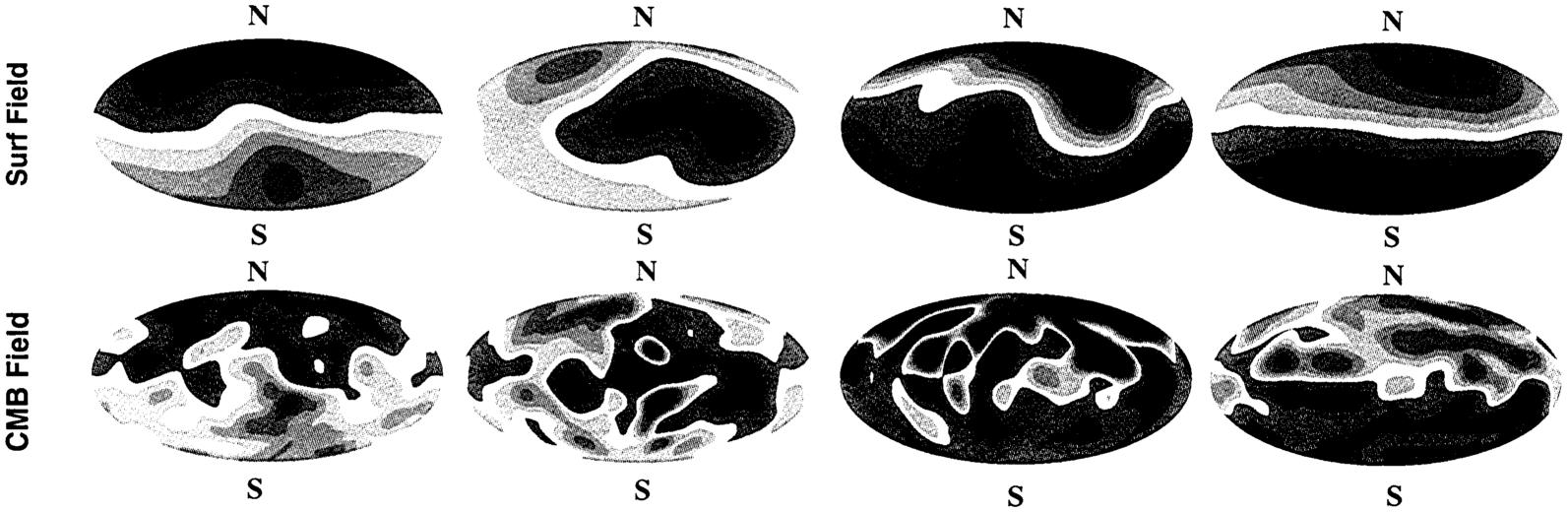

EARTH'S DYNAMO

Earth's magnetic field reverses direction on average every 200,000 years. The series of snapshots of a reversal shown below is taken from a computer simulation in which such reversals occur spontaneously. The snapshots are 300 years apart during one of two reversals in the 300,000 years simulated. In the top four pictures the red denotes outward field and the blue inward field on Earth's surface. The bottom four show the same thing beneath the crust on top of the liquid core. Through computer simulation this complex phenomenon is being understood and quantified.

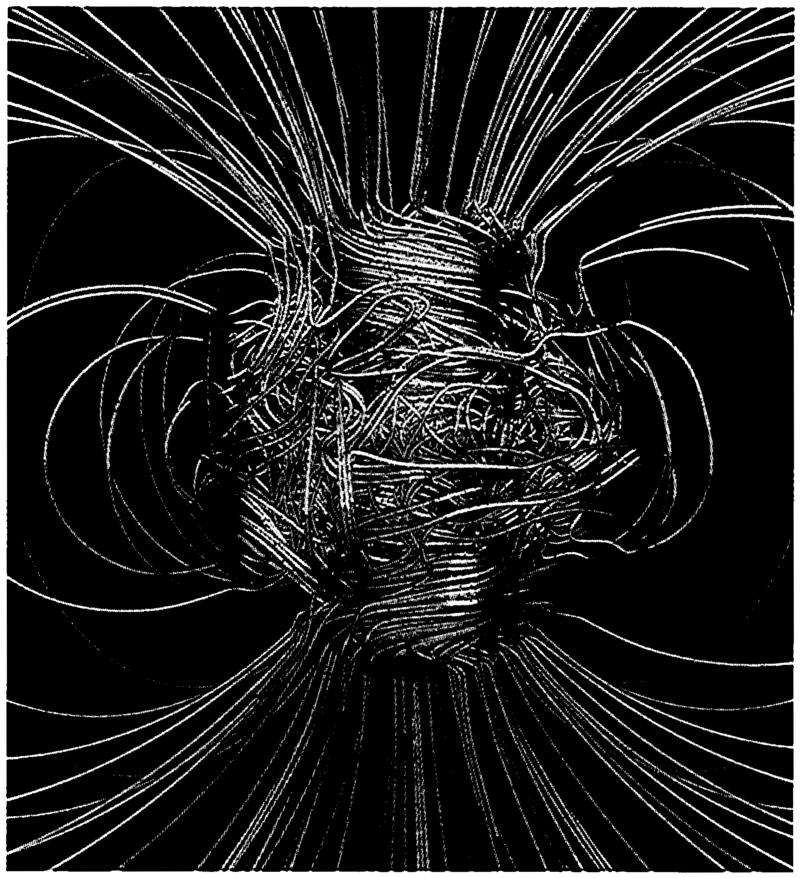

~ enlarge ~ Motions of Earth's liquid core drive electrical currents that form the magnetic field. This field pokes through Earth's surface and orients compasses. The picture at the right shows the magnetic field lines in a computer simulation of this process. Where the line is blue it is pointing inward and where it is gold it is pointing outward. The picture is two Earth diameters across.

~ enlarge ~ |

Page 45

experience with high energy densities has been limited; until recently, it was mainly the concern of astrophysicists and nuclear weapons experts. However, the advent of high-intensity lasers, pulsed power sources, and intense particle beams has opened up this area of physics to a larger community.

There are several major areas of research in high-energy-density physics. First, high-energy-density regimes relevant to astrophysics and cosmology are being reproduced and studied in the laboratory. Second, researchers are inventing new sources of x rays, gamma rays, and high-energy particles for applications in industry and medicine. Third, an understanding of high-energy-density physics may provide a route to economical fusion energy. And it is worth noting that the very highest energy densities in the universe since 1 µs after the Big Bang are being created in the Relativistic Heavy Ion Collider (RHIC) at Brookhaven National Laboratory.

Since 1980, the intensity of lasers has been increased by a factor of 10,000. The interaction of such intense lasers with matter produces highly relativistic electrons and a host of nonlinear plasma effects. For example, a laser beam can carve out a channel in a plasma, keeping itself focused over long distances. Other nonlinear effects are being harnessed to produce novel accelerators and radiation sources. These high-intensity lasers are also used to produce shocks and hydrodynamic instabilities similar to those expected in supernovae and other astrophysical situations.

High-energy-density physics often requires large facilities. The National Ignition Facility (NIF) at the Lawrence Livermore National Laboratory will study the compression of tiny pellets containing fusion fuel (deuterium and tritium) with a high-energy laser. These experiments are important for fusion energy and astrophysics. During compression, which lasts a few billionths of a second, the properties of highly compressed hydrogen are measured. The information gained is directly relevant to the behavior of the hydrogen in the center of stars. The NIF will also study the interaction of intense x rays and matter and the subsequent shock-wave generation. These topics are also being studied at the Sandia National Laboratories using x rays produced by dense plasma discharges. A commercial source of fusion energy by the compression of pellets will require developing an efficient driver to replace the NIF laser.

PHYSICS IN BIOLOGY

The intersection between physics and biology has blossomed in recent years. In many areas of biology, function depends so directly on physical processes that these systems may be fruitfully approached from a physical viewpoint. For example, the brain sends electrical signals (i.e., nerve impulses), and these signaling systems are properly viewed as a standard physical system (that happens to be alive). In this section, the committee describes several important areas at the interface of physics and biology and discusses some future directions.

Structural Biology

Modern structural biology, that branch of biophysics responsible for determining the structure of biological molecules down to the position of the individual constituent atoms, had its origins in the work on x-ray crystallography by von Laue (Nobel Prize in 1914) and W.H. Bragg and W.L. Bragg (Nobel Prize in 1915). This was followed by the discovery of nuclear magnetic resonance by Rabi and by Purcell and Bloch, resulting in Nobel Prizes in 1944 and 1952. With x-ray crystallography and modern computers, the structure of any molecule that forms crystals can be determined down to the position of all of the individual atoms. Through technological advances depending on microelectronics and computers, it is now possible to determine the detailed structure of many protein molecules in their natural state. The structures of hundreds of proteins are now known, and biophysicists are learning how these proteins do their jobs. For many enzymes, the structural rearrangements and specific chemical interactions that underlie the enzymatic activity are now known, and how molecular motors move is becoming understood.

The main challenges that remain are determining how information is transmitted from one region of a molecule to another—from a binding site on one surface of a protein to an enzymatic active site on the opposite surface, for example—and how the specific sequence of amino acids determines the final structure of the protein. Proteins fold consistently and rapidly, but how they search out their proper place in a vast space of possible structures is not understood. Understanding how proteins assume their correct shape remains a central problem in biophysics (see sidebar “Protein Folding”).

Biomechanics

Cells depend on molecular motors for a variety of functions, such as cell division, material transport, and motion of all or part of the organism (like the beating of the heart or the movement of a limb). At mid-century it was learned that muscles use specific proteins to generate force, but the

Page 47

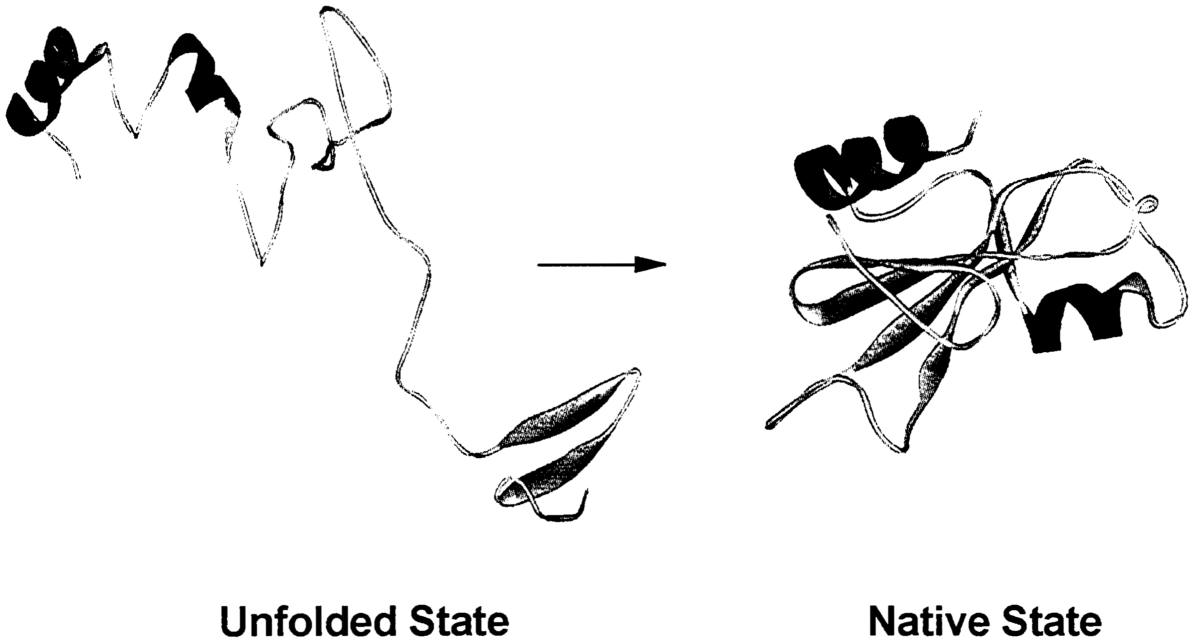

PROTEIN FOLDING

Proteins are giant molecules that play key roles as diverse as photosynthetic centers in green plants, light receptors in the eye, oxygen transporters in blood, motor molecules in muscle, ion valves in nerve conduction, and chemical catalysts (enzymes). Their architecture is derived from information in the genes, but this information gives us the rather disappointing one-dimensional chain molecule seen at left in the figure. Only when the chain folds into its compact “native state” does its inherent structural motif appear, which enables guessing the protein's function or targeting it with a drug. How can this folding process be understood? Experimentation gives only hints, so computation must play a key role. In the computer it is possible to calculate the trajectories of the constituent atoms of the protein molecule and thus simulate the folding process. This computation of the forces acting on an atom due to other protein atoms and to the atoms in the surrounding water must be repeated many times on the fast time scale of the twinkling atomic motions, which is 10−15 seconds (1 fs). Since even the fastest-folding proteins take close to 100 µs (10−4 s) to fold, all the forces must be computed as many as 1011 times. The computational power necessary to do this is enormous. Even for a small protein, a single calculation of all the forces takes 1010 computer operations, so to do this 1011 times in, say, 10 days requires on the order of 1015 computer operations per second (1 petaflop). This is 50 times the entire computational power of the world's population of supercomputers. Even though the power of currently delivered supercomputers is little more than 1 teraflop (1012 operations per second), computers with petaflop power for tasks such as protein folding are anticipated in the near future by exploiting the latest silicon technology together with new ideas in computer design. 
For example, IBM has a project named Blue Gene, which is dedicated to biological objectives such as protein folding and aims at building a petaflop computer within 5 years.

~ enlarge ~ |

Page 48

multitude of motor types now known was only dimly suspected. Large families of motors have now been characterized, and the sequence of steps through which they exert force has been largely elucidated. In recent years researchers have measured the forces generated by a single motor cycle and the size of the elementary mechanical step. This research has required the invention of new kinds of equipment, such as optical tweezers, that permit precise manipulation of single bacterial cells as well as novel techniques for measuring movements that are too small to see with an ordinary microscope.

The challenge for the early part of the 21st century will be to develop a theory that relates force and motion generation with the detailed structure of the various types of motor proteins. The end result will be an understanding of how the heart pumps blood and how muscles contract.
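The arithmetic behind the Protein Folding sidebar's petaflop estimate can be checked in a few lines. The sketch below is only a back-of-the-envelope calculation using the sidebar's own round numbers (a 1 fs time step, a 100 µs folding time, 10¹⁰ operations per force evaluation, and a 10-day run); the function and variable names are illustrative and do not come from any simulation package.

```python
# Back-of-the-envelope check of the sidebar's estimate.
# All inputs are the round numbers quoted in the sidebar; names are illustrative.

SECONDS_PER_DAY = 86_400


def folding_compute_estimate(
    time_step_s=1e-15,        # time scale of atomic motions (1 fs)
    folding_time_s=1e-4,      # fastest-folding proteins (about 100 microseconds)
    ops_per_force_eval=1e10,  # operations for one evaluation of all forces
    wall_clock_days=10,       # how long we are willing to wait
):
    """Return (number of time steps, total operations, operations per second)."""
    steps = folding_time_s / time_step_s
    total_ops = steps * ops_per_force_eval
    ops_per_second = total_ops / (wall_clock_days * SECONDS_PER_DAY)
    return steps, total_ops, ops_per_second


steps, total_ops, rate = folding_compute_estimate()
print(f"time steps:            {steps:.1e}")
print(f"total operations:      {total_ops:.1e}")
print(f"operations per second: {rate:.1e}")
```

With these inputs the required rate comes out slightly above 10¹⁵ operations per second, consistent with the sidebar's "on the order of" one petaflop. Doubling the allowed run time or halving the cost of a force evaluation halves the requirement, which is why the figure should be read as an order of magnitude rather than a precise target.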

Photobiology

Photosynthesis in plants and vision in animals are examples of photobiology, in which the energy associated with the absorption of light can be stored for later use (photosynthesis) or turned into a signal that can be sent to other places (photoreception). The parts of molecules responsible for absorbing light of particular colors, called chromophores, have long been known, as have the proteins responsible for the succeeding steps in the use of the absorbed energy. In recent years biophysicists have identified a sequence of well-defined stages these molecules pass through in using the light energy, some lasting only a billionth of a second. Biophysicists and chemists have learned much about the structure of these proteins, but not yet at the level of the positions of all atoms, except for one specialized bacterial protein, the bacterial photosynthetic reaction center (the work of Deisenhofer, Huber, and Michel was rewarded with the Nobel Prize in 1988).

In photobiology, the challenge is to determine the position of all atoms in the proteins that process energy from absorbed light and to learn how this energy is used by the protein. This will involve explaining, in structural terms, the identity and functional role of all of the intermediate stages molecules pass through as they do their jobs.

Ion Channels

The surface membranes that define the boundaries of all cells also prevent sodium, potassium, and calcium ions, essential for the life processes of a cell, from entering or leaving the cell. To allow these ions through in a controlled manner, a cell's membrane is provided with specialized proteins known as ion channels. These channels are essential to the function of all cell types, but are particularly important for excitable tissues such as heart, muscle, and brain. All cells use metabolic energy (derived from the food we eat) to maintain sodium, potassium, and calcium ions at different concentrations inside and outside the cell. The special proteins that maintain these ionic concentration differences are called pumps. As a result of pump action, the potassium ion concentration is high inside cells and the sodium ion concentration is low: Potassium ions are inside the cell and sodium ions are outside. Various ion channels separately regulate the inflow and outflow of the different kinds of ions (letting sodium come in or permitting potassium to leave the cell). These channels working in concert produce electrical signals, such as the nerve impulses used for transmitting information rapidly from one place in the brain to another or from the brain to muscles.

Ion channels exhibit two essential properties, gating and permeation. “Gating” refers to the process by which the channels control the flow of ions across the cell membrane. “Permeation” refers to the physical mechanisms involved in the movement of ions through a submicroscopic trough in the middle of the channel protein: A channel opens its gate to let ions move in and out of the cell. Permeation is a passive process (that is, ions move according to their concentration and voltage gradients) but a rather complicated one in that channels exhibit great specificity for the ions permitted to permeate. Some channel types will allow the passage of sodium ions but exclude potassium and calcium ions, whereas others allow potassium or calcium ions to pass. The ability of an ion channel to determine the species of ion that can pass through is known as “selectivity.”
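To make "ions move according to their concentration and voltage gradients" a little more concrete, the sketch below uses the standard textbook Nernst relation, which is not given in this chapter, to compute the membrane voltage at which the concentration gradient of a single ion species is exactly balanced by the electrical gradient. The concentrations are assumed, order-of-magnitude values of the kind quoted for mammalian cells, chosen only for illustration; the function name is hypothetical.

```python
import math

# Physical constants (SI units).
GAS_CONSTANT = 8.314462618      # J / (mol K)
FARADAY_CONSTANT = 96485.33212  # C / mol


def nernst_potential_mv(conc_out_mm, conc_in_mm, valence, temperature_k=310.0):
    """Equilibrium (Nernst) potential in millivolts, inside relative to outside.

    E = (R T / (z F)) * ln([ion]_out / [ion]_in)
    """
    thermal_voltage = GAS_CONSTANT * temperature_k / (valence * FARADAY_CONSTANT)
    return 1000.0 * thermal_voltage * math.log(conc_out_mm / conc_in_mm)


# ASSUMED illustrative concentrations in millimolar: (outside, inside, valence).
# These are typical textbook magnitudes, not values taken from this chapter.
example_ions = {
    "potassium (K+)": (5.0, 140.0, 1),
    "sodium (Na+)": (145.0, 12.0, 1),
}

for name, (outside, inside, z) in example_ions.items():
    print(f"{name}: {nernst_potential_mv(outside, inside, z):+.0f} mV")
```

Under these assumed concentrations, potassium (high inside) has a negative equilibrium potential and sodium (high outside) a positive one. That opposite sign is why opening sodium-selective or potassium-selective channels pushes the membrane voltage in opposite directions, which is the raw material for a nerve impulse.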

At mid-century, it was realized that specific ion fluxes were responsible for the electrical activity of the nervous system. Soon, Hodgkin and Huxley provided a quantitative theory that described the ionic currents responsible for the nerve impulse (in 1963 they received the Nobel Prize for this work), but the nature of the hypothetical channels responsible remained mysterious, as did the physical basis for gating and selectivity.

In the past half-century, ion channels have progressed from being hypothetical entities to well-described families of proteins. The currents that flow through single copies of these proteins are routinely recorded (in 1991, Neher and Sakmann received the Nobel Prize for this achievement), and such studies of individual molecular properties, combined with modern molecular biological methods, have provided a wealth of information about which parts of the protein are responsible for which properties. Very recently, the first crystal structure of the potassium channel was solved, with its precise structure having been determined down to the positions of its constituent atoms.

The physics underlying gating, permeation, and selectivity is well understood, and established theories are available for parts of these processes. The main challenge for channel biophysicists now is to connect the physical structure of ion channels to their function. A specific physical theory is needed that explains how, given the structure of the protein, selectivity, permeation, and gating arise. Because the channels are rather complicated, providing a complete explanation of how channels work will be difficult and must await more detailed information about the structure of more types of channels. This is the goal of channel biophysics in the early part of the 21st century. The end result will be an understanding, in atomic detail, of how neurons produce the nerve impulses that carry information in our own very complex computer, the brain.

Theoretical Biology and Bioinformatics

Theoretical approaches developed in physics—for example, those describing abrupt changes in states of matter (phase transitions)—are helping to illuminate the workings of biological systems. Three areas of biology in which this approach of physics is being applied fruitfully to improve understanding are computation by the brain, complex systems in which patterns arise from the cooperation of many small component parts, and bioinformatics. Although neurobiologists have made dramatic progress in understanding the organization of the brain and its function, further advances will likely require a more central role for theory. To date, most progress has been made by guessing, based on experiments, how parts of the brain work. But as the more complicated and abstract functions of the brain are studied, the chances of proceeding successfully without more theory diminish rapidly. Because physics offers examples of advanced and successful uses of theory, the methods used in physics may be most appropriate for understanding brain structure and function.

The life of all cells depends on interactions among the genes and enzymes that form vastly complicated genetic and biochemical networks. As biological knowledge increases, particularly as the entire set of genes that constitutes the blueprint for organisms (including humans) becomes known, the most important problems will involve treating these complex networks. Modern biology has provided means to measure experimentally the action of many (in some cases all) of an organism's genes in response to environmental changes, and new theories will be required for interpreting such experiments.

One of biology's most fundamental problems is explaining how organisms develop: How do the 10⁵ genes that specify a human generate the correct organs with the right shape and properties? This question of pattern specification by human genes has strong similarities with many questions asked by physicists, and physics offers good models for approaching it and similar questions in biological systems.

The information explosion in biology produces vastly complex problems of data management and the extraction of useful information from giant databases. Methods from statistical physics have been helpful in approaching these problems and should become even more important as the volume of data increases.

EARTH AND ITS SURROUNDINGS

As the impact of humans on the environment increases, there is a greater need to understand Earth, its oceans and atmosphere, and the space around it. The economic and societal consequences of natural disasters and environmental change have been greatly magnified by the complexity of modern life. For this reason, the quest for predictive capability in environmental science has become a huge multidisciplinary scientific effort, in which physics plays an important role. In this section, the committee discusses three important areas—earthquake dynamics, coastal upwellings, and magnetic substorms—where new ideas are leading to dramatic progress.

Earthquakes

The 1994 Northridge earthquake (magnitude 6.8) in southern California was the single most expensive natural disaster in the history of the United States. Losses of $25 billion are estimated for damaged structures and their contents alone. Earthquakes are becoming more expensive with each year, not because they are happening more often but because of the increased population density in our cities. An earthquake on the Wasatch fault in Utah would have had little effect on the agrarian society in 1901; in 2001, a bustling Salt Lake City sprawls along the same fault scarp that raised the mountains that overlook it.

Earthquakes happen when the stress on a fault becomes large enough to cause it to slip. Releasing the stress on one fault often increases the stress on another fault, causing it to slip and creating an avalanche effect. Recent studies of computer models of coupled fault systems under stress have yielded important insights. A large class of these models produces a behavior called self-organized criticality, in which the model's fault system sits close to a point of only marginal stability. The stress is then released in avalanche events. A remarkably large number of models yield the same statistical behavior despite differences in microscopic dynamics. These models correctly predict that the frequency of earthquakes in an energy band is proportional to the energy raised to a power. This, in turn, allows the accurate prediction of seismic hazard probabilities.
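The avalanche picture can be illustrated with a toy model. The sketch below is not one of the coupled-fault models referred to above; it is the generic "sandpile" cellular automaton often used to demonstrate self-organized criticality, offered here only as an assumption-laden illustration. The grid size, the threshold of 4, and all function names are hypothetical choices. Stress units are added one at a time at random sites; a site that reaches the threshold "slips," passing one unit to each neighbor, which may trigger further slips. Counting avalanches in size bands that double in width gives a rough view of how event frequency falls off with event size.

```python
import random

THRESHOLD = 4  # a site "slips" (topples) once it holds this many stress units


def topple_from(grid, row, col):
    """Relax the grid starting at (row, col); return (topplings, units_lost)."""
    n = len(grid)
    topplings = 0
    lost = 0
    stack = [(row, col)]
    while stack:
        r, c = stack.pop()
        if grid[r][c] < THRESHOLD:
            continue  # already relaxed by an earlier toppling
        grid[r][c] -= THRESHOLD
        topplings += 1
        if grid[r][c] >= THRESHOLD:
            stack.append((r, c))
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < n and 0 <= nc < n:
                grid[nr][nc] += 1  # pass one unit of stress to a neighbor
                if grid[nr][nc] >= THRESHOLD:
                    stack.append((nr, nc))
            else:
                lost += 1  # stress leaves through the open boundary
    return topplings, lost


def simulate(n=15, drops=5000, seed=0):
    """Load the grid one unit at a time and record every avalanche size."""
    rng = random.Random(seed)
    grid = [[0] * n for _ in range(n)]
    avalanche_sizes = []
    total_lost = 0
    for _ in range(drops):
        r, c = rng.randrange(n), rng.randrange(n)
        grid[r][c] += 1  # slow, uniform loading
        if grid[r][c] >= THRESHOLD:
            size, lost = topple_from(grid, r, c)
            avalanche_sizes.append(size)
            total_lost += lost
    return grid, avalanche_sizes, total_lost


def log_binned_counts(sizes):
    """Count avalanches per band; band k holds sizes in [2**k, 2**(k+1))."""
    counts = {}
    for s in sizes:
        band = s.bit_length() - 1
        counts[band] = counts.get(band, 0) + 1
    return counts


if __name__ == "__main__":
    _, sizes, _ = simulate()
    for band, count in sorted(log_binned_counts(sizes).items()):
        print(f"sizes {2**band}-{2**(band + 1) - 1}: {count} avalanches")
```

No tuning is needed to bring the grid to its marginally stable state: the slow loading and the avalanches themselves keep it there. A grid this small truncates the largest events, so any power-law trend in the printed counts should be read qualitatively.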

Studies of natural earthquakes provide the opportunity to observe active faults and to understand how they work. The U.S. Department of Defense's NAVSTAR Global Positioning System (GPS) has provided a relatively inexpensive and precise method for measuring the movement of the ground around active faults. An exciting new development in recent years has been the use of synthetic aperture radar (SAR) to produce images (SAR interferograms) of the displacements associated with earthquake rupture.
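As a rough illustration of how an interferogram encodes ground motion: in repeat-pass SAR interferometry, a phase change between two acquisitions corresponds to a change in the two-way path length, so a line-of-sight displacement d is related to the phase change Δφ by d = λΔφ/(4π), and one full fringe (2π) corresponds to half a wavelength. The sketch below applies that relation. The function names are hypothetical, the 5.66 cm wavelength is an assumed C-band value chosen only for the example, and sign conventions (toward or away from the satellite) vary between processing systems, so only magnitudes are meaningful here.

```python
import math


def los_displacement_m(phase_rad, wavelength_m):
    """Line-of-sight displacement for a given interferometric phase change.

    The factor 4*pi (rather than 2*pi) reflects the two-way radar path.
    """
    return wavelength_m * phase_rad / (4.0 * math.pi)


def fringes_to_displacement_m(n_fringes, wavelength_m):
    """Displacement represented by a number of full 2*pi fringes."""
    return los_displacement_m(2.0 * math.pi * n_fringes, wavelength_m)


if __name__ == "__main__":
    wavelength = 0.0566  # meters; assumed C-band value for illustration
    for fringes in (1, 5, 10):
        d_cm = 100.0 * fringes_to_displacement_m(fringes, wavelength)
        print(f"{fringes} fringe(s) ~ {d_cm:.2f} cm along the line of sight")
```

Because each fringe represents only a few centimeters of motion at this wavelength, counting fringes across an image gives a dense map of displacement that complements point measurements from GPS stations.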

The largest earthquakes on Earth occur within the boundary zones that separate the global system of moving tectonic plates. On most continents, plate boundaries are broad zones where Earth is deformed. Several active faults of varying orientations typically absorb the motion of any given region, raising mountains and opening basins as they move. Modeling suggests that the lower continental crust flows, slowly deforming its shape, while sandwiched between a brittle, earthquake-prone upper crust and a stiff, yet still moving, mantle. This ductile flow of the lower crust is thought to diffuse stress from the mantle portion of the tectonic plate to the active faults of the upper crust. The physical factors that govern this stress diffusion are still poorly understood (see sidebar “Pinatubo and the Challenge of Eruption Prediction”).

Coastal Upwellings

Environmental phenomena involve the dynamic interaction between biological, chemical, and physical systems. The coastal upwellings off the California coast provide a powerful example of this interaction. Upwellings are cold jets of nutrient-rich water that rise to the surface at western-facing coasts. These sites yield a disproportionately large amount of plankton and deposit large amounts of organic carbon into the sediments. Instant satellite snapshots of quantities such as the ocean temperature and the chlorophyll content (indicating biological species) provide important global data for

Page 53

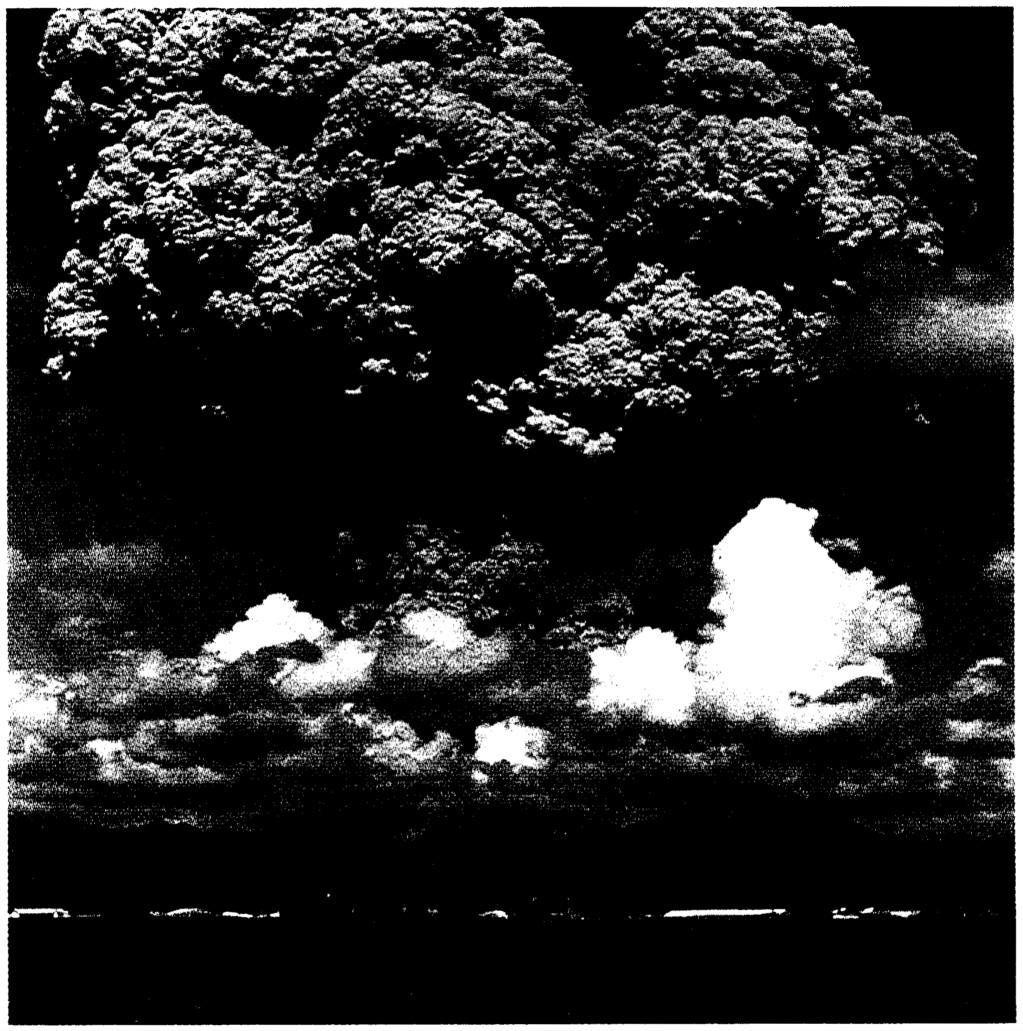

PINATUBO AND THE CHALLENGE OF ERUPTION PREDICTION

Volcanoes consist of a complex fluid (silicate melt, crystals, and expansive volatile phases), a fractured “pressure vessel” of crustal rock, and a conduit leading to a vent at Earth's surface. Historically, eruption forecasting relied heavily on empirical recognition of familiar precursors. Sometimes, precursory changes escalate exponentially as the pressure vessel fails and magma rises. But more often than not, progress toward an eruption is halting, over months or years, and so variable that forecast windows are wide and confidence is low. In unpopulated areas, volcanologists can refine forecasts as often as they like without the need to be correct all the time. But the fertile land around volcanoes draws large populations, and most episodes of volcanic unrest challenge volcanologists to be specific and right the first time. Evacuations are costly and unpopular, and false alarms breed cynicism that may preclude a second evacuation. In 1991, the need to be right at the Pinatubo volcano in the Philippines was intense, yet the forecast was a product of hastily gathered, sparse data, various working hypotheses, and crossed fingers. Fortunately for evacuees and volcanologists alike, the volcano behaved as forecast. As populations and expectations rise, uncertainties must be trimmed. Today's eruption forecasts use pattern recognition and logical, sometimes semiquantitative analysis of the processes that underlie the unrest, growing more precise and accurate. Tomorrow's sensors may reveal whole new parameters, time scales, and spatial scales of volcanic behavior, and tomorrow's tools for pattern recognition will mine a database of episodes of unrest that is now under development. And, increasingly, forecasts will use numerical modeling to narrow the explanations and projections of unrest. Volcanoes are one of nature's many complex systems, defying us to integrate physics, chemistry, and geology into ever-more-precise forecasts. 
Millions of lives and billions of dollars are at stake.

~ enlarge ~ |

Page 54

models that have to describe the physical (turbulent) mixing of the upwelling water with the coastal surface water, the evolution of the nutrients and other chemicals, and the population dynamics of the various biological species. Since the biology is too detailed to model entirely, models must abstract the important features. The rates of many of the processes are essentially determined by the mixing and spreading of the jet, which involves detailed fluid mechanics. Research groups have begun to understand the yearly variations and the sensitivities of these coastal ecosystems.

Magnetic Substorms

The solar wind is a stream of hot plasma originating in the fiery corona of the Sun and blowing out past the planets. This wind stretches Earth's magnetic field into a long tail pointing away from the Sun. Parts of the magnetic field in the tail change direction sporadically, connect to other parts, and spring back toward Earth. This energizes the electrons and produces spectacular auroras (the Northern Lights is one example). These magnetic substorms, as they are called, can severely damage communication satellites. Measurements of the fields and particles at many places in the magnetosphere have begun to reveal the intricate dynamics of a substorm. The process at the heart of a substorm, sporadic change in Earth's magnetic field, is a long-standing problem in plasma physics. Recent advances in understanding this phenomenon have come from laboratory experiments, in situ observation, computer simulation, and theory. It appears that turbulent motion of the electrons facilitates the rapid changes in the magnetic field—but just how this occurs is not yet fully understood.