6

Technology Integration

It will take more than technology development to field operationally capable, supportable, and affordable unmanned ground vehicles (UGVs) for the Army. The Army will also need to pursue a system engineering approach to component and subsystem design and integration. Key considerations for integration of UGV technologies are life-cycle support, software engineering and computational hardware, assessment methodology, and modeling and simulation.

System engineering is defined as an integrated design approach, from requirements definition, through design and test, to life-cycle support, that optimizes the synergistic performance of a system, or system of systems, so that assigned tasks can be accomplished in the most efficient and effective manner possible. Each component of each system, and each system within a system of systems, is designed to function as part of a single entity (the platform) and network (network-centric environment). Overall performance of a system is enhanced with the inclusion of manpower, reliability, maintainability, supportability, preplanned product improvement, and safety considerations.

A successful UGV technology integration strategy must seek to meaningfully evolve the overall UGV architectures (technical, systems, and operational) within the Future Combat Systems (FCS) architectures, the UGV interfaces with humans and organizations, and UGV relations with external environments (e.g., maintenance and supply). Without such a strategy, it will be difficult, if not impossible, to integrate and transition UGV technologies.

STATUS OF UNMANNED GROUND VEHICLE SYSTEM DEVELOPMENT

As the committee reviewed the UGV activities in the Army and elsewhere, it was apparent that the projects and programs are pursuing independent objectives and are not adequately coordinated to lead to higher-level developments. Top-level planning for UGV systems is not happening (at least, the committee was not made privy to any top-level planning) and the efforts are technology driven, rather than requirement or system driven.

The history of Army and Defense Advanced Research Projects Agency (DARPA) UGV programs has been one of developing new UGV platforms in almost every program, which consumes a sizable fraction of the money and time available to each program without leaving substantial legacy to following programs, in terms of reusable development platforms. The goal of advancing perception technology, for example, would be furthered more effectively by making reasonably standardized, low-cost, low-maintenance test-bed vehicles available. The DARPA Perceptor program has taken an approach along these lines, but even that program required about $1 million and 1 year per vehicle for design, fabrication, software development, and integration to produce test-bed vehicle systems ready for new perception research, even though the program took commercial all-terrain vehicles (ATVs) costing under $10,000 each as the point of departure. The Demo III program spent a major share of its money on adapting and outfitting the experimental unmanned vehicle (XUV) platform. As the FCS architecture slowly evolves, the multiple efforts to develop UGV capabilities will require an effective degree of overall or enterprise management to make UGVs a viable part of the FCS and Objective Force concepts. Thus, there is an immediate need for a disciplined system engineering approach to determine UGV performance and platform design. This, in turn, will ensure an adequate flow-down of functional performance design requirements and a subsequent definition of technology requirements.

Missing Ingredients

Activity to define a UGV architecture that would provide an effective and economic path to modernization for technology insertion over time is missing from the Army UGV program. System structures that ensure operational robustness and economic manufacturability are also absent. As implied above, the important role and fit of a UGV platform into the higher-level FCS system of systems structure (operationally, functionally, and synergistically) is missing.

Engineering process discipline can be a major force to ensure that the Army does the right thing as well as does the thing right. The committee applied such discipline to the conduct of its study by postulating UGV scenarios and related example UGV systems to guide its technology assessments. Regardless of whether the examples coincide with Army requirements for FCS, they provide a rational basis for identifying needed elements in the Army’s program.

From a technical viewpoint, important system engineering and design processes need attention. Besides a system engineering approach, technology integration considerations include designation of a lead for system engineering and life-cycle cost management, software engineering, development of an effective assessment methodology, and use of modeling and simulation assessment tools. These considerations are discussed further in this chapter.

To optimize system engineering efforts, the Army should consider:

- Assigning all technology integration and system engineering responsibilities to a single person (office) with resources and ability to influence changes in design and development
- Identifying, developing, and integrating technologies early in UGV design that can reduce life-cycle support requirements
- Developing an effective and efficient software engineering effort
- Developing an integrated assessment methodology that includes experimental analysis throughout the design and development of the UGV, as well as early user evaluation and test of UGV components and systems
- Developing metrics to support the above assessment, and
- Utilizing modeling and simulation, where appropriate, to support experimentation and test.

Integration and Advocacy

In the absence of clear requirements to drive UGV development efforts, the Army UGV program must be one of developing capabilities, but without focus and advocacy a capabilities-driven process is likely to suffer from diffusion and incoherence. Although the existing technology demonstration and science and technology objective (STO) programs have explicit objectives (see Chapter 3), these efforts do not add up to a coherent, coordinated UGV program.

The Army needs to pursue an integrated science and technology (S&T) and user approach to UGV technology developments. This integrated approach should consider all relevant S&T programs, identify gaps in capabilities and technology, support FCS planning and programming, and create a system engineering environment for development of UGV technologies and systems. Just as special high-level emphasis was needed for successful planning and implementation of digitization initiatives for the Army, the committee believes that it will take a strong advocate or office high in the Army chain of command to advance UGV technologies and systems. While the Army Digitization Office is an excellent example of a successful high-level integration office, the Army's UGV programs are neither mature enough nor sufficiently funded to warrant such an office at this time.

There are multiple UGV technology programs being pursued across the Army, Department of Defense (DOD), and other services and agencies. There are multiple spokespersons for the development of UGVs for FCS, including Army Research Laboratory (ARL), Army Aviation and Missile Command (AMCOM), and Tank-Automotive and Armaments Command (TACOM) within the Army; the DOD Joint Program Office; DARPA; and FCS program managers. These multiple efforts to produce UGV systems are not focused and require integration and visibility for overall program success.

Past attempts at integration have involved various approaches in the Army S&T and user communities, including technology roadmaps, integrated product teams, advanced technology demonstrations, and warfighting experiments. However, development of each supporting technology, such as mobility, communications, and power, is associated with a separate functional branch of the Army research and development (R&D) community, each an S&T advocate in its own right. Additionally, the numerous potential functions for UGV systems make it difficult to identify a single user advocate.

Using system engineering principles, this advocate could influence the development and assessment of UGV operational concepts, as well as the direction and level of effort for UGV S&T programs. This person could capitalize on robotics technology development, both military and commercial, and maximize technology integration and transition efforts for prospective FCS UGV systems.

The immediacy of the essential system-level considerations, which are discussed in the remainder of this chapter, makes the designation of a board-selected Army program manager (PM) for UGV technologies, serving as an agent for the Assistant Secretary of the Army for Acquisition, Logistics, and Technology (ASA (ALT)), a desirable and recommended course of action. The PM could serve as principal advocate and focus on the development and integration of several experimental UGV prototypes to expedite the evolution of viable systems. Working closely with the user community, this PM could significantly influence the requirements and the acceptance of UGV systems. This position would contrast with the present program managers (for FCS and Objective Force), who are focused on objectives that can be achieved with or without a dollar of investment in UGV technology.

LIFE-CYCLE SUPPORT

Support requirements that may have technology solutions (hardware or software) must be considered and designed early into UGV development programs, because integration late in system design may become very costly or even impossible. Determining support requirements for systems in development is usually based on reviewing historical support data of similar fielded predecessor systems and adjusting support requirements for new technology benefits or disadvantages.

The difficulty with determining future support infrastructure needs for a UGV system is that there is little to no historical information from operational uses of UGVs. There is indirectly related information from unmanned aerial vehicles (UAVs) and manned vehicles. The committee considered this information as well as its subject matter expertise to identify potential support infrastructure needs.

Support requirements should first consider manpower, skill level, and training needs of the operators, and then maintenance and transportation needs.

Manpower

A large UAV may have as many as 20 people involved in its operations. UGVs may need more than one operator to share tasks such as maneuvering, sensing, and engagement. Optimally, one person should be able to operate at least one, and probably a few, UGVs. The human-to-UGV ratio needed for UGV operations in a 24-hr period will depend on the autonomy of the UGV, the complexity of the interface, the number of operators, and the cognitive loads on the operator(s). The cognitive loads should take into account operational mission tasks not associated with the UGV (e.g., an observer or loader in a manned attack vehicle may have the additional duty of operating a UGV Wingman).

Skill Levels

Current Army UGVs require highly skilled personnel to operate them. The skill level of a UGV operator might have to be that of a combat vehicle commander (an E-6). Operation of multiple UGVs may require the skill levels of armored vehicle team leaders (an E-7 for two UGVs) and platoon leaders (an O-1 for three to four UGVs). Simulation experiments should be conducted to assess these needs. There will have to be separate UGV skill identifiers.

Training Needs

UGV trainers will be needed for operational users (at all TRADOC schools that utilize UGVs within their respective branches). Training levels will have to be maintained as systems (e.g., software) are upgraded. Training will vary from that required for teleoperation to that needed to manage network-centric UGVs.

To begin to understand the requirements for UGV personnel (operators and trainers), the Army should conduct technical and simulation assessments of manpower, skill levels, and training. The human–robot interaction technologies will significantly impact these personnel needs. Trade-off analyses need to be conducted to assist in determining levels of effort and design requirements for human–robot interaction technology.

Maintenance Needs

Part of the problem with current UGVs is that they need more people to operate and maintain them than the general military population realizes. By today’s standards, when a UGV returns to its maintenance location, two levels of maintenance capability may have to be available immediately: Level I (organic) for checking fluid levels, tire pressures, and so on, and Level II for UGV hardware (organic and automotive), vetronics, and software and the mission package (numbers and types relative to the mission package).

Cooperative diagnostic systems (for an integrated assessment of UGV hardware, vetronics, software, and mission package) must be designed into the UGV to assist maintenance personnel in identifying UGV and mission package faults early enough to prevent catastrophic failures. These diagnostic systems must maintain historical records of faults that occur during operations, just as a human would relay problems during operations. The less capable the cooperative diagnostics, the more highly skilled and cross-trained the Level II maintenance personnel will have to be. For a UGV with learning software, the software technician may need the skills to recognize that unwanted behavior has been learned and to modify the UGV's behavior to erase that fault. Additional personnel may be needed as UGV maintenance instructors at maintenance schools. Cooperative diagnostic technology should be designed and integrated into the Army UGV program now. Without it, preventive maintenance and repairs may become too resource intensive.

Postulated maintenance requirements for the Wingman are at a level that it could self-diagnose its maintenance problems and allow that information to be downloaded for action by human maintainers. When receiving alerts from its diagnostic software, the Wingman would also notify the section leader of serious problems that could impact current operations. The Wingman concept was predicated on humans doing the maintenance.
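The cooperative diagnostics described above combine two behaviors: keeping a complete fault history for later download by maintainers, and alerting the section leader only when a fault could affect the current operation. The following Python sketch illustrates that split. The severity levels, subsystem names, fault codes, and the `DiagnosticLog` interface are hypothetical, chosen for illustration; they are not drawn from any Army program.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Callable, List, Optional


class Severity(IntEnum):
    INFO = 0
    DEGRADED = 1
    SERIOUS = 2  # could impact current operations


@dataclass
class FaultRecord:
    timestamp_s: float
    subsystem: str  # e.g., "hardware", "vetronics", "software", "mission_package"
    code: str       # hypothetical fault code
    severity: Severity


@dataclass
class DiagnosticLog:
    """Hypothetical on-board fault history for a Wingman-style UGV."""
    notify_leader: Callable[[FaultRecord], None]
    history: List[FaultRecord] = field(default_factory=list)

    def report(self, record: FaultRecord) -> None:
        # Every fault is kept so maintainers can review it after the mission.
        self.history.append(record)
        # Only faults that could affect the current operation go to the section leader.
        if record.severity >= Severity.SERIOUS:
            self.notify_leader(record)

    def download(self, subsystem: Optional[str] = None) -> List[FaultRecord]:
        """Return the fault history, optionally filtered to one subsystem."""
        if subsystem is None:
            return list(self.history)
        return [r for r in self.history if r.subsystem == subsystem]


alerts: List[FaultRecord] = []
log = DiagnosticLog(notify_leader=alerts.append)
log.report(FaultRecord(10.0, "hardware", "TIRE_PRESSURE_LOW", Severity.DEGRADED))
log.report(FaultRecord(42.5, "vetronics", "BUS_TIMEOUT", Severity.SERIOUS))
print(len(log.download()), [a.code for a in alerts])  # 2 ['BUS_TIMEOUT']
```

Both faults remain in the downloadable history, but only the serious one interrupts the section leader.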

The Hunter-Killer team would be a step beyond that in maintenance capability: each robot would not only have self-diagnostic capabilities but also be able to do some self-repair. NASA (National Aeronautics and Space Administration) is initiating research on robotic planetary explorers that can perform self-repair or that can repair other robots, thus extending the lives of robots.¹ Using such a concept, Hunter-Killer marsupial UGVs could actually do some field repairs on other Hunter-Killers or on themselves. Another concept is that maintenance could be conducted between missions, in safe areas, by other highly specialized robots.

This self-repair capability would significantly reduce the requirement for human maintenance, thus enabling a very small ratio of humans to UGVs—the idea being that the Hunter-Killer team would have evolved to a state where “unmanned” means “almost no people.” Whether the committee’s number is too big or too small is obviously a matter of judgment and should be investigated further. The committee was attempting to think “out of the box” not only about UGV operational capabilities but also about maintenance support.

Transport Needs

For rapid, precise movements in small areas (e.g., loading roll-on/roll-off ships) or movements over large distances (e.g., road convoys covering long distances in a short time), large UGVs may need an override driver's position so that a human can operate the vehicle whenever a human can move it more efficiently than the UGV (whether tethered, semiautonomous, or even autonomous). This may require standby drivers for the UGVs.

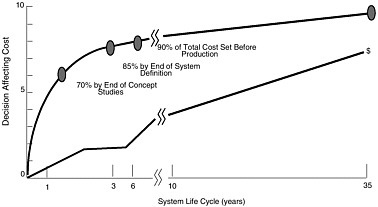

Figure 6-1 illustrates how most life-cycle cost decisions are made early in the development of a system (top line), when relatively small amounts of money are available for the program (bottom line). By the end of the concept design phase, in which S&T has a major impact, decisions have already been made that affect 70 percent of a system's life-cycle costs. Thus, the use of system engineering processes in UGV S&T programs will significantly influence the life-cycle costs of UGV systems. Additionally, it is important for the Army's UGV S&T program to define and assess technologies and concepts that, when integrated into a UGV system, may significantly reduce life-cycle costs.

The foregoing provides the basis for the answer to Task Statement Question 5.c in Box 6-1.

SOFTWARE ENGINEERING

The effectiveness of the software engineering effort for a program the size and scope of the UGV will be determined by key programmatic decisions. Among these decisions are the architecture of the hardware and software infrastructure, the general development methodology, and the software development environment and tool sets.

Software Architecture Considerations

A well-defined software architecture is a necessary antecedent to efficient software engineering. The architecture must be defined at several levels of abstraction, encompassing the basic computing infrastructure (e.g., processor and operating system), the inter-application communications infrastructure and services (communication profiles and middleware), and ancillary support infrastructure (e.g., system health, fault monitoring, and recovery; software loading). To enable efficient long-term evolution of the UGV computing infrastructure and software, an open system architecture approach is mandatory. Open systems leverage industry-standard application programming interfaces and enable large systems to be constructed quickly from existing components. Further, open-system architectures enable the use of commercial off-the-shelf (COTS) and/or open source hardware and software for major infrastructure components such as the processor, operating systems, communication stacks, and middleware. Substantial non-recurring cost savings will be realized during initial development as well as during maintenance and evolution.

The use of COTS (and/or open source) hardware, operating system, and middleware ensures a degree of hardware and software independence. Of these major parts of the software infrastructure, the middleware layer is perhaps the most critical, as it enables definition of hardware-independent services and inter-application interfaces. In addition, middleware allows cooperating applications to be located as needed on the communications network in order to meet system requirements for responsiveness or redundancy. It also allows applications to be developed and deployed without concern for the processor or network topology and technology. Changes in the processing hardware or network design may impact the open source or middleware implementations, but revising the infrastructure software would only need to be done once for a large system. Software applications may simply need to be recompiled.
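The location independence that middleware provides can be illustrated with a toy publish/subscribe bus. In this minimal Python sketch, two hypothetical applications share only a topic name and a message format; neither knows where the other runs. The `MessageBus` class, the topic names, and the 5-m alert threshold are assumptions for illustration, and a real system would use industry-standard middleware that routes messages across processors and networks rather than this single-process stand-in.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, List

Handler = Callable[[Any], None]


class MessageBus:
    """Toy in-process stand-in for publish/subscribe middleware (hypothetical API)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: Any) -> None:
        # Publishers never learn which processor or node hosts a subscriber;
        # real middleware would route the message across the vehicle network here.
        for handler in self._subscribers[topic]:
            handler(message)


class ObstacleDetector:
    """Hypothetical application: turns range readings into obstacle alerts."""

    def __init__(self, bus: MessageBus) -> None:
        self._bus = bus
        bus.subscribe("sensor/range", self.on_range)

    def on_range(self, range_m: float) -> None:
        if range_m < 5.0:  # assumed alert threshold
            self._bus.publish("obstacle/alert", {"range_m": range_m})


class PathPlanner:
    """Hypothetical application: collects alerts without knowing who produced them."""

    def __init__(self, bus: MessageBus) -> None:
        self.alerts: List[dict] = []
        bus.subscribe("obstacle/alert", self.alerts.append)


bus = MessageBus()
detector = ObstacleDetector(bus)
planner = PathPlanner(bus)
bus.publish("sensor/range", 12.0)  # no alert
bus.publish("sensor/range", 3.2)   # alert forwarded to the planner
print(planner.alerts)  # [{'range_m': 3.2}]
```

Because each application depends only on the bus interface and topic names, moving one of them to another processor would change the middleware configuration, not the application code.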

Software Development Methods and Technologies

By taking the open-system approach to UGV system and software architecture, numerous opportunities are afforded to software developers. Most significant among these is the ability to develop and test applications and systems of applications on ordinary microcomputers and workstations. Transferring applications from the development hosts to lab equipment for final testing can be as simple as copying the binary if the development environment accurately replicates the embedded hardware. This allows for rapid, iterative software development and integration without dependence on embedded hardware development.
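One way to keep application code independent of embedded hardware, so that it can be developed and tested on ordinary workstations, is to write it against an abstract interface and bind a simulated device on the development host. The sketch below assumes a hypothetical `RangeSensor` interface and a scripted `SimulatedRangeSensor`; on the vehicle, a driver implementing the same interface would take the simulator's place.

```python
from typing import List, Protocol, Sequence


class RangeSensor(Protocol):
    """Hypothetical hardware-independent interface used by application code."""

    def read_ranges_m(self) -> Sequence[float]: ...


class SimulatedRangeSensor:
    """Development-host stand-in that replays scripted scans instead of reading hardware."""

    def __init__(self, scans: Sequence[Sequence[float]]) -> None:
        self._scans: List[Sequence[float]] = list(scans)
        self._next = 0

    def read_ranges_m(self) -> Sequence[float]:
        scan = self._scans[self._next % len(self._scans)]  # loop over the script
        self._next += 1
        return scan


def should_stop(sensor: RangeSensor, stop_distance_m: float = 2.0) -> bool:
    """Application logic written only against the interface, so it is unchanged on the vehicle."""
    return min(sensor.read_ranges_m()) < stop_distance_m


sim = SimulatedRangeSensor([[8.0, 6.5, 9.1], [4.0, 1.5, 7.2]])
print(should_stop(sim), should_stop(sim))  # False True
```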

FIGURE 6-1 Life-cycle cost decisions. SOURCE: Adapted from Jones (1998).

Industry-standard middleware technology enables an object-oriented component-based application development approach. Use of object-oriented technology allows the use of existing COTS tools and methods in the specification of the software engineering environment. Note, however, that use of object-oriented technology in high-assurance systems can be problematic if certain restrictions are not enforced. Recent research in the application of object-oriented technology to high-assurance systems promises to improve the predictability of such systems, while retaining the benefits of the object-oriented approach: abstraction, encapsulation, inheritance, and polymorphism.

To increase the efficiency of software development, a model development approach should be used. In the same way that high-level languages obviated the need for software developers to be concerned with how processor registers are used, model development eliminates the concern about how a source module is coded. Automatic code generation and automatic test case generation based on detailed requirements and design models substantially reduce development effort. Examples of commercially available model development approaches include the Object Management Group’s (OMG) Model Driven Architecture and Rational’s Unified Process.
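Automatic test case generation from a design model can be illustrated on a small state machine. The sketch below assumes a hypothetical UGV mode manager described as a transition table. It derives one test per modeled transition (reach the source mode by the shortest event sequence, fire the event, check the target mode) and runs those tests against a separately hand-written implementation. This is a toy illustration of transition-coverage test generation, not the OMG or Rational approaches named above.

```python
from collections import deque
from typing import Callable, Dict, List, Tuple

State = str
Event = str

# Hypothetical mode-manager design model: (current mode, event) -> next mode.
MODEL: Dict[Tuple[State, Event], State] = {
    ("TELEOP", "enable_autonomy"): "AUTONOMOUS",
    ("AUTONOMOUS", "operator_takeover"): "TELEOP",
    ("AUTONOMOUS", "comms_lost"): "SAFE_STOP",
    ("TELEOP", "comms_lost"): "SAFE_STOP",
    ("SAFE_STOP", "comms_restored"): "TELEOP",
}
INITIAL: State = "TELEOP"


def shortest_paths(initial: State) -> Dict[State, List[Event]]:
    """Breadth-first search for the shortest event sequence that reaches each mode."""
    paths: Dict[State, List[Event]] = {initial: []}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        for (src, event), dst in MODEL.items():
            if src == state and dst not in paths:
                paths[dst] = paths[state] + [event]
                queue.append(dst)
    return paths


def generate_transition_tests() -> List[Tuple[List[Event], State]]:
    """One test per modeled transition: reach the source mode, fire the event, expect the target."""
    paths = shortest_paths(INITIAL)
    return [(paths[src] + [event], dst)
            for (src, event), dst in MODEL.items() if src in paths]


def handwritten_step(state: State, event: Event) -> State:
    """Implementation under test, coded by hand rather than generated from MODEL."""
    if event == "comms_lost" and state in ("TELEOP", "AUTONOMOUS"):
        return "SAFE_STOP"
    if state == "TELEOP" and event == "enable_autonomy":
        return "AUTONOMOUS"
    if state == "AUTONOMOUS" and event == "operator_takeover":
        return "TELEOP"
    if state == "SAFE_STOP" and event == "comms_restored":
        return "TELEOP"
    return state  # unmodeled events leave the mode unchanged


def run(step: Callable[[State, Event], State], events: List[Event]) -> State:
    state = INITIAL
    for event in events:
        state = step(state, event)
    return state


tests = generate_transition_tests()
failures = [(events, expected) for events, expected in tests
            if run(handwritten_step, events) != expected]
print(f"{len(tests)} generated tests, {len(failures)} failures")  # 5 generated tests, 0 failures
```

If the model changes, the tests are regenerated rather than rewritten, which is where much of the claimed effort reduction comes from.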

Where necessary, formal methods can be applied to prove the correctness of software requirements for high-assurance applications through the use of formal modeling languages and model checkers.
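For finite-state models, one widely used form of automated verification is explicit-state model checking: enumerate every reachable state and confirm that a safety property holds in each. The toy Python checker below is not one of the formal modeling languages or model checkers referred to in the text. It explores a hypothetical arming interlock, in which the flag `disarm_on_comms_loss` is an assumed design choice, and returns the shortest event trace that violates the requirement "the mission package is never armed in SAFE_STOP".

```python
from collections import deque
from typing import Callable, Dict, List, Optional, Tuple

State = Tuple[str, bool]  # (mode, mission_package_armed)


def successors(state: State, disarm_on_comms_loss: bool) -> List[Tuple[str, State]]:
    """Hypothetical arming-interlock model; returns (event, next_state) pairs."""
    mode, armed = state
    moves: List[Tuple[str, State]] = []
    if mode == "TELEOP":
        moves += [("arm", ("TELEOP", True)), ("disarm", ("TELEOP", False)),
                  ("enable_autonomy", ("AUTONOMOUS", armed))]
    if mode == "AUTONOMOUS":
        moves.append(("operator_takeover", ("TELEOP", armed)))
    if mode in ("TELEOP", "AUTONOMOUS"):
        moves.append(("comms_lost", ("SAFE_STOP", armed and not disarm_on_comms_loss)))
    if mode == "SAFE_STOP":
        moves.append(("comms_restored", ("TELEOP", armed)))
    return moves


def check_invariant(initial: State,
                    next_states: Callable[[State], List[Tuple[str, State]]],
                    invariant: Callable[[State], bool]) -> Optional[List[str]]:
    """Breadth-first search of every reachable state.

    Returns the shortest event trace to a state violating the invariant, or None.
    """
    parent: Dict[State, Optional[Tuple[State, str]]] = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            trace: List[str] = []
            link = parent[state]
            while link is not None:  # walk back to the initial state
                state, event = link
                trace.append(event)
                link = parent[state]
            return trace[::-1]
        for event, nxt in next_states(state):
            if nxt not in parent:
                parent[nxt] = (state, event)
                queue.append(nxt)
    return None


def never_armed_in_safe_stop(state: State) -> bool:
    mode, armed = state
    return not (mode == "SAFE_STOP" and armed)


start: State = ("TELEOP", False)
for fixed in (False, True):
    trace = check_invariant(start, lambda s: successors(s, fixed), never_armed_in_safe_stop)
    print(f"disarm_on_comms_loss={fixed}: counterexample={trace}")
```

With `disarm_on_comms_loss=False` the checker reports the counterexample `['arm', 'comms_lost']`; with the corrected model it finds no violating state among all reachable states.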

Given a well-defined software architecture and prescriptive application construction guidelines based on an open architecture (i.e., software building codes), applications can be efficiently created, integrated, and maintained.

Software Technology Gaps

To put the capabilities of today's software technology in perspective, weaknesses must be considered. For example, current software technology focuses on specification and implementation of individual applications or individual software components. Software technology is weak in the specification and testing of systems of real-time software components (software systems of systems). Object-oriented and component-based software development approaches provide the potential to solve these problems, but more work is needed in methods and tools aimed at the inter-application level. One effort focusing on this level of abstraction is the Society of Automotive Engineers' (SAE) emerging standard AS-5C, which defines an Avionics Architecture Definition Language (AADL). The AADL has the potential to evolve into a general-purpose architecture description language for hard real-time embedded systems.

Specification of complex systems is only half of the battle. Typically, validation approaches for key technical system-level performance measures (e.g., whether deadlines can be met, whether application interactions meet requirements) are ad hoc. There is no standardized approach to validating or verifying inter-application performance requirements. Once an inter-application specification language (like AADL) is in place, automated approaches to validating and verifying the interfaces can be developed.
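Whether deadlines can be met is one system-level property that can already be checked analytically under simplifying assumptions. The sketch below applies classic fixed-priority response-time analysis, iterating R = C + sum over higher-priority tasks j of ceil(R / T_j) * C_j until R stops changing or exceeds the deadline. It assumes independent periodic tasks on one processor, deadlines equal to periods, and no blocking or release jitter; the task names and timing values are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Task:
    name: str
    wcet_ms: int    # worst-case execution time C
    period_ms: int  # period T; the deadline is assumed equal to the period


def response_time(tasks: List[Task], i: int) -> Optional[int]:
    """Worst-case response time of tasks[i]; tasks are ordered highest priority first.

    Returns None if the iteration exceeds the task's deadline (unschedulable).
    """
    task = tasks[i]
    r = task.wcet_ms
    while True:
        # Preemption by each higher-priority task released within the window r.
        interference = sum(math.ceil(r / hp.period_ms) * hp.wcet_ms for hp in tasks[:i])
        r_next = task.wcet_ms + interference
        if r_next > task.period_ms:
            return None
        if r_next == r:
            return r
        r = r_next


# Hypothetical task set in rate-monotonic (shortest period first) priority order.
tasks = [
    Task("obstacle_detection", wcet_ms=10, period_ms=50),
    Task("path_planning", wcet_ms=30, period_ms=100),
    Task("telemetry", wcet_ms=40, period_ms=200),
]
print([response_time(tasks, i) for i in range(len(tasks))])  # [10, 40, 90]
```

All three tasks finish within their periods. An analysis of this kind covers only timing on a single processor; the inter-application behavior the text describes would need analogous checks across the whole software system of systems.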

BOX 6-1 Task Statement Question 5.c

Question: Are there implications for Army support infrastructure for a UGV system? For example, will other technologies need to be developed in parallel to support a UGV system, and are those likely to pose significant barriers to eventual success in demonstrating the UGV concept or in fielding a viable system?

Answer: Cooperative diagnostic systems (capable of providing an integrated assessment of UGV hardware, vetronics, software, and mission-function equipment) must be designed into the UGV to assist maintenance personnel in identifying UGV/mission-package faults early enough to prevent catastrophic failures. These must be developed in parallel to support UGV systems. Other support requirements that may have technology solutions (hardware or software) must be considered and designed early into UGV development programs, because integration late in system design may become very costly or even impossible. Support requirements are usually based on reviewing historical data of similar fielded systems and adjusting as appropriate. There is little historical information on UGV systems; the committee had to resort to similar information on UAV and manned vehicle system operations and experiences to identify likely support infrastructure needs and weaknesses. These include requirements for operators (number, skill level, and training), vehicle maintenance, and deployment transportation.

End-user configuration management of software systems of systems can be problematic due to evolving application functionality. Certain applications in the UGV application domain will evolve more rapidly than others. Without a concerted effort to maintain backward compatibility, software upgrades will need to be performed in a block (i.e., multiple software components would need to be upgraded simultaneously). This complicates vehicle maintenance and logistics significantly. For example, inter-application compatibility would need to be determined and compatibility data consulted whenever a software upgrade was planned or performed. This problem is similar to that of installing new or updated software on a microcomputer and discovering that device drivers also need to be updated at the same time.
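The compatibility check described above can be mechanized once compatibility data are recorded. In this sketch, each hypothetical component release declares the interface version it provides and the interface versions it accepts from other components; the function lists which installed components would break if one component were upgraded alone, and would therefore have to join a block upgrade. Component names, versions, and interface numbers are invented for illustration, and the sketch assumes every dependency is installed.

```python
from typing import Dict, List, Set, Tuple

Release = Tuple[str, str]  # (component, version)

# Hypothetical compatibility data.
PROVIDES: Dict[Release, int] = {  # interface version each release exposes
    ("perception", "2.0"): 3,
    ("perception", "3.0"): 4,
    ("planner", "1.4"): 1,
    ("planner", "1.5"): 1,
    ("display", "5.1"): 2,
}
ACCEPTS: Dict[Release, Dict[str, Set[int]]] = {  # interface versions each release can use
    ("planner", "1.4"): {"perception": {3}},
    ("planner", "1.5"): {"perception": {3, 4}},
    ("display", "5.1"): {"perception": {3}, "planner": {1}},
}


def broken_by(installed: Dict[str, str], component: str, new_version: str) -> List[str]:
    """Components whose requirements fail if `component` alone is upgraded to `new_version`.

    Assumes every dependency named in ACCEPTS is installed.
    """
    proposed = {**installed, component: new_version}
    broken = []
    for name, version in proposed.items():
        for dep, ok_versions in ACCEPTS.get((name, version), {}).items():
            if PROVIDES[(dep, proposed[dep])] not in ok_versions:
                broken.append(name)
                break
    return broken


fielded = {"perception": "2.0", "planner": "1.4", "display": "5.1"}
print(broken_by(fielded, "perception", "3.0"))  # ['planner', 'display']
```

Upgrading the planner to 1.5 alone breaks nothing, and afterward a perception upgrade would pull in only the display, which illustrates how backward-compatible releases shrink the block.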

Among the most problematic software technologies to specify and verify is that of adaptive algorithms. Validation of nonadaptive high-assurance systems is well understood and can be efficiently performed through the application of test automation tools and coverage analyzers. The challenge is how to validate systems that change their behavior in response to external conditions. Research into these methods is being performed, but to date no standardized approach exists.

While the software technology principles that have been discussed may be common to all system developments, the area of adaptive algorithms and intelligent software may prove to be critical to the timeline for development of acceptable UGV systems. As discussed in Chapter 4, significant uncertainty exists regarding the degree to which methods of machine learning will apply to UGV systems. The Army must determine and invest in decision making and control algorithms that will be adequate to support required levels of performance, because the impact on time and effort to complete software developments could be severe.

For over two decades, formal methods have held out the promise of mathematically precise specifications and automated verification of system and software specifications. Industrial adoption has been slow for a variety of reasons, including notations that engineers find difficult to use, limited offerings in U.S. universities, and lack of automated tools; however, this is changing rapidly. In recent years, several companies, mostly in Europe, have successfully used notations such as Statecharts, Lustre, Esterel, and B on large commercial products. These initiatives routinely report savings of 25 to 50 percent in development costs and an order-of-magnitude reduction in errors. These gains are achieved through early identification of errors, better communication between team members, automated verification of safety properties, and automatic generation of code and test cases.

COMPUTATIONAL HARDWARE

An ideal UGV would be capable of replacing an equivalent manned vehicle. Unfortunately, present-day limits on computing power, among other factors, make this expectation highly unrealistic. It is clear that a brute-force approach to mimicking the computational power of the brain cannot be supported by semiconductor technology. While software can do much to enable machines to mimic human behaviors, the upper limit on the computing power has always been determined by hardware, and this will continue to be the case for the future. Thus it is useful to examine present and projected hardware performance not only to estimate how much room exists for improvement but also to ensure that the Army aims for reasonable targets and avoids unrealistic expectations.

Human Versus Semiconductor-Based Technology

The human brain is capable of performing about 10¹⁴ computations/second (c/s) (Moravec, 1999; Albus and Meystel, 2001), has the capacity to store some 10¹⁵ bits of information, and achieves this level of performance with a relatively low power input of 25 watts. In its major conscious task of visual perception it is aided by the fact that 90 percent of the image processing of the output of the approximately 10⁸ pixels of the eye is actually done in the retina, which is connected to the brain by approximately 10⁶ nerve fibers that operate in a pulse-code-modulation mode at approximately 50 Hz (Werblin et al., 1996).

In UGVs the equivalent capacity is supplied by semiconductor technology. One characteristic of semiconductor technology that is often taken for granted is the continuing rapid improvement of its capabilities. Over the last 30 years the various measures of performance have faithfully followed Moore's law, which states that capabilities double approximately every 18 months (Intel, 2002). This essentially self-fulfilling prediction is perhaps better understood as a guideline used by the industry to ensure that semiconductor technology advances uniformly across an enormously complex front. The questions that should be asked in the context of UGVs are: Where are we today, and where are we likely to be in the future?

Considering first computing power, laptop computers currently operate at about 10⁹ c/s with 100 W of power. High-end, reasonably portable systems operate at about 10¹⁰ c/s and 1,000 W. Thus computational power is presently about four orders of magnitude short of human performance and has considerably greater power requirements.
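The comparison above can be extended from raw throughput to computations per watt using the same figures. This short Python calculation uses only the numbers quoted in the text and is meant as an order-of-magnitude illustration.

```python
import math

# (computations per second, watts), as quoted in the text
SYSTEMS = {
    "human brain": (1e14, 25.0),
    "laptop": (1e9, 100.0),
    "high-end portable": (1e10, 1000.0),
}


def throughput_gap(name: str) -> float:
    """Orders of magnitude by which the brain's c/s exceeds the named system's."""
    return math.log10(SYSTEMS["human brain"][0] / SYSTEMS[name][0])


def efficiency_gap(name: str) -> float:
    """Orders of magnitude by which the brain's c/s per watt exceeds the named system's."""
    brain_cps, brain_w = SYSTEMS["human brain"]
    cps, watts = SYSTEMS[name]
    return math.log10((brain_cps / brain_w) / (cps / watts))


for name in ("laptop", "high-end portable"):
    print(f"{name}: {throughput_gap(name):.1f} orders in throughput, "
          f"{efficiency_gap(name):.1f} orders in efficiency")
```

Both machines deliver about 10⁷ c/s per watt against roughly 4 × 10¹² for the brain, so the efficiency gap (about 5.6 orders of magnitude) is even larger than the throughput gap.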

Future performance capabilities can be estimated in several ways. One possibility is to count the number of functions (transistors) per chip, which for high-end computers is predicted to rise from its present value of about 2 × 10⁸ to about 2 × 10¹⁰ in 2014 (SIA, 2001). Another, less accurate, measure is to extrapolate the performance gains made over the last 30 years. Using the 1995 trend, Albus and Meystel project a human-equivalent performance level of 10¹⁴ c/s in 2021 (Albus and Meystel, 2001); using the more conservative average trend from 1975 to 1985, Moravec predicts this level in 2030 (Moravec, 1999). Again following Albus and Meystel, the 10¹¹ and 10¹² c/s performance levels needed for good driving skills and average human driving performance, respectively, are expected to be reached in 2006 and 2011. Of course, "driving" is not the only activity that will require computational resources for a sophisticated Wingman or Hunter-Killer UGV.
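The doubling rule makes projections of this kind easy to reproduce approximately. The sketch below assumes a 2002 starting point of 10¹⁰ c/s (the high-end portable figure above) and an 18-month doubling time, and extrapolates naively with no physical limits; it is a back-of-the-envelope check, not the method used by Albus and Meystel or by Moravec.

```python
import math


def year_reached(start_year: float, start_cps: float, target_cps: float,
                 doubling_years: float = 1.5) -> float:
    """Year at which start_cps grows to target_cps if capability doubles every doubling_years."""
    doublings = math.log2(target_cps / start_cps)
    return start_year + doublings * doubling_years


# Assumed starting point: about 1e10 c/s in 2002.
for label, target in [("good driving", 1e11), ("average driving", 1e12),
                      ("human-equivalent", 1e14)]:
    print(f"{label} ({target:.0e} c/s): {year_reached(2002, 1e10, target):.0f}")
```

The results (about 2007, 2012, and 2022) fall within about a year of the Albus and Meystel dates, which shows how directly such projections follow from assuming that the historical doubling rate continues.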

Impending Limits

Aside from the brute-force nature of their analyses, the problem with the Moravec and especially the Albus and Meystel estimates is that they do not take into account the impending limits of silicon technology. Up to now, speed has been increased by reducing the sizes of the individual devices (scaling), but scaling has reached the point where the Si/SiO₂ materials system on which the last 30 years of progress has been based is approaching its theoretical limits. Some improvements are expected with the replacement of SiO₂ with so-called high-k dielectrics. However, Intel terminates its Moore's law estimates at 2011 at a point still about one or two orders of magnitude short of human performance (Intel, 2002). Advances of the scale envisioned by Albus and Meystel and by Moravec will almost certainly require a new hardware technology that is not presently developed. These constraints are "hard" in the sense that given the scale of the semiconductor industry (approximately U.S. $150 billion in worldwide sales in 2001), it would be effectively impossible for any single source to provide enough financing to influence these trends.

To illustrate the shortfall in computing power more concretely: a 640 × 480 pixel array (somewhat over 300,000 pixels in total) read out at a typical rate of 30 Hz, with 8 bits of gray scale for each of three colors, delivers information at about 2 × 10^8 bits per second (roughly 220 Mbit/s). Comparing pixel counts and data rates, one sees that the human visual-perception system is presently superior by nearly three orders of magnitude. Combined with the four-orders-of-magnitude lower performance of computers, this makes the relatively primitive perception capabilities of UGVs easy to understand.
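The camera data-rate figure is simple arithmetic; the sketch below reproduces it from the parameters given in the text (uncompressed readout, no overhead assumed).

```python
def sensor_data_rate_bps(width: int, height: int, frame_hz: float,
                         bits_per_channel: int, channels: int) -> float:
    """Raw (uncompressed) data rate of an imaging sensor in bits per second."""
    return width * height * frame_hz * bits_per_channel * channels


# Parameters from the example in the text: 640 x 480 pixels, 30 Hz, 8 bits x 3 colors.
rate = sensor_data_rate_bps(640, 480, 30, 8, 3)
print(f"{640 * 480} pixels, {rate / 1e6:.1f} Mbit/s")
```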

It should be noted that this argument presupposes exclusive use of general-purpose microprocessors. For many UGV applications, digital signal processors (DSPs) may achieve an order-of-magnitude advantage in MIPS/watt (million instructions per second per watt) over general-purpose machines, and field programmable gate arrays (FPGAs) have been reported to achieve a two-orders-of-magnitude advantage for specific applications. This suggests that once algorithms are mature enough to be stable, implementing them in dedicated digital hardware (i.e., programmable logic) may be a key path to small, low-power systems with limited degrees of semiautonomy. Such solutions could thus stave off the overall shortfall in computational power needed for near-human levels of autonomy and offer a way around the impending limits of silicon technology.
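To make the efficiency argument concrete, the sketch below computes the power needed to sustain a fixed processing workload at the relative efficiencies cited in the text (about 10× for DSPs, about 100× for FPGAs). The baseline efficiency and workload are hypothetical placeholders, not figures from this report; only the ratios between the results are meaningful.

```python
def power_watts(workload_mips: float, mips_per_watt: float) -> float:
    """Power needed to sustain a workload at a given efficiency."""
    return workload_mips / mips_per_watt


# HYPOTHETICAL numbers for illustration only; not from the text.
BASELINE_MIPS_PER_WATT = 100.0   # assumed general-purpose processor efficiency
WORKLOAD_MIPS = 10_000.0         # assumed perception workload

# Relative efficiency factors from the text: ~10x for DSPs, ~100x for FPGAs.
# For an FPGA, "MIPS" means equivalent throughput, since no instructions are executed.
ADVANTAGE = {"general-purpose": 1.0, "DSP": 10.0, "FPGA": 100.0}

budgets = {name: power_watts(WORKLOAD_MIPS, BASELINE_MIPS_PER_WATT * factor)
           for name, factor in ADVANTAGE.items()}
for name, watts in budgets.items():
    print(f"{name:>15}: {watts:6.1f} W")
```

Because power scales inversely with efficiency, the same workload that needs a large power budget on a general-purpose processor could, under these assumptions, fit a budget two orders of magnitude smaller in programmable logic.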

In addition to such breakthroughs in computational power, the most direct approach toward achieving autonomy goals for UGVs will be to augment visual perception systems with other sensors. The multimodal approach will likely need to be combined with analog or optical processing techniques to overcome any future deficits in semiconductor processing power. While existing or forthcoming processors should be adequate for meeting the anticipated computational loads for this approach, a major system engineering problem is to optimize the perception system hardware and software architecture, including sensors, embedded processors, coded algorithms, and communication buses.

Hardware-in-the-loop and software-in-the-loop simulation, appropriately instrumented, would move architecture design and optimization in this direction and would also support algorithm benchmarking. As noted in the previous section, software quality is also a major issue: software engineering, re-implementation, and performance testing before fielding will add three or more years to the UGV system development time line.

ASSESSMENT METHODOLOGY

Demonstrations alone do not provide statistically significant data to assess the maturity, capabilities, and benefits of a particular technology, either as an individual technology or as part of a larger system or system-of-systems concept. For example, while it may be reasonable to assume that the Demo III program has advanced the state of the art over the Demo II program, there is no way to know in the absence of statistically valid test data. To make progress, emphasis should be placed on data collection, particularly in environments designed to stress and break the system, e.g., unknown terrain, urban environments, night, and bad weather.
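Why a handful of demonstration runs cannot separate two systems can be shown with a confidence interval on the observed success rate. The sketch below uses the Wilson score interval, one standard choice for proportions, on hypothetical success counts (not actual Demo II or Demo III data). Overlapping intervals are only a rough, conservative indicator, not a formal hypothesis test.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple:
    """Approximate 95% (z = 1.96) Wilson score interval for a success proportion."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    z2 = z * z
    denom = 1 + z2 / trials
    center = (p + z2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z2 / (4 * trials * trials)) / denom
    return center - half, center + half


# HYPOTHETICAL trial outcomes for an older and a newer system version.
small_old, small_new = wilson_interval(7, 10), wilson_interval(9, 10)
large_old, large_new = wilson_interval(70, 100), wilson_interval(90, 100)

for label, old, new in (("10 runs each", small_old, small_new),
                        ("100 runs each", large_old, large_new)):
    print(f"{label}: old {old[0]:.2f} to {old[1]:.2f}, new {new[0]:.2f} to {new[1]:.2f}")
```

With 10 runs per system the intervals overlap even though the observed success rates differ by 20 percentage points; with 100 runs each, the same rates give clearly separated intervals.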

No quantitative standards, metrics, or procedures exist for evaluating autonomous mobility performance, particularly off-road. As a result, it is difficult to know where deficiencies may exist and where to focus research. Similarly, there is no way to determine whether the algorithms being used are the best available. In the “A-to-B” mobility context, for example, no system-level process exists for benchmarking algorithms described in the literature and evaluating them for incorporation in UGVs. This must represent a major lost opportunity. All off-road perception research is handicapped by the lack of ground truth, of consistent data packages for researchers to use, and of quantitative standards for evaluation.
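A benchmarking process of the kind described needs two ingredients: a common, ground-truthed data package and a fixed scoring rule applied to every candidate algorithm. The sketch below shows that structure with a hypothetical data package (invented step heights and obstacle labels) and two hypothetical threshold detectors; it is not a real off-road perception benchmark.

```python
from typing import Callable, Dict, List, Tuple

# HYPOTHETICAL ground-truthed data package: (measured step height in m, true label),
# where True means the cell really is an obstacle. Values are invented.
DATA: List[Tuple[float, bool]] = [
    (0.05, False), (0.12, False), (0.20, False), (0.28, False), (0.35, True),
    (0.40, False), (0.45, True), (0.55, True), (0.70, True),
]

Detector = Callable[[float], bool]


def threshold_detector(limit_m: float) -> Detector:
    """Hypothetical candidate algorithm: declare an obstacle at or above a height."""
    return lambda height: height >= limit_m


def accuracy(detector: Detector, data: List[Tuple[float, bool]]) -> float:
    """Fraction of cells on which the detector agrees with ground truth."""
    return sum(detector(x) == truth for x, truth in data) / len(data)


def benchmark(candidates: Dict[str, Detector],
              data: List[Tuple[float, bool]]) -> List[Tuple[str, float]]:
    """Score every candidate on the same data and rank them best-first."""
    scores = [(name, accuracy(det, data)) for name, det in candidates.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)


ranking = benchmark({"step >= 0.30 m": threshold_detector(0.30),
                     "step >= 0.50 m": threshold_detector(0.50)}, DATA)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

A single accuracy score is used here only for brevity; a real benchmark would likely report detection and false-alarm rates separately, since a single score hides the trade-off between them.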

The assessment methodology should be designed to assess technology issues in the operational context of FCS and the Objective Force. This assessment methodology should describe the objectives, issues, and analytical methodology required to address the key issues for the UGV within the FCS and Objective Force architectures. The methodology should also identify input and support requirements, key assumptions, and time lines for the assessments.

The assessment methodology should include a series of experiments that initially begin with analyses of concepts and technologies and mature to technology-integration warfighting experiments that approach levels of assessment similar to operational test and evaluation. The level of detail of the assessments should grow with the level of maturity of the UGV technologies and their ability to be integrated into an FCS.

The assessment methodology should be iterative (an approach that some in Army acquisition call spiral development). Experimentation, defined as “an iterative process of collecting, developing and exploring concepts to identify and recommend the best value-added solutions for changes to DOTML-P (doctrine, organization, training, materiel, leadership and people), is required to achieve significant advances in future joint operational capabilities” (VCDS, 2002).

Metrics

The assessment methodology must have appropriate metrics for both operational and technical issues. Metrics are needed at all evaluation levels: technical, tactical, and operational. For example, the evaluation of a Hunter-Killer UGV will require metrics on at least three levels:

- Level 1: technical performance metrics. Examples of technical performance include such things as detection range, probability of detection (PD), probability of false alarm (PFA), time needed to process and send critical C2 data, and ability to communicate with other FCS elements. These metrics can be called measures of performance (MOPs).
- Level 2: tactical effectiveness metrics. Examples of tactical effectiveness are the impact of the UGV on the mean time required by an intelligence officer to acquire a target accurately, the percentage of accurate acquisitions, target selections by a commander, sensor-to-shooter times, and probability of kill (PK). These metrics can be called measures of effectiveness (MOEs).
- Level 3: operational utility metrics. The impact of the UGV on battle outcomes (e.g., force exchange ratios, percentage and numbers of indirect fire kills) is an example of operational utility. These metrics can be called measures of value (MOVs).
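As a minimal sketch of how two of the Level 1 MOPs named above might be computed, the Python below scores a detection trial by counting outcomes per opportunity. That opportunity-based scoring is an assumption; PFA is sometimes defined instead as a false-alarm rate per unit area or time, and the counts shown are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DetectionCounts:
    """Outcome counts from a scored detection trial (opportunity-based scoring)."""
    hits: int                # target present and declared
    misses: int              # target present, not declared
    false_alarms: int        # no target, but declared
    correct_rejections: int  # no target, not declared

    @property
    def pd(self) -> float:
        """Probability of detection: hits / targets presented."""
        return self.hits / (self.hits + self.misses)

    @property
    def pfa(self) -> float:
        """Probability of false alarm: false alarms / non-target opportunities."""
        return self.false_alarms / (self.false_alarms + self.correct_rejections)


# HYPOTHETICAL counts for one sensor configuration.
trial = DetectionCounts(hits=45, misses=5, false_alarms=3, correct_rejections=97)
print(f"PD = {trial.pd:.2f}, PFA = {trial.pfa:.2f}")
```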

It is important to realize that the determination of a single valid technical performance metric, such as the appropriate measure for obstacle negotiation, is nontrivial and may by itself be the basis for extensive research. To ensure that the UGV metrics are relevant to the warfighter, it would seem prudent to follow a process that identifies a warfighter’s goals; derives performance objectives and criteria that relate to these goals (performance objectives are usually expressed in terms of key issues or, as in the Army, essential elements of analysis [EEAs]); and develops appropriate MOPs, MOEs, and MOVs.

A recommended approach for developing UGV metrics should include:

- Development of analytical requirements.
- Determination of subjective metrics. Subjective metrics of importance to the warfighter could relate to warfighter utility or operational issues. Examples of such nonquantifiable metrics include:
  - Usefulness. User assessment of value added, completeness of information, and accuracy of information are examples of usefulness metrics.
  - Usability. Human factors, interoperability, accessibility, and consistency of information are examples of usability metrics.
  - User assessment of performance. Standards compliance, overall capability, bandwidth requirements, and system availability are examples of metrics that may reflect the user’s personal opinion.
- Determination of quantifiable metrics. Quantifiable metrics for technologies being evaluated in an experiment should:
  - Be relevant to the warfighter’s needs (which include system requirements and system specifications).
  - Provide flexibility for identifying new UGV requirements.
  - Be clearly aligned with an objective.
  - Be clearly defined, including the data to be collected for each metric.
  - Identify a clear cause and effect, or audit trail, between the data being collected and the technology being evaluated.
  - Minimize the number of variables being measured, with a process identified for deconflicting data collected from, and perhaps affected by, more than one variable.
  - Identify nonquantifiable effects (e.g., leadership, training) and impacts of system wash-out (i.e., a technology’s individual performance is lost in the host system performance), and control (or reduce) them as much as possible.
- Documentation of each measure.
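One way to make "documentation of each measure" checkable is to record every metric in a fixed structure and test it against the criteria listed above. The sketch below is a hypothetical record format (the class, field names, and example metrics are illustrative, not an Army standard) that flags metrics lacking an objective, a definition, or a list of data to be collected.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Level(Enum):
    MOP = "technical performance"
    MOE = "tactical effectiveness"
    MOV = "operational utility"


@dataclass
class MetricRecord:
    name: str
    level: Level
    objective: str                 # objective the metric is aligned with
    definition: str                # how the metric is computed
    data_items: List[str] = field(default_factory=list)  # data to be collected
    quantifiable: bool = True


def documentation_gaps(m: MetricRecord) -> List[str]:
    """Return the documentation criteria (as listed in the text) that m fails."""
    gaps = []
    if not m.objective.strip():
        gaps.append("not aligned with an objective")
    if not m.definition.strip():
        gaps.append("not clearly defined")
    if m.quantifiable and not m.data_items:
        gaps.append("no data to be collected is specified")
    return gaps


# HYPOTHETICAL records for illustration.
pd_metric = MetricRecord(
    name="probability of detection", level=Level.MOP,
    objective="detect threats before they can engage the unit",
    definition="hits / targets presented",
    data_items=["target ground-truth positions", "UGV detection reports"])
pk_metric = MetricRecord(name="probability of kill", level=Level.MOE,
                         objective="", definition="kills / engagements")

for metric in (pd_metric, pk_metric):
    print(metric.name, documentation_gaps(metric) or "complete")
```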

Some examples of UGV-specific metric-generating issues may include:

- Example operational issues
  - Command and control issues, which may include the impact of UGVs on the command efficiency (e.g., timeliness of orders, understanding of the enemy situation and intentions) of a small unit leader or unit commander and his staff.
  - What is the cognitive workload (e.g., all critical events observed, accuracy of orders) of a small unit leader or unit commander and staff?
  - What can an array of UGVs do that a single UGV cannot?
  - What information needs to be shared to support collaboration among systems (manned and unmanned)?
- Example technical issues
  - Mobility issues, such as those enumerated in the High Mobility Robotic Platform study (U.S. Army, 1998), will provide a basis for UGV mobility metrics. UGV-specific mobility issues, such as the mobility metrics used in the DARPA Tactical Mobile Robot program, should be considered. The measures should be based on the specific applications being evaluated (e.g., logistics follower on structured roads, soldier robot over complex terrain).
  - Other supporting technology issues:
    - How do data compression techniques affect UGV performance?
    - What is the optimal trade-off between local processing and bandwidth?
    - How much of a role should automatic target recognition (ATR) play in perception?

There are many more issues and metrics that need to be defined for Army UGV assessments. The process must be to identify UGV objectives first, then the issues generated by each objective, then the hypotheses for each issue, and finally the measures needed to prove or disprove the hypotheses.
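The objective, issue, hypothesis, and measure ordering is naturally a tree, and walking it yields the audit trail the quantifiable-metric criteria call for. The sketch below is a hypothetical data structure: the objective and hypothesis are invented for illustration, while the issue and measures echo examples given in the text.

```python
from dataclasses import dataclass
from typing import Iterator, List, Tuple


@dataclass
class Hypothesis:
    statement: str
    measures: List[str]


@dataclass
class Issue:
    question: str
    hypotheses: List[Hypothesis]


@dataclass
class Objective:
    goal: str
    issues: List[Issue]


def audit_trail(objectives: List[Objective]) -> Iterator[Tuple[str, str, str, str]]:
    """Yield (objective, issue, hypothesis, measure) for every measure, so each
    measure can be traced back to the objective that motivated it."""
    for obj in objectives:
        for issue in obj.issues:
            for hyp in issue.hypotheses:
                for measure in hyp.measures:
                    yield obj.goal, issue.question, hyp.statement, measure


# HYPOTHETICAL plan; the issue and measures echo examples in the text.
plan = [Objective(
    goal="Improve small-unit command and control with UGVs",
    issues=[Issue(
        question="What is the cognitive workload of a small unit leader?",
        hypotheses=[Hypothesis(
            statement="Adding a UGV does not reduce the share of critical events observed",
            measures=["critical events observed (%)", "accuracy of orders"])])])]

for row in audit_trail(plan):
    print(" -> ".join(row))
```

Because measures are only reachable through an objective, this structure cannot hold a measure with no stated purpose, and an objective with no measures produces no rows, which flags it as not yet testable.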

A major goal going forward must be a science-based program for the collection of data sufficient to develop predictive models for UGV performance. These models would have immediate payoff in support of system engineering and would additionally provide a sound basis for developing concepts of operation (reflecting real vehicle capabilities) and establishing requirements for human operators, e.g., how many might be required in a given situation. Uncertainties with regard to these last represent major impediments to eventual operational use.
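At its simplest, a predictive performance model is a fit of observed outcomes against conditions. The sketch below fits an ordinary least-squares line to hypothetical trial data (a terrain difficulty index against operator interventions per kilometer). Both variables and the linear form are assumptions for illustration; a real model would need more predictors and validation against held-out data.

```python
from typing import Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y = a + b*x; returns (a, b)."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired observations")
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b


# HYPOTHETICAL trial data: terrain difficulty index vs. operator
# interventions per km observed in instrumented runs.
difficulty = [1, 2, 3, 4, 5]
interventions_per_km = [0.5, 0.9, 1.6, 2.0, 2.5]

a, b = fit_line(difficulty, interventions_per_km)
print(f"interventions/km ~ {a:.2f} + {b:.2f} * difficulty")
print(f"predicted at difficulty 6: {a + b * 6:.2f}")
```

A prediction of intervention rate is the kind of quantity that could feed estimates of how much operator attention a vehicle needs, though translating it into operator counts would require further, validated models.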

MODELING AND SIMULATION

Modeling and simulation (M&S) is an essential tool for analyzing and designing the complex technologies needed for UGVs. Much has been written on the use of simulations to aid in system design, analysis, and testing. The DOD has also developed a process for simulation-based acquisition. However, little work has been done to integrate models and simulations into the system engineering process to assess the impact of various technologies on system performance and life-cycle costs.

To fully realize the benefits of M&S, the use of M&S tools must begin in the conceptual design phase, where S&T initiatives have the most impact (Butler, 2002). For example, early in the conceptual design phase M&S can be used to evaluate a technology’s impact on the effectiveness of a UGV concept, determine whether all the functional design specifications are met, and improve the manufacturability of a UGV. By using simulations in this fashion, S&T programs can support significant reductions in design cycle time and the overall lifetime cost of future UGVs. Simulations provide a capability to perform experiments that cannot be realized in the real world due to physical, environmental, economic, or ethical restrictions. Because of this, they should play a very important role in implementing the assessment methodology used to assess UGV concepts and designs.
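As an illustration of using simulation to gauge a technology's impact on a concept, the sketch below is a toy constructive (Monte Carlo) simulation of a Hunter-Killer engagement. It assumes, hypothetically, that each engagement opportunity succeeds only if an independent detection (probability PD) is followed by an independent kill (probability PK), and compares a baseline sensor with an improved one. The model also has a closed form, which is included to show the simulation being checked against a known answer before it is trusted.

```python
import random


def mission_success_rate(pd: float, pk: float, opportunities: int,
                         runs: int = 20_000, seed: int = 1) -> float:
    """Monte Carlo estimate of the chance that at least one of `opportunities`
    engagement opportunities ends in a detection followed by a kill."""
    rng = random.Random(seed)
    successes = 0
    for _ in range(runs):
        for _ in range(opportunities):
            if rng.random() < pd and rng.random() < pk:
                successes += 1
                break
    return successes / runs


def analytic_rate(pd: float, pk: float, opportunities: int) -> float:
    """Closed form for the same toy model: 1 - (1 - pd*pk)**opportunities."""
    return 1 - (1 - pd * pk) ** opportunities


# HYPOTHETICAL concept comparison: baseline vs. improved sensor PD, same PK.
for label, pd in (("baseline sensor", 0.6), ("improved sensor", 0.8)):
    est = mission_success_rate(pd, pk=0.5, opportunities=3)
    print(f"{label}: simulated {est:.3f}, closed form {analytic_rate(pd, 0.5, 3):.3f}")
```

Real UGV simulations rarely have a closed form, which is why the accuracy of the underlying models matters so much; the agreement check here stands in for that verification step.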

Just as with training, UGV system experimentation could be supported with any one or mix of the following types of simulations:

- Live simulations: real people operating real systems.
- Virtual simulations: real people operating simulated systems.
- Constructive simulations: simulated people operating simulated systems (note: real people stimulate, or make inputs to, these simulations, but they are not involved in determining the outcomes).

Simulations, however, are meaningful only if the underlying models are adequately accurate and if the models are evaluated using the proper simulation algorithms. Technical experiments will be difficult to conduct, since data is lacking for detailed engineering models of UGVs and detailed multispectral environment representations. The multispectral environment includes detailed terrain elevation (<1-meter resolution) data, feature (natural and manmade) data, and effects of weather (including temperature).

Both the use of UGVs and the FCS in an operational environment are relatively new concepts, so little data has been accumulated that could be used to develop verified and validated models. The Army has made good strides toward overcoming similar deficits in this area by extrapolating the results of laboratory experiments, using information from similar fielded systems, and applying subject matter expertise from the Joint Virtual Battlespace at the Joint Precision Strike Demonstration Project Office (DMSO, 2001).

While existing M&S tools are adequate for the near term, complex UGV systems in the far term are likely to require M&S tools designed specifically to address system engineering issues. In the future, for example, material and structural systems will have sensors and actuators embedded so that the material serves multiple functions simultaneously (e.g., solving problems to achieve particular thermal properties, electromagnetic properties, sensing properties, antenna functions, mechanical and strength functions, and control functions). The kinds of mathematical and numerical tools that would be required to jointly optimize these disciplines, or to develop mathematical models that are appropriate for such system design efforts, are not currently being investigated. These tools would be invaluable aids to determine performance-limiting factors and to integrate technologies into multiple disciplines.