6

Methods for Predicting and Assessing Unintended Effects on Human Health

This chapter focuses on current and prospective approaches for predicting and assessing unintended effects on human health from genetically modified (GM) foods, including those that are genetically engineered (GE), both before and after commercialization. (For an explanation of the distinction between GM and GE foods, see Chapter 1.)

BACKGROUND

The major challenges to predicting and assessing unintended adverse consequences—such as toxicity, nutritional deficiency, and allergenicity—stem from limitations in available data as well as in current scientific knowledge. For example, information about the range of normal compositional variability, especially in plant-derived food, is very limited. This significantly constrains the ability to distinguish true compositional differences of a “new” food from the normal variation found among its antecedents.

To the extent that it cannot be determined whether the composition of a food has changed, it also cannot be predicted whether such changes have either adverse or beneficial health consequences. Even in cases where food composition changes are known, current understanding of the potential biological activity in humans for most food constituents is very limited. This becomes most evident when considering mixtures or diets consumed by human populations and then attempting to predict adverse health consequences from chronic intake of specific foods.

Thus the present state of knowledge requires relying on a range of toxicological, metabolic, and epidemiological sciences to assess the significance of unintended health effects, using both targeted and profiling approaches (see Chapter 4). Employing a combination of these approaches builds on what is known and will increase the ability to detect or even prevent unsuspected consequences.

Current approaches likely will be limited when applied to new GE foods with substantially altered composition. Consequently, a conceptual approach is presented in this chapter, grounded in the biological basis of adverse effects on human health and relying significantly on robust information regarding exposure.

Despite the power of methods suggested by this conceptual approach and their ability to identify GE foods likely to have adverse effects, no method can prove the absence of an unintended effect. This is particularly true given the current state of knowledge regarding the exposure patterns of U.S. populations and how single foods and mixtures of food components affect health. Thus requiring proof that there is no possibility of an unintended effect is not realistic for an assessment standard.

The general conceptual approach for predicting and detecting adverse health outcomes discussed in this chapter is based on a risk assessment strategy proposed by the National Research Council (NRC, 1983) and relies on “substantial equivalence” to illustrate distinctions that may exist between foods modified by genetic engineering and those modified through traditional (non-GE) methods. This approach rests on the likelihood and functional significance of adverse outcomes of unintended or intended modifications being determined by several factors. These factors relate to the nature of the modification, such as whether it is quantitatively large or small and whether it is novel, and the characteristics of the compositional changes in question, such as dose-response outcomes and the nature and extent of likely exposures. Additionally, it considers population characteristics related to susceptibility, such as age, genetics, and nutritional status.

STAGES IN THE DEVELOPMENT OF GE FOODS

The development of a GE food involves a complex process that can be viewed as occurring in three stages: gene discovery, selection, and product advancement to commercialization. The safety of GE food should be assessed at all stages of its development (Taylor, 2001).

Starting with an initial product concept, the gene discovery stage involves screening genes from many sources and selecting those that might contribute to a marketable result. Ideally, safety assessment should begin during this early gene-selection phase by taking into account each gene’s source, previous consumer exposure to the source, and whether there is a history of safe use for source material, the gene, and its specific products.

In the case of GE plants, animals, and microbes, the next stage of the developmental process is line selection. Plants, for example, progress through a variety of steps in the greenhouse and field during which the biological and agronomic equivalence of the GE crop should be compared with its traditional counterpart.

These evaluations do not specifically focus on safety assessment, but many potential products with unusual characteristics are eliminated during this stage. This elimination process enhances the likelihood that a safe product will be generated.

Finally, in the precommercialization stage for both GE plants and animals, the GE product should go through a detailed and specific safety assessment process. This process should focus on the safety of the products associated with the introduced gene and any other likely toxicological or antinutritional factors associated with the source of the novel gene and the product to which it was introduced. The safety of the GE product for both human and animal feeding purposes must be considered.

SUBSTANTIAL EQUIVALENCE AND ITS ROLE IN SAFETY ASSESSMENT

Given the relative novelty of genetic engineering, few examples are available that involve safety assessments for GE food, especially those with substantially altered composition that are the focus of this report. The use of substantial equivalence is one approach used to illustrate distinctions that may exist between foods modified by genetic engineering compared with traditional (non-GE) methods for modifying food composition.

The concept of substantial equivalence provides a basis to plan a safety assessment designed to determine if GE foods are as safe as their traditional counterparts (FAO/WHO, 1996; IFT, 2000; OECD, 1993). It was developed in part because traditional toxicological approaches for evaluating the safety of food additives, pesticide residues, and contaminants do not work well in evaluating the safety of whole food, including GE food, because of the difficulties encountered in exaggerating the dosages of whole food in the diets of experimental animals.

The concept of substantial equivalence is frequently misinterpreted because of the mistaken perception that the determination of substantial equivalence is the end point of a safety assessment, rather than the starting point. From a safety assessment perspective, the concept of substantial equivalence merely provides a framework for focusing any safety studies on the areas of greatest potential concern. Current GE varieties of traditional crops, such as corn and soybeans, are altered very little from their traditional counterparts. Thus the safety evaluation focuses on how GE crops differ from their traditional counterparts and further assumes that the unchanged components are just as safe as the traditional counterparts (see Chapter 4). With the concept of substantial equivalence, the GE food, or food component, is compared with its traditional counterpart for such attributes as origins of genes, phenotypic characteristics, composition (including key nutrients, antinutrients, and allergens), and consumption patterns. More recently, the phrase “substantial equivalence” has evolved into “comparative safety assessment” to encompass a broader meaning that includes an analytical comparative component and a safety testing component of identified differences (Kok and Kuiper, 2003).

Three outcomes are possible from the substantial equivalence comparisons (FAO/WHO, 1996). The subsequent examples are intended to illustrate the types of adverse consequences that may occur and not to signal a “clear and inevitable danger” of food derived by deliberate genetic modifications of food by traditional or more contemporary technologies.

One possible outcome is that a GE food could be judged to be substantially equivalent to its conventional counterpart. In this case, no further safety testing would be required. However, this possibility is rather unlikely to occur in cases of GE foods that have substantially altered compositional traits compared with their conventional counterparts.

In other cases, GE foods may be judged to be substantially equivalent to their conventional counterparts except for specific differences, including the introduced traits. In this situation the safety testing likely would focus on the safety of these differences and primarily on the introduced trait or gene product. Examples of this outcome would be Bt corn and Roundup Ready soybeans. These products are those with traits, such as enhanced nutrients or reduced toxins, that usually are expressed by single genes and that share commonality with the vast majority of currently commercialized GE plants.

Finally, the GE food could be judged not to be substantially equivalent to the conventional food or food component. Examples would include products with dramatically altered food composition, such as those aimed at improved nutritional profiles. More extensive safety assessments would be required for such products, including a more rigorous nutritional and toxicological assessment. Few products from this final category have been released into the commercial marketplace, so the nature of the safety assessment process in such cases has not yet been addressed by domestic and worldwide regulatory agencies. These safety assessments would need to be conducted in a rigorous but flexible manner, depending on the nature of the novel food product.

During the process of substantial equivalence comparisons, extensive compositional analyses are conducted on the GE crop to compare it with the conventional counterpart. The selection of an appropriate comparator or comparators is obviously a key factor in this process. Comparisons should be made to the near isogenic parental variety from which the GE food was derived, and ideally to major commercial varieties of the same food, including varieties that are important in certain parts of the world where the crop will be exported.
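The comparison described above can be pictured as a simple range check. The following is a minimal sketch, not a description of any regulatory procedure: the function name, the analyte names, and every number are hypothetical, and it assumes that the observed minimum and maximum across the chosen comparators are an acceptable stand-in for the range of normal variation. A real assessment would rely on replicated field trials and formal statistical methods.

```python
from statistics import mean


def flag_out_of_range(ge_values, comparator_values):
    """Return analytes whose GE mean lies outside the comparator range.

    ge_values: dict mapping analyte name -> replicate measurements for the GE line
    comparator_values: dict mapping analyte name -> measurements pooled across
        the near-isogenic parent and commercial comparator varieties
    """
    flagged = {}
    for analyte, replicates in ge_values.items():
        reference = comparator_values[analyte]
        low, high = min(reference), max(reference)
        ge_mean = mean(replicates)
        if not low <= ge_mean <= high:
            flagged[analyte] = (ge_mean, low, high)
    return flagged


# Hypothetical measurements (arbitrary units), for illustration only.
ge_line = {"protein": [39.0, 41.0], "phytic_acid": [3.0, 3.5]}
comparators = {"protein": [36.0, 38.5, 42.0], "phytic_acid": [1.0, 1.5, 2.25]}

flagged = flag_out_of_range(ge_line, comparators)
print(flagged)
```

In this made-up data set only the second analyte falls outside the comparator range, so it alone would be carried forward for further evaluation. The outcome depends directly on which comparators are pooled, which is why comparator selection matters so much.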

As noted previously, safety assessments typically focus on novel gene products and proteins, as well as any components that might be created in a GE food as a result of an enzymatic protein activity, or if the food has an effect on the metabolism of the host organism. The approaches to evaluating the safety of novel gene products are discussed later in this chapter. Limitations to assessments based on the comparisons that can be made and sampling strategies are discussed in Chapters 3 and 4.

Evaluation of Substantial Equivalence with Other Predictable Changes

Applying the concept of substantial equivalence makes it possible to focus on the intentionally introduced traits and the novel proteins produced from the inserted genes. However, other predictable differences also may be identified. The possibility of altered metabolic profiles from the introduction of novel proteins with enzymatic activity is predictable for some GE food. In the case of golden rice, enhanced levels of carotenoids are produced as a direct, intended consequence of the genetic modification (Beyer et al., 2002). While this compositional difference in golden rice is intended to be beneficial to health (Nestle, 2003), the presence of the altered levels of carotenoids must also be part of the safety assessment.

Applicability to Plant, Animal, and Microbial Organisms

The framework of substantial equivalence has also been applied to GE animals and microorganisms. Assessment of the safety of the introduced traits or novel proteins can be approached in a similar fashion, no matter what the source of the inserted gene. This approach could also be applied to the identification of compositional differences and the safety assessment of food produced by all means of genetic modification.

CURRENT SAFETY STANDARDS FOR GE FOODS

On a worldwide basis, several organizations, including the Food and Agriculture Organization of the United Nations (FAO), the World Health Organization (WHO), and the Organization for Economic Cooperation and Development, have established the background for the safety assessment of GE food (FAO/WHO, 2000; OECD, 2000). In general, these organizations have concluded that GE products are not inherently less safe than those developed by traditional breeding (IFT, 2000). Furthermore, food safety considerations are similar to those arising from the products of traditional breeding other than food additives, which are subject to different regulations and testing procedures than food products.

In the United States, the accepted standard of safety for foods produced from GE crops is the same as that for other similar food products. Under U.S. law for food additives, there must be a reasonable certainty that no harm will result from intended uses under anticipated conditions of consumption (Federal Register, 1992). There is no burden on the food manufacturer to demonstrate the safety of food products that are not food additives. However, the Food and Drug Administration (FDA) can take action against a food, including GE food, if the food presents a demonstrable safety risk.

SAFETY ASSESSMENT PRIOR TO COMMERCIALIZATION

Safety of Ingested DNA

As described in Chapter 2, genetic transfer between species has been shown to occur naturally as well as through human intervention. The deoxyribonucleic acid (DNA) present in plants, microorganisms, and animals used as food is ingested in significant quantities. Further, consumption of DNA, typically 0.1 to 1.0 g per day from food sources, is not known to be toxic (Doerfler and Schubbert, 1997). Additionally, the amount of DNA from a given GM food would likely represent less than 1/250,000 of the total amount of DNA consumed from all food sources (FAO/WHO, 2000).
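To give a sense of scale, the two figures quoted above can be combined in a short calculation. This is an illustrative sketch only: the function name is hypothetical, and the code does nothing more than multiply the cited daily intake range by the cited upper-bound fraction.

```python
from fractions import Fraction

# Figures quoted in the text (Doerfler and Schubbert, 1997; FAO/WHO, 2000).
DAILY_DNA_INTAKE_G = (Fraction(1, 10), Fraction(1))  # 0.1 to 1.0 g per day
GM_FRACTION_UPPER_BOUND = Fraction(1, 250_000)       # "less than 1/250,000"


def gm_dna_upper_bound_micrograms(total_dna_g):
    """Upper bound on daily DNA intake from one GM food, in micrograms."""
    grams = total_dna_g * GM_FRACTION_UPPER_BOUND
    return grams * 1_000_000  # convert grams to micrograms


low, high = (gm_dna_upper_bound_micrograms(g) for g in DAILY_DNA_INTAKE_G)
print(f"Less than {float(low):.1f} to {float(high):.1f} micrograms per day")
```

On these figures, DNA from a given GM food would amount to less than roughly 0.4 to 4 micrograms per day, against a total daily DNA intake of 0.1 to 1.0 g.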

However, the possibility of transferring and incorporating novel genes from GM foods into cells has been investigated in animal models, humans, and microorganisms (gut bacteria). In model experiments in which mice were orally administered high doses of bacterially derived DNA, test DNA fragments were apparently incorporated into bacterial and mouse cells (Schubbert et al., 1998). This report contrasts with others in which no transfer or only a low frequency of transfer was observed (Biosafety Clearinghouse, 2003). Furthermore, the significance of the observations of Schubbert and coworkers (1998) has been seriously questioned by others (Beever and Kemp, 2000).

As pointed out previously (WHO, 2000), the transfer of DNA from GM plants into microbial or mammalian cells, under normal circumstances of dietary exposure, would require all of the following conditions to exist:

- Relevant (potentially hazardous) genetic material in the plant DNA would have to be released from the plant cells, presumably as linear fragments.

- The released genetic material would have to survive digestion by nucleases both in the plant and in the gastrointestinal tract.

- The genetic material, once exposed to the gut, would have to compete for uptake with DNA from conventional foods.

- The recipient cells would have to be competent for transformation (uptake of the DNA), and the genetic material would have to survive enzymatic degradation by normal cellular mechanisms.

- The genetic material would have to be incorporated into the host DNA by rare enzymatic events.

The consequences of uptake of DNA by somatic mammalian cells differ from those of uptake of DNA by microorganisms, as DNA in mammalian somatic cells is not transmitted to subsequent generations, but in microbes it may be. The vast majority of known bacteria are not naturally transformable. No evidence exists for the transfer to and expression of plant or animal genes in microorganisms under natural conditions. Nielsen and coworkers (1998) did observe that plant genes could be transferred to bacteria under laboratory conditions only if homologous recombination was possible. In summary, the safety of GE products should be predicated on the characteristics of the novel protein or other product expressed by the gene, rather than on the safety of ingesting DNA or the possibility of horizontal transfer of novel genetic material to humans or gastrointestinal microorganisms.

Safety Evaluation of Marker Genes and Their Products

Products of marker genes are obvious predictable differences that should be highlighted in initial substantial equivalence comparisons. In addition to principal gene products, GE foods often contain antibiotic resistance marker genes or other marker genes that remain from the product development process. The most common antibiotic resistance marker gene expresses an enzyme called neomycin phosphotransferase II.

The safety of commonly used antibiotic resistance markers has been well established (WHO, 1993, 2000). However, a concern exists that antibiotic resistance might be transferred from a GE plant cell to intestinal bacteria in humans. Expert groups have concluded that there is no evidence that the antibiotic resistance markers currently in use pose a health risk to humans or domestic animals (WHO, 1993, 2000).

Several reasons exist for the well-established safety of antibiotic marker genes in GE foods, including:

- the lack of any evidence for the transfer of antibiotic resistance to intestinal bacteria or dietary pathogens;

- the extremely low theoretical likelihood of such transfers; and

- the use of antibiotic resistance markers for antibiotics that have limited clinical applications, such as neomycin (WHO, 2000).

While there has been an increase in the prevalence of antibiotic-resistant bacteria, it cannot be attributed to the use of antibiotic resistance markers in GE foods.

Increasingly, other methods are being employed in agricultural biotechnology that avoid the incorporation of antibiotic resistance marker genes into the commercial product. These methods include removing the antibiotic resistance marker gene after successfully transferring the desired genetic trait, or using alternative marker genes in the genetic transformation. If alternative marker genes are used, the products of these genes would also need to be evaluated for safety. Since limited experience exists with such alternative marker genes, the safety of these gene products has not yet been well established.

As previously stated, the safety of GE products should be predicated on the characteristics of the novel protein or other product expressed by the gene, rather than on the safety of ingesting DNA or the possibility of horizontal transfer of novel genetic material to humans or gastrointestinal microorganisms.

Safety Assessment of Novel Gene Products

Assessing the Potential Toxicity of GE food

Toxicological studies in animals are considered on a case-by-case basis as part of assessing the safety of GE food (Kuiper et al., 2001). The demonstration of a lack of an amino acid-sequence homology of a novel protein to known protein toxicants and rapid proteolytic degradation under simulated mammalian conditions of digestion are often deemed sufficient to presume the safety of a novel protein. However, subchronic animal toxicological studies have also been conducted on some of the novel proteins and on GE food. As noted previously, the design and interpretation of animal toxicological studies with whole food, including GE food, is challenging.

Methods exist for detecting the toxicity of chemicals in premarket evaluations. FDA has compiled a set of guidelines for toxicity testing of proposed food additives (OFAS, 2001). Similarly, the U.S. Environmental Protection Agency (EPA) has developed a number of guidelines for the toxicology assessment of pesticides, including a number relevant to the health effects of pesticides in food (OPPTS, 1996).

Present guidelines, with the exception of the oral acute toxicity test, have not been applied to the assessment of currently approved GE foods. The traditional toxicological tests may be more relevant to the next generation of GE foods that will be substantially different in composition from traditional counterparts. However, application of the tests to whole food is difficult with existing methodologies, so such testing could likely be applied only to specific unique components identified in the GE variety.

Although much testing is done in vitro, most involves feeding studies with whole animals. These studies attempt to minimize the numbers of animals used because of animal welfare concerns and because of costs. Most strategies rely on high doses of the agent under study to compensate, in part, for these limitations. Generally toxicologists first determine the maximum tolerated dose (MTD) of a substance, that is, a sufficiently high dose to cause an adverse effect, but not death. Levels close to and below the MTD are tested. This procedure is designed to maximize the tests’ statistical power, but it is not without controversy since testing at the MTD induces toxic effects that can cause physiological alterations to the animals that are not relevant in the case of humans exposed at much lower levels.

In a number of rare cases, animal models have produced results that are not biologically relevant to humans at all, but generally these models have been workable. However, this approach was designed to assess conventional food additives and pesticides, not the effects of macronutrients or other food components that are difficult to isolate from whole food. Feeding food at the MTD is not feasible due to the high mass and volume of intake required, which would confound the results because of excessive caloric intake and other probable dietary imbalances.

Occasionally, subchronic toxicity tests are conducted on GE food (Kuiper et al., 2001), although such testing is not typically part of the safety assessment approach used by commercial seed companies. In these tests the GE food is fed generally to rats or mice for at least 28 days (Kuiper et al., 2001).

Feed consumption, body weight, organ weights, blood chemistries, and histopathology are among the parameters that can be assessed in such experiments. However, the complexities involved in the design of subchronic toxicity tests complicate the interpretation of the results (Kuiper et al., 2001). From a practical perspective, subchronic toxicity tests likely can only be performed with the whole GM food (or some significant component of it, such as the oil fraction) because purification of sufficient quantities of the novel protein would usually be extremely difficult.

The difficulties involved in the extraction of specific components from food for testing are illustrated by the bacterially produced Cry9C protein in StarLink corn mentioned earlier. Moreover, traditional toxicological approaches have never been proven to have utility for testing complex mixtures, including whole food. The design of tests for food components will need to be informed by the fact that food components always occur as part of a complex mixture (see Chapter 4). The toxicity of any individual compound in food could be offset by other factors that are protective, such as those that prevent exposure by binding to dietary fiber or natural antioxidants. Likewise, the toxicity could be enhanced, for example, by facilitating absorption or by inclusion of natural substances that act via the same mechanism.

A large effort is under way to develop new microarray technologies (see Chapter 4) in order to examine patterns of gene expression that are associated with various types of toxicity, such as immune system response, receptor biology, signal transduction, protein modification, membrane transport, growth and development, metabolism, oxidative stress, and regulation of the cell cytoskeleton (Pennie, 2002).

Assessing the Potential Acute Toxicity of Novel Proteins

Most proteins are unlikely to be acutely toxic, particularly when ingested. Nevertheless, assessing the acute toxicity of the novel proteins introduced into GE food is one approach to preventing unintended health consequences, and evaluation of the acute oral toxicity of a GE food and the novel proteins it may contain should be considered. Additionally, a bioinformatics database containing the amino acid sequences of known protein toxins should be developed and maintained. This database could then be used to screen novel proteins for sequence similarity to known protein toxins. Further research will be needed to develop appropriate searching strategies to use with the database.

Currently the acute toxicities of novel proteins are evaluated as part of the overall safety assessment in certain circumstances. These experiments typically involve oral administration of high doses of the novel protein by stomach tube to either rats or mice. Because most proteins are not toxic, the evaluation of the acute toxicity of novel proteins has not been particularly revealing. Examples of this application can be found for 5-enolpyruvylshikimate-3-phosphate synthase (Harrison et al., 1996) and neomycin phosphotransferase II, a marker gene product (Fuchs et al., 1993). The assessment results from these two studies indicated that the products containing the marker genes were as safe and nutritious as their conventional counterparts.

The likelihood of the unintentional introduction of a novel, toxic protein into a GE food is extremely low, simply because proteins are rarely toxic, with a few noteworthy exceptions, such as botulinum toxins and staphylococcal enterotoxins. Appropriate methods exist to assess the acute toxicity of novel proteins, and they can be implemented on a case-by-case basis as necessary.

The use of subchronic and chronic toxicity testing in animals is not currently recommended as part of the safety assessment approach. Subchronic testing may be considered in cases where the novel protein has no history of safe use (e.g., proteins with lectins that may have neurotoxic actions). However, the current need for comparatively large quantities of material precludes the use of the purified novel protein in long-term animal toxicology studies. Thus such studies would involve the use of whole GE food, which presents challenges for experimental design and interpretation.

Assessing the Possible Allergenicity of Novel Proteins

The identification of an unanticipated allergic response to a newly introduced protein in the diet is expected to be a rare event. If such responses were not anticipated from the premarket testing phase, the identification of these rare events would depend upon medical diagnosis of the allergic response and proper attribution of this response to the GE food, as discussed in Chapter 5. However, clinical approaches to the detection of rare allergenic reactions are questionable, so the focus of current assessment approaches has been on premarket assessment.

Premarket Allergenicity Assessment

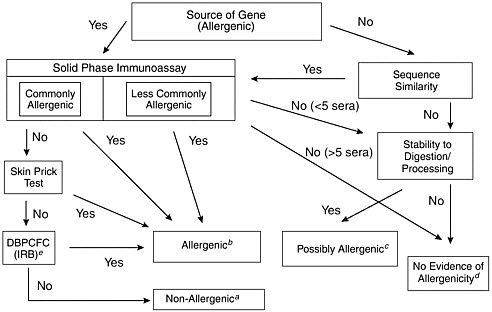

Virtually all known food allergens are proteins, so the allergenic potential of all novel proteins must be determined. In 1996 a decision-tree approach for assessing the potential allergenicity of GE food was developed that relied upon evaluating the source of the gene, the amino acid sequence homology of the newly introduced protein, the immunoreactivity of the new protein with serum immunoglobulin E (IgE) from individuals with known allergies to the source of the transferred genetic material (specific serum screening), and the various physicochemical properties of the newly introduced protein, such as heat stability and digestive stability (Metcalfe et al., 1996).

This decision-tree approach, as modified by FAO/WHO (2000), is depicted in Figure 6-1. Additional criteria have been suggested to assess the allergenicity of GE food, including comparing the overall structural identity with known allergens, targeted serum screening, and animal models (FAO/WHO, 2001). The Codex Ad Hoc Intergovernmental Task Force on Safety Assessment of Genetically Modified Foods recommended using only information on the source of the gene, structural comparisons with known allergens (both overall structural identity of 35 percent or greater and amino acid sequence identity of eight contiguous amino acids or more), specific serum screening, and pepsin resistance because targeted serum screening and animal models have not yet been validated for use in such applications (Codex Alimentarius Commission, 2002). The Report of the Fourth Session of the Codex Ad Hoc Intergovernmental Task Force on Foods Derived from Biotechnology (FAO/WHO, 2003) reviewed these assessment guidelines for inclusion in the Draft Guideline for the Conduct of Food Safety Assessment of Foods Produced Using Recombinant-DNA Microorganisms.
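The two structural comparisons named above (a run of eight identical contiguous amino acids, and an overall identity of 35 percent or greater) can be sketched in a few lines. The sketch below rests on several assumptions: the function names and the sequences are hypothetical and do not represent real allergens, a hit on either criterion is treated as a flag, and the identity measure is a crude ungapped comparison standing in for a proper sequence alignment tool. It is not the procedure specified by Codex or FAO/WHO.

```python
def shares_contiguous_match(query, allergen, k=8):
    """True if query and allergen share an identical run of k residues."""
    kmers = {allergen[i:i + k] for i in range(len(allergen) - k + 1)}
    return any(query[i:i + k] in kmers for i in range(len(query) - k + 1))


def best_ungapped_identity(query, allergen):
    """Highest fraction of identical residues over all ungapped offsets,
    relative to the length of the shorter sequence (a simplification)."""
    shorter = min(len(query), len(allergen))
    if shorter == 0:
        return 0.0
    best = 0
    # offset is the position in allergen that query[0] is aligned to
    for offset in range(-(len(query) - 1), len(allergen)):
        matches = sum(
            1
            for i, residue in enumerate(query)
            if 0 <= i + offset < len(allergen) and allergen[i + offset] == residue
        )
        best = max(best, matches)
    return best / shorter


def screen(query, allergens, k=8, identity_threshold=0.35):
    """Return names of database entries flagged by either criterion."""
    hits = []
    for name, sequence in allergens.items():
        if (shares_contiguous_match(query, sequence, k)
                or best_ungapped_identity(query, sequence) >= identity_threshold):
            hits.append(name)
    return hits


# Made-up sequences for illustration; not real allergen entries.
allergen_db = {
    "hypothetical_allergen_A": "MKTLLVAGHWQERTYPLKD",
    "hypothetical_allergen_B": "GGSSGGSSGGSSGGSS",
}
novel_protein = "AAAAVAGHWQERTAAAA"
print(screen(novel_protein, allergen_db))
```

Here the made-up novel protein shares the nine-residue run VAGHWQERT with the first entry, so only that entry is flagged. A flag of this kind identifies a candidate for further testing, such as specific serum screening, and is not in itself evidence of allergenicity.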

As noted, the likelihood of the unintentional introduction of an allergen into a GE food is low, but it should be evaluated in every case. Although no single test can provide complete assurance that a novel protein from a source with no history of allergenicity will not act as an allergen, the combined application of all of the approaches discussed above can provide reasonable assurance that a novel protein has a low probability of acting as one. The various tests and criteria used to evaluate the potential allergenicity of GE food have been thoroughly discussed elsewhere (FAO/WHO, 2000, 2001; Metcalfe et al., 1996; Taylor, 2002; Taylor and Hefle, 2002).

Several approaches should be considered to improve the assessment of the potential allergenicity of novel proteins. First, since structural comparisons between novel proteins and known allergens are predicated on the availability of a sequence homology database of known allergens, a publicly available database, as mentioned above, should be created for use by researchers, regulators, and agricultural biotechnology companies. This database would ideally contain known allergens from all environmental sources, including food.

A scientifically rigorous approach should be used to determine which proteins should be included in this database as known allergens. Research is recommended on searching strategies to develop sound, discriminating approaches that identify potential allergens. Because pepsin resistance seems to be a characteristic of many food allergens, a need exists to standardize methods for assessing this attribute.

In those cases in which genes are obtained from known allergenic sources or the sequence comparison yields potentially significant similarities to known allergens, others have recommended specific serum screening as an approach to determine if the novel protein is indeed a potential allergen by virtue of its ability to bind serum IgE antibodies from humans with the specific allergy in question. However, the ability to conduct specific serum screening is limited by the lack of access to sera from individuals with well-characterized allergies to various food or environmental sources. Approaches to address this constraint should be developed. Additionally, standardized approaches for conducting specific serum screening must be developed, particularly due to the occurrence of false positive reactions in testing.

FIGURE 6-1 Assessment of the allergenic potential of foods derived from genetically modified crop plants. Adapted from a decision-tree approach developed by the International Food Biotechnology Council and Allergy and Immunology of the International Life Sciences Institute (Metcalfe et al., 1996).

(a) The combination of tests involving allergic human subjects or blood serum from such subjects would provide a high level of confidence that no major allergens were transferred. The only remaining uncertainty would be the likelihood of a minor allergen affecting a small percentage of the population allergic to the source material.

(b) Any positive results obtained in tests involving allergic human subjects or blood serum from such subjects would provide a high level of confidence that the novel protein was a potential allergen. Food containing such novel proteins would need to be labeled to protect allergic consumers.

(c) A novel protein that either has no sequence similarity to known allergens or has been derived from a less commonly allergenic source with no evidence of binding to immunoglobulin E (IgE) from the blood serum of a few allergic individuals (less than 5), but that is stable to digestion and processing, should be considered a possible allergen. Further evaluation would be necessary to address this uncertainty. The nature of the tests would be determined on a case-by-case basis.

(d) A novel protein with no sequence similarity to known allergens and that was not stable to digestion and processing would have no evidence of allergenicity. Similarly, a novel protein expressed by a gene obtained from a less commonly allergenic source and demonstrated to have no binding with IgE from the blood serum of a small number of allergic individuals (between 5 and 14) provides no evidence of allergenicity. Stability testing may be included in these cases. However, the level of confidence based on only two decision criteria is modest. It has been suggested that other criteria should also be considered, such as the level of expression of the novel protein (FAO/WHO, 2001).

(e) DBPCFC: Double-blind Placebo-controlled Food Challenge; IRB: Institutional Review Board.

A second approach to improving methods to assess the allergenicity of novel proteins involves animal models. Animal models have been studied for IgE-mediated food allergy, but regulatory agencies have not proposed or instituted any whole animal or in vitro assays for the prediction of food allergy from novel proteins. Efforts are under way to develop animal models for the assessment of IgE-mediated food allergenicity, including the Brown Norway rat, several mouse models, a dog model, and a swine model (Dearman et al., 2000; Ermel et al., 1997; Ito et al., 1997; Knippels and Penninks, 2003; Knippels et al., 1999; Li et al., 1999). However, none has proven satisfactory (Kimber et al., 2003) because of limitations in extrapolating responses from animal models of food allergenicity to humans (Helm, 2002).

The ideal animal test model possesses several attributes: it should produce a significant amount of IgE or other Th2-specific antibody class; it should tolerate most food proteins, especially those that are known nonallergens, such as ribulose bisphosphate carboxylase; and it should develop allergen-specific antibodies on oral exposure to known food allergens.

Currently used animal models have not been validated (FAO/WHO, 2001); therefore, a need exists to determine if any of these animal models can reliably discriminate between known food allergens and known nonallergens. This validation has been hindered to some extent by disagreement over which known food allergens and nonallergens should be tested. Also, the purified allergens and nonallergens have not been obtained in the quantities necessary for validation. Some debate exists about the appropriate route of exposure and whether the use of adjuvants should be permitted. More research is needed to make these determinations.

A third approach to assessing the allergenicity of novel proteins is targeted serum screening, which involves the determination of the binding of a novel protein of interest to serum IgE antibodies obtained from individuals who are allergic to materials that are broadly related to the source of the novel gene. For example, the source for a novel gene, such as a dicot plant, may not be associated with known allergies, but other dicot plants, including peanuts and various specific tree nuts, are known food allergens. The possibility exists that the novel protein may be cross-reactive with allergens from related sources.

Targeted serum screening has not been incorporated routinely into the allergenicity assessment of novel proteins from GE food. Serious concerns exist about the possibility of false positive reactions. Furthermore, if the structure of the allergens in the related species is known, the cross-reactivity should be evident from sequence homology testing. More research is needed on targeted serum screening before this approach can be recommended for routine use.

Heat processing is yet another approach that has been proposed to assess allergenicity. Empirically it has been noted that food allergens tend to survive heat processing (Metcalfe et al., 1996), so they must be comparatively heat stable. The Japanese government uses heat stability as an additional approach in allergenicity assessment (MHLW, 2000). However, this measure is not sufficient because no specific approach to heat stability assessment exists. The application of heat may alter the tertiary structure of the novel protein and thereby alter its biological functions, such as enzymatic activity, ability to kill insects (in the case of Bt proteins), and ability to bind IgG antibodies in animal antisera. The loss of biological functions may not completely correlate with the loss of allergenic activity, so the inability to detect a novel protein after heating does not demonstrate that the protein is no longer present in some altered form that may be allergenic.

Nutritional Evaluation of Modified Food Products

Thus far the genetic engineering of plants and animals has been aimed primarily toward enhancing agricultural productivity or improving agronomic characteristics. The development of GE food with enhanced nutritional profiles has not yet been accomplished on a commercial scale, with the exception of high-oleic acid soybeans, whose oil fraction is quite similar to olive oil (Kinney, 1996). However, several crops with enhanced nutritional characteristics are currently under development, including golden rice (Ye et al., 2000) and golden mustard (AgBiotechNet, 2000), both with enhanced levels of beta-carotene.

Such products have not been commercialized because adequate data about the safety of these products are not yet available. Certainly most of these products would not be considered substantially equivalent to their traditional counterparts. Because these types of products have not been approved for commercialization, no experience exists with respect to the adequacy of safety assessment methodology for products that are not substantially equivalent to their traditional counterparts. However, the products may be substantially equivalent to other products already in the human diet. For example, edible oils with altered fatty acid profiles (e.g., high-oleic soybeans) may be substantially equivalent to other edible oils.

Agronomic or Phenotypic Comparisons

Agronomic or phenotypic comparisons are routinely conducted as part of the line selection phase in the development of GE crops (see Chapter 3). These comparisons serve to identify varieties with altered phenotypic characteristics, and such varieties are typically abandoned. Such varieties also might have a higher likelihood of eliciting unexpected effects on human health simply because the presence of unanticipated phenotypic characteristics signifies the occurrence of unintentional compositional changes, some of which may cause adverse health effects. However, these phenotypic comparisons are rather superficial and could easily miss some varieties containing altered compositions that could adversely affect human health.

Animal Feeding Trials

In cases in which GE crops are intended to be used, in part, to feed domesticated animals, feeding trials are often conducted on cows, pigs, chickens, or sheep. The purpose of these trials is to compare the nutritional qualities of the GE crop with its conventional counterpart. Although these feeding trials are not toxicology experiments, adverse effects on the health of these animals are noted during the feeding trial. Any adverse events would indicate the possible existence of unexpected alterations in the GE crop that could adversely affect human consumers of products derived from that crop.

APPLICATION, VALIDATION, AND LIMITATIONS OF TOOLS FOR IDENTIFYING AND PREDICTING UNINTENDED EFFECTS

Hazard Identification versus Overall Risk Assessment

The safety assessment process for foods begins with hazard identification. Subsequently, other key aspects of an overall risk assessment, such as dose-response evaluation and exposure assessment, are conducted, followed by risk characterization. Hazard identification in GE foods is generally based on comparisons between the GE food and its conventional counterpart to identify uniquely different components. Any potential hazards identified through this process that may be associated with unique components introduced into the food by genetic engineering are then assessed.

When health hazards become apparent to regulatory agencies, the commercialization of GE food is likely to be stopped without any attempt to determine the overall risk using a complete risk assessment approach. As an example, the identification of a Brazil nut allergen in a genetically engineered, high-methionine soybean (see Chapter 5 for details) caused the development of this promising new crop to be abandoned (Nordlee et al., 1996). One of the challenges for the future is to incorporate the additional steps of a risk-assessment process into the safety assessment scheme; this will be particularly important in situations in which there is uncertainty regarding the hazard. A general scheme for assessing potential unintended effects is presented in Chapter 7, Figure 7-1.

Compositional Databases and Selection of Suitable Comparators

The identification of unique components in GE food is dependent upon comparisons between the composition of the GE product and a suitable comparator. Typically, these comparisons are made on the basis of proximate analysis, nutritional components, toxicants, antinutrients, and any other characterizing components. Additionally, as noted earlier, considerable focus is placed upon the unique components, usually proteins, that are produced by the inserted gene.

The selection of a suitable comparator is a pivotal and complex decision. The ideal comparator in most cases would be a near-isogenic parental variety from which the GE variety was derived. Obviously, a comparison to the near-isogenic parental variety would allow comparisons that might identify unintended changes. However, comparisons might also be needed to the most relevant comparators, that is, the commercially important varieties that are likely to be displaced in the marketplace by the GE variety. Such comparisons should be restricted to varieties that are in common use, although which varieties are in common use can differ in different parts of the world. For crops that are likely to be exported, consideration should be given to comparisons with varieties that will be displaced in other countries, as well as varieties commonly grown in the United States.

Considerable variations can occur in the composition of a food on the basis of factors such as agronomics, environment, and natural variability. In the selection of suitable comparators, such variations should be taken into account. Suitable comparator varieties and the GE varieties might need to be grown in several different geographic areas under different environmental conditions to determine the typical ranges for key and characterizing components among these varieties.

The composition of the crop can also vary among seed, leaf, stalk, and other components for each relevant variety. The compositional comparisons may be made on other portions of the plant, especially if those segments are used for animal feeds. In all such situations, suitably robust sampling strategies must be employed to obtain representative samples for analytical comparisons.

A need exists to develop compositional databases to augment identification of the range of concentrations of key characterizing components. These databases should include relevant, commercially important varieties from various parts of the world, as explained above. The databases also need to include compositional information on the edible portion of the plant and any other portions of the plant that are likely to be fed to domesticated animals. As explained in Chapter 4, such databases should include both targeted analyses and profiling analyses.
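The use of such a database can be sketched as a range check: build, for each analyte, the range observed across comparator varieties and growing sites, then flag GE values that fall outside it or for which no comparator data exist. The record layout, analyte names, and all numbers below are hypothetical and chosen only to illustrate the idea.

```python
def reference_ranges(records):
    """Build {analyte: (min, max)} from (variety, site, analyte, value) records."""
    ranges = {}
    for _variety, _site, analyte, value in records:
        low, high = ranges.get(analyte, (value, value))
        ranges[analyte] = (min(low, value), max(high, value))
    return ranges


def flag_outside_range(ge_values, ranges):
    """Return {analyte: reason} for GE values that the comparator data do not cover."""
    flagged = {}
    for analyte, value in ge_values.items():
        if analyte not in ranges:
            flagged[analyte] = "no comparator data"
            continue
        low, high = ranges[analyte]
        if value < low:
            flagged[analyte] = "below comparator range"
        elif value > high:
            flagged[analyte] = "above comparator range"
    return flagged


# Hypothetical comparator measurements (units are arbitrary for this sketch).
COMPARATOR_RECORDS = [
    ("variety_1", "site_A", "protein", 36.0),
    ("variety_1", "site_B", "protein", 39.5),
    ("variety_2", "site_A", "protein", 34.2),
    ("variety_1", "site_A", "phytic_acid", 1.1),
    ("variety_2", "site_B", "phytic_acid", 1.9),
]
ge_sample = {"protein": 37.0, "phytic_acid": 2.4, "raffinose": 0.8}

ranges = reference_ranges(COMPARATOR_RECORDS)
flags = flag_outside_range(ge_sample, ranges)
print(flags)
```

A flag here indicates only that a value lies outside the documented comparator range. Whether the difference matters for health still depends on the toxicity of the component and on dietary exposure.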

Any adverse health effect that arises from unintended compositional changes will be a consequence of the inherent toxicity of the component in question and the level of dietary exposure to that particular component. Therefore, dietary exposure should also be considered as a part of the compositional comparison. Careful attention should be paid to potential exposure levels for high-level consumers. Cultural anthropological considerations and their effect on dietary exposure should be evaluated. For example, a high proportion of the diet in some Mexican populations is corn.
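The dependence of exposure on consumption can be made concrete with the usual arithmetic: exposure per kilogram of body weight equals the concentration in the food times the amount of food eaten, divided by body weight. The sketch below assumes that simple single-food model; the concentration, intake figures, and body weight are hypothetical values chosen only to contrast an average consumer with a high-level consumer.

```python
def daily_exposure(conc_mg_per_kg_food, intake_g_per_day, body_weight_kg):
    """Estimated exposure in mg per kg body weight per day from a single food."""
    if body_weight_kg <= 0:
        raise ValueError("body weight must be positive")
    intake_kg_per_day = intake_g_per_day / 1000.0
    return conc_mg_per_kg_food * intake_kg_per_day / body_weight_kg


# Hypothetical inputs: same food and component, two consumption patterns.
CONCENTRATION = 2.0   # mg of component per kg of food (assumed)
BODY_WEIGHT = 60.0    # kg (assumed)

average_consumer = daily_exposure(CONCENTRATION, intake_g_per_day=50.0, body_weight_kg=BODY_WEIGHT)
high_consumer = daily_exposure(CONCENTRATION, intake_g_per_day=400.0, body_weight_kg=BODY_WEIGHT)

print(f"average consumer: {average_consumer:.4f} mg/kg bw/day")
print(f"high consumer:    {high_consumer:.4f} mg/kg bw/day")
```

With these assumed figures, the same food yields an eightfold higher exposure in the population for which it is a staple, which is why high-level consumers need separate attention.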

Compositional comparisons should also consider the possible effects food processing may have on any unique components that might be identified in comparisons of the edible portions of the unprocessed crop. In some cases, the unintended unique components could be removed by simple processing steps, such as soaking or peeling. However, in other cases, chemical alterations would be anticipated in identified unique components as a consequence of processing operations. Again, cultural issues should be considered. Processing corn into masa or polenta would be more important in some geographic areas than in others. Processing soybeans into tofu, miso, or soy sauce would be more important in some Asian countries than in other parts of the world.

Power of Agronomic Comparisons

In the case of GE plants, the line selection stage leads to the elimination of the majority of the candidate varieties. In laboratory, greenhouse, and field trials prior to commercialization, various agronomic traits are evaluated, beginning with a rather large number of transformants. Traits such as plant height, leaf orientation, leaf color, early plant vigor, root strength, and yield may be considered depending upon the crop type. Varieties with unusual agronomic features are discarded even though these agronomic traits are not specifically linked to the safety assessment protocol. Such agronomic evaluations are important and useful, but not entirely sufficient for the identification of unintended changes.

Limitations to Toxicological Evaluation in Animal Models

Conventional toxicological tests in animals are of limited value in assessing whole food, including GE food (FAO/WHO, 2000). The amounts of food administered to animals are limited by the effects of satiety and the possibility of nutritional imbalance (Kuiper et al., 2001). For example, in a study of tomatoes that were genetically engineered to contain a Bt endotoxin, the level of lyophilized tomato powder was limited to 10 percent in the diet of rats because of the comparatively high potassium content of tomatoes (40-60 g/kg) and the possibility that higher levels of incorporation would have led to potassium-induced renal toxicity (Noteborn and Kuiper, 1994).
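The constraint in the tomato example is simple dilution arithmetic: the amount of a nutrient contributed to the diet is the inclusion fraction times the nutrient content of the test food. The sketch below applies that arithmetic to the 10 percent inclusion level and the 40-60 g/kg potassium content quoted above. It ignores potassium in the rest of the diet, and the ceiling passed to `max_inclusion` is a hypothetical figure, not a value from the cited study.

```python
def dietary_contribution(inclusion_fraction, content_g_per_kg_food):
    """Nutrient contributed by the test food, in g per kg of total diet."""
    if not 0.0 <= inclusion_fraction <= 1.0:
        raise ValueError("inclusion fraction must be between 0 and 1")
    return inclusion_fraction * content_g_per_kg_food


def max_inclusion(ceiling_g_per_kg_diet, content_g_per_kg_food):
    """Largest inclusion fraction that keeps the contribution at or below a ceiling."""
    if content_g_per_kg_food <= 0:
        raise ValueError("nutrient content must be positive")
    return min(1.0, ceiling_g_per_kg_diet / content_g_per_kg_food)


# Figures from the text: 10 percent tomato powder, 40-60 g potassium per kg.
low = dietary_contribution(0.10, 40.0)
high = dietary_contribution(0.10, 60.0)
print(f"potassium from tomato powder: {low:.1f} to {high:.1f} g/kg diet")

# Hypothetical ceiling of 6 g/kg diet, used only to show the inverse calculation.
print(f"max inclusion at 60 g/kg content: {max_inclusion(6.0, 60.0):.2f}")
```

The same calculation shows why a 100-fold safety margin is out of reach for whole foods: the inclusion fraction cannot exceed 1, and in practice the nutritional ceiling is reached long before that.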

In such situations it may be impossible to incorporate the GE food into the diet at levels that would be used in conventional safety assessments of individual food ingredients, that is, levels that are 100-fold or higher than those expected in human diets. Furthermore, other naturally occurring food components, such as antinutrients, could be present at much higher levels than the substances that result from the genetic engineering process, so observed abnormalities in the animals could be attributable to other factors. In cases in which the GE food is intentionally altered in its nutritional characteristics, those attributes could profoundly affect the results of animal toxicity testing.

Animal toxicology testing may be useful in some situations. The highest test dosages should be the maximum amount that can be included in a balanced animal diet, while the lowest dosage should be comparable with the expected amount in the human diet (Kuiper et al., 2001). WHO (2000) recommended that a subchronic, 90-day study in rodents with whole food should be sufficient to demonstrate the safety of long-term consumption. Longer-term studies could be considered if the results of the 90-day study indicated progressive adverse effects, such as proliferative changes in tissues (WHO, 2000). Obviously any animal toxicology studies should include carefully constructed control groups to minimize the misinterpretation of potentially confounding effects.

While the application of animal toxicology tests is of debatable value in the case of GE food in which the novel protein is expressed at a low level, is susceptible to pepsin hydrolysis, and is not similar to known protein toxins, such testing will assume greater importance in situations in which the GE food is not substantially equivalent to its traditional counterpart and is significantly altered in its composition. In such cases, appropriate animal toxicology testing with whole food should be considered on a case-by-case basis in parallel with toxicological evaluation of individual food constituents (including novel proteins), in vitro experiments with animal and human tissues and organs, and possibly human clinical studies (Kuiper et al., 2001). Using various biomarkers to improve the sensitivity of subchronic and chronic animal toxicological tests has also been advocated (Diplock et al., 1999; Schilter et al., 1996). However, such approaches require further assessment and validation of their predictive capability.

Need for Validated Methods and Standardized Approach

The methods used in safety assessments of GE food ideally will be standardized and well-validated. Some of the current methods used for specific macronutrients (amino acids, carbohydrates, fatty acids) and micronutrients (vitamins and minerals) are reasonably well-validated and standardized. This is the case for many of the analytical procedures currently used to determine the composition of GE food compared with its traditional counterparts (e.g., proximate analysis, nutritional profile, toxicant and antinutrient levels). As new analytical methods, such as proteomics and metabolomics, are developed (see Chapter 4), validating and standardizing them will be critical if they are to be implemented in screening procedures.

Need for Flexibility

While a core of data developed by well-validated and standardized approaches should be expected as part of the safety assessment of all GE food (including proximate analysis and nutritional profiling), many of the other comparisons between GE food and its traditional counterparts should be based upon knowledge of the inherent characteristics of the particular food and the source material providing the desirable gene. For example, characterizing antinutrients and toxicants, such as the glycoalkaloid levels in potatoes, should be compared (see also Chapter 5).

Thresholds and Adventitious Presence

The central axiom of toxicology is that the dose makes the poison. Even with respect to unintended effects on human health, the dose of the substance in question would be a major determinant of the likelihood of an adverse effect. The overall aim of the safety assessment of GE food appropriately focuses on any unique components that are intentionally produced in the GE food and do not exist in the comparator variety. The dose of any such unique component would directly influence the likelihood that this component might be associated with an adverse health effect. For metabolic components of GE food, the “threshold of regulation” concept developed for unintentional food additives should aid in the identification of those components that should be evaluated for safety (Rulis, 1992).

The threshold doses for allergic sensitization are quite low and not particularly well-defined. The possibility exists that a novel protein contained in GE food could either be or become an allergen. However, levels likely exist below which novel proteins would be unable to elicit allergic sensitization in susceptible individuals. Thus as the concentration of a potential allergen in a food decreases (dilution effect), the probability for an adverse health effect decreases as well.

EVALUATION OF POSSIBLE UNINTENDED CONSEQUENCES OF INSERTED GENES

Likelihood of Unintended Effects

All plant breeding procedures, including conventional breeding, can produce unintended effects. For example, GE soybeans altered to produce enhanced levels of the amino acid lysine (Falco et al., 1995; Hitz et al., 2002) showed an unexpected decrease in oil content, and golden rice designed to express increased levels of beta-carotene showed an unexpected increase in naturally occurring plant pigments called xanthophylls (FAO/WHO, 2000). In another example, conventional breeding of potatoes to produce a variety with superior chipping characteristics yielded the Lenape variety, which had unintentionally high levels of glycoalkaloids, a class of naturally occurring toxicants typically found at low levels in commercial potato varieties (Zitnak and Johnston, 1970). This unintended effect was not discovered until after commercialization, and Lenape potatoes (see Chapter 3) were withdrawn from the market. Neither of these examples was predicted to have adverse health consequences, but they do demonstrate that unintended effects can occur.

The likelihood of unintended modifications leading to adverse outcomes is determined, to a large extent, by the method or methods used to produce intended changes. This likelihood is best considered as an incompletely understood continuum because available information does not permit the identification of clear demarcations in the likelihood of unintended modifications. Nonetheless, highly narrow crosses created by traditional breeding techniques appear to be at the low end of this putative continuum, and undirected mutagenesis by chemicals or radiation appears to be at the high end. This probabilistic continuum is discussed in detail in Chapter 3.

Awareness of the possibility of adverse consequences will most likely result from comprehensive compositional analyses and preliminary determinations of their quantitative and functional significance (see Chapters 3 and 4). Awareness also may be raised as a consequence of more direct assessments, for example, feeding trials in humans or animals and postmarketing surveillance studies that are motivated by compositional analyses or other sources or experiences.

Importance of the Gene Discovery Stage

The initial safety assessment during the gene discovery stage is important because it highlights concerns and questions that must be effectively addressed later in the safety assessment process. Examples of health concerns that might be raised during this initial stage include the allergenicity of the source of the gene or known naturally occurring toxicants in the source of the gene. If, for example, a gene is selected from a source with a known history of allergy, such as peanuts, tree nuts, or fish, then assurance must be sought that the gene product is not the allergen from that source.

Allergenicity concerns might not be as obvious as those straightforward examples, however. As an illustrative example only, chitinase genes might be selected as a means to prevent various fungal diseases common to some crop plants. Chitinases from several plants are known allergens (Breiteneder and Ebner, 2000), so the possible cross-reactivity between the selected chitinase product and the known allergenic chitinases should be evaluated.

The Bt proteins used to produce various insect-resistant crops serve as a useful example of the considerations involved at the gene discovery stage. Bt proteins are naturally derived from Bacillus thuringiensis, a common soil microorganism, and more than 100 different forms of the protein are known to exist (Schnepf et al., 1998). Microbial Bt products have been a commercial option for insect control for several decades.

The microbial Bt sprays used agriculturally contain certain specific Bt proteins as the active insecticidal components. The particular Bt proteins present in these commercial insecticidal sprays have been subjected to toxicological assessment, including acute, subchronic, and chronic toxicity testing in experimental animals and oral gavage studies in humans (McClintock et al., 1995). The Bt proteins in these commercial products have been judged to be safe by EPA.

The various Bt proteins exhibit selective toxicity to specific insect targets. GE corn or maize, potatoes, and cotton have been developed that express specific Bt proteins within the plants. Several different Bt genes that express slightly different Bt proteins have been used or are in commercial development. The safety assessment of these Bt crops relies in part upon the existing history of safe use of similar products as microbial sprays. However, the various Bt proteins are subjected to further scrutiny, as outlined elsewhere, to supplement the existing information. In one case, a potential safety issue was identified due to further scrutiny: the comparative digestive stability of the Cry9c Bt protein in StarLink corn prevented its commercialization for use as a food, but not for animal feed.

In the evaluation of StarLink corn, the structure of the novel protein became a key and controversial aspect of the safety assessment. The process of extracting specific proteins from food is complex and difficult, and such extracts have not been readily available for testing. Attempts to circumvent these problems often have relied on microorganisms modified to produce the target agent. For example, in the case of the Cry9C protein, the bacterially encoded protoxin is a 129.8 kDa protein. The bacterial cry9Ca1 gene was modified so that the inserted form in StarLink expresses a protein (Cry9c) that, among other characteristics, replaces the amino acid arginine with lysine at position 123 (APHIS, 1998). This substitution reduces the susceptibility of the active 68.7 kDa toxin to the action of trypsin. (The bacterial protoxin normally is cleaved to a 68.7 kDa active toxin whose toxicity normally is reduced by further trypsin digestion to an inactive 55 kDa fragment.) Therefore, the Cry9c protein expressed in StarLink corn is nearly identical to the bacterial toxin, but is more resistant to trypsin digestion.

For pesticide toxicity testing, EPA accepted microbially produced trypsinized Cry9c as a test substance. However, doubts have been raised about whether such equivalence should be accepted for allergenicity assessment because Cry9c may be glycosylated post-translationally in plants, but not in bacteria (Bucchini and Goldman, 2002). Allergenicity from exposure to GE foods is discussed later in this chapter.

Identification of Potential Hazards

The first step in assessing the potential of an adverse outcome is to identify suspected compositional changes and then assess their potential for adverse health effects. Adverse outcomes may be divided into two major subgroups: adverse consequences of unintended modifications that accompany presumably targeted changes, and unintended consequences of successful, highly targeted, intended modifications.

The best documented examples of an unintended modification of presumably targeted changes are the increase of psoralen in celery bred by conventional means to enhance insect resistance and breeding efforts that unexpectedly led to increased solanine levels in potatoes (Beier, 1990).

Among the best documented examples of an unintended consequence of a successful, highly targeted, intended modification achieved by transgenic techniques that resulted in a potentially harmful product is the insertion of a gene from Brazil nuts into soybeans to increase their methionine content. The product included the unintended addition of a major Brazil nut allergen (Nordlee et al., 1996). This effect, while unintended, was predictable because Brazil nut allergy was well-known, and the inserted protein had to be evaluated to determine if it was a Brazil nut allergen (Nordlee et al., 1996). Further development of the product was halted once this likelihood was evident. Although field trials were conducted, there is no evidence to suggest that the Brazil nut gene crossed over into other soybean varieties.

Although dietary trans fatty acids were not introduced through genetic manipulation of a food, their increased consumption is among the best examples of unintended health consequences of a fully predictable compositional change in food. It not only illustrates the difficulties involved in preventing adverse health consequences of known compositional changes, but also, importantly, the ability to discover them through combined metabolic and epidemiological approaches in postmarketing phases using hypothesis-driven studies.

TOOLS FOR PREDICTING AND ASSESSING UNINTENDED EFFECTS

Genetic Analysis/Genomics

New methods of identifying proteins and genes, known as proteomics and genomics, are creating exponentially greater amounts of information about the contents of food components that have unknown relevance to human health. GE foods are typically assessed with respect to the localization and characterization of the genetic material that is inserted into the genome of the host organism. The number of copies of the inserted material is determined, as are the fidelity of the transferred DNA and the localization of those inserts with respect to other gene-coding regions and promoters. This genomic information has some value in reducing the likelihood of unanticipated effects by selecting those events that are not adjacent to or do not disrupt other genes in the genome and therefore are less likely to have unintended effects on other proteins produced by the organism. Additionally, nutrition research will be advanced by the use of nutritional genomics, which offers the potential to reduce the risk of unintended effects from exposure to certain food components and to allow for dietary planning that is focused on preventing or coping with chronic disease (Kaput and Rodriguez, 2004; Stover, 2004).

As previously discussed, many GE foods are minimally altered from their conventional counterparts. Thus novel proteins and other unique components are present at relatively low levels in these foods. Accordingly, on a dose-response basis, these novel proteins and components would not be expected to provoke unexpected adverse health effects unless they are profoundly toxic or allergenic. This situation could change considerably with the introduction of future GE products that are intentionally modified to be significantly different from their traditional counterparts. Thus greater scrutiny of such GE foods is expected during their safety assessment. However, flexibility will be required with respect to the nature of the safety assessment protocol.

Compositional Comparisons

Proximate Analysis

Proximate analysis involves determining the levels of protein, fat, carbohydrate, fiber, ash, and water in GE food. Because such determinations are relatively crude, this approach would likely identify unintended consequences only in situations in which such changes had considerable impacts on the functional and phenotypic characteristics of the food.

Nutritional Components

Nutritional analysis involves determining levels of appropriate macro- and micronutrients in the GE food. If the food is engineered specifically to enhance its nutritional characteristics, alterations in key nutrient levels would be anticipated. In other situations, changes in the nutritional composition of a GE food as compared with that of a suitable comparator would indicate the possibility of unintended effects. Such effects would not necessarily be significant in terms of human health unless the nutrient level was substantially changed in a food that served as an important dietary source of that particular nutrient. Another possibility is that genetic engineering could alter the nutrient profile or affect the bioavailability of an essential nutrient.

Endogenous Toxicants and Antinutrients

On a case-by-case basis, comparisons could also be made with respect to the levels of endogenous toxicants and antinutrients in plants, animals, and microorganisms. For example, potatoes contain naturally occurring glycoalkaloids, so glycoalkaloid levels in potatoes would typically be compared. With other foods, the identity of any such toxicants and antinutrients would be dependent upon existing knowledge. For example, soybeans contain several documented toxicants and antinutrients, including phytic acid, flatulence-producing oligosaccharides (e.g., raffinose and stachyose), and trypsin inhibitors (OECD, 2001). All of these components could be assessed as part of the comparative evaluation.

Endogenous Allergens

In addition to concern about new allergens (see Chapter 5), concerns may also be expressed about endogenous allergens. Endogenous allergens are the allergenic proteins that naturally occur in specific food, for example, Ara h 1 and Ara h 2 in peanuts (Burks et al., 1991; Stanley et al., 1997) and Ber e 1 in Brazil nuts (Nordlee et al., 1996). Occasionally, safety assessment includes some consideration of the effect of the genetic engineering on the levels of endogenous allergens in the host organism. Under most circumstances, alteration in the number or levels of endogenous allergens would not be expected. In other words, both GE and conventional soybeans should be equivalently allergenic to soy-allergic consumers. If changes occurred in the levels of endogenous allergens, they would be properly characterized as unanticipated.

Glyphosate-tolerant soybeans were documented to have allergen profiles similar to those of conventional soybeans (Burks and Fuchs, 1995). The necessity of assessing GE food for altered levels of endogenous allergens is probably questionable in circumstances in which the host organism is rarely allergenic (e.g., maize) and the GE food is substantially equivalent to its conventional counterparts in all other respects. The impacts of altered levels of endogenous allergens on human health are questionable, even if they were proven to occur. For example, soybean-allergic individuals would avoid all soybeans, including GE soybeans, so that exposure to a GE soybean with higher levels of soybean allergens would have no anticipated effect on individuals already sensitized to soybeans.

An increased level of endogenous allergens might increase the likelihood of sensitization, but sensitization usually occurs after rather substantial exposure to the offending food and its allergens. Of course genetic engineering could also possibly lower the level of endogenous allergens. However, this effect would have to be quite pronounced before the GE crop could be considered hypoallergenic.

Other Characterizing Components

GE food can be compared with its conventional counterpart on the basis of any other characterizing component. For example, soybeans contain isoflavones, which may have potential health benefits, including preventing cardiovascular diseases, osteoporosis-related hip fractures, and some cancers; treating diabetes; and possibly relieving menopausal symptoms (Anderson et al., 1999; Goldwyn et al., 2000; Vedavanam et al., 1999). Thus a comparison of isoflavone levels between GE and conventional soybeans could be made. Obviously, information must exist on the range of typical levels for such characterizing components in varieties of the particular crop grown under a range of agronomic conditions before such comparisons can be meaningful.
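As a rough illustration of why a reference range matters, the sketch below checks whether a measured component level in a GE variety falls outside the spread observed among conventional varieties. The function names, the choice of a mean plus or minus two standard deviations as the range, and all numbers are assumptions made for illustration; they are not drawn from this report or from any measured data set.

```python
from statistics import mean, stdev


def reference_range(values, k=2.0):
    """Return (low, high) as mean +/- k sample standard deviations.

    The +/- k*SD rule is an illustrative assumption, not a regulatory standard.
    """
    m = mean(values)
    s = stdev(values)
    return m - k * s, m + k * s


def outside_reference(ge_value, conventional_values, k=2.0):
    """True if the GE value lies outside the conventional reference range."""
    low, high = reference_range(conventional_values, k)
    return not (low <= ge_value <= high)


# Hypothetical component levels (arbitrary units) for conventional varieties
# grown under different agronomic conditions; not measured data.
conventional = [120.0, 135.0, 150.0, 142.0, 128.0, 160.0, 138.0]

print(outside_reference(145.0, conventional))  # within the conventional spread
print(outside_reference(260.0, conventional))  # well outside the conventional spread
```

With only a handful of conventional samples the range is poorly estimated, which is the practical point of the paragraph above: the comparison is only as informative as the background data behind it.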

Characteristics of Compositional Changes with Adverse Effects

Several general characteristics of food constituents are of particular relevance to early phases of identifying potential hazards. From a broad mammalian physiological perspective, the chemical structures of food constituents that are newly introduced or whose levels are altered may provide clues regarding their potential biological role in developmental and subsequent life stages through possible structure-activity relationships.

In general, the length of bioactivity of a compound and its potential for adverse effects are influenced by the stage of development during which it is first expressed (e.g., early embryonic compared with late fetal) and the number of functions the compound fulfills. In addition, if the concentration range that separates expression of a physiological role from levels that result in toxic outcomes is narrow, the compound has a higher possibility of causing adverse outcomes that may be functionally significant (Anderson et al., 2000; Vesselinovitch et al., 1979). The same is true regarding a compound’s bioavailability. If a compound has high levels of gastrointestinal absorption and distribution in multiple metabolic pools and is efficient in its bioactive transformation and not efficient in its detoxification or excretion, there is a greater possibility that it will cause adverse consequences.

Other relevant factors in an agent’s early evaluation are its novelty (i.e., lack of historical experience with its consumption) and allergenic potential. Obviously, potential problems are more predictable for food constituents with a long history of consumption.

Nature of Modification

The nature of compositional changes also merits consideration, for example, the magnitude of additions or deletions of specific constituents and modifications that may result in enhanced allergenic potential. It is also important to acknowledge that the most serious challenges of anticipating unintended human health consequences will be presented by components for which there is little documented knowledge. Preceding chapters have described the challenges presented by the limited information we have regarding the range of normal variation of most of the thousands of known plant constituents and their functional roles, if any, in consumers. The major exceptions are essential or nondispensable nutrients, as protocols for assessing known agents are relatively well developed (OECD, 1993, 2000; OFAS, 2001).

NEED FOR CLINICAL AND EPIDEMIOLOGICAL STUDIES

Although not a focus of this report, postmarketing studies of GE foods with substantially altered composition are also of interest because such studies often inform the selection and design of scientific approaches for assessing potential impacts, such as potential toxicity, nutritional aspects, and allergenicity, of new food prior to commercialization. Thus postmarketing studies are often vital components of an essential feedback loop that informs evaluations of food in various stages of commercialization. Similarly, timely recognition of the future potential utility of epidemiological studies can guide the premarketing development of systems to facilitate postcommercialization tracking of food or components of interest.

Clinical and Epidemiological Studies

Clinical and epidemiological studies are essential for anticipating and detecting adverse effects, identifying health outcomes, and assessing exposures. Because epidemiological approaches provide an important array of tools for anticipating and detecting adverse outcomes, several issues involved in interpreting such studies must be considered. These issues include the degree of specificity and precision for measures of exposure and outcome, study design, statistical power, potential for and control of confounding factors, analysis of effect modification, and measures of association.

Careful delineation of each of these issues is the hallmark of quality epidemiological investigations. The more tightly the exposure measurement can be defined, the stronger the interpretation of any study. Where inferences warrant interpretation beyond assessments of association, reference must be made to established criteria for causality. When toxicological studies suggest a hypothetical adverse health consequence in relation to a new GE food, postmarket epidemiological studies can be targeted to particular health consequences and, if a suspected adverse outcome is documented, aid in preventing recurrences of similar unintended effects.

Where no such suggestions arise from toxicology or other types of evaluation, routine monitoring and surveillance of the most sensitive indicators of infant health, cancer risk, cardiovascular disease risk, and other outcomes have been very valuable in detecting unanticipated problems.
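The measures of association mentioned above can be made concrete with a small sketch. It computes two standard measures, the risk ratio and the odds ratio, from a two-by-two table of exposure and outcome. The function names and the counts are hypothetical and chosen only for illustration; a real analysis would also need confidence intervals and control of confounding.

```python
def risk_ratio(a, b, c, d):
    """Risk in the exposed divided by risk in the unexposed.

    a: exposed with outcome      b: exposed without outcome
    c: unexposed with outcome    d: unexposed without outcome
    """
    risk_exposed = a / (a + b)
    risk_unexposed = c / (c + d)
    return risk_exposed / risk_unexposed


def odds_ratio(a, b, c, d):
    """Odds of the outcome in the exposed divided by odds in the unexposed."""
    return (a * d) / (b * c)


# Hypothetical cohort counts, not data from any study cited in this chapter.
a, b, c, d = 30, 970, 20, 980

print(round(risk_ratio(a, b, c, d), 3))
print(round(odds_ratio(a, b, c, d), 3))
```

When the outcome is rare, as in these made-up counts, the two measures are numerically close; they diverge as the outcome becomes more common.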

Metabolic Studies

The relationship between epidemiological, toxicological, and metabolic studies can be illustrated by what happened in the usage trends and investigation of the health effects of trans fatty acids. Although trans fatty acids in food were not introduced by genetically modifying food, their introduction in food is a particularly instructive example of an unintended adverse health consequence of an intended compositional change originally designed to benefit the population at large.

It was well established during the late 1950s that serum cholesterol levels were positively associated with coronary heart disease (CHD), suggesting that dietary factors might be responsible. This led to an increase in production of nonanimal sources of fat, namely vegetable oils, and many of these were partly hydrogenated to convert liquid oil to margarine and shortening. Concerns related to the potential for adverse consequences of partially hydrogenated oils led to studies more than three decades ago that examined the effects of partially hydrogenated fats in the diet. These studies found either modest elevations or no effects on serum cholesterol (Anderson et al., 1961; Katan, 2000; Vergriese, 1972). Metabolic studies by Katan and others in the late 1980s and 1990s showed that trans fatty acids had much more significant effects on overall lipoprotein patterns than were evident from changes in serum cholesterol alone, for example, increases in plasma concentrations of low-density lipoproteins and decreases in high-density lipoproteins (Aro et al., 1997; Hu and Willett, 2002; Judd et al., 1998; Mensink and Katan, 1990).

Epidemiological studies using data from several countries suggested dietary trans fatty acids were associated with population rates of CHD death (Kromhout et al., 1995), and in several cohort studies (Ascherio et al., 1996; Oomen et al., 2001; Pietinen et al., 1997), higher intakes of trans fat were associated with increased risk of CHD (Hu and Willett, 2002). The two types of evidence combined provided strong support that trans fatty acid intake is causally related to the risk of CHD (Willett and Ascherio, 1994).

SAFETY ASSESSMENT AFTER COMMERCIALIZATION

Postmarketing surveillance is another approach to identify unanticipated adverse health consequences from the introduction of GE food. However, postmarketing surveillance has not been used to evaluate any of the GE crops that are currently on the market, and several challenges exist to its use. First, using postmarketing surveillance presumes that the GE food will be identifiable in the marketplace, making it possible to identify consumers with exposure to that product and whose health status can then be monitored. With commodity crops such as soybeans and corn, the intermingling of GE and traditional varieties occurs on a wide scale due to shared harvesting, transportation, and storage equipment and facilities.

Consumers are often exposed to ingredients derived from GE crops, such as corn syrup or soybean oil, rather than the whole food, and some future GE food will be modified with the intent of improving the nutritional composition of the food. The incorporation of such food into the human diet presents many challenges for postmarket assessment of unintended adverse health effects. Postmarket surveillance holds considerably more promise in monitoring potential effects of GE foods that are not substantially equivalent to their conventional counterparts and that contain significantly altered nutritional and compositional profiles.

Assessing Nutrient Profiles of Individuals

Assessing the proportion of a population at risk for a nutrient deficiency and determining the level of intake necessary to avoid deficiency in a specified proportion of a population requires that the average nutrient requirement and intake distribution be known. Methods for assessing population risk and planning for intakes of groups have been reviewed recently (IOM, 2001, 2003).
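One widely described shortcut for this kind of assessment estimates the proportion of a group at risk as the fraction whose usual intake falls below the average requirement (often called a cut-point approach). The sketch below shows only that arithmetic. The function name and the intake values are hypothetical, and the approach rests on assumptions not checked here, for example that intakes and requirements are independent and that the requirement distribution is roughly symmetric.

```python
def prevalence_below_requirement(usual_intakes, average_requirement):
    """Fraction of individuals whose usual intake is below the average requirement.

    usual_intakes: usual (long-run) intakes, one per person, in the same
    units as average_requirement.
    """
    if not usual_intakes:
        raise ValueError("usual_intakes must not be empty")
    below = sum(1 for intake in usual_intakes if intake < average_requirement)
    return below / len(usual_intakes)


# Hypothetical usual intakes (mg/day) and a hypothetical average requirement.
intakes = [40, 55, 60, 75, 90, 100, 120, 65]
average_requirement = 70

print(prevalence_below_requirement(intakes, average_requirement))  # 0.5
```

The estimate depends on usual intake rather than a single day's intake, so in practice the intake distribution would first be adjusted for day-to-day variation.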

A model for establishing upper intake levels for nutrients has been developed to minimize the risk of nutrient toxicities (IOM, 1998). This model defines the Tolerable Upper Intake Level, that is, the highest level of daily nutrient intake that is likely to pose no risk of adverse health effects to almost all individuals in the general population. These levels generally are based on total nutrient intakes, regardless of source (e.g., food and nutrient supplements), and are not intended as recommended levels of intake. Intakes above the Recommended Dietary Allowance and below the Tolerable Upper Intake Level likely entail no added benefit or risk to most healthy individuals.

This model takes as its definition of an adverse health effect any “significant alteration in the structure or function of the human organism or any impairment of a physiologically important function” (Klassen et al., 1986; WHO, 1996). The model is based on two previous reports (NRC, 1983, 1994). It accommodates unique attributes of nutrients, that is, their beneficial role at lower levels, sources of variability in sensitivity, issues of bioavailability, nutrient-nutrient interactions, and other relevant factors.
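The relationship among the Recommended Dietary Allowance, the Tolerable Upper Intake Level, and an individual's total intake can be sketched as a simple classification. The function name, category labels, handling of boundary values, and the numbers used are assumptions for illustration only; actual reference values differ by nutrient, age, and sex.

```python
def classify_intake(total_intake, rda, upper_level):
    """Place a total daily intake (food plus supplements) relative to RDA and UL.

    Boundary handling (treating intake == upper_level as within range) is an
    illustrative assumption.
    """
    if rda > upper_level:
        raise ValueError("rda must not exceed upper_level")
    if total_intake < rda:
        return "below RDA"
    if total_intake <= upper_level:
        return "between RDA and UL"
    return "above UL"


# Hypothetical reference values and intakes in arbitrary units per day.
rda, upper_level = 10.0, 40.0
for intake in (6.0, 25.0, 55.0):
    print(intake, classify_intake(intake, rda, upper_level))
```

Because upper levels are generally based on total intake regardless of source, the input here is the sum of intake from food and supplements, not intake from a single food.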