Driving Technologies

Advances in Software

GEORGE E. PAKE

MY RESPONSIBILITIES for the past two decades have been as a research manager in association with a research enterprise that has devoted substantial effort toward advancing software technologies. In our research and technology planning, it has often been difficult to partition the research—or especially the projected technological and business systems—into a software sector and a hardware sector. Indeed, some of our greatest technological successes were a consequence of taking the largest overall systems viewpoint: the recognition that the real systems issues were those of the supersystem, which integrates software and hardware so intimately as to obscure hypothetical boundaries between the software system and the hardware system.

As an example of these integrated software-hardware successes, consider that the human interface to the computer that was pioneered by the Xerox Palo Alto Research Center is now being copied or imitated by many computer workstations on the market. This human interface is characterized by a bit-map display, the mouse for guiding the display cursor, and “windows”1 on the display screen. As is readily seen, the software for this interface requires certain associated hardware components (e.g., the mouse) and characteristics of the processor hardware to operate and refresh the display. Thus, the experimental Xerox Alto computer of the 1970s, introducing the bit-map display and WYSIWYG,2 employed a larger portion of the computer memory and processing power to operate and refresh the display than had been used in most preceding computers. This architectural feature of the hardware was carried over into the Xerox 8010 Star system, the first commercial product offering to take advantage of the software for the Xerox human interface. This approach is now becoming almost ubiquitous for computer workstations, notably having been appropriated by Apple for the Macintosh personal computer.

A similar difficulty applies in searching for the boundaries of a software industrial sector. Portions of this possible sector are readily recognized. The publishers and developers of software for the personal computers that are finding wide application in the business world are surely part of this industrial sector (e.g., Microsoft or Ashton-Tate). So also are the firms that deal in applications software for large computer systems (e.g., Electronic Data Systems). A substantial fraction of the business of the major computer companies (IBM, DEC, Fujitsu, etc.) is in software; yet these companies are frequently regarded, and probably regard themselves, as hardware companies.

Despite the difficulties we may have in defining the software industrial sector, we can agree that software and associated software technologies are playing a larger and larger role in other industries. Thus, there is widespread interest in software technology and in its associated advances as they affect and stimulate the global economy.

Although software technology is widely held to be critically important to the broad technological advance that now spurs the global economy, it is difficult to define. The new Software Engineering Institute being established with U.S. Department of Defense funding at Carnegie Mellon University is, according to its First Report in Carnegie Mellon Magazine, struggling to define what software engineering as a professional field really should be. “Unlike other engineering professions, software engineering does not have well-articulated goals, standards, or methods for its practice,” states the report.3 I would add that the key scientific and engineering underpinnings of software technology remain to be identified and organized into a course of instruction so that we might proceed to train a software technologist in the same way we train a metallurgical technologist, for example. Many of these difficulties relate to the earlier problems we encountered in attempting to segment software from hardware. Furthermore, I believe that complete separation of software considerations from hardware considerations can be done only at severe peril to the effective function and efficient performance of the overall supersystem, hardware plus software.

Consistent with this view, my list of the key technological advances contributing to effective software technology begins with the components and architecture of the hardware systems used for software development. Of paramount importance has been the decline in cost and the increase in speed of processing power and memory, putting at the disposal of the software developer a fast, fully interactive system with rapid access to memory and to data bases.

These hardware advances have made possible the second key advance, the advent of extremely powerful software development environments that combine advances in writing, editing, running, and debugging of software. Gains in effectiveness are afforded by windowing, bit-map displays characterized as WYSIWYG, and other programming innovations that are them-

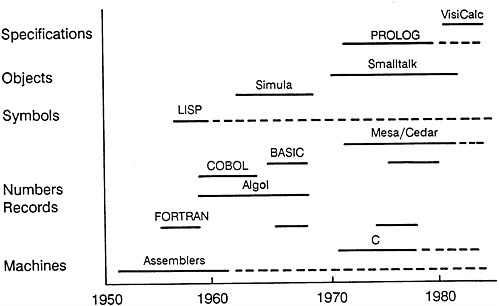

FIGURE 1 Selected programming languages.

selves inherently software in character but are enabled by the more powerful hardware systems.

A third area of technological advance is in the development of different kinds of programming languages, each designed to be optimal for certain types of problems. Many experts classify programming languages into three general categories: (1) procedural, such as FORTRAN, BASIC, COBOL, C, and LISP; (2) object-based, such as Simula and Smalltalk; and (3) constraint-based, such as VisiCalc and Prolog. See Figure 1 for some selected programming languages arrayed in these categories against a time line.
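The distinction among these three categories can be made concrete with a small sketch. The example below is illustrative only and is not drawn from the original paper: it expresses one simple relation, the Celsius-Fahrenheit conversion, in each of the three styles using Python. The names `c_to_f`, `Thermometer`, and `solve_temperature` are hypothetical, and the "constraint" version is a toy stand-in for a real constraint solver.

```python
# Illustrative sketch: one relation, F = C * 9/5 + 32, written three ways.
# All names here are hypothetical and chosen only for this example.

# (1) Procedural: a fixed sequence of steps that computes one direction only.
def c_to_f(celsius):
    return celsius * 9.0 / 5.0 + 32.0


# (2) Object-based: state and the operations on it are bundled in an object.
class Thermometer:
    def __init__(self, celsius):
        self.celsius = celsius

    def fahrenheit(self):
        return self.celsius * 9.0 / 5.0 + 32.0

    def warm(self, degrees_c):
        self.celsius += degrees_c


# (3) Constraint-based (toy version): the relation is stated once, and the
# "solver" fills in whichever value is missing or checks that both agree.
def solve_temperature(celsius=None, fahrenheit=None):
    if celsius is None and fahrenheit is None:
        raise ValueError("at least one value must be known")
    if celsius is None:
        celsius = (fahrenheit - 32.0) * 5.0 / 9.0
    elif fahrenheit is None:
        fahrenheit = celsius * 9.0 / 5.0 + 32.0
    elif abs(fahrenheit - (celsius * 9.0 / 5.0 + 32.0)) > 1e-9:
        raise ValueError("the given values violate the constraint")
    return {"celsius": celsius, "fahrenheit": fahrenheit}
```

In the procedural and object-based versions the programmer decides in advance which quantity is computed from which. In the constraint-style version the caller supplies whatever is known, for example `solve_temperature(fahrenheit=212.0)`, and the relation determines the rest, which is roughly how a spreadsheet cell formula or a Prolog rule is used.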

The fourth area of technological advance is systematic forward planning and task analysis: the application of sound engineering principles to system specification and to the handling of change orders (last-minute revisions) in large software development projects.

How can we control the powerful systems we establish, so that they do not enslave us or stifle creativity? I believe that, insofar as software development and the supporting technologies are concerned, we have not yet installed systems of such rigidity as to be threatening in that way. The software development process is extremely diverse throughout industry, and many of our concerns about its cost and low efficiency relate to that diversity. There are many languages and development environments in use, a plurality that fosters creativity and facilitates revolutionary or innovative approaches, but at the cost of low efficiency and low schedule predictability. The desire for greater efficiencies in large software development tasks will produce pressures for greater standardization in the languages used and in software engineering procedures. Once these pressures begin to rigidify software development processes, at some time far in the future, concerns about enslavement and stifling of creativity could indeed characterize software technology. My personal assessment is that software technology is today remote from that degree of standardization and rigidification of the development system.

ACKNOWLEDGMENT

I would like to express appreciation for helpful discussions with three of my Xerox colleagues, Dr. Adele Goldberg, Dr. Robert Ritchie, and Dr. Robert Spinrad. I also acknowledge a helpful and stimulating discussion with Mr. William Gates, chairman of Microsoft Corporation.

NOTES

Advances in Materials Science

PIERRE R. AIGRAIN

MATERIALS ARE SO IMPORTANT for society that we classify the various eras in the development of mankind by the kind of materials used; for example, we commonly refer to the Stone, Copper, Bronze, Iron, and Steel Ages. Only 120 years ago we entered the Steel Age; all the materials we now call new, such as aluminum, have come into being since that time.

New materials are discovered and introduced practically every day. In 1900, aluminum was still a specialty product. Plastics were not unknown; celluloid and bakelite were discovered at about that time, but their use was extremely limited. Celluloid was initially introduced as a substitute for ivory for billiard balls, and this was its main use for 20 years. Today, a comprehensive list of materials would include at least 500 entries, ranging from structural materials to conductors.

Although new materials appear every day, a long period of time elapses after their initial introduction and specialized application, which require only small quantities of the materials, before they become economically important materials in tonnage and value. Obviously, when materials are introduced for large-scale application, their influence on industry and society is enormous.

The sequence of events from introduction to large-scale use of new materials raises three questions that can be addressed first in a general, almost philosophical way and then examined further by looking specifically at the new superconducting materials. The first question is, Why have new materials been discovered so quickly during the past few decades, and will this trend continue? Will we continue to invent new types of materials at the same rate? The second question is, Why does it take a long time for materials to be introduced in all phases of industry? Why are they often limited to special applications for extended periods? The third question, of course, is, How can new materials really change industry and society when they finally reach the broad applications stage?

The answer to the first question is that new materials will continue their rapid trajectory of discovery and limited application for the following reasons:

This paper is adapted from the transcripts of the Sixth Convocation of the Council of Academies of Engineering and Technological Sciences.

A much better interaction between basic science and materials technology has developed. One important contribution of basic sciences to materials development has been the development of analytic instrumentation, including synchrotron light, for the study of materials. For centuries, the approach to materials research was systematic empiricism because there was no other way. Then there was movement to educated empiricism in which some general rules drawn from the basic sciences were useful.

The market has also been a strong influence on the rate at which new materials have been discovered. Sometimes the market demands a new product, which technologists must create. At other times, the technology is available, but there is no market for its applications. Optical fibers are a typical illustration of this interaction between technology push and market pull. There was a market need for optical fibers because of the crowding of the broadcast bands for radio communication and the lack of space underground in large cities to lay telephone wires. Because of improved scientific understanding of the light absorption process in solid materials, people realized that the absorption coefficient of light in a glassy material was almost entirely due to extrinsic problems, such as impurities. These could, at least in theory, be eliminated. Basic science research had also shown that the intrinsic absorption of pure silica was many orders of magnitude lower than that of the glass that was available only 15 or 20 years ago.

The technology with which to purify silica was available, and it could be transferred with few changes from the semiconductor industry, which had already developed chemical vapor deposition techniques. In addition, many ancillary components were available for building an optical fiber communication system, components such as the semiconductor laser, detectors, and signal treatments. All of these conditions combined to produce unexpectedly rapid development of new materials used for fiber optical communications. In fact, in this case, optical fiber has become an industrial product in an astonishingly short time.

Whereas materials technologies once developed independently, they now develop through continuous interaction between basic science and materials research, and this trend will continue. The interaction among technologies has become the biggest engine of technological development during the past few years.

The second question concerns the length of time required for new materials to achieve broad applications. For example, carbon fibers are used in the aeronautics industry and in the leisure industry for tennis rackets and fishing rods. However, it will take a long time before carbon fibers replace conventional materials in many automobile components.

There are several reasons for this phenomenon. Most of the new materials of interest are not costly in terms of raw ingredients, because they are based on plentiful elements of the periodic table. For example, new magnetic materials are based on iron and boron, which are very inexpensive. Neodymium is one of the more abundant rare-earth elements, and there are enormous deposits of bastnaesite and monazite from which neodymium is easily extracted. Despite the low cost of the elements themselves, at the present time the process for making these materials in the proper form is very costly. It often takes time to develop methods to reduce the price, and as long as a process is costly, the material will not be used in large quantities. In addition, the manufacturer of a particular material sells his product to people who make components, which are introduced into products that are then sold to consumers or industry. Consequently, there is little profit incentive for the developer of the technology, because he is under the price pressure of a long train of customers.

Superconductors illustrate many of the problems inherent in bringing a new material from discovery to widespread application in a short time. Although superconductors were discovered before the First World War, they were not understood. Nevertheless, it was recognized that a material that could carry electricity without loss of energy should be extremely marketable. When it was discovered that these properties held true only for very low current levels and at very low temperatures, enthusiasm died out.

Solving the problem of the temperature and current constraints on superconductivity became a matter for pure science research. Some progress was achieved through empirical theories such as London’s and later through the work of Bardeen, Cooper, and Schrieffer. New materials, such as niobium nitride, that had slightly higher transition temperatures were discovered. Then Matthias applied his genius to devising more or less empirical rules, which led to the discovery of materials with higher transition temperatures, culminating at 23.2 K. Although this was an important discovery, it was not sufficient to maintain market pull.

Other people were working in completely different materials and found that some oxides, for example, have transition temperatures above 10 K. During that time there was also work in France on the lanthanum copper oxide-based material and yttrium copper oxide. These materials produced higher conductivity but were not tried at low temperatures.

Müller and Bednorz, working at the IBM Laboratory in Zurich, proposed that these oxides might work at a higher temperature. In early 1986, Müller and Bednorz published results showing the initial appearance of superconductivity at 40 K, a sudden jump by a factor of 2. Within three months, it was announced in the People’s Republic of China that beginning superconductivity at 73 K and zero resistance at 80 K had been observed. The result was soon reproduced in the United States, Europe, and Japan. There are indications that a sample was superconducting at a temperature of 244 K, very close to room temperature. Thus, from the time of the first theoretical treatment of superconductivity in the 1930s, it has taken more than 40 years for superconductors to be brought to the stage where it is realistic to talk about widespread application of this technology.

Let us consider likely potential uses and effects of these new superconducting materials in answering our third question: How can new materials change industry and society when they reach the broad applications stage? First, they will be used in specialized, high-value-added applications in the electronics industry. The number of possible applications is enormous, from measuring small magnetic fields to fast computing with Josephson devices. These are applications that will come quickly.

Second, high-temperature superconductors will probably influence electrotechnology, that is, the production and transport of electrical power, even though several critical developments are necessary. For example, because the materials are ceramics, which are notoriously nonductile, it is difficult to use them as windings in electric generators. How can they be put in the right shape? What kind of auxiliary equipment is necessary to keep them at a low temperature? Even if they are superconductive at room temperature, their current-carrying capacity will be only one-fourth of the transition temperature.

Thus, “high-temperature” superconducting materials are much easier to use than those cooled with liquid helium, mostly because they can be cooled with liquid nitrogen, which is a much better coolant with a much higher heat of evaporation. However, enormous developments are required before these superconductors can be used for massive applications.