9

Teaching Science as Practice

Main Findings in the Chapter:

- Students learn science by actively engaging in the practices of science, including conducting investigations; sharing ideas with peers; specialized ways of talking and writing; mechanical, mathematical, and computer-based modeling; and development of representations of phenomena.

- All major aspects of inquiry, including managing the process, making sense of data, and discussion and reflection on the results, may require guidance.

- Instruction needs to build incrementally toward more sophisticated understanding and practices. To advance students’ conceptual understanding, prior knowledge and questions should be evoked and linked to experiences with experiments, data, and phenomena. Practices can be supported with explicit structures or by providing criteria that help guide the work.

- Discourse and classroom discussion are key to supporting learning in science. Students need encouragement and guidance to articulate their ideas and recognize that explanation rather than facts is the goal of the scientific enterprise.

- Ongoing assessment is an integral part of instruction that can foster student learning when appropriately designed and used regularly.

Children come to school with powerful resources on which science instruction can build. Even young children can learn to explain natural phenomena, design and conduct empirical investigations, and engage in meaningful evidence-based argumentation. Through instruction, teachers can take much better advantage of the resources children bring to school than is commonly the case in K-8 science classrooms in the United States. Although K-8 science instruction has long been a subject of research, breakthroughs in research on teaching and learning have dramatically altered understanding of how children learn science and what can be done to structure, support, and develop their knowledge, use, and understanding of science.

In this chapter we focus on the classroom-level implications of the learning and instruction research. The chapter is divided into four sections. First, we begin with a description of typical instruction in U.S. K-8 science classrooms. In the second section we present the contrasting view of science as practice put forth in the instructional research, pointing to promising evidence of student learning when instruction is framed around science as practice. In the third section we look more closely at the common forms of scientific practice that students engage in across different types of instructional design, pointing to the challenges students encounter as they do so. Fourth, we characterize strategies that teachers and curriculum developers can use to promote student learning of science through practice. We close with the major conclusions that can be drawn from current research on science instruction.

In order to have a productive and meaningful discussion of science instruction, we need to be clear about which questions research on science instruction can and cannot answer. First, some pedagogical debates rest on differences in values rather than questions that are answerable through empirical research and, accordingly, cannot be resolved in this chapter. For example, one may be tempted to ask “Is inquiry better than direct instruction?” However, when comparing inquiry and direct instruction, the critical question is “Better for what?” Advocates of one or the other instructional approach may have different underlying visions for what it means to learn science. Thus, we need to be clear about what our goals for science learning are and ask how inquiry and direct instruction compare in reaching specific educational goals.

Second, this chapter does not provide a blanket endorsement of particular strategies for instruction (e.g., group work, computer-mediated activities, hands-on science, explicit instruction). These general instructional approaches are underspecified and gloss over important considerations of instructional goals. Computers, for example, can be used in many ways: to facilitate drill and practice exercises or to provide access to powerful analytical tools and real scientific data sets, such as visualizations of real-time climate data. Group work can be used to simply divide up the work among students (e.g., one handling the experimental apparatus, another taking notes) or groups may work more organically, debating evidence or coming to consensus about interpretations of empirical findings. Depending on the goals of a specific lesson, one or several instructional strategies may be appropriate, and none will be an instructional panacea. Thus, we discuss a variety of instructional interventions that incorporate inquiry, group work, computers, and explicit instruction and suggest how these strategies can be useful for reaching particular goals.

Third, our argument rests heavily on a growing number of small-scale, long-term intervention studies that demonstrate the profound learning effects that well-designed, high-quality science instruction can have, as well as a few controlled quasi-experimental studies. However, we acknowledge that we do not have strong evidence of the effects of these interventions at scale, and we adapt the nature of our claims accordingly. We point to features of instruction that are common across research programs that we view as “best bets” for organizing instruction. We recognize that instructional practice is situated in a layered and interactive system in which curriculum and assessment policy, teacher knowledge, and professional development opportunities have a profound effect on instructional quality.

The research points in fruitful directions and uncovers what is possible under certain conditions. Much work remains to be done to identify how to put the necessary conditions in place and support students’ learning of the type of science articulated here. Furthermore, the studies do not allow one to conclude that a particular design approach is the most effective way to achieve a particular set of goals in contrast to other approaches. However, these studies do reveal the kinds of science classroom learning environments that are possible, what students can achieve therein, and what challenges remain to be addressed in instructional design. They help us explore what K-8 science teaching and learning could become.

CURRENT INSTRUCTIONAL PRACTICE

Typical science instruction in the United States does not support learning across the four strands of proficiency of our framework (see Box 2-1). Pursuing the strands framework implies providing students with opportunities to learn topics in depth, to use science in meaningful contexts, and to engage in scientific practices. In contrast, as noted earlier, the U.S. curriculum and standards are seen as “a mile wide and an inch deep.” Typical classroom activity structures convey either a passive and narrow view of science learning or an activity-oriented approach devoid of question-probing and only loosely related to conceptual learning goals (O’Sullivan and Weiss, 1999). Further, U.S. textbooks fail to guide teachers in how to build on students’ understanding, to contextualize science in meaningful problems, or to treat complex ideas other than superficially (Kesidou and Roseman, 2002; Schmidt, Houang, and Cogan, 2000).

Of course, what children learn is not solely dictated by curriculum and standards “content,” but also by ways in which their encounters with curriculum are structured: the things students typically do in science classrooms. Analyses of pedagogy in classrooms corroborate the findings about curriculum and standards. As teachers aspire to cover a broad but thin curriculum, they give insufficient attention to students’ understanding and focus on superficial recall-level questions (Weiss and Pasley, 2004; Weiss et al., 2003).

The recurring activities in science classrooms offer entrée to a narrow slice of scientific practice, leaving students with a limited sense of science and what it means to understand and use science. A steady stream of reading sections from textbooks, taking notes on definitions of key terms, and taking exams that test recall, for example, leaves students with a distinct, and problematic, sense of what it means to know and do science. Linn and Eylon (in press) have characterized the typical activity structure in U.S. science classrooms as “motivate, inform, and assess” in which teachers “motivate” a scientific idea (perhaps with a surprising demonstration), present the normative view, and then assess students’ understanding. This is analogous to patterns observed in U.S. mathematics classrooms (Stigler and Hiebert, 1999). Immersed in these patterns, students come to view science as a compilation of “right answers” provided and confirmed by teachers or textbooks. In class, students expect to be asked to recall facts on demand, rather than thinking about science as a sense-making activity requiring analysis, discussion, and debate (Carey and Smith, 1993; Smith et al., 2000).

In contrast to U.S. science classrooms, in Japan, a nation whose students outscore U.S. students, classroom activity patterns are quite different. Japanese students contribute their ideas in solving problems collectively and critically discuss alternative solutions to problems. Students in classroom environments like these come to expect that these public, social acts of reasoning and dialogue are a regular part of classroom life and learning across the disciplines (Linn et al., 2000; Stigler and Hiebert, 1999).

In sum, the patterns in U.S. pedagogy, curriculum materials, and curriculum standards exhibit a tendency to treat science as “final form” science (Duschl, 1990), in which science consists of solved problems and theories to be transmitted. The dynamics of the discipline—asking questions, finding ways to explore them empirically, investigating and evaluating competing alternative models, arguing—are severely lacking in the enacted U.S. curriculum, classrooms, and, most importantly, in students’ expectations about science and what it means to learn and do science in schools.

The learning and instruction research suggests a dramatic departure from this typical approach, revealing that science instruction can be much more powerful and can take on new forms that enable students to participate in science as practice and to master core conceptual domains more fully. In the next section we sketch in broad brushstrokes an image of science as practice that can support K-8 students in learning science across the strands and point out how this diverges from current practice.

APPROACHES TO SCIENCE AS PRACTICE IN RESEARCH-BASED INSTRUCTIONAL DESIGN

Scientific practice itself is multifaceted, and so are instructional programs that frame science as practice. Researchers have designed and studied several instructional programs in which students develop scientific explanations and models, participate in scientific argumentation, and design and conduct scientific investigations. Although different programs may emphasize one aspect or another of the strands, they all reflect an approach to science in which students own and engage in aspects of scientific practice modeled on expert practice.

Underlying science as practice are meaningful problems that students work on. This is a crucial shift away from typical K-8 science instruction. A meaningful problems approach explores how to teach the skills in the context of their application (Collins, Brown, and Newman, 1989). As we have argued throughout this report, rather than teaching individual skills separately and having students practice them, skills can be taught as needed, in the context of a larger investigation linked to questions developed with students.

To call a problem meaningful, however, implies two senses of “meaning.” One sense is that a problem is meaningful from a disciplinary perspective—it frames scientific concepts, disciplinary practices, and evidence bases that can be coordinated to articulate and examine central principles and questions within a scientific discipline. Another sense of “meaning” in meaningful problems is that these problems are made intelligible and compelling to students. For example, a problem might draw on issues related to the local ecology. Educators and materials developers can enhance the meaningfulness of problems by drawing on ones that situate learning in the context of networks of ideas and practices. While the programs of research we review take different approaches to engaging students in science practice, they all work from problems that are, in this sense, meaningful to students.

It is also important to bear in mind that here and throughout this chapter, the instructional programs we present are not mutually exclusive hypotheses about instruction. On the contrary, as becomes evident shortly, these are variations on a theme of common elements. Here we discuss the interventions themselves and evidence that children can in fact engage in science as practice in meaningful and productive ways. We do so to underscore the empirical basis of this work before going more deeply into details about the practices themselves. After providing a broad overview of the interventions and summarizing the evidence that K-8 students can in fact engage in science as practice in meaningful ways, in the next major section we return to finer-grained characterizations of what science as practice looks like in the classroom, and how it can be supported.1

Designing and Conducting Empirical Investigations in K-8 Classrooms

One approach to science as practice is teaching students to design and conduct empirical investigations. Testing ideas by gathering empirical evidence is a mainstay of science education. Researchers have found that, with appropriate instruction, K-8 students can engage in making hypotheses, gathering evidence, designing investigations, and evaluating hypotheses in light of evidence, and that in the process they can build their understanding of the phenomena they are investigating (Crawford, Krajcik, and Marx, 1999; Geier et al., in press; Kuhn, Schauble, and Garcia-Mila, 1992; Lehrer and Schauble, 2002; Metz, 2000, 2004; Schneider et al., 2002).

Contrary to current practice, which provides students with narrowly conceived, even misleading opportunities to “do science” (e.g., focusing exclusively on validating theories by following lockstep laboratory experiments or doing activities with no clear intellectual goal), these instructional programs engage children in designing and conducting scientific investigations and answering complex questions. These investigations take place over several weeks or months and require careful attention to students’ initial and emerging understanding of the phenomena and instruction designed to gradually build their knowledge and skills. Metz (2004), for example, reported on second, fourth, and fifth grade students’ efforts to design and conduct scientific investigations in the context of a life science unit. The unit gradually built in opportunities for children to master research methods and instrumentation as they learned about animal behavior. After 6 to 7 weeks of instruction, children were invested in the problem, knowledgeable about the domain, and familiar with tools and research design. At that point they were asked to think about a new species (crickets) and to propose researchable questions that they could examine empirically. Metz found that, with strong instructional guidance, children could design and carry out their own investigations—posing questions, determining appropriate methods of inquiry, carrying out the study, and reporting and critiquing their own results.

Of course, students do not learn how to do science only over extended periods of time through highly integrated units of study. Some topics can be treated more discretely and students can make measurable gains in a few days of instruction and practice. An example is the Klahr and Chen (2003) report on a classroom-based experiment that tested instructional approaches to teaching a control-of-variables strategy. In a short instructional sequence, students investigated balls rolling down a ramp to determine the factors that influence the distance the balls will roll. Instruction began with an “exploration and assessment” phase, in which the children were asked to make comparisons to determine how different variables affected the distance the balls rolled after leaving the ramp. Students used a wooden ramp that allowed them to manipulate two variables: the pitch of the ramp (high or low) and the texture of its surface (rough or smooth). In this phase, the children gained a base level of understanding of the phenomena and the test apparatus and were given an opportunity to think about the problem. In this study the performance of students who received instruction far surpassed that of those who did not, and their gains were sustained over time and transferred to new problems.

Whether instruction aims at narrowly defined outcomes (as the Klahr and Chen study did) or long-term investigations and a range of integrated learning goals (as did Metz’s study), there is broad agreement that children need a base level of knowledge about a domain in order to work in meaningful ways on scientific problems. Although the aims of the studies described here varied, they both suggest that students need familiarity with and interest in the scientific problems and that their learning requires explicit guidance. These interventions also underscore that children will often need clear statements about basic conceptual knowledge in order to succeed in conducting investigations and in learning science generally. These statements may originate from teachers “telling,” or from children reading texts, or hearing from other experts. While these intervention studies suggest that students can learn science across the strands through highly scaffolded and carefully structured experiences designing and conducting investigations, we also note that having students design and conduct investigations may be particularly difficult and require a very high level of teacher knowledge and skill in order for students to master content across the strands (see, e.g., Roth, 2002).

We elaborate on the features of problems that students investigate and the support they need to succeed in the next section. The emerging evidence suggests that learning how to design, set up, and carry out experiments and other kinds of scientific investigations can help students understand key scientific concepts and can provide a context for understanding why science needs empirical evidence and how tests can distinguish between explanations.

Argumentation, Explanation, and Model Building in K-8 Classrooms

Another common approach in the research literature is to create opportunities for students to engage in other aspects of scientific activity, such as argumentation, explanation, and model building. As scientists investigate empirical regularities in the world, they attempt to explain these regularities with theories and models, and to apply those models to new phenomena. Furthermore, the scientific community reaches consensus through a process of proposing and arguing about their own and others’ ideas through talk and writing, using the particular discourse conventions of the discipline. Some instructional interventions have brought these activities into the K-8 classroom. As students conduct investigations to develop and apply explanations to natural phenomena, they develop claims, defend them with evidence, and explain them, using scientific principles.

With a focus on explanation, students attempt to produce evidence that supports a particular account or claim (McNeill et al., 2006; Sandoval, 2003; Sandoval and Reiser, 2004). An emphasis on scientific argument adds the element of convincing peers of the explanation, responding to critiques, and reaching consensus (Bell and Linn, 2000; Driver, Newton, and Osborne, 2000; Duschl and Osborne, 2002; Osborne, Erduran, and Simon, 2004). A focus on model building adds the element of representing patterns in data and formulating general models to explain candidate phenomena (Lehrer and Schauble, 2000b, 2004; Schwarz and White, 2005).

Several elements emerge as critical in these approaches to argumentation, explanation, and model building. In these approaches, units of study are framed to address a question or set of questions about the natural world. The question may arise from benchmark lessons that elicit curiosity, from observations of perplexing natural phenomena, from a problem situated in the real world that can be addressed with scientific evidence, or from questions that scientists themselves are currently struggling to answer (Blumenfeld et al., 2000; Edelson, 2001; Linn et al., 1999). For example, Linn and colleagues used documented cases of frog mutation in particular ecosystems and an overall pattern of increased mutations nationwide to frame a middle school environmental science unit. In this case there was no definitive scientific explanation for the pattern of mutated frogs; instead, students were engaged in a genuine scientific quandary and explored several competing explanations, including two leading hypotheses in the scientific community. One leading explanation entailed a type of parasite that scientists believe can physically interfere with the natural development of frog limbs, and the other involved a pesticide which, with exposure to sunlight, may interfere with the hormonal signals that control limb development. Once questions are framed and students understand and buy into them, they conduct investigations whose purpose is to explore one or more possible claims about the question. Dealing with authentic scientific debates required that the educators and researchers involved in this intervention take great efforts to make the scientific problems accessible to students without sacrificing core scientific concepts required to understand ecosystem relations and the role of hormones in development. To focus and support student learning, teachers and instructional materials narrowed the focus and provided students with a handful of factors to investigate and a method, or a structured choice of methods, with which to explore the problem.

Interacting with Texts in the K-8 Classroom

Reading and texts are important parts of scientific practice and play an important role in science classrooms. Much of the research on students’ interaction with text has been conducted by reading researchers and is often not well situated in the context of science curricula and pedagogy. A few studies do, however, consider the role of text in conjunction with scientific inquiry and the consequences for students’ learning.

Concept-oriented reading instruction (CORI) is a program of research in which elementary students and teachers pursued the study of conceptual issues in science of the students’ choosing. In CORI, students were introduced to a complex knowledge domain, such as ecology or the solar system, for several weeks. They were then allowed to select a topic in that domain (such as a particular bird or animal) to study in depth and chose which books to read related to the topic. In the course of their inquiry about the topic, students received support in finding relevant resources, using those resources, and communicating what they learned to others. In conjunction with this text-based research, students participated in related inquiry, such as a habitat walk, specimen collection, feeder observations, feather experiments, and owl-pellet dissection. Students in CORI showed better reading comprehension of science-related texts and were more motivated to read about science than were students in traditional instruction (Guthrie et al., 2004).

In another line of research, Palincsar and Magnusson have explored students’ and teachers’ use of text in the context of guided inquiry science instruction (Palincsar and Magnusson, 2005). They describe the interplay of first- and second-hand investigations and the support they provide for the development of scientific knowledge and reasoning. In the latter stages of their research, the researchers developed an innovative text-genre (a scientist’s notebook) to scaffold students’ and teachers’ use of text in an inquiry fashion. The innovative text is a hybrid of exposition, narration, description, and argumentation in which the imagined scientist’s voice personalizes the text for the reader. Use of the text supported students’ learning about the topics being studied (reflection and refraction). However, engaging students to interact with text in an inquiry fashion required careful mediation by the teachers. Likewise, teachers needed to be supported in developing instructional practices that supported the use of text as inquiry.

Evidence of Student Learning

Thus far we have briefly described science as practice as an instructional approach that presents scientific skills as integrated: the skills of data collection and analysis are encountered in places where they can be useful for learning about a phenomenon. We have also contrasted this approach with prevailing patterns of current instructional practice that present content and process separately. The prevalent practice of separating process and content in instruction has often been premised on notions of what students can (and cannot) do. However, the evidence from instructional research suggests that students can in fact engage in science as practice in meaningful ways.

Elementary Grades: Inquiry and Models

The study of the inquiry skills of elementary school students by Metz (2004), described above, situates children’s learning of investigating skills in the context of a study of animal behavior. In these interventions, students develop questions, discuss ways to operationalize their questions in observations, and then collect data, interpret the data, and debate conclusions. In this work, students consider and critique different interpretations of data, and consider such factors as how different measurement or experimental procedures they or other students have chosen could affect what the data reveal about the underlying question. In this way, students exhibit some proficiency in coordinating theory and evidence, distinguishing between the intuitive appeal of their conjectures (their “theory”), and what the evidence actually reveals about the truth of their conjectures.

In Metz’s analysis of investigations designed and conducted by a second grade class and a fourth-fifth split grade class, all of the students succeeded in designing and carrying out investigations. Furthermore, more than 70 percent of the second graders and 87 percent of the fourth and fifth graders demonstrated knowledge that their research was in some respects “uncertain,” a precondition of posing scientific questions and an inevitable feature of scientific work. Upon reflection, 80 and 97 percent of these students, respectively, posited a strategy to address the uncertainty in their research design. Metz’s findings contrast sharply with views that young children cannot conduct scientific investigations or that they are necessarily bound to concrete experiences with natural phenomena.

Similarly, Lehrer and Schauble have worked with elementary school teachers to support students in data modeling practices (Lehrer, Giles, and Schauble, 2002; Lehrer and Schauble, 2000a, 2004, in press). In this approach, students are involved in developing questions for investigation, deciding how to measure the variables of interest, and developing data displays to represent their results. A focus of the approach is involving students in grappling with the need to represent their data in ways that communicate what they believe the data show about the question of interest, rather than giving students ready-made procedures for graphically representing their data. Students create representations and debate their relative merits for helping analyze and communicate their findings. They then revise the representations and use them as a tool to analyze the scientific phenomenon. These representations become more abstract and model-like and less literal over time. In one study, fifth grade students developed graphical representations to analyze naturally occurring variation in growing plants (Lehrer and Schauble, 2004). These students were able to develop representations that captured the properties of the distributions, and they were able to use these tools in designing and conducting investigations of the effects of such variables as light and fertilizer on plant growth. The focus on the meaning of the data representation and its use to communicate among the community of students seemed to help learners develop more sophisticated understandings of distribution as a mathematical idea and of the biological variation in their samples that it represents.

Middle Grades: Problem-Based and Conceptual Change Approaches

In the middle grades, one common approach to engage students in the practices of science is problem-based or project-based science (Blumenfeld et al., 1991; Edelson, Gordin, and Pea, 1999; Edelson and Reiser, 2006; Kolodner et al., 2003; Krajcik et al., 1998; Reiser et al., 2001; Singer et al., 2000). In these approaches, a research question about a problem can provide the context for extended investigations. Students learn the target science content and processes in the context of pursuing that question. For example, students learn about the particulate nature of matter and chemical reactions while investigating the quality of air in their community (Singer et al., 2000), or they learn about how species interact in ecosystems while investigating a mystery of what killed many plants and animals in a Galapagos island system (Reiser et al., 2001). Characteristics of this approach include establishing a need for the target understanding, through a problem students find compelling (Edelson, 2001), often a real-world application. Students then investigate the problem context and attempt to apply their findings to address the original problem. Often the projects include a culminating activity in which students apply what they have learned to address the problem, for example, making a presentation or developing a poster to communicate their findings. Culminating activities provide students the opportunity to reflect on their experiences and apply their scientific understanding, for example, discussing atmospheric phenomena to argue about possible causes of global warming, or analyzing forces and motion to redesign and test model vehicles and their propulsion systems (Kolodner et al., 2003).

A slight variation on problem-based approaches is to frame more purely scientific questions, focusing instruction directly on changing students’ conceptual understanding of core scientific ideas like atomic structure, speciation, and the nature of matter. These approaches stem from the research (reviewed in Chapter 4) that characterizes the types of conceptual changes that learners undergo (acquiring new concepts; elaborating existing conceptual structures; restructuring a network of concepts; or adding new, deeper levels of understanding) and discusses strategies for effecting conceptual change. A common thread across these programs of instruction is a strong metacognitive component.

Typically, activities are introduced to make students aware of their initial ideas and that there may be a conceptual problem that needs to be solved. A variety of techniques may be useful in this regard. Students may be asked to make a prediction about an event and give reasons for their prediction, a technique that activates their initial ideas and makes students aware of them. Class discussion of the range of student predictions emphasizes alternative ways of thinking about the event, further highlighting the conceptual level of analysis and creating a need to resolve the discrepancy. In addition, gathering data that expose students to unexpected discrepant events or posing challenging problems to students that they cannot immediately solve are further ways of sending signals that they need to stop and think, step outside the normal “apply” conceptual framework mode, to a more metaconceptual “question, generate and examine alternatives, and evaluate” mode.

Conceptual change shares several of the features of problem-based learning described above. In conceptual change approaches, teachers make complex scientific problems meaningful to students from the outset of study and integrate multiple strands of proficiency. They then provide students with pieces of the problem that will allow them to make incremental progress in understanding a large, complex area of science over weeks or months. The problems—whether practical, applied, or conceptual—require the integration and coordination of multiple ideas and aspects of scientific practice.

Research on these varied approaches to teaching science as practice reveals promising results. First, there is much evidence that, with appropriate support, students engage in the inquiry, use the tools of science, and succeed in complex scientific practices. For example, students engaged in problem-based learning succeed in working with complex primary data sets to uncover patterns in data and develop complex scientific arguments supported with evidence (Reiser et al., 2001). They can use scientific visualization tools to analyze primary data sets of atmospheric data and explain patterns of climate change (Edelson, 2001; Edelson, Gordin, and Pea, 1999). There is also some evidence that these project-based experiences can help students learn scientific practices. Kolodner et al. (2003) found that middle school students who practiced inquiry in several project-based science units performed better on the inquiry tasks of scientific practice (as measured by performance assessments) than students from traditional classrooms (Quellmalz et al., 1999). Students in project-based science classrooms performed better than comparison students on designing fair tests, justifying claims with evidence, and generating explanations. They also exhibited more negotiation and collaboration in their group work and a greater tendency to monitor and evaluate their work (Kolodner et al., 2003).

Analyses of students’ content learning also reveal the promise of these approaches for their mastery of scientific principles. Conceptual change researchers have found that across the K-12 grade span, involving children in cycles of model-based reasoning can be a highly effective means of building their deeper conceptual understandings of core scientific principles (Brown and Clement, 1989; Lehrer et al., 2001; Raghavan, Sartoris, and Glaser, 1998; Smith et al., 1997; Stewart, Cartier, and Passmore, 2005; White, 1993; Wiser and Amin, 2001). Problem-based approaches have demonstrated that students succeed in learning complex scientific content as represented in state and national standards, using assessments like the National Assessment of Educational Progress (NAEP) and standardized state tests. For example, Rivet and Krajcik (2004) found that students in a lower income urban district achieved significant gains in both science content (e.g., balanced forces, mechanical advantage) and inquiry process skills, as measured by pre- and posttest achievement items based on state assessments and items from the Trends in International Mathematics and Science Study.

There is also some evidence of the scalability of the approach. Marx and his colleagues (2004) examined the learning gains for 4 project-based units enacted in a school district across 3 years. Again, using curriculum-based test items designed to parallel those on state and NAEP assessments, they found significant learning gains (more than 1 standard deviation in effect sizes) on both content and process items for all four units. These gains persisted and even increased across years of enactment, as the intervention scaled to 98 classrooms and 35 teachers in 14 schools. In more recent work, this research group has compared performance on the high-stakes state assessments for students in project-based classrooms with those of the rest of the district, again focusing on students from the lower socioeconomic distribution in this urban district (Geier et al., in press). Project-based students from seventh and eighth grade achieved higher content and process scores than their peers and had significantly higher pass rates on the statewide assessment. The effects of participation in the project-based classrooms were cumulative, with higher scores associated with more exposure to project-based instruction.

Taken together, these results demonstrate that instruction that situates science as practice and that integrates conceptual learning can have real benefits for learners. Students at both elementary and middle school levels can succeed in engaging in science and in learning the science content that is encountered in these contexts. The challenges students have with epistemology and coordinating theory and evidence shown in some studies do not arise in the same way in these very supportive classrooms. An important aspect of these designs is that they contain very carefully crafted support for the scientific practices. In the next sections we look more closely at practices that may help science learners master target concepts and practices.

ELEMENTS OF PRACTICE

We have argued that children should engage in meaningful problems in science class and experience science as practice and that when they do, they can realize tremendous advances in their understanding and ability to use science. Here we provide a finer grained description of elements of practices students can engage in that support their learning.

Research on the professional practices of scientists reveals a number of interacting activities that characterize their engagement (e.g., Latour, 1980; Longino, 1990; Nersessian, 2005). Scientists talk through problems in real time, through publication, and through less formal written venues, such as lab books, email exchanges, and colloquia. They engage in an iterative process of argumentation, model building, and refinement. In some classrooms, students are engaged in several core practices that resemble scientific practice. Just like scientists, students ask questions, talk and write about problems, argue, build models, design and conduct investigations, and come to more nuanced and empirically valid understandings of natural phenomena. As we’ve said, K-8 students are neither scientists nor blank slates. They have a store of life experiences and intellectual resources, but they lack content expertise, refined knowledge of investigative methods, familiarity with and acceptance of scientific norms, and deep experience working with peers on scientific problems. To do meaningful scientific work in classrooms, they require strategic supports, input, and guidance from teachers and curriculum materials.

Research reveals both the promise and challenges of teaching science as practice. As instruction taps their entering knowledge and skills, students must reconcile their prior knowledge and experiences with new, scientific meanings of concepts, terms, and practices. Similarly, they may enter classrooms with very limited or inaccurate views of science: that science is unchanging, that experiments are portals to uncovering truth, and that learning science means merely accumulating facts (Carey et al., 1989). In this section, we discuss three key features of K-8 science practice that require carefully crafted support and instruction. As students wrestle with meaningful scientific problems they (1) engage in social interaction, (2) appropriate the language of science, and (3) use scientific representations and tools. These are features that are central to scientific practice and require that teachers and instructional materials provide clear guidance and support for learners as they acquire these practices.

Science in Social Interactions

Social interaction is a central feature of both scientific practice and productive learning generally, and accordingly it plays an important, specialized role in K-8 science learning. As noted in Chapter 2, social studies of science describe a highly interactive and social practice of bench scientists in which argumentation—articulating and communicating understandings, testing ideas in a community, giving and receiving feedback, and processes for evaluating and reaching consensus—is a central feature (e.g., Latour, 1980; Longino, 1990; Nersessian, 2005). Prior reviews have also identified the importance of social interactions for learning generally. The National Research Council report on advanced study of math and science in high school, for example, found that “socially supported interactions can strengthen children’s ability to learn with understanding” (National Research Council, 2001). The research studies of social interaction in K-8 science classrooms reveal both the unique challenges of drawing on and teaching productive social interaction and the promise of seriously attending to social interactions.

Children enter school with a range of resources that can be tapped to support meaningful social interaction. They also bring habituated ways of interacting with their peers that often run contrary to desirable productive social interactions that sustain science learning. For example, while science practice entails argumentation as a process for refining knowledge claims, students may view argumentation in a different light. They may see arguments as unpleasant experiences. Children may also view argument as something that is won or lost on the basis of status and authority (e.g., bigger kids may be more persuasive in a playground dispute irrespective of evidence and logic), rather than on its logical or empirical merits. Furthermore, traditional school values, such as competition among students, emerge in tension with scientific values of comparing results among peers to explore a factor’s effect on other variables (Hogan and Corey, 2001).

We can expect that students will need instruction in how to work on science problems collectively. National data suggest that opportunities for meaningful social interaction are limited across U.S. student groups. These may be particularly infrequent for nonmainstream students, students in urban schools, English-language learners, and students with disabilities (Gilbert and Yerrick, 2001; Palincsar and Magnusson, 2005; Rodriguez and Berryman, 2002).

When educators succeed in creating a community of learners, in which students see their goal as one of contributing to a community understanding of scientific problems, students can reap cognitive, social, and affective benefits. For example, student learning from hands-on investigation is dramatically improved when they also present their ideas and arguments about investigations to their peers (Crawford, Krajcik, and Marx, 1999; Krajcik et al., 1998). Debating with peers can help make scientific tasks more meaningful, lead to more productive and conceptually rich classroom dialogue, and improve conceptual mastery (Brown and Campione, 1990, 1996; Herrenkohl and Guerra, 1998; Herrenkohl et al., 1999; Lehrer and Schauble, in press).

The benefits of rich social interactions apply to the range of students that populate K-8 classrooms. The program of research at the Cheche Konnen Center has demonstrated that urban English-language learners can effectively engage in high-level scientific reasoning and problem solving if taught in ways that respect their interests and modes of social interaction (e.g., Ballenger, 1997; Hudicourt-Barnes, 2003; Warren et al., 2001). For example, Hudicourt-Barnes used her knowledge of the traditional Haitian form of talk called bay odyans (chatting) to foster arguments or diskisyon (discussion) in science classrooms for Haitian students. She worked with other members of the Cheche Konnen Center to help poor bilingual students build on their interest in talking and in exploring phenomena in the world by using their indigenous form of argument (and their interests, e.g., in African drums) as a link to more conventional scientific investigations of the physics of sound, the reproductive cycle of snails, and the causes of mold. The message that culturally diverse students can participate in meaningful science discussion is echoed by Lemke (1990).

The Specialized Language of Science

Communication and argumentation about scientific ideas involve characteristic uses of language defined by the discipline: “controlled experiments,” “trends in data,” “correlation versus causation.” Scientific discourse also requires use of special patterns of language, which enable individuals to identify and ask empirical questions, describe the epistemic status of an idea (hypothesis, claim, supported theory), critique an idea apart from its author or proponent, and specify types of critiques (e.g., concerns about a claim versus claims about evidence). For K-8 students, each of these kinds of communication may require learning new uses of language. Thus, while scientific language skills can be considered important learning goals in their own right, specialized language can also help students perform the activities of scientific practice (Lemke, 1990; Moje et al., 2001; Rosebery, Warren, and Conant, 1992).

Disciplinary language can carry specialized, technical meanings. As mentioned in Chapter 2, some words may have nonscientific, lay meanings that conflict with their scientific meanings (e.g., theory). Students need opportunities to master the specialized meanings of scientific words and to sort these from their nonscientific meanings. Using technical language appropriately may be particularly trying for students who bring different ways of using language into academic settings (Rosebery, Warren, and Conant, 1992). An important strand of instructional research is the attempt to support more productive ways of using scientific language. Such efforts involve attempts to bridge learners’ ways of using language and more normative scientific discourse in doing scientific activities by supporting their language interactions (Moje et al., 2001; Rosebery et al., 1992).

Another area of research probes efforts to make explicit the social roles and associated language that govern students’ interactions in scientific practice (Herrenkohl and Guerra, 1998; Herrenkohl et al., 1999; Hogan and Corey, 2001; Palincsar, Anderson, and David, 1993). Given the challenges that learners face in acquiring modes of discourse associated with science, researchers have analyzed the effects of teaching distinct roles to individuals and assigning individual students to a particular role.

Work with Scientific Representations and Tools

Finally, the representation of ideas is a central part of scientific work that carries over to instruction and is evident across programs of research on instruction. Scientists use diagrams, figures, visualizations, and mathematical representations to convey complex ideas, patterns, trends, and proposed explanations of phenomena in compressed, accessible formats. These tools require expertise to be understood and to be used to reason about underlying scientific phenomena (Edelson, Gordin, and Pea, 1999; Gordin and Pea, 1995; Lehrer and Schauble, 2004). As with the social interaction and discourse aspects of practice, work with representations and tools poses challenges for learners, but also offers promise as a vehicle to more effectively support learners and bridge the resources they bring to the classroom and more sophisticated scientific practices.

Challenges arise when representations such as graphing are taught procedurally. Current instruction often underestimates the difficulty of connecting work with scientific representations to reasoning about the scientific phenomena they represent. To exploit their utility, students need support in interpreting and creating data representations that carry meaning. Access to scientific data in the form of data sets, data collected through observation and experimentation, interaction with simulations, and visualizations can become an important part of providing opportunities for students to experience and reason about scientific phenomena.

SUPPORTING THE LEARNING OF SCIENCE AS PRACTICE

Having laid out central features of doing science in K-8 classrooms and the challenges that learners face, we now shift our focus to examine the ways in which teachers and instructional materials can act to support student learning. Research has uncovered several types of complementary strategies that can be part of instructional support for students learning science as practice. The areas of support build on what is known about learning in general and about science in particular. In this section we discuss the means of supporting science learning as students engage in science as practice—designing and conducting investigations, developing arguments, and building and refining models and explanations.

Our discussion of support for student learning relies heavily on forms of guidance that are, in part, embedded in curriculum materials. However, this is not to suggest that teachers are somehow less important to the process. On the contrary, no system of instruction can operate without skillful teachers. Curriculum materials, specific instructional approaches (project-based science, coherent instruction focused on conceptual change), and software tools, such as scaffolded simulations and visualization tools, offer useful structure to student learning experiences, but they cannot dictate learning. In all these examples, the teacher plays a critical role in realizing these designs. Even if the intervention is represented by carefully specified curriculum materials (e.g., Blumenfeld et al., 2000; Singer et al., 2000), the teacher plays a role in how the instructional materials are enacted. In most of these designs, teachers need to carefully orchestrate classroom discussions to establish research questions; consider hypotheses; establish classroom norms for evidence; compare results; help elucidate, question, and critique conceptual models; and so on.

In all of these cases, teachers’ beliefs and understandings of the discipline and of the pedagogy shape how they interpret and put the design ideas of the materials into action (Ball and Cohen, 1996; Clandinin and Connelly, 1991). For example, Schneider, Krajcik, and Blumenfeld (2005) found a range of enactments of critical aspects of the project-based science approach (such as attention to students’ prior ideas), resulting from particular teachers’ interpretations of the materials. Some of these adaptations diverged from some of the important instructional characteristics intended by the designers. Yet it is not possible to script these interactions or to embed all possible alternatives in “teacher-proof” curriculum materials (Doyle and Ponder, 1977). Instead, these approaches call for careful attention to teacher learning, perhaps through researcher-teacher partnerships (as in the early design studies of an approach) or through more formalized professional development programs as interventions develop (Blumenfeld et al., 2000; Marx et al., 1997). Teacher learning is discussed further in Chapter 10.

Sequencing Units of Study

As discussed above, science as practice frames meaningful problems that depict complex phenomena and require that students master and coordinate a range of concepts and practices. As students begin to wrestle with these problems, teachers and instructional materials necessarily provide an important sequencing function. Students cannot do everything at once from the start. After framing a complex problem and assessing students’ entering capabilities to work on it, the teacher must adjust instruction to focus on smaller pieces of the problem at hand. While students are always working in the context of a large, complex problem, throughout the unit instruction emphasizes smaller, manageable pieces in their daily classroom experiences.

Let us consider how sequencing works by briefly examining the BGuILE middle school Struggle for Survival unit, a 6- to 7-week classroom examination of core evolutionary concepts through an investigation (Table 9-1). In this unit, “students learn about natural selection by investigating how a drought affects the animal and plant populations on a Galapagos island. Students can examine background information about the island, read through field notes, and examine quantitative data about the characteristics of the islands’ species at various times and points to look for changes in the populations” (Reiser et al., 2001, p. 275).

While from the outset this unit frames the large-scale, complex problem of explaining the impact of a drought on plant and animal populations, it unfolds over four phases, which are sequenced to gradually ratchet up the demands of the learning experiences and the sophistication of students’ reasoning about core concepts. The first phase (10 classes) sets the stage for the study by discerning students’ entering knowledge of natural selection and providing requisite background knowledge (about ecosystems and the theory of natural selection) and building student motivation. In the second phase (5 classes), students learn background information specific to the Galapagos investigation. They learn about the Galapagos Islands and the methods scientists use to study ecosystems. They generate initial hypotheses, work from a small data set, and learn about the computer system they will use in the major investigation.

TABLE 9-1 The Struggle for Survival Middle School Curriculum

| Phase | Activities |
|---|---|
| Phase A: General Staging Activities (10 Classes) | Staging activities provide background knowledge and motivation for the investigation. Brainstorming activities reveal what students believe and understand about island ecosystems. Activities include a geography game using characteristics of tropical islands as clues, student research on how animals are adapted to the local ecosystem of an island, a background video, and reading on Darwin and the Galapagos. |
| Phase B: Background for Investigations (5 Classes) | Activities focus directly on the Galapagos ecosystem and understanding how to investigate ecosystem data. Activities include a video introduction to the Galapagos and the methods scientists use to study the ecosystem, brainstorming about hypotheses, and a mini paper-based investigation in which students work with a small data set from the software and make a graph that backs up a claim about the data. |
| Phase C: Software Investigations (10 Classes) | Students investigate data using the Galapagos finches software environment, documenting their developing explanations as they progress. At the midpoint, student teams pair up and critique each other’s explanations. |
| Phase D: Presenting and Discussing Findings (6 Classes) | Student teams prepare their reports. Each team presents their findings, and the class analyzes key points of agreement and dissension. |

SOURCE: Reiser et al. (2001).

Only after these 15 lessons lay important conceptual groundwork, provide justification for the study of ecosystems, and build the intellectual tools and motivation that the students need, do they themselves conduct investigations of the natural selection data set. In the third phase (10 classes), students explore the data set and generate explanations for observed patterns of change in the finch populations and critique the explanations of their classmates. In the fourth phase (6 classes), student teams prepare reports, present findings, and analyze key points of agreement and disagreement across reports.

Such carefully sequenced experiences can provide an intelligible roadmap for student learners. At each turn, they develop important elements of scientific practice as they wrestle with evidence, consider different ways of looking at phenomena and interpreting evidence, and work collectively to determine what they understand and which interpretations they find compelling. Students need guidance, including explicit and direct guidance, as well as support that helps them develop the tacit knowledge of scientific practice that will inform judgments and decisions they make as they do science. We now turn to discussion of the mechanisms that teachers and curriculum designers can use to provide support to students as they work on tasks.

Embedding Instructional Guidance in Students’ Performance of Scientific Tasks

Learners face many obstacles in learning science as practice, and they require support in order to engage in it productively. For example, in conducting and interpreting science, students often confuse evidence with its interpretation (the “theory/evidence” confusion), are unfamiliar with the strategy of controlling variables in order to design experiments to test a hypothesis, and do not continually reevaluate hypotheses in light of new evidence. Their entering understandings of and experiences with the physical, biological, and social worlds may confound their efforts to master new knowledge. Their prior exposure to science, including science instruction, may leave them with a distorted impression of the scientific enterprise. Explicit support is required to help students learn the practices, the concepts, and the very nature of science. Students left free to explore, as in pure “discovery learning” approaches, may continue to face these obstacles, interfering with their ability to learn through inquiry. Simple experience with inquiry alone does not lead to acquisition of better experimentation skills or conceptual mastery (Roth, 1987; Klahr, 2000). Students need firsthand experiences working on meaningful scientific problems, as developing expertise in a discipline entails developing more sophisticated strategies for solving problems (VanLehn, 1989) and much of the knowledge involved in solving problems is tacit. Thus pure explicit instruction will fail to produce awareness, understanding, or knowledge of appropriate use of important strategies (Greeno, Collins, and Resnick, 1996).

Instead of pure discovery or pure direct instruction, students need strategic “scaffolds” that embed instructional guidance in ongoing investigations to call attention to important decision points and to make data patterns more explicit (Box 9-1). Scaffolding has been defined broadly and used to mean different things; however, recent analyses of scaffolding emphasize the ways that support can be embedded in students’ ongoing performance of tasks to support learning (Hogan and Pressley, 1997; Linn, Bell, and Davis, 2004; Quintana et al., 2004; Reiser, 2004; Sherin, Reiser, and Edelson, 2004). We think of scaffolding as strategic support that enables students to do scientific tasks with a higher degree of sophistication than they could without it. Scaffolding may structure students’ interactions with one another or their thinking about a particular model, concept, or practice, or it may guide students’ interpretation of scientific tools and representations. It might also entail teachers telling things to students: giving them clear canonical explanations or facts to build upon.

BOX 9-1 Scaffolding

Scaffolding is ongoing guidance provided to students as they perform a task, which facilitates performance and learning. Scaffolding can be viewed as the additional support built around a core (baseline) version of a task to make it more tractable and useful for learning. Scaffolding is always defined relative to some assumed baseline version of the task (Sherin, Reiser, and Edelson, 2004).

The original scaffolding metaphor included the eventual removal or “fading” of the scaffolding. This aspect of scaffolding has yet to be thoroughly investigated. Most studies of scaffolding are not extensive enough to include the fading of scaffolds. A notable exception is the recent study by McNeill et al. (2006) of scaffolds for students’ evidence-based explanations. They found that middle school students in a 2-month project-based unit performed better on posttests requiring explanations if the scaffolding was gradually faded during the instructional unit, rather than if the scaffolding was continued throughout the entire unit. More research is needed to explore the time frame and approaches for fading scaffolds.

Various approaches to scaffolding scientific tasks have emerged in the literature. A central theme is to make a process or concept more explicit for learners by enabling them to do something they could not do without some crucial element provided through scaffolding. The elements might come through teacher actions, instructional materials, or actions of other students. Students might be cued to reflect, reminded to incorporate a key concept in their work, or prompted to reflect on their experiences. In this section, we describe three ways in which scaffolds can support students’ learning. Scaffolding can structure experiences to draw attention to the elements of scientific practice, provide guidance in students’ efforts to engage in social processes around scientific problems, and help them track the important conceptual aspects of the problems they are working on. Furthermore, these different approaches to scaffolding science learning can mutually reinforce one another and together provide necessary guidance, enabling students to perform in complex ways that they could not do without the scaffolding.

Scaffolding Scientific Process

When students learn science, they do not necessarily come to understand their experiences, observations, or science itself in ways that teachers and curriculum designers intend. In conducting investigations, students may ignore or choose not to believe unexpected results, rather than wondering why an unexpected result occurred and puzzling over how to interpret it (e.g., as an error in procedure or as an interesting result that should be tested further). Clear cues and guidance at strategic points in an investigation can prompt students to focus on the salient features of their experiences, observations, and the concepts they are working with to support critical engagement and movement toward desired learning outcomes.

One approach is to develop an instructional framework that can be presented and then used in ongoing fashion to structure students’ work, such as the evidence-based explanation framework developed by Krajcik and his colleagues (McNeill et al., 2006; Moje et al., 2004). While evidence-based explanations in science may have a very complex structure, this instructional approach identifies the most central elements and makes them explicit to students. These elements consist of the claim, the empirical evidence in support of the claim, and the reasoning that articulates why the evidence supports the claim. This instructional framework is developed with students’ input, as they learn first about evidence supporting claims and then consider how to organize a written or oral presentation to defend a claim. Teachers discuss the three elements with students, who then begin to use this framework to represent their written explanations in response to research questions. As they construct and refine these explanations, students use worksheets with scaffolding prompts that remind them of the elements and the criteria for them. This framework becomes a repeated structure that they use to guide their investigation, and it guides the synthesis of results into an explanation. Empirical studies of students’ explanations reveal that students using this instructional framework improve in their ability to cite relevant data and connect it with claims within their written explanations (McNeill and Krajcik, in press). A similar approach embedded in software tools rather than paper and pencil worksheets was used in the Knowledge Integration Environment (Linn, 2000). In these tools, a checklist of tasks specifying important steps in inquiry was provided to help students coordinate the different steps in the activity. Similar approaches have been explored for scaffolding prompts to help learners articulate their experimental designs (Kolodner et al., 2003).

Another approach is to structure the tools that students use to represent their ideas in order to make the important aspects of the task more explicit. This is apparent in several software tools for argumentation. For example, SenseMaker (Bell and Linn, 2000) provides a representation that helps students develop and record their arguments. Students explicitly identify relevant evidence and code it as supporting or refuting sets of competing claims. Belvedere (Toth, Suthers, and Lesgold, 2002) supports students in constructing argument graphs, in which claims and evidence are visually distinguished, and students construct a chain of reasoning that includes claims, subclaims, and their supporting evidence. These tools can help learners develop more accurate and elaborate arguments, focusing them on the distinctions relevant for the domain (such as claim or theory versus evidence).
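The idea of coding evidence against competing claims can be made concrete with a small sketch. The Python below is only an illustration of the general representation described above; it is not the SenseMaker or Belvedere data model, and the class name `Claim`, the function `tally`, and the example claims and observation are all invented for this purpose.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Claim:
    """One of several competing claims under discussion (hypothetical model)."""
    statement: str
    supporting: List[str] = field(default_factory=list)
    refuting: List[str] = field(default_factory=list)

    def code_evidence(self, evidence: str, supports: bool) -> None:
        """Record a piece of evidence as supporting or refuting this claim."""
        target = self.supporting if supports else self.refuting
        target.append(evidence)


def tally(claims: List[Claim]) -> Dict[str, Tuple[int, int]]:
    """Return (supporting, refuting) evidence counts for each claim."""
    return {c.statement: (len(c.supporting), len(c.refuting)) for c in claims}


# Invented claims and evidence, for illustration only.
soil = Claim("Plants gain most of their mass from soil")
air = Claim("Plants gain most of their mass from air and water")

observation = "Pot soil weighed almost the same after the plant grew"
soil.code_evidence(observation, supports=False)  # refutes the soil claim
air.code_evidence(observation, supports=True)    # supports the air claim

print(tally([soil, air]))
```

Keeping the same observation attached to both claims, once as refuting and once as supporting, mirrors the distinction between claim and evidence that these tools are meant to make visible.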

Scaffolding Social Interaction

We have discussed the promise and the complexity of social interactions in doing science. Scaffolding science learning through students’ social interactions can harness the complexity of a scientific task and students’ varied experiences and observations with it to build understanding in a student group. This type of approach has its roots in the reciprocal teaching approach to reading comprehension, which makes the process of comprehension explicit for learners (Palincsar and Brown, 1984). In reciprocal teaching of reading comprehension, for example, teachers model the important elements of comprehension, such as predicting, summarizing, and questioning, and then students begin to take on individual elements of the task. The task is essentially distributed among students, who share responsibility for its completion.

In elementary science classrooms, researchers have attempted to establish classroom versions of scientific communities, often called “learning community” approaches, beginning with the “community of learners” (Brown and Campione, 1990, 1994, 1996) and “knowledge building” (Scardamalia and Bereiter, 1991, 1994) approaches. Core to these approaches is the notion that students are working together to build their understanding of answers to questions; the models they build can be revised as new ideas are uncovered through research; proposal and critique are essential to testing ideas in the community; and the teacher’s role is to facilitate this process rather than provide authoritative answers to questions. The classroom designs attempt to realize these goals through various “distributed expertise” activity structures, such as creating research teams pursuing particular topics, jigsaw activities in which representatives of different teams (who have developed different expertise) come together in new teams to pursue new questions, and culminating activities in which teams present and critique one another’s findings.

In general, the empirical research uncovers both the challenges and the promise of these approaches. Students in elementary school classrooms participate successfully in these types of learning communities. They take responsibility and ownership of the questions they pursue, and they exhibit increasing focus on discipline-appropriate peer-to-peer discourse, such as justifying and critiquing ideas and their evidence (Scardamalia and Bereiter, 1991, 1994). However, the classroom interactions are not simple to facilitate, requiring professional development for participating teachers. They also take time to become established and develop into shared classroom norms. These interventions are usually year-long collaborations between schools and researchers.

Herrenkohl and her colleagues have explored using “intellectual roles” to help make tasks more explicit to elementary schoolchildren (Herrenkohl and Guerra, 1998; Herrenkohl et al., 1999). These approaches build on the idea of supporting a task by making the process explicit, through assignment of specific responsibilities or roles for particular individuals. For example, Herrenkohl and Guerra (1998), working with two fourth grade classrooms, identified intellectual roles corresponding to particular aspects of the investigation task, such as making or checking predictions, summarizing findings, and connecting findings to theories. As the investigation proceeded, the teacher established these as important aspects of the investigation, identifying them as important jobs or roles, to be divided among the group sharing their results and among the audience listening and critiquing. As in some of the scaffolding prompts described earlier, the teacher established particular questions to ask associated with each job. For example, audience members would ask a range of results questions, such as “What helped you find your results?” and “Did your group agree on your results?” Students used question prompts appropriate for their role, tailoring them to the current experiment and findings. In this way, the design attended to multiple elements of the scientific practice of investigation and argumentation, by associating the cognitive task of arguing for or critiquing an experimental result with particular types of social interactions and particular uses of language.

Herrenkohl and Guerra found that students were able to take on these roles and that they tailored their questioning as they became more sophisticated with the approach. Classroom discussions became more focused on important aspects of the scientific task (e.g., critiquing fit of evidence with hypothesis) and included more peer-to-peer rather than student-to-teacher interactions. Importantly, these peer interactions happened around issues, such as coordinating theory and evidence, that appear to be very challenging for students in the context of unstructured discovery or traditional instruction (Klahr, 2000; Kuhn, 1989; Kuhn, Amsel, and O’Loughlin, 1988).

Researchers exploring these approaches caution that these types of classroom participation structures, such as distributed expertise (Brown and Campione, 1994) and intellectual roles for science (Herrenkohl and Guerra, 1998), can become proceduralized, with students taking on particular jobs or asking questions in a superficial fashion. Hogan and Corey (2001) characterize science classrooms as having a “composite culture,” which emerges as the traditional norms of schools (such as doing work to get grades, expecting the teacher to know the answers, contributing to show the teacher that one knows the answer she has previously provided) interact with the new scientific norms of knowledge building (valuing evidence, seeing questions as open, etc.) that teachers and designers are attempting to create. They emphasize the need to establish a shared understanding of these norms through ongoing discussion, identifying the need, clarifying what it means in terms of responsibilities and ways of interacting, and reflecting as the practice proceeds.

Scaffolding Conceptual Models

Instructional supports can be designed with conceptual models or dynamic simulations that make science concepts more transparent for learners, helping them connect their prior understandings with more sophisticated scientific understandings. These scaffolds can remind learners of important concepts that they need to include in their work or draw their attention to important conceptual distinctions, problematizing these issues and focusing their attention in productive ways. Interactive simulations can also highlight key concepts, helping students see concepts within a network of interrelated ideas.

Scaffolding can help students examine, scrutinize, and critically appraise their understanding of key scientific concepts. Visualizations can help learners connect patterns in data to a better understanding of the scientific phenomenon. For example, White and Frederiksen (1998) developed Thinkertools, a software environment that allows users to examine Newtonian physics, facilitates experimentation, and focuses users on salient features of key concepts. Designed for middle grade users, this system allows students to create “what if” experiments that are difficult or impossible to create in the real world, such as contrasting the behavior of moving objects in an environment with or without friction or in which gravity can be turned on or off. Users can manipulate the mass of balls, as well as stationary objects, which can serve as barriers to balls as they move through space. Users can assign impetuses to balls. The environment can also automatically generate accurate measurements of time, distance, and velocity. Graphical representations of variables help users visualize the results of changes in the variables’ values. For example, moving balls can leave dot patterns as they move across the screen. This can make the velocity of a moving object more immediately salient, as slower traveling balls leave dot patterns that are closer together and faster balls leave dot patterns at larger intervals. Students also have access to analytic tools that enable them to slow or freeze time and closely examine how a given object is moving (e.g., rate, distance, direction). These features help students control their investigation and analysis and focus their observations while they explore underlying physical principles.
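The link between dot spacing and velocity can be illustrated with a short calculation. The Python sketch below is not the Thinkertools implementation; it assumes that a ball drops one dot per fixed time interval and, as a simplification, that friction acts as a constant deceleration. The function names `dot_trail` and `spacings` and all parameter values are hypothetical.

```python
from typing import List


def dot_trail(speed: float, friction: float = 0.0,
              dt: float = 1.0, steps: int = 5) -> List[float]:
    """Positions at which a ball leaves a dot, one per time interval dt.

    friction is modeled (as an assumption) as a constant deceleration;
    the ball's speed never drops below zero.
    """
    position = 0.0
    dots = [position]
    for _ in range(steps):
        position += speed * dt                    # distance covered this interval
        dots.append(position)
        speed = max(0.0, speed - friction * dt)   # slow down if friction is on
    return dots


def spacings(dots: List[float]) -> List[float]:
    """Gaps between consecutive dots."""
    return [b - a for a, b in zip(dots, dots[1:])]


slow = dot_trail(speed=1.0)                   # frictionless, slow ball
fast = dot_trail(speed=3.0)                   # frictionless, fast ball
braking = dot_trail(speed=3.0, friction=1.0)  # same ball with friction on

print(spacings(slow))     # evenly spaced, close together
print(spacings(fast))     # evenly spaced, farther apart
print(spacings(braking))  # gaps shrink as the ball slows
```

Because the dots are dropped at equal time intervals, each gap equals the speed multiplied by the interval, so a reader can compare speeds directly from the spacing without taking any measurements.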

Similarly, computer-based visualization tools can help learners see patterns in data that support scientific views of phenomena. Often, “seeing the data,” that is, finding and interpreting coherent patterns, can be particularly challenging for students who have little familiarity with the content area and with using data in inductive ways. Scaffolds built into instruction, including computer simulations, can highlight for students the relationships between data patterns and possible explanations for phenomena.

Edelson (2001) has developed and tested computer visualization tools that enable middle school students to explore the impact of light entering the atmosphere on weather. Students use two software tools to conduct observations and observe patterns in data, from which they draw conclusions about the influence of geography on climate. WorldWatcher is a visualization and data analysis tool that is based on tools scientists use, but it is designed for learners (Edelson, Gordin, and Pea, 1999). This tool helps students see the tacit meanings that scientists see in tools and the representations that they use in the course of their work. It enables students, for example, to click on a region and automatically view numerical data. Or the software may provide links between two different weather maps, for example, by providing comparative data on average temperature for a given region during June and September. Close linking of multiple elements for students, visual representations of patterns, as well as simple summary statistics (e.g., cell-by-cell temperatures, average temperature on a given date) can help students uncover important relationships that underpin a scientific understanding of phenomena. The Progress Portfolio tool structures students’ reflections, allowing them to capture, annotate, and organize information and create presentations from their data (Loh et al., 2001). It also allows them to chart their progress through the investigation. As students view data in the WorldWatcher, they are cued to reflect on relevant parts of the data representation by the Progress Portfolio. For example, students examining the influence of large bodies of water on land temperature would make focused observations of coastal areas on the world map illuminated with colored heat bands. Then they would be prompted to examine specific geographic features, to record a pattern of data, and to draw conclusions from it. Used together, these tools can help students see patterns clearly and interpret them in light of the intended learning outcomes by focusing their observations and cueing them to interpret data in scientifically viable ways.

Supporting Articulation and Reflection

Articulation and reflection are mutually supportive processes that are at the core of the scientific enterprise, and they are critical to the four strands of scientific thinking. In scientific practice, constructing and testing knowledge claims require a focus on articulating those claims, that is, developing clear statements of how and why phenomena occur. Argumentation requires articulating claims and teasing apart when there is agreement or divergence among different claims.

Reflection is critical to a complex cognitive process, such as managing an investigation. Researchers have documented that children repeat experiments and forge local interpretations of current results without connecting to prior hypotheses (Schauble et al., 1991; Klahr, 2000). To harness their investment in experimentation and focus their interpretations, children need regular opportunities to reflect. Reflection helps students monitor their understanding and track progress of their investigations. It also helps them solve problems along the way: identifying problems with current plans, rethinking plans, and keeping track of pending goals.

Supports for articulation and reflection have been a focus of instructional guidance and scaffolding design efforts. For example, in the Thinkertools work, White and Frederiksen have demonstrated students’ increased suc-

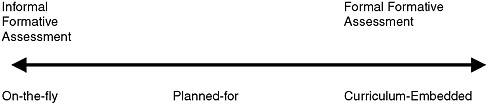

cess when asked to analyze and explain the products and process of their investigation (White and Frederiksen, 1998). Davis and Linn (2000) examined student learning in the context of a computer-based investigation that provided prompts asking students to reflect on their ideas as they engaged in investigations. They analyzed eighth grade students’ performance as they engaged in computer-based investigations in which they collected real-time data and performed simulations and experiments. As students read articles on the computer, for example, they were prompted to state the major claim of the piece. As they evaluated claims, they were prompted to provide concrete concerns and revise claims to more accurately reflect the evidence. Students who used prompts developed greater awareness of their own knowledge and were better able to take advantage of opportunities to learn and integrate their knowledge.