5

Specifying Questions and Locating Evidence: An Expanded View


KEY MESSAGES


A basic premise of the L.E.A.D. framework is that good decisions should draw on a sound body of relevant evidence, acquired by applying a systematic process for locating, appraising, and compiling information relevant to a major public health issue such as obesity. Evidence-based policy and practice imply that interventions aimed at reducing obesity or its risk in the general population are supported by the best available research evidence on their efficacy and population impact. Decision makers also frequently seek additional information that has direct utility within their local context. Such information may be related to costs, implementation, scalability, sustainability, and factors that could facilitate or impede the success of an obesity prevention intervention. Locating useful evidence requires a clear concept of the types of information that may be useful for a particular purpose, as well as an awareness of where that information can be found.

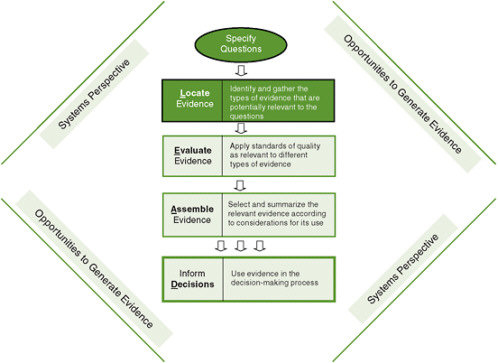

This chapter addresses fundamental issues related to the types of evidence useful for answering a range of user questions and how that evidence can be located and gathered (Figure 5-1). Criteria for screening evidence are also addressed as background for the discussion of evidence evaluation in Chapter 6. The chapter begins by presenting an evidence typology to demonstrate how particular questions can be addressed with various methods. It then reviews potentially useful sources of evidence. The chapter concludes with a discussion of considerations in gathering the evidence.

FIGURE 5-1 The Locate Evidence, Evaluate Evidence, Assemble Evidence, Inform Decisions (L.E.A.D.) framework for obesity prevention decision making.

NOTE: The elements of the framework addressed in this chapter are highlighted.

SPECIFYING QUESTIONS: AN EVIDENCE TYPOLOGY FOR THE L.E.A.D. FRAMEWORK

As explained in Chapter 3, the L.E.A.D. framework is oriented to decision makers and researchers who are typically called upon to use evidence to make decisions that apply within and across different levels of a system. The system may be at the local, regional, or national level; it may be a health or education system, another type of institution, a whole community, or a subsystem of a community. Evidence needs may vary substantively depending on when, where, at what level, and under what conditions users of the evidence operate. Decision contexts frequently differ even within the same system. Evidence should therefore be appropriately matched to user needs.

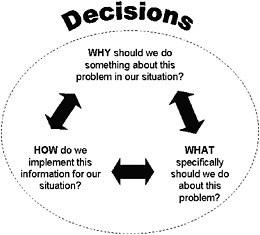

Locating a comprehensive pool of evidence useful for decision making is considerably facilitated when one starts with a focused and well-formulated set of guiding questions. The overall premise is that a problem is best grasped when examined from multiple perspectives with multiple forms of evidence, appropriately aligned with specific questions. Accordingly, the evidence typology of the L.E.A.D. framework identifies a set of guiding questions, adapting the approaches of the International Obesity Task Force framework and the evidence-based public health concepts outlined in Chapter 3 (see Figure 5-2): “Why” questions as shorthand for “Why should we do something about this problem in our situation?”; “What” questions as shorthand for “What specifically should we do about this problem?”; and “How” questions as shorthand for “How do we implement this information for our situation?” This typology is detailed in the following subsections.

“Why” Questions

Locating evidence guided by “Why” questions helps decision makers characterize the reasons for taking action on a public health issue in their particular region or locale.

FIGURE 5-2 Questions that guide the gathering of evidence.

Some users of the framework may be tempted to skip this step, thinking they already know why they need to take action on obesity. However, the committee strongly recommends this as a first step in intervention planning. Table 5-1 lists some examples of areas of concern addressed by “Why” questions and the corresponding evidence that might be gathered.

“Why” questions help decision makers assess a public health problem in their particular region and locale. For example, a study characterizing determinants of obesity in an urban area identified individual and neighborhood contexts that were important considerations in formulating obesity-related policies (Black and Macinko, 2010).

To answer “Why” questions about obesity and other public health problems, decision makers require specific, often quantifiable evidence with which to evaluate the scope and severity of the problem, both in an absolute sense and relative to other issues requiring action. The evidence secured may help define characteristics of high-risk populations; it may also help develop benchmarks for setting goals and tracking progress. In cases where decisions have already been made, this kind of information is frequently needed to undergird and justify those decisions.
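Much of this scope-and-severity evidence reduces to a few simple ratios. The following is a minimal sketch, assuming a local survey that records, for each demographic group or area, how many respondents were classified as obese. It computes prevalence, the absolute and relative gaps between a higher-risk group and a reference group, and the distance from a locally chosen benchmark. The class and function names, group labels, counts, and the 25% target are hypothetical illustrations, not values from this report.

```python
from dataclasses import dataclass


@dataclass
class GroupCounts:
    """Hypothetical survey counts for one demographic group or area."""
    name: str
    obese: int     # respondents classified as obese
    surveyed: int  # respondents with valid height and weight

    @property
    def prevalence(self) -> float:
        return self.obese / self.surveyed


def disparity(group: GroupCounts, reference: GroupCounts) -> tuple[float, float]:
    """Return the absolute gap (difference) and relative gap (prevalence ratio)."""
    p, p_ref = group.prevalence, reference.prevalence
    return p - p_ref, p / p_ref


def gap_to_target(group: GroupCounts, target_prevalence: float) -> float:
    """Percentage points by which prevalence exceeds a locally chosen target."""
    return max(0.0, group.prevalence - target_prevalence) * 100


if __name__ == "__main__":
    area = GroupCounts("neighborhood A", obese=330, surveyed=1000)
    city = GroupCounts("citywide", obese=220, surveyed=1000)
    diff, ratio = disparity(area, city)
    print(f"absolute gap: {diff * 100:.1f} points; prevalence ratio: {ratio:.2f}")
    print(f"above 25% target by {gap_to_target(area, 0.25):.1f} points")
```

Reporting both the absolute and the relative gap is deliberate: the same ratio can correspond to very different numbers of affected people depending on baseline prevalence.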

Studies that generate this type of evidence may include sample surveys or compilations of administrative data for monitoring and surveillance, population trend analysis, or studies of health impact, as well as studies that produce cost estimates and projections of future burden. For example, evidence that provides insight into obesity-related health care costs and lost productivity is helpful for comparing the economic burden of obesity with that associated with other health problems (Chenoweth and Associates, 2009; Finkelstein et al., 2009; Wolf and Colditz, 1998). Finkelstein and colleagues (2009), for instance, use data from the 1998 and 2006 Medical Expenditure Panel Surveys to estimate health care costs attributable to obesity for all health care payers. When programs are competing for funding, such an evidence pool is necessary to support decisions.

TABLE 5-1 Areas of Concern and Examples of Evidence Needed: “Why” Questions

| Area of Concern | Examples of Evidence Needed |
|---|---|
| Public health situation | |
| • Health burden | Recorded levels of illness, disease, or death related to obesity |
| • Frequency/incidence of disease or risk factor | Number of people or rate of new cases affected by obesity or obesity-related diseases |
| • Social or environmental determinants of disease or risk factor | Number of catchment areas that do not have a supermarket or food stores offering healthful foods or suitable options for physical activity and exercise |
| • Trends | Rates of increase of obesity, obesity-related diseases, or adverse social determinants |
| • Health disparities | Relative or absolute differences in risk among demographic groups or subgroups |
| Monetary and social costs | |
| • Health care costs | Estimates of public dollars currently spent on providing health care for obesity or related conditions |
| • Other societal costs | Estimates of dollars spent by or lost from the public or private sector due to consequences of obesity (e.g., employee absenteeism); estimates of other adverse outcomes associated with obesity with implications for social productivity |
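Finkelstein and colleagues derived their estimates from regression models fitted to national survey data; that method is not reproduced here. The sketch below swaps in a simpler, widely used back-of-the-envelope technique, Levin's population attributable fraction, to show how a local team might approximate the share of spending attributable to obesity. It assumes that per-person costs among obese residents are a fixed multiple (the cost ratio) of costs among nonobese residents; every input value is hypothetical.

```python
def attributable_fraction(prevalence: float, cost_ratio: float) -> float:
    """Levin's population attributable fraction.

    prevalence: share of residents who are obese (0 to 1)
    cost_ratio: per-person cost among obese residents divided by the
        per-person cost among nonobese residents (assumed constant)
    """
    if not 0.0 <= prevalence <= 1.0:
        raise ValueError("prevalence must be between 0 and 1")
    excess = prevalence * (cost_ratio - 1.0)
    return excess / (1.0 + excess)


def attributable_cost(total_cost: float, prevalence: float, cost_ratio: float) -> float:
    """Portion of total spending attributable to obesity under the PAF assumptions."""
    return total_cost * attributable_fraction(prevalence, cost_ratio)


if __name__ == "__main__":
    # Hypothetical inputs: $500 million in local spending, 30% prevalence,
    # obese residents costing 1.4 times as much per person.
    share = attributable_fraction(0.30, 1.4)
    print(f"attributable share: {share:.1%}")
    print(f"attributable cost: ${attributable_cost(500e6, 0.30, 1.4) / 1e6:.1f} million")
```

The formula follows from writing total cost as the sum of spending on nonobese and obese residents; the excess spending on obese residents, divided by that total, gives the fraction above.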

“What” Questions

Locating evidence to answer “What” questions helps decision makers assess the effects of interventions with the potential to yield the best health outcomes and to ascertain the optimal conditions for obtaining those outcomes. Table 5-2 lists some examples of areas of concern addressed by “What” questions and the corresponding evidence that might be gathered.

To answer “What” questions, decision makers require evidence on the effects or impact of particular interventions on specific health outcomes over the short or long term. This is a core issue in the selection of an intervention or set of interventions. Decision makers need to understand how interventions work to change environments or behavior and whether they have additional indirect effects, positive or negative. A key aspect of “What” questions is whether a particular intervention is sufficient alone or requires other interventions at the same or different levels to have any effect or the maximum effect.

TABLE 5-2 Areas of Concern and Examples of Evidence Needed: “What” Questions

| Area of Concern | Examples of Evidence Needed |
|---|---|
| Presumed mechanisms of intervention effects in target populations | |
| • Intervention theory or logic | Underlying assumptions (explicit or implicit) about how the intervention will improve health outcomes |
| • Causal pathways | Expected direct or indirect pathways linking the intervention to the outcomes at one or more levels and for different demographic groups |
| • Multiple causal levels for multiple influences | Intervening or interacting influences that might facilitate or hinder the effects in groups at risk |
| Effectiveness of intervention based on empirical studies or simulations | |
| • Links between intervention delivery and outcomes | Evidence that the intervention leads to the outcomes, including evidence of authentic and consistent implementation when effects were obtained |
| • Comparative outcomes | Effects of an intervention on the outcome in comparison with other intervention options or no intervention, including evidence on effects in different demographic groups |
| • Sustained effects | Evidence that effects of the appropriately implemented intervention are sustained over time |
| • Contextualized effects | Evidence of circumstances under which the evidence for effectiveness of the intervention is strongest, including evidence of other factors that influenced or interacted with the intervention (e.g., individual, family, community, or school factors) |
| • Unintended (unexpected) consequences | Positive or negative outcomes that lie outside the theory or underlying logic of the intervention |

The most informative study designs for generating evidence of impact are comparative experiments or approximations of experiments that allow for evaluation of the effects of an intervention against a comparison condition or control group. The control group provides a reference point for what might have occurred in the absence of the intervention. Without a comparison condition of some type, one cannot determine whether changes observed were actually due to the intervention or might have occurred anyway as a result of other influences that coincided with the intervention. Outcomes may be compared against those of another intervention thought to have no effect on the problem being studied or with an alternative intervention designed to address the same problem. Comparisons may be for a population overall but may also focus on specific subgroups, for example, to assess whether the effects of the intervention and the alternative are similar in higher- versus lower-risk groups. Outcomes at one point in time may also be compared with outcomes assessed in the same population previously (e.g., a historical comparison or time series approach).
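The role of the comparison condition can be made concrete with a difference-in-differences calculation, one common way (among several) to use a comparison group when outcomes are measured before and after an intervention. In this minimal sketch, the comparison group's change stands in for what would have happened to the intervention group without the intervention; that substitution is valid only under the assumption that both groups would otherwise have changed in parallel. The function name and the BMI values are hypothetical.

```python
from statistics import mean


def difference_in_differences(
    intervention_before: list[float],
    intervention_after: list[float],
    comparison_before: list[float],
    comparison_after: list[float],
) -> float:
    """Estimated intervention effect on the mean outcome.

    The comparison group's before-after change is subtracted from the
    intervention group's change, removing influences that affected both
    groups (this assumes the groups would otherwise have changed in parallel).
    """
    intervention_change = mean(intervention_after) - mean(intervention_before)
    comparison_change = mean(comparison_after) - mean(comparison_before)
    return intervention_change - comparison_change


if __name__ == "__main__":
    # Hypothetical BMI values for individual students in two sets of schools.
    effect = difference_in_differences([24.1, 25.3, 23.8], [23.9, 25.0, 23.5],
                                       [24.0, 25.1, 23.9], [24.4, 25.6, 24.2])
    print(f"estimated effect on mean BMI: {effect:+.2f}")
```

A naive before-after comparison of the intervention group alone would attribute every coinciding influence to the intervention; subtracting the comparison group's change is what guards against that.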

Studies that generate answers to “What” questions are referred to as impact assessments or outcome evaluations in the evaluation literature (Fitzpatrick et al., 2004; Rossi et al., 2004); they fall within the general category of effectiveness research in the social and behavioral sciences. The interventions evaluated might include programs, policies, laws, or some combination thereof operating at different levels of a region, community, organization, or institution. Kuo and colleagues (2009) provide a good example of a health impact assessment of a state law. Using published and unpublished data to model consumer response to point-of-purchase calorie postings at large chain restaurants, the authors quantify the potential impact of California’s state menu labeling law on population weight gain in Los Angeles County.
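Kuo and colleagues' model is not reproduced here. As a hedged illustration of the general approach, the sketch below assumes a simple static model: calories averted equal the number of adults, times chain-restaurant meals per adult per year, times the share of meals in which the patron responds to posted calories, times the calories forgone per responding meal, converted to pounds with the common 3,500-kcal-per-pound rule of thumb. That rule ignores metabolic adaptation and tends to overstate long-run weight change. The function name and every parameter value are hypothetical scenario inputs.

```python
KCAL_PER_POUND = 3500.0  # static rule of thumb (an assumption); overstates long-run change


def pounds_averted_per_year(
    adults: int,
    chain_meals_per_adult: float,
    share_responding: float,
    kcal_forgone_per_meal: float,
) -> float:
    """Hypothetical static model of annual weight gain averted by menu calorie posting.

    adults: adult population covered by the policy
    chain_meals_per_adult: chain-restaurant meals per adult per year
    share_responding: fraction of meals in which the patron orders fewer calories
    kcal_forgone_per_meal: calories forgone in each responding meal
    """
    kcal_averted = adults * chain_meals_per_adult * share_responding * kcal_forgone_per_meal
    return kcal_averted / KCAL_PER_POUND


if __name__ == "__main__":
    # Scenario analysis over assumed response rates; all values are illustrative.
    for share in (0.05, 0.10, 0.20):
        pounds = pounds_averted_per_year(1_000_000, 40, share, 100)
        print(f"{share:.0%} responding -> {pounds:,.0f} pounds averted per year")
```

Because consumer response is the least certain input, running the model across a range of response rates, as in the loop above, is usually more informative than a single point estimate.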

Additional evidence of interest for “What” questions can be obtained by study designs that examine multiple pathways to outcomes (various causal mechanisms with direct and indirect effects), ripple effects (effects of an intervention on secondary outcomes that are linked to the main outcomes of interest), and unintended consequences (either positive or adverse effects that can be attributed to the intervention). For example, Schwartz and colleagues (2009) surveyed school students in Connecticut before and after low-nutrition snacks were removed from their schools to address concerns about potential unintended adverse effects (e.g., compensatory eating); these effects were not found after the intervention was implemented. A similar finding was reported from Arkansas based on surveys conducted after the statewide body mass index (BMI) screening and related school-based obesity prevention policies were implemented (Thompson and Card-Higginson, 2009) (see the discussion of this initiative later in this chapter).
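Checking for an unintended effect such as compensatory eating often comes down to comparing an outcome across survey waves. The minimal sketch below computes Welch's t statistic and its approximate degrees of freedom for a hypothetical measure (for example, self-reported snacks eaten at home per day) before and after a school policy change. It assumes the two waves are independent samples; if the same students are surveyed twice, a paired analysis would be more appropriate. For a p-value, SciPy's `scipy.stats.ttest_ind(before, after, equal_var=False)` performs the same Welch test. The function name and data are illustrative.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(before: list[float], after: list[float]) -> tuple[float, float]:
    """Welch's t statistic (after minus before) and Welch-Satterthwaite degrees of freedom.

    Treats the two survey waves as independent samples with possibly unequal variances.
    """
    va = variance(before) / len(before)  # squared standard error, first wave
    vb = variance(after) / len(after)    # squared standard error, second wave
    t = (mean(after) - mean(before)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(before) - 1) + vb ** 2 / (len(after) - 1))
    return t, df


if __name__ == "__main__":
    # Hypothetical snacks-at-home counts from two survey waves.
    t, df = welch_t([2, 3, 1, 4, 2, 3], [2, 3, 2, 3, 1, 3])
    print(f"t = {t:.2f} on about {df:.1f} degrees of freedom")
```

A t statistic near zero is consistent with no detectable increase, although a small survey may simply lack the power to detect a modest one.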

“How” Questions

The potential effects of an intervention can be realized only if it is delivered appropriately in a particular setting. Answers to “How” questions help decision makers determine how to meet this need. Table 5-3 lists some examples of areas of concern addressed by “How” questions and the corresponding evidence that might be gathered.

To answer “How” questions, decision makers require evidence about the population, setting, and time frame at hand. Answers to “How” questions may feed back to “Why” and “What” questions by helping decision makers determine how plans and expectations should be adjusted to their context, what resources are needed to obtain the theoretical or desired effects, how interventions need to be adapted, what training is needed by staff, and how effects can be sustained over time. Answers to these questions relate to such concerns as feasibility, adaptability, and robustness of effects in natural contexts; acceptability to various stakeholder groups; replicability; sustainability; and resources needed for implementation. For example, the Pathways obesity prevention study in American Indian children and the HEALTHY study to reduce risk factors for type 2 diabetes were school-based interventions that included extensive, detailed qualitative and quantitative measures to track implementation in addition to measures used to track study outcomes (Schneider et al., 2009; Steckler et al., 2003; Stone et al., 2003). Likewise, Wang and colleagues (2003) estimate the cost-effectiveness and cost/benefit of a completed school-based obesity prevention program (Planet Health), taking into account the costs of implementing the program as well as long-term outcomes, to assess the appropriateness of using public funds for this type of program. This form of evidence, referred to as “translation evidence” in public health and medicine, is often used to assess generalizability to other populations, settings, contexts, or time frames.

TABLE 5-3 Areas of Concern and Examples of Evidence Needed: “How” Questions

| Area of Concern | Examples of Evidence Needed |
|---|---|
| Relevance of the intervention on a large scale | |
| • Generalizability | Likelihood of achieving the expected outcomes in different demographic groups and in different communities/regions |
| • Sustainability | Likelihood that the intervention effect will last more than 1 to 5 years in the various groups |
| • Stakeholder acceptance | Likelihood that the intervention will be well received by the target population/community and program delivery personnel |
| Costs and benefits of large-scale implementation | |
| • Cost-effectiveness | Costs of the intervention versus measured effects on the outcomes of interest (e.g., premature deaths averted, years of life saved, pounds lost) compared with other competing interventions |
| • Cost/benefit | Value of health benefits versus the costs of implementation compared with other competing interventions |
| • Cost feasibility | Costs of implementing the intervention on a communitywide scale compared with other competing interventions |
| • Cost minimization | Costs of implementing the intervention in a hospital compared with costs of implementing a competing intervention in a community clinic and achieving the same outcome |
| • Cost utility | Stakeholder preferences versus implementation costs compared with other competing interventions |
| Political and practical concerns | |
| • Implementation priorities | Fit of the intervention with community or policy priorities; basis for giving this intervention high priority |
| • Portfolio balance | Fit within an overall set of interventions if considerations of feasibility, size of impact, and certainty of effect are combined |
| • Strategic planning | Strategies and tactics that can be used to mount this intervention |
| • Potential challenges | Implementation challenges that can be anticipated and evidence on how to overcome them |
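Several of the economic measures in Table 5-3 reduce to short formulas. The minimal sketch below shows three of them under stated assumptions: an incremental cost-effectiveness ratio (extra cost per extra unit of outcome, such as pounds lost, relative to an alternative), a benefit-cost ratio (monetized benefits per dollar spent), and cost minimization (choosing the cheapest of options assumed to achieve the same outcome). The function names and dollar figures are hypothetical; this is not the method used by Wang and colleagues.

```python
def incremental_cost_effectiveness(cost_new: float, effect_new: float,
                                   cost_alt: float, effect_alt: float) -> float:
    """Extra cost per extra unit of outcome (e.g., per pound lost) versus an alternative."""
    extra_effect = effect_new - effect_alt
    if extra_effect <= 0:
        raise ValueError("the new option is not more effective; the ratio is not meaningful")
    return (cost_new - cost_alt) / extra_effect


def benefit_cost_ratio(monetized_benefits: float, cost: float) -> float:
    """Cost/benefit analysis: dollars of benefit per dollar spent (above 1 means net benefit)."""
    return monetized_benefits / cost


def cost_minimizing_option(costs: dict[str, float]) -> str:
    """Cost minimization: among options assumed to achieve the same outcome, pick the cheapest."""
    return min(costs, key=costs.__getitem__)


if __name__ == "__main__":
    # Hypothetical figures for a school program versus a competing option.
    print(incremental_cost_effectiveness(150_000, 500, 100_000, 300))  # dollars per extra pound lost
    print(benefit_cost_ratio(450_000, 150_000))
    print(cost_minimizing_option({"hospital": 120_000, "community clinic": 80_000}))
```

Cost minimization is only legitimate when the options really do achieve the same outcome; otherwise a cost-effectiveness or cost/benefit comparison is needed.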

Posing “Why,” “What,” and “How” Questions After a Policy or Program Is in Place

The above discussion addresses the types of concerns that guide information gathering before an intervention is undertaken. However, not all interventions relevant to obesity prevention have been based on a complete evidence picture. As noted earlier in this report, decision makers are not waiting for the best possible evidence but are acting on the available evidence because of the urgency of the problem. Initiatives may be debated in the public policy arena and proceed if the logic for their implementation is convincing and there is sufficient stakeholder and political support, only to face renewed debate or criticism later. Evidence is therefore required to document an intervention’s implementation, specific outcomes, and further needs. This was the case in the Arkansas school-based child and adolescent obesity prevention initiative, whose components are listed in Box 5-1 (Ryan et al., 2006). These components were based on documentation of need and on national consensus recommendations for appropriate actions. After the initiative was launched, a program of annual monitoring of its implementation and outcomes was established. In such cases, the conceptual framework of “Why,” “What,” and “How” questions and the areas of concern identified in Tables 5-1 to 5-3 can readily be applied (Thompson and Card-Higginson, 2009).

Following implementation, “Why” questions used for needs assessment guide the gathering of information on whether the underlying situation has changed (e.g., using population data to quantify why an intervention is still needed or to justify funds that have been expended on the intervention by showing that progress has been made). In Arkansas, for example, standardized BMI screening data are used to track BMI changes and overall obesity prevalence and trends among schoolchildren (Thompson and Card-Higginson, 2009).
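Tracking screening data over time involves computing BMI (weight in kilograms divided by the square of height in meters) and classifying children against BMI-for-age percentiles. The minimal sketch below assumes each screening record already carries a BMI-for-age percentile looked up from the CDC growth charts (the lookup itself is not shown) and applies the standard CDC cut points: obese at or above the 95th percentile, overweight from the 85th to below the 95th, underweight below the 5th. The record format and function names are hypothetical; this is not the Arkansas program's actual procedure.

```python
from collections import Counter


def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index: weight in kilograms divided by height in meters squared."""
    return weight_kg / height_m ** 2


def weight_category(bmi_percentile: float) -> str:
    """CDC categories for children and adolescents, given a BMI-for-age percentile
    already obtained from the CDC growth charts (lookup not shown)."""
    if bmi_percentile >= 95:
        return "obese"
    if bmi_percentile >= 85:
        return "overweight"
    if bmi_percentile < 5:
        return "underweight"
    return "healthy weight"


def obesity_prevalence_by_year(records: list[tuple[str, float]]) -> dict[str, float]:
    """records: (school_year, bmi_percentile) pairs; a hypothetical record format."""
    screened, obese = Counter(), Counter()
    for year, percentile in records:
        screened[year] += 1
        if weight_category(percentile) == "obese":
            obese[year] += 1
    return {year: obese[year] / screened[year] for year in sorted(screened)}
```

Comparing the resulting year-by-year prevalence figures is the kind of trend evidence that can show whether the underlying situation has changed since implementation.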

Box 5-1 Arkansas Framework for Combating Childhood and Adolescent Obesity, with National Recommendations for Action

“What” questions, used to identify the effectiveness of interventions, guide the gathering of information on whether a specific intervention or interventions had the intended effects and contributed to addressing the problem in the expected manner or with the expected impact. In the Arkansas case, the subsequently established program of annual monitoring provides data that can be used to determine whether the various components of the initiative are resulting in improvements in the relevant school environment or child and parent behaviors. Likewise, definitive evidence on whether initiatives such as posting calorie content on chain restaurant menus help prevent obesity will not be available until the interventions have been in effect for a period of time. In the interim, effects on consumer behavior can be modeled for defined populations based on such data as the numbers of such restaurants, sales data on menu items with different calorie content, the types of consumers who patronize these restaurants, and how chain restaurant purchases influence their caloric intake.

“How” questions, used to identify issues related to relevance and implementation, can guide the gathering of information on what actually took place when an intervention was undertaken. Answers to these questions can be used to inform decisions about what changes might be needed going forward, to explain why the intervention might not have worked as well as intended, or to understand why costs were higher or lower than those initially estimated. The Arkansas monitoring program, for example, collects data on the quality and process of implementation (Thompson and Card-Higginson, 2009). Likewise, the methodology used in the Assessing Cost-Effectiveness in Obesity Study (ACE-Obesity) can help guide future decisions on interventions to prevent obesity in children and adolescents (Carter et al., 2009; Haby et al., 2006). In this study, researchers assessed absolute costs and potential cost savings to help determine the cost-effectiveness of 13 different interventions applied populationwide.
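Comparing many interventions, as ACE-Obesity did for 13 of them, is often summarized as a league table. The minimal sketch below assumes disability-adjusted life years (DALYs) averted as an illustrative outcome unit, subtracts expected health care cost savings from each intervention's cost, ranks options by net cost per DALY averted, and flags those at or below a willingness-to-pay threshold. Each option is compared with doing nothing; a full analysis would also compute incremental ratios between options and drop dominated ones. The class, function names, figures, and threshold are hypothetical and do not reproduce the ACE-Obesity methodology.

```python
from dataclasses import dataclass


@dataclass
class Option:
    """One candidate intervention; all figures are hypothetical."""
    name: str
    cost: float           # cost of delivering the intervention
    cost_offsets: float   # downstream health care costs expected to be saved
    dalys_averted: float  # health gain, in disability-adjusted life years

    def net_cost_per_daly(self) -> float:
        if self.dalys_averted <= 0:
            raise ValueError(f"{self.name}: health gain must be positive")
        return (self.cost - self.cost_offsets) / self.dalys_averted


def league_table(options: list[Option], threshold: float) -> list[tuple[str, float, bool]]:
    """Rank options by net cost per DALY averted (each versus doing nothing)
    and flag those at or below the decision maker's threshold."""
    ranked = sorted(options, key=Option.net_cost_per_daly)
    return [(o.name, o.net_cost_per_daly(), o.net_cost_per_daly() <= threshold)
            for o in ranked]
```

A negative value means the expected savings exceed the intervention's cost, so such options rise to the top of the table.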

Applying the Evidence Typology: An Illustrative Example

In New York City, a decision was made to implement a policy requiring restaurants to publish calorie information on menus (Mello, 2009). “Why,” “What,” and “How” questions that guided, or may have guided, the search for and synthesis of evidence to support this broad-based policy decision are listed in Box 5-2. While the case report from which the questions were derived does not detail the exact decision-making processes, the relevant types of evidence that may have been used can be inferred from the documentation provided.

To approach this issue by applying the L.E.A.D. framework, decision makers would obtain a full picture of the scope and dimensions of the obesity problem in the city. For example, questions 1 to 5 in Box 5-2 could help decision makers assess the city’s health needs comprehensively. Much of this evidence speaks to various aspects of the obesity prevalence issue, correlates of obesity, and relevant lifestyle factors in a situation-specific manner. This evidence might be collected by using archival data from hospital discharge records and/or survey data (see the previous discussion of “Why” questions), some of which could be used to examine relationships among relevant variables (e.g., caloric intake, obesity, and diabetes). Some of the evidence could be used in descriptive form. According to Mello (2009), this type of evidence gathering was actually conducted in the New York case.

Box 5-2 Applying the Evidence Typology: A Case Study of New York City’s Menu Labeling Policy

The Problem: “New York City is home to approximately 2 million overweight and 1 million obese adults. Diabetes has been diagnosed in nearly 10% of overweight adults and 18% of obese adults in the city, and an estimated 200,000 residents have undiagnosed diabetes. Hospitalization costs for New Yorkers with diabetes topped $481 million in 2003” (Mello, 2009, p. 2015).

Public Health Laws and Policy Actions to Address the Problem: Restaurants were required to make calorie information publicly available, posted on all menus and menu boards.

Types of Questions Decision Makers Might Ask to Grasp the Problem Comprehensively and Guide Evidence-Gathering Efforts:

“Why” take action?

“What” action should we take that will give us the results we want?

“How” should we implement this action?

NOTE: This list is not intended to be exhaustive.

SOURCE: Mello, 2009.

Questions 6 to 8 in Box 5-2 would allow for a more complete problem assessment to examine potential impacts of candidate interventions. Causal evidence on particular interventions is important to answer “What” questions. Additionally, parallel practice evidence (that is, evidence about the effectiveness of other relevant interventions for other health or social issues) might be useful. Likewise, informed expert opinion could be a useful source of evidence for appraising what is likely to work before population-based investments are made. With limited resources, comparative cost evaluations might also be imperative.

A variety of sources, then, could be used to gather evidence applicable to this case, such as observational research, experimental and quasi-experimental studies, expert knowledge, and parallel evidence on the implementation of interventions. The next section elaborates on these sources.

POTENTIALLY USEFUL SOURCES OF EVIDENCE

Seven categories of study designs and sources of evidence may be useful for addressing the concerns listed in Tables 5-1 to 5-3:

- nonexperimental or observational studies,
- experimental and quasi-experimental studies,
- qualitative research and analysis,
- mixed-method studies,
- evidence synthesis methods,
- parallel evidence, and
- expert knowledge.

It is important to note that quality standards apply to all of these categories, and the relative value of each source depends on the decision-making context (see Chapter 6). Each category is discussed in turn below (see Appendix A for formal definitions and Appendix E for further discussion).

Nonexperimental or Observational Studies

An observational study is one in which the researcher assesses relationships among variables but does not manipulate an intervention or variables associated with potential outcomes. Study designs or methodologies that fall within this category include cross-sectional, longitudinal, or retrospective survey research or opinion polls, trend analysis, some types of market research, secondary analysis of existing databases, cohort and case-control studies, predictive studies, archival studies, census studies, monitoring and surveillance studies, implementation tracking, and policy analysis. These designs and methodologies are helpful for answering several “Why,” “What,” and “How” questions that can guide the search for associated evidence. Table 5-4 provides examples of how this type of evidence might be used. See Appendix E for a detailed discussion of economic cost analysis.
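For the case-control designs listed above, the usual summary measure is the odds ratio. The minimal sketch below computes it from a 2×2 table, together with an approximate 95% confidence interval using Woolf's log-based method, for a hypothetical comparison of past physical inactivity (the exposure) among adults with cardiovascular disease (cases) and without it (controls). The function name and counts are illustrative, and the simple interval requires all four cells to be nonzero.

```python
from math import exp, log, sqrt


def odds_ratio(exposed_cases: int, unexposed_cases: int,
               exposed_controls: int, unexposed_controls: int,
               z: float = 1.96) -> tuple[float, float, float]:
    """Odds ratio from a case-control 2x2 table with a Woolf (log-based) confidence interval.

    z = 1.96 gives an approximate 95% interval. All four cells must be positive.
    """
    cells = (exposed_cases, unexposed_cases, exposed_controls, unexposed_controls)
    if min(cells) <= 0:
        raise ValueError("all cells must be positive for this simple interval")
    ratio = (exposed_cases * unexposed_controls) / (unexposed_cases * exposed_controls)
    se_log = sqrt(sum(1.0 / n for n in cells))  # standard error of log(odds ratio)
    return ratio, exp(log(ratio) - z * se_log), exp(log(ratio) + z * se_log)


if __name__ == "__main__":
    or_, low, high = odds_ratio(40, 60, 20, 80)
    print(f"odds ratio {or_:.2f} (95% CI {low:.2f} to {high:.2f})")
```

An interval that excludes 1 indicates an association, not causation; confounding by other lifestyle factors remains a concern in any observational design.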

Cross-sectional studies, conducted at one point in time, raise the additional problem of establishing the temporal order of observed associations: Did a person become obese because of a low physical activity level, did physical activity decline as weight increased, or both? Also, many cross-sectional surveys are conducted for general use or for specific administrative purposes and may vary in the completeness of coverage of relevant variables and the quality of data.

Despite these limitations, good-quality observational data are the best evidence sources for answering many questions of potential importance for decision making. They can also be useful as a source of baseline measures in populations.

Experimental and Quasi-experimental Studies

An experimental study is one in which the investigator has full control over the allocation of subjects to a preventive or treatment intervention versus a control condition, as well as the timing of an intervention. Randomized manipulation and assignment of individuals or groups to an intervention is a defining requirement of an experimental study. By contrast, a quasi-experimental study (e.g., matched cohort, regression-discontinuity, or interrupted time series design) is often described as nonrandomized because the investigator lacks full control over the allocation process and timing of the intervention. Quasi-experimental designs often include pre–post comparisons, in which outcomes are measured both before and after the intervention is implemented.
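Random assignment is simple to implement and to document. The minimal sketch below uses complete randomization: it shuffles a participant list with a seeded generator and splits it in half, which guarantees equal arm sizes (separate coin flips per person would not) and makes the allocation reproducible for auditing. It then offers a basic balance check on a baseline measure. The function names, participant IDs, and baseline values are hypothetical.

```python
import random
from statistics import mean


def randomize(participant_ids: list[str], seed: int) -> tuple[list[str], list[str]]:
    """Complete randomization into two arms; with an odd count, the second arm gets the extra person.

    A fixed seed makes the allocation reproducible and auditable.
    """
    shuffled = list(participant_ids)
    random.Random(seed).shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def baseline_gap(arm_a: list[str], arm_b: list[str], baseline: dict[str, float]) -> float:
    """Difference in mean baseline values (e.g., BMI) between arms, a simple balance check."""
    return mean(baseline[p] for p in arm_a) - mean(baseline[p] for p in arm_b)
```

With reasonably large samples, randomization tends to balance both measured and unmeasured characteristics across arms, which is what allows results to be read as “all other things being equal.”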

Both experimental and quasi-experimental studies are potentially helpful sources of evidence for answering “What” questions about certain categories of interventions. For example, a quasi-natural experimental design was used to estimate the causal impact of physical education classes on overall student physical activity and weight (Cawley et al., 2007), and an interrupted time series design was used to evaluate the effectiveness of a framework designed to increase fruit and water consumption (Laurence et al., 2007). Table 5-5 provides examples of how this type of evidence might be tied to specific questions and applications.
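Interrupted time series data are commonly analyzed with segmented regression, which estimates the pre-intervention level and trend and then the change in level and in trend once the intervention starts. The analysis used by Laurence and colleagues is not reproduced here; the minimal sketch below assumes a single series of equally spaced measurements (for example, a hypothetical monthly fruit-consumption measure) and fits the four-parameter model by ordinary least squares. A small linear solver is included so the example needs only the standard library, and autocorrelation and seasonality are ignored.

```python
def _solve(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("design is singular; check the number of pre and post points")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= factor * m[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][k] * x[k] for k in range(r + 1, n))) / m[r][r]
    return x


def segmented_regression(y: list[float], start: int) -> list[float]:
    """Ordinary least squares fit of
        y_t = b0 + b1*t + b2*post_t + b3*(t - start)*post_t,
    where post_t = 1 from period `start` onward and 0 before.

    Returns [b0 baseline level, b1 baseline trend, b2 level change, b3 trend change].
    """
    rows = []
    for t in range(len(y)):
        post = 1.0 if t >= start else 0.0
        rows.append([1.0, float(t), post, (t - start) * post])
    # Normal equations: (X'X) b = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(4)] for i in range(4)]
    xty = [sum(r[i] * yt for r, yt in zip(rows, y)) for i in range(4)]
    return _solve(xtx, xty)
```

The level-change and trend-change coefficients answer different questions: an immediate shift when the intervention begins versus a gradual change in the trajectory afterward.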

As explained in Chapter 3, quasi-experiments are critically important sources of evidence on obesity prevention interventions, serving as an alternative to randomized controlled trials (RCTs). Experiments simply cannot be conducted with certain environmental or policy variables that influence obesity because they lie outside the control of researchers. Quasi-experimental approaches may be used for evaluating ongoing initiatives. See Appendix E for a detailed discussion of some commonly used

TABLE 5-4 Types of Observational Evidence and Examples of Their Uses

|

Type of Evidence |

Questions That Could Be Addressed |

Specific Applications |

|

Quantitative Surveys, Longitudinal Studies, and Opinion Polls |

Based on measurements or self-reports of height and weight, how many people are obese in a given region? (“Why” question) |

A cross-sectional sample survey yielding descriptive data on levels of obesity using height and weight indicators |

|

|

Going forward, do self-reported eating choices change in a group of adolescents exposed to different levels of calorie information at their school cafeterias? (“What” question) |

A longitudinal cohort study yielding analytic breakdowns of teens’ eating choices according to menu labeling policies in their respective school cafeterias |

|

|

Given two or more intervention options, which ones do stakeholders prefer? (“How” question) |

A cross-sectional sample survey or poll yielding descriptive data on stakeholder preferences |

|

Analysis of Existing Databases |

Based on the health department’s data on a city population, what were the recorded levels of cardiovascular disease related to obesity in 2007? (“Why” question) |

A secondary analysis of the correlation or other measure of risk between levels of measured obesity and cardiovascular disease in a selected cross-sectional sample |

|

|

Based on the health department’s data on a city population, what are the trends in cardiovascular disease and obesity levels from 2005 to 2010? (“Why” question) |

A trend analysis comparing data for 2005 and 2010 for the percent of people with cardiovascular disease and the percent of people with obesity |

|

|

Based on the health department’s data on a city population, what are the past lifestyle correlates of cardiovascular disease in obese and nonobese adults in 2007? (“Why” question) |

A retrospective, case-control analysis of the relationship between past lifestyle factors and cardiovascular disease in obese and nonobese adults |

|

|

Compared with another intervention, what are the costs for implementing and operating a school-based program districtwide, based on data from pilot or single-site studies? (“How” question) |

A cost-feasibility analysis using preexisting budget and accounting databases |

|

|

What combination of factors maximally predicts stakeholder preferences with regard to program participation and use? (“How” question) |

A projection analysis using preexisting databases on stakeholder preferences, participation, and program management |

|

|

What level of sales taxes or excise taxes on sugar-sweetened beverages directly results in decreased consumption of these beverages in a state by 50%? (“What” question) |

A modeling study in which data on price elasticity, together with data on patterns of sales and consumption of these beverages, are used to estimate the effective level of taxation to decrease consumer consumption by 50% |

|

|

Which neighborhoods have the highest rates of childhood obesity, and what other characteristics of these neighborhoods might influence these rates? (“Why” question) |

A geographic mapping study in which the locations of food stores and outdoor recreational facilities are plotted by neighborhoods or zones around neighborhoods alongside area data on child obesity prevalence |

|

|

What would be the potential reach of a policy to require calorie labeling on menus of chain restaurants? (“How” question) |

Analysis of administrative data on food retail establishment locations and customer sales for the types of restaurants that would fall under the policy |
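The sugar-sweetened beverage tax row in the table above depends on price elasticity. Here is a minimal sketch that makes two simplifying assumptions, neither of which comes from the source. First, demand has constant elasticity, so consumption is proportional to price raised to the elasticity ε. Second, the tax passes fully through to shelf prices. Under this model, halving consumption requires multiplying the price by 0.5^(1/ε). The function names are hypothetical, and the elasticity of -1.2 in the usage lines is illustrative only, not an estimate from any cited study.

```python
def required_price_ratio(elasticity: float, target_fraction: float) -> float:
    """Return P_new / P_old needed to bring consumption to target_fraction of
    its current level, assuming constant-elasticity demand Q = A * P**elasticity.

    From (P_new / P_old) ** elasticity = target_fraction it follows that
    P_new / P_old = target_fraction ** (1 / elasticity).
    """
    if elasticity >= 0:
        raise ValueError("own-price elasticity of demand is expected to be negative")
    if not 0.0 < target_fraction < 1.0:
        raise ValueError("target_fraction must lie strictly between 0 and 1")
    return target_fraction ** (1.0 / elasticity)


def required_tax_per_unit(base_price: float, elasticity: float, target_fraction: float) -> float:
    """Per-unit excise tax implied by the model, assuming 100% pass-through to price."""
    return base_price * (required_price_ratio(elasticity, target_fraction) - 1.0)


if __name__ == "__main__":
    # Hypothetical inputs: elasticity -1.2, base price 1.00 per unit, 50% reduction target.
    ratio = required_price_ratio(-1.2, 0.5)
    print(f"price must rise by a factor of {ratio:.2f}")  # about 1.78
    print(f"implied tax per unit: {required_tax_per_unit(1.00, -1.2, 0.5):.2f}")
```

A 50% reduction implies a price change far larger than the ranges over which elasticities are usually estimated. Extrapolations like this one therefore deserve caution.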

TABLE 5-5 Types of Experimental and Quasi-Experimental Evidence and Examples of Their Uses

| Type of Evidence | Questions That Could Be Addressed | Specific Applications |
|---|---|---|
| Randomized Controlled Trial (RCT) | Compared with another intervention, are the obesity outcomes better for individuals assigned at random to receive this intervention than for those assigned not to receive it? (“What” question) | A randomized controlled trial of a manipulated nutrition program in two groups of obese adults who were assigned to the program by a coin flip (Random assignment usually balances individual characteristics across those who do and do not receive the program, so the result can be interpreted as “all other things being equal”; some statistical controls may be required.) |
| Quasi-experimental Study | Compared with another intervention, are the obesity outcomes better with this intervention when it is administered to adults in two similar communities? (“What” question) | A matched-cohort study design comparing obesity outcomes of a manipulated nutrition program in two communities (The two communities are the groups, matched on relevant characteristics; other potential influences on intervention outcomes are statistically controlled.) |
| | Using ongoing obesity measures as control data in a group of children, is body mass reduced when this intervention is administered in alternating cycles? (“What” question) | An interrupted time series study tracking changes in obesity outcomes over time when a nutrition program is administered periodically |

quasi-experimental approaches in other fields that are recommended for increased emphasis in research on obesity prevention.
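Segmented regression is one common way to analyze the interrupted time series design in Table 5-5. Below is a minimal sketch that assumes a single interruption and uses ordinary least squares. It does not correct for autocorrelation or seasonality, which real analyses usually need. An alternating-cycle design like the one in the table would typically add a level-change term and a slope-change term for each on/off period. The sketch uses NumPy, and the function name and coefficient labels are hypothetical.

```python
import numpy as np


def segmented_regression(y, interruption):
    """Fit an interrupted time series by ordinary least squares:

        y_t = b0 + b1*t + b2*post_t + b3*(t - interruption)*post_t

    where post_t = 1 from the interruption onward. b2 estimates the immediate
    change in level and b3 the change in slope after the intervention starts.
    """
    y = np.asarray(y, dtype=float)
    t = np.arange(len(y), dtype=float)
    post = (t >= interruption).astype(float)
    X = np.column_stack([np.ones_like(t), t, post, (t - interruption) * post])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    names = ["baseline_level", "baseline_trend", "level_change", "trend_change"]
    return dict(zip(names, coef))


if __name__ == "__main__":
    # Hypothetical monthly mean BMI for a group of children; program starts at month 12.
    months = np.arange(24)
    bmi = 20.0 + 0.02 * months - 0.3 * (months >= 12) - 0.03 * (months - 12) * (months >= 12)
    print(segmented_regression(bmi, interruption=12))
```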

Qualitative Research

Qualitative research evidence is typically derived from documentary sources, field observations, interviews, and open-ended verbal interactions between participants and researchers. Examples of studies that fall within this category include logic modeling or program theory analysis, ethnographic studies, focus group or key informant interviews, content or documentary analysis, case studies, some intervention process delivery and implementation monitoring, and evaluability assessments of programs and interventions. Qualitative research approaches give researchers the ability to assess perceptions of respondents at a much richer level than is possible using questionnaires with fixed responses.

Qualitative methods employ emergent designs (not preset, as in quantitative methods) and contextualized understandings of phenomena. Those investigating an intervention will want in-depth information on how people interact with the intervention and with different variables in that context. Questions and data-gathering techniques may be expanded or modified as data are collected. This type of research may be helpful in answering all three types of questions; Table 5-6 provides examples illustrating how this type of evidence can address “What” and “How” questions.

The limitations of qualitative methods lie in the subjectivity introduced by making the researcher an instrument of the research process and in the difficulty of bringing closure to open-ended forms of inquiry. Well-developed conventions exist for adding rigor to qualitative inquiry, such as triangulation, convergent validation, and internal and external criticism (see Chapter 6).

TABLE 5-6 Types of Qualitative Evidence and Examples of Their Uses

| Type of Evidence | Questions That Can Be Addressed | Specific Applications |
|---|---|---|
| Logic Modeling or Program Theory Analysis | What are the underlying assumptions about how an intervention will improve health outcomes? What are the expected causal pathways? What intervening factors in the larger system and community are likely to affect outcomes? (“What” questions) | A content analysis and systematic review of documents and literature relevant to an intervention to develop a logic model or causal path diagram |
| Process Delivery and Implementation Monitoring | What features of program implementation are associated with the maximum effect of this program? (“What” question) What are the documented barriers to implementation of this intervention, and how have they been overcome? (“How” question) | A qualitative focus group interview of program delivery personnel from an effective program |

Mixed-Method Studies

Mixed-method studies employ methodologies drawn from a variety of disciplines, including both qualitative and quantitative data-gathering and analysis methods. Studies may combine extensive descriptions of context and the experiences of program participants with standardized assessments of changes in institutional, environmental, or individual behavior–related variables. The realization that these types of data are complementary has increased interest in the use of such studies in the public health arena. Examples of mixed-method studies include surveys and interviews combined with RCTs, interviews combined with interrupted time series analysis, policy-related content analysis combined with focus group interviews, health impact analysis using archival databases and surveys, economic analysis using archival databases and surveys, systems mapping based on a review of the literature, simulation studies, and mixed-method evidence synthesis techniques (discussed in the next section). Roux and colleagues (2008) use a combination of a systematic review of disease burden and data from clinical trials, population-based surveys, and other published literature to assess the cost-effectiveness of community-based physical activity interventions associated with disease incidence. This type of research is particularly helpful in answering “What” questions. Mixed-method studies may also help in garnering evidence to address “Why” and “How” questions. For example, Manning and colleagues (1991) assess the economic costs of poor health behaviors, including a sedentary lifestyle. This information can be helpful in addressing why something should be done to increase the level of physical activity in a community. Using data from the National Health Interview Survey and the RAND Health Insurance Experiment, the authors employ an incidence-based approach to determine the lifetime costs of a sedentary lifestyle. Table 5-7 provides examples of the uses of this type of evidence.
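An incidence-based estimate of lifetime cost follows people from the onset of a habit and adds up the excess costs they are expected to incur in each later year. The minimal sketch below shows that arithmetic. It assumes that year-by-year excess costs, survival probabilities, and a constant discount rate are already available. It is not a reconstruction of Manning and colleagues' actual model, and the function name and inputs are hypothetical.

```python
def expected_lifetime_cost(excess_cost_by_year, survival_by_year, discount_rate):
    """Expected present value of excess costs attributable to a health habit.

    excess_cost_by_year[t]: extra cost in year t after onset (if still alive)
    survival_by_year[t]: probability of still being alive in year t
    discount_rate: annual rate used to express future costs in today's terms
    """
    if len(excess_cost_by_year) != len(survival_by_year):
        raise ValueError("cost and survival series must have the same length")
    return sum(
        survival * cost / (1.0 + discount_rate) ** year
        for year, (cost, survival) in enumerate(zip(excess_cost_by_year, survival_by_year))
    )


if __name__ == "__main__":
    # Hypothetical: 300 per year in excess costs for 40 years,
    # survival falling 1% a year, 5% annual discounting.
    costs = [300.0] * 40
    survival = [0.99 ** t for t in range(40)]
    print(round(expected_lifetime_cost(costs, survival, 0.05), 2))
```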

Evidence Synthesis Methods

Evidence synthesis methods encompass systematic reviews and meta-analyses of experimental and/or quasi-experimental studies, syntheses of qualitative research, and mixed-method evidence syntheses. Evidence synthesis is particularly useful in answering “What” questions but may also be useful for assembling information to address “Why” and “How” questions. Table 5-8 provides examples of how this type of evidence might be used.

Systematic Reviews

The literature on evidence synthesis distinguishes between primary studies, or individual studies presenting original data, and systematic reviews, or organized summaries of a body of research that addresses a focused question using methods intended to reduce the likelihood of bias. Traditional methods of evidence synthesis (in the evidence-based medicine tradition) have relied heavily on quantitative methods, such as meta-analysis, to generate summaries of research-based evidence (see Hunt, 1997; Smith and Glass, 1977). Several systematic reviews relevant to obesity prevention are listed in Appendix C. Some of the limitations of this type of evidence synthesis when applied to public health issues are discussed in Chapter 3.

TABLE 5-7 Types of Mixed-Method Evidence and Examples of Their Uses

| Type of Evidence | Questions That Can Be Addressed | Specific Applications |
|---|---|---|
| Surveys Combined with Randomized Controlled Trial (RCT) | What self-reported individual-, family-, and community-level factors moderate effects on obesity outcomes when an intervention is randomly assigned to one of two adult groups? (“What” question) | A randomized controlled trial of a manipulated nutrition program using two groups of obese adults, with survey-based data on individual-, family-, and community-level factors analyzed as potential moderators of outcomes |
| Qualitative Analysis Combined with Quasi-experimental Study | Is there qualitative evidence to show that an intervention was implemented as intended when outcomes improved in a time series analysis? How consistently and authentically was the intervention implemented when effects were obtained? (“What” questions) | A content analysis of food logs, menus, and meal plans combined with an interrupted time series study tracking changes in obesity outcomes when a nutrition program is implemented periodically |

TABLE 5-8 Types of Evidence Synthesis Methods and Examples of Their Uses

| Type of Evidence | Questions That Can Be Addressed | Specific Applications |
|---|---|---|
| Systematic Reviews: Experimental and/or Quasi-experimental Studies | Based on formal syntheses of experimental or quasi-experimental research, what is the evidence on the effectiveness of this intervention? (“What” question) | A systematic review of effects of mandatory school exercise programs on childhood obesity levels |
| Meta-analyses: Experimental and/or Quasi-experimental Studies | Based on meta-analysis of effects from experimental or quasi-experimental research, what is the evidence on the effectiveness of this intervention? (“What” question) | A meta-analysis to estimate the average effect on childhood obesity levels found in eligible studies of mandatory school exercise programs |
| Mixed-Method Syntheses: Stakeholder Studies | Based on a formal summary of results, what are the facilitators of and barriers to implementation of this intervention in light of stakeholder perspectives? (“What” question) | A “realist” review using mixed-method analysis of stakeholder participation studies (drawing on the realist philosophy of science) |

Meta-Analyses

Meta-analysis is a statistical procedure that pools results from a sample of preexisting experimental/quasi-experimental studies to derive a single effect size. Effect size is a quantitative index expressing the difference between the treatment and control group means in standard deviation units. Studies are selected on the basis of some preset criteria, typically a common question about an intervention or therapeutic procedure, a defined target population, and common outcomes of interest.
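The effect size described here is a standardized mean difference. The minimal sketch below assumes that each study reports group means, standard deviations, and sample sizes. It computes Cohen's d using a pooled standard deviation and applies Hedges' small-sample correction. It also returns the approximate sampling variance, which pooling methods use to weight each study. The function name is hypothetical. For BMI-type outcomes, a negative value means the treatment group ended lower.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Standardized mean difference (treatment minus control), bias-corrected.

    Returns (g, variance_of_g).
    """
    df = n_t + n_c - 2
    # Pooled within-group standard deviation.
    sd_pooled = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / df)
    d = (mean_t - mean_c) / sd_pooled
    # Large-sample approximation to the sampling variance of d.
    var_d = (n_t + n_c) / (n_t * n_c) + d**2 / (2 * (n_t + n_c))
    # Hedges' correction factor for the small-sample bias in d.
    j = 1 - 3 / (4 * df - 1)
    return j * d, j**2 * var_d


if __name__ == "__main__":
    # Hypothetical trial: mean BMI 24.0 (SD 2.0, n=50) vs 25.0 (SD 2.0, n=50).
    g, var_g = hedges_g(24.0, 2.0, 50, 25.0, 2.0, 50)
    print(f"g = {g:.3f}, SE = {math.sqrt(var_g):.3f}")
```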

The chief difficulty with applying meta-analysis is that the number of available studies on a given topic is usually rather small. This difficulty forces researchers to use a backwards logic in justifying the procedure—to start with a selected sample of studies and then imagine a hypothetical population in which the studies belong. Given this scenario, assumptions can never be properly tested; Glass (2000) finds this logic to be untenable today. Further, the studies selected typically vary in the effects they show, from positive to negative. Technical advances now make it possible to improve the analysis, for example, by testing for and statistically ruling out heterogeneity in the sample (Cooper and Hedges, 1994). However, Glass (2000) finds these added procedures to be problematic, as further assumptions need to be made that are both untestable and, often, not defensible.
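Heterogeneity testing of the kind mentioned above is usually done with Cochran's Q and the I² statistic. The minimal sketch below assumes that each study contributes an effect size (for example, Hedges' g) together with its variance. It computes an inverse-variance fixed-effect pooled estimate, Q, and I². The sketch is illustrative only. A large I² flags heterogeneity, but, in line with Glass's critique, it does not by itself justify the assumptions needed for pooling. Random-effects models, not shown here, are a common alternative. The function name is hypothetical.

```python
import math


def fixed_effect_meta(effects, variances):
    """Inverse-variance fixed-effect meta-analysis with heterogeneity statistics."""
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("need at least two studies, each with a variance")
    weights = [1.0 / v for v in variances]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    # Cochran's Q: weighted squared deviations of study effects from the pooled estimate.
    q = sum(w * (e - pooled) ** 2 for w, e in zip(weights, effects))
    df = len(effects) - 1
    # I^2: share of total variation attributed to between-study heterogeneity.
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return {"pooled": pooled, "se": math.sqrt(1.0 / total_weight), "Q": q, "df": df, "I2": i2}


if __name__ == "__main__":
    # Hypothetical effect sizes (g) and variances from four studies.
    print(fixed_effect_meta([-0.40, -0.10, 0.05, -0.30], [0.02, 0.03, 0.04, 0.02]))
```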

The most important limitation, according to Glass (2000), is that the meta-analysis is guided by a very limiting question: On average, is the (intervention) effective? The procedure cannot address questions about differential effects of an intervention, reducing findings across studies to an average that frequently removes the most important information about the intervention. It is this loss of information through averaging that Glass now finds regrettable. His current view is that the standard guiding question in meta-analysis needs to be replaced with deeper and broader questions, such as “What type of therapy, with what type of client, produces what kind of effect?” (Glass, 2000). Looking to the future, he suggests that “meta-analysis needs to be replaced by archives of raw data that permit the construction of complex data landscapes that depict the relationships among independent, dependent and mediating variables” (Glass, 2000).

Syntheses of Qualitative Research

Despite some initial controversy, a place for the inclusion of qualitative research in systematic reviews and evidence syntheses was established in medicine in 2001 in an editorial in the British Medical Journal (Dixon-Woods and Fitzpatrick, 2001). Citing Cochrane reviews that could have benefited from the use of qualitative research to assess how children and adolescents with cancer communicate about their disease, the editors called for expanded inclusion criteria incorporating relevant types of qualitative evidence to answer complex questions involving social variables and outcomes. At the same time, they cautioned evidence users about the need for rigor in identifying and appraising the quality of research evidence. The methodological challenges of distilling information from qualitative studies—frequently representing diverse theoretical, disciplinary, and analytic perspectives—into secondary summaries should not be underestimated (Dixon-Woods et al., 2001).

Likewise, Bower and Scambler (2007) demonstrate the relevance and utility of qualitative data in expanding the scope of evidence-based practice in dental public health. In their view, methods should be aligned with questions asked (see also Petticrew and Roberts, 2003).

Mixed-Method Evidence Synthesis

Qualitative and quantitative methods play complementary but contrasting roles in facilitating different types of inquiry aimed at better understanding practice-related phenomena (Bower and Scambler, 2007, p. 161). Traditional quantitative methods address questions about the prevalence of a disease or disorder, the statistical significance of an effect, the strongest predictors of a condition, program, or policy impact, or cost considerations in numerical terms. In contrast, qualitative methods help researchers ask questions about the nature, content, process, or meaning of a particular phenomenon or outcome. Mixed-method approaches are suitable when questions cross these boundaries. One example in Bower and Scambler (2007) deals with examining the impact of advertisements on dental care and prevention of caries. In this case, social and personal factors outside the intervention, documented using both quantitative and qualitative methods, were found to mediate individuals’ health-seeking behaviors and to affect the expected outcomes.

Newer methods for synthesis of findings from qualitative studies and evidence from trials or other quantitative studies are now available in the fields of obesity prevention (Connelly et al., 2007; Thomas et al., 2004), medicine (Barbour and Barbour, 2003; Dixon-Woods et al., 2001), nursing (McInnes and Chambers, 2008; McNaughton, 2000), and public health. Following is an example that shows how evidence screening and data compilation and analysis can be approached by synthesizing diverse types and sources of evidence.

In a mixed-method synthesis of evidence, Connelly and colleagues (2007) combined a systematic review of eligible quantitative trials with qualitative content analysis of descriptive data on interventions, as reported in the studies. Because the approach relies on the realist philosophy of science, which examines causal pathways while acknowledging social realities (as opposed to the logical-positivistic philosophy of traditional laboratory science), this approach to evidence synthesis has been referred to as a “realist review.” The topic was prevention of childhood obesity and overweight. The authors began with a search of four electronic databases (including MEDLINE and PsycINFO) and applied published criteria to screen studies for quality and inclusion (e.g., execution of random assignment or other controls in study designs, sample size, lack of subject attrition, measurement validity and reliability, treatment duration and fidelity). This procedure initially identified 30 RCTs or controlled trials. Trials were included if they had a measured index of adiposity (the outcome), a population aged 0-18, and a 12-week follow-up to treatment. Blind to the study outcomes, the authors then sorted the studies into two categories based on a systematic content analysis of the intervention descriptions provided, using an “intensity of physical activity” indicator. This procedure allowed them to qualitatively separate “compulsory” from “voluntary” activity interventions. The authors then proceeded with the evidence synthesis, summarizing effects found across studies by group. Their conclusions showed that while nutrition education, skills training, and physical education were not effective in controlling childhood obesity, compulsory rather than voluntary physical activity was. Notably, the effects were not evident with the undifferentiated physical activity indicator in the overall sample. According to the authors, their rational, realist review permitted them to link the physical activity type causally with decreases in overweight and obesity.
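The Connelly example and Glass's concern about averaging both turn on the gap between a single pooled average and pooled estimates within categories coded before outcomes are examined. The minimal sketch below shows that comparison. It assumes each study has already been coded into a category (here “compulsory” or “voluntary”) and has an effect size and a variance. It uses inverse-variance weighting, which is an assumption here rather than the authors' stated method. The numbers are made up for illustration and are not the trials Connelly and colleagues reviewed. The function names are hypothetical.

```python
from collections import defaultdict


def inverse_variance_mean(effects, variances):
    """Weighted average of effect sizes with weights 1/variance."""
    weights = [1.0 / v for v in variances]
    return sum(w * e for w, e in zip(weights, effects)) / sum(weights)


def pooled_by_category(studies):
    """studies: iterable of (category, effect, variance) tuples.

    Returns the overall pooled effect and a dict of per-category pooled effects.
    """
    studies = list(studies)
    groups = defaultdict(lambda: ([], []))  # category -> (effects, variances)
    for category, effect, variance in studies:
        groups[category][0].append(effect)
        groups[category][1].append(variance)
    overall = inverse_variance_mean([s[1] for s in studies], [s[2] for s in studies])
    by_category = {c: inverse_variance_mean(e, v) for c, (e, v) in groups.items()}
    return overall, by_category


if __name__ == "__main__":
    # Made-up effect sizes (negative = less adiposity in the intervention group).
    studies = [
        ("compulsory", -0.40, 0.02),
        ("compulsory", -0.35, 0.02),
        ("voluntary", 0.05, 0.02),
        ("voluntary", 0.00, 0.02),
    ]
    overall, by_category = pooled_by_category(studies)
    print(round(overall, 3), {c: round(v, 3) for c, v in by_category.items()})
```

In this toy case, the overall average falls between the two groups and understates the effect in the compulsory group. Coding the categories before looking at outcomes, as the authors did, guards against choosing the split to fit the results.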

Parallel Evidence

Parallel practice evidence is an indirect form of research evidence. Evidence of the effectiveness of an intervention in addressing another public health issue using similar strategies (such as the role of social marketing, curriculum programs, or financial factors in reducing smoking, speeding, or sun exposure) may have implications for an obesity-related outcome such as dietary intake. Here, the characteristics of the original research or evaluation reports, such as how the evidence was generated, whether the work was peer-reviewed, where the work is published, and the credentials of the author(s), help determine the quality of the evidence (Swinburn et al., 2005). If such data are available in the form of a compiled policy brief or legal brief, the quality of the evidence should again be carefully evaluated based on the original data sources. Ideally, information about quality assessment would be incorporated into the report, with an explanation of the criteria used and a clear statement of the study limitations. Such information does not remove the need to check original sources where feasible, but it helps in judging the credibility of secondary sources. In general, one should also always rule out any conflicts of interest in secondary compilations of evidence. Parallel evidence is particularly helpful in answering “What” and “How” questions. Table 5-9 provides examples of how this type of evidence might be used. Also see Chapter 2 for examples of parallel evidence, including activities surrounding tobacco and alcohol use and HIV/AIDS.

Expert Knowledge

Expert knowledge includes the views of professionals with expertise in a particular field of practice or inquiry, such as clinical practice or research methodology. Expert opinion may refer to one person’s views or to the consensus view of a group of experts. Consensus views are sometimes based on systematic reviews or other forms of evidence synthesis. Even so, they still require interpretation of or judgment about the evidence collected, and they may require drawing conclusions when relevant evidence is absent or insufficient. Expert consensus and expert opinion should be appraised based on the credentials and experience of the experts involved, supporting documentation, the transparency and rigor of the consensus process, and the ruling out of any conflicts of interest. For example, participation and selection processes for committees that generate reports such as the Guide to Community Preventive Services (http://www.thecommunityguide.org/) or Common Community Measures for Obesity Prevention (http://www.activelivingresearch.org/node/11698) are highly rigorous and systematic. Expert knowledge is particularly helpful in answering “What” or “How” questions, although consensus reports usually also include a rationale for why action is needed. Examples of this type of evidence include national committee reports based on deliberative processes; guidelines from national associations, health foundations, and committed practitioners or health professional organizations; and other expert statements. Table 5-10 provides examples of this type of evidence and how it might be used. Also see Chapter 7 for further discussion of the use of expert opinion and experience.

TABLE 5-9 Types of Parallel Evidence and Examples of Their Uses

| Type of Evidence | Questions That Can Be Addressed | Specific Applications |
|---|---|---|
| Research Evidence on Effects of Parallel Interventions | Given the existing evidence on the effectiveness of tobacco and alcohol taxes, would soda taxes be similarly effective in reducing obesity on a large scale? (“What” question) | Intervention impact or effectiveness studies showing that strategies to influence public behaviors work |
| Parallel Research on Legal Issues | Given the constitutional issues involved in restricting free speech, what grounds have been used to justify controls on advertising? (“What” question) | Content analysis of relevant cases to identify arguments that have been advanced for and against such restrictions and how these arguments have been resolved |
| Parallel Research on Implementation Process or Policy Development | Given that eating and physical activity are individual behaviors but are affected by policies and programs in the broader community, what are some precedents for environmental and policy approaches that affect personal behavior, and how were they achieved and justified? (“What” question) | Case studies of effects of populationwide interventions on nutrition, physical activity, obesity, or cardiovascular disease in other countries<sup>a</sup> |
| | Given that obesity is a populationwide problem for which many of the drivers are part of the social fabric, what can be learned from approaches used in other public health efforts of similar scope and complexity? (“How” question) | Retrospective case analysis of successes related to other complex public health issues that have been addressed by policies that have led to social change<sup>a</sup> |

<sup>a</sup> See Economos et al., 2001; Eriksen, 2005; Kersh and Morone, 2002.

TABLE 5-10 Types of Expert Knowledge and Examples of Their Uses

| Type of Evidence | Questions That Can Be Addressed | Specific Applications |
|---|---|---|
| National Committee Reports Based on a Deliberative Process | In view of present and potential human and monetary costs of treating current levels of obesity or obesity-related diseases, what types of actions are recommended within and outside of the health care system? (“What” question) | Reasoned and formal analysis by a committee of established experts, such as a report by a committee convened by the public or private sector with established bias and conflict-of-interest procedures |
| Guidelines from National Associations, Health Foundations, and Committed Practitioner or Health Professional Organizations | Given the availability of effective measures to treat high blood pressure, what steps are needed to improve levels of blood pressure control in the population at large? (“What” question) | Public statements of consensus by a committee of established professionals and practitioners |
| Other Expert Statements | How does this intervention fit with community politics or national policy priorities? On what basis should it be given high priority? (“How” questions) | The considered opinion of experts in a particular field or practitioners, leaders, stakeholders, and policy makers able to make informed judgments on implementation issues and having local or governmental expertise (e.g., doctors, lawyers, nutritionists, scientists, or academics able to interpret the scientific literature or specialized forms of data, such as legal evidence) |

GATHERING THE EVIDENCE

Locating evidence from the expanded perspective proposed by the committee requires access to a broad base of resources that extends beyond research journals. Databases used by researchers and decision makers and their intermediaries typically incorporate data, studies, or reports from numerous disciplines but may still miss many potentially useful sources, such as compilations from economics, education, business, and law and information from newspapers, government documents, and reports of community agencies and programs. Appendix D provides guidance on where to gather evidence. This aspect of the process is presumed to rely primarily on electronic databases. Some relevant databases are in the public domain or accessible in public facilities; others require subscriptions or access through institutions such as university libraries. The search process generally involves identification of a set of key concepts and terms that can be used as probes in these databases. A preliminary screening of titles and abstracts of identified items for relevance is followed by detailed examination of items selected for potential use. Some types of evidence can be readily identified and evaluated by lay persons or professionals without scientific expertise. Examples include program descriptions, news reports, “report cards,” and other compilations or analyses intended for direct use by lay audiences or nonresearchers. However, identifying and sifting through research studies requires academic or scientific expertise.
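The key-concept search and first-pass screen described above can be sketched as follows. The sketch assumes that synonyms for each concept are joined with OR and that concepts are joined with AND. Exact Boolean syntax, field tags, and truncation symbols differ from one database to another, so the generated string would need adapting. The keyword screen uses plain substring matching, so, for example, “program” also matches “programming.” It only narrows the pile and does not replace reading titles and abstracts. The function names and example terms are hypothetical.

```python
def build_query(concepts):
    """OR synonyms within each concept, AND across concepts; quote multiword phrases."""
    def fmt(term):
        return f'"{term}"' if " " in term else term
    return " AND ".join("(" + " OR ".join(fmt(t) for t in group) + ")" for group in concepts)


def keyword_screen(records, concepts):
    """Keep records whose title or abstract mentions at least one term from every concept."""
    kept = []
    for rec in records:
        text = f"{rec.get('title', '')} {rec.get('abstract', '')}".lower()
        if all(any(term.lower() in text for term in group) for group in concepts):
            kept.append(rec)
    return kept


if __name__ == "__main__":
    concepts = [["obesity", "overweight"], ["school", "physical education"], ["policy", "program"]]
    print(build_query(concepts))
    # (obesity OR overweight) AND (school OR "physical education") AND (policy OR program)
    records = [
        {"title": "School nutrition policy and student overweight", "abstract": ""},
        {"title": "Obesity genetics in adults", "abstract": "A cohort study."},
    ]
    print([r["title"] for r in keyword_screen(records, concepts)])
```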

Awareness of the wide array of evidence sources and potentially relevant content areas is critical for full implementation of the L.E.A.D. framework. The value of electronic databases is in providing easily searchable centralized compilations of information within specific fields of knowledge. Unfortunately, although some databases overlap and some provisions exist for searching multiple databases simultaneously, there are gaps related to boundaries between disciplines. For example, those searching biomedical or health databases may encounter only a limited amount of information from management science, law, public policy, marketing, education, communications, or anthropology, although all of these fields include both content and methodologies that are relevant to aspects of obesity prevention and public health in general. Also of potential relevance are electronic compilations of newspaper articles, program reports and evaluations, scientific and technical reports, and public surveys that are not available in academic journals. The listing in Appendix D includes databases for these sources as well as the academic literature.
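Because databases overlap, a search run across several of them returns duplicate records that need to be merged before screening. The minimal sketch below assumes each record is a dict with optional "doi", "title", and "year" fields. This is a hypothetical layout, not any database's export format. A record is treated as a duplicate if its DOI, or its normalized title plus year, has already been seen. Real deduplication often needs fuzzier matching than this. The function names and sample DOIs are hypothetical.

```python
import re


def normalize_title(title):
    """Lowercase and collapse punctuation and whitespace so minor formatting differences match."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records):
    """Return records in original order, dropping any whose DOI or title+year was already seen."""
    seen = set()
    unique = []
    for rec in records:
        keys = set()
        title = normalize_title(rec.get("title", ""))
        if title:
            keys.add(("title", title, rec.get("year")))
        doi = (rec.get("doi") or "").strip().lower()
        if doi:
            keys.add(("doi", doi))
        if keys & seen:
            continue  # matches a record already kept
        seen |= keys
        unique.append(rec)
    return unique


if __name__ == "__main__":
    # Hypothetical records exported from two overlapping databases.
    records = [
        {"title": "School-based obesity prevention: a trial", "year": 2007, "doi": "10.1000/ABC"},
        {"title": "School based obesity prevention - a trial", "year": 2007, "doi": "10.1000/abc"},
        {"title": "Menu labeling and calorie intake", "year": 2009},
    ]
    print(len(deduplicate(records)))
```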

Evidence sources should be evaluated to determine their degree of relevance to the user’s question and context based on the nature of the outcomes for which the evidence is informative. Evidence quality should be judged by standards appropriate to that type of evidence. The evidence evaluation step in the L.E.A.D. framework is discussed in Chapter 6. Evidence gaps will be identified in this process and in some cases may predominate. That is, the available evidence, even when broadly sought, may not address decision makers’ questions. This may be because no studies relevant to the issue or to the potential setting have been done, or because important studies do not report the key details needed to fully interpret and apply the results from a practical perspective. Communicating to researchers what evidence is not available is critical to the process of generating evidence to fill these gaps. Approaches to using actual ongoing policy and programmatic initiatives as the basis for generating evidence are discussed in Chapter 8.

REFERENCES

Barbour, R. S., and M. Barbour. 2003. Evaluating and synthesizing qualitative research: The need to develop a distinctive approach. Journal of Evaluation in Clinical Practice 9(2):179-186.

Black, J. L., and J. Macinko. 2010. The changing distribution and determinants of obesity in the neighborhoods of New York City, 2003-2007. American Journal of Epidemiology 171(7):765-775.

Bower, E., and S. Scambler. 2007. The contributions of qualitative research towards dental public health practice. Community Dentistry and Oral Epidemiology 35(3):161-169.

Carter, R., M. Moodie, A. Markwick, A. Magnus, T. Vos, B. Swinburn, and M. M. Haby. 2009. Assessing cost-effectiveness in obesity (ACE-obesity): An overview of the ACE approach, economic methods and cost results. BMC Public Health 9:419.

Cawley, J., C. Meyerhoefer, and D. Newhouse. 2007. The impact of state physical education requirements on youth physical activity and overweight. Health Economics 16(12):1287-1301.

Chenoweth and Associates. 2009. The economic costs of overweight, obesity, and physical inactivity among California adults—2006. New Bern, NC: Chenoweth and Associates, Inc.

Connelly, J. B., M. J. Duaso, and G. Butler. 2007. A systematic review of controlled trials of interventions to prevent childhood obesity and overweight: A realistic synthesis of the evidence. Public Health 121(7):510-517.

Cooper, H., and L. V. Hedges. 1994. The handbook of research synthesis. New York: The Russell Sage Foundation.

Dixon-Woods, M., and R. Fitzpatrick. 2001. Qualitative research in systematic reviews. British Medical Journal 323(7316):765-766.

Dixon-Woods, M., R. Fitzpatrick, and K. Roberts. 2001. Including qualitative research in systematic reviews: Opportunities and problems. Journal of Evaluation in Clinical Practice 7(2):125-133.

Economos, C. D., R. C. Brownson, M. A. DeAngelis, S. B. Foerster, C. Tucker Foreman, J. Gregson, S. K. Kumanyika, and R. R. Pate. 2001. What lessons have been learned from other attempts to guide social change? Nutrition Reviews 59(3, Supplement 2):S40-S46.

Eriksen, M. 2005. Lessons learned from public health efforts and their relevance to preventing childhood obesity. In Preventing childhood obesity: Health in the balance, edited by J. Koplan, C. Liverman, and V. Kraak. Washington, DC: The National Academies Press. Pp. 343-375.

Finkelstein, E. A., J. G. Trogdon, J. W. Cohen, and W. Dietz. 2009. Annual medical spending attributable to obesity: Payer and service-specific estimates. Health Affairs 28(5):w822-w831.

Fitzpatrick, J. L., J. R. Sanders, and B. R. Worthen. 2004. Program evaluation: Alternative approaches and practical guidelines. 3rd ed. Boston, MA: Allyn and Bacon.

Glass, G. 2000. Meta-analysis at 25. http://glass.ed.asu.edu/gene/papers/meta25.html (accessed December 24, 2008).

Haby, M. M., T. Vos, R. Carter, M. Moodie, A. Markwick, A. Magnus, K. S. Tay-Teo, and B. Swinburn. 2006. A new approach to assessing the health benefit from obesity interventions in children and adolescents: The assessing cost-effectiveness in obesity project. International Journal of Obesity (London) 30(10):1463-1475.

Hunt, M. 1997. How science takes stock: The story of meta-analysis. New York: Russell Sage Foundation.

Kersh, R., and J. Morone. 2002. The politics of obesity: Seven steps to government action. Health Affairs 21(6):142-153.

Kuo, T., C. J. Jarosz, P. Simon, and J. E. Fielding. 2009. Menu labeling as a potential strategy for combating the obesity epidemic: A health impact assessment. American Journal of Public Health 99(9):1680-1686.

Laurence, S., R. Peterken, and C. Burns. 2007. Fresh Kids: The efficacy of a Health Promoting Schools approach to increasing consumption of fruit and water in Australia. Health Promotion International 22(3):218-226.

Manning, W. G., E. B. Keeler, J. P. Newhouse, E. M. Sloss, and J. Wasserman. 1991. The costs of poor health habits. Cambridge, MA: Harvard University Press.

McInnes, R. J., and J. A. Chambers. 2008. Supporting breastfeeding mothers: Qualitative synthesis. Journal of Advanced Nursing 62(4):407-427.

McNaughton, D. B. 2000. A synthesis of qualitative home visiting research. Public Health Nursing 17(6):405-414.

Mello, M. M. 2009. New York City’s war on fat. New England Journal of Medicine 360(19):2015-2020.

Petticrew, M., and H. Roberts. 2003. Evidence, hierarchies, and typologies: Horses for courses. Journal of Epidemiology and Community Health 57(7):527-529.

Rossi, P. H., M. W. Lipsey, and H. E. Freeman. 2004. Evaluation: A systematic approach. 7th ed. Thousand Oaks, CA: Sage Publications.

Roux, L., M. Pratt, T. O. Tengs, M. M. Yore, T. L. Yanagawa, J. Van Den Bos, C. Rutt, R. C. Brownson, K. E. Powell, G. Heath, H. W. Kohl, 3rd, S. Teutsch, J. Cawley, I. M. Lee, L. West, and D. M. Buchner. 2008. Cost effectiveness of community-based physical activity interventions. American Journal of Preventive Medicine 35(6):578-588.

Ryan, K. W., P. Card-Higginson, S. G. McCarthy, M. B. Justus, and J. W. Thompson. 2006. Arkansas fights fat: Translating research into policy to combat childhood and adolescent obesity. Health Affairs 25(4):992-1004.

Schneider, M., W. J. Hall, A. E. Hernandez, K. Hindes, G. Montez, T. Pham, L. Rosen, A. Sleigh, D. Thompson, S. L. Volpe, A. Zeveloff, and A. Steckler. 2009. Rationale, design and methods for process evaluation in the HEALTHY study. International Journal of Obesity 33(Supplement 4):S60-S67.

Schwartz, M. B., S. A. Novak, and S. S. Fiore. 2009. The impact of removing snacks of low nutritional value from middle schools. Health Education & Behavior 36(6):999-1011.

Smith, M. L., and G. V. Glass. 1977. Meta-analysis of psychotherapy outcome studies. American Psychologist 32(9):752-760.

Steckler, A., B. Ethelbah, C. J. Martin, D. Stewart, M. Pardilla, J. Gittelsohn, E. Stone, D. Fenn, M. Smyth, and M. Vu. 2003. Pathways process evaluation results: A school-based prevention trial to promote healthful diet and physical activity in American Indian third, fourth, and fifth grade students. Preventive Medicine 37(Supplement 1):S80-S90.

Stone, E. J., J. E. Norman, S. M. Davis, D. Stewart, T. E. Clay, B. Caballero, T. G. Lohman, and D. M. Murray. 2003. Design, implementation, and quality control in the Pathways American-Indian multicenter trial. Preventive Medicine 37(Supplement 1):S13-S23.

Swinburn, B., T. Gill, and S. Kumanyika. 2005. Obesity prevention: A proposed framework for translating evidence into action. Obesity Reviews 6(1):23-33.

Thomas, J., A. Harden, A. Oakley, S. Oliver, K. Sutcliffe, R. Rees, G. Brunton, and J. Kavanagh. 2004. Integrating qualitative research with trials in systematic reviews. British Medical Journal 328(7446):1010-1012.

Thompson, J. W., and P. Card-Higginson. 2009. Arkansas’ experience: Statewide surveillance and parental information on the child obesity epidemic. Pediatrics 124(Supplement 1):S73-S82.

Wang, L. Y., Q. Yang, R. Lowry, and H. Wechsler. 2003. Economic analysis of a school-based obesity prevention program. Obesity Research 11(11):1313-1324.

Wolf, A. M., and G. A. Colditz. 1998. Current estimates of the economic cost of obesity in the United States. Obesity Research 6(2):97-106.