Digital Instrument Building and the Laptop Orchestra

DANIEL TRUEMAN

Princeton University

Musical and technological innovations have long gone hand in hand. Historically, this relationship evolved slowly, allowing significant time for musicians to live with and explore new possibilities and enough time for engineers and instrument builders to develop and refine new technologies. The roles of musician and instrument builder have typically been separate; the time and skills required to build an acoustic instrument are typically too great for the builder to also master that instrument, and vice versa.

Today, however, the terms of the relationship have changed. As digital technologies have greatly accelerated the development of new instruments, the separation between these roles has become blurred. At the same time, social contexts for exploring new instruments have developed slowly, limiting opportunities for musicians to make music together over long periods of time with these new instruments.

The laptop orchestra is a socially charged context for making music together with new digital instruments while simultaneously developing those instruments. As the roles of performer and builder have merged and the speed with which instruments can be created and revised has increased, the development of musical instruments has become part of the performance of music. In this paper, I look at a range of relevant technical challenges, including speaker design, human-computer interaction (HCI), digital synthesis, machine learning, and networking, in the musical and instrument-building process.

CHALLENGES IN BUILDING DIGITAL INSTRUMENTS

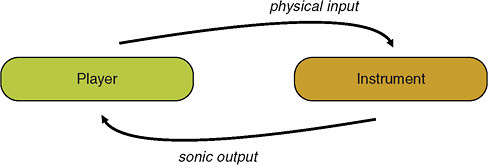

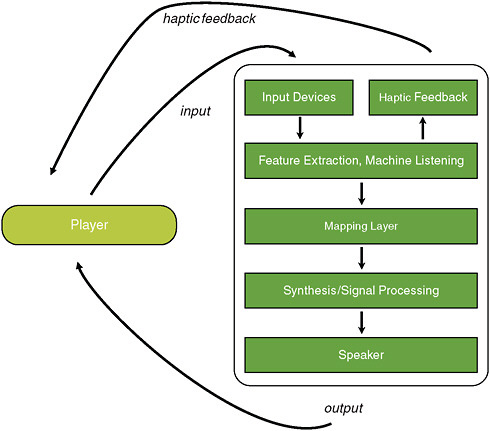

Building digital musical instruments is a highly interdisciplinary venture. In addition to obvious engineering concerns, such as HCI, synthesis, and speaker design, the fields of perception, cognition, and both musical and visual aesthetics also come into play. The basic feedback loop between a player and a generic (acoustic, electronic, or digital) musical instrument is illustrated in Figure 1. In Figure 2, the instrument is “exploded” to reveal the components that might make up a digital musical instrument.

Traveling along the feedback loop in Figure 2, we can see relevant challenges, including sensor design and configuration, haptic feedback systems, computational problems with feature extraction, mapping and synthesis, and amplifier and speaker design. Add to these the ergonomic and aesthetic design of the instrument itself.

Building a compelling digital instrument involves addressing all of these challenges simultaneously, while taking into account various practical and musical concerns, such as the size, weight, and visual appeal of the instrument, its physicality and sound quality, its ease of setup (so it might be “giggable”), and its reliability. The most active researchers in this field are typically either musicians with significant engineering skills or engineers with lifelong musical training.

DIGITAL INSTRUMENT BUILDING IN PRACTICE

My own bowed-sensor-speaker-array (BoSSA) illustrates one solution to the digital instrument problem (Trueman and Cook, 2000). BoSSA (Figure 3) consists of a spherical speaker array integrated with a number of sensors in a violin-inspired configuration. The sensors, which measure bow position, pressure, left-hand finger position, and other parameters, provide approximately a dozen streams of control information. Software is used to process and map these streams to a variety of synthesis algorithms, which are then amplified and sent out through the 12 speaker drivers on the speaker surface.
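The mapping step described above can be illustrated with a minimal sketch. This is not the BoSSA software; the sensor names, synthesis parameter names, and numeric ranges are all hypothetical, chosen only to show how a frame of sensor readings might be rescaled into synthesis parameters.

```python
def scale(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly rescale value from [in_lo, in_hi] to [out_lo, out_hi], clamping at the ends."""
    if in_hi == in_lo:
        raise ValueError("input range must not be empty")
    t = (value - in_lo) / (in_hi - in_lo)
    t = min(1.0, max(0.0, t))  # clamp so noisy sensors cannot overshoot
    return out_lo + t * (out_hi - out_lo)


# Hypothetical mapping table (an assumption for illustration only):
# sensor stream -> (synthesis parameter, input range, output range)
MAPPING = {
    "bow_pressure": ("amplitude", (0.0, 1.0), (0.0, 0.8)),
    "bow_position": ("filter_cutoff_hz", (0.0, 1.0), (200.0, 4000.0)),
    "finger_position": ("frequency_hz", (0.0, 1.0), (196.0, 784.0)),
}


def map_frame(sensor_frame, mapping=MAPPING):
    """Turn one frame of normalized sensor readings into synthesis parameters."""
    params = {}
    for sensor, (param, (in_lo, in_hi), (out_lo, out_hi)) in mapping.items():
        if sensor in sensor_frame:  # ignore streams missing from this frame
            params[param] = scale(sensor_frame[sensor], in_lo, in_hi, out_lo, out_hi)
    return params


frame = {"bow_pressure": 0.5, "bow_position": 0.0, "finger_position": 1.0}
print(map_frame(frame))
```

Because the mapping is plain data, it can be swapped or edited while the instrument is running, which is one simple way to make the mapping layer itself dynamic.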

FIGURE 1 Basic player/instrument feedback loop.

FIGURE 2 Player/digital-instrument feedback loop.

BoSSA, a fairly low-tech, crude instrument made with off-the-shelf sensor and speaker components, is more of a proof of concept than a prototype for future instruments. Its most compelling feature is the integration of sensor and speaker technology into a single localized, intimate instrument.

In recent years, sensor technologies for these kinds of instruments have become much more refined and commercially viable. For example, the K-bow (Figure 4) is a wireless sensor-violin bow that reached the market just this past year (McMillen, 2008). In terms of elegance and engineering, it surpasses earlier sensor bows (including mine), and if it proves commercially successful, it gives us hope that these kinds of experimental explorations may gain broader traction.

Another set of instruments, built by Jeff Snyder (2010), integrates speaker and sensor technology directly into acoustic resonators so thoroughly that, at first glance, it is not apparent that these are not simply acoustic instruments (Figure 5).

FIGURE 3 The Bowed Sensor Speaker Array.

However, none of these instruments integrates any kind of active haptic feedback. Although the importance of haptic feedback remains an open question, I am convinced it will become more important and more highly valued as digital musical instrument design technologies continue to improve. The physical feedback a violinist receives through the bow and strings is very important to his/her performance technique, as well as to the sheer enjoyment of playing. Thus an ability to digitally manipulate this interface is, to say the least, intriguing.

Researchers developing new haptic technologies for music have demonstrated that they enable new performance techniques. For instance, Edgar Berdahl and his colleagues (2008) at Stanford have developed a haptic drum (among other instruments) that enables a one-handed roll, which is impossible on an acoustic drum (Figure 6).

FIGURE 6 Edgar Berdahl’s haptic drum. Photo courtesy of Edgar Berdahl.

Perhaps the most compelling aspect of these instruments is in the mapping layer. Here, relationships between body and sound that would otherwise be impossible can be created, and even changed instantaneously. In fact, the mapping layer itself can be dynamic, changing as the instrument is played.

As described earlier, however, creating mappings by manually connecting features to synthesis parameters is always challenging, sometimes practically impossible. One exciting development has been the application of machine-learning techniques to the mapping layer. Rebecca Fiebrink has created an instrument called the Wekinator that gives the musician a way to rapidly and dynamically apply techniques from the Weka machine-learning libraries (Witten and Frank, 2005) to create new mappings (Fiebrink, 2010; Fiebrink et al., 2009).

For instance, a musician might create a handful of sample correspondences (e.g., gesture G with the controller should yield sound S, as set by particular parameter values). Together, these examples form a data set of sensor features that machine-learning algorithms of various sorts can use to build mapping models, which can then be performed. The player can then explore these new models, see how they sound and feel, add new data to the training set, and retrain or simply start over until he/she finds a model that is satisfying. This application of machine learning is unusual in that the end result is usually not known at the outset. Instead, the solutions provided by machine learning feed back into a creative process, finally resulting in an instrument that would have been impossible to imagine fully beforehand. Ultimately, machine learning and the Wekinator are both important in facilitating the creative process.
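The example-driven workflow can be sketched in a few lines. This is not the Wekinator or the Weka API; it is a stand-in that uses inverse-distance-weighted nearest-neighbor regression, and the class name `ExampleMapper`, its methods, and the sample values are all hypothetical. It shows only the loop of adding gesture/sound examples, querying the model, and adding more examples.

```python
import math


class ExampleMapper:
    """Learn a gesture-to-sound mapping from a handful of examples (k-nearest neighbors)."""

    def __init__(self, k=2):
        self.k = k
        self.examples = []  # list of (sensor_features, synthesis_params)

    def add_example(self, features, params):
        """Record that this gesture (features) should yield this sound (params)."""
        self.examples.append((tuple(features), tuple(params)))

    def predict(self, features):
        """Blend the parameters of the k closest examples, weighted by inverse distance."""
        if not self.examples:
            raise ValueError("no training examples yet")
        ranked = sorted(self.examples, key=lambda ex: math.dist(ex[0], features))
        nearest = ranked[: self.k]
        weights = []
        for feats, params in nearest:
            d = math.dist(feats, features)
            if d == 0.0:
                return list(params)  # exact match: reproduce the trained sound
            weights.append(1.0 / d)
        total = sum(weights)
        n_params = len(nearest[0][1])
        return [
            sum(w * p[i] for w, (_, p) in zip(weights, nearest)) / total
            for i in range(n_params)
        ]


mapper = ExampleMapper(k=2)
mapper.add_example([0.0, 0.0], [100.0])  # gesture G1 -> sound parameter S1
mapper.add_example([1.0, 0.0], [200.0])  # gesture G2 -> sound parameter S2
print(mapper.predict([0.5, 0.0]))        # a gesture halfway between G1 and G2
```

Here "retraining" is simply adding another example and querying again, which keeps the explore-and-revise loop fast; a real system would likely offer several model types and a way to discard examples.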

PLAYING WELL WITH OTHERS

From a musical perspective, computers are terrific at multiplication: they can make a lot from a little (or even from nothing at all). As a result, much laptop music is solo: one player who can make a lot of sound, sometimes with little or no effort. One of the main goals of the Princeton Laptop Orchestra (Figure 7) is to create a musically, socially charged environment that can act as a counterweight to this tendency (Trueman, 2007). By putting many musicians in the same room and inviting them to make music together, we force instrument builders and players (often one and the same person) to focus on responsive, subtle instruments that require constant effort and attention, that can turn on a dime in response to what others do, and that force players to break a sweat. The laptop orchestra is, in a sense, a specific, constrained environment within which digital musical instruments can evolve and survive; those that engage us as musicians and enable us to play well with others survive.

While a laptop orchestra is, like any other orchestra, a collection of musical instruments manned by individual players, the possibility of leveraging local wireless networks for musical purposes becomes both irresistible and novel. For instance, a “conductor” might be a virtual entity that sends pulsed information over the network. Or, musicians might set the course of an improvisation on the fly via some sort of musically coded text-messaging system (Freeman, 2010). Instruments themselves might be network enabled, sharing their sensor data with other instruments or players (Weinberg, 2005).

Humans are highly sensitive to variations in timing, and wireless networks are often not up to the challenge. For instance, when sending pulses over the network every 200 milliseconds (ms), humans hear jitter if the arrival times vary by more than 6 ms (a barely noticeable difference); some researchers have concluded that expressive variability occurs even within that window (Iyer, 1998). Preliminary research has been done on the usability of wireless routers instead of computers (Cerqueira and Trueman, 2010), but a perfect wireless router for musical purposes has yet to be found.
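The timing constraint above can be checked with a small sketch. It assumes that jitter is measured as the deviation of each inter-arrival interval from the nominal 200 ms period, which is one reasonable reading of the figures quoted here rather than the method of the cited studies. The function name and the sample arrival times are hypothetical.

```python
def audible_jitter(arrival_ms, period_ms=200.0, threshold_ms=6.0):
    """Return (pulse_index, deviation_ms) for pulses whose inter-arrival
    interval differs from the nominal period by more than the threshold."""
    flagged = []
    for i in range(1, len(arrival_ms)):
        interval = arrival_ms[i] - arrival_ms[i - 1]
        deviation = interval - period_ms
        if abs(deviation) > threshold_ms:
            flagged.append((i, deviation))
    return flagged


# Hypothetical arrival times (ms) for pulses sent every 200 ms.
arrivals = [0, 200, 403, 610, 800]
print(audible_jitter(arrivals))
```

In this made-up trace, the second interval (203 ms) stays inside the 6 ms window, while the third (207 ms) and fourth (190 ms) fall outside it and would be flagged.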

CLOSING THOUGHTS

Musical instruments are subjective technologies. Rather than optimal solutions to well defined problems, they reflect individual and cultural values and are ongoing ad hoc manifestations of a social activity that challenges our musical and engineering abilities simultaneously. Musical instruments frame our ability to hear and imagine music, and while they enable human expression and communication, they also are, in and of themselves, expressive and communicative. They require time to evaluate and explore, and they become most meaningful when they are used in a context with other musicians. Therefore, it is essential to view instrument building as a fluid, ongoing process with no “correct” solutions, a process that requires an expansive context in which these instruments can inspire and be explored.

REFERENCES

Berdahl, E., H.-C. Steiner, and C. Oldham. 2008. Practical Hardware and Algorithms for Creating Haptic Musical Instruments. Eighth International Conference on New Interfaces for Musical Expression, Genova, Italy.

Cerqueira, M., and D. Trueman. 2010. Laptop Orchestra Network Toolkit. Senior Thesis, Computer Science Department, Princeton University.

Fiebrink, R. 2010. Real-Time Interaction with Supervised Learning. Atlanta, Ga.: ACM CHI Extended Abstracts.

Fiebrink, R., P.R. Cook, and D. Trueman. 2009. Play-along Mapping of Musical Controllers. Proceedings of the International Computer Music Conference (ICMC), Montreal, Quebec.

Freeman, J. 2010. Web-based collaboration, live musical performance, and open-form scores. International Journal of Performance Arts and Digital Media 6(2) (forthcoming).

Iyer, V. 1998. Microstructures of Feel, Macrostructures of Sound: Embodied Cognition in West African and African-American Musics. Ph.D. Dissertation, Program in Technology and the Arts, University of California, Berkeley.

McMillen, K. 2008. Stage-Worthy Sensor Bows for Stringed Instruments. Eighth International Conference on New Interfaces for Musical Expression, Genova, Italy.

Snyder, J. 2010. Exploration of an Adaptable Just Intonation System. D.M.A. Dissertation, Department of Music, Columbia University.

Trueman, D. 2007. Why a laptop orchestra? Organised Sound 12(2): 171–179.

Trueman, D., and P. Cook. 2000. BoSSA: the deconstructed violin reconstructed. Journal of New Music Research 29(2): 121–130.

Weinberg, G. 2005. Local performance networks: musical interdependency through gestures and controllers. Organised Sound 10(3).

Witten, I., and E. Frank. 2005. Data Mining: Practical Machine Learning Tools and Techniques. 2nd ed. San Francisco: Morgan Kaufmann.