Challenges and Opportunities for Autonomous Systems in Space

CHAD R. FROST

NASA Ames Research Center

With the launch of Deep Space 1 in 1998, the autonomous systems community celebrated a milestone—the first flight experiment demonstrating the feasibility of a fully autonomous spacecraft. We anticipated that the advanced autonomy demonstrated on Deep Space 1 would soon be pervasive, enabling science missions, making spacecraft more resilient, and reducing operational costs.

However, the pace of adoption has been relatively slow. When autonomous systems have been used, either operationally or as an experiment or demonstration, they have been successful. In addition, outstanding work by the autonomous-systems community has continued to advance the technologies of autonomous systems (Castaño et al., 2006; Chien et al., 2005; Estlin et al., 2008; Fong et al., 2008; Knight, 2008).

There are many reasons for putting autonomous systems on board spacecraft. These include maintenance of the spacecraft despite failures or damage, extension of the science team through “virtual presence,” and cost-effective operation over long periods of time. Why, then, has the goal of autonomy not been more broadly adopted?

DEFINITION OF AUTONOMY

First, we should clarify the difference between autonomy and automation. Many definitions are possible (e.g., Doyle, 2002), but here we focus on the need to make choices, a common requirement for systems outside our direct, hands-on control.

An automated system doesn’t make choices for itself; it follows a script, albeit a potentially sophisticated one, in which the choice for every anticipated course of action has already been made. If the system encounters an unplanned-for situation, it stops and waits for human help (e.g., it “phones home”). Thus, for an automated system, choices have either already been made and encoded, or they must be made externally.

By contrast, an autonomous system does make choices on its own. It tries to accomplish its objectives locally, without human intervention, even when encountering uncertainty or unanticipated events.

An intelligent autonomous system makes choices using more sophisticated mechanisms than other systems. These mechanisms often resemble those used by humans. Ultimately, the level of intelligence of an autonomous system is judged by the quality of the choices it makes. Regardless of the implementation details, however, intelligent autonomous systems are capable of more “creative” solutions to ambiguous problems than are systems with simpler autonomy, or automated systems, which can only handle problems that have been foreseen.
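The distinction between following a script and making a choice can be illustrated with a small sketch. The Python below is purely illustrative: the situation names, the `SCRIPT` table, and the `toy_value` scoring function are hypothetical and are not drawn from any flight software. It assumes that an automated system can be modeled as a lookup table with an external fallback, and that an autonomous system can be modeled as a local selection among candidate actions.

```python
from typing import Callable, Dict, List

# Hypothetical script: every situation the designers foresaw maps to one fixed action.
SCRIPT: Dict[str, str] = {
    "nominal": "continue_observation",
    "low_battery": "point_arrays_at_sun",
}


def automated_step(situation: str) -> str:
    """Automation: look up the pre-made choice, or stop and ask for help."""
    action = SCRIPT.get(situation)
    if action is None:
        # Unplanned-for situation: the choice must be made externally.
        return "safe_mode_and_phone_home"
    return action


def autonomous_step(
    situation: str,
    candidate_actions: List[str],
    expected_value: Callable[[str, str], float],
) -> str:
    """Autonomy: choose locally among candidate actions, even when unscripted."""
    return max(candidate_actions, key=lambda a: expected_value(situation, a))


def toy_value(situation: str, action: str) -> float:
    """Hypothetical estimate of how well an action serves the mission objectives."""
    if situation == "dust_storm":
        return {"stow_instruments": 0.9, "continue_observation": 0.1}.get(action, 0.0)
    return 1.0 if action == "continue_observation" else 0.2
```

In this toy model, the quality of the autonomous system's choices depends entirely on `expected_value`, which is where a more "intelligent" system would differ from a simpler one.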

A system’s behavior may be represented by more than one of these descriptions. A domestic example of such a system, iRobot’s Roomba™ line of robotic vacuum cleaners, illustrates how prosaic automation and autonomy have become. The Roomba must navigate a house full of obstacles while ensuring that the carpet is cleaned—a challenging task for a consumer product. We can evaluate the Roomba’s characteristics using the definitions given above:

- The Roomba user provides high-level goals (vacuum the floor, but don’t vacuum here, vacuum at this time of day, etc.).
- The Roomba must make some choices itself (how to identify the room geometry and avoid obstacles, when to recharge its battery, etc.).
- The Roomba also has some automated behavior and encounters situations it cannot resolve on its own (e.g., it gets stuck, it can’t clean its own brushes, etc.).

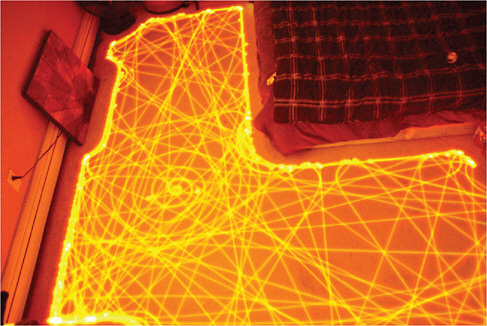

Overall, the Roomba has marginal autonomy, and there are numerous situations it cannot deal with by itself. It is certainly not intelligent. However, it does have basic on-board diagnostic capability (“clean my brushes!”) and a strategy (as seen in Figure 1) for vacuuming a room about whose size and layout it was initially ignorant. Roomba demonstrates the potential for the widespread use of autonomous systems in our daily lives.

AUTONOMY FOR SPACE MISSIONS

So much has been written on this topic that we can barely scratch the surface of a deep and rewarding discussion in this short article. However, we can examine a few recurring themes. The needs for autonomous systems depend, of course, on the mission. Autonomous operation of the spacecraft, its subsystems, and the science instruments or payload become increasingly important as the spacecraft

FIGURE 1 Long-exposure image of Roomba’s path while navigating a room. Photo by Paul Chavady, used with permission.

is required to deal with phenomena that occur on time scales shorter than the communication latency between the spacecraft and Earth. But all spacecraft must maintain function “to ensure that hardware and software are performing within desired parameters, and [find] the cause of faults when they occur” (Post and Rose, undated).
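To make the latency argument concrete, the following sketch computes one-way light time from distance and compares an event's time scale with the round-trip delay. It is a simplified illustration: it ignores ground processing time, scheduling of communication passes, and occultations, and the function names and example distances are assumptions chosen for illustration only.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum, km/s
AU_KM = 149_597_870.7              # one astronomical unit, km


def one_way_light_time_s(distance_au: float) -> float:
    """Seconds for a signal to travel the given distance (in AU) at light speed."""
    return distance_au * AU_KM / SPEED_OF_LIGHT_KM_S


def needs_onboard_response(event_timescale_s: float, distance_au: float) -> bool:
    """True if the event evolves faster than a ground-in-the-loop round trip.

    The round trip is modeled as two one-way light times: telemetry down,
    then a command back up. Real operations add further delays.
    """
    round_trip_s = 2.0 * one_way_light_time_s(distance_au)
    return event_timescale_s < round_trip_s


if __name__ == "__main__":
    # Illustrative distances only; the Earth-Mars range varies widely over time.
    for label, au in [("1 AU", 1.0), ("Mars, near (approx.)", 0.4), ("Mars, far (approx.)", 2.6)]:
        print(f"{label}: {one_way_light_time_s(au) / 60.0:.1f} min one way")
```

At 1 AU the one-way light time is about 499 seconds, so any phenomenon that unfolds in a few minutes at that distance cannot wait for a decision from Earth.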

Virtual Presence

“Virtual presence” is often cited as a compelling need for autonomy. Scientific investigation, including data analysis and discovery, becomes more challenging the farther away the scientific instruments are from the locus of control. Doyle (2002) suggests that “… a portion of the scientist’s awareness will begin to move onboard, i.e., an observing and discovery presence. Knowledge for discriminating and determining what information is important would begin to migrate to the space platform.” Marvin Minsky (1980), a pioneer of artificial intelligence, made the following observation about the first lunar landing mission in 1969:

“With a lunar telepresence vehicle making short traverses of one kilometer per day, we could have surveyed a substantial area of the lunar surface in the ten years that have slipped by since we landed there.”

Add another three decades, and Minsky’s observation takes on even greater relevance.

Traversing extraterrestrial sites is just one example of the ongoing “dirty, dull, dangerous” work that it is not cost-effective, practical, or safe for humans to do. Yet these tasks have great potential for long-term benefits. As Minsky pointed out, even if the pace of investigation or work is slower than what humans in situ might accomplish, the cumulative effort can still be impressive. The Mars Exploration Rovers are a fine example. In the six years since they landed, they have jointly traversed more than 18 miles and collected hundreds of thousands of images.

Thus, autonomous systems can reduce the astronauts’ workload on crewed missions. An astronaut’s limited, valuable time should not be spent verifying parameters and performing spacecraft housekeeping, navigation, and other chores that can readily be accomplished by autonomous systems.

Another aspect of virtual presence is robotic mission enablers, such as the extension of the spacecraft operator’s knowledge onto the spacecraft, enabling “greater onboard adaptability in responding to events, closed-loop control for small body rendezvous and landing missions, and operation of the multiple free-flying elements of space-based telescopes and interferometers” (Doyle, 2002). Several of these capabilities have been demonstrated: Livingstone 2 provided on-board diagnostics on Earth Observing 1 (EO1) (Hayden et al., 2004), and Orbital Express demonstrated full autonomy, including docking and servicing (Ogilvie et al., 2008).

Common Elements of Autonomous Systems

The needs autonomous systems can fulfill can be distilled down to a few common underlying themes: mitigating latency (the distance from those interested in what the system is doing); improving efficiency by reducing cost and mass and improving the use of instrument and/or crew time; and managing complexity, because spacecraft systems have become so complex that it is difficult, sometimes impossible, for humans on board or on the ground to diagnose and solve problems. Nothing is flown on a spacecraft that has not “paid” for itself, and this holds true for the software that gives it autonomy.

SUCCESS STORIES

Where do we stand today in terms of deployed autonomous systems? Specifically, which of the technology elements that comprise a complex, self-sufficient, intelligent system have flown in space? There have been several notable successes. Four milestone examples illustrate the progress made: Deep Space 1, which flew the first operational autonomy in space; Earth Observing 1, which demonstrated autonomous collection of science data; Orbital Express, which autonomously carried out spacecraft servicing tasks; and the Mars Exploration Rovers, which have been long-term hosts for incremental improvements in their autonomous capabilities.

Deep Space 1. A Remote Agent Experiment

In 1996, NASA specified an autonomous mission scenario called the New Millennium Autonomy Architecture Prototype (NewMAAP). For that mission, a Remote Agent architecture that integrated constraint-based planning and scheduling, robust multi-threaded execution, and model-based mode identification and reconfiguration was developed to meet the NewMAAP requirements (Muscettola et al., 1998; Pell et al., 1996). Muscettola et al. (1998) described the architecture as follows:

The Remote Agent architecture has three distinctive features: First, it is largely programmable through a set of compositional, declarative models. We refer to this as model-based programming. Second, it performs significant amounts of on-board deduction and search at time resolutions varying from hours to hundreds of milliseconds. Third, the Remote Agent is designed to provide high-level closed-loop commanding.

On the strength of the NewMAAP demonstration, the Remote Agent was selected as a technology experiment on the Deep Space 1 (DS1) mission. DS1 (Figure 2), launched in 1998, was designed to test 12 cutting-edge technologies, including the Remote Agent Experiment (RAX), which became the first operational use of “artificial intelligence” in space. DS1 functioned completely autonomously for 29 hours, successfully operating the spacecraft and responding to both simulated and real failures.

Earth Observing 1. An Autonomous Sciencecraft Experiment

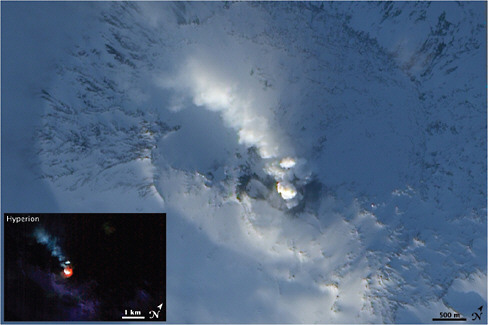

Earth Observing 1 (EO1), launched in 2000, demonstrated on-board diagnostics and autonomous acquisition and processing of science data, specifically, imagery of dynamic natural phenomena that evolve over relatively short time spans (e.g., volcanic eruptions, flooding, ice breakup, and changes in cloud cover).

The EO1 Autonomous Sciencecraft Experiment (ASE) included autonomous on-board fault diagnosis and recovery (Livingstone 2), as well as considerable autonomy of the science instruments and downlink of the resulting imagery and data. Following this demonstration, ASE was adopted for operational use. It has been in operation since 2003 (Chien et al., 2005, 2006).

A signal success of the EO1 mission was the independent capture of volcanic activity on Mt. Erebus. In 2004, ASE detected an anomalous heat signature, scheduled a new observation, and effectively detected the eruption by itself. Figure 3 shows 2006 EO1 images of the volcano.
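The detect-then-reobserve pattern described here can be sketched in a few lines. This is not the ASE algorithm, whose details are not given in this article; it is a minimal illustration that assumes a simple threshold test against a known baseline. The `Observation` and `Scheduler` classes, the units, and the threshold values are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Observation:
    target: str
    thermal_reading: float  # hypothetical summary value, arbitrary units


@dataclass
class Scheduler:
    """Hypothetical on-board queue of follow-up observation requests."""
    queue: List[str] = field(default_factory=list)

    def request_followup(self, target: str) -> None:
        # Avoid queuing the same target twice.
        if target not in self.queue:
            self.queue.append(target)


def process_observation(
    obs: Observation, baseline: float, threshold: float, scheduler: Scheduler
) -> bool:
    """Flag a reading that exceeds the baseline by more than the threshold.

    When an anomaly is flagged, a follow-up observation is requested on board
    rather than waiting for analysts on the ground to notice it.
    """
    anomalous = (obs.thermal_reading - baseline) > threshold
    if anomalous:
        scheduler.request_followup(obs.target)
    return anomalous
```

The design point is that detection and rescheduling happen in the same on-board loop, so a short-lived event can be re-imaged before the next ground contact.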

FIGURE 3 Images of volcanic Mt. Erebus, autonomously collected by EO1. NASA image created by Jesse Allen, using EO-1 ALI data provided courtesy of the NASA EO1 Team.

Orbital Express

In 2007, the Orbital Express mission launched two complementary spacecraft, ASTRO and NextSat, with the goal of demonstrating a complete suite of the technologies required to autonomously service satellites on-orbit. The mission demonstrated several levels of on-board autonomy, ranging from mostly ground-supervised operations to fully autonomous capture and servicing, self-directed transfer of propellant, and automatic capture of another spacecraft using a robotic arm (Boeing Integrated Defense Systems, 2006; Ogilvie et al., 2008). These successful demonstrations showed that servicing and other complex spacecraft operations can be conducted autonomously.

Mars Exploration Rovers

Since landing on Mars in 2004, the Mars Exploration Rovers, Spirit and Opportunity, have operated with increasing levels of autonomy. An early enhancement provided autonomous routing around obstacles; another automated the process of calculating how far the rover’s arm should reach out to touch a particular rock.

In 2007, the rovers were updated to autonomously examine sets of sky images, determine which ones showed interesting clouds or dust devils, and send only those images back to scientists on Earth. The most recent software has enabled Opportunity to make decisions about acquiring new observations, such as selecting rocks on the basis of shape and color, for imaging by the wide-angle navigation camera and detailed inspection with the narrow-field panoramic camera (Estlin et al., 2008).
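Sending back only the interesting images amounts to an on-board selection problem under a limited downlink. The sketch below is a hypothetical illustration, not the rovers' flight software: it assumes each image already carries an interest score from some on-board detector, and it uses a simple greedy rule (highest score first) to fill a byte budget. The function name, the tuple layout, and the numbers in the usage example are invented for illustration.

```python
from typing import List, Tuple

# Each image is (name, interest_score, size_bytes); all values are hypothetical.
Image = Tuple[str, float, int]


def select_for_downlink(
    images: List[Image], min_score: float, budget_bytes: int
) -> List[str]:
    """Greedily pick the most interesting images that fit the downlink budget."""
    # Discard images the detector considers uninteresting.
    interesting = [img for img in images if img[1] >= min_score]
    # Consider the highest-scoring images first.
    interesting.sort(key=lambda img: img[1], reverse=True)

    selected: List[str] = []
    remaining = budget_bytes
    for name, _score, size in interesting:
        if size <= remaining:
            selected.append(name)
            remaining -= size
    return selected


if __name__ == "__main__":
    sky_images = [
        ("sky_001", 0.1, 400),
        ("sky_002", 0.9, 500),
        ("sky_003", 0.7, 600),
        ("sky_004", 0.8, 300),
    ]
    print(select_for_downlink(sky_images, min_score=0.5, budget_bytes=900))
```

A greedy rule is not guaranteed to make the best possible use of the budget, but it is cheap to compute, which matters on flight-qualified processors.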

REMAINING CHALLENGES

Despite the compelling need for spacecraft autonomy and the feasibility demonstrated by the successful missions described above, obstacles remain to the use of autonomous systems as regular elements of spacecraft flight software. Two kinds of requirements for spacecraft autonomy must be satisfied: (1) functional requirements, which represent attributes the software must objectively satisfy for it to be acceptable; and (2) perceived requirements, which are not all grounded in real mission requirements but weigh heavily in subjective evaluations of autonomous systems. Both types of requirements must be satisfied for the widespread use of autonomous systems.

Functional Requirements

From our experience thus far, we have a good sense of the overarching functional requirements for space mission autonomy. Muscettola et al. (1998) offer a nicely distilled set of these requirements (evolved from Pell et al., 1996).

“First, a spacecraft must carry out autonomous operations for long periods of time with no human intervention.” Otherwise, what’s the point of including autonomous systems? Short-term autonomy may be even worse than no autonomy at all. If humans have to step into a nominally autonomous process, they are likely to spend a lot of time trying to determine the state of the spacecraft, how it got that way, and what needs to be done about it.

“Second, autonomous operations must guarantee success given tight deadlines and resource constraints.” By definition, if an unplanned circumstance arises, an autonomous system cannot stop and wait indefinitely either for human help or to deliberate on a course of action. The system must act, and it must do so expediently. Whether autonomy can truly guarantee success is debatable, but at least it should provide the highest likelihood of success.

“Third, since spacecraft are expensive and are often designed for unique missions, spacecraft operations require high reliability.” Even in the case of a (relatively) low-cost mission and an explicit acceptance of a higher level of risk, NASA tends to be quite risk-averse! Failure is perceived as undesirable, embarrassing, “not an option,” even when there has been a trade-off between an increased chance of failure and a reduction in cost or schedule. Autonomy must be perceived as reducing risk, ideally, without significantly increasing cost or schedule. Program managers, science principal investigators, and spacecraft engineers want (and need) an answer to their frequently asked question, “How can we be sure that your software will work as advertised and avoid unintended behavior?”

“Fourth, spacecraft operation involves concurrent activity by tightly coupled subsystems.” Thus, requirements and interfaces must be thoroughly established relatively early in the design process, which moves software development earlier in the program and changes the cost profile of the mission.

Perceived Requirements

Opinions and perceptions, whether objectively based or not, are significant challenges to flying autonomous systems on spacecraft. Some of the key requirements and associated issues are identified below.

Reliability

As noted above, the question most frequently asked is whether autonomy software will increase the potential risk of a mission (Frank, 2008b). The question really being asked is whether the autonomous systems can deal with potential loss-of-mission failures well enough to offset the added potential for software problems.

Complexity

Bringing autonomous systems onto a spacecraft is perceived as adding complexity to what might otherwise be a fairly straightforward system. Certainly autonomy increases the complexity of a simple, bare-bones system. This question is more nuanced if it is about degrees of autonomy, or autonomy versus automation. As spacecraft systems themselves become more complex, or as we ask them to operate more independently of us, must the software increase in sophistication to match? And, does sophistication equal complexity?

Cost

Adding many lines of code to support autonomous functions is perceived as driving up the costs of a mission. The primary way an autonomous system “buys” its way onto a spacecraft is by having the unique ability to enable or save the mission. In addition, autonomous systems may result in long-term savings by reducing operational costs. However, in both cases, the benefits may be much more difficult to quantify than the cost, thus making the cost of deploying autonomous systems highly subjective.

Sci-Fi Views of Autonomy

This perceived requirement boils down to managing expectations and educating people outside the intelligent-systems community, where autonomous systems are sometimes perceived as either overly capable, borderline Turing machines, or latent HAL-9000s ready to run amok (or worse, to suffer from a subtle, hard-to-diagnose form of mental illness). Sorry, but we’re just not there yet!

ADDRESSING THE CHALLENGES

The principal challenge to the deployment of autonomous systems is risk-reduction (real or perceived). Improvements can be achieved in several ways.

Processes

Perhaps the greatest potential contributor to the regular use of autonomous systems on spacecraft is ensuring that rigorous processes are in place to (1) thoroughly verify and validate the software, and (2) minimize the need to develop new software for every mission. Model-based software can help address both of these key issues (as well as others) because this approach facilitates rigorous validation of the component models and the re-use of knowledge.

The model-based software approach was used for the Remote Agent Experiment (Williams and Nayak, 1996) and for the Livingstone 2 diagnostic engine used aboard EO1 (Hayden et al., 2004). However, processes do not emerge fully formed. Only through the experience of actually flying autonomous systems (encompassing both successes and failures) can we learn about our processes and the effectiveness of our methods.

Demonstrations

The next most effective way to reduce risk is to increase the flight experience and heritage of autonomous software components. This requires a methodical approach to including autonomous systems on numerous missions, initially as “ride-along” secondary or tertiary mission objectives, but eventually on missions dedicated to experiments of autonomy.

This is not a new idea. NASA launched DS1 and EO1 (and three other spacecraft) under the auspices of the New Millennium Program, which was initiated in 1995 with the objective of validating a slate of technologies for space applications. However, today there is no long-term strategy in place to continue the development and validation of spacecraft autonomy.

Fundamental Research

We have a long way to go before autonomous systems will do all that we hope they can do. Considerable research will be necessary to identify new, creative solutions to address the many challenges that remain. For example, it can be a challenge to build an integrated system of software to conduct the many facets of autonomous operations (including, e.g., planning, scheduling, execution, fault detection, and fault recovery) within the constraints of spaceflight computing hardware. Even on modern terrestrial computers, let alone much slower flight-qualified hardware, algorithms that solve the hard problems in these disciplines may be intractably slow.

Academic experts often know how to create algorithms that can theoretically run fast enough, but their expertise must be transformed into engineering discipline—practical, robust software suitable for use in a rigorous real-time environment—and integrated with the many other software elements that must simultaneously function.

Education

As we address the issues listed above, we must simultaneously educate principal investigators, project managers, and the science community about the advantages of autonomous systems and their true costs and savings.

OPPORTUNITIES

There are many upcoming missions in which autonomy could play a major role. Although human spaceflight has so far been remarkably devoid of autonomy, there is great potential for autonomous systems to assist crews in maintaining and operating even the most complex spacecraft over long periods of time, once the technologies are validated in unmanned spacecraft and reach levels of maturity commensurate with other human-rated systems. Life support, power, communications, and other systems require automation, but would also benefit from autonomy (Frank, 2008a).

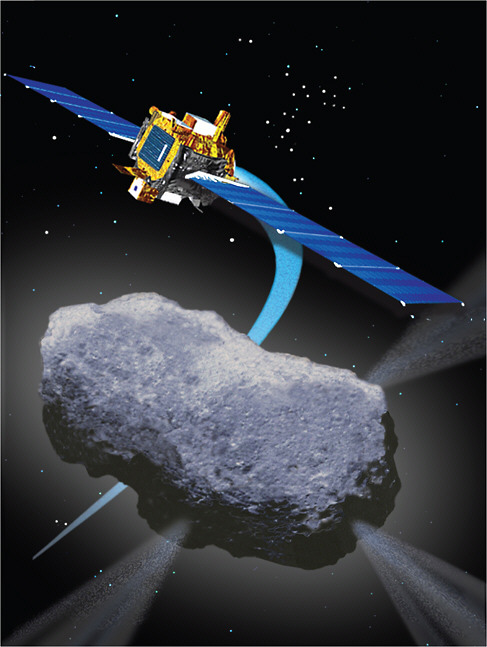

Missions to the planets of our solar system and their satellites will increasingly require the greatest possible productivity and scientific return on the large investments already made in the development and launch of these sophisticated spacecraft. Particular destinations, such as the seas of Europa, will place great demands on autonomous systems, which will have to conduct independent explorations in environments where communication with Earth is difficult or impossible. Proposed missions to near-Earth objects (NEOs) will entail autonomous rendezvous and proximity operations, and possibly contact with or sample retrieval from the object.

A variety of Earth and space science instruments can potentially be made autonomous, in whole or in part, independently of whether the host spacecraft has any operational autonomy. Autonomous drilling equipment, hyperspectral imagers, and rock samplers have all been developed and demonstrated terrestrially in Mars-analog environments. EO1 and the Mars Exploration Rovers were (and are) fine examples of autonomous science systems that have improved our ability to respond immediately to transient phenomena.

This is hardly an exhaustive list of the possibilities, but it represents the broad spectrum of opportunities for autonomous support for space missions. Numerous other missions, in space, on Earth, and under the seas could also be enhanced or even realized by the careful application of autonomous systems.

CONCLUSIONS

Looking back, we have had some great successes, and looking ahead, we have some great opportunities. But it has been more than a decade since the first autonomous systems were launched into space, and operational autonomy is not yet a standard practice. So what would enable the adoption of autonomy by more missions?

- An infrastructure (development, testing, and validation processes) and code base in place, so that each new mission does not have to re-invent the wheel and will only bear a marginal increase in cost;
- A track record (“flight heritage”) establishing reliability; and
- More widespread understanding of the benefits of autonomous systems.

To build an infrastructure and develop a “flight heritage,” we will have to invest. Earth-based rovers, submarines, aircraft, and other “spacecraft analogs” can serve (and frequently do serve) as lower-cost, lower-risk validation platforms. Such developmental activities should lead to several flights of small spacecraft that incrementally advance capabilities as they add to the flight heritage and experience of the technology and the team.

Encouraging and developing understanding will depend largely on technologists listening to those who might someday use their technologies. Unless we hear and address the concerns of scientists and mission managers, we will not be able to explain how autonomous systems can further scientific goals. If the autonomous-systems community can successfully do these things, we as a society stand to enter an exciting new period of human and robotic discovery.

REFERENCES

Boeing Integrated Defense Systems. 2006. Orbital Express Demonstration System Overview. Technical Report. The Boeing Company, 2006. Available online at http://www.boeing.com/bds/phantom_works/orbital/mission_book.html.

Castaño, R., T. Estlin, D. Gaines, A. Castaño, C. Chouinard, B. Bornstein, R. Anderson, S. Chien, A. Fukunaga, and M. Judd. 2006. Opportunistic rover science: finding and reacting to rocks, clouds and dust devils. In IEEE Aerospace Conference, 2006. 16 pp.

Chien, S., R. Sherwood, D. Tran, B. Cichy, G. Rabideau, R. Castano, A. Davies, D. Mandl, S. Frye, B. Trout, S. Shulman, and D. Boyer. 2005. Using autonomy flight software to improve science return on Earth Observing One. Journal of Aerospace Computing, Information, and Communication 2(4): 196–216.

Chien, S., R. Doyle, A. Davies, A. Jónsson, and R. Lorenz. 2006. The future of AI in space. IEEE Intelligent Systems 21(4): 64–69, July/August 2006. Available online at www.computer.org/intelligent.

Doyle, R.J. 2002. Autonomy needs and trends in deep space exploration. In RTO-EN-022 “Intelligent Systems for Aeronautics,” Applied Vehicle Technology Course. Neuilly-sur-Seine, France: NATO Research and Technology Organization. May 2002.

Estlin, T., R. Castano, D. Gaines, B. Bornstein, M. Judd, R.C. Anderson, and I. Nesnas. 2008. Supporting Increased Autonomy for a Mars Rover. In 9th International Symposium on Artificial Intelligence, Robotics and Automation in Space, Los Angeles, Calif., February 2008. Pasadena, Calif.: Jet Propulsion Laboratory.

Fong, T., M. Allan, X. Bouyssounouse, M.G. Bualat, M.C. Deans, L. Edwards, L. Flückiger, L. Keely, S.Y. Lee, D. Lees, V. To, and H. Utz. 2008. Robotic Site Survey at Haughton Crater. In Proceedings of the 9th International Symposium on Artificial Intelligence, Robotics and Automation in Space, Los Angeles, Calif., 2008.

Frank, J. 2008a. Automation for operations. In Proceedings of the AIAA Space Conference and Exposition. Ames Research Center.

Frank, J. 2008b. Cost Benefits of Automation for Surface Operations: Preliminary Results. In Proceedings of the AIAA Space Conference and Exposition. Ames Research Center.

Hayden, S.C., A.J. Sweet, S.E. Christa, D. Tran, and S. Shulman. 2004. Advanced Diagnostic System on Earth Observing One. In Proceedings of AIAA Space Conference and Exhibit, San Diego, California, Sep. 28–30, 2004.

Knight, R. 2008. Automated Planning and Scheduling for Orbital Express. In 9th International Symposium on Artificial Intelligence, Robotics, and Automation in Space, Los Angeles, Calif., February 2008. Pasadena, Calif.: Jet Propulsion Laboratory.

Minsky, M. 1980. Telepresence. OMNI magazine, June 1980. Available online at http://web.media.mit.edu/~minsky/papers/Telepresence.html.

Muscettola, N., P.P. Nayak, B. Pell, and B.C. Williams. 1998. Remote agent: to boldly go where no AI system has gone before. Artificial Intelligence 103(1–2): 5–47. Available online at: http://dx.doi.org/10.1016/S0004-3702(98)00068-X.

Ogilvie, A., J. Allport, M. Hannah, and J. Lymer. 2008. Autonomous Satellite Servicing Using the Orbital Express Demonstration Manipulator System. Pp. 25–29 in Proceedings of the 9th International Symposium on Artificial Intelligence, Robotics and Automation in Space (i-SAIRAS’08).

Pell, B., D.E. Bernard, S.A. Chien, E. Gat, N. Muscettola, P.P. Nayak, M.D. Wagner, and B.C. Williams. 1996. A Remote Agent Prototype for Spacecraft Autonomy. In SPIE Proceedings, Vol. 2810, Space Sciencecraft Control and Tracking in the New Millennium, Denver, Colorado, August 1996.

Post, J.V., and D.D. Rose. Undated. A.I. In Space: Past, Present & Possible Futures. Available online at http://www.magicdragon.com/ComputerFutures/SpacePublications/AI_in_Space.html.

Williams, B.C., and P.P. Nayak. 1996. A Model-Based Approach to Reactive Self-Configuring Systems. Pp. 971–978 in Proceedings of the National Conference on Artificial Intelligence. Menlo Park, Calif.: Association for the Advancement of Artificial Intelligence.