10

Communicating About Analysis

Baruch Fischhoff

Useful analysis requires effective communication among diverse individuals. Members of an analytical community must communicate with each other and with their clients. Both kinds of communication can bring into contact individuals with very different missions, backgrounds, and perspectives. Within an analytical community, a single analysis might require communication among individuals with expertise in economics, anthropology, psychology, engineering, and logistics. Each contributing discipline might have subfields and competing theories, each needing to be heard. Setting the terms of an analysis and reporting its results might require communication with clients who differ from the analysts in their objectives, careers, and education. These professional differences overlay the cultural, socioeconomic, and other differences that can complicate any communication in a diverse society.

Other chapters in this volume document the threats to mutual understanding that arise in the absence of clear communication. One such threat arises when members of a group interact intensely with one another, but lose touch with how differently the world looks to members of other groups (see Tinsley, this volume, Chapter 9). A second threat arises when individuals misread one another’s objectives (see Arkes and Kajdasz, this volume, Chapter 7). A third arises when different experiences and objectives lead individuals to evaluate the quality of analyses differently (see this volume’s McClelland, Chapter 4; Spellman, Chapter 6; and Kozlowski, Chapter 12).

This chapter considers social, behavioral, and decision science research about overcoming such threats in order to improve communications about analysis. It considers communication both between analysts and their clients and among analysts themselves. It considers the communication issues raised by analytical methods, psychological processes, and management practices. (For analytical methods, see this volume’s Kaplan, Chapter 2; Bueno de Mesquita, Chapter 3; McClelland, Chapter 4; and Skinner, Chapter 5. For psychological processes, see this volume’s Kaplan, Chapter 2; Spellman, Chapter 6; Arkes and Kajdasz, Chapter 7; and Hastie, Chapter 8. For management practices, see this volume’s Fingar, Chapter 1; Tetlock and Mellers, Chapter 11; and Kozlowski, Chapter 12.) Like the other chapters, this chapter recognizes the need for additional research dedicated to the specific needs of analysts and their clients. Where it refers to general principles of decision science, additional sources include Clemen and Reilly (2002), Hastie and Dawes (2001), Raiffa (1968), and von Winterfeldt and Edwards (1986). Where it refers to general principles of communicating decision-relevant information, additional sources include Fischhoff (2009), Morgan et al. (2001), Slovic (2001), Schwarz (1999), and Woloshin et al. (2008).

In a well-known essay, philosopher Paul Grice (1975) described the obligations of communication as saying things that are (1) relevant, (2) concise, (3) clear, and (4) truthful. Fulfilling the last of these conditions is at the core of the analytical enterprise. Taking full advantage of that commitment requires effective two-way communication, allowing analysts to understand their clients’ information needs and present their answers comprehensibly. After presenting the science available to meet those goals, the chapter outlines the organizational challenges to mobilizing it.

ANALYST–CLIENT COMMUNICATIONS

At times, analysts’ clients face specific decisions, such as whether to enter an international coalition, deploy military forces, suspend diplomatic relations, or reduce foreign aid. Serving such clients means efficiently communicating the information most critical to their choices. At other times, analysts’ clients face no specific decisions, but want the situational awareness needed for future decisions (e.g., what conditions affect the stability of international coalitions, the effectiveness of military deployments, the impacts of diplomatic sanctions, or the usefulness of foreign aid?). Serving such clients means communicating information that might be useful one day. The former might be called need-to-know communication and the latter nice-to-know communication.

In both cases, the communication task is the same as that of everyday life: listening well enough to identify the relevant facts, then conveying them comprehensibly. Unlike in most of everyday life, however, the set of things that analysts might say can be very large and the communication window very small. The behavioral, social, and decision sciences offer methods for overcoming these barriers to communication.

Need-to-Know Communication

For need-to-know communication, value-of-information analysis (Clemen and Reilly, 2002; Raiffa, 1968) is the formal name (in decision analysis) for evaluating facts by their ability to increase the expected utility of recipients’ choices. Consider, for example, a client considering whether to strengthen sanctions on a target country. The most valuable information, for that decision, might pertain to the cohesiveness of the international coalition enforcing the sanctions, the strength of the target country’s economy, the vulnerability of its industries, the strength of its political opposition, or the probability of sanctions rallying nationalist feelings. Identifying the most valuable information requires knowing how clients see their choices, including their goals, the action options that they contemplate, and the probabilities of achieving each goal with each option. For example, when deciding about sanctions, some clients may be especially concerned about effects on civilian populations. They will need more information on those effects, on alternatives to sanctions, and on the probability of sustaining sanctions. If analysts fail to see these concerns, then they will not produce the relevant analyses. Conversely, if they see concerns where none exist, then they may produce analyses without an audience.

Value-of-information analysis provides a structured way to characterize decision-relevant information needs. It begins by sketching a client’s decision tree, then examines the impact of possible analytical results on identifying the best choice. Its product is a supply curve (to use the economic term), which orders information in terms of decreasing marginal contribution to improved decision making. The communication window is used best by providing the most valuable analytical results first. At some point, it may pay to close that window, when additional results cannot improve the decision or the cost is too high (in terms of the time spent conveying analyses or the opportunities lost while waiting for analytic results to be produced).
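The logic of this calculation can be sketched in a few lines of Python. The sketch assumes a client choosing between two options (strengthening sanctions or holding steady) while uncertain about one state of the world (whether the enforcing coalition holds). All names, probabilities, and utilities are hypothetical placeholders chosen for illustration, not estimates drawn from any analysis. The quantity computed is the expected value of perfect information: the gain in expected utility from learning the state before choosing.

```python
# Hypothetical decision: every number here is an illustrative assumption.
P_STATE = {"coalition_holds": 0.6, "coalition_fractures": 0.4}

# UTILITY[option][state]: the client's utility for each option in each state.
UTILITY = {
    "strengthen_sanctions": {"coalition_holds": 80, "coalition_fractures": 10},
    "hold_steady": {"coalition_holds": 50, "coalition_fractures": 40},
}


def expected_utility(option, p_state):
    """Probability-weighted utility of one option under current beliefs."""
    return sum(p * UTILITY[option][state] for state, p in p_state.items())


def best_expected_utility(p_state):
    """Expected utility of the best option when the state is unknown."""
    return max(expected_utility(option, p_state) for option in UTILITY)


def evpi(p_state):
    """Expected value of perfect information about the state."""
    # If the state were known in advance, the client could pick the
    # best option separately for each state.
    informed = sum(
        p * max(UTILITY[option][state] for option in UTILITY)
        for state, p in p_state.items()
    )
    return informed - best_expected_utility(p_state)


if __name__ == "__main__":
    print("Value of learning the coalition's fate:", round(evpi(P_STATE), 2))
```

Repeating the calculation for each uncertain factor, and sorting the factors by the resulting values, would give one rough version of the ordering described above. A factor whose value is zero cannot change the choice, however interesting it may be.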

Decision theory offers formal procedures for computing the expected utility of choices and the value of information in aiding them. Used slavishly, these procedures can undermine analysts’ work, especially if the calculations are done outside the analytical team or by computer programs whose results must be taken on faith. However, understanding the principles underlying these procedures, which show how to think about making various kinds of decisions, can help to structure communications and the analyses that precede them.

These formal procedures assume that decision makers are rational when integrating new information with their existing beliefs and values. Such rationality is unattainable when inevitably fallible individuals face complex choices (see this volume’s Spellman, Chapter 6; Arkes and Kajdasz, Chapter 7; Hastie, Chapter 8; and Tinsley, Chapter 9). However, the assumption of rationality allows analysts to evaluate information needs in an orderly way. It also fits their organizational role. Whatever they think about their clients in private, analysts are expected to treat them as rational individuals, able to make good use of analytical results. Fortuitously, decision research has found that many choices tolerate modest imprecision in how well decision makers understand and integrate their facts (Dawes, 1979; Dawes et al., 1989; von Winterfeldt and Edwards, 1986). With such decisions, analysts who create and convey relevant facts may have done all that is needed to allow imperfect clients to make good choices.

Nice-to-Know Communication

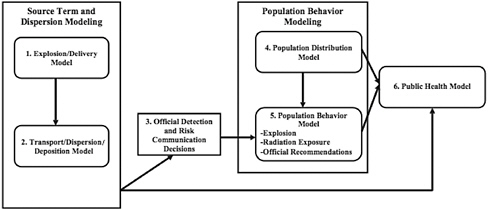

As mentioned, sometimes clients are not making choices, but looking for signs that decisions might need to be made—or remade. In order to “follow the action,” they need to understand the factors that shape the outcomes that matter most to them. Influence diagrams (Clemen and Reilly, 2002; Horvitz, 2005; Howard, 1989) provide a general way to represent such understanding, consistent with most of the models described in chapters in this volume by Kaplan (Chapter 2), Bueno de Mesquita (Chapter 3), McClelland (Chapter 4), and Skinner (Chapter 5). Figure 10-1 provides a highly simplified example of an influence diagram. In it, the nodes represent variables describing a situation, whereas the arrows represent the relationships between them. The nodes require observation, whereas the arrows require theory. Between them, they provide a standard, transparent representation of what analysts (and their clients) believe.
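One way to see how such a diagram makes beliefs explicit is to store it as a directed graph and ask which variables lie upstream of an outcome. The following Python sketch does so for a hypothetical five-node diagram. The node names loosely echo the radiological example but are assumptions made for illustration; they do not reproduce the published figure.

```python
# Hypothetical influence diagram: each node maps to the nodes it directly
# influences. The structure is an illustrative assumption.
INFLUENCES = {
    "source_term": ["exposure"],
    "official_communication": ["public_compliance"],
    "public_compliance": ["exposure"],
    "exposure": ["health_consequences"],
    "health_consequences": [],
}


def upstream_of(target, influences):
    """Return every node with a directed path (arrows) leading to the target."""
    # Invert the graph so that each node lists its direct parents.
    parents = {node: set() for node in influences}
    for node, children in influences.items():
        for child in children:
            parents[child].add(node)

    # Walk backward from the target, collecting ancestors.
    found, stack = set(), [target]
    while stack:
        for parent in parents[stack.pop()]:
            if parent not in found:
                found.add(parent)
                stack.append(parent)
    return found


if __name__ == "__main__":
    print(sorted(upstream_of("health_consequences", INFLUENCES)))
```

Any variable that is not upstream of the outcomes a client cares about is, under the model's assumptions, irrelevant to predicting them.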

With such models, sensitivity analysis (Clemen and Reilly, 2002; Raiffa, 1968) plays the role that value-of-information analysis plays with specific decisions. It asks how “sensitive” predictions are to variations in each variable (node) or relationship (arrow). For example, if clients want to predict a country’s political stability, then analysts might examine how sensitive that stability is to variables such as the health of the country’s major industries, news media, police force, and public health system. Further analyses can then focus on the most important of these factors. For example, if the country’s leadership is vulnerable to its public’s perception that it cannot handle emergencies, then analysts might study the adequacy of the country’s disease surveillance, risk communication, and disaster response systems.
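A minimal sketch of one-at-a-time sensitivity analysis follows, in Python. It assumes a deliberately simple, hypothetical model in which a stability index is a weighted sum of four factors. The weights, baseline values, and plausible ranges are invented for illustration; a real model would be more complex and would need empirical support. Each factor is moved across its range while the others stay at baseline, and the factors are ranked by how far the prediction swings.

```python
# Hypothetical stability model: weights, baselines, and ranges are
# illustrative assumptions, not empirical estimates.
WEIGHTS = {"industry": 0.4, "media": 0.1, "police": 0.2, "public_health": 0.3}
BASELINE = {"industry": 0.6, "media": 0.5, "police": 0.7, "public_health": 0.4}
RANGES = {
    "industry": (0.3, 0.8),
    "media": (0.4, 0.6),
    "police": (0.5, 0.9),
    "public_health": (0.1, 0.9),
}


def stability(inputs):
    """Predicted stability index: a weighted sum of the input factors."""
    return sum(WEIGHTS[name] * value for name, value in inputs.items())


def one_at_a_time_swings(baseline, ranges):
    """Rank factors by how much the prediction moves across each one's range."""
    swings = {}
    for name, (low, high) in ranges.items():
        # Vary one factor; hold all others at their baseline values.
        at_low = stability({**baseline, name: low})
        at_high = stability({**baseline, name: high})
        swings[name] = abs(at_high - at_low)
    return sorted(swings.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for name, swing in one_at_a_time_swings(BASELINE, RANGES):
        print(f"{name}: swing of {swing:.2f}")
```

With these invented numbers, the public health system ranks first even though industry has the larger weight, because its plausible range is wider. Sensitivity depends on both the strength of a relationship and the uncertainty about a variable.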

The most useful communications contain information with high predictive value that is not already known. Telling people what they already know wastes their time and trust. Thus, if clients already know how public perceptions of their leaders’ competence affect public morale, then they just need to be told the state of those perceptions. If they do not understand those processes, then they first need background information on the roles played by public opinion. In psychological jargon, they need help creating a mental model of the processes being described; that is, an intuitive representation, in which they can accommodate new information and from which they can derive predictions (Bartlett, 1932; Gentner and Stevens, 1983; Rouse and Morris, 1986).

FIGURE 10-1 An influence diagram.

NOTE: Simplified version of an influence diagram for predicting the public health consequences of a radioactive dispersion device (RDD). Each of the six nodes summarizes one subset of the analysis in a form that can be used to predict the variables in the next nodes to which it is connected. Any RDD scenario can be represented as an instantiation of each element of the model (e.g., one source term for the amount of material dispersed, one official communication, one degree of public compliance).

SOURCE: Dombroski and Fischbeck (2006). Reprinted with permission from John Wiley and Sons. Original publication available: http://onlinelibrary.wiley.com/journal/10.1111/(ISSN)1539-6924.

Assessing Clients’ Knowledge and Needs

Setting communication priorities requires knowing how clients currently view their choices. What are their goals? What options will they consider? What do they believe about the expected outcomes of choosing different options? How accurate and certain are those beliefs? Given the well-established limits to interpersonal understanding (see this volume’s Arkes and Kajdasz, Chapter 7; Hastie, Chapter 8; and Tinsley, Chapter 9), it would be perilous to assume that clients’ views are accessible to analysts by observation alone.

Scientific approaches to assessing others’ views can be categorized as open-ended, structured, inferred, and indexed methods, in order of roughly decreasing sensitivity to individual differences in those views (e.g., Converse and Presser, 1986; Fischhoff, 2009; Schwarz, 1996; Sudman and Bradburn, 1982). Applying these methods before an analysis allows assessment of clients’ information needs. Applying them after communicating analytical results allows assessment of how well those needs have been met; that is, to what extent clients have learned what they most need to know. The opportunities to apply these methods will depend on the clients, the analysts’ relationship with them, and the resources devoted to communication. Knowing what scientifically sound assessment entails can help analysts to make the best of whatever opportunities they have, and to recognize the barriers to understanding their clients.

Open-Ended Methods

The best way to understand clients (or anyone else) is to work closely and continuously with them, learning their views and receiving feedback on how well one is communicating (and saying things that seem worth knowing). If analysts lack access to the ultimate client, they may still be able to talk with surrogates, such as current staff members or previous holders of the client’s position—recognizing that these surrogates may not understand the client’s view and may not faithfully represent it even if they do (when they have agendas of their own).

Effective consultation must have both enough structure to identify clients’ specific needs and enough freedom to allow clients to express themselves in their own words on topics of their own choosing. A common research procedure for addressing these somewhat conflicting goals is the semi-structured, open-ended interview. It entails asking increasingly specific questions, based on preliminary analysis of the client’s decision (Morgan et al., 2001). The opening section of an interview allows the client to raise any issue on the general topic. The middle section asks the client to elaborate on all those issues. The final section asks about issues that arose in the preliminary decision analysis, but not in the interview. Thus, an interview might gravitate from “What do you see as the main factors in South Asia?” to “How do you characterize the Taliban in Afghanistan?” to “What about [X]?” (where X is a seemingly important local leader who has not been mentioned). Such interviews allow analysts to follow their clients’ lead, without inadvertently missing topics because the discussion drifts in other directions.

Structured Methods

Open-ended interviews demand quality time from busy clients, while allowing them to speak their minds in their own terms. When such interviews are impossible, structured methods might be an alternative. Indeed, there are often standard ways for clients to communicate their perceived needs to the intelligence community (e.g., Requests for Information, Collection Directives). For analysts to produce and communicate useful results, these structured communications must capture the decisions that their clients are facing. Unfortunately, such communications have well-known limits. Their contents are limited to issues that those who use them see as relevant and to terms that users expect to be understood. They offer little opportunity for analysts to request clarifications or to reveal the need for them. Thus, structured methods can leave an illusion of understanding in situations where analysts and clients are talking past one another.

Some misunderstandings have been extensively documented. For example, verbal quantifiers (e.g., “most,” “likely,” “rare”) can mean different things to different people and to the same person in different contexts (Kent, 1964; O’Hagan et al., 2006; Wallsten et al., 1993). People prefer receiving numeric estimates (which are more informative), but prefer giving verbal ones (which are easier to produce) (Erev and Cohen, 1990). From a cognitive perspective, this problem is easily addressed. Probabilities are everyday terms, readily applied to any well-specified event (e.g., “What is the probability that at least 10 percent of voters will support far-right parties?”). Studies find that people typically provide probabilities having good construct validity, in the sense of being systematically related to their other beliefs. Such probabilities still may not have external validity, in the sense of predicting what will happen. However, those errors reflect misinformation, rather than an inability to translate beliefs into numbers (Fischhoff, 2008, 2009).

There is less consensus on how to express the uncertainty surrounding analysts’ knowledge (e.g., how much confidence can be placed in predictions; see Funtowicz and Ravetz, 1990; Politi et al., 2007). Researchers focused on specific domains have found how differently people can use everyday terms such as “inflation” (Ranyard et al., 2008) and “safe sex” (McIntyre and West, 1992) or how they express race and ethnicity (McKenney and Bennett, 1994). If analysts and their clients unwittingly use terms differently, then analyses may not be understood as intended (Fischhoff, 1994).

When limited to structured communications with their clients, analysts might use two procedures familiar to survey researchers (Converse and Presser, 1986). One is the cognitive interview, which asks people like the intended users of a structured instrument to think aloud as they attempt to use it (Ericsson and Simon, 1994). Allowing these test readers to say whatever comes to mind when they use a communication instrument allows them to surprise its designer with interpretations that they never imagined.

The second procedure is the manipulation check, which asks users to report how they interpreted selected items. Without such empirical checks, it is hard to assess a communication channel’s noisiness.

Inferred Methods

Without direct or indirect communication, analysts are left watching their clients, wondering what decisions they are facing now or might be considering in the future. Applied economics has formalized the process of inferring intentions from actions in revealed preference analysis. For example, many studies attempt to discern the importance of various housing features (e.g., size, style, age, school district) from purchase prices (e.g., Earnhart, 2002). A controversial application involves inferring the value of a human life from the wage premium paid for riskier jobs (Viscusi, 1983).

Revealed preference analyses have the attraction of examining actual behavior, rather than verbal expressions, as with open-ended interviews or structured surveys. However, economic research has also demonstrated how difficult it is to isolate the role that each goal and belief plays in a decision. Economists typically simplify their task by assuming that decision makers accurately perceive the expected outcomes of their choices. That allows them to focus on deducing decision-makers’ goals.

Analysts cannot make that simplifying assumption. Rather, they must face the full complexity of inferring what their clients believed and wanted when they made a decision. As a further complication, their clients may make choices that are designed to hide their beliefs and values (unlike home buyers and workers in hazardous industries). Those beliefs and values may change over time, as circumstances change. Decisions that they never made cannot be observed. “Reading the boss’s mind” is a perennial workplace activity. However, it provides weak guidance for communicating with clients.

Indexed Methods

If analysts can neither communicate with nor observe their clients adequately, they may be left thinking about how people like those clients view their decisions. That means asking questions such as “How much do they know about human rights (smuggling, corruption …)?” “How much do they care?” “What are their blind spots?” “Which options are unthinkable for them?” Analysts placed in this position need, in effect, a theory of how people like their clients think.

Some of the science relevant to that understanding appears elsewhere in this volume. For example, organizational research (see this volume’s Hastie, Chapter 8; Tinsley, Chapter 9; Tetlock and Mellers, Chapter 11; and Kozlowski, Chapter 12) can describe clients in terms such as:

- What kinds of people end up in those positions (e.g., by ethnicity, politics, socioeconomic background)?

- What cross-cultural experiences have they had?

- What disciplines have they studied?

- What do they read?

- Whom do they consult?

- Are they rewarded for bold or for conservative decisions?

- Do they find statistical or case-study evidence more persuasive?

- Do they find particular words offensive?

To use such general information, analysts must overcome judgmental challenges such as those presented in chapters in this volume by Spellman (Chapter 6), Arkes and Kajdasz (Chapter 7), and Tinsley (Chapter 9). For example, weak anecdotal evidence about a specific client might overwhelm strong base-rate knowledge about what such people generally do. Decisions dominated by situational constraints might be misattributed to individual preferences. Undue confidence may be placed in small samples. However, reading about the limits to intuitive judgment (e.g., Ariely, 2009; Poulton, 1994; Thaler and Sunstein, 2008) provides no guarantee of being able to overcome them (Milkman et al., 2009).

Communication Strategies

Knowing their clients’ views allows analysts to focus their work, thereby producing the most useful analyses. This knowledge also positions them to report that work effectively, by choosing the best language, examples, and background information. As with assessing clients’ needs, the weaker the connection is between analyst and client, the greater the need is to rely on general behavioral principles when designing communications. Some examples follow, building on the principles of chapters in this volume by Spellman (Chapter 6), Arkes and Kajdasz (Chapter 7), Hastie (Chapter 8), and Tinsley (Chapter 9). (See also National Research Council, 1989, 1996.)

Need-to-Know Communication

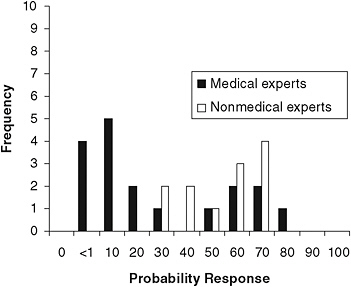

Decision makers often need to know the probability of an event happening. Communicating such predictions requires unambiguous events and numeric probabilities. Figure 10-2 shows one apparent consequence of communicating analytical results imprecisely. It summarizes judgments of “the probability of efficient human-to-human transmission of the H5N1 [avian flu] virus within 3 years.” These judgments were elicited in October 2005 from a group of leading public health experts and a group of similarly accomplished nonmedical experts (associated with technologies that might ameliorate a pandemic). At the time, the news media were saturated with flu-related reporting. However, those reports had few quantitative estimates. In the absence of such estimates, these nonmedical “experts” saw much higher risks than did the medical experts, a miscommunication that was revealed once their beliefs were made explicit. In retrospect, the 15 percent chance seen by the medical experts seems like a reasonable value, whereas the 60 percent chance imputed to them seems alarmist. Thus, poor communication encouraged unduly harsh judgment of these analysts’ work (see McClelland, this volume, Chapter 4).

An event is unambiguous if one could, eventually, tell whether it has occurred. When events are ambiguous, predictions are confusing, even for people comfortable with probabilities. In the late 1970s, the National Weather Service experienced resistance to the probability-of-precipitation forecasts that it had introduced a decade earlier. Studies found that the problem was not with the probabilities, but with the events. Did a “70 percent chance of rain” mean “rain over 70 percent of the period,” “rain over 70 percent of the area,” or “70 percent chance of measurable rain”? The answer is the last one (Joslyn and Nichols, 2009; Murphy et al., 1980).

FIGURE 10-2 Probability judgments for efficient human-to-human transmission of avian flu.

NOTE: Responses to “What is the probability that H5N1 will become an efficient human-to-human transmitter (capable of being propagated through at least two epidemiological generations of humans) sometime during the next 3 years?” Answers were from 19 public health experts (median = 15 percent) and 18 experts in other fields, primarily technology (median = 60 percent).

SOURCE: Bruine de Bruin et al. (2006). Reprinted by permission of the publisher (Taylor and Francis Group, http://www.informaworld.com).

Research has found that some details are particularly hard to communicate (Fischhoff, 2009; Schwarz, 1996). For example, people often overlook quantitative details of an event’s scope (e.g., how broad an area is involved; how often the event will be repeated). One possible solution is highlighting quantitative details (e.g., how long a medical procedure lasts) that might be overshadowed by more tangible qualitative ones (e.g., how much it hurts). A second possible solution is using the most natural scope. The formulation in Figure 10-2 reflected public health experts’ belief that 3 years is the normal planning horizon for pandemic preparations. Box 10-1 shows four other design problems, along with solutions suggested by the research literature (Fischhoff, 2009; Slovic, 2001).

Nice-to-Know Communication

Even when individuals understand the intended meaning of an analytical result, they may lack an intuitive feeling for why it was made and how much to trust it. Without that understanding, they may lack the feeling of self-efficacy needed to rely on it. They would, in effect, be forced to make and defend decisions based on “what my staff [or contractors] tell me, although I can’t really explain why.” They would also lack the substantive knowledge needed to identify new options or tell when circumstances have changed enough to require new analyses.

BOX 10-1 Four Communication Design Problems and Solutions

SOURCE: Drawn from Fischhoff (2009) and Slovic (2001).

Psychology has a long tradition of studying the mental models that individuals use when thinking about how their world works (Johnson-Laird, 1983; Rouse and Morris, 1986). Studies have examined thinking in domains as diverse as reading maps, reasoning syllogistically, following instructions, interpreting personal health signs, predicting physical mechanics, making sense of physiological processes (e.g., the circulatory system), and grasping the interplay of physical, biological, and social forces associated with climate change. These studies all begin with a normative analysis, summarizing the relevant science in something like influence-diagram form. They proceed to descriptive studies capable of revealing intuitive beliefs, including ones very different from the normative standard. The studies then seek prescriptive interventions for bridging the gap between the normative ideal and descriptive reality.

The descriptive studies often reveal heuristics, or rules of thumb, that allow people to approximate solutions to problems for which they lack needed information or skills. Some heuristics apply to broad classes of inferences. A classic source is Pólya’s (1957) How to Solve It, with heuristics such as, “Can you find an analogous problem and solve that?” “Can you draw a picture of the problem?” “Have you used everything that you know about it?” Communications that evoke these ways of thinking might help clients to think like analysts. Thus, it may help to draw analogies, use pictures (e.g., influence diagrams), or invoke otherwise forgotten facts (Larkin and Simon, 1987).

The judgment heuristics described by Spellman (this volume, Chapter 6) and Arkes and Kajdasz (this volume, Chapter 7) might be used similarly, while taking care to avoid the biases they can produce. For example, using the availability heuristic means evaluating the probability of an event by how easily instances come to mind. It can be a useful guide, unless some examples are disproportionately available (e.g., Munich, shark attacks). Analysts might reduce this threat by presenting balanced sets of examples and explicitly noting ones that might be neglected.

Other heuristics are domain specific, like those used to predict how explosives work, gases disperse, traffic moves, or inflation behaves. Communications may fail unless they make sense to people, given these normal ways of thinking (or mental models). For example, when analyses predict human behavior (see this volume’s Kaplan, Chapter 2; Bueno de Mesquita, Chapter 3; McClelland, Chapter 4; and Skinner, Chapter 5), their work will be interpreted in terms of clients’ “folk wisdom” heuristics about human behavior (see this volume’s Spellman, Chapter 6; Arkes and Kajdasz, Chapter 7; and Tinsley, Chapter 9). When those heuristics are accurate, that part of the analytical story need not be explained. For example, if clients share the heuristic that “Everyone has sacred values that they will not compromise,” communications can focus on what is sacred to the specific people being analyzed. If clients lack that heuristic, then it needs to be explained in intuitively plausible terms (e.g., by reference to values that they themselves hold sacred). If clients hold contradictory heuristics, then evoking both may help, so that clients can think about their relative strength. For example, “If we make that gesture, it will be interpreted as a drop in the bucket, rather than as a foot in the door, toward deeper engagement.”

If clients’ common sense is wrong, then better intuitions are needed. For example, typically seeing people in similar circumstances leads to the fundamental attribution error (Arkes and Kajdasz, this volume, Chapter 7), whereby observers exaggerate the power of personality factors, relative to situational pressures, in shaping behavior. One possible antidote is suggesting missing behaviors (e.g., “We’ve never seen him without his advisors. Would he be so brave alone?”). Similarly, analyses that predict orderly public reaction to emergencies may confront the widespread myth of mass panic. It might be undermined by noting (1) that memory can conflate movie scenes with reality, (2) that people running from problems may be acting rationally and cooperatively (as in the 9/11 evacuations), (3) that feeling panicky rarely leads to panic behavior, and (4) that, in emergencies, most people are rescued by “ordinary citizens,” who happen to be there, before professional rescuers arrive to do their brave work trying to save the last few lives (Wessely, 2005). Such explanations replace faulty elements of mental models with sound ones, while building on clients’ existing knowledge.

Extrapolation

The relevance of these results (and others like them) to communicating analytical results in any specific setting depends on how different the real situation is from the conditions that behavioral scientists have studied. Three general aspects of the research merit attention:

- The people involved in the research. To a first approximation, basic decision-making skills are acquired by the mid-teen years and retained through life (Fischhoff, 2008; Reyna and Farley, 2006). Thus, how people think in behavioral studies should not be that different from how people think in other settings. However, most behavioral research involves individuals without subject matter expertise. As a result, conclusions based on what people think must be generalized cautiously.

- The prevalence of the effects. Like other sciences, the behavioral sciences often create conditions that exaggerate effects, so as to observe their operation more closely. As a result, effects can be larger in the lab than in life. Like other sciences, the behavioral sciences often focus on problems. As a result, people can look worse in the lab than in life. The scientific reason for focusing on problems is that there are often many explanations for good performance (e.g., instruction, inference, trial and error), but few explanations that fit a pattern of errors.

- The soundness of the normative analyses. The rhetoric of behavioral research tends to emphasize problems. However, claims of bias are not always supported by normative analyses of what constitutes sound decision making (see this volume’s Kaplan, Chapter 2; Bueno de Mesquita, Chapter 3; McClelland, Chapter 4; and Skinner, Chapter 5). For example, people respond differently to reports of absolute and relative risk (see Box 10-1). Although this difference is sometimes identified as a bias, there is no necessary connection between the two judgments: People who only hear relative risk estimates must guess at the associated absolute risks. If they guess wrong, then they should see the risks differently than do people who know the correct values.
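The point about relative and absolute risk can be made concrete with a little arithmetic. The sketch below is illustrative only: the function name and the baseline values are hypothetical, chosen to show how the same report ("the risk doubles") implies very different absolute changes depending on the baseline that a listener assumes.

```python
def absolute_increase(baseline_risk: float, relative_risk: float) -> float:
    """Absolute risk increase implied by a relative risk at a given baseline."""
    if not 0.0 <= baseline_risk <= 1.0:
        raise ValueError("baseline_risk must be a probability between 0 and 1")
    exposed_risk = baseline_risk * relative_risk
    if exposed_risk > 1.0:
        raise ValueError("implied risk exceeds 1; inputs are inconsistent")
    return exposed_risk - baseline_risk


# Hypothetical baselines that different listeners might guess when told
# only that "the risk doubles" (relative risk = 2.0).
guessed_baselines = [0.0001, 0.01, 0.10]
increases = {b: absolute_increase(b, 2.0) for b in guessed_baselines}

for baseline, increase in increases.items():
    print(f"guessed baseline {baseline:.4f} -> absolute increase {increase:.4f}")
```

Under these assumed numbers, listeners who guess different baselines reach absolute increases that differ by three orders of magnitude, without any of them reasoning incorrectly from what they were told.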

Extrapolating results from research settings to other ones requires understanding both. The chapters of this volume seek to make behavioral and social research accessible to those knowledgeable about the world of analysis. Fully examining these connections requires collaborations between people with expertise in both domains.

Measuring Communication Success

The success of communications is an empirical question. Without evidence, one must guess whether clients have absorbed what they need. When direct assessment is possible, measures include (1) clients’ memory of what has been reported; (2) manipulation checks of whether information was interpreted as intended; (3) active mastery of the content, allowing clients to make valid inferences from it; (4) predictive ability, enhanced by the analysis; (5) calibration of confidence judgments in the analysis, expressing recognition of its limits; and (6) coherence, showing internal consistency of beliefs (see Fischhoff, 2009, for details and references). When direct assessment is impossible, analysts are left guessing how well they have achieved these goals.
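As one illustration of measure (5), calibration can be assessed by comparing stated confidence with the proportion of judgments that prove correct. The following is a minimal sketch, assuming that each judgment is recorded as a (stated confidence, correct or not) pair; the function names and the sample data are hypothetical and not taken from any study cited here.

```python
from collections import defaultdict


def calibration_table(judgments):
    """Group (stated_confidence, was_correct) pairs by confidence level.

    Returns {confidence: (number_of_judgments, proportion_correct)}.
    """
    groups = defaultdict(list)
    for confidence, correct in judgments:
        groups[confidence].append(1 if correct else 0)
    return {c: (len(v), sum(v) / len(v)) for c, v in sorted(groups.items())}


def mean_overconfidence(judgments):
    """Mean stated confidence minus overall proportion correct.

    Positive values indicate overconfidence; negative, underconfidence.
    """
    n = len(judgments)
    mean_confidence = sum(c for c, _ in judgments) / n
    hit_rate = sum(1 for _, ok in judgments if ok) / n
    return mean_confidence - hit_rate


# Hypothetical judgments: (stated confidence, whether the judgment was correct).
sample = [(0.9, True), (0.9, True), (0.9, False), (0.9, False),
          (0.6, True), (0.6, False), (0.6, True), (0.6, False)]

for confidence, (count, proportion) in calibration_table(sample).items():
    print(f"stated {confidence:.1f}: {count} judgments, {proportion:.2f} correct")
print(f"mean overconfidence: {mean_overconfidence(sample):+.2f}")
```

A well-calibrated client would be correct about 90 percent of the time when 90 percent confident. In this made-up sample, both confidence levels show the same hit rate, which would suggest that stated confidence carries little information.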

ANALYST–ANALYST COMMUNICATIONS

To meet client needs, analysts must predict the outcomes that those clients value. Typically they must assemble knowledge distributed among diverse individuals, some with established working relations, some without. These analyst–analyst communications face challenges analogous to those of analyst–client communications, with analogous opportunities to bridge the gaps. These are covered in the next two sections, which draw on Fischhoff et al. (2006), Morgan et al. (2001), Morgan and Henrion (1990), and O’Hagan et al. (2006). The chapters in this volume by Tetlock and Mellers (Chapter 11), Kozlowski (Chapter 12), and Zegart (Chapter 13) discuss institutional arrangements needed to allow such collaboration.

Task Analysis of Challenges in Analytical Collaboration

Analyses take varied forms, ranging from fully computational to purely narrative (see this volume’s Kaplan, Chapter 2; Bueno de Mesquita, Chapter 3; McClelland, Chapter 4; and Skinner, Chapter 5). Whatever methods an analytical team uses, it faces common communication challenges. In influence diagram terms, a fully computational model is represented by the variables and relationships in a diagram, while a fully specified narrative analysis describes a path through a diagram, instantiating its variables and relationships.

Assessing the variables in an analysis requires eliciting experts’ beliefs with the precision required of probability judgments (see Figure 10-2). Without that precision, other analysts cannot know what the experts believe about, say, a regime’s stability, an army’s readiness, or a leader’s health. When experts disagree, other analysts need to know the distribution of their answers (and not just a verbal summary of their degree of consensus). Experts in one domain also need to know the assumptions made by experts in other ones. For example, in Figure 10-1, experts in official responses (node 3) need some assumptions about the kinds of attack (node 1) and dispersion (node 2) scenarios they must consider. Dispersion modelers need some assumptions about the kinds of input that officials need. Without good communication, their work may not connect.

Specifying the relationships in an analysis requires similar clarity. Analysts need to know the functional form of each dependency. For example, do experts believe that deterrence increases monotonically with armament levels or does it only “kick in” after they pass some threshold? Do experts believe that a single act of humiliation can radicalize a young person or that radicalization reflects a cumulative effect? Here, too, analysts need to know the degree of consensus in the fields on which they depend.
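The difference between the two functional forms in the deterrence example can be written down explicitly. The sketch below is purely illustrative: the scales, slope, threshold, and level are hypothetical placeholders, not estimates from any analysis.

```python
def monotonic_deterrence(armament: float, slope: float = 0.1) -> float:
    """Deterrence rises steadily with armament, capped between 0 and 1."""
    return min(1.0, max(0.0, slope * armament))


def threshold_deterrence(armament: float, threshold: float = 5.0,
                         level: float = 0.8) -> float:
    """Deterrence is absent below a threshold and 'kicks in' at or above it."""
    return level if armament >= threshold else 0.0


# Compare the two hypothetical forms across a range of armament levels.
for armament in [0.0, 3.0, 4.9, 5.0, 8.0]:
    print(f"armament {armament:>4}: "
          f"monotonic {monotonic_deterrence(armament):.2f}, "
          f"threshold {threshold_deterrence(armament):.2f}")
```

Just below the assumed threshold, the two forms give sharply different answers, which is why a collaborating analyst needs to know which form an expert has in mind rather than only the direction of the relationship.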

Clear communication about uncertainties and disagreements is essential to determining how much they matter. Even fields that are full of disputes and uncertainties may still have all the precision that clients need. For example, Morgan and Keith (1995) conducted extensive interviews with climate scientists, representing the range of expert opinions, and found that nearly all their experts saw a significant chance of major warming, seemingly enough to convince any policy maker that a large gamble is being taken with the environment. Seemingly different theories can make similar predictions when they add up related factors (Dawes et al., 1989).

Communication between analysts may have special value when it allows triangulating the perspectives produced by using narrative and computational methods. Computational models create scenarios from individually plausible links, then assess their overall probability. However, in the life of a person (or political movement), individual links can be plausible, yet add up to an implausible story. For example, someone who perceives deep personal humiliation may be more likely to accept radical ideologies, while someone who accepts radical ideologies may be more likely to be recruited to commit violent acts. However, deep personal humiliation may leave psychological scars that forestall such action (Ginges and Atran, 2008).
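The arithmetic behind "individually plausible links" adding up to an implausible story can be sketched as follows. The probabilities are hypothetical. The calculation assumes that each link's probability is conditional on the links before it, so a link judged in isolation may need to be revised once its predecessors are taken into account.

```python
import math


def scenario_probability(link_probabilities):
    """Probability of a whole scenario as the product of its links.

    Each value is assumed to be the probability of that link given that
    all earlier links in the scenario have occurred.
    """
    return math.prod(link_probabilities)


# Five links, each judged fairly plausible on its own (hypothetical values).
plausible_links = [0.8, 0.8, 0.8, 0.8, 0.8]
print(f"five links at 0.80 each: {scenario_probability(plausible_links):.3f}")

# Two links judged in isolation, then with the second link revised downward
# because the first link is thought to make it less likely (hypothetical).
judged_in_isolation = [0.7, 0.6]
judged_conditionally = [0.7, 0.2]
print(f"judged in isolation:  {scenario_probability(judged_in_isolation):.2f}")
print(f"judged conditionally: {scenario_probability(judged_conditionally):.2f}")
```

A narrative analyst's knowledge of how earlier events change later ones is what would supply the revised conditional value in the last case; the computation itself cannot.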

Examining the coherence of a sequence of links is at the heart of narrative analysis. Conversely, ensuring the completeness and precision of an account is at the heart of quantitative analysis. At times, practitioners of the two approaches mistrust one another’s work. Narrative analysts fear undue pressure for quantification, which can happen when easily quantified factors (e.g., estimates of materiel) are privileged over more qualitative ones (e.g., political sentiment). Quantitative analysts fear undue opacity in narratives, which can happen when good writing takes precedence over clear thinking. A possible compromise is requiring narratives to be clear enough to allow creating models that could be computed, were the data available. Conversely, quantitative models should not be constrained by data concerns when identifying potentially relevant predictors. Fischhoff and colleagues (2006) use the term “computable” to describe models that are designed to facilitate such communication between qualitative and quantitative analysts.

Communication Methods

Methods for analyst–analyst communication parallel those for analyst–client communication, with similar strengths, weaknesses, and supporting science.

Open-ended methods involve direct interactions among analysts, designed to allow disagreements about variables, relationships, and terms to emerge. Jointly creating influence diagrams is one such method. Electronic media offer new possibilities for understanding (e.g., collaborative workspaces) and misunderstanding (e.g., flaming, Wiki wars).

Structured methods elicit experts’ judgments (as in Figure 10-2), allowing other analysts to consult the summaries. Omitting direct communication among analysts increases the importance of asking precise questions and eliciting precise answers.

Inferred methods involve reading other experts’ analyses, hoping to grasp their reasoning. Narrative analyses can be misread, when the ability to read their lines suggests the ability to read between their lines. Quantitative analyses can be misread, when their lack of transparency leads them to be taken on faith or rejected outright.

Indexed methods summarize a field’s typical results, in stylized form, for others’ use. In order to represent a field responsibly, they must also reveal its controversies and uncertainties. The Cochrane Collaboration offers valuable procedures for that process on its website.1

Chapters in this volume by Spellman (Chapter 6), Arkes and Kajdasz (Chapter 7), Hastie (Chapter 8), and Tinsley (Chapter 9) describe some of the perils to understanding, even among individuals with shared goals. Sound analyst–analyst communication increases the chances of analysts having something valuable to communicate to clients.

MANAGING FOR COMMUNICATION SUCCESS

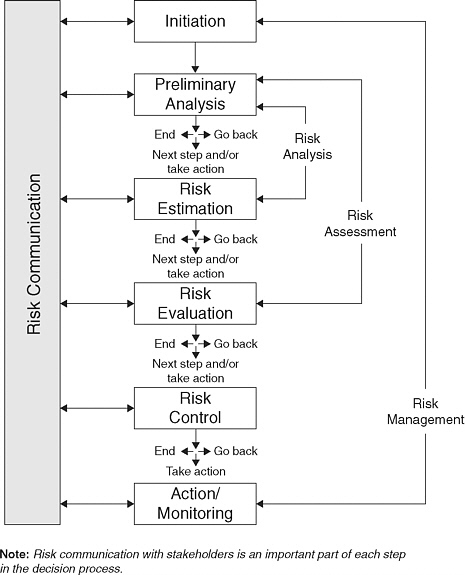

As with other vital organizational functions, effective communications require proper people and processes (see this volume’s Tetlock and Mellers, Chapter 11, and Kozlowski, Chapter 12). Figure 10-3 shows a model for integrating communication with analysis and action (National Research Council, 1996, described a process that is similar in spirit). The central column in the picture depicts a fairly conventional analytical process, beginning with problem formulation and proceeding to action. (It has noteworthy reality checks between stages, asking whether the work bears continuing.)

The left-hand bar requires ongoing two-way communication between clients and analysts. In the early stages, analysts are in the center column, learning what their clients need to know, while preparing them with nice-to-know background information. In the later stages, analysts are in the left column, communicating their analyses and listening for future analytical needs, as they observe their clients taking action.

1 See http://www.cochrane.org [accessed October 2010].

FIGURE 10-3 A model for integrating analysis and communication.

NOTE: The center column shows the stages in a conventional, analytically driven decision-making process (here, expressed in terms of risks). The left-hand bar shows the needed strategic commitment to two-way communication at all stages of the process.

SOURCE: Canadian Standards Association (1997). Reproduced with the permission of Canadian Standards Association from CAN/CSA-Q850-97 (R2009), Risk Management: Guideline for Decision Makers, which is copyrighted by CSA, 5060 Spectrum Way, Mississauga, ON, L4W 5N6 Canada. While use of this material has been authorized, CSA shall not be responsible for the manner in which the information is presented, nor for any interpretations thereof. For more information on CSA or to purchase standards, please visit our website at http://www.shopcsa.ca or call 1-800-463-6727.

Management must create these channels to make communication possible. It must also staff them properly. To implement the methods described here, analytical teams need subject matter expertise (regarding potentially relevant facts), methodological expertise (for identifying and synthesizing those facts), and communication expertise (for designing and evaluating communications).

Although having subject matter expertise is natural for analytical organizations, having the other two kinds may not be. Without them, it is not possible to take full advantage of the behavioral, social, and decision science research available to the intelligence community. Given the diversity of that research, such expertise might be provided through a central unit available to all analytical teams, with matrix management to ensure that the unit’s members are integrated with analytical teams. An alternative management approach is to secure the needed behavioral expertise through external contracts. For such outsourcing to succeed, an analytical organization needs the internal expertise to evaluate service providers. Choosing between internal and external expertise requires balancing the short-term benefits of improving specific analyses against the long-term benefits of enhancing an organization’s own human capital.

CONCLUSION

Effective communication is essential to effective analysis. It is needed to identify the questions that clients need to have answered, to coordinate the expertise relevant to providing those answers, and to ensure that analytical results are understood as intended. A scientific approach to communication entails both analytical and empirical research. The former involves decision analysis of information needs and task analysis of information flows. The latter involves assessment of beliefs before and after receiving analytical results.

The execution of that research can draw on behavioral and decision science theory and method. Decision science provides methods for identifying the information that needs to be known, by clients with well-formulated decisions, and the information that would be nice to know, for clients hoping to create decision options, monitor changes in their environment, or understand what information they need to know. Behavioral science provides guidance on obstacles to understanding analytical results and on ways to overcome them, along with organizational guidance on securing and deploying the needed human resources.

Communication is sometimes seen as a tactical step, transmitting results to clients. However, unless communication also plays a strategic role, those analyses may be off target and incompletely used. If an analytical organization makes a strategic commitment to communication, behavioral science can help with its execution, overcoming some of the flawed intuitions that can lead people to exaggerate how well they understand one another.

REFERENCES

Ariely, D. 2009. Predictably irrational. New York: Harper.

Bartlett, F. C. 1932. Remembering. Cambridge, UK: Cambridge University Press.

Bruine de Bruin, W., B. Fischhoff, L. Brilliant, and D. Caruso. 2006. Expert judgments of pandemic influenza. Global Public Health 1(2):178–193.

Canadian Standards Association. 1997. Risk management: Guideline for decision makers (reaffirmed 2002). Etobicoke, Ontario, Canada: Canadian Standards Association.

Clemen, R. T., and R. Reilly. 2002. Making hard decisions: An introduction to decision analysis. Belmont, CA: Duxbury.

Converse, J. M., and S. Presser. 1986. Survey questions: Handcrafting the standardized questionnaire. Thousand Oaks, CA: Sage Publications.

Dawes, R. M. 1979. The robust beauty of improper linear models in decision making. American Psychologist 34:571–582.

Dawes, R. M., D. Faust, and P. E. Meehl. 1989. Clinical vs. actuarial judgment. Science 243:1668–1674.

Dombroski, M. J., and P. S. Fischbeck. 2006. An integrated physical dispersion and behavioral response model for risk assessment of radiological dispersion device (RDD) events. Risk Analysis 26:501–514.

Earnhart, D. 2002. Combining revealed and stated data to examine housing decisions using discrete choice analysis. Journal of Urban Economics 51:143–169.

Erev, I., and B. L. Cohen. 1990. Verbal versus numerical probabilities: Efficiency, biases, and the preference paradox. Organizational Behavior and Human Decision Processes 45:1–18.

Ericsson, A., and H. A. Simon. 1994. Verbal reports as data. Cambridge, MA: MIT Press.

Fischhoff, B. 1994. What forecasts (seem to) mean. International Journal of Forecasting 10:387–403.

Fischhoff, B. 2008. Assessing adolescent decision-making competence. Developmental Review 28:12–28.

Fischhoff, B. 2009. Risk perception and communication. In R. Detels, R. Beaglehole, M. A. Lansang, and M. Gulliford, eds., Oxford textbook of public health, 5th ed. (pp. 940–952). Oxford, UK: Oxford University Press.

Fischhoff, B., W. Bruine de Bruin, U. Guvenc, D. Caruso, and L. Brilliant. 2006. Analyzing disaster risks and plans: An avian flu example. Journal of Risk and Uncertainty 33:133–151.

Funtowicz, S., and J. Ravetz. 1990. Uncertainty and quality in science for policy. Dordrecht, The Netherlands: Kluwer.

Gentner, D., and A. L. Stevens, eds. 1983. Mental models. Hillsdale, NJ: Erlbaum.

Ginges, J., and S. Atran. 2008. Humiliation and the inertia effect. Journal of Cognition and Culture 8:281–294.

Grice, P. 1975. Logic and conversation. In P. Cole and J. L. Morgan, eds., Syntax and semantics: Speech acts. Vol. 3 (pp. 133–168). New York: Guilford Press.

Hastie, R., and R. M. Dawes. 2001. Rational choice in an uncertain world, 2nd ed. Thousand Oaks, CA: Sage.

Horvitz, E., ed. 2005. Special issue on graph-based representation. Decision Analysis 2(4): 183–244.

Howard, R. A. 1989. Knowledge maps. Management Science 35:903–922.

Johnson-Laird, P. N. 1983. Mental models. New York: Cambridge University Press.

Joslyn, S. L., and R. M. Nichols. 2009. Probability or frequency? Expressing forecast uncertainty in public weather forecasts. Meteorological Applications 90:185–219.

Kent, S. 1964. Words of estimative probability. Available: https://www.cia.gov/library/center-for-the-study-of-intelligence/csi-publications/books-and-monographs/sherman-kent-and-the-board-of-national-estimates-collected-essays/6words.html [accessed May 2010].

Larkin, J., and H. A. Simon. 1987. Why a diagram is (sometimes) worth ten thousand words. Cognitive Science 11(1):65–99.

McIntyre, S., and P. West. 1992. What does the phrase “safer sex” mean to you? Understanding among Glaswegian 18 year olds in 1990. AIDS 7:121–126.

McKenney, N. R., and C. E. Bennett. 1994. Issues regarding data on race and ethnicity: The Census Bureau experience. Public Health Reports 109:16–25.

Milkman, K. L., D. Chugh, and M. H. Bazerman. 2009. How can decision making be improved? Perspectives on Psychological Science 4:379–383.

Morgan, M. G., and M. Henrion. 1990. Uncertainty. New York: Cambridge University Press.

Morgan, M. G., and D. W. Keith. 1995. Subjective judgments by climate experts. Environmental Science and Technology 29:468A–476A.

Morgan, M. G., B. Fischhoff, A. Bostrom, and C. Atman. 2001. Risk communication: The mental models approach. New York: Cambridge University Press.

Murphy, A. H., S. Lichtenstein, B. Fischhoff, and R. L. Winkler. 1980. Misinterpretations of precipitation probability forecasts. Bulletin of the American Meteorological Society 61:695–701.

National Research Council. 1989. Improving risk communication. Committee on Risk Perception and Communication, Commission on Physical Sciences. Commission on Behavioral and Social Sciences and Education. Washington, DC: National Academy Press.

National Research Council. 1996. Understanding risk: Informing decisions in a democratic society. P. C. Stern and H. V. Fineberg, eds. Committee on Risk Characterization. Commission on Behavioral and Social Sciences and Education. Washington, DC: National Academy Press.

O’Hagan, A., C. E. Buck, A. Daneshkhah, J. R. Eiser, P. H. Garthwaite, D. J. Jenkinson, J. E. Oakley, and T. Rakow. 2006. Uncertain judgments: Eliciting expert probabilities. Chichester, UK: Wiley.

Politi, M. C., P. K. J. Han, and N. Col. 2007. Communicating the uncertainty of harms and benefits of medical procedures. Medical Decision Making 27:681–695.

Pólya, G. 1957. How to solve it, 2nd ed. Princeton, NJ: Princeton University Press.

Poulton, E. C. 1994. Behavioral decision making. Hillsdale, NJ: Erlbaum.

Raiffa, H. 1968. Decision analysis. Reading, MA: Addison-Wesley.

Ranyard, R., F. Del Missier, N. Bonini, D. Duxbury, and B. Summers. 2008. Perceptions and expectations of price changes and inflation: A review and conceptual framework. Journal of Economic Psychology 29:378–400.

Reyna, V., and F. Farley. 2006. Risk and rationality in adolescent decision making: Implications for theory, practice, and public policy. Psychological Science in the Public Interest 7(1):1–44.

Rouse, W. B., and N. M. Morris. 1986. On looking into the black box: Prospects and limits in the search for mental models. Psychological Bulletin 110:349–363.

Schwarz, N. 1996. Cognition and communication: Judgmental biases, research methods, and the logic of conversation. Hillsdale, NJ: Erlbaum.

Schwarz, N. 1999. Self reports. American Psychologist 54:93–105.

Slovic, P., ed. 2001. The perception of risk. London, UK: Earthscan.

Sudman, S., and N. Bradburn. 1982. Asking questions: A practical guide to questionnaire design. San Francisco, CA: Jossey-Bass.

Thaler, R. H., and C. R. Sunstein. 2008. Nudge: Improving decisions about health, wealth, and happiness. New Haven, CT: Yale University Press.

Viscusi, W. K. 1983. Risk by choice: Regulating health and safety in the workplace. Cambridge, MA: Harvard University Press.

vonWinterfeldt, D., and W. Edwards. 1986. Decision analysis and behavioral research. New York: Cambridge University Press.

Wallsten, T. S., D. V. Budescu, and R. Zwick. 1993. Comparing the calibration and coherence of numerical and verbal probability judgments. Management Science 39:176–190.

Wessely, S. 2005. Don’t panic! Journal of Mental Health 14(1):1–6.

Woloshin, S., L. M. Schwartz, and H. G. Welch. 2008. Know your chances: Understanding health statistics. Berkeley: University of California Press.