Reference Guide on DNA Identification Evidence

David H. Kaye, M.A., J.D., is Distinguished Professor of Law, Weiss Family Scholar, and Graduate Faculty Member, Forensic Science Program, The Pennsylvania State University, University Park, and Regents’ Professor Emeritus, Arizona State University Sandra Day O’Connor College of Law and School of Life Sciences, Tempe.

George Sensabaugh, D.Crim., is Professor of Biomedical and Forensic Sciences, School of Public Health, University of California, Berkeley.

CONTENTS

B. A Brief History of DNA Evidence

II. Variation in Human DNA and Its Detection

A. What Are DNA, Chromosomes, and Genes?

B. What Are DNA Polymorphisms and How Are They Detected?

2. Sequence-specific probes and SNP chips

C. How Is DNA Extracted and Amplified?

D. How Is STR Profiling Done with Capillary Electrophoresis?

E. What Can Be Done to Validate a Genetic System for Identification?

F. What New Technologies Might Emerge?

1. Miniaturized “lab-on-a-chip” devices

4. What questions do the new technologies raise?

III. Sample Collection and Laboratory Performance

A. Sample Collection, Preservation, and Contamination

1. What forms of quality control and assurance should be followed?

2. How should samples be handled?

IV. Inference, Statistics, and Population Genetics in Human Nuclear DNA Testing

A. What Constitutes a Match or an Exclusion?

B. What Hypotheses Can Be Formulated About the Source?

C. Can the Match Be Attributed to Laboratory Error?

D. Could a Close Relative Be the Source?

E. Could an Unrelated Person Be the Source?

1. Estimating allele frequencies from samples

2. The product rule for a randomly mating population

3. The product rule for a structured population

F. Probabilities, Probative Value, and Prejudice

1. Frequencies and match probabilities

G. Verbal Expressions of Probative Value

1. “Rarity” or “strength” testimony

2. Source or uniqueness testimony

V. Special Issues in Human DNA Testing

D. Offender and Suspect Database Searches

2. Near-miss (familial) searching

3. All-pairs matching within a database to verify estimated random-match probabilities

Deoxyribonucleic acid, or DNA, is a molecule that encodes the genetic information in all living organisms. Its chemical structure was elucidated in 1954. More than 30 years later, samples of human DNA began to be used in the criminal justice system, primarily in cases of rape or murder. The evidence has been the subject of extensive scrutiny by lawyers, judges, and the scientific community. It is now admissible in all jurisdictions, but there are many types of forensic DNA analysis, and still more are being developed. Questions of admissibility arise as advancing methods of analysis and novel applications of established methods are introduced.1

This reference guide addresses technical issues that are important when considering the admissibility of and weight to be accorded analyses of DNA, and it identifies legal issues whose resolution requires scientific information. The goal is to present the essential background information and to provide a framework for resolving the possible disagreements among scientists or technicians who testify about the results and import of forensic DNA comparisons.

Section I provides a short history of DNA evidence and outlines the types of scientific expertise that go into the analysis of DNA samples.

Section II provides an overview of the scientific principles behind DNA typing. It describes the structure of DNA and how this molecule differs from person to person. These are basic facts of molecular biology. The section also defines the more important scientific terms and explains at a general level how DNA differences are detected. These are matters of analytical chemistry and laboratory procedure. Finally, the section indicates how it is shown that these differences permit individuals to be identified. This is accomplished with the methods of probability and statistics.

Section III considers issues of sample quantity and quality as well as laboratory performance. It outlines the types of information that a laboratory should produce to establish that it can analyze DNA reliably and that it has adhered to established laboratory protocols.

Section IV examines issues in the interpretation of laboratory results. To assist the courts in understanding the extent to which the results incriminate the defendant, it enumerates the hypotheses that need to be considered before concluding that the defendant is the source of the crime scene samples, and it explores the

1. For a discussion of other forensic identification techniques, see Paul C. Giannelli et al., Reference Guide on Forensic Identification Expertise, in this manual. See also David H. Kaye et al., The New Wigmore, A Treatise on Evidence: Expert Evidence (2d ed. 2011).

issues that arise in judging the strength of the evidence. It focuses on questions of statistics, probability, and population genetics.2

Section V describes special issues in human DNA testing for identification. These include the detection and interpretation of mixtures, Y-STR testing, mitochondrial DNA testing, and the evidentiary implications of DNA database searches of various kinds.

Finally, Section VI discusses the forensic analysis of nonhuman DNA. It identifies questions that can be useful in judging whether a new method or application of DNA science has the scientific merit and power claimed by the proponent of the evidence.

A glossary defines selected terms and acronyms encountered in genetics, molecular biology, and forensic DNA work.

B. A Brief History of DNA Evidence

“DNA evidence” refers to the results of chemical or physical tests that directly reveal differences in the structure of the DNA molecules found in organisms as diverse as bacteria, plants, and animals.3 The technology for establishing the identity of individuals became available to law enforcement agencies in the mid to late 1980s.4 The judicial reception of DNA evidence can be divided into at least five phases.5 The first phase was one of rapid acceptance. Initial praise for RFLP (restriction fragment length polymorphism) testing in homicide, rape, paternity, and other cases was effusive. Indeed, one judge proclaimed “DNA fingerprinting” to be “the single greatest advance in the ‘search for truth’…since the advent of cross-examination.”6 In this first wave of cases, expert testimony for the prosecution rarely was countered, and courts readily admitted DNA evidence.

In a second wave of cases, however, defendants pointed to problems at two levels—controlling the experimental conditions of the analysis and interpreting the results. Some scientists questioned certain features of the procedures for extracting and analyzing DNA employed in forensic laboratories, and it became apparent

2. For a broader discussion of statistics, see David H. Kaye & David A. Freedman, Reference Guide on Statistics, in this manual.

3. Differences in DNA also can be revealed by differences in the proteins that are made according to the “instructions” in a DNA molecule. Blood group factors, serum enzymes and proteins, and tissue types all reveal information about the DNA that codes for these chemical structures. Such immunogenetic testing predates the “direct” DNA testing that is the subject of this chapter. On the nature and admissibility of the “indirect” DNA testing, see, for example, David H. Kaye, The Double Helix and the Law of Evidence 5–19 (2010); 1 McCormick on Evidence § 205(B) (Kenneth Broun ed., 6th ed. 2006).

4. The first reported appellate opinion is Andrews v. State, 533 So. 2d 841 (Fla. Dist. Ct. App. 1988).

5. The description that follows is adapted from 1 McCormick on Evidence, supra note 3, § 205(B).

6. People v. Wesley, 533 N.Y.S.2d 643, 644 (Alb. County. Ct. 1988).

that declaring matches or nonmatches in the DNA variations being compared was not always trivial. Despite these concerns, most cases continued to find the DNA analyses to be generally accepted, and a number of states provided for admissibility of DNA tests by legislation. Concerted attacks by defense experts of impressive credentials, however, produced a few cases rejecting specific proffers on the ground that the testing was not sufficiently rigorous.7

A different attack on DNA profiling begun in cases during this period proved far more successful and led to a third wave of cases in which many courts held that estimates of the probability of a coincidentally matching DNA profile were inadmissible. These estimates relied on a simple population genetics model for the frequencies of DNA profiles, and some prominent scientists claimed that the applicability of the mathematical model had not been adequately verified. A heated debate on this point spilled over from courthouses to scientific journals and convinced the supreme courts of several states that general acceptance was lacking. A 1992 report of the National Academy of Sciences proposed a more “conservative” computational method as a compromise,8 and this seemed to undermine the claim of scientific acceptance of the less conservative procedure that was in general use.

In response to the population genetics criticism and the 1992 report came an outpouring of critiques of the report and new studies of the distribution of the DNA variations in many populations. Relying on the burgeoning literature, a second National Academy panel concluded in 1996 that the usual method of estimating frequencies in broad racial groups generally was sound, and it proposed improvements and additional procedures for estimating frequencies in subgroups within the major population groups.9 In the corresponding fourth phase of judicial scrutiny of DNA evidence, the courts almost invariably returned to the earlier view that the statistics associated with DNA profiling are generally accepted and scientifically valid.

In the fifth phase of the judicial evaluation of DNA evidence, results obtained with the newer “PCR-based methods” entered the courtroom. Once again, courts considered whether the methods rested on a solid scientific foundation and were generally accepted in the scientific community. The opinions are practically unanimous in holding that the PCR-based procedures satisfy these standards. Before long, forensic scientists settled on the use of one type of DNA variation (known as short tandem repeats, or STRs) to include or exclude individuals as the source of crime scene DNA.

7. Moreover, a minority of courts, perhaps concerned that DNA evidence might be conclusive in the minds of jurors, added a “third prong” to the general-acceptance standard of Frye v. United States, 293 F. 1013 (D.C. Cir. 1923). This augmented Frye test requires not only proof of the general acceptance of the ability of science to produce the type of results offered in court, but also of the proper application of an approved method on the particular occasion. For criticism of this approach, see David H. Kaye et al., supra note 1, § 6.3.3(a)(2).

8. National Research Council, DNA Technology in Forensic Science (1992) [hereinafter NRC I].

9. National Research Council, The Evaluation of Forensic DNA Evidence (1996) [hereinafter NRC II].

Throughout these phases, DNA tests also exonerated an increasing number of men who had been convicted of capital and other crimes, posing a challenge to traditional postconviction remedies and raising difficult questions of postconviction access to DNA samples.10 The value of DNA evidence in solving older crimes also prompted extensions of some statutes of limitations.11

In sum, in little more than a decade, forensic DNA typing made the transition from a novel set of methods for identification to a relatively mature and well-studied forensic technology. However, one should not lump all forms of DNA identification together. New techniques and applications continue to emerge, ranging from the use of new genetic systems and new analytical procedures to the typing of DNA from plants and animals. Before admitting such evidence, courts normally inquire into the biological principles and knowledge that would justify inferences from these new technologies or applications. As a result, this guide describes not only the predominant STR technology, but also newer analytical techniques that can be used for forensic DNA identification.

Human DNA identification can involve testimony about laboratory findings, about the statistical interpretation of those findings, and about the underlying principles of molecular biology. Consequently, expertise in several fields might be required to establish the admissibility of the evidence or to explain it adequately to the jury. The expert who is qualified to testify about laboratory techniques might not be qualified to testify about molecular biology, to make estimates of population frequencies, or to establish that an estimation procedure is valid.12

10. See, e.g., Osborne v. District Attorney’s Office for Third Judicial District, 129 S. Ct. 2308 (2009) (narrowly rejecting a convicted offender’s claim of a due process right to DNA testing at his expense, enforceable under 42 U.S.C. § 1983, to establish that he is probably innocent of the crime for which he was convicted after a fair trial, when (1) the convicted offender did not seek extensive DNA testing before trial even though it was available, (2) he had other opportunities to prove his innocence after a final conviction based on substantial evidence against him, (3) he had no new evidence of innocence (only the hope that more extensive DNA testing than that done before the trial would exonerate him), and (4) even a finding that he was not source of the DNA would not conclusively demonstrate his innocence); Skinner v. Switzer, 131 S. Ct. 1289 (2011); Brandon L. Garrett, Judging Innocence, 108 Colum. L. Rev. 55 (2008); Brandon L. Garrett, Claiming Innocence, 92 Minn. L. Rev. 1629 (2008).

11. See, e.g., Veronica Valdivieso, DNA Warrants: A Panacea for Old, Cold Rape Cases? 90 Geo. L.J. 1009 (2002).

12. Nonetheless, if previous cases establish that the testing and estimation procedures are legally acceptable, and if the computations are essentially mechanical, then highly specialized statistical expertise might not be essential. Reasonable estimates of DNA characteristics in major population groups can be obtained from standard references, and many quantitatively literate experts could use the appropriate formulae to compute the relevant profile frequencies or probabilities. NRC II, supra note 9, at 170. Limitations in the knowledge of a technician who applies a generally accepted statistical procedure can be explored on cross-examination. See Kaye et al., supra note 1, § 2.2. Accord Roberson v. State, 16 S.W.3d 156, 168 (Tex. Crim. App. 2000).

Trial judges ordinarily are accorded great discretion in evaluating the qualifications of a proposed expert witness, and the decisions depend on the background of each witness. Courts have noted the lack of familiarity of academic experts—who have done respected work in other fields—with the scientific literature on forensic DNA typing and on the extent to which their research or teaching lies in other areas.13 Although such concerns may affect the persuasiveness of particular testimony, they rarely result in exclusion on the grounds that the witness simply is not qualified as an expert.

The scientific and legal literature on the objections to DNA evidence is extensive. By studying the scientific publications, or perhaps by appointing a special master or expert adviser to assimilate this material, a court can ascertain where a party’s expert falls within the spectrum of scientific opinion. Furthermore, an expert appointed by the court under Federal Rule of Evidence 706 could testify about the scientific literature generally or even about the strengths or weaknesses of the particular arguments advanced by the parties.

Given the great diversity of forensic questions to which DNA testing might be applied, it is not feasible to list the specific scientific expertise appropriate to all applications. Assessing the value of DNA analyses of a novel application involving unfamiliar species can be especially challenging. If the technology is novel, expertise in molecular genetics or biotechnology might be necessary. If testing has been conducted on a particular organism or category of organisms, expertise in that area of biology may be called for. If a random-match probability has been presented, one might seek expertise in statistics as well as the population biology or population genetics that goes with the organism tested. Given the penetration of molecular technology into all areas of biological inquiry, it is likely that individuals can be found who know both the technology and the population biology of the organism in question. Finally, when samples come from crime scenes, the expertise and experience of forensic scientists can be crucial. Just as highly focused specialists may be unaware of aspects of an application outside their field of expertise, so too scientists who have not previously dealt with forensic samples can be unaware of case-specific factors that can confound the interpretation of test results.

II. Variation in Human DNA and Its Detection

DNA is a complex molecule that contains the “genetic code” of organisms as diverse as bacteria and humans. Although the DNA molecules in human cells are

13. E.g., State v. Copeland, 922 P.2d 1304, 1318 n.5 (Wash. 1996) (noting that defendant’s statistical expert “was also unfamiliar with publications in the area,” including studies by “a leading expert in the field” whom he thought was “a ‘guy in a lab somewhere’”).

largely identical from one individual to another, there are detectable variations—except for identical twins, every two human beings have some differences in the detailed structure of their DNA. This section describes the basic features of DNA and some ways in which it can be analyzed to detect these differences.

A. What Are DNA, Chromosomes, and Genes?

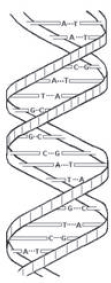

The DNA molecule is made of subunits that include four chemical structures known as nucleotide bases. The names of these bases (adenine, thymine, guanine, and cytosine) usually are abbreviated as A, T, G, and C. The physical structure of DNA is often described as a double helix because the molecule has two spiraling strands connected to each other by weak bonds between the nucleotide bases. As shown in Figure 1, A pairs only with T and G only with C. Thus, the order of the single bases on either strand reveals the order of the pairs from one end of the molecule to the other, and the DNA molecule could be said to be like a long sequence of As, Ts, Gs, and Cs.

Figure 1. Sketch of a small part of a double-stranded DNA molecule. Nucleotide bases are held together by weak bonds. A pairs with T; C pairs with G.

Most human DNA is tightly packed into structures known as chromosomes, which come in different sizes and are located in the nuclei of cells. The chromosomes are numbered (in descending order of size) 1 through 22, with the remaining chromosome being an X or a much smaller Y. If the bases are like letters, then each chromosome is like a book written in this four-letter alphabet, and the nucleus is like a bookshelf in the interior of the cell. All the cells in one

individual contain identical copies of the same collection of books. The sequence of the As, Ts, Gs, and Cs that constitutes the “text” of these books is referred to as the individual’s nuclear genome.

All told, the genome comprises more than three billion “letters” (As, Ts, Gs, and Cs). If these letters were printed in books, the resulting pile would be as high as the Washington Monument. About 99.9% of the genome is identical between any two individuals. This similarity is not really surprising—it accounts for the common features that make humans an identifiable species (and for features that we share with many other species as well). The remaining 0.1% is particular to an individual. This variation makes each person (other than identical twins) genetically unique. This small percentage may not sound like a lot, but it adds up to some three million sites for variation among individuals.

The process that gives rise to this variation among people starts with the production of special sex cells—sperm cells in males and egg cells in females. All the nucleated cells in the body other than sperm and egg cells contain two versions of each of the 23 chromosomes—two copies of chromosome 1, two copies of chromosome 2, and so on, for a total of 46 chromosomes. The X and Y chromosomes are the sex-determining chromosomes. Cells in females contain two X chromosomes, and cells in males contain one X and one Y chromosome. An egg cell, however, contains only 23 chromosomes—one chromosome 1, one chromosome 2,…, and one X chromosome—each selected at random from the woman’s full complement of 23 chromosome pairs. Thus, each egg carries half the genetic information present in the mother’s 23 chromosome pairs, and because the assortment of the chromosomes is random, each egg carries a different complement of genetic information. The same situation exists with sperm cells. Each sperm cell contains a single copy of each of the 23 chromosomes selected at random from a man’s 23 pairs, and each sperm differs in the assortment of the 23 chromosomes it carries. Fertilization of an egg by a sperm therefore restores the full number of 46 chromosomes, with the 46 chromosomes in the fertilized egg being a new combination of those in the mother and father. The process resembles taking two decks of cards (a male and a female deck) and shuffling a random half from the male deck into a random half from the female deck, to produce a new deck.

During pregnancy, the fertilized cell divides to form two cells, each of which has an identical copy of the 46 chromosomes. The two then divide to form four, the four form eight, and so on. As gestation proceeds, various cells specialize (“differentiate”) to form different tissues and organs. Although cell differentiation yields many different kinds of cells, the process of cell division results in each progeny cell having the same genomic complement as the cell that divided. Thus, each of the approximately 100 trillion cells in the adult human body has the same DNA text as was present in the original 23 pairs of chromosomes from the fertilized egg, one member of each pair having come from the mother and one from the father.

A second mechanism operating during the chromosome reduction process in sperm and egg cells further shuffles the genetic information inherited from mother

and father. In the first stage of the reduction process, each chromosome of a chromosome pair aligns with its partner. The maternally inherited chromosome 1 aligns with the paternally inherited chromosome 1, and so on through the 22 pairs; X chromosomes align with each other as well, but X and Y chromosomes do not. While the chromosome pairs are aligned, they exchange pieces to create new combinations. The recombined chromosomes are passed on in the sperm and eggs. As a consequence, the chromosomes we inherit from our parents are not exact copies of their chromosomes, but rather are mosaics of these parental chromosomes.

The swapping of material between chromosome pairs (as they align in the emerging sex cells) and the random selection (of half of each parent’s 46 chromosomes) in making sex cells is called recombination. Recombination is the principal source of diversity in individual human genomes.

The diverse variations occur both within the genes and in the regions of DNA sequences between the genes. A gene can be defined as a segment of DNA, usually from 1000 to 10,000 base pairs long, that “codes” for a protein. The cell produces specific proteins that correspond to the order of the base pairs (the “letters”) in the coding part of the gene.14 Human genes also contain noncoding sequences that regulate the cell type in which a protein will be synthesized and how much protein will be produced.15 Many genes contain interspersed noncoding, nonregulatory sequences that no longer participate in protein synthesis. These sequences, which have no apparent function, constitute about 23% of the base pairs within human genes.16 In terms of the metaphor of DNA as text, the gene is like an important paragraph in the book, often with some gibberish in it.

Proteins perform all sorts of functions in the body and thus produce observable characteristics. For example, a tiny part of the sequence that directs the production of the human group-specific complement protein (a protein that binds to vitamin D and transports it to certain tissues) is

G C A A A A T T G C C T G A T G C C A C A C C C A A G G A A C T G G C A.

14. The sequence in which the building blocks (amino acids) of a protein are arranged corresponds to the sequence of base pairs within a gene. (A sequence of three base pairs specifies a particular 1 of the 20 possible amino acids in the protein. The mapping of a set of three nucleotide bases to a particular amino acid is the genetic code. The cell makes the protein through intermediate steps involving coding RNA transcripts.) About 1.5% of the human genome codes for the amino acid sequences.

15. These noncoding but functional sequences include promoters, enhancers, and repressors.

16. This gene-related DNA consists of introns (which interrupt the coding sequences, called exons, in genes and which are edited out of the RNA transcript for the protein), pseudogenes (evolutionary remnants of once-functional genes), and gene fragments. The idea of a gene as a block of DNA (some of which is coding, some of which is regulatory, and some of which is functionless) is an oversimplification, but it is useful enough here. See, e.g., Mark B. Gerstein et al., What Is a Gene, Post-ENCODE? History and Updated Definition, 17 Genome Res. 669 (2007).

This gene always is located at the same position, or locus, on chromosome 4. As we have seen, most individuals have two copies of each gene at a given locus—one from the father and one from the mother.

A locus where almost all humans have the same DNA sequence is called monomorphic (“of one form”). A locus where the DNA sequence varies among significant numbers of individuals (more than 1% or so of the population possesses the variant) is called polymorphic (“of many forms”), and the alternative forms are called alleles. For example, the GC protein gene sequence has three common alleles that result from substitutions in a base at a given point. Where an A appears in one allele, there is a C in another. The third allele has the A, but at another point a G is swapped for a T. These changes are called single nucleotide polymorphisms (SNPs, pronounced “snips”).

If a gene is like a paragraph in a book, a SNP is a change in a letter somewhere within that paragraph (a substitution, a deletion, or an insertion), and the two versions of the gene that result from this slight change are the alleles. An individual who inherits the same allele from both parents is called a homozygote. An individual with distinct alleles is a heterozygote.

DNA sequences used for forensic analysis usually are not genes. They lie in the vast regions between genes (about 75% of the genome is extragenic) or in the apparently nonfunctional regions within genes. These extra- and intragenic regions of DNA have been found to contain considerable sequence variation, which makes them particularly useful in distinguishing individuals. Although the terms “locus,” “allele,” “homozygous,” and “heterozygous” were developed to describe genes, the nomenclature has been carried over to describe all DNA variation—coding and noncoding alike. Both types are inherited from mother and father in the same fashion.

B. What Are DNA Polymorphisms and How Are They Detected?

By determining which alleles are present at strategically chosen loci, the forensic scientist ascertains the genetic profile, or genotype, of an individual (at those loci). Although the differences among the alleles arise from alterations in the order of the ATGC letters, genotyping does not necessarily require “reading” the full DNA sequence. Here we outline the major types of polymorphisms that are (or could be) used in identity testing and the methods for detecting them.

Researchers are investigating radically new and efficient technologies to sequence entire genomes, one base pair at a time, but the direct sequencing methods now in existence are technically demanding, expensive, and time-consuming for whole-genome sequencing. Therefore, most genetic typing focuses on identifying only

those variations that define the alleles and does not attempt to “read out” each and every base pair as it appears. The exception is mitochondrial DNA, described in Section V. As next-generation sequencing technologies are perfected, however (see infra Section II.F), this situation could change.

2. Sequence-specific probes and SNP chips

Simple sequence variation, such as that for the GC locus, is conveniently detected using sequence-specific oligonucleotide (SSO) probes. A probe is a short, single strand of DNA. With GC typing, for example, probes for the three common alleles are attached to designated locations on a membrane. Copies of the variable sequence region of the GC gene in the crime scene sample are made with the polymerase chain reaction (PCR), which is discussed in the next section. These copies (in the form of single strands) are poured onto the membrane. Whichever allele is present in a single-stranded DNA fragment will cause the fragment to stick to the corresponding, immobilized probe strands. To permit the fragments of this type to be seen, a chemical “label” that catalyzes a color change at the spot where the DNA binds to its probe can be attached when the copies are made. A colored spot showing that the allele is present thus should appear on the membrane at the location of the probe that corresponds to this particular allele. If only one allele is present in the crime scene DNA (because of homozygosity), there will be no change at the spots where the other probes are located. If two alleles are present (heterozygosity), the corresponding two spots will change color.

This approach can be miniaturized and automated by embedding probes for many loci on a silicon chip. Commercially available “SNP chips” for disease research incorporate enough different probes to detect on the order of a million different known SNPs throughout the human genome. These chips have become a basic tool in searches for genetic changes associated with human diseases. They are described further in Section II.F.

Another category of DNA variations comes from the insertion of a variable number of tandem repeats (VNTR) at a locus. These were the first polymorphisms to find widespread use in identity testing and hence were the subject of most of the court opinions on the admissibility of DNA in the late 1980s and early 1990s. The core unit of a VNTR is a particular short DNA sequence that is repeated many times end-to-end. The first VNTRs to be used in genetic and forensic testing had core repeat sequences of 15–35 base pairs. In this testing, bacterial enzymes (known as “restriction enzymes”) were used to cut the DNA molecule both before and after the VNTR sequence. A small number of repeats in the VNTR region gives rise to a small “restriction fragment,” and a large number of repeats yields a large fragment. A substantial quantity of DNA from a crime scene sample is required to give a detectable number of VNTR fragments with this procedure.

The detection is accomplished by applying a probe that binds when it encounters the repeated core sequence. A radioactive or fluorescent molecule attached to the probe provides a way to mark the VNTR fragment. The probe ignores DNA fragments that do not include the VNTR core sequence. (There are many of these unwanted fragments, because the restriction enzymes chop up the DNA throughout the genome—not just at the VNTR loci.) The restriction fragments are sorted by a process known as electrophoresis, which separates DNA fragments based on size. Many early court opinions refer to this process as RFLP testing.17

Although RFLP-VNTR profiling is highly discriminating,18 it has several drawbacks. Not only does it require a substantial sample size, but it also is time-consuming and does not measure the fragment lengths to the nearest number of repeats. The measurement error inherent in the form of electrophoresis used (known as “gel electrophoresis”) is not a fundamental obstacle, but it complicates the determination of which profiles match and how often other profiles in the population would be declared to match.19 Consequently, forensic scientists have moved from VNTRs to another form of repetitive DNA known as short tandem repeats (STRs) or microsatellites. STRs have very short core repeats, two to seven base pairs in length, and they typically extend for only some 50 to 350 base pairs.20 Like the larger VNTRs, which extend for thousands of base pairs, STR sequences do not code for proteins, and the ones used in identity testing convey little or no information about an individual’s propensity for disease.21 Because STR alleles

17. It would be clearer to call it RFLP-VNTR testing, because the fragments being measured contain the VNTRs rather than some simpler polymorphisms that were used in genetic research and disease testing. A more detailed exposition of the steps in RFLP-VNTR profiling (including gel electrophoresis, Southern blotting, and autoradiography) can be found in the previous edition of this guide and in many judicial opinions circa 1990.

18. Alleles at VNTR loci generally are too long to be measured precisely by electrophoretic methods—alleles differing in size by only a few repeat units may not be distinguished. Although this makes for complications in deciding whether two length measurements that are close together result from the same allele, these loci are quite powerful for the genetic differentiation of individuals, because they tend to have many alleles that occur relatively rarely in the population. At a locus with only 20 such alleles (and most loci typically have many more), there are 210 possible genotypes. With five such loci, the number of possible genotypes is 2105, which is more than 400 billion.

19. For a case reversing a conviction as a result of an expert’s confusion on this score, see People v. Venegas, 954 P.2d 525 (Cal. 1998). More suitable procedures for match windows and probabilities are described in NRC II, supra note 9.

20. The numbers, and the distinction between “minisatellites” (VNTRs) and microsatellites (STRs), are not precise, but the mechanisms that give rise to the shorter tandem repeats differ from those that produce the longer ones. See Benjamin Lewin, Genes IX 124–25 (9th ed. 2008).

21. See David H. Kaye, Please, Let’s Bury the Junk: The CODIS Loci and the Revelation of Private Information, 102 Nw. U. L. Rev. Colloquy 70 (2007), available at http://www.law.northwestern.edu/lawreview/colloquy/2007/25/.

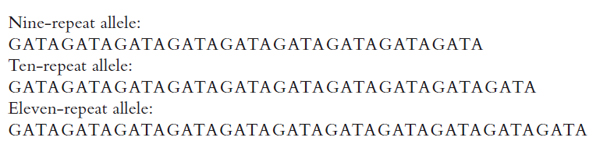

are much smaller than VNTR alleles, however, they can be amplified with PCR designed to copy only the locus of interest. This obviates the need for restriction enzymes, and it allows laboratories to analyze STR loci much more quickly. Because the amplified fragments are shorter, electrophoretic detection permits the exact number of base pairs in an STR to be determined, allowing alleles to be defined as discrete entities. Figure 2 illustrates the nature of allelic variation at an STR locus found on chromosome 16.

Figure 2. Three alleles of the D16S539 STR. The core sequence is GATA. The first allele listed has 9 tandem repeats, the second has 10, and the third has 11. The locus has other alleles (different numbers of repeats), shown in Figure 4.

Although there are fewer alleles per locus for STRs than for VNTRs, there are many STRs, and they can be analyzed simultaneously. Such “multiplex” systems now permit the simultaneous analysis of 16 loci. A subset of 13 is standard in the United States (see infra Section II.D), and these are capable of distinguishing among almost everyone in the population.22

DNA contains the genetic information of an organism. In humans, most of the DNA is found in the cell nucleus, where it is organized into separate chromosomes. Each chromosome is like a book, and each cell has the same library (genome) of books of various sizes and shapes. There are two copies of each book of a particular size and shape, one that came from the father, the other from the mother. Thus, there are two copies of the book entitled “Chromosome One,” two copies of “Chromosome Two,” and so on. Genes are the most meaningful paragraphs in the books. Other parts of the text appear to have no coherent message. Two individuals sometimes have different versions (alleles) of the same paragraph. Some alleles result from the substitution of one letter for another. These are SNPs. Others come about from the insertion or deletion of single letters, and still

22. Usually, there are between 7 and 15 STR alleles per locus. Thirteen loci that have 10 STR alleles each can give rise to 5513, or 42 billion trillion, possible genotypes.

others represent a kind of stuttering repetition of a string of extra letters. These are the VNTRs and STRs.23 The locations within a chromosome where these interpersonal variations occur are called loci.

C. How Is DNA Extracted and Amplified?

DNA usually can be found in biological materials such as blood, bone, saliva, hair, semen, and urine. A combination of routine chemical and physical methods permits DNA to be extracted from cell nuclei and isolated from the other chemicals in a sample. PCR then is used to make exponentially large numbers of copies of targeted regions of the extracted DNA. PCR might be applied to the double-stranded DNA segments extracted and purified from a forensic sample as follows: First, the purified DNA is separated into two strands by heating it to near the boiling point of water. This “denaturing” takes about a minute. Second, the single strands are cooled, and “primers” attach themselves to the points at which the copying will start and stop. (Primers are small, manmade pieces of DNA, usually between 15 and 30 nucleotides long, of known sequences. If a locus of interest starts near the sequence ATCGAATCGGTAGCCATATG on one strand, a suitable primer would have the complementary sequence TAGCTTAGCCATCGGTATAC.) “Annealing” these primers takes about 45 seconds. Finally, the soup containing the annealed DNA strands, the enzyme DNA polymerase, and lots of the four nucleotide building blocks (A, C, G, and T) is warmed to a comfortable working temperature for the polymerase to insert the complementary base pairs one at a time, building a matching second strand bound to the original “template” and thus replicating part of the DNA strand that was separated from its partner in the first step. The same replication occurs with the separated partner as the template. This “extension” step for both templates takes about 2 minutes. The result is two identical double-stranded DNA segments, one made from each strand of the original DNA. The three-step cycle is repeated, usually 20 to 35 times in automated machines known as thermocyclers. Ideally, the first cycle results in two double-stranded DNA segments. The second cycle produces four, the third eight, and so on, until the number of copies of the original DNA is enormous. In practice, there is some inefficiency in the doubling process, but the yield from a 30-cycle amplification is generally about 1 million to 10 million copies of the targeted sequence.24 In this way, PCR magnifies short sequences of interest in a small number of DNA fragments into millions of exact copies. Machines that automate the PCR process are commercially available.

For PCR amplification to work properly and yield copies of only the desired sequence, however, care must be taken to achieve the appropriate chemical con-

23. In addition to the 23 pairs of books in the cell nucleus, other scraps of text reside in each of the mitochondria, the power plants of the cell. See infra Section V.

24. NRC II, supra note 9, at 69–70.

ditions and to avoid excessive contamination of the sample. A laboratory should be able to demonstrate that it can amplify targeted sequences faithfully with the equipment and reagents that it uses and that it has taken suitable precautions to avoid or detect contamination from foreign DNA. With small samples, it is possible that some alleles will be amplified and others missed (preferential amplification, discussed infra Section III.A.1), and mutations in the region of a primer can prevent the amplification of the allele downstream of the primer (null alleles).25

D. How Is STR Profiling Done with Capillary Electrophoresis?

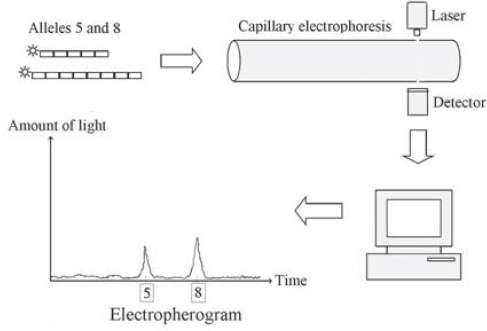

In the most commonly used analytical method for detecting STRs, the STR fragments in the sample are amplified using primers with fluorescent tags. Each new STR fragment made in a PCR cycle bears a fluorescent dye. When struck by a source light, each dye glows with a particular color. The fragments are separated according to their length by electrophoresis in automated “genetic analyzer” machinery—a byproduct of the technology developed for the Human Genome Project that first sequenced most of the entire genome. In these machines, a long, narrow tube (a “capillary”) is filled with an entangled polymer or comparable sieving medium, and an electric field is applied to pull DNA fragments placed at one end of the tube through the medium. Shorter fragments slip through the medium more quickly than larger, bulkier ones. A laser beam is sent through a small glass window in the tube. The laser light excites the dye, causing it to fluoresce at a characteristic wavelength as the tagged fragments pass under the light. The intensity of the light emitted by the dye is recorded by a kind of electronic camera and transformed into a graph (an electropherogram), which shows a peak as an STR flashes by. A shorter allele will pass by the window and fluoresce first; a longer fragment will come by later, giving rise to another peak on the graph. Figure 3 provides a sketch of how the alleles with five and eight repeats of the GATA sequence at the D16S539 STR locus might appear in an electropherogram.

Medical and human geneticists were interested in STRs as markers in family studies to locate the genes that are associated with inherited diseases, and papers on their potential for identity testing appeared in the early 1990s. Developmental research to pick suitable loci moved into high gear in England, Europe, and Canada. Britain’s Forensic Science Service applied a four-locus testing system in 1994. Then it introduced the “second generation multiplex” (SGM)—for simultaneously typing six loci in 1996. These soon would be used to build England’s National DNA Database. The database system allows a computer to check the STR types of millions of known or suspected criminals against thousands of crime

25. A null allele will not lead to a false exclusion if the two DNA samples from the same individual are amplified with the same primer system, but it could lead to an exclusion at one locus when searching a database of STR profiles if the database profile was determined with a different PCR kit than the one used to analyze the crime scene DNA.

Figure 3. Sketch of an electropherogram for two D16S539 alleles. One allele has five repeats of the sequence GATA; the other has eight. Each GATA repeat is depicted as a small rectangle. Although only one copy of each allele (with a fluorescent molecule, or “tag” attached) is shown here, PCR generates a great many copies from the DNA sample with these alleles at the D16S539 locus. These copies are drawn through the capillary tube, and the tags glow as the STR fragments move through the laser beam. An electronic camera measures the colored light from the tags. Finally, a computer processes the signal from the camera to produce the electropherogram. Source: David H. Kaye, The Double Helix and the Law of Evidence 189, fig. 9.1 (2010).

scene samples. A six-locus STR profile can be represented as a string of 12 digits; each digit indicates the number of repeat units in the alleles at each locus. These discrete, numerical DNA profiles are far easier to compare mechanically than the complex patterns of fingerprints. In the United States, the FBI settled on 13 “core loci” to use in the U.S. national DNA database system. These are often called the “CODIS core loci,” and an additional 7 STR loci are under consideration.26

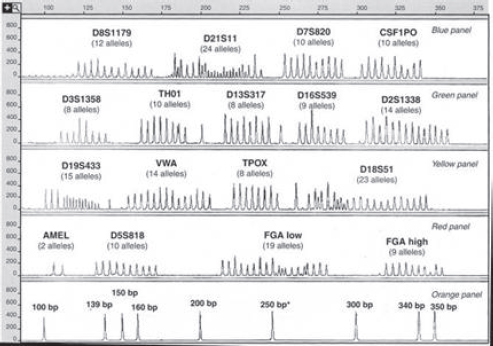

Modern genetic analyzers produce electropherograms for many loci at once. This “multiplexing” is accomplished by using dyes that fluoresce at distinct colors

26. Douglas R. Hares, Expanding the CODIS Core Loci in the United States, Forensic Sci. Int’l: Genetics (forthcoming 2011). CODIS stands for “convicted offender DNA index system.”

to label the alleles from different groups of loci. A separate set of fragments of known sizes that comigrate through the capillary function as a kind of ruler (an “internal-lane size standard”) to determine the lengths of the allelic fragments. Software processes the raw data to generate an electropherogram of the separate allele peaks of each color. By comparing the positions of the allele peaks to the size standard, the program determines the number of repeats in each allele. The plotted heights of the peaks (measured in relative fluorescent units, or RFUs) are proportional to the amount of the PCR product.

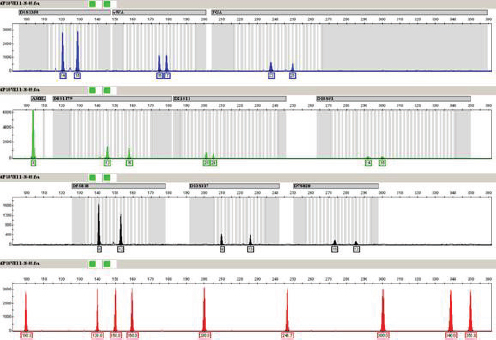

Figure 4 is an electropherogram of all 203 major alleles at 15 STR loci that can be typed in a single “multiplex” PCR reaction. (In addition, it shows the two alleles of the gene used to determine the sex of the contributor of a DNA

Figure 4. Alleles of 15 STR loci and the amelogenin sex-typing test from the AmpFISTR Identifiler kit. The bottom panel is a “sizing standard”—a set of peaks from DNA sequences of known lengths (in base pairs). The numbers in the vertical axis in each panel are relative fluorescence units (RFUs) that indicate the amount of light emitted after the laser beam strikes the fluorescent tag on an STR fragment.

Note: Applied Biosystems makes the kit that produced these allelic ladders.

Source: John M. Butler, Forensic DNA Typing: Biology, Technology, and Genetics of STR Markers 128 (2d ed. 2005), Copyright Elsevier 2005, with the permission of Elsevier Academic Press. John Butler supplied the illustration.

sample.27) An electropherogram from an individual’s DNA would have only one or two peaks at each of these 15 STR loci (depending on whether the person is homozygous or heterozygous). These “allelic ladders” aid in deciding which allele a peak from an unknown sample represents.

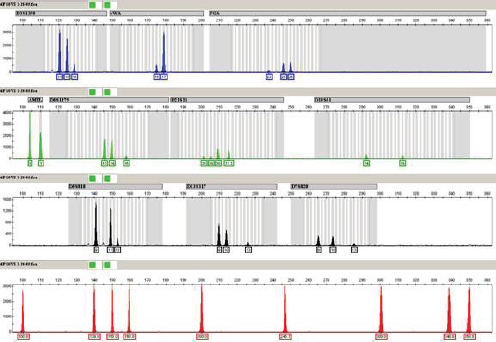

Figure 5 is an electropherogram from the vaginal epithelial cells of the body of a girl who had been sexually assaulted and killed in People v. Pizarro.28 It was produced for the retrial in 2008 of the defendant who was linked to the victim by VNTR typing at his first trial in 1990.

Figure 5. Electropherogram for nine STR loci of the victim’s DNA in People v. Pizzaro. (The amelogenin locus and a sizing standard at the bottom also are included.) Some STR loci have small peaks, indicating that there was not much PCR product for those loci, likely because of DNA degradation. All of the STR loci have two peaks, as would be expected when the source is heterozygous at those loci.

Source: Steven Myers and Jeanette Wallin, California Department of Justice, provided the image.

27. The amelogenin gene, which is found on the X and the Y chromosomes, codes for a protein that is a major component of tooth enamel matrix. The copy on the X chromosome is 112 bp long. The copy on the Y chromosome has a string of six base pairs deleted, making it slightly shorter (106 bp). A female (XX) will have one peak at 112 bp. A male (XY) will have two peaks (at 106 and 112 bp).

28. 12 Cal. Rptr. 2d 436 (Ct. App. 1992), after remand, 3 Cal. Rptr. 3d 21 (Ct. App. 2003), review denied (Oct 15, 2003).

E. What Can Be Done to Validate a Genetic System for Identification?

Regardless of the kind of genetic system used for typing—STRs, SNPs, or still other polymorphisms—some general principles and questions can be applied to each system that is offered for courtroom use. First, the nature of the polymorphism should be well characterized. Is it a simple sequence polymorphism or a fragment length polymorphism? This information should be in the published literature or in archival genome databanks.

Second, the published scientific literature can be consulted to verify claims that a particular method of analysis can produce accurate profiles under various conditions. Although such validation studies have been conducted for all the systems ordinarily used in forensic work, determining the point at which the empirical validation of a particular system is sufficiently convincing to pass scientific muster may well require expert assistance.

Finally, the population genetics of the system should be characterized. As new systems are discovered, researchers typically analyze convenient collections of DNA samples from various human populations and publish studies of the relative frequencies of each allele in these population samples. These studies measure the extent of genetic variability at the polymorphic locus in the various populations, and thus of the potential probative power of the marker for distinguishing among individuals.

At this point, the capability of PCR-based procedures to ascertain DNA genotypes accurately cannot be doubted. Of course, the fact that scientists have shown that it is possible to extract DNA, to amplify it, and to analyze it in ways that bear on the issue of identity does not mean that a particular laboratory has adopted a suitable protocol and is proficient in following it. These case-specific issues are considered in Sections III and IV.

F. What New Technologies Might Emerge?

1. Miniaturized “lab-on-a-chip” devices

Miniaturized capillary electrophoresis (CE) devices have been developed for rapid detection of STRs (described in Section II.D) and other genetic analyses. The mini-CE systems consist of microchannels roughly the diameter of a hair etched on glass wafers (“chips”) using technology borrowed from the computer industry. The principles of electrophoretic separation are the same as with conventional CE systems. With microfluidic technologies, it is possible to integrate DNA extraction and PCR amplification processes with the CE separation in a single device, a so-called lab on a chip. Once a sample is added to the device, all the analytical steps are performed on the chip without further human contact. These integrated devices combine the benefits of simplified sample handling with rapid analysis

and are under active development for point-of-care medical diagnostics.29 Efforts are under way to develop an integrated microdevice for STR analysis that would improve the speed and efficiency of forensic DNA profiling. A portable device for rapid and secure analysis of samples in the field is a distinct possibility.30

The initial success of the Human Genome Project and the promise of “personalized medicine” is driving research to develop technologies for DNA analysis that are faster, cheaper, and less labor intensive. In 2004, the National Human Genome Research Institute announced funding for research leading to the “$1000 genome,” an achievement that would permit sequencing an individual’s genome for medical diagnosis and improved drug therapies. Advances in the years since 2004 suggest that this goal will be achieved before the target date of 2014,31 and the successful innovations could provide major advances in forensic DNA testing. However, it is too soon to identify which of the nascent sequencing technologies might emerge from the pack.

As of 2009, three different next-generation sequencing technologies were commercially available, and more instruments are in the pipeline.32 These new technologies generate massive amounts of DNA sequence data (100 million to 1 billion base pairs per run) at very low cost (under $50 per megabase). They do so by simultaneously sequencing millions of short fragments, then applying bioinformatics software to assemble the sequences in the correct order. These high-throughput sequencing technologies have demonstrated their usefulness in research applications. Two of these applications, the analysis of highly degraded DNA33 and the identification of microbial bioterrorism agents, are of forensic relevance.34 As the speed and cost of sequencing diminish and the necessary bioinformatics software becomes more accessible and effective, full-genome sequence

29. P. Yager et al., Microfluidic Diagnostic Technologies for Global Public Health, 442 Nature 412 (2006).

30. K.M. Horsman et al., Forensic DNA Analysis on Microfluidic Devices: A Review, 52 J. Forensic Sci. 784 (2007). As indicated in this review, there remain challenges to overcome before the forensic lab on a chip comes to fruition. However, given the progress being made on multiple research fronts in chip fabrication design and in microfluidic technology, these challenges seem surmountable.

31. R.F. Service, The Race for the $1000 Genome, 311 Science 1544 (2006).

32. Michael L. Metzker, Sequencing Technologies—The Next Generation, 11 Nature Rev. Genetics 31 (2010).

33. The next-generation technologies have been used to sequence highly degraded DNA from Neanderthal bones and from the hair of the extinct woolly mammoth. R.E. Green et al., Analysis of One Million Base Pairs of Neanderthal DNA, 444 Nature 330 (2006); W. Miller, Sequencing the Nuclear Genome of the Extinct Woolly Mammoth, 456 Nature 387 (2008). The approaches used in these studies are readily translatable to SNP typing of highly degraded DNA such as found in cases involving victims of mass disasters.

34. By sequencing entire bacterial genomes, researchers can rapidly differentiate organisms that have been genetically modified for biological warfare or terrorism from routine clinical and envi-

analysis or something approaching it could become a practical tool for human identification.

Hybridization microarrays are the third technological innovation with readily foreseeable forensic application. A microarray consists of a two-dimensional grid of many thousands of microscopic spots on a glass or plastic surface, each containing many copies of a short piece of single-stranded DNA tethered to the surface at one end; each spot can be thought of as a dense cluster of tiny, single-stranded DNA “whiskers” with their own particular sequence. A solution containing single-stranded target DNA is washed over the microarray surface. The whiskers on the array serve as probes to detect DNA (or RNA) with the corresponding complementary sequence. The spots that capture target DNA are identified, indicating the presence of that sequence in the target sample. (The hybridization can be detected in several different ways.) Microarrays are commercially available for the detection of SNPs in the human genome and for sequencing human mitochondrial DNA.35

4. What questions do the new technologies raise?

As these or other emerging technologies are introduced in court, certain basic questions will need to be answered. What is the principle of the new technology? Is it simply an extension of existing technologies, or does it invoke entirely new concepts? Is the new technology used in research or clinical applications independent of forensic science? Does the new technology have limitations that might affect its application in the forensic sphere? Finally, what testing has been done and with what outcomes to establish that the new technology is reliable when used on forensic samples? For next-generation sequencing technologies and microarray technologies, the questions may be directed as well to the bioinformatics methods used to analyze and interpret the raw data. Obtaining answers to these questions would likely require input both from experts involved in technology development and application and from knowledgeable forensic experts.

ronmental strains. B. La Scola et al., Rapid Comparative Genomic Analysis for Clinical Microbiology: The Francisella Tularensis Paradigm, 18 Genome Res. 742 (2008).

35. One study of 3000 Europeans used a commercial microarray with over half a million SNPs “to infer [the individuals’] geographic origin with surprising accuracy—often to within a few hundred kilometers.” John Novembre et al., Genes Mirror Geography Within Europe, 456 Nature 98, 98 (2008). Microarrays also are used in studies of variation in the number of copies of certain genes in different people’s genomes (copy number variation). Microarrays to detect pathogens and other targets also have been developed.

III. Sample Collection and Laboratory Performance

A. Sample Collection, Preservation, and Contamination

The primary determinants of whether DNA typing can be done on any particular sample are (1) the quantity of DNA present in the sample and (2) the extent to which it is degraded. Generally speaking, if a sufficient quantity of reasonable quality DNA can be extracted from a crime scene sample, no matter what the nature of the sample, DNA typing can be done without problem. Thus, DNA typing has been performed on old blood stains, semen stains, vaginal swabs, hair, bone, bite marks, cigarette butts, urine, and fecal material. This section discusses what constitutes sufficient quantity and reasonable quality in the context of STR typing. Complications from contaminants and inhibitors also are discussed. The special technique of mitotyping and the treatment of samples that contain DNA from two or more contributors are discussed in Section V.

1. Did the sample contain enough DNA?

Amounts of DNA present in some typical kinds of samples vary from a trillionth or so of a gram for a hair shaft to several millionths of a gram for a postcoital vaginal swab. Most PCR test protocols recommend samples on the order of 1 billionth to 5 billionths of a gram for optimum yields. Normally, the number of amplification cycles for nuclear DNA is limited to 28 or so to ensure that there is no detectable product for samples containing less than about 20 cell equivalents of DNA.36

Procedures for typing still smaller samples—down to a single cell’s worth of nuclear DNA—have been studied. These have been shown to work, to some extent, with trace or contact DNA left on the surface of an object such as the steering wheel of a car. The most obvious strategy is to increase the number of amplification cycles. The danger is that chance effects might result in one allele being amplified much more than another. Alleles then could drop out, small peaks from unusual alleles at other loci might “drop in,” and a bit of extraneous DNA could contribute to the profile. Other protocols have been developed for typing such “low copy number” (LCN) or “low template” (LT) DNA.37 LT-STR

36. This is about 100 to 200 trillionths of a gram. A lower limit of about 10 to 15 cells’ worth of DNA has been determined to give balanced amplification.

37. See, e.g., John Buckleton & Peter Gill, Low Copy Number, in Forensic DNA Evidence Interpretation 275 (John S. Buckleton et al. eds., 2005); Pamela J. Smith & Jack Ballantyne, Simplified Low-Copy-Number DNA Analysis by Post-PCR Purification, 52 J. Forensic Sci. 820 (2007).

profiles have been admitted in courts in a few countries,38 and they are beginning to appear in prosecutions in the United States.39

Although there are tests to estimate the quantity of DNA in a sample, whether a particular sample contains enough human DNA to allow typing cannot always be predicted accurately. The best strategy is to try. If a result is obtained, and if the controls (samples of known DNA and blank samples) have behaved properly, then the sample had enough DNA. The appearance of the same peaks in repeated runs helps assure that these alleles are present.40

2. Was the sample of sufficient quality?

The primary determinant of DNA quality for forensic analysis is the extent to which the long DNA molecules are intact. Within the cell nucleus, each molecule of DNA extends for millions of base pairs. Outside the cell, DNA spontaneously degrades into smaller fragments at a rate that depends on temperature, exposure to oxygen, and, most importantly, the presence of water.41 In dry biological samples, protected from air, and not exposed to temperature extremes, DNA degrades very slowly. STR testing has proved effective with old and badly degraded material such as the remains of the Tsar Nicholas family (buried in 1918 and recovered in 1991).42

38. E.g., R. v. Reed [2009] (CA Crim. Div.) EWCA Crim. 2698, ¶ 74 (reviewing expert submissions and concluding that “Low Template DNA can be used to obtain profiles capable of reliable interpretation if the quantity of DNA that can be analysed is above the stochastic threshold [of] between 100 and 200 picograms”).

39. People v. Megnath, 898 N.Y.S.2d 408 (N.Y. Sup. Ct. 2010) (reasoning that “LCN DNA analysis” uses the same steps as STR analysis of larger samples and that the modifications in the procedure used by the laboratory in the case were generally accepted); cf. United States v. Davis, 602 F. Supp. 2d 658 (D. Md. 2009) (avoiding “making a finding with regard to the dueling definitions of LCN testing advocated by the parties” by finding that “the amount of DNA present in the evidentiary samples tested in this case” was in the normal range). These cases and the admissibility of low-template DNA analysis are discussed in Kaye et al., supra note 1, § 9.2.3(c).

40. John M. Butler & Cathy R. Hill, Scientific Issues with Analysis of Low Amounts of DNA, LCN Panel on Scientific Issues with Low Amounts of DNA, Promega Int’l Symposium on Human Identification, Oct. 15, 2009, available at http://www.cstl.nist.gov/strbase/pub_pres/Butler_Promega2009-LCNpanelfor-STRBase.pdf.

41. Other forms of chemical alteration to DNA are well studied, both for their intrinsic interest and because chemical changes in DNA are a contributing factor in the development of cancers in living cells. Some forms of DNA modification, such as that produced by exposure to ultraviolet radiation, inhibit the amplification step in PCR-based tests, whereas other chemical modifications appear to have no effect. C.L. Holt et al., TWGDAM Validation of AmpFlSTR PCR Amplification Kits for Forensic DNA Casework, 47 J. Forensic Sci. 66 (2002); George F. Sensabaugh & Cecilia von Beroldingen, The Polymerase Chain Reaction: Application to the Analysis of Biological Evidence, in Forensic DNA Technology 63 (Mark A. Farley & James J. Harrington eds., 1991).

42. Peter Gill et al., Identification of the Remains of the Romanov Family by DNA Analysis, 6 Nature Genetics 130 (1994).

The extent to which degradation affects a PCR-based test depends on the size of the DNA segment to be amplified. For example, in a sample in which the bulk of the DNA has been degraded to fragments well under 1000 base pairs in length, it may be possible to amplify a 100-base-pair sequence, but not a 1000-base-pair target. Consequently, the shorter alleles may be detected in a highly degraded sample, but the larger ones may be missed. Fortunately, the size differences among STR alleles at a locus are quite small (typically no more than 50 base pairs). Therefore, if there is a degradation effect on STR typing, it is usually “locus dropout”—in cases involving severe degradation, loci yielding larger products (greater than 200 base pairs) may not be detected.43

DNA can be exposed to a great variety of environmental insults without any effect on its capacity to be typed correctly. Exposure studies have shown that contact with a variety of surfaces, both clean and dirty, and with gasoline, motor oil, acids, and alkalis either have no effect on DNA typing or, at worst, render the DNA untypable.44

Although contamination with microbes generally does little more than degrade the human DNA, other problems sometimes can occur. Therefore, the validation of DNA typing systems should include tests for interference with a variety of microbes to see if artifacts occur. If artifacts are observed, then control tests should be applied to distinguish between the artifactual and the true results.

1. What forms of quality control and assurance should be followed?

DNA profiling is valid and reliable, but confidence in a particular result depends on the quality control and quality assurance procedures in the laboratory. Quality control refers to measures to help ensure that a DNA-typing result (and its interpretation) meets a specified standard of quality. Quality assurance refers to monitoring, verifying, and documenting laboratory performance. A quality assurance program helps demonstrate that a laboratory is meeting its quality control objectives and thus justifies confidence in the quality of its product.45

43. Holt et al., supra note 41. Special primers and very short STRs give better results with extremely degraded samples. See Michael D. Coble & John M. Butler, Characterization of New MiniSTR Loci to Aid Analysis of Degraded DNA, 50 J. Forensic Sci. 43 (2005).

44. Holt et al., supra note 41. Most of the effects of environmental insult readily can be accounted for in terms of basic DNA chemistry. For example, some agents produce degradation or damaging chemical modifications. Other environmental contaminants inhibit restriction enzymes or PCR. (This effect sometimes can be reversed by cleaning the DNA extract to remove the inhibitor.) But environmental insult does not result in the selective loss of an allele at a locus or in the creation of a new allele at that locus.

45. For a review of the history of quality assurance in forensic DNA testing, see J.L. Peterson et al., The Feasibility of External Blind DNA Proficiency Testing. I. Background and Findings, 48 J. Forensic Sci. 21, 22 (2003).

Professional bodies within forensic science have described procedures for quality assurance. Guidelines for DNA analysis have been prepared by FBI-appointed groups (the current incarnation is known as SWGDAM);46 a number of states require forensic DNA laboratories to be accredited;47 and federal law requires accreditation or other safeguards of laboratories that receive certain federal funds48 or participate in the national DNA database system.49

a. Documentation

Quality assurance guidelines normally call for laboratories to document laboratory organization and management, personnel qualifications and training, facilities, evidence control procedures, validation of methods and procedures, analytical procedures, equipment calibration and maintenance, standards for case documentation and report writing, procedures for reviewing case files and testimony, proficiency testing, corrective actions, audits, safety programs, and review of subcontractors.

46. The FBI established the Technical Working Group on DNA Analysis Methods (TWGDAM) in 1988 to develop standards. The DNA Identification Act of 1994, 42 U.S.C. § 14131(a) & (c) (2006), created a DNA Advisory Board (DAB) to assist in promulgating quality assurance standards, but the legislation allowed the DAB to expire after 5 years (unless extended by the Director of the FBI). 42 U.S.C. § 14131(b) (2008). TWGDAM functioned under DAB, 42 U.S.C. § 14131(a) (2006), and was renamed the Scientific Working Group on DNA Analysis Methods (SWGDAM) in 1999. When the FBI allowed DAB to expire, SWGDAM replaced DAB. See Norah Rudin & Keith Inman, An Introduction to Forensic DNA Analysis 180 (2d ed. 2002); Paul C. Giannelli, Regulating Crime Laboratories: The Impact of DNA Evidence, 15 J.L. & Pol’y 59, 82–83 (2007).

47. New York was the first state to impose this requirement. N.Y. Exec. Law § 995-b (McKinney 2006) (requiring accreditation by the state Forensic Science Commission).

48. The Justice for All Act, enacted in 2004, required DNA labs to be accredited within 2 years “by a nonprofit professional association of persons actively involved in forensic science that is nationally recognized within the forensic science community” and to “undergo external audits, not less than once every 2 years, that demonstrate compliance with standards established by the Director of the Federal Bureau of Investigation.” 42 U.S.C. § 14132(b)(2) (2006). Established in 1981, the American Society of Crime Laboratory Directors–Laboratory Accreditation Board (ASCLD-LAB) accredits forensic laboratories. Giannelli, supra note 46, at 75. The 2004 Act also requires applicants for federal funds for forensic laboratories to certify that the laboratories use “generally accepted laboratory practices and procedures, established by accrediting organizations or appropriate certifying bodies,” 42 U.S.C. § 3797k(2) (2004), and that “a government entity exists and an appropriate process is in place to conduct independent external investigations into allegations of serious negligence or misconduct substantially affecting the integrity of the forensic results committed by employees or contractors of any forensic laboratory system, medical examiner’s office, coroner’s office, law enforcement storage facility, or medical facility in the State that will receive a portion of the grant amount.” Id. § 3797k(4). There have been problems in implementing the § 3797k(4) certification requirement. See Office of the Inspector General, U.S. Dep’t of Justice, Review of the Office of Justice Programs’ Paul Coverdell Forensic Science Improvement Grants Program, Evaluation and Inspections Report I-2008-001 (2008), available at http://www.usdoj.gov/oig/reports/OJP/e0801/index.htm.

49. See 42 U.S.C § 14132 (b)(2) (2006) (requiring as of late 2006, that records in the database come from laboratories that “have been accredited by a nonprofit professional association…and…undergo external audits, not less than once every 2 years [and] that demonstrate compliance with standards established by the Director of the Federal Bureau of Investigation….”).

Of course, maintaining documentation and records alone does not guarantee the correctness of results obtained in any particular case. Errors in analysis or interpretation might occur as a result of a deviation from an established procedure, analyst misjudgment, or an accident. Although case review procedures within a laboratory should be designed to detect errors before a report is issued, it is always possible that some incorrect result will slip through. Accordingly, determination that a laboratory maintains a strong quality assurance program does not eliminate the need for case-by-case review.

b. Validation

The validation of procedures is central to quality assurance. Developmental validation is undertaken to determine the applicability of a new test to crime scene samples; it defines conditions that give reliable results and identifies the limitations of the procedure. For example, a new genetic marker being considered for use in forensic analysis will be tested to determine if it can be typed reliably in both fresh samples and in samples typical of those found at crime scenes. The validation would include testing samples originating from different tissues—blood, semen, hair, bone, samples containing degraded DNA, samples contaminated with microbes, samples containing DNA mixtures, and so on. Developmental validation of a new set of loci also includes the generation of population databases and the testing of alleles for statistical independence. Developmental validation normally results in publication in the scientific literature, but a new procedure can be validated in multiple laboratories well ahead of publication.

Internal validation, on the other hand, involves the capacity of a specific laboratory to analyze the new loci. The laboratory should verify that it can reliably perform an established procedure that already has undergone developmental validation. In particular, before adopting a new procedure, the laboratory should verify its ability to use the system in a proficiency trial.50

c. Proficiency testing

Proficiency testing in forensic genetic testing is designed to ascertain whether an analyst can correctly determine genetic types in a sample whose origin is unknown to the analyst but is known to a tester. Proficiency is demonstrated by making correct genetic typing determinations in repeated trials. The laboratory also can be tested to verify that it correctly computes random-match probabilities or similar statistics.

An internal proficiency trial is conducted within a laboratory. One person in the laboratory prepares the sample and administers the test to another person in the laboratory. In an external trial, the test sample originates from outside the labo-

50. Both forms of validation build on the accumulated body of knowledge and experience. Thus, some aspects of validation testing need be repeated only to the extent required to verify that previously established principles apply.

ratory—from another laboratory, a commercial vendor, or a regulatory agency. In a declared (or open) proficiency trial, the analyst knows the sample is a proficiency sample. The DNA Identification Act of 1994 requires proficiency testing for analysts in the FBI as well as those in laboratories participating in the national database or receiving federal funding,51 and the standards of accrediting bodies typically call for periodic open, external proficiency testing.52

In a blind (or, more properly, “full blind”) trial, the sample is submitted so that the analyst does not recognize it as a proficiency sample. A full-blind trial provides a better indication of proficiency because it ensures that the analyst will not give the trial sample any special attention, and it tests more steps in the laboratory’s processing of samples. However, full-blind proficiency trials entail considerably more organizational effort and expense than open proficiency trials. Obviously, the “evidence” samples prepared for the trial have to be sufficiently realistic that the laboratory does not suspect the legitimacy of the submission. A police agency and prosecutor’s office have to submit the “evidence” and respond to laboratory inquiries with information about the “case.” Finally, the genetic profile from a proficiency test must not be entered into regional and national databases. Consequently, although some forensic DNA laboratories participate in full-blind testing, they are not required to do so.53

2. How should samples be handled?

Sample mishandling, mislabeling, or contamination, whether in the field or in the laboratory, is more likely to compromise a DNA analysis than is an error in genetic typing. For example, a sample mixup due to mislabeling reference blood samples taken at the hospital could lead to incorrect association of crime scene samples to a reference individual or to incorrect exclusions. Similarly, packaging two items with wet bloodstains into the same bag could result in a transfer of stains between the items, rendering it difficult or impossible to determine whose blood was originally on each item. Contamination in the laboratory may result in artifactual

51. 42 U.S.C. § 14132(b)(2) (requiring external proficiency testing of laboratories for participation in the national database); id. § 14133(a)(1)(A) (2006) (same for FBI examiners).

52. See Peterson et al., supra note 45, at 24 (describing the ASCL-LAB standards). Certification by the American Board of Criminalistics as a specialist in forensic biology DNA analysis requires one proficiency trial per year. Accredited laboratories must maintain records documenting compliance with required proficiency test standards.

53. The DNA Identification Act of 1994 required the director of the National Institute of Justice to report to Congress on the feasibility of establishing an external blind proficiency testing program for DNA laboratories. 42 U.S.C. § 14131(c) (2006). A National Forensic DNA Review Panel advised the Director that “blind proficiency testing is possible, but fraught with problems” of the kind listed above). Peterson et al., supra note 46, at 30. It “recommended that a blind proficiency testing program be deferred for now until it is more clear how well implementation of the first two recommendations [the promulgation of guidelines for accreditation, quality assurance, and external audits of casework] are serving the same purposes as blind proficiency testing.” Id.

typing results or in the incorrect attribution of a DNA profile to an individual or to an item of evidence. Procedures should be prescribed and implemented to guard against such error.

Mislabeling or mishandling can occur when biological material is collected in the field, when it is transferred to the laboratory, when it is in the analysis stream in the laboratory, when the analytical results are recorded, or when the recorded results are transcribed into a report. Mislabeling and mishandling can happen with any kind of physical evidence and are of great concern in all fields of forensic science. Checkpoints should be established to detect mislabeling and mishandling along the line of evidence flow. Investigative agencies should have guidelines for evidence collection and labeling so that a chain of custody is maintained. Similarly, there should be guidelines, produced with input from the laboratory, for handling biological evidence in the field.

Professional guidelines and recommendations require documented procedures to ensure sample integrity and to avoid sample mixups, labeling errors, recording errors, and the like.54 They also mandate case review to identify inadvertent errors before a final report is released. Finally, laboratories must retain, when feasible, portions of the crime scene samples and extracts to allow reanalysis.55 However, retention is not always possible. For example, retention of original items is not to be expected when the items are large or immobile (e.g., a wall or sidewalk). In such situations, a swabbing or scraping of the stain from the item would typically be collected and retained. There also are situations where the sample is so small that it will be consumed in the analysis.