The proposed DUSEL science program encapsulates an initial suite of physics experiments and diverse multidisciplinary research experiments in subsurface engineering, the geosciences, and the biosciences and has the capacity for more future experiments. This chapter presents the committee’s assessment of the main physics questions to be addressed by the proposed physics experiments and of the impact of the proposed facility on research in fields other than physics. The proposed physics experiments are one or more dark matter experiments; a long-baseline experiment for the study of neutrino oscillations and proton decay that is also capable of measurements in neutrino astrophysics; a neutrinoless double-beta decay experiment; and an accelerator-based nuclear astrophysics experiment. Accordingly, the chapter assesses, in no particular order, the physics questions of dark matter; of long-baseline neutrino oscillations and neutrinoless double-beta decay in the larger context of neutrino physics and, together with proton decay, in the context of unified theories; of nuclear astrophysics; and of neutrino astrophysics. It also assesses the impact of the proposed laboratory infrastructure on research in fields other than physics, namely, subsurface engineering and the geosciences and biosciences.

To give an idea of the scale of the experiments needed to address the elements of the proposed DUSEL program, the construction cost ranges estimated by the DUSEL project during the preliminary design process were $80 million to $200 million for the dark matter experiment(s); $785 million to $1,065 million for the long-baseline neutrino and proton decay experiment; $250 million to $350 million for the neutrinoless double-beta decay experiment; $30 million to $50 million for the nuclear astrophysics facility; and $60 million to $180 million total for multiple experiments in subsurface engineering and geoscience and bioscience. The estimated incremental costs associated with efforts to detect supernovas and proton decay are not significant. Budgetary considerations and further development of the experiments will, of course, change the actual costs of these experiments.

Because both the DUSEL program and the designs for the experiments to address the critical physics questions are still evolving, the committee chose to focus its assessment on the scientific merits of the questions to be addressed rather than on the technical merits of the experiments as they are now designed. Accordingly, it did not assess the technical merits of each experiment being sited at DUSEL or the suitability of alternative sites. Similarly, the committee chose to focus its assessment on the general scientific merits of research in the fields other than physics that would be enabled by the availability of an underground research facility rather than on the specific scientific or technical merits of a particular suite of nonphysics underground experiments. In choosing to focus in this way, the committee intends its assessments to be of value to the future direction of underground research, independent of whether the DUSEL program, as presently conceived, is realized. Finally, the committee assessed the intellectual merit of the underground science of the proposed DUSEL program in the general context of frontier scientific research worldwide. It was not a purpose of this study to rank the different fields or subfields of science, or to prioritize across programs. Neither the individual science questions nor the overall scientific program were compared with those of any other particular projects or investments.

Overview

Astronomers are sure that what can be detected by telescopes represents only a small portion of the Universe; furthermore, only a small fraction (~4 percent) is made of normal matter of the type that we live with here on Earth and observe directly elsewhere. The remainder of the Universe is composed of dark matter (about 22 percent), which has mass but does not emit or absorb light, and dark energy (about 74 percent). While dark energy is best studied using astrophysical techniques, direct detection of dark matter in the laboratory is possible, and direct experimental detection of dark matter interactions would profoundly change our understanding of both the microscopic world of elementary particles and the macroscopic astrophysical world, thus bridging the very smallest and the very largest objects in the known Universe.

The first evidence for the existence of dark matter came from observations of the rate at which astronomical objects such as stars, gas clouds, and galaxies rotate. It was discovered that bodies far from the center of rotation move faster than would be predicted using the laws of gravity and the visible mass of known objects, suggesting that unseen bodies existed on a grand scale. Additional evidence for dark matter comes from cosmological observations such as the fluctuation patterns of the cosmic microwave background, and further corroboration is provided by observations of colliding galaxies where the dark matter has been imaged using gravitational lensing. Depictions of this phenomenon have captured the imagination of the general public (see Figure 3.1).

Many explanations of the composition of dark matter have been proposed and compared with experimental data. Some of the dark matter could come from unobserved dark bodies of ordinary matter, such as massive compact halo objects or molecular gas clouds. However, to understand cosmological data requires the existence of exotic dark matter, and there now is consensus that most of the dark matter consists of as-yet-undiscovered elementary particles whose nature has yet to be determined. One possibility motivated by theory is that the dark matter arises from a particle called the axion. Experimental searches for axions and indirect astrophysical detection of dark matter use techniques that do not operate underground and so will not be discussed here. A second theoretically attractive possibility is that dark matter consists of weakly interacting massive particles (WIMPs). Such WIMPs could be directly detected in underground experiments and would be the focus of an underground dark matter search program.

Scientific Landscape

Theories of elementary particle physics provide natural candidates for WIMPs. For example, in many supersymmetric models, the lightest supersymmetric particle is stable, and many of these theories naturally provide particles with masses and interaction cross sections that are consistent with astronomical and cosmological bounds on WIMP properties. There are also nonsupersymmetric theories that postulate the existence of particles with the appropriate properties. Several of these particles are being searched for in accelerator-based programs such as the Large Hadron Collider (LHC) of the European Organization for Nuclear Research (CERN). However, only the direct detection of naturally occurring WIMPs would assure that these particles, whether discovered at an accelerator or not, are in fact the source of dark matter.

Because they are elementary particles not found in the Standard Model, it is likely that, when discovered, dark matter particles will be a central ingredient in finding solutions to known problems with present particle theory. Knowledge of the mass, the interaction rate, and the number density of dark matter particles independent of any theoretical framework would allow predictions of production and annihilation rates that could be tested in future experiments. These data would also affect cosmological calculations relevant for describing the evolution of the Universe.

FIGURE 3.1 Color-coded image of colliding galaxies, with familiar matter shown in red (from x-rays) and dark matter shown in blue (modeled from weak lensing measurements). The interactions of familiar matter slow the collision, while the weakly interacting dark matter associated with each galaxy is essentially transparent and so passes through. The cluster, known as MACS J0025.4-1222, is a composite of separate exposures from the Hubble Telescope and the Chandra observatory. Astronomers say the images may shed light on the behavior of dark matter. SOURCE: x-ray image, National Aeronautics and Space Administration/Chandra X-ray Center/Stanford University/S. Allen; optical lensing image, National Aeronautics and Space Administration/Space Telescope Science Institute/University of California at Santa Barbara/M. Bradac.

Experimental Aspects

The direct detection of dark matter would involve the search for collisions between ordinary nuclei and WIMPs from the halo of our galaxy. Such observations would be difficult, since WIMPs interact rarely and the signals of the collision would be very faint. Therefore, detectors having a good likelihood of measuring such collisions would need to be large and operate deep underground to reduce backgrounds of cosmic ray origin that can mimic the signals being sought.

These searches are based on the hypothesis that dark matter consists of WIMPs with a mass of a few tens of proton masses or greater. When such a particle collides with a target it should produce a recoiling nucleus whose energy can be measured through scintillation light flashes, phonons, or ionization produced by the nucleus. Learning to address the challenges associated with these types of studies requires a series of experiments with ever-increasing target mass and improvements in methods for rejecting background signals. History teaches that each generation of detector corresponds to an increase of about an order of magnitude in target mass. In the 25 years since WIMPs were first proposed as a dark matter candidate, the sensitivity of nuclear recoil experiments has improved by a factor of more than 1 billion. Once irreducible backgrounds are encountered for a specific detector, further running in the same configuration improves sensitivity only very slowly. It is much more efficient to determine appropriate solutions to identify and account for backgrounds and then to incorporate these improvements while also increasing the target mass.
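The size of the recoil signal described above can be illustrated with a minimal kinematic sketch. For elastic scattering, the maximum recoil energy is 2μ²v²/m_N, where μ is the WIMP-nucleus reduced mass. The WIMP mass of 100 GeV, the halo speed of 220 km/s, and the choice of target nuclei below are illustrative assumptions, not values taken from this chapter.

```python
# Illustrative kinematics only; all numerical inputs are assumptions.
C_KM_S = 299_792.458   # speed of light in km/s
AMU_GEV = 0.931494     # atomic mass unit in GeV/c^2


def max_recoil_energy_kev(m_wimp_gev, mass_number, v_km_s=220.0):
    """Maximum nuclear recoil energy (keV) for elastic WIMP-nucleus scattering.

    Uses E_max = 2 * mu^2 * v^2 / m_N, with mu the reduced mass.
    """
    m_nucleus = mass_number * AMU_GEV              # approximate nuclear mass
    mu = m_wimp_gev * m_nucleus / (m_wimp_gev + m_nucleus)
    beta = v_km_s / C_KM_S                         # speed in units of c
    return 2.0 * mu**2 * beta**2 / m_nucleus * 1.0e6   # GeV -> keV


if __name__ == "__main__":
    # Hypothetical 100 GeV WIMP on three common target nuclei.
    for name, a in [("Ar", 40), ("Ge", 73), ("Xe", 131)]:
        print(f"{name}: {max_recoil_energy_kev(100.0, a):.1f} keV")
```

Under these assumptions the recoils are only tens of keV, which is why low thresholds and strong background rejection matter so much for these detectors.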

Past experiments are referred to as generation zero (G0) and ongoing experiments as generation one (G1). G1 experiments typically operate with tens of kilograms of target mass and are reaching much better background reduction and sensitivity than G0 experiments. Experience with the targets and the handling of backgrounds have informed next-generation designs, and G2 experiments are currently under development and installation. These experiments will have hundreds of kilograms of target mass, and the following generation, G3, will have even greater target masses, 0.5 to multiple tons. The experiments considered for DUSEL are in the G3 category.

The dark matter experiments summarized in Table 3.1 illustrate the current and future generations of detector and techniques. U.S. scientists historically have been heavily involved in this research and are expected to continue their involvement. The compelling nature of the science and the high discovery potential make it important that they do so and that opportunities exist for discoveries to be made in the United States.

The strategy for experimental background rejection depends on which of the three signatures currently used to observe the nuclear recoil is chosen: scintillation, phonons, or ionization. Some experiments use a “single signature,” including the shape and localization of that signal. These include single-phase noble liquid (xenon, argon) scintillation experiments and experiments exploiting the bubble chamber concept, where ionization in a supersaturated liquid creates bubbles that can be detected visually or acoustically or both.

Other experiments, including most of the leading large experiments, use combinations of two signatures to reinforce background rejection: (1) light/ionization together with phonons or heat in crystals at millikelvin temperatures and (2) light/ionization in noble liquid detectors. Experiments of the first kind use germanium or scintillating crystals; those of the second are double-phase ionization/scintillation xenon or argon experiments, so called because they operate under conditions where the gas and liquid phases coexist, enabling amplification of the weak ionization signal in the gas. Research and development are under way on direction-sensitive detectors using low-pressure-gas “time projection chambers.” Debates regularly surface in the dark matter community about whether certain experiments have properly excluded or included claims of positive signals. To address these uncertainties about signals, it is important that a single experiment be able to collect multiple complementary signals and that multiple experiments using different nuclear targets be conducted.

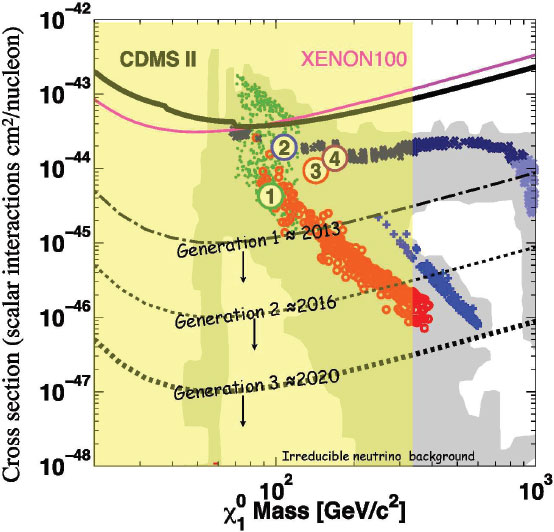

The most recent results over the WIMP mass range of 10-1,000 GeV exclude cross sections approaching 10⁻⁴⁴ cm² per nucleon for the simplest models (see Figure 3.2). However, the DAMA/Libra experiment has a long-standing observation with an annual modulation of the event rate that is consistent with a WIMP having a mass of less than 10 GeV. These results have persisted over 7 years of data taking. The cross section indicated by the DAMA/Libra experiment is not, however, consistent with limits from the CDMS and XENON-100 experiments in most WIMP models. There are also measurements that may indicate an excess above backgrounds at very low WIMP masses, but this signal is not as well established as the DAMA/Libra observation. Finally, a number of cosmic ray experiments (PAMELA and ATIC) report excess electron or positron signals that could be from WIMP annihilation in our galaxy. It is somewhat complicated to find dark matter models that reconcile these results with the charged cosmic ray data from the Fermi/LAT experiment, and several research groups have pointed out that conventional astrophysical sources of positrons could account for the putative PAMELA/ATIC signal. The theoretical community has been very active in trying to explain some or all of these results and has developed new models leading to new signals to search for at the LHC, in B-factory data, and in electron-scattering experiments. At some level, dark matter imposes itself on every branch of particle physics. To keep all these communities from confusion as claims of discovery are made, definitive conclusions must be reached, and this will necessitate more than one detector that uses more than one technique.

TABLE 3.1 Plans of WIMP Search Collaborations Using Nondirectional Detectors Around the World

| Country/Region | Current Generation (G1): Gross Mass | G1: Current Status | Generation 2 (G2): Gross Mass | G2: Current Status | Generation 3 (G3): Gross Mass | G3: Current Status |
|---|---|---|---|---|---|---|
| United States | LUX 350 kg Xe Sanford Lab | Assembly 2011 Install | LZS 1.5-3 tons Xe Sanford Lab | Design Same water tank as LUX | LZD 20 tons Xe DUSEL | S4, R&D 2017 |
| U.K./Portugal/Russia | ZEPLIN III 10 kg Xe Boulby, U.K. | Running (2009-2010) | | | | |
| United States | Darkside-50 50 kg Ar LNGS | Design DAr under procurement 2011-2012 | 1 ton | Design Same shield as DarkSide-50 | MAX 6 tons Xe 20 tons DAr DUSEL | S4, R&D 2017 Install |
| United States/Europe/China | XENON100 80 kg Gran Sasso | Running | XENON1T 2.4 tons Xe | Design 2012 Install | | |
| United States/Canada | SCDMS 10 kg Ge Soudan | Construction 2011 Install | SCDMS 100 kg Ge SNOLAB | R&D 2014 Install | GEODM 1.5 tons DUSEL | S4, R&D 2018 |
| United States | COUPP 60 kg CF3 SNOLAB | Construction NUMI test 2010 | 500 kg | 2011 Design 2013 Install | 16 ton scale | S4 R&D |
| Canada | PICASSO 2.6 kg SNOLAB | Running | PICASSO II 25 kg | 2010/11 Install | PICASSO III >500 kg | 2012/13 Install |
| United States/Canada | MiniCLEAN 500 kg Ar | Construction 2011 Install | DEAP-3600 3.6 tons | Funded 2012 Install | CLEAN 50 tons Ar/Ne | Planning R&D |
| Europe | Edelweiss Now 3 kg → 24 kg Ge 2011 Modane | Running 24 kg funding secured | EURECA 100 kg Ge interleaved Ge/scintillator, Modane | Active R&D 2013 Install | EURECA 1 ton Ge/Scintillator, LS Modane extension Merging of CRESST and Edelweiss | Planning 2016 |
| Europe | CRESST 5 kg of CaWO4 Gran Sasso | Running | | | | |
| Europe | ArDM 800 kg Ar Canfranc | Construction 2011 Install | | | | |
| Europe/United States | WARP 140 kg Ar Gran Sasso | Running | | | | |
| Japan | XMASS 800 kg Xe Kamioka | Installation Running 2010 | XMASS II 5 tons | R&D 2014 Install | XMASS III 10 tons | Planning 2016 |
| China | JinPing lab Ge and/or Xe | Planning | 100 kg | 2015 R&D | >1 ton | 2020 |

NOTE: All masses are the active masses of the central detectors. DAr, Ar depleted in 39Ar; LUX, Large Underground Xenon experiment; LZS, 1,500 to 3,000 kg liquid xenon detector; LZD, 20-ton liquid xenon detector; S4, NSF Solicitation 4; ZEPLIN III, two-phase Xe detector; Darkside, Depleted Argon [K]ryogenic Scintillation and Ionization Detector; MAX, Multiton Argon and Xenon detector; LNGS, Laboratori Nazionali del Gran Sasso; XENON100, Xenon 100-kg dark matter experiment; XENON1T, Xenon 1 ton dark matter experiment; SCDMS, Soudan Cryogenic Dark Matter Search; GEODM, Germanium Observatory for Dark Matter; COUPP, Chicagoland Observatory for Underground Particle Physics; MiniCLEAN, Mini-Cryogenic Low Energy Astrophysics with Noble liquids experiment; DEAP-3600, Dark matter Experiment using Argon Pulse-shape discrimination; CLEAN, Cryogenic Low Energy Astrophysics with Noble liquids experiment; EURECA, European Underground Rare Event Calorimeter Array; CRESST, Cryogenic Rare Event Search with Superconducting Thermometers; ArDM, Argon Dark Matter experiment; WARP, Wimp Argon Program experiment; XMASS, Xenon Dark Matter Search Experiment. SOURCE: Adapted from B. Sadoulet, University of California at Berkeley, “Dark Matter at DUSEL,” Presentation to the committee on December 14, 2010.

In the next 4 to 6 years, with the deployment of the G2 experiments such as MiniCLEAN, DEAP-3600, and LUX, and as new results from XENON-100 become available, sensitivities can be expected to increase by another order of magnitude.

Two of the approaches under consideration for G3 detectors are 1-ton phonon-mediated low-temperature detectors and 1-ton or multiton noble liquids. U.S. scientists are playing leadership roles using both techniques, and contacts between this country and European groups are well developed. G3 experiments will push the cross section sensitivity below 10⁻⁴⁷ cm² per nucleon. Sensitivity near 10⁻⁴⁸ cm² per nucleon approaches a new background regime at which solar neutrino coherent scattering becomes important. This solar neutrino background is irreducible, and to progress past this regime, statistical background subtraction or directional detection becomes necessary, either of which represents a large step in difficulty. Thus, supporting G3 experiments is a natural goal for the next decade. On a longer timescale, large directional detectors may be required. Underground access for detector development is essential because background signals at the surface make it impossible to accurately assess performance aboveground. Post-G3 and large directional detectors would likely require large caverns at great depth.

FIGURE 3.2 WIMP current limits (solid top curves) and sensitivity and goals (numbered) for the next 3 years; Generation 1 (G1), with results in 2013; Generation 2 (G2), with results in about 2016; and Generation 3 (G3), with results in about 2020. The shaded regions represent the expectation of several minimum supersymmetry models. SOURCE: Courtesy of Bernard Sadoulet, University of California at Berkeley, and Richard Gaitskell, Brown University.

Once a definitive dark matter signal is established, the next goal would be to observe the annual signal modulation as Earth’s velocity relative to the dark matter halo changes owing to Earth’s motion around the Sun. Such velocity effects, largest at the threshold energy of the detector, would take several years of operation to convincingly establish. An annual modulation signal would be compelling evidence and within the scope of a G2 or G3 experiment.
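The size of the velocity change behind the annual modulation can be sketched with a simple cosine model. The Sun's speed through the halo, the projection factor for Earth's orbital velocity, and the date of maximum speed used below are all assumed round numbers chosen for illustration; they are not taken from this chapter.

```python
import math

# All constants are illustrative assumptions.
V_SUN = 232.0     # km/s, assumed speed of the Sun through the halo
V_ORB = 29.8      # km/s, Earth's orbital speed around the Sun
COS_TILT = 0.5    # assumed projection of the orbital velocity on the Sun's motion
T0_DAY = 152.5    # assumed day of year of maximum speed (early June)
YEAR_DAYS = 365.25


def earth_speed(day_of_year):
    """Approximate speed of Earth relative to the halo, in km/s."""
    phase = 2.0 * math.pi * (day_of_year - T0_DAY) / YEAR_DAYS
    return V_SUN + V_ORB * COS_TILT * math.cos(phase)


def fractional_swing():
    """Half of the peak-to-peak speed change divided by the mean speed."""
    v_max = earth_speed(T0_DAY)
    v_min = earth_speed(T0_DAY + YEAR_DAYS / 2.0)
    return (v_max - v_min) / (v_max + v_min)


if __name__ == "__main__":
    print(f"speed swing: +/-{100.0 * fractional_swing():.1f}% of the mean")
```

With these inputs the speed varies by only a few percent over the year, which is consistent with the statement that several years of operation would be needed to establish the effect convincingly.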

Beyond annual modulation, a detector with directional sensitivity could potentially observe a daily modulation of the direction of dark matter at all energies due to the finite rotational velocity at the surface of the Earth, thereby opening the door to dark matter astronomy. Directional detection would give information about the velocity distribution of WIMPs and would begin to discriminate between models of the dark matter halo. Directional detectors would rely on detecting the nuclear recoil in low-pressure gas and so represent a new technology. They would require large caverns and are not expected to be deployed before 2024.

Summary

The predominant mass in the Universe is dark matter. Demonstrating that dark matter consists of elementary particles would be a major discovery. Understanding the nature and composition of these particles is a major scientific challenge for our time.1

The direct detection of dark matter would provide a crucial experimental connection between particle physics and cosmology. To be definitive, dark matter signals would need to be significantly above the background and would need to come from different experiments. Concurrence between experiments will be essential: Several experiments have already claimed dark matter signals, but these have not been confirmed by other experiments. The program in dark matter detection will by necessity involve a number of G2 experiments that will coalesce into a smaller number of highly sophisticated and massive G3 detectors. Based on the history of leadership by U.S. physicists on experiments using all detection modes, it is expected that there will be U.S. involvement in more than one G3 experiment, and given the importance of this science and the discovery potential, it would be desirable for the United States to be a leader in at least one. Once dark matter has been observed, a major program for understanding the properties of the new particles will be required.

Conclusion: The direct detection dark matter underground experiment is of paramount scientific importance and will address a crucial question upon whose answer the tenets of our understanding of the Universe depend. This experiment would not only provide an exceptional opportunity to address a scientific question of paramount importance, it would also have a significant positive impact upon the stewardship of the particle physics and nuclear physics research communities and would have the United States assume a visible leadership role in the expanding field of underground science. In light of the leading roles played by U.S. scientists in the study of dark matter, together with the need to build two or more large experiments for this area, U.S. particle and nuclear physicists are well positioned to assume leadership roles in the development of one direct detection dark matter experiment on the ton- to multiton scale. While installation of such a U.S.-developed experiment in an appropriate foreign facility would significantly benefit scientific progress and the research communities, there would be substantial advantages to the communities if this experiment could be installed within the United States, possibly at the same site as the long-baseline neutrino experiment.

___________________

1 NRC. 2006. Revealing the Hidden Nature of Space and Time. Washington, D.C.: The National Academies Press, p. 13.

Tests of Grand Unification Theories

The three other major physics experiments proposed for DUSEL (neutrino oscillations, neutrinoless double-beta decay, and proton decay) are among the most promising tests of theories that seek to provide a unified description of the forces.2 Following a general overview of the nature of grand unification theories, these three experiments and the roles they might play in resolving outstanding questions are described.

We are able to observe the Universe because it contains important ingredients that are the stuff of ordinary matter: protons and neutrons, which are composites of quarks, and electrons. Whatever the history of the Universe, these particles were left behind and are stable enough to account for what is visible to us. Most of the properties and interactions of the visible matter made up of these particles can be accounted for by current particle theories. However, significant inconsistencies within existing theories and gaps in our knowledge remain. The remaining major physics experiments proposed for DUSEL should help fill those gaps and address those inconsistencies.

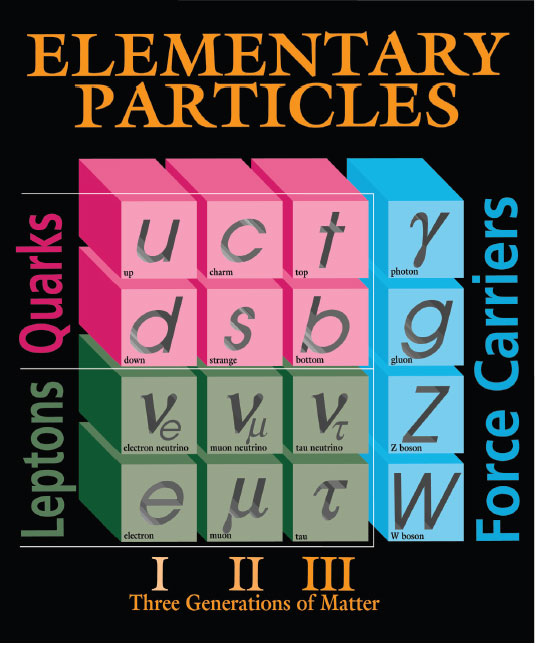

What is now called the Standard Model evolved throughout the twentieth century and aimed to describe the physics of these elementary particles and how they interact. In the Standard Model there are two kinds of fundamental fermion-type particles, as shown on the left side of Figure 3.3. They divide into six flavors of quarks and six types of leptons, three with a charge (the electron, the muon, and the tau) and their associated neutrinos. The quarks are strongly interacting fundamental particles that combine to make up the baryons (protons and neutrons); the leptons do not strongly interact. Each particle type is associated with a conserved quantum number. Quarks carry the baryon number, and baryon number conservation guarantees the stability of the proton and many nuclei. However, quarks also come in different flavors, and weak nuclear interactions can change one flavor of quark into another. The leptons carry lepton number L, and until the 1990s and the discovery of neutrino oscillations, experimental observations were consistent with there being three separate conservation laws associated with the three lepton flavors: electron, muon, and tau lepton numbers.

___________________

2 The dark matter experiment discussed in the preceding section also has implications for tests of grand unified theories by way of the information it might provide on supersymmetry.

FIGURE 3.3 A complete list of the known fundamental particles as of April 2011. The twelve particles on the left are the fermions (the building blocks of matter), while those on the right (in blue) are the bosons (the force carriers). The charged leptons (electron, muon, and tau) are in the bottom row and their associated neutrinos in the row above. The quarks (red) engage in strong, or nuclear, forces while the leptons (green) do not. All these particles engage in weak nuclear forces. The neutrinos are the only fermions without electric charge and so are the only matter particles that do not engage in electromagnetic interactions. Matter in the “visible” Universe consists of quarks (inside protons, neutrons, and other nuclei) and electrons, either alone or bound in atoms. Much of this matter, along with the neutrinos, is stable. The list does not contain the unknown stable particles comprising the “invisible” dark matter. SOURCE: Courtesy of Fermilab.

In addition to the lepton and quark particles, there are four known fundamental forces—strong, electromagnetic, weak, and gravity. In any theory consistent with relativity and quantum mechanics, each force is associated with a boson-type particle, shown in the fourth column of Figure 3.3, which is the carrier of the force. In the Standard Model, the weak nuclear and electromagnetic forces are unified into the so-called electroweak interactions. The electromagnetic and weak interactions appear to be very different at low energy only because of the mass differences between their respective force carriers: The carrier of the electromagnetic force (the photon) is massless, while the carriers of the weak force (weak bosons) are approximately 100 times heavier than the proton.

A central question in particle physics is whether there are further unifications of forces. In the 1970s, the first grand unified theories (GUTs) unified the strong and electroweak interactions. From the broad class of GUTs emerges a unified picture of the quarks (strongly interacting fundamental particles) and the leptons that shares many similarities with the Standard Model. However, the GUTs differ from the Standard Model in several significant ways. For example, they predict that the proton is unstable and that there are bosons whose interactions can change a quark into a lepton or into an antiquark. Furthermore, unlike the Standard Model, in which neutrinos are massless, most GUTs predict that neutrinos would have tiny masses and would be their own antiparticles (Majorana-type particles) and that neither lepton number nor lepton flavor would be conserved. In GUTs, baryon and lepton number violations are associated with the exchange of new, extremely heavy force carriers, with masses ~10¹⁵ times that of the proton, so that a process like proton decay exists but would be extremely rare. While direct production of such heavy force carriers will not be possible in any conceivable high-energy collider, proton decay may well be observable.
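How rare proton decay would be can be gauged with a rough dimensional estimate, τ ~ M_X⁴ / (α² m_p⁵) in natural units, where M_X is the mass of the heavy force carrier. This is only an order-of-magnitude sketch: the unified coupling of 1/40 is an assumed value, and actual predictions depend strongly on the specific model.

```python
# Order-of-magnitude sketch; alpha_gut is an assumed coupling strength.
HBAR_GEV_S = 6.582e-25       # reduced Planck constant in GeV*s
SECONDS_PER_YEAR = 3.156e7
PROTON_MASS_GEV = 0.938


def proton_lifetime_years(m_x_gev, alpha_gut=1.0 / 40.0, m_p_gev=PROTON_MASS_GEV):
    """Dimensional estimate tau ~ M_X^4 / (alpha^2 * m_p^5), converted to years."""
    tau_inverse_gev = m_x_gev**4 / (alpha_gut**2 * m_p_gev**5)   # units of 1/GeV
    return tau_inverse_gev * HBAR_GEV_S / SECONDS_PER_YEAR


if __name__ == "__main__":
    # Force carrier with 10^15 times the proton mass, as in the text.
    m_x = 1.0e15 * PROTON_MASS_GEV
    print(f"estimated lifetime: {proton_lifetime_years(m_x):.1e} years")
```

Because the estimate scales as the fourth power of M_X, a factor of 2 in the carrier mass changes the lifetime by a factor of 16, which is one reason very large detectors are needed to probe a useful range of models.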

Cosmology also presents strong arguments in favor of many of the conclusions drawn from GUTs: that the proton is unstable, that neutrinos have mass, and that lepton flavor violation should be different for neutrinos and antineutrinos. The present day excess of matter over antimatter translates to an excess of quarks over antiquarks in the early Universe of about one part in 10⁸. In principle this excess could be an initial condition of the Universe; however, in standard cosmological explanations, inflationary expansion in the extremely early Universe would probably have removed any such initial excess. The Soviet nuclear physicist Andrei Sakharov pointed out that fundamental physics could produce a tiny excess of matter over antimatter during the early Universe provided three conditions are met: baryon number is not conserved, charge conjugation and charge parity (CP) are not symmetries of nature (nature distinguishes between matter and antimatter), and the early Universe went through a period when it was out of thermal equilibrium. If the first two conditions are met, the proton should decay.

GUTs offer a beautiful explanation for the origin of the asymmetry between matter and antimatter, which is deeply connected with CP violation in neutrino oscillations, tiny neutrino masses, and neutrino flavor change. In GUTs, the neutrino masses are inversely proportional to the masses of very heavy particles, so the tiny size of the neutrino masses suggests the existence of particles that are too heavy to be produced today but that could have been produced in the early Universe. Such particles decay out of equilibrium into leptons, violating CP and lepton number conservation, thus producing an excess of leptons over antileptons (a process known as “leptogenesis”). Anomalous electroweak processes then convert some of the excess leptons into quarks (“baryogenesis”). Thus CP violation in neutrino physics and the Majorana nature of neutrinos could well be linked to the origin of the matter excess in the Universe.
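The inverse relation between the neutrino masses and the heavy-particle masses can be illustrated with the simplest "seesaw" scaling, m_ν ~ m_D² / M. The electroweak-scale mass m_D of 100 GeV and the heavy masses M listed below are assumed values for illustration, not numbers given in this chapter.

```python
def seesaw_mass_ev(m_dirac_gev, m_heavy_gev):
    """Light neutrino mass from the scaling m_nu ~ m_D^2 / M, returned in eV."""
    return m_dirac_gev**2 / m_heavy_gev * 1.0e9   # GeV -> eV


if __name__ == "__main__":
    m_dirac = 100.0   # GeV, assumed electroweak-scale mass
    for m_heavy in (1.0e12, 1.0e14, 1.0e15):   # GeV, assumed heavy masses
        print(f"M = {m_heavy:.0e} GeV -> m_nu ~ {seesaw_mass_ev(m_dirac, m_heavy):.3g} eV")
```

In this sketch, a heavy mass of about 10¹⁴ GeV yields a neutrino mass of roughly a tenth of an electron volt, showing how tiny neutrino masses can point to particles far too heavy to produce in any accelerator.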

Some of the inconsistencies between the Standard Model and GUTs have been resolved. For example, it is now known (and described in the following section) that, contrary to the tenets of the Standard Model but consistent with GUTs, neutrinos have small masses and that lepton numbers are not conserved, as neutrinos oscillate between types. However, many other inconsistencies have not been resolved, and gaps remain in our knowledge of the characteristics of these most fundamental of particles. The studies proposed for DUSEL are highly promising experimental approaches for testing many of these outstanding questions—do neutrinos oscillate, are neutrinos their own antiparticles, and do protons decay? These proposed studies are discussed in the following sections.

Although neutrinos were first observed experimentally more than 50 years ago, their properties are less well understood than those of other elementary particles, in part because they have no electric charge and interact very weakly, making them difficult to detect. Like other matter particles, neutrinos have spin, a form of angular motion, but very small masses, weighing many million times less than any other matter particles. Neutrinos come in three different generations, called flavors, and each neutrino shares a flavor quantum number with a charged lepton partner: the electron, the muon, and the tau (shown below the neutrinos in the bottom row of Figure 3.3). While the weakness of neutrino interactions makes them difficult to observe, it also makes them ideal probes of certain astrophysical processes (see the section “Neutrino Astrophysics”), and the study of tiny masses may point to physics at far higher energies than could ever be reached with a terrestrial particle accelerator.

An interesting property of neutrinos is that a neutrino born with one flavor will spontaneously transform into another flavor as it moves through space. This phenomenon, known as “neutrino oscillation,” has only been known for about 15 years. A similar phenomenon among quarks has been studied extensively, most recently at the two B-factories, one in the United States and the other in Japan. Scientific interest in such oscillations arises because they provide a mechanism whereby particles and their antiparticles can interact differently. Such difference between particle and antiparticle behavior is known as “CP violation” and is a key component of theories attempting to explain why the Universe is made primarily of matter, with very little antimatter. The amount of CP violation among quarks is insufficient to allow these theories to explain our matter-dominated Universe. However, CP violation involving neutrinos is an attractive theoretical way to explain this matter/antimatter imbalance through a process called leptogenesis, which was discussed in the preceding section.

Another interesting property of neutrinos is their relationships with antineutrinos. For each particle species, there is a corresponding antiparticle species. When particles have a charge, their antiparticle partners have the opposite charge, making these particles and antiparticles distinct. For particles like neutrinos, which have no net charge, it will have to be determined experimentally whether the particle and the antiparticle are different. In the Standard Model of particle physics, neutrinos have a lepton number and antineutrinos have a lepton number of the opposite sign. Since lepton number is conserved in the Standard Model, neutrinos and antineutrinos are distinguishable and their differences can be studied through their interactions with matter. In many theories that extend the Standard Model, however, lepton number is not conserved. In such theories, it is possible for neutrinos to be their own antiparticles, a property that would make them Majorana particles. The most promising experimental sign of neutrinos being Majorana particles would be the observation of a rare nuclear decay called neutrinoless double-beta decay. This fundamentally important process has been unsuccessfully sought for many decades but is expected to become observable in the next decade.

We are entering an era when the accurate determination of the properties of neutrinos needed for a deeper understanding of particle physics will be possible. There are still anomalies in the data and huge gaps in our knowledge, making this a very exciting time to gather largely unexplored information about this perplexing group of particles. The experiments considered here address the most critical open questions in neutrino physics. Because the answers that emerge will have major impacts on cosmology as well as on particle physics, this work represents an important scientific opportunity for the U.S. physics community.

The Nature of Neutrinos—Oscillations (Long-Baseline Neutrino Experiment)

Electron neutrinos are the main product of the thermonuclear reactions that power the Sun. It has been about 40 years since Ray Davis and his collaborators first discovered evidence that their flux at Earth is substantially less than predicted by the best solar models of the time.3 S.M. Bilenky and Bruno Pontecorvo suggested that electron neutrinos change flavor in transit and become muon or tau neutrinos.4 Such neutrino oscillations require that neutrinos have mass. The ideas of both massive and flavor-changing neutrinos were revolutionary, and because the experimental evidence was not strong, the Standard Model of particle physics was constructed with massless and flavor-conserving neutrinos. Only within the last 15 years has the experimental evidence for neutrino oscillations become convincing enough for the scientific community to accept that they are a fact of nature.

To illustrate the most important phenomenological features of mixing, the case when only two neutrinos are involved will be considered. In a two-flavor world, the probability, $P$, that one flavor (say, $\nu_e$) will appear in a pure beam that was initially of another flavor (say, $\nu_\mu$) is given as

$$P = \sin^2(2\theta)\,\sin^2\!\left(1.27\,\Delta m^2\,L/E\right)$$

where $\theta$ is the mixing angle, $\Delta m^2$ is the difference between the squares of the masses of the two neutrinos (in eV$^2$), $L$ is the distance (in kilometers) from the source to the detector, and $E$ is the energy of the neutrinos (in GeV). Of course the original flavor disappears at this same rate, so the total number of neutrinos remains constant.

This formula suggests two types of experiments: disappearance experiments, where a changing flux of the original flavor is observed as a function of distance or energy; and appearance experiments, where neutrinos of a different flavor appear in the beam. Note that the experimentally controllable parameters, the distance from the source and energy of the neutrinos, appear only in the ratio $L/E$. It is also noteworthy that oscillation experiments cannot determine the absolute masses of neutrinos since the probability $P$ depends only on the difference between the squared masses. This formula for $P$ also indicates how to measure the oscillation parameters: the amplitudes of the measured oscillations determine the mixing angles, while the variations with either distance or energy determine the mass-squared differences.

___________________

3 See, for example, R. Davis, D.S. Harmer, and K.C. Hoffman. 1968. Search for neutrinos from sun. Physical Review Letters 20: 1205.

4 S.M. Bilenky and B. Pontecorvo. 1977. Lepton mixing and neutrino oscillations. Physics Review 41: 225.
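The two-flavor formula can be evaluated directly. The following is a minimal sketch, not part of the committee's analysis; the function names and the sample inputs (maximal mixing, an atmospheric-scale mass-squared difference, and a 1,250 km baseline) are assumptions chosen only for illustration.

```python
import math


def appearance_probability(sin2_2theta, dm2_ev2, baseline_km, energy_gev):
    """Two-flavor appearance probability: sin^2(2 theta) * sin^2(1.27 dm2 L / E)."""
    # With dm2 in eV^2, L in km, and E in GeV, the phase is dimensionless.
    phase = 1.27 * dm2_ev2 * baseline_km / energy_gev
    return sin2_2theta * math.sin(phase) ** 2


def survival_probability(sin2_2theta, dm2_ev2, baseline_km, energy_gev):
    """Probability that the original flavor is still present (disappearance channel)."""
    return 1.0 - appearance_probability(sin2_2theta, dm2_ev2, baseline_km, energy_gev)


# Hypothetical inputs, chosen only to illustrate the L/E dependence.
SIN2_2THETA = 1.0     # assumed maximal mixing
DM2_EV2 = 2.35e-3     # eV^2
BASELINE_KM = 1250.0  # km

for energy_gev in (1.0, 2.4, 5.0):
    p_app = appearance_probability(SIN2_2THETA, DM2_EV2, BASELINE_KM, energy_gev)
    p_sur = survival_probability(SIN2_2THETA, DM2_EV2, BASELINE_KM, energy_gev)
    print(f"E = {energy_gev:.1f} GeV: appearance {p_app:.3f}, survival {p_sur:.3f}")
```

Because only the ratio L/E enters, doubling both the baseline and the energy leaves the probabilities unchanged, and the amplitude of the oscillation never exceeds the value of sin²(2θ).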

An important feature was added when Stanislav Mikheyev and Alexei Smirnov, building on the earlier work of Lincoln Wolfenstein, realized that interactions of the neutrinos with electrons in the Sun (or even in Earth) could lead to a substantial modification of the oscillation probabilities, resonantly making amplitudes of oscillation either larger or smaller than otherwise. These matter, or Mikheyev-Smirnov-Wolfenstein (“MSW”), effects can be important in understanding data and are very useful in that they allow the neutrino masses to be ordered.

With the three known flavors of neutrinos, the oscillation phenomena are more complicated but also much richer in possibilities. The formulas governing the three flavor mixing are well understood and contain a number of independent parameters that govern neutrino flavor change and propagation:

• $\Delta m^2_{21}$, the mass-squared difference primarily associated with solar neutrino disappearance.5 It has been measured thus far to be $|\Delta m^2_{21}| = (7.59 \pm 0.20) \times 10^{-5}$ eV$^2$.

• $\Delta m^2_{32}$, the larger mass-squared difference primarily associated with atmospheric muon neutrino disappearance. It has been measured thus far to be $|\Delta m^2_{32}| = (2.35^{+0.11}_{-0.08}) \times 10^{-3}$ eV$^2$.

• $\theta_{12}$, the parameter best known for governing the disappearance of solar electron neutrinos; sometimes known as the solar mixing angle. It has been measured thus far to be large: $\sin^2 2\theta_{12} = 0.87 \pm 0.03$.

• $\theta_{23}$, the parameter primarily known for its role in the disappearance of muon neutrinos. Because it was initially discovered in atmospheric neutrino experiments, it is sometimes known as the atmospheric mixing angle. It has been measured thus far to be consistent with the maximum possible value: $\sin^2 2\theta_{23} > 0.91$.

• $\theta_{13}$, a still unknown parameter. It governs the probability that propagation involving the larger $\Delta m^2_{13}$ associated with atmospheric neutrinos will involve electron flavor change. Its upper limit is $\sin^2 2\theta_{13} < 0.15$.

• $\delta$, the parameter representing a phase that governs the CP-violating difference between neutrino and antineutrino flavor change; its value is completely unknown.

• The hierarchy, or ordering, of the neutrino masses is contained in the signs of the linear mass differences. The sign of $\Delta m^2_{21}$ is known, so the sign of $\Delta m^2_{32}$ completely determines this ordering. The latter sign, and therefore the overall hierarchy, is completely unknown.

___________________

5 For comparison, the mass of the electron, the lightest of the charged leptons, is $0.511 \times 10^6$ eV.

• The effects of matter that produce resonant MSW oscillations are contained in additional calculable parameters.
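To make the quoted values easier to compare, the sketch below converts the reported values of sin²2θ into mixing angles and forms the ratio of the two mass-squared differences. The dictionary layout, key names, and function name are illustrative assumptions; the conversion takes the solution with θ no larger than 45 degrees, since sin²2θ alone cannot distinguish θ from 90° − θ.

```python
import math

# Central values quoted in the list above; key names are hypothetical.
MEASURED = {
    "dm2_21_ev2": 7.59e-5,       # solar mass-squared difference, eV^2
    "dm2_32_abs_ev2": 2.35e-3,   # atmospheric mass-squared difference (magnitude), eV^2
    "sin2_2theta12": 0.87,       # solar mixing
    "sin2_2theta13_limit": 0.15  # upper limit
}


def mixing_angle_deg(sin2_2theta):
    """Return theta in degrees, taking the solution with theta <= 45 degrees."""
    return 0.5 * math.degrees(math.asin(math.sqrt(sin2_2theta)))


theta12_deg = mixing_angle_deg(MEASURED["sin2_2theta12"])
theta13_limit_deg = mixing_angle_deg(MEASURED["sin2_2theta13_limit"])
splitting_ratio = MEASURED["dm2_32_abs_ev2"] / MEASURED["dm2_21_ev2"]

print(f"theta12 is about {theta12_deg:.1f} degrees")
print(f"theta13 is below about {theta13_limit_deg:.1f} degrees")
print(f"|dm2_32| / dm2_21 is about {splitting_ratio:.0f}")
```

The large ratio between the two mass-squared differences is what allows the solar and atmospheric oscillations to be treated approximately as separate two-flavor problems.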

This picture of three elementary particles of very tiny mass evolving into and out of each other has the ring of science fiction. Indeed, it took some time and extraordinary evidence to be accepted by the scientific community. How is it known that neutrino oscillations do occur? The data summarized above were gathered by underground experiments of the type assessed in this report.

The first experimental indications that neutrinos oscillate came from the experiment in the Homestake mine by Davis, followed by Kamiokande-II’s direct detection of solar neutrinos and by the gallium solar neutrino experiments SAGE and GALLEX, which observed solar neutrinos from the fundamental proton-proton fusion reaction for the first time. The final incontrovertible evidence came from experiments at SNO that definitively confirmed changes in neutrino flavor. Meanwhile, measurements at Super-Kamiokande in 1998 of neutrinos created in Earth’s atmosphere (originally considered a background to proton decay experiments) showed that muon neutrinos arising from cosmic ray interactions in the atmosphere (so-called atmospheric neutrinos) disappear as a function of distance.

The study of antineutrinos from reactors has also been important, starting with the first observations of neutrinos in the 1950s by Reines and Cowan and leading through a long series of experiments to KamLAND. By observing electron antineutrino disappearance from all the reactors in Japan, this experiment eliminated any credible alternative to neutrino oscillations as the explanation of the solar and atmospheric neutrino effects.

Just as in more conventional particle physics, where initial observations of new particles in cosmic rays gave way to the controlled creation of new particles in accelerators, precision observations of neutrino oscillations must move from the now exploited natural sources to controlled high-flux accelerator-produced neutrinos. In this way, the K2K and MINOS experiments have already provided observations of muon neutrino disappearance, the NOvA experiment will operate in the next few years, and the new T2K experiment is just beginning to produce results in a search for electron neutrino appearance in a muon neutrino beam.

Our current knowledge of some of these neutrino oscillation parameters, arising from experiments performed to date, is briefly summarized in the above list. The mixing angles show a curious mix: Two are rather large and the third ($\theta_{13}$) is currently consistent with zero. While the sign of the very small $\Delta m^2_{21}$ has been determined by matter effects in the Sun, the sign of the other much larger mass-squared difference ($\Delta m^2_{32}$) is unknown. The overall mass hierarchy is therefore unknown. The CP phase parameter, $\delta$, has not so far been determined by any experiment. Measurements of these three ($\theta_{13}$, $\delta$, and the sign of $\Delta m^2_{32}$) are major goals of future experiments.
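The consequence of the unknown sign can be made concrete. The sketch below, which is an illustration rather than anything taken from the report, builds the two possible mass spectra from the measured mass-squared differences for an assumed value of the lightest neutrino mass. The function name and the choice of a massless lightest neutrino are assumptions; oscillation data do not fix the absolute scale.

```python
import math

DM2_21_EV2 = 7.59e-5      # measured, sign known (m2 heavier than m1)
DM2_32_ABS_EV2 = 2.35e-3  # measured magnitude, sign unknown


def mass_spectrum_ev(lightest_ev, hierarchy):
    """Return (m1, m2, m3) in eV for an assumed lightest mass and hierarchy."""
    if hierarchy == "normal":
        # Positive sign: m3 is the heaviest state.
        m1 = lightest_ev
        m2 = math.sqrt(m1 ** 2 + DM2_21_EV2)
        m3 = math.sqrt(m2 ** 2 + DM2_32_ABS_EV2)
    elif hierarchy == "inverted":
        # Negative sign: m3 is the lightest state.
        m3 = lightest_ev
        m2 = math.sqrt(m3 ** 2 + DM2_32_ABS_EV2)
        m1 = math.sqrt(m2 ** 2 - DM2_21_EV2)
    else:
        raise ValueError("hierarchy must be 'normal' or 'inverted'")
    return m1, m2, m3


for hierarchy in ("normal", "inverted"):
    m1, m2, m3 = mass_spectrum_ev(0.0, hierarchy)
    print(f"{hierarchy}: m1 = {m1:.4f} eV, m2 = {m2:.4f} eV, m3 = {m3:.4f} eV")
```

In the normal case one state sits near 0.05 eV above two light ones; in the inverted case two nearly degenerate states sit near 0.05 eV above a single light one.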

Four Critical Questions on the Nature of Neutrino Oscillations

The above discussion leads to four critical questions on neutrino oscillations that could be addressed by the long-baseline neutrino oscillation experiment (LBNE) discussed in this report. These questions (or close variants of them) have also been discussed by other review bodies such as the DOE/NSF Neutrino Scientific Assessment Group (NUSAG) and the Particle Physics Project Prioritization Panel (P5), and by the NRC report Connecting Quarks to the Cosmos. They have motivated a number of related international projects such as T2K in Japan and LAGUNA-LBNO (proposed) in Europe. The committee agrees with those other bodies that these questions are among the highest priority questions in particle physics today, and long-baseline neutrino oscillation experiments are essential to answer them. No credible experimental alternatives exist that would not require large underground detectors. The four critical questions that need answers are these:

1. What is the value of the mixing angle $\theta_{13}$? Presently we only have limits on $\theta_{13}$. A null value, $\theta_{13} = 0$, would point to a deeper symmetry. On the other hand, $\theta_{13} \neq 0$ would imply observable phenomena (questions 2 and 3) that answer other key neutrino oscillation questions.

2. What is the hierarchy of the neutrino masses? Is it similar to that in the quark sector, so that the neutrino mostly made up of the same flavor as the heaviest charged lepton is the heaviest neutrino (“normal” hierarchy)? Or is it the lightest (“inverted” hierarchy)? This hierarchy is determined by the sign of $\Delta m^2_{31}$ and has important implications for both neutrino oscillations and neutrinoless double-beta decay, discussed in the next section.

3. Is CP violated in the neutrino sector and if so, what is the value of the phase δ? This is a key question, since observing CP violation in neutrino oscillations would open a new window into the physics of matter and antimatter, providing essential inputs into models of leptogenesis, discussed more fully in the section on proton decay.

4. Are there new neutrino properties (or new neutrinos) that are not described by the three flavor neutrino model? Anomalies in existing data do not fit into this model, although no anomaly so far has been confirmed.6 However, the history of neutrino physics is full of surprises, and the existence of a simple phenomenological model that works does not guarantee its correctness. Nature is often richer than imagined. New neutrino properties and new neutrino states7 could emerge if the neutrino model described here cannot fit all the observations.

___________________

6 Neutrino oscillation experiments are very difficult, often limited by systematic effects or backgrounds, and initially with only modest statistical precision. It is therefore essential that multiple observations be made with complementary techniques and with different initial flavors, energies, and baselines.

Experimental Details

The amount of observable oscillation depends on the ratio of the distance between where the neutrinos are produced and where they are detected to the neutrino energy (L/E). For ranges of neutrino energies that are easily produced and detected with present technology, a detector must be located appropriately. On the one hand, if it is placed too far from the source, it sees little flux and presents technical difficulties in beam construction (because of Earth’s curvature). On the other hand, a minimum distance (“baseline”) of about 1,000 km is needed in order to provide sufficient time and distance for the neutrinos to oscillate. Such an experiment requires an intense neutrino source, as well as a massive and sensitive detector. In order to eliminate backgrounds from cosmic ray events, it must be located underground. An experiment with such capabilities—the LBNE—will allow, in addition to the search for CP violation, a broad program of neutrino physics, as well as sensitivity to proton decay.

The Homestake site, the intended location for the DUSEL program, is approximately 1,250 km from Fermilab, the presumptive neutrino source. The principal existing general science underground laboratory, the Soudan Underground Laboratory, is only 730 km from Fermilab. Other sites considered in the DUSEL site selection process that culminated in choosing Homestake also meet the requisite minimum 1,000 km distance from Fermilab. Similar large-scale long-baseline experiments are under consideration in Japan and Europe. The baseline length between J-PARC and Kamioka in Japan is 295 km, which is too short for the determination of the mass hierarchy. Furthermore, the CP violation parameter cannot be determined uniquely with this configuration alone because the mass hierarchy cannot be measured at this baseline. In Europe, studies are in progress to select a possible underground site for future large detectors. The physics questions that can be addressed by such detectors will depend on the selected site and detector technology.
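One way to compare the baselines mentioned above is to ask at what neutrino energy the first oscillation maximum falls for each. The sketch below applies the two-flavor vacuum formula, in which the maximum occurs when 1.27 Δm² L/E equals π/2. It deliberately ignores matter effects and three-flavor interference, so the numbers are rough guides only; the function name and dictionary labels are assumptions.

```python
import math

DM2_32_ABS_EV2 = 2.35e-3  # eV^2, magnitude of the larger mass-squared difference


def first_maximum_energy_gev(dm2_ev2, baseline_km):
    """Energy at which 1.27 * dm2 * L / E = pi/2 (two-flavor, vacuum)."""
    return 1.27 * dm2_ev2 * baseline_km / (math.pi / 2.0)


# Baselines quoted in the text, in km.
BASELINES_KM = {
    "Fermilab to Homestake": 1250.0,
    "Fermilab to Soudan": 730.0,
    "J-PARC to Kamioka": 295.0,
}

for label, baseline_km in BASELINES_KM.items():
    energy = first_maximum_energy_gev(DM2_32_ABS_EV2, baseline_km)
    print(f"{label}: first maximum near {energy:.2f} GeV")
```

The energy of the first maximum scales linearly with the baseline, so a longer baseline places the oscillation maximum at higher, more easily reconstructed energies and lets the beam traverse more of Earth's matter.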

If LBNE proceeds, its design and construction, together with that of the neutrino beamline, will take at least 7 years. To consider what new knowledge LBNE would provide requires a comparison with the expectations of experiments currently operating or under construction. Experiments that will be sensitive to electron neutrino appearance on that timescale include the long-baseline experiments MINOS, T2K, and NOvA and the reactor experiments RENO, Double Chooz, and Daya Bay. The last five are focused primarily on determining $\theta_{13}$. Their sensitivity to $\theta_{13}$ as a function of time is difficult to predict precisely, but by 2020 they are expected to have measured a finite value if $\sin^2 2\theta_{13}$ is greater than 0.03 to 0.04. By comparing the results from these experiments, it will be possible to place constraints on the other oscillation parameters. In particular, combined results from these experiments could provide some evidence for CP violation over about 20 percent of the allowed parameter space if $\sin^2 2\theta_{13}$ is in this range. Even in the most optimistic scenarios, the statistical significance of such a result would be marginal. In addition, experimental data obtained before the LBNE becomes operational would have only a small window for determining whether the mass hierarchy is inverted or normal.

___________________

7 Such new states are called sterile neutrinos, because other measurements show that only the three currently known neutrino species can have normal weak interactions.

LBNE therefore offers the real prospect of a transformative discovery of CP violation in the lepton sector, with sensitivity greater than three sigma over half the possible values of $\delta$ for $\sin^2 2\theta_{13}$ greater than 0.03 after 10 years of operation at the initial beam intensity.8 With potential future accelerator upgrades, sensitivity to values of $\theta_{13}$ extends to almost an order of magnitude below expected pre-LBNE limits. In addition, for $\sin^2 2\theta_{13}$ greater than 0.04, LBNE can unambiguously distinguish the normal from the inverted hierarchy over the full range of possible CP parameters. Determination of the hierarchy would shed some light on whether neutrinos have the same flavor-ordering of masses and perhaps demonstrate that the source of neutrino mass is different from the source of mass for other leptons and quarks. It would also significantly impact the interpretation of the sensitivity of any double-beta decay experiments.

The main goal of LBNE is to significantly extend our sensitivity to the neutrino oscillation parameters over existing experiments using a broad-band neutrino beam (a beam with a wide range of neutrino energies) with a peak energy of about 3.5 GeV from Fermilab. The experiment requires a small “near detector” located at the Fermilab site and a much more massive “far detector” located underground. Both detectors would observe the flux of neutrinos of a given flavor by reconstructing neutrino interactions through charged current processes that identify the final-state charged-lepton flavor. The near detector would monitor the flux and composition of the neutrino beam near the point of production. The far detector would primarily search for the appearance of electron neutrinos (or antineutrinos) in the muon neutrino (or antineutrino) beam. The details of the oscillation predictions are complex because it is necessary to include the interference effects of three-flavor mixing as well as the effects of neutrino interactions with the portions of Earth traversed by the beam. It is precisely these interference effects that produce a potentially observable CP violation effect; however, they make the oscillation probability at any particular baseline and neutrino energy depend on all the oscillation parameters. Thus the oscillations must be observed over an extended energy range for both neutrinos and antineutrinos in order to disentangle all the parameters.

___________________

8 http://www.int.washington.edu/PROGRAMS/10-2b/LBNEPhysicsReport.pdf.

Two main technologies for the far detector have been proposed. The first entails building a huge water Cherenkov detector similar in design to the extremely successful Super-Kamiokande experiment in Japan, but larger by a factor of eight. Energetic charged particles traveling through a transparent medium such as water emit a cone of Cherenkov light until they slow down below the speed of light in water. A water Cherenkov detector consists of a large tank of water with the vessel surface partially covered by inward-looking photomultiplier tubes (PMTs), large sensors capable of detecting single photons of Cherenkov light. Each charged particle appears as a ring of light detected by the PMTs, with electrons distinguished from muons by the sharpness of the ring (electrons undergo much more multiple scattering in water than muons, and so have fuzzier rings). Water Cherenkov detectors are a well-proven and well-understood technology. However, since they cannot detect slow particles and because rings can be merged or confused in events with many charged particles, they have, on the one hand, a relatively low efficiency for low-velocity particles and, on the other hand, a significant fraction of misreconstructed events among the relatively complicated events in the multi-GeV range.
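The statement that slow particles are invisible to a water Cherenkov detector can be quantified. A particle radiates only while its speed exceeds c/n, where n is the refractive index. The sketch below computes the corresponding threshold energies, assuming n = 1.33 for water and standard rest masses; these inputs and the function names are assumptions supplied for illustration and do not come from the report.

```python
import math

WATER_REFRACTIVE_INDEX = 1.33  # assumed typical value for water

# Rest masses in MeV (standard values, rounded).
REST_MASS_MEV = {
    "electron": 0.511,
    "muon": 105.66,
    "proton": 938.27,
}


def threshold_total_energy_mev(rest_mass_mev, refractive_index):
    """Total energy at which the particle speed equals c/n."""
    beta = 1.0 / refractive_index
    gamma = 1.0 / math.sqrt(1.0 - beta ** 2)
    return gamma * rest_mass_mev


def threshold_kinetic_energy_mev(rest_mass_mev, refractive_index):
    """Kinetic energy below which no Cherenkov light is emitted."""
    return threshold_total_energy_mev(rest_mass_mev, refractive_index) - rest_mass_mev


for name, mass in REST_MASS_MEV.items():
    kinetic = threshold_kinetic_energy_mev(mass, WATER_REFRACTIVE_INDEX)
    print(f"{name}: kinetic energy threshold of about {kinetic:.1f} MeV")
```

Under these assumptions electrons radiate at well under 1 MeV of kinetic energy, while protons need several hundred MeV, which is why recoil protons in neutrino interactions are usually not seen in water.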

The alternative technology is a liquid argon (LAr) tracking calorimeter similar in concept to the ICARUS detector currently in the Laboratori Nazionali del Gran Sasso in Italy, but larger by a factor of 40. In a LAr detector, ionization deposited along charged particle tracks is drifted to a grid of sense wires, allowing the tracks to be reconstructed in three dimensions. Such a detector is sensitive to low-velocity particles, and the spatial resolution is excellent (potentially a few millimeters or even better). Thus a LAr detector is capable in principle of reconstructing quite complex events and is expected to have a lower misreconstruction fraction and higher efficiency. Because of this added efficiency, a LAr detector can be smaller in mass by a factor of about six and (owing to the greater density of LAr) almost an order of magnitude smaller in volume. As a result, the far detector hall could be much smaller than for the other option. However, there is much less experience with large LAr neutrino detectors than with water Cherenkov detectors. The challenges include the technical complexity and safety considerations involved in producing and retaining multikiloton volumes of a cryogenic noble liquid in an underground laboratory, the high purity requirements for the argon, and the technical complexity of the readout system. Techniques for reconstructing LAr events are still in development. The development and operation of the MicroBooNE 800-ton LAr experiment at Fermilab will be a first step in resolving many of these technical issues.
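The volume comparison follows from simple arithmetic. As a rough check, assuming a liquid argon density of about 1.4 g/cm³ and a water density of 1.0 g/cm³ (standard values that are not stated in the text), the ratio of detector volumes for a mass ratio of about six is

$$\frac{V_{\mathrm{water}}}{V_{\mathrm{LAr}}} = \frac{M_{\mathrm{water}}}{M_{\mathrm{LAr}}} \cdot \frac{\rho_{\mathrm{LAr}}}{\rho_{\mathrm{water}}} \approx 6 \times \frac{1.4}{1.0} \approx 8.4,$$

which is consistent with the description of the LAr detector as almost an order of magnitude smaller in volume.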

From the point of view of neutrino oscillations alone, the physics sensitivities of the water Cherenkov and LAr options are similar. The choice between them relies heavily on technical and financial considerations. The choice of technology may also affect the required depth underground. This requirement is under study and has not yet been firmly established.

The first observation of CP violation in the neutrino sector will be followed by a long sequence of experiments of different types intended to more accurately measure the oscillation parameters and to understand whether the three-neutrino parameterization is correct. There are at present uncorroborated anomalies that, if correct, would need to be explained through modifications or additions to this picture.

The two technologies discussed above have different and complementary strengths both for the initial discovery of nonstandard phenomena and for second-generation measurements. Proposals for new second-generation experiments with water Cherenkov detectors include very imaginative possibilities, such as the DAEdALUS proposal to create neutrinos using a series of small nearby cyclotrons. These may well become important complementary techniques and illustrate the possibilities for additional use of a large water Cherenkov detector for constraining neutrino parameters, depending on what is found. Liquid argon, on the other hand, should be able to analyze complicated events with its particle identification and tracking capabilities, which may also open new possibilities.

LBNE would also allow a broad program of physics measurements beyond accelerator-produced neutrinos. Examples include studies of atmospheric neutrinos, solar neutrinos, and neutrinos from astrophysical sources.

There is a long history of measurements of atmospheric neutrinos that will continue over the next decade with new results from the Super-Kamiokande detector. With the same kind of water Cherenkov detector, the statistical improvement would be at best nominal, by a factor of, say, 2 or 3. Although an alternative LAr detector of lower tonnage would provide less statistical improvement, it might be able to make a more definitive observation of tau neutrinos produced from oscillated cosmic ray muon neutrinos. This would depend on many factors not yet demonstrated.

A massive water Cherenkov detector would allow measurements with high statistics of the small day-night asymmetry in electron solar neutrino flux that arises from the small (∼2 percent) additional oscillation that takes place within the matter of Earth. Thus far, Super-Kamiokande has measured only a small effect of marginal significance (2 standard deviations). A LAr detector would have better particle detection and identification for much less tonnage. In any case, use of LAr for solar neutrinos would require addressing significant technical issues, including the production of $^{39}$Ar by cosmic rays, a source of serious background signal, as well as control of radon. These technical issues mean that solar measurements would probably require substantial depth.

Summary

Realization of an LBNE with experimental reach for CP violation and the understanding of the mass hierarchy will require a large underground detector and a high-intensity neutrino source separated from each other by roughly 1,000 km or more. Such an experiment has the potential to determine whether the current phenomenological description of neutrino oscillations is correct and to measure the associated parameters. Observations of both the mass hierarchy and CP violation in the neutrino sector would have profound effects on extensions to the Standard Model as well as on our ability to model the early Universe. An experiment capable of these discoveries will enable a broad program of discovery and measurement in neutrino physics. Such a program would be a cornerstone of basic science research in the United States.

Conclusion: The long-baseline neutrino oscillation experiment is of paramount scientific importance and will address crucial questions upon whose answers the tenets of our understanding of the Universe depend. This experiment would not only provide an exceptional opportunity to address scientific questions of paramount importance, it would also have a significant positive impact upon the stewardship of the particle physics and nuclear physics research communities and have the United States assume a visible leadership role in the expanding field of underground science. The U.S. particle physics program is especially well positioned to build a world-leading long-baseline neutrino experiment due to the combined availability of an intense neutrino beam from Fermilab and a suitably long baseline from the neutrino source to an appropriate underground site such as the proposed DUSEL.

The Nature of Neutrinos—Antiparticles, Mass Scale (Neutrinoless Double-Beta Decay)

In 1937, the Italian physicist Ettore Majorana conjectured that the neutrino could be its own antiparticle, thereby lending his name to particles that have this characteristic of being their own antiparticle.9 Whether or not neutrinos are Majorana particles remains a fundamental and unresolved question in particle and nuclear physics. Double-beta decay experiments could resolve this question.

Double-beta decay is a process in which a nucleus decays into another nucleus with the same mass and two more protons by emitting two electrons. Because it

___________________

9 E. Majorana. 1937. Nuovo Cimento 14: 171.

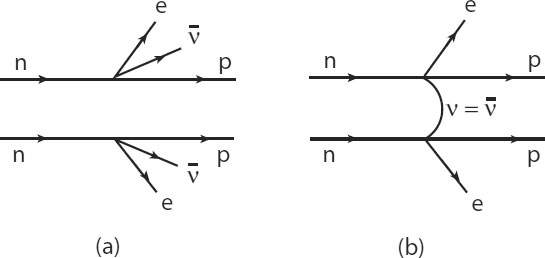

FIGURE 3.4 Feynman diagrams describing double-beta decay processes in which two neutrons (n) in a nucleus are converted to two protons (p): (a) 2vββ, in which two antineutrinos (![]() ) are emitted; (b) 0vββ, in which no neutrinos are emitted. This second process requires that the neutrino is its own antiparticle(v=

) are emitted; (b) 0vββ, in which no neutrinos are emitted. This second process requires that the neutrino is its own antiparticle(v=![]() ). SOURCE: Courtesy of Yoram Alhassid, Yale University.

). SOURCE: Courtesy of Yoram Alhassid, Yale University.

typically is accompanied by the emission of two electron antineutrinos, it is known as two-neutrino, double-beta (2vβ) decay (see Figure 3.4a). In the absence of emitted neutrinos, the process is called neutrinoless double-beta (0vββ) decay10 (see Figure 3.4b). The 2vββ decay can occur whether or not the neutrino is a Majorana particle and has been observed in a number of nuclei. However, the 0nbb decay can occur only if the neutrino is a Majorana particle, so its existence would be an unambiguous demonstration of the neutrino’s peculiar nature. Thus far, no confirmed observation of such a decay has been made.

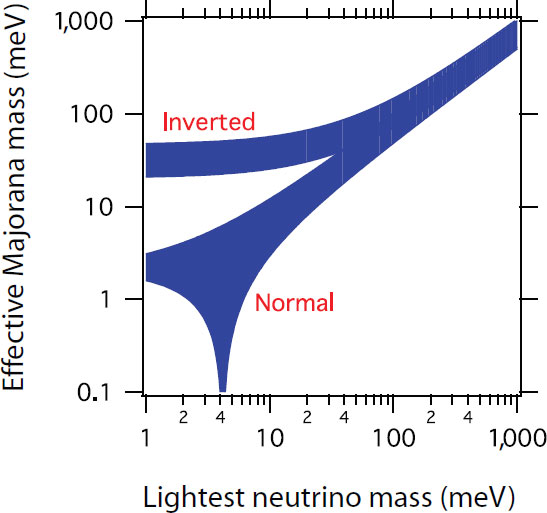

Establishing that neutrinos are Majorana particles would have a number of important consequences. Because 0νββ decay rates depend on neutrino masses, measuring those rates would be the most sensitive laboratory method for determining the neutrino mass scale. The 0νββ decay rate is calculated to be proportional to the square of an effective neutrino mass and a quantity that is determined by the nuclear structure of the corresponding decaying nucleus. Various nuclear structure models have been used to calculate this quantity and, with recent progress, they agree with each other to within a factor of about two. The observation of a Majorana neutrino would also provide support for a subset of GUTs that have massive Majorana neutrinos.

___________________

10 For a recent review, see F.T. Avignone III, S.R. Elliott, and J. Engel. 2008. Reviews of Modern Physics 80: 481 and references therein.

Although the values of the neutrino masses are not known precisely, neutrino oscillation studies and other evidence indicate they are much smaller (by factors of many millions) than those of other elementary particles, whose masses are thought to be generated by the well-known Higgs mechanism. This fact alone strongly indicates that neutrino masses are due to very different and very likely much higher energy mechanisms. In many GUTs, such a mechanism is most natural if the neutrino is its own antiparticle—that is, if it is a Majorana particle.

Finally, the 0vββ decay implies a change of lepton number by two units, and its observation would lead to the important conclusion that lepton number conservation is violated. Such an observation would support leptogenesis, a process that violates CP and lepton number conservation and leads to lepton-antilepton asymmetry. In turn, this lepton-antilepton asymmetry could lead to baryon-antibaryon asymmetry and might help to explain the preponderance of matter over antimatter in the Universe (see discussion in the section “Tests of Grand Unification Theories”).

However, even if 0vββ decay is not observed, such studies can provide important information about the nature and mass of neutrinos. The neutrino oscillation data are consistent with three neutrinos of different masses, two of which are separated by the smaller (solar) mass splitting. However, there are still two possibilities for the neutrino spectrum: the normal hierarchy and the inverted hierarchy (see discussion in the section “The Nature of Neutrinos—Oscillations [Long-Baseline Neutrino Experiment]”). If oscillation experiments demonstrate that the mass hierarchy of neutrinos is inverted, then 0vββ decay results that establish an upper limit of 20 meV on the effective Majorana neutrino mass would show that neutrinos are not Majorana particles (see Figure 3.5). Third-generation experiments at the ton scale would reach this limit, so a failure to observe 0vββ decay would rule out Majorana neutrinos in the inverted hierarchy scenario. In the case of the normal hierarchy scenario, an experimental sensitivity of 1 meV would likely be required to rule out most possibilities for a Majorana neutrino. Furthermore, an experimental lifetime limit for the 0vββ decay will directly constrain the mass of the lightest neutrino (assuming the neutrino is a Majorana particle).
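The allowed range of the effective Majorana mass for each hierarchy can be sketched numerically. The following is a minimal illustration, not the calculation behind Figure 3.5: the oscillation parameters are approximate values assumed for the example, the function names are hypothetical, and the unknown Majorana phases are handled by taking the largest and smallest values the three contributions can sum to.

```python
import math

# Assumed, approximate oscillation parameters (illustrative; not from the report).
DM2_SOLAR = 7.5e-5    # eV^2, solar mass-squared splitting
DM2_ATM = 2.5e-3      # eV^2, magnitude of the atmospheric mass-squared splitting
SIN2_THETA12 = 0.31
SIN2_THETA13 = 0.022


def masses(lightest_ev, hierarchy):
    """Return (m1, m2, m3) in eV for a given lightest mass and hierarchy."""
    if hierarchy == "normal":
        m1 = lightest_ev
        m2 = math.sqrt(m1**2 + DM2_SOLAR)
        m3 = math.sqrt(m1**2 + DM2_ATM)
    elif hierarchy == "inverted":
        m3 = lightest_ev
        m2 = math.sqrt(m3**2 + DM2_ATM)
        m1 = math.sqrt(m2**2 - DM2_SOLAR)
    else:
        raise ValueError("hierarchy must be 'normal' or 'inverted'")
    return m1, m2, m3


def effective_mass_range(lightest_ev, hierarchy):
    """Return (min, max) effective Majorana mass in eV over the unknown phases."""
    m1, m2, m3 = masses(lightest_ev, hierarchy)
    cos2_theta13 = 1.0 - SIN2_THETA13
    terms = [
        (1.0 - SIN2_THETA12) * cos2_theta13 * m1,
        SIN2_THETA12 * cos2_theta13 * m2,
        SIN2_THETA13 * m3,
    ]
    total = sum(terms)
    # The phases can set the smaller terms against the largest one,
    # but the magnitude of the sum can never go below zero.
    lowest = max(0.0, 2.0 * max(terms) - total)
    return lowest, total


for hierarchy in ("normal", "inverted"):
    low, high = effective_mass_range(0.0, hierarchy)
    print(f"{hierarchy}: {1e3 * low:.1f} to {1e3 * high:.1f} meV")
```

With these assumed inputs and a vanishing lightest mass, the inverted-hierarchy band bottoms out a little below 20 meV, while the normal-hierarchy band lies at a few meV, in line with the sensitivities quoted above.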

Two Critical Questions on the Nature of Neutrinos—Antiparticles and Mass Scale

The above discussion leads to two critical questions that could be addressed by the neutrinoless double-beta decay experiment considered in this report.

• Are neutrinos Majorana or Dirac particles? It would be an amazing discovery in itself to demonstrate the existence of an entirely new type of elementary particle: one that is its own antiparticle. However, the existence of Majorana neutrinos also allows leptogenesis to be an explanation for the matter-antimatter asymmetry of the Universe.

• What is the absolute mass scale of neutrinos? Knowledge of the absolute mass scale is needed in order to understand neutrino masses within the framework of particle physics, as well as to gauge the impact that massive neutrinos have on cosmology. Neutrino oscillation experiments cannot determine the absolute mass scale (only the squared mass differences), but neutrinoless double-beta decay experiments address this question.

FIGURE 3.5 Relation between the effective Majorana mass and the lightest neutrino mass. Both inverted and normal mass hierarchies are considered. SOURCE: Reprinted with permission from F.T. Avignone, S.R. Elliott, and J. Engel. Double beta decay, Majorana neutrinos, and neutrino mass. Reviews of Modern Physics 80: 481. Copyright 2008 by the American Physical Society.

Experimental Aspects

There is overwhelming interest within the international particle and nuclear physics communities in pursuing the science of 0vββ decay. Many of the underground laboratories have programs to search for the process, including Gran Sasso in Italy, Canfranc in Spain, Modane in France, Kamioka in Japan, SNOLAB in Canada, and the Waste Isolation Pilot Plant (WIPP) and Sanford Underground Laboratory in the United States. A number of the experiments outside the United States have significant U.S. involvement.

The typical 0vββ decay experiment consists of a fixed quantity of an isotope susceptible to double-beta decay into which detectors have been incorporated; the experiment searches for very rare monoenergetic electron signals superimposed on continuum backgrounds. Because cosmic ray muons can create neutrons whose interactions form such a continuum, experiments must be conducted deep underground where such muons only rarely penetrate. A reliable 0vββ decay program requires multiple experiments worldwide using different isotopes. There are several reasons for this: (1) very different experimental techniques are used for different isotopes, some of which may prove to be more effective in, for example, background suppression; (2) a signal observed in one particular isotope might be a misidentification because of unknown background; (3) if a signal is detected, the measurement of multiple isotopes can provide a more reliable effective neutrino mass given the uncertainties in the calculated nuclear matrix elements; and (4) measuring the signal in different isotopes can help distinguish between different possible mechanisms of 0vββ decay. Worldwide, there are ongoing or proposed experiments searching for 0vββ decay in ⁴⁸Ca, ⁷⁶Ge, ⁸²Se, ¹⁰⁰Mo, ¹¹⁶Cd, ¹³⁰Te, ¹³⁶Xe, ¹⁵⁰Nd, and ¹⁶⁰Gd. Those experiments use several key experimental detection techniques, such as calorimetric bolometers (e.g., CUORICINO and CUORE at Gran Sasso), cryogenic semiconductor detectors (e.g., GERDA at Gran Sasso and MAJORANA at the Sanford Underground Laboratory), and liquid/gas detectors (e.g., SNO+ at SNOLAB and EXO at WIPP). See Table 3.2 for a more complete list.

Since the 0vββ decay is a very rare process, large masses of the corresponding isotope are required to reach a given sensitivity. First-generation (G1) experiments use detector masses in the range of 10-25 kg and have sensitivity to a neutrino mass of about 1 eV. Typically, these are prototype experiments to demonstrate the feasibility of various techniques. Demonstrating the scalability of a particular method is accomplished by using 30-200 kg detectors, which provide sensitivities down to 100 meV. There are about 10 of these second-generation (G2) experiments, and all experiments currently running or under construction are either G1 or G2 experiments.
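The need for large isotope masses follows from simple counting. The sketch below estimates the expected number of decays per year under idealized assumptions that are not from the text: 100 percent isotopic enrichment, 100 percent detection efficiency, no background, and an illustrative half-life of 10^25 years for ⁷⁶Ge. The function name is hypothetical.

```python
import math

AVOGADRO = 6.02214076e23  # atoms per mole


def expected_decays(isotope_mass_kg, molar_mass_g, half_life_years, live_time_years):
    """Expected number of decays when the live time is much shorter than the half-life.

    Idealized: assumes full enrichment and full detection efficiency.
    """
    n_atoms = isotope_mass_kg * 1000.0 / molar_mass_g * AVOGADRO
    return math.log(2) * n_atoms * live_time_years / half_life_years


# Illustrative half-life of 1e25 years for Ge-76 (an assumption for this example).
for mass_kg in (10, 100, 1000):
    n = expected_decays(mass_kg, 76.0, 1e25, 1.0)
    print(f"{mass_kg:>5} kg of Ge-76: {n:.1f} expected decays per year")
```

Under these assumptions, 10 kg of isotope yields only a handful of decays per year, and the count grows linearly with mass. Real experiments see fewer events once efficiency and backgrounds are included, which is why each step in sensitivity requires a large step in mass.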

Reaching the atmospheric scale (the mass scale associated with atmospheric muon neutrino disappearance) of 50 meV requires third-generation (G3) experiments using detectors with masses of 1 ton or more. For the reasons above, a meaningful 0vββ decay program requires multiple experiments and, although costly, there should be at least two such G3 experiments worldwide. It is appropriate that such a 1-ton detector be mounted at a U.S. site: (1) a 0vββ decay experiment in this country will be part of the required complement of experiments worldwide using different isotopes and different techniques, (2) a detector installed at a U.S. facility will ensure U.S. leadership in this field and enable U.S. scientists to participate more readily, and (3) a U.S. facility hosting a ton-scale 0vββ decay detector will attract top foreign scientists in the field and will foster international collaborations. At the same time, U.S. scientists are likely to continue their involvement in 0vββ decay experiments abroad.

TABLE 3.2 0vββ Decay Experiments Worldwide Classified by Generation and Experimental Technique

| Generation | Calorimetric Bolometer | Calorimetric Semiconductor | Calorimetric Liquid/Gas | Tracking Calorimetry |
|---|---|---|---|---|
| G1 | CUORICINO, Gran Sasso | Heidelberg-Moscow, Gran Sasso | | NEMO3, Modane |
| G2 | CUORE, Gran Sasso | GERDA-I-II, Gran Sasso | XMASS, Kamioka | SuperNEMO, Modane |
| | CANDLE, Kamioka | Majorana Demonstrator, Sanford Lab | SNO+, SNOLAB | |
| | LUCIFER, Gran Sasso | COBRA, Gran Sasso | EXO-200, WIPP | |
| | | | NEXT, Canfranc | |
| G3 | | GERDA-III, Gran Sasso | XMASS-10 ton, Kamioka | |
| | | Majorana, Sanford Lab | | |

NOTE: The G1 experiments use on the order of 10 kg of isotopes. Most projects plan on scaling up the corresponding isotope mass (and sensitivity) by a factor of 10 (G2) during the next 5 years and moving up to the ton scale (G3) within 10 years. The latter can probe most of the phase space permitted by the inverted hierarchy spectrum of neutrino masses.

The primary technical challenge facing the 0vββ experiments is to increase their scale at reasonable costs. A list of 0vββ decay experiments around the world is provided in Table 3.2. The experiments are tabulated according to their generation and the experimental technique they use. Proponents of these experiments have made convincing cases that at least the xenon and germanium experiments can scale to 1 ton. Going to the even larger detector masses needed to test limits for the normal mass hierarchy will present a more difficult challenge.

To reach sensitivity at the solar scale (the mass scale associated with the solar electron neutrino disappearance) of 5 meV and even down to 1 meV requires a 50-ton detector. Such a massive 0vββ detector is difficult to contemplate: A 50-ton germanium detector would be the size of a small house and very expensive. However, technology does advance, and it makes sense to ensure that the underground cavern for a 0vββ experiment could eventually accommodate a 50-ton experiment and its attendant shielding.

Detectors in the mass range of 10-25 kg have established the current limits on 0vββ decay lifetime to be around 10²⁵ years, implying that the effective Majorana neutrino mass scale must be less than 1 eV. There is one claimed observation of 0vββ decay in ⁷⁶Ge with a lifetime of 2 × 10²⁵ years,¹¹ but the interpretation of the data is disputed. A potential scientific challenge to extracting an effective neutrino mass from the 0vββ decay measurements is the numerical uncertainty in the nuclear structure calculations. There has been much theoretical progress in improving these calculations in the last few years. Further progress is likely and should reduce the nuclear structure uncertainties in the derived effective neutrino mass on the timescale of the much larger experiments.
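Because the decay rate scales as the square of the effective mass, a mass limit improves only as the inverse square root of the lifetime limit. As a rough, order-of-magnitude illustration, the scaling below takes the approximate figures quoted above (a lifetime limit of about 10^25 years corresponding to about 1 eV) as a reference point and ignores differences between isotopes and nuclear matrix elements; the symbols are introduced here for illustration only.

$$
m_{\beta\beta}^{\text{limit}} \propto \left(T_{1/2}^{\text{limit}}\right)^{-1/2}
\quad\Longrightarrow\quad
T_{1/2}^{\text{limit}}(50\ \text{meV}) \sim 10^{25}\ \text{y} \times \left(\frac{1\ \text{eV}}{50\ \text{meV}}\right)^{2} = 4 \times 10^{27}\ \text{y}
$$

On this scaling, a factor of 20 improvement in mass sensitivity calls for a factor of about 400 in lifetime sensitivity, which is one way to see why ton-scale detectors are needed to reach the atmospheric scale.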

Summary

The 0vββ experiment addresses crucial unanswered questions in particle and nuclear physics.¹² It is the only practical experiment that could determine whether the neutrino is a Majorana or Dirac particle. If the neutrino is a Majorana particle, it would also be the most sensitive laboratory experiment that could measure or at least constrain the absolute mass scale of neutrinos. Were 0vββ decay to be observed, it would tell us that neutrinos are Majorana particles and lepton number conservation is violated, a model-independent conclusion and a Nobel Prize-level achievement. The 0vββ decay is very rare and its detection necessitates massive detectors. Given the paramount scientific importance of this experiment and the leadership roles taken by U.S. scientists, it is appropriate that the United States take a leadership role in one 0vββ decay ton-scale experiment for installation at a U.S. site, or at an appropriate foreign facility if necessary, and that U.S. scientists be supported to participate in other such experiments worldwide.

Conclusion: The neutrinoless double-beta decay experiment, like the direct detection dark matter experiment and the long-baseline neutrino oscillation experiment, is of paramount scientific importance and will address crucial questions upon whose answers the tenets of our understanding of the Universe depend. These three experiments are of comparable scientific importance. This experiment would not only provide an exceptional opportunity to address scientific questions of paramount importance, it would also have a significant positive impact upon the stewardship of the particle physics and nuclear physics research communities and would have the United States assume a visible leadership role in the expanding field of underground science. In light of the leading roles played by U.S. scientists in the study of neutrinoless double-beta decay, together with the need to build two or more large experiments in this area, U.S. particle and nuclear physicists are also well positioned to assume leadership roles in the development of one neutrinoless double-beta decay experiment on the scale of a ton. While installation of such a U.S.-developed experiment in an appropriate foreign facility would significantly benefit scientific progress and the research communities, there would be substantial advantages to the communities if this experiment could be installed within the United States, possibly at the same site as the long-baseline neutrino experiment.

_______________________

11 H.V. Klapdor-Kleingrothaus, A. Dietz, L. Baudis, et al. 2001. European Physical Journal A 12: 147.

12 NRC. 2006. Revealing the Hidden Nature of Space and Time. Washington, D.C.: The National Academies Press, p. 13.

Overview

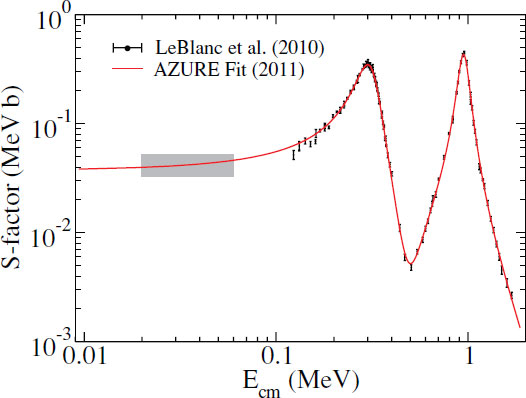

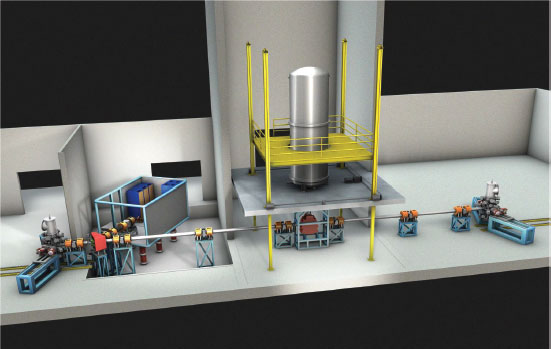

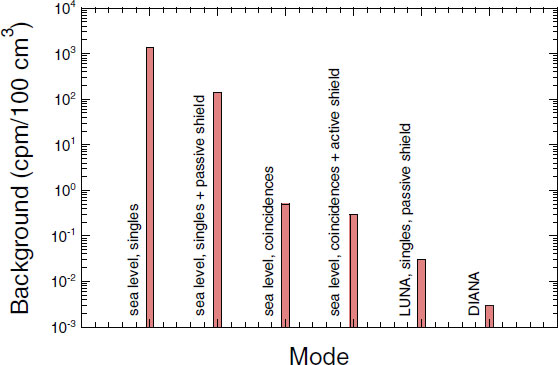

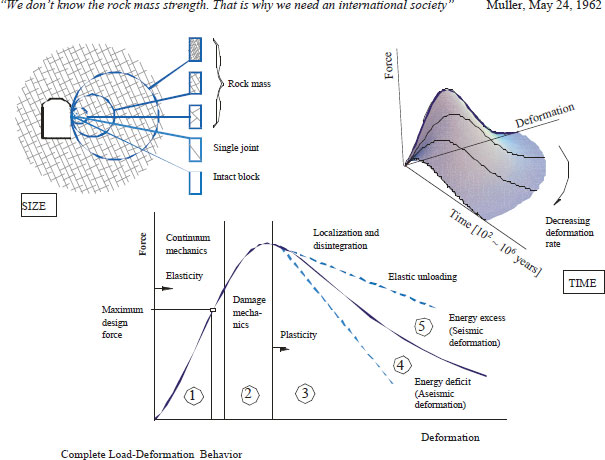

Atoms are made of electrons and nuclei, and nuclei are made of protons and neutrons. Protons and neutrons are the lightest particles that carry the baryon number, B, a quantum number that is conserved in Standard Model processes. While free neutrons, slightly heavier than protons, decay to protons with a lifetime of about 900 s, they can live much longer when bound in nuclei. The free neutron decays through nuclear beta decay, a weak interaction (n → p + e− + ν̄e, where n is the neutron, p is a proton, e− an electron, and