Analysis and Imaging of Small Particles

Although the research community has studied nanoparticles for several decades and has made many advances in imaging and analyzing the chemical composition of individual nanoparticles and nanoparticle mixtures, it still struggles to understand how nanoparticles interact and change in different environments. In particular, investigators are just now developing methods for determining the three-dimensional structure and chemical composition of nanoparticles in the atmosphere and in nanocomposites. They also are designing new techniques for studying and modeling how particles form in the atmosphere and how those processes ultimately determine the nanoparticles’ properties and their impact on the environment. Nanoparticle structure and composition also are critically important for the materials and catalyst industries, both for understanding how existing materials and catalysts behave and for improving their design and function. Speakers in this session, as well as the subsequent sessions, discussed the specific challenges of imaging and analyzing nanoparticles and the wide-ranging benefits that will come from solving those challenges.

MULTIDIMENSIONAL CHARACTERIZATION OF INDIVIDUAL AEROSOL PARTICLES

Alla Zelenyuk of the Pacific Northwest National Laboratory (PNNL) reiterated the important point that aerosols are everywhere, with impacts on the climate and health and potential for misuse as agents of terror (Figure 3-1). She also noted that aerosols arise from a variety of sources, each of which produces particles of unique structure and composition. What makes nanoparticle characterization even more challenging is the fact that aerosols are most often mixtures of particles. While it is important to understand the mixture, Zelenyuk and her coworkers are first trying to look at one particle at a time to determine as many relevant properties as possible. Doing so is a daunting task given the small size and mass of individual aerosol particles and their low concentrations in the atmosphere, which range from a few to a few thousand particles/cm³. However, said Zelenyuk, instruments are now available that are capable of looking at the properties of individual particles, with high sensitivity and resolution, and of determining many properties for the same particle.

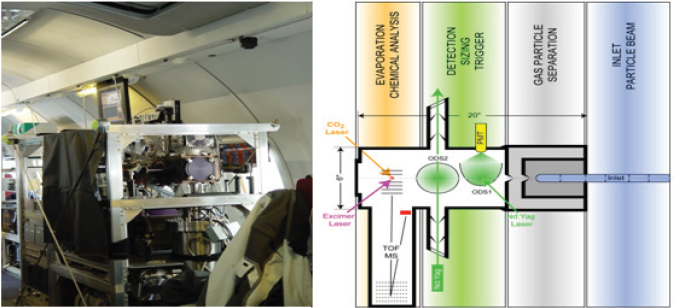

One of the main instruments in use is the single particle laser ablation time-of-flight mass spectrometer called SPLAT II. This instrument, shown in Figure 3-2, is used in the laboratory and in the field (Zelenyuk and Imre, 2009; Zelenyuk et al., 2009). SPLAT II was flown for a month in an airplane to determine which particles form in ice clouds and how they affect climate, and to better understand the sources of air pollution over Alaska. Zelenyuk and her collaborators also have developed software capable of examining millions of particles to establish correlations between different properties and different sources.

Zelenyuk and her colleagues are attempting to use SPLAT II and software to quantitatively determine many particle properties, including particle number and concentration, size, composition, and density. They also are examining different aspects of particle shape and dynamic shape factor, that is, whether a particle is spherical or symmetric, and particle morphology in terms of what is on the outside of the particle. Measuring the content of the very thin outer layer of a particle is important because layers of secondary organic aerosols on top of a hygroscopic layer can change water retention and completely stop the water content of these particles from evaporating.

FIGURE 3-1 Aerosols are important to society in many and varied ways.

SOURCE: Zelenyuk, 2010.

FIGURE 3-2 SPLAT II, an ultrasensitive high-precision instrument for multidimensional single particle characterization.

SOURCE: Zelenyuk, 2010; modified from Zelenyuk et al., 2009.

After describing how SPLAT II works (Figure 3-2, right panel), Zelenyuk discussed some of the results from the Alaska study. Flying through clouds, for example, the instrument showed that very few particles fail to activate and form droplets. By repeatedly sampling the free particles, SPLAT II provided information that may reveal what is special about these particular particles in terms of their size, composition, and morphology. Modelers will then be able to use this information to improve their predictions about cloud formation. Zelenyuk noted that any particle more than 100 nanometers in diameter can be detected at levels as low as 1 particle/cm³ in 1 second of sampling and can be sized with an accuracy of close to 100 percent. Zelenyuk and her colleagues recently demonstrated that they can determine the density of a particle, which is very important for determining the mass of particles, the value of which is regulated by the Environmental Protection Agency.

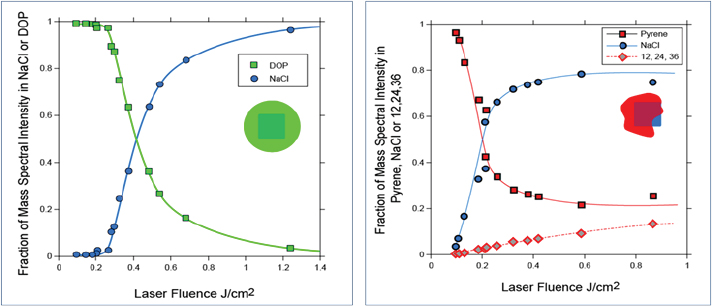

Characterizing Particle Morphology

When SPLAT II was first developed, Zelenyuk and her colleagues used it to study ultrapure molten salts that form metastable phases in far-from-equilibrium states. These studies enabled them to report the first density measurements for hygroscopic particles found in the atmosphere that exist in highly metastable phases (Zelenyuk et al., 2005). They found, for example, that the shape of sodium chloride particles could vary from spherical to the more typical cubic and rectangular, and that the particles could agglomerate into structures with much larger shape factors. In addition, the researchers demonstrated that they could measure the symmetry of different types of particles and even separate particles in real time based on their shapes. For each shape, they could then measure density, size, and composition (Zelenyuk et al., 2006).

Turning to the issue of particle morphology, Zelenyuk showed that it is possible to drill down into a particle to study particle composition as a function of depth (Figure 3-3; Zelenyuk et al., 2008). In her initial experiments, she worked with the sodium chloride model system and coated the particles with liquid organics and solid organics. The experiments revealed that different organic layers deposited over time on the particles do not mix with one another, contrary to predictions of modeling studies. Instead, the organics develop a layered structure. The researchers were able to create some of these structures and to show that they can be stable for many hours, which Zelenyuk said was surprising.

FIGURE 3-3 Characterizing particle composition as a function of depth.

SOURCE: Reprinted (adapted) with permission from Zelenyuk et al., 2008.

Zelenyuk also noted that small amounts of organic vapors, such as those emitted in auto exhaust, can have a profound impact on particle morphology and behavior. One series of measurements, for example, showed that particle chemistry changed as the aircraft travelled through a cloud, implying that the chemistry in a cloud is heterogeneous and changing with location and over time. Another set of experiments found that a thin layer of organics can reduce particle evaporation by 96 percent over 24 hours. Those data are now being used by modelers to attempt to predict the properties and life cycles of different types of particles in the atmosphere. As a final example of the type of studies that SPLAT II can enable, Zelenyuk briefly discussed work being done on engine exhaust. One finding from those studies is that particles emitted by new-generation fuel-neutral¹ spark-ignition direct injection engines are fractal in structure, and that they incorporate polyaromatic hydrocarbons and nitropolyaromatic hydrocarbons at levels as high as 40 percent on their surfaces, which she said was also surprising.

Discussion

Doug Ray of PNNL commented that there is a similarity between the data acquisition presented by Zelenyuk and the work presented in Chapter 2 by Gerry McDermott, in that high-throughput analysis of large numbers of items allows critically new conclusions to be extracted from these data. He said, “That is a theme. If a tool can be built with the capability to perform high-throughput measurements with the proper data analysis tools, it is possible to tease out information that would be otherwise invisible.”

MATERIALS DESIGN AND SYNTHESIS

In his presentation, Ralph Nuzzo from the University of Illinois in Urbana-Champaign discussed his work on nanoscale characterization from the perspective of understanding structural dynamics in the context of catalysis. “First and foremost,” he said, “there’s a gigantic toolbox that can be applied to this area.” The examples he cited, which were developed through large investments by the Department of Energy, include neutron- and x-ray-based approaches and emerging technologies such as analytic electron microscopy. The development of methods that correct for the complications that come from both chromatic and spherical aberrations was the key factor that enabled electron microscopy to reach atomistic resolution.

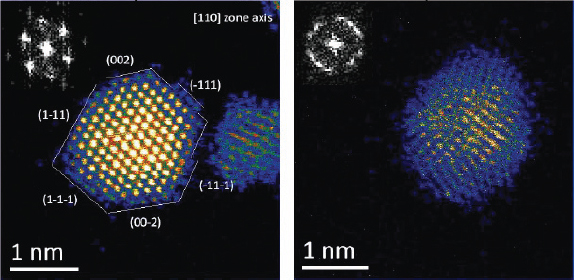

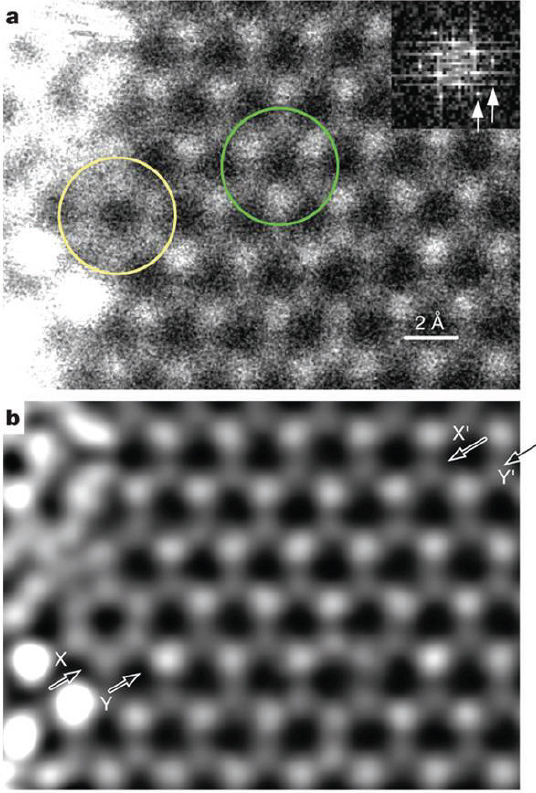

FIGURE 3-4 Atomic resolution electron micrographs of platinum and palladium nanoparticles.

SOURCE: Reprinted (adapted) with permission from Sanchez et al., 2009a.

Using these new techniques, it is possible to obtain atomic resolution images that clearly delineate the atoms in polymer-capped platinum and palladium nanoparticles, for example, as shown in Figure 3-4. A comparison of these two images shows that while the platinum particles have a very well-defined order, the palladium nanoparticles have a significant amount of disorder that is independent of the orientation. It is possible from these images to count atoms and to correlate particle size with atom count in various types of particle morphologies. This study can be extended to more complex structures, including core-shell platinum-palladium and palladium-platinum nanoparticles that are relevant to catalysis (Sanchez et al., 2009a). These types of experiments have yielded important insights into particle nucleation and growth and have provided input for modeling studies that have advanced our theoretical understanding of these important processes.

_________________________

¹ Not requiring a specific transportation fuel.

Complementary to these imaging studies are those that involve spectroscopy, which is an averaging technique. Taking a population of clusters, it is possible to use x-ray absorption spectroscopy to measure properties such as the average coordination number for an absorbing atom, average bond distances, and bond disorder. Combining microscopy and spectroscopy data can provide information on mesoscopic phenomena such as how bond distances change with temperature. Nuzzo discussed one set of measurements made on platinum supported on γ-alumina, a quintessential heterogeneous catalyst that is used to make gasoline. These experiments showed that bond distances contract as temperature rises when particle diameter reaches sizes as small as 1 nanometer. This phenomenon, known as negative thermal expansion, was correlated with changes in electronic structure (Sanchez et al., 2009b). Molecular dynamics simulations of a 10-atom platinum cluster supported on γ-alumina determined that the bonding between the cluster and the support was dynamic in nature.

Understanding Defects

γ-Alumina is an interesting support at an atomistic level because it has a great many defined defects created by oxygen atom vacancies that cause electronic perturbations in the support. These perturbations occur on a scale that is of the same order as the size of the platinum clusters and therefore perturb the static disorder of the clusters on the support. The level of disorder in these structures is also highly sensitive to nanoparticle size and the presence of reactive gas.

In related work, a detailed modeling study, combined with nano-area coherent electron diffraction data of gold nanoparticles, revealed that the vertices of gold clusters are deformed more than expected in an “ideal” structure. This finding makes sense, Nuzzo noted, because atoms at the vertices have the lowest coordination numbers, and, as a result, their structural relaxations are most profound (Huang et al., 2008). This type of structural behavior has been very hard to characterize in the past.

Nuzzo also discussed work done on the impregnation and reduction of an iridium and platinum bimetallic catalyst supported on γ-alumina. He showed images detailing the atomic-level structure of this system. The support lattice was observable and identifiable in these images to be near the metal clusters and aligned with the zone axes. These images, he said, illustrate that it is now possible to directly correlate specific lattice planes in face-centered cubic structures, which are essentially single crystals, and to map them onto specific orientations of the γ-alumina structure.

Another technique that researchers are putting to use in atomic-level studies is electron energy loss spectroscopy (EELS), which can be used to characterize the electronic structure of a material at the atomic level. Used with an aberration-corrected microscope, EELS can elucidate the patterns of charge transfers and can identify regions in a catalyst support that are not homogeneous. Nuzzo showed images of a gold cluster on a titanium dioxide support that clearly identify areas in the lattice that contain a disproportionate number of titanium(III) centers located under the gold clusters (Sivaramakrishnan et al., 2009). He noted that these types of studies are providing information about the nature of catalyst-support interactions and their structural and electronic consequences, which have been the “dark matter of catalysis.”

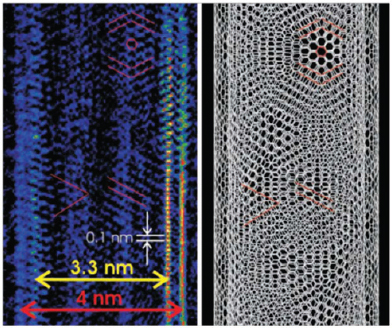

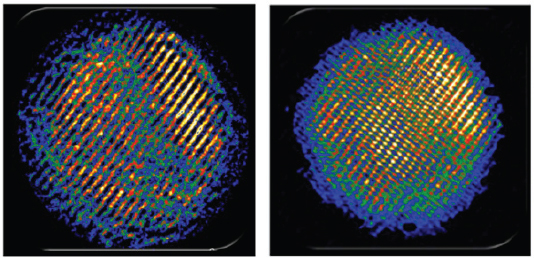

Turning Low-Resolution into High-Resolution Images

As a final example, Nuzzo described the use of coherent diffractive imaging to provide atomic-resolution structural determinations even when an atomic-resolution imaging lens is not available. Using synergistic information from electron diffraction and low-resolution images, Nuzzo’s team was able to reconstruct an image showing the chirality and registration of the two concentric walls of a double-walled carbon nanotube (Figure 3-5; Zuo et al., 2003). He also showed a low-resolution image of a cadmium selenide quantum dot and the subsequent, far more detailed, image that had been refined using diffractive imaging (Figure 3-6). In the latter image, the resolution was sufficient to see the separation between cadmium and selenium atoms (Huang et al., 2009).

Nuzzo explained that there are still some important limitations to current analytical techniques that point to future directions for research. Current methods can reveal atomic structure, speciation of elements at the nanoscale, and electronic structure at the atomic scale. However, methods for following structural dynamics are still needed, because current methods provide limited or no temporal resolution to monitor ongoing processes. Also needed is the ability to characterize adsorbate-interface bonding at atomic resolution, particularly in terms of the dynamics of that bonding given that clusters are vibrating and moving, not merely sitting, on the support structure. Finally, there is a need to better merge theory and experimentation to sort out the many atoms and many excited states that actually exist on the catalyst’s surface.

FIGURE 3-5 Coherent diffractive imaging reveals the chirality and registration of the two walls of a double-walled carbon nanotube.

SOURCE: Zuo et al., 2003.

FIGURE 3-6 Coherent diffractive imaging was used to refine a low-resolution image of a cadmium selenide quantum dot (left) and to enable visualization of the separation between atoms in the crystal.

SOURCE: Huang et al., 2009.

PARTICLE CHARACTERIZATION NEEDS FOR NANOCOMPOSITES

Lee Silverman of DuPont’s Central Research and Development Laboratory provided an industrial perspective on the kinds of tools needed to analyze nanomaterials. Nanotechnology, he said, is a huge field that includes nanostructured materials, nanotextured surfaces, nanoscale-thick surface films, nanoscale devices, and nanoparticles. “DuPont’s interest is in adding nanoparticles to polymers to try to augment the properties of already existing polymer platforms and extend the material applications,” said Silverman. “We believe that manipulation of materials on a very fine scale is broadly applicable across all sorts of material platforms, and nanotechnology enables you to combine different property sets into specific materials.”

As examples, Silverman said that nanoparticles added to a polymer can improve its rheological properties in the molten state and its mechanical properties once the material has cooled. Nanomaterials can add barrier properties to a film while enabling it to remain transparent. For single property materials, turning to nanomaterials is not necessary. Aluminum, for example, makes a great conductive film, and metal films in general make superior barriers.

Particle dimensionality plays a large role in defining the bulk physical properties that a nanomaterial can add to the material being designed, and Silverman briefly described the design rules that come from that relationship. “If you want transparency or photonic properties, you use spheres,” he explained. “If you want electrical conductivity or thermomechanical behavior, you use rods. If you want barrier properties or flame retardant properties, you turn to plates.” Once the nanomaterial is chosen, the polymer chemist selects the polymer that will serve as the matrix based on other physical properties such as temperature capability or tribological properties. The real work, said Silverman, comes in developing the nanocomposite so that it has the desired properties and is manufacturable. “Anyone who’s done polymer processing understands that it’s really easy to take an extruder that’s full of polyester, throw clay in it, and make something that comes out with the mechanical properties of chalk and not useful to anybody.” In the end, nanocomposite systems require compatible particles, polymers, and processes.

Probing Complex Materials

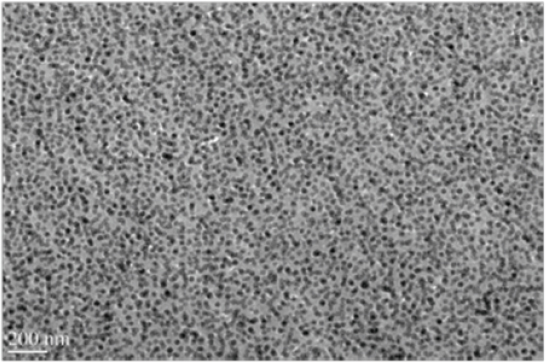

FIGURE 3-7 Silica nanoparticles in polystyrene.

SOURCE: Silverman, 2010.

Although it is interesting scientifically to examine single particles, polymer chemists are more interested in materials with high loadings of the nanoscale filler, and analysis at this level is very difficult (Figure 3-7). It would be useful to know the spacing of the particles in a matrix, and researchers have tried to use small-angle x-ray scattering (SAX) to get at the microstructure of a heavily loaded polymer. However, SAX provides only limited detail when an 80-nanometer-thick film is loaded with 20-nanometer-diameter particles, and it yields little information about how the particles are spatially organized. Silverman also noted that transmission electron tomography is useless in this type of system because the particles are too concentrated.

In his research, Silverman is most interested in plates and rods, because he is concerned with creating nanocomposites with useful mechanical characteristics such as barrier or permeability properties for gases and liquids. Studies on permeability conducted in the late 1960s showed that relative permeability falls substantially as the aspect ratio of the nanorod increases (Nielsen, 1967). Because aspect ratio is the key feature, it would be desirable to have a technique for measuring the aspect ratio in a clay nanocomposite, but such a technique does not exist. Silverman cited another example, this one from the mid-1990s, of a model that relates the percolation threshold of a composite to the ellipsoid aspect ratio of the filler particles as they progress from plates to spheres to rods (Garboczi et al., 1995). The most complete picture of such composites covers spheres, but spheres are of little interest as barriers. The problem arises when trying to measure the aspect ratio of plates or rods that are buried inside a composite. Other properties, such as electrical conductivity, thermal conductivity, and elastic modulus, also require particles with larger aspect ratios, not spheres.
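The dependence of permeability on aspect ratio can be illustrated with a minimal sketch. It assumes one commonly quoted form of the tortuous-path model associated with Nielsen (1967), in which the filler is treated as aligned, impermeable platelets lying perpendicular to the diffusion direction. The function name, the parameter names, and the 5 percent loading used in the loop are illustrative choices, not values from the workshop.

```python
def nielsen_relative_permeability(volume_fraction: float, aspect_ratio: float) -> float:
    """Relative permeability P/P0 of a filled polymer (tortuous-path sketch).

    Assumes aligned, impermeable platelets; aspect_ratio is platelet
    length divided by thickness, volume_fraction is the filler loading (0 to <1).
    """
    if not 0.0 <= volume_fraction < 1.0:
        raise ValueError("volume_fraction must be in [0, 1)")
    if aspect_ratio <= 0.0:
        raise ValueError("aspect_ratio must be positive")
    # Tortuosity factor: how much longer the diffusion path becomes
    # because the permeant must travel around the platelets.
    tortuosity = 1.0 + 0.5 * aspect_ratio * volume_fraction
    # The numerator accounts for the polymer volume displaced by the filler.
    return (1.0 - volume_fraction) / tortuosity


if __name__ == "__main__":
    # Hypothetical 5 percent filler loading, increasing aspect ratio.
    for aspect_ratio in (1, 10, 100, 1000):
        ratio = nielsen_relative_permeability(0.05, aspect_ratio)
        print(f"aspect ratio {aspect_ratio:>4}: P/P0 = {ratio:.3f}")
```

Under these assumptions, raising the aspect ratio from 10 to 100 at a fixed 5 percent loading lowers the relative permeability from about 0.76 to about 0.27, which is why an unmeasurable aspect ratio leaves the barrier performance of a real composite hard to predict.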

Environment, Health, and Safety

The other issue that DuPont worries about, said Silverman, is environment, health, and safety, along with product stewardship. “We believe that we are going to need to understand these materials very well before we start putting them in consumer products. We just cannot risk having another ‘asbestos’ or another kind of incident like that,” he explained. For materials that may shed fibers, that understanding must include a complete characterization of fiber dimensions and biopersistence, which are key factors in determining the pathogenicity of a fiber. Silverman believes that no usable techniques exist today that can provide those data for materials that are densely packed with nanomaterials. Many techniques are available for studying dry or dispersed spherical particles, but spheres are not very useful in making high-performance nanocomposites. For rods and plates, scanning electron microscopy (SEM) can provide some information, but only if the particles are in specific orientations and in dilute solution. SEM is not useful for composites. Transmission electron microscopy and SAX are more useful, but the information they generate is only helpful if the plates or rods are aligned in the sample.

Particle size distribution represents just one level of complexity in the analytical challenges found in dealing with composites. The next level adds in multimodal distributions of different types of particles or differently shaped particles. Particles can also be bent and have kinks, which makes them very interesting from the perspective of creating a nanocomposite but introduces still another level of complexity that cannot yet be analyzed at any satisfactory level. Certainly, Silverman noted, this field is hampered by a lack of the kind of physical characterization data that would enable a polymer chemist to predict how any given composite will behave. Silverman summarized the situation by stating that particle shape, size, and size distribution are critical determinants not only for creating useful materials but also for understanding how they will behave from an environment, health, safety, and stewardship perspective. “We know how to characterize monodispersed spherical systems, but characterization of plate and rod-like materials is onerous at best, and is almost impossible in real nanocomposites,” he stated. He added that surface chemistry of nanocomposites is another area that must be better understood, and one that also suffers from a lack of analytical techniques applicable to real-world materials.

An audience member from the National Institute of Standards and Technology (NIST) commented that NIST has developed some special techniques for making subsurface measurements in some types of composites using scanning electron microscopy and scanning probe microscopy.

QUANTIFYING THE CHEMICAL COMPOSITION OF ATMOSPHERIC NANOPARTICLES

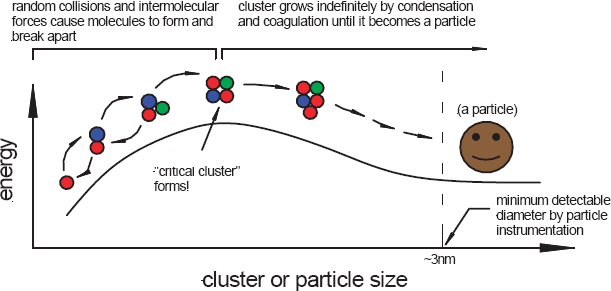

James Smith, of the U.S. National Center for Atmospheric Research (NCAR) and a visiting professor, addressed the phenomenon of new particle formation in the atmosphere and the recent progress that has been made in quantifying the composition of these spontaneously formed nanoparticles. Nanoparticles form in the atmosphere by condensation onto stable clusters formed by nucleation (Figure 3-8), or they can be emitted directly from sources such as diesel engines.

According to the theory of classical nucleation, particle formation is an endothermic process that creates a stable cluster in the atmosphere. Because the process is endothermic, molecules that collide and stick to one another tend to fall apart, and so the key is to cause enough collisions to occur that a pair is formed, then a triplex, and so on, until a “critical cluster” is formed. This cluster can contain any number of different compounds. Smith explained that sulfuric acid is a particularly sticky molecule in the atmosphere and that it plays a key role in the formation of critical clusters. Once a critical cluster has formed, any additional collisions involving the cluster will actually cause the particle to grow, which may be a rapid process, although it depends on other factors including constraints in kinetics, concentration and chemical nature of gaseous species, and particle surface properties. A post-doctoral fellow in Smith’s group at NCAR has developed a unique instrument that for the first time provides direct chemical measurements of neutral clusters in the atmosphere.

FIGURE 3-8 Formation of atmospheric nanoparticles by classical nucleation.

SOURCE: Smith, 2010.

Smith asked, “Why should we care about new particle formation?” To answer that question, he presented data from the Po Valley in Italy, which is a heavily polluted region. At one time, researchers thought that particle formation would not occur in such heavily polluted areas, because existing aerosols in the local environment would capture all of the small clusters before they could grow large enough to act as nucleation centers. That idea was proven wrong when it was observed that there can be sudden bursts of particle formation when the atmospheric boundary layer (the part of the troposphere closest to the Earth’s surface) lifts in the afternoon. Particle formation can produce as many as 100,000 particles/cm³, and growth can be as rapid as 20 nanometers/hour. At 100 nanometers in size these particles can then act as nuclei for cloud droplet formation. Researchers have been modeling this event. They estimate that new particle formation can contribute up to 40 percent of the cloud condensation nuclei in the boundary layer and up to 90 percent in the remote troposphere. Given these numbers, said Smith, “It is imperative to understand this growth event and be able to predict it in models in order to actually get at the role of aerosols in climate.”

The real mystery is why these nanoparticle growth rates are so high. Smith asked, “What species, other than sulfuric acid, contributes to this remarkable growth?” He presented a collection of observations that detail the growth rates of these particle formation events and make clear that something other than sulfuric acid is involved. These events occurred in a wide range of environments from around the world, from Tecamac, Mexico, near Mexico City, to McCrory Island in the South Pole. “What these data show, universally, is that the growth rates are between a factor of 2 and a factor of 50 higher than is predicted by the only species we really know with 100 percent certainty contributes to growth, and that’s sulfuric acid,” said Smith. “So the question is, what species are contributing to this?”
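To make the reported growth numbers concrete, the following minimal sketch estimates how long a newly formed particle needs to reach the 100-nanometer size at which it can seed cloud droplets. It assumes a constant growth rate and a hypothetical starting diameter of 1 nanometer, neither of which comes from Smith's presentation; real growth rates vary with particle size and vapor concentrations. The function and parameter names are illustrative.

```python
def hours_to_reach(target_nm: float, growth_rate_nm_per_h: float,
                   start_nm: float = 1.0) -> float:
    """Hours needed to grow from start_nm to target_nm at a constant rate.

    The 1 nm default starting diameter is an assumed, illustrative value.
    """
    if growth_rate_nm_per_h <= 0.0:
        raise ValueError("growth rate must be positive")
    if target_nm < start_nm:
        raise ValueError("target diameter must not be below the start diameter")
    return (target_nm - start_nm) / growth_rate_nm_per_h


if __name__ == "__main__":
    observed_rate = 20.0  # nm/hour, the rapid growth quoted for the Po Valley
    print(f"observed rate: {hours_to_reach(100.0, observed_rate):.2f} h")
    # Growth predicted from sulfuric acid alone is 2 to 50 times slower.
    for factor in (2, 50):
        slow = hours_to_reach(100.0, observed_rate / factor)
        print(f"{factor}x slower: {slow:.1f} h")
```

With these assumptions, the observed rate brings a particle to cloud-nucleus size in about 5 hours, whereas a rate 50 times lower would take roughly 10 days, long enough that most small clusters would be scavenged by existing aerosol first.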

Uncovering the Role of Amines

The challenge in searching for these mystery agents is that the quantities of material that need to be analyzed fall in the picogram range. Ideally, Smith will collect about 15 picograms of 5-nanometer particles, but at best, he will collect 800 picograms of 20-nanometer particles. To analyze these samples, his team has developed an instrument they call the thermal desorption chemical ionization mass spectrometer (TD-CIMS) for characterizing the composition of 8- to 50-nanometer particles. After describing how the instrument works, Smith presented data produced by the instrument from 20-nanometer particles sampled in Atlanta, a strongly sulfur-dominated environment. The instrument revealed large amounts of sulfate compounds, as expected, but also dimethylamine. Smith repeated these measurements on particles collected at Hyytiälä Forestry Field Station in Finland, where his team found large amounts of aminium ions. Indeed, measurements from all of the sites his team visited revealed the presence of amines. From these observations, he concluded that aminium salt formation is an important mechanism that accounts for 10 to 50 percent of new nanoparticle growth in the atmosphere (Smith et al., 2010).

Smith concluded by stating that acid-base chemistry plays an important role in the formation and growth of these new particles and that amines appear to be important compounds involved in new particle growth. “Time and time again,” he said, “we’re starting to hear in the atmospheric aerosol field about the growing awareness of the impact of amines on atmospheric aerosol formation.” But despite this awareness, very little is known about amines, where they come from, and what their fate is in the atmosphere. Acquiring that information is critical to understanding their impact on the environment and climate, and that, explained Smith, requires more and better atmospheric measurements.
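The picogram sample masses quoted above can be put in perspective by estimating how many particles they represent. The sketch below assumes spherical particles and a hypothetical density of 1.5 g/cm³; the density is an illustrative assumption, not a value reported by Smith, and the function names are likewise made up for this example.

```python
import math


def particle_mass_pg(diameter_nm: float, density_g_cm3: float) -> float:
    """Mass of one spherical particle in picograms."""
    diameter_cm = diameter_nm * 1e-7          # 1 nm = 1e-7 cm
    volume_cm3 = math.pi / 6.0 * diameter_cm ** 3
    return volume_cm3 * density_g_cm3 * 1e12  # 1 g = 1e12 pg


def particles_in_sample(sample_mass_pg: float, diameter_nm: float,
                        density_g_cm3: float = 1.5) -> float:
    """Number of identical particles needed to make up sample_mass_pg.

    The default density of 1.5 g/cm^3 is an assumed, illustrative value.
    """
    return sample_mass_pg / particle_mass_pg(diameter_nm, density_g_cm3)


if __name__ == "__main__":
    for mass_pg, diameter_nm in ((15.0, 5.0), (800.0, 20.0)):
        count = particles_in_sample(mass_pg, diameter_nm)
        print(f"{mass_pg:g} pg of {diameter_nm:g} nm particles: about {count:.2e} particles")
```

Under these assumptions, both the 15-picogram sample of 5-nanometer particles and the 800-picogram sample of 20-nanometer particles correspond to on the order of 10⁸ particles, because particle mass scales with the cube of the diameter.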

Discussion

Doug Tobias of the University of California, Irvine, asked if the sources of these amines are being worked out. Smith replied that currently there is no real idea of where they originate.² However, he said a new instrument can measure amines in the gas phase. The data from this instrument show that the sum of all the amines is about the same as the total concentration of ammonia in the atmosphere. Work from his team suggests that when an ammonium sulfate aerosol is exposed to gaseous amines, the amines can partition into the aerosol and displace the ammonia, producing an aminium sulfate aerosol. Observations by Smith’s team and others suggest that agriculture may be a significant source of amines in some parts of the United States and the rest of the world. Amine levels also show diurnal variation, which Smith hypothesized might be related to temperature control.

PARTICLE DESIGN AND SYNTHESIS FOR CATALYSTS

Abhaya Datye of the University of New Mexico reiterated the importance of catalytic technologies to the U.S. economy. Catalysts, he said, are engines that operate at the nanoscale and generate more than $1 trillion in economic activity in the United States each year. Although many people imagine catalytic reactors as being enormous, on the scale of a chemical refinery, they come in all sizes, some as small as the battery that fits in a laptop. In fact, one company has developed a catalytic fuel cell designed to power a typical laptop for about 20 hours. Nonetheless, most catalysts, certainly in terms of volume, are used in large-scale chemical production where it might take 6 months to produce one batch of catalyst needed to turn natural gas into liquid fuels on a scale of metric tons. Given that scale, it is critically important to be able to make catalysts, which are composed of complex nanoparticles, in a highly reproducible manner, which requires the ability to characterize catalysts in great detail.

Probing the Interactions Between Catalyst and Support

Nanoparticles make good catalysts because they provide large surface areas on which both catalysis and contact between the particles and their support material can occur. The latter is critical because many catalysts are bifunctional and require the participation of both nanoparticle and support to drive catalysis. Datye noted that recent work with gold nanoparticles on a titanium dioxide support showed unexpected activity in catalyzing oxidation of carbon monoxide. This activity peaked at a particle size of 3 nanometers, suggesting that some interesting interactions occur between the particles and the support. Because this reaction took place at room temperature and because gold is less expensive than platinum, there is a significant incentive to characterize the nanoparticle-support interactions to better understand how to make use of this discovery. In reviewing the challenges to catalyst characterization, Datye said, “Of course, we want to know the size, shape, bulk and surface structure, composition, oxidation state, and the location of individual atoms.” In particular, catalyst researchers would like to pinpoint the location of promoter atoms that are present at parts per million levels and have a significant impact on a catalyst’s behavior. Catalyst designers also want a better understanding of the interface between the nanoparticle and its support, as well as the location of nucleation sites and atom trapping sites, all under reaction conditions. This is a difficult challenge, although the development of aberration-corrected transmission electron microscopy (TEM) will help the field tremendously. So, too, will recent advances in performing in situ TEM at pressures up to 1 bar in closed cells, and in energy dispersive x-ray spectroscopy (EDS) and EELS. Datye presented a few examples of how these techniques have been used to study catalytic systems. 
One example showed how aberration-corrected TEM was used to reveal surface features on a 6-nanometer platinum nanoparticle (Gontard et al., 2007). From such images it is possible to see steps on the particle’s surface and therefore to determine how the facets of the nanoparticle interconnect. These interconnects are important features because they are where some of the most active catalytic sites may lie. Annular dark-field electron microscopy is another useful technique that provides atom-by-atom structural and chemical information. Images of the atoms on a single sheet of boron nitride clearly show the location of boron, nitrogen, carbon, and oxygen atoms (Figure 3-9) (Krivanek et al., 2010). The images were acquired at a relatively low primary voltage of 60 kilovolts. To really understand how a catalyst works, it is necessary to obtain structural information at the single atom level. Aberration-corrected TEM images combined with EELS data can provide such information. Datye described how single lanthanum atoms were imaged inside the bulk structure of a calcium titanium oxide support (Varela et al., 2004). The small size of the EELS probe allowed the material to be scanned column by column to determine the chemical signature of each atom and its state.

_________________________

2 After the workshop was held, new information on atmospheric amines became available. For example, see Ge, X., A. S. Wexler, and S. L. Clegg, 2011. Atmospheric amines—Part I. A review. Atmospheric Environment 45(3):524–546.

FIGURE 3-9 Annular dark-field scanning transmission electron microscope image of monolayer boron nitride (BN): (a) as recorded, and (b) corrected for distortion, smoothed, and deconvolved. The area circled on the right in (a) indicates a single hexagonal ring of the BN structure, which consists of three brighter nitrogen atoms and three darker boron atoms. The circle on the left indicates a deviation from the pattern. Inset at top right in (a) shows the Fourier transform of an image area away from the thicker regions. Its two arrows point to reflections of the hexagonal BN that correspond to recorded spacings of 1.26 and 1.09 Å. The image was recorded at 60 kV primary voltage, and the probe size was about 1.2 Å.

SOURCE: Reprinted with permission, Krivanek et al., 2010.

Catalysts by Design

Given this level of detailed structural information, the next step, Datye explained, is to use the information to control the features of a catalyst by design. And, in fact, several groups have been able to do just that. For example, Greeley and colleagues were able to design alloys of platinum and early transition metals that were superior oxygen reduction electrocatalysts compared to platinum alone (Greeley et al., 2009). Key to the effort’s success was the careful design of the catalyst’s surface. Wang and colleagues created a multi-metallic gold, iron, and platinum nanoparticle that proved to be a highly durable electrocatalyst (Wang et al., 2011). In this case, the deposition of 1.5-nanometer iron and platinum particles on gold yielded nanoparticles with five-fold symmetry, a structure not seen in bulk platinum materials and one with many exposed facets at which catalysis can occur. This structure was far more stable under catalytic conditions than one constructed from pure platinum particles on a carbon support. The final step in intentional catalyst design is controlling the site of nucleation; that is, controlling the exact placement of catalytic nanoparticles on the support surface. Datye, for example, is working with graphene sheets that have corrugations on the order of an angstrom and is using those corrugations as nucleation sites to anchor ruthenium nanoparticles. He said Farmer and colleagues have capitalized on information about the atomic-level energetics of cerium to stably anchor small gold nanoparticles (Farmer and Campbell, 2010). In real-life application, catalysts undergo changes over their lifetime. For example, by the end of its lifetime, the platinum in an automobile catalytic converter no longer disperses evenly over the support but agglomerates in clumps. It would be useful to understand the mechanism by which the platinum no longer takes the form of a nanoparticle. 
Using in situ TEM to study this process, Datye has discovered that the clumps appear to form via Ostwald ripening. He explained that it is actually possible to see particles disappearing rapidly and that, by observing this happening many times, he concluded that the particles are not evaporating, which would happen over a much longer time period. Instead, he believes that the particles emit atoms onto the support, and that these atoms then diffuse across the support surface. The atoms then form clusters, a few atoms across, at step edges on the support surface in much the same way that blowing leaves collect against a curb. As the clusters grow, they become pinned against these edges and eventually become the clumps seen in an aged catalyst. As a final example of the type of structural detail that modern microscopy can provide, Datye described the use of high-angle annular dark-field scanning transmission electron microscopy to produce tomographic images of nanostructured heterogeneous catalysts. In one study, the investigators compared the dispersion of platinum and rhenium nanoparticles on two different supports. On a typical Vulcan carbon black support used in fuel cells, particles were found in localized areas, but on Norit activated carbon the particles were distributed evenly throughout the support. This suggests that activated carbon provides more nucleation sites to form smaller clusters of metal. To summarize, Datye said that these developments in electron microscopy are providing unprecedented insights into the structure of these catalysts. “As we develop better strategies, we should be able to make these catalysts more stable and more active,” he said.

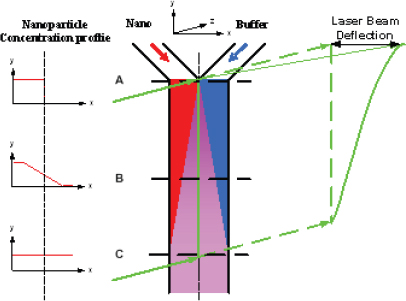

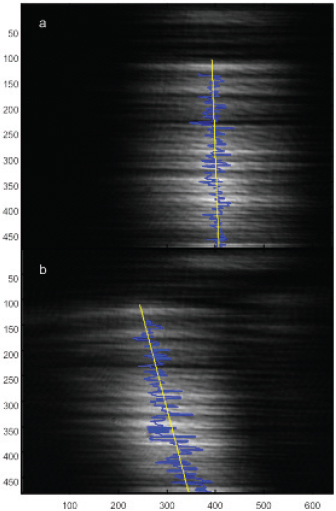

In his presentation, Yi Qiao of the 3M Corporate Research Process Laboratory discussed some of the challenges facing those who need to characterize nanoparticle dispersions used in industrial applications. He said there is a disconnect between what academia uses to make such measurements and what industry needs to help its efforts in process control and quality monitoring. To meet the needs of a manufacturing environment, a measurement technique must be fast enough to provide feedback on a meaningful timeframe, have few restrictions for sample preparation in terms of nanoparticle concentration and purity, and be able to distinguish “good” from “bad” so a line operator can make necessary adjustments to the manufacturing process in real time. To address that disconnect, Qiao and his colleagues at 3M have developed two techniques that are now used in manufacturing plants for process monitoring and feedback control. The first technique uses a device called a microfluidic Y-cell (Figure 3-10). This device takes advantage of the fact that fluid flows through a microfluidic device in laminar mode; that is, two fluid streams flowing next to one another will not mix. When a nanoparticle-loaded fluid is introduced next to a buffer solution at one end of a microfluidic channel and the fluids are allowed to flow through the channel, the only nanoparticles that enter the buffer stream will be those that diffuse into it. The diffusion coefficient, which reflects the size of a nanoparticle, can then be measured by passing a laser beam through the microfluidic channel (Figure 3-11).

This very simple technique provides a measure of nanoparticle size in real time. A small amount of a process stream can be diverted into the Y-cell device, providing a line operator with the information needed to make adjustments to a process in real time to ensure particle size falls within the desired parameters. The technique, however, works best with particle sizes less than 15 nanometers in diameter. For larger particles, diffusion occurs too slowly for the device to measure size changes within a useful timeframe.

Dielectrophoresis is proving useful for characterizing larger particles, even in the micron range, in manufacturing settings. Qiao and colleagues used microfabrication techniques to create an electrode array that can trap nanoparticles when an alternating electric field is applied to the array. Once the nanoparticles are trapped, the electric field is turned off and the particles are allowed to diffuse, washing out the density gradient that was created by the electric field. A laser beam is then used to measure the speed with which the particles move across the gradient. Key to this device is an electrode array capable of generating an electric field gradient large enough to overcome Brownian motion. Because the strength of the electric field is controllable, it is possible to measure both nanoparticle aggregates and individual nanoparticles in real time.

FIGURE 3-10 Microfluidic Y-cell for nanoparticle size measurement.

SOURCE: Qiao, 2010.

FIGURE 3-11 In a microfluidic Y-cell, a laser beam shows no deflection prior to the admission of nanoparticles into the channel (top). Once nanoparticles are introduced into the channel, the laser beam is deflected with a slope that reflects the size of the nanoparticles.

SOURCE: Qiao, 2010.
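The link between diffusion coefficient and particle size that the Y-cell measurement relies on can be illustrated with the Stokes-Einstein relation, d = k_B T / (3πηD). The sketch below is not the 3M implementation; it assumes spherical particles in water at 25 °C, and the 50-micrometer diffusion distance is a hypothetical value chosen only for illustration. Under those assumptions it suggests why diffusion-based sizing becomes slow for larger particles: the characteristic diffusion time grows in proportion to particle diameter.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def diffusion_coefficient(diameter_m, temperature_k=298.15, viscosity_pa_s=8.9e-4):
    """Stokes-Einstein diffusion coefficient (m^2/s) of a sphere.

    Defaults assume water at 25 C (viscosity about 0.89 mPa*s).
    """
    return K_B * temperature_k / (3.0 * math.pi * viscosity_pa_s * diameter_m)


def hydrodynamic_diameter(diff_coeff_m2_s, temperature_k=298.15, viscosity_pa_s=8.9e-4):
    """Invert Stokes-Einstein: diameter (m) from a measured diffusion coefficient."""
    return K_B * temperature_k / (3.0 * math.pi * viscosity_pa_s * diff_coeff_m2_s)


def diffusion_time(distance_m, diff_coeff_m2_s):
    """Characteristic one-dimensional diffusion time, t = x^2 / (2 D), in seconds."""
    return distance_m ** 2 / (2.0 * diff_coeff_m2_s)


if __name__ == "__main__":
    distance = 50e-6  # hypothetical diffusion distance across the channel, m
    for d_nm in (5, 15, 100, 1000):
        d_coeff = diffusion_coefficient(d_nm * 1e-9)
        t = diffusion_time(distance, d_coeff)
        print(f"d = {d_nm:5d} nm  D = {d_coeff:.2e} m^2/s  t = {t:8.1f} s")
```

In a real device, the diffusion coefficient would come from the measured laser deflection rather than from an assumed diameter; `hydrodynamic_diameter` shows the inverse step that would turn such a measurement into a size estimate.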

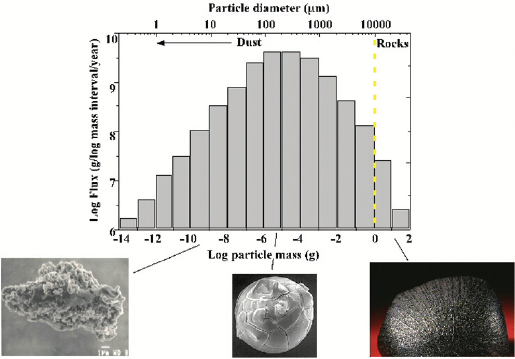

DECODING THE UNIVERSE AT THE NANOSCALE

Rhonda Stroud of the Naval Research Laboratory explained that the Navy has long been interested in nanoparticles, primarily for their application as propellants, in photovoltaics, and as fuel cell catalysts. As a result, she has developed a wide range of tools for analyzing the composition of nanoparticles. Some of these methods have proven useful for studying the cosmic origins of the 40,000 tons of extraterrestrial dust that enters Earth’s upper atmosphere annually. Although this type of analysis may seem far afield, the challenges to characterizing these types of nanoparticles are the same as those for environmental and engineered nanoparticles. Nanoparticles form in large quantities around dying stars and in interstellar gas clouds. Most of the particles that bombard Earth ablate in the upper atmosphere. In particular, the particles in the 100-micron range, which make up most of the dust’s mass, vaporize completely (Figure 3-12). Some of this vapor recondenses to form individual nanoparticles in the upper atmosphere. These nanoparticles are an important source of iron and possibly sodium; therefore, they may play an important role in climate processes.

Stroud and her collaborators at NASA would like to answer three important questions about cosmic dust using state-of-the-art analytical tools:

- Did the dust form in our solar system or around another star?

- How did the dust form?

- Is it pristine or has it been heated, shocked, irradiated, or otherwise altered?

Cosmic nanoparticles have a variety of compositions. Most are silicates, although nanodiamonds may in fact be more abundant. Research has identified dust particles made of silicon carbide, magnesium aluminum oxide in spinel form, graphite, aluminum, calcium-aluminum oxides, and silicon nitride. “The majority of the materials analyzed so far are refractory-type things, essentially interstellar sandpaper materials,” said Stroud. “This is part of why they’ve survived 4.5 billion years.”

Snapshots of Single Grains of Dust

One approach to studying cosmic nanoparticles is to map the isotopic signature of individual grains of a meteorite. Stroud and colleagues have developed methods for using a focused ion beam to slice particles as small as 200 nanometers and a combination of Z-contrast imaging and EDS to measure the elemental composition of the exposed grains (Stroud et al., 2004). She showed an image of a silicon carbide nanoparticle with an isotopic signature indicating it came from a nova star (Figure 3-13). The image also revealed a number of subgrains that Stroud presumes came from the same star because they were trapped inside this nanoparticle. However, the subgrains are below the size limit at which she can measure individual isotopes to confirm their origin.

FIGURE 3-12 The flux of extraterrestrial dust.

SOURCE: Stroud, 2010.

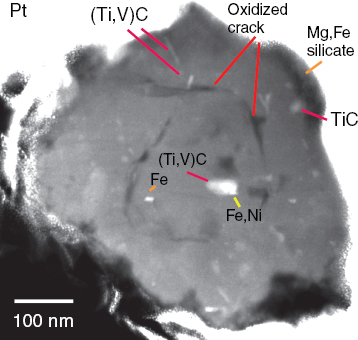

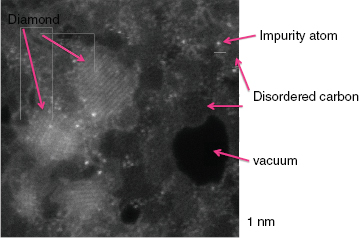

Stroud explained that the SEM instruments have a beam spot size of approximately 100 nanometers, and although it is possible to make the spot smaller, it has to be big enough to capture sufficient numbers of atoms to make an accurate isotopic measurement. “Mostly we’re looking for a few rare isotopes, and there are just not enough atoms present in a 50-nanometer particle in general to get a good isotopic measurement,” she explained. Analyzing the origins of individual nanodiamonds, which average about 2 nanometers in diameter, is therefore challenging. In 1987, researchers reported identifying nanodiamonds with an isotopic signature indicating they were formed outside of the solar system (Daulton et al., 1996). However, these were bulk average measurements, and the nanodiamonds were identified on the basis of signatures in krypton and xenon isotopes. “The problem here is that there is only one xenon atom for 10⁵ of these nanodiamonds, so it is not clear which fraction of those nanodiamonds really formed inside our solar system and which came from supernova or somewhere else,” explained Stroud. “We would really love to be able to go in and locate the individual xenon atom and say, Aha! That one is probably from a supernova.” Identifying nanodiamonds as coming from outside of the solar system is also problematic because spectroscopic studies since 1987 have consistently demonstrated that there is a soot-like component on the nanodiamonds. Stroud recounted a variety of microscopy studies showing that nanodiamond particle aggregates contain multiple phases of poorly ordered carbon sheets, agglomerated nanodiamonds, and what appears to be ordered graphite. To accurately characterize the nanodiamonds, Stroud needed a better microscope. The new aberration-corrected NanoSTEM microscope at Oak Ridge National Laboratory (ORNL) fits the bill. 
Using this instrument, she and her collaborator at ORNL produced images with subnanometer resolution that clearly identified the various phases of carbon present as well as individual impurity atoms (Figure 3-14). These images showed that the nanodiamonds contain impurities ranging from fluorine and neon to vanadium and chromium, but nothing nearly as heavy as xenon. The presence of individual neon atoms in the secondary phases of carbon, and not in the nanodiamonds, argues for a supernova origin for that material.
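Stroud's point about particle size and isotopic precision can be made concrete with a rough atom count. The following sketch is illustrative only: it assumes a spherical particle with the bulk density of diamond (3.51 g/cm³) and simple Poisson counting statistics, and the isotope fraction and detection efficiency used in the example are hypothetical placeholders rather than values from the talk.

```python
import math

AVOGADRO = 6.02214076e23  # atoms per mole


def atoms_in_sphere(diameter_nm, density_g_cm3=3.51, molar_mass_g_mol=12.011):
    """Approximate number of atoms in a solid sphere.

    Defaults describe diamond (carbon); bulk density is assumed to hold
    at the nanoscale, which is only a rough approximation.
    """
    radius_cm = diameter_nm * 1e-7 / 2.0
    volume_cm3 = (4.0 / 3.0) * math.pi * radius_cm ** 3
    return volume_cm3 * density_g_cm3 / molar_mass_g_mol * AVOGADRO


def relative_counting_error(n_counts):
    """Poisson-limited relative uncertainty, 1 / sqrt(N)."""
    return 1.0 / math.sqrt(n_counts) if n_counts > 0 else math.inf


def rare_isotope_counts(diameter_nm, isotope_fraction, detection_efficiency):
    """Expected detected counts of a rare isotope and the resulting relative error."""
    counts = atoms_in_sphere(diameter_nm) * isotope_fraction * detection_efficiency
    return counts, relative_counting_error(counts)


if __name__ == "__main__":
    fraction = 1e-3    # hypothetical abundance of the isotope of interest
    efficiency = 1e-2  # hypothetical fraction of atoms actually detected
    for d_nm in (2, 50, 200):
        counts, err = rare_isotope_counts(d_nm, fraction, efficiency)
        print(f"d = {d_nm:3d} nm  atoms = {atoms_in_sphere(d_nm):.2e}  "
              f"counts = {counts:.2e}  relative error = {err:.2%}")
```

Under these assumptions a 2-nanometer diamond sphere holds only several hundred carbon atoms, so any isotope present at trace levels is expected to yield less than one detected count per grain, which is consistent with the need for bulk measurements described above.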

FIGURE 3-13 Z-contrast imaging and energy dispersive spectroscopy reveal subgrain structure and elemental composition of a silicon carbide cosmic nanoparticle.

SOURCE: Stroud, 2010.

Stroud used EELS measurements to further characterize the sheet-like or sub-nanometer-thick layer of carbon associated with the nanodiamonds. She found that the electronic profile of sheet-like carbon was spatially distinct from nanodiamond surfaces in the agglomerate that makes up the dust particle. Inside diamond, however, the electronic signature was distinctively that of diamond. These data suggest that the nanodiamonds did not form in the supernova, but, rather, in the interstellar medium. Flash heating of organic matter would have converted some of that matter to nanodiamond and some to amorphous forms of carbon. In summary, Stroud said that the problem of performing multiple, coordinated, nanoscale analyses on particles 200 nanometers and larger has been solved. “We can pick up individual grains, do the isotope measurements, do transmission microscopy, do whatever we like,” she said. “It gets a lot harder to do multiple analyses when you get below 200 nanometers.” Aberration-corrected electron microscopes are effective tools for doing atomic-scale characterizations on periodic or ordered samples with well-constrained impurities. Conducting such analyses on natural samples, where there may be large numbers of different elements present and phase mixtures, some of which are disordered, is more difficult but can be done with the right preparation and patience.

FIGURE 3-14 Dark-field scanning transmission electron micrograph of cosmic particles from the Murchison meteorite.

SOURCE: Stroud, 2010.

Discussion

When asked by Barbara Finlayson-Pitts about how the noble gas neon manages to remain in a dust fragment for billions of years, Stroud said that the noble gas atoms are only seen when more than one layer of carbon is present. They are likely trapped inside C60 cages that formed at the same time as the nanodiamond.

In response to a question from Jim Litster of Purdue University about whether he can measure aggregation or the degree of dispersion of nanoparticles in a matrix, Silverman explained that the methods he uses cannot yet make those distinctions. Currently, no tool exists that can provide adequate information at that level of detail. Nuzzo then asked if it was really necessary to make measurements with atomic-level detail for materials such as engineering polymers that are used in bulk-scale applications. Silverman responded that it is not necessary from a process control point of view to know the exact location of every atom in an engineering polymer; a statistical average over a square kilometer or 50-pound sample will do. However, such information is needed to understand how specific properties of a composite material arise, and perhaps more importantly, to understand how the material can fail. Agglomeration of the nanoparticles in a matrix can be meaningless in some materials or applications and catastrophic in others; understanding which will be the case requires the ability to first create a perfect dispersion to show that agglomeration does not adversely affect material performance.

Mark Barteau from the University of Delaware asked if work to characterize catalyst structure under reducing conditions has been done to the neglect of work to study catalysts under more challenging oxidizing conditions. Nuzzo replied that entire industries have been built on conducting catalysis under reducing conditions, including the petrochemical industry, but he agreed that interesting oxidative reaction conditions require the same amount of attention. Doing so is proving to be a big challenge, however, particularly because most atomic-level characterizations are performed in a vacuum. Datye added that new heating elements that can withstand high-temperature, oxidizing conditions are starting to move that aspect of the field along.

Levi Thompson of the University of Michigan asked how meaningful these techniques are given the rapid timescale at which catalysis occurs. Nuzzo responded that the rapid timescale of these reactions means that, from a dynamic perspective, the catalysts are in fact sitting still most of the time. However, techniques have been developed to study the conformational dynamics in proteins in real time, which may be useful for studying heterogeneous materials.

In response to a question from Vicki Grassian of the University of Iowa about small particle monitoring, Lippmann said that there is a clear need for better monitoring of the ultrafine particles to which people are exposed in the environment.
