One of the long-term goals of discipline-based education research (DBER) is to contribute to the knowledge base in a way that can guide the translation of DBER findings to classroom practice (see Chapter 1). To examine the translation of DBER into instructional practice, the committee was charged with two related questions:

1. To what extent and how has DBER informed teaching and learning in the various disciplines?

2. What factors are influencing differences in the state of research and its impact in the various disciplines?

As discussed in Chapters 4 through 6, DBER scholars often develop research-based teaching strategies and test those strategies in their own classes. Clearly, DBER has informed teaching practice in these classes, with demonstrated gains in student learning in many cases. However, within each science or engineering discipline, DBER scholars make up only a small fraction of all faculty members. We therefore address the two questions above by examining the extent to which DBER has informed the teaching of science and engineering faculty members who are not DBER scholars.

In education as in other fields, translating research into practice has posed a challenge for decades, and many have argued that efforts to integrate the two have met with limited success (Feuer, Towne, and Shavelson, 2002). Two types of translation are (1) translating basic research into interventions or programs, and (2) translating interventions that have had localized success into larger-scale interventions. Both are relevant to DBER.

Regardless of which type of translation is the goal, determining the extent to which DBER has informed teaching practice is difficult for several reasons. First, there is a limited empirical baseline of faculty members’ instructional practices in science and engineering: few studies have rigorously examined instructional practices within disciplines, and even fewer have studied practices across disciplines at the undergraduate level. Second, because faculty members may draw on similar findings from DBER, cognitive science, educational psychology, science education, education, and/or the scholarship of teaching and learning to inform their practice, it is difficult to disentangle the effects of DBER from those of related research. Third, DBER and related research can influence teaching practices to varying degrees, from increased awareness of student learning challenges to complete transformation of instructional approaches, and the more indirect effects on instruction, such as increased awareness, are difficult to measure. Finally, as research on higher education policy and organization has shown, instructional decisions, including the decision to incorporate DBER and other research, are influenced by many more factors than the mere availability of research (Fairweather, 2008). For example, science and engineering faculty are likely to be concerned with fitting new techniques into their overall teaching, research, and service responsibilities. Factors including rewards, the relative importance of teaching and research, and an institutional emphasis on bringing in research money are major influences on these decisions (Austin, 2011). However, research on the relative importance of these factors and on the ways in which they interact to influence instruction is relatively scarce (see Quinn-Patton, 2010).

This chapter discusses the available national research on current teaching practices; describes research on efforts within the sciences and engineering to increase faculty members’ adoption of research-based practices, including findings from DBER; and situates those efforts in the broader context of research on the factors that influence faculty members’ instructional decisions and change in higher education institutions. The committee recognizes that a wide variety of practices affect student learning, such as advising, co-curricular learning activities, and learning communities made up of faculty members or students. However, the focus of this chapter is on classroom practices.

THE CURRENT STATE OF TEACHING IN UNDERGRADUATE SCIENCE AND ENGINEERING

The documentation of instructional practices is based on two types of sources. First are national surveys of faculty work that have a representative sample of disciplines, including science and engineering. These surveys include the National Surveys of Postsecondary Faculty sponsored by the National Center for Education Statistics (e.g., Schuster and Finkelstein, 2006; U.S. Department of Education, 2005) and the Higher Education Research Institute surveys sponsored by the University of California, Los Angeles (e.g., DeAngelo et al., 2009). Second are a limited number of national surveys of faculty members in engineering (Borrego, Froyd, and Hall, 2010), the geosciences (Macdonald et al., 2005), and physics (Henderson and Dancy, 2009).

The sample sizes of national surveys of faculty permit cross-disciplinary comparisons only at a gross level, such as natural sciences versus social sciences. The discipline-specific surveys, in contrast, contain larger samples in the disciplines studied. However, those results are not readily comparable across the disciplines because researchers asked different types of respondents about different types of research-based practices. In addition, findings from the individual disciplinary surveys must be interpreted with caution. Response rates range from 12 percent in engineering to 50 percent in physics (compared with almost 90 percent across all disciplines in the 1994 National Surveys of Postsecondary Faculty). The results may reflect selection bias if faculty members more engaged in research-based teaching responded more frequently than those who were less engaged. In addition, the findings in the geosciences and physics are based on faculty self-reports, which may overestimate the extent of change in teaching practice. The engineering survey reports on department chairs’ perceptions of faculty members’ teaching practices, which also might not be accurate.

National survey results of faculty instructional approaches show that faculty members in science and engineering fields are, on average, the least likely to use any form of student-centered or collaborative instruction. They are the most likely to rely primarily on lectures in their classrooms (Fairweather, 2005; Fairweather and Paulson, 1996, 2005; Schuster and Finkelstein, 2006). These results are consistent with the more detailed studies of individual science and engineering disciplines described next.

Researchers in the geosciences conducted a web-based, national survey of 2,207 faculty members in 2004, with a 39 percent response rate (Macdonald et al., 2005). Survey responses revealed that traditional lecture was the most commonly used classroom teaching method. Sixty-six percent of those teaching introductory classes and 56 percent of those teaching courses for majors reported lecturing in nearly every class. More than half of respondents said they incorporated some interactive activities at least once a week, usually lecture with questions or lecture with demonstrations. Faculty reported using interactive techniques more frequently in courses with fewer than 31 students—including small introductory courses and courses for majors—than in medium-sized (31-80 students) or large classes (more than 80 students).

The extent to which instructors in the geosciences reported using interactive activities varied. Most respondents reported asking students to solve problems and analyze data, although they rarely asked students to pose and solve their own problems. In addition, a majority of respondents teaching introductory courses, and 80 percent of those teaching courses for majors, asked students to read journal articles at least once per semester. In 2009, these data were used as the baseline in an evaluation of On the Cutting Edge (see “Efforts to Promote Research-Based Practice in the Sciences and Engineering” in this chapter for a discussion of the evaluation).

Taking a slightly different approach, surveys of teaching practice in physics and engineering1 focused on the diffusion of innovations, using Rogers’ (2003) theory of the process involved in adopting an innovation (see Box 8-1) as a frame for data collection and analysis. In a nationally representative sample of 722 physics faculty across the United States (with a 50 percent response rate), nearly all respondents (87 percent) indicated familiarity with one or more research-based instructional practice(s) and approximately half (48 percent) reported currently using at least one such practice (Henderson and Dancy, 2009). At the same time, faculty reported they frequently modified the research-based practices (40 percent reported minor modifications, 41 percent reported major modifications, and only 17 percent reported implementing the research-based practice with fidelity to the developer’s design). In addition, faculty frequently discontinued a practice, typically after trying it for at least one semester. The reported rate of dropping a practice ranged from 30 to 80 percent, depending on the practice, with an overall average of 40 to 50 percent.

Based on these results, Henderson and Dancy (2009) argue that current physics education research dissemination approaches (such as journal articles, conferences, and workshops) have been more successful in raising widespread awareness of new instructional practices than in helping faculty understand the underlying principles of these practices or how to deploy them effectively. Moreover, Henderson and Dancy suggest that the high level of discontinuance (even after modification) indicates that faculty either lacked the knowledge needed to customize a research-based practice to their local situation or underestimated the factors that tend to work against the use of innovative instructional practices. Indeed, Rogers (2003) warns that a lack of knowledge about how to implement an innovation correctly, or about its underlying principles, can lead to discontinuance of that innovation. These findings argue for shifting the conversation from “what works” and the concomitant evidence for those practices to how proven practices can be put into place efficiently.

__________________

1Because of the low response rate (12 percent) in the Borrego, Froyd, and Hall (2010) survey of engineering department chairs, and the fact that research universities were overrepresented in the sample compared with the universe of engineering departments, the committee chose not to report results from that study.

BOX 8-1

Rogers’ (2003) Theory of the Innovation-Decision Process

As defined by Rogers (2003), the process of deciding to adopt an innovation includes five stages:

Stage 1: Knowledge. The individual learns about the innovation and seeks information about it.

Stage 2: Persuasion. The individual evaluates the innovation and begins to develop a positive or negative attitude toward it. Evaluations by close peers carry the most credibility.

Stage 3: Decision. The individual decides to adopt or reject the innovation.

Stage 4: Implementation. The individual puts the innovation into practice, possibly with some modifications, although some uncertainty remains.

Stage 5: Confirmation. The individual looks for support for his or her decision. At this stage, the individual may decide to discontinue the innovation, either by replacement (adopting a better innovation) or by “disenchantment,” because the innovation does not meet the individual’s needs.

EFFORTS TO PROMOTE RESEARCH-BASED PRACTICE IN THE SCIENCES AND ENGINEERING

Efforts to increase the impact of DBER on instruction must be viewed in the broader context of currently proliferating efforts to promote research-based undergraduate instruction in science and engineering. Professional societies, federal funding agencies, and accreditation organizations, all located outside academic institutions, have worked to inform faculty members about research relevant to their teaching and to encourage them to change their teaching practices. Not all of these efforts focus solely on DBER, and the extent to which they emphasize DBER is unknown.

The National Science Foundation (NSF) has been an important external force in undergraduate science and engineering education, encouraging faculty to use research—including DBER—to improve their teaching practices.

NSF has provided financial support for conferences and professional development opportunities across the science and engineering disciplines. NSF also funds research into the process of change in science and engineering faculty teaching practices, including individual studies (e.g., Henderson and Dancy, 2009) and national conferences, such as “Facilitating Change in Science and Engineering Undergraduate Education”2 in 2008 and “Vision and Change in Undergraduate Biology Education”3 in 2009, the latter intended to help improve instructional quality in biology.

Current NSF efforts build on three decades of national discussion and debate about the need to improve science teaching and learning (National Science Board, 1986), including National Research Council reports (2007, 2009) calling for rapid increases in the number and quality of science and engineering graduates if the United States is to remain competitive. In response, NSF now has a clear mission “to support science and engineering education programs at all levels and in all fields of science and engineering.”4

Professional societies also have played important roles in undergraduate education. In engineering, the accrediting agency ABET is a particularly important external force (see Chapter 2 for a more detailed discussion). Accreditation standards introduced by ABET in the 1990s were aimed at improving the quality of engineering teaching and learning (ABET, 1995). The standards call for an outcomes-focused, evidence-based cycle of observation, evaluation, and improvement of instruction. ABET reinforces the importance of teaching and learning in engineering by requiring programs seeking accreditation to demonstrate effective instructional practices and learning outcomes. This external organization has increased the commitment of engineering programs to student learning (Lohmann and Froyd, 2010) and led to documented improvements in student learning (Lattuca, Terenzini, and Volkwein, 2006). In a related vein, the American Chemical Society approves programs but does not accredit them. Participation in the approval process is voluntary, and the committee did not find evidence demonstrating the impact of the approval process on chemistry programs or student learning.

Through these and other efforts, science and engineering faculty and future faculty have many options for professional development that is focused on integrating research into practice, ranging from campus-based initiatives to national programs. The following discussion concerns national-level initiatives for which research or evaluation data exist. Given the committee’s charge to examine the extent to which DBER has informed instruction, the bulk of the discussion addresses discipline-specific professional development opportunities for faculty and future faculty, because DBER is most likely to be incorporated into discipline-specific initiatives. DBER also might be used in professional development efforts that span multiple science and engineering disciplines, such as Project Kaleidoscope,5 but research and evaluations on such programs are not currently available.

__________________

2For more information, see http://www.wmich.edu/science/facilitating-change/ [accessed February 17, 2012].

3For more information, see http://visionandchange.org/ [accessed March 13, 2012].

4For NSF’s authorizing language and rules, see http://www.nsf.gov/od/ogc/leg.jsp [accessed March 31, 2012].

The committee recognizes that professional development that is not discipline-specific but addresses research-based principles of teaching and learning also has the potential to increase the use of discipline-specific, research-based practices such as those identified by DBER. However, the committee found no research establishing linkages between these broader programs and the influence of DBER on instruction. The committee also recognizes that multiple professional development opportunities (as well as other factors) interact to influence faculty members’ practices, with effects ranging from increased awareness to actual changes in practice. However, it was beyond the scope of this study to examine the extent to which professional development writ large affects teaching practice or to describe the landscape of professional development activities available to science and engineering faculty. For these reasons, the committee’s analysis of the extent to which DBER has informed instruction excluded more general professional development programs.

Large-Scale, Discipline-Specific Professional Development

Disciplinary and cross-disciplinary societies have implemented national professional development programs and workshops designed to encourage the use of research to change teaching practices. Some notable examples that have been evaluated include the following:

• The New Faculty Workshop in Physics and Astronomy, established in 1996 and sponsored by the American Association of Physics Teachers with financial support from NSF and in partnership with the American Physical Society and the American Astronomical Society. These workshops promote research-based reforms that new faculty can adopt with minimal time commitment and minimal risk to their chances of winning tenure (Krane, 2008).

• The National Academies Summer Institute for Undergraduate Education in Biology, established in 2003, whose week-long intensive professional development workshops for university faculty emphasize the application of teaching approaches based on education research, or “scientific teaching” (Handelsman et al., 2004; Wood and Gentile, 2003a, 2003b).

__________________

5For more information on Project Kaleidoscope, see http://www.pkal.org/ [accessed March 31, 2012].

• Faculty networks and professional development opportunities associated with POGIL (Process-Oriented Guided Inquiry Learning) and PLTL (Peer-Led Team Learning), two major initiatives that are sponsored by NSF and originated from the systemic change in chemistry initiatives in the 1990s. Specifically, the POGIL Project6 offers workshops, classroom and laboratory materials, consultations with POGIL experts, funding for visits to locations where POGIL is used, and regional POGIL networks to help teachers integrate guided inquiry and exploration into the classroom.

• The National Effective Teaching Institute in Engineering Education, a multiday workshop established in 1991 to familiarize engineering faculty members with proven, student-centered strategies (Felder and Brent, 2010).

• On the Cutting Edge, a professional development series that includes workshops and a related website dedicated to content knowledge and teaching strategies in the geosciences (Macdonald et al., 2004; Manduca, Mogk, and Stillings, 2004).

The extent to which these programs are based on DBER relative to other, related research has not been systematically documented. As a result, no conclusions can be drawn about the influence of DBER on instruction from evaluations of these programs. However, the evaluations do offer some insights into the broader challenge of translating research into practice, and of accurately measuring faculty members’ instructional practices.

Echoing the national survey findings from physics (Henderson and Dancy, 2009), evaluations from these programs suggest that some have been more successful in increasing faculty awareness about research-based practices than in driving actual change in teaching practice. For example, in an evaluation of the New Faculty Workshop in Physics and Astronomy (Henderson, 2008), 192 former participants said that they used the DBER-based instructional strategy of peer instruction (Mazur, 1997), a collaborative learning approach in which lectures are interspersed with conceptual questions designed to expose common difficulties in understanding the material. However, only 19 percent of these 192 participants reported instructional activities that could be consistent with the basic features of peer instruction; many of them omitted the peer-to-peer interaction component. These responses suggest that many workshop participants were unaware of the research on the social nature of learning, a key principle underlying peer instruction. As previously discussed, lack of awareness about the underlying principles of an innovation can lead faculty members to discontinue using that innovation (Rogers, 2003).

__________________

6For more information, see http://pogil.org/ [accessed March 31, 2012].

Surveys of participants in the National Academies Summer Institute workshops, conducted before, shortly after, one year after, and two years after their participation, indicated substantial increases in scientific teaching practices over time (Pfund et al., 2009). However, another investigation of the Summer Institutes and the NSF-funded Faculty Institutes for Reforming Science Teaching Program yielded more mixed results (Ebert-May et al., 2011). That evaluation included surveys and observations of videotaped classes within 6 months of the workshop and again up to 18 months later. On the surveys, more than 75 percent of participants reported frequent use of learner-centered and cooperative learning activities following their workshop training. Yet analysis of the videotapes using the Reformed Teaching Observation Protocol (Sawada et al., 2002) revealed that most instruction consisted of pure lecture or lecture with some demonstration and minor student participation (Ebert-May et al., 2011). From the first to the final videotaped class, 25 percent of instructors moved toward more learner-centered practices, 15 percent moved toward more instructor-centered practices, and the practices of the remaining 60 percent did not change. One caveat to the findings of Ebert-May et al. (2011) is that alumni of the Summer Institutes frequently commented in surveys that it took three or more years of experimenting with learner-centered teaching strategies before they could implement those strategies effectively (Pfund et al., 2009), so an observation window of up to 18 months may have been too short to capture such changes. These results suggest that measuring the influence of DBER and related research on teaching requires a nuanced, longitudinal model of individual behavior rather than a traditional “cause and effect” model that treats a workshop or other delivery mechanism as the intervention.

An evaluation of POGIL workshops used a modified version of Rogers’ (2003) innovation decision model (see Box 8-1) to identify six stages of readiness to adopt POGIL (Bunce, Havanki, and VandenPlas, 2008). At the start of the workshop, most survey respondents (56 percent) reported that they had already implemented POGIL, while another 30 percent reported plans to adopt this innovation (stages 4 and 5 of readiness to implement). Comparing responses about adoption readiness from pre-survey to post-survey, the results suggest that workshop participation had a limited effect: Nearly half (47 percent) of participants stayed at the same stage of readiness to adopt POGIL, 29 percent increased by one or two stages, and 26 percent decreased by one or two stages. The evaluators interpreted the movement to lower levels of adoption readiness as an indication that, for some participants, the workshops served as a reality check, causing them to more accurately describe their adoption (or nonadoption) of the innovation in the postsurvey (Bunce, Havanki, and VandenPlas, 2008).

In contrast to physics, biology, and chemistry, evaluations of professional development programs in engineering and the geosciences do reveal changes in practice. However, as with other research based on faculty self-reports, these findings must be interpreted with caution. In a survey of National Effective Teaching Institute in Engineering Education alumni who participated between 1993 and 2006, participants credited the workshop with raising their awareness and use of various learner-centered strategies (Felder and Brent, 2010). In the geosciences, a survey comparing faculty who had participated in On the Cutting Edge (either by using the website or by attending a workshop and using the website) with faculty who had not participated in the program revealed several differences between the two groups. Participants were more likely than nonparticipants to report adding group work or small-group activities to their teaching (40 percent of participants compared with 15 percent of nonparticipants); spending less time lecturing (43 percent of participants compared with 22 percent of nonparticipants) and more time using in-class questioning, small-group discussion, and in-class exercises; and making more use of education research. In many cases, impacts were more pronounced for those who had both attended a workshop and used the website. Additional qualitative data indicated that workshop participants underwent a shift in their teaching philosophy toward an approach more focused on student-centered learning (McLaughlin et al., 2010).

Discussion of Evaluation Results

The literature on effective professional development in the sciences and engineering, including in K-12 education (Loucks-Horsley et al., 2009; Wilson, 2011), along with a review of 191 articles published in peer-reviewed journals (Henderson, Beach, and Finkelstein, 2011), can help to explain the findings from the evaluations of disciplinary professional development programs. This research suggests that successful efforts to translate research into practice include more than one of the following components:

• Sustained, focused efforts, lasting 4 weeks, one semester, or longer. This finding implies that one-time workshops or a collection of unrelated workshops are unlikely to be successful (Cohen and Hill, 2001; Garet et al., 2001).

• Feedback on instructional practice. Faculty members are more likely to make significant changes in their teaching practice if they receive coaching and feedback when trying a new instructional practice (Henderson, Beach, and Finkelstein, 2011).

• A deliberate focus on changing faculty conceptions about teaching and learning. Just as research has shown that deep conceptual change is often required for students to replace their alternative conceptions with scientifically correct understandings of phenomena (National Research Council, 2007), the research on change in undergraduate science and engineering education shows that faculty are more likely to change their teaching practice when they engage in deep conceptual change (e.g., Gibbs and Coffey, 2004; Ho, Watkins, and Kelly, 2001).

Although the research suggests that a sustained approach enhances effectiveness, some of the professional development programs described above consist of one-time workshops. Recognizing the importance of a sustained approach, the designers of the Physics New Faculty Workshop initially included two types of follow-up activities for participants. However, because few participants actually attended these follow-up reunions, most of the funding has been reprogrammed to support the single annual workshop (Krane, 2008). The lack of follow-up might explain why this workshop appears more effective in raising awareness of the research than in changing teaching practice.

The National Effective Teaching Institute also relies on one-time workshops, yet its evaluations indicated that the workshops did improve instruction. The workshop’s evaluators drew on the adult learning literature to explain this success (Felder and Brent, 2010). Specifically, the workshops meet five important criteria that motivate adult learners (Wlodkowski, 1999):

1. Expert presenters

2. Relevant content

3. Different options for applying recommended methods

4. Praxis (action plus reflection)

5. Group work

Evaluations of On the Cutting Edge, which does not rely on one-time workshops, also indicated that the program has led to a moderate level of change in teaching practice. These results might be explained by key aspects of the program that are consistent with the research on effective professional development (Macdonald et al., 2004). First, the program promotes a sustained approach through its interactive website, which is designed to build ongoing collegial networks and provide ready access to integrated resources that link science, pedagogy, assessments, and research on learning (Manduca, Mogk, and Stillings, 2004). Second, the underlying change strategy goes beyond promoting a particular type of finished curriculum or pedagogy. Rather, it engages faculty in reflecting on, and contributing to, curriculum and teaching strategies, primarily through the website.

Despite the challenges of using professional development to translate research into practice, these findings, albeit largely from faculty self-report data, illustrate that it is possible to increase awareness of innovations. A limited amount of evidence suggests that practices can change, although the long-term nature of those changes is not well understood. Moreover, efforts to scale professional development to the level that would influence large numbers of faculty across different institutions are in their early stages.

Professional Development for Future Faculty in Science and Engineering

Various efforts also are under way to shift the socialization of prospective faculty toward greater commitment to good teaching, including the use of research-based practices (Austin, 2011). This work is based on previous research suggesting that early socialization of future faculty members carries long-term consequences in their professional behavior (Bess, 1978; Clark and Corcoran, 1986).

At the national level, the NSF-supported Center for the Integration of Research, Teaching, and Learning (CIRTL) involves a core network of 6 universities and an expanded network of more than 30 universities to provide professional development opportunities for doctoral students and postdoctoral scholars in science, technology, engineering, and mathematics. Evaluations of the impact of CIRTL-related professional development rely largely on participants’ self-reports. These evaluations suggest that participants develop a greater sense of the value of teaching as part of their careers, a wider range of approaches to analyzing teaching problems, and an enhanced ability to encourage student learning (Austin, Connolly, and Colbeck, 2008). Furthermore, participants often indicate that they feel better prepared for undergraduate teaching, have a greater sense of self-efficacy about teaching, and value the opportunities that learning communities provide to interact with others who share their interest in teaching (Austin, 2011). In a similar vein, alumni of the Preparing Future Faculty Program report that the program legitimizes teaching and offers ongoing support through a community of teachers (DeNeef, 2002). Launched in 1993 and sponsored by the Association of American Colleges and Universities, this program prepares doctoral students in various disciplines (including the sciences) for teaching, academic citizenship, and research. No discipline-specific evaluations of the program exist, and the overall evaluation, which consisted of a survey of 271 alumni (129 of whom responded) and follow-up interviews with 25 survey respondents, is too limited to yield conclusive findings.

Although the evidence from these efforts is too limited to draw conclusions, altering the preparation and expectations of doctoral students for teaching in science and engineering potentially represents a more efficient way to influence future instructional practice than changing the teaching behavior of already active faculty (Fairweather, 2005).

PUTTING REFORM EFFORTS INTO CONTEXT

Regardless of the availability of quality research and quality professional development to translate that research into practice, change in the teaching practices of science and engineering faculty does not come easily. Teaching behavior—like human behavior generally—is influenced by the contexts in which it is situated (Bronfenbrenner, 1979). Faculty members’ teaching decisions depend on the interplay of individual beliefs and values, which have been shaped by their previous education and training, and the norms and values of the contexts in which they work. These contexts include the department, the institution, and external forces beyond the institution (Austin, 2011; Quinn-Patton, 2010; Seymour, 2001; Tobias, 1992).

Institutional Factors Influencing the Translation of DBER into Practice

One possible explanation for the continuing gap between awareness of DBER and adoption of new research-based teaching practices is that the initiatives described in the previous section, led by national organizations, were not sufficiently attuned to factors within academic institutions. Efforts to change science and engineering faculty members’ teaching may encounter “local” barriers, such as institutional leadership, departmental peers, reward systems, students’ attitudes, and, of course, the beliefs and values of the individual faculty members themselves (Austin, 2011; Fairweather, 2008). These factors have been analyzed extensively in the higher education research literature (see Eckel and Kezar, 2003; Fairweather, 2005; Fisher, Fairweather, and Amey, 2003; Kezar, 2009; Schuster and Finkelstein, 2006). We briefly discuss them here to provide context for efforts to translate DBER into practice.

The Department

As the immediate context in which faculty members work, the academic department or program has the greatest influence on how faculty members allocate their work time and the decisions they make about teaching (Austin, 1994, 1996). Given the importance of the department, improving learning in undergraduate science and engineering courses may depend as much on research into departmental culture, curriculum content, sequencing, and assignment of teachers to courses as it does on research on the impact of various teaching methods (Fairweather, 2008).

Among other important departmental decisions, class size and physical space can influence the extent to which faculty apply findings from DBER and related research. Research on innovation in physics teaching indicates that large class sizes and the traditional classroom space can pose barriers to faculty adoption of innovative teaching approaches (Henderson and Dancy, 2007). Similarly, geoscience faculty are less likely to use research-based, interactive techniques in large classes than in smaller ones (Macdonald et al., 2005).

In response to these challenges, a few departments and institutions have remodeled classroom space and facilities (see Box 6-1 for some examples).7 For example, in 1993, Rensselaer Polytechnic Institute applied findings from physics education research to establish a studio physics course in a special classroom designed to support small-group collaboration and problem solving (Cummings, 2008). The redesign was so well received by students and faculty that, by 2008, all introductory physics courses at Rensselaer (15 to 20 sections, each with approximately 50 students) were studio courses. Early studies showed little improvement in student conceptual understanding or problem-solving skills. Later implementations, which added research-based curricula, resulted in content learning gains over traditional courses (Cummings et al., 1999; Sorensen et al., 2006). To offset the considerable expense of studio courses, the NSF-sponsored Student Centered Active Learning for Undergraduate Programs project (SCALE-UP) supports institutions in restructuring large-enrollment classes following the studio model. By 2011, nearly 100 colleges and universities (or about 2 percent of the 4,400 degree-granting 2- and 4-year institutions in the United States, as counted by the National Center for Education Statistics) had adopted the SCALE-UP approach, with specially designed classrooms for physics, chemistry, mathematics, engineering, and literature courses. As discussed in Chapter 6, learning gains have been shown in many of these courses, especially for female students (Beichner, 2008).

While posing some barriers, the departmental context and culture also may facilitate the productive adoption of DBER findings. For example, faculty members may be more inclined to use DBER in their teaching if they learn about it from disciplinary colleagues, rather than from education researchers (Wieman, Perkins, and Gilbert, 2010). Guided by this assumption, two initiatives at the University of Colorado and the University of British Columbia focus on the department as the key unit of change. These initiatives make significant amounts of funding available to academic departments through a competitive process designed to encourage departmental colleagues to discuss their shared educational goals and to promote the idea that courses belong to the department as a whole (Wieman, Perkins, and Gilbert, 2010). In a 2010 faculty survey, most respondents at the University of Colorado reported undertaking activities that were consistent with the goals of the initiative. Sixty-two percent of respondents reported they had developed learning goals and used those goals to guide instruction, 56 percent reported they used information on student thinking and/or attitudes to guide their teaching, and 47 percent used pre/post measures of learning to inform their teaching practice. In addition, respondents indicated that more than 55 courses incorporated more research-based teaching practices than in the past (Wieman, Perkins, and Gilbert, 2010).

__________________

7 Project Kaleidoscope is also spearheading an initiative to change learning spaces. Evaluations of those efforts have not yet been published. For more information, see http://www.pkal.org/activities/PKALLearningSpacesCollaboratory.cfm [accessed March 31, 2012].

As noted earlier, such self-report data must be interpreted with caution. In addition, these initiatives include some features that might be difficult to replicate, and those features might explain some of the positive results. Specifically, the departments that won funds used most of their money to employ science education specialists: postdoctoral scholars with a doctorate in the discipline who receive intensive training in education and cognitive science. These specialists assist faculty in formulating learning objectives, designing assessments, and creating research-based classroom activities. These tasks are essential to instructional reform, but research-active faculty may not have time for them. It is unclear whether and how the reforms will be sustained once the funds for these postdoctoral positions run out.

Institutional Priorities and Reward Systems

The reward system influences instructional decisions because it sends strong signals about the relative priority of teaching and research to faculty members (for detailed analyses of institutional priorities, time allocations, and salaries see Braxton, Luckey, and Helland, 2002; Fairweather, 2005; Leslie, 2002; Schuster and Finkelstein, 2006). In turn, faculty members respond to these signals. They may be less interested in change efforts related to their teaching if they expect that such efforts will give them less time to do research, and if they perceive research as more highly valued by the institution (Fairweather, 2005, 2008). At a minimum, if faculty members are to consider investing time in implementing new pedagogies, they must not feel that such time will be a negative factor in salary and advancement considerations.

Institutional priorities and reward systems influence workloads, including how much time faculty members have to understand and incorporate new teaching strategies based on DBER and related research. Much DBER investigates the learning process itself and identifies the relationships between instruction and student learning. DBER also attempts to uncover what works. However, this basic research is not always (or even often) translated into methods that faculty members can adopt to incorporate the research findings into their instructional practice. Science and engineering faculty members work an average of 55 to 60 hours per week (Fairweather, 2005). As scientists, they may be interested in what DBER tells them about effective instructional practices and about how students learn. Indeed, as we have discussed in this chapter, faculty awareness of research on effective teaching is increasing (Henderson and Dancy, 2009). Yet with their myriad obligations, most faculty members cannot afford to devote an unspecified (and perhaps open-ended) amount of their work time to figuring out how to translate DBER findings into effective and efficient changes in their instructional practice (Fairweather, 2008). Despite the work of campus professional development offices and of programs like the ones described above, the translation process often remains elusive.

Students

Because students can exert a strong influence on the learning process (Brower and Inkelas, 2010; Smith et al., 2004), student responses to new teaching practices informed by DBER and related research may facilitate or discourage adoption of such teaching practices. Students in classes that have been restructured face the difficult and sometimes frustrating task of learning new ways of thinking and solving problems in science or engineering. When participating in more challenging and effective learning experiences, they sometimes complain because they have grown comfortable with being told facts to memorize (Silverthorn, 2006). Cummings (2008) has reported that the students with the strongest academic records are sometimes the most resistant to change, perhaps because they have grown accustomed to earning good grades through memorization and more traditional approaches. Faculty members at institutions where student course evaluations play a role in the assessment of their teaching may be reluctant to try new, research-based teaching approaches if they expect that those approaches will lead to critical evaluations.

However, there are documented examples of student course evaluations improving after the adoption of research-based teaching practices (e.g., Hativa, 1995; Silverthorn, 2006). With increased exposure to research-based learning environments, students can become enthusiastic. At North Carolina State University, hundreds of students have taken physics classes that have been restructured from lectures and laboratories into the SCALE-UP classrooms described above. Students who have taken a first-semester SCALE-UP class universally select the SCALE-UP version, rather than the lecture version, for their second-semester physics course. Students report that their friends direct them into SCALE-UP classes, and SCALE-UP sections fill before the lecture sections. In focus groups of students who had taken the lecture version for their first semester and SCALE-UP in their second semester, students reported that they were learning at a deeper conceptual level in the SCALE-UP class; these perceptions were supported by evidence of gains in learning and performance (Beichner, 2008).

Faculty Members’ Beliefs

Individual faculty members have strongly held beliefs and conceptions of teaching in general and of their own teaching in particular that may influence the extent to which they adopt research-based practices (see Blackburn and Lawrence, 1995; Leslie, 2002; and Schuster and Finkelstein, 2006, for reviews of the role of faculty members’ beliefs). Indeed, one review of the literature on change strategies in science and engineering found that faculty beliefs were among the most common barriers to developing reflective teachers (Henderson, Beach, and Finkelstein, 2011).

More specifically, in one interview study of 30 physics faculty, the faculty members identified learning goals for their students that largely aligned with the research on effective teaching and learning (Yerushalmi et al., 2010). Also consistent with the research, they identified problem features that would support those learning goals. Nevertheless, many of the faculty members reported that they did not use the problem features they identified, because the features conflicted with the instructors’ strongly held values about minimizing student stress and presenting problems clearly during exams. In contrast, Gess-Newsome et al. (2003) observed that science faculty who experienced a mismatch between their personal beliefs and their teaching practices were more likely to be dissatisfied with their teaching and more open to developing new knowledge and beliefs. These mixed findings indicate that the role of faculty members’ beliefs is ripe for further exploration.

The Change Process in Undergraduate Science and Engineering

Broader research on change strategies in higher education also can shed light on efforts to translate DBER and related research into practice. One review identified three communities involved in efforts to promote change in undergraduate science and engineering education (Henderson, Beach, and Finkelstein, 2011) as follows:

1. DBER scholars (referred to as the science education research community). Scholars in this community typically examine teaching and learning within the disciplines, and are situated in disciplinary departments.

2. The faculty development research community. Scholars in this community conduct, evaluate, and improve faculty professional development. These scholars are often located at campus centers for teaching and learning.

3. The higher education research community. Scholars in this community often investigate institutional and organizational culture, climate, and policies, and are found within a school or college of education.

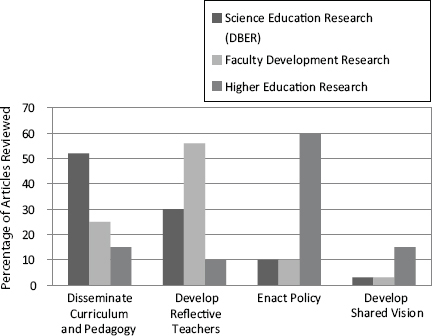

Each of these communities operates relatively independently, rarely communicating with the other communities or trying to build on their research methods or findings (Henderson, Beach, and Finkelstein, 2011). Indeed, as Figure 8-1 illustrates, although the change efforts described in the literature shared the common goal of changing undergraduate teaching in science and engineering, they reflected different change strategies. DBER papers emphasized the strategy of disseminating curriculum and pedagogy, faculty development research sought to develop reflective teachers, and

FIGURE 8-1 Change strategies used by different research communities (as summarized by Henderson, Beach, and Finkelstein, 2011).

higher education research was largely concerned with policy. This distribution led Henderson, Beach, and Finkelstein to observe that the three communities would benefit from greater communication to share research methods and findings.

In addition to fostering greater communication among the research communities seeking to influence instructional practices in undergraduate science and engineering, change efforts must attend to the broader system in which that education takes place (Austin, 2011; Quinn-Patton, 2010). A systems approach to change in higher education shows that multiple factors influence faculty members’ choices about their teaching practice (see “Institutional Factors Influencing the Translation of DBER into Practice” in this chapter). While some factors may encourage use of new approaches to teaching, other factors may simultaneously discourage such innovative practice. Thus, the multiple contexts within which faculty members work, and the influences and interactions of various features of higher education institutions and systems, must be considered to more fully understand the translation of DBER and related research into practice.

Approaches to change that take a linear path, relying on one factor or intervention alone, are unlikely to yield the desired outcome. Given the complexity of higher education institutions, change efforts are most effective when they use both a “top-down” and a “bottom-up” approach, take into consideration the relevant factors that affect faculty work, and strategically use multiple change levers (see Austin, 2011, for a summary of this literature).

• Faculty instructional practices in science and engineering have not been systematically documented. National surveys exist for faculty in general and, separately, for faculty in the geosciences, engineering, and physics. The results of the discipline-specific surveys are not always nationally representative, and the conclusions are based on self-report data. These surveys suggest that lecture is the primary mode of instruction in the sciences.

• Available research on efforts to translate DBER and related research into practice mostly consists of evaluations of professional development programs in physics, biology, the geosciences, chemistry, and engineering, which largely rely on self-report data. For the most part, these evaluations suggest that translational efforts have been more effective at raising awareness of research-based practices than at changing practice.

• Professional development initiatives that have led to (self-reported) changes in practice are sustained over time, are consistent with research on motivating adult learners, engage faculty in reflection on their teaching practices, and include a deliberate focus on changing faculty conceptions about teaching and learning.

• Multiple factors interact to affect faculty instructional decisions. Efforts to translate DBER into practice should take into account individual, departmental, and institutional influences on instruction.

• Although the evidence on efforts to prepare future faculty to incorporate research-based practices into their instruction is not conclusive, such efforts could represent a significant leverage point to translate DBER and related research into practice.

DIRECTIONS FOR FUTURE RESEARCH

Although it is inherently difficult and complex to assess the extent to which DBER has informed instruction, understanding of this topic is especially limited because the research base is sparse. The first step is to develop and test a model of what influences the teaching practices of individual science and engineering faculty members and of how teaching is situated in the larger organizational context. Because both individual and contextual factors are relevant to assessing the effects of DBER, both are also relevant to increasing those effects on teaching in the future.

A reliable baseline understanding of faculty instructional practices in the sciences and engineering also is needed; this research should mitigate the limitations of self-report data to the extent possible and provide insights into variations by discipline, institutional type, and course type (e.g., large versus small courses, introductory courses versus courses for majors). In a related vein, current research on discipline-specific professional development efforts to translate DBER and related research into practice mostly consists of program evaluations. By their nature, these evaluations do not rigorously examine the question of how such programs influence instruction across disciplines. Future research on this topic should take into account the larger body of research on adult learning and effective professional development to identify guiding principles for the effective translation of research into practice. Specifically, it would be productive to study what support, guidance, and knowledge of underlying principles faculty need to successfully implement research-based practices (Henderson and Dancy, 2009).

Because departmental and institutional norms and cultures reflect shared values and beliefs (including beliefs about teaching and learning), gaining understanding of departmental and institutional norms and cultures is an essential step in designing efforts to enact new policies supporting educational innovation. Based on this understanding, policy initiatives can be aligned with existing norms and/or be carefully tailored to modify those shared norms that pose barriers to the use of DBER and related research. And finally, among the 191 studies reviewed by Henderson, Beach, and Finkelstein (2011), none examined initiatives that included significant changes to faculty recognition and reward systems. Considering that many change initiatives are slowed by barriers within institutional recognition and reward systems, it is important to develop approaches to overcome these barriers. This gap in the research on change initiatives that are based on DBER and related research represents an important opportunity for future study.