Strategies for Developing Climate Models: Model Hierarchy, Resolution, and Complexity

There is an almost bewildering landscape of climate models used in different communities and for different purposes. At present, as in the past, important advances in climate science are being made with models from across this landscape, including both more comprehensive models that are arguably more realistic and simpler models whose behavior can be more readily understood.

In the 1950s, simple energy-balance models of climate with analytical solutions gave important insights into climate sensitivity and processes such as ice-albedo feedback. In the 1960s and 1970s, simple column radiative-convective equilibrium models were used to interpret the behavior of early atmospheric general circulation models.

Through the 1970s and 1980s, high-end general circulation models became progressively more comprehensive to better capture climate change feedback processes and to provide more credible regional information on climate change. The incorporation of dynamic ocean and sea-ice models in the 1980s and 1990s to create coupled climate models allowed some of the first estimates of the transient response of the climate system to changing greenhouse gases.

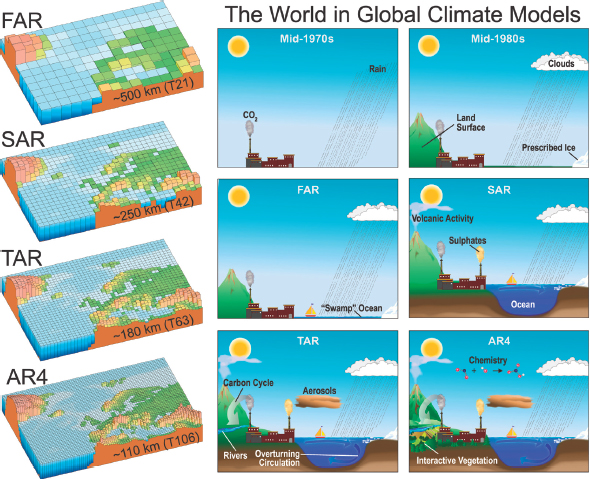

In the 1990s and 2000s, more complete treatments of sea-ice and land-surface processes were included, along with submodels of terrestrial vegetation, ecosystems, and biogeochemical cycles such as the carbon cycle. The role of aerosols and atmospheric chemistry has become a major focus of recent climate modeling efforts, including representations of processes related to the formation of the Antarctic ozone hole and of aerosol effects on climate (e.g., their interaction with clouds). These new models are often referred to as Earth system models (ESMs), because they can track the propagation and feedbacks of perturbations through the different components of the Earth system. Currently, most ESMs use global resolutions of 100-300 km and 20-100 vertical layers and can be run for thousands of simulated years at a few years to a few decades per day of actual time on supercomputing systems. Figure 3.1 highlights aspects of the evolution of climate models over the past few decades.

FIGURE 3.1 Illustration of increasing complexity and diversity of elements incorporated into common models used in the Intergovernmental Panel on Climate Change (IPCC) process over the decades. Evolution of the resolution (left side) and physical complexity (right side) of climate models used to inform IPCC reports from the mid-1970s to the most recent IPCC report (IPCC, 2007a,b,c). The illustrations (left) are representative of the most detailed horizontal resolution used for short-term climate simulations. SOURCES: Figures 1.2 and 1.4 from IPCC (2007c). FAR, First Assessment Report, 1990; SAR, Second Assessment Report, 1995; TAR, Third Assessment Report, 2001; AR4, Fourth Assessment Report, 2007.

Although comprehensive climate models are becoming more complex, an increasing range of other models has helped to evaluate and understand their results and to address problems that require different tradeoffs between process complexity and grid resolution. Uncoupled component models, often run at higher resolution or with idealized configuration, allow a more controlled focus on individual processes such as clouds, vegetation feedbacks, or ocean mixing and enable the behaviors of the uncoupled components to be studied in more detail.

Unified weather-climate prediction models (Chapter 11), which are typically run with higher resolution than general circulation models (GCMs), are an increasingly important part of the spectrum of climate models. Testing a climate model in a weather forecast mode, with initial conditions taken from a global analysis at a particular time, allows evaluation of rapidly evolving processes such as cloud properties that are routinely observed (Phillips et al., 2004). Such simulations are short enough to test model performance over a range of grid resolutions relevant not only to current but also to prospective climate simulation capabilities.

Process models such as single-column models and cloud-resolving models were developed as a bridge between observations and parameterized representation of unresolved processes needed in GCMs. This has fed back into GCM development through evaluation and improvement of physics parameterizations. This has also led to the development of a special class of “superparameterized” climate models in which the atmospheric physics in each column of the global model is simulated using a high-resolution cloud-resolving model that better represents the small-scale cloud-forming processes associated with turbulence and atmospheric convection (Khairoutdinov et al., 2005). This method, however, is computationally demanding, so its use has been limited mainly to research so far.

Because of computational requirements for running GCMs at high resolution, nested regional atmospheric and oceanic models forced at the lateral boundaries have become attractive tools for addressing problems requiring locally high spatial resolutions, such as orographic snowfall and runoff, or oceanic eddies and coastal upwelling. Regional atmospheric models were mainly adapted in the past two decades from regional weather-prediction models and have attracted a somewhat different and more diverse user and model development community than global models (Giorgi and Mearns, 1999). These models have been used to further the understanding of regional climate processes and to provide dynamical downscaling of GCM simulations, producing more spatially resolved (typically between 5- and 60-km resolution) seasonal-to-interannual predictions and century-scale climate projections. They have also been used to test physical/chemical process parameterizations at finer spatial resolutions that anticipate the next generation of global climate models, including slow time scale physics (such as land-surface processes) that are not amenable to testing by weather forecast simulations. Hybrids between regional and global models are being actively developed, using stretched or variable grids that simulate the entire globe but telescope to much finer resolution within a region of interest.

There has always been a desire for global models that can simulate climate change and variability on millennial and longer time scales, for example, for understanding glacial cycles. This has led to Earth system models of intermediate complexity (EMICs), which use highly simplified process representations but add slowly evolving components such as ice-sheet and dynamic vegetation models that are critical on centennial to millennial scales. Some of the physics developed in such models has been the nucleus of parameterizations now used in ESMs for simulating processes like the carbon cycle. EMICs are also important tools for understanding the role of different Earth system components and their interactions in climate variability at millennial and longer time scales.

Climate models have also started to include some representations of human systems. Examples include efforts to represent air quality in atmospheric models and water quality, irrigation, and water management in land-surface models. More substantial efforts in coupling integrated models of energy economics, energy systems, and land use with ESMs have recently been undertaken to represent a wider range of interactions between human and Earth systems. These models, called integrated assessment models, could be useful tools for exploring climate mitigation and adaptation where human systems play an important role.

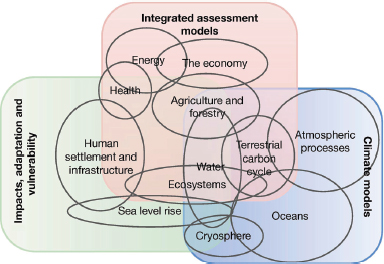

The landscape of climate models that developed naturally in the past will continue to evolve. Figure 3.2 shows one view of the landscape of the types of current models involved in the IPCC assessments. This chapter discusses issues of model resolution and complexity as well as future trends in the hierarchy of models and development pathways.

FIGURE 3.2 The landscape of the various types of climate models within a hierarchy of models is complex and overlapping. This is one view of that landscape centered on the three broad types of models and analytic frameworks in climate change research that contribute to the IPCC reports: integrated assessment models, physical climate models, and models and other approaches used to help assess impacts, adaptation, and vulnerability. SOURCE: Moss et al., 2010. Reprinted by permission from Macmillan Publishers Ltd., copyright 2010.

MODEL RESOLUTION

A major component of climate models is the dynamical core that numerically solves the governing equations of the system components. Computation of the solution is carried out on a three-dimensional spatial grid. Increasing model resolution enables better resolution of processes, but this comes at considerable computational cost. For example, increasing horizontal resolution by a factor of 2 (say from 100 to 50 km) quadruples the number of grid columns and generally requires a factor of 2 decrease in time step for numerical stability. Thus, the overall computational cost increases by a factor of about 8. Furthermore, to avoid distortion of the results, the horizontal resolution cannot be increased without concomitant increases in vertical resolution. Increasing complexity independently adds to the computational cost of a model, so a balance must be sought between resolution and complexity. In practice, the ensemble of these considerations has led to an increase in atmospheric grid resolution from ~500 km to ~100 km in state-of-the-science climate models since the 1970s.
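The factor-of-8 estimate follows from simple counting. The sketch below is a minimal illustration, assuming that cost is proportional to the number of grid cells times the number of time steps and that the stable time step shrinks in proportion to the horizontal grid spacing. The function name `relative_cost` and the optional vertical-refinement argument are illustrative and are not taken from any particular model.

```python
def relative_cost(horizontal_refinement, vertical_refinement=1.0):
    """Approximate cost multiplier for refining a model grid.

    Assumes cost ~ (number of grid cells) x (number of time steps), with the
    time step shrinking in proportion to the horizontal grid spacing.
    Real models deviate from this idealized scaling.
    """
    if horizontal_refinement <= 0 or vertical_refinement <= 0:
        raise ValueError("refinement factors must be positive")
    # Two horizontal dimensions, plus any added vertical levels.
    cells = horizontal_refinement ** 2 * vertical_refinement
    # Halving the grid spacing roughly halves the stable time step.
    time_steps = horizontal_refinement
    return cells * time_steps


if __name__ == "__main__":
    print(relative_cost(2))     # 100 km -> 50 km, same vertical grid
    print(relative_cost(2, 2))  # also doubling the number of vertical levels
    print(relative_cost(5))     # ~500 km -> ~100 km
```

Under these assumptions, doubling the vertical resolution along with the horizontal resolution doubles the cost again, which is one reason resolution and complexity compete for the same computing budget.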

To enable higher resolution within computational constraints, alternative approaches such as regional climate models and global models with variable resolution, stretched grids, or adaptive grids have been developed to provide local refinements for geographic regions or processes of interest. Similar to global atmospheric models, regional climate models numerically and simultaneously solve the conservation equations for energy, momentum, and water vapor that govern the atmospheric state. Solving these equations on limited-area domains requires lateral boundary conditions, which can be derived from global climate simulations or global analyses. Because of the dependence on large-scale circulation, biases in global climate simulations used to provide lateral boundary conditions propagate into the nested regional climate simulations. Similar to global models, regional models are sensitive to model resolution and physics parameterizations. However, the nesting approach can introduce additional model errors and uncertainties. This issue has been addressed in a series of studies using an idealized experimental framework, known as “Big Brother Experiments (BBE)” (Denis et al., 2002). As summarized by Laprise et al. (2008), the BBE show that, given large-scale conditions provided by the GCMs, regional climate models can downscale to produce finer-scale features absent from the GCMs. Moreover, the fine scales produced by the regional models are consistent with what the GCMs would generate if they were applied at similar spatial resolution as the regional models, thus confirming the practical validity of the nested regional modeling approach.

More recently, global variable-resolution models using unstructured grids have become feasible (Skamarock et al., 2011). By eliminating the physical boundaries, these models provide local mesh refinement with improved accuracy of the numerical solutions. However, the challenge of developing physics parameterizations that work well across the variable resolution is significant. Systematic evaluation and comparison of different approaches is important for developing a more robust framework to model regional climate. Besides increasing grid resolution, subgrid classification is used in some land-surface models or even atmospheric models (e.g., Leung and Ghan, 1998) to capture the effects of land-surface heterogeneity such as vegetation and elevation to improve simulations of regional climate.

There is considerable evidence that refining the horizontal spatial resolution of climate models improves the fidelity of their simulations. At the most fundamental level, increasing resolution should improve the accuracy of the approximate numerical solutions of the governing equations that are at the heart of climate simulation. However, because the climate system is complex and nonlinear, numerical accuracy in solving the dynamical equations is a prerequisite to climate model fidelity, but is not the only consideration.

One of the more obvious impacts of improving climate model resolution is the representation of geographic features. Resolving continental topography, particularly mountain ranges and islands, can significantly improve the representation of atmospheric circulation. Examples include the South Asian monsoon region and the vicinities of the Rockies, Andes, Alps, and Caucasus, where the mountains alter the large-scale flow and give rise to small-scale eddies and instabilities. Resolving topography can also improve simulations of land-surface processes such as snowpack and runoff that rely strongly on orographically modulated precipitation and temperature (e.g., Leung and Qian, 2003) and may also have upscaled or downstream effects on atmospheric circulation (e.g., Gent et al., 2010) and clouds (e.g., Richter and Mechoso, 2006). Similarly, weather and climate variability associated with landscape heterogeneity, as well as coastal winds influenced by local topography and coastlines, are better represented in models with refined spatial resolution, which can also lead to improved simulation of tropical variability through improved coastal forcing (Navarra et al., 2008).

Many processes in the ocean and sea ice can benefit from increasing spatial resolution. Improved resolution of coastlines, shelf and slope bathymetry, and sills separating basins can significantly improve the simulation of boundary and buoyancy-driven coastal circulation, oceanic fronts, upwelling, dense water plumes, and convection, as well as sea-ice thickness distribution, concentration, deformations (including leads and polynyas), drift, and export. In particular, high spatial resolution is needed in the Arctic Ocean, where the local Rossby radius of deformation (determining the size of the smallest eddies) is on the order of 10 km or less, and exchanges with other oceans occur via narrow and shallow straits. Bryan et al. (2010) showed improvements in simulating the mean state as well as variability with an ocean model at 10 km versus 20-50 km spatial resolution, suggesting a regime change in approaching the 10 km resolution.
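To see why high latitudes demand such fine grids, the first baroclinic Rossby radius can be estimated from latitude and stratification. The sketch below is a rough illustration, assuming uniform stratification so that the radius is approximately N H / (π |f|), where N is the buoyancy frequency, H the water depth, and f the Coriolis parameter. The values of N and H used in the example are illustrative assumptions, not figures from this report.

```python
import math

OMEGA = 7.2921e-5  # Earth's rotation rate, rad/s


def coriolis_parameter(lat_deg):
    """Coriolis parameter f = 2 * Omega * sin(latitude), in 1/s."""
    return 2.0 * OMEGA * math.sin(math.radians(lat_deg))


def rossby_radius_km(lat_deg, buoyancy_freq, depth_m):
    """First baroclinic Rossby radius N*H / (pi*|f|), in km.

    Constant-N estimate only; real ocean stratification varies with depth.
    """
    f = abs(coriolis_parameter(lat_deg))
    if f == 0.0:
        raise ValueError("this estimate is undefined at the equator")
    return buoyancy_freq * depth_m / (math.pi * f) / 1000.0


if __name__ == "__main__":
    # Assumed values: N = 2e-3 1/s in both cases, shallower water in the Arctic.
    print("Arctic (80N, 1000 m):", rossby_radius_km(80.0, 2.0e-3, 1000.0), "km")
    print("Subtropics (30N, 4000 m):", rossby_radius_km(30.0, 2.0e-3, 4000.0), "km")
```

With these assumed inputs the Arctic estimate comes out at a few kilometers, well below the subtropical value, because the radius shrinks both with larger |f| at high latitude and with shallower, weakly stratified water.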

Besides improving stationary features such as those associated with terrain, increasing spatial resolution also allows transient eddies such as synoptic-scale frontal systems and local convective systems to be better represented. These transient eddies, as well as small-scale phenomena in the ocean-atmosphere system such as tropical cyclones, play important roles in the energy, moisture, and momentum transports that determine the mean climate and its variability.

Most current climate models divide the atmospheric column into 20-30 vertical layers, but some models include more than 50 layers with the increased vertical levels mostly added near the surface (to better resolve boundary-layer processes) or near the tropopause (to better simulate atmospheric waves and moisture advection).

Typical vertical resolution of ocean models that are part of climate models is 30-60 vertical layers, which could be at fixed depths or vary according to density or topography. Ocean models whose vertical grids extend to the ocean bottom are better able to represent the abyssal circulation. As in the case of atmospheric models, increased vertical resolution is added near the surface in order to better resolve the surface mixed layer and upper ocean stratification, as well as shelf and slope bathymetry. In addition, high vertical resolution is often needed near the bottom, especially to improve representation of bottom boundary-layer, density-driven gravity flows (e.g., over the Arctic shelves) and dense water overflows (e.g., Denmark Strait or Strait of Gibraltar).

Finally, there is evidence of feedbacks that are strongly dependent on model resolution and that therefore influence a model’s response to perturbations, for example:

• atmospheric blocking, which is dependent on the feedbacks between the large-scale atmospheric circulation and mesoscale eddies (Jung et al., 2011);

• feedbacks between western boundary currents with sharp temperature gradients in the ocean and the overlying atmospheric circulation (Bryan et al., 2010; Minobe et al., 2008);

• feedbacks between tropical instability waves in the ocean and wind speed in atmospheric eddies (Chelton and Xie, 2010);

• air-sea interactions in presence of a sea-ice cover, which depend on the accuracy of detailed representation of sea-ice states, including ice edge position, thickness distribution, and deformations; and

• ice sheet-ocean interactions, which require representation of local flow under and into the ice, including fjord circulation and exchanges.

However, increasing spatial resolution is not a panacea. Climate models rely on parameterizations of physical, chemical, and biological processes to represent the effects of unresolved or subgrid-scale processes on the governing equations. Increasing spatial resolution does not automatically lead to improved accuracy of simulations (e.g., Duffy et al., 2003; Kiehl and Williamson, 1991; Leung and Qian, 2003; Senior, 1995). Often, the assumptions in the parameterizations are scale dependent, although so-called scale-aware parameterization development has been pursued recently (e.g., Bennartz et al., 2011). As model resolution is increased, the assumptions may break down, leading to a degradation of the simulation fidelity. Even if the assumptions remain valid over a range of model resolutions, there is still a need to recalibrate the parameters in the parameterizations as resolution is refined (sometimes called model tuning), and the tuning may only be valid for the time period for which observations used to constrain the model parameters are available. The lack of understanding and formulation of the interactions between parameterizations and spatial resolution makes it hard to quantify the influence of spatial resolution on model skill. Furthermore, structural differences among parameterizations may have comparable, if not larger, effects on the simulations than spatial resolution.

Climate projections at finer scales (such as resolving climatic features for a small state, single watershed, county, or city) are typically produced using one of two approaches: either dynamical downscaling using higher-resolution (50 km or finer) regional climate models nested in the global models or empirical statistical downscaling of projections developed from global climate model output and observational data sets. Neither downscaling approach can reduce the large uncertainties in climate projections, which derive in large part from global-scale feedbacks and circulation changes, and it is important to base such downscaling on model output from a representative set of global climate models to propagate some of these uncertainties into the downscaled predictions. The modeling assumptions inherent in the downscaling step add further uncertainty to the process. There has been inadequate work done to date to systematically evaluate and compare the value added by various downscaling techniques for different user needs in different types of geographic regions. However, as the grid spacing of the global climate model becomes finer, simple statistical downscaling approaches become more justifiable and attractive because the climate model is already simulating more of the weather and surface features that drive local climate variations.
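As one concrete illustration of simple statistical downscaling, the sketch below applies the additive "delta" (change-factor) method, in which the change simulated by a global model is added to a local observed climatology. This is only one of many empirical techniques and is chosen here for brevity. The function names and all numbers are hypothetical.

```python
from statistics import mean


def delta_downscale(obs_local, gcm_historical, gcm_future):
    """Additive delta method: shift local observations by the GCM-simulated change.

    obs_local:      observed local values for the baseline period
    gcm_historical: GCM grid-cell values for the same baseline period
    gcm_future:     GCM grid-cell values for the future period
    """
    delta = mean(gcm_future) - mean(gcm_historical)
    return [value + delta for value in obs_local]


def downscale_ensemble(obs_local, gcm_runs):
    """Apply the delta method to each GCM so inter-model spread is retained.

    gcm_runs maps a model name to a (historical, future) pair of value lists.
    """
    return {
        name: delta_downscale(obs_local, historical, future)
        for name, (historical, future) in gcm_runs.items()
    }


if __name__ == "__main__":
    obs = [10.0, 12.0, 14.0]  # hypothetical local temperatures, deg C
    runs = {
        "model_a": ([8.0, 9.0, 10.0], [10.0, 11.0, 12.0]),
        "model_b": ([9.0, 9.0, 9.0], [13.0, 13.0, 13.0]),
    }
    for name, series in downscale_ensemble(obs, runs).items():
        print(name, series)
```

Running the method over several global models, rather than one, carries part of the global-model uncertainty into the local result. The method itself adds no information about how local variability might change.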

Finding 3.1: Climate models are continually moving toward higher resolutions via a number of different methods in order to provide improved simulations and more detailed spatial information; as these higher resolutions are implemented, parameterizations will need to be updated.

Finding 3.2: Although different approaches to achieving high resolution in climate models have been explored for more than two decades, there remains a need for more systematic evaluation and comparison of the various downscaling methods, including how different grid refinement approaches interact with model resolution and physics parameterizations to influence the simulation of critical regional climate phenomena.

MODEL COMPLEXITY

The climate system includes a wide range of complex processes, involving spatial and temporal scales that span many orders of magnitude. As our understanding of these processes expands, climate models need to become more complex to reflect this understanding. The balance between increased complexity and increased resolution, subject to computational limitations, represents a fundamental tension in the development of climate models.

Model complexity can broadly be described in terms of the sophistication of the model parameterizations of the physical, chemical, and biological processes, and the scope of Earth system interactions that are represented. Increasing sophistication of model parameterizations is evident in models of all Earth system components. Atmospheric models, for example, have grown from specified clouds to simulated clouds using simple convective adjustment and relative humidity-based cloud schemes to a host of shallow and cumulus convective parameterizations with different convective triggers, mass flux formulations and closure assumptions, and cloud microphysical parameterizations that represent hydrometeors (mass and number concentrations) in multiple phases. Today most climate models include some representation of atmospheric chemistry and aerosols, including aerosol-cloud interactions (e.g., Liu et al., 2011). Although model parameterizations have become more detailed, uncertainty in process representations remains high as different formulations of parameterizations can lead to large differences in model response to greenhouse gas forcing (e.g., Bony and Dufresne, 2005; Kiehl, 2007; Soden and Vecchi, 2011).

Land-surface models have grown from simple bucket models of soil moisture and temperature in the 1970s (Manabe, 1969) to sophisticated terrestrial models that represent biophysical, soil hydrology, biogeochemical, and dynamic vegetation processes (e.g., Thornton et al., 2007). Most land-surface models now represent the canopy, soil, snowpack, and roots with multiple layers and simulate detailed energy and water exchanges across the layers (Pitman, 2003). Some models have begun to include a dynamic groundwater table (e.g., Leung et al., 2011; Niu et al., 2007) and its interactions with the unsaturated zone. With the representation of canopy, soil moisture, snowpack, runoff, groundwater, vegetation phenology and dynamics, and carbon, nitrogen, and phosphorous pools and fluxes, land-surface models can now simulate variations of terrestrial processes important to predictions from weather to century or longer time scales.

Ocean models are energy conserving and typically use the hydrostatic approximation (the vertical pressure gradient is assumed to balance the weight of the fluid) and the Boussinesq approximation (density variations are neglected except where they affect buoyancy) to solve the three-dimensional primitive equations for fluid motions on the sphere. However, non-Boussinesq models are also used, especially to study sea-level rise. In many models, a free surface formulation is used to allow unsmoothed realistic bathymetry, especially in z-level vertical high-resolution models. Other models use a hybrid coordinate system in the vertical, which combines an isopycnal discretization in the open, stratified ocean, terrain-following coordinates in shallow coastal regions, and z-level (vertical) coordinates in the mixed layer and/or unstratified seas.
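In symbols, a standard textbook statement of the two approximations is given below. The notation is assumed here rather than taken from this report: p is pressure, ρ is density, ρ₀ is a constant reference density, ρ′ is the departure from it, g is gravitational acceleration, z is height, and **u** is the three-dimensional velocity.

```latex
\begin{align}
  \frac{\partial p}{\partial z} &= -\rho g
    && \text{(hydrostatic balance)} \\
  \rho &= \rho_0 + \rho', \quad |\rho'| \ll \rho_0,
  \qquad \nabla \cdot \mathbf{u} = 0
    && \text{(Boussinesq)}
\end{align}
```

Under the Boussinesq approximation ρ is replaced by ρ₀ everywhere except in the buoyancy term, so the model conserves volume rather than mass. Expansion of seawater on warming is therefore not captured directly, which is one reason non-Boussinesq formulations are of interest for sea-level studies.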

Sea-ice models now commonly represent both dynamic and thermodynamic processes. They can often include an improved calculation of ice growth and decay, multicategory sea-ice thickness distribution to allow nonlinear profiles of temperature and salinity, snow layer, computationally efficient rheology approximation, advanced schemes for remapping sea-ice transport and thickness, and ice age for comparison with satellite measurements (e.g., Maslowski et al., 2012).

Besides increasing the complexity of individual model components, the scope of Earth system interactions that are represented has continued to increase to capture the feedbacks among Earth system components and to provide more complete depictions of the energy, water, and biogeochemical cycles. Land-atmosphere feedbacks include the snow-albedo feedback and feedbacks among soil moisture, vegetation, and cloud/precipitation. Key ocean-atmosphere feedbacks include the Bjerknes feedback among easterly surface wind stress, equatorial upwelling, and zonal sea-surface temperature (SST) gradients; feedbacks among clouds, radiative energy fluxes, and SST; and feedbacks among sea ice, albedo, and ocean circulation. Carbon dioxide has “carbon-cycle” feedbacks with land and ocean ecosystems and with ocean circulations. Many more feedbacks are also important to the response of the Earth system to climate perturbations.

More complete depiction of the energy, water, and biogeochemical cycles enabled by coupled ESMs has important implications for climate predictions at multiple time scales. Errors in characterizing sources, sinks, and transfer of energy, water, and trace gases and aerosols due to missing, oversimplified, or uncoupled system components can have significant impacts on climate simulations because of feedback processes such as those described above.

The ability to predict climate in the long term will be limited by the ability to predict human activities. Prognostic climate calculations are dependent on the scenarios of future emission and land use, which traditionally have been exogenously prescribed. This prescription is tenuous because land cover and water availability will change as climate changes, and humans will respond and adapt to those changes. In fact, the largest force altering the Earth’s landscape in the past 50 years is human decision making (Millennium Ecosystem Assessment, 2005a,b,c).

Integrated assessment models (IAMs) couple models of human activities with simple ESMs. IAMs typically take as prescribed inputs population, the scale of economic activity, the set of technology options available to society, and national and international policies, and from these develop time-evolving descriptions of the details of the energy, economic, agriculture, and land-use systems on annual to century time scales. IAMs open the door to internally consistent descriptions of simultaneous emissions mitigation and adaptation to climate change. For example, integrating human ESMs with natural ESMs provides a mechanism to reconcile the most obvious internal inconsistencies, such as the competition for land to mitigate anthropogenic emissions (e.g., through afforestation or bioenergy production), while adapting to climate change (e.g., expansion of croplands to compensate for reduced crop yields). IAMs introduce a new set of modeling assumptions and uncertainties; a continuing challenge will be designing IAMs with an appropriate balance of sophistication between the model components for the specific problems to be addressed.

Finding 3.3: Climate models have evolved to include more components in order to more completely depict the complexity of the Earth system; future challenges include more complete depictions of Earth’s energy, water, and biogeochemical cycles, as well as integrating models of human activities with natural Earth system models.

FUTURE TRENDS AND CHALLENGES

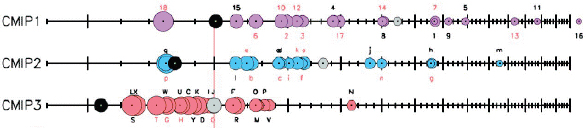

Over the past four decades there has been steady, quantifiable progress in climate modeling (Figure 3.3). Much additional insight has been gained with new components and processes and higher resolution. These trends suggest considerable further increase in model fidelity can be achieved over the next two decades if anticipated advances in computational performance can be fully exploited.

These advancements will require significant changes in the numerical codes and software engineering, as discussed in more detail in Chapter 10, because future computers will have many more processors, but each will still have a clock speed similar to today’s 1-2 GHz. In 2012, systems with ~500,000 processors that deliver a peak computational performance rate of 10 petaflops are becoming available. In theory such computers would already allow a global climate model with a grid spacing as fine as 6-10 km to simulate 5-10 years per day of computer time, a throughput rate suitable for decadal climate prediction or centennial-scale climate change projection. In practice, current climate model codes are not parallelized nearly well enough to efficiently use so many processors, and the highest-resolution centennial simulations use 25- to 50-km grid spacing. A decade hence, supercomputers will have 10⁷-10⁹ processors. If models could be designed to efficiently exploit this degree of concurrency (a major challenge), then this would enable an additional 2-2.5× refinement of global climate model grids to “cloud-resolving” resolution of ~2-4 km.
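A back-of-envelope calculation helps show why processor count and grid spacing are so tightly linked. The sketch below assumes a uniform global grid and a decomposition in which each processor owns whole vertical columns. That decomposition is an assumption made for illustration, and real models may divide the work differently. The function names are hypothetical.

```python
EARTH_SURFACE_AREA_KM2 = 5.1e8  # approximate surface area of the Earth


def grid_columns(grid_spacing_km):
    """Approximate number of horizontal grid columns on a uniform global grid."""
    if grid_spacing_km <= 0:
        raise ValueError("grid spacing must be positive")
    return EARTH_SURFACE_AREA_KM2 / grid_spacing_km ** 2


def columns_per_processor(grid_spacing_km, n_processors):
    """Average number of grid columns available to each processor."""
    if n_processors <= 0:
        raise ValueError("processor count must be positive")
    return grid_columns(grid_spacing_km) / n_processors


if __name__ == "__main__":
    for spacing_km in (50.0, 10.0, 6.0):
        share = columns_per_processor(spacing_km, 500_000)
        print(f"{spacing_km:>5.1f} km grid: {share:.1f} columns per processor")
```

Under these assumptions a 50-km grid has fewer columns than a 500,000-processor machine has processors, so a column-wise decomposition cannot keep such a machine busy. Only at grid spacings near 10 km or finer does each processor receive a meaningful share of the work.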

FIGURE 3.3 Performance of subsequent generations of climate models compared to observations showing improvement from the earliest (CMIP1) to latest (CMIP3). The x axis shows the performance index (I²) for individual models (circles) and model generations (rows). The performance index is based on how accurately a model simulates the seasonally varying climatology of an aggregate of fields such as surface temperature, precipitation, sea-level pressure, etc. Best performing models have low I² values and are located toward the left. Circle sizes indicate the length of the 95 percent confidence intervals. Letters and numbers identify individual models (see supplemental online material at doi:10.1175/BAMS-89-3Reichler); flux-corrected models are labeled in red. Grey circles show the average I² of all models within one model group. Black circles indicate the I² of the multimodel mean taken over one model group. SOURCE: Modified from Reichler and Kim, 2008.

While model resolution continues to increase, model complexity is anticipated to expand to fill critical gaps in current ESMs to address the scientific challenges discussed in Chapter 4. For example, one of the most daunting scientific challenges in climate modeling is the creation of internally consistent calculations of future climate that represent interactions between terrestrial Earth systems, climate systems, and human Earth systems. Modeling of biogeochemistry in the ocean and terrestrial biosphere will need to be more complete to represent the coupled hydrologic, biological, and geochemical processes. Proper inclusion of atmospheric chemistry is computationally daunting because of the large number of chemical variables and their large range of reaction time scales. Other research areas include modeling of ocean and land contributions to reactive and trace gases (e.g., how soil microbial populations regulate the release and consumption of trace gases) and interactive marine and terrestrial ecosystem models. More sophisticated models of ice sheets and of aerosol interactions with clouds and radiation are being developed to reduce key uncertainties in interpreting the historical record of climate change and in climate projection.

New model parameterizations need to be designed to work well across the range of model grid spacings and time steps over which they may be used, which is often not straightforward. The design of a parameterization is subjective and involves expert judgment about what level of complexity to represent and how physical processes interact, creating structural uncertainty (see Chapter 6). Hence, different climate models often incorporate different parameterizations of a given physical process. Careful observational testing is required to validate, compare, and improve parameterizations. First steps to advance the model development and testing processes are presently under way to address these challenges. One example is the Department of Energy-funded Climate Science for a Sustainable Energy Future project, which involves nine U.S. institutions working on regional predictive capabilities in global models for 2015-2020 in a strategic, multidisciplinary effort, including development of observational data sets into specialized data sets for model testing and improvement, development of model development testbeds, enhancement of numerical methods and computational science to take advantage of future computing architectures, and research on uncertainty quantification of climate model simulations.

“Complexity” is still a diffuse term in these discussions. To the committee’s knowledge, there has not been a systematic comparative study of complexity across high-end climate models. Randall (2011) comes closest. If the behavior of a complex model under known modifications to inputs is conceptually understood, it may become possible to reproduce that behavior in a simpler model. A model hierarchy allows key mechanisms of climate response in complex models to be related to underlying physical principles through simpler models, hence giving complex models credibility. Model hierarchies also allow one to proceed in this manner by swapping out (either conceptually or in software) a model component, which could be a physics parameterization, a hierarchy of fluid dynamical solvers, a dynamical framework (e.g., regional versus global), or a complete model component (e.g., land-surface models), for a simpler or more computationally efficient one. One could even return to a box model with a handful of degrees of freedom (see Held [2005] for a good discussion of simulation versus understanding in a model hierarchy).
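The idea of exchanging one component for a simpler one can be illustrated at the bottom of the hierarchy. The following Python sketch is a zero-dimensional energy-balance model in which the albedo parameterization is passed in as a function, so a fixed albedo can be replaced by a temperature-dependent one without touching the rest of the model. It is an illustration only, not a component of any model discussed in this report: the function names, the effective emissivity, the heat capacity, and the albedo slope are all assumed values chosen for the example.

```python
from typing import Callable

SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4
S0 = 1361.0              # solar constant, W m^-2 (illustrative value)
EMISSIVITY = 0.61        # assumed effective emissivity, tuned for illustration
HEAT_CAPACITY = 4.0e8    # assumed J m^-2 K^-1, roughly a 100 m ocean mixed layer
DT = 30 * 86400.0        # time step of 30 days, in seconds


def constant_albedo(temp_k: float) -> float:
    """Simplest parameterization: albedo does not respond to temperature."""
    return 0.3


def temperature_dependent_albedo(temp_k: float) -> float:
    """Hypothetical ice-albedo parameterization: albedo falls as it warms."""
    albedo = 0.3 - 0.005 * (temp_k - 288.0)
    return min(0.6, max(0.2, albedo))


def equilibrium_temperature(
    albedo: Callable[[float], float],
    solar: float = S0,
    t_start: float = 288.0,
    n_steps: int = 20000,
) -> float:
    """Step C dT/dt = (S/4)(1 - albedo(T)) - eps*sigma*T^4 with forward Euler."""
    temp = t_start
    for _ in range(n_steps):
        absorbed = 0.25 * solar * (1.0 - albedo(temp))
        emitted = EMISSIVITY * SIGMA * temp ** 4
        temp += DT * (absorbed - emitted) / HEAT_CAPACITY
    return temp


def warming_from_brighter_sun(albedo: Callable[[float], float]) -> float:
    """Equilibrium warming for a 2 percent increase in the solar constant."""
    base = equilibrium_temperature(albedo)
    perturbed = equilibrium_temperature(albedo, solar=1.02 * S0)
    return perturbed - base


if __name__ == "__main__":
    for scheme in (constant_albedo, temperature_dependent_albedo):
        print(scheme.__name__, round(warming_from_brighter_sun(scheme), 2), "K")
```

Because only the `albedo` argument changes between the two runs, any difference in the response can be attributed to that one parameterization, which is the kind of one-at-a-time comparison a model hierarchy is meant to support.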

It is the nature of complex systems to exhibit emergent and surprising behavior: as researchers in other fields involving systems with many processes and feedbacks (such as biological systems) find, there are many dynamical pathways linking cause to effect, and hypotheses encouraged by simpler models often fail in a more complex model, and in nature. Even simple models often require substantial cross-disciplinary expertise to develop and effectively use and are beyond the capability of a single investigator to sustain. Maintaining a hierarchy of models with some shared components enables systematic evaluation of model components and allows models of different complexities to be maintained and used for specific scientific investigations of different time and space scales.

Finding 3.4: A hierarchy of models will be a requirement for scientific progress in order to maintain the ability to (1) systematically evaluate model components one at a time and (2) use simpler models to understand complex model behaviors and underlying mechanisms.

Finding 3.5: Investments to increase resolution as well as increase complexity are both needed. The community does not yet have the experience to extrapolate which investment may result in faster or larger benefits in the future.

MODEL EVALUATION

To guide future investments in model development, careful assessment of the additional benefits of increasing model resolution and complexity will be important. Model evaluation, in the context of predictability and uncertainty, will be increasingly critical to improve understanding of model strengths and weaknesses. Quantitative evaluation has the added benefit of helping to provide more robust estimates of uncertainty (see Chapter 6) and to discriminate the quality of climate simulations and predictions made using different models (e.g., Knutti, 2010).

However, evaluation of the increasingly more complex and higher-resolution models will be a significant challenge. Different model evaluation strategies have been explored by the climate modeling community to take advantage of the hierarchy of models, including single-column models, regional models, and uncoupled and coupled global models discussed above. Strategies that have been explored range from running idealized simulations (e.g., aqua-planet simulations) to isolate specific aspects (e.g., tropical variability) of the models, to running short case studies or weather forecasts with focus on fast processes such as clouds, to performing sensitivity experiments with new parameterizations included one at a time to reveal weaknesses of other parameterizations, to running long-term integrations of coupled and uncoupled models to evaluate the variability generated by the models, and to model intercomparisons that elucidate the sources and consequences of model discrepancies.

Central to the discussion of model evaluation is the definition of performance metrics. There has been considerable progress in recent years from single metrics that quantify evaluation of a fairly narrow range of model behaviors to multivariate methods that can evaluate more aspects of models, including the spatial patterns of bias and variability and the correlations among different variables (e.g., Cadule et al., 2010; Gleckler et al., 2008; Pincus et al., 2008) as well as more modern methods of statistical analysis that are only beginning to be applied to climate simulations.
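Two of the simplest metrics of this kind, an area-weighted root-mean-square error and a centered pattern correlation between a simulated and an observed field, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the method of any of the studies cited above: fields are assumed to be lists of rows on a regular latitude-longitude grid (one row per latitude), grid cells are weighted by the cosine of latitude, and all function names and sample values are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Field = Sequence[Sequence[float]]  # one row of values per latitude


def _flatten(field: Field, lats_deg: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Return grid values and matching cos(latitude) area weights as flat lists."""
    values: List[float] = []
    weights: List[float] = []
    for row, lat in zip(field, lats_deg):
        w = math.cos(math.radians(lat))
        for v in row:
            values.append(v)
            weights.append(w)
    return values, weights


def _weighted_mean(values: Sequence[float], weights: Sequence[float]) -> float:
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


def weighted_rmse(model: Field, obs: Field, lats_deg: Sequence[float]) -> float:
    """Area-weighted root-mean-square difference between model and observations."""
    m, w = _flatten(model, lats_deg)
    o, _ = _flatten(obs, lats_deg)
    squared_errors = [(a - b) ** 2 for a, b in zip(m, o)]
    return math.sqrt(_weighted_mean(squared_errors, w))


def pattern_correlation(model: Field, obs: Field, lats_deg: Sequence[float]) -> float:
    """Area-weighted correlation of the two spatial patterns, means removed."""
    m, w = _flatten(model, lats_deg)
    o, _ = _flatten(obs, lats_deg)
    m_mean = _weighted_mean(m, w)
    o_mean = _weighted_mean(o, w)
    cov = _weighted_mean([(a - m_mean) * (b - o_mean) for a, b in zip(m, o)], w)
    var_m = _weighted_mean([(a - m_mean) ** 2 for a in m], w)
    var_o = _weighted_mean([(b - o_mean) ** 2 for b in o], w)
    return cov / math.sqrt(var_m * var_o)


if __name__ == "__main__":
    lats = [-60.0, 0.0, 60.0]                        # made-up 3 x 2 grid
    observed = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
    simulated = [[v + 1.0 for v in row] for row in observed]  # uniform bias
    print(weighted_rmse(simulated, observed, lats))
    print(pattern_correlation(simulated, observed, lats))
```

The toy case shows why more than one metric is needed: a model with a uniform bias has a nonzero error but reproduces the observed spatial pattern exactly, so the two numbers describe different aspects of its behavior.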

Such evaluation is hampered by the limited availability and quality of the climate observational record. For truly rigorous quantification of model fidelity, long-term observational records of climate-relevant processes and phenomena are needed that include estimates of uncertainty. Much of the instrumental record is associated with weather analysis and prediction, which means that many processes of importance to climate have not been robustly monitored.

Model development testbeds (e.g., Fast et al., 2011; Phillips et al., 2004) are important advances to streamline and modernize model evaluation through development of model-observation comparison techniques, use of a wide range of observations, and use of computational software to facilitate the model evaluation processes. Objective measures of model skill are actively being developed to guide model development and implementation. More advanced methods of evaluating climate models are coming into use that take advantage of more recent statistical analysis methods and the growing use of ensembles of climate simulations to estimate uncertainty and reliability and ensembles of climate models confronted with the same experimental protocols to evaluate the relative performance of different models. Much work is needed to refine our understanding of the relationship between observations and ensembles of climate simulations, especially because Earth’s climate represents a single realization. Furthermore, highly detailed evaluation of the representation of climate processes in models is critical as the models become more complex. The growing body of paleoclimate simulations can also be compared to observations of past climate to determine the robustness of assumptions and parameters in current climate models.
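The comparison of a single observed realization with an ensemble of simulations can be made concrete with a small Python sketch. It summarizes an ensemble by its mean and spread and reports where the one observed value falls among the members. The function name, the returned fields, and the sample values are hypothetical and chosen only for illustration; they are not drawn from any data set or method described in this report.

```python
import statistics
from typing import Dict, Sequence


def ensemble_summary(members: Sequence[float], observed: float) -> Dict[str, float]:
    """Summarize an ensemble and locate a single observation within it.

    members:  one scalar diagnostic per ensemble member (e.g., a trend)
    observed: the same diagnostic from the single observed realization
    """
    mean = statistics.fmean(members)
    spread = statistics.stdev(members)            # sample standard deviation
    rank = sum(1 for m in members if m < observed)  # members below observation
    return {
        "mean": mean,
        "spread": spread,
        "rank": rank,
        "standardized_offset": (observed - mean) / spread,
    }


if __name__ == "__main__":
    # Made-up values standing in for a diagnostic from five ensemble members.
    simulated = [0.6, 0.8, 0.7, 0.9, 1.0]
    summary = ensemble_summary(simulated, observed=0.85)
    for key, value in summary.items():
        print(key, value)
```

An observation that sits inside the ensemble range is consistent with the simulations but does not by itself validate them, because the observed climate is only one sample from the distribution the ensemble is trying to represent.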

Computational infrastructures that help streamline the simulation and analysis processes, as well as improved observing systems and analyses to provide data with sufficient spatial resolution and long time periods to evaluate models, are further discussed in Chapter 10 and Chapter 5, respectively.

Finding 3.6: As models grow in complexity and ensembles of climate simulations become commonplace, they are likely to exhibit an increasingly rich range of behaviors and unexpected results that will require careful evaluation; important tools for these evaluations are robust statistical comparisons among models with both historical and paleoclimate observations.

THE WAY FORWARD

To inform research, planning, and policy development, a full palette of modeling tools is required. Ideally, the model chosen for a given problem would be matched to the relevant length and time scales and to the degree of complexity needed in its representation of the Earth system. This hierarchy of models is necessary to advance climate science and improve climate predictions from intraseasonal to millennial time scales.

An important challenge, therefore, for the U.S. climate modeling community over the next two decades is how to efficiently manage the interactions between models in hierarchies or linked groups of models. A shared model development process identifies key gaps in our understanding and defines experiments aimed at resolving those using protocols that different models can run (the “MIP” process). There is a need for methodological advances in comparisons across levels of the model hierarchy in a modern, distributed architecture. Common infrastructure allows differing process representations to be encoded within a common framework to allow clear comparisons. Components that enjoy community-wide consensus will be widely used; components for which there is still disagreement can go head-to-head in systematic experiments. In addition, a desirable characteristic of a model hierarchy is that results obtained at a given level in the hierarchy can inform development at other levels. Software has a role to play here; systematic approaches to maintaining model diversity under a common infrastructure are discussed in Chapter 10.

In summary, creating a structure within the model hierarchy that uses common software frameworks and common physics where appropriate is highly beneficial. Doing this well can develop the interdisciplinary modeling communities, reduce overlapping efforts, allow more efficient development of new modeling tools, promote higher standards or best practices, facilitate interpretation of results from different modeling approaches, speed scientific advance, and improve understanding of the various sources of modeling uncertainty.

Decision makers at many levels often desire projections of climate and its future range of variability and extremes localized to individual locations or to very fine (subkilometer) scales. While the resolution of global climate models may eventually be this fine, the committee judges that this is not likely to occur within the next 20 years. In the meantime, statistical or dynamical downscaling techniques will continue to be used to provide finer-scale projections. Although an evaluation of the various methods would be useful, this would likely require a study unto itself. Furthermore, as the resolution of global climate models itself becomes finer, statistical downscaling techniques become simpler and more straightforward, and the need for more computationally expensive dynamical downscaling may diminish. Thus, the committee focused its attention in this report on improving the fidelity of models at all scales. However, like downscaling itself, using climate models with finer grids does not guarantee a much more certain or reliable climate model projection at local scales. As noted above, the uncertainties in climate models even at local scales derive in large part from global-scale processes such as cloud and carbon-cycle feedbacks, as well as uncertainties in how future human greenhouse gas and aerosol emissions unfold.

Recommendation 3.1: To address the increasing breadth of issues in climate science, the climate modeling community should vigorously pursue a full spectrum of models and evaluation approaches, including further systematic comparisons of the value added by various downscaling approaches as the resolution of climate models increases.

Recommendation 3.2: To support a national linked hierarchy of models, the United States should nurture a common modeling infrastructure and a shared model development process, allowing modeling groups to efficiently share advances while preserving scientific freedom and creativity by fostering model diversity where needed.