10

A Broader Concept of Deception

Although laboratory studies of lying have produced interesting results, critics can point to many features that might limit their generality to “real world” deception. The lying is done by individuals who are not experienced at deception. Because the lying is done at the request of the experimenter, it may differ qualitatively from unsanctioned lying in social contexts. Laboratory lying does not, in itself, benefit the liar, nor does it do obvious harm to the target of the lie.

This chapter considers deception in a broader context. We begin with a review of attempts to provide a framework for understanding deception and lying. Some of those attempts were aimed at constructing theories of deception; others were aimed at understanding how ordinary individuals conceptualize deception. We focus on these latter approaches to determine whether, in principle, it is possible to detect if a given individual is being truthful or is trying to deceive. We then suggest additional research paradigms to supplement the standard one. The goal of these new paradigms is to understand how different cultural and subcultural groups represent deception and its moral implications. As discussed in Chapter 9, an individual can be expected to “leak” deception cues when he or she has violated a cultural norm; thus, it is important to know what the cultural norms are and what constitutes a violation for that individual.

Morality is a concern because of its impact on leakage. In most situations, a person has to rely on verbal and nonverbal cues to judge if someone is lying or trying to deceive in other ways. As discussed in Chapter 9, these cues, in turn, are assumed to reflect an internal psychological state. Presumably, internal states that correspond to guilt can produce physiological and motor responses that may reveal the guilty state to a discerning detector. At least this is the basic premise that underlies the attempts to discover cues to deception.

TYPES OF DECEPTION

Lying in the real world covers more than lying studied in the laboratory: lying is often done for personal or ideological gain; it can harm the victim, and it is often carried out over a considerable span of time. More broadly, deception includes more than lying. In fact, deception can be carried out without explicit lying.

Deception can be defined broadly as the manipulation of appearances such that they convey a false reality. However, when one tries to list a variety of deceptions and their contexts, it becomes difficult to find a common set of features that characterizes all of them. At best, the various types of deception bear what Wittgenstein (1953) calls a family resemblance to one another. Deception includes both dissimulation (hiding or withholding information) and simulation (putting out wrong or misleading information). Both deception and lying can be accomplished by omission as well as by commission. Interestingly, folk theories of deception are more likely to attach moral significance to deceptions accomplished by commission than to those accomplished by omission.

The first psychologists to study deception looked upon conjuring or sleight-of-hand magic as the paradigm (Hyman, 1989). Certainly, conjuring is one of the few occupations in which success depends entirely on the ability to deceive. Although deception is the essence of conjuring, such deception differs from the deception of the confidence man or that of the spy. A conjuror engages in “sanctioned deception”: he or she has an implicit contract with the audience that doing a good job means fooling the onlookers. A magician would not be a good magician if he or she failed to deceive the audience. A conjuror's “victims” know, even before the tricks have been done, that the magician intends to fool them.

Deception, unlike conjuring, generally depends for its success on keeping intended victims unaware that deception is taking place. And many deceptions, unlike magic tricks, are definitely not sanctioned by society. Although most lying and deceiving are considered immoral by almost all cultures, some forms are sanctioned, or at least tolerated, by social customs. In the United States, such tolerated untruths include “social lies” and “white lies.” Jokes, fantasies, teasing, and fibbing are other examples of untruths that carry little or no social disapproval. These considerations suggest that one way deceptions vary is in the perceived harm that they can do to other people or society.

There are many examples of the varied types and contexts of deception. Many adolescent children have successfully “fooled” adults; some have gained notoriety as psychics or individuals around whom poltergeist phenomena occur. Both children and adults have succeeded in fooling doctors into giving them unneeded prescriptions and even unnecessary operations. Consumer and health fraud has always been a huge industry in civilized countries. Military and strategic deception have been practiced since countries or even tribes have existed. Feints and ploys are highly valued and important forms of deception in games and sports. Fraudulent psychics, gambling cheats, and impersonators are everywhere. Confidence games and swindles continually take their toll of seemingly willing victims. Forgery is a criminal case of deception. In recent years, more and more articles and books have been devoted to plagiarism and other deception in science (e.g., National Academy of Sciences, 1989). Self-deception has also recently been gaining much attention among philosophers, psychologists, and sociobiologists.

All these forms of deception are interesting and worthy of study, but the focus of this chapter is more limited: two individuals in face-to-face communication. Under these circumstances, the questions of hiding and detecting deception are about leakage and the extent to which one of the parties can detect whether the other is talking or acting deceptively. One focus is how to generalize the existing findings from laboratory research to more realistic settings and to wider contexts of deception.

Although the focus is on two individuals in face-to-face communication, it is important to remember that most important informational exchanges occur within a more complicated organizational setting in which structural and psychological factors interact and help to determine, in important ways, the consequences of the exchanges. These added complexities are briefly addressed.

THEORIES, TAXONOMIES, AND FRAMEWORKS

During the late 1800s and early 1900s several psychologists tried to develop a psychology of deception, using conjuring as the paradigm case for deception (Hyman, 1989). By discovering and classifying the principles that conjurors use to mystify audiences, the psychologists hoped to provide a general framework for understanding all deception. But, for the reasons noted above, conjuring is probably not the best paradigm for deception.

A few writers have tried to develop taxonomies as frameworks for a theory of deception: a good taxonomy helps focus on which studies are most likely to contribute to the development of an adequate theory. At first, any taxonomy has to be considered highly tentative; it is likely that as research is surveyed, some categories will be found to include no representatives and many studies will be difficult to fit into any category. More broadly, a good taxonomy cannot be evaluated in general terms because its usefulness depends on the purposes of its users.

Some theorists on deception have developed preliminary taxonomies that can be used as a basis for developing more comprehensive systems for future investigations. A major objective of the existing taxonomies is to develop a scientific theory of deception. Such a theory would consist of basic concepts, variables, and laws that would enable people to understand and explain deception. Just as the current theories of physics, chemistry, and biology deviate greatly from earlier intuitive notions about how the world “works,” we can expect that a scientific theory of deception will probably have little in common with commonsense ideas of deception.

The current attempts to develop a framework for understanding deception, in fact, do not depart very much from general intuitions about deception. In some cases this simply reflects the primitive state of theorizing about deception. The developers of the taxonomies have to begin with the concepts and data that exist in everyday terminology. Presumably, with more scientific investigation and further refinements, the taxonomies will begin to deviate more and more from everyday concepts and terminology.

A few attempts to develop a framework, however, deliberately adopt the stance of the layperson. Hopper and Bell (1984), for example, base their approach on what they refer to as “the linguistic turn.” This approach seeks the basis for a taxonomy within the language usage of ordinary people. Others have as their focus the deliberate attempt to find out how people conceptualize deception and lying. Thus, we can identify two motivations for studying the folk psychology of deception: one is based on the hope that the folk psychology, with suitable refinements, can be transformed into a scientific psychology of deception; the other is to study the folk psychology as an end in itself. As will be pointed out, this latter motivation may have important implications for questions raised in this chapter.

A brief overview of some frameworks devised for the purpose of developing a scientific theory of deception is discussed below, followed by a look at taxonomies deliberately aimed at understanding how ordinary people conceive of deception.

Frameworks as Scientific Theories

Chisholm and Feehan (1977) provide an analysis of deception from a logical, philosophical viewpoint. They identify three dimensions for distinguishing among deceptions: omission-commission; positive-negative; intended-unintended. The combination of these dimensions provides eight different categories of deception.
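Because each dimension has two poles, the eight categories are simply the cross product of the three dimensions (2 × 2 × 2 = 8). The Python sketch below enumerates them; the dictionary keys are our own hypothetical labels for the dimensions, and the sketch says nothing about how Chisholm and Feehan define each pole.

```python
from itertools import product

# Hypothetical labels for Chisholm and Feehan's (1977) three dimensions;
# the pole names themselves come from the text.
DIMENSIONS = {
    "omission_commission": ("omission", "commission"),
    "positive_negative": ("positive", "negative"),
    "intended_unintended": ("intended", "unintended"),
}

def deception_categories():
    """Return one dict per category, choosing one pole from each dimension."""
    names = list(DIMENSIONS)
    return [dict(zip(names, poles)) for poles in product(*DIMENSIONS.values())]

for category in deception_categories():
    print(category)
print(len(deception_categories()))  # 8
```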

Daniel and Herbig (1982) define deception as the deliberate misrepresentation of reality to gain a competitive advantage; thus, they differ from Chisholm and Feehan in that only intended misrepresentations would count as deceptions. They add a further restriction in that the misrepresentation has to be aimed at providing a “competitive advantage” to the deceiver. They divide deceptions into three categories: cover, lying, and deception. Cover refers to secret keeping and camouflage. Lying is subdivided into simple lying and lying with artifice. Lying is more active than cover in that it draws the target away from the truth. Artifice goes beyond straight lying in that it involves manipulating the context surrounding the lie. Deception is not the same as lying; lying focuses more on the actions of the liar, and deception deals with the effects on the receiver. Daniel and Herbig further classify deceptions as ambiguity-increasing and ambiguity-decreasing: the former deceives by confusing the target, the latter misleads by building up the attractiveness of one wrong alternative.

In a different approach, Heuer (1982) builds a taxonomy in terms of the underlying psychological biases that are assumed to result in deception. He lists various perceptual and cognitive biases that may help to develop a theory of deception and counterdeception. Heuer's approach points to the fact that one can attempt to base a taxonomy on an ordering of the various exemplars of deception or, instead, on an ordering of psychological and sociological constructs that may explain deception. Each approach has its merits. The ultimate goal is to develop a framework that can account for the effectiveness of deception. But one can argue that it might be wiser to begin the search with a taxonomy that is based on surface similarities and dissimilarities among exemplars of deception. Possibly, there might be some value in trying to start with both types of taxonomies.

One attempt to create a theory of deception includes the only taxonomy that has been put to a test. Whaley (1982) devised a taxonomy on the basis of systematic analysis of examples from war and conjuring. He also obtained inputs from diplomats, counterespionage officers, politicians, businessmen, con artists, charlatans, hoaxers, practical jokers, poker players, gambling cheats, football quarterbacks, fencers, actors, artists, and mystery story writers. The resulting framework was tested by classifying an exhaustive list of magic tricks. All deception for Whaley is a form of misperception. He divides misperceptions into other-induced, self-induced, and illusions. Self-induced misperception is what we ordinarily refer to as self-deception. Other-induced misperception includes misrepresentation (unintentional misleading) and deception proper (deliberate misrepresentation).

Every deception, according to Whaley, consists of two parts: dissimulation (covert, hiding what is real) and simulation (overt, showing the false). Both dissimulation and simulation come in three forms: the three types of dissimulation are masking, repackaging, and dazzling; the three types of simulation are mimicking, inventing, and decoying. In decreasing order of effectiveness, these components of deception can be listed as masking, repackaging, dazzling, mimicking, inventing, and decoying. Nine categories of deception are obtained by combining the three kinds of dissimulation with the three kinds of simulation. Whaley claims that these nine types exhaustively classify all deceptions; he was able to classify every magic trick into one of these nine categories. Whether other researchers and independent judges can use his system to reliably classify the same magic tricks, as well as other deceptions, into these categories remains to be seen.
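Whaley's nine categories can likewise be generated by pairing each form of dissimulation with each form of simulation. The minimal sketch below assumes only what is stated above: the six component names and their order of decreasing effectiveness. The names `DISSIMULATION`, `SIMULATION`, and `effectiveness_rank` are hypothetical, and the sketch does not rank the nine combined categories, since no such ranking is given here.

```python
from itertools import product

# Components listed in the order the text gives for decreasing
# effectiveness (masking most effective, decoying least).
DISSIMULATION = ("masking", "repackaging", "dazzling")  # hiding the real
SIMULATION = ("mimicking", "inventing", "decoying")     # showing the false

# Each category pairs one way of hiding the real with one way of showing the false.
CATEGORIES = list(product(DISSIMULATION, SIMULATION))

def effectiveness_rank(component):
    """Position of a single component in the overall ordering (0 = most effective)."""
    return (DISSIMULATION + SIMULATION).index(component)

for hide, show in CATEGORIES:
    print(f"{hide} + {show}")
print(len(CATEGORIES))  # 9
```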

One schematic framework was developed for understanding deception in intelligence work (Epstein, 1989). The framework is attributed to the late J.J. Angleton, who was a senior officer, as well as a controversial person, in the Central Intelligence Agency. The basic idea is that of a deception circle that includes, as a minimum, a victim (target person or group), an inside person, and an outside person. The outside person is typically an enemy agent who pretends to have become an informant. He is the source of disinformation to the target group. For the disinformation to be effective, however, the enemy must know how it is being accepted and interpreted by the target group. The feedback comes to the enemy from the inside man (usually a “mole” or other enemy agent who has managed, over a period of years, to penetrate the innermost sanctums of the target group). With the appropriate feedback, the enemy can tailor and fine-tune the disinformation so that it is more consistent with what the target group believes or wants to believe. This deception circle, which also characterizes many other successful forms of deception, such as confidence games, provides a potent and almost irresistible way to plant disinformation. Although this model is relatively simple, it is important in making clear that the dynamics of successful deception typically involve organizational and social factors that go beyond the simple dyadic relationship of the informer and the “informed.”

Frameworks Based on Folk Psychology

Philosophers and some psychologists debate the role that folk psychology (common beliefs) should play in a scientific psychology. One position is that folk psychology should play no role since many folk beliefs about human behavior have been wrong. Trying to build a scientific psychology on the basis of folk psychology, according to this position, would hamper progress by starting with vague, contradictory, and almost certainly wrong beliefs. People who advocate this position argue that the science of psychology should begin with those concepts and laws that have already been shown to be useful in biology, physiology, and neuroscience.

The contrary view maintains that any complete science of psychology must take into account people's beliefs about their own and others' mentality and behavior. This is the view accepted in this chapter. We believe that any attempt to understand and explain deception must somehow take account of how ordinary people conceptualize deception.

One obvious way in which a folk psychology of deception can matter is related to the leakage hypothesis, which assumes that people enter a particular psychological state when they believe they are violating a social norm. The experience or state of guilt is accompanied by hormonal and muscular changes that can potentially be detected by an observer. Perhaps the best use of this hypothesis is to predict or detect deception on the basis of beliefs and acts that constitute deceptions and transgressions for a given individual. What a person believes about lying and deception is part of that individual's folk psychology.

Two empirical studies have attempted to describe the way ordinary people conceptualize lying and deception. Although both studies were psychological investigations, the investigators were communication scientists (Hopper and Bell, 1984) and linguists (Coleman and Kay, 1981). Subsequently, another linguist carried out a provocative analysis of the Coleman and Kay study (Sweetser, 1987). These studies are important because they show that the paradigm laboratory experiment might be supplemented by approaches that better elicit leakage cues from members of various groups.

A Taxonomy in Psychological Space

One taxonomy of deception was devised by looking for systematic relationships among deception terms used by speakers of English (Hopper and Bell, 1984). By examining how their subjects classified 46 terms related to deception, the investigators inferred that folk theories of deception recognize six types: playings, fictions, lies, crimes, masks, and unlies. This taxonomy organizes the deception realm in terms of perceived similarities among the various concepts that refer to deception in some sense. The investigators suggest that their taxonomy is hierarchical. The six categories can be subsumed under two more general categories, benign fabrications and exploitative fabrications: benign fabrications include fictions and playings; exploitative fabrications include lies, crimes, masks, and unlies.

Hopper and Bell add further organization to their taxonomy by looking for attributes or dimensions along which they can order their categories with respect to one another. Their statistical analyses identify three such dimensions and hint at three additional ones. The dimension that accounts for most of the ordering among the deception terms is evaluation: it contrasts socially acceptable, harmless, and moral types of deception with socially unacceptable, harmful, and immoral deceptions. The two other dimensions that account for most of the ordering among their categories are detectability and premeditation. The three additional dimensions that may also play a role are directness, verbal-nonverbal, and prolonged.

The investigators had their subjects—180 undergraduates in an American university—each sort 46 words into categories that seemed to go together. The 46 words had been selected to represent a larger set of 120 deception terms. The assumption was that the more subjects who put the same two words in the same category, the more these two words were psychologically similar. After obtaining an index of similarity between every pair of words, the authors used multidimensional scaling to construct a psychological space for these terms.
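The similarity index described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Hopper and Bell's actual procedure: the function name `cooccurrence_similarity` is hypothetical, each subject is assumed to place every term in exactly one pile, and the index is taken to be the proportion of subjects who put a pair in the same pile. The sorts shown are invented.

```python
from collections import Counter
from itertools import combinations

def cooccurrence_similarity(sorts):
    """Proportion of subjects who placed each pair of terms in the same pile.

    `sorts` holds one entry per subject; each entry is a list of piles,
    and each pile is a set of terms. Pairs never sorted together get 0.
    """
    counts = Counter()
    terms = set()
    for piles in sorts:
        for pile in piles:
            terms.update(pile)
            # sorted() gives each unordered pair one canonical key
            for pair in combinations(sorted(pile), 2):
                counts[pair] += 1
    n_subjects = len(sorts)
    return {pair: counts[pair] / n_subjects
            for pair in combinations(sorted(terms), 2)}

# Invented sorts from three hypothetical subjects, for illustration only.
sorts = [
    [{"lie", "fib"}, {"joke", "fiction"}, {"disguise"}],
    [{"lie", "fib", "disguise"}, {"joke", "fiction"}],
    [{"lie"}, {"fib", "joke"}, {"fiction", "disguise"}],
]
similarity = cooccurrence_similarity(sorts)
print(similarity[("fib", "lie")])   # two of three subjects grouped them
print(similarity[("joke", "lie")])  # never grouped together
```

A multidimensional scaling routine would then treat 1 minus each similarity as a distance and place the terms in a low-dimensional space; that step is omitted here.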

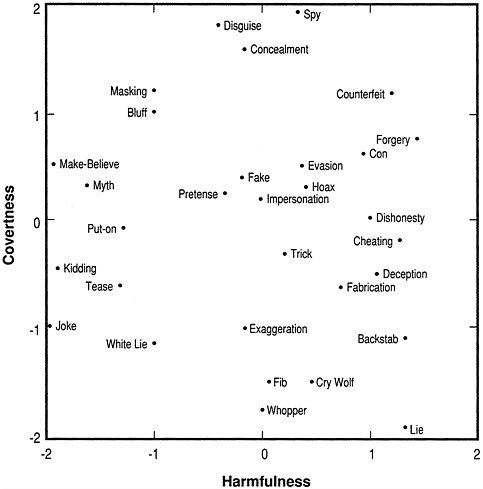

Hopper and Bell had another group of subjects rate each of the deception words on ten bipolar adjective scales, such as good-bad, harmless-harmful, moral-immoral, direct-indirect. In our own analysis, we used these ratings with factor analytic procedures to generate a psychological space for the 46 terms. The resulting space was similar to the one that Hopper and Bell found using their clustering procedure. Our analysis indicated that two psychological dimensions were sufficient to account for the variations of the terms on the rating scales. Figure 1 shows 31 of the words plotted in this two-dimensional space. (We have omitted 15 of the terms from the graph because they overlapped with other terms and would have made the graph unintelligible.)

For our purposes, it is the principle illustrated by this figure that matters. We labeled one dimension “harmfulness”: the terms that are high on this dimension (on the right-hand side) tended to be highly rated on scales such as bad, harmful, unacceptable, and immoral; the terms that are low on this dimension (on the left-hand side) were highly rated on the opposite scales, good, harmless, socially acceptable, and moral. The second dimension is labeled covertness: those items that are high on this dimension (at the top) were rated high on covert, indirect, and nonverbal; those at the opposite end of this dimension (at the bottom) were rated high on overt, direct, and verbal.

FIGURE 1 Deception terms plotted in a two-dimensional space.

Notice that the concept lie is located at the bottom right-hand corner of the figure. The subjects agreed in rating lying as harmful, socially unacceptable, and immoral, as well as being highly verbal and direct. Deception also is high on harmfulness but is relatively neutral on the covertness dimension. This suggests that the subjects recognize that some deceptions can be highly verbal, such as lying, but that they can also be nonverbal. Although fib and whopper are near lie on the covertness dimension, they are rated as relatively neutral on the harmfulness scale. A white lie is rated as only somewhat harmless and somewhat overt.

There are some possible limitations of these data. The variations among the terms are circumscribed by the limited number and range of rating scales used. Some important dimensions in the folk psychology of deception may have been left out. A more important limitation is that the role of context is omitted. Disguise is rated as neutral on harmfulness though covert, but a disguise used for a costume party is quite different from a disguise used to rob a bank. Perhaps the apparently neutral rating simply reflects that some respondents rated it as harmful and others rated it as neutral. Similarly, most jokes are harmless, but certain practical jokes can be quite cruel and harmful.

Despite these limitations, however, Hopper and Bell's data probably capture some important aspects about how undergraduates in our culture perceive deception. Presumably, if such subjects were telling a lie as a joke, they would display less leakage than if they were telling a lie about who wrote their term paper. We have illustrated this procedure in some detail because we believe that, with suitable refinements, it can be used to yield psychological spaces for different subgroups and cultures. We might expect that different groups would differ on which kinds of deception they placed at the socially acceptable and socially unacceptable ends of the harmfulness dimension. This could be useful information, for example, if leakage cues to deception mainly occur when an individual is deceiving in a way that his or her culture finds socially unacceptable.

A Prototype Theory

Another way to uncover the psychological space with respect to deception was illustrated by Coleman and Kay (1981). These authors carried out their empirical study of the word lie as an assault on the previously dominant checklist theories of meaning. The checklist view assumes that the meaning of a word consists of a set of features: any object that contains these features belongs to the category defined by that word. For example, bachelor refers to any object that is human, adult, male, unmarried. According to the checklist theory, any person who possesses all four of these defining features is a bachelor, and a person who lacks one or more of these features is not a bachelor. The classic theory of meaning asserts that the possession of these defining features is both necessary and sufficient for being a bachelor. Such a definition establishes what is called an equivalence class—every person who satisfies the definition is as much a bachelor as every other bachelor. Even before this classical theory began to be challenged in the 1970s, some scholars had pointed to problems with the classical definition. For example, is the Pope a bachelor? What about a widower?

The alternative view of meaning, which became popular during the 1970s, is known as the prototype theory. Prototype theories recognize the intuitive notion that some members of a category are better exemplars than others. Much of the early work supporting this theory had been done with colors (Rosch, 1975). Not all examples of the color red, say, are equal. People in different cultures agree that some examples are better reds than others. Additional experimental work dealing with directly perceptible objects such as plants, animals, utensils, and furniture supported the prototype theory. Coleman and Kay wanted to show that the prototype phenomenon can also be found in words referring to less concrete things, such as the speech-act word lie.

They first used standard linguistic analysis to devise a definition of a “good” (true) lie. A good lie is one for which the speaker (S) asserts some proposition (P) to addressee (A) such that:

- P is false (false in fact);
- S believes P to be false (believe false); and
- in uttering P, S intends to deceive A (intent to deceive).

In the checklist or classical theory these three properties would be necessary and sufficient for a statement to be a lie. The prototype theory asserts that a concept such as lie is a “fuzzy set” in which different members vary in their degree of goodness or closeness to the prototype. Statements that possess all three properties would be the best examples of a lie. Statements that possess only two of the three defining properties might still be considered lies, but not very good examples. Statements that have only one of the properties would be considered even poorer examples of lies. Finally, statements that possess none of these three properties would not be considered lies.
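The contrast between the two theories can be made concrete with a short sketch. Here the checklist view requires all three properties, while the prototype view is approximated by counting how many properties a statement has. Treating the properties as equally weighted is a simplifying assumption of this sketch, not necessarily Coleman and Kay's model, and the function and key names are hypothetical.

```python
from itertools import product

PROPERTIES = ("false_in_fact", "believed_false", "intent_to_deceive")

def checklist_is_lie(story):
    """Classical view: all three properties are necessary and jointly sufficient."""
    return all(story[p] for p in PROPERTIES)

def prototype_score(story):
    """Graded view (unweighted assumption): number of properties present, 0 to 3."""
    return sum(story[p] for p in PROPERTIES)

# Every combination of having or lacking each property: eight possible stories.
stories = [dict(zip(PROPERTIES, flags)) for flags in product((True, False), repeat=3)]

for story in sorted(stories, key=prototype_score, reverse=True):
    print(prototype_score(story), checklist_is_lie(story), story)
```

Applied to the eight combinations, the checklist view accepts exactly one as a lie, whereas the count spreads the other seven over grades 0, 1, and 2.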

Coleman and Kay (1981) conducted an empirical test of the theory by constructing brief stories to correspond with each of the eight possible combinations of possessing or not possessing the three properties. The story corresponding to the possession of all three properties was as follows (p. 31):

(1) Moe has eaten the cake Juliet was intending to serve to company. Juliet asks Moe, “Did you eat the cake?” Moe says, “No.” Did Moe lie?

The story corresponding to the possession of none of the three defining properties was (p. 31):

(2) Dick, John, and H.R. are playing golf. H.R. steps on Dick's ball. When Dick arrives and sees his ball mashed into the turf, he says, “John, did you step on my ball?” John replies, “No, H.R. did it.” Did John lie?

As expected, all 67 of Coleman and Kay's American subjects agreed that (1) was a lie and that (2) was not a lie. What they were interested in was how these respondents would react to the other stories, which possessed either one or two of the defining characteristics. Story (3), for example, had two defining characteristics: false in fact and intent to deceive. It lacked the property that S believes P to be false. This story was as follows (p. 31):

(3) Pigfat believes he has to pass the candy store to get to the pool hall, but he is wrong about this because the candy store has moved. Pigfat's mother doesn't approve of pool. As he is going out the door intending to go to the pool hall, Pigfat's mother asks him where he is going. He says, “I am going by the candy store.” Did Pigfat lie?

Pigfat's statement was considered to be a lie by 58 percent of the judges; 37 percent judged it not to be a lie; and 5 percent could not make a decision.¹ In general, the results supported the prototype model. The more defining properties a story contained, the more likely it was to be judged a lie. The authors noted some minor departures from the ordering that their theory would have predicted. In part, these departures could be attributed to the fact that each story differed in content. Coleman and Kay also obtained comments from their subjects. In agreement with their theory, the comments referred to the three prototypical properties to justify why a statement was judged as a lie. But the subjects also referred to other properties, such as the reprehensibility of the acts and the motives of the person making the statement.

The authors discuss their reasons for not including some of these additional properties in the prototype for lie. They distinguish between prototypical and typical properties of a concept. Lies, in their view, are prototypically statements that the speaker believes to be false, intends the hearer to believe, and are, in fact, false. Lies are also typically reprehensible, but not prototypically so. They conclude (Coleman and Kay, 1981:38):

Although this is not the only plausible way to do so, we summarize our observations by saying that reprehensibleness, although characteristic of typical acts of lying, is not a prototypical property of such acts, and as such does not play a role in the semantic prototype (or in the meaning) of lie.

This distinction between prototypical and typical properties of a concept is controversial. We do not take a side in this controversy; we discuss the issue here because, regardless of whether reprehensibility is a prototypical or typical property of lying, it may matter for the leakage hypothesis. Only acts that are reprehensible or otherwise morally unacceptable to a given individual would be expected to lead to those states that generate nonverbal leakage. To gain an understanding of how different cultural groups construe various types of lying and deception, the Coleman and Kay approach should be extended by adding stories that also vary on such properties as perceived social acceptability, potential harmfulness, and the like.

A Linguistic Approach

Sweetser (1987) uses the data from Coleman and Kay's investigation to provide an alternative interpretation. Like Hopper and Bell, Sweetser wants to describe the folk theory or conception of lying; unlike Hopper and Bell, she uses a linguistic rather than a psychological method. Sweetser notes the view of concepts as fuzzy sets rather than classical equivalence classes, but she argues for a different account.

She suggests that within folk psychology, the classical view may actually hold. She claims that the folk definition of lying holds for a prototypical, simplified model of the world. This prototypical world contains maxims such as: (1) be helpful; (2) correct information is helpful; (3) speakers only talk about information they have good reasons to know is correct; (4) listeners can correctly assume that speakers know what they are talking about. In this prototypical context, if the speaker's statement is false, then it is clearly a lie.

When subjects are not completely sure whether a speaker is lying, Sweetser argues, this is not because the specific act differs from the prototypical lie but because the act occurs in a context that differs from the prototypical one. A joke is a false statement deliberately uttered by the speaker. It is not clearly a lie, according to Sweetser, because the context in which the joke is made differs from the prototypical one. In the prototypical context for defining a lie, conveying true information is paramount. But in the context of a joke, playing is paramount, and conveying true information is irrelevant. Subjects are unsure about whether a joke is a lie because they are not sure that a lie can occur in a context in which the truth is irrelevant.

Sweetser's analysis of lying highlights the need to keep context in mind when devising a taxonomy of deception. Indeed, we believe that an adequate taxonomy of deception will include a taxonomy of the contexts in which each kind of deceptive act can occur.

Sweetser's analysis points to a further issue that may be important for a good taxonomy. She states that a speaker feels less immoral if he or she manages to deceive the target without directly lying. She suggests that deceivers feel less guilt about deceiving by implication than about deceiving by an explicitly false claim. (In contrast, she also suggests that a victim feels more resentment at having been deceived by implication than by a direct lie.) If Sweetser is correct, then one could hypothesize that a deceiver is less likely to leak deceptive signals when engaging in indirect deception than in direct deception.

Evidence bearing on this hypothesis comes from the Druckman et al. (1982) experiments reviewed above. These investigators found both similarities and differences in displayed nonverbal behaviors between subjects in an evasion (indirect deception) condition and those in a direct deception condition. Discriminant-analysis results showed that, in overall displays, the evaders were more similar to deceivers than to honest subjects. However, the evaders could be distinguished from deceivers in terms of particular nonverbal behaviors displayed during certain time periods and measured as deviations from baseline data: for example, deceivers made more speech errors (calculated as a deviation from a baseline period) than evaders. These findings suggest that evaders are not less likely to leak deceptive signals; rather, indirect deceivers (evaders) and direct deceivers may leak different cues.

The cross-cultural question, whether indicators of deception are universal, is intriguing. Several interesting suggestions are made in the anthropological, linguistic, and related literatures. A first reading of these literatures gives a pessimistic answer to the question of whether universal indicators of deception can be found. Some cultures seem to have notions of what constitutes lying and deception that differ markedly from American folk conceptions. Even more disquieting is the claim that in some cultures lying and deceiving, in several contexts, are not only socially acceptable but actually considered exemplary behavior when the fabrications succeed.

These first impressions of cross-cultural differences are disheartening because of the assumption that a deceiver will leak his or her intentions only when knowingly deceiving and violating a social taboo. Thus, one faces the task of finding out what is considered a socially unacceptable fabrication within various cultural groups. Once such contexts have been discovered, interrogations could be arranged so as to put informants in a situation in which they would be violating a cultural taboo if they lied when presenting information to interrogators. Our reading of the literature indicates that if a socially unacceptable deception for a given informant has been identified, then circumstances can be created that will lead to leakage if the informant is willfully lying.

Socialization

Our focus has been on the folk psychology of deception because it seems to offer the best way to achieve a general theory of deception. The leakage hypothesis assumes that a given individual will display potentially detectable signs when he or she is in a certain psychological state. As we have noted, a key issue is the extent to which these diagnostic cues are universal, that is, the extent to which they show the same pattern across cultures and situations. The evidence to date suggests that there may be both universal and culturally specific aspects to leakage displays.

Presumably, the underlying psychological state that gives rise to leakage corresponds to what is ordinarily labeled guilt, shame, humiliation, disgrace, or dishonor—terms that refer to how people feel when they violate a social taboo. Everyone is raised within family and cultural settings that teach which acts are socially unacceptable. This socialization process is considered to impart a relatively permanent tendency to experience guilt or a similar state whenever a person commits, or even considers committing, social transgressions. Such tendencies may persevere even when an individual, as an adult, consciously rejects many of the values within which he or she was raised.

One assumption, then, is that in all cultures people will react with a guilty state when they believe they are violating a cultural norm. Knowing the folk psychology of various cultures becomes important if one wants to know what constitutes a cultural taboo for a given individual. That is why the techniques used by Hopper and Bell and by Coleman and Kay have been discussed in some detail. By refining and adapting their methods, researchers can gather information on how individuals from various cultures and subcultures conceive of deception and lying. This information, in turn, might help determine what sorts of stories or actions constitute socially unacceptable behaviors. Such findings could then be used to create appropriate stimuli that may elicit leakage if the individual is, in fact, trying to deceive.

This model assumes that people have been socialized. What about individuals who have not been socialized? In the past, psychologists and psychiatrists diagnosed some individuals as psychopaths or sociopaths. Whether such diagnostic categories are still useful or appropriate is debatable; the current tendency is to use the term “antisocial personality.” Folklore and clinical observation suggest that antisocial people are able to lie and deceive successfully because they have undeveloped social consciences and no feelings of guilt. A few polygraph experiments have been carried out with such persons; all of them are controversial, and the results are contradictory.2 Whether antisocial people turn out to be better or worse than normal people at hiding and detecting deception, the practical consequences are not clear. It is doubtful, for example, that an intelligence agent would want to trust a person so diagnosed, even if such a person could successfully deceive the enemy.

INTERACTIVE SETTINGS

The discussion in this chapter (and in Chapter 9) deals with the simplest situation in which deception occurs, a face-to-face confrontation between two individuals, in part because this situation has been the context of most of the research. Another reason is that such a situation provides a starting point for considering extensions to more complicated and realistic interactions.

The typical dyadic situation is one in which the two individuals interact and each adapts his or her behavior in light of what the other person says and does. In contrast, all the research on the leakage hypothesis has been conducted in a relatively static, noninteractive mode. Typically, the detector makes judgments in response to a videotaped presentation. The detector cannot continue and ask further questions, nor can the deceiver adjust his or her behavior on the basis of feedback from the detector. Yet such give-and-take is precisely the context in which ordinary dyadic communication occurs.

A detector might improve on the dismal performances reported so far if he or she were allowed to interact with the sender. However, the sender might do even better at hiding deception in an interactive mode, because the deceiver can use feedback from the victim to adjust both the content and the mode of presentation so as to be more acceptable to the victim.

The Expression Game

Goffman's (1969) concept of an “expression game” depicts the dynamics of interaction between a detector and a sender. Accurate reading of expressions is made difficult by the paradox that “the best evidence for (the detector) is also the best evidence for the sender to tamper with” (Goffman, 1969:63). The detector's challenge is to determine the significance of a cue when he or she knows that the sender is managing his or her expressions. The detector must anticipate what the sender expects him or her to look for and then focus attention on other cues. The sender is challenged by the need to fool the detector while knowing that the detector is assessing his or her moves. The sender must anticipate the cues likely to be used by the detector and then control them. As Goffman (1969:58) notes: “uncovering moves must eventually be countered by counter-uncovering moves.” Both the detector and the sender are vulnerable. The objective for each is to minimize his or her vulnerability under difficult circumstances. This assessment dilemma renders the expression game a highly sophisticated art form.

The dynamics of the expression game pose an analytical problem. Both senders (encoders) and receivers (decoders) determine the outcome of the communication process, so the problem is one of disentangling the effects of senders from those of receivers. Accurate detection may be the result of encoding skill, decoding skill, or both. Consequently, an analyst cannot tell whether detection is due to the sender's style of presentation or to astute observation. One solution is to control part of the interaction, either the sender's enactments or the cues available to the observer, as is done in most laboratory experiments. By so doing, however, one may lose the essential features of an interactive situation. This problem illustrates the difficulties in making the transition between laboratory and field settings.

The World of Intelligence Agencies

Theories of deception, such as those outlined in Epstein (1989), often assume that deception works best when it can be tailored to the expectations and desires of the victim. Intelligence agencies attempt to do this tailoring by using feedback about which parts of the disinformation are being accepted and which parts are being rejected by the victim. One source of such feedback is information gained in face-to-face communication between informant and interrogator. In the world of spying, however, the most effective form of feedback comes from an inside person (or mole) who has penetrated the target agency. The deception loop includes several layers of an organization. The disinformation (lying) from a presumed informer makes its way from the controlling agent up through the various layers of the bureaucracy. The inside person sees how this information is being accepted and interpreted and reports back to the deceiving agency. That agency, in turn, adjusts the disinformation being provided by the informant to fit better with the expectations and desires of its target.

As this example illustrates, deception can and often does involve several levels of organizational complexity. A complete understanding of how deception operates in most real-world situations often requires consideration of several levels of analysis, such as the international scene, the relations between states, governmental structures, internal special interest groups, and the like (Handel, 1976; Mauer et al., 1985). Much of this complexity concerns whether and how information is accepted and used. This, in turn, bears on the problem of detecting deception: even when cues to deception should be obvious to an interrogator, the disinformation being provided may fit so well with the preconceptions and desires of superiors that the interrogator subconsciously ignores all the signs to the contrary.

As many have pointed out, an interrogator (case officer) who controls an informant has much of his or her esteem and pride invested in this informant. A symbiotic relationship exists between a case officer and an informant. The informant requires the trust of the case officer so that the information being provided will be accepted. The case officer needs to believe that the informant is trustworthy because his or her reputation depends on controlling this important source of information. In fact, even when an informant is a plant, he or she will make sure to provide enough true information, along with the disinformation, to ensure that the case officer is rewarded and promoted. In this way the case officer becomes even more dependent on the trustworthiness of the informant.

Once a case officer and his or her superiors have decided that an informant is trustworthy, the dynamics of the situation are tilted toward maintaining the belief in the reliability of the informant. Careers can be made or broken depending on whether the agency ultimately decides that an informant was a true defector or a plant. A variety of institutional and psychological factors come into play to maintain belief in the informant's trustworthiness, even in the face of strong contrary information that emerges later. Striking cases of such institutional self-deception have been documented by Epstein (1989), Handel (1976), Watzlawick (1976), and Whaley (1973).

Such institutional self-deception typically involves accepting or rejecting the content of information. At the level of face-to-face communication, we have been focusing on nonverbal cues rather than on the content of what is being communicated. The two bases for judging the credibility of information obviously interact. When the content of the information is consistent with the beliefs and desires of the receiver, the tendency to accept the information as accurate is strong. As noted above, the relationship between suspicion and detection accuracy (how much a tendency to believe or disbelieve can override the ability to pick up deception cues) is an important question for research.

CONCLUSIONS

From the discussion in this chapter we draw four conclusions. The first conclusion is based on the assumption that leaked cues to deception occur when an individual's psychological state reflects the experience of guilt. However, the situations that produce guilt may vary with an individual's cultural background and experience. Therefore, detection of deception would be improved if one could anticipate the sorts of settings that constitute a social transgression, or produce a guilty state, for particular individuals.

Studies of the folk psychology of deception and of the prototypical structure of lying are examples of methods for assessing perceptions of socially unacceptable deceptions within subcultural groups. With suitable refinements, those methods can be used to discover what stories and deceptive acts might induce leakage for particular individuals.

Once discovered, the stories could be varied systematically to determine whether leakage occurs (or is enhanced) when an individual deceives under conditions that violate his or her cultural norms. The analysis would compare the reactions of various cultural groups to the same set of stories, with different stories hypothesized to produce nonverbal leakage for different groups.

Our second conclusion, which concerns cultural differences, has practical implications. An interrogator can arrange a situation that will violate a cultural taboo if deception occurs. One possible way of doing this is to use an interrogator of the same cultural background as the informant. If the folk psychology of the informant holds that deceiving is immoral only when the victim is a member of one's own culture, then this setting should produce leakage clues if the informant is, in fact, lying.

The third conclusion concerns extending and modifying the laboratory research paradigm as a technique for studying deception clues. In order to examine complex interactions between individuals operating in organizational contexts, it would be necessary to supplement classical laboratory experiments with modern quasi-experimental methods, careful surveys, and detailed case histories. Moving between experimental and field studies also entails the development of statistical procedures that enable an investigator to make the comparisons of interest.

The fourth conclusion is a recommendation for the use of videotapes for research and training. Ongoing face-to-face interactions pose a problem for detecting subtle deception clues. Videotaped exchanges between interrogators and informants can be replayed for details easily overlooked in the course of an ongoing conversation. Independent observers can analyze the interactions, providing judgments that may be free from the biases of the participants. Moreover, taped discussions can provide baseline data on cues emitted from a number of communication channels. Such information can serve as a basis for evaluating deviations from past behavior. The tapes can also be used to train individuals to code nonverbal behavior for leakage clues.

NOTES

1. Coleman and Kay obtained confidence ratings along with the judgments of whether a statement was a lie. They were able to use these ratings to construct a more refined rating scale. For the sake of simplicity, only the simple judgments of lying are discussed here.

2. Robin-Ann Cogburn, a doctoral student at the University of Oregon, is currently conducting research with antisocial persons who are in the state prison system. She is using a variation of the classic laboratory paradigm to test the leakage hypothesis with them.

REFERENCES

Chisholm, R.M., and T.D. Feehan 1977 The intent to deceive. The Journal of Philosophy 74:143-159.

Coleman, L., and P. Kay 1981 Prototype semantics: the English verb lie. Language 57(1):26-44.

Daniel, D.C., and K.L. Herbig 1982 Propositions on military deception. In D.C. Daniel and K.L. Herbig, eds., Strategic Military Deception. New York: Pergamon Press.

Druckman, D., R.M. Rozelle, and J.C. Baxter 1982 Nonverbal Communication: Survey, Theory, and Research. Beverly Hills, Calif.: Sage Publications.

Epstein, E.J. 1989 Deception: The Invisible War Between the KGB and the CIA. New York: Simon and Schuster.

Goffman, E. 1969 Strategic Interaction. Philadelphia, Pa.: University of Pennsylvania Press.

Handel, M.I. 1976 Perception, Deception, and Surprise: The Case of the Yom Kippur War. Jerusalem Papers on Peace Problems, No. 19. Jerusalem, Israel: The Hebrew University.

Heuer, R.J. 1982 Cognitive factors in deception and counterdeception. Pp. 31-69 in D.C. Daniel and K.L. Herbig, eds., Strategic Military Deception. New York: Pergamon Press.

Hopper, R., and R.A. Bell 1984 Broadening the deception construct. Quarterly Journal of Speech 70:288-302.

Hyman, R. 1989 The psychology of deception. Annual Review of Psychology 40:133-154.

Mauer, A.C., M.D. Tunstall, and J.M. Eagle, eds. 1985 Intelligence: Policy and Process. Boulder, Colo.: Westview Press.

National Academy of Sciences 1989 On Being a Scientist. Committee on the Conduct of Science. Washington, D.C.: National Academy Press.

Rosch, E. 1975 Human categorization. Pp. 1-72 in N. Warren, ed., Advances in Cross-Cultural Psychology. London: Academic Press.

Sweetser, E.E. 1987 The definition of lie: examination of folk models underlying a semantic prototype. In D. Holland and N. Quinn, eds., Cultural Models in Language and Thought. New York: Cambridge University Press.

Watzlawick, P. 1976 How Real is Real? Confusion—Disinformation—Communication. New York: Random House.

Whaley, B. 1973 Codeword Barbarossa. Cambridge, Mass.: MIT Press.

Whaley, B. 1982 Toward a general theory of deception. The Journal of Strategic Studies 5:178-192.

Wittgenstein, L. 1953 Philosophical Investigations. New York: Macmillan.