A Common Approach and Other Overarching Issues

The committee was asked to comment specifically on scientific and technical approaches that might assist the US Environmental Protection Agency (EPA), the National Marine Fisheries Service (NMFS), and the Fish and Wildlife Service (FWS) in estimating risk to species listed under the Endangered Species Act (ESA) posed by pesticides (chemical stressors) under review by EPA for registration or reregistration as required by the Federal Insecticide, Fungicide, and Rodenticide Act (FIFRA). In this chapter, the committee discusses how the risk-assessment paradigm could serve as a common approach for EPA and the Services (NMFS and FWS) in examining the potential for listed species to be exposed to pesticides and the probability (that is, the risk) that such exposures would result in adverse effects. The risk-assessment paradigm was originally set forth in the report Risk Assessment in the Federal Government: Managing the Process (NRC 1983) and has been used and refined over the last few decades to evaluate both human health and environmental risks. Because this report is focused on risk to listed species in the environment posed by pesticide exposure, the committee focuses on ecological risk assessment (ERA) as described by such comprehensive references as Suter (2007). This chapter also addresses two general issues related to risk assessment: analysis of uncertainty and use of best data available.

To comply with or administer the ESA during the pesticide registration process, EPA and the Services need to determine the probability of adverse effects on listed species or their habitats due to expected pesticide use that is consistent with label requirements. The committee understands that EPA and the Services are responding to different federal regulations and legal requirements and that the ESA places different responsibilities on the action agency (EPA) and the decision agency (NMFS or FWS). However, the committee has concluded that when the determination involves risk posed by chemical stressors, the agencies should use the same ERA paradigm to reach conclusions about adverse effects. Scientific obstacles to reaching agreement between EPA and the Services during consultation have emerged apparently because of the agencies’ differences in implementation of the ERA process, including differences in underlying assumptions, technical approaches, data use, exposure models, and risk-calculation methods. Agreement has also been impeded because of a lack of communication and coordination throughout the process.

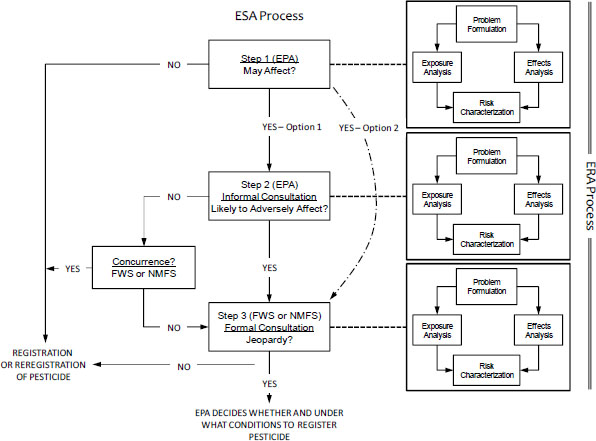

To understand and reconcile the differences between how EPA assesses risk to listed species from pesticide use and how the Services reach jeopardy decisions, it is important first to understand the consultation process under the ESA. The Services’ Endangered Species Consultation Handbook (FWS/NMFS 1998) details the procedural and legal steps that they must follow when engaging in informal or formal consultations regarding listed species. As discussed in Chapter 1, the process involves three steps; the first two steps are to determine whether a proposed action needs formal consultation (Figure 2-1). In Step 1, the action agency (EPA) determines whether the action “may affect” a listed species. If the answer is yes (as it almost always is at the screening level for outdoor-use pesticides because “may affect” is interpreted broadly), EPA has two options: it can enter into formal consultation or proceed to Step 2—an optional step known as informal consultation—in which it must determine whether the action is “likely to adversely affect” a listed species. If the answer is no and NMFS or FWS concurs, the consultation process ends. However, if the answer is yes, Step 3 (formal consultation) is triggered. In formal consultation, NMFS or FWS must determine whether the action is “likely to jeopardize the continued existence of the species.” A jeopardy decision must be informed by science, but the final regulatory determination of whether a risk is sufficient to constitute jeopardy is partly a policy decision. As the action agency, EPA is responsible for Step 1. It is also responsible, with concurrence from the Services, for Step 2; the Services are responsible for Step 3. In 2004, the Services promulgated a rule that would essentially authorize EPA to conduct Step 2 on its own without concurrence from the Services. The court found that this was a violation of the ESA, and it invalidated that portion of the Services’ rule [Washington Toxics Coalition v U.S. 
Fish & Wildlife Serv., 475 F.Supp.2d 1158 (W.D. Wash. 2006)]. In recent years, EPA seems to be bypassing Step 2 and initiating formal consultation whenever it finds that a pesticide “may affect” a listed species. Although this approach is permissible, it might be more efficient in many cases to conduct a Step 2 analysis before deciding to enter formal consultation. Presumably, Step 2 would filter out some actions, and fewer biological opinions would be needed. An agreed-on common approach to ERAs would give the Services more confidence in EPA’s Step 2 analyses.
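The routing among the three steps described above can be summarized in a short sketch. It is illustrative only: the function and enumeration names are hypothetical, and two branches rest on assumptions that the text does not state explicitly (a "no" at Step 1 is taken to end the process, and a Step 2 "no" without Service concurrence is taken to lead to formal consultation).

```python
from enum import Enum


class Outcome(Enum):
    NO_CONSULTATION = "Step 1: no effect; process ends (assumed)"
    ENDS_AFTER_STEP_2 = "Step 2: not likely to adversely affect; Service concurs"
    FORMAL_CONSULTATION = "Step 3: formal consultation (jeopardy determination)"


def consultation_path(may_affect, likely_to_adversely_affect=None,
                      service_concurs=False):
    """Route a proposed action through Steps 1 and 2.

    likely_to_adversely_affect=None means that EPA skipped the optional
    Step 2 and entered formal consultation directly.
    """
    if not may_affect:
        return Outcome.NO_CONSULTATION
    if likely_to_adversely_affect is None:
        return Outcome.FORMAL_CONSULTATION
    if not likely_to_adversely_affect and service_concurs:
        return Outcome.ENDS_AFTER_STEP_2
    # "Yes" at Step 2, or (assumed) "no" without concurrence.
    return Outcome.FORMAL_CONSULTATION
```

Under this sketch, a pesticide that "may affect" a listed species reaches Step 3 unless a Step 2 finding of "not likely to adversely affect" receives concurrence, which is the filtering role that the paragraph above attributes to Step 2.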

FIGURE 2-1 Relationship between the Endangered Species Act (ESA) Section 7 decision process and the ecological risk assessment (ERA) process for a chemical stressor. Each step answers the question that appears in the box.

As shown in Figure 2-1 and summarized in Table 2-1, the committee is suggesting that each step in an ESA consultation process for a chemical stressor be coordinated with an ERA process. Although the complexity of each ERA would depend on the step, each would involve the same four basic elements—problem formulation, exposure analysis, effects (or exposure-response) analysis, and risk characterization—that make up a risk assessment of a chemical stressor, such as a pesticide. The four basic elements and their relationships to one another trace their origin to the seminal Risk Assessment in the Federal Government: Managing the Process (NRC 1983; commonly referred to as the Red Book) and, more recently, to Science and Decisions: Advancing Risk Assessment (NRC 2009; commonly called the Silver Book). After 30 years of use and refinement, this risk-assessment paradigm has become scientifically credible, transparent, and consistent; can be reliably anticipated by all parties involved in decisions regarding pesticide use; and clearly articulates where scientific judgment is required and the bounds within which such judgment can be made. That process is used for human-health and ecological risk assessments and is used broadly throughout the federal government (for example, by the Food and Drug Administration). The committee notes that the Services’ Consultation Handbook is silent regarding technical approaches to assessing risks to listed species posed by chemical stressors, such as pesticides. Consequently, the committee has concluded that the risk-assessment paradigm reflected in the ERA process is singularly appropriate for evaluating risks to ecological receptors, such as listed species, posed by chemical stressors, such as pesticides.

TABLE 2-1 Steps in the ESA Process as Related to Elements in the ERA Process for Pesticides<sup>a</sup>

| Step in ESA Process [Responsible Agency] | ERA Element: Exposure Analysis (Chapter 3) | ERA Element: Effect (Exposure-Response) Analysis (Chapter 4) | ERA Element: Risk Characterization (Chapter 5) |
| --- | --- | --- | --- |
| 1 [EPA] Determine whether use of a pesticide “may affect” any listed species | Distribution of listed species in space and time | Distribution of the pesticide in space and time if used as labeled (toxicity is assumed) | Possibility that species and pesticide distributions would overlap in space and time |
| 2 [EPA] Determine whether use of a pesticide is “likely to adversely affect” any listed species | Modeled exposure concentrations | Exposure-response function for an individual receptor’s survival and reproduction | Probability of adverse effects on survival and reproduction of individual receptors |
| 3 [SERVICES] Determine whether use of a pesticide is likely to cause “jeopardy” | Modeled or measured exposure concentrations | Exposure-response functions for survival and reproduction rates | Probability of adverse effects on population viability over space and time |

<sup>a</sup> See section “Coordination among Agencies” for a discussion of problem formulation, the first element of the ERA process.

Although the ERA process should always include the four elements, the content of each is expected to change as the question shifts from whether a pesticide “may affect” a listed species (Step 1) to whether it is “likely to adversely affect” a listed species (Step 2) to whether the continued existence of the listed species is likely to be jeopardized (Step 3). Consistency of the basic ERA process throughout the three steps (if all are needed) is the first essential point. The second is that each ERA becomes more focused and specific to the chemicals and species of concern as it moves from Step 1 to Step 3. The third point is that the Services should build in Step 3 on what EPA did in Steps 1 and 2; they should not start over with a separate and different analysis in Step 3.

Thus, the committee envisions the following process. In Step 1, EPA would consider whether any listed species might be harmed by the pesticide simply by asking whether areas proposed for pesticide application and known (or suspected) species ranges or habitats coexist. Not all listed species exist everywhere, nor are all pesticides used everywhere, so that simple formulation of the problem would help to narrow the scope of later assessments. In Step 2, EPA would address the question of whether the use of a pesticide in the specific context of its proposed patterns of use is “likely to adversely affect” one or more listed species or their critical habitats. EPA would approach that question from a chemocentric viewpoint and estimate potential environmental concentrations and possible toxic effects. Essentially, EPA would evaluate whether the pesticide would be used in a manner that would result in environmental concentrations that have the potential to affect a listed species, other organisms in its habitat, or its critical habitat adversely. The assessment would be relatively generic (that is, not site-specific), and the effects analysis would focus on individuals of the listed species. If the predicted concentrations could adversely affect individuals in a population of a listed species, EPA would consult with the appropriate Service, which would then be responsible for a jeopardy determination. In Step 3, NMFS or FWS ideally would focus more specifically on potentially affected listed species in an ecological context and address the question of whether locally applicable predicted or measured exposures result in effects on the listed species or on other species in their habitats in a manner that would change the ability of a population to persist or to recover or that would change the time to extinction.
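The Step 1 question of whether pesticide use and species occurrence coincide can be pictured as a set intersection. The following is a minimal sketch, assuming that both the proposed use pattern and each species range have already been reduced to sets of (area, season) units; the function name, the unit labels, and the species names are hypothetical and are not drawn from any agency dataset.

```python
def step1_overlap(use_pattern, species_ranges):
    """Return, for each species, the (area, season) units shared with pesticide use.

    Species with no shared unit are omitted, which narrows the scope of
    later, more detailed assessments.
    """
    overlaps = {}
    for species, occupied in species_ranges.items():
        shared = occupied & use_pattern  # co-occurrence in space and time
        if shared:
            overlaps[species] = sorted(shared)
    return overlaps


# Hypothetical inputs for illustration only.
use_pattern = {("area_1", "summer"), ("area_2", "summer")}
species_ranges = {
    "species_A": {("area_2", "summer"), ("area_3", "summer")},
    "species_B": {("area_1", "winter")},
}
result = step1_overlap(use_pattern, species_ranges)
```

Here only `species_A` would be carried forward, because `species_B` occupies a treated area but not in the season of application. No toxicity information enters at this step, consistent with the assumption in Table 2-1 that toxicity is assumed.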

The possible differences in risk assessments between Steps 2 and 3 in the ESA process can be seen by considering that the imaginary pesticide X (designated PX for this discussion) will be applied to wheat in Illinois in summer. In this hypothetical example, EPA decides that because PX is used in a region where there are listed sturgeon species, some PX could get into streams and possibly affect the fish. So, the agency progresses to Step 2. Here, problem formulation is used to narrow the assessment’s scope by asking two questions: Are there any organisms for which we know that the pesticide is nontoxic (for example, exposures at greater than 5,000 ppm cause no effect)? Are there any environmental media (water, soil, and air) in which the pesticide will not reside? Following problem formulation, EPA runs the standard farm-pond model to determine an initial estimate of the probable concentration of PX in the water, recognizing that the farm-pond model might not accurately represent conditions that apply to flowing streams and rivers where sturgeon actually live. That concentration is compared with the toxicity threshold that is based on full life-cycle tests in standard laboratory species, and EPA also considers that sturgeon are generally more sensitive to PX-like chemicals for the assessment end point than are standard test species. EPA concludes that pesticide concentrations in streams could exceed toxicity thresholds at the proposed application rates and notes further that PX-like chemicals can cause sublethal effects, including behavioral changes and darker color in adults. PX also kills aquatic invertebrates (the prey base of sturgeon) at concentrations lower than ones that affect sturgeon. On the basis of those results, EPA reaches a conclusion of “likely to adversely affect” and institutes formal consultation with the Services as required.

FWS builds on EPA’s analysis in Step 3 and uses site-specific data on Illinois soils to calculate potential runoff to the slow-moving rivers and streams favored by sturgeon during summer. Because of the high clay content of the soils, PX binds to the root zone, and little is expected to move through soil into the streams. However, surface runoff—particularly during heavy rains, when a lot of soil is lost from fields—can result in water concentrations above effect concentrations. FWS reviews the information on behavioral effects and concludes that the studies are not reliable indicators of field effects. It also concludes that a darker color induced by PX exposure would increase the probability of survival of the fish because they would be more mud-colored and therefore better camouflaged. Because of concern about potential effects of PX on sturgeon in areas of the state that have a potential for substantial soil loss during summer rain events, FWS runs a spatially explicit population model to determine whether there could be a reduction in reproductive output that would affect the recovery of the population; it determines that changes in the growth rate of the population are unlikely. Furthermore, FWS concludes that the effects on aquatic invertebrates occur during times of the year when young sturgeon (the insectivorous life stage) are not present. Therefore, FWS reaches a conclusion of “no jeopardy.”

In that hypothetical example, EPA and FWS use the same exposure models but different input parameters (generic farm-pond analyses vs site-specific soil runoff into shallow streams), assume different environmental transport pathways (surface runoff vs below ground), incorporate effects thresholds from the same studies, and review the same studies on sublethal effects. EPA uses reasonable worst-case assumptions of effects of PX on individual fish to reach a “likely to adversely affect” conclusion, whereas FWS uses site-specific data, incorporates spatial variability, and bases its decision on changes in population growth rates to reach a finding of “no jeopardy.”

The committee concludes that using a common approach would eliminate many problems in assessing risks to listed species that are being encountered by EPA and the Services. As noted by Suter (2007, p. 37), the “advantages of using a single standard framework include familiarity and consistency, which reduce confusion and allow comparison and quality assurance of assessments.” The ERA process that has evolved over the decades is best suited to evaluating the risk to listed species and their critical habitats posed by pesticides, and, as noted by Suter (2007, p. 37), the “EPA framework is a preferred default for ecological risk assessment in the United States.” Although the committee does not expect the basic risk-assessment framework to change, it recognizes that risk-assessment approaches and methods for determining, for example, what is hazardous, how much is hazardous, what end points constitute an adverse effect, and when, where, and how much exposure is occurring will continue to evolve.

A letter from the Services to EPA in 2004 (Williams and Hogarth 2004) detailed previous efforts to reconcile the differences between EPA’s and the Services’ approaches to pesticide evaluation. That letter was followed, in the same year, by an alternative consultation agreement between EPA and the Services. Although all six tasks assigned to the committee were discussed in that letter and the later agreement, the extent to which the agreement was implemented remains unclear. The committee emphasizes that given the changing scope of the ERA process from Steps 1-3, EPA and the Services need to coordinate to ensure that their own technical needs are met.

First, before a risk assessment is even initiated, the agencies need to connect the decision that must be made with the risk assessment that will inform it. That stage, often referred to as planning and scoping (EPA 1998, 2004), involves a team of decision-makers, stakeholders, and risk assessors who identify the problem to be assessed, develop a common understanding of why the risk assessment is being conducted, and establish the management goals of the assessment. Decision-makers can identify information that they need to make decisions, and risk assessors can ensure that the science meets the needs of decision-makers and stakeholders. Together, all stakeholders should be able to evaluate whether the assessment is likely to address the identified problems with the desired confidence (EPA 2004).

Second, problem formulation, conducted as part of the ERA process (see Figure 2-1), could provide an effective means for EPA and the Services to coordinate and reach agreement on many of the key technical issues involved in assessing risk posed to listed species by pesticides. Problem formulation frames the risk-management objectives sufficiently for the risk assessor to identify all potential inputs into the risk-assessment model. Guided by the needs of the decision-makers and using the best data available, the risk assessor develops a conceptual model of stressor sources, exposure pathways, and receptors; poses risk questions or hypotheses; and identifies the methods and analyses that will be used to address the questions and hypotheses. If problem formulation is successful, a comprehensive, scientifically credible conceptual model will be developed, there will be agreement on the risk-assessment approach, and the output of the assessment will have sufficient specificity for decision-making. The analysis phase of the risk assessment should not begin until the decision-makers are satisfied that the risk assessor understands the questions that need to be addressed and understands how much confidence in the final risk estimate is needed. Problem formulation is also an excellent time to discuss how the risk estimate will be communicated at the conclusion of the assessment.

The committee views coordination among EPA and the Services as a collegial exchange of technical and scientific information for the purpose of producing a more complete and representative assessment of risk, including the types and depths of analyses to be conducted at each step in the process. Such coordination would allow EPA’s expertise in pesticides to be effectively combined with the Services’ expertise in life histories of listed species and in abiotic and biotic stressors of the species. Coordination discussions would include many of the issues discussed by the committee in the present report, such as datasets to use to delineate species’ habitats, the need for additional fate data, and new approaches for exposure and effects analysis. The agencies can use Steps 1-3 as a framework for such discussions but need not be constrained by them. It might be that technical working groups would form around various aspects of the assessment approach—such as fate and transport modeling, estimating species distributions and habitats, data-sharing, and uncertainty analysis—to discuss technical details and that others would discuss policy-based issues, such as which evolutionarily significant units to include in the analysis. The committee recommends that such collaboration meetings be formal, structured workshops that have stated goals and objectives, be led by professional facilitators, and have formal agendas agreed to by all parties. That approach would enhance productivity and allow expectations to be met. The periodicity of such discussions would necessarily be at the discretion of the agencies, but the committee recommends a frequency of at least once every 2 years to capture updates in risk-assessment and population-biology methods, newly listed species, new pesticide classes, and changing agricultural practices.

The committee concludes further that coordination during problem formulation regarding the ESA and ERA processes would be enhanced if a common outline, such as the one shown in Box 2-1, were adopted. The details of the outline would be adapted according to the step being conducted. However, the outline should incorporate specific elements of concern and interest to EPA and the Services. For example, examination of earlier EPA assessments has revealed a need for EPA to include and consider all available information about the life history of a listed species early in the process, ideally during planning and scoping (Item 1.1.4 in Box 2-1). Although assessment end points might ultimately involve only common surrogate or test species, the inclusion of natural life-history information on the listed species and critical habitat would at least enable a qualitative assessment of the similarities and differences between the listed species and the identified surrogates.

1. PROBLEM FORMULATION

1.1. Background

1.1.1. Defining the Regulatory Action

1.1.2. Nature of the Pesticide

1.1.3. Pesticide-Use Characterization

1.1.4. Natural History of Listed Species

1.1.5. Designated Critical Habitats

1.2. Action Area (based on use and natural history)

1.3. Assessment End Points

1.3.1. Individuals

1.3.2. Populations

1.3.3. Critical Habitats

1.4. Conceptual Model

1.4.1. Risk Questions or Hypotheses

1.4.2. Graphical Representation

1.5. Analysis Plan

1.5.1. Measures (exposure, effect, and characteristics)

1.5.2. Approach to Risk Estimation

2. EXPOSURE ANALYSIS

2.1. Label Application Rates and Intervals

2.2. Habitats of Listed Species

2.3. Exposure (Transport and Fate) Modeling

2.3.1. Aquatic Organisms

2.3.2. Terrestrial Organisms

2.4. Exposure to Mixtures

2.5. Monitoring Data

2.6. Exposure Estimate (with uncertainty)

3. EFFECTS ANALYSIS

3.1. Incident Database Review

3.2. Individuals

3.2.1. Direct Effects (acute, sublethal, and chronic)

3.2.2. Indirect Effects

3.3. Effects on Critical Habitats

3.4. Mixture Effects

3.5. Exposure-Response Estimate (with uncertainty)

4. RISK CHARACTERIZATION

4.1. Risk Estimate

4.1.1. Individuals

4.1.2. Populations

4.1.3. Critical Habitat

4.2. Field and Laboratory Comparisons

4.3. Risk Description (integration and synthesis)

The committee was asked to consider the interpretation of uncertainty and specifically the selection and use of uncertainty factors to account for lack of data. However, before the committee can answer the question about uncertainty factors, it must consider how uncertainty has been treated in past assessments. The committee addresses the question about uncertainty factors in Chapters 4 and 5.

In the context of this report, risk is defined as the probability of adverse effects on listed species or their critical habitats due to anticipated pesticide use that is consistent with label requirements. Ultimately, the adverse effect is jeopardy to the continued existence of a listed species defined in terms of demography, habitat, or other resources. The risk is estimated on the basis of predicted future pesticide exposure concentrations and the type and magnitude of effects (as determined by exposure-response functions) that the pesticide could have on the species. The risk estimate reflects uncertainty due to natural variability, lack of knowledge, and measurement and model errors in the host of underlying assumptions and variables used to predict exposure and effects. Natural variability or variation is true heterogeneity that might be better defined (but never eliminated) through increased sampling. Lack of knowledge (ignorance) is due to an absence of data or incomplete knowledge of important variables or their relationships; it can be reduced through additional data collection or further research. As indicated in Box 2-1, uncertainty will need to be characterized in the exposure estimate (Item 2.6) and the exposure-response estimate (Item 3.5), then propagated, and finally integrated (Item 4.3) to provide the risk as a probability with an estimate of uncertainty.

The committee has concluded that achieving such integration will require that the ERA process in Steps 2 and 3 adopt a probabilistic approach that allows uncertainty in exposure and effect to be explicitly recognized and then combined to yield a risk as a probability with associated uncertainty (see Chapter 5). The present practice of relegating the consideration of uncertainty to a separate, often qualitative, narrative at the end of an assessment is of marginal value because doing so has little notable effect on risk estimation itself or on a decision-maker’s ability to understand the confidence that should be placed in a risk estimate. Although the committee is aware of the administrative and other nonscientific hurdles that will need to be overcome to implement such an approach, it nonetheless has concluded that moving the uncertainty analysis from a narrative addendum to an integral part of the assessment is both possible and necessary to provide realistic, objective estimates of risk. Because a core dataset is required for all pesticide registration decisions, there should be sufficient information to conduct a quantitative assessment, which can include a quantification of the associated uncertainty.
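One way to picture how uncertainty in exposure and effect can be combined into a risk expressed as a probability with associated uncertainty is a two-level Monte Carlo simulation. The sketch below is illustrative only and is not a method prescribed by the committee or used by the agencies: the log-logistic exposure-response form, the lognormal distributions, and every parameter value (median concentration of 2, median EC50 of 10, slope of 2) are assumptions chosen for demonstration.

```python
import math
import random
import statistics


def effect_probability(conc, ec50, slope):
    """Log-logistic exposure-response: probability of an effect at a concentration."""
    if conc <= 0:
        return 0.0
    return 1.0 / (1.0 + (ec50 / conc) ** slope)


def risk_with_uncertainty(n_outer=200, n_inner=500, seed=1):
    """Return (median, 5th percentile, 95th percentile) of the estimated risk.

    Outer loop: lack of knowledge about the effect threshold (EC50).
    Inner loop: natural variability in the exposure concentration.
    """
    rng = random.Random(seed)
    risks = []
    for _ in range(n_outer):
        ec50 = rng.lognormvariate(math.log(10.0), 0.4)  # hypothetical values
        total = 0.0
        for _ in range(n_inner):
            conc = rng.lognormvariate(math.log(2.0), 0.8)  # hypothetical values
            total += effect_probability(conc, ec50, slope=2.0)
        risks.append(total / n_inner)  # risk given this EC50 draw
    risks.sort()
    lower = risks[int(0.05 * (n_outer - 1))]
    upper = risks[int(0.95 * (n_outer - 1))]
    return statistics.median(risks), lower, upper


median_risk, lower_risk, upper_risk = risk_with_uncertainty()
```

The result is a risk estimate accompanied by an interval, so that the uncertainty is carried in the number itself and not in a separate narrative. Separating the two loops is a design choice that keeps variability that cannot be reduced apart from lack of knowledge that further data could reduce.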

The committee recognizes that the quantitative propagation of uncertainty through ecological risk assessments is not a new concept, particularly in the context of pesticide assessments. The topic was addressed by EPA’s Scientific Advisory Panel for FIFRA in 1996 (Bailey et al. 1997) and was explicitly addressed in a workshop held in 2009 (Warren-Hicks and Hart 2010). EPA has since developed and begun to implement the Terrestrial Investigation Model (TIM; Odenkirchen 2003); TIM version 2.0 includes Monte Carlo simulations for calculating pesticide concentrations in a simulated farm pond and estimating activity patterns of potentially exposed wildlife. The committee recognizes that the use of frequentist statistics and Monte Carlo simulations, although widespread, is only one approach to quantifying and propagating uncertainty through an ERA. Bayesian approaches to environmental assessments, some of which also use Monte Carlo simulations, have become more widely understood and more feasible over the last few decades as computational power and capability have improved (Ellison 1996; McCarthy 2007; Link and Barker 2010). For example, Borsuk and Lee (2009) describe the application of Bayesian approaches to increase environmental realism in population modeling, and Reckhow (1999) applies similar approaches to water-quality predictions. Their applicability to analyses of data on chemicals and to other environmental risk assessments (Clark 2005), including those for endangered species, has been recognized in the federal government (FDA 2010; Conn and Silber 2013), although they have not yet been widely adopted for chemical risk assessment. Bayesian methods reliably estimate modeled variables, and Bayesian models can readily propagate uncertainties in data (such as measurement errors) and uncertainties in model structure (such as selection of covariates and relationships among them). The models can incorporate data from multiple sources, expert knowledge, and empirical evidence about relationships among variables and about the shape of the data distributions; however, these are not required to use or run the models.
Bayesian approaches are most useful during Step 3 of ESA pesticide analyses when an in-depth analysis is needed, such as when alternative pesticide-use scenarios or proposed mitigation actions might have large spatial or economic consequences.

EPA has noted that “the explicit treatment of uncertainty during problem formulation is particularly important because it will have repercussions throughout the remainder of the assessment” (EPA 1998, p. 26). For ESA Section 7 consultations on pesticide risk to listed species, it is likely that the amount of data available for producing a risk estimate will vary by species and by chemical. The risk assessor will therefore need to ascertain during problem formulation how much confidence in the risk estimate the decision-maker requires to support a decision, given the decision context. Does the decision-maker need a risk estimate with low uncertainty or is, for example, ±25% acceptable? Decisions regarding uncertainty need to be balanced with a discussion about availability of time and resources and need to consider the extent to which uncertainties are unavoidable given likely data gaps. A quantitative analysis of expected value of information could be conducted to answer the question of whether the reduction in uncertainty warrants obtaining more information (Yokota and Thompson 2004; Runge et al. 2011; Moore and Runge 2012). However, the committee recognizes that time limitations might preclude such an analysis (Yokota and Thompson 2004). The committee acknowledges the utility of a qualitative assessment and discussion between risk assessors and decision-makers at both Step 2 and Step 3 of the ESA risk-assessment process. A decision-maker will then be adequately informed about the estimated probability of an adverse effect and can make a decision about whether the proposed action is “likely to adversely affect” or can be “reasonably expected” to result in jeopardy. Decisions about the acceptable level of risk and how to manage the risk are policy decisions that are not part of the scientific analysis.
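The idea behind an expected-value-of-information analysis can be shown with a small calculation of the expected value of perfect information. The sketch below is purely illustrative: the two states, the two actions, the prior probabilities, and the loss values are hypothetical, and the cited studies use considerably richer formulations.

```python
def expected_value_of_perfect_information(prior, loss):
    """Compute the expected loss avoided if the true state were known before acting.

    prior: {state: probability}
    loss:  {action: {state: loss incurred}}
    """
    # Best single action chosen under current uncertainty.
    best_now = min(
        sum(prior[s] * loss[a][s] for s in prior) for a in loss
    )
    # Expected loss if the best action could be chosen separately for each state.
    with_info = sum(
        prior[s] * min(loss[a][s] for a in loss) for s in prior
    )
    return best_now - with_info


# Hypothetical decision problem for illustration only.
prior = {"harmful": 0.25, "benign": 0.75}
loss = {
    "restrict_use": {"harmful": 0.0, "benign": 4.0},
    "allow_use": {"harmful": 20.0, "benign": 0.0},
}
evpi = expected_value_of_perfect_information(prior, loss)  # 3.0 with these inputs
```

With these inputs, restricting use has the lower expected loss (3.0 versus 5.0), and perfect knowledge of the true state would remove that loss entirely. Additional data collection would therefore be worth pursuing only if it cost less than 3.0 in the same units, and in practice less than that, because real studies reduce uncertainty only partially.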

The committee recognizes that decision-makers will need to understand how to interpret and use the information on uncertainty in their decision-making. There is a great body of literature on risk management and decision-making under uncertainty that can help to guide and guard against misuses of uncertainty in decision-making (see, for example, Cropper et al. 1992, Morgan and Henrion 1992, EPA 2010, and IOM 2013).

As discussed in Chapter 1, the Services have a mandate to use the “best scientific and commercial data available” in their assessments. There is little guidance on what constitutes “best data available,” and the agencies do not appear to have formal protocols that define “best data available.” However, the following sections describe the agencies’ approaches to data collection and evaluation, and the committee provides some guidance on important data characteristics.

Scope of Data Collection and Selection

EPA (1998) indicated that a search for all available data is conducted at the start of each risk assessment and iteratively throughout the assessment to support and guide each step of the process. EPA’s primary repository for peer-reviewed toxicity studies that are publicly available is ECOTOXicology (ECOTOX; EPA 2012a). The Services and EPA agreed to use ECOTOX as the common source for data on ecotoxic effects of pesticides (EPA 2011).

Data used by EPA in pesticide risk assessments are typically derived from detailed reports of standardized studies required for pesticide registration under FIFRA; studies in peer-reviewed journals or other publications, such as reference books; and government reports and surveys. Repository databases are used if they meet data-quality standards. The Services also include anecdotal or oral information and other unpublished materials from such sources as state natural-resources agencies and natural-heritage programs, tribal governments, other federal agencies, consulting firms, contractors, and persons associated with professional organizations and institutions of higher education (59 Fed. Reg. 34275 [1994]). Accordingly, the scope of data collection by the Services appears broader, although some of the information collected can be brief and insufficient for independent evaluation.

Evaluation of Data Relevance and Quality

Information on pesticides and the ecology of listed species that is used in risk assessments should be both relevant and of high quality. Relevance refers to information that is consistent with its intended use. Accordingly, the information should be from studies of the species and chemicals being assessed, or there should be a strong theoretical basis for extrapolation to the species and chemicals being assessed. The information should be spatially applicable to the locations being considered and be sufficiently recent to be pertinent. For example, information on the environmental transport and fate of the specific pesticide active ingredient under review or of the class to which the pesticide belongs would be relevant to the assessment. Similarly, information on the ecology of the listed species is highly relevant and useful particularly if it has been obtained recently from the area of pesticide use. Conversely, a study that used a population census of the listed species conducted 20 years ago would not be relevant. Information that is not relevant clearly should not be used to assess risk, and the question of relevance is the first question that needs to be addressed in considering whether information should be used for a risk assessment.

The quality of the relevant information should be reviewed before it is used in a risk assessment. A critical question to answer is whether the data conform to best scientific practice. Best practice includes providing sufficient information that characterizes the data (such as who collected them, when and where they were collected, what variables were measured, and how and in what units measurements were taken), clear methods that would allow a third party to replicate the data-collection process or the analyses conducted with the data, and estimates of data accuracy or uncertainty. If sufficient information is not available, data quality is unknown, and the data should be given less prominence in the risk assessment. Accordingly, data of lower quality should not be used to nullify data of higher quality. Ideally, data are objective and unbiased, although failure to meet those requirements might not be a cause for rejection if biases are sufficiently described and clearly identified in the assessment.

EPA has a formal set of data relevance and quality criteria that are applied in selecting information for use in regulatory assessment. The EPA Science Policy Council published a set of five assessment factors for evaluating scientific and technical information on the basis of EPA practices, input from the public, and results from a workshop hosted by the National Academy of Sciences (EPA 2003). The assessment factors are intended to improve data generation, use, and dissemination in EPA and by the data-generating public. The assessment factors are applicability and utility (relevance of the information to its intended use and applicability to the current scenarios of concern), soundness (scientific validity of experimental study, survey, modeling, and data collection and adequate support for conclusions), clarity and completeness (documentation that includes underlying assumptions, study protocol and design, data accessibility, and data analysis), uncertainty and variability (quantitative and qualitative characterization, effect on conclusions, and the identification of parameter values that, if changed, would substantially affect the outcome of the model), and evaluation and review (independent verification, validation, and peer review and consistency with results of similar studies). The EPA Office of Pesticide Programs has additional guidelines for acceptance of scientific literature, as described in the documentation supporting the ECOTOX database (EPA 2012b).

FWS and NMFS do not have agency-specific guidelines on data relevance and quality. However, all federal agencies are expected to comply with the Office of Management and Budget (OMB) guidelines on objectivity, utility, and integrity of disseminated information. OMB (67 Fed. Reg. 8452 [2002]) describes those attributes as follows:

“Objectivity” focuses on the extent to which information is presented in an accurate, clear, complete and unbiased manner; and, as a matter of substance, the extent to which the information is accurate, reliable and unbiased. “Utility” refers to the usefulness of the information to the intended users. “Integrity” refers to security, such as the protection of information from unauthorized access or revision, to ensure the information is not compromised through corruption or falsification.

The Services and EPA (EPA 2002; FWS 2007) have separately published information quality guidelines (IQGs) that follow closely the government-wide OMB guidelines. Similar basic principles for achieving a scientifically credible assessment are prescribed in the IQGs from the agencies; the agencies are committed to ensuring the quality of evaluations and the transparency of information from external sources used in their disseminated assessments and actions (EPA 2003; NMFS 2005). They also recognize that a high level of transparency and scrutiny is needed for influential information that is expected to have a substantial effect on policies and decisions (EPA 2002; NMFS 2004; FWS 2007).

In the biological opinions provided, the committee was able to discern at least one approach that the Services use to evaluate relevance and quality of data. In the ESA consultation for assessing the effects of 12 organophosphates on salmonids (NMFS 2010), NMFS described and used a qualitative set of evaluation criteria. Three criteria were used to judge the relevance of the publicly available toxicity data: whether the studies were conducted on salmonids, whether they measured end points of concern, and whether they evaluated effects of exposure to the specific chemicals or structurally related chemicals. The more criteria were met, the more relevant the studies were deemed. A fourth criterion was related to data quality and had to do with whether relevant studies had substantial flaws in experimental design.
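The qualitative screen described above can be illustrated with a minimal sketch. It assumes, purely for illustration, that each study is summarized by four yes-or-no answers and that relevance is expressed as a simple count of the three relevance criteria met; the record fields, the counting rule, and the ordering rule are hypothetical and are not taken from NMFS (2010), which applied the criteria qualitatively.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Study:
    """Hypothetical summary of one toxicity study (field names are illustrative)."""
    name: str
    tested_on_salmonids: bool
    measured_endpoint_of_concern: bool
    same_or_related_chemical: bool
    substantial_design_flaws: bool


def relevance_score(study: Study) -> int:
    """Count how many of the three relevance criteria the study meets (0 to 3)."""
    return sum([
        study.tested_on_salmonids,
        study.measured_endpoint_of_concern,
        study.same_or_related_chemical,
    ])


def rank_studies(studies):
    """Order studies from most to least relevant.

    Among equally relevant studies, those without substantial design flaws
    are listed first. This ordering rule is an assumption of the sketch.
    """
    return sorted(
        studies,
        key=lambda s: (-relevance_score(s), s.substantial_design_flaws),
    )
```

A count of this kind only orders studies for further review; it does not replace the expert judgment that the qualitative criteria are meant to support.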

Important Data Characteristics

Data relevance and data quality clearly are primary factors in determining whether data constitute “best available data.” Several data characteristics noted in the committee’s charge and described below are related to relevance and quality and can help to determine whether data are useful for assessing the risk to listed species posed by pesticides.

Validity. Data that are used in risk assessment should be accompanied by sufficient information for repeatability, independent scientific review, and additional data analysis when needed (NRC 1995). For example, an additional analysis, such as a dose-response analysis, might be necessary to ensure accurate interpretation of the data. Data sources that lack sufficient details for an adequate scientific evaluation (such as poster presentations, abstracts, anecdotal or personal communications, and data files that contain no information on fundamental data attributes) might provide background knowledge or support an overall weight-of-evidence evaluation but should not be the sole basis for drawing assessment conclusions. Thus, although secondary information can be useful for identifying an original report, it should not be used directly in risk assessment. The original study is necessary for an independent review of accuracy, quality, and relevance. An example from the draft biological opinion on the effects of 2,4-dichlorophenoxyacetic acid (2,4-D) on salmonids illustrates the problems with using secondary sources. That biological opinion cited Brock et al. (2000), which attributed a value for an aquatic-community effect to a report by Boyle (1980), but the effect cited was not found in the primary source.

Availability. Many data used in pesticide risk assessment are taken from unpublished studies that are conducted to support pesticide registrations. Those studies are conducted according to well-defined protocols and prescribed good laboratory practices. The detailed reporting allows EPA scientists to evaluate study quality independently and to conduct data analysis beyond what is possible with studies published in the open literature.¹ EPA’s evaluation is documented in a data evaluation record (DER), which contains information on study methods, results, and discussions. Additional data analysis or modeling is also documented. Recent DERs, in contrast with older ones, can serve as stand-alone reports based on full study reports submitted for pesticide registration. Public availability of DERs is important because the submitted studies are typically protected confidential business information (CBI) and not publicly available or readily accessible. However, other government agencies, such as NMFS and FWS, can review CBI once necessary information controls are in place and therefore provide data-quality assurance for EPA’s reported information on industry studies. In addition, EPA has increased public access to DERs in recent years by making more information available to the general public. The committee encourages EPA to continue to share the studies with the Services, to provide sufficient details in DERs to ensure a reasonable understanding of the studies, and to make DERs readily available to the public.

______________________

¹As noted in Chapter 1, the Services do not have the authority under the ESA to require the generation of data but instead must rely on the best data that are available. Furthermore, the ESA makes it clear that the Services are not to delay action because of a lack of data.

Consistency. Data consistency is an important consideration in drawing scientific inferences. Apparently conflicting results from different studies should be examined with care. Different results from studies that use different species, life stages, exposure regimens, observation methods, experimental conditions, or statistics do not necessarily constitute conflicting evidence, and all might be useful in drawing conclusions. However, statistical outliers should be given particular scrutiny to verify the quality of an underlying study, particularly if they differ from all other data by orders of magnitude.
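One way to identify values that warrant the scrutiny described above is sketched below. The sketch assumes that the values are positive toxicity end points (for example, LC50s in common units) and flags any value whose base-10 logarithm differs from the median logarithm of the remaining values by at least a chosen number of orders of magnitude. The function name, the leave-one-out median rule, and the default threshold of one order of magnitude are assumptions made for illustration, not an agency procedure.

```python
import math
from statistics import median


def flag_for_scrutiny(values, threshold_orders=1.0):
    """Return indices of values that differ from the rest by orders of magnitude.

    Each value is compared, on a log10 scale, with the median of all the
    other values. Values must be positive; math.log10 raises ValueError
    otherwise.
    """
    logs = [math.log10(v) for v in values]
    flagged = []
    for i, log_value in enumerate(logs):
        others = logs[:i] + logs[i + 1:]
        if others and abs(log_value - median(others)) >= threshold_orders:
            flagged.append(i)
    return flagged
```

A flagged value is a prompt to verify the quality of the underlying study, not a reason to discard the result automatically; differences in species, life stage, or exposure regimen might explain it.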

Clarity. The strengths and weaknesses of data and the reason that they were or were not used in a risk assessment should be clearly documented. Expert opinion or judgment is also used in risk assessment and is valuable especially when uncertainty is high because of data gaps. However, it is important that the assumptions or judgments be clearly described. As stated in NRC (1995), a clear presentation of expert knowledge should include the line of reasoning used and should separate facts from speculation. Similarly, adequate rationale should be given throughout the assessment for the assumptions that are made in the absence of data.

Utility. Utility clearly is related to relevance. One specific issue that has arisen regarding utility concerns the usefulness of foreign-language articles. Studies might be excluded by EPA because of a language barrier and lack of funding to obtain an English translation. For example, foreign-language reports, especially ones that are not readily available in the open literature, might be included in ECOTOX but not used in a risk assessment. If foreign-language reports are used in a risk assessment, translated versions will be needed so that the data in the reports can be subjected to the same data quality and relevance evaluation as data from studies published in English.

Peer Review. Regardless of the data criteria, it is not unusual for well-qualified risk assessors to disagree on the quality of data or on their relevance to a specific assessment. Because OMB attaches stricter requirements to discretional peer review of highly influential scientific assessments (Bolten 2004), the committee emphasizes the value of external peer review to enhance the quality, transparency, and credibility of a risk assessment.

CONCLUSIONS AND RECOMMENDATIONS

A Common Approach and Coordination among the Agencies

• Lack of a common approach has created scientific obstacles to reaching agreement between EPA and the Services during consultation.

• The risk-assessment paradigm, as reflected in the ERA process, is a scientifically credible basis of a single, unified approach for evaluating risks to listed species posed by pesticide exposure under FIFRA and the ESA.

• The committee’s recommendation is that the ERA process include the same four elements (problem formulation, exposure analysis, effects analysis, and risk characterization) at each step but that the content of each changes as the question shifts from whether the pesticide “may affect” a listed species (Step 1) to whether it is “likely to adversely affect” a listed species (Step 2) to whether the continued existence of the listed species is jeopardized (Step 3).

• The ERA process would be enhanced if it were accompanied by use of a common outline that incorporates specific elements of concern to EPA and the Services.

• Given the changing scope of the ERA process from Step 1 to Step 3, EPA and the Services should coordinate to ensure that their own technical needs are met.

• Problem formulation, conducted as part of the ERA process, could be an effective way for the agencies to coordinate and reach agreement on many of the key technical issues involved in assessing risks posed by pesticide exposure.

Uncertainty

• Risk assessments and jeopardy decisions require recognizing and analyzing uncertainty and quantitatively propagating it through any assessment so that it is clearly reflected in the eventual risk estimate.

• The agencies should adopt a probabilistic approach that allows uncertainty in exposure and effect to be explicitly recognized and then combined in forming a risk estimate.

• Although administrative and other nonscientific hurdles will need to be overcome to implement such an approach, changing uncertainty analysis from a narrative addendum to an integral part of the assessment is possible and necessary to provide realistic, objective estimates of risk.

• Decisions about acceptable levels of risk and how to manage risk are policy decisions that are not part of the scientific analysis.
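The probabilistic approach recommended above can be illustrated with a minimal Monte Carlo sketch. It assumes, for illustration only, that both the exposure concentration and the effect threshold are lognormally distributed with parameters given on the natural-log scale, and it estimates the probability that exposure exceeds the threshold. The function name, the distributional choice, and all parameter values are hypothetical; an actual assessment would derive the distributions from the exposure and effects analyses.

```python
import random


def exceedance_probability(exposure_mu, exposure_sigma,
                           effect_mu, effect_sigma,
                           n_draws=20000, seed=0):
    """Estimate P(exposure > effect threshold) by Monte Carlo sampling.

    Both quantities are drawn from lognormal distributions (an assumption
    of this sketch), so uncertainty in exposure and in effect is carried
    through to the resulting risk estimate.
    """
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    exceedances = 0
    for _ in range(n_draws):
        exposure = rng.lognormvariate(exposure_mu, exposure_sigma)
        threshold = rng.lognormvariate(effect_mu, effect_sigma)
        if exposure > threshold:
            exceedances += 1
    return exceedances / n_draws
```

For example, `exceedance_probability(0.0, 1.0, 2.0, 1.0)` estimates the risk when the median effect threshold is roughly seven times the median exposure. Whether the resulting probability is acceptable remains a policy decision that lies outside the calculation.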

Best Data Available

• The agencies do not appear to have formal protocols for defining “best data available” and appear to approach data collection and selection from different perspectives.

• To ensure that the best data available are captured, a broad data search is needed at the beginning of the process. Dates of searches and search strategies should be clearly documented to ensure transparency of the process. If a repository database is searched, its contents and scope should be described, including criteria for data inclusion and exclusion, periodicity of updates, and quality control for data entry.

• Given that stakeholders are aware of and can provide valuable and relevant data, the committee encourages provision for their involvement at the early stage and throughout the ERA process. Stakeholder data are expected to meet the same data relevance and quality standards as all other data.

• To ensure that the best data available are used, information should first be screened for relevance and then subjected to quality review.

• The agencies should, at a minimum, subject all information to a review based on OMB criteria of “objectivity, utility and integrity.” Information sources that fail any of the criteria can be used at the discretion of the risk assessor, provided that their limitations are clearly described.

• Comparisons of all information sources with the relevance and quality attributes should be documented in the risk assessment and described in the overall characterization of uncertainties.

REFERENCES

Aguilera, P.A., A. Fernandez, R. Fernandez, R. Rumi, and A. Salmeron. 2011. Bayesian networks in environmental modelling. Environ. Modell. Softw. 26(12):1376-1388.

Bailey, T., E. Fite, P. Mastradone, B. Montague, R. Parker, I. Sunzenauer, and J. Wolf. 1997. EFED's Summary of the SAP Review of Ecological Risk Assessment Methodologies. April 14, 1997 [online]. Available: http://www.epa.gov/oppefed1/ecorisk/ecofram/efedsum.htm [accessed Feb. 22, 2013].

Bolten, J.B. 2004. Issuance of OMB’s “Final Information Quality Bulletin for Peer Review”. Memorandum for Heads of Departments and Agencies, from Joshua B. Bolten, Director, Office of Management and Budget, Washington, DC. M-05-03, December 16, 2004 [online]. Available: http://www.whitehouse.gov/sites/default/files/omb/memoranda/fy2005/m05-03.pdf [accessed Nov. 9, 2012].

Borsuk, M.E., and D.C. Lee. 2009. Stochastic population dynamic models as probability networks. Pp. 199-219 in Handbook of Ecological Modeling and Informatics, S.E. Jorgensen, T.S. Chon, and F. Recknagel, eds. Billerica, MA: WIT Press.

Boyle, T.P. 1980. Effects of the aquatic herbicide 2,4-D DMA on the ecology of experimental ponds. Environ. Pollut. A Ecol. Biol. 21(1):35-49.

Brock, T.C.M., J. Lahr, and P.J. Van den Brink. 2000. Ecological Risk of Pesticides in Freshwater Ecosystems, Part 1. Herbicides. Alterra-Rapport 088. Alterra, Green World Research, Wageningen, The Netherlands [online]. Available: http://edepot.wur.nl/18131 [accessed Nov. 5, 2012].

Charest, S. 2002. Bayesian approaches to the precautionary principle. Duke Env. L. Policy F. 12(2):265-291.

Clark, J.S. 2005. Why environmental scientists are becoming Bayesians. Ecol. Lett. 8:2-14.

Conn, P.B., and G.K. Silber. 2013. Vessel speed restrictions reduce risk of collision-related mortality for North Atlantic right whales. Ecosphere 4(4):Art. 43.

Cropper, M., W.N. Evans, S.J. Berardi, M.M. Ducla-Soares, and P.R. Portney. 1992. The determinants of pesticide regulation: A statistical analysis of EPA decision-making. J. Polit. Econ. 100(1):175-197.

Ellison, A.M. 1996. An introduction to Bayesian inference for ecological research and environmental decision-making. Ecol. Appl. 6(4):1036-1046.

EPA (U.S. Environmental Protection Agency). 1998. Guidelines for Ecological Risk Assessment. EPA/630/R-95/002F. Risk Assessment Forum, U.S. Environmental Protection Agency, Washington, DC. April 1998 [online]. Available: http://www.epa.gov/raf/publications/pdfs/ECOTXTBX.PDF [accessed Nov. 5, 2012].

EPA (U.S. Environmental Protection Agency). 2002. Guidelines for Ensuring and Maximizing the Quality, Objectivity, Utility, and Integrity of Information Disseminated by the Environmental Protection Agency. EPA/260R-02-008. Office of Environmental Information, U.S. Environmental Protection Agency, Washington, DC. October 2002 [online]. Available: http://www.epa.gov/quality/informationguidelines/documents/EPA_InfoQualityGuidelines.pdf [accessed Nov. 5, 2012].

EPA (U.S. Environmental Protection Agency). 2003. A Summary of General Assessment Factors for Evaluating the Quality of Scientific and Technical Information. EPA 100/B-03/001. Science Policy Council, U.S. Environmental Protection Agency, Washington, DC. June 2003 [online]. Available: http://www.epa.gov/stpc/pdfs/assess2.pdf [accessed Nov. 5, 2012].

EPA (U.S. Environmental Protection Agency). 2004. Overview of the Ecological Risk Assessment Process in the Office of Pesticide Programs, U.S. Environmental Protection Agency: Endangered and Threatened Species Effects Determinations. Office of Prevention, Pesticides and Toxic Substances, Office of Pesticide Programs, U.S. Environmental Protection Agency, Washington, DC. January 23, 2004 [online]. Available: http://www.epa.gov/espp/consultation/ecorisk-overview.pdf [accessed Nov. 5, 2012].

EPA (U.S. Environmental Protection Agency). 2010. Integrating Ecological Assessment and Decision-Making at EPA: A Path Forward. EPA/100/R-10/004. Risk Assessment Forum, U.S. Environmental Protection Agency, Washington, DC. December 2010 [online]. Available: http://www.epa.gov/raf/publications/pdfs/integrating-ecolog-assess-decision-making.pdf [accessed Mar. 21, 2013].

EPA (U.S. Environmental Protection Agency). 2011. Procedures for Screening, Reviewing, and Using Published Open Literature Toxicity Data in Ecological Risk Assessments. Office of Pesticide Programs, U.S. Environmental Protection Agency. May 9, 2011 [online]. Available: http://www.epa.gov/pesticides/science/efed/policy_guidance/team_authors/endangered_species_reregistration_workgroup/esa_evaluation_open_literature.htm#guidance [accessed Nov. 5, 2012].

EPA (U.S. Environmental Protection Agency). 2012a. ECOTOX Database Release 4.0 [online]. Available: http://cfpub.epa.gov/ecotox/ [accessed Nov. 12, 2012].

EPA (U.S. Environmental Protection Agency). 2012b. ECOTOX Limitations. ECOTOX Database Release 4.0 [online]. Available: http://cfpub.epa.gov/ecotox/help.cfm?help_id=limitations [accessed Nov. 12, 2012].

FDA (Food and Drug Administration). 2010. Guidance for the Use of Bayesian Statistics in Medical Device Clinical Trials. Guidance for Industry and FDA Staff. Food and Drug Administration, Center for Devices and Radiological Health, Division of Biostatistics, Washington, DC. February 5, 2010 [online]. Available: http://www.fda.gov/downloads/MedicalDevices/DeviceRegulationandGuidance/GuidanceDocuments/ucm071121.pdf [accessed Feb.33, 2013].

FWS (U.S. Fish and Wildlife Service). 2007. Information Quality Guidelines. U.S. Fish and Wildlife Service [online]. Available: http://www.fws.gov/informationquality/topics/IQAguidelines-final82307.pdf [accessed Nov. 5, 2012].

FWS/NMFS (U.S. Fish and Wildlife Service and National Marine Fisheries Service). 1998. Endangered Species Consultation Handbook: Procedures for Conducting Consultation and Conference Activities Under Section 7 of the Endangered Species Act. U.S. Fish and Wildlife Service and National Marine Fisheries Service, Washington, DC [online]. Available: http://sero.nmfs.noaa.gov/pr/esa/pdf/Sec%207%20Handbook.pdf [accessed Nov. 5, 2012].

IOM (Institute of Medicine). 2013. Environmental Decisions in the Face of Uncertainty. Washington, DC: National Academies Press.

Link, W.A., and R.J. Barker. 2010. Bayesian Inference: With Ecological Applications. New York: Academic Press.

McCarthy, M.A. 2007. Bayesian Methods for Ecology. New York: Cambridge University Press.

Moore, J.L., and M.C. Runge. 2012. Combining structured decision making and value-of-information analyses to identify robust management strategies. Conserv. Biol. 26(5):810-820.

Morgan, M.G., and M. Henrion. 1992. Uncertainty: A Guide to Dealing with Uncertainty in Quantitative Risk and Policy Analysis. Cambridge: Cambridge University Press.

NMFS (National Marine Fisheries Service). 2004. Section 515 Pre-dissemination Review and Documentation Guidelines. Data Quality Act. National Marine Fisheries Service Policy Directive PD 04-108-03-2004. Renewed July 27, 2012 [online]. Available: http://www.nmfs.noaa.gov/op/pds/documents/04/108/04-108-03.pdf [accessed Nov. 12, 2012].

NMFS (National Marine Fisheries Service). 2005. Policy on the Data Quality Act. Policy Directive PD 04-108, December 30, 2005. Renewed July 27, 2012 [online]. Available: http://www.nmfs.noaa.gov/op/pds/documents/04/04-108.pdf [accessed Nov. 12, 2012].

NMFS (National Marine Fisheries Service). 2010. Endangered Species Act Section 7 Consultation, Biological Opinion - Environmental Protection Agency Registration of Pesticides Containing Azinphos methyl, Bensulide, Dimethoate, Disulfoton, Ethoprop, Fenamiphos, Naled, Methamidophos, Methidathion, Methyl parathion, Phorate and Phosmet. August 31, 2010 [online]. Available: http://www.nmfs.noaa.gov/pr/pdfs/final_batch_3_opinion.pdf [accessed Nov. 5, 2012].

NRC (National Research Council). 1983. Risk Assessment in the Federal Government: Managing the Process. Washington, DC: National Academy Press.

NRC (National Research Council). 1995. Science and the Endangered Species Act. Washington, DC: National Academy Press.

NRC (National Research Council). 2009. Science and Decisions: Advancing Risk Assessment. Washington, DC: National Academies Press.

Odenkirchen, E. 2003. Evaluation of OPP’s Terrestrial Investigation Model Software and Programming to Meet Technical/Regulatory Challenges. Presentation at EUropean FRamework for Probabilistic Risk Assessment of the Environmental Impacts of Pesticides Workshop, June 5-8, 2003, Bilthoven, the Netherlands [online]. Available: http://www.epa.gov/oppefed1/ecorisk/presentations/eufram_overview.htm [accessed Feb. 22, 2013].

Reckhow, K.H. 1999. Water quality prediction and probability network models. Can. J. Fish. Aquat. Sci. 56:1150-1158.

Runge, M.C., S.J. Converse, and J.E. Lyons. 2011. Which uncertainty? Using expert elicitation and expected value of information to design an adaptive program. Biol. Conserv. 144(4):1214-1223.

Suter II, G.W. 2007. Ecological Risk Assessment, 2nd Ed. Boca Raton, FL: CRC Press. 643 pp.

Warren-Hicks, W.J., and A. Hart, eds. 2010. Application of Uncertainty Analysis to Ecological Risks of Pesticides. Pensacola, FL: SETAC Press.

Williams, S., and W. Hogarth. 2004. Services Evaluation U.S. EPA’s Risk Assessment Process. Letter to Susan B. Hazen, Principal Deputy Assistant Administrator, Office of Prevention, Pesticides and Toxic Substances, U.S. Environmental Protection Agency, from Steve Williams, Director, U.S. Fish and Wildlife Service, and William Hogarth, Assistant Administrator, National Marine Fisheries Service. January 24, 2004 [online]. Available: http://www.epa.gov/oppfead1/endanger/consultation/evaluation.pdf [accessed Nov. 12, 2012].

Yokota, F., and K.M. Thompson. 2004. Value of information analysis in environmental health risk management decisions: Past, present, and future. Risk Anal. 24 (3):635-650.