Increasingly autonomous (IA) systems will require research and technology development in many areas. In some cases, that research has been and will continue to be motivated by applications other than civil aviation, and there is no need for aviation-specific research in those areas, which include computing, digital communications, sensors, and open-source hardware and software.

The committee identified a set of high-priority research projects that, if implemented, would address technological issues directly related to the development and application of IA systems in civil aviation. Many of the issues addressed by these projects are related, and many of the research areas included in each project touch on issues of interest to one or more of the other projects. Thus, progress in autonomy research as a whole would be achieved most efficiently by proceeding in a coordinated fashion across multiple areas. Because the identified high-priority projects and the barriers they must overcome are so challenging, they will require government, industry, and academia to perform rigorous and protracted research.

The committee considered a variety of flight crew interaction scenarios ranging from the existing flight deck and remote pilot options that are available today to optionally piloted, unmanned, and unattended (autonomous) operations. Because progress in research tends to be incremental, the committee anticipates a progressive introduction of IA systems into air traffic management (ATM) systems, crewed aircraft, and unmanned aircraft systems (UAS). Similarly, the progressive introduction of UAS operations into shared airspace will also be paced by progress in addressing the high-priority research projects.

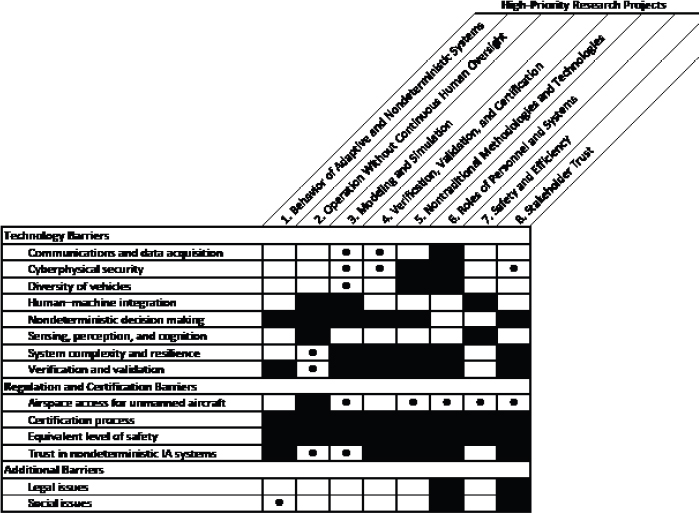

Table 4.1 lists the eight high-priority research projects identified by the committee and the barriers addressed by each. A multistep process was used to converge on the eight projects. Committee members were initially briefed by experts in the field and held informal discussions related to these briefings. Each committee member then individually created a list of top research priorities. The compiled list was distributed to the group, organized, and discussed. The committee reached consensus that all eight research areas listed in Table 4.1 are a high priority.

The committee could have ranked the projects based on how effectively they improve individual elements of the Observe, Orient, Decide, and Act (OODA) loop or on the extent to which each project contributes to realizing the potential benefits of IA systems described in Chapter 2. However, the contributions of many or most of the research projects are not limited to individual elements of the OODA loop or to the individual benefits that IA systems can provide.

Table 4.1 Mapping of High-Priority Research Projects to the Barriers

NOTE: Black squares indicate that a research project will make a vital contribution to overcoming the corresponding barrier. Circles indicate that the research project will make an important but less vital contribution. Empty cells indicate that the research project will make at best a minor contribution.

Alternatively, the committee could have decided to rank the research projects based on how many barriers they address. However, as shown in the table, all eight projects address multiple barriers, and the collective research agenda addresses all barriers to some extent. Also, outputs from all of the research projects are ultimately required to allow the introduction of advanced IA systems into the National Airspace System (NAS).

The committee concluded, accordingly, that level of difficulty and degree of urgency would be the most suitable bases for categorizing the high-priority research projects, because it is those criteria that are most directly related to (1) the extent to which the current state of the art must be advanced; (2) the time and resources needed to make those advances; and (3) the time-phased application of research project results in the overall scheme of developing ever-more-capable IA systems, bringing them to market, and deploying them operationally.

Based on the committee’s perception of level of difficulty and urgency, research projects 1 through 4 were determined to be particularly urgent and difficult to accomplish. Projects 5 through 8 are also to be given high priority, but they were determined to be less urgent and/or less difficult. The committee did not rank the research projects within each group of four; instead, they are listed alphabetically.

MOST URGENT AND MOST DIFFICULT RESEARCH PROJECTS

This section describes the four most urgent and most difficult high-priority research projects. The first project addresses the fundamental need to better characterize and bound the behavior of adaptive/nondeterministic systems. The second describes research in system architectures and technologies that would enable increasingly sophisticated IA systems and unmanned aircraft to operate for extended periods of time without real-time human cognizance and control. The third calls for the development of the theoretical basis and methodologies for using modeling and simulation to accelerate the development and maturation of advanced IA systems and aircraft. The fourth calls for research to develop standards and processes for the verification, validation, and certification of IA systems. For each research project, background information is followed by a description of specific research areas and how they would support the development of IA systems for civil aviation.

Behavior of Adaptive/Nondeterministic Systems

Develop Methodologies to Characterize and Bound the Behavior of Adaptive/Nondeterministic Systems over Their Complete Life Cycle

Adaptive/nondeterministic properties will be integral to many advanced IA systems, but they will create challenges for assessing and setting the limits of their resulting behaviors. Advanced IA systems for civil aviation operate in an uncertain environment where physical disturbances, such as wind gusts, are often modeled using probabilistic models. These IA systems may rely on distributed sensor systems that have noise with stochastic properties such as uncertain biases and random drifts over time and under varying environmental conditions. Adaptive/nondeterministic IA systems take advantage of evolving conditions and past experience to improve their performance. As these IA systems take over more functions traditionally performed by humans, there will be a growing need to incorporate autonomous monitoring and other safeguards to ensure continued appropriate operational behavior.
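To make the uncertainty sources above concrete, the following Python sketch models one airspeed sensor with a fixed bias, a random-walk drift, and white measurement noise, exposed to gusts modeled as a first-order Gauss-Markov process. The Gauss-Markov process is a common simplification and not the report's model. The class and function names and every numeric parameter are hypothetical placeholders chosen only for illustration.

```python
import math
import random
import statistics
from dataclasses import dataclass


@dataclass
class DriftingSensor:
    """Hypothetical sensor: truth + fixed bias + random-walk drift + white noise."""
    bias: float            # constant offset; unknown to any estimator in practice
    drift_step_std: float  # std dev of each random-walk drift increment
    noise_std: float       # std dev of white measurement noise
    rng: random.Random
    drift: float = 0.0     # accumulated drift; its variance grows linearly with time

    def read(self, true_value: float) -> float:
        self.drift += self.rng.gauss(0.0, self.drift_step_std)
        return true_value + self.bias + self.drift + self.rng.gauss(0.0, self.noise_std)


def simulate_airspeed_errors(n, seed=0, bias=0.5, drift_step_std=0.01, noise_std=0.2,
                             gust_std=2.0, gust_tau=5.0, dt=0.1, mean_airspeed=50.0):
    """Return measured-minus-true airspeed errors for n samples (placeholder values).

    Gusts follow a first-order Gauss-Markov process with stationary standard
    deviation gust_std and correlation time gust_tau (seconds).
    """
    rng = random.Random(seed)
    sensor = DriftingSensor(bias, drift_step_std, noise_std, rng)
    a = math.exp(-dt / gust_tau)  # one-step correlation of the gust process
    gust = 0.0
    errors = []
    for _ in range(n):
        gust = a * gust + math.sqrt(1.0 - a * a) * gust_std * rng.gauss(0.0, 1.0)
        true_airspeed = mean_airspeed + gust
        errors.append(sensor.read(true_airspeed) - true_airspeed)
    return errors


# Ensemble spread of the error at the first and last sample across 200 seeds.
runs = [simulate_airspeed_errors(1000, seed=s) for s in range(200)]
print(statistics.pstdev([r[0] for r in runs]), statistics.pstdev([r[-1] for r in runs]))
```

Because the drift accumulates, the expected spread of the error across runs is larger at the last sample than at the first. A single normal distribution with fixed variance, fitted to early data, would therefore understate later errors.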

There is a tension between the benefits of incorporating software with adaptive/nondeterministic properties in IA systems and the requirement to test such software for safe and assured operation. Research is needed to develop new methods and tools to address the inherent uncertainties in airspace system operations and thereby assure the safety of complex adaptive/nondeterministic IA systems.

Specific tasks to be carried out by this research project include the following:

- Develop mathematical models for describing adaptive/nondeterministic processes as applied to humans and machines. New mathematical models for describing adaptive, nondeterministic, and learning processes as applied to humans and machines are desirable to support the design and testing challenges of IA systems. Current approaches often rely on linearized models with simple normal probability distributions to characterize uncertainty. These simplified representations often do not adequately capture the complexities of IA systems. More complex models create challenges for the design community, whose tools commonly assume the same linearized, normally distributed characterizations. The potential behaviors of complex and adaptive/nondeterministic systems operating in environments with many sources of random inputs can quickly overwhelm the conventional tools used in exhaustive deterministic simulation testing. The desired mathematical models would support the goals for effective testing over the wide range of operational conditions as well as support the community that designs algorithms with the desired performance and robustness properties.

- Develop performance criteria, such as stability, robustness, and resilience, for the analysis and synthesis of adaptive/nondeterministic behaviors. Performance criteria for such systems, including operational mission envelopes, stability margins, robustness in the face of uncertainty, and resilience to unanticipated events, are needed so that both the design and test communities can effectively account for adaptive/nondeterministic behaviors. These performance criteria would apply and scale to various levels and applications of IA systems, including basic flight performance, onboard aircraft operational decision making, and ATM. Tools to assess the trade-offs among these criteria are needed along with technologies to remove today’s constraints and allow the expansion of performance simultaneously across multiple dimensions.

- Develop methodologies beyond input-output testing for characterizing the behavior of IA systems. Testing of aircraft avionics and software is often performed today with a large set of test scripts intended to cover the wide range of expected inputs and anticipated operational configurations. The resulting output response behaviors are evaluated for acceptability. The rapid expansion of computational capabilities from advanced processors and cloud computing, along with applications of experimental techniques, is not sufficient to address the exponential growth in potential input-output behaviors and system configurations for complex IA systems. Therefore new methodologies beyond input-output testing for characterizing the behavior of IA systems are needed.

- Determine the roles that humans play in limiting the behavior of adaptive/nondeterministic systems and how IA systems can take over those roles. There is an evolving trade-off in IA systems between the functions performed by humans and by machines. Humans play a key role in monitoring and limiting the behavior of systems as well as in helping IA systems overcome their own limitations, making them more robust in unforeseen situations. Research is needed to identify the roles of humans and to learn how to effectively and safely transition those roles to these systems.
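As a minimal illustration of characterizing and bounding adaptive behavior, the sketch below uses a textbook scalar adaptive stabilizer (control u = -θx with adaptation θ' = γx²). It applies parameter projection, a standard technique that clamps the adapted gain to a prescribed interval. A Monte Carlo campaign over an uncertain plant parameter then records the observed gain range, the worst state excursion, and the number of runs that leave a state envelope. The plant, gains, bounds, and envelope are hypothetical. Only the gain bound is guaranteed by construction; the remaining figures are sampled observations, not proofs. The clamp stands in, very loosely, for the limiting role the task list assigns to humans today.

```python
import random


def run_adaptive_trial(a, rng, steps=2000, dt=0.01, gamma=5.0,
                       theta_bounds=(0.0, 10.0), noise_std=0.05, x0=1.0):
    """Simulate one run of a scalar adaptive stabilizer with parameter projection.

    Plant (a unknown):  x[k+1] = x[k] + dt*(a*x[k] + u[k]) + w[k]
    Control:            u[k] = -theta * x[k]
    Adaptation:         theta += dt*gamma*x**2, then clamped to theta_bounds.
    Returns the final gain and the largest |x| observed.
    """
    lo, hi = theta_bounds
    x, theta, peak = x0, lo, abs(x0)
    for _ in range(steps):
        u = -theta * x
        x = x + dt * (a * x + u) + rng.gauss(0.0, noise_std)
        # Without this clamp, theta never decreases and keeps creeping upward
        # under persistent disturbances (parameter drift).
        theta = min(hi, max(lo, theta + dt * gamma * x * x))
        peak = max(peak, abs(x))
    return theta, peak


def characterize(trials=200, envelope=5.0, seed=1, a_range=(-1.0, 2.0), **kw):
    """Monte Carlo over the unknown plant parameter; summarizes observed behavior."""
    rng = random.Random(seed)
    thetas, peaks, exceedances = [], [], 0
    for _ in range(trials):
        a = rng.uniform(*a_range)
        theta, peak = run_adaptive_trial(a, rng, **kw)
        thetas.append(theta)
        peaks.append(peak)
        exceedances += peak > envelope  # count runs that left the state envelope
    return {"theta_min": min(thetas), "theta_max": max(thetas),
            "worst_peak": max(peaks), "envelope_exceedances": exceedances}


print(characterize())
```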

Research into the behavior of adaptive/nondeterministic systems that takes into account the needs of both the design and test communities can have a meaningful effect on advanced IA systems operating in civil airspace.

Operation Without Continuous Human Oversight

Develop the System Architectures and Technologies That Would Enable Increasingly Sophisticated IA Systems and Unmanned Aircraft to Operate for Extended Periods of Time Without Real-Time Human Cognizance and Control

Crewed aircraft have systems with varying levels of automation that operate without continuous human oversight. Even so, pilots are expected to maintain continuous cognizance and control over the aircraft as a whole. Advanced IA systems could allow unmanned aircraft to operate for extended periods of time without human operators to monitor, supervise, and/or directly intervene in the operation of those systems in real time. Eliminating the need for continuous human cognizance and control could achieve multiple objectives:

- Enhance system performance by better matching the roles of humans and machines to their respective strengths. For example, repetitive scans of instruments and the environment might be done by IA systems that enable operators to focus more on task- or mission-level decision making. This capability could help maintain the attentiveness of the pilots or operators of crewed aircraft and UAS, and it could also be used to better manage the workload of a single pilot, making the operations safer.

- Increase the number of aircraft that can be managed by a single human operator and increase the capacity of the civil aviation system. IA systems capable of safely functioning without continuous human cognizance and control will support intentional and potentially long-term gaps in supervision. Such gaps would need to be acceptable in order to allow single operators to manage multiple aircraft. IA systems could increase capacity and throughput by offering capabilities such as precise and predictable flight plan following, enhanced separation assurance and deconfliction in airspace with positive control,1 and detect-and-avoid.2

___________________

1 R. Chipalkatty, P. Twu, A. Rahmani, and M. Egerstadt, 2012, Merging and spacing of heterogeneous aircraft in support of NextGen, Journal of Guidance, Control, and Dynamics 35(5): 1637-1646.

2 X. Prats, L. Delgado, J. Ramírez, P. Royo, and E. Pastor, 2012, Requirements, issues, and challenges for sense and avoid in unmanned aircraft systems, Journal of Aircraft 49(3): 677-687.

- Enhance the ability of unmanned aircraft to perform dangerous and/or long-endurance missions that are beyond the abilities of humans. An example of a dangerous mission would be disaster response at the site of a damaged nuclear reactor, where acquiring aerial data (e.g., images and atmospheric chemistry) would be critical but too hazardous for humans. An example of a long-endurance mission would be polar climate monitoring by recording atmospheric temperature and winds as well as surface ice extent and movement.

- Facilitate new unmanned aircraft operations where continuous human cognizance and control would not be cost-effective. For example, unmanned aircraft supporting large-scale agriculture operations would require long-range flights that go beyond the line of sight of the operator and that collect data overnight (when other farming operations are shut down). In other markets, a host of small unmanned aircraft applications could become profitable if they did not need to be continuously supervised by a human operator.

Specific tasks to be carried out by this research project include the following:

- Investigate human roles, including temporal requirements for supervision, as a function of the mission, capabilities, and limitations of IA systems. This includes a variety of IA system operational scenarios ranging from full-time human supervision to potentially no supervision. Risk analyses that consider mission, vehicle type, and environment (airspace and ground) could identify operational scenarios in which continuous cognizance and control are not required. The requirements for supervision might be periodic (e.g., an operator might be required to regain cognizance and control once every 15 minutes), event-based (e.g., an operator is alerted whenever a problem is detected by the IA system), or both. It will be important to understand how IA systems can appropriately alert operators and help them regain situational awareness when requests for operator input are event-based.

- Develop IA systems that respond safely to the degradation or failure of aircraft systems. Onboard hardware and software failures can pose a serious risk, particularly when safety-critical systems provide erroneous data or become unavailable. Performance degradation, due for example to structural damage, also increases risk. Autopilots incorporated in current flight management systems disengage when an exception condition is triggered by a degradation or failure. This abrupt and potentially unexpected event can place demands on operators, in terms of workload, skill, and decision making, that are beyond reasonable levels. IA systems can address many of the challenges associated with degradation or failure conditions. For example, IA systems could recognize the extent of a failure, determine a course of action, and execute a safe recovery.

- Develop IA systems to identify and mitigate high-risk situations induced by the mission, the environment, or other elements of the NAS. Examples include loss of separation from other aircraft or terrain and flight into adverse weather conditions. Mitigation could involve warning and informing human supervisors about risk factors as well as engaging IA systems to rapidly mitigate the risk, for example, by recovering stable flight in loss-of-control situations. Key IA technologies contributing to this research include diagnostic, prognostic, and decision-making systems that may be adaptive and/or nondeterministic. Advanced sensing (e.g., onboard or ground-based radar), database access (e.g., weather), and effective communication with operators and ATM systems could enhance onboard IA capabilities.

- Develop detect-and-avoid IA systems that do not need continuous human oversight. IA detect-and-avoid systems can reduce the possibility of collision or loss of separation for crewed and unmanned aircraft. The ATM system, supplemented by systems such as the Traffic Alert and Collision Avoidance System (TCAS)3 and Automatic Dependent Surveillance-Broadcast (ADS-B), provides human operators with data that may be critical in an emergency to assure separation and to maneuver aircraft to avoid collision if separation is lost. Relying on detect-and-avoid systems that operate without human supervision would require that the IA system be sufficiently reliable and secure to function correctly in all shared airspace. Redundancy, for example, through ADS-B and radar systems, will be key to ensuring sufficient reliability.4 Aircraft performance and maneuverability differences will need to be accommodated in detect-and-avoid solutions. For example, detect-and-avoid systems should be able to handle deconfliction scenarios with other aircraft that are not equipped with ADS-B and with aircraft whose pilots respond inappropriately to traffic alerts.

___________________

3 TCAS is an airborne system that alerts pilots to conflicting air traffic and provides guidance on maneuvers to avoid collisions.

4 S. Cook, A. Lacher, D. Maroney, and A. Zeitlin, 2012, UAS sense and avoid development – The challenges of technology, standards, and certification, Proceedings of the AIAA Aerospace Sciences Meeting, January 2012, Nashville, Tenn., Paper AIAA-2012-0959, Reston, Va.: American Institute of Aeronautics and Astronautics.

- Investigate airspace structures that could support UAS operations in confined or pre-approved operating areas using methods such as geofencing. Aircraft performing missions such as agricultural or pipeline inspections typically operate over unpopulated areas at low altitudes. Such missions pose little risk to people or property on the ground but may pose risk to other aircraft. IA systems for geofencing can ensure a vehicle operates within a given airspace volume designated by constraints on altitude ceiling, latitude, longitude, and, in some cases, time. Such a geofencing system will likely need to be matured and certified even if other systems on the geofenced aircraft are not. For geofencing to be useful, IA systems would also need to support dynamic allocation of (low-altitude) airspace to geofenced flight operations. Such IA systems can reduce collision risk for both geofenced and legacy aircraft.
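The geofence containment check described in the last task can be sketched minimally as follows. The sketch assumes a rectangular latitude/longitude box, an altitude band from the surface up to a ceiling, and an optional UTC time window. The `Geofence` class, its field names, and the example coordinates and times are hypothetical. A fielded system would also need polygonal boundaries, buffers for navigation error, and handling of boxes that cross the antimeridian, none of which this sketch attempts.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Geofence:
    """Hypothetical geofence: lat/lon box, surface-to-ceiling band, optional UTC window."""
    lat_min: float
    lat_max: float
    lon_min: float
    lon_max: float
    ceiling_ft_agl: float
    start: Optional[datetime] = None  # None: no start restriction
    end: Optional[datetime] = None    # None: no end restriction

    def active(self, when: datetime) -> bool:
        """True if the fence's time window (if any) includes `when`."""
        if self.start is not None and when < self.start:
            return False
        return self.end is None or when <= self.end

    def contains(self, lat: float, lon: float, alt_ft_agl: float, when: datetime) -> bool:
        """True only if inside the box, at or below the ceiling, and within the window."""
        return (self.active(when)
                and self.lat_min <= lat <= self.lat_max
                and self.lon_min <= lon <= self.lon_max
                and 0.0 <= alt_ft_agl <= self.ceiling_ft_agl)


def utc(hour: int) -> datetime:
    return datetime(2024, 6, 1, hour, tzinfo=timezone.utc)


# Illustrative survey fence: active 06:00-18:00 UTC with a 400 ft ceiling.
fence = Geofence(40.00, 40.05, -96.10, -96.00, 400.0, start=utc(6), end=utc(18))
print(fence.contains(40.02, -96.05, 250.0, utc(12)))  # True: satisfies every constraint
print(fence.contains(40.02, -96.05, 450.0, utc(12)))  # False: above the ceiling
print(fence.contains(40.02, -96.05, 250.0, utc(20)))  # False: outside the time window
```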

The Department of Defense (DOD) has invested substantial effort in technologies related to this research project. Areas of interest include autopilots for fighters that can execute and recover from maneuvers that may induce pilots to lose consciousness, unmanned aircraft that can continue a mission or return home following loss of the communications link to ground operators, and UAS ground stations from which one operator is responsible for multiple unmanned aircraft. NASA has studied IA systems for airspace management and loss-of-control prevention and has successfully demonstrated that unattended operation of orbiting spacecraft can reduce mission costs. Numerous researchers and commercial vendors are developing and demonstrating IA systems with the potential to eliminate the requirement for continuous human cognizance and control for robotic system applications, including small unmanned aircraft and automotive applications. DOD, NASA, and industry have the expertise to pursue efforts in this research direction. The Federal Aviation Administration (FAA), which has historically presumed continuous human cognizance and control, would need to expand its expertise and engage the community in this research task to efficiently establish standards and policies to authorize the operation of unmanned aircraft without continuous human oversight.

Modeling and Simulation

Develop the Theoretical Basis and Methodologies for Using Modeling and Simulation to Accelerate the Development and Maturation of Advanced IA Systems and Aircraft

Modeling and simulation capabilities will play an important role in the development, implementation, and evolution of IA systems in civil aviation because they enable researchers, designers, regulators, and operators to understand how a system performs without real-life testing. Civil aviation has leveraged modeling and simulation throughout its history. Examples include computational fluid dynamic models and human-in-the-loop simulations to test operational concepts and decision support tools. Modeling and simulation enable insights into system performance without necessarily incurring the expense and risk associated with actual operations. Furthermore, computer simulations may be able to test the performance of some IA systems in literally millions of scenarios in a short time to produce a statistical basis for determining safety risks and establishing confidence in IA system performance.
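The statistical basis mentioned above can be sketched as follows: run many simulated scenarios, count failures, and report a one-sided Clopper-Pearson upper confidence bound on the per-scenario failure probability. With zero failures in n runs, the bound reduces to 1 - α^(1/n), roughly 3/n at 95 percent confidence (the "rule of three"). The `simulate_scenario` callable is a hypothetical stand-in for a real simulation. The bound is only as meaningful as the simulated scenario distribution is representative of actual operations.

```python
import math
import random


def binom_cdf(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p), summed in log space so large n is safe."""
    log_p, log_q = math.log(p), math.log1p(-p)
    total = 0.0
    for i in range(k + 1):
        log_term = (math.lgamma(n + 1) - math.lgamma(i + 1) - math.lgamma(n - i + 1)
                    + i * log_p + (n - i) * log_q)
        total += math.exp(log_term)
    return min(total, 1.0)


def failure_rate_upper_bound(failures, runs, confidence=0.95):
    """One-sided Clopper-Pearson upper bound on the per-scenario failure probability."""
    if failures >= runs:
        return 1.0
    alpha = 1.0 - confidence
    lo, hi = 0.0, 1.0
    for _ in range(200):  # bisection: the binomial CDF decreases as p increases
        mid = 0.5 * (lo + hi)
        if binom_cdf(failures, runs, mid) > alpha:
            lo = mid
        else:
            hi = mid
    return hi


def run_campaign(simulate_scenario, runs, seed=0):
    """Run `runs` scenarios; simulate_scenario(rng) returns True on a failure."""
    rng = random.Random(seed)
    failures = sum(1 for _ in range(runs) if simulate_scenario(rng))
    return failures, failure_rate_upper_bound(failures, runs)


# Toy usage: a hypothetical scenario model that fails with probability 1e-3.
failures, bound = run_campaign(lambda rng: rng.random() < 1e-3, runs=20_000)
print(failures, bound)
```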

Researchers and designers are likely to make use of modeling and simulation capabilities to evaluate design alternatives. Developers of IA systems will be able to train adaptive (i.e., learning) algorithms through repeated simulated operations. Such capabilities could also be used to train human operators.

Modeling and simulation capabilities will be a tool leveraged in addressing several of the other projects identified in this report, such as characterizing the behavior of adaptive/nondeterministic systems; generating the data and artifacts needed for verification, validation, and certification (VV&C); generating data for determining how IA systems could enhance the safety and efficiency of the civil aviation system; providing a mechanism to evaluate stakeholder roles and responsibilities and human–machine interfaces of IA systems; and producing data to inform rulemaking and policy changes.

Specific tasks to be carried out by this research project include the following:

- Develop theories and methodologies that will enable modeling and simulation to serve as embedded components within adaptive/nondeterministic systems. Modeling and simulation capabilities are likely to be embedded as parts of adaptive/nondeterministic IA systems. An introspective system would be capable of passing judgment on its own performance. This would require the ability to model its own behavior and predict the outcome in a variety of environmental circumstances in which it is intended to act.

- Develop theories and methodologies for using modeling and simulation to coach adaptive IA systems and human operators during training exercises. Many IA systems will have adaptive or learning algorithms as components of the system. Adaptive algorithms evolve internal parameters as a function of historical performance. Modeling and simulation could be used to help evolve these internal parameters by creating a virtual operating environment enabling literally millions of scenarios to be experienced by the IA systems and their associated adaptive algorithms. The simulated scenarios and resulting performance would serve as feedback to the adaptive algorithm, enabling it to learn and improve without actual operations.

- Develop theories and methodologies for using modeling and simulation to create trust and confidence in the performance of IA systems. Modeling and simulation capabilities could be used to generate performance data and demonstrate the behavior of IA systems over a wide range of operational scenarios. Evaluations will need to be conducted in a manner that models the environmental circumstances in which the IA system is intended to operate. These performance data will be used to help create trust and confidence in the performance of IA systems. In some instances, modeling and simulation of virtual environments could be linked with IA systems operating in the real world.

- Develop theories and methodologies for using modeling and simulation to assist with accident and incident investigations associated with IA systems. Modeling and simulation capabilities can also be a forensic tool for analysts trying to understand the behavior of IA systems when investigating the factors that may have contributed to an accident, incident, or other anomaly. To assist analysts, IA systems will need to be instrumented to collect operational data for subsequent analysis. A functional equivalent of flight data recorders may need to be devised to understand what the IA system might have been “thinking” when making certain decisions or taking specific actions.

- Develop theories and methodologies for using modeling and simulation to assess the robustness and resiliency of IA systems to intentional and unintentional cybersecurity vulnerabilities. By their very nature, IA systems are complex cybersystems that may be vulnerable to intentional cyberattacks or unintentional combinations of circumstances. Modeling and simulation can create virtual environments to evaluate how well the system will continue to perform in a variety of circumstances.

- Develop theories and methodologies for using modeling and simulation to perform comparative safety risk analyses of IA systems. IA systems will be used to supplement or replace existing mechanisms in the current aviation system. Their use will introduce new hazards even as they eliminate or mitigate existing hazards. Modeling and simulation tools will be needed to assist the aviation community in comparing the risks of continuing to operate with the legacy systems on the one hand and employing the IA systems on the other.

- Create and regularly update standardized interfaces and processes for developing modeling and simulation components for eventual integration. Monolithic modeling and simulation efforts intended to develop capabilities that can “do it all” and answer any and all questions tend to be ineffective owing to limitations on access and availability; the higher cost of creating, employing, and maintaining them; the complexity of application, which constrains their use; and the centralization of development risks. Rather, the committee envisions the creation of a distributed suite of modeling and simulation modules developed by disparate organizations with the ability to be interconnected or networked as appropriate. To ensure that these systems can be interconnected, modeling and simulation capabilities will need to meet community standards, which themselves will need to be established and periodically reviewed and updated.

- Develop standardized modules for common elements of the future system, such as aircraft performance, airspace, environmental circumstances, and human performance. To reduce the cost of research, development, and analysis, reuse of common modeling elements for the future system could be encouraged by developing them in a manner that allows them to be integrated through established standards. These elements also need to be readily accessible. A mechanism could be established that would allow organizations involved with researching and developing IA systems to share modeling components. This would facilitate reuse of previously developed components, saving time and resources.

- Develop standards and methodologies for accrediting IA models and simulations. Given the importance of modeling and simulation capabilities to the creation, evaluation, and evolution of IA systems, mechanisms will be needed to ensure that these capabilities perform as intended. Just as computational fluid dynamic models are validated with wind tunnel tests, and wind tunnels are validated with flight tests, a means to validate the modeling and simulation capabilities for IA systems will be needed.
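The distributed, interconnectable suite of modules envisioned in the tasks above depends on every module honoring a common interface. The sketch below assumes a hypothetical, deliberately minimal contract: a `step(t, dt, state)` method that reads and writes a shared dictionary of named quantities. It composes a toy wind module with a toy aircraft-performance module. Actual community standards would have to pin down units, naming, timing, and data exchange far more precisely. All names and numbers here are illustrative.

```python
from typing import Dict, List, Protocol

State = Dict[str, float]  # shared, named quantities exchanged between modules


class SimModule(Protocol):
    """Hypothetical minimal contract that every interoperable module implements."""
    name: str

    def step(self, t: float, dt: float, state: State) -> None: ...


class ConstantWind:
    """Toy environment module: publishes a constant headwind."""
    name = "environment.wind"

    def __init__(self, headwind_kt: float) -> None:
        self.headwind_kt = headwind_kt

    def step(self, t: float, dt: float, state: State) -> None:
        state["headwind_kt"] = self.headwind_kt


class PointMassCruise:
    """Toy aircraft-performance module: integrates along-track distance."""
    name = "aircraft.performance"

    def __init__(self, true_airspeed_kt: float) -> None:
        self.tas_kt = true_airspeed_kt

    def step(self, t: float, dt: float, state: State) -> None:
        ground_speed = self.tas_kt - state.get("headwind_kt", 0.0)
        state["ground_speed_kt"] = ground_speed
        state["distance_nm"] = state.get("distance_nm", 0.0) + ground_speed * dt / 3600.0


def run(modules: List[SimModule], duration_s: float, dt: float) -> State:
    """Step every module in a fixed order; any module honoring the contract can be swapped in."""
    state: State = {}
    for k in range(int(round(duration_s / dt))):
        for module in modules:
            module.step(k * dt, dt, state)
    return state


final = run([ConstantWind(20.0), PointMassCruise(120.0)], duration_s=3600.0, dt=1.0)
print(final)
```

Because `run` depends only on the contract, a higher-fidelity wind or performance model from another organization could replace either toy module without changes to the other.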

Verification, Validation, and Certification

Develop Standards and Processes for the Verification, Validation, and Certification of IA Systems and Determine Their Implications for Design

The high levels of safety achieved in the operation of the NAS largely reflect the formal requirements imposed by the FAA for VV&C of hardware and software and the certification of personnel as a condition for entry into the system. These processes have evolved over many decades and represent the cumulative experience of all elements of civil aviation—manufacturers, regulators, pilots, controllers, other operators—in the operation of that system. Although viewed by some as unnecessarily cumbersome and expensive, VV&C processes are critical to the continued safe operation of the NAS.

Existing VV&C standards and processes are inadequate for the development and regulation of advanced IA systems with attributes such as nondeterministic outcomes, emergent behaviors, vehicle teaming, and software and/or system changes arising from machine learning. Current practice involves a comprehensive examination of all system states in all known conditions. This approach is neither practical nor feasible from the standpoints of timeliness and affordability for adaptive/nondeterministic IA systems. Fixed-logic models that contain closed-world assumptions, where all system interactions are predetermined and known, are unable to account for unplanned events falling outside the conditions that were considered in the reasoning process. Given the inevitable uncertainty of the real-world environment, current safety methods rely heavily on the human operator to mitigate system anomalies and unanticipated events. With IA systems, a portion of this responsibility would shift to the IA system.
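Runtime assurance, often described as the Simplex pattern, is one widely discussed way to let an IA system take over part of the operator's role in handling unanticipated conditions. In this pattern, an unverified complex function acts only while a simple, verifiable monitor judges its output to be within a safe envelope, and a verified recovery function takes over otherwise. The report does not prescribe this architecture. The sketch below uses hypothetical names, an invented altitude-floor envelope, and illustrative numbers, and is intended only to show the switching logic.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple

State = Dict[str, float]


@dataclass
class RuntimeAssuranceSwitch:
    """Simplex-style switch between an unverified function and a verified fallback."""
    complex_fn: Callable[[State], float]         # adaptive/learning function (not verified)
    recovery_fn: Callable[[State], float]        # simple function assumed to be verified
    in_envelope: Callable[[State, float], bool]  # monitor: is this command safe here?
    latched: bool = False                        # once tripped, stay on the recovery function

    def command(self, state: State) -> Tuple[float, str]:
        if not self.latched:
            proposal = self.complex_fn(state)
            if self.in_envelope(state, proposal):
                return proposal, "complex"
            self.latched = True
        return self.recovery_fn(state), "recovery"


def altitude_floor_ok(state: State, climb_fpm: float) -> bool:
    """Illustrative envelope: predicted altitude 10 s ahead stays at or above 500 ft."""
    return state["alt_ft"] + climb_fpm / 60.0 * 10.0 >= 500.0


rta = RuntimeAssuranceSwitch(
    complex_fn=lambda s: -1200.0,  # hypothetical learned policy commanding a descent
    recovery_fn=lambda s: 500.0,   # hypothetical verified fallback: steady climb
    in_envelope=altitude_floor_ok,
)
print(rta.command({"alt_ft": 1000.0}))  # (-1200.0, 'complex')
print(rta.command({"alt_ft": 600.0}))   # (500.0, 'recovery')
print(rta.command({"alt_ft": 1000.0}))  # (500.0, 'recovery'), still latched
```

Latching is a deliberate simplification. Handing control back to the complex function would require separate re-engagement criteria, and those criteria would themselves need to be verified.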

Specific tasks to be carried out by this research project include the following:

• Characterize and define requirements for intelligent software and systems. Trust in an IA system depends on trust verification of its software-based “intelligence” and trust validation5 of the system in which that intelligence is embedded. The ability of such systems to function acceptably in real-world conditions requires the development of trust in the IA system. Thus, it will be important to identify and characterize the attributes, characteristics, and metrics required to enable trust verification of the intelligent software used by advanced IA systems through interaction with testers and/or examiners in a manner analogous to how human performance is assessed in civil airspace today.

___________________

5 Trust verification is the process of assuring that the intelligent software of an IA system is trusted to perform as expected in the tasks, missions, and environments where it is intended to be utilized. Trust validation is the process of assuring that the system as a whole (hardware, software, and human operator) is trusted to perform as expected in the tasks, missions, and environments where it is intended to be utilized.

• Improve the fidelity of the VV&C test environment. To ensure that the VV&C processes for IA systems can substantiate the intended requirements to effectively manage unplanned events, new verification and validation (V&V) tools, criteria, and evaluation methodologies need to be developed, including representative test cases and trust metrics that fully identify and characterize the capabilities of IA systems. This will require significant research in V&V methodologies, focusing on the full range of uncertain behaviors and environmental conditions expected to be encountered in the airspace system. Stress testing of this underlying IA system logic will assess the extent to which nominal, off-nominal, and even unanticipated cases can be accommodated. Confronting the full IA system with as-yet-to-be-experienced situations in realistic scenarios (e.g., detecting taxiing aircraft on a runway during final approach) is key to identifying shortcomings in the capabilities of an advanced IA system. Using objective measures within this evaluation process is intended to lead to greater predictability and trust in the logic of the IA system. To maintain an equivalent level of safety, it is incumbent on the developers to assure that IA systems have undergone these rigorous V&V processes, including safety risk assessments. Mission diversity and complexity create design challenges, because the decision making, sensing, and experience level required may vary significantly from mission to mission. This complexity and diversity will require the system to be completely reconfigurable to ensure safe and successful mission execution, creating challenges for the VV&C process. Elements of this research include the following:

—Integration of modeled and actual subsystems, such as sensors, reasoning algorithms, hardware components, and human operators.

—Simulation of low- and high-fidelity tests.

—Research into VV&C processes for interoperability frameworks that allow rapidly configurable trust verification and trust validation.

—Investigation of the impacts of system- and mission-level disruptions in the human-hardware-software system, such as the loss of Global Positioning System inputs, control links, and sensors.

• Develop, assess, and propose new certification standards. In addition to the development of rigorous new V&V processes, new certification standards and practices will need to be written with guidance for the regulatory authorities on understanding how IA systems function and what it will take to establish a means of compliance with the yet-to-be-established certification processes for advanced IA systems. Once an IA system is certified, it may be necessary to continually monitor its compliance with requirements and to assess the performance of nondeterministic IA systems as they learn and adapt. Elements of this research include the following:

—Establishment of criteria and standards uniquely focused on the emergent behaviors of nondeterministic IA systems and approaches for assuring continual adherence to these standards.

—Identification of critical pass-fail boundaries that can be used to assure safe operation of IA systems for different mission sets in civil airspace.

—Exploration of novel certification approaches that would take place concurrently with the design and development of advanced IA systems, as well as novel techniques to enable continuous retest of nondeterministic IA systems.

• Define new design requirements and methodologies for IA systems. In the course of setting a clear path to certification and eventual deployment, unique design requirements could be created for IA systems so that they can interoperate with other elements of the NAS. Emergent behaviors could lead to fundamental changes to the design methodologies and design characteristics required of the IA systems. For example, to understand and accept an IA system’s actions as reasonable and predictable, it will be critical to understand the underlying logic of the decision-making process. Understanding the logic enables the stakeholders within the airspace to infer intent, which adds to the system’s predictability, credibility, and trust. New design frameworks need to address mission diversity and complexity while simultaneously simplifying the VV&C process for IA systems. Elements of this research include the following:

—Investigation of new and agile system design approaches and methodologies to enable integration into civil airspace and trust in IA systems.

—Enabling transparency for the rationale and decision making of an IA system for given situations.

—Development of high-level intelligent software tools to reveal an IA system’s underlying logic in situ and, if required, alter it.

—Creation of design frameworks (interoperability, complex networks, plug and play, and so on) that address mission complexity and diversity while simplifying VV&C processes.

• Understand the impact that airspace system complexity has on IA system design and on VV&C. The effectiveness of IA systems will depend significantly on how they interact with the NAS. Real-world operational issues such as system latency, degraded sensor performance, and intermittency of communications will be amplified by mission and environmental conditions. Elements of this research include the following:

—Developing design methodologies that effectively and comprehensively address IA system requirements over a broad range of intended operating conditions, encompassing geographical complexity (e.g., rural versus urban), mission complexity (e.g., single vehicles used for agriculture versus multiple high-precision aircraft), and environmental changes (e.g., weather anomalies).

—Developing the ability to leverage the maturation of IA systems over time with regard to airspace complexity, such as acquisition of experiential knowledge of operations at diverse classes of civil airspace and analysis of incidents, accidents, and other anomalies involving IA systems.

• Develop VV&C methods for products created using nontraditional methodologies and technologies. Some new civil aviation products will be developed using nontraditional methodologies and technologies (see the discussion below of the research project of the same name). The deployment and operation of products such as consumer electronics provide large amounts of user data that can be used for validation and verification when the technology is applied to civil aviation. Although the information generated from deployment in one application or industry is not complete, it could nevertheless be considered in the overall certification process. Furthermore, unconventional techniques such as crowd sourcing6 can increase levels and volumes of testing, including the diversity and uniqueness of test scenarios that could not or would not be achieved by traditional methods. These or similar test techniques might be used in conjunction with more traditional test methodologies to accelerate the acceptance process.

ADDITIONAL HIGH-PRIORITY RESEARCH PROJECTS

This section describes four additional high-priority research projects. They are distinguished from the four already discussed by their lower degree of urgency and/or difficulty. The first of these final four research projects would develop methodologies for accepting, in IA systems, technologies not traditionally used in civil aviation. The second focuses on how the roles of key personnel and systems, as well as human–machine interfaces, should evolve to enable the operation of advanced IA systems. The third would analyze how IA systems could enhance the safety and efficiency of civil aviation. The fourth would investigate processes to engender broad stakeholder trust in IA systems for civil aviation. For each research project, background information is followed by a description of specific research areas and how they would support the development of IA systems for civil aviation.

Nontraditional Methodologies and Technologies

Develop Methodologies for Accepting Technologies Not Traditionally Used in Civil Aviation (e.g., Open-Source Software and Consumer Electronic Products) in IA Systems

Many key elements of IA systems have their roots in the commercial world of information technology, which has long tended toward increasing capability at decreasing cost. The same cannot be said of aircraft avionics. Civil aviation in general and IA systems in particular can benefit directly and indirectly from a broad variety of technologies developed through nontraditional methods in areas that include low-cost, high-capability computing systems; digital communications; miniaturized sensor technology; position, navigation, and timing systems; open-source hardware and software; mobile communications and computing devices; and small unmanned aircraft developed for personal or hobbyist use. Furthermore, IA technologies have the potential to spawn new nontraditional uses, applications, and industries. Methodologies are needed that would enable IA systems that incorporate these nontraditional technologies to be integrated in the NAS.

Enabling the acceptance of nontraditional technologies, methods, and uses can have a variety of immediate and future benefits. For example, the large and growing community of hobbyists who work on UAS is currently building and operating such devices for personal use. These devices could be, but often are not, operated within the FAA's very narrow guidelines for recreational flight.7 New acceptance methods would provide a pathway to the commercialization and legal use of personal UAS, which could in turn clear the way for many new UAS applications. In addition, new methodologies will enable the incorporation of IA capabilities that were developed for, and are currently used in, other industries under diverse and often very different V&V processes. For example, the automotive industry is deploying IA systems, and the large number of automobiles on the road means that these systems will undergo extensive field testing and evaluation. Accepting nontraditional technologies would also allow civil aviation to exploit some rapidly evolving and proliferating technologies and systems and to reap their economic benefits. For example, personal computing devices such as tablet computers, whose capabilities have been advancing at a phenomenal rate, are now often used as pilot aids by private and commercial pilots.

___________________

6 Crowd sourcing relies on the general public to contribute funds or labor to a project, most commonly via the Internet and without compensation.

Specific tasks to be carried out by this research project include the following:

- Develop modular architectures and protocols that support the use of open-source products for non-safety-critical applications. Open-source software and products are prominent in several technology areas that could support IA systems, especially in the areas of mobile computing and network communications. The openness of the product development process increases the potential number of developers and accelerates the pace of innovation and change. The pace at which advances occur in this arena is at odds with the length and complexity of traditional certification processes. New modular architectures and protocols are needed to accommodate this pace. Non-safety-critical system elements and applications that inherently involve little risk, such as the operation of small unmanned aircraft over fields on large farms, are natural candidates for focusing initial efforts.

- Develop and mature nontraditional software languages for IA applications. As intelligent machines evolve, the enabling software will require new attributes unavailable from today’s deterministic code. This software will require an architectural underpinning that (1) permits integration of multiple tasks, often conducted concurrently; (2) is autonomously reconfigurable as mission and environmental circumstances warrant; (3) is scalable to encompass large, complex systems functionality; and (4) is verifiable and trustworthy in its domain of applicability.

- Develop paths for migrating open-source, intelligent software to safety-critical applications and unrestricted flight operations. Once open-source products are established in non-safety-critical applications, additional processes could be developed to carry them into safety-critical roles. Open-source development does not imply simple or unsafe functionality, as evidenced by the Linux operating system, which is used in a wide variety of mainframes, servers, personal computers, and consumer devices. Approaches such as software-based "functional redundancy" could be explored to increase overall system reliability as an adjunct to traditional hardware redundancy.

- Define new operational categories that would enable or accelerate experimentation, flight testing, and deployment of nontraditional technologies. To provide the regulatory flexibility to accept nontraditional technology into civil aviation, new operational categories could be developed that specifically focus on the advancement of IA capabilities, treating overall system safety, risk, performance, and similar considerations, rather than the development process or pedigree of components, as the guiding factors. These categories should include operations in confined or nonnavigable airspace. This type of mechanism could accelerate maturation of nontraditional applications and missions, such as beyond-line-of-sight precision agriculture.
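Software-based functional redundancy can take several forms. A minimal sketch of one long-studied form, N-version programming, follows: several independently developed versions of the same function run on the same input, and a voter accepts a result only when a strict majority agree. The median-based voter, the 0.1 tolerance, and the three toy "versions" (one with an injected fault) are illustrative assumptions, not a method proposed in this report.

```python
from statistics import median
from typing import Callable, Optional, Sequence


def vote(outputs: Sequence[float], n_versions: int,
         tolerance: float) -> Optional[float]:
    """Median-based majority voter (illustrative).

    Accepts the outputs lying within `tolerance` of the overall median and
    returns their median if they form a strict majority of all `n_versions`
    versions; otherwise returns None to signal "no consensus".
    """
    if not outputs:
        return None
    mid = median(outputs)
    agreeing = [y for y in outputs if abs(y - mid) <= tolerance]
    if 2 * len(agreeing) > n_versions:
        return median(agreeing)
    return None


def redundant_call(versions: Sequence[Callable[[float], float]], x: float,
                   tolerance: float = 0.1) -> Optional[float]:
    """Run each independently developed version on the same input, then vote."""
    outputs = []
    for version in versions:
        try:
            outputs.append(version(x))
        except Exception:
            # A version that fails casts no vote but still counts toward the
            # total, so a crash cannot turn a minority into a majority.
            continue
    return vote(outputs, len(versions), tolerance)


# Hypothetical stand-ins for three separately written implementations of the
# same proportional command law; version_c carries an injected offset fault.
def version_a(error: float) -> float:
    return 0.5 * error


def version_b(error: float) -> float:
    return error / 2.0


def version_c(error: float) -> float:
    return 0.5 * error + 3.0


print(redundant_call([version_a, version_b, version_c], 2.0))  # prints 1.0
```

Median selection keeps a single faulty version from pulling the result. Returning None instead of a best guess leaves the response to a disagreement, such as reverting to a simpler backup function or alerting an operator, to the surrounding system. Independently written versions can still share common-mode errors, which is one reason such redundancy is framed as an adjunct to other assurance measures rather than a replacement for them.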

The final phase of this research project would be accomplished by the VV&C research project as it develops certification standards.

___________________

7 FAA, 1981, Advisory Circular AC 91-57, June 9.

Roles of Personnel and Systems

Determine How the Roles of Key Personnel and Systems, As Well As Related Human–Machine Interfaces, Should Evolve to Enable the Operation of Advanced IA Systems

Effectively integrating humans and machines in the civil aviation system has been a high priority and sometimes an elusive design challenge for decades. Human–machine integration may become an even greater challenge with the advent of advanced IA systems. Although the reliance on high levels of automation in crewed aircraft has increased the overall levels of system safety, persistent and seemingly intractable human–automation issues continue to surface in incidents and accidents.

The very nature of IA systems dictates the need for new methods to interface and interact with their human operators and other counterparts. The prospect of shared human–machine intelligence presents daunting challenges ranging from technical to infrastructural to ethical. The emergence of advanced IA capabilities in civil aviation will also require changes to the definition and management of operations within the NAS. The role of key personnel and systems will evolve in response to these changes. In some instances new roles may be added while others become less significant or cease to add value. Advanced IA systems may reduce the number of air traffic controllers, pilots, and other human operators needed by the NAS, while the need for IA system management and supervision might actually increase. Some of today's active control roles will transition to monitoring functions and may ultimately disappear. In any case, the roles of these key personnel will have to be revisited in relation to their contribution to and impact on the NAS and the requirements imposed by the adoption of emerging technologies.

Much of the research to be conducted revolves around the roles that human beings currently play and the roles that they should play in interacting with intelligent machines. There is no simple answer. In fact, the answer depends on the nature of the system intelligence and can be expected to change over time as technologies and machine capabilities evolve. As an example, in contrast to the nearly continuous interaction between a pilot and a highly automated flight control system, as is now the case on commercial transports, interactions between an intelligent, autonomous mission management system and its human supervisor could be highly intermittent. As it now stands, the flight control system executes its decision cycle an order of magnitude (or more) faster than the pilot, who issues input commands via manual ergonomic means (e.g., stick or yoke, rudder pedals, throttle) and specifies the coordinates of waypoints. In the not-too-distant future, advanced IA systems may be able to assume primary responsibility for functions such as hazardous weather avoidance.

More capable IA systems will offer opportunities for reallocation of functions within the integrated human–machine system. Where practical, humans and machines could each be allocated the functions for which they are best suited while maintaining a secondary capability in functions assigned to the other. Individual and systemic resilience would be enhanced by maintaining this degree of dual functionality. However, many of the functional issues ascribed to today's automated systems can be traced to some combination of overlapping capabilities (manual operation vs. automated function), insufficient integration of these functions, and deficiencies in system design and/or training that impede the smooth transition from one mode to the other under some operating conditions.

The allocation of functions between humans and machines could be dynamic, allowing role changes based on evolving factors such as fatigue, risk, and surprise. Regardless, new methods are and will be needed for advanced IA systems to assure that human operators smoothly transition to a more proactive command-and-control mode, as needed. As the intelligence of IA systems advances, new opportunities for improved decision making and task execution by the human–machine system will arise, and additional research will be needed to determine how best to take advantage of these opportunities.

The human–system integration problem becomes even more complex with the hierarchical control imposed by flight in managed airspace. This system-of-systems problem, involving onboard pilots, remote UAS operators, intelligent machines, air traffic controllers, and an intelligent ATM network, is perhaps the most daunting human–system integration challenge that civil aviation will face, and overcoming it will require research in relevant technical disciplines and social sciences.

Specific tasks to be carried out by this research project include the following:

- Develop human–machine interface tools and methodologies to support operation of advanced IA systems during normal and atypical operations. As IA systems evolve, the need for more robust human–machine interfaces will grow. This research will involve understanding the various options for dynamic human–machine integration, including their respective strengths and weaknesses. Both machines and humans will need tools and methodologies to execute the OODA loop8 in harmonious fashion for mission accomplishment and safe operation. It may be necessary for machines and humans to share input data, to understand each other’s perspectives, and to be aware of the logic behind their respective decisions. If they make different decisions on how to proceed, some means for resolving the differences will be needed. Alternatively, it may be possible to improve the decision rationale used by humans and machines by selectively applying the best elements of each. Unambiguous communication between humans and machines is also important. The use of traditional displays could be a starting point for more robust, meaningful ways to share information and insight between humans and machines. This could include voice, tactile responses, gesture recognition, and other novel means of communicating.

- Develop tools and methodologies to ensure effective communication among IA systems and other elements of the NAS. At present, communication between a single air traffic controller and multiple aircraft on one frequency occasionally results in the omission of critical information, overlapping transmissions that block reception, and limited opportunity for clarification or questioning of transmitted information. Data link communication alleviates some of these concerns, but it presents challenges of latency and commonality of terminology in free-text communications. Advanced IA aircraft will require a new paradigm to overcome frailties in connectivity and foster concise, unambiguous communications. Research is required to identify and develop effective means of communication between air- and ground-based IA systems. The impact of flight path deviations due to weather, turbulence, traffic congestion, and so on would need to be communicated, along with viable, real-time solutions.

- Define the rationale and criteria for assigning roles to key personnel and IA systems and assessing their ability to perform those roles under realistic operating conditions. Key personnel and IA systems will need to function as an integrated system to operate safely and efficiently in the increasingly complex and congested NAS. Roles of key personnel would need to be identified and aligned with the degree of autonomy and then adjusted over time as the technology and IA capabilities evolve. For example, the roles of ground-based UAS operators and air traffic controllers may change dramatically if an advanced IA ATM network can issue commands directly to onboard autonomous mission management systems. The role of UAS operators could be even more dramatically different if they are able to simultaneously operate multiple aircraft as a constellation of unmanned aircraft. IA systems may need to monitor human workload and performance to dynamically shift allocated tasks for optimum human–machine or network performance. Conversely, humans may need to monitor IA systems to determine and adjust their ability to execute roles.

- Develop intuitive human–machine integration technologies to support real-time decision making, particularly in high-stress, dynamic situations. Such situations impose heavy workloads on humans, often reducing their physical, cognitive, or sensory performance. Advanced IA systems provide an opportunity to more effectively manage these workloads, allowing for improved decision making and task execution. As IA capabilities advance, high-stress, dynamic situations may be an area where machine intelligence and decision making could augment or even supplant human intelligence.

- Develop methods and technologies to enable situational awareness that supports the integration of IA systems. Awareness of situations such as potentially conflicting decisions, environmental conditions, and the status of mission execution is essential to mission safety and success. The development of IA systems with improved sensing technologies could greatly enhance situational awareness for both the human and the IA system. Research into human and machine perception and the resulting situational awareness could lead to safer and more efficient operations. The research could determine what sensing technologies need to be developed to enable the required situational awareness. It could also explore sensor options that can reproduce the value added from the pilot's seat-of-the-pants experience for both the onboard IA system and the remote operator, if warranted. Sensor research could also explore the cuing required for off-board operators to remain effective during remote operating conditions.

___________________

8 The OODA loop (Observe, Orient, Decide, Act) is discussed in Chapter 1.

Determine How IA Systems Could Enhance the Safety and Efficiency of Civil Aviation

As with other new technologies, poorly implemented IA systems could put at risk the high levels of efficiency and safety that are the hallmarks of civil aviation, particularly for commercial air transportation. Done properly, however, advances in IA systems could enhance both the safety and the efficiency of civil aviation. For example, IA systems have the potential to reduce reaction times in safety-critical situations, especially in circumstances that today are encumbered by the requirement for human-to-human interactions. The ability of IA capabilities to rapidly cue operators or potentially render a fully autonomous response in safety-critical situations could improve both safety and efficiency. IA systems could thereby substantially reduce the occurrence of accidents in such situations, which are typically ascribed to operator error. These benefits could be of particular value in those segments of civil aviation, such as general aviation and medical evacuation helicopters, that have accident rates much higher than those of commercial air transports.

Whether on board an aircraft or in ATM centers, IA systems also have the potential to reduce manpower requirements, thereby increasing the efficiency of operations and reducing operational costs.

Where IA systems make it possible for small unmanned aircraft to replace crewed aircraft, the risks to persons and property on the ground in the event of an accident could be greatly reduced owing to the smaller damage footprint, and the risk to aircrew would be eliminated entirely.

Specific tasks to be carried out by this research project include the following:

- Analyze accident and incident records to determine where IA systems may have prevented or mitigated the severity of specific accidents or classes of accidents. A wealth of historic accident and incident data, including causal information, is archived and available for study. Research could focus on understanding whether root causes could have been countered by an IA system and, if not, whether an IA system could have mitigated or prevented the consequences of the incident or accident. This research could also explore other methods for avoidance and mitigation to achieve balance and reduce any bias toward IA approaches. Such research could be broad based, assessing the risk to people and property posed by aircraft of widely varying sizes, weights, performance characteristics, and missions, and by various types of operations (ramp, ground, transport, etc.), affording a comprehensive perspective on the value proposition for IA systems and capabilities in this regard.

- Develop and analytically test methodologies to determine how the introduction of IA systems in flight operations, ramp operations by aircraft and ground support equipment, ATM systems, airline operation control centers, and so on might improve safety and efficiency. This task would develop quantifiable methods of assessing the value of IA capabilities for various elements of the NAS. Methods of interest could include measures of merit and key performance parameters that map onto safety and efficiency metrics. For example, this research element could develop guidelines and methods to allow current safety methodologies, including nonpunitive incident reporting programs such as the Flight Operations Quality Assurance program and the FAA’s Aviation Safety Information Analysis and Sharing system, to help assess the impact of operational IA systems on safety and efficiency. The analysis tools would be able to model the effects, both positive and negative, of IA systems on other portions of the NAS. This research could also determine the training, education, and experience requirements for systems safety personnel, such as accident investigators and airworthiness and operations inspectors, who are involved with IA systems operations.

- Investigate airspace structures and operating procedures to ensure safe and efficient operations of legacy and IA systems in the NAS. The introduction of IA systems will increase the complexity and diversity of the NAS, which is already struggling with legacy systems and high traffic volumes. The introduction of large numbers of UAS by hobbyists, farmers, law enforcement agencies, and others would bring new complexities and place additional stress on the NAS. Airspace partitioning is one of the fundamental tools of risk management used in the current aviation system. Various categories of airspace are defined based on a great variety of factors, including weather, terrain, time of day (day vs. night), traffic type, traffic density, aircraft equipage and performance, and crew qualifications. These factors determine which operations are permitted in a particular region of airspace at any given time. Advanced IA systems might present an opportunity and/or an incentive to redesign some regions of civil airspace, operating conditions, and rules. For example, IA systems might make it feasible and beneficial to dynamically reconfigure some airspace to improve the safety and efficiency of airspace for selected applications.

Develop Processes to Engender Broad Stakeholder Trust in IA Systems in the Civil Aviation System

IA systems can fundamentally change the relationship between people and technology. Although increasingly used as an engineering term in the context of software and security assurance, trust is a social term and becomes increasingly relevant to human–machine interactions when technological complexity exceeds human capacity to fully understand its behavior. Trust is not a trait of the system; it is the status of the system in the minds of human beings, based on their perception of and experience with the system. Trust concerns the attitude that a person or technology will help achieve specific goals in a situation characterized by uncertainty and vulnerability.9 It is the perception of trustworthiness that influences how people respond to a system.

Trust in technology is different from trust between people, but there are similarities. Increasing levels of autonomy can blur the distinction between trust in people and trust in technology, because advanced IA systems might behave in ways that are hard to distinguish from the ways of a person. The basis of this trust includes experience with the system, an understanding of the underlying process, and knowledge of its purpose. Trust can also be based on the recommendation of third parties based on their experience or on their formal certification of a system, or it can reflect informal information sharing that defines the reputation of the trustee. Importantly, the trust engendered by a technology depends in part on an analytic assessment of its capabilities, but it also depends on an intuitive assessment of its behavior.

Fostering an appropriate level of trust in IA systems is critical to overcoming barriers to adoption and acceptance by the broad stakeholder community, which includes operators, supervisors, acquirers, patrons, regulators, designers, insurers, the operating community, and the general public. An individual operator who interacts with an IA system but harbors unwarranted skepticism about the reliability and performance of autonomous systems in general might fail to capitalize on their capabilities and instead rely on less efficient approaches. At the societal level, unwarranted skepticism might cause patrons to seek other services, and the public might even push for legislation that unnecessarily limits the pace of innovation. On the other hand, excessive trust could lead to failures to adequately supervise imperfect IA systems, potentially resulting in accidents and a loss of trust that could be very difficult to regain. Trust influences how people respond to IA systems across various organizational levels, circumstances, and timescales.

Although closely related to V&V and certification, trust warrants attention as a distinct research topic because formal certification does not guarantee trust and adoption. A significant component of this project could be cybersecurity and related issues. Trustworthiness depends on the intent of designers and on the degree to which the design prevents both inadvertent and intentional corruption of system data and processes.

___________________

9 J.D. Lee and K.A. See, 2004, Trust in automation: Designing for appropriate reliance, Human Factors 46(1): 50-80.

Specific tasks to be carried out by this research project include the following:

- Identify the objective attributes of trustworthiness and develop measures of trust that can be tailored to a range of applications, circumstances, and relevant stakeholders. A central challenge in supporting effective use of IA systems concerns the need to calibrate the level of trust to the trustworthiness of the system. This would require a metric for the trustworthiness of the system, which, like risk, might not be determined by a technical assessment of the IA system.10 It would also require measures of stakeholder trust in the system that are comparable to the measures of trustworthiness. Comparing the two would identify situations in which people might neglect the system just when it actually requires human cognizance and control. Stakeholder trust is relevant for a broad range of stakeholders and situations, but its measurement and aggregation present a substantial challenge.

- Develop a systematic methodology for introducing IA system functionality that matches authority and responsibility with earned levels of trust. The authority of an IA system should be commensurate with its trustworthiness and the degree of trust it earns from the stakeholders. This calls for introducing increased autonomy in a way that both demonstrates trustworthiness and builds trust. Possible methods include graduated authority, in which less and less attention is paid to supervising the system as it demonstrates increasing competence. Another possibility is declarations of third-party trust, whereby developers, other users, and/or an agency testify to the capabilities and characteristics of the system. Because the use of advanced IA systems is likely to grow over time, they would need to be continually assessed in the face of changing technology and situations.

- Determine the way in which trust-related information is communicated. The diversity of stakeholders, applications, and circumstances makes it critical to describe the behavior of IA systems in terms that can be understood. Because trust is based on both analysis and intuition, specifying the level at which information is to be delivered is not sufficient to engender trust; in addition, the form in which the information is presented must allow stakeholders to understand what the system is doing and why. In some cases the passive receipt of information will be sufficient; in other cases it may be important to allow users to influence the system’s response with specific commands or by manipulating external stimuli.

- Develop approaches for establishing trust in IA systems. Trust plays an important role in relationships among individuals, teams, and organizations. Trust also affects the way that people interact with and use conventional technology. Research into both of these areas will be relevant to research into the role that trust plays in human interactions with IA systems. Making good use of this past research, however, will require a better understanding of the differences and similarities between trust in people and machines and how those similarities and differences relate to the specific characteristics of IA systems.
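The first two tasks above call for comparing a trustworthiness metric with a comparable measure of stakeholder trust, and for tying an IA system's authority to both. The Python sketch below illustrates that logic only under strong assumptions: it presumes both quantities have already been reduced to scores on a common 0-to-1 scale (which, as the text notes, is itself an open research problem), and the names `Assessment`, `calibration`, `granted_authority`, the four `AUTHORITY_LEVELS`, and the 0.1 tolerance are hypothetical illustrations rather than established measures or methods.

```python
from dataclasses import dataclass
from enum import Enum


class Calibration(Enum):
    CALIBRATED = "calibrated"
    OVERTRUST = "overtrust"    # trust exceeds trustworthiness: risk of inadequate supervision
    UNDERTRUST = "undertrust"  # trust falls short of trustworthiness: risk of disuse


@dataclass
class Assessment:
    # Hypothetical scores, assumed to be normalized to a common [0, 1] scale.
    trustworthiness: float
    stakeholder_trust: float

    def __post_init__(self) -> None:
        for name in ("trustworthiness", "stakeholder_trust"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must lie in [0, 1], got {value}")


def calibration(a: Assessment, tolerance: float = 0.1) -> Calibration:
    """Classify the gap between stakeholder trust and system trustworthiness."""
    gap = a.stakeholder_trust - a.trustworthiness
    if gap > tolerance:
        return Calibration.OVERTRUST
    if gap < -tolerance:
        return Calibration.UNDERTRUST
    return Calibration.CALIBRATED


# Hypothetical graduated-authority ladder, from least to most autonomous.
AUTHORITY_LEVELS = ["advisory", "supervised", "monitored", "autonomous"]


def granted_authority(a: Assessment) -> str:
    """Map the lower of the two scores onto an authority level."""
    earned = min(a.trustworthiness, a.stakeholder_trust)
    # Split [0, 1] into equal bands; a score of exactly 1.0 maps to the top level.
    index = min(int(earned * len(AUTHORITY_LEVELS)), len(AUTHORITY_LEVELS) - 1)
    return AUTHORITY_LEVELS[index]


if __name__ == "__main__":
    sample = Assessment(trustworthiness=0.9, stakeholder_trust=0.4)
    print(calibration(sample).value, granted_authority(sample))  # prints: undertrust supervised
```

Taking the lower of the two scores is one possible design choice: it keeps high stakeholder trust from unlocking authority that demonstrated trustworthiness does not support, and vice versa. Any real scheme would also need the continual reassessment the second task describes.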

COORDINATION OF RESEARCH AND DEVELOPMENT

All of the research projects described above could and should be addressed by partnerships involving multiple organizations in the federal government, industry, and academia.

The roles of academia and industry would be essentially the same for every research project, reflecting the general role that academia and industry play in the development of new technologies and products.

The FAA would be most directly engaged in the VV&C research project, because certification of civil aviation systems is one of its core functions. However, the subject matter of most of the research projects is related to certification directly or indirectly, so the FAA would ultimately be interested in the progress and results of those projects.

DOD is primarily concerned with military applications of IA systems, though it must also ensure that military aircraft with IA systems that are based in the United States satisfy requirements for operating in the NAS. Its interests and research capabilities encompass the scope of all eight research projects, especially with regard to the roles of personnel and systems and human cognizance and control.

NASA would support basic and applied research in civil aviation tools, methods, and technologies (including ATM technologies of interest to the FAA) for application in the near, medium, and far term for a wide range of operating concepts. Its interests and research capabilities also encompass the scope of all eight research projects, particularly modeling and simulation, nontraditional methodologies and technologies, and safety and efficiency.

___________________

10 P. Slovic, 1987, Perception of risk, Science 236(4799): 280-285.

For each of these organizations, it will be important to involve researchers with relevant expertise who might not normally see themselves as addressing civil aviation issues. For example, most research and development activity related to machine learning, artificial intelligence, and robotics is not taking place in the context of IA systems for civil aviation, but it will be essential to draw on the latest research and development in these areas to most effectively carry out some of the research projects.

Each of the high-priority research projects overlaps to some extent with one or more of the other projects, and each would be best addressed by multiple organizations working in concert. There is already some movement in that direction.

The FAA has created the Unmanned Aircraft Systems Integration Office to foster collaboration with a broad spectrum of stakeholders, including DOD, NASA, industry, academia, and technical standards organizations. The FAA is working with this broad community to support safe and efficient integration of UAS in the NAS. Key activities include the development of regulations, policies, guidance material, and training requirements. In addition, the FAA is coordinating with relevant departments and agencies to address related policy areas such as privacy and national security, and in 2015 it will establish an air transportation center of excellence for UAS research, engineering, and development.11,12

The Senior Policy Committee that oversees the work of the NextGen Joint Planning and Development Office is chaired by the Secretary of Transportation and includes representatives from the FAA, NASA, DOD, the Department of Commerce, the Department of Homeland Security, and the White House Office of Science and Technology Policy. The NextGen Program is executing a multiagency research and development plan to improve the NAS. The Senior Policy Committee views the integration of UAS into the NAS as a national priority and, to that end, in 2012 it published the NextGen Unmanned Aircraft Systems Research, Development and Demonstration Roadmap Version 1.0. As the title suggests, this document describes an approach for coordinating UAS research, development, and demonstration projects across the agencies involved in NextGen.

There are also positive indications of coordination in the recreational or hobbyist unmanned aircraft community. There are many websites that share open-source software and hardware, with open forums that enable widespread sharing of ideas, techniques, and methods for achieving IA operations of small, amateur-built UAS. However, these activities are largely undertaken without consideration of issues such as certification and thus are generating designs and approaches that might be technologically advanced but could be certified for use in the civil aviation system only with great difficulty, if at all. Since these systems are not verified or validated for qualities such as robustness, completeness, and fault tolerance, the ultimate contribution of this sector to the advancement of IA systems in the NAS remains to be seen.

The collaborative interagency and private efforts described above are necessary and could be strengthened to ensure that IA research and development efforts across their full scope (not just those focused on UAS applications) are effectively coordinated and integrated, with minimal duplication of research and without critical gaps. In particular, more effective coordination among relevant organizations in government, academia, and industry would help execute the recommended research projects more efficiently, in part by allowing lessons learned from the development, test, and operation of IA systems to be continuously applied to ongoing activities.

The recommended research agenda would directly address the technology barriers and the regulation and certification barriers. As noted in Table 4.1, although several research projects would address the social and legal issues, the agenda would not address the full range of these issues. In the absence of any other action, resolution of the legal and social barriers will likely take a long time, as court cases are filed to address various issues in various locales on a case-by-case basis, with intermittent legislative action taken in reaction to highly publicized court cases, accidents, and the like. A more timely and effective approach for resolving the legal and social barriers could begin with discussions involving the Department of Justice, FAA, the National Transportation Safety Board, state attorneys general, public interest legal organizations, and aviation community stakeholders. The discussion of some related issues may also be informed by social science research. Given that the FAA is the federal government’s lead agency for establishing and implementing aviation regulations, it is in the best position to take the lead in initiating a collaborative and proactive effort to address legal and social barriers.

___________________

11 FAA, 2013, Integration of Civil Unmanned Aircraft Systems (UAS) in the National Airspace System (NAS) Roadmap, November.

12 FAA, Center of Excellence for Unmanned Aircraft Systems, “Welcome,” http://faa-uas-coe.net/, accessed April 3, 2014.

Executing the research agenda set forth in this report would require significant resources from multiple federal agencies and research organizations. However, substantial advances could be achieved using currently available resources. In large part, the primary responsibilities of specific agencies for given elements of the research agenda will be determined by the resident expertise and specialized research facilities. For example, as noted above, there is one critical, crosscutting challenge that must be overcome to unleash the full potential of advanced IA systems in civil aviation: How can we assure that advanced IA systems, especially those that rely on adaptive/nondeterministic software, will enhance rather than diminish the safety and reliability of the NAS? Executing the research projects that will overcome this critical challenge would require a broad mix of expertise, analytic capabilities, and specialized facilities that are resident at research, development, test, and manufacturing organizations within NASA, the FAA, DOD, industry, and academia. It would also require an ongoing commitment of resources and expertise and a willingness to work together toward a shared goal.

Civil aviation in the United States and elsewhere in the world is on the threshold of profound changes in the way it operates because of the rapid evolution of IA systems. Advanced IA systems will, among other things, be able to operate without direct human supervision or control for extended periods of time and over long distances. As with any rapidly evolving technology, early adopters sometimes get caught up in the excitement of the moment, producing a form of intellectual hyperinflation that greatly exaggerates the promise of things to come and greatly underestimates the costs in money, time, and, in many cases, unintended consequences or complications. While there is little doubt that over the long run the potential benefits of IA in civil aviation will indeed be great, there should be equally little doubt that getting there while maintaining or improving the safety and efficiency of U.S. civil aviation will be no easy matter. Furthermore, because the benefits of advanced IA systems, as well as their unintended consequences, will inevitably be distributed unevenly among stakeholders, those who gain less may have limited enthusiasm for fielding such systems. In any case, overcoming the barriers identified in this report by pursuing the research agenda proposed by the committee is a vital next step, although more work beyond the issues identified here will certainly be needed as the nation ventures into this new era of flight.