Appendix C

Methods Used to Assess ARPA-E

This appendix explains the methods and data used in this report’s assessment of ARPA-E; further detail is provided in Appendixes D and G. The methods used for the committee’s operational and technical assessments were determined largely by the specific charges in the statement of task for this study (Box 1-1 in Chapter 1). As an example, Table C-1 presents the committee’s translation of the first nine bulleted items in the statement of task into research/descriptive and evaluative/expert questions. The former were aimed at illuminating and explaining specific aspects of ARPA-E, whereas the latter were designed to determine the relative merit, worth, or significance of specific aspects of the agency. The first charge in the statement of task, for example, “Evaluate ARPA-E’s methods and procedures to develop and evaluate its portfolio of activities,” implicitly embodies three questions: a research-oriented question focused on describing and understanding the methods and procedures ARPA-E uses to develop and evaluate its portfolio of activities, and two evaluative questions focused on determining the sufficiency and adequacy of these procedures in relation to the agency’s mission and goals. As shown in Table C-1, a single charge often gave rise to multiple research and evaluative questions, so the questions were prioritized according to their relative importance. Next, the committee determined the appropriate methods and corresponding data sources for addressing these questions. The list of data sources was reviewed for redundancy and became the basis for the committee’s data request to ARPA-E (Appendix F). Tables C-2, C-3, and C-4 provide examples of the committee’s mapping of research and evaluative questions to methods and data sources for charges 1, 6, and 9 in Table C-1.

As summarized in Chapter 1, the committee formed four subgroups based on the methods to be used: (1) the Internal Operations Qualitative Data Team, which gathered and analyzed qualitative data, including information obtained through interviews with current and former ARPA-E personnel and U.S. Department of Energy (DOE) officials and discussions with stakeholders at ARPA-E events, such as program kickoffs and interim meetings; (2) the Case Study Team, which conducted three types of case studies (a single focused program, a portfolio of 63 energy storage projects, and 10 individual projects); (3) the Internal Operations Quantitative Data Team, which accessed internal, proprietary ARPA-E data and reported aggregated findings from these data; and (4) the External Quantitative Data Team, which obtained data from external sources, such as the U.S. Patent and Trademark Office’s (USPTO) patent database, publications, and other sources. The sections below describe these methods and the data used in greater detail. As shown in Tables C-2, C-3, and C-4, the data for the research questions were to be obtained primarily from such sources as literature reviews, question-and-answer sessions with ARPA-E staff during panel presentations at committee meetings, conversations with ARPA-E awardees at agency events, interviews with former agency staff, case studies, and event observations. The evaluative questions were to be addressed through the expert assessment of committee members.

TABLE C-1 Translation of the Statement of Task into Research/Descriptive and Evaluative/Expert Questions

| Statement of Task | Corresponding Research Question(s) |
|---|---|
| 1. Evaluate ARPA-E’s methods and procedures to develop and evaluate its portfolio of activities. | Research/Descriptive: 1. What are ARPA-E’s methods and procedures for developing and evaluating its portfolio of activities? Have they continued to evolve? If so, what are the factors that contributed to their evolution? |
| | Evaluative/Expert: 2. Are ARPA-E’s methods and procedures for developing and evaluating its portfolio of activities sufficient for identifying cutting-edge technology projects? |
| | Evaluative/Expert: 3. Have the methods and procedures used by ARPA-E to develop and evaluate its portfolio of activities resulted in measurable contributions to ARPA-E’s mission or stated goals? |
| 2. Examine the appropriateness and effectiveness of ARPA-E programs for providing awardees with nontechnical assistance, such as practical financial, business, and marketing skills. | Research/Descriptive: 1. What are ARPA-E’s activities to provide awardees with nontechnical assistance, such as practical financial, business, and marketing skills? |
| | Research/Descriptive: 2. Compared with awardees that receive less or minimal nontechnical assistance, are awardees that receive more nontechnical assistance experiencing different outputs and outcomes? |
| | Evaluative/Expert: 3. To what extent has the nontechnical assistance, such as practical financial, business, and marketing skills, provided to awardees helped them to bring their technology to market? Helped their technologies to contribute to ARPA-E’s mission or stated goals? |
| | Evaluative/Expert: 4. Has ARPA-E provided the appropriate practical financial, business, and marketing skills for the current challenges and barriers in the technology and commercialization arena? |
| 3. Assess ARPA-E’s recruiting and hiring procedures to attract and retain qualified key personnel. | Research/Descriptive: 1. What are ARPA-E’s recruiting and hiring procedures? |
| | Research/Descriptive: 2. How do the qualifications of key personnel within ARPA-E compare with those of similar personnel within other departments or agencies within DOE? |
| | Evaluative/Expert: 3. To what extent have ARPA-E’s recruiting procedures been successful at attracting and retaining the best and brightest for key roles such as deputy directors? Program directors? |
| 4. Examine the processes, deliverables, and metrics used to assess the short- and long-term success of ARPA-E programs. | Research/Descriptive: 1. What processes, deliverables, and metrics are used by ARPA-E to assess the short- and long-term success of its programs in contributing to ARPA-E’s mission or stated goals (or means)? |
| | Evaluative/Expert: 2. How effective have the processes, deliverables, and metrics been in ensuring that programs are meeting their identified goals? In identifying challenges or difficulties that inhibit ARPA-E’s ability to contribute to its mission or goals? In helping programs to stay on track? |
| 5. Assess ARPA-E’s coordination with other federal agencies and alignment with long-term DOE objectives. | Research/Descriptive: 1. What process does ARPA-E use to coordinate with other federal agencies (including other parts of DOE)? |
| | Research/Descriptive: 2. What other federal agency programs does ARPA-E coordinate with? |
| | Research/Descriptive: 3. How does ARPA-E leverage work from other federal agencies and address unintentional duplicative work? |
| | Evaluative/Expert: 4. How well do ARPA-E’s mission and goals align with long-term DOE objectives? |
| | Evaluative/Expert: 5. Has ARPA-E proactively looked for ways to coordinate its long-term goals with other federal agencies? If so, how successful have these efforts been? |
| 6. Evaluate the success of the focused technology programs in spurring formation of new communities of researchers in specific fields. | Research/Descriptive: 1. What is ARPA-E’s process for creating focused technology programs? |
| | Evaluative/Expert: 2. Have the focused technology programs spurred the formation of new communities of research? If so, how sustainable have these communities been? |
| | Evaluative/Expert: 3. What, if any, significant products and technologies have emerged from these communities of researchers? |
| | Evaluative/Expert: 4. How have the products and technologies from these communities of researchers contributed to ARPA-E’s mission or stated goals? |
| 7. Provide guidance for strengthening the agency’s structure, operations, and procedures. | Research/Descriptive: 1. Outside of DARPA, what are some appropriate models for ARPA-E to consider for strengthening its structure, operations, and procedures? |
| | Evaluative/Expert: 2. Based on the findings from this committee, what are the areas for improving how the agency’s structure, operations, and procedures contribute to its mission and stated goals? |
| 8. Evaluate, to the extent possible and appropriate, ARPA-E’s success at implementing successful practices and ideas utilized by the Defense Advanced Research Projects Agency (DARPA), including innovative contracting practices. | Research/Descriptive: 1. What practices and ideas utilized by DARPA have been most useful and relevant for ARPA-E? |
| | Evaluative/Expert: 2. How successful has ARPA-E been in using practices and ideas utilized by DARPA to create an infrastructure to support high-risk/high-payoff programs and projects and contribute to ARPA-E’s mission and stated goals? |
| 9. Reflect, as appropriate, on the role of ARPA-E, DOE, and others in facilitating the culture necessary for ARPA-E to achieve its mission. | Research/Descriptive: 1. What challenges, if any, does ARPA-E face from DOE in developing an innovative and nimble culture to enable it to achieve its mission? |
| | Evaluative/Expert: 2. How has ARPA-E created a sustained innovative and nimble culture? |
| | Evaluative/Expert: 3. Can aspects of the ARPA-E model be transferred to DOE? If so, what is transferable? |

TABLE C-2 Mapping of Research and Evaluative Questions to Methods and Data Sources for Charge 1

| Research/Evaluation Question | Methodological Approach | Data Sources |
|---|---|---|
| What are ARPA-E’s methods and procedures for developing and evaluating its portfolio of activities? | | ARPA-E: …; FOAs: … |
| Are ARPA-E’s methods and procedures for developing and evaluating its portfolio of activities sufficient for identifying cutting-edge technology projects? | | General: energy system—major opportunities for contribution (from experts & literature): … |
| Have the methods and procedures used by ARPA-E to develop and evaluate its portfolio of activities resulted in measurable contributions to ARPA-E’s mission or stated goals? | Documenting the program development cycle to: … | General and ARPA-E: … |

NOTE: FOA = Funding Opportunity Announcement.

TABLE C-3 Mapping of Research and Evaluative Questions to Methods and Data Sources for Charge 6

| Research/Evaluation Question | Methodological Approach | Data Sources |
|---|---|---|
| 1. What is ARPA-E’s process for creating focused technology programs? | Review of program documentation on focused programs: … | ARPA-E: … |
| 2. Have the focused technology programs spurred the formation of new communities of research? If so, how sustainable have these communities been? | | ARPA-E: … |
| 3. What, if any, significant products and technologies have emerged from these communities of researchers? | | |

TABLE C-4 Mapping of Research and Evaluative Questions to Methods and Data Sources for Charge 9

| Research/Evaluation Question | Methodological Approach | Data Sources |
|---|---|---|
| 1. What challenges, if any, does ARPA-E face from DOE in developing an innovative and nimble culture to enable it to achieve its mission? | | |
| 2. How has ARPA-E created and sustained an innovative and nimble culture? | | |
| 3. Can aspects of the ARPA-E model be transferred to DOE? If so, what is transferable? | | |

The committee formulated a data request to ARPA-E that covered both internal, proprietary information about firms, agency personnel, and processes and nonproprietary information (see Appendix F). ARPA-E worked cooperatively with the committee to provide the requested data. The committee worked with independent consultants to analyze these data, which were anonymized before being provided to the consultants. The committee directed the consultants to perform specific analyses of the anonymized data so that aggregated results could be presented to the entire committee with no risk of revealing proprietary business information. The consultants’ reports are included in Appendix G. Where confidentiality was a concern, ARPA-E allowed the consultants to work in agency offices to gather the data, which were then presented to the committee in aggregated form.

QUALITATIVE DATA ANALYSIS

Individual Consultations

Semistructured individual consultations were conducted with former ARPA-E program directors and with DOE officials who had either worked with ARPA-E during their careers or collaborated on ARPA-E projects. Table C-5 lists the individuals the committee consulted during the course of its assessment.

The questions discussed in these consultations were drawn primarily from the statement of task (see Table C-1) and focused on the following key topics:

- recruiting and hiring procedures used to attract and retain talented personnel;

- coordination with other federal agencies and alignment with DOE objectives;

- guidance for strengthening ARPA-E’s structure, operations, and procedures;

- the role of ARPA-E, DOE, and others in facilitating the culture necessary for ARPA-E to achieve its mission; and

- lessons learned from the operation of ARPA-E that may apply to other DOE programs and factors that Congress should take into consideration in determining the agency’s future.

TABLE C-5 Consultations Conducted for ARPA-E Assessment

| Consulted | Title | Date |

|---|---|---|

| Cadieux, Gena | DOE, Deputy General Counsel for Transactions, Technology, & Contractor Human Resources | 1/15/2016 |

| Chalk, Steven | Deputy Assistant Secretary for Renewable Energy | 1/5/2016 |

| Chu, Steven | Former Secretary of Energy | 2/15/2016 |

| Danielson, David | DOE, Assistant Secretary for Energy Efficiency and Renewable Energy (EERE), and former ARPA-E Program Director | 12/3/2015 |

| Friedmann, Julio | DOE, Principal Deputy Assistant Secretary for the Office of Fossil Energy | 1/6/2016 |

| Gur, Ilan | DOE, former ARPA-E Program Director, currently founder of Cyclotron Road | 9/22/2015 |

| Hoffman, Patricia | DOE Assistant Secretary for the Office of Electricity (OE) | 1/6/2016 |

| Howell, David | DOE, Vehicle Technology Office, Program Manager, and currently Manager, Energy Storage R&D at DOE | 1/27/2016 |

| Johnson, Mark | DOE, Director of the Advanced Manufacturing Office (AMO) and former Program Director at ARPA-E | 2/9/2016 |

| Kolb, Ingrid | DOE, Director of the Office of Management | 1/15/2016 |

| Le, Minh | DOE, Deputy Director, Solar Energy Technologies Office | 1/27/2016 |

| Prasher, Ravi | Lawrence Berkeley National Laboratory (LBNL), Director, Energy Storage and Distributed Resources Division | 1/29/2016 |

| Ramesh, Ramamoorthy | LBNL, Materials Scientist, Department of Materials Science and Engineering, University of California, Berkeley | 1/29/2016 |

| Simon, Horst | LBNL, Deputy Director | 1/29/2016 |

| Smith, Christopher | DOE, Assistant Secretary for Fossil Energy | 1/6/2016 |

Notes from the discussions were analyzed using the qualitative data management software ATLAS.ti (Muhr, 2004). The questions discussed in the consultations provided the basis for the preliminary list of codes used for this analysis. This first iteration of coding was descriptive. Each set of discussion notes (in some cases, more than one set of notes was taken for an interview) was coded using the consultation questions, enabling the committee to categorize the various responses. These codes were later refined and grouped within a “people, process, and culture” framework, which served as the parent code structure. This framework emerged from the subgroup’s review and discussion of the consultation notes, reflected a common response to the question of which factors were important to ARPA-E’s success, and was used for the initial outline of the chapter of this report on ARPA-E’s internal operations (Chapter 3). Because the discussions centered on ARPA-E operations and DOE’s relationship with the agency, the codes were categorized into “Internal to ARPA-E” and “Intersection with DOE,” as shown in Table C-6.

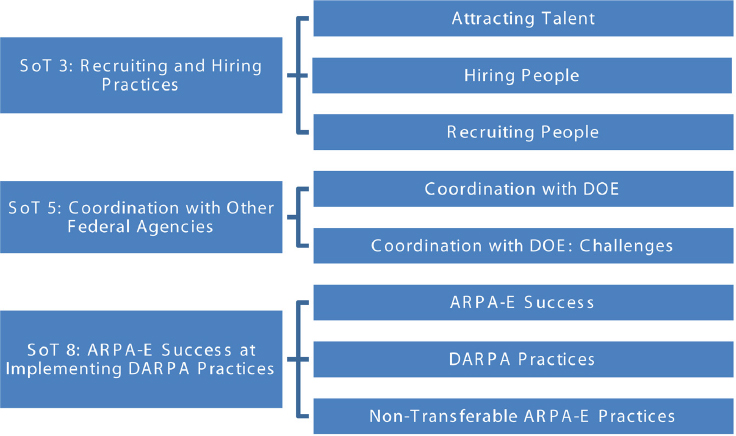

The second iteration of coding was more interpretive, as the committee specifically linked the consultation notes back to the statement of task. The statement of task provided an organizational structure that made it possible to expand these codes as needed and identify important themes related to the key areas listed earlier. Figure C-1 provides an example of how the themes relate back to the statement of task.

Event Observation

In addition to the semistructured interviews discussed above, committee members across all of the subgroups attended the ARPA-E events listed in Table C-7 and talked informally with key stakeholders.

Committee members developed and used an observation protocol to standardize the information gathered during each event. This protocol included two sections for comment—the overall event function and the energy sector issue addressed. The event function section included the following dimensions on which committee members were asked to provide comments and ratings:

- Program director’s program vision—Is there a clear vision for the program? Are the program director’s authority and leadership in this area apparent?

- Participation and representation—Are attendees actively participating? Are key stakeholders present? Are multiple perspectives represented? Are differences in perspectives and opinions openly shared?

- Organization and structure—Is there a clear agenda? Is the event organized and well planned? Are the objectives appropriate for the time allowed?

- Results and actions—Did the event have clear outcomes? Are the results relevant? Are next steps and action items clear? Were the overall purpose and objectives of the event met?

TABLE C-6 Categorization of Questions Used for Individual Consultations

| Internal to ARPA-E | Intersection with DOE |
|---|---|
| ARPA-E Culture: confrontation | Coordination with DOE |
| ARPA-E Culture: risk | Coordination with DOE: challenges |
| ARPA-E Process: active management | DOE Culture: ARPA-E practices |
| ARPA-E Process: budgetary control | DOE Culture: barriers to adopting ARPA-E practices |
| ARPA-E Success | DOE Culture: exportability of ARPA-E practices |
| ARPA-E People: attracting and hiring talent | DOE People: hiring practices |
| ARPA-E People: recruiting | DOE Process: ARPA-E recommendations |
| | Nontransferable ARPA-E practices |

NOTES: SoT = statement of task. Numbers refer to the specific charges in the SoT listed earlier in Table C-1.

TABLE C-7 ARPA-E Events Attended by Committee Members

| Event | Location | Date |
|---|---|---|
| Transportation Fuels Workshop | Denver, CO | Aug. 27–28, 2015 |
| Methane Opportunities for Vehicular Energy (MOVE) Annual Meeting | Denver, CO | Sept. 14–15, 2015 |
| Advanced Research In Dry cooling (ARID) Program Kickoff Meeting | Las Vegas, NV | Sept. 29–30, 2015 |
| Accelerating Low-cost Plasma Heating and Assembly (ALPHA) Program Kickoff Meeting | Santa Fe, NM | Oct. 14, 2015 |
| Generators for Small Electrical and Thermal Systems (GENSETS) Program Kickoff Meeting | Chicago, IL | Oct. 21–22, 2015 |
| Annual Energy Innovation Summit, 2016 | National Harbor, MD | Mar. 1, 2016 |

The energy sector section was designed to capture more substantive information on projects and their potential impact. Committee members were asked to comment on and rate the following:

- Coherent statement of the energy problem to be solved—Was the energy problem easily understood and well researched?

- Potential impact on ARPA-E’s mission—Was there a clear description and understanding of the potential impact on the agency’s goals of reducing imported energy, enhancing energy efficiency, and reducing energy-related emissions?

- Transformative and disruptive approach—Was there a clear understanding of the transformative aspects of the technology on which the event focused? Was the approach innovative and unlikely to be considered for investment through traditional DOE programs?

- Positioning technology for market—Was there a clear plan for uptake of the technology by the market?

Data captured through these observation protocols were shared with and deliberated on by the full committee.

Case Studies

Case studies of one focused program, a portfolio of energy storage projects drawn from several focused programs, and individual projects were undertaken to provide descriptive examples of ARPA-E’s operations and the technical outcomes of its projects and programs. The committee cautions that these case studies may not be representative of all ARPA-E projects because only a limited number of case studies could feasibly be conducted. Nonetheless, the case studies served as a key element in the committee’s weighing of the evidence on the operations and technological impacts of ARPA-E.

As discussed in Chapter 1, ARPA-E had been in existence for 6 years at the time of this evaluation, a short period relative to the life span of energy technologies (Powell and Moris, 2002).1 Thus, the findings from these case studies may not fully reflect the maturation of the respective technologies and their subsequent potential impact. Moreover, most ARPA-E projects are funded for roughly 3 years, so that at the time of this assessment, there were at most two cohorts of cases to examine. Therefore, the focus was on evaluating ARPA-E’s operations to assess whether the selected projects (1) met the agency’s criterion of funding technologies with the potential to be transformational, (2) fell within a white space that is not being funded by industry because of technical and financial uncertainty, and (3) were in accordance with ARPA-E’s mission.

___________________

1 The Advanced Technology Program is similar to ARPA-E, so commercialization timelines are expected to be similar. For successful technologies, commercialization timing varies. The timeline for information technology (IT) projects is roughly 1 year after funding ends. Electronics technologies have some early applications, but then they experience a steep rise in activity in the second year after funding ends, followed by a fall-off more rapid than that in any other technology area except IT. Materials-chemistry and manufacturing-based applications build up somewhat more slowly and tail off more slowly than electronics and IT. Biotechnologies have an initial spurt of activity in the second year after funding ends, then have another spurt 5 or more years later.

Experts on the committee provided guidance on the meaning of transformative technologies, which enabled assessment of the potential impacts of ARPA-E’s projects. Under this guidance, a technology could be considered transformative on the basis of one or more of the following elements:

- evidence of market penetration and ultimately market domination;

- a scientific discovery or technological development that was previously considered impossible or was totally unexpected, often from blue-sky research (although a major scientific discovery or engineering breakthrough is not sufficient to produce a transformative technology, as the transformative nature of a technology ultimately can be measured only in the marketplace or society); and/or

- evidence of deployment along a commercialization pathway as part of this transformation.

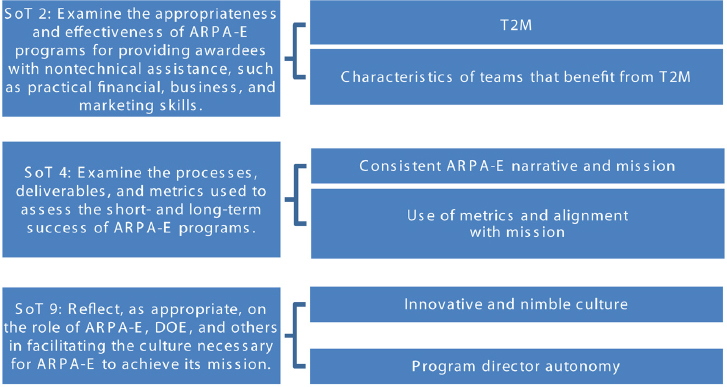

The case studies address in full or in part three specific charges in the statement of task, shown on the lefthand side of Figure C-2, and covered topics shown on the righthand side of the figure.

As noted above, the committee conducted three types of case studies: (1) a single program (SWITCHES [Strategies for Wide-Bandgap, Inexpensive Transistors for Controlling High-Efficiency Systems]), (2) a portfolio of 63 projects related to energy storage technologies, and (3) 10 individual projects. The case studies are described in Chapter 4 and presented in Appendix D.

The committee undertook a blend of illustrative and explanatory case studies.2 Case study information was collected through review of ARPA-E materials (program descriptions, funding announcements, and other multimedia

___________________

2 Illustrative case studies are anecdotes that bring “immediacy, convincingness, and attention-getting” qualities to a study (GAO, 1990). They are often used to complement quantitative data by providing examples of overall findings. Morra and Friedlander (n.d.) note that illustrative case studies tend to have a narrower focus than explanatory cases. Explanatory case studies explain the relationships among program components and help identify performance measures or pose hypotheses for further evaluation research. Explanatory case studies are designed to test and explain causal links when the complexity involved cannot be captured by a survey or experimental approach (Yin, 1984, 2013).

NOTE: SoT = statement of task; T2M = technology-to-market.

information) and external materials (primarily websites, popular press and journal articles, and occasionally Securities and Exchange Commission filings), as well as various metrics and other quantitative data. Information also was obtained from public presentations made to the committee by ARPA-E program directors and through interactions with performers at various venues, including the annual Energy Innovation Summit.

Semistructured conversational interviews were conducted to ensure that similar information would be collected in each interview while also allowing unique or interesting ideas (referred to as “surprises”) to emerge. The case study write-ups in Appendix D reflect an attempt to address the same interview questions so that patterns and differences could be identified across case studies (Caudle, 1994). The External Quantitative Data Team provided other indicators, such as counts of publications and patents. In a few cases, discussions also were held with the ARPA-E program directors who oversaw the case study projects.

Each case study interview involved the ARPA-E performer (generally the project’s principal investigator) and often at least one other project team member. The committee team consisted of two members (a scientist or engineer and a social scientist) and typically one or two members of the National Academies staff involved in the study. The interviews were held by phone and lasted from 30 to 60 minutes. The case studies also include an expert assessment of the technology developed during the project and its potential to be transformative, provided by a committee member.

Generally, the technical committee member led the discussion, while the social scientist asked clarifying questions. The discussion covered the following topics: the state of the research before applying to ARPA-E; reasons for responding to the FOA; examples of interactions with ARPA-E program directors and the technology-to-market (T2M) team; and other thoughts or insights the performer wished to provide. Box C-1 presents the list of questions used to guide the conversations with performers across the case studies and ensure coverage of a consistent set of topics.

As time permitted, other topics were discussed, such as the performer’s plans to move the technology to the next stage of research or to the market, key lessons from the project, mechanisms for industry interaction and feedback concerning the technology, examples of how ARPA-E interactions led to new collaborations that likely would not otherwise have occurred, and the performer’s experience with the ARPA-E Energy Innovation Summit. Each case study was reviewed and approved by the performers who had participated in its development.

INTERNAL AND EXTERNAL QUANTITATIVE METHODS

Quantitative Analysis of Data from ARPA-E’s Internal Project Management System

A central question in the statement of task for this study was the degree to which ARPA-E has internalized active management practices similar to those used by DARPA in its selection, development, and evaluation of projects. In addition to determining whether ARPA-E used active management practices, the committee sought to assess whether these practices had an impact on internal and external measures of project success. To address these questions, the committee worked with external consultants to investigate ARPA-E’s practices for project selection and management and their impact on project outcomes through a detailed analysis of internal data provided by the agency. The consultants delivered a working paper (Goldstein and Kearney, 2016) that presents this analysis in detail.3

The quantitative analysis of ARPA-E’s project management data conducted by the consultants addresses the following charges in the statement of task: (1) evaluate ARPA-E’s methods and procedures for developing and evaluating its portfolio of activities; (2) examine the appropriateness and effectiveness of ARPA-E programs for providing awardees with nontechnical assistance, such as practical financial, business, and marketing skills; (3) examine the processes, deliverables, and metrics used to assess the short- and long-term success of ARPA-E programs; and (4) evaluate, to the extent possible and appropriate, ARPA-E’s success at implementing successful practices and ideas utilized by DARPA, including innovative contracting practices.

To characterize and better understand the range of practices used for active management at ARPA-E, Goldstein and Kearney (2016) analyzed a large set of ARPA-E data consisting of anonymized information for all concept paper applications; full-proposal applications; review scores for all full proposals; binary selection information; quarterly progress reports for each project; and outcome metrics associated with each project, including patent applications, publications, and a series of indicators for market engagement (e.g., follow-on funding).

Using the information on external reviewer scores, the consultants determined which projects would have been funded on the basis of those scores alone and which projects were selected through ARPA-E’s active selection process, which, as discussed previously, gives the program manager significant discretion (as overseen by the Merit Review Board) to select projects. The consultants then compared the outcomes of funded projects with lower external scores with those of funded projects with higher scores.
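The score-only comparison described above can be illustrated with a minimal Python sketch. It assumes, hypothetically, that within each program a score-only process would have funded the top k proposals by mean external score, where k is the number ARPA-E actually selected, and that higher scores are better. The `Proposal` record, its field names, and `classify_selections` are illustrative only; Goldstein and Kearney (2016) may have defined the cutoff differently.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Proposal:
    # Hypothetical record for one full proposal; field names are illustrative.
    proposal_id: str
    program: str
    mean_score: float  # mean external reviewer score (assumed: higher is better)
    selected: bool     # actual ARPA-E selection decision


def classify_selections(proposals):
    """Label each selected proposal relative to a score-only funding cutoff.

    Assumption: within each program, a score-only process would have funded
    the top-k proposals by mean score, where k is the number ARPA-E actually
    selected. sorted() is stable, so tied scores keep their input order.
    """
    by_program = defaultdict(list)
    for p in proposals:
        by_program[p.program].append(p)

    labels = {}
    for program_proposals in by_program.values():
        k = sum(p.selected for p in program_proposals)
        ranked = sorted(program_proposals, key=lambda p: p.mean_score, reverse=True)
        score_only = {p.proposal_id for p in ranked[:k]}
        for p in program_proposals:
            if p.selected:
                labels[p.proposal_id] = (
                    "within score cutoff"
                    if p.proposal_id in score_only
                    else "selected below score cutoff"
                )
    return labels


if __name__ == "__main__":
    demo = [
        Proposal("A", "P1", 4.5, True),
        Proposal("B", "P1", 4.0, False),
        Proposal("C", "P1", 3.0, True),
    ]
    print(classify_selections(demo))
    # {'A': 'within score cutoff', 'C': 'selected below score cutoff'}
```

Projects labeled "selected below score cutoff" correspond to the lower-scored funded projects whose outcomes would then be compared with those of higher-scored projects.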

The consultants collected information on various characteristics of program managers, such as the year they received their undergraduate degree and the number of years they spent in either an industry or academic position before joining ARPA-E. The consultants also looked at the number of program director changes and the rate of such changes (number of changes divided by project duration in years). Additionally, the consultants calculated the fraction of a project managed by each program director.
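The program director metrics above (number of changes, changes per project-year, and each director’s share of a project) can be sketched in Python as follows. This is an illustration under stated assumptions, not the consultants’ code: it assumes each project’s director assignments are available as non-overlapping dated tenures, and it interprets “fraction of a project managed” as a share of calendar days. The `Assignment` type and `director_metrics` function are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class Assignment:
    # Hypothetical record: one program director's tenure on a project.
    director: str
    start: date
    end: date


def director_metrics(assignments):
    """Return (number of changes, changes per year, share of days by director).

    Assumes tenures do not overlap and together span the project, so the
    project duration is taken as the first start to the last end.
    """
    if not assignments:
        return 0, 0.0, {}
    ordered = sorted(assignments, key=lambda a: a.start)
    # A change occurs whenever consecutive tenures belong to different directors.
    changes = sum(
        1 for prev, cur in zip(ordered, ordered[1:]) if prev.director != cur.director
    )
    duration_years = (ordered[-1].end - ordered[0].start).days / 365.25
    rate = changes / duration_years if duration_years > 0 else 0.0

    days_by_director = defaultdict(int)
    for a in ordered:
        days_by_director[a.director] += (a.end - a.start).days
    total_days = sum(days_by_director.values())
    fractions = (
        {d: n / total_days for d, n in days_by_director.items()} if total_days else {}
    )
    return changes, rate, fractions


if __name__ == "__main__":
    tenures = [
        Assignment("PD-1", date(2012, 1, 1), date(2013, 1, 1)),
        Assignment("PD-2", date(2013, 1, 1), date(2015, 1, 1)),
    ]
    print(director_metrics(tenures))
```

In this example, one change over a roughly 3-year project gives a rate of about 0.33 changes per year, with the first director managing about one-third of the project’s days.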

___________________

3 This working paper, provided in Appendix G, includes a more detailed description of the data collected, the methodologies used, the analysis, and the authors’ conclusions.

The consultants also gathered data on changes in each project’s negotiated milestones from the quarterly reports ARPA-E requires, to determine whether program managers were using active program management. Program managers can adjust project milestones, budgets, and timelines. A milestone change may be recorded as a change in the text of a milestone, or the milestone itself may be deleted and replaced with a new one. The consultants were able to observe only deletions and new milestones, not revisions made under the same milestone number. Thus, the information on milestone changes gathered by the consultants likely underestimates the number of milestone changes.
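The measurement limitation described above can be made concrete with a short Python sketch. It assumes, hypothetically, that each quarterly report can be represented as a mapping from milestone number to milestone text; the function names are illustrative. The first function counts only what the consultants could observe (milestone numbers that disappear or appear), while the second counts the same-number text revisions that such an approach misses.

```python
def observed_milestone_changes(reports):
    """Count milestone numbers that disappear or appear between quarterly reports.

    `reports` is a hypothetical chronological list of dicts, one per quarterly
    report, mapping a milestone number to its text. Each deleted number and
    each new number counts as one observed event.
    """
    observed = 0
    for prev, cur in zip(reports, reports[1:]):
        observed += len(prev.keys() - cur.keys())  # milestones deleted
        observed += len(cur.keys() - prev.keys())  # milestones added
    return observed


def hidden_text_revisions(reports):
    """Count text revisions made under an unchanged milestone number.

    These are invisible to a count based only on added or deleted numbers,
    which is why such a count understates milestone changes.
    """
    hidden = 0
    for prev, cur in zip(reports, reports[1:]):
        hidden += sum(1 for m in prev.keys() & cur.keys() if prev[m] != cur[m])
    return hidden


if __name__ == "__main__":
    quarterly = [
        {1: "Demonstrate 10 W output", 2: "Build bench prototype"},
        {1: "Demonstrate 20 W output", 2: "Build bench prototype"},  # text revised
        {1: "Demonstrate 20 W output", 3: "Begin field test"},  # 2 replaced by 3
    ]
    print(observed_milestone_changes(quarterly))  # 2
    print(hidden_text_revisions(quarterly))  # 1
```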

The data collected on project (or program manager) characteristics were then compared with various internal and external outcome measures, including an internal status update on the project’s technical, cost, schedule, and overall performance. The external outcome measures included publications. As discussed further below, the consultants also created a publications dataset by searching Web of Science (WOS) for all publications through December 31, 2015, that cite the award or work authorization number of an ARPA-E project (see Goldstein, 2016, for further detail on the methods used to collect publication data). Highly cited publications are defined as publications in the top 1 percent of most cited papers in the same research field and year. Top journal publications are defined as publications in the top 40 journals in science and engineering fields, ranked by number of highly cited papers. Energy journal publications are those in journals from the WOS subject category Energy and Fuels. The consultants created variables in the projects dataset for the total number of publications resulting from each project, as well as the number of each of the three types of publications defined above. The methods used in collecting information on publications, patents, and market engagement are explained in more detail in the next section.
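The publication variables above can be sketched in Python under several loudly stated assumptions. The highly cited threshold is computed here from a hypothetical comparison population of (field, year, citations) records, taking the lowest citation count inside the top 1 percent of each field-year group; papers tied at that count are also flagged, so ties can push the flagged share slightly above 1 percent. The list of top 40 journals is assumed to be supplied externally as `top_journals`. All names and dictionary keys are illustrative, and WOS’s own procedure may differ.

```python
import math
from collections import defaultdict


def top_percent_thresholds(baseline, percent=1.0):
    """Lowest citation count inside the top `percent` of papers per (field, year).

    `baseline` is a hypothetical iterable of (field, year, citations) tuples
    covering the whole comparison population, not only ARPA-E papers.
    """
    groups = defaultdict(list)
    for field, year, citations in baseline:
        groups[(field, year)].append(citations)
    thresholds = {}
    for key, counts in groups.items():
        counts.sort(reverse=True)
        k = max(1, math.ceil(len(counts) * percent / 100))  # size of the top group
        thresholds[key] = counts[k - 1]
    return thresholds


def publication_counts(project_pubs, thresholds, top_journals,
                       energy_category="Energy and Fuels"):
    """Per-project total, highly cited, top-journal, and energy-journal counts.

    `project_pubs` maps a project ID to a list of dicts with the hypothetical
    keys 'field', 'year', 'citations', 'journal', and 'category'.
    """
    result = {}
    for project, pubs in project_pubs.items():
        highly_cited = sum(
            1
            for p in pubs
            if (p["field"], p["year"]) in thresholds
            and p["citations"] >= thresholds[(p["field"], p["year"])]
        )
        result[project] = {
            "total": len(pubs),
            "highly_cited": highly_cited,
            "top_journal": sum(1 for p in pubs if p["journal"] in top_journals),
            "energy_journal": sum(1 for p in pubs if p["category"] == energy_category),
        }
    return result


if __name__ == "__main__":
    baseline = [("Materials", 2013, c) for c in range(200)]  # synthetic population
    thresholds = top_percent_thresholds(baseline)
    print(thresholds)  # {('Materials', 2013): 198}
    pubs = {"P1": [
        {"field": "Materials", "year": 2013, "citations": 250,
         "journal": "J1", "category": "Energy and Fuels"},
        {"field": "Materials", "year": 2013, "citations": 5,
         "journal": "J2", "category": "Physics"},
    ]}
    print(publication_counts(pubs, thresholds, top_journals={"J1"}))
```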

An additional external outcome measure looked at patents. As part of their cooperative agreement, awardees are required to acknowledge ARPA-E support in any patents, as well as to report intellectual property to DOE. ARPA-E has, in collaboration with the DOE General Counsel’s office, collected data on invention disclosures, patent applications, and patents reported as a result of each ARPA-E award. The consultants obtained counts of these outcomes for each award through the end of 2015, as well as the filing dates of patent applications and the issue dates of patents.

The external outcome measures also included market engagement metrics tracked by ARPA-E. Each spring, to coincide with the annual Energy Innovation Summit, ARPA-E publishes a list of projects that have (1) received follow-on private funding, (2) generated additional government partnerships, and (3) led to the formation of companies.4 In addition, the consultants obtained separately from ARPA-E a list of awards that have led to (4) initial public offerings (IPOs), (5) acquisitions, or (6) commercial products. These market engagement metrics are those reported by awardees as being directly attributable to ARPA-E support as of February 2016. They were used to create a dummy variable for each of the six market engagement metrics, as well as a dummy indicating that at least one market engagement metric had been met. The consultants also obtained data on the amount of private funding reported by the awardee in each year and in total.

___________________

4 Company formation for these purposes includes start-up company awardees for which the ARPA-E award was their first funding.

Finally, the consultants created two additional metrics combining the three categories of external outputs (publications, inventions, and market engagement) that were also used in the external data analysis described below. The first was a “minimum standard” variable capturing whether a project had at least one publication, at least one patent application, or some form of market engagement (as defined by the six market engagement metrics described in the preceding paragraph). The second was a dummy variable for projects that had met all three of these standards.
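The market engagement dummies and the two composite metrics can be sketched for a single project as follows. The metric labels are hypothetical stand-ins for the six market engagement metrics; the composites follow the definitions in the text: a “minimum standard” met by any one of the three output categories, and a second indicator requiring all three.

```python
# Hypothetical labels for the six market engagement metrics.
MARKET_METRICS = (
    "private_funding",
    "government_partnership",
    "company_formation",
    "ipo",
    "acquisition",
    "commercial_product",
)


def outcome_dummies(metrics_met, n_publications, n_patent_applications):
    """Build 0/1 indicators for one project.

    metrics_met: set of MARKET_METRICS labels the project is credited with.
    """
    d = {m: int(m in metrics_met) for m in MARKET_METRICS}
    has_market = any(d.values())  # at least one market engagement metric met
    has_pub = n_publications >= 1
    has_patent = n_patent_applications >= 1
    d["any_market_engagement"] = int(has_market)
    # "Minimum standard": at least one of the three output categories.
    d["minimum_standard"] = int(has_pub or has_patent or has_market)
    # All three output categories.
    d["all_three"] = int(has_pub and has_patent and has_market)
    return d


print(outcome_dummies({"ipo"}, 0, 0)["minimum_standard"])        # 1
print(outcome_dummies({"acquisition"}, 3, 1)["all_three"])       # 1
```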

Quantitative Analysis of Publicly Available Data

The quantitative analysis of publicly available data addressed parts of the statement of task related to the technical assessment: to identify where ARPA-E projects have made an important difference, and to help assess the performance of the portfolio of awards and evaluate whether and to what extent the portfolio has helped ARPA-E achieve its goals. Committee members directed a consultant to conduct a detailed analysis of the impact of ARPA-E compared with that of DOE’s Office of Science and Office of Energy Efficiency and Renewable Energy (EERE). The consultant created a dataset of all awards offered by the three agencies using publicly available data. This dataset links award-level specifications for recipient type, amount of funding, and project length to publicly available outcomes credited to each award. The sample includes data on patents, patent quality, publications, and publication quality.

The consultant created the dataset using award data from the Data Download page of USAspending.gov. Run by the Department of the Treasury, USAspending.gov provides publicly accessible data on all federal awards, in accordance with the Federal Funding Accountability and Transparency Act of 2006.5 The consultant downloaded all transactions made to prime recipients of grants or “other financial assistance” from DOE in fiscal years (FY) 2009–2015. These transactions include grants and cooperative agreements and exclude contracts and loans.

The exclusion of contracts is important to note because contracts are the primary mechanism through which DOE funds research and development (R&D) at the national laboratories. Legally defined, contracts are used for government procurement of property or services, while grants and cooperative agreements are used to provide support to recipients, financial or otherwise.6 A cooperative agreement is different from a grant in that it entails “substantial involvement” between the agency and the recipient. Given that ARPA-E uses cooperative agreements as its primary mechanism for distributing funds, grants and cooperative agreements were the most relevant choice for this comparison.

___________________

5 Federal Funding Accountability and Transparency Act of 2006, 109th Cong., 2d sess. (2005-2006).

6 41 U.S.C. § 501 et seq.

Each transaction downloaded from USAspending.gov is associated with an award ID (or award number), which is a string of up to 16 characters; multiple transactions are associated with most awards. Award numbers begin with the prefix “DE,” followed by a two-letter code indicating the office or program where the award originated. This system for assigning award numbers appears to have become standard for DOE assistance beginning in FY 2009.
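A minimal sketch of reading the originating office from an award number follows. The two-letter codes in the mapping are assumed for illustration (the text does not list them), and hyphens are stripped because the sketch does not assume a single formatting convention for award IDs.

```python
from typing import Optional

# Illustrative subset of two-letter codes; assumed for this sketch, not an official list.
OFFICE_CODES = {"AR": "ARPA-E", "SC": "Office of Science", "EE": "EERE"}


def originating_office(award_id: str) -> Optional[str]:
    """Return the office implied by a DOE assistance award number, or None if not recognized."""
    raw = award_id.strip().upper()
    if len(raw) > 16:
        return None  # longer than the 16-character award ID field
    compact = raw.replace("-", "")
    if not compact.startswith("DE"):
        return None
    # Characters after the "DE" prefix carry the two-letter office or program code.
    return OFFICE_CODES.get(compact[2:4])


for award in ["DE-AR0000123", "DE-SC0001234", "DE-EE0005678", "XX-AR0000123"]:
    print(award, originating_office(award))
```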

Many of the transactions are duplicates; therefore, the data were consolidated into a single entry per award reflecting total funding. Further filtering was necessary to limit the dataset to awards related to energy R&D: the consultant categorized each award by its Catalog of Federal Domestic Assistance (CFDA) number and excluded awards not related to R&D activities, such as block grants to city governments for energy-efficiency upgrades.
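The consolidation and R&D filtering steps might look like the following sketch. It assumes, hypothetically, that each transaction row carries an award number, a modification number, a CFDA number, and an amount, and that exact repeats can be identified by award and modification number. The CFDA values in the example are placeholders, not the consultant’s actual classification.

```python
from collections import defaultdict


def consolidate_awards(transactions, rd_cfda):
    """Return {award_id: total funding} for awards whose CFDA number is in rd_cfda.

    Each transaction is a dict with hypothetical keys 'award_id', 'mod_number',
    'cfda', and 'amount'.
    """
    seen = set()
    totals = defaultdict(float)
    cfda_of = {}
    for t in transactions:
        key = (t["award_id"], t["mod_number"])
        if key in seen:  # skip an exact repeat of a transaction already counted
            continue
        seen.add(key)
        totals[t["award_id"]] += t["amount"]
        cfda_of[t["award_id"]] = t["cfda"]
    # Keep only awards classified as R&D by their CFDA number.
    return {a: total for a, total in totals.items() if cfda_of[a] in rd_cfda}


# Placeholder CFDA values: "00.001" stands for an R&D program, "00.002" for a non-R&D one.
transactions = [
    {"award_id": "DE-AR0000001", "mod_number": 0, "cfda": "00.001", "amount": 1000.0},
    {"award_id": "DE-AR0000001", "mod_number": 1, "cfda": "00.001", "amount": 500.0},
    {"award_id": "DE-AR0000001", "mod_number": 1, "cfda": "00.001", "amount": 500.0},  # repeat
    {"award_id": "DE-EE0000002", "mod_number": 0, "cfda": "00.002", "amount": 2000.0},
]
print(consolidate_awards(transactions, rd_cfda={"00.001"}))  # {'DE-AR0000001': 1500.0}
```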

The resulting dataset (referred to as the “DOE dataset”) contained 5,896 awards, 263 of which were from ARPA-E. None of the FY 2009 awards were from ARPA-E, because the first ARPA-E awards began in December 2009, which falls in FY 2010. Together, the Office of Science and EERE accounted for a majority (80 percent) of the awards in the dataset. The consultant reported the mean and standard deviation of each variable (patents, patent quality, publications, and publication quality) by office.
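Descriptive statistics by office, of the kind the consultant reported, can be computed with Python’s standard `statistics` module. This sketch uses hypothetical field names and toy values; the text does not say whether the sample or population standard deviation was used, and the sketch assumes the sample version.

```python
import statistics
from collections import defaultdict


def summarize_by_office(awards, variable):
    """Return {office: (mean, sample standard deviation)} for one award-level variable.

    The standard deviation is None when an office has a single award.
    """
    groups = defaultdict(list)
    for award in awards:
        groups[award["office"]].append(award[variable])
    return {
        office: (statistics.mean(values), statistics.stdev(values) if len(values) > 1 else None)
        for office, values in groups.items()
    }


awards = [
    {"office": "ARPA-E", "patents": 0},
    {"office": "ARPA-E", "patents": 2},
    {"office": "ARPA-E", "patents": 4},
    {"office": "EERE", "patents": 1},
]
print(summarize_by_office(awards, "patents"))
```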

As noted, publication outputs for DOE awards were obtained from WOS, a subscription-based product from Thomson Reuters with information on scientific publications and citations. The dataset was derived from the WOS Science Citation Index-Expanded. All searches were automated using the WOS application programming interface (API). For each award in the DOE dataset, the consultant searched for the award number in the Funding Text field of the WOS publication records. Each search returned a list of all articles and conference proceedings (collectively referred to here as papers) that acknowledged that award number. The consultant included bibliographic data for all papers published through December 31, 2015, that acknowledge an award in the DOE dataset. Separate searches were submitted to obtain the references within each paper, as well as the citations made to each paper between April 1, 2009, and December 31, 2015. The publication outputs of each award were added to the DOE dataset. A paper-level dataset also was created, containing only those papers that acknowledge an award in the DOE dataset. There were 9,152 papers in this set, 56 of which jointly acknowledge two DOE offices; 561 papers acknowledge an ARPA-E award. The committee notes that this dataset has limitations: a significant number of technical publications may not be indexed in the WOS Science Citation Index-Expanded, so this source may undercount publications stemming from ARPA-E-funded research.
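The matching of award numbers to funding acknowledgments can be sketched as follows. The sketch works on funding text that has already been retrieved rather than calling the WOS API. Normalizing both sides (removing hyphens, spaces, and punctuation) before a substring search is an assumption of this sketch, and the award numbers and texts are invented for illustration.

```python
import re


def normalize(s: str) -> str:
    """Upper-case and drop everything except letters and digits."""
    return re.sub(r"[^A-Z0-9]", "", s.upper())


def paper_level_dataset(funding_texts, award_office):
    """Map each paper that acknowledges at least one award to the set of offices it acknowledges.

    funding_texts: {paper_id: funding text}; award_office: {award_id: office}.
    """
    awards = {normalize(a): office for a, office in award_office.items()}
    dataset = {}
    for paper_id, text in funding_texts.items():
        t = normalize(text)
        offices = {office for a, office in awards.items() if a in t}
        if offices:  # papers acknowledging no award in the dataset are dropped
            dataset[paper_id] = offices
    return dataset


award_office = {"DE-AR0000123": "ARPA-E", "DE-SC0004567": "Office of Science"}
funding_texts = {
    "p1": "Supported by ARPA-E under award DE-AR0000123.",
    "p2": "Funded by DOE awards DE-AR0000123 and DE-SC0004567.",
    "p3": "No federal funding.",
}
papers = paper_level_dataset(funding_texts, award_office)
joint = sum(len(offices) > 1 for offices in papers.values())
print(len(papers), joint)  # 2 1
```

A plain substring search can also match an award number embedded in a longer string, so a production pipeline would likely need a stricter matching rule.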

To determine whether a publication was highly cited, and to classify the journals in which papers appeared, additional information was downloaded from Thomson Reuters to supplement the publication analysis: (1) a list of all indexed journals, each assigned to one of 22 categories; (2) citation thresholds for highly cited papers in each category by publication year; (3) a list of top journals with the number of highly cited papers published in each since 2005; and (4) a list of 88 journals classified under the Science Citation Index subject category “Energy and Fuels.”
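A sketch of how the four downloaded lists could be used to flag each paper follows. The journal names, categories, citation counts, and thresholds are hypothetical, and treating a paper that exactly meets its category-year threshold as highly cited is an assumption.

```python
def top_journals(highly_cited_counts, n=40):
    """Return the n journals with the most highly cited papers (ties keep input order)."""
    ranked = sorted(highly_cited_counts, key=highly_cited_counts.get, reverse=True)
    return set(ranked[:n])


def paper_flags(paper, journal_category, thresholds, top, energy_journals):
    """Flag one paper as highly cited, in a top journal, and/or in an energy journal.

    thresholds maps (category, publication year) to the minimum citation count
    for the top 1 percent; treating the threshold as inclusive is an assumption.
    """
    category = journal_category.get(paper["journal"])
    threshold = thresholds.get((category, paper["year"]))
    return {
        "highly_cited": threshold is not None and paper["citations"] >= threshold,
        "top_journal": paper["journal"] in top,
        "energy_journal": paper["journal"] in energy_journals,
    }


# Hypothetical inputs standing in for the downloaded lists (1) through (4).
journal_category = {"Journal A": "Chemistry", "Journal B": "Engineering"}
thresholds = {("Chemistry", 2012): 150, ("Engineering", 2012): 80}
top = top_journals({"Journal A": 500, "Journal B": 20, "Journal C": 300}, n=2)
energy = {"Journal B"}

flags = paper_flags({"journal": "Journal A", "year": 2012, "citations": 200},
                    journal_category, thresholds, top, energy)
print(flags)  # {'highly_cited': True, 'top_journal': True, 'energy_journal': False}
```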

In the paper-level dataset, 9 percent of papers were in journals classified under Energy and Fuels, 23 percent were in a top journal, and 5 percent were highly cited for a given field and year. The most prevalent journal categories were Chemistry, Physics, Engineering, and Materials Science; 71 percent of the papers were in one of these four categories.

USPTO maintains a publicly accessible website with the full text of all patents issued since 1976, which was used to determine the patenting activity associated with DOE awards. All searches of the USPTO site were automated using an HTML web scraper. For each award in the DOE dataset, the consultant searched for the award number in the Government Interest field of all USPTO records. Then, for each patent granted through December 31, 2015, that acknowledges an award in the DOE dataset, the consultant extracted the patent title, patent number, date, and number of claims from the patent’s web page. Separate searches also were conducted to obtain citations made to each of these patents through December 31, 2015. The patenting outputs associated with each award were added to the DOE dataset.
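Where the Government Interest text is available, award numbers can be extracted with a regular expression and intersected with the DOE dataset, as in the sketch below. The pattern assumes award numbers of the form “DE,” an optional hyphen, a two-letter code, and seven digits; that shape is an assumption for illustration, and the sketch does not reproduce the consultant’s web scraper.

```python
import re

# Assumed shape of a DOE assistance award number: "DE", an optional hyphen,
# a two-letter office code, and seven digits (e.g., DE-AR0000123).
AWARD_PATTERN = re.compile(r"\bDE-?([A-Z]{2})(\d{7})\b")


def awards_in_government_interest(text, doe_awards):
    """Return the DOE-dataset awards cited in a patent's Government Interest text."""
    found = {f"DE-{code}{digits}" for code, digits in AWARD_PATTERN.findall(text.upper())}
    return found & doe_awards  # keep only awards that are in the DOE dataset


doe_awards = {"DE-AR0000123", "DE-EE0000001"}
text = ("This invention was made with government support under Award No. "
        "DE-AR0000123 and DE-SC0004567 awarded by the Department of Energy.")
print(awards_in_government_interest(text, doe_awards))  # {'DE-AR0000123'}
```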

A patent-level dataset also was created on the basis of distinct patents that acknowledge an award in the DOE dataset. Patent databases often lack identifying contract information in the government interest section, so the patent count may also be understated here (Jaffe and Lerner, 2001). There were 392 patents in this set, 2 of which jointly acknowledged two DOE offices; 75 patents acknowledged an ARPA-E award.

Separately from the DOE data that were downloaded from USAspending.gov, ARPA-E provided the committee with data on its award history, which were current as of September 17, 2015. These data were organized by project and included project characteristics specific to ARPA-E, such as which technology program funded the project. As discussed in Chapter 2, programs that are broadly solicited across all technology areas are considered “open”; there have been three such programs in ARPA-E—OPEN 2009, OPEN 2012, and OPEN 2015.

Data provided by ARPA-E on awardees’ organization type were supplemented with each company’s founding year, obtained from public data sources (e.g., state government registries). Awardee companies were categorized as start-ups if they had been founded 5 years or less before the start of the project; all other business awardees were categorized as established companies. Additionally, the partnering organizations listed on each project were coded as universities, private entities (for-profit and nonprofit), or government affiliates (including national laboratories and other state/federal agencies).
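The start-up rule reduces to a comparison of years. This sketch assumes that founding year and project start year are both available as integers; the text does not specify finer date granularity, and the function name is hypothetical.

```python
def business_category(founding_year: int, project_start_year: int) -> str:
    """Classify a business awardee as 'start-up' (founded 5 years or less
    before the project start) or 'established'."""
    years_before_start = project_start_year - founding_year
    return "start-up" if years_before_start <= 5 else "established"


print(business_category(2007, 2012))  # start-up (founded exactly 5 years before)
print(business_category(2006, 2012))  # established
```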

Two steps were taken to narrow the scope of ARPA-E awards considered in this analysis, both of which made the ARPA-E dataset more comparable to the DOE dataset:

- Projects with an end date after September 30, 2015 (that is, projects still active at the end of FY 2015), were removed.

- Projects led by national laboratories were also removed.

Excluding active projects allowed a fair comparison among projects that had completed their negotiated R&D activities. The early outputs of ongoing projects, some of which had just begun at the end of FY 2015, would not have accurately represented their productivity. This step had a dramatic effect on the size of the dataset: more than half of the projects initiated by ARPA-E as of September 30, 2015, were still active at that time. Projects led by national laboratories are funded by ARPA-E through work authorizations under the management and operations contract between DOE and the laboratory. Many of these projects are in fact subprojects of a parent project led by a different organization. In these cases, the outputs of a project are likely to acknowledge the award number of the parent project rather than the national laboratory contract itself. As a result, it was not possible to accurately assess the output of the laboratory-led projects individually.
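The two exclusions (projects still active after September 30, 2015, and projects led by national laboratories) can be expressed as a single filter. The field names and example records below are hypothetical.

```python
from datetime import date

FY2015_END = date(2015, 9, 30)  # last day of FY 2015


def comparable_projects(projects):
    """Keep completed projects (ended by the close of FY 2015) not led by a national laboratory."""
    return [
        p for p in projects
        if p["end_date"] <= FY2015_END and p["lead_type"] != "national_lab"
    ]


projects = [
    {"id": "A", "end_date": date(2014, 6, 30), "lead_type": "university"},
    {"id": "B", "end_date": date(2016, 3, 31), "lead_type": "start-up"},             # still active
    {"id": "C", "end_date": date(2015, 9, 30), "lead_type": "national_lab"},         # lab-led
    {"id": "D", "end_date": date(2015, 9, 30), "lead_type": "established_company"},
]
print([p["id"] for p in comparable_projects(projects)])  # ['A', 'D']
```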

The resulting dataset (“ARPA-E dataset”) contained 208 awards. It is important to note that the observations in the ARPA-E dataset correspond to only a subset of the 263 ARPA-E awards in the DOE dataset, because ARPA-E reports data on a per-project basis and each project may encompass multiple awards (e.g., one to the lead awardee and one to each partner). Another consequence of this difference between award-based and project-based accounting is that awards may have different start and end dates in the two data sources. For both the ARPA-E and DOE datasets, the consultant used the stated start and end dates from each data source to determine project duration and the fiscal year in which the project began.
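Because both datasets rely on stated start and end dates, project duration and starting fiscal year can be derived as in the sketch below. The federal fiscal year rule is standard (FY N begins October 1 of year N-1); expressing duration in years of 365.25 days is an assumption of this sketch, not a documented choice of the consultant. The rule also shows why an award beginning in December 2009 falls in FY 2010.

```python
from datetime import date


def fiscal_year(d: date) -> int:
    """U.S. federal fiscal year: FY N runs from October 1 of year N-1 to September 30 of year N."""
    return d.year + 1 if d.month >= 10 else d.year


def duration_years(start: date, end: date) -> float:
    """Project length in years, using an average year of 365.25 days (an assumption)."""
    return (end - start).days / 365.25


print(fiscal_year(date(2009, 12, 15)))  # 2010
print(fiscal_year(date(2015, 9, 30)))   # 2015
```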

In addition to the patent and publication data described above, the ARPA-E dataset contained information on additional metrics of “market engagement,” as defined by ARPA-E. In a public press release on February 26, 2016, the agency provided lists of projects that had achieved the following outputs: formation of a new company, private investment, government partnership, IPO, and acquisition (ARPA-E, 2016a). These metrics were used as early indicators of market engagement. The consultant analyzed both the likelihood of producing at least one output (patents, publications, or market engagement) and the incidence rate of these outputs, that is, the number produced. The consultant also controlled for award amount and project length in these analyses.

SUMMARY

This appendix has provided details of the committee’s framing of its task and the specific methods used in this assessment, to help the reader understand the operational and technical assessments that appear in Chapters 3 and 4, respectively. In particular, the committee used its individual interviews, observations of ARPA-E events, and quantitative analysis of internal data in the operational assessment described in Chapter 3, and its case studies and quantitative analysis of external data in the technical assessment described in Chapter 4.