3 Past and Current Policies

The committee reviewed existing policies for data and software, and many of the lessons learned can be summarized by the statement, “Software is data, but data are not software.” Software is included in the definition of data (Section 2.1), but software is copyrightable, whereas data are not. The ability to claim copyright is an important distinction that changes how software policies can be implemented versus data policies. As NASA began implementing an open data policy in the 1990s, there was a simultaneous expansion in the volume of data. Initial data policies were limited to the simplistic requirement of a data management plan. As scientists and the data archive centers gained experience, data management plans expanded requirements based on lessons learned from previous data sets (e.g., formal archival centers are a better long-term solution). As NASA open data gained broad acceptance and began to be integrated into unexpected applications, data management plans began to require specific file formats and metadata attributes to ensure consistency across NASA’s diverse data sets. Simultaneously, an appreciation of the need for scientific results to be reproducible began gaining momentum within the national and international communities, and open data policies facilitated this need. The culture around NASA space science data has shifted from keeping data closed to open sharing of data. In many disciplines, not publicly sharing data is now seen as antithetical to the goals of science. This transformation of cultural “norms” in the science community is now beginning with software. It could occur more quickly and gain acceptance more broadly if policy is implemented carefully by reviewing lessons learned from existing policies for both data and software. 
In this chapter, the committee reviews examples of existing data policies, data management plans, and software policies and management plans for NASA Science Mission Directorate (SMD) divisions, other federal agencies, and publishers. Last, scientific journal policies are reviewed as they impact the community through requirements for publication. Only illustrative examples that yielded important lessons to consider are discussed.

3.1 DATA POLICIES

3.1.1 NASA

As discussed in Section 1.1, before the late 1990s, data sharing was cumbersome, involving mailed magnetic tapes, compact disks, or hard drives. The scientist who physically held the data controlled access, thereby limiting scientific advancement and reproducibility of results. Back then, restricting data access, usually to within the science team or individual’s research group, was the accepted practice. With the advent of inexpensive digital storage and fast transfer of information over the Internet, it became easier to share data. In 1994, NASA’s Earth Science Division (ESD) adopted a full and open data policy, with no period of exclusive access beyond initial instrument calibration periods (usually 6 months), and nondiscriminatory access, for all NASA-generated standard products. This initially applied to Earth Science missions but was adopted by the other divisions. Despite initial resistance to publicly releasing data, open data access with few, if any, restrictions became the new accepted practice.

NASA SMD has been a leader in open data and creating the infrastructures necessary for managing, curating, and disseminating the data from its science missions and programs as well as archiving and providing universal access to science data products. NASA describes its activities as archiving

All science mission data products to ensure long-term usability and to promote wide-spread usage by scientists, educators, decision-makers, and the general public . . . to facilitate the on-going scientific discovery process and inspire the public through the body of knowledge captured in these public archives. The archives are primarily organized by science discipline or theme. Communities of practice within these disciplines and themes are actively engaged in the planning and development of archival capabilities to ensure responsiveness and timely delivery of data to the public from the science missions.1

NASA SMD has had numerous programs that fund mission teams and other experts to document and archive mission data and derived products.

One of the most comprehensive data systems is ESD’s Earth Observing System Data and Information System (EOSDIS), which is a core capability in NASA’s Earth Science Data Systems (ESDS) Program. “It provides end-to-end capabilities for managing NASA’s Earth Science data from various sources—satellites, aircraft, field measurements, and various other programs.”2 EOSDIS processes, archives, and distributes data for “studying the Earth system from space and improving prediction of Earth system change. EOSDIS consists of a set of processing facilities and data centers distributed across the United States that serve hundreds of thousands of users around the world.”3

In 2016, NASA updated agency policy for data in NASA Policy Directive (NPD) 2230.1 as follows:

- Ensure public access to the results of federally funded scientific research;

- Affirm NASA’s commitment to public access to information and data arising from technology development programs and projects;4

An implementation example of this policy is given by the 2016 Heliophysics Small Explorer (SMEX) Announcement of Opportunity (AO):

Mission data will be made fully available to the public by the investigator team through a NASA-approved data archive (e.g., the Planetary Data System, Atmospheric Data Center, High Energy Astrophysics Science Archive Research Center, Solar Data Analysis Center, Space Physics Data Facility, etc.), in usable form, in the minimum time necessary but, barring exceptional circumstances, within six months following its collection.5

NASA’s open data policy has led to increased access to public investments in research and driven investments within NASA to develop infrastructure, such as formal data archive centers. Each division within SMD has created its own data center organization that responds to community specific needs. These communities are actively engaged in the planning and development of archival capabilities. The facilities ensure responsiveness and timely delivery of data to the public, provide data archiving, and provide visualization tools that it would be inefficient for individual researchers to create. Infrastructure such as these archival centers advances the public use of NASA data and provides expert guidance for users. The NASA data archive centers facilitate finding and using data for applications and research, resulting in increased use of NASA data.

___________________

1 NASA Open Government Plan, 2010, p. 75, https://www.nasa.gov/pdf/440945main_NASA%20Open%20Government%20Plan.pdf.

2 See https://earthdata.nasa.gov/about.

3 NASA Open Government Plan, 2010, p. 76, https://www.nasa.gov/pdf/440945main_NASA%20Open%20Government%20Plan.pdf.

4 See https://nodis3.gsfc.nasa.gov/displayDir.cfm?t=NPD&c=2230&s=1.

5 See https://nspires.nasaprs.com/external/solicitations/summary.do?method=init&solId={A0C496AC-9B9D-8F7D-A506-B1695BF9BDE8}&path=closedPast.

Lessons Learned: Changes in agency data policies prompted changes in accepted practices regarding sharing of data.

NASA’s investments in infrastructure allowed NASA SMD to realize the benefits from an open data policy by providing a robust and comprehensive data system for the scientific research community, policy makers, and the public to have consistent access to curated data. This simple but powerful mechanism to access and use the data requires a large and sustained investment in this data infrastructure.

3.1.2 USGS

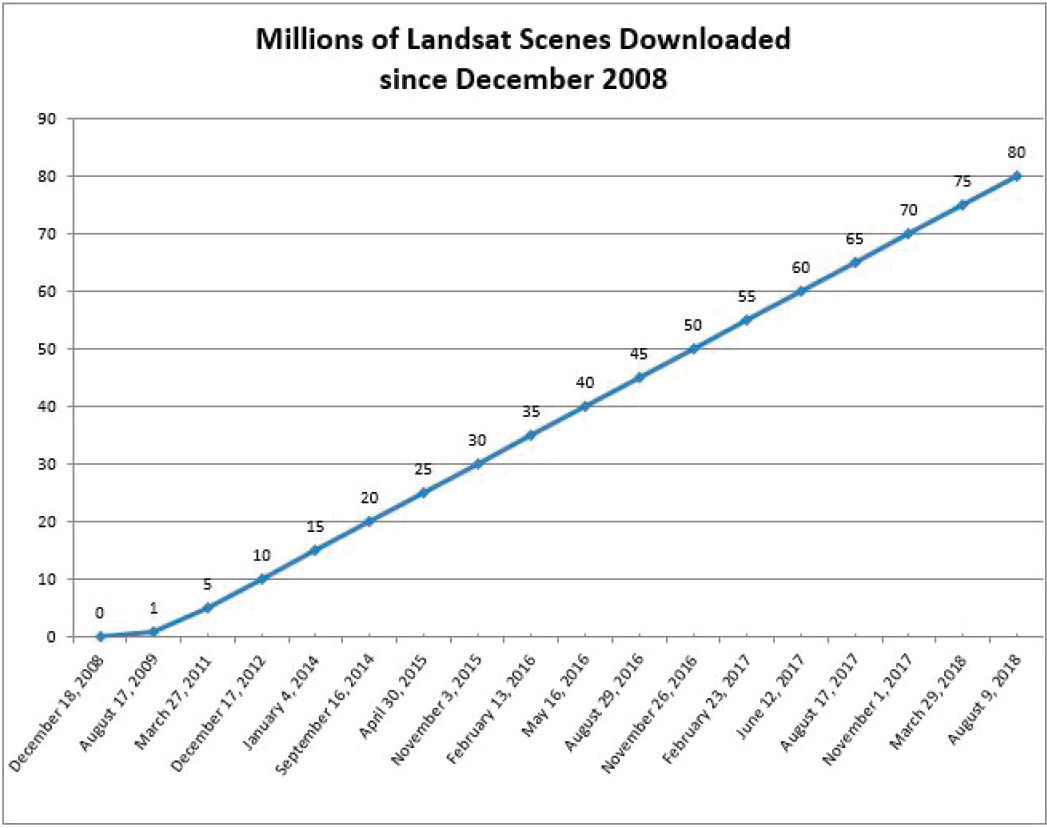

In 2008, the U.S. Geological Survey (USGS) changed policy to provide free and open access to Landsat data, resulting in more than 42 million scenes being downloaded around the globe (Figure 3.1). “After the policy change, the average number of scenes obtained from all sources annually per user more than doubled.”6 The National Research Council report Landsat and Beyond: Sustaining and Enhancing the Nation’s Land Imaging Program summarized the economic value of Landsat data applications:

Landsat images make critical contributions to the U.S. economy, environment, and security. Specific economic analyses of some of the benefits derived from the Landsat series of satellites demonstrate its great value for the nation. Most of the analyses use imagery provided without charge by USGS, so their value is not set by market forces. However, analyses of just 10 selected applications—including consumptive water use, mapping of agriculture and flood mitigation, and change detection among them—show more than $1.7 billion in annual value for focused operational management in the United States.7

Other studies have also demonstrated the general value of open data. A 2013 market assessment estimated U.K. “public sector information” to have an aggregate value to society of between £6.2 billion and £7.2 billion in 2011/12.8 A 2016 Australian study estimates that “open government data” has potential to generate up to $25 billion per year, or 1.5 percent of Australia’s GDP.9

Lessons Learned: Open access policies can dramatically increase the economic value and exploitation of federally funded resources and have unanticipated applications that benefit society.

3.2 DATA MANAGEMENT PLANS

As more data were released to the public through open data policies, in the mid-2000s some federal agencies began requiring that proposals for research funding include a data management plan (DMP). A 2013 Office of Science and Technology Policy (OSTP) memo directed the heads of executive departments and agencies to increase access to the results of federally funded scientific research, including peer-reviewed publications and data.10 The memo called for agencies to

Ensure that all extramural researchers receiving Federal grants and contracts for scientific research and intramural researchers develop data management plans, as appropriate, describing how they will provide for long-term preservation of, and access to, scientific data in digital formats resulting from federally funded research, or explaining why long-term preservation and access cannot be justified.

___________________

6 USGS, 2013, Users, Uses, and Value of Landsat Satellite Imagery—Results from the 2012 Survey of Users, https://pubs.usgs.gov/of/2013/1269/pdf/of2013-1269.pdf.

7 National Research Council, 2013, Landsat and Beyond: Sustaining and Enhancing the Nation’s Land Imaging Program, The National Academies Press, Washington, DC, https://doi.org/10.17226/18420.

8 Deloitte, 2013, Market assessment of public sector information, Deloitte (UK) for Department for Business Innovation and Skills, http://www.gov.uk/government/uploads/system/uploads/attachment_data/file/198905/bis-13-743-market-assessment-of-public-sector-information.pdf.

9 See https://www.communications.gov.au/publications/open-government-data-and-why-it-matters.

10 See https://obamawhitehouse.archives.gov/sites/default/files/microsites/ostp/ostp_public_access_memo_2013.pdf.

The following sections describe some of the DMP requirements of various agencies and programs. While most DMP requirements concern scientific data, some do mention software, and their handling of this is discussed below.

3.2.1 NASA

The 2009 Earth Venture-1 spacecraft mission announcement was the first NASA funding announcement to call for a DMP: it asked proposed missions to give a “schedule-based end-to-end data management plan, including approaches for data retrieval, validation, preliminary analysis, public release and archiving.”11 Then, research funding programs began asking for a DMP as well. Next, a 2010 Mars science program asked that responders follow the Mars Exploration Program Data Management Plan, which is a well implemented, detailed document that describes formatting, access, and archiving.12 Two Earth Science announcements in 2011 required a simple DMP (to be included in the technical proposal page limit and used as an evaluation criterion), which introduced the need for metadata and data formats.13,14 In 2012, three Earth Science and one Mars announcement all included required DMPs.15 One of the Earth Science DMP requirements was expanded from a previous version to include more details on data quality. Individual program managers began realizing the importance of a data management plan before the SMD did, and they began asking for DMPs. The initial requirements evolved, due to community feedback, as both parties’ (the program managers and the science community) understanding of data matured.

___________________

11 NASA Research Opportunities in Space and Earth Sciences—2009.

In response to the 2013 OSTP memo, NASA developed a Plan for Increasing Access to the Results of Scientific Research,16 which addresses only data and peer-reviewed publications. This plan required that all NASA proposals have DMPs that describe sharing and preservation plans for all data used as part of published findings produced during the project. In 2016, the NASA guidebook for proposers required a DMP for all submitted proposals.17 While the guidebook gave a description of the DMP, individual funding announcements could also ask for additional information and provide evaluation criteria.18 After 7 years, the DMP was included in the guidebook in a standardized format, integrated into the proposal submission system, and required for all submitted proposals.

Lessons Learned: Subject to discipline-specific needs, providing standardized data management plan submission and formatting as well as educational resources such as the NASA guidebook has facilitated community understanding of new requirements for open data.

The NASA guideline for proposers’ DMPs specifically relates to “data generated through the course of the proposed research” and makes passing mention of software. The intended scope of the DMP is to apply to “not only the recorded technical information, but also metadata (describing the data), descriptions of the software required to read and use the data, associated software documentation, and associated data (e.g., calibrations).”19 The DMP guidelines do not address sharing software, but mention the need to describe software that reads or writes data. At this time, management plans for software are not part of the proposal evaluation except for a few specific funding announcements (see Section 3.3.1).

3.2.2 NSF

The National Science Foundation (NSF) updated its data-sharing policy in 2011 and began requiring an additional maximum two-page DMP (to be evaluated during the proposal review) with basic information about data created during the project.20 Each directorate may provide additional information and requirements relevant for its community.

For example, the Directorate for Biological Sciences (BIO) routinely updates its DMP requirements. In 2011, BIO required reporting on the DMP, which would be evaluated by program managers and committees of visitors, and stated that future proposals would be evaluated on previous data management efforts. This was an early example of independent evaluation of data-management practice affecting future funding decisions. The evaluation by an independent group is critical to the perception that the new policy will be implemented fairly and evenly. In the 2018 BIO DMP, the reporting requirements were expanded to include evidence of open sharing by providing the data set location and identifiers or accession numbers that could be easily used to evaluate adherence to the policy. Additionally, the 2018 BIO DMP document included a section on resources providing guidance on data-management practices and writing DMPs, making it easier for scientists to confidently provide the requested information.

___________________

12 NASA Research Opportunities in Space and Earth Sciences—2010.

13 NASA Research Opportunities in Space and Earth Sciences—2011.

14 Including the DMP in the technical page limit section of the proposal discouraged including many details about the DMP in order to maximize descriptions of the research.

15 NASA Research Opportunities in Space and Earth Sciences—2012.

16 See https://www.nasa.gov/sites/default/files/atoms/files/206985_2015_nasa_plan-for-web.pdf.

17 See https://www.hq.nasa.gov/office/procurement/nraguidebook/proposer2018.pdf.

18 See https://www.nasa.gov/open/researchaccess/data-mgmt.

19 See https://www.nasa.gov/open/researchaccess/data-mgmt.

20 See https://www.nsf.gov/pubs/policydocs/pappg17_1/pappg_2.jsp#IIC2j.

Lessons Learned: Clear reporting guidelines and evaluation criteria, as well as evaluation by groups independent from the program, increase the confidence of the community that the policy will be fairly implemented.

It was noted in committee discussion that policy implementation is highly variable across divisions and programs (Box 3.1). There are few NSF-specified formats, and only some disciplines have supported data centers (e.g., Arctic Data Center,21 Geospace Madrigal22). Researchers may find it difficult to find specific data sets, and once found, they may find it difficult to use the data, due to custom, undocumented formats. Only some programs require submission to a data center.

___________________

21 See https://arcticdata.io.

Lessons Learned: Program managers are key to successful implementation of policy. Central repositories run by funded expert curators enhance data discovery, usability, and compliance with policy.

3.2.3 USGS

USGS began a program in 2010 to develop formal data management plans with three science centers.23 Based on this work, in 2012 USGS recognized the need to develop a comprehensive DMP template and funded the three centers to develop one, establish best practices and data standards, and lay the foundation for USGS-wide integration. This pilot recognized the importance of policy implementation, providing guidance for scientists, and establishing clear program policy, guidance, roles, oversight, and review mechanisms. The study found that science center “buy in” was critical and that the existing policy resulted in confusion among all interviewed.

For all projects beginning after 2016, USGS requires a DMP with clear guidelines.24 To help with implementation, the USGS webpage describes the DMP, lists frequently asked questions, and gives examples of DMPs. It also links to “dmptool,” a free online tutorial and DMP generator.25 This well-documented and described plan seems to have directly benefited from the earlier study at three science centers.

Lessons Learned: Pilot studies can provide valuable guidance prior to agency-wide implementation of policy.

3.3 SOFTWARE POLICIES

A fundamental difference between data and software is that writing software creates intellectual property that is covered by copyright laws. Moreover, while data files can be structured to contain all the metadata needed to use the data, software can include multiple interdependent files, resulting in management and version control challenges that are not all fully acknowledged or documented in various federal agencies and programs. This section provides some examples of the evolving nature of software development policies and practices. Although open data policies are becoming the standard, in many fields, software policies are not yet normal practice. The fields that are already implementing open source software (OSS) are benefiting from the large open source community of software developers and the tools to enhance group collaborations. NASA scientists can utilize and build on an extensive infrastructure for OSS—for example, GitHub, GitLab, Bitbucket, or others. Yet, as with open data, support of software management specific to NASA’s needs is still required and is typically more complex due to many factors, including multiple software types, as described in Section 2.2.

3.3.1 NASA

Currently, NASA SMD lacks an overarching policy addressing software management, but several programs within SMD have created both ad hoc and official policies. In the following sections, a few of these policies are described, along with some of the lessons learned from these policies.

Earth Sciences

In fall 2015, NASA’s ESD published an open source policy for the ESDS.26,27 The policy was developed to “promote the full and open sharing of all data, metadata, products, information, documentation, models, images, and research results—and the source code used to generate, manipulate, and analyze them.” It requires that all software developed through NASA ESD awards, including Research Opportunities in Space and Earth Sciences (ROSES), unsolicited proposals, and in-house funded development, be publicly released with a permissive OSS license. The policy requires using a permissive, widely accepted OSS license and developing the software within a public repository from inception of the funded activity. The software may be granted an exception from the OSS policy for a limited number of stated reasons, including patent or intellectual property law, and national security. Education, software maintenance, and documentation are not yet included in the policy. It states, “NASA will evaluate all funded ESDS software development activities for continued compliance with the OSS policy,” but does not discuss how such compliance will be determined.

___________________

23 See https://www2.usgs.gov/datamanagement/plan/dmplans.php.

24 See https://www2.usgs.gov/datamanagement/plan/dmplans.php.

25 See https://dmptool.org/.

26 See https://sites.nationalacademies.org/cs/groups/ssbsite/documents/webpage/ssb_174603.pdf.

27 See https://earthdata.nasa.gov/earth-science-data-systems-program/policies/esds-open-source-policy.

The first ROSES funding announcement to reference this ESDS OSS policy is “A.42 Advancing Collaborative Connections for Earth System Science.” The announcement requires, in the description of the proposal contents, that the proposer “describe the software development approach and lifecycle” and “provide an open source software development plan, identify an open source software license and state an open source software release milestone.”28 In other parts of the 2017 NASA research announcement, the OSS policy is not mentioned. The guidelines also do not state where in the final proposal the software development plan is to be placed or whether it counts against the 15-page limit.

Planetary Sciences

NASA’s Planetary Science Division (PSD) has incorporated software management in the DMP for most program elements.29 Software developed under a NASA grant is to be made publicly available (either at NASA’s GitHub organizational account30 or any appropriate repository) along with sufficient documentation for its use. However, this requirement allows for a number of exceptions based on the practicality of making the software public. Exceptions include software that is not straightforward to implement and software that requires excessive effort to make public. The policy clearly states that software maintenance is not required. An example of PSD modeling and simulation software is depicted in Figure 3.2.

The Planetary Data Archiving, Restoration, and Tools (PDART) solicitation also allows for software tool development and validation.31 Proposals are required to have a plan for dissemination of the tools and to archive the source code at NASA’s Planetary Science GitHub organizational account.32 This program also accepts development or enhancement of numerical models, with the expectation that they will be made publicly available.

Astrophysics

The NASA Astrophysics Division does not have a general requirement for software developed within its programs to be made open source, and while the text in ROSES 2018 states that most new astrophysics proposals will require a DMP, there is no similar requirement for software.33 Several solicitations do independently require new software to be open source, however. For example, the solicitation for the Transiting Exoplanet Survey Satellite (TESS) guest investigator (GI) program states that “proposals must clearly describe the plans to make any new software, higher level data products and/or supporting data publicly available. Software developed with TESS GI funds must add value to the TESS science community, be free, and open source.”34

Despite the lack of an overarching requirement, the Astrophysics Division has previously taken steps to make commonly used astrophysics software available as open source. For example, the High Energy Astrophysics Science Archive Research Center (HEASARC), the primary archive for high-energy astronomy missions, makes available an extensive library of general and mission-specific software35 and the Kepler/K2 missions support a suite of data processing software through the Guest Observer Office.36

___________________

28 ROSES 2017, pp. A.42-1-A.42-5.

29 ROSES 2018, p. C.1-7.

30 See https://github.com/nasa.

31 ROSES 2018, C.7-3.

32 See https://github.com/NASA-Planetary-Science.

33 NASA ROSES, p. D.1-1.

34 NASA ROSES, p. D.11-4.

Heliophysics

As with the Astrophysics Division, the committee was unable to find a requirement on software or models developed for any of the currently competed NASA Heliophysics programs, although, as stated earlier, ROSES 2018 does require proposals to include a data management plan.

The NASA Living with a Star (LWS) Science program contained an element called “Strategic Capabilities” that pertained to the development, implementation, and delivery of large computer models for the coupled sun-Earth and sun-solar system.37 These models were sufficiently mature to address a significant and specific need for achieving the LWS Science Objectives. There has not been an LWS Strategic Capabilities competition since 2011, when the program was renamed NASA/NSF Partnership for Collaborative Space Weather Modeling. The 2011 announcement of opportunity states that “the proposed development must integrate the science into one or more deliverables (e.g., models or tools) broadly useful to the larger community and that will be delivered to an appropriate repository or server site within the term of the project.” It later states that “all models and software modules . . . must be submitted to an appropriate NSF and/or NASA modeling center, such as the Community Coordinated Modeling Center (CCMC).” Also, “the proposal must include a description of how the resulting model(s) or other deliverables will be validated, documented, and made available to potential users.”38 The committee notes that these requirements mention only models and software modules without making specific reference to “open source.” Thus, there does not seem to be any requirement to make the source code open. Moreover, since this program has not been competed for 7 years, it is not clear whether the requirements listed in the announcement were enforced or delivered.

___________________

35 See https://heasarc.gsfc.nasa.gov/docs/software.html.

36 See https://keplerscience.arc.nasa.gov/software.html#k2fov.

Internal Software Development at NASA

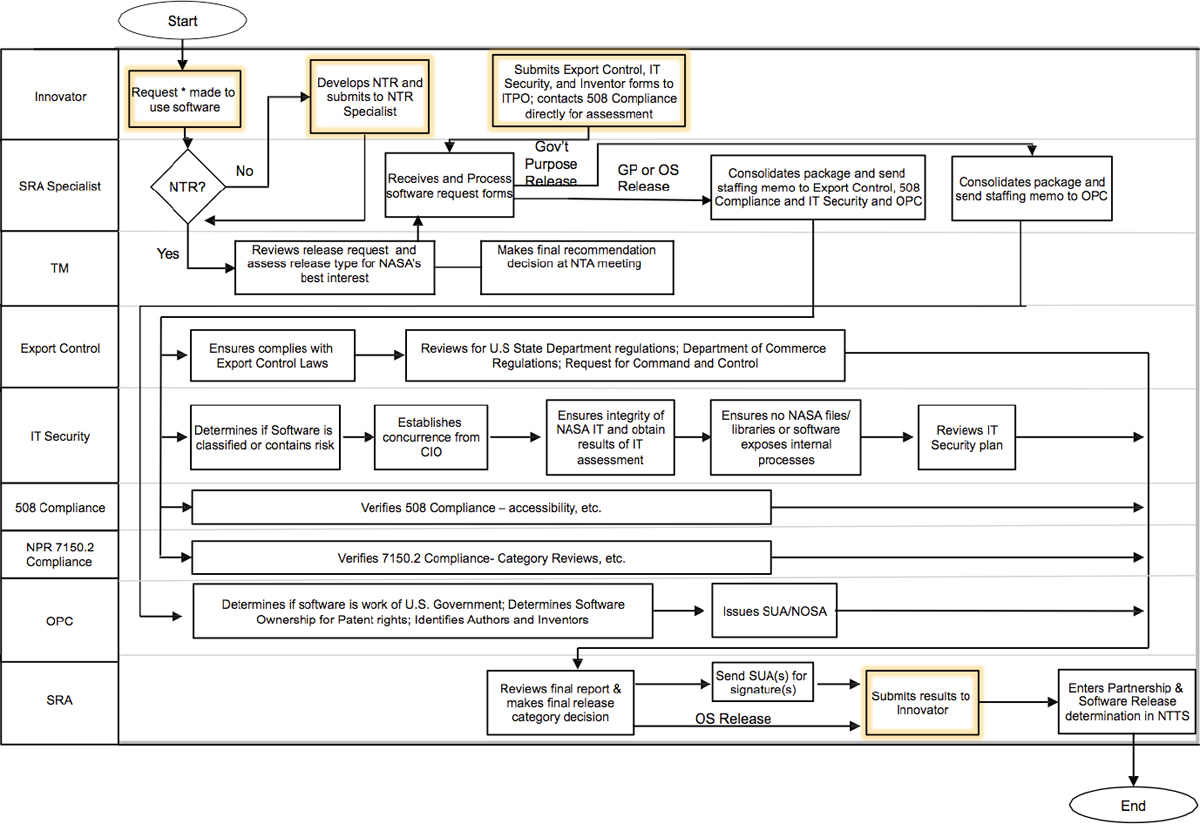

While there is no agency-wide requirement to publicly release all internally developed software, NASA employees are encouraged to do so. Such releases are subject to the Technology Transfer Office (TTO) Software Release Process, as described in NASA Procedural Requirements (NPR) 2210.1C and depicted in Figure 3.3.39,40

___________________

38 See Section 3.3.1. Under the Heliophysics section is page B.7-3 of this announcement of opportunity: https://nspires.nasaprs.com/external/viewrepositorydocument/cmdocumentid=255987/solicitationId=%7B76632D5E-26F6-ACC7-9F82.

39 See https://nodis3.gsfc.nasa.gov/displayDir.cfm?Internal_ID=N_PR_2210_001C_&page_name=Chapter1.

40 A partial waiver from these requirements was requested by, and granted to, the Nebula Cloud Computing Platform in 2010 to allow the release of “incomplete” software for open development. More information can be found at https://www.nasa.gov/open/nebula.html and https://nodis3.gsfc.nasa.gov/NRW_Docs/NRW_2210-35.pdf.

At the start of this process, the software developer must first disclose the creation of the software through the filing of a New Technology Report (NTR), in compliance with NPD 2091.1B.41 The developer may then submit an application to the Software Release Authority (SRA), who will coordinate the review of the software, verifying compliance with export control, information technology security, patent, licensing, and accessibility requirements. Once a release is fully authorized, the software will be made available through an online repository, where it may then be requested by external users. Restrictions on which users will be granted access to the software are dependent on the type of release granted, and most release types will require the signing of a software user agreement (SUA), which can include a nondisclosure obligation or other similar requirements.42

All internal software must follow the Software Release Process, as described above, prior to release, regardless of relative complexity or risk, and software that is intended to be fully open to the public is subject to greater scrutiny during the approval process.43 Because of this, community members have reported a significant increase in the workload and time required to gain approval for the release of certain software through this process, compared to gaining approval to publish the same software in an academic journal (WP 1).44 In response to such concerns, the TTO has recently developed an electronic document routing system that allows release requests to be issued in parallel and closely tracked, meant to streamline the process and to help identify and correct inefficiencies.45 While the new tracking system is a clear improvement, this process still does not allow for preapproval of software or expedited approval for simple software of broad utility and could quickly be overcome if the number of requests increased substantially.46

3.3.2 NSF

The current NSF Proposal and Award Policies and Procedures Guide states, “Investigators and grantees are encouraged to share software and inventions created under the grant or otherwise make them or their products widely available and usable.”47 Proposals require a DMP that includes a description of software created during the project, but no sharing, licensing, or other details are specified. The comprehensive 2011 NSF Software for Science and Engineering (SSE) Task Force report48 had 11 major recommendations to support the research, development, and maintenance of OSS infrastructure.49 SSE was encouraged to develop a multilevel long-term program of support of open source scientific software elements; provide leadership in promoting software verification, validation, sustainability, and reproducibility; develop a consistent policy on OSS that promotes scientific discovery and encourages innovation; support software collaborations among all of its divisions, related federal agencies, and private industry; obtain community input on software priorities and encourage best practices; explore the legal and technical issues with respect to the different open source licenses; promote discussion among its own personnel and with leadership at institutions where its principal investigators are employed; and develop, acquire, and apply metrics for review of SSE projects that are complementary to the standard criterion of intellectual merit. These recommendations have not yet been implemented widely.

While NSF does not have a foundation-wide requirement for open source licensing, the Computer and Information Science and Engineering (CISE) Directorate does include stipulations on a case-by-case basis in various program solicitations. For example, the Future Internet Architecture-Next Phase (FIA-NP) program50 required all developed software to be released with an OSI-approved license.51 The Secure and Trustworthy Cyberspace (SaTC) program does not require that software be open source, but states that researchers must strongly justify why developed software will not be open source.52 Any future plans for NSF to require open source on an agency-wide basis were not apparent. These two examples did not specify how to share software or give a specific archive site, provide educational resources on OSS, or give details on how requirements would be enforced. While the 2011 SSE report discussed above presented a clear roadmap for scientific OSS, the existing solicitations do not include its recommendations.

___________________

41 An NTR is required for all new software developed by NASA employees, contractors, and grantees, regardless of whether that software will be released or not; see https://nodis3.gsfc.nasa.gov/displayDir.cfm?Internal_ID=N_PD_2091_001B_&page_name=main.

42 The five release types are General Public Release, Open Source Release, U.S. and Foreign Release, U.S. Release Only, and U.S. Government Purpose Release.

43 See https://www.nasa.gov/centers/johnson/techtransfer/technology/software-release.html.

44 WP [number] is used to reference the white papers submitted to the committee.

45 D. Lockney, 2018, “NASA Software Release,” presentation to the committee on January 18, 2018.

46 NASA approved 1,369 software releases in 2013 and 5,054 in 2017. Numbers from January 18, 2018, “NASA Software Release” presentation by D. Lockney to the committee.

47 NSF Proposal and Award Policies and Procedures Guide, p. XI-12.

48 SSE was formed to identify the needs and opportunities of a scientific open source software infrastructure.

49 See https://www.nsf.gov/cise/oac/taskforces/TaskForceReport_Software.pdf.

Lessons Learned: The lack of a coherent agency policy at NSF has resulted in inconsistent directorate guidance regarding OSS.

3.3.4 DOE

The Department of Energy (DOE) policies on OSS have evolved over time. Release of software at DOE laboratories was allowed in 200253 after required approvals from the DOE program and Patent Counsel. In 2003, the requirement for approval from the Patent Counsel was removed.54 In 2010, the policy was again changed in response to DOE laboratories’ difficulty in obtaining the necessary program approval: affirmative approvals were no longer required except in certain cases,55 and policy states that DOE programs must be given 2 weeks’ prior notice to object to licensing as OSS.56 Laboratories were tasked with monitoring the use of OSS and periodically assessing the value of OSS. While this policy removes DOE approval as a barrier to releasing software, individual laboratories’ release policies, which may include overly restrictive export control requirements, may still present barriers (see Section 2.3.5).

Lessons Learned: Removing the requirement for affirmative approval of software release may reduce agency workloads and streamline software release.

3.3.5 DOD

The Department of Defense (DOD) conducted a study on OSS with a very different slant than those of many of the other agencies.57 It focused on the increasing concern of OSS introducing malware.58 The report also references some conferences that focus on OSS in the defense arena. This study found that OSS plays a more critical role in the DOD than has generally been recognized. It made the following three recommendations:

- Create a “Generally Recognized as Safe” OSS list.

- Develop Generic, Infrastructure, Development, Security, and Research Policies to better develop and utilize OSS.

- Encourage use of OSS to promote product diversity.

The Army Research Laboratory (ARL) Software Release Process for Unrestricted Public Release provides procedures that ARL government personnel must follow when releasing software source code and software-related material to the public, and for accepting software-related contributions from the general public.59 The document explains why publishing software is important and some of the legal and regulatory constraints on doing so.

___________________

51 See https://www.nsf.gov/pubs/2013/nsf13538/nsf13538.htm.

52 See https://www.nsf.gov/pubs/2017/nsf17576/nsf17576.htm.

53 DOE Patent Counsel IPI-II-1-01: Development and Use of Open Source Software.

54 See https://www.energy.gov/sites/prod/files/2015/01/f19/IPI-OSS%20April%202010.pdf.

55 Exceptions include export control, software commercialization, or special grant or contract terms and may affect substantial portions of scientific software that are developed in DOE laboratories, https://www.energy.gov/sites/prod/files/2015/01/f19/IPI-OSS%20April%202010.pdf, p. 2.

56 See https://www.energy.gov/sites/prod/files/2015/01/f19/IPI-OSS%20April%202010.pdf.

57 MITRE Report Number: MP 02 W0000101.

Another example of DOD support for open source development is the Defense Advanced Research Projects Agency (DARPA) XDATA program, which develops “an open source software library for big data . . . [including] tools and techniques to process and analyze large sets of imperfect, incomplete data.” OSS and peer-reviewed publications are released via the DARPA Open Catalog.60

Lessons Learned: Education of software developers and scientists on how to recognize quality OSS and implement it in a secure fashion is essential to maintaining a safe environment.

3.3.6 USGS

USGS has an Instructional Memorandum (IM) establishing the requirements for reviewing, approving, releasing, sharing, and documenting software created by USGS employees and intended for release.61 The Office of Science Quality and Integrity and Core Science Systems develop and maintain the policy and related procedures. Accordingly, the emphasis is on science software, as follows:

USGS software intended for public release includes any custom developed code yielding scientific results, thereby facilitating a clear scientific workflow of analysis, scientific integrity, and reproducibility. USGS software releases are made available publicly at no cost and in the public domain. Not all USGS software is suitable for release to the public, including software developed for use on internal Bureau systems or software that has privacy, confidentiality, licensing, security, or other constraints that would restrict release.

The IM requires that released software have an assigned digital object identifier (DOI) and that “software releases and associated documentation constitute official records of the USGS,” meaning that they have to be managed according to USGS records management policies or those of the National Archives and Records Administration (NARA). Section 2.3.4 discusses the issues with public domain licensing. Because there is no OSI-approved public domain choice for releasing software, this policy appears to contradict federal policy discussed in Section 3.3.7.

Lessons Learned: USGS releases software as public domain. In the absence of an OSI-approved option for releasing software as public domain, this choice does not appear to be available to NASA.

3.3.7 Federal Policy

The Federal Source Code Policy’s Pilot Program clarified guidance for using open source licenses, as follows:62

Your agency should choose a standard license (or licenses) that can be applied across its open source projects in order to minimize the cost and risk of choosing a license on a project by project basis.

In choosing your open source license, here are some considerations:

- The Open Source Initiative (OSI) approves open source licenses, a list of which can be found at https://opensource.org/licenses/category. Further still, OSI considers some licenses to be “popular, [and] widely used.” Using OSI popular licenses may maximize the interoperability of your open source license with other open source code and increase the comfort level in the minds of potential contributors. OSI maintains a list of popular licenses at https://opensource.org/licenses.

- Choose licenses that do not place unnecessary restrictions on the code. Any restrictions on the code should be reasonable and essential to furthering your agency’s mission.

- Avoid the creation of ad hoc licenses to prevent uncertainty in the minds of contributors as to the legal rights of distribution and reuse. Opt instead to use standardized and well-vetted legal licenses.

___________________

59 See https://github.com/USArmyResearchLab/ARL-Open-Source-Guidance-and-Instructions.

60 See https://opencatalog.darpa.mil/XDATA.html.

61 See https://www2.usgs.gov/usgs-manual/im/IM-OSQI-2016-01.html.

62 See https://code.gov/#/policy-guide/docs/open-source/licensing.

As noted in Section 2.3.4, because of how copyright and patent rights differ around the world, a policy that licenses software created by U.S. government employees is not at odds with the fact that as a matter of U.S. copyright law, software developed by U.S. government employees is in the public domain. This is because such software may not be public domain in other countries where the license is useful for sharing works otherwise restricted by copyright.

3.3.8 Large Community Software Projects

Community-Developed Open Source Software Projects

Research computing has historically involved both proprietary and community-based software. A few software tools predominate in certain Earth and space science fields, some of which were developed by a broad community of scientists. Many of these software projects were developed in collaboration with the computer science and computational physics communities with funding from large grants by DOE or NSF, or through centers such as NCAR. These efforts typically have included both extensive verification and validation testing as well as regression test suites. Often, major code releases are tied to journal publications and credit for code developers is facilitated through citations to these papers. For example, a paper describing the SolarSoftWare set of integrated libraries, databases, and utilities has more than 400 citations.63 In some cases, the community is allowed to modify and contribute to the code and its test suites. But in many cases, code contributions are limited to scientists with specialized expertise. Software developed to solve a specific problem is typically made public after the initial science-application papers are published. Software support in these projects ranges from minimal documentation to fully supported help desks.

Software development in these projects using best practices has been well funded (typically, at $1 million to $3 million per year for 5 to 10 years). Projects that do not have this level of funding usually have less testing and software support. Generally, in a situation where two projects exist addressing the same application, software with less testing and support has fewer users because the software is less trusted in the community. The software projects with large numbers of users and developers (see Box 3.2) have contributed to many advancements in science that would not otherwise have been possible.

Lessons Learned: Large OSS frameworks provide substantial value to the community, especially when well supported.

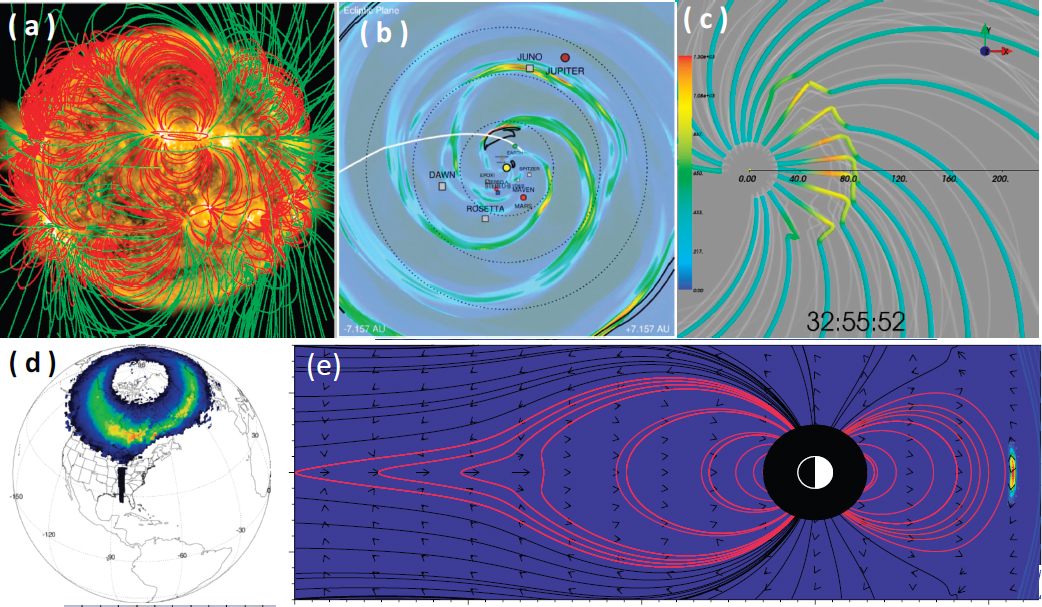

Community Coordinated Modeling Center

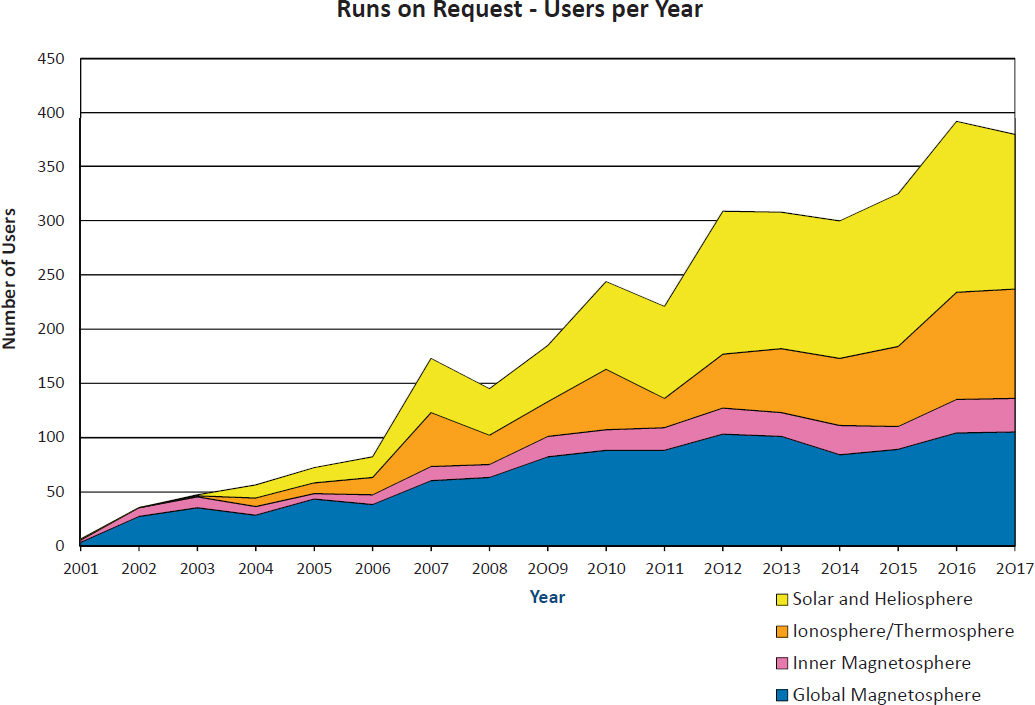

The Community Coordinated Modeling Center (CCMC)64 was established in 2000 by a multiagency partnership including NASA Heliophysics and NSF, and it hosts an expanding repository of heliophysics research modeling software and coupled modeling chains (Figure 3.4). The software packages held by CCMC are generally included in the “simulation software” and “modeling framework” categories of Table 2.1, but in this community, they are referred to as “models” and will be referred to as such in this section. Models are provided for use by the scientific community without disseminating source codes. Users of the CCMC perform runs-on-request (RoR), which execute simulations using a Web interface or run simulations with staff assistance. Results of these custom simulation runs are archived and made available for Web-based visualization and analysis or through downloads. The majority of CCMC users are not modelers; they use the simulation results in their research rather than developing software.

___________________

63 Number of citations given in Google scholar, https://scholar.google.com/scholar?hl=en&as_sdt=0%2C5&q=Data+analysis+with+the+SolarSoft+system&btnG=.

Submission of a model to the CCMC constitutes explicit permission by the developers for public access to model runs and outputs. The CCMC staff is permitted to modify model software only for the following purposes: adapting models to CCMC-specific hardware or converting model input/output formats to CCMC-specific formats. Other types of code modifications require explicit permission from model developers on a case-by-case basis. Permissions to introduce modifications to the source codes on request of RoR users are often granted by developers. All codes at the CCMC are protected from unauthorized access or unintentional dissemination, and by default, source code may not be distributed to any entity.

The benefits to the CCMC model developers became apparent a few years after the start of the center. Modelers noted broader utilization of their models and an increase in the number of publications and presentations citing the model. After a period of slow initial growth, during which some scientists resisted submitting their models to the CCMC, the number of models hosted at the CCMC grew rapidly alongside community acceptance (Figure 3.5). While delivering software to the CCMC is now the accepted practice, it took more than 5 years of evolutionary transition to achieve community acceptance of the CCMC and to go from 1 model to 15. It then took approximately another 5 years before delivering models to the CCMC became a norm and a requirement for some funding opportunities.

Using a modeling center such as the CCMC allows running large-scale models that can take a large amount of computational resources and can have an extremely steep learning curve. Modeling centers bypass this learning curve by having the actual simulation conducted by modeling experts on NASA computing resources, such that the user needs to know only how to interpret the results. While software at the CCMC is not open source, this approach created a mechanism where researchers could share model results without sharing their model. This increased model utilization and reproducibility, but it is not equivalent to an “open source policy.”

Making the models ready for utilization outside the team of original developers requires considerable effort. Many codes are delivered to the CCMC without documentation or comments and require a considerable investment of effort to enable implementation. This has become problematic, because the inflow of models for on-boarding at the CCMC is increasing. Information technology security requirements that include frequent operating system (OS) upgrades also introduce challenges for the long-term usability of models. For example, some models work only on a specific OS and a specific version of a compiler. Any OS upgrade comes with a threat of removing the model from service. To ensure timely implementation of the outcomes of large model development efforts (e.g., NASA Living With a Star Strategic Capabilities), the CCMC staff of 13 people (including scientists, software developers, and system engineers) is working with developers on earlier stages of the project.65 To streamline future upgrades and ensure portability and long-term usability, the CCMC staff help to implement and educate modelers in technologies (such as Docker containers66) that are helping to address this problem.

___________________

65 See https://ccmc.gsfc.nasa.gov/community/LWS/lws_mod.php.

CCMC users frequently ask to introduce modifications into the source code of a model to achieve their research goals. Some models come with comprehensive documentation and have used best practices for coding and commenting, and for these the CCMC scientists can readily address user requests with the model developers’ permission. Such models usually have a large team of developers located at multiple institutions.67 A good example is the Space Weather Modeling Framework68 developed at the Center for Space Environment Modeling at the University of Michigan, where a source code modification (introduction of a floating resistive spot at the subsolar point of a dayside magnetopause) was introduced in support of the research by Borovsky et al. (2008).

For many other models, introducing modifications into source code can be problematic because most heliophysics models and coupled modeling systems that are utilized through the CCMC runs-on-request system have not been designed for broader community involvement in further development. Finding the right place in the source code can be time consuming and sometimes is not achievable without the help of the original model developers. Many models are very sensitive and become unstable after even minor modification. To address such requests, the CCMC contacts developers of the models for help or advice. This support from developers is not funded, causing some requests to be delayed or declined.

___________________

67 G. Toth et al., 2005, Space Weather Modeling Framework: A new tool for the space science community, Journal of Geophysical Research Space Physics, 110:A12226, doi:10.1029/2005JA011126.

68 J.E. Borovsky, M. Hesse, J. Birn, and M.M. Kuznetsova, 2008, What determines the reconnection rate at the dayside magnetosphere?, Journal of Geophysical Research 113(A7):210.

Lessons Learned: The CCMC has been a successful way to increase public access to software, but because it is not open source, it requires additional infrastructure to run the software and control access. This approach to sharing software, which may be appropriate for some communities, is an example of an evolutionary transition to new norms, in which evolving policies and requirements achieved desirable goals with moderate investments and limited community resistance.

Lessons Learned: Technologies that enable code portability can lessen the concern that legacy models will become unusable.

Lessons Learned: Original model developer involvement may be necessary to introduce modifications into complex legacy codes that were not originally designed for broader community utilization and typically are not well documented.

3.4 JOURNAL POLICIES ON OPEN DATA AND SOFTWARE

The number of journals and publishers with official data policies has been increasing in response to challenges to the validity of published science. Their policies vary widely in what they require from authors.

Wiley is an international scientific, technical, medical, and scholarly publishing company that is also the publishing partner of the American Geophysical Union (AGU). Wiley encourages open data access but allows individual journals to set their own policies.69 In 1997, the AGU formulated a data policy that encouraged openness.70 In 2013, this policy was updated to apply to AGU journals:

To advance scientific exploration and discovery, and allow a full assessment of results presented in AGU’s journals, all data necessary to understand, evaluate, replicate, and build upon the reported research must be made available and accessible whenever possible. For the purposes of this policy, data include, but are not limited to, the following: Data used to generate, or be displayed in, figures, graphs, plots, videos, animations, or tables in a paper. New protocols or methods used to generate the data in a paper. New code/computer software used to generate results or analyses reported in the paper. Derived data products reported or described in a paper.71

This policy is strict in that all data used in a publication and all new code or computer software used in the research must be publicly available. The policy was enacted to ensure reproducibility and transparency in research, and although those reasons were first stated in 1997, it did not become official journal policy until 2013.

The American Meteorological Society (AMS) data policy states that the society is

Committed to promoting full, open, and timely access to the environmental data, associated metadata, and derived data products that underlie scientific findings (see the 2013 AMS policy statement). These data and metadata must be properly cited and readily available to the scientific community and the general public. At initial submission, authors must confirm that the datasets are archived and cited/referenced properly. Likewise, peer review editors are asked to ensure that this AMS expectation is being met.72

Reviewers are asked to ensure that data are placed in a location where others can freely download the data. Unless explicit permission is given, it is no longer acceptable to use the language that “the data are available upon request.” AMS policy has gradually become stricter with time.

___________________

69 See https://authorservices.wiley.com/open-science/open-data/index.html.

70 See https://sciencepolicy.agu.org/files/2013/07/AGU-Data-Position-Statement_March-2012.pdf.

71 See https://publications.agu.org/author-resource-center/publication-policies/data-policy/.

72 See https://www.ametsoc.org/ams/index.cfm/publications/ethical-guidelines-and-ams-policies/data-archiving-and-citation/.

American Astronomical Society (AAS) journals have published papers on software with relevance to research in astronomy and astrophysics.73

Such papers should contain a description of the software, its novel features and its intended use. Such papers need not include research results produced using the software, although including examples of applications can be helpful. There is no minimum length requirement for software papers. If a piece of novel software is important to published research, then it is likely appropriate to describe it in such a paper.

AAS highly recommends using an open source license and a standard software repository and provides guidance on ensuring code citation.

Although the American Journal of Political Science (AJPS) is not commonly used to publish articles for NASA-funded projects, it is included here because of its strict open policy. AJPS requires that all data and code used in the publication be publicly available, specifically so that figures are reproducible exactly as published. Once the review process is completed, an outside company ensures compliance (at a cost of approximately $1,000 per article). This strict policy guarantees reproducibility, and publication in AJPS can be used to demonstrate compliance with open data and software policies to funding agencies.

Among others, the journal Astronomy and Computing recommends that the software developed to produce results in a paper be made accessible.74 The Journal of Open Source Software, on the other hand, was designed to provide a way for open-software developers to obtain citations for their software. The authors submit a short article about their software (required to have an OSI-approved license), and the peer review process checks the quality of the software itself, including functionality, documentation, performance claims, installation instructions and community guidelines.75

Lessons Learned: Journals and publishers are moving forward in support of a more open science environment, providing both enhanced recognition and access to data and software. Journals first moved forward with open data requirements that gradually became stricter, then moved to open software requirements.

__________________

73 See http://journals.aas.org/policy/software.html.

74 See https://www.elsevier.com/journals/astronomy-and-computing/2213-1337/guide-for-authors.

75 D.S. Katz, K.E. Niemeyer, and A.M. Smith, 2018, Publish your software: Introducing the Journal of Open Source Software (JOSS), Computing in Science & Engineering 20(3):84-88, doi:10.1109/MCSE.2018.03221930.