1

Lessons from the Past Provide Direction for the Future

Since the practice of agriculture began, humans have been struggling to reduce the adverse effects of pests on crops, forests, and other human-managed ecosystems. In crop production, the development of diverse cropping systems, breeding and selection for pest-resistant plants, and beneficial cultural practices helped boost yields and reduce losses caused by pests. The same principles, applied to forests and other managed ecosystems, also helped to protect them against pest damage.

Agriculture, however, was still vulnerable to periodic crop failures and pest destruction, such as the 1845 potato blight in Ireland that led to famine and widespread malnutrition. Technological advances, such as the development of Bordeaux mixture in 1882 to control fungal diseases, helped lessen crop losses. The synthetic organochlorine and organophosphate insecticides, the herbicide 2,4-D, and the halogenated hydrocarbon fumigants introduced after World War II revolutionized pest management in agriculture. Additional synthetic chemical pesticides, including fungicides, nematicides, and other herbicides and insecticides, were developed and put to widespread use.

Initially, the benefits these new chemicals brought to agricultural production were thought to be without major disadvantages; however, the ecological and human health risks and the economic costs of heavy, widespread use of broad-spectrum chemical pesticides are becoming more apparent. In addition to the potential for adverse effects on human and environmental health, there are growing concerns about the durability of current approaches to pest management. The disruption of inherent natural and biological processes of pest management, the resistance to pesticides developed by many major pests, and the frequency of pesticide-induced or pesticide-exacerbated pest problems suggest that dependence on pesticides as the dominant means of controlling pests is not a durable solution. The failure to develop economically viable pesticides for some of the most damaging pests and the economic costs of continual pesticide application have also led to an interest in alternative approaches to crop protection.

Managing the pests inherent to agroecosystems is imperative to producing adequate supplies of food and fiber to meet current and future world needs. Effective, long-lasting, ecologically sound, and affordable pest management systems are essential to agricultural productivity and profitability. The committee concludes that agriculturalists must learn more about the biological and ecological processes of the crop-production environment in order to develop the management approaches and products that alone or in combination with carefully managed use of selective pesticides will provide novel and lasting solutions to pest problems.

A BRIEF HISTORY OF PEST MANAGEMENT USING NATURALLY OCCURRING SUBSTANCES

The earliest known mention of using naturally occurring compounds to manage pests dates to around 1000 B.C., when Homer referred to the use of sulfur compounds. In the western hemisphere, early agriculturalists reduced the number of arthropod pests by treating infested plants with tobacco extracts and nicotine smoke. The list of naturally occurring substances used as pesticides expanded over time to include rotenone, soaps, fish oil, lime-sulfur, and copper sulfate. A shift toward chemical controls coincided with the use of Paris green (an arsenic compound) in 1867 to control an outbreak of Colorado potato beetle in the United States and the fortuitous discovery of a fungicide mix (copper sulfate and hydrated lime) in 1882 in Bordeaux, France (Bottrell, 1979).

Early Biological Management of Arthropods

The use of beneficial organisms to manage pests also has a long history. The Chinese introduced colonies of ants into citrus groves to control caterpillars as early as 324 B.C. In 1752, Linnaeus wrote about the use of predatory arthropods to control arthropod pests on crops. An early insectary design in which caterpillars were placed in cages to attract beneficial arthropods was recommended by Thomas Hartig of Germany in 1827. In one of the first attempts at classical biological control, entomologist Asa Fitch suggested that toadflax, an exotic plant introduced to the United States from Europe, could be managed by importing the natural enemy of this weed from its native habitat. The colonization of the cabbage butterfly in the U.S. mid-Atlantic region and parts of the Midwest compelled Riley and Bignell to import a parasitoid (Apanteles glomeratus) in 1883 that eventually spread and successfully controlled the caterpillar pest throughout the United States (DeBach and Rosen, 1991).

These efforts set the stage for the most dramatic and well-known early application of a beneficial organism in the United States—the introduction of the Australian ladybird beetle (Rodolia cardinalis) to control the cottony-cushion scale (Icerya purchasi) in California. Throughout the 1880s, the cottony-cushion scale devastated the California citrus industry; by 1890, all scale infestations were under control following release of the ladybird beetle. This success was credited with saving the California citrus industry and catalyzed the expansion of biological control of other pests, including other arthropods, weeds, and diseases (DeBach and Rosen, 1991).

Early Biological Management of Weeds

In the 1930s the prickly pear cactus (Opuntia spp.) was successfully controlled by a weed-feeding scale (Dactylopius opuntiae) on Santa Cruz Island off the coast of California (Goeden, 1993; Turner, 1992). One of the most notable achievements, however, occurred on rangeland where devastation by Klamath weed (Hypericum perforatum) so decreased land values that ranchers were not allowed to use their ranches as collateral to borrow money for chemicals to control the noxious weed. In 1946 the arthropods Chrysolina hyperici and C. quadrigemina were released and by 1956 had consumed 99 percent of the Klamath weed, increasing the price of land 300 to 400 percent (DeBach and Rosen, 1991).

The first organized attempt at biological control of an aquatic weed was directed against alligator weed (Alternanthera philoxeroides). More than 30 arthropods that attack this weed were found in South America in the weed's native range. Of these organisms, a flea beetle (Agasicles) was found to be safe and potentially effective and was introduced into the United States. Today, alligator weed remains in check in most of the South; however, the effectiveness of the flea beetle has been reduced in Mississippi as a result of extensive aerial spraying of chemical insecticides, and the flea beetles alone are usually not sufficient to manage this weed in high-visibility areas such as golf course ponds and irrigation canals.

For water hyacinth, plant pathogens were suggested as possible biocontrol organisms as early as the 1930s in India. It was not until 1970, however, that a serious commitment was made by the University of Florida, the U.S. Army Corps of Engineers, and Florida Department of Natural Resources to explore the potential of microbial pathogens to control aquatic weeds. Several pathogens of alligator weed, water hyacinth, hydrilla, and Eurasian water milfoil were soon discovered, and three fungi—Cercospora rodmanii, Fusarium culmorum, and Mycoleptodiscus terrestris—were studied in detail for development as bioherbicides for water hyacinth, hydrilla, and Eurasian water milfoil, respectively, in Florida, Mississippi, and Massachusetts (Charudattan, 1990a).


Early Biological Management of Diseases

Early in this century, plant pathologists recognized that (a) both native and exotic microorganisms could suppress plant diseases; (b) disease suppression could be manipulated through cultural and management practices that altered the soil's organic matter, temperature, or pH; and (c) disease suppression could be attributed to the presence of suppressive microorganisms (Cook and Baker, 1983). The first attempts to suppress plant diseases by introducing beneficial microorganisms into soil were made in the 1920s (Cook and Baker, 1983), and the use of the fungus Phlebia gigantea, the first biological-control organism used commercially for control of an aerial plant disease, was described in the 1950s. Inoculating cut pine stumps with P. gigantea prevents infection of the stumps by the pathogenic fungus Heterobasidion annosum and its subsequent spread to neighboring standing trees through root grafts. This biological control is still widely used today in commercial pine plantations in England and Sweden. Inoculation of chestnut trees with hypovirulent isolates of the chestnut blight pathogen Cryphonectria parasitica was initiated in France in the 1960s, where it successfully suppressed chestnut blight in many regions. Biological control of crown gall with the bacterium Agrobacterium radiobacter strain K84, which was initiated in the 1970s, is now used to suppress the disease in orchards and nursery stock worldwide. Today, at least 30 different biological-control organisms are available as commercial formulations for suppression of plant diseases (Lumsden et al., 1995).

As early as 1922 the United Fruit Company identified land types in Central America on which banana plantings were either short-lived or long-lived. Both types of land contained wilt pathogens, but plantings survived on the long-lived land because its soil suppressed the pathogen Fusarium oxysporum (Cook, 1990). Since then, this natural suppression of Fusarium wilt has been demonstrated for carnation, cucumber, cotton, flax, muskmelon, and tomato crops.

In the 1930s, knowledge of medicinal antibiotics was applied to plant pathogens by Weindling, a plant pathologist, who researched the use of Gliocladium and Trichoderma fungi for their antibiotic effects on damping-off disease. In 1938 Linford and colleagues reported on the use of a nematode-parasitic fungus to control root-knot nematodes (Cook, 1990). The knowledge gained by these applications of biological controls continues to be used today in managing plant pathogens, weeds, and arthropods.

A BRIEF HISTORY OF CULTURAL PRACTICES

Early agriculturalists realized the benefits of cultural practices such as rotating crops, altering planting and harvesting schedules, planting mixtures of crops, managing irrigation and drainage, and removing crop residues to reduce pest growth and reproduction. Many of these cultural practices were effective because they augmented natural processes of pest suppression. Natural processes include predation, parasitism, pathogenesis, competition, and production of antibiotics by organisms that coexist with pests. In their native environments, plant pests live in communities composed of a variety of organisms, including their natural enemies (i.e., biological-control organisms), which constrain pest populations and activities through natural processes. Through trial and error, early agriculturalists, building on natural processes, developed agricultural practices that suppressed pests. For example, crop rotation and application of manure were used in China approximately 3,000 years ago. In the first century B.C., fallowing fields with poor crop yields was a recommended practice (Cook and Baker, 1983). Similarly, fallowing, rotating cereal crops with legumes, composting, manuring, liming, and optimizing schedules of irrigation and drainage were implemented throughout the Roman Empire (50 B.C.–476 A.D.) (Cook and Baker, 1983).

Experimental Demonstration for Importance of Natural Processes

Researchers have used pesticides to experimentally eliminate biological-control organisms of pest species as a way to quantify the importance of biological and natural processes in managing pests. The resulting pest infestations and crop damage can be used as an estimate of the degree to which biological and natural processes keep pests in check.

California Red Scale (Aonidiella aurantii) on Citrus: Experimental DDT applications caused pest populations to increase by 36-fold to more than 1,200-fold over a period of several years (DeBach and Rosen, 1991).

Cottony-Cushion Scale (Icerya purchasi): Two experimental applications of DDT to trees with light-to-moderate initial scale populations produced infestations heavy enough to defoliate or kill the trees within 1 year. Where initial infestations were lighter, four treatments over a 2-year period were required to reach the same level of infestation (DeBach and Rosen, 1991).

Citrus Red Mite (Panonychus citri): Two and one-half months after a single application of DDT, the mite population index on treated trees was 2,303, compared with 377 on untreated trees. "The use of DDT on citrus in California was abandoned early in the game by citrus growers because of its obvious effect in causing such mite increases" (DeBach and Rosen, 1991: p. 11).

Cyst Nematode: Application of soil fungicides to research plots in England revealed the widespread phenomenon of biological control of cereal cyst nematode by naturally occurring soil fungi: populations of cyst nematodes remained low in untreated plots but increased greatly in treated plots because the fungicide killed the nematodes' biological-control organisms (Stirling, 1991).

Soil-Borne Pathogenic Fungi: Certain soils are suppressive to specific soil-borne fungi, such as Gaeumannomyces graminis var. tritici, which causes take-all of wheat, or Fusarium oxysporum, which causes Fusarium wilt of many crops. Although propagules of a pathogenic fungus may be present in these suppressive soils at densities adequate to cause disease, disease is absent or symptoms are very mild. For example, if a suppressive soil is inoculated with propagules of G. graminis var. tritici, symptoms of take-all of wheat typically are very mild. In contrast, if the suppressive soil is fumigated or pasteurized prior to inoculation with the pathogen, symptoms of take-all are severe (Cook and Weller, 1987). Similarly, the suppressive effect of Fusarium-suppressive soils is destroyed by biocidal treatments and can be restored by mixing a small quantity of suppressive soil into a pasteurized soil (Alabouvette, 1993). Disease suppression in both cases is attributed to interactions between the pathogen and the saprophytic microflora present in suppressive soils.

Weed Composition Shifting: Weed shifting is a phenomenon in which previously innocuous plants and minor weeds emerge as dominant weeds after competition from formerly major weeds is removed. Numerous instances of differential susceptibility of weed species to herbicides have been reported. Continued use of a particular herbicide often causes a shift within a weed community from susceptible to more tolerant species. For example, a species shift that favors grasses is readily observed after applications of 2,4-D for broadleaf weed control in cereals. Other examples include the increased occurrence of pigweed (Amaranthus spp.) after use of napropamide, mustards (Brassica spp.) after benefin, and common groundsel (Senecio vulgaris) after diuron and terbacil (Radosevich and Holt, 1984). The phenomenon of weed shifting demonstrates that, in the absence of herbicides, certain weed populations are suppressed by more dominant populations.

Throughout the history of agriculture, the benefits of recommended cultural practices were recognized, but the importance of natural processes in realizing many of these benefits only began to be appreciated when science began to understand the role of microorganisms in the mid-1800s. The phenomenon of antagonism (the suppression of one microorganism by another through mechanisms such as parasitism, competition, or antibiotic production) was recognized in the late 1880s, but its occurrence in agricultural soils and its role in suppressing plant pathogens and other pests were not fully recognized until early in this century. It is now firmly established that many practices implemented by early agriculturalists enhance the diversity and activities of soil microorganisms, including biological-control organisms. Ironically, the importance of the natural processes performed by indigenous biological-control organisms is demonstrated most clearly when those organisms are destroyed by chemical or physical means. If indigenous biological-control organisms are destroyed by sterilization or fumigation of soil, for example, a surviving indigenous pathogen or a newly introduced one can cause far more damage than would be possible in untreated soil. Many of the cultural practices developed throughout the evolution of agriculture are still used today.

Crop rotation (successive planting of different crops in the same field) is a proven cultural strategy to suppress weed, arthropod, and pathogen pests (National Research Council, 1989b). Optimizing the benefits of crop rotations requires knowledge of the effects of diversified plantings on a cropping system. Rotating a primary crop with another cash crop can limit growth of a pest that feeds on the primary crop (National Research Council, 1989b). Crop rotation severely reduces both the root food sources of soil-borne arthropods and the inoculum densities of plant pathogens. For instance, populations of plant-parasitic nematodes, a persistent problem in continuous soybean cropping systems, are nearly eliminated by switching to corn in alternate growing seasons. By altering the soil environment, crop rotation can reduce the devastating effects of many soil-borne pests.

Other cropping practices used to encourage growth of beneficial organisms and suppress pest populations include green manures (legume crops plowed under to increase soil fertility), cover crops (crops grown for ground cover), and intercropping (interspersing crops). One example of intercropping is the planting of trap crops in rows beside a primary crop, both to provide habitat for beneficial arthropods and to divert pest arthropods from feeding on the primary crop. Knowing how cropping affects the components of the ecosystem can help growers draw on a diversity of plant resources in crop-production systems.

A combination of cultural practices can be synergistic and more effective than a single tactic alone against an agricultural pest. For example, against weeds, a grower may cultivate a field to prevent germination of weed seeds, then plant the crop so that it has a competitive advantage in access to limited water, light, and nutrients. Manipulating planting and harvesting schedules can also reduce pest populations (National Research Council, 1989b; Ferris, 1992; Schroth et al., 1992); however, optimizing these schedules requires prior knowledge of crop and arthropod life cycles and of host-free stages of the pest (Edwards and Ford, 1992). With knowledge of ecological interactions, a grower can integrate several cultural practices to manage a variety of agricultural pests.

PLANT BREEDING

Improving crops by selective breeding (choosing to plant the seed of the largest fruit or the most easily harvested plants, for example) started thousands of years ago (National Research Council, 1984). In 1866 Mendel published the results of controlled pollination experiments demonstrating that plant characteristics are inherited in a predictable manner. Since 1900 knowledge of genetics has been used to continuously improve crop varieties. The release of new varieties of hybrid corn in 1930 and a rust-resistant wheat in 1938 led to major gains in crop yields per acre.

Breeding to increase plant vigor and resistance can be achieved by classical and molecular approaches; both approaches modify plant DNA and transfer desirable agronomic traits. A common classical technique, hybridization, is used to transfer genes from one plant to another compatible plant of the same genus or species. Crop improvement through hybridization, however, can be slow: only a small proportion of progeny may carry the desired trait, and because many agricultural crops have only one growing season per year, many years are needed to develop a new cultivar. Nonetheless, classical breeding techniques continue to be the primary way to develop and improve plants to meet agricultural needs (National Research Council, 1989a,b).

Breeding plants to increase their ability to resist attack by pests continues to be an essential component of pest management. Breeding for a uniform trait, however, is not without risk; genetic uniformity can have severe consequences. An example occurred in 1970, when more than 90 percent of the U.S. corn crop was planted with mass-produced hybrids derived from a single source of cytoplasm, the Texas male-sterile (Tms) cytoplasm. Tms hybrids simplified hybrid seed production because they were male sterile and therefore did not require tedious and expensive detasseling. However, Tms hybrids were extremely susceptible to race T of Bipolaris maydis, the causal agent of southern corn leaf blight. Race T had always been present but was relatively unimportant until Tms hybrid corn was planted almost exclusively. This large-scale planting of a highly susceptible corn hybrid led to a severe outbreak of southern corn leaf blight (Poehlman, 1979). The following year, plant breeders returned to improved inbred lines of corn containing normal cytoplasm, and the disease abated.

A molecular approach to breeding plants with improved agronomic traits uses transgenic techniques, which transfer foreign DNA into the plant cell either via a biological agent or by physically inserting the foreign gene. Transgenic techniques can be more precise than hybridization because a specific gene is transferred, and the time required to develop a viable, commercial cultivar can be decreased (National Research Council, 1989a).

Take-All Disease and Its Decline

Take-all is a major disease of wheat worldwide. The soil-borne fungus Gaeumannomyces graminis var. tritici infects wheat roots, making the infected roots less efficient in taking up water and nutrients from the soil and in transporting these to the aerial portions of the plant. As a result, infected plants are stunted and often display premature senescence. Severe infections can kill plants in the seedling stage, but the disease typically develops more slowly and plants die after heading but before mature grain can be harvested. Crop rotation is an effective means of managing the disease because the pathogen does not persist in soil for extended periods in the absence of wheat plants. However, in many regions where wheat is grown, economic pressures lead growers to plant consecutive crops of wheat without rotation.

More than 50 years ago, scientists observed that although the severity of take-all increased with each consecutive crop of wheat for approximately 4 years, continued monoculture of wheat beyond 4 years often resulted in decreased disease severity. Scientists attributed this phenomenon, called "take-all decline," to the proliferation of antagonistic bacteria on wheat roots and in infested root debris. In fields displaying take-all decline, bacterial antagonists, primarily fluorescent pseudomonads, suppress the growth of G. graminis var. tritici on root lesions and in crop debris. Certain strains of fluorescent pseudomonads isolated from soil in fields where take-all has been naturally suppressed produce antifungal compounds toxic to G. graminis var. tritici. In field experiments, fluorescent pseudomonads inoculated onto seeds suppress take-all and increase wheat yield. The take-all decline system illustrates a form of biological control that is achieved through the cultural practice of wheat monoculture, which causes a shift in the composition of microorganisms present on the roots and in crop debris.

Although crop rotation is an invaluable agronomic practice for the management of soil-borne pathogens including G. graminis var. tritici, monoculture of wheat favors the proliferation of bacteria suppressive to the take-all fungus. Take-all decline is a well-studied example of what may be a common phenomenon: much of the value of cultural practices may be achieved through their effects on the microorganisms that form an essential component of the agricultural ecosystem.

Genes from animal, microbial, or plant sources can also be inserted into plants to produce a variety with novel resistance characteristics. The first example of genetic engineering for disease resistance involved a gene encoding the coat protein of tobacco mosaic virus (TMV) that was introduced into tobacco plants through an Agrobacterium vector (Powell-Abel et al., 1986). These transgenic plants were resistant to TMV as well as to other related viruses. Modern tools have enabled scientists to enlarge the pool of genetic resources available for needed crop improvements.

Expression of coat protein genes has become increasingly important in developing new varieties of resistant crops; since 1986 there have been more than 75 reports of transgenic plants made virus resistant by expressing coat protein genes, in crops as varied as potato, tomato, squash, rice, corn, sugar beets, and lettuce (Fitchen and Beachy, 1993). In 1995, the first commercial variety of such virus-resistant plants was introduced: crookneck squash resistant to cucumber mosaic virus and zucchini yellow mosaic virus. More recently, genes for resistance to a number of different diseases have been isolated from tomato, tobacco, Arabidopsis, flax, and rice plants, to name a few. Whitham et al. (1994) isolated a gene for resistance to TMV; Johal and Briggs (1992) isolated a gene for resistance to corn blight. In both cases, resistance was achieved when the resistance gene was reintroduced into a previously susceptible plant variety.

SYNTHETIC ORGANIC PESTICIDES

Between World Wars I and II, large-scale production practices coupled with major developments in synthetic chemistry revolutionized the pesticide industry. Tetraethylpyrophosphate (TEPP), the first organophosphate insecticide, was developed in 1938; the insecticidal activity of DDT, a compound first synthesized in 1874, was discovered in 1939. An analog of the plant hormone indole acetic acid was synthesized in 1939 as 2,4-D and introduced in 1942 as the first synthetic selective herbicide. The first dithiocarbamate fungicide, zineb, was introduced in 1943; and in 1945, chlordane, the first of the persistent, chlorinated insecticides, and propham, the first carbamate herbicide, were introduced. In 1947, toxaphene, which became the most heavily used insecticide in the United States, was introduced, followed by aldrin and dieldrin in 1948 and malathion in 1950.

In the 1940s and 1950s, soil fumigants, including methyl bromide, ethylene dibromide, dichloropropenes, and dibromochloropropane (DBCP), were found to effectively suppress soil-borne, parasitic nematodes and fungi. New insecticides, herbicides, fungicides, and nematicides were introduced almost annually through the 1950s and 1960s. As remarkable as the pace of discovery and development was the rate of adoption of the new pesticides by growers (Osteen and Szmedra, 1989), who found that the new chemicals (1) were highly effective and predictable at reducing pest populations, (2) produced rapid and easily observed mortality of the pest, (3) were flexible enough to meet diverse agronomic and ecological conditions, and (4) were inexpensive treatments compared to the crop damage that would be otherwise sustained (Metcalf, 1982; National Research Council, 1975). Synthetic pesticides quickly became the favored means of crop protection and dramatically eclipsed other approaches to pest management.

The ready acceptance of pesticides, coupled with comparable increases in the use of commercial fertilizers and mechanization, revolutionized agricultural production. Yields have increased steadily and dramatically since the 1940s, and specialized production of one or a few crops has become the norm for growers.

Insecticides

By the 1950s, insecticides were used broadly on high-value crops especially susceptible to arthropod attack: cotton (66 percent treated, by area), fruits and nuts (81 percent), potatoes (80 percent), vegetables (74 percent), and tobacco (58 percent). During the 1960s and 1970s both herbicides and insecticides were used on corn and tobacco, and use was expanded to include other major field crops such as cotton, soybean, sorghum, rice, peanuts, wheat and other small grains, hay, and pasture (Osteen and Szmedra, 1989).

From 1964 to 1982 the total volume of insecticide used on cotton declined (Osteen and Szmedra, 1989) after the introduction of pyrethroids, a class of insecticides applied at much lower rates but more frequently (Zalom et al., 1992). During this same period, the volume of insecticide applied to corn and soybean nearly doubled. In 1982, for the first time in the United States, the amount of insecticide used on soybean and corn exceeded that used on cotton (Osteen and Szmedra, 1989).

Herbicides

During the 1960s and 1970s, increased herbicide applications on corn and soybean accounted for the largest increases in pesticide use (Lin et al., 1995). By the 1980s herbicides accounted for 75 percent of the total volume of pesticides applied to agricultural crops. The total amount of herbicides used decreased in the 1990s, in part because newly introduced herbicides were used at lower application rates. Rice, though its total acreage is small, receives the most intensive use of herbicides, 6.3 kg/hectare (5.6 lb/acre). Herbicides account for more than 90 percent of pesticide applications in corn and soybean production today (Lin et al., 1995).

Declining Use

Since peaking in 1982, pesticide use declined from 270 million kg (600 million lb) in 1982 to 260 million kg (570 million lb) in 1992. Currently, corn and soybean production, occupying the largest percentage of crop acreage in the United States, dominate pesticide use. On a per-acre basis, however, pesticides are used more intensively on many fruit and vegetable crops, including potatoes, than on corn; for example, fungicides applied to citrus and apple trees (3.6 million kg or 8 million lb) account for 23 percent of all fungicides used. Cotton accounts for about 10 percent of all pesticides used. Pesticide applications on wheat, a major crop with the second largest acreage in the United States, account for only 3.5 percent of the total (Lin et al., 1995).
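Because the pesticide-use figures above mix metric and U.S. customary units, a short calculation helps check them and shows how modest the post-1982 decline was. This is a minimal Python sketch that uses only the figures quoted in the text plus the exact definition of the avoirdupois pound; the implied national fungicide total is simply derived from the "8 million lb = 23 percent" statement and inherits its rounding.

```python
KG_PER_LB = 0.45359237  # exact definition of the international avoirdupois pound


def lb_to_kg(pounds: float) -> float:
    """Convert pounds to kilograms."""
    return pounds * KG_PER_LB


def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent (negative means a decline)."""
    return 100.0 * (new - old) / old


# Figures quoted in the text (Lin et al., 1995), in pounds of active ingredient.
use_1982_lb = 600e6
use_1992_lb = 570e6
citrus_apple_fungicide_lb = 8e6
citrus_apple_share = 0.23  # share of all fungicide use, as stated in the text

print(f"1982: {lb_to_kg(use_1982_lb) / 1e6:.0f} million kg")
print(f"1992: {lb_to_kg(use_1992_lb) / 1e6:.0f} million kg")
print(f"Change 1982 to 1992: {percent_change(use_1982_lb, use_1992_lb):.1f}%")

# Total fungicide use implied by "8 million lb is 23 percent of all fungicides".
implied_total = citrus_apple_fungicide_lb / citrus_apple_share
print(f"Implied total fungicide use: {implied_total / 1e6:.0f} million lb")
```

The conversions give roughly 272 and 259 million kg, consistent with the rounded 270 and 260 million kg in the text, and the decade's change works out to a decline of about 5 percent.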

INTEGRATED PEST MANAGEMENT

Integrated pest management (IPM) was intended to put pesticide use on a more sound ecological footing. Stern and colleagues (1959) introduced the term integrated control and defined it as "applied pest control which combines and integrates biological and chemical control. Chemical control is used as necessary and in a manner which is least disruptive to biological control" (p. 86). Pest management was to be based on the naturally occurring regulatory processes in the agroecosystem that help prevent pest outbreaks.

Stern and colleagues (1959) suggested that pests should be reduced (as opposed to completely exterminated) only when their populations reach levels at which economic injury to the crop is expected, thus also introducing the concepts of economic-injury level and economic threshold to guide decisions about when and whether pest populations should be reduced. The economic-injury level is the lowest pest population density that causes economic damage, that is, crop loss whose value equals the cost of controlling the pest; it is determined by measuring how pest injury affects the crop plant and how much damage the crop can tolerate. The economic threshold, set below the economic-injury level, signals the need for action to keep the pest population from reaching the point at which economic injury would occur.
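The relationship between the economic-injury level (EIL) and the economic threshold (ET) can be made concrete with a small calculation. The Python sketch below assumes a widely used formulation associated with Pedigo and colleagues, which this chapter does not itself give: EIL = C / (V × I × D × K), where C is the cost of control per unit area, V the market value per unit of produce, I the injury per pest, D the yield loss per unit of injury, and K the proportion of injury prevented by control. The ratio of ET to EIL (here 0.75) and all input values are hypothetical placeholders chosen for illustration, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class EILInputs:
    """Inputs to a Pedigo-style economic-injury level calculation (illustrative units)."""
    cost_per_area: float         # C: cost of one control action per unit area
    value_per_yield_unit: float  # V: market value per unit of produce
    injury_per_pest: float       # I: injury units caused per pest
    loss_per_injury: float       # D: yield units lost per injury unit
    control_efficacy: float      # K: proportion of injury prevented by control, 0 < K <= 1


def economic_injury_level(p: EILInputs) -> float:
    """Pest density at which the value of loss prevented equals the cost of control."""
    denominator = (p.value_per_yield_unit * p.injury_per_pest
                   * p.loss_per_injury * p.control_efficacy)
    if denominator <= 0:
        raise ValueError("V, I, D, and K must all be positive")
    return p.cost_per_area / denominator


def should_treat(density: float, eil: float, threshold_fraction: float = 0.75) -> bool:
    """Signal action when density reaches the economic threshold (ET).

    The ET is set below the EIL so that control can take effect before
    economic injury occurs. threshold_fraction is a hypothetical placeholder;
    real thresholds come from crop- and pest-specific field studies.
    """
    economic_threshold = threshold_fraction * eil
    return density >= economic_threshold


if __name__ == "__main__":
    # Made-up inputs for illustration only.
    inputs = EILInputs(cost_per_area=30.0, value_per_yield_unit=0.5,
                       injury_per_pest=1.0, loss_per_injury=0.5,
                       control_efficacy=0.75)
    eil = economic_injury_level(inputs)
    print(f"EIL = {eil:.0f} pests per unit area")
    for sample_density in (100, 130):
        action = "treat" if should_treat(sample_density, eil) else "keep monitoring"
        print(sample_density, "->", action)
```

With these made-up inputs the EIL is 160 pests per unit area and the threshold is 120, so a sampled density of 130 would signal action, whereas 100 would not. Keeping the threshold as a separate, explicit parameter mirrors the distinction in the text: the EIL is an economic break-even point, while the ET is a management trigger placed below it.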

The founding principles of IPM are that natural processes can be manipulated to increase their effectiveness, and chemical controls should be used only when and where natural processes of control fail to keep pests below economic-injury levels. These principles were refined, expanded, and incorporated into many of the concepts of pest management advocated by others (Doutt and Smith, 1971; National Research Council, 1969; Rabb and Guthrie, 1972; Smith, 1969; Smith and van den Bosch, 1967; U.N. Food and Agriculture Organization, 1967). Similar concepts and definitions of pest management were used as the basis for accelerating adoption of national agricultural initiatives during the administrations of Presidents Nixon (Council on Environmental Quality, 1972) and Carter (Bottrell, 1979).

IPM in its original sense of integrated control or ecologically based management, however, has not been implemented on a wide scale (Cate and Hinkle, 1993; Flint and van den Bosch, 1981; Hoy and Herzog, 1985; Kogan, 1986). Critics argue that the most widely used IPM strategies emphasize improved pesticide use based on monitoring pest populations and setting economic thresholds (Fitzner, 1993). Many scientists have noted that IPM strategies normally depend on pesticides as the primary management tool and have highlighted the need to develop systems that depend primarily on biological control organisms, resistant plants, cultural controls, and other ecologically based tools (Cate and Hinkle, 1993; Edwards, 1991; Ferro, 1993; Flint and van den Bosch, 1981; Frisbie et al., 1992; Frisbie and Smith, 1989; Hoy and Herzog, 1985; Kogan, 1986; Pedigo and Higley, 1992; Tette and Jacobsen, 1992; Zalom and Fry, 1992). Indeed, some scientists have proposed the term biologically intensive IPM to distinguish IPM strategies that rely on biologically based tools from those that depend primarily on conventional broad-spectrum pesticides (Edwards, 1991; Ferro, 1993; Frisbie and Smith, 1989; Pedigo and Higley, 1992; Prokopy, 1993; Zalom and Fry, 1992). The historical emphasis on arthropod control, and the correspondingly lower priority given to pathogen and weed management, creates some confusion for IPM practitioners seeking environmentally sound and economically feasible answers to pest management problems.

Naturally occurring compounds, biological-control organisms, and resistant plants have been developed and used for most of the history of pest management. With the advent of synthetic chemical pesticides, emphasis in research and practice shifted away from biologically based strategies. However, contemporary advances in scientific knowledge, coupled with long past experience, provide a solid foundation for renewed efforts to identify appropriate ecological approaches to pest management.

OBSTACLES TO CONTINUED USE OF BROAD-SPECTRUM PESTICIDES

Pesticides were readily accepted by growers because, at least initially, they were quite successful at suppressing pests. However, problems began shortly after pesticide use became widespread. Arthropod resistance to DDT was first observed in Sweden in 1946, only 7 years after DDT was introduced. By 1948, 14 species of arthropods were reported to be resistant to DDT, cyclodienes, organophosphates, carbamates, or pyrethroid insecticides; that number exceeded 500 by 1990 (Gould, 1991). But the problem is not simply that some pests develop resistance; some were never controlled by pesticides. For some soilborne pathogens, nematodes, arthropods, and aquatic weeds, there are no acceptable conventional chemical pesticides.

Widespread use of pesticides has also raised concerns about the health effects of pesticide residues in foods eaten by humans and livestock. In 1954, because of concerns about health effects and dietary exposure, the Food, Drug, and Cosmetic Act was amended to set maximum tolerance levels for pesticide residues in raw agricultural commodities.

Problems and Limitations of Pesticides

The early success of broad-spectrum, synthetic pesticides raised hopes that pest problems that had plagued agriculture from its inception had finally been solved. The inability of conventional pesticides to sustain their initial effects, however, is an important reason for concern about continued dependence on ineffective pesticides that may also adversely affect nontarget species in the environment. The development of resistance to pesticides, the increasing cost of discovering and developing pesticides, and the fact that some pest problems have been caused or exacerbated by pesticide use have all contributed to arguments for decreased reliance on these chemicals for crop protection.

Development of Resistance

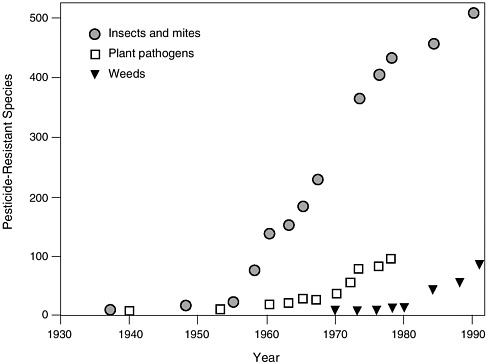

Major arthropod agricultural pests have developed resistance to more than one class of insecticides (Georghiou, 1986; Gould, 1991) (Figure 1-1). Growers normally respond to the first signs of resistance by increasing their rate of application and then, if that does not work, switching to another pesticide that may also become ineffective. The problem is further exacerbated by the ability of a given population of pests to develop several different mechanisms of resistance with each mechanism responding to a different pesticide (Georghiou, 1986).

The increased cost of continued pest management resulting from pesticide resistance can be substantial. Georghiou (1986) cited examples including a more

FIGURE 1-1 Chronological increase in the number of arthropod (insects and mites), plant pathogen, and weed species resistant to synthetic chemical pesticides since their development in the late 1930s. SOURCE: Adapted from Gould, F. 1991. The evolutionary potential of crop pests. Am. Sci. 79:496–507.

than 5-fold increase in the costs of malaria control when DDT was replaced by malathion and a 15- to 20-fold increase when malathion was replaced by propoxur, fenitrothion, or deltamethrin (Metcalf, 1983). In the early 1990s it was estimated that development of resistance added $400 million to the cost of pest management in the United States (Pimentel et al., 1992).

Since systemic fungicides were first introduced for agronomic use in the 1960s, their use to control fungal diseases of plants has been compromised by the development of resistance to these compounds (Dekker, 1993). Likewise, because of resistance, antibiotics and copper compounds used to manage plant diseases are no longer effective against many bacterial plant pathogens (Jones, 1982). In many cases, this resistance is conferred by plasmids transferred among bacterial species, thereby enhancing the spread of antibiotic or copper resistance among bacterial plant pathogens (Cooksey, 1990).

As regards herbicides, repeated use has selected for herbicide-resistant weed biotypes with alterations in the enzymes that herbicides target. Holt and LeBaron (1990) listed 55 weed species, including 40 dicots and 15 grasses, that had biotypes resistant to triazine herbicides. They reported that one or more resistant species had developed in 31 states of the United States and in 4 provinces of Canada. Resistance to several classes of herbicides, such as triazine, trifluralin, paraquat, diclofop-methyl, substituted urea, and sulfonylurea, is rapidly becoming a worldwide problem. Because some weed biotypes have developed resistance to several classes of herbicides, herbicide resistance appears to have reached the level where concerted efforts are needed to develop effective management strategies (Holt and LeBaron, 1990).

The appearance of new pesticide-induced pests and the development of resistance put growers on a pesticide treadmill: applying pesticides at ever-increasing rates as resistance develops or as secondary pests emerge, and eventually switching to a new chemical, often at higher cost. Continued reliance on pesticides makes it impossible to step off the treadmill. Experience suggests that resistance should be anticipated as a possible outcome of pest control; thus, management approaches must be developed in tandem with pesticide deployment to minimize resistance problems.
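Why resistance should be anticipated can be illustrated with a textbook single-locus population-genetics model of selection. This is a simplified sketch, not an analysis from this report: it assumes one resistance allele R at a diploid locus, random mating, a pesticide that kills a fraction `s` of susceptible SS insects each generation, and a hypothetical dominance parameter `h` describing how well heterozygotes survive. All function names and parameter values are illustrative.

```python
def next_frequency(p: float, s: float, h: float) -> float:
    """Frequency of resistance allele R after one generation of spraying.

    p: current frequency of R (0 < p < 1)
    s: fraction of susceptible SS insects killed by the pesticide (0 <= s <= 1)
    h: dominance of resistance (0 = fully recessive, 1 = fully dominant)
    """
    q = 1.0 - p
    w_rr = 1.0                    # resistant homozygotes survive
    w_rs = 1.0 - (1.0 - h) * s    # heterozygotes are partly protected
    w_ss = 1.0 - s                # susceptible homozygotes
    mean_fitness = p * p * w_rr + 2.0 * p * q * w_rs + q * q * w_ss
    # R alleles carried by survivors: all from RR, half from RS.
    return (p * p * w_rr + p * q * w_rs) / mean_fitness


def generations_until(p0: float, s: float, h: float,
                      target: float = 0.5, limit: int = 10_000) -> int:
    """Generations of repeated spraying until R reaches `target` (capped at `limit`)."""
    if not 0.0 < p0 < 1.0:
        raise ValueError("initial allele frequency must be strictly between 0 and 1")
    p, generations = p0, 0
    while p < target and generations < limit:
        p = next_frequency(p, s, h)
        generations += 1
    return generations


if __name__ == "__main__":
    # Compare recessive, partly dominant, and dominant resistance under a 90% kill.
    for h in (0.0, 0.5, 1.0):
        print(h, generations_until(p0=0.001, s=0.9, h=h))
```

Under these assumptions a rare resistance allele always rises when the pesticide is applied every generation, and it rises faster the more susceptible insects each application kills and the more dominant resistance is. Without spraying (`s = 0`) the allele frequency does not change, which is one way to see why the treadmill is driven by continued reliance on the same chemical.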

Escalating Costs and Fewer Discoveries

The cost of developing new agricultural chemicals has increased dramatically since 1956. According to the American Crop Protection Association, nearly 12 percent of proceeds from agricultural chemical sales in the United States, a total of more than $650 million, is spent on research and development. Despite this investment, the probability of discovering a successful commercial product has declined. In fact, the number of new pesticides introduced has declined steadily since 1970 (Ollinger and Fernandez-Cornejo, 1995). Consequently, synthetic chemical pesticide companies are consolidating to maintain profitability as discovery declines and development costs increase (Ollinger and Fernandez-Cornejo, 1995).

Pesticide-Induced Pest Problems

Broad-spectrum insecticides that kill nontarget arthropods can exacerbate or even create new pest problems by eliminating biological control organisms that previously held the pests in check. For example, DeBach and Rosen (1991) described the elevation of the citrus red mite from minor or nonpest status to the most important citrus pest in California following the introduction of chlorinated hydrocarbon and organophosphate pesticides. Similar increases in mite problems after the introduction of pesticides have been reported worldwide (Gerson and Cohen, 1989; Huffaker et al., 1969, 1970; McMurtry et al., 1970). Cottony-cushion scale emerged as a major pest in California's Central Valley after DDT caused large reductions in the populations of a biological control organism (DeBach and Rosen, 1991); brown soft scale became a major citrus pest in the Lower Rio Grande Valley of Texas in 1959, when parathion applied to adjacent cotton fields drifted into citrus groves and killed biological control organisms (Dean et al., 1983); and outbreaks of citrus red mite, purple scale, and woolly whitefly followed large applications of carbaryl and chlordane in an attempt to eradicate the Japanese beetle (DeBach and Rose, 1977).

Diseases induced by, or made more severe after, the use of agents intended to cure or prevent disease are termed "iatrogenic" diseases; examples of iatrogenic plant diseases can be cited for all major groups of crop protection chemicals (Griffiths, 1993). Botrytis rot of cyclamen (caused by Botrytis cinerea) was initially well controlled by the fungicide benomyl, but it became much more severe when benomyl-resistant strains appeared. Before benomyl was used, populations of B. cinerea were suppressed by antagonistic strains of Penicillium brevicompactum. Benomyl eliminated P. brevicompactum, which was sensitive to the fungicide, so when the benomyl-insensitive strain of B. cinerea appeared, it was more damaging to the host than it had been before benomyl was introduced. Eventually, benomyl-insensitive strains of P. brevicompactum appeared, effectively restoring the original balance of biological suppression (Griffiths, 1993).

Injudicious use of broad-spectrum chemical pesticides for pest management has encouraged resistance in agricultural pests, increased secondary pest problems, increased the probability of dietary and occupational exposure to harmful chemicals, and produced adverse effects on soil and water resources and nontarget species.

Problems that Defy Conventional Chemical Solutions

For many pest problems, no conventional pesticide offers a feasible solution. Examples include problems caused by exotic pests (for which there are no indigenous biological controls); soil-borne arthropods, nematodes, and pathogens; plant viruses; rangeland weeds; row-crop weeds; and aquatic weeds.

|

Management of Arthropod Pests in Cotton Production

The history of pesticide-induced pest problems in cotton production is one of the most compelling examples of the limitations of broad-spectrum chemical pesticides in providing long-term pest control. The development of pesticide resistance by primary cotton pests such as the boll weevil, combined with simultaneous pesticide-induced outbreaks of secondary pests, led in the early 1980s to a dramatic reduction in cotton acreage in the southeastern states and threatened to curtail cotton production in the Rio Grande Valley of Texas, the Imperial and Central Valleys of California, and various Central American countries (Carlson et al., 1989; DeBach and Rosen, 1991).

Boll Weevils vs. The Chemicals

The history of attempts to manage the boll weevil (Anthonomus grandis) in the Cotton Belt is a classic example of the pesticide treadmill. The standard treatment from about 1910 through 1949 was multiple applications of calcium arsenate dust. This method was quickly replaced in 1950 when chlorinated hydrocarbon compounds such as DDT, aldrin, and dieldrin were introduced along with broad-spectrum pesticides such as toxaphene. These materials, applied either alone or in combination, were successful in managing the boll weevil (and its natural predators) until 1954, when the boll weevil's resistance to DDT was first reported (Johnston, 1961; Smith et al., 1964). Between 1956 and 1958, organophosphates, particularly methyl parathion, and several other insecticides such as malathion, methomyl, azinphos-methyl, carbaryl, endrin, and heptachlor were introduced to replace DDT and other materials that had become ineffective against the boll weevil. Widespread use of these toxic, broad-spectrum insecticides eliminated the natural enemies that controlled the bollworm (Heliothis zea) and the tobacco budworm (H. virescens). Populations of these formerly minor pest species increased, causing additional serious economic losses. DDT was then reintroduced to control the bollworm, but as early as 1962 this pest had become resistant to DDT and to other chlorinated hydrocarbon and carbamate insecticides. Growers then switched back to methyl parathion; again, resistance soon developed, and by 1968 it was not uncommon for growers to apply 15 to 18 treatments per season without achieving satisfactory insect control. Rainwater (1962) estimated that one-third of all insecticides used for agricultural purposes were being applied to control cotton pests, primarily the boll weevil. Bollworms and budworms, which had reached pest status through the use of insecticides applied against the boll weevil, now rivaled the boll weevil as the most serious pests in cotton production.

Fortunately, pioneering work by Brazzel and colleagues (1961) showed that a series of four malathion treatments applied in the fall would reduce the population of boll weevils emerging in the spring by 90 percent. The effectiveness of this new management procedure was demonstrated in the Texas High Plains suppression program of 1964-1965, when 1.1 million acres of cotton were treated four times in the fall with malathion applied as an ultra-low-volume spray. Knipling (1968, 1971) later reported that seven fall treatments would increase suppression of spring boll weevil populations to 98 percent, especially when used in combination with cultural practices and pheromone-trapping technology. This work led to a successful, coordinated interstate effort, the Pilot Boll Weevil Eradication Experiment, in southern Mississippi and adjoining areas of Louisiana and Alabama from 1971 to 1973. The favorable results of the Mississippi pilot experiment indicated that eliminating the boll weevil from the continental United States was technologically and operationally feasible, and the first full-scale boll weevil eradication program was initiated in North and South Carolina in 1983. The program was expanded to include western Arizona, southern California, and northern Mexico in 1985-1986; Georgia, Florida, and parts of Alabama in 1987; and the remainder of Arizona in 1988 (Brazzel, 1989). Since the eradication program began in 1983, the boll weevil has been virtually eliminated from large sections of the Southeast.

The Success of a Combined-Treatment Strategy

The elimination of this primary pest, together with development of an IPM program for other cotton pests, has served as a major stimulus for restoring economic cotton production in the Cotton Belt. The IPM program is based on pest biology and behavior, monitoring, cultural practices, and prudent pesticide application. It has provided several significant benefits, including savings in pesticide and pest-control costs, increased yields, substantial increases in cotton acreage, and increased land value (Carlson et al., 1989; Cate, 1988; Roach et al., 1990).

In Georgia, for example, pesticide treatments have been reduced from an average of 17 applications/acre/year to 4 applications/acre/year (Suber and Todd, 1980), while overall pest-control costs and losses from damage have been reduced by 50 percent. The number of treatments for bollworm and budworm, mites, aphids, and plant bugs has declined as beneficial species previously suppressed by insecticides reemerge as effective biological-control agents. In general, per-acre yields have nearly doubled since the eradication program began. Cotton acreage in Georgia increased from a historic low of 115,000 acres in 1983 to 875,000 acres in 1994, with planting estimates of 1.25 million acres for 1995. The 1994 harvest of 1,480,000 bales was the highest in Georgia since 1935, when cotton was produced on more than 2.5 million acres. The total value of the crop was $44 million in 1983 and $497 million in 1994. Pesticide savings, enhanced yields, and increases in acreage following the implementation of durable pest management and boll weevil eradication in cotton are also documented in studies by Cate (1988), Carlson et al. (1989), and Roach et al. (1990). |
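The cotton sidebar reports 90 percent suppression of spring boll weevil emergence from four fall malathion treatments and 98 percent from seven. A back-of-envelope check shows the two figures are roughly consistent, under a strong simplifying assumption that is not from the source: each treatment kills the same fraction of the weevils present, independently of earlier treatments. Knipling's figure also reflected cultural practices and pheromone trapping, so this sketch is illustrative only; the function names are hypothetical.

```python
def per_treatment_survival(total_reduction: float, treatments: int) -> float:
    """Per-treatment survival implied by a cumulative reduction, assuming every
    treatment kills the same fraction of weevils independently of the others."""
    return (1.0 - total_reduction) ** (1.0 / treatments)


def cumulative_reduction(survival: float, treatments: int) -> float:
    """Fraction of the population removed after `treatments` identical treatments."""
    return 1.0 - survival ** treatments


survival = per_treatment_survival(0.90, 4)          # about 0.56 survive each fall spray
print(f"{cumulative_reduction(survival, 7):.1%}")   # prints 98.2%
```

If four treatments leave 10 percent of the weevils, each treatment lets roughly 56 percent through; seven such treatments would leave under 2 percent, close to the 98 percent suppression Knipling reported.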

Invasion of Exotic Pests

The U.S. Office of Technology Assessment (1993) estimated that more than 4,000 plants, arthropods, and plant pathogens of foreign origin have established free-living populations in the United States. Some once-exotic species clearly are beneficial; soybean and wheat are now primary crops throughout much of the United States and staples in the U.S. diet. Most exotic pests arrived in the United States through human activity and were released, unintentionally or deliberately, before they were recognized as pests. Some well-known exotic pests include

- arthropods such as Mediterranean fruit fly, boll weevil, gypsy moth, pink bollworm, Japanese beetle, European corn borer, sweet potato whitefly, and Russian wheat aphid;
- pathogens such as white pine blister rust, Dutch elm disease, chestnut blight fungus, and potato blight fungus; and
- weeds such as hydrilla, water hyacinth, purple loosestrife, leafy spurge, and kudzu.

Losses to agricultural production caused by exotic plant pests alone equal approximately $28.8 billion per year, and expenditures for their prevention and control equal approximately $3.2 billion per year (Schwalbe, 1993).

The difficulty of identifying an exotic pest species in a timely manner can delay deployment of its natural enemies. Classical biological control efforts to solve exotic pest problems have not had a high success rate, suggesting an inadequate ecological basis for this approach. A better understanding of exotic pests and their natural predators and parasitoids will increase the potential of biological control as a viable approach for management of these pests.

Soil-Borne Pathogens, Nematodes, and Arthropods

Soil-borne plant diseases are caused by pathogenic fungi, viruses, viroids, mycoplasmas, bacteria, or nematodes that live in the soil or on its surface. No practical chemical controls are available for many soil-borne pest problems, including Pythium, Rhizoctonia, and Phytophthora root rots; Fusarium wilt; root-knot, cyst, and other nematodes; and arthropods such as the Japanese beetle and root weevils. Root diseases cost wheat farmers an estimated $1.5 billion annually (U.S. Department of Agriculture, Agriculture Research Service, 1995). There are two primary reasons why these problems cannot be controlled by current pest management strategies.

- It is difficult to achieve uniform coverage of the root system with nonsystemic fungicides. It is especially difficult to protect root tips, which are the site of infection for many soil-borne pathogens.
- Many fumigants, such as dibromochloropropane and ethylene dibromide, two of the most effective nematicides, have been removed from the market; and the cost of using the fumigants that remain on the market often is too great to justify their use on field crops such as cereal and oil crops (Cook et al., 1995).

|

Soybean Cyst Nematode: An Unmet Need

The soybean cyst nematode (Heterodera glycines, SCN) has been a persistent problem in soybean production regions throughout the South and Midwest; it caused more than $250 million in yield reduction in 1991 alone (Meyer and Huettel, 1993), and over the last 20 years it has been the most important soil-borne pest in soybean production in the southern United States (Wrather et al., 1995). Management tools have included nematode-resistant cultivars, nematicides, and cultural controls (Riggs and Wrather, 1992). These tools have been useful but have significant problems or are inadequate by themselves; new biological control tools will help combat this pest.

Although nematode-resistant cultivars have been useful in combating SCN, they often produce lower yields than susceptible cultivars. More important, the evolution of "resistance-breaking" nematode races reduces the value of resistant cultivars. Initially, a resistant cultivar is quite effective because it prevents reproduction of the dominant race of SCN in the particular growing region. But SCN consists of many races, and some have developed the ability to reproduce on resistant cultivars. These resistance-breaking races may be present at low levels initially, but they increase to damaging levels when the resistant cultivar is planted repeatedly. Alternating cultivars with different sources and levels of resistance can reduce the chance that resistance-breaking races will increase to damaging levels (Ferris, 1992).

Nematicides, including soil fumigants, effectively control nematodes but have serious limitations. First, they are increasingly unavailable to growers; several effective chemicals, including dibromochloropropane (DBCP) and ethylene dibromide (EDB), have been cancelled because of concerns about groundwater contamination as well as other environmental and toxicological concerns. Second, nematicides are costly. Although nematicide application often is profitable on high-value crops such as tomato, pepper, eggplant, and strawberry, it often is not cost-effective in lower-value crops. In the case of soybeans, nematicides were applied extensively in the 1970s and 1980s, when the value of the crop was high, but they have seldom been applied in recent years because the value of the crop is lower.

Cultural controls include crop rotation, which is effective because SCN does not have a broad host range and does not persist in soil for extended periods. Rotation to nonhosts, however, costs the grower because the nonhost crops (corn, wheat, other grasses, etc.) are lower in value than soybean. Managing weed hosts and managing resistant cultivars to avoid race shifts also are important.

In addition to SCN, other plant-parasitic nematodes (including lesion, ring, sting, stunt, and especially root-knot nematodes) damage a wide variety of crops and are difficult to control. Fortunately, these nematodes have a number of natural enemies, and biological controls are being investigated in several laboratories in the United States and elsewhere. The fungus Verticillium lecanii, in combination with a sex pheromone, reduced SCN in recent greenhouse and field tests (Meyer and Huettel, 1993). Another fungus, as yet unnamed, occurs naturally in certain soybean production regions in Arkansas and maintains SCN at low levels (Kim and Riggs, 1994). Researchers are studying these and other beneficial organisms to determine how they can be integrated with resistance and cultural controls to manage SCN and other nematode pests. |

Plant Viruses

Plant viruses annually cause crop-yield reductions valued at more than $100 million. In most cases the reductions represent 1 percent to 10 percent of the potential crop yield, a figure generally accepted in cases where disease resistance is not available. In selected crops the impact can be severe, causing losses of up to 80 percent. As with human medicine, there are no chemicals capable of controlling plant viruses—chemical controls must be directed at the arthropod, fungal, or nematode vectors of plant viruses. Control of plant viruses is effected by

- management of areas surrounding production areas,
- control of the insects and other vectors of the disease agent, and
- use of disease-resistant varieties.

Agriculturalists have long known that the incidence and severity of virus diseases can be reduced by

- eliminating weeds that harbor viruses and serve as alternate hosts from which insect vectors spread the virus;
- using insecticides to control the vectors, for example, aphids and whiteflies;
- using crop rotation and crop sanitation practices to reduce the sources of inoculum; and
- shifting planting dates to avoid infestations of insect vectors.

Even with recommended agricultural practices, growers face the possibility of substantial losses caused by disease in years when climatic conditions favor heavy infestations of arthropod vectors. For example, outbreaks of wheat streak mosaic virus can occur in the Midwest in the spring following a warm, wet fall, conditions that are optimal for the mite vector.

The situation is not as bleak with all crops, however, largely because plant breeders have succeeded in identifying and deploying natural genes for resistance. Genes for resistance to tomato mosaic virus; potato viruses X, Y, and M; and cucumber mosaic virus in pepper and in some varieties of cucumber, tomato, and cantaloupe are all examples of successful deployment of genetic material. Some, but not all, virus resistance genes are single genetic loci, easily transferred between varieties by cross-breeding. Other resistance genes are multigenic and not easily transferred.

Plant breeders have also identified genes that suppress feeding by the insect vector: reduced feeding leads to reduced transmission and less disease. Coupling such feeding-suppression genes with genes that confer resistance to the virus can produce excellent control of viral diseases. Unfortunately, it has not been possible to identify and successfully deploy resistance genes to control the more than 50 significant diseases caused by viruses in agriculture in the United States, Canada, and Mexico.

Rangeland Weeds

Rangelands comprise about 312 million hectares (770 million acres) in the United States (National Research Council, 1994). These diverse ecosystems serve many purposes, including a major agricultural use as grazing land for livestock. Annual weeds often dominate because they grow faster than desirable perennial grasses, and broad-leaved weeds dominate because they are unpalatable and spread easily on improperly grazed lands (National Research Council, 1975). The vastness and inaccessibility of these remote land parcels make them particularly vulnerable to introductions of exotic weed species and to shifts toward nonforage native species (National Research Council, 1975; U.S. Office of Technology Assessment, 1993). Because herbicides are costly and such large land areas are involved, solving these weed problems is an enormous task (National Research Council, 1975; Turner, 1992; U.S. Office of Technology Assessment, 1993). Nevertheless, opportunities may exist to apply a combination of innovative biological, chemical, physical, and cultural techniques to manage rangeland weeds.

Row-Crop Pests

Pest management in row-crop production systems represents a major challenge. The value of row crops, annual crops grown in rows (vegetables and small fruit), is estimated at more than $7.5 billion annually (Zalom and Fry, 1992). Many row-crop growers depend on methyl bromide, a highly effective fumigant used against soil-borne pathogens and arthropods as well as some perennial and annual weeds (U.S. Department of Agriculture, 1993), to provide the high-quality, good-tasting, unblemished produce consumers demand. In addition, some herbicide sprays can damage nearby crop seedlings as well as weeds. Because the acreage planted to row crops is usually much smaller than that planted to major grain crops, pesticide manufacturers have little incentive to invest heavily in research and development of new products for row crops. Thus there is an urgent need for new pest management strategies for these horticultural systems.

Aquatic Weeds

In North America more than 170 species of aquatic plants are classified as weeds; 40 to 50 species are considered to be of major importance (Andres and Bennett, 1975). Managing aquatic weeds is complicated by the fact that unrelated aquatic plant species, including some native species that are beneficial, may coexist with aquatic weeds; and not all weed species may require control in every situation. Moreover, water bodies are typically subject to multiple uses and some of the control methods may be incompatible with those diverse uses.

Mechanical weeding, or weeding by hand with various cutting, hoeing, and harvesting tools, is the primary method of managing aquatic vegetation in many parts of the world (Wade, 1990). The secondary method is the use of chemical herbicides, predominantly 2,4-D, diquat, glyphosate, and various copper compounds (Murphy and Barrett, 1990). The use of chemical herbicides in water can result in residue and tolerance problems in potable water, nontarget effects when herbicide-containing water is used for irrigation, and lack of selectivity.

Weeding and herbicides afford only temporary solutions, so the cost of control by these methods is recurrent. Many public water bodies are intensively managed for weed control, and the cost of such operations is often high. As a general rule, weed management in public waters is underwritten by public funds and therefore competes for limited tax revenues.

Biological control can be a cost-effective, long-term solution (Andres and Bennett, 1975). Both host-specific and nonspecific agents have been used successfully to control several aquatic weeds. The herbivorous grass carp, or white amur (Ctenopharyngodon idella), has been used for a number of years in many countries for nonselective management of aquatic weeds, especially submerged weeds (Sutton, 1977). Among the weeds this fish prefers are hydrilla, Chara spp., southern naiad, duckweed, and many other problem weeds. Because the grass carp is not native to the Americas, a sterile triploid form has been bred that cannot reproduce and become permanently established. Only this triploid form may be used for aquatic weed management in certain parts of the United States, and it is readily available from commercial suppliers in North America. When stocked at proper rates for the size of the water body and the nature of the weed problem, the grass carp offers an excellent, sustainable solution (Sutton and Vandiver, 1986).

Host-specific fungal pathogens also have excellent potential as biological control agents for aquatic weeds (Charudattan, 1990b). Several pathogens have been found that are capable of controlling aquatic weeds under experimental conditions. It has also been documented that Cercospora rodmanii and C. piaropi, two related species that cause foliar diseases of water hyacinth, cause natural epidemics capable of controlling this weed (Charudattan, 1986; Martyn, 1985). Similarly, natural epidemics have been associated with the decline of large populations of aquatic plants in freshwater and brackish-water systems (Charudattan et al., 1990). Despite this potential, however, commercial development and use of microbial agents as bioherbicides to manage aquatic weeds has lagged, because of the high cost of registering microbial herbicides under the requirements of the Federal Insecticide, Fungicide, and Rodenticide Act (FIFRA) and because of the technical difficulty of achieving practical levels of control under field conditions (Charudattan, 1990b).

Certain other agents, such as snails (Marisa cornuarietis and Pomacea australis), the manatee (Trichechus manatus), the crayfish (Orconectes causeyi), and competing plants (e.g., Eleocharis spp.), have been considered as biological-control agents for aquatic weeds. However, various constraints have so far prevented their practical use.

Herbivorous arthropods also have an unquestioned record of success as biological-control agents of aquatic weeds. Unlike the fish, weed-control insects are highly selective, and only host-specific agents are used. Outstanding examples include the management of alligator weed (Alternanthera philoxeroides) by the chrysomelid beetle Agasicles hygrophila; of water hyacinth (Eichhornia crassipes) by the curculionid weevils Neochetina eichhorniae and N. bruchi and the pyralid moth Sameodes albiguttalis; and of giant salvinia (Salvinia molesta) by the curculionid weevil Cyrtobagous salviniae (Harley and Forno, 1990).

Biological control of aquatic weeds has tremendous potential as a nonpolluting, ecologically sensible, cost-effective, long-term alternative to mechanical and chemical controls. Although some agents, such as the fish and insects, have gained considerable recognition and have been used on a wide scale for many years, similar success may be possible with other agents, notably microbial pathogens. Classical biological control of aquatic weeds using fungal pathogens is another promising approach (Charudattan, 1990c).

Despite the demonstrated success in controlling alligator weed, water hyacinth, and salvinia, biological control has so far played a relatively small role compared with other control methods. Continued research and regulatory support will be key to realizing its future benefits.

Human and Environmental Health Concerns

It has been noted that pesticide use can have unintended adverse effects on beneficial organisms and on other nontarget species of plants and animals that come into contact with the chemical or its residues. However, the risks to human health posed by exposure to pesticides in the environment, drinking water, or food have become primary arguments in the debate about pesticide use.

Concern about chemical pesticides was brought to the attention of the public in 1962 with the publication of Rachel Carson's Silent Spring, a powerful and poetic treatise about the unanticipated adverse effects of chlorinated hydrocarbons such as DDT. By 1962 several target pests were already resistant to DDT, a resurgence of secondary (minor) pests was associated with its use, and DDT was accumulating in the food chain. DDT residues were eventually found in human blood, fat tissue, and breast milk (National Research Council, 1993b).

Partly in response to such concerns, the U.S. Environmental Protection Agency (EPA) was created in 1970 under the Nixon administration, and authority for regulating pesticides was transferred from the U.S. Department of Agriculture to EPA. In 1972 Congress overhauled FIFRA, giving EPA new authority to regulate pesticides. EPA inherited a backlog of 40,000 pesticide registrations, including some for pesticides that had been in use for more than 25 years. Carson's legacy includes EPA's cancellation of nearly all uses of DDT in 1972, most uses of aldrin and dieldrin in 1975, mercuric compounds in 1976, dibromochloropropane in 1977, and 2,4,5-T and Silvex in 1979.

Time Line for the Discontinuance of Four Major Broad-Spectrum Pesticides

Regulatory controls on pesticide use were established by the U.S. government to limit human exposure to residues while sustaining the agriculture industry's ability to provide an abundant, nutritious, and safe food supply. Before 1972, approval of a pesticide use was based on assurance that the use would be safe and effective as sold in interstate commerce, the criteria under which most broad-spectrum pesticides were registered in the 1940s. After 1972, approval was based on tests showing that the use would not generally cause unreasonable adverse effects on humans or the environment. Suspension of a pesticide use is based on a finding by the U.S. Environmental Protection Agency (EPA) that continued use would pose an imminent hazard; unless cancellation proceedings are held, the use is reinstated. Cancellation of a use is based on a finding that the use will result in unreasonable adverse effects on humans or the environment. (Tolerances are set for pesticide residues resulting from use on food; revoking a tolerance can also limit or cancel a use on food or feed crops.) Listed below are four broad-spectrum pesticides that EPA removed from the market because they were found to have unacceptable effects on nontarget species, including human beings. Decades elapsed between the registration of these pesticides and the suspension of their use, cancellation of their registration, or their withdrawal from the market.

Chlordane, an insecticide used for termite control
December 1975: EPA suspends registration for the use of chlordane.
January 1977: A Circuit Court of Appeals affirms chlordane use on termites.
March 1978: EPA cancellation hearings take place as the registrant agrees to phase out uses of chlordane on corn and other crops.
August 1987: The registrant agrees to stop sale for use on termites.

EDB, a fumigant used to control plant pathogens
September 1983: EPA issues a notice of intent to cancel registrations of EDB for major uses.
February 1984: The EPA Administrator issues an emergency suspension.
April 1984: EPA revokes tolerances for EDB residues for postharvest uses.

DBCP, a fumigant used to control plant pathogens
September 1977: EPA issues a notice of intent to suspend registration of DBCP based on studies of sterility in male industrial plant workers.
November 1977: EPA issues a notice of intent to cancel registration for use on 9 food crops.
July 1979: EPA issues a notice of intent to suspend all DBCP registrations after DBCP is detected in groundwater, crop residues, and air samples from outside the pesticide application areas.
October 1979: EPA issues a final decision to suspend all registrations except for use on pineapple fields in Hawaii.
November 1979: EPA reinstates uses on pineapple fields in Hawaii.
March 1981: EPA withdraws its intent to cancel registrations for use on pineapple fields in Hawaii and accepts voluntary cancellations for all other uses.
January 1984: EPA issues a notice of intent to cancel the pineapple use in Hawaii.

2,4,5-T, a phenoxy herbicide used to control broad-leaved weeds
February 1979: EPA issues an emergency suspension of all uses of 2,4,5-T.
March 1981: A principal registrant negotiates a phase-out of herbicide use.
January 1985: EPA issues final decisions on suspended uses.
February 1985: EPA issues final orders on nonsuspended uses.
February 1986: No 2,4,5-T product is allowed for sale in the United States.

Surface waters such as lakes and streams have been found to be contaminated by agricultural pesticides that run off the land; the extent of runoff depends on the chemical and on soil, site, and climate conditions. Runoff is also associated with heavy early-spring rains that may fall after herbicides are applied before planting. Agricultural pollution from runoff contributes to estuary decline and can harm nontarget organisms (National Research Council, 1993c). Herbicides in surface water also appear to have significant adverse effects on freshwater phytoplankton and zooplankton communities. The ecological implications of such population changes are poorly understood but raise considerable concern about the effect of agricultural pesticides on the environment.

By the early 1980s, public concern had turned to the possibility of pesticide contamination of groundwater, a major source of drinking water for rural populations that was once thought to be invulnerable to pesticides (U.S. Office of Technology Assessment, 1990). Scientific evidence indicates that groundwater contamination is due, in part, to agricultural pesticides that leach down through the soil (Nielsen and Lee, 1987). In general, pesticides that are more water soluble, less likely to be sorbed to soil particles, or more persistent (resistant to degradation) tend to leach into groundwater. In 1988, EPA detected 46 pesticides in groundwater from 26 states (U.S. Environmental Protection Agency, 1988) but was unable to determine whether the contamination resulted from normal use or misuse of pesticides (U.S. Office of Technology Assessment, 1990). In addition, the potential effects of groundwater contamination on health and the environment are incompletely understood. Nonetheless, experience indicates that cleaning up such contamination is difficult and costly (National Research Council, 1993a; U.S. Environmental Protection Agency, 1987).
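As a rough illustration of how persistence and sorption combine to determine leaching risk, the sketch below computes the Groundwater Ubiquity Score (GUS) developed by D. I. Gustafson, a screening index that is not part of this report and is offered only as an aside: GUS = log10(soil half-life in days) × (4 - log10(Koc)), where Koc is the soil organic-carbon sorption coefficient. Water solubility is not an explicit input. The 2.8 and 1.8 cutoffs are the commonly cited thresholds; the half-lives and Koc values in the example are hypothetical and do not describe any particular pesticide.

```python
import math


def gus_index(half_life_days: float, koc: float) -> float:
    """Groundwater Ubiquity Score: log10(half-life) * (4 - log10(Koc)).

    Higher persistence (longer half-life) raises the score; stronger
    sorption to soil organic carbon (larger Koc) lowers it.
    """
    if half_life_days <= 0 or koc <= 0:
        raise ValueError("half-life and Koc must both be positive")
    return math.log10(half_life_days) * (4.0 - math.log10(koc))


def leaching_class(gus: float) -> str:
    """Classify a GUS value using the commonly cited 1.8 / 2.8 thresholds."""
    if gus > 2.8:
        return "likely leacher"
    if gus < 1.8:
        return "unlikely leacher"
    return "transitional"


if __name__ == "__main__":
    # Hypothetical compounds: (description, soil half-life in days, Koc in mL/g)
    examples = [
        ("persistent, weakly sorbed", 100, 10),
        ("persistent, moderately sorbed", 100, 1000),
        ("short-lived, strongly sorbed", 10, 10000),
    ]
    for name, t_half, koc in examples:
        score = gus_index(t_half, koc)
        print(f"{name}: GUS = {score:.2f} ({leaching_class(score)})")
```

The index is only a screening tool; actual leaching also depends on the soil, site, climate, and management factors discussed above.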

Pesticides can be both acutely and chronically toxic to humans exposed to residues in the environment, drinking water, or food (National Research Council, 1986a, 1987, 1993b). Residues have frequently been detected in soil, surface water, groundwater, and food (Baker and Richards, 1989; Hallberg, 1989; Holden et al., 1992; National Research Council, 1986a, 1987, 1993b; Thurman et al., 1991; U.S. Environmental Protection Agency, 1990).

The acute effects of a single exposure to high concentrations of pesticides have been documented in clinical and epidemiological studies (National Research Council, 1993b) and are of particular concern for those who apply pesticides. Acute effects can include skin or eye injury, dermal sensitization, observable neurotoxic behavioral changes, and even death (National Research Council, 1993b). Acute effects from eating contaminated food are also possible if pesticides are applied at rates that leave residues above legally mandated tolerances, or if residues of several pesticides with the same mode of action together exceed those tolerances (National Research Council, 1993b).
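The idea that several residues can "together" exceed a tolerance even when each is individually below its own limit can be illustrated with a simple sum of tolerance fractions. The sketch below assumes simple dose additivity among pesticides sharing one mode of action; it is a hypothetical illustration, not a regulatory procedure described in this report, and the pesticide names and residue values are made up.

```python
def combined_tolerance_fraction(
    residues: dict[str, float], tolerances: dict[str, float]
) -> float:
    """Sum of residue/tolerance ratios for pesticides assumed to share a mode of action.

    A total above 1.0 means the combined residues exceed the tolerance
    under the (assumed) dose-additivity model, even if no single ratio does.
    """
    return sum(residues[name] / tolerances[name] for name in residues)


if __name__ == "__main__":
    # Hypothetical residues and tolerances (ppm) for two same-mode-of-action pesticides
    residues = {"pesticide_A": 0.6, "pesticide_B": 0.5}
    tolerances = {"pesticide_A": 1.0, "pesticide_B": 1.0}
    each_below = all(residues[n] < tolerances[n] for n in residues)
    total = combined_tolerance_fraction(residues, tolerances)
    print(f"each below its own tolerance: {each_below}; combined fraction = {total:.2f}")
```

Here each residue is within its own limit, yet their combined fraction is about 1.1, which is the situation the paragraph above describes.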

The chronic effects of pesticides, caused by exposure at concentrations below those that cause acute effects, are more difficult to predict or detect in clinical or epidemiological studies. Certain tumors appear to be more common among farmers than in the population at large, an observation that prompted a number of epidemiologic studies by federal and medical agencies. According to studies conducted by the Iowa Institute of Agricultural Medicine and the National Cancer Institute, cancer mortality among Midwestern farmers appears to be associated with corn production. Cancer mortality in the southeastern United States has also shown a positive association with the intensity of cotton and vegetable farming. The National Cancer Institute is studying the incidence of leukemia and non-Hodgkin's lymphoma among men from Minnesota, Iowa, and Nebraska. Increased cancer rates have been the primary health concern related to long-term occupational and dietary exposure to pesticides, but neurological, immunological, reproductive, and developmental effects are now being included in chronic risk assessments (National Research Council, 1986a, 1993b). Most recently, the potential for differential risks to infants and children from exposure to pesticides has been highlighted (Guzelian et al., 1992; National Research Council, 1993b).

Since broad-spectrum chemical pesticides were introduced and came into widespread use, their chronic and acute risks to human and environmental health have been documented. The public increasingly prefers pest control systems that minimize these risks.

TIME TO REASSESS AND PLAN

To ensure the availability of safe, profitable, and durable solutions to pest problems in the next century, planning must begin now. The combined effects of resistance, escalating costs of developing new compounds, and pesticide-induced pest outbreaks seriously impede agriculture's ability to manage pests economically and safely using current broad-spectrum, chemical-dominated approaches. Because growers need nontoxic and low-cost controls for pest problems, researchers have been exploring a wide range of alternative management practices, including traditional cultural controls (Ferris, 1992). Reliable, safe, economic, and ecologically responsible solutions to pest problems are likely to be readily adopted by growers; therefore, the return on public or private investment in research directed at discovering new management strategies is likely to be substantial.

Planning for the future, however, requires a vision of the needs of society and of the scientific progress required to meet those needs. Chapter 2 describes that vision and strategies for implementing this new approach to pest management.