13

Conclusions and Recommendations

The area of human behavior representation is at least as important to the mission of the Defense Modeling and Simulation Office (DMSO) as the area of modeling of the battlefield environment, yet at the time of this study, human behavior representation was receiving only a fraction of the resources assigned to modeling of the environment. The panel's review has indicated that there are many areas today in which models of human behavior are needed and in which the models being used are inadequate. There are many other areas in which there is a clear need for models, but none exist. Successful development of the needed models will require the sustained application of resources both to the infrastructure supporting human behavior representation and to the development of the models themselves.

The panel believes continued advances in the science and practice of human behavior representation will require effort on many fronts from many perspectives. We recognize that DMSO is primarily a policy-making body and that, as an organization, it does not sponsor or execute specific modeling projects. In those areas in which DMSO does not participate directly, we recommend that it advocate an increased focus by the individual services on the objective of incorporating enhanced human behavior representation in the models used within the military modeling and simulation community.

The overall recommendations of the panel are organized into two major areas: a programmatic framework and infrastructure/information exchange. The next section presents the framework for a program plan through which the goal of developing models of human behavior to meet military needs can be realized. The section that follows presents recommendations in the area of infrastructure and information exchange that can help promote vigorous and informed development of such models. Additional recommendations that relate to specific topics addressed in the report are presented as goals in the respective chapters.

A FRAMEWORK FOR THE DEVELOPMENT OF MODELS OF HUMAN BEHAVIOR

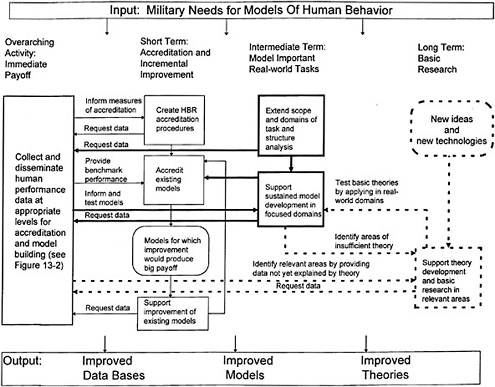

The panel has formulated a general framework that we believe can guide the development of models of human behavior for use in military simulations. This framework reflects the panel's recognition that given the current state of model development and computer technology, it is not possible to create a single integrative model or architecture that can meet all the potential simulation needs of the services. Figure 13.1 presents the elements of a plan for DMSO to apply in pursuing the development of models of human behavior to meet short-, intermediate-, and long-term goals. For the short term (as shown on the left side of the figure), the panel believes it is important to collect real-world, war-game, and laboratory data in support of the development of new models and the development and application of human model accreditation procedures. For the intermediate term (as shown in the center of the figure), we believe DMSO should extend the scope of useful task analysis and encourage sustained model development in focused areas. And for the long term (as shown on the right-hand side of the figure), we believe DMSO should advocate theory development and behavioral research that can lead to future generations of models of human and organizational behavior. Together, as illustrated in Figure 13.1, these initiatives constitute a program plan for human behavior representation development for many years to come.

Work on achieving these short-, intermediate-, and long-term goals should begin concurrently. The panel recommends that these efforts proceed in accordance with four themes, listed below in order of priority and discussed in more detail in the following subsections:

- Collect and disseminate human performance data.
- Create accreditation procedures for models of human behavior.
- Support sustained model development in focused domains.
- Support theory development and basic research in relevant areas.

Collect and Disseminate Human Performance Data

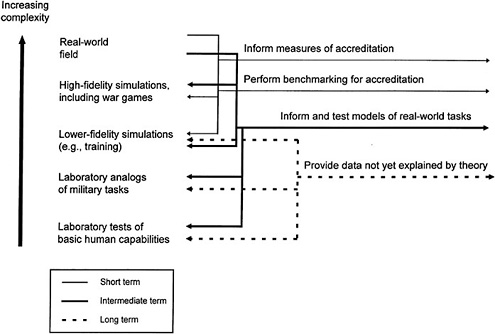

As indicated on the left of Figure 13.1, the panel has concluded that all levels of model development depend on the sustained collection and dissemination of human behavior data. Figure 13.2 expands on this requirement by elaborating the range of data collection needs, which extend from real-world field data to laboratory studies of basic human capacities. Examples of salient field research are studies of the effects of fatigue on troop movement speeds and the gathering of quantitative data on the communication patterns within and across echelons in typical joint task force command and control exercises. Examples of laboratory work that has importance for real-world contexts are studies of the orientation of behavior to coordinated acoustic and visual stimuli and studies of the relationship between risk taking and information uncertainty. Between these extremes there is a need for data derived from high-fidelity simulations and war games and for data from laboratory analogs to military tasks. Examples of high-fidelity data of value to the modeler are communication logs and mission scenarios.

Chapter 12 emphasizes the need for detailed task analyses, but such analyses are not sufficient for the development of realistic human behavior representations. There is also a need for the kind of real-world military data that reflect, in context, the way military forces actually behave, are coordinated, and communicate. There are some good data on how fast soldiers can walk on various kinds of terrain and how fast tanks can move, but data are sparse on such things as the accuracy of localization of gun shots in the battlefield, the time it takes to communicate a message from one echelon to the next by various media, and the flexibility of different command and control structures.

These data are needed for a variety of purposes, as indicated in Figure 13.2: to support the development of measures of accreditation, to provide benchmark performance for comparison with model outputs in validation studies, to help set the parameters of the actual models of real-world tasks and test and evaluate the efficacy of those models, and to challenge existing theory and lead to new conceptions that will provide the grist for future models. It is not enough simply to advocate the collection of these data. There also must be procedures to ensure that the data are codified and made available in a form that can be utilized by all the relevant communities—from military staffs who need to have confidence in the models to those in the academic sphere who will develop the next generation of models. Some of these data, such as communication logs from old war games, already exist; however, they need to be categorized, indexed, and made generally available. Individual model and theory builders should be able to find out what data exist and obtain access to specific data on request.

Create Accreditation Procedures for Models of Human Behavior

The panel has observed very little quality control among the models that are used in military simulations today. Just as there is a need for accreditation of constructive models that are to be used in training and doctrine development, there is a need for accreditation of models of human and organizational behavior. DMSO should develop model accreditation procedures specifically for this purpose.

One component needed to support robust accreditation procedures is quantitative measures of human performance. In addition to supporting accreditation, such measures would facilitate evaluation of the cost-effectiveness of alternative models so that resource allocation judgments could be made on the basis of data rather than opinion. The panel does not believe that the people working in the field are able to make such judgments now, but DMSO should promote the development of simulation performance metrics that could be applied equivalently to live exercises and simulations. These metrics would be used to track the relative usefulness (cost-effectiveness, staff utilization efficiency, elapsed time, training effectiveness, transfer-of-training effectiveness, range of applicability) of the available models (across different levels of human behavior fidelity and psychological validity) as compared with performance outcomes obtained from live simulations and exercises. In the initial stages, these metrics would be focused on evaluation of particular models and aspects of exercises; in the long term, however, the best of these metrics should be selected for more universal application. The goal would be to create state-of-health statistics that would provide quantitative evidence of the payoff for investments in human behavior representation. These statistics would show where the leverage is for the application of models of human behavior to new modeling needs. For this goal to be achieved, there must be sustained effort that is focused on quantitative performance metrics and that can influence evaluation across a range of modeling projects.

There are special considerations involved in human behavior representation that warrant having accreditation procedures specific to this class of behavioral models. The components of accreditation should include those described below.

Demonstration/Verification. Provide proof that the model actually runs and meets the design specifications. This level of accreditation is similar to that for any other model, except that verification must be accomplished with human models in the loop, and to the extent that such models are stochastic, will require repeated runs with similar but not identical initial conditions to verify that the behavior is as advertised.
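As an illustration of the repeated-run verification described above, the following is a minimal sketch in Python, not a procedure prescribed by the panel. The model `toy_movement_model`, its noise level, the 20 km baseline distance, and the specification bounds are all hypothetical placeholders chosen only to show the pattern: run a stochastic model many times with slightly perturbed initial conditions and check that every output stays within the design specification.

```python
import random
import statistics


def toy_movement_model(distance_km, base_speed_kmh, rng):
    """Hypothetical stochastic model: hours for a unit to cover a distance."""
    # Speed varies from run to run; the +/-10% spread is an illustrative assumption.
    speed = base_speed_kmh * rng.uniform(0.9, 1.1)
    return distance_km / speed


def verify_repeated_runs(model, n_runs, spec_low, spec_high, seed=0):
    """Run the model n_runs times with similar but not identical inputs.

    Returns (all_within_spec, mean_output, stdev_output).
    """
    rng = random.Random(seed)  # fixed seed so the verification is repeatable
    outputs = []
    for _ in range(n_runs):
        # Perturb the initial condition: jitter a 20 km baseline by up to 5%.
        distance = 20.0 * rng.uniform(0.95, 1.05)
        outputs.append(model(distance, 4.0, rng))
    all_within = all(spec_low <= x <= spec_high for x in outputs)
    return all_within, statistics.mean(outputs), statistics.stdev(outputs)


if __name__ == "__main__":
    ok, mean_h, sd_h = verify_repeated_runs(toy_movement_model, 200, 4.0, 6.0)
    print(f"within spec: {ok}, mean hours: {mean_h:.2f}, stdev: {sd_h:.2f}")
```

In practice the specification bounds would come from the model's design documents, and the number of runs would be chosen to give adequate confidence in the observed spread.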

Validation. Show that the model accurately represents behavior in the real world under at least some conditions. Validation with full generality is not possible for models of this complexity; rather, the scope and level of the required validation should be very focused and matched closely to the intended uses of each model. One approach to validation is to compare model outputs with data collected during prior live simulations conducted at various military training sites (e.g., the National Training Center, Red Flag, the Joint Readiness Training Center). Another approach is to compare model outputs with data derived from laboratory experiments or from various archival sources. Other approaches are discussed in Chapter 12.
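One simple way to make the comparison between model outputs and exercise data quantitative is to compute summary error measures. The sketch below is illustrative only: the function names and the tolerance parameter `max_rmse` are assumptions introduced here, and the choice of mean bias and root-mean-square error is one option among many rather than a measure recommended in this report.

```python
import math


def validation_metrics(model_outputs, observed):
    """Return (mean_bias, rmse) for paired model outputs and observations."""
    if len(model_outputs) != len(observed) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [m - o for m, o in zip(model_outputs, observed)]
    mean_bias = sum(errors) / len(errors)  # positive means the model over-predicts
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return mean_bias, rmse


def within_tolerance(model_outputs, observed, max_rmse):
    """Hypothetical acceptance check: is the RMSE at or below a stated tolerance?"""
    _, rmse = validation_metrics(model_outputs, observed)
    return rmse <= max_rmse
```

Consistent with the point that validation should be matched to intended use, the tolerance would be set separately for each application of a model rather than once for all uses.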

As previously indicated, the procedures for conducting validation of models as complex as those involving human behavior are not well developed or understood; however, it is important to move toward more quantitative evaluation, rather than simply relying on subject matter experts to judge that a model is "good enough." One reason these models are difficult to validate is that they are so complex that all their aspects cannot be validated within a reasonable amount of time. A second reason is that these models are often dynamic, predicting changes over time, but longitudinal data on individuals and units have rarely been collected because doing so is extremely time intensive and preempts valuable resources. Unit-level models are often particularly difficult to validate in depth because of the large amounts of data required.

Finally, to bring objectivity and specialized knowledge to the validation process, the panel suggests that the validation team include specialists in modeling and validation who have not participated in the actual model development.

Analysis. Describe the range of predictions that can be generated by the model. This information is necessary to define the scope of the model; it can also be used to link this model with others. Analysis is hampered by the complexity of these models, which makes it difficult to extract the full range of behavior covered. Thus investment in analysis tools is needed to assist in this task.

Documentation Requirements. The accreditation procedures should include standards for the documentation that explains how to run and modify the model and a plan for maintaining and upgrading the model. Models will be used only if they are easy to run and modify to meet the changing needs of the user organization. Evaluation of the documentation should include exercising specific scenarios to ensure that the documentation facilitates the performance of the specified modeling tasks. It should also be noted that models that are easy to use and modify run the danger of being used in situations where they are neither validated nor appropriate. The documentation for a model should indicate clearly the model's scope of application and the situations in which it has been validated. In the case of models that learn from simulator experience, a record of past engagement history should be included with the model's documentation. Specific limitations of the models should be listed as well. Finally, as models are modified, they should be revalidated and reaccredited.

As a high priority, the panel recommends that the above accreditation procedures be applied to military models of human behavior that are either currently in use or being prepared for use, most of which have not had the benefit of rigorous quantitative validation, and that the results of these analyses be used to identify high-payoff areas for improvement. Significant improvements may thereby be achievable relatively quickly for a small investment. The analysis results can also be used to identify the most successful models and the resources and methodologies responsible for their success, thus providing a starting point for determining the resources and methods required for successful modeling efforts. The accreditation procedures described here can lead directly to a program to make the identified improvements to existing models with an immediate payoff.

Support Sustained Model Development in Focused Domains

Several specific activities are associated with model development. They include the following.

Develop Task Analysis and Structure

It is important to continue and expand the development of detailed descriptions of military contexts—the tasks, procedures, and structures (such as common models of mission space [CMMS]; command, control, communications, and intelligence [C3I] architectures; and military scenarios) that provide the structure for modeling human behavior at the individual, unit, and command levels. The development of combat instruction sets at the individual level, CMMS at the unit level, and joint mission essential task lists (JMETLs) at the joint service level represents a starting point for understanding the tasks to be incorporated into models and simulations—but only a starting point. For example, combat instruction sets need to be extended to all services, deeper task analyses are required for model development purposes, and existing descriptions do not cover typical requirements for modeling large units. Databases describing resources, platforms, weapon systems, and weather conditions need to be constructed. There is also a need to codify the variants in organizational structures in the form of a taxonomy. At the individual level, combat instruction sets need to move from describing lower-level physical tasks, to describing higher-level perceptual and cognitive tasks, to describing even higher-level unit tasks and the assignment of subtasks to subunits. Since task analysis is such a knowledge-intensive operation, there is a need to develop tools for knowledge assessment and knowledge acquisition for these higher-level individual- and unit-level tasks.

Further, more in-depth structural analysis of existing C3I architectures is required as a basis for improving models of command and control. To accomplish such structural analysis, there is a need to develop a data archive describing the current architectures and those being used in various simulation systems. Likewise, there is a need for a common scheme for describing and providing data on these architectures. Since structural analysis is a data-intensive operation, it has to be supported with data collection and visualization tools. Finally, there is a need for a data archive of typical scenarios for single-service and joint-service operations in a variety of circumstances, including operations other than war. These scenarios could be used as the tasks against which to examine new models.

Establish Model Purposes

Model building currently requires scientific judgment. The modeler must establish very explicitly the purpose(s) for which a model is being developed and apply discipline to enhance model fidelity only to support those purposes. For example, in early developments, the primary purpose was to teach military tactics to teams. Instead of artillery units being simulated realistically, a fire control officer sitting at a computer terminal with a telephone received and executed requests for fire. From the battlefield perspective, the tactical effects were much like those of real artillery batteries.

Support Focused Modeling Efforts

Once high-priority modeling requirements have been established, we recommend sustained support in focused areas for human behavior model development that is responsive to the methodological approach outlined in Chapter 12. Soar-intelligent forces (IFOR) and the virtual design team are examples of model development in focused areas at the individual and unit levels, respectively. In both cases, specific goals were set, and significant resources were made available to carry out the work. In the case of the virtual design team, many of these resources came from the private sector. We are not necessarily advocating the funding of either of these systems. Rather, we mention them only to point out that high levels of sustained funding and large teams of researchers are necessary to develop such models.

In the course of this study, the panel examined the current state of integrated computational models of human behavior and of human cognitive processes that might lead to the improved models of the future. Chapter 3 describes several candidate integrative models for individual behavior that represent the best approaches currently available, while Chapter 10 describes such models for larger, unit-level behavior. Although these examples may be viewed as very early steps in the field and will surely be supplanted by more advanced and more complex architectures over time, they are nevertheless useful, promising, and a good starting point. However, given the interest in a wide variety of modeling goals, there is currently no single representation architecture suited to all individual human or organizational modeling needs. Each integrated model we have described implies its own architecture, and each chapter on a particular cognitive content area suggests specific alternative modeling methodologies. It is not likely, even in the future, that any single architecture will address all modeling requirements.

On the other hand, we recognize the value of having a unitary architecture. Each new architecture requires an investment in infrastructure that is beyond the investment in specific models to be built using that architecture. Having an architecture that constrains development can promote interoperability of component modeling modules. As applications are built with a particular architecture, the infrastructure can become more robust, and some applications can begin to stand on the shoulders of others. Development can become synergistic and therefore more efficient.

At this point in the maturity of the field, it would be a mistake for the military services to make a choice of one or another architecture to the exclusion of others. It might be thought that a modular approach, in which modules are selected within each architecture and are then imported for use within other architectures, is a sensible compromise. However, a choice of modular boundaries is a choice of model conception, and one the leaders in the field are not yet ready to provide. Thus we recommend that the architectures pursued within the military focus initially on the promising approaches identified in Chapter 3. This is an especially important point because the time scale for architecture development and employment is quite long, and prior investment in particular architectures can continue to produce useful payoffs for a long time after newer and possibly more promising architectures have appeared and started to undergo development. On the other hand, this recommendation is in no way meant to preclude exploration of alternative architectures. Indeed, resources need to be devoted to the exploration of alternative architectures, and in the medium and especially long terms, such research will be critical to continued progress.

Employ Interdisciplinary Teams

As suggested in the panel's interim report, it is very important that model development be accomplished by interdisciplinary teams. The scope of expertise that is required includes military specialists and researchers and modelers with specific expertise in cognitive psychology, social psychology, sociology, organizational behavior, computer science, and simulation technology. Each development team should be structured such that the expertise needed for the specific task is present. For example, a team building a unit-level model should have a member with sociological, group, or organizational expertise. Similarly, the systematic joint survey recommended in Chapter 6 to identify significant decision making research topics would require intensive collaboration between military simulation experts and decision behavior researchers. Without such mixes of appropriate expertise, it is likely that modelers will simply reinvent prior art or pursue unproductive alternatives.

Benchmark

We recommend the conduct of periodic modeling exercises throughout model development to benchmark the progress being made and enable a focus on the most important shortfalls of the prototype models. These exercises should be scheduled so as not to interfere with further development advances and should take advantage of interpretation, feedback, and revision based on previous exercises.

Promote Interoperability

In concert with model development, DMSO should evolve policy to promote interoperability among models representing human behavior. Although needs for human behavior representation are common across the services, it is simplistic to contemplate a single model of human behavior that could be used for all military simulation purposes, given the extent to which human behavior depends on both task and environment. Therefore, the following aspects of interoperability should be pursued:

- Parallels among simulation systems that offer the prospect of using the same or similar human behavior representation modules in more than one system
- The best means for assembling and sharing human behavior representation modules among simulation system developers
- Procedures for extracting components of human behavior representation modules that could be used in other modules
- Procedures for passing data among modelers
- Modules for measuring individual- and unit-level data that could be used in and calculated by more than one system

One cannot assume that integrated, interoperable models can be achieved merely by interconnecting component modules. Even differing levels of model sophistication within a specific domain may require different modeling approaches. One must generate integrative models at the level of principles and theory and adapt the implementation as needed to accommodate the range of modeling approaches.

Employ Substantial Resources

Improving the state of human behavior representation will require substantial resources. Even when properly focused, this work is at least as resource demanding as environmental representation. Further, generally useful unit-level models are unlikely to emerge simply through minor adjustments in integrative individual architectures. Integrative unit-level architectures are currently being developed, but lag behind those at the individual level both in their comprehensiveness and in their direct applicability to military issues. There are two reasons for this. First, moving from the individual to the unit level is arguably an increase in complexity. Second, far more resources and researchers have been focused on individual-level models. It is important to recognize that advances at the unit level comparable to those at the individual level will require the expenditure of comparable resources and time.

Support Theory Development and Basic Research in Relevant Areas

As illustrated in the discussion of cognition and command and control behavior in earlier chapters of this report, there are many areas in which adequate theory either is entirely lacking or has not been integrated to a level that makes it directly applicable to the needs of human behavior representation. There is a need for continued long-term support of theory development and basic research in areas such as decision making, situation awareness, learning, and organizational modeling. It would be short-sighted to focus only on the immediate payoffs of modeling. Support for future generations of models needs to be sustained as well. It might be argued that the latter is properly the role of the National Science Foundation or the National Institutes of Health. However, the kinds of theories needed to support human behavior representation for military situations are not the typical focus of these agencies. Their research tends to emphasize toy problems and predictive modeling in restricted experimental paradigms for which data collection is relatively easy. To be useful for the representation of military human behavior, the research needs to be focused on the goal of integration into larger military simulation contexts and on specific military modeling needs.

RECOMMENDATIONS FOR INFRASTRUCTURE AND INFORMATION EXCHANGE

The panel has identified a set of actions we believe are necessary to build consensus more effectively within the Department of Defense modeling and simulation community on the need for and direction of human performance representation within military simulations. The focus is on near-term actions DMSO can undertake to influence and shape modeling priorities within the services. These actions are in four areas: collaboration, conferences, interservice communication, and education/training.

Collaboration

The panel believes it is important in the near term to encourage collaboration among modelers, content experts, and behavioral and social scientists, with emphasis on unit/organizational modeling, learning, and decision making. It is recommended that specific workshops be organized in each of these key areas. We view the use of workshops to foster collaboration as an immediate and low-cost step to promote interchange within each of these areas and provide opportunities for greater interservice understanding.

Conferences

The panel recommends an increase in the number of conferences focused on the need for and issues associated with human behavior representation in military models and simulations. The panel believes the previous biennial conferences on computer-generated forces and behavioral representation have been valuable, but could be made more useful through changes in organization and structure. We recommend that external funding be provided for these and other such conferences and that papers be submitted in advance and refereed. The panel believes organized sessions and tutorials on human behavior representation with invited papers by key contributors from the various disciplines associated with the field can provide important insights and direction. Conferences can also provide a proactive stimulus for the expanded interdisciplinary cooperation the panel believes is essential for success in this arena.

Expanded Interservice Communication

There is a need to actively promote communication across the services, model developers, and researchers. DMSO can lead the way in this regard by developing a clearinghouse for human behavior representation, perhaps with a base in an Internet web site, with a focus on information exchange. This clearinghouse might include references and pointers to the following:

- Definitions
- Military task descriptions
- Data on military system performance
- Live exercise data for use in validation studies
- Specific models
- Resource and platform descriptions
- DMSO contractors and current projects
- Contractor reports
- Military technical reports

Education and Training

The panel believes that opportunities for education and training in the professional competencies required for human behavior representation at a national level are lacking. We recommend that graduate and postdoctoral fellowships in human behavior representation and modeling be provided. Institutions wishing to offer such fellowships would have to demonstrate that they could provide interdisciplinary education and training in the areas of human behavior representation, modeling, and military applications.

A FINAL THOUGHT

The modeling of cognition and action by individuals and groups is quite possibly the most difficult task humans have yet undertaken. Developments in this area are still in their infancy. Yet important progress has been and will continue to be made. Human behavior representation is critical for the military services as they expand their reliance on the outputs from models and simulations for their activities in management, decision making, and training. In this report, the panel has outlined how we believe such modeling can proceed in the short, medium, and long terms so that DMSO and the military services can reap the greatest benefit from their allocation of resources in this critical area.