6

Promotion and Retention

The typical organization of American schools into grades by the ages of their students is challenged by large variations in achievement within ages and grades. The resulting tension is reduced somewhat by overlap in the curriculum from one grade to the next. It is also reduced by strategies for grouping students by observed levels of readiness or mastery: these include special education placement, academic tracking, extended kindergarten, and grade retention. The uses of tests in tracking and with students with disabilities are discussed in Chapters 5 and 8, respectively. The use of testing to support the strategies of extended kindergarten and grade retention is treated in this chapter.

Social Promotion, Retention, and Testing

Much of the current public discussion of high-stakes testing of individual students is motivated by calls for "an end to social promotion." The committee therefore began by looking for data on the actual extent of promotion and retention, on the prevalence of test use for making those decisions, and on trends and differentials in those data.

In a memorandum for the secretary of education, President Clinton (1998:1–2) wrote that he had "repeatedly challenged States and school districts to end social promotions—to require students to meet rigorous academic standards at key transition points in their schooling career, and to end the practice of promoting students without regard to how much they have learned…. Students should not be promoted past the fourth grade if they cannot read independently and well, and should not enter high school without a solid foundation in math. They should get the help they need to meet the standards before moving on."

The administration's proposals for educational reform strongly tie the ending of social promotion to early identification and remediation of learning problems. The president calls for smaller classes, well-prepared teachers, specific grade-by-grade standards, challenging curriculum, early identification of students who need help, after-school and summer school programs, and school accountability. He also calls for "appropriate use of tests and other indicators of academic performance in determining whether students should be promoted" (Clinton, 1998:3). The key questions are whether testing will be used appropriately in such decisions and whether early identification and remediation of learning problems will take place successfully.

The president is by no means alone in advocating testing to end social promotion. Governor Bush of Texas has proposed that "3rd graders who do not pass the reading portion of the Texas Assessment of Academic Skills would be required to receive help before moving to regular classrooms in the 4th grade. The same would hold true for 5th graders who failed to pass reading and math exams and 8th graders who did not pass tests in reading, math, and writing. The state would provide funding for locally developed intervention programs" (Johnston, 1998). New York City Schools Chancellor Rudy Crew has proposed that 4th and 7th graders be held back if they fail a new state reading test at their grade level, beginning in spring 2000. Crew's proposal, however, would combine testing of students with "a comprehensive evaluation of their course work and a review of their attendance records," and the two-year delay in implementation of the tests would permit schools "to identify those students deemed most at risk and give them intensive remedial instruction" (Steinberg, 1998a).

Test-based requirements for promotion are not just being proposed; they are being implemented. According to a recent report by the American Federation of Teachers (1997b), 46 states either have or are in the process of developing assessments aligned with their content standards. Seven of these states, up from four in 1996, require schools and districts to use the state standards and assessments in determining whether students should be promoted into certain grades.1 At the same time, Iowa and, until recently, California have taken strong positions against grade retention, based on research or on the reported success of alternative intervention programs (George, 1993; Iowa Department of Education, 1998).

In 1996–1997 the Chicago Public Schools instituted a new program to end social promotion. Retention decisions are now based almost entirely on student performance on the Iowa Test of Basic Skills (ITBS) at the end of grades 3, 6, and 8. Students who fall below specific cutoff scores at each grade level are required to attend highly structured summer school programs and to take an alternative form of the test at summer's end.2 At the end of the 1996–1997 school year, 32 percent, 31 percent, and 21 percent of students failed the initial examination at grades 3, 6, and 8, respectively. Out of 91,000 students tested overall, almost 26,000 failed. After summer school, 15 percent, 13 percent, and 8 percent of students were retained at the three grade levels (Chicago Public Schools, 1998a).3

The current enthusiasm for the use of achievement tests to end social promotion raises three concerns. First, much of the public discussion and some recently implemented or proposed testing programs appear to ignore existing standards for appropriate test use. For that reason, much of this chapter is devoted to a review and exposition of the appropriate use of standardized achievement testing in the context of promotion or retention decisions about individual students.

Second, there is persuasive research evidence that grade retention typically has no beneficial academic or social effects on students.4 The past failures of grade retention policies need not be repeated. But they provide a cautionary lesson: making grade retention—or the threat of retention—an effective educational policy requires consistent and sustained effort.

Third, public discussion of social promotion has made little reference to current retention practices—in which a very large share of American schoolchildren are already retained in grade. In part, this is because of sporadic data collection and reporting, but far more consistent statistical data are available about the practice of grade retention than, say, about academic tracking. It is possible to describe rates, trends, and differentials in grade retention using data from the U.S. Bureau of the Census, but these data have not been used fully to inform the public debate. For this reason, the committee has assembled and analyzed the available data. Our findings about grade retention are summarized here and elaborated in the appendix to Chapter 6.

Trends and Differentials in Grade Retention

No national or regional agencies monitor social promotion and grade retention. Occasional data on retention are available for some states and localities, but coverage is sparse, and little is known about the comparability of these data (Shepard and Smith, 1989). The committee asked every state education agency to provide summaries of recent data on grade retention, but only 22 states, plus the District of Columbia, provided data on retention at any grade level. Many states did not respond, and 13 states collect no data at all on grade retention. Among responding states, retention tends to be high in the early primary grades—although not in kindergarten—and in the early high school years, and retention rates are highly variable across states.

The committee's main source of information on levels, trends, and differentials in grade retention is the Current Population Survey (CPS) of the U.S. Bureau of the Census. Using published data from the annual October School Enrollment Supplement of the CPS, it is possible to track the distribution of school enrollment by age and grade each year for groups defined by sex and race/ethnicity. These data have the advantage of comparable national coverage from year to year, but they say nothing directly about educational transitions or about the role of high-stakes testing in grade retention. We can only infer the minimum rate of grade retention by observing changes in the enrollment of children below the modal grade level for their age from one calendar year to the next. Suppose, for example, that 10 percent of 6-year-old children were enrolled below the 1st grade in October 1994. If 15 percent of the same cohort were enrolled below the 2nd grade in October 1995, when they were 7 years old, we would infer that at least 5 percent were held back in the 1st grade between 1994 and 1995.
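The inference described above is a simple subtraction, and a minimal sketch may make the logic concrete. The function name is hypothetical, and the figures are the illustrative ones from the text rather than actual CPS estimates. The result is only a lower bound because other movements in and out of below-grade enrollment can offset retention.

```python
def minimum_retention_rate(below_modal_prev, below_modal_next):
    """Lower bound on the share of a cohort held back between two Octobers.

    Both arguments are percentages of the same birth cohort enrolled below
    the modal grade for their age, measured one year apart.
    """
    # A decline in below-grade enrollment gives no evidence of retention,
    # so the bound is floored at zero.
    return max(0.0, below_modal_next - below_modal_prev)


# Illustrative figures from the text: 10 percent below grade at age 6,
# 15 percent below grade at age 7.
rate = minimum_retention_rate(10.0, 15.0)
print(f"At least {rate:.0f} percent of the cohort was held back")
```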

Extended Kindergarten Attendance

Over the past two decades, attendance in kindergarten has been extended to two years for many children in American schools,5 with the consequence that age at entry into graded school has gradually crept upward and become more variable. There is no single name for this phenomenon, nor are there distinct categories for the first and second years of kindergarten in Census enrollment data. As Shepard (1991) reports, the names for such extended kindergarten classrooms include "junior-first," "prefirst," "transition," and "readiness room." Fragmentary reports suggest that, in some places, kindergarten retention may have been as high as 50 percent in the late 1980s (Shepard, 1989, 1991). The degree to which early retention decisions originate with parents—for example, to increase their children's chances for success in athletics—rather than with teachers or other school personnel is not known. Moreover, there are no sound national estimates of the prevalence of kindergarten retention, and none of the state data in Appendix Table 6-1 indicate exceptionally high kindergarten retention rates.

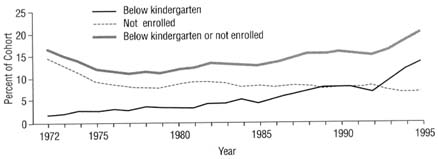

The Census Bureau's statistics show that, from the early 1970s to the

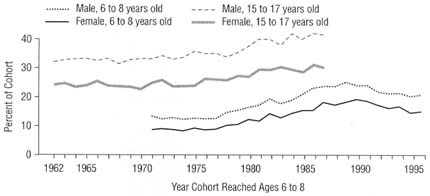

late 1980s, age at entry into 1st grade gradually increased, but for the past decade there has been little change. Among 6-year-old boys, only 8 percent had not yet entered the 1st grade in 1971; in 1987 the number was 22 percent, and in 1996 it was almost that high—21 percent. Among 6-year-old girls, only 4 percent had not yet entered 1st grade in 1971; the number grew to 16 percent in 1987 and to 17 percent in 1996. Although boys are consistently more likely than girls to enter 1st grade after age 6, there are only small differences among the rates for blacks, whites, and Hispanics.

One contributing factor to the rising age at entry into 1st grade has been a rising age at entry into kindergarten, which is not related to retention.6 Although it is not known how widely tests are used in assigning students to extended kindergarten, there is substantial professional criticism of the practice. According to Shepard (1991), such decisions may be based on evidence of "immaturity or academic deficiencies." Shepard adds, "Tests used to make readiness and retention decisions are not technically accurate enough to justify making special placements. … Readiness tests are either thinly disguised IQ tests (called developmental screening measures) or academic skills tests…. Both types of tests tend to identify disproportionate numbers of poor and minority children as unready for school" (1991:287). Other educators, however, believe that such tests may appropriately be used for placement decisions about young children.

An advisory group of the National Educational Goals Panel has recommended against the use of standardized achievement measures to make decisions about young children or their schools: "Before age 8, standardized achievement measures are not sufficiently accurate to be used for high-stakes decisions about individual children and schools" (Shepard et al., 1998:31). This committee has reached a similar conclusion. At the same time, the advisory group encouraged one type of testing of young children: "Beginning at age 5, it is possible to use direct measures, including measures of children's learning, as part of a comprehensive early childhood system to monitor trends. Matrix sampling procedures should be used to ensure technical accuracy and at the same time protect against the misuse of data to make decisions about individual children" (Shepard et al., 1998:27).7 With young children, it is especially important to distinguish between uses of tests to monitor the progress of large groups and to make decisions about the future of individual students.

Research on kindergarten retention suggests that it carries no academic or social benefits for students. Shepard's (1991:287) review of 16 controlled studies found "no difference academically between unready children who spent an extra year before first grade and at-risk controls who went directly on to first grade." She did, however, find evidence that most children were traumatized by being held back (Shepard, 1989, 1991; Shepard and Smith, 1988, 1989). Shepard further reports that "matched schools that do not practice kindergarten retention have just as high average achievement as those that do but tend to provide more individualized instruction within normal grade placements" (Shepard, 1991:287).

In some cases, even with special treatment for retained students, they were no better off than similar students who had been promoted and given no exceptional treatment. Leinhardt (1980) compared at-risk children in a transition room who received individualized instruction in reading with a group of at-risk children who had been promoted and received no individualized instruction. The two groups performed comparably at the end of first grade, but both performed worse than a second comparison group that had been promoted and given individualized reading instruction.

Retention in the Primary and Secondary Grades

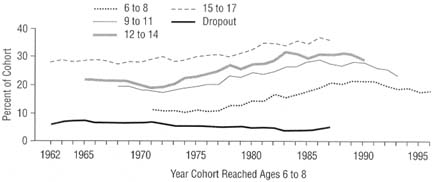

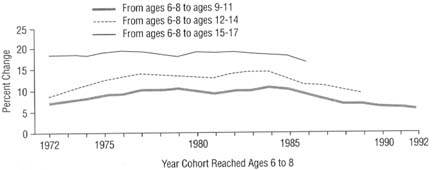

"Age-grade retardation" is a term that refers to enrollment below the modal grade level for a child's age—and no broader meaning is either intended or implied. For example, consider children who were 6 to 8 years old in 1987—the most recent birth cohort whose history can be traced all the way from ages 6 to 8 up through ages 15 to 17. At ages 6 to 8, 21 percent were enrolled below the modal grade for their age; some of this below-grade enrollment reflects differentials in age at school entry, but some represents early grade retention. By 1990, when this cohort reached ages 9 to 11, age-grade retardation grew to 28 percent, and it was 31 percent in 1993, when the cohort reached ages 12 to 14. By 1996,

when the cohort reached ages 15 to 17, the percentage who were either below the modal grade level (or had left school) was 36 percent. Almost all of the growth in retardation after ages 12 to 14, however, was due to school dropout, rather than grade retention among the enrolled. In most birth cohorts, age-grade retardation occurs mainly between ages 6 to 8 and 9 to 11 or between ages 12 to 14 and 15 to 17.

Age-grade retardation increased in every cohort that reached ages 6 to 8 from the early 1970s through the mid- to late 1980s. It increased at ages 15 to 17 for cohorts that reached ages 6 to 8 after the mid-1970s, despite a slow decline in its dropout component throughout the period. That is, grade retention increased while dropping out decreased. Among cohorts entering school after 1970, the proportion enrolled below the modal grade level was never less than 10 percent at ages 6 to 8, and it exceeded 20 percent for cohorts of the late 1980s. Age-grade retardation has declined slightly for cohorts that reached ages 6 to 8 after the mid-1980s, but rates have not moved back to the levels of the early 1970s. Overall, a large number of children are held back during elementary school. Among cohorts who reached ages 6 to 8 in the 1980s and early 1990s, age-grade retardation reached 25 to 30 percent by ages 9 to 11.

Retention After School Entry

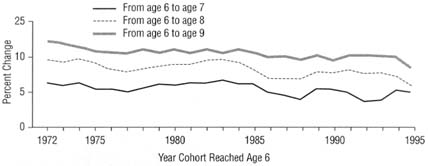

Age-grade retardation cumulates rapidly after age 6. For example, among children who were 6 years old in 1987, enrollment below the modal grade increased by almost 5 percentage points between ages 6 and 7 and by 5 percentage points more between ages 7 and 9. The trend appears to be a decline in retention between ages 6 and 7 after the early 1980s. That is, there appears to have been a shift in elementary school grade retardation downward in age from the transition between ages 6 and 7 to somewhere between ages 4 and 6.

How much retention is there after ages 6 to 8? And does the recent growth in grade retardation by ages 6 to 8 account for its observed growth at older ages? Age-grade retardation grows substantially after ages 6 to 8 as a result of retention in grade. For example, among children who reached ages 6 to 8 between 1972 and 1985, the share below the modal grade for their age had grown by almost 20 percentage points by the time they were 15 to 17 years old. Among children who reached ages 6 to 8 between the mid-1970s and the mid-1980s, age-grade retardation grew by about 10 percentage points by ages 9 to 11, and it grew by close to 5 percentage points more by ages 12 to 14. Relative to ages 6 to 8, age-grade retardation at ages 9 to 11 and at ages 12 to 14 increased for cohorts who were 6 to 8 years old in the early 1970s; it was stable from the mid-1970s to the mid-1980s; and it has declined since then. However, the gap between retention at ages 15 to 17 and that at ages 6 to 8 has been relatively stable—close to 20 percentage points—with the possible exception of a very recent downward turn. Thus, the rise in age at entry into 1st grade—which is partly due to kindergarten retention—accounts for much of the overall increase in age-grade retardation among teenagers.

In summary, grade retention is pervasive in American schools. Ending social promotion probably means retaining even larger numbers of children. Given the evidence that retention is typically not educationally beneficial—leading to lower achievement and higher dropout—the implications of such a policy are cause for concern.

Social Differences in Retention

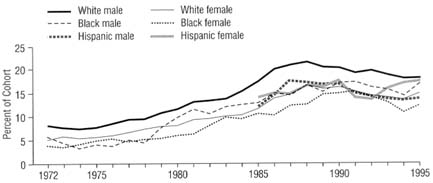

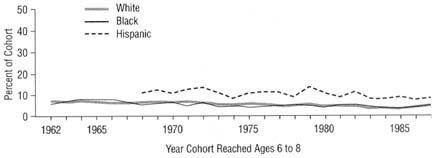

Boys are initially more likely than girls to be placed below the typical grade for their age, and they fall farther behind girls as they move through school. Overall, the sex differential gradually increases with age from 5 percentage points at ages 6 to 8 to 10 percentage points at ages 15 to 17.

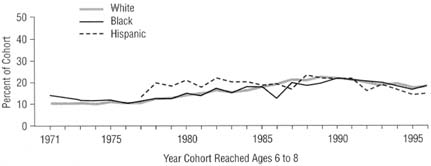

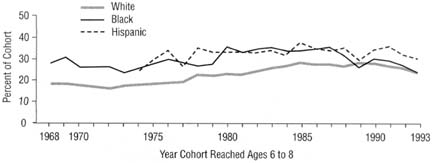

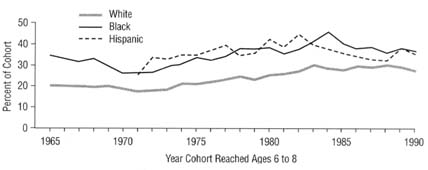

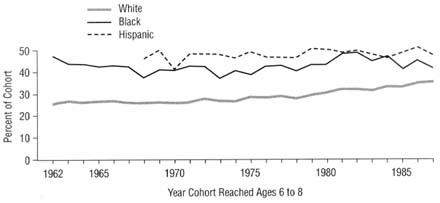

Differences in age-grade retardation by race and ethnicity are even more striking than the gender differential. Rates of age-grade retardation are very similar among whites, blacks, and Hispanics at ages 6 to 8. But by ages 9 to 11, 5 to 10 percent more blacks and Hispanics than whites are enrolled below the modal grade level. The differentials continue to grow with age, and, at ages 15 to 17, rates of age-grade retardation range from 40 to 50 percent among blacks and Hispanics, and they have gradually drifted up from 25 percent to 35 percent among whites.

Gender and race/ethnic differentials in recent years result mainly from retention, not differences in age at school entry. By age 9, there are sharp social differentials in age-grade retardation favoring whites and girls relative to blacks or Hispanics and boys. By ages 15 to 17, close to 50 percent of black males have fallen behind—30 percentage points more than at ages 6 to 8—but age-grade retardation has never exceeded 30 percent among 15- to 17-year-old white girls.

Psychometrics of Certification

This section addresses the underlying rationale for using tests in promotion decisions and then describes the evidence required to validate such use.

Logic of Certification Decisions

Promotion can be thought of in two ways. First, it can be seen as recognition for mastering the material taught at a given grade level. In this case, a test used to determine whether a student should be promoted would certify that mastery. Second, promotion can be thought of as a prediction that the student would profit more by studying the material offered in the next grade than by studying again the material in the current grade. In this case, the test is a placement device. At present, most school districts and states having promotion policies use tests as a means of assessing mastery (American Federation of Teachers, 1997a). Furthermore, retention in grade is the most common consequence for students who are found to lack this mastery (Shepard, 1991).

Validating a particular test use includes making explicit the assumptions or claims that underpin that use (Kane, 1992; Shepard, 1991, 1997). On one hand, the most critical assumption in the case of a promotion test certifying mastery is that it is a valid measure of the important content, skills, and other attributes covered by the curriculum of that grade. If, on the other hand, the test is used as a placement device, the most critical assumption is that the assigned grade (or intervention, such as summer school) will benefit the student more than the alternative placement.

In either case, the scores should be shown to be technically sound, and the cutoff score should be a reasonably accurate indication of mastery of the skills in question. As explained in Chapter 4, not every underlying assumption must be documented empirically, but the assembled evidence should be sufficient to make a credible case that the use of the test for this purpose is appropriate—that is, both valid and fair.

Validation of Test Use

Tests used for promotion decisions should adhere, as appropriate, to professional standards for placement, and more generally, to professional standards for certifying knowledge and skill (American Educational Research Association et al., 1985, 1998; Joint Committee on Testing Practices, 1988). These psychometric standards have two central principles:

(1) A test score, like any other source of information about a student, is subject to error. Therefore, high-stakes decisions like promotion should not be made automatically on the basis of a single test score. They should also take into account other sources of information about the student's skills, such as grades, recommendations, and extenuating circumstances. This is especially true with young children (Shepard and Smith, 1987; Darling-Hammond and Falk, 1995). According to a recent survey, most districts report that they base promotion decisions in elementary school on grades (48 percent), standardized tests (39 percent), developmental factors (46 percent), attendance (31 percent), and recommendations (48 percent). The significance of these factors varies with grade level. It appears that achievement tests are more often used for promotion decisions in the elementary grades than in secondary school: at the high school level, they are used by only 26 percent of districts (American Federation of Teachers, 1997a:12).

(2) Assessing students in more than one subject will improve the likelihood of making valid and fair promotion decisions.8

Content Coverage

The choice of construct used for making promotion decisions will not only determine, to a large extent, the content and scoring criteria, but it will also potentially disadvantage some students. Depending on whether the construct to be measured is "readiness for the next grade level" or "mastery of the material taught at the current grade level," the content and thought processes to be assessed may be quite different. The first construct might be adequately represented by a reading test if readiness for the next grade level is determined solely by a student's ability to read the material presented at that level. With the second construct, however, the number of subjects to be assessed is expanded, along with the type of evidence needed to validate the test use.

In the case of a promotion test being used to certify mastery, it is important that the items employed be generally representative of the content and skills that students have actually covered at their current grade level. For example, in the case of a reading test, evidence of content-appropriateness might be the degree of alignment between the reading curriculum for that grade level and the test. Evidence that the test measures relevant cognitive processes might be obtained by asking a student to think aloud while completing the items or tasks.9 In addition, the suitability of the scoring criteria could be assessed by examining the relative weighting given to the content and skills measured by the reading test and comparing this to the emphasis given these areas in the curriculum. Some of this information may be collected during test development and documented in the user's manual, or it may be obtained by the user during testing.

Whether the test is being used to certify mastery or predict readiness, students' scores on the test should be interpreted carefully. Plausible rival interpretations of low scores need to be discounted (Messick, 1989). For example, a low score might be interpreted as showing lack of competence, but it could in fact be caused by low motivation or sickness on the day of the test. Or the low score could result from lack of alignment between the test and what was taught in class. Language or disabilities may also be relevant. One way to discount plausible rival interpretations of low scores is to take into account other sources of information about the student's skills, such as grades, recommendations, and extenuating circumstances (American Educational Research Association et al., 1985, 1998).

Setting the Cutoff Score

Chapter 5 described different procedures for setting cutoff scores (cutscores) on tests used for tracking, as well as some of the difficulties involved. With promotion tests, the validity of the cutscore similarly depends on the reasonableness of the standard-setting process and its consequences—especially if they differ by gender, race, or language-minority groups (American Educational Research Association et al., 1985, 1998).

Different methods often yield very different standards (Berk, 1986; Mehrens, 1995). Shepard reports that these "discrepancies between standards are large enough to cause important differences in the percentage of students who pass the test" (1980). For example, in many states, fewer students attain the cutscore for the "proficient" level on the 4th grade reading test in the National Assessment of Educational Progress (NAEP) than attain the cutscore for the "proficient" level on the state's own 4th grade reading test (U.S. Department of Education, 1997). This is because the NAEP standards for "basic," "proficient," and "advanced" achievement are generally more challenging than those of the states. Even using the current "basic" achievement level on NAEP as a cutscore for a 4th grade reading test could lead to failure rates of 40 percent (U.S. Department of Education, 1997).

Because of such inconsistencies and their possible impact on students, it is generally recommended that the particular method and rationale for setting the cutscore on a test, as well as the qualifications of the judges, be documented as part of the test validation process.

When a score falls within the range of uncertainty around the cutoff,10 other information should be examined to reduce the likelihood of placement or certification error. Current professional standards recommend that a student's score on a test be used only in conjunction with other information sources in making such important decisions as promotion to the next grade. This concern applies not only to students who score near or below the cutoff; there can also be students who "pass" the test but have not really mastered the material (Madaus, 1983).
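One common way to quantify a range of uncertainty around a cutoff is the standard error of measurement from classical test theory, SEM = SD × sqrt(1 − reliability). The sketch below assumes that formula and flags scores lying within one SEM of a cutscore. The function names, the one-SEM band width, and all numeric values are hypothetical and are not drawn from any test discussed in this chapter.

```python
import math


def standard_error_of_measurement(score_sd, reliability):
    """Classical test theory estimate: SEM = SD * sqrt(1 - reliability)."""
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must lie between 0 and 1")
    return score_sd * math.sqrt(1.0 - reliability)


def in_uncertainty_band(score, cutscore, sem, width=1.0):
    """Return True if the score lies within `width` SEMs of the cutscore."""
    return abs(score - cutscore) <= width * sem


# Hypothetical scale: SD of 15 points, reliability of 0.91, cutscore of 200.
sem = standard_error_of_measurement(score_sd=15.0, reliability=0.91)
for score in (190, 197, 203, 210):
    near = in_uncertainty_band(score, cutscore=200, sem=sem)
    print(score, "near cutoff" if near else "clear of cutoff")
```

A flag of this kind only identifies the scores for which classification error is most likely. It does not replace the recommendation that other information be considered for every student.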

Choosing a cutoff score on a test is a substantive judgment. More than a purely technical decision, it also involves social concerns, politics, and maintaining credibility with the public (Ellwein and Glass, 1989). Public relations played a role in the setting of cutscores on the Iowa tests that are part of Chicago's bid to end social promotion. The cutscores on the reading and mathematics tests were set at what were perceived to be relatively reasonable levels (2.8 for third graders, 5.3 for sixth graders, and 7.2 for eighth graders) with the intention of raising them later (interview with Joe Hahn, director, Research, Assessment, and Quality Review, Chicago school district).11

10 As discussed in Chapter 4, test reliability may be envisioned as the stability or consistency of scores on a test. An important aspect of the reliability of a test is its standard error of measurement. A small standard error around a student's score is especially important in relation to the setting of cutscores on a test because these may function as decision mechanisms for the placement of students in different grades. A small standard error of measurement for scores near the cutscore gives us more confidence in decisions based on the cutscores.

"We decided to be credible to the public," Chicago Chief Accountability Officer Philip Hansen told the committee. "If that 3rd grader doesn't get a 2.8 in reading, the public and the press and everyone understands clearly, more clearly than educators, that, gee, that's a problem, so they can see why that child needs to be given extra help …. Our problem comes with explaining it to educators as to why we don't use other indicators."

Educational Outcomes

As mentioned earlier, tests used for student promotion are usually thought to measure mastery of material already taught, but a promotion test may also be interpreted as indicating a student's readiness for the next grade. In the latter case, it would be relevant to obtain evidence that there is a relationship between the test score and certain pertinent criterion measures at the next grade level.12

For example, in the case of a reading test, it may be useful to demonstrate that there is a relationship between students' scores on the promotion test and their scores on a reading test taken at the end of the next school year. Such evidence of predictive validity would, however, not usually be enough to justify use of the test for making promotion decisions. Additional evidence would be needed that the alternative treatments (i.e., promotion, retention in grade, or some other intervention) were more beneficial to the students assigned to them than would be the case if everyone got the same treatment (American Educational Research Association et al., 1985, 1998; Haney, 1993; Linn, 1997).

This evidence of an "aptitude-by-treatment interaction" could be gathered by taking a group of students who fall just below the cutoff score on the reading test and randomly retaining or promoting them. At the end of the first year, students who were promoted could be given a reading test and their scores recorded. The same test would be given a year later to the students who were retained—after they had been promoted and had spent a year at the next grade level. The results of the two groups could then be compared to see which group benefited the most—if "benefit" is defined as scoring higher on the test. In addition, because reading is necessary for learning other subjects, another potential benefit to examine is differential subject matter learning.13
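The comparison described above can be sketched in a few lines, assuming "benefit" is defined as a higher score on the same reading test after each group has completed the next grade. The function names, student identifiers, and scores below are hypothetical and serve only to illustrate the design. A real study would also need a test of statistical significance and attention to attrition.

```python
import random
from statistics import mean


def randomly_assign(student_ids, seed=0):
    """Randomly split students just below the cutoff into two groups."""
    rng = random.Random(seed)
    shuffled = list(student_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"retained": shuffled[:half], "promoted": shuffled[half:]}


def mean_difference(scores, groups):
    """Mean score of retained students minus mean score of promoted students.

    `scores` maps each student to the reading score earned after completing
    the next grade (one calendar year later for the retained group).
    """
    retained = mean(scores[s] for s in groups["retained"])
    promoted = mean(scores[s] for s in groups["promoted"])
    return retained - promoted


# Hypothetical students and scores, for illustration only.
students = ["s1", "s2", "s3", "s4", "s5", "s6"]
groups = randomly_assign(students, seed=42)
scores = {"s1": 48, "s2": 52, "s3": 50, "s4": 47, "s5": 55, "s6": 51}
print("Retained minus promoted:", mean_difference(scores, groups))
```

A positive difference would favor retention under this definition of benefit, and a negative or negligible difference would not.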

Effects of Retention

Determining whether the use of a promotion test produces better overall educational outcomes requires weighing the intended benefits against unintended negative consequences for individual students and groups of students (American Educational Research Association et al., 1985; Cronbach, 1971; Joint Committee on Testing Practices, 1988; Messick, 1989).

Most of the relevant research focuses on one outcome in particular—retention in grade. Although retention rates can change even when tests are not used in making promotion decisions, there is evidence that using scores from large-scale tests to make such decisions may be associated with increased retention rates (Hendrie, 1997).

Increased retention is not a negative outcome if it benefits students. But are there positive consequences of being held back in school because of a test score? Does the student do better after repeating the grade, or would he have fared just as well or better if he had been promoted with his peers? Research data indicate that simply repeating a grade does not generally improve achievement (Holmes, 1989; House, 1989); moreover, it increases the dropout rate (Gampert and Opperman, 1988; Grissom and Shepard, 1989; Olson, 1990; Anderson, 1994; Darling-Hammond and Falk, 1995; Luppescu et al., 1995; Reardon, 1996).

For example, Holmes (1989) reports a meta-analysis of 63 controlled studies of grade retention in elementary and junior high school through the mid-1980s. When promoted and retained students were compared one to three years later, the retained students' average levels of academic achievement were at least 0.4 standard deviations below those of promoted students. In these comparisons, promoted and retained students were the same age, but the promoted students had completed one more grade than the retained students. Promoted and retained students were also compared after completing one or more grades, that is, when the retained students were a year older than the promoted students but had completed equal numbers of additional grades. Here, the findings were less consistent, but still negative. When the data were weighted by the number of estimated effects, there was an initially positive effect of retention on academic achievement after one more grade in school, but it faded away completely after three or more grades. When the data were weighted by the number of independent studies, rather than by the estimated number of effects on achievement, the average effects were negligible in every year after retention. Of the 63 studies reviewed by Holmes, 54 yielded overall negative effects of retention, and only 9 yielded overall positive effects. Some studies had better statistical controls than others, and those with subjects matched on IQ, achievement test scores, sex, and/or socioeconomic status showed larger negative effects of retention than studies with weaker designs. Holmes concluded, "On average, retained children are worse off than their promoted counterparts on both personal adjustment and academic outcomes" (1989:27). 
A more recent study of Baltimore schoolchildren concludes that grade retention does increase the chances of academic success (Alexander et al., 1994), but a detailed reanalysis of those findings yields no evidence of sustained positive effects (Shepard et al., 1996).
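The contrast between the two weighting schemes described for the Holmes meta-analysis can be illustrated with a minimal sketch. The study names and effect sizes below are hypothetical, invented only to show the arithmetic; they are not Holmes's data, and the function names are assumptions made for this illustration.

```python
from statistics import mean

# Hypothetical effect sizes (in standard deviations), grouped by study.
# These values are invented for illustration; they are NOT Holmes's data.
effects_by_study = {
    "study_a": [0.30, 0.25, 0.35, 0.30],  # one study reporting four effects
    "study_b": [-0.10],                   # one effect
    "study_c": [-0.20],                   # one effect
}


def mean_weighted_by_effects(studies):
    """Pool every estimated effect, so studies with more estimates count more."""
    all_effects = [e for effects in studies.values() for e in effects]
    return mean(all_effects)


def mean_weighted_by_studies(studies):
    """Average within each study first, so every study counts exactly once."""
    return mean([mean(effects) for effects in studies.values()])


by_effects = mean_weighted_by_effects(effects_by_study)
by_studies = mean_weighted_by_studies(effects_by_study)
print(f"weighted by number of effects: {by_effects:+.2f}")
print(f"weighted by number of studies: {by_studies:+.2f}")
```

In this made-up case, a single study that reports many positive estimates pulls the pooled average upward, while giving each study one vote yields an average near zero. This is one way the two summaries of the same literature can diverge.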

Anderson (1994) carried out an extensive, large-scale national study of the effect of grade retention on high school dropout rates. He analyzed data from the National Longitudinal Survey of Youth for more than 5,500 students whose school attendance was followed annually from 1978–1979 to 1985–1986. With statistical controls for sex, race/ethnicity, social background, cognitive ability, adolescent deviance, early transitions to adult status, and several school-related measures, students who were currently repeating a grade were 70 percent more likely to drop out of high school than students who were not currently repeating a grade.

There are also strong relationships between race, socioeconomic status (SES), and the use of tests for promotion and retention. A recent national longitudinal study, using the National Education Longitudinal Study database, shows that certain students are far likelier than others to be subject to promotion tests in the 8th grade (Reardon, 1996:4–5):

[S]tudents in urban schools, in schools with high concentrations of low-income and minority students, and schools in southern and western states, are considerably more likely to have [high-stakes] test requirements in eighth grade. Among eighth graders, 35 percent of black students and 27 percent of Hispanic students are subject to [a high-stakes test in at least one subject] to advance to ninth grade, compared to 15 percent of white students. Similarly, 25 percent of students in the lowest SES quartile, but only 14 percent of those in the top quartile, are subject to eighth grade [high-stakes test] requirements.

Moreover, the study found that the presence of high-stakes 8th grade tests is associated with sharply higher dropout rates, especially for students at schools serving mainly low-SES students. For such students, early dropout rates (between the 8th and 10th grades) were 4 to 6 percentage points higher than for students from schools that were similar except for the high-stakes test requirement (Reardon, 1996).

What does it mean that minority students and low-SES students are more likely to be subject to high-stakes tests in the 8th grade? Perhaps, as Reardon points out, such policies are "related to the prevalence of low-achieving students—the group proponents believe the tests are most likely to help" (1996). Perhaps the adoption of high-stakes test policies for individuals serves the larger social purpose of ensuring that promotion from 8th to 9th grades reflects acquisition of certain knowledge and skills. Such tests may also motivate less able students and teachers to work harder or to focus their attention on the knowledge domains that test developers value most highly. But if retention in grade is not, on balance, beneficial for students, as the research suggests (Shepard and Smith, 1989), it is cause for concern that low-SES children and minority students are disproportionately subject to any negative consequences.

Those who leave school without diplomas have diminished life chances. High dropout rates carry many social costs. It may thus be problematic if high-stakes tests lead individual students who would not otherwise have done so to drop out. There may also be legal implications if it appears that the public is prepared to adopt high-stakes test programs chiefly when their consequences will be felt disproportionately by minority students14 and low-SES students.

Negative findings about the effects of grade retention on dropout rates are reported by Grissom and Shepard (1989), based on data for several localities including the 1979 to 1981 freshman classes from the Chicago Public Schools. Another Chicago study, of students in 1987, by Luppescu et al. (1995), showed that retained students had lower achievement scores. Throughout this period, the Chicago Public Schools cycled through successive loose and restrictive promotion policies, and it is not clear how long, and with what consequences, the present strict policies will hold (Chicago Public Schools, 1997a).

New York City appears to be following a similar cycle of strict and loose retention policies, in which the unsuccessful Promotional Gates program of the 1980s was at first "promising," then "withered," and was finally canceled by 1990, only to be revived in 1998 by a new central administration (Steinberg, 1998a, 1998b). This cycle of policies, combining strict retention criteria with a weak commitment to remedial instruction, is likely to reconfirm past evidence that retention in grade is typically harmful to students.

Another important question is whether the use of a test in making promotion decisions exacerbates existing inequalities or creates new ones. For example, in their case study of a school district that decided to use tests as a way to raise standards, Ellwein and Glass (1989) found that test information was being used selectively in making promotion and retention decisions, leading to what were perceived as negative consequences for certain groups of students.15 Thus, although minorities accounted for 59 percent of the students who failed the 1985 kindergarten test, they made up 69 percent of the students who were retained and received transition services. A similar pattern was observed at grade 2. In this instance, disproportionate retention rates stemming from selective test use constitute evidence of test invalidity (National Research Council, 1982).

14 For a discussion of possible claims of discrimination based on race or national origin, see Chapter 3.

15 Ellwein and Glass (1989) assumed that the intervention, i.e., retention, was not as beneficial as promotion to the next grade level.

In addition, there may be problems with using a test as the sole measure of the effectiveness of retention or other interventions (summer school, tutoring, and so on). This concern is related to the fact that the validity of test and retest scores depends in part on whether the scores reflect students' familiarity with actual test items or a particular test format. For example, there is some evidence to indicate that improved scores on one test may not actually carry over when a new test of the same knowledge and skills is introduced (Koretz et al., 1991).

The current reform and test-based accountability systems of the Chicago Public Schools provide an example of high-stakes test use for individual students that raises serious questions about "teaching to the test." Although Chicago is developing its own standards-based, course-specific assessment system, it is committed to using the Iowa Test of Basic Skills as the yardstick for student and school accountability. Teachers are given detailed manuals on preparing their students for the tests (Chicago Public Schools, 1996a, 1996b). Student test scores have increased substantially, both during the intensive summer remedial sessions—the Summer Bridge program—and between the 1996–1997 and 1997–1998 school years (Chicago Public Schools, 1997b, 1998b), but the available data provide no means of distinguishing true increases in student learning from artifactual gains. Such gains would be expected from the combined effects of teaching to the test, repeated use of a similar test, and, in the case of the Summer Bridge program, the initial selection of students with low scores on the test.16

Alternatives to Retention

Some policymakers and practitioners have rejected the simplistic alternatives of promoting or retaining students based on test scores. Instead, they favor intermediate approaches: testing early to identify students whose performance is weak; providing remedial education to help such students acquire the skills necessary to pass the test; and giving students multiple opportunities to retake different forms of the test in the hope that they will pass and avoid retention. Here, testing can play an important and positive role in early diagnosis and targeted remediation.

Intervention strategies appear to be particularly crucial from kindergarten through grade 2 (Shepard et al., 1998; American Federation of Teachers, 1997a). Some of the intensive strategies being used at this level include preschool expansion, giving children who are seriously behind their age-level peers opportunities to accelerate their instruction, and putting children in smaller classes with expert teachers.17 Such strategies are being implemented in school districts across the country.18 Data on their effectiveness are as yet unavailable.

It is the committee's view that these alternatives to social promotion and simple retention in grade should be tried and evaluated. In our judgment, however, the effectiveness of such approaches will depend on the quality of the instruction that students receive after failing a promotion test, and it will be neither simple nor inexpensive to provide high-quality remedial instruction. At present only 13 states require and fund such intervention programs to help low-performing students reach the state standards, and 6 additional states require intervention but provide no resources for carrying it out.19

Issues of Fairness

As discussed in Chapter 4, a fair promotion test is one that yields comparably valid scores from person to person, from group to group, and from setting to setting. This means that if a promotion test results in scores that systematically underestimate or overestimate the knowledge and skill of members of a particular group, then the test would be considered unfair. Or if the promotion test claims to measure a single construct across groups but in fact measures different constructs in different groups, it would also be unfair. The view of fairness as equitable treatment of all examinees in the testing process requires that examinees be given a comparable opportunity to demonstrate their understanding of the construct(s) the promotion test is intended to measure. This includes such factors as appropriate testing conditions on the day of the test, opportunity to become familiar with the test format, and access to practice materials.

The view of fairness as opportunity to learn is particularly relevant in the context of a promotion test used to certify mastery of the material taught. In this regard, when assessing the fairness of a promotion test, it is important that test users ask whether certain groups of students are doing poorly on the test due to insufficient opportunities to learn the material tested. Thus there is a need for evidence that the content of the test is representative of what students have been taught. Chapter 7 discusses several ways of demonstrating that the test measures what students have been taught in the context of graduation tests, although much of this discussion is also relevant to promotion tests. For example, to enhance the fairness of promotion tests linked to state-wide standards and frameworks, states should develop and widely disseminate a specific definition of the domain to be tested; try to analyze item-response patterns on the test by districts, schools within a district, or by student characteristics (for example, race, gender, curriculum tracks in high school, and so on); and plan and, when feasible, carry out a series of small-scale evaluations on the impact of the test on the curriculum and on teaching (Madaus, 1983).20 Taken together, these steps should increase the chance that these tests give students a fair opportunity to demonstrate what they know and are able to do.

Finally, the validity and fairness of test score interpretations used in promotion decisions can be enhanced by employing the following sound educational strategies:

(1) identifying at-risk or struggling students early so they can be targeted for extra help;

(2) providing students with multiple opportunities to demonstrate their knowledge through repeated testing with alternate forms or other appropriate means; and

(3) taking into account other relevant information about individual students (American Educational Research Association et al., 1985).

The committee's findings and recommendations about promotion and retention are reported in Chapter 12.

Technical Appendix

Social Promotion and Age-Grade Retardation

Current public discussion of social promotion has made little reference to current retention practices—in which a very large share of American schoolchildren are already retained in grade. In part, this is because of sporadic data collection and reporting, but far more consistent statistical data are available about the practice of grade retention than, say, about academic tracking. These data have not been used fully to inform the public debate. For this reason, and to support its analyses of high-stakes testing for promotion and retention, the committee has assembled and analyzed data on rates, trends, and differentials in grade retention. Some of the available data have been collected by state education agencies, but the most uniform, long-term data have been collected by the U.S. Bureau of the Census in connection with its Current Population Survey (CPS), the same monthly household survey that produces important economic statistics, like the unemployment rate. This appendix presents the details of the committee's analysis.

No national or regional agency monitors social promotion and grade retention. Occasional data on retention are available for some states and localities, but coverage is sparse, and little is known about the comparability of these data (Shepard and Smith, 1989). For example, the denominators of retention rates may be based on beginning-of-year or end-of-year enrollment figures. The numerators may include retention as of the end of an academic year or as of the end of the following summer session. Some states include special education students in the data; others exclude them. In the primary grades, retention is usually an all-or-nothing matter; in high school, retention may imply that a student has completed some requirements but has too few credits to be promoted.
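The comparability problem with denominators can be made concrete with a small sketch. The enrollment and retention counts below are hypothetical, chosen only to show how the same number of retained students yields different published rates depending on which enrollment figure is used; the function name is likewise an assumption for this illustration.

```python
def retention_rate(retained, enrollment):
    """Percentage of enrolled students who were retained in grade."""
    return 100.0 * retained / enrollment


# Hypothetical counts for one grade in one district (not real data).
retained = 50
beginning_of_year_enrollment = 1000
end_of_year_enrollment = 950  # some students left during the year

rate_start = retention_rate(retained, beginning_of_year_enrollment)
rate_end = retention_rate(retained, end_of_year_enrollment)
print(f"rate on beginning-of-year enrollment: {rate_start:.2f} percent")
print(f"rate on end-of-year enrollment:       {rate_end:.2f} percent")
```

The same logic applies to the numerator: counting retentions before or after the summer session changes the reported rate even when nothing about school practice differs.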

Table 6-1 shows all of the state data collected by Shepard and Smith (1989:7–8) from the late 1970s and mid-1980s, updated with data from 1993 to 1996 that other states have provided. Although we have inquired of every state education agency, 15 states have not responded. Some states do not collect retention data at all, or collect very limited data. For example, 13 states (Colorado, Connecticut, Illinois, Kansas, Montana, Nebraska, Nevada, New Hampshire, New Jersey, North Dakota, Pennsylvania, Utah, and Wyoming) collect no state-level data on grade retention. Another 22 states, plus the District of Columbia, provided data on retention at some grade levels, but in some cases the data were very limited. For example, New York State collects such data only at the 8th grade.

We can offer few generalizations from the table. Retention rates are highly variable across states. They are unusually high in the District of Columbia, most of whose students are black. Rates are relatively low in some states, like Ohio, including states with relatively large minority populations, like South Carolina and Georgia. Retention rates tend to be relatively high in the early primary grades—although not in kindergarten—and in the early high school years. Perhaps the most striking fact from this effort to bring together available data is that—despite the prominence of social promotion as an issue of educational policy—very little information about it is available.

The committee's main resource for information about levels, trends, and differentials in grade retention is the CPS. Using published data from the annual October School Enrollment Supplement of the CPS, it is possible to track the distribution of school enrollment by age and grade each year for population groups defined by sex and race/ethnicity. These data have the advantage of comparable national coverage from year to year, but they say nothing directly about educational transitions. We can only infer the minimum rate of grade retention by observing trends in the enrollment of children below the modal grade level for their age from one calendar year to the next. Suppose, for example, that 10 percent of 6-year-old children were enrolled below the 1st grade in October 1994. If 15 percent of the same cohort were enrolled below the 2nd grade in October 1995, when they were 7 years old, we would infer that at least 5 percent were held back in the 1st grade between 1994 and 1995.
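The inference described above amounts to a simple difference between two cohort percentages. The following minimal sketch reproduces the worked example from the text; the function name and the clamping of negative differences to zero are assumptions made for this illustration, not part of the committee's published method.

```python
def minimum_retention_rate(pct_below_modal_year1, pct_below_modal_year2):
    """Lower bound on the percentage of a cohort held back between two Octobers.

    Both arguments are the percentage of the same birth cohort enrolled below
    the modal grade for its age, one year apart. The net increase is only a
    minimum, because the survey does not observe individual transitions.
    """
    return max(0.0, pct_below_modal_year2 - pct_below_modal_year1)


# Worked example from the text: 10 percent of 6-year-olds below 1st grade in
# October 1994, and 15 percent of the same cohort below 2nd grade in October 1995.
rate = minimum_retention_rate(10.0, 15.0)
print(f"At least {rate:.1f} percent were held back in 1st grade")
```

If the share below the modal grade falls from one year to the next, this sketch simply reports zero, since a net decline provides no evidence of a minimum level of retention.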

Extended Kindergarten Attendance

Historically, there has been great variation in age at school entry in the United States, which had more to do with the labor demands of a farm economy and the availability of schooling to disadvantaged groups than with readiness for school. The variability declined as school enrollment completed its diffusion from middle childhood into younger and older ages (Duncan, 1968; National Research Council, 1989).

Contrary to the historic trend, age at entry into graded school has gradually crept up and again become more variable over the past two decades, partly through selective extension of kindergarten to two years,

Table 6-1 Percentages of Students Retained in Grade in Selected States by Grade-Level and Year

|

Grade Level |

PK |

K |

1 |

2 |

3 |

4 |

5 |

6 |

7 |

8 |

9 |

10 |

11 |

12 |

Total |

|

Alabama |

|||||||||||||||

|

1994–95 |

NA |

4.6 |

7.7 |

2.8 |

2.4 |

2.1 |

2.1 |

3.2 |

7.3 |

5.8 |

13.1 |

7.2 |

6.1 |

3.8 |

5.4 |

|

1995–96 |

NA |

4.4 |

7.9 |

2.9 |

2.3 |

2.3 |

2.4 |

2.9 |

6.7 |

5.4 |

12.1 |

7.2 |

6.2 |

3.5 |

5.2 |

|

1996–97 |

NA |

5.1 |

8.5 |

3.3 |

2.5 |

2.1 |

2.0 |

2.9 |

6.1 |

4.4 |

12.6 |

6.7 |

5.2 |

3.1 |

5.1 |

|

Arizona |

|||||||||||||||

|

1979–80 |

NA |

5.2 |

7.7 |

4.0 |

2.4 |

1.9 |

1.4 |

1.3 |

3.1 |

2.3 |

4.4 |

2.4 |

2.5 |

6.9 |

3.5 |

|

1985–86 |

NA |

8.0 |

20.0 |

8.0 |

5.0 |

4.0 |

4.0 |

4.0 |

8.0 |

7.0 |

6.0 |

3.0 |

2.0 |

14.0 |

7.2 |

|

1994–95 |

18.0 |

1.4 |

2.4 |

0.9 |

0.6 |

0.4 |

0.4 |

1.0 |

2.5 |

2.2 |

5.3 |

3.5 |

2.3 |

8.7 |

2.3 |

|

1995–96 |

18.9 |

1.6 |

2.4 |

1.0 |

0.6 |

0.4 |

0.4 |

0.9 |

2.3 |

2.2 |

5.4 |

3.5 |

2.6 |

9.7 |

2.4 |

|

1996–97 |

14.8 |

1.7 |

2.2 |

1.0 |

0.7 |

0.5 |

0.5 |

1.1 |

2.7 |

2.3 |

7.0 |

5.0 |

3.1 |

10.2 |

2.8 |

|

California |

|||||||||||||||

|

1988–89 |

NA |

5.7 |

4.4 |

1.8 |

1.1 |

0.6 |

0.5 |

0.5 |

1.0 |

0.7 |

NA |

NA |

NA |

NA |

NA |

|

Delaware |

|||||||||||||||

|

1979–80 |

NA |

NA |

11.4 |

5.1 |

2.9 |

2.4 |

3.1 |

2.4 |

7.9 |

8.1 |

13.1 |

12.6 |

7.7 |

6.6 |

7.0 |

|

1985–86 |

NA |

5.4 |

17.2 |

4.9 |

2.8 |

2.3 |

3.0 |

3.2 |

9.6 |

7.7 |

15.6 |

16.8 |

8.7 |

7.5 |

8.1 |

|

1994–95 |

NA |

2.1 |

5.8 |

2.1 |

1.1 |

0.7 |

0.6 |

1.4 |

3.4 |

1.7 |

NA |

NA |

NA |

NA |

NA |

|

1995–96 |

NA |

1.6 |

5.3 |

2.0 |

1.9 |

0.8 |

0.9 |

1.3 |

2.8 |

1.6 |

NA |

NA |

NA |

NA |

NA |

|

1996–97 |

NA |

2.0 |

5.0 |

2.4 |

1.4 |

0.9 |

1.0 |

1.9 |

3.4 |

2.8 |

NA |

NA |

NA |

NA |

NA |

|

District of Columbia |

|||||||||||||||

|

1979–80 |

NA |

NA |

15.3 |

10.0 |

7.2 |

7.2 |

6.3 |

3.1 |

NA |

NA |

20.5 |

NA |

NA |

16.6 |

NA |

|

1985–86 |

NA |

NA |

12.7 |

8.4 |

7.4 |

5.4 |

4.6 |

2.8 |

10.6 |

6.6 |

NA |

NA |

NA |

NA |

7.3 |

|

1991–92 |

NA |

NA |

12.9 |

10.8 |

8.9 |

6.9 |

6.5 |

3.0 |

17.3 |

17.6 |

15.2 |

22.1 |

18.3 |

11.8 |

NA |

|

1992–93 |

NA |

NA |

10.4 |

8.2 |

7.4 |

8.0 |

6.2 |

3.3 |

18.5 |

16.4 |

16.5 |

26.0 |

23.8 |

12.7 |

NA |

|

1993–94 |

NA |

NA |

11.1 |

7.9 |

6.3 |

6.1 |

5.3 |

3.5 |

15.6 |

15.2 |

19.5 |

23.7 |

18.6 |

14.1 |

NA |

|

1994–95 |

NA |

NA |

12.7 |

8.5 |

6.2 |

5.9 |

5.8 |

2.4 |

12.2 |

13.6 |

16.1 |

22.1 |

15.1 |

13.9 |

NA |

|

1995–96 |

NA |

NA |

11.4 |

8.7 |

7.4 |

7.0 |

5.5 |

2.3 |

11.9 |

12.1 |

16.2 |

24.3 |

15.9 |

13.3 |

NA |

|

1996–97 |

NA |

NA |

14.7 |

11.3 |

10.8 |

8.0 |

6.1 |

4.1 |

15.4 |

16.5 |

18.7 |

21.8 |

21.7 |

13.6 |

NA |

|

Florida |

|||||||||||||||

|

1979–80 |

NA |

6.1 |

13.7 |

7.4 |

7.0 |

5.9 |

4.6 |

5.5 |

10.4 |

8.3 |

10.2 |

11.5 |

7.5 |

4.4 |

8.0 |

|

1985–86 |

NA |

10.5 |

11.2 |

4.7 |

4.5 |

3.8 |

2.6 |

3.5 |

7.9 |

5.8 |

12.1 |

11.9 |

8.9 |

3.5 |

7.2 |

|

1994–95 |

3.1 |

3.0 |

3.3 |

1.5 |

1.1 |

0.8 |

0.6 |

3.3 |

4.7 |

3.6 |

11.1 |

9.3 |

7.8 |

5.3 |

4.1 |

|

1995–96 |

1.8 |

3.1 |

3.6 |

1.9 |

1.2 |

0.9 |

0.7 |

3.7 |

4.7 |

3.6 |

12.8 |

10.8 |

7.8 |

5.2 |

4.4 |

|

1996–97 |

3.6 |

3.6 |

4.1 |

2.2 |

1.5 |

1.0 |

0.7 |

4.4 |

4.9 |

4.0 |

14.3 |

12.1 |

8.6 |

5.7 |

5.0 |

|

Grade Level |

PK |

K |

1 |

2 |

3 |

4 |

5 |

6 |

7 |

8 |

9 |

10 |

11 |

12 |

Total |

|

Georgia |

|||||||||||||||

|

1979–80 |

NA |

NA |

11.0 |

4.7 |

3.8 |

2.8 |

2.5 |

2.6 |

5.3 |

7.4 |

13.3 |

10.8 |

7.9 |

4.0 |

6.5 |

|

1985–86 |

NA |

8.0 |

12.4 |

6.7 |

7.8 |

5.2 |

3.9 |

5.3 |

6.7 |

7.5 |

18.1 |

12.2 |

8.7 |

4.5 |

8.5 |

|

1994–95 |

NA |

3.8 |

3.5 |

1.5 |

1.1 |

0.7 |

0.6 |

1.5 |

1.8 |

1.9 |

11.6 |

7.5 |

5.0 |

3.0 |

NA |

|

1995–96 |

NA |

3.7 |

3.7 |

1.9 |

1.2 |

1.0 |

0.7 |

1.7 |

2.1 |

2.2 |

12.6 |

7.7 |

5.2 |

3.2 |

NA |

|

1996–97 |

NA |

3.6 |

3.8 |

2.1 |

1.5 |

1.0 |

0.8 |

1.9 |

2.4 |

2.2 |

13.1 |

8.2 |

5.6 |

3.4 |

NA |

|

1997–98 |

NA |

3.7 |

4.0 |

2.4 |

1.7 |

1.3 |

1.1 |

2.1 |

2.5 |

2.1 |

12.4 |

8.7 |

5.4 |

3.5 |

NA |

|

Hawaii |

|||||||||||||||

|

1979–80 |

NA |

NA |

1.1 |

0.7 |

0.5 |

0.4 |

0.4 |

0.4 |

0.2 |

2.3 |

13.1 |

10.1 |

8.5 |

5.2 |

3.8 |

|

1985–86 |

NA |

2.0 |

1.6 |

1.0 |

0.7 |

0.5 |

0.4 |

0.5 |

2.1 |

2.8 |

8.9 |

6.9 |

5.5 |

0.8 |

2.6 |

|

Indiana |

|||||||||||||||

|

1994–95 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

1.4 |

|

1995–96 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

1.6 |

|

1996–97 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

1.4 |

|

Kentucky |

|||||||||||||||

|

1979–80 |

NA |

2.3 |

12.6 |

5.7 |

3.4 |

2.2 |

1.8 |

1.9 |

4.2 |

3.6 |

5.8 |

4.2 |

2.8 |

3.2 |

4.4 |

|

1985–86 |

NA |

4.0 |

5.3 |

4.9 |

3.0 |

2.3 |

1.9 |

2.7 |

5.4 |

3.8 |

9.6 |

6.3 |

4.6 |

3.4 |

5.3 |

|

1994–95 |

NA |

NA |

NA |

NA |

NA |

1.1 |

0.7 |

1.9 |

2.7 |

1.6 |

10.7 |

6.9 |

4.0 |

2.3 |

3.6 |

|

1995–96 |

NA |

NA |

NA |

NA |

NA |

1.1 |

0.8 |

1.8 |

2.7 |

1.9 |

10.7 |

6.8 |

4.1 |

2.2 |

3.6 |

|

Louisiana |

|||||||||||||||

|

1995–96 |

NA |

8.7 |

11.0 |

5.4 |

4.4 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

|

Maryland |

|||||||||||||||

|

1979–80 |

NA |

NA |

7.6 |

3.5 |

3.3 |

2.5 |

2.5 |

1.8 |

8.5 |

7.6 |

8.6 |

11.3 |

6.2 |

4.4 |

5.8 |

|

1985–86 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

5.5 |

|

1994–95 |

NA |

0.8 |

2.0 |

1.1 |

0.7 |

0.5 |

0.2 |

2.1 |

3.2 |

2.4 |

13.1 |

7.1 |

4.8 |

4.7 |

3.1 |

|

1995–96 |

NA |

0.9 |

2.3 |

1.2 |

0.7 |

0.5 |

0.3 |

2.3 |

3.3 |

2.3 |

12.2 |

6.6 |

4.7 |

5.3 |

3.2 |

|

1996–97 |

NA |

1.1 |

2.8 |

1.5 |

1.0 |

0.7 |

0.4 |

2.5 |

3.7 |

2.6 |

10.3 |

6.1 |

4.3 |

5.5 |

3.2 |

|

Massachusetts |

|||||||||||||||

|

1994–95 |

NA |

NA |

NA |

NA |

NA |

0.3 |

0.2 |

0.6 |

1.5 |

1.5 |

6.3 |

4.5 |

3.3 |

2.2 |

NA |

|

1995–96 |

NA |

NA |

NA |

NA |

NA |

0.3 |

0.2 |

0.6 |

1.4 |

1.5 |

6.3 |

4.5 |

3.6 |

1.9 |

NA |

|

Michigan |

|||||||||||||||

|

1994–95 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

7.8 |

5.6 |

3.8 |

2.0 |

NA |

|

1995–96 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

4.8 |

3.9 |

2.7 |

1.7 |

NA |

|

Grade Level |

PK |

K |

1 |

2 |

3 |

4 |

5 |

6 |

7 |

8 |

9 |

10 |

11 |

12 |

Total |

|

Mississippi |

|||||||||||||||

|

1979–80 |

NA |

NA |

15.1 |

6.9 |

4.8 |

5.0 |

5.6 |

5.1 |

13.5 |

11.1 |

12.4 |

11.7 |

8.1 |

6.0 |

8.9 |

|

1985–86 |

NA |

1.4 |

16.1 |

7.0 |

5.3 |

5.7 |

6.0 |

5.6 |

11.2 |

9.3 |

12.9 |

12.6 |

9.0 |

5.7 |

8.9 |

|

1994–95 |

0.0 |

4.9 |

11.9 |

6.1 |

4.9 |

6.2 |

7.1 |

8.3 |

15.4 |

13.2 |

21.0 |

13.5 |

9.5 |

6.5 |

9.6 |

|

1995–96 |

22.2 |

4.8 |

11.6 |

5.8 |

4.6 |

5.6 |

6.3 |

7.5 |

14.2 |

11.5 |

20.9 |

12.9 |

7.9 |

5.5 |

9.5 |

|

1996–97 |

16.7 |

5.4 |

11.9 |

6.6 |

5.4 |

6.1 |

6.6 |

7.7 |

15.6 |

12.9 |

19.7 |

12.8 |

7.7 |

5.2 |

9.8 |

|

New Hampshire |

|||||||||||||||

|

1979–80 |

NA |

NA |

8.6 |

3.3 |

2.0 |

1.3 |

1.1 |

0.9 |

2.5 |

2.8 |

7.7 |

4.9 |

3.6 |

3.6 |

3.6 |

|

1985–86 |

NA |

4.4 |

9.1 |

3.7 |

1.5 |

1.1 |

1.0 |

7.0 |

3.3 |

3.2 |

10.5 |

5.5 |

4.2 |

4.9 |

4.2 |

|

New Mexico |

|||||||||||||||

|

1990–91 |

NA |

2.2 |

6.1 |

2.8 |

1.5 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

|

1991–92 |

NA |

1.6 |

4.8 |

2.0 |

1.1 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

|

1992–93 |

NA |

1.5 |

4.3 |

1.7 |

1.2 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

|

1993–94 |

NA |

1.3 |

4.2 |

1.9 |

1.0 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

|

New York |

|||||||||||||||

|

1994–95 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

16.2 |

NA |

NA |

NA |

NA |

|

1995–96 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

18.2 |

NA |

NA |

NA |

NA |

|

1996–97 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

19.5 |

NA |

NA |

NA |

NA |

|

North Carolina |

|||||||||||||||

|

1979–80 |

NA |

4.5 |

9.8 |

6.0 |

4.5 |

3.2 |

2.8 |

3.4 |

6.8 |

7.1 |

14.1 |

14.8 |

8.6 |

4.2 |

6.9 |

|

1985–86 |

NA |

6.0 |

9.3 |

5.0 |

5.7 |

2.7 |

2.1 |

8.1 |

7.9 |

11.0 |

13.9 |

13.2 |

9.3 |

3.9 |

7.7 |

|

1987–88 |

NA |

7.4 |

7.7 |

3.8 |

2.8 |

2.0 |

1.3 |

2.2 |

3.6 |

3.0 |

9.0 |

7.6 |

4.5 |

2.2 |

4.5 |

|

1988–89 |

NA |

6.8 |

7.2 |

2.9 |

2.7 |

1.6 |

1.1 |

2.3 |

3.5 |

2.8 |

9.6 |

7.8 |

4.5 |

1.8 |

4.2 |

|

1989–90 |

NA |

5.3 |

5.5 |

2.1 |

2.0 |

1.1 |

0.8 |

1.6 |

2.6 |

2.2 |

10.4 |

7.4 |

4.3 |

1.7 |

3.6 |

|

1990–91 |

NA |

3.7 |

4.0 |

1.6 |

1.4 |

0.8 |

0.6 |

1.5 |

2.7 |

2.0 |

10.8 |

7.9 |

4.6 |

1.9 |

3.3 |

|

1991–92 |

NA |

2.9 |

4.1 |

1.9 |

1.5 |

0.7 |

0.6 |

1.4 |

2.4 |

1.9 |

11.3 |

7.8 |

4.6 |

1.7 |

3.2 |

|

1992–93 |

NA |

3.0 |

4.1 |

2.0 |

1.6 |

0.7 |

0.5 |

1.3 |

2.4 |

1.8 |

12.8 |

8.3 |

4.9 |

1.8 |

3.4 |

|

1993–94 |

NA |

3.3 |

4.8 |

2.4 |

1.9 |

0.9 |

0.7 |

1.6 |

2.5 |

2.1 |

13.4 |

10.0 |

5.7 |

2.0 |

3.8 |

|

1994–95 |

NA |

3.5 |

4.7 |

2.4 |

1.9 |

1.0 |

0.7 |

1.7 |

2.6 |

1.8 |

15.0 |

10.2 |

5.9 |

1.9 |

4.0 |

|

1995–96 |

NA |

3.8 |

5.0 |

2.8 |

2.1 |

1.3 |

0.8 |

2.2 |

3.2 |

2.3 |

15.7 |

10.2 |

6.1 |

2.2 |

4.3 |

|

1996–97 |

NA |

4.2 |

5.7 |

3.1 |

2.5 |

1.4 |

1.0 |

2.6 |

3.4 |

2.8 |

15.8 |

10.3 |

6.8 |

2.1 |

4.7 |

|

Ohio |

|||||||||||||||

|

1994–95 |

NA |

NA |

4.0 |

1.6 |

1.2 |

0.9 |

0.9 |

1.9 |

2.9 |

2.3 |

9.1 |

5.2 |

2.9 |

4.4 |

NA |

|

1995–96 |

NA |

NA |

4.1 |

1.7 |

1.1 |

0.8 |

0.7 |

1.6 |

2.1 |

1.8 |

8.1 |

4.8 |

2.7 |

3.9 |

NA |

|

1996–97 |

NA |

NA |

4.7 |

2.0 |

1.8 |

1.1 |

0.9 |

1.8 |

2.9 |

3.1 |

11.4 |

5.9 |

3.2 |

4.2 |

NA |

|

Grade Level |

PK |

K |

1 |

2 |

3 |

4 |

5 |

6 |

7 |

8 |

9 |

10 |

11 |

12 |

Total |

|

South Carolina |

|||||||||||||||

|

1977–78 |

NA |

NA |

8.3 |

4.4 |

3.5 |

2.7 |

2.6 |

3.5 |

3.8 |

2.6 |

NA |

NA |

NA |

NA |

2.6 |

|

1994–95 |

NA |

NA |

7.0 |

2.2 |

1.9 |

1.4 |

1.7 |

2.4 |

3.3 |

2.2 |

NA |

NA |

NA |

NA |

NA |

|

1995–96 |

NA |

NA |

6.8 |

2.6 |

2.0 |

1.4 |

1.6 |

2.4 |

3.8 |

2.7 |

NA |

NA |

NA |

NA |

NA |

|

1996–97 |

NA |

NA |

7.0 |

2.9 |

2.1 |

1.7 |

1.8 |

2.8 |

3.9 |

2.9 |

NA |

NA |

NA |

NA |

NA |

|

Tennessee |

|||||||||||||||

|

1979–80 |

NA |

2.4 |

10.7 |

5.6 |

3.9 |

3.1 |

3.3 |

2.8 |

7.3 |

5.6 |

8.5 |

6.3 |

4.1 |

6.1 |

5.4 |

|

1985–86 |

NA |

3.9 |

10.9 |

5.1 |

3.9 |

3.3 |

3.2 |

3.2 |

8.1 |

6.1 |

9.6 |

8.6 |

7.0 |

5.9 |

6.2 |

|

1991–92 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

4.2 |

|

1992–93 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

3.9 |

|

1993–94 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

3.9 |

|

1994–95 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

3.5 |

|

1995–96 |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

NA |

3.4 |

|

1996–97 |

NA |

4.3 |

5.5 |

2.5 |

1.8 |

1.2 |

1.4 |

2.7 |

7.2 |

5.7 |

13.4 |

9.5 |

7.0 |

5.8 |

5.2 |

| Texas ||||||||||||||||
| 1992–93 | NA | 1.6 | 7.7 | 2.5 | 1.5 | 1.2 | 1.1 | 2.3 | 3.2 | 2.3 | 16.7 | 8.5 | 6.1 | 4.1 | 4.4 |
| 1993–94 | NA | 1.4 | 6.0 | 2.1 | 1.2 | 0.9 | 0.8 | 2.0 | 3.0 | 2.2 | 16.5 | 8.2 | 5.7 | 3.8 | 4.0 |
| 1994–95 | NA | 1.5 | 5.8 | 2.2 | 1.3 | 1.0 | 0.9 | 1.7 | 2.7 | 1.9 | 16.8 | 7.9 | 5.4 | 3.9 | 4.0 |
| 1995–96 | NA | 1.7 | 5.9 | 2.6 | 1.5 | 1.0 | 0.8 | 1.7 | 2.9 | 2.1 | 17.8 | 7.9 | 5.5 | 4.2 | 4.3 |

| Vermont ||||||||||||||||
| 1994–95 | NA | 1.9 | 1.7 | 1.0 | 0.5 | 0.6 | 0.3 | 0.3 | 1.5 | 1.6 | 3.9 | 2.6 | 2.2 | 4.8 | 1.7 |
| 1995–96 | NA | 1.5 | 1.6 | 1.1 | 0.7 | 0.3 | 0.2 | 0.3 | 1.5 | 1.3 | 4.9 | 3.0 | 2.2 | 3.4 | 1.7 |
| 1996–97 | NA | 2.1 | 2.4 | 1.2 | 0.6 | 0.5 | 0.4 | 0.4 | 1.5 | 1.3 | 4.8 | 2.6 | 2.2 | 4.4 | 1.8 |

| Virginia ||||||||||||||||
| 1979–80 | NA | 6.2 | 11.0 | 6.3 | 5.3 | 4.4 | 4.2 | 4.2 | 7.7 | 12.6 | 11.5 | 8.3 | 6.3 | 7.4 | 7.4 |
| 1985–86 | NA | 8.3 | 10.2 | 4.8 | 4.2 | 3.7 | 2.9 | 3.4 | 8.1 | 9.7 | 13.9 | 8.8 | 6.1 | 7.0 | 7.2 |
| 1993–94 | NA | 3.0 | 3.9 | 2.0 | 1.3 | 1.1 | 0.7 | 3.1 | 5.4 | 6.2 | 12.2 | 8.3 | 6.3 | 6.6 | 4.6 |
| 1994–95 | NA | 3.5 | 4.2 | 2.1 | 1.5 | 1.2 | 0.7 | 3.4 | 5.2 | 6.3 | 13.4 | 8.6 | 6.6 | 6.5 | 4.9 |
| 1995–96 | NA | 3.9 | 4.3 | 2.4 | 1.9 | 1.6 | 1.1 | 3.6 | 5.3 | 6.0 | 13.2 | 8.4 | 6.2 | 6.4 | 4.9 |

| West Virginia ||||||||||||||||
| 1979–80 | NA | 1.7 | 10.8 | 3.4 | 2.2 | 1.9 | 1.8 | 1.4 | 3.5 | 2.5 | NA | NA | NA | NA | 3.4 |
| 1985–86 | NA | 4.4 | 7.5 | 3.3 | 2.7 | 2.3 | 2.2 | 1.8 | 4.6 | 2.5 | NA | NA | NA | NA | 3.5 |
| 1994–95 | NA | 4.7 | 4.9 | 2.0 | 1.5 | 0.9 | 0.9 | 1.3 | 3.7 | 3.0 | NA | NA | NA | NA | 2.6 |
| 1994–95 | NA | 4.7 | 5.4 | 2.2 | 1.4 | 0.8 | 0.7 | 1.3 | 3.5 | 2.7 | NA | NA | NA | NA | 2.6 |
| 1996–97 | NA | 5.8 | 6.7 | 3.7 | 2.5 | 2.0 | 2.1 | 3.5 | 4.6 | 2.9 | NA | NA | NA | NA | 3.8 |

rather than a single year prior to graded schooling.21 This is a diffuse phenomenon and there is no single name for it, nor are there distinct categories for the first and second years of kindergarten in the Census enrollment data. Fragmentary reports suggest that, in some places, kindergarten retention may have been as high as 50 percent in the late 1980s (Shepard, 1989; Shepard, 1991). We do not know the degree to which early retention decisions originate with parents—for example, to increase their children's chances for success in athletics—rather than with teachers or other school personnel. Moreover, there are no sound national estimates of the prevalence of kindergarten retention, and none of the state data in Table 6-1 indicate exceptionally high kindergarten retention rates.

21 Another relevant factor is change in state or local requirements about the exact age a child must reach before entering kindergarten or the 1st grade.

22 Percentages shown in Figure 6-1 are 3-year moving averages and do not agree exactly with the annual estimates reported in the text.

Figure 6-1 Percentage of 6-year-old children who have not entered 1st grade.

SOURCE: U.S. Bureau of the Census, Current Population Reports, Series P-20 for various years. NOTE: Entries are 3-year moving averages.

The Census Bureau's statistics on grade enrollment by age show that, from the early 1970s to the late 1980s, entry into 1st grade gradually came later in the development of many children, but for the past decade there has been little change in age at school entry. Figure 6-1 shows percentages of 6-year-old children who had not yet entered the 1st grade as of October of the given year.22 Among 6-year-old boys, only 8 percent had not yet entered the 1st grade in 1971,23 but 22 percent were not yet in the 1st grade in 1987, and 21 percent were not yet in the 1st grade in 1996. Among 6-year-old girls, only 4 percent had not yet entered the 1st grade in 1971, but 16 percent were not yet in the 1st grade in 1987, and 17