8

INFORMATION TECHNOLOGY INFRASTRUCTURE

The information technology (IT) infrastructure and the work of the major service units have long been considered to be orthogonal at the Library of Congress, but that is becoming less and less true because of the movement toward a digital infrastructure for information acquisition, preservation, and access. In addition, current trends in technology and its future uses will continue to suggest and enable new services and new information sources for the Library.

This chapter looks at how the Library of Congress (LC) provides and uses information technology today and offers guidance for the future. Although the chapter occasionally makes specific observations about the organization, it is not a traditional review of information technology within an organization as it might be performed by a management consulting team. Instead, the focus is on the way the Library uses IT as a whole and how key technologies such as IT security, networking, database management, and multimedia are being used, with the aim of learning how IT supports the missions of the Library.

It is not an accident, however, that this particular chapter comes toward the end of the report. Infrastructure choices can and must be made with a full understanding of the institutional mission and the tactical choices made to achieve that mission. One tendency the committee has seen in LC (and one familiar elsewhere in society) is the willingness to be persuaded that a technology that is new and well spoken of elsewhere must be good for something and, more particularly, that a technology or a product that LC can get either at a discount or at no cost is preferable to other products.

Additionally, vision and direction must be set clearly before making technological choices, because it is so difficult to change technologies at the Library. For example, the Library is still affected by the decision made years ago to standardize around OS/2, which led to the truly bizarre situation of needing to install (in 1999) desktop machines that would be able to “dual boot” (i.e., run either as OS/2 machines for certain legacy applications created during the reign of OS/2 or as Windows 95 machines).

THE INFORMATION TECHNOLOGY SERVICES DIRECTORATE

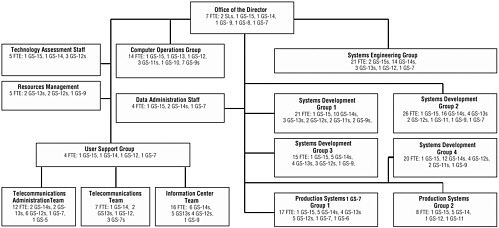

The Information Technology Services (ITS) Directorate is the computer and communication systems and services group for the Library of Congress. It acquires, supports, and maintains the computer, networking, and telephone systems for LC. ITS does most of the in-house programming, handles much of the computer-systems training, and monitors contracts with software and hardware vendors. It has a staff of approximately 200 people (see Figure 8.1).

The ITS Directorate also manages many small to medium-size development projects involving 1 to 10 people for between 3 months and 2 years. Projects generally start with a request from a service organization. ITS works with the client organization to scope the project and to write a requirements document. The project then gets approved in a fairly informal way by consultation among the senior ITS staff and the client organization. Once the project is approved, ITS staff work with the client using a spiral development methodology to manage their work. When the projects are deployed, ITS administers and evolves the applications.

ITS produces substantial and serviceable high-level architectural documents on topics such as storage and retrieval of digital content, centrally supported systems infrastructure, and telecommunications.1 Its server and storage architectures meet its customers’ needs.

Although it is not what in industry would be seen as an exceptionally responsive or cutting-edge technical organization, given the many constraints of its environment, ITS adequately provides basic services. The Library of Congress systems work and people get their jobs done, even though (as is true in other organizations) they want more services than the central organization can possibly deliver within budget constraints. The ITS organization at LC operates under unusually difficult hiring constraints (discussed in Chapter 7). These constraints exacerbate the difficulty of attracting talented IT workers, which is a problem that is shared throughout the economy.

The middle-level management cadre seems to be hard-working and competent. They are making the best of limited budgets and limited flexibility with staffing in terms of day-to-day support. They prudently managed the deployment of 5,000 desktop PCs and the transition to systems that addressed the Y2K problem.

ITS staff do not, however, have the technical depth to meet the current challenges. They cannot keep current in areas like networks, security, middleware, and databases (the topics the committee discussed with them) because the Library of Congress does not budget adequate time or money for their continuing education or attendance at professional conferences. Nor is there a budget for members of the ITS staff to spend time investigating new technology. Broader participation in strategic Library initiatives by ITS staff could also be a learning experience for them, allowing them to understand end-user issues and concerns and—probably—inspiring them to consider more innovative technical solutions to the Library’s mid- to long-term issues. As a result of the constraints mentioned, the Library lacks a central repository of knowledge about emerging technologies and a capability for experimenting with them. This causes gaps and inefficiencies when these technologies are deployed. The ITS organization also does not have the bench strength to assess critical technology issues from multiple viewpoints or to withstand key personnel departures (this is a particular example of the human resources difficulties described in depth in Chapter 7).

Outsourcing

Historically, ITS did most systems development in-house using ITS staff. Over the last 8 years, the Library has increasingly used third-party software to meet its needs. The primary example of this is the Voyager system from Endeavor Information Systems that forms the basis for the new Integrated Library System (ILS). Similarly, the Copyright Office has contracted with a third party to build the CORDS system, and the Human Resources Services Directorate is using PeopleSoft software. This shift to outsourcing has changed the ITS skills mix: increasingly, ITS staff are acting as contract monitors and administrators rather than developers. They spend an increasing proportion of their time writing requirements documents, negotiating contracts, managing contractors, and acting as liaison between the vendors and the Library’s service organizations.

Outsourcing obviates the need to have large quantities of in-house, low-level technical expertise, because the requisite talent can be hired on demand. It may well be that the Library could outsource many of the development, support, and operations tasks performed by the ITS organization; this option has not been sufficiently explored. However, outsourcing also increases the need for people who have a deep understanding of both LC requirements and information technology and who can plan what to outsource and can select, negotiate with, and supervise outside vendors. Application service providers claim that they can perform such functions more economically than in-house organizations. There are, of course, numerous vendors that provide systems in fairly standardized domains such as human resources (and indeed, LC is using commercial off-the-shelf software for this directorate). However, even for areas that may appear to be unique at first glance, existing applications elsewhere might be adapted for LC use. For example, copyright registration is not completely unrelated to other registration processes (those for property deeds, cars, patents, and so on), so it should not be assumed that because the Copyright Office is a unique institution, it must necessarily need custom software. The Library gained experience with outsourcing in two large projects: the apparently successful ILS (see below for a discussion) and the incomplete and uncertain CORDS project, discussed in detail in Chapters 2 and 3.

The committee believes that the Library will increasingly outsource its IT tasks but will continue to need a strong in-house IT organization to perform some in-house development, training, support, and operations and to review and monitor the outside contracts as well as provide technical feedback on proposed contracts. ITS will need to develop skills and processes for producing useful and timely contract reviews.

The Integrated Library System as a Textbook Case of Outsourcing

The scope and timing of the Integrated Library System make it the outsourcing project for which the most useful information is available. If the Library is to undergo a transformation in the born-digital area, then the ILS project is one of the best guides to its potential success, since it touches a huge part of the organization and is a major IT initiative.

A Library-wide ILS program office involving staff from each of the Library’s units managed the acquisition and deployment of the ILS. The requirements came from the end users. Having the users select the system forced them to make the hard decisions about what would be included and what was out. Going to an outside vendor and buying a turnkey system largely defined what was possible (or affordable). This process worked. If the users had gone to ITS for a home-grown system it would have had every feature imaginable and would probably have taken an inordinate amount of time to develop. There is a feeling in ITS that deficiencies in the ILS would not have been acceptable in a custom-built ITS system.

The budget for ILS was a separate line item, under the associate librarian for Library Services and the deputy librarian ($5.5 million to start and $16 million over 7 years to complete the project). The original request for proposals covered acquisitions, cataloging, serials check-in, circulation, and an online public catalog. This system replaces a suite of in-house mainframe applications developed by LC over the last 30 years. The ILS database has 12 million bibliographic entries, nearly 5 million authority records, over 12 million holdings records, approximately 26,000 patron records, over 30,000 vendor records, and more than 60,000 order records. The application supports 3,000 simultaneous users, including members of the public making requests via the LC Web site. The Library selected Endeavor Information Systems’ client/server Voyager system. Voyager supports Windows clients accessing a large Sun/Solaris backend server system (SUN E10000 with 56 processors and 64 gigabytes of memory) running Voyager on an Oracle database. A SUN E3500 is the Web server and a SUN E4500 is the test server.

The Library does not have source code access to Voyager. Endeavor fixes bugs and provides new releases periodically. (Since installation of the system, Endeavor Information Systems has been acquired by Reed Elsevier PLC, one of the largest scientific publishers in the world. It is unclear how this development will affect libraries that use the product.)

The ILS program office worked with implementation teams and with ITS and other organizations. ITS managed the hardware and software installations on the servers and is operating the ILS system. ITS also worked with Endeavor via the ILS program office, although clearly Library Services personnel were in charge. If such arrangements are to become increasingly commonplace at LC, then the ITS staff will need training in contract management to further develop their ability to supervise contracts.

The ILS implementation involved 82 teams comprising more than 500 people. There are a small number of ILS technical groups (three) compared to the number of policy groups (nine). The three ILS technical groups address host, workstations, and user support. This echoes what is going on within LC: technology is underemphasized by groups other than ITS, even in major technical undertakings. For example, the first large deployment problem for ILS was technical (the multiprocessor problem discussed below). The committee cannot tell yet if large problems in other areas, such as training staff to use the system, were successfully solved. That particular problem is less of an issue in ITS-developed systems because they are designed specifically to meet Library of Congress user requirements.

The ILS was first rolled out for operational use in the autumn of 1999. The early trials, in the spring of 1999, were promising. Problems were identified, but overall, the team was optimistic. Benchmark tests at the hardware vendor site in the spring indicated that more memory was required—and the Library complied. Early deployments in August and completion of deployment by October 1, 1999, went well but exposed some more performance problems. ITS continues to work with Endeavor, Oracle, and Sun to address these problems.

The ILS reduced cataloging productivity during the transition and training periods. Training staff for the transition to the ILS involved delicate negotiations with the unions to reduce the required productivity level during the transition period. Productivity may be even lower because Voyager is not tuned to the unique characteristics of the Library of Congress. The Library staff had, moreover, been using the old system for 20 years, and they knew it inside out. Switching to the new system (with the old workflow) is bound to be slower for a while, although the ILS program leadership expects this productivity decline to be a temporary one. Designing a new workflow based on the ILS should yield productivity benefits. That reengineering effort, begun in 2000, includes business process improvements and continuation of the conversion of the manual processes (shelf-list and serials).

The ILS replaces a number of manual and automated systems. Its integration provides inventory control that can help improve physical security, an ongoing issue at the Library, and that substantially reduces the number of steps necessary to track down a copy of a book requested by a Congressperson. By integrating a number of functions under one project, ILS can realize the kinds of synergies and savings that could be realized on an even larger scale if ITS or an organization involving ITS were tasked with reviewing and monitoring systems and infrastructure development across the Library.

In summary, the committee sees ILS as a success story. The Library built the cross-functional organization that coordinated both the technical and organizational issues. It is unlikely that ITS could have built such an ambitious system in-house. The system is operational, and the Library is poised to reengineer the workflows, which may yield substantial productivity improvements.

This example also demonstrates some other lessons and raises a few issues. It can be hard for LC to buy off-the-shelf software for library-specific applications, because the Library is often many times larger than the next largest institution (indeed, accommodating LC’s size was a challenge for the ILS implementation). Things that work for others may not scale up to LC needs. In the specific area of integrated library systems, LC is following rather than leading the large university libraries. The Library’s size and variety of offerings alone are a challenge to the vendors. In a different world, the Library of Congress might have led the transition to an integrated library system. As it happened, the Library did not have the skill or organization to develop an ILS, so it is just an observer (and a very large customer) on the new scene. The fact that LC was slow to adopt an ILS reflects the enormity of the task, some budget issues, and the fact that the old system was actually working. The Y2K issue provided the additional motivation for the Congress and the Library to act.

How will the Library participate (if it participates) in the development of next-generation automated library systems? The original case for the ILS mentioned better integration with digital library efforts. The intent was to gain built-in Web browsing capability and connections to Web sites, including its own. It is now time to look beyond the initial installation of the ILS.

The Information Technology Services Directorate As a Service Organization

Organizational units served by ITS express frustration about the difficulty of getting staff time and other resources from ITS, although they generally understand the difficulties of serving a variety of organizational units, each with its own priorities, from a single pool of people and budget. The committee believes this frustration comes from two basic flaws in the ITS Directorate: ITS does not act like a service organization, and its services are free.

The ITS Directorate has no explicit service-level agreements2 with its clients and indeed has few performance metrics, either for internal use or for reporting to ITS clients. With no regular or public measures of system performance and availability and no support metrics, there is no way for organizational units to compare the actual usefulness of ITS services with the potential usefulness of decentralized IT support units. In fact, since there are so few metrics, ITS cannot even evaluate its own performance or measure internal improvements.

2 See Complete Guide to IT Service Level Agreements: Matching Service Quality to Business Needs, by Andrew Hiles and Philip Jan Rothstein (Brookfield, Conn.: Rothstein Associates, 1999). Also see <http://www.amdahl.com/aplus/servlevel/contents.html>.

The lack of ITS reporting is related to the fact that ITS services are obtained by persuasion rather than by paying for them. There is not even any direct way to determine, after the fact, how much a particular project costs. ITS is not a budget line item for service units, yet IT expenses and staff account for nearly 10 percent of the total budget. Better accounting for IT costs would facilitate better overall management. The Library’s management needs to rationalize roles, authority, and responsibilities within the organization as a whole. There needs to be clear definition of the role of the individual units and the role of ITS. ITS itself should function as a service bureau for LC as a whole. Funding should come to it through its clients, the various service units. Those units should have the option of using or refusing to use ITS services within broad limits, short of causing outright financial or technical inefficiency for the Library. That is, individual units should be able to contract for services outside or perform them within their own units or agree with other units of LC on joint ventures or contract with ITS.3 In return, the information technology vision, strategy, research, and planning (ITVSRP) group discussed in Chapter 7 and, ultimately, the new deputy librarian should sit in judgment on cases where allowing decentralized or external service provision would be uneconomical or technically unsound for the Library as a whole. For example, the Library needs a resource that can provide technical advice on contracts and recommendations about current and future technology. ITS should not be the only unit making those decisions, though obviously both ITS and other units will make recommendations according to their best professional judgment.

If these steps are taken (and for this it would probably be best to engage an outside management consulting firm with experience in this area for a short time during the restructuring under the new deputy librarian), then the cost of ITS will be more clearly understood. A unit with an IT project will see the full costs identified in its budget and know how much it is paying ITS and how much it is spending internally. With such clear information, it will be better able to make judgments about priorities and the relative value of upgrades and replacements. ITS then becomes a market-based service unit existing to meet the needs of customers who pay the bills for services rendered. That market discipline would go a long way toward resolving the continuing disputes about resource levels, scheduling, and general relations with users.

Information Technology Support Beyond the ITS Organization

Besides ITS staff, approximately 200 people in the individual service units perform IT-related functions such as frontline support or dedicated IT development for their service unit. Some organizations (e.g., the Congressional Research Service) have built their own IT group to avoid having to compete for ITS’s attention for some projects (although large-scale initiatives such as the Inquiry Status Information System (ISIS) and the Legislative Information System (LIS) involve partnering with ITS). This in effect assigns a cost to the ITS services—CRS can now do a project in-house or contract it to ITS. ITS can argue that as a larger and broader service organization, it provides better service. Certainly, CRS continues to rely on ITS for phone, network, e-mail, and Internet services, infrastructure that is best supported by a central IT organization. However, CRS staff are increasingly developing their own small applications with their local IT staff. The decision by CRS to spend its own money rather than try to make use of the nominally free service provided by ITS indicates strongly that the system for budgeting and setting priorities for ITS activities is not functioning adequately.

Findings and Recommendations

Finding: As the Library increasingly outsources its information technology tasks, it will continue to need a strong in-house information technology organization to perform some in-house development, training, support, and operations and to review and monitor these outside contracts as well as to provide technical feedback on proposed contracts.4

Finding: The Library is underinvesting in the continuing education of its Information Technology Services Directorate staff in technical development and in new skills such as contract management.

Recommendation: The Library should budget much more of each technical staff member’s time for continuing education and participation at professional conferences and should allocate more funds to cover travel and registration expenses.

Recommendation: A practice and a budget should be established to partner members of the Information Technology Services Directorate staff who are interested in exploring a particular new technology with staff in the service units or with outside institutions that are interested in working on a pilot project applicable to the Library’s needs.

Finding: The ITS Directorate lacks measurement and reporting systems and a cost-accounting system that would allow it and its clients to make trade-offs among implementation alternatives and to evaluate the quality of the ITS Directorate’s service.

Recommendation: Together, the Library’s service organizations and its Information Technology Services Directorate should institute service-level agreements based on metrics of system availability, performance, and support requests. These metrics should be used to track ITS Directorate process improvements. Developing and implementing service-level agreements should be a high priority for the new deputy librarian (Strategic Initiatives) and the information technology vision, strategy, research, and planning group.

Recommendation: Wherever possible, services provided by the Information Technology Services Directorate should be charged against the budgets of the client organization within the Library. This would allow comparing the costs and benefits of servicing from within the client organization, outsourcing to the ITS Directorate, or outsourcing outside the Library.
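As an illustration only, the service-level metrics recommended above (system availability and responsiveness to support requests) could be computed and compared against agreed targets along the following lines. This is a minimal sketch, not a description of any system at the Library: the `Outage` record, the `sla_report` function, the system names, the targets, and all figures are hypothetical.

```python
from dataclasses import dataclass
from statistics import median
from typing import Iterable, Sequence


@dataclass(frozen=True)
class Outage:
    """One unplanned outage (hypothetical record layout)."""
    system: str
    minutes: float


def availability(system: str, outages: Iterable[Outage], period_minutes: float) -> float:
    """Fraction of the reporting period during which `system` was up."""
    down = sum(o.minutes for o in outages if o.system == system)
    return max(0.0, 1.0 - down / period_minutes)


def sla_report(system: str,
               outages: Iterable[Outage],
               period_minutes: float,
               response_hours: Sequence[float],
               target_availability: float,
               target_median_hours: float) -> dict:
    """Compare measured availability and support responsiveness with targets."""
    avail = availability(system, outages, period_minutes)
    med = median(response_hours)
    return {
        "availability": avail,
        "availability_met": avail >= target_availability,
        "median_response_hours": med,
        "response_met": med <= target_median_hours,
    }


# Made-up data for one 30-day reporting period.
PERIOD_MINUTES = 30 * 24 * 60
outages = [Outage("catalog", 90), Outage("catalog", 30), Outage("email", 45)]
support_response_hours = [2, 5, 26, 3, 8]  # hours to first response, per request

report = sla_report("catalog", outages, PERIOD_MINUTES,
                    support_response_hours,
                    target_availability=0.995,
                    target_median_hours=8)
print(report)
```

Publishing a report of this kind each period would give both ITS and its clients a shared, repeatable basis for judging whether service is improving.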

HARDWARE AND SOFTWARE

The Library IT infrastructure is undergoing a major transformation as this report is being written. First, the desktop PCs have all been upgraded, from a variety of machines to a fairly uniform collection of 5,000 desktop Intel Pentium II processors running Windows 95, Corel Office, and Netscape Navigator. ITS is phasing out the use of OS/2 and Windows 3.1.

The upgrade of several thousand desktop PCs and the training of the staff on the “new” (Windows 95) user interface went fairly smoothly. About 150 people throughout the Library do most of the frontline support. There is one IT support person for every 25 desktop PCs (counting staff in ITS and staff in the service units), which is typical of well-supported organizations. The choice of the 5-year-old Windows 95 over Windows 98 or NT4 reflects the Library’s conservatism. ITS plans to automate desktop software deployment using Tivoli, although the committee did not find anyone knowledgeable about this plan, nor is there a resource plan. Today, the process is manual and based on sneaker net.

As discussed in the preceding section, many of the IBM mainframe services were replaced by the ILS. This deployment went as smoothly as could be expected, given the scope of the project and the extreme time pressure to meet the Y2K deadline.

The Library has standardized on IBM AIX and SUN Solaris servers. It is also standardizing on Windows NT4 servers for file and print, phasing out various Netware file and print servers. The Library has contracted with EMC Corporation to provide 42 terabytes of storage to support ILS and other projects over the next several years. The Library has some Sybase databases but has largely standardized on Oracle for its database system and PeopleSoft for human resources applications. Client programming is generally done in Visual Basic and Access, using browsers as client interfaces, although some interfaces have been developed with Powerbuilder. Overall, there is a trend to the Web-based model, including increased use of Java.

For a large organization that relies on IT, it is surprising to find that e-mail is not yet a universal capability within the Library. For example, the LC police force is not connected to e-mail or to some other LC communication systems. It would be an ideal group for wireless communication, either cell phones or pagers with alphanumeric two-way communication. The committee was troubled to find that during a fire emergency, the guards were unaware of the alarm in another LC building.

ITS and the Library of Congress did a good job with respect to Y2K. They had a contingency plan in case deployment of the ILS was delayed, and they executed well in upgrading existing systems, replacing them where necessary.

Information Technology Security

By far the most serious infrastructure problem seen at LC was the lack of IT security. This problem needs urgent attention.5 The current security policy is driven piecemeal by outside forces, mostly auditors. Auditor input has been valuable when it has focused on a particular area of security and the auditors have followed up to check on the status of specific vulnerabilities. However, audits can also lead to unproductive actions. For example, LC installed a firewall because it was told to do so, without understanding just what it should or could do. This configuration placed the public reading room systems on the inside of the firewall, giving outsiders easy access to the systems the firewall was supposed to protect. On the plus side, previous audits focused on host/operating system security, so that improvements continue to be made to solve the specific vulnerabilities discovered and IT staff have a clear picture of their stand-alone host security and vulnerabilities. They now need to develop an understanding of distributed security issues and skills to deal with them. This is particularly pressing since enhanced connectivity to the Library will provide more and more opportunities for attacks using the network or distributed applications such as the Web, and the Library is a highly visible, attractive target for would-be perpetrators.

The chief security officer has given considerable attention to organizational and human resource issues (such as education and process). However, there is evidence to suggest that organizational issues still require attention—the vulnerability that enabled the THOMAS break-in in January 2000 was understood before the break-in, but LC did not implement an effective defensive measure in a timely fashion. Nor have the technical challenges of distributed security been tackled, and the committee saw no evidence of any plan to do so. As discussed above, concentrating on tactical responses can delay the advent of strategic and sweeping architectural solutions.

For example, many of the different systems rely on passwords for user authentication, with the usual result that users find they must have as many passwords as there are systems they use. To reduce the confusion, LC is using Tivoli to propagate passwords among systems, a typical tactical response but one that does not address fundamental weaknesses. In demonstrations, the committee observed that important passwords were clearly too short and were being sent over the network unencrypted. Work is under way to implement a virtual private network to protect accesses from foreign offices, but more work is needed to protect all distributed logins.

A strategic plan for this area would instead set a goal of moving as quickly as possible (perhaps in small steps) toward a modern, distributed authentication technology, sometimes called “single login” or “network login.” Several such schemes are now available using, for example, encrypted telnet, IPSec, X.509 certificates, and Kerberos; they would address the confusion that password propagation is trying to reduce and at the same time eliminate many other alarming security exposures, such as sending passwords over the network in the clear. While the committee heard several organizations mention the need for such technologies, it found no real movement in that direction.

Resources should be devoted immediately to understanding the Library’s distributed security needs and concerns. A series of audits targeted at issues such as network access, distributed authentication, access control, and Web security would be the first step in getting specific professional advice on the topics (much as the National Security Agency’s review of CRS systems impelled CRS to secure its part of the network). A series of audits might provide the impetus for fixing the security problems identified in the audits; follow-through support from all levels in the organization will be necessary to ensure change. In parallel, a member of the ITS staff with expertise in both security and networks should become knowledgeable about common distributed security errors so as to ensure that the Library does not make them. The completion of this task may necessitate external technical training or consulting services. This person should then perform continuous internal security audits.

One example of the sort of vulnerabilities that an informed audit should catch is unnecessary listeners on TCP ports, which should be shut down. An audit should also address what is perhaps the largest overlooked area of computer security at the Library: auditing and logging. Until recently, since LC staff did not know what information was logged, they did not know if a nonobvious break-in had occurred. As a result of the THOMAS break-in,6 staff now monitor a subset of their static files for unauthorized changes. It is possible that other logging information exists that they should be viewing. Finally, it is hard—indeed impossible—to enforce security policies with Windows 95; Microsoft recommends NT4 or Windows 2000 for business users. This discussion of security problems merely touches on the problems the committee identified during its discussions with the ITS staff on security issues.
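The practice mentioned above of monitoring static files for unauthorized changes can be sketched as a comparison of cryptographic digests against a trusted baseline. The following is a minimal illustration under stated assumptions, not a description of the monitoring actually used at the Library: the function names (`file_digest`, `snapshot`, `compare`) are hypothetical, and SHA-256 is simply one reasonable choice of hash.

```python
import hashlib
from pathlib import Path


def file_digest(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def snapshot(root: Path) -> dict:
    """Map each file under `root` (by relative path) to its digest."""
    return {
        p.relative_to(root).as_posix(): file_digest(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def compare(baseline: dict, current: dict) -> dict:
    """Report files changed, removed, or added since the baseline was taken."""
    return {
        "changed": sorted(k for k in baseline
                          if k in current and baseline[k] != current[k]),
        "removed": sorted(k for k in baseline if k not in current),
        "added": sorted(k for k in current if k not in baseline),
    }
```

In use, a baseline would be taken with `snapshot(...)` when the content is known to be good and stored where an intruder cannot alter it; a later `compare(baseline, snapshot(...))` that returns any non-empty list is a signal to investigate. A check like this complements, and does not replace, review of system and access logs.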

The Library needs to set its own security goals thoughtfully. If it wants to be perceived as a reliable source of information on the Web, it must consider that the lack of security allows alterations to the information it publishes on the Web. The break-in to THOMAS damaged that site’s reputation for providing information of high integrity and showed that the THOMAS system and other parts of the LC Web site are vulnerable to attack. In this case, the attack was fairly benign, since it changed data in a more or less obvious manner. But Web disinformation is becoming more sophisticated. Stock traders are increasingly leery of postings to their newsgroups and other sites, for example. An attack on THOMAS could cause a Congress member’s constituency to misconstrue a bill and press for a vote that would in fact be counter to its interests.

6 “Hackers Deface Library of Congress Site,” by Diane Frank, in Federal Computer Week, January 19, 2000, available online at <http://www.fcw.com/fcw/articles/2000/0117/web-lochack-01-19-00.asp>.

The Library’s future stewardship of digital materials will place further demands on its security infrastructure. As the Library begins to offer controlled access to digital materials, it will need to deploy a more flexible system for their protection. Examples of the restrictions LC may need to honor include copyright and licensing and contractual restrictions such as those that accompany the work of the Shoah Foundation (discussed in Box 1.1). The storage system will need to associate with an item metadata indicating who has access to that item. In addition, it will need to identify its users or their key attributes with confidence in order to apply properly the rules associated with that metadata. As discussed in the Digital Dilemma,7 there are still many outstanding issues, both technical and socio-economic, including the need to control who can view the contents of an item, what can be copied or referenced from an item, and even how searches and indexing can be supported over all items while still honoring the heterogeneous restrictions involved.
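The pairing of item metadata with user attributes described above can be sketched as follows. The class names, the attribute strings, and the deny-by-default rule are all hypothetical choices made for illustration; the chapter does not prescribe a particular access-control model.

```python
from dataclasses import dataclass, field


@dataclass
class User:
    user_id: str
    # Attributes established about the user, e.g. "on_site" (hypothetical labels).
    attributes: frozenset = frozenset()


@dataclass
class ItemRestrictions:
    # Hypothetical metadata stored with an item: for each action, the set of
    # attributes a user must hold (all of them) before the action is allowed.
    required: dict = field(default_factory=dict)


def is_permitted(user: User, restrictions: ItemRestrictions, action: str) -> bool:
    """Allow an action only if the item's metadata lists it and the user
    holds every attribute required for it; unlisted actions are denied."""
    needed = restrictions.required.get(action)
    if needed is None:
        return False
    return set(needed) <= set(user.attributes)


# Example: an item that anyone may find by searching, that may be viewed
# only on site, and that may be copied only with rights-holder consent.
testimony = ItemRestrictions(
    required={
        "search": set(),
        "view": {"on_site"},
        "copy": {"on_site", "rights_holder_consent"},
    }
)
reading_room_user = User("reader-1", frozenset({"on_site"}))
print(is_permitted(reading_room_user, testimony, "view"))
print(is_permitted(reading_room_user, testimony, "copy"))
```

Separating "search", "view", and "copy" in the metadata reflects the distinction drawn above between who can view an item, what can be copied from it, and how it can be indexed. The sketch takes the user's attributes as given; establishing them with confidence is the harder problem the paragraph points to.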

The ITS Data Center Disaster Recovery Strategies and other planning documents outline LC’s proposed approach to anticipating a variety of potential disasters that could compromise or destroy LC’s unique collections or make its resources unavailable to the Congress and the nation. Unfortunately, Congress did not fund the disaster recovery mechanisms requested by the Library in past years. This is a false economy. As it stands, LC’s digital assets are all stored on Capitol Hill. Most agencies and corporations store their data and programs in two or more locations, so that a disaster at one site would be unlikely to destroy the data at other sites. There should be a complete and current copy of the Library’s digital assets at one or more sites, preferably far from Washington, D.C.

Networking

After security problems, computer networking is the next infrastructure issue that needs urgent attention for both current and future needs.

7 For additional discussion on copyright and digital preservation, see Chapter 3 of The Digital Dilemma, by the Computer Science and Telecommunications Board, National Research Council (Washington, D.C.: National Academy Press, 2000); “Digital Preservation Needs and Requirements in RLG Member Institutions,” by Margaret Hedstrom and Sheon Montgomery, Research Libraries Group, available online at <http://www.rlg.org/preserv/digpres.html>; and the film “Into the Future,” by the Council on Library and Information Resources, description available online at <http://www.clir.org/pubs/film/film.html#future>.

The network is underpowered. The Library currently supports approximately 80 percent of staff workstations with token ring network connections. These networks are linked in a collapsed star topology through Cisco routers interconnected via a 155 megabit/second asynchronous transfer mode (ATM) backbone. The token rings are being upgraded to 100 megabit/second Ethernets on an as-needed basis (or as funds are available; as of June 2000, approximately 900 workstations had 100 megabit/second connections). There are several identifiable problems with this structure and plan.

ATM switches are designed to switch digitized telephone traffic; they are a second-best choice for Internet applications because they are more costly and complex than alternatives such as a gigabit Ethernet switch. Also, to avoid massive disruptions during an overload they must be configured either with permanent virtual circuits (which forfeit the opportunity to take advantage of traffic burstiness) or to begin dropping data when operating well below their rated capacity.8 This latter concern regarding overload does not currently affect the LC network, presumably because the aggregate load on the ATM switch is being limited by the low-speed token rings. As those token rings are replaced with 100 megabit/second Ethernets and the routers are upgraded for higher data rates, it is likely that the shortcomings of the ATM switch will become more apparent.

As for as-needed local network upgrades, ITS has a poor track record of identifying need in an accurate and timely fashion. For example, the National Digital Library’s scanning contractor generates 4 to 5 gigabytes/hour and reports that the network is the bottleneck in getting this data to Library Services. Similarly, the Geography and Map Division creates 300-megabyte files at the rate of 1 to 2 gigabytes/hour and then wavelet compresses them; here, too, the network is the bottleneck. ITS staff did not seem to understand that they needed to upgrade the network to support video, Web access, and future multimedia applications and information transfer everywhere. They should have upgraded the network when the PCs were upgraded rather than getting PCs with antiquated token-ring network cards.
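A short calculation shows why data rates of this size strain a token ring. The sketch below converts gigabytes per hour into the sustained megabits per second they imply. It assumes decimal units, and it assumes a 16 megabit/second ring for comparison (a common token ring speed; the text does not say which speed LC's rings run at).

```python
def sustained_mbit_per_s(gigabytes_per_hour: float) -> float:
    """Sustained network rate implied by a data production rate.

    Assumes decimal units: 1 gigabyte = 10**9 bytes, 1 megabit = 10**6 bits.
    """
    bits_per_hour = gigabytes_per_hour * 1e9 * 8
    return bits_per_hour / 3600 / 1e6


# Assumption for illustration only: a 16 megabit/second token ring,
# shared by every workstation attached to it.
ASSUMED_RING_MBIT_PER_S = 16.0

for source, gb_per_hour in [
    ("scanning contractor, upper figure", 5.0),
    ("Geography and Map Division, upper figure", 2.0),
]:
    rate = sustained_mbit_per_s(gb_per_hour)
    share = rate / ASSUMED_RING_MBIT_PER_S
    print(f"{source}: {rate:.1f} Mbit/s sustained, {share:.0%} of the assumed ring")
```

At 5 gigabytes/hour the scanning stream alone needs roughly 11 megabits/second around the clock, before protocol overhead and before any other user on the same ring sends a packet.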

8 For additional discussion, see “Frames Reaffirmed at ATM’s Expense,” by Kevin Tolly, in Network Magazine, September 1998, pp. 60-64, available online at <http://www.tolly.com/KT/NetworkMagazine/framesreaffirmed9809.htm>, and “Optical Internets and Their Role in Future Telecommunications Systems,” (draft) by Andrew K. Bjerring and Bill St. Arnaud, undated, available online at <http://www.canet3.net/frames/papers.html>.

These upgrades should allow continuous data gathering as well as monitoring the use of both the backbone network and the Internet access links, so that the Library can keep ahead of what will undoubtedly be a rapidly rising load. (In industry, a current standard rule for sizing Internet links is to provide capacity for twice the load observed during the busy hours of the day.) As far as the committee could tell, there is no instrumentation in place to measure the security or the performance of the network. It did not get the sense that LC staff understand current and future network demands. This situation is just one aspect of the poor or nonexistent ITS metrics. Indeed, some ITS staff told the committee that they thought network bandwidth was adequate, completely contradicting the calls for more bandwidth.
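The industry sizing rule cited above lends itself to a simple check once measurements exist. The sketch below assumes that monitoring yields average load figures, in megabits/second, for the busy hours of the day, and it takes the highest of them as "the load observed"; both the function names and that interpretation are assumptions made for illustration.

```python
def required_capacity_mbit(busy_hour_loads: list[float], headroom: float = 2.0) -> float:
    """Capacity suggested by the rule of thumb: headroom times the highest
    load (in Mbit/s) observed during the busy hours."""
    if not busy_hour_loads:
        raise ValueError("no measurements: the rule cannot be applied without data")
    return headroom * max(busy_hour_loads)


def needs_upgrade(link_capacity_mbit: float, busy_hour_loads: list[float]) -> bool:
    """True when the installed link falls short of the suggested capacity."""
    return link_capacity_mbit < required_capacity_mbit(busy_hour_loads)
```

For example, busy-hour averages of 40, 55, and 61.5 megabits/second would suggest 123 megabits/second of capacity, so a 100 megabit/second link would be flagged while a 155 megabit/second link would not. The `ValueError` on an empty list mirrors the committee's point: without instrumentation there is nothing to size against.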

Databases and Storage

The Library is creating databases (Oracle, Sybase, and so on) at the rate of four per year. Each of these databases and the associated applications will require long-term care and feeding such as backup and restore, security management, system upgrades, and reorganization, even when the database itself is of modest size. To meet its goals, ITS needs to have a clear, long-term plan to ensure adequate database administration and application support in the future. As it stands, ITS faces a substantial maintenance burden for each of these applications.

The Library made a storage estimate9 of 43 petabytes (thousands of terabytes) to digitize the Motion Picture, Broadcasting, and Recorded Sound Division and 5 petabytes for the Geography and Map Division. These estimates are for preservation-quality digitizations and do not take into account compression, which might save space by a factor of 10 to 100. Still, the committee believes that the Library would need several petabytes of storage to store preservation-quality digital images of all its assets. For access quality, perhaps 1 petabyte would be adequate. No matter how the storage needs of the Library of Congress are measured, they will be huge in the coming years.

In light of these huge demands, the committee has some concerns about the current storage pool and storage area network solution. LC recently spent $10 million on a solid, cutting-edge technology: a 42-terabyte storage area network based on EMC/FiberChannel, which includes backup and restore functions. That amounts to $240 million per petabyte—not an affordable approach for LC. The current storage architecture and estimates do not make a clear distinction between storage for access and storage for preservation. In addition, the estimates do not clearly identify whether the amount of storage being discussed is before or after replication for reliability, nor do they address the fact that the fraction of data modified in a given year is negligible (virtually all changes are additions). This specific access pattern allows alternative storage solutions (see below).
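The cost figure and the replication question raised above can both be made explicit with a few lines of arithmetic. This is a sketch using only the numbers quoted in the text ($10 million for 42 terabytes) and the chapter's convention that a petabyte is a thousand terabytes; the function names are illustrative.

```python
TERABYTES_PER_PETABYTE = 1000  # the chapter treats a petabyte as a thousand terabytes


def cost_per_petabyte(purchase_cost_usd: float, capacity_tb: float) -> float:
    """Extrapolate a purchase price to the cost of one petabyte at the same rate."""
    return purchase_cost_usd / capacity_tb * TERABYTES_PER_PETABYTE


def raw_capacity_needed_pb(logical_pb: float, copies: int) -> float:
    """Raw storage required when every item is held in `copies` replicas."""
    return logical_pb * copies


san_rate = cost_per_petabyte(10_000_000, 42)
print(f"Storage area network: about ${san_rate / 1e6:.0f} million per petabyte")
```

The result is about $238 million, which the text rounds to $240 million. The second function shows why an estimate must say whether it is stated before or after replication: 1 petabyte of content held in three copies needs 3 petabytes of raw storage, tripling any cost derived from the per-petabyte rate.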

If the Library’s storage needs are as massive as the committee suspects (several thousand terabytes), the Library should consider a lower-cost strategy for storage systems, like that adopted by the Internet Archive and others. The Library’s hierarchical storage management system (a tape management system) was installed based on the assumption that disk storage is very expensive. Now that disk prices are approaching the price of nearline tape, LC should reevaluate its archiving strategy. The goals of maintaining current, authoritative information very reliably and keeping track of older material that is no longer current have become muddled into a single tape backup system. For an application like this, where changes are additions, it would make sense to send newly added files across a network to two or three geographically distant sites that maintain replicas rather than to use redundant arrays of independent disks, which can provide (probably unnecessarily) high performance and good protection against individual disk failures, but little or no protection against site disasters. Since many data items are rarely accessed, the system requires only modest access performance.
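The replication approach suggested above, in which newly added files are sent to distant sites and existing files are never changed, can be sketched in a few lines. In this illustration local directories stand in for the remote sites, and the function names and the SHA-256 verification step are assumptions; a real deployment would copy across a network and would need scheduling, authentication, and error recovery.

```python
import hashlib
import shutil
from pathlib import Path


def file_digest(path: Path) -> str:
    """SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def replicate_additions(primary: Path, replicas: list[Path]) -> list[str]:
    """Copy files that exist at the primary site but not yet at a replica.

    Existing replica files are never overwritten, which matches a collection
    where virtually all changes are additions. Each copy is verified against
    the source digest before it is counted.
    """
    copied = []
    sources = sorted(p for p in primary.rglob("*") if p.is_file())
    for source in sources:
        relative = source.relative_to(primary)
        for replica in replicas:
            target = replica / relative
            if target.exists():
                continue  # additions only: leave what is already stored untouched
            target.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(source, target)
            if file_digest(target) != file_digest(source):
                target.unlink()
                raise OSError(f"verification failed for {target}")
            copied.append(f"{replica.name}/{relative.as_posix()}")
    return copied
```

Because a run only transfers what is missing, repeating it is harmless, and the volume sent each time is proportional to what was added rather than to the size of the whole collection. That property is what makes modest network and disk performance sufficient for this kind of archive.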

Information Retrieval

The Library of Congress was once a leader in the development of information retrieval technology. Library staff created software to provide online access to their catalogs at a time when online catalogs were a novelty. Like many other organizations, the Library has found it difficult to keep up with the pace of change in retrieval software and is making increased use of retrieval packages provided by third parties. The retrieval software provided with the ILS will be used for most catalog access. Applications with a larger full-text component (e.g., THOMAS) make use of other third-party software (primarily InQuery). Given the rate at which IR technology is evolving, the shift to third-party software is appropriate—relatively few organizations can now afford to build their own retrieval software.

Information retrieval technology has become much more visible with the popularity of Web searching. Once the purview of trained searchers, today’s retrieval software allows novice users to find information on the Web, a collection that is huge by the standards of a few years ago. Retrieval software is evolving in several ways: the ability to search the full text of documents in many different languages; support for images, sound, and other media; user aids to help searchers formulate better queries; performance improvements to allow very large collections to be searched more quickly; feedback techniques to allow searchers to find documents similar to a known document; machine learning techniques to provide recommendations based on a history of use; and document summarization to extract relevant portions of a long document or to combine information from several documents.

The rapid evolution of retrieval software provides real benefits for users, but it complicates the task of system integration for organizations like the Library of Congress that have rich and varied collections and a heterogeneous user population. Clearly, the Library needs a strategy for dealing with information retrieval issues. Information retrieval is currently supported by multiple systems, each with different capabilities and support issues. The InQuery system used in supporting natural language queries to THOMAS and other systems is no longer available from the supplier, and it is unclear whether the product will continue to be supported. The use of PDF to store Global Legal Information Network documents complicates retrieval since there are no good text extractors for PDF. Support for foreign language retrieval, important for applications in the Law Library and Library Services, is weak. Several other retrieval issues have been identified but are not yet being addressed: in particular, the need for access control within the search engines (as discussed in the IT security section above) and the need to provide general support for searching structured documents.

Digital Repository

As discussed in Chapter 4, the Library is experimenting with a repository system (TEAMS) donated by Artesia Technologies, but it is unclear to the committee how much progress has been made in implementing this system. Most of the functioning of repositories depends upon having the appropriate information (metadata) to support the management and preservation of the digital objects deposited. It is unclear whether the Library has been creating such administrative and technical metadata for the American Memory collections. It is imperative that the Library give the implementation of a robust repository very high priority and that it ensure that the metadata needed to manage its digital collections over time and through the process of migration in response to technological change are available for all objects in its digital collection. The committee reviewed the Repository Requirements Report dated September 1998 and found this document to be an excellent general outline specification.10

Findings and Recommendations

Finding: E-mail is not yet universal in the Library.

Recommendation: Infrastructure should be deployed so that all Library employees have easy access to e-mail.

Finding: LC computer and information security competence and policies are seriously inadequate.

Recommendation: The Library should hire or contract with technical experts to examine the current situation and recommend a plan to secure LC information systems. Then, once a plan is in hand, the Library should implement it.

Finding: Although the Library has identified a disaster recovery strategy as a priority, Congress has decided not to fund the implementation of any such strategy.

Recommendation: Congress should provide the funding for a disaster recovery strategy and its implementation for the Library.

Finding: The Library’s networking infrastructure needs urgent attention with respect to serving both current and future needs. The Library’s current policy is to upgrade to fast Ethernet as needed, which is problematic (it is difficult to identify the need in an accurate and timely fashion). The ATM switch currently used as a backbone is poorly matched to the near-term needs of the Library. Network performance is measured on an ad hoc basis at best, so performance information is generally not available when it is really needed.

Recommendation: The ITS Directorate needs to upgrade all of the Library’s local area networks to 100 megabit/second Ethernet on an as-soon-as-possible basis rather than on an as-needed basis. It also needs to replace the ATM switch with Ethernet switches of 1 gigabit or greater and institute continuous performance measurement of internal network usage and Internet access usage. The Congress should provide funding to support these upgrades.

Recommendation: The network firewall, currently the sole means of segregating internal from external usage of LC systems, needs to be augmented as soon as is feasible with “defense in depth” that incorporates defensive security on the individual computer systems of the Library.

Finding: The Library’s storage pool goals of maintaining current, authoritative information very reliably and keeping track of older material are muddled. The current approach, which entails high-priced storage, makes it prohibitively expensive to put most of the Library online. The disaster recovery plan will nearly double the storage requirements.

Recommendation: The Library should establish disk-based storage for online data and for an online disaster recovery facility using low-cost commodity disks. The Library should also experiment with disk mirroring across a network to two or three distant sites that maintain replicas, for availability and reliability of archives, and use tapes exclusively to hold files that are rarely needed. Some of the resources being spent on installing a separate specialized storage area network for disk sharing should instead be spent on a general, high-performance network for those and other needs.

Finding: The implementation of a robust digital repository is needed to support the Library’s major digital initiatives. The current rate of progress in implementing such a repository is not adequate.

Recommendation: The Library should place a higher priority on implementing an appropriate repository.