4

Basic Methodologies and Applications for Understanding and Evaluating Uncertainty

Key Messages Identified by Individual Speakers

- Expert elicitation can be applied to issues of uncertainty, allowing the incorporation of informal evidence that contributes to the expert’s judgment alongside formal evidence.

- Eliciting values for risk governance choices is a process of applying structured common sense to complex problems.

- Language can be limited in its ability to convey accurate information. Making clear to the reader what information is known with certainty and what is reasoned judgment could help address these limitations.

- The valuation process for managing risk includes both technical (scientific) information and value-based information (preferences) to clarify and examine trade-offs.

- Bayesian approaches to evaluating clinical trial data have the potential to facilitate more robust characterization of inferences drawn from studies.

APPLYING DECISION SCIENCE IN THE DRUG REVIEW PROCESS

Several presenters discussed the merit of using judgment and decision science to address uncertainty in the drug review process. The methods of decision science, they stated, make uncertainty tractable. They provide a practical context for considering the value of evidence, and they can reveal important new uncertainties that might otherwise have been overlooked.

Eliciting Expert Judgment¹

A technique called expert elicitation uses a one-on-one interview process to seek expert input about topics where there are insufficient data or where data are unattainable. According to M. Granger Morgan, Professor and Head of the Department of Engineering and Public Policy, Carnegie Mellon University, the elicitation process draws out carefully reasoned, individual judgments, then summarizes the results of several interviews to provide an indication of the range of expert views and associated uncertainty, usually in the form of subjective probability distributions.

A key benefit offered by expert elicitation is that informal evidence that contributes to the expert’s judgment can be incorporated together with formal evidence, said Morgan. Expert elicitation can be applied to uncertainty not only about a quantity or mathematical probability, but also about a process or a model function. For example, as suggested by one workshop participant, an expert elicitation in the drug review process might quantify expert judgment about the relative likelihood that alternative models of pharmacokinetic or pharmacodynamic processes correctly describe a given biological process.

Morgan provided three cautions with respect to the application of expert elicitation: (1) only those with relevant expertise should be interviewed, in order to ensure that judgments obtained are well informed; (2) words that are used to describe uncertainty, such as “likely” and “unlikely,” should be quantified, because they often mean different things to different people, or even to the same people in different situations; and (3) cognitive biases inherent to human judgment can affect experts’ responses. Morgan described two of the most frequently occurring biases, “availability” and “anchoring and adjustment.”

Availability

Morgan noted that people tend to estimate the frequency of an event by how quickly or easily they can recall or imagine similar instances or occurrences. The “availability” of such a memory can be influenced by how much time has passed or by whether the event was unusual in some way, and that availability in turn shapes judgment. To safeguard against availability bias, interviewers provide participants with documents and visual aids to ensure that they have the full complement of information in mind when answering questions.

__________________

1 This subsection is based on the presentation by M. Granger Morgan, Professor and Head of the Department of Engineering and Public Policy, Carnegie Mellon University.

Anchoring and Adjustment

If people start with a first value (an “anchor”) and then adjust up or down from that value, they typically do not adjust sufficiently. It is therefore best not to begin an elicitation by asking for the “best” or “most probable” value. Instead, the interviewer should first establish the outer range and then move inward toward an estimate of the best value, said Morgan.
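The outer-range-first ordering can be built into an interview tool. The Python sketch below is purely illustrative. The `ElicitationRecord` class, its fields, and the `summarize` helper are hypothetical names, not part of any protocol described at the workshop. The sketch refuses to record a “best” value until plausible bounds have been elicited, and it reports each expert's judgment side by side rather than collapsing the judgments into one number.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ElicitationRecord:
    """One expert's judgment about an uncertain quantity (hypothetical schema)."""
    expert: str
    low: Optional[float] = None   # plausible lower bound, elicited first
    high: Optional[float] = None  # plausible upper bound, elicited first
    best: Optional[float] = None  # "best" estimate, elicited last

    def set_range(self, low: float, high: float) -> None:
        if low >= high:
            raise ValueError("lower bound must be below upper bound")
        self.low, self.high = low, high

    def set_best(self, best: float) -> None:
        # Refuse a central value until the outer range exists, so the
        # interview cannot open with an anchor.
        if self.low is None or self.high is None:
            raise RuntimeError("elicit the outer range before the best estimate")
        if not self.low <= best <= self.high:
            raise ValueError("best estimate must lie within the elicited range")
        self.best = best


def summarize(records):
    """Show the spread of expert views instead of averaging them away."""
    done = [r for r in records if r.best is not None]
    return {
        "experts": {r.expert: (r.low, r.best, r.high) for r in done},
        "overall_range": (min(r.low for r in done), max(r.high for r in done)),
    }


# Usage with invented judgments about an adverse-event rate.
a = ElicitationRecord("Expert A")
a.set_range(0.01, 0.10)
a.set_best(0.03)
b = ElicitationRecord("Expert B")
b.set_range(0.02, 0.20)
b.set_best(0.05)
print(summarize([a, b]))
```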

Bayesian Approaches²

Joel Greenhouse, Professor of Statistics, Carnegie Mellon University, described how Bayesian approaches allow judgments about the plausible values of a treatment effect to be taken into account explicitly when forming conclusions about the effect being studied. Bayesian statistical approaches make explicit how an RCT changes our opinion about a treatment effect. For that reason, noted Greenhouse, they could help the scientific and regulatory communities come to agreement about the treatment effect seen in a clinical trial.

To set the stage and provide a common terminology, Greenhouse explained that conventional statistical analysis of a clinical trial calculates a single probability value (p-value) to test a hypothesis. Typically this is the null hypothesis that a treatment has no effect, tested against the alternative that one treatment represents a gain over another. The Bayesian approach instead begins, before the RCT data are considered, by specifying a probability distribution of the plausible values of the treatment effect. This distribution excludes evidence from the current RCT and is called the “prior distribution.” The data emerging from the current RCT are then summarized in the “likelihood,” which describes how well each plausible value of the treatment effect accounts for the observed results. Applying Bayes’ rule, the prior distribution is combined with the likelihood to obtain the “posterior distribution.” The posterior combines historical assessments of the treatment effect with the evidence about the treatment effect from the current RCT.
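In symbols, let $\theta$ denote the treatment effect and $y$ the data from the current RCT. This notation is chosen here for illustration and is not taken from the presentation. Bayes’ rule combines the two ingredients as follows:

```latex
\underbrace{p(\theta \mid y)}_{\text{posterior}}
  = \frac{\overbrace{p(y \mid \theta)}^{\text{likelihood}}\;\overbrace{p(\theta)}^{\text{prior}}}
         {\int p(y \mid \theta')\, p(\theta')\, d\theta'}
  \;\propto\; p(y \mid \theta)\, p(\theta)
```

The denominator does not depend on $\theta$. It only rescales the product so that the posterior integrates to one.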

Greenhouse noted that the prior distribution can also be adjusted to reflect judgments about whether particular information should be discounted. For example, previous studies believed to be relevant, but not directly related, might be “downweighted,” which has the effect of reducing the effective sample size of that prior information. The likelihood and the prior distribution are ways to formalize assumptions and make them transparent by representing uncertainties as probability distributions. The posterior distribution then summarizes the belief about the treatment effect. Greenhouse added that sensitivity analysis can be used to test how assumptions about the prior distribution affect the posterior inference. He noted that “[i]f it does not change very much, that gives you added confidence that the conclusions are not being driven by the prior [distribution]. If it does change a lot, that . . . tells you how much uncertainty you have . . . in the available evidence about the question of interest.”

__________________

2 This subsection is based on the presentation by Joel Greenhouse, Professor of Statistics, Carnegie Mellon University, and material from Characterizing Uncertainty in the Assessment of Benefits and Risks of Pharmaceutical Products: Workshop in Brief (IOM, 2014), also prepared for this project.
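Downweighting and a simple sensitivity analysis can be seen in the conjugate normal case. The sketch below makes several assumptions:

- The outcome is continuous, with a known per-patient standard deviation.
- Prior studies are summarized by a mean and a sample size.
- A weight parameter scales the prior's effective sample size, similar in spirit to a fixed-weight “power prior.”

The function name and all numbers are hypothetical.

```python
import math


def posterior_normal(prior_mean, prior_n, weight, data_mean, data_n, sigma):
    """Posterior mean and SD of a treatment effect (normal model, known sigma).

    Prior studies are summarized as N(prior_mean, sigma**2 / (weight * prior_n)).
    weight = 1 uses the prior at full strength; weight = 0 ignores it, leaving
    only the current trial's N(data_mean, sigma**2 / data_n) likelihood.
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be between 0 and 1")
    n_eff = weight * prior_n + data_n  # downweighting shrinks the prior's share
    mean = (weight * prior_n * prior_mean + data_n * data_mean) / n_eff
    sd = sigma / math.sqrt(n_eff)
    return mean, sd


# Sensitivity analysis over the prior weight (all inputs hypothetical):
# earlier studies suggest an effect of 2.0 from 200 patients; the current
# trial observes 5.0 in 100 patients; the per-patient SD is 10.
for w in (0.0, 0.25, 0.5, 1.0):
    m, s = posterior_normal(2.0, 200, w, 5.0, 100, 10.0)
    print(f"weight={w:.2f}  mean={m:.2f}  "
          f"95% credible interval=({m - 1.96 * s:.2f}, {m + 1.96 * s:.2f})")
```

With these made-up inputs, the posterior mean moves from 5.0 (prior ignored) to 3.0 (prior given full weight). In Greenhouse's terms, a shift of that size would signal that the conclusion depends materially on how much weight the earlier studies receive.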

Key to the Bayesian approach is summarizing and synthesizing evidence that can inform the specifications of these probability distributions. With this in mind, Greenhouse posed the question, “What is the role for non-RCT sources of evidence to help inform the FDA about questions of effectiveness and safety?”

Over the course of the workshops, several participants cited the promise of Bayesian approaches to evaluating clinical trial data and the potential for more robust characterization of inferences drawn from studies. Application of a disciplined Bayesian approach could offer opportunities to characterize and accommodate uncertainty in clinical trials.

APPLYING DECISION THEORY APPROACHES TO REGULATORY DECISION MAKING

Several speaker presentations generally addressed decision theory techniques and the scientific basis for incorporating patient and other stakeholder preferences. Several speakers suggested that scientific methodologies for the incorporation of expert deliberation and stakeholder perspectives can help improve the certainty of forecasts. They said such methodologies can also place what is known and what is unknown in a practical context, address uncertainties in the context of patient preferences, reveal new uncertainties that otherwise might have been overlooked, and provide important information on values for regulatory determinations.

Lessons from the Intelligence Community³

David R. Mandel, Senior Scientist, Socio-Cognitive Systems, Defence Research and Development Canada (DRDC), Toronto Research Centre, provided a perspective on characterizing uncertainty from the domain of intelligence assessments. Mandel noted that he is currently working on a 3-year study to assess the ability of clinical researchers to accurately predict both operational and scientific outcomes of RCTs.

__________________

3 This subsection is based on the presentation by David R. Mandel, Senior Scientist, Socio-Cognitive Systems, Defence Research and Development Canada (DRDC), Toronto Research Centre.

Mandel reinforced Morgan’s comments that language is severely limited in its ability to convey accurate information, noting that “words are imprecise and vague, their imprecision varies across individuals, and is not necessarily aligned with normative meanings.”

One conventional corrective measure, he noted, is to prohibit “weasel words” and phrases, such as “reportedly,” “evidence suggests (or indicates),” “distinctly possible,” and “apparently.” According to Mandel, such language seems to insinuate more than the analyst is willing to commit to or is likely to be held accountable for. If the prediction turns out to be wrong, the analyst can then “weasel” out of responsibility. Another corrective approach is to institutionalize a rank ordering of verbal probability terms using specific, common definitions. However, Mandel noted, such approaches do little to reduce the vagueness associated with the selected terms. There is also no guarantee that decision makers will keep the prescribed rank ordering in mind when reading reports.

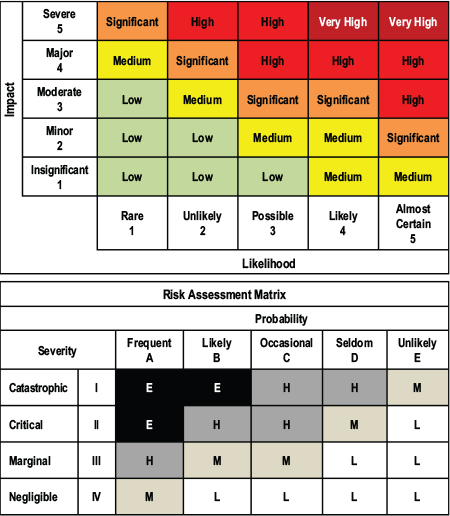

A more radical proposal, he said, would be to assign numbers to words that communicate uncertainty (see Figure 4-1 for two examples of verbal probability terms). When standards are applied consistently, numbers can “smoke out the weasels,” he noted. Numbers can also be operated on, whereas an expression such as “likely × very improbable” cannot be evaluated. In addition, numbers can communicate imprecision clearly; for example, an analyst can be 95 percent confident that the probability of an event is 70 percent, plus or minus 10 percent. Even if numbers are used only internally, such as for audit purposes, they lend themselves to verification of judgment quality, detection of systematic biases, and subsequent correction.
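Two of the advantages Mandel listed are easy to illustrate: numbers can be combined, and they support audits of judgment quality. The Python sketch below does both:

- It multiplies two probabilities, assuming for illustration that the events are independent.
- It scores a set of past forecasts with the Brier score, one widely used measure of probabilistic forecast accuracy.

The Brier score is a choice made here, not a method attributed to Mandel. The forecasts and outcomes are invented.

```python
def brier_score(forecasts, outcomes):
    """Mean squared error of probability forecasts against 0/1 outcomes.

    0 is perfect; a forecaster who always says 0.5 scores 0.25.
    """
    if not forecasts or len(forecasts) != len(outcomes):
        raise ValueError("need equal-length, non-empty sequences")
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)


# Numbers can be operated on. Assuming two events are independent, judgments
# of 0.7 and 0.05 imply a joint probability of about 0.035.
joint = 0.7 * 0.05

# A hypothetical audit of five past judgments (1 = the event occurred).
forecasts = [0.7, 0.9, 0.2, 0.6, 0.1]
outcomes = [1, 1, 0, 0, 0]
print(round(joint, 3), round(brier_score(forecasts, outcomes), 3))
```

The five invented forecasts score about 0.10. Most of that penalty comes from the single confident miss (0.6 for an event that did not occur), which is the kind of pattern an internal audit is meant to surface.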

No matter what method is selected to clarify the meanings of uncertainty words, Mandel said, a critical issue is to make clear to the reader what is certain knowledge and what is reasoned judgment, including a way to communicate the degree of certitude that supports each key statement.

Stakeholder Elicitation Methods⁴

Joseph Arvai, Professor and Svare Chair in Applied Decision Research, Department of Geography, Institute for Sustainable Energy, Environment, and Economy, University of Calgary, stated that decision theory provides a structured approach for gauging the influence of individual perspectives, including a scientific rationale for incorporating stakeholder input in benefit–risk considerations.

FIGURE 4-1 Example of inconsistent use of verbal probability terms from two standards produced by the same government department.

KEY: E = Extremely High; H = High; L = Low; M = Medium.

SOURCE: Adapted from Mandel, 2007.

__________________

4 This subsection is based on the presentation by Joseph Arvai, Professor and Svare Chair in Applied Decision Research, Department of Geography, Institute for Sustainable Energy, Environment, and Economy, University of Calgary.

Decision research often works with preferences, which represent an individual’s attitude toward a set of alternatives. Preferences are not static beliefs waiting to be uncovered, as an archaeologist uncovers an artifact. Instead, Arvai said, preferences are constructed at three key points during the decision process: (1) when the decision is identified as complex or novel; (2) when translation between data and values is necessary to make the decision; and (3) when trade-offs must be made between alternatives and objectives.

When these trade-offs between alternatives and objectives will affect many stakeholders, eliciting their input is effective in helping individuals understand the choices available to them; this enhanced understanding might in turn shape the preferences of decision makers.

Arvai cited his work on point-of-use water treatment options in East Africa as having similar traits to a doctor–patient discussion about treatment options. The community’s question about their water supply was, “What treatment for our water supply will work best both in terms of keeping us healthy and aligning with our cultural values?” To answer this question, Arvai’s team compiled information about the sources of water that people were using and the treatment options that were available. They then met with the relevant stakeholders to present the options and to discuss which ones might best serve their needs.

After some hands-on experience with each of the treatments, the members of the community scored them according to how well each satisfied their objectives. The results showed that the most desirable treatment for the villagers was not the one being distributed by the aid agencies.

The same type of process, Arvai said, could be used in the context of treatment choices between a doctor and a patient, or between a regulator and a drug maker. A stakeholder elicitation process could produce a list of objectives and performance measures for treatments, ranked against the range of alternatives available. This would allow participants to test-run different scenarios and decide which alternative best meets their objectives.

Such approaches are not new. Arvai cited publications (see Appendix C for additional resources) that explicate several methods by which structured, deliberative processes can combine stakeholder input with analysis. Although structured decision making does not always deal explicitly with uncertainty, Arvai noted that in addition to sensitivity analysis, composite indexes have been developed to assess uncertainty across a suite of attributes. In this process, he said, “tolerance for uncertainty” can be treated as its own objective and included in the assessment of alternatives.
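One common way to structure such a comparison is a weighted additive score over a consequence table of alternatives × objectives. The sketch below is hypothetical throughout: the alternatives, objectives, scores, and weights are invented. Weighted-additive scoring is a generic multi-attribute method, not the specific procedure Arvai described. The sketch includes “uncertainty tolerance” as its own objective. It also “test-runs” two weighting scenarios to see whether the preferred alternative changes.

```python
# Hypothetical consequence table: participants' 0-10 scores for each
# alternative on each objective. Higher is always better, so a cheaper
# option receives a higher "cost" score.
SCORES = {
    "Treatment A": {"health": 8, "cost": 4, "cultural_fit": 5, "uncertainty_tolerance": 7},
    "Treatment B": {"health": 7, "cost": 7, "cultural_fit": 8, "uncertainty_tolerance": 6},
    "Treatment C": {"health": 9, "cost": 3, "cultural_fit": 3, "uncertainty_tolerance": 4},
}


def rank(scores, weights):
    """Rank alternatives by weighted-average score, best first."""
    total_weight = sum(weights.values())
    totals = {
        alt: sum(weights[obj] * s[obj] for obj in weights) / total_weight
        for alt, s in scores.items()
    }
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Two weighting scenarios to "test-run" (relative weights, also hypothetical).
health_first = {"health": 7, "cost": 1, "cultural_fit": 1, "uncertainty_tolerance": 1}
equal = {"health": 1, "cost": 1, "cultural_fit": 1, "uncertainty_tolerance": 1}

for name, w in (("health first", health_first), ("equal weights", equal)):
    print(name, rank(SCORES, w))
```

With the health-focused weights, Treatment C ranks first (7.3, versus 7.2 for A). With equal weights, Treatment B ranks first (7.0). When the ranking flips under plausible weights, that is a cue to discuss the trade-off explicitly rather than report a single winner.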

Eliciting Values for Risk Management⁵

Timothy McDaniels, Professor, Faculty of Science, Institute of Resources and Environment, University of British Columbia, stated that eliciting values for risk management choices is a process of applying structured common sense to complex problems. This process draws on the concept of decision making under “deep uncertainty,” a condition that exists when the parties to a decision do not know, or do not agree on, the system models that relate actions to consequences. The concept of decision making under deep uncertainty about the future provides one basis and rationale for statistical decision theory.

Key to eliciting values for risk management is the consideration of alternatives, said McDaniels. Well-managed regulatory approval decisions consider the available alternatives to the proposed drug and the consequences of not approving it. The central valuation question that drives a risk management process, said McDaniels, is this:

Given the estimated impacts of the alternatives on these objectives, is it worthwhile for society to accept the trade-offs in going from “do not approve” to “approve” for one of the alternatives?

McDaniels offered several concepts about the importance of including alternatives in risk decisions:

- The acceptable level of risk for a given decision should be a function of the available alternatives, not a single scientific threshold. Although thresholds can simplify, they also mask trade-offs or disregard them altogether.

- Managing a decision process in order to improve alternatives, or to create alternatives that are less undesirable, is one approach to achieving better risk management outcomes.

- When faced with deep uncertainties, learning over time and flexibility to adapt to different contexts are key components of the process that could promote the consideration of robust and resilient alternatives over a wide range of uncertainties.

- Decisions must be made before all uncertainties are resolved; therefore, “surprises” are a potential part of any risk management process.
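McDaniels made two related points: alternatives, including “do not approve,” should be compared directly, and choices should hold up across a wide range of uncertainties. A robustness criterion can make these points concrete. The sketch below uses minimax regret, a standard criterion from statistical decision theory for choosing when probabilities cannot be agreed on. It is offered as one illustration, not as a method McDaniels presented. The alternatives, scenarios, and net-benefit scores are hypothetical.

```python
# Hypothetical net-benefit scores (higher is better) for each regulatory
# alternative under each scenario; no probabilities are assigned to scenarios.
PAYOFFS = {
    "do not approve": {"works": 0, "modest benefit": 0, "serious harm": 0},
    "approve": {"works": 10, "modest benefit": 3, "serious harm": -8},
    "approve with restrictions": {"works": 7, "modest benefit": 2, "serious harm": -2},
}


def minimax_regret(payoffs):
    """Choose the alternative whose worst-case regret across scenarios is smallest.

    Regret in a scenario = best payoff achievable in that scenario minus the
    payoff of the alternative actually chosen.
    """
    scenarios = list(next(iter(payoffs.values())))
    best = {s: max(p[s] for p in payoffs.values()) for s in scenarios}
    worst_regret = {
        alt: max(best[s] - p[s] for s in scenarios) for alt, p in payoffs.items()
    }
    return min(worst_regret, key=worst_regret.get), worst_regret


choice, regrets = minimax_regret(PAYOFFS)
print(choice, regrets)
```

In this made-up example, “approve” does best if the drug works but carries a large regret if serious harm emerges, while “do not approve” forgoes the benefit. The restricted option has the smallest worst-case regret (3, versus 8 and 10). Adding a conditional alternative of this kind is one way of improving the set of alternatives.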

He discussed several components of the valuation process for managing risk, using the Tysabri case study to note those that he found FDA had already adopted successfully. The ideal process, he said, is a legitimate, transparent management process that supports making informed choices among alternatives within an insightful, well-structured framework. This process includes both technical (scientific) information and value-based information (preferences) to clarify and examine trade-offs, which should be addressed explicitly and distinctly. In this regard, said McDaniels, FDA is in an enviable position relative to many risk governance bodies: it has clear authority, domain expertise, respect, abundant data, flexibility, and the capacity to monitor.

__________________

5 This subsection is based on the presentation by Timothy McDaniels, Professor, Faculty of Science, Institute of Resources and Environment, University of British Columbia.

Also, values are context dependent. Eliciting values for risk choices can therefore be most effective when it is focused on a specific regulatory decision process, as FDA’s benefit–risk framework is. In addition, noted McDaniels, FDA makes good use of its advisory committee structure as a forum for combining analysis with reflection on values.

FDA APPROACHES TO DECISION MAKING

In addition to McDaniels’ previous comments on FDA’s decision processes, individual workshop participants also noted a number of FDA attributes and processes that currently incorporate, or could be enhanced to incorporate, the methods and approaches of decision science for making decisions under uncertainty.

FDA Authority and Processes

McDaniels commented that FDA has clear authority conferred on it by statute. Its transparent processes allow for an environment in which informed choices can be made among alternatives within a structured framework. He further noted that FDA has adopted an approach for eliciting stakeholder values through the consultative process the agency is employing in developing its benefit–risk framework. Several workshop participants, including Lisa LaVange, Director, Office of Biostatistics, Office of Translational Sciences, CDER, FDA, noted that FDA has established processes and mechanisms for engaging experts in regulatory decision making, most notably through the convening of advisory committees. McDaniels noted that the advisory panel structure could potentially be further enhanced through more structured, formal attention to the stakeholder values elicited through that process.

Bayesian Statistical Methods

Formal Bayesian methods have not generally been adopted by FDA for the evaluation of pharmaceuticals. According to LaVange, however, several possible applications of Bayesian methods merit consideration:

- safety studies, where evidence accumulates over time;
- non-inferiority trials, because they call for the incorporation of historical data on one or more comparator drugs; and
- antibiotics development, in part because the mechanism of action is more evident: “I can look at a dish of bugs and see if a drug kills them.”
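LaVange’s first example, safety evidence that accumulates over time, fits a simple conjugate model. The sketch below assumes that each patient either does or does not experience a given adverse event. It places a beta prior on the event rate and updates it batch by batch. The class name, the prior, and the batch data are hypothetical. Each posterior becomes the prior for the next batch, and the final result is the same as updating once on the pooled data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaBelief:
    """Beta(alpha, beta) belief about the rate of one adverse event."""
    alpha: float
    beta: float

    def update(self, events: int, patients: int) -> "BetaBelief":
        # Conjugate update: each event adds to alpha, each non-event to beta.
        if not 0 <= events <= patients:
            raise ValueError("events must be between 0 and the number of patients")
        return BetaBelief(self.alpha + events, self.beta + (patients - events))

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)


# Hypothetical prior centered on a 2% rate (worth about 50 patients of data),
# then three batches of (events, patients) as evidence accumulates.
belief = BetaBelief(alpha=1.0, beta=49.0)
for events, patients in [(3, 100), (5, 150), (2, 250)]:
    belief = belief.update(events, patients)
    print(f"after {patients} more patients: mean rate = {belief.mean:.4f}")
```

After the three hypothetical batches the belief is Beta(11, 539), with a mean event rate of 2 percent. A tail probability, such as the chance that the rate exceeds a safety threshold, could be read from the same distribution (for example, with SciPy’s `scipy.stats.beta.sf`).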

Risk Evaluation and Mitigation Strategy Mechanism

Several workshop participants, including Theresa Mullin, FDA, noted that the Food and Drug Administration Amendments Act of 2007 gave FDA the authority to require a Risk Evaluation and Mitigation Strategy (REMS). FDA can impose this requirement in connection with approval of a marketing application, or later if new safety information emerges. FDA might require a REMS if it determines that such action is necessary to ensure that the benefits of a drug or biological product outweigh its risks. As outlined in FDA’s Draft REMS Guidance for Industry (FDA, 2009a), a REMS could include any of the following elements, as required by FDA:

- a special medication guide or patient package insert;
- a communication plan targeted to health care providers; and
- elements to ensure safe use, including patient registration, physician training, certification, or other monitoring.

McDaniels commented on the value of this structure. To the extent that it provides more approval alternatives (beyond unconditional approval or disapproval) and includes the ability to learn over time from monitoring, he said, such a system constitutes a valuable tool for applying risk management choices in a structured format.

Proposed New Regulatory Pathways

Individual workshop participants raised questions about new regulatory approval pathways that could address certain aspects of uncertainty in the drug review process. For example, Special Medical Use (SMU) is a proposed limited-use approval pathway for drugs developed in an expedited manner to meet unmet medical needs in a clearly defined subpopulation. One workshop participant noted that the pathway was proposed in part to take into account that certain severely affected subpopulations with few treatment options might be willing to accept greater uncertainty and greater risk. The SMU regulatory mechanism would limit use of products approved under that pathway to specified populations while requiring additional evidence development and safety surveillance in the postmarket setting prior to the product receiving an unrestricted approval for use in broader populations.

Charles F. Manski, Board of Trustees Professor in Economics, Northwestern University, discussed the role of identification problems in evaluating uncertainty for drug reviews, and the potential for “adaptive approval” licensing approaches to mitigate them. In econometrics, the quality of inferences drawn from empirical evidence is commonly examined in terms of two components: identification and statistics.

According to Manski, an identification problem arises when the evidence, however precisely measured, wrongly identifies the relationship between a treatment and a health outcome. Many issues with trials could lead to identification problems, such as statistical design, research participant adherence and retention, and extrapolating outcomes from surrogates. Identification problems are the dominant source of error in drug approval, he said, and they would persist even if statistical imprecision were eliminated.

Manski indicated that all drug approvals are made with limited data; while unavoidable, this makes regulatory decisions susceptible to two types of errors. Type I, the “false positive” error that occurs when approved drugs are ineffective or unsafe, might eventually be corrected through additional research (assuming the drug gets to market). However, Manski noted, Type II, the “false negative” error of a worthy drug failing approval, could be permanent if the drug is pulled from development and no further data are produced.

Manski suggested broadening the set of approval options beyond yes or no by empowering FDA to institute what he termed adaptive partial approval. This is similar in concept to “adaptive licensing” proposals made by others in the field (Eichler et al., 2012). The adaptive mechanism suggested by Manski could allow for earlier approval of a broader class of products than those contemplated in the SMU proposal, he said, coupled with limited use and further evidence-gathering requirements. Limited-term and limited-quantity sales licenses could be granted while Phase III trials are under way. Phase III trials could also run longer than they typically do at present, enabling measurement of real, rather than surrogate, outcomes. He noted that in this way FDA could make decisions based on outcomes data.

A related idea, Manski added, would be to design response-adaptive trials. These trials enroll participants sequentially, as in a traditional RCT, but allocate each new participant to a treatment based on the health outcomes observed in earlier participants. The objective is to “use the observed response data to adapt the allocation probabilities, so that more patients will hopefully receive the better treatment” (Tamura et al., 1994, p. 768). Manski acknowledged that adaptive partial approval would require a systemic change in drug regulation, raising many issues, including the impact on innovation. Workshop participants also noted that the availability of the product on the market, even on a limited basis, could undermine retention of participants in ongoing clinical trials of that product.
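The allocation idea in the quotation can be illustrated with the randomized play-the-winner rule, a classic urn-based response-adaptive design. The Python simulation below is a sketch under simplifying assumptions: two arms, a binary response observed before the next patient is assigned, and hypothetical success probabilities. It is not offered as the design used in Tamura et al. (1994) or as part of Manski’s proposal.

```python
import random


def randomized_play_the_winner(n_patients, p_success, initial=1, seed=0):
    """Simulate a two-arm randomized play-the-winner urn.

    The urn starts with `initial` balls per arm. Each patient is assigned by
    drawing a ball with replacement. A success on an arm adds a ball for that
    arm; a failure adds a ball for the other arm. Assumes each response is
    known before the next patient is assigned.
    """
    rng = random.Random(seed)
    urn = {"A": initial, "B": initial}
    assigned = {"A": 0, "B": 0}
    for _ in range(n_patients):
        arm = "A" if rng.random() < urn["A"] / (urn["A"] + urn["B"]) else "B"
        assigned[arm] += 1
        success = rng.random() < p_success[arm]  # simulated binary response
        other = "B" if arm == "A" else "A"
        urn[arm if success else other] += 1
    return assigned, urn


# Hypothetical success probabilities: arm A is much better than arm B.
assigned, urn = randomized_play_the_winner(200, {"A": 0.9, "B": 0.1}, seed=1)
print(assigned, urn)
```

With success probabilities of 0.9 and 0.1, each ball added to the urn favors arm A with probability 0.9, whether it comes from a success on A or a failure on B. As a result, the share of patients assigned to A climbs well above one-half as the trial proceeds.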
