4

Cybersecurity at Civilian Nuclear Facilities

Key Issues

The key issues noted here are some of those raised by individual workshop participants, and do not in any way indicate consensus of workshop participants overall.

• Cybersecurity refers to the prevention, detection, and mitigation of unauthorized attempts to control or disable computers and electronic control systems, as well as the protection of information in computer databases.

• Cybersecurity for a nuclear facility can be divided into two parts: instrument and control security (ICS), and facility network security (FNS). There are several differences between these parts of security, including different methodologies, mechanisms, and the effect of failure in each domain.

• Cybersecurity is commonly understood to have three attributes: confidentiality, availability, and integrity.

• Security risks cannot be reduced to zero. Managing ICS requires a systematic, comprehensive, and dynamic methodology.

• Every day, new viruses, new vulnerabilities, and new problems are found in these systems.

Promising Topics for Collaboration Arising from the Presentations and Discussions

These promising topics for collaboration do not represent the consensus of the participants; rather, they are a selection of topics offered by individual participants throughout the presentations and discussions.

• The people involved in the operation of the plant must be sufficiently sensitive to the various aspects of cyber attacks.

• Traditional computer security methods, such as periodic updates and antivirus software, do not work in ICS environments; for example, one cannot run intrusion detection programs because of the very limited processing power. One has to build a weatherproof, robust, and hardened system. This is difficult to do and is a major area of concern. Solutions exist, but much remains to be done in this area.

• The unknown and unused features of commercial off-the-shelf products can lead to significant vulnerabilities. More work should be done on this.

An Indian Perspective on Cybersecurity

R.M. Suresh Babu began by indicating that he would speak about cybersecurity in nuclear facilities in general, which is a broader area than cybersecurity in civilian nuclear facilities specifically. Indian facilities have a large number of computers distributed across the plants, which perform functions ranging from reactor safety protection to control functions to information collection and display. When these computer systems are attacked or hacked by malicious elements, at a minimum certain plant functions are affected to some extent, and at worst such attacks can lead to serious accident conditions. Cybersecurity addresses how to tackle such problems and how to protect computer systems against malicious attacks by external elements.

Cybersecurity for a nuclear facility can be divided into two parts: instrument and control security (ICS), and facility network security (FNS). There are several differences between these parts of security, including different methodologies, mechanisms, and the effect of failure in each domain. For example, ICS secures safety and control systems such as the reactor protection system, reactor trip system, and power regulation system, while FNS secures the monitoring network, which mainly has administrative and management functions. ICS is applied from the initial stages of computer-system and control-system development and continues through the design, development, and operation phases, whereas FNS is most commonly applied during the operation phase of the plant or facility.

Cybersecurity is commonly understood to have three attributes: confidentiality, availability, and integrity. The impact of a cybersecurity failure or security breach can range from mild to severe, and can be catastrophic if the safety system is affected by a malicious attack. An FNS security failure can have mild to severe effects, such as data being lost or transmitted to external persons. With ICS, the most important attribute is integrity: if the security function is compromised, a serious situation will result. Integrity of the computer system and the software system is the most important aspect of cybersecurity, and the availability of safety functions is the next priority.

In the case of FNS, the attributes in priority order are confidentiality (protection of data), availability of data, and integrity. There is some overlap between ICS and FNS. ICS may use some of the functions implemented in FNS to implement certain security controls, but on the whole ICS has to be self-reliant and cannot depend on FNS functions or mechanisms.

Following this overview, Babu went into more detail about ICS. ICS protects information and communication systems against unauthorized modification of their resources or disruption of their services. Modification of resources means alteration of software such that safety functions cannot execute at the required rate or within the required response time. This differs from physical security, which deals with the protection of installations, equipment, buildings, and other physical materials.

Security risks cannot be reduced to zero. Managing ICS requires a systematic, comprehensive, and dynamic methodology. A systematic methodology, Babu said, builds security features into the system design and development processes. He noted that there have been many times when he has detected security-related problems after deploying a system or software, requiring patches. This is not a good approach, he said, because patches are never a complete solution and a lingering problem may remain in the system. Therefore, all security features need to be built in from the very beginning of system design. Also, during the system development process, system verification should address security vulnerabilities, including insider threats; scenarios such as an attempt to modify software during the development stages should be considered.

Second, Babu said that the methodology should be comprehensive, which means it should cover all aspects of the system and its operating environment. For example, a system that is left to operate unattended will require a completely different kind of approach compared to a system that is well protected within a restricted area. This should be considered at the time of system design: What devices are used? What is the connectivity with the external world? Failure to consider these issues during the design phase allows potential attackers to exploit the system.

The third aspect of managing ICS is that it should be dynamic. It goes without saying that the system must be updated as new vulnerabilities appear. The computer operating system, as well as the software and hardware must be made resistant to new vulnerabilities.

There are three components of ICS:

• Security Control: manages the design, development, and operation of the system and consists of a well-laid out security plan as well as policies and procedures.

• Defense-in-Depth: physical security and cybersecurity must work together to protect the core safety functions against attack, so that a single point of failure will not lead to a complete compromise of the most critical system.

• Security Lifecycle: similar to the system lifecycle, it consists of several phases of development with verification between phases; the emphasis is on security, and it includes configuration management (that is, how software and hardware are changed during operation and maintenance).

Babu described a recent incident in Mumbai that occurred while a security update was taking place. When the attack was launched, communications with the test server were brought down at the same time that security levels had been lowered. It is therefore very important that, when any change is made to the system, all consequences of the change are examined, along with the precautions that should be taken while the system is not available. Updates to controls and design should be based on experience; this is another aspect of the security lifecycle.

Security controls should be implemented after conducting a vulnerability analysis and an impact analysis of the system software. Vulnerability analysis helps identify vulnerabilities of the system and its operating environment, and impact analysis determines what would happen in the event of a security breach in terms of impact on the reactor, the facility, or the safety of the plant. Based on the vulnerability analysis, one builds security controls and determines what other controls should be put in place. For example, if the system has a USB or serial port, one has to ensure that it cannot be exploited by an outsider; security controls have to be put in place either to disable such ports or to make sure that they cannot be used in a compromising manner. Similarly, one must determine the impact of changes to the system that can be made by one person. For example, if an operator can change certain aspects of the system that affect only the displayed information, single-factor authentication may be sufficient. This is a common issue, and this is a common means of addressing it in India. However, if an operator is allowed to change a safety set point, then there should probably be two or three authentication factors. Countermeasures, therefore, are selected based on the impact of security failures. These controls also fall under ICS security control, and they are fundamentally different from those for information systems, primarily because these systems are deployed where periodic updates and other functions normally performed on a computer system are not possible.
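The impact-based choice of authentication strength described above can be sketched as a lookup from the impact of a change to the minimum number of independent factors. This is a minimal illustration, not a description of any Indian system: the impact categories, the factor names, and the choice of two factors (rather than three) for safety set points are assumptions.

```python
from enum import Enum


class Impact(Enum):
    """Hypothetical impact categories for an operator-initiated change."""
    DISPLAY_ONLY = "display_only"        # affects only displayed information
    SAFETY_SETPOINT = "safety_setpoint"  # changes a safety set point


# Assumed policy: one factor for display-only changes; at least two
# (the talk suggests two or three) for safety set point changes.
REQUIRED_FACTORS = {
    Impact.DISPLAY_ONLY: 1,
    Impact.SAFETY_SETPOINT: 2,
}


def change_allowed(impact: Impact, verified_factors: set[str]) -> bool:
    """Permit a change only if enough distinct factors were verified.

    verified_factors holds the names of factor types that succeeded,
    e.g. {"password", "hardware_token"}; a set counts each type once.
    """
    return len(verified_factors) >= REQUIRED_FACTORS[impact]


if __name__ == "__main__":
    print(change_allowed(Impact.DISPLAY_ONLY, {"password"}))     # True
    print(change_allowed(Impact.SAFETY_SETPOINT, {"password"}))  # False
```

Keeping the policy in a table rather than in branching code makes the impact-to-strength mapping easy to review as part of the security plan.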

Finally, the security controls have to be formally defined in a security plan document. This is absolutely necessary, Babu said, because only a plan document reviewed by all parties involved can ensure that all appropriate controls are put into place, including management, operational, and technical controls (security assessment and certification, training, physical protection, access control, audits, authentication, etc.). Babu underscored training because cybersecurity is an area where sensitivity among personnel is not sufficiently developed. “We have to ensure that the people involved in the operation of the plant are sufficiently sensitive to the aspects of cyber attacks. Unless that is done, it is still possible that someone could create a nuisance.”

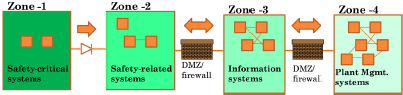

The second component of cybersecurity is what is called defense-in-depth (see Figure 4-1). In systems that use the defense-in-depth approach, the ancillary systems in the plant are divided into various zones. The innermost zone contains very few systems, those most critical to safety and security. The outermost zone contains a large number of systems with a great deal of interconnection among them, for systems management and for collecting information that allows management to understand how the reactor is operating, among other things.

Between the zones, barriers are created, and these barriers become more stringent moving from outer to inner zones. The information flow is also controlled more strictly moving from outside to inside. As shown in Figure 4-1, the critical safety system will most likely have very few connections with outside systems, and where connections exist they are one-way only; two-way communication is ruled out by technical means. In effect, demilitarized zones are created in which the two systems do not interact directly but through an agent, which can prevent attempted intrusions from one zone into the other. Firewalls are created to prevent certain data from flowing from one zone to another. This is a typical example of defense-in-depth: for a safety function to be compromised, multiple barriers must fail.

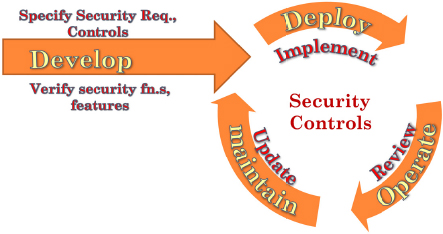

The security lifecycle is similar to a system lifecycle (see Figure 4-2). Security requirements and controls are specified and developed in conjunction with the verification and validation processes. These are executed rigorously to ensure that all security controls and features are correctly implemented, because one has to consider the possibility of an insider attack.

If certain functions are not implemented correctly, they can become a point from which an attack into the system could be launched, compromising the system. Therefore, there is a phase where verification and validation have to be done rigorously to ensure that the software system that goes into the plant has been verified at the time of deployment when implementing security controls.
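One concrete form of this deployment-time verification, sketched here under the assumption that the verification and validation team records a SHA-256 digest for each approved software module, is to compare what is about to be loaded against that approved baseline. The module names and contents are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the hex SHA-256 digest of a software image."""
    return hashlib.sha256(data).hexdigest()


def audit_release(images: dict[str, bytes], baseline: dict[str, str]) -> list[str]:
    """Compare candidate images with the digests approved at V&V sign-off.

    Returns a list of findings; an empty list means every approved module
    is present and unmodified, and nothing unapproved is included.
    """
    findings = []
    for name, approved in baseline.items():
        if name not in images:
            findings.append(f"missing: {name}")
        # compare_digest performs a constant-time comparison
        elif not hmac.compare_digest(sha256_hex(images[name]), approved):
            findings.append(f"modified: {name}")
    for name in sorted(images.keys() - baseline.keys()):
        findings.append(f"unexpected: {name}")
    return findings


if __name__ == "__main__":
    baseline = {"trip_logic": sha256_hex(b"trip logic v1.0")}
    print(audit_release({"trip_logic": b"trip logic v1.0"}, baseline))  # []
```

The baseline itself must be protected under configuration management; an insider who can alter both the image and its recorded digest would defeat this check.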

FIGURE 4-1 Defense in Depth for Cybersecurity. SOURCE: Babu, 2012.

FIGURE 4-2 The Security Lifecycle. SOURCE: Babu, 2012.

The security controls could be different at different points during the development and operation phases. During the maintenance phase, the system is removed from its specific operating environment. Once the security controls are deployed and implemented, and throughout operation and maintenance, they are reviewed and, if necessary, updated.

One fundamental difference is that the security lifecycle is proactive, whereas a normal system or software lifecycle is reactive: changes are normally made to the software or system when bugs are detected or when the user requires improvements. With the security lifecycle, by contrast, the entire situation is reviewed periodically and, if required, designs or controls are changed to address new vulnerabilities. This is necessary because the entire scenario is dynamic. Every day, new viruses, new vulnerabilities, and new problems are found in these systems.

One of the main issues with ICS is that they are embedded systems with very limited processing power and memory and little or no connectivity to the external world. There may be no human administration interface to these systems. As a result, traditional computer security methods, such as periodic updates and antivirus software, do not work in these environments. For example, one cannot run intrusion detection programs because of the very limited processing power. Once deployed, the system is entirely dependent on itself; it has to protect itself on its own. Therefore, one has to build a weatherproof, robust, and hardened system. This is difficult to do and is a major area of concern. Solutions exist, but much remains to be done in this area. Babu noted that another issue is that about 20 years ago, they deployed custom computer systems developed in-house. In the last 10 to 20 years, they have moved toward more open systems and commercial off-the-shelf (COTS) components, such as operating systems or hardware available on the market.

The open-system concept and COTS are a double-edged sword. They reduce development time and the difficulties of integration with external systems, and a large knowledge base and workforce are available. But this can also be used by an attacker to cut down or pierce through the system. From a security point of view, there are two problems with COTS systems. The first is unknown features: COTS products may have unknown bugs at the time of system design or deployment, and an attacker may already know a particular bug and use it to gain entry into the system. There may be limitations that are not known when the COTS hardware or software is deployed, and it may have hidden functions, whether deliberate or accidental. This creates difficulties for the use of COTS in critical applications.

A second problem is that of unused features. If you buy COTS hardware, for example a CPU, it comes with CD-ROM drives, USB ports, and a large protocol stack with intercommunication software. One may not use all of these features in a particular system, but they are points of vulnerability through which an attacker can gain entry. Therefore, the problems with COTS are unknown features and unused features, and there has to be sufficient protection against both before the system is used in a critical application. These are the two important issues on which further work has to be done in the area of ICS.
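Guarding against unused features starts with knowing which ones are enabled. A minimal audit, assuming an inventory of interfaces (the names below are hypothetical) and a list of those the application actually needs, reports everything enabled but unneeded as a candidate for disabling. It does nothing about unknown features, which by definition cannot be listed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interface:
    name: str      # e.g. "usb0", "cdrom", "serial1", "eth0" (illustrative)
    enabled: bool


def unused_enabled(inventory: list[Interface], required: set[str]) -> list[str]:
    """Names of interfaces that are enabled but not required, sorted."""
    return sorted(i.name for i in inventory if i.enabled and i.name not in required)


inventory = [
    Interface("eth0", True),
    Interface("usb0", True),
    Interface("cdrom", True),
    Interface("serial1", False),
]
print(unused_enabled(inventory, required={"eth0"}))  # ['cdrom', 'usb0']
```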

The second aspect of cybersecurity is FNS. The main function of FNS is to provide a secure environment in which systems exchange information. There are a large number of monitoring systems: data from the plant is collected and accumulated for someone to analyze. The FNS must create a secure environment for these systems to interact, which requires continuous monitoring of network activities.

What are the basic requirements for FNS? The FNS must ensure that communication takes place only between trusted entities; if anyone tries to connect a new computer to the system, the FNS should detect it and prevent data from flowing into the connected internal system. The communication channels should be secured with encryption or other methods to make sure that no one spoofs the system and no one takes data out of the network for malicious use. It should enforce established security policies. FNS should be able to detect and isolate malicious programs, devices, and computers on the network. These are the basic requirements of FNS.
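The anti-spoofing requirement can be illustrated with message authentication between trusted entities. This sketch uses an HMAC-SHA256 tag over each message plus a sequence number to reject replays; it assumes a pre-shared key and hypothetical message fields, and it provides integrity and authenticity only, not the confidentiality that encryption would add.

```python
import hashlib
import hmac
import json


def _tag(payload: dict, key: bytes) -> str:
    # Canonical JSON (sorted keys) so sender and receiver hash identical bytes
    data = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def sign(payload: dict, key: bytes) -> dict:
    """Wrap a payload (which must carry an integer 'seq') with its tag."""
    return {"payload": payload, "tag": _tag(payload, key)}


def verify(envelope: dict, key: bytes, last_seq: int) -> bool:
    """Accept only authentic messages newer than the last one accepted."""
    if not hmac.compare_digest(_tag(envelope["payload"], key), envelope["tag"]):
        return False  # forged or tampered
    return envelope["payload"]["seq"] > last_seq  # reject replays
```

A receiver would keep `last_seq` per sender and update it after each accepted message; key distribution and storage are outside this sketch.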

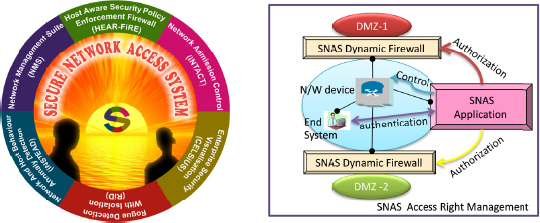

The Secure Network Access System (SNAS), developed at Bhabha Atomic Research Centre, is shown in Figure 4-3. It has several modules, one of which is network admission control, which detects, identifies, and authenticates end-systems and end-networks. Unless a system is supposed to be on the network, SNAS will not allow it to be integrated into the network.
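The admission decision can be sketched as a check against a registry of devices that are supposed to be on the network. SNAS's actual mechanism is not described in the talk; this sketch assumes a registry keyed by MAC address plus a separate authentication result (for example, from a certificate check), because a MAC address alone is easily spoofed. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    mac: str
    hostname: str
    authenticated: bool  # outcome of a credential check performed elsewhere


def admit(endpoint: Endpoint, registry: dict[str, str]) -> bool:
    """Admit only registered, correctly identified, authenticated devices.

    registry maps lower-case MAC addresses to the expected hostname.
    """
    expected = registry.get(endpoint.mac.lower())
    return (
        expected is not None
        and expected == endpoint.hostname
        and endpoint.authenticated
    )
```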

SNAS does not allow an unauthorized system to communicate with other entities on the network, and it enforces policy compliance. For example, if a system is not compliant with the established policies, SNAS isolates that system.

FIGURE 4-3 The Secure Network Access System. SOURCE: Babu, 2012.

The other module is network behavior and anomaly detection. It continuously monitors the network and tries to detect malicious behavior in network traffic. If there is an unusual increase in network traffic from a particular node, if certain applications are being spawned by a particular system, or if there is a denial of service, it looks for signatures and behaviors and tries to isolate those systems.

This is basically for administrators, because it provides a network visualization capability with which one can determine whether all systems are connected to the network and what the state of security is at any given time. The system also lets administrators know whether systems are behaving properly and, if not, quickly isolate them physically. Visualization is another module that helps implement the FNS requirements.
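A very simple form of the behavior-based detection described above is a per-node traffic baseline. The sketch below flags a node whose traffic in an interval exceeds its recent mean by more than k standard deviations. The window size, k, and the floor on the deviation are illustrative assumptions, and this is not the SNAS detection algorithm, which the talk does not describe.

```python
from collections import defaultdict, deque
from statistics import mean, pstdev


class TrafficMonitor:
    """Per-node baseline of traffic volume (e.g. bytes per interval)."""

    def __init__(self, window: int = 10, k: float = 3.0, min_history: int = 5):
        self.history = defaultdict(lambda: deque(maxlen=window))
        self.k = k
        self.min_history = min_history

    def observe(self, node: str, volume: float) -> bool:
        """Record one measurement; return True if it looks anomalous."""
        past = self.history[node]
        anomalous = False
        if len(past) >= self.min_history:
            mu, sigma = mean(past), pstdev(past)
            # Floor on sigma so a very steady node is not flagged for tiny jitter
            threshold = mu + self.k * max(sigma, 0.05 * mu, 1.0)
            anomalous = volume > threshold
        if not anomalous:
            past.append(volume)  # learn only from normal-looking traffic
        return anomalous
```

Not learning from flagged samples keeps a sustained attack from gradually raising the node's own baseline.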

Finally, another very interesting and important aspect of this system is the firewalls that create barriers between zones. SNAS dynamically changes the firewall rules depending on the end-system security state. As long as a system behaves properly, the firewall allows it to communicate with other systems. The moment SNAS finds that the security state has changed to an undesirable or incorrect state, it changes the firewall rules and isolates the system from that zone.
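The coupling between end-system security state and firewall rules can be sketched as follows. This assumes a default-deny zone firewall in which only hosts currently reported as compliant may communicate; the states, host names, and single allow-set per zone are simplifications for illustration, not SNAS internals.

```python
from enum import Enum


class State(Enum):
    COMPLIANT = "compliant"
    NONCOMPLIANT = "noncompliant"  # undesirable or incorrect security state


class ZoneFirewall:
    """Default-deny firewall for one zone, updated from security-state reports."""

    def __init__(self) -> None:
        self.allowed: set[str] = set()

    def report(self, host: str, state: State) -> None:
        if state is State.COMPLIANT:
            self.allowed.add(host)
        else:
            self.allowed.discard(host)  # isolate the host from the zone

    def permits(self, src: str, dst: str) -> bool:
        # Traffic flows only between hosts that are both currently compliant
        return src in self.allowed and dst in self.allowed
```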

On the SNAS console, the intranet is visualized on the screen, and one can drill down into each part of it to determine what other devices are connected to a particular system. The console also dynamically displays a different icon for any system whose security status changes, allowing the administrator to identify rogue nodes.

Babu concluded by stating that he had attempted to demonstrate the important aspects of cybersecurity in a nuclear facility. He also reiterated the three components of ICS and the two main issues on which work is needed going forward to tackle and reduce the security risks to nuclear facilities.

A U.S. Perspective on Cybersecurity

Clifford Glantz began his presentation by focusing on the cybersecurity program that the U.S. Nuclear Regulatory Commission (NRC), which is responsible for the security of civilian nuclear materials throughout their lifecycle, conducts for all its licensees. Pacific Northwest National Laboratory (PNNL) began aiding the NRC in its cybersecurity work by conducting cybersecurity inspections, visiting four nuclear power plants, and devising preliminary guidance that the nuclear power industry adapted for conducting its own cybersecurity self-assessments and initiating its own cybersecurity programs. That allowed time for the NRC to go through the long rule-making and regulatory processes. Glantz and his team were involved in providing technical guidance to the NRC for the development of the cybersecurity rule and regulatory guidance. Now the NRC is training its inspectors to inspect the plants to ensure that they comply with the appropriate rules and regulations.

The NRC rule is only two pages long and requires the power plants to implement security controls to protect their assets from cyber attacks, that is, to protect nuclear material and all the systems responsible for providing safety, security, and emergency preparedness functions at the plants.

The plants are responsible for implementing security and have to apply and maintain defense-in-depth protective strategies to ensure the capability to detect, respond to, and recover from cyber attacks.

They have to be able to mitigate the adverse effects of cyber attacks and the plants have to ensure that the functions of protected assets or the security of nuclear materials are not adversely affected as a result of a cyber attack.

The basics of cybersecurity defense are very similar, if not identical, to those of physical security. One must be able to deter an attack. One wants to keep the bad guys from even thinking about attacking because it would take too much time and too many resources to achieve their objective. One must be able to detect an attack in progress so that an appropriate response can be launched. One needs to be able to delay the attackers from achieving their objectives, to allow time to respond. One needs to deny attackers the ability to eliminate the critical functions that need protection and to obtain radiological materials. Deter, detect, delay, deny, and respond are key elements of defense for both physical security and cybersecurity.

In the mitigation realm, one wants to be able to resist attacks, to limit the adverse consequences, and to protect confidentiality, and prevent unavailability and loss of integrity of these critical systems. Likewise, one wants to be able to absorb an attack, to take a punch and, if a failure is inevitable, to fail gracefully so that there is time to respond. Also one needs to have the ability to restore functionality in a timely manner. This is very important for a nuclear power plant.

The NRC’s regulatory guide 5.7.1 covers cybersecurity. It is about 130 pages long. It provides approximately 140 controls, each with their own set of subcontrols, in 18 different areas. They are divided into three

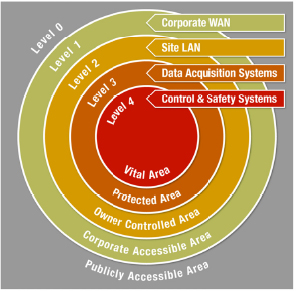

classes; management, operational, and technical. Management involves such things as risk management and system and service acquisition. The operational class covers a whole range of items from developing an effective defensive strategy to defense-in-depth (see Figure 4-4).

Defense-in-depth consists of multiple layers of defense: a vital area for essential safety and security equipment; a protected area for other systems that are important to operations; and an outer owner-controlled area for facilities and assets involved in generating power or supporting other plant functions. There is also a corporate level, because most plants in the United States belong to corporations that may operate multiple plants and therefore have several support systems common among their facilities. The publicly accessible area is on the outside. For cybersecurity, the publicly accessible area would be the internet, the corporate area the intranet, the owner-controlled area the plant network, the protected area the critical plant assets, and the vital area the really essential assets.

Glantz echoed what Babu had stated: communication is limited from outer areas of lower security into inner areas of higher security. From the vital area to the protected area, and from the protected area to the owner-controlled area, the NRC requires one-way communication only. Data can only flow outward; information or instructions cannot flow inward to those systems.
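The one-way rule can be expressed as a check on proposed data flows between the levels just described. This is a sketch only: it encodes just the outward-only requirement for the vital and protected areas, treats flows among the outer levels as governed by other controls (an assumption), and in practice such one-way paths are enforced by technical means in the network rather than by a software lookup.

```python
from enum import IntEnum


class Level(IntEnum):
    """Security levels from outermost (1) to innermost (5)."""
    PUBLIC = 1            # internet
    CORPORATE = 2         # corporate intranet
    OWNER_CONTROLLED = 3  # plant network
    PROTECTED = 4         # critical plant assets
    VITAL = 5             # really essential assets


# Levels at or inside this one accept no data from a less secure level.
ONE_WAY_BOUNDARY = Level.PROTECTED


def flow_permitted(src: Level, dst: Level) -> bool:
    """False for any inward flow into the protected or vital areas."""
    inward = dst > src
    return not (inward and dst >= ONE_WAY_BOUNDARY)
```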

It is critical that cyber and physical security zones match up, Glantz said. The NRC is not very clear on this currently, but soon there will be much more clarity. One concern is that a facility may have a critical asset with excellent digital protection that is located where unbadged, uncleared staff can get at it. It makes no sense at all to have A-1 cybersecurity and level C or D physical security for that particular asset.

FIGURE 4-4 Full Range of Cybersecurity Measures. SOURCE: Glantz, 2012.

The other areas of operational control include awareness and training, configuration management, maintenance, and, very importantly, media protection. Also important are contingency planning, incident response, personnel security, and physical and environmental protection, which involves maintaining the critical infrastructure that the digital devices need to survive. Electricity, heating and cooling, appropriate fire protection and other support systems cannot be overlooked.

Appropriate security awareness and training is essential, as illustrated by what appears to have occurred at the Iranian nuclear facility. Appropriate media protection is also necessary: media from one level should not be moved into another, higher level of security. Personnel security is another issue. When one hears about the insider threat, one often thinks about malicious threats: what is the insider doing with the intention of causing harm? That is very important, but there is also the non-malicious threat posed by insiders. Effective cybersecurity programs must protect against actions by both malicious and non-malicious insiders that can result in adverse consequences.

Finally, the third class of controls is the technical class, including access control, audit and accountability, identification and authentication of users, communication protection, and system hardening.

Glantz also discussed the importance of integrating physical security and cybersecurity. Before someone breaks into a facility, they will most likely conduct some form of cyber attack to disable the physical security system. If one is planning an attack against a fortified facility, what better way to defeat the digital physical security controls than a cyber attack, rather than a purely physical one?

This just illustrates that there are many attack vectors to get to assets that an attacker might want to reach. The attacker wants to maximize the probability of success while minimizing the possibility of getting caught. So they are going to take the path of least resistance that achieves their objectives and it doesn’t matter whether that involves a physical attack, a cyber attack, or a combination of both.

The basic concepts of physical security and cybersecurity are essentially the same. For example, physical security has fences and cybersecurity has firewalls; they fulfill the same function. For physical security, perimeter patrols ensure that the fences are doing their job of keeping adversaries out. For cybersecurity, firewall logs must be monitored to ensure that attempted break-ins are detected and that attackers are not given unlimited time to defeat the defenses. Keys and passwords are analogous, both domains use intrusion detection methods, and both have incident response teams.

The protection systems around nuclear facilities, whether power plants or any facility involved in the fuel lifecycle, include an array of digital controls. In the United States, facilities have various alarms and detection systems operating between fences. There are admission stations into the facility where access is computer controlled, and a database is accessed to ensure that each person entering has appropriate credentials to enter the facility or the particular security zone.

Physical security programs are increasingly using digital devices to improve their productivity and efficiency, but one must be aware that this introduces new vulnerabilities into the system. Physical security systems require cybersecurity to ensure the availability and integrity of their functions. They need to prevent the spoofing of surveillance cameras and other monitoring equipment: cameras should show what is actually going on, not what was going on 30 minutes ago or the previous day. One must also prevent alarms from being digitally silenced, access control databases from being altered, and security communications from being interfered with. Physical security systems need to be protected from both physical and cyber attack, and digital systems require physical protection. Again, they need to be held to the appropriate security level to avoid theft of or physical damage to critical digital systems, unauthorized access to those devices, or disruption of their critical infrastructure.
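The requirement that cameras show current rather than replayed footage can be checked, in part, by rejecting stale or out-of-order frames. This sketch assumes each frame carries a capture timestamp from a synchronized clock and uses illustrative tolerances. Because an attacker able to forge frames could also forge timestamps, a check like this is only meaningful if the timestamp is itself authenticated.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

MAX_AGE = timedelta(seconds=5)   # assumed acceptable latency
MAX_SKEW = timedelta(seconds=1)  # assumed clock-skew allowance


def frame_is_fresh(frame_time: datetime, now: datetime,
                   last_accepted: Optional[datetime]) -> bool:
    """Reject frames that are too old, from the future, or out of order."""
    if frame_time > now + MAX_SKEW:
        return False  # timestamp in the future
    if now - frame_time > MAX_AGE:
        return False  # stale: possibly replayed footage
    if last_accepted is not None and frame_time <= last_accepted:
        return False  # repeated or out-of-order frame
    return True


if __name__ == "__main__":
    now = datetime(2012, 1, 1, 12, 0, tzinfo=timezone.utc)
    print(frame_is_fresh(now - timedelta(seconds=1), now, None))   # True
    print(frame_is_fresh(now - timedelta(minutes=30), now, None))  # False
```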

Many vulnerability assessments examine only the physical domain and are often done by physical security experts. In the cyber domain, cybersecurity experts assess vulnerability, but total security assessments, in which both physical security and cybersecurity are examined where they overlap, are very seldom conducted. There is an important need to integrate physical and cyber vulnerability assessments and to have physical security experts and cybersecurity experts work together to develop an effective security system. We need to defend against physical attacks, cyber attacks, cyber-enabled physical attacks, and physical-enabled cyber attacks.

PNNL is developing a tool called Pack Rat, which stands for “physical and cyber risk assessment tool.” It uses some of the quantitative tools widely used for physical security risk assessments and adds a cyber component. Instead of looking only at physical security pathways and the time delay they provide to the defense force to counteract a physical attack, it also looks at cyber pathways for entering and attacking physical security systems and the time delay that cybersecurity measures provide, and it integrates these with physical security for a more coherent picture. This very interesting tool is in the final testing phases and will soon be a candidate for commercialization. It is an example of the tools needed to integrate physical security and cybersecurity.
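The integrated pathway idea can be illustrated with a simple timely-detection calculation of the kind used in physical security path analysis. This is not Pack Rat's algorithm, which the talk does not describe: it is a deterministic sketch, with hypothetical step names and illustrative delay values, showing how a cyber step that disables alarms can leave too little delay after the first detection for the response force to arrive.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One step on a hypothetical adversary path."""
    name: str
    domain: str                       # "physical" or "cyber"
    delay_s: float                    # time this barrier holds the adversary
    detected: bool                    # would a sensor or monitor detect it?
    defeats: frozenset = frozenset()  # later steps whose detection it disables


def interrupted(path: list[Step], response_time_s: float) -> bool:
    """True if defenders can respond before the adversary completes the path.

    Simplifications (assumptions): detection is deterministic, occurs at the
    start of a step, and only the first effective detection matters.
    """
    defeated: set[str] = set()
    for i, step in enumerate(path):
        if step.detected and step.name not in defeated:
            remaining = sum(s.delay_s for s in path[i:])
            return remaining >= response_time_s
        defeated |= step.defeats
    return False  # the adversary is never detected


fence = Step("fence", "physical", 30, True)
door = Step("door", "physical", 60, True)
vault = Step("vault", "physical", 120, True)
silence_alarms = Step("alarm_server", "cyber", 600, False,
                      frozenset({"fence", "door"}))

print(interrupted([fence, door, vault], 150))                  # True
print(interrupted([silence_alarms, fence, door, vault], 150))  # False
```

In the second path the cyber step happens before any physical entry, so its long delay does not help the defenders; what matters is the delay remaining after the first alarm that still works.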

DISCUSSION

During the discussion period, a participant noted that one of the statements U.S. President Barack Obama made in Prague was that the clear and present danger today is nuclear weapons falling into the hands of terrorists. That was his nightmare, and, as it turns out, it has been a nightmare for Indian planners for some years as well. Forensics and cyber/physical security are areas of cooperation that those at the workshop would do well to note and perhaps put down as suggestions for future engagement. There have been reports that the security systems of India’s neighbor, Pakistan, are top-notch. But that is not the problem in Pakistan. Who are the people in control? What is their loyalty? Did they not take part in one of the largest nuclear black markets the world has ever seen? The traces of highly enriched uranium found in the centrifuges in Natanz are indicative of the problem. If the United States and India could have solid data sharing on this issue, it would be a great step toward ending Obama’s nightmare, the participant said.

Glantz noted that threat vectors are changing dramatically. The capabilities of potential adversaries are advancing faster than our ability to defend our systems. This underscores the need for defense-in-depth, with multiple layers of defense protecting the systems. Vendor communities are, hopefully, slowly starting to make security an important element of their control systems. But digital control systems remain in service in facilities for a long time. They are very expensive to replace, so critical infrastructure will have to live with these vulnerabilities for the foreseeable future, and we have to take the defense-in-depth steps necessary to keep attacks from actually reaching these vulnerabilities.
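One way to see why multiple layers help is a back-of-the-envelope calculation, sketched here in Python. It assumes, purely for illustration, that each layer independently stops an attack with some probability. The 0.9 figure is hypothetical, and the independence assumption is optimistic: a flaw shared across layers (for example, the same vendor component) can defeat several at once.

```python
from math import isclose, prod


def penetration_probability(stop_probs):
    """Chance an attack gets through every layer, assuming each layer
    independently stops it with the given probability."""
    return prod(1.0 - p for p in stop_probs)


one_layer = penetration_probability([0.9])
three_layers = penetration_probability([0.9, 0.9, 0.9])
print(isclose(one_layer, 0.1), isclose(three_layers, 0.001))  # True True
```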

Babu added a few points. There was a time when safety systems were all hardware. Then people began introducing digital computers into safety systems. Now there is an Indian regulatory requirement that if critical safety systems run completely on a computer, there must be a parallel system that is completely different. Would that parallel system not be subject to the same common requirement as a digital system for the most critical safety functions? In one sense, Indian regulatory guidelines and designs ensure that nothing catastrophic will happen in the case of a cyber attack.
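The protective value of a completely different parallel system can be sketched as simple 1-out-of-N trip voting: if any channel demands a trip, the plant trips, so tampering with one digital channel cannot by itself suppress a needed trip. The following Python is illustrative only; the channel functions and the setpoint (in arbitrary units) are hypothetical, and Python can model only the *behavior* of a diverse channel, which in a real plant would be built on different technology.

```python
from typing import Callable, Sequence

# Hypothetical trip setpoint, in arbitrary pressure units.
SETPOINT = 15.0


def digital_channel(pressure: float) -> bool:
    """Software-based channel working as designed."""
    return pressure > SETPOINT


def compromised_digital_channel(pressure: float) -> bool:
    """Models a software channel tampered with so that it never demands a trip."""
    return False


def diverse_channel(pressure: float) -> bool:
    """Stands in for a completely different, non-software channel."""
    return pressure > SETPOINT


def trip_demanded(channels: Sequence[Callable[[float], bool]], pressure: float) -> bool:
    """1-out-of-N voting: trip if any channel demands it."""
    return any(channel(pressure) for channel in channels)


print(trip_demanded([compromised_digital_channel, diverse_channel], 16.0))  # True
print(trip_demanded([compromised_digital_channel], 16.0))                   # False
```

Voting "any channel trips" favors safety over avoiding spurious trips. Real protection systems choose their voting logic to balance the two.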

Further, as people become more aware of cyber attacks, controls are being built into digital systems. For example, systems made in India, even customized systems that may carry unnecessary features, functions, and devices, are becoming more and more resistant to attacks.
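A basic step in dealing with unnecessary features is to compare what is enabled on a device against an allowlist of what the function actually requires. This is a minimal Python sketch of that comparison; the feature names and the allowlist are hypothetical.

```python
def unnecessary_features(enabled, required):
    """Return the enabled features that are not on the required allowlist."""
    return sorted(set(enabled) - set(required))


enabled = ["modbus", "web_ui", "telnet", "usb_storage"]  # hypothetical inventory
required = ["modbus"]                                    # hypothetical allowlist
print(unnecessary_features(enabled, required))  # ['telnet', 'usb_storage', 'web_ui']
```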

A workshop participant asked about cyber offense as a weapon. Should we not also take measures to protect ourselves? Shouldn’t we look at cyber offenses as well?

In response, another participant said that if one is threatened by nuclear weapons, surely one of the weapons in a country’s armory should be cyber weapons. Law is not a problem because if a country is attacked it has the right to self-defense.

Glantz noted that attribution of a cyber attack is difficult. One can suspect where an attack comes from, but it takes a lot of time for digital forensics to determine with near-absolute certainty where it came from, if that is possible at all. There have been instances in which someone mounted a cyber attack against a country and disguised it to look as though it came from that country’s adversary, thereby provoking escalation of the conflict between the two adversaries.

Therefore, as with any offensive weapon, one has to be very careful about how cyber weapons are used. Political leaders are tempted to respond in kind to a severe cyber attack, and to do so before they have full information about where that attack came from.

A workshop participant asked whether a cyber attack like Stuxnet is an act of war. Does international law recognize it as an act of war? Right now it does not, although it should, the participant said, because attacking an oil installation with bombers is an act of war. It is time to modify international law so that such acts are considered acts of war. There is the problem of forensics, but in the case of Stuxnet, everybody agrees on the origin. It is a straightforward act of war. Why isn’t the international community able to set up a framework under which these acts are treated as acts of war, so that retaliation is allowed by international law? Another participant added that the United States declared cyber attacks, though not Stuxnet specifically, to be acts of war in June 2010.

Glantz said he does not think there is any limitation on nations working together to develop appropriate responses to cyber attacks or to work on defense. His only caution is that, unlike an attack from a marked aircraft, where you know right away who is attacking you, or incoming missiles, whose origin you can track on radar, a cyber attack is written in computer code and spreads around the world even though it is targeted against one particular system or one particular country. Stuxnet was found in computers all over the world, including in India and the United States. Stuxnet would wake up, look around, and ask, where am I? If the local language wasn’t Farsi, it shut itself off. If the language was Farsi but it appeared to be in a water plant, it would also shut itself off. It was looking for only one location and one type of digital controller, and only there did it do its work. Correct attribution is very important and extremely difficult. We could have escalating conflicts all over the world that start not because one country actually attacked another, but because some third country disguised an attack to take advantage of a conflict between other countries.
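The targeting behavior Glantz described amounts to a conjunction of environment checks: the code stays dormant unless every condition matches. The Python sketch below is illustrative only. The attribute names and values are hypothetical stand-ins that follow the participant's description, not Stuxnet's actual checks. It shows why finding dormant copies on machines around the world says little about the intended target or the origin.

```python
# Hypothetical attributes and values; they illustrate the description above,
# not Stuxnet's actual checks.
TARGET = {
    "language": "Farsi",
    "site_type": "target-site",
    "controller": "target-controller",
}


def should_activate(environment: dict) -> bool:
    """Activate only if every target attribute matches; otherwise stay dormant."""
    return all(environment.get(key) == value for key, value in TARGET.items())


print(should_activate({"language": "English", "site_type": "office",
                       "controller": "none"}))                          # False
print(should_activate({"language": "Farsi", "site_type": "water plant",
                       "controller": "target-controller"}))             # False
print(should_activate(dict(TARGET)))                                    # True
```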

A workshop participant stated that cyber warfare and cyber defense are very modern topics. It is indeed an area in which individual nations, let alone the community of nations, have yet to really formulate the rules of the road, the rules of engagement, and so on. But essentially all nations clearly recognize the need to do so, first internally and then internationally. So this is just the beginning of a necessary effort that will necessarily take time. People in the United States are trying to consider these issues at the government and business levels. The U.S. National Academy of Sciences has conducted a few studies in this area, one of which was published three years ago. We are really just beginning to formulate some of the questions having to do with cyber attacks.

The point about attribution cannot be overemphasized, stated a workshop participant; quite frankly, a standard mode of operation is to perpetrate an attack through another computer system. Speaking completely hypothetically, even if a computer system under attack can recognize where the attack is coming from and go after the attacking computer, the owner of that attacking computer may have no idea that the computer is doing the attacking. So to the degree that one isolates or quarantines that computer system, that is in fact an attack on it, at least from the owner’s point of view, the participant noted. There are subtleties here that really have to be thought through: what is considered legitimate defense, how to deal with this internationally, and what kind of agreements we have. This is urgent, and everyone realizes it, but it is not simple. It is a very subtle and complicated issue. In the United States, there is considerable national tension between the government’s views of what needs to be done for protection, which may include what is viewed as an attack, and, for example, the business community’s views of its needs for structure, let alone what the public at large feels are our collective needs. This is an area where the United States and India could have very productive, intense discussions.

A participant asked whether, as part of defense, it is possible to develop special computer languages that are more resistant to such attacks, and whether that is part of the program.

Babu noted that it does not actually depend on the language. It depends on the programmability of the computer itself; that is where the hole is. So, yes, by using a special language, perhaps one can close that security hole in the software itself. Maybe one can design such a language. But there are still hardware issues, such as a USB drive in the system. One also has to harden the operating system so that its vulnerability points cannot be misused by anyone. The software that runs on a computer is a combination of the operating system, the drivers, and the application software written in some language. It is very complex.

A participant asked Glantz to clarify how the NRC cybersecurity rule is being implemented at U.S. power plants. Glantz explained that the NRC cybersecurity rule applies to all of the more than 100 reactors in the country. Inspections will begin in January 2013 as the initial implementation of the program. By 2016, the rule is to be fully implemented, and inspections conducted from that point on will carry full regulatory authority, including the ability to levy fines and shut down plants that do not fully comply with their cybersecurity requirements.

He also agreed with other workshop participants about the urgency of the issue, but noted that operating systems in the United States are improving and becoming more secure. There is also the issue of flaws in the actual software used on U.S. systems, both digital control systems and enterprise network systems. Some of the large codes used in software packages are thousands, hundreds of thousands, or millions of lines long. Because they are developed by human beings, they contain design flaws and coding bugs. That means that no matter how much attention is paid to security when the software is written, we can never be 100 percent sure that there is no vulnerability a skilled hacker or attacker can take advantage of. That possibility always exists.
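The argument that size alone makes certainty impossible can be made concrete with a short calculation. If each line independently harbors an exploitable flaw with a small probability p, the chance of at least one flaw in n lines is 1 − (1 − p)ⁿ, which approaches 1 as n grows. The Python sketch below evaluates this; the per-line probability of 1e-5 is purely hypothetical, and the independence assumption is a simplification.

```python
import math


def prob_at_least_one_flaw(lines_of_code: int, p_per_line: float) -> float:
    """P(at least one exploitable flaw) = 1 - (1 - p)**n, assuming each line
    independently harbors one with probability p. expm1/log1p keep the
    result accurate when p is tiny."""
    return -math.expm1(lines_of_code * math.log1p(-p_per_line))


# Hypothetical per-line probability; the trend, not the value, is the point.
for n in (1_000, 100_000, 1_000_000):
    print(n, f"{prob_at_least_one_flaw(n, 1e-5):.5f}")
```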

A workshop participant asked Babu about a system while it is being developed. He replied that, yes, when security is built in up front, one sees many malfunctions, and there are advantages to not using such systems. When one considers where the attack vectors are, an attacker wants to exploit whatever is possible. The attacker looks forward, for openings; a defender looks at the past, at where attackers have gone and what they have exploited.

The participant followed up: Generally, security and cybersecurity are not thought of as permanent; they cannot be taken for granted. We can say we have closed all attack vectors, but what about the people involved in development? When they leave, where do they go? What happens to them? Their knowledge goes with them. Is it possible that someone could leave with no product but with knowledge that can be exploited?

Babu replied that, yes, this is a hot topic involving insider attacks and outsider attacks, and the most dangerous case is a combination of the two. His team is aware of this kind of threat. All software is reviewed by a third party. They have teams that draw up intrusion-attack test cases in which they try to inject malicious software into the operating systems. Third-party verification is a very important part of the qualification process, because a third party may have a completely different perspective on how the system can be exploited.

As for an insider attack, or the knowledge base that leaves with people, it does not create much of a problem as long as one ensures that the systems are tamper-proof. For example, the first software base was from India, and that system cannot be tampered with: the software cannot be changed, and even he could not change it if he wanted to. So as long as one puts security controls and countermeasures in place, considering possible attacks and how the system will be used in the future, and as long as these are reviewed by third parties, one can be quite confident that the risk will be reduced.
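One common way to confirm that installed software has not been changed is to compare a cryptographic hash of the software image against a known-good reference recorded at qualification. The text does not say how the Indian systems enforce this. The Python sketch below uses SHA-256 from the standard library, and the image contents are hypothetical. The check is only as strong as the protection of the stored reference value.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def image_unchanged(image: bytes, reference_hex: str) -> bool:
    """Compare an image's SHA-256 digest against a known-good reference.
    compare_digest performs a constant-time comparison."""
    return hmac.compare_digest(sha256_hex(image), reference_hex)


# Hypothetical images; the reference would be recorded when the software is
# qualified and stored where an attacker cannot alter it.
approved = b"approved safety software v1"
reference = sha256_hex(approved)

print(image_unchanged(approved, reference))                # True
print(image_unchanged(approved + b" patched", reference))  # False
```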

It is an evolving technology and an evolving methodology. There is no final answer; there is no full stop in cybersecurity. One must think about how people have developed the technology and how they are going to exploit it in the future. The lifecycle must be reviewed continuously, and countermeasures put in place at appropriate times.

Paul Nelson asked whether there is a limit to solving the problem by adding more layers. For example, as one adds layers, the number of pathways through the system tends to increase exponentially with the number of layers, which would make verification and validation studies more difficult. Is that a realistic issue in current practical systems?
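The exponential growth Nelson describes follows from a simple counting argument. If each of L layer boundaries can be crossed through any of k conduits, in any combination, there are kᴸ end-to-end pathways to analyze. The Python sketch below illustrates this; the value k = 3 is hypothetical, and it is a worst case, since architectures that restrict which flows are permitted cut the count down.

```python
def pathway_count(conduits_per_boundary: int, layers: int) -> int:
    """End-to-end paths if each of `layers` boundaries can be crossed through
    any of `conduits_per_boundary` conduits, in any combination."""
    return conduits_per_boundary ** layers


print([pathway_count(3, n) for n in range(1, 6)])  # [3, 9, 27, 81, 243]
```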

Glantz replied that the five-layer system developed with the NRC seems to make sense from an industry or plant-operation standpoint, based on the communications plants need and the different levels of trust among those types of communication. Clearly, having more layers than one needs is economically inefficient. All cybersecurity controls tend to have drawbacks. For example, intrusion detection is valuable, but the intrusion detection information then has to be communicated back to security specialists, which opens up potential new communication pathways that must themselves be secured.
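A layered architecture with different levels of trust is often enforced as a rule about which direction data may flow between levels. The text does not give the NRC model's actual rules. The Python sketch below encodes one hypothetical rule: levels numbered 0 (least trusted) to 4 (most trusted), with data allowed to stay within a level or move one step outward and never inward. A rule of this kind also limits the number of pathways that verification has to consider.

```python
# Hypothetical numbering: 0 is least trusted, 4 is most trusted.
LEVELS = range(0, 5)


def flow_allowed(src_level: int, dst_level: int) -> bool:
    """Hypothetical rule: data may stay within a level or move one step
    outward, from a more-trusted level to the adjacent less-trusted one.
    Inbound flows and flows that skip a level are rejected."""
    if src_level not in LEVELS or dst_level not in LEVELS:
        raise ValueError("levels must be between 0 and 4")
    return src_level == dst_level or src_level - dst_level == 1


print(flow_allowed(4, 3))  # True: outbound to the adjacent level
print(flow_allowed(3, 4))  # False: inbound toward the most trusted level
print(flow_allowed(4, 1))  # False: skips intermediate levels
```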

Some corporations that operate nuclear power plants have decided that this is handled most efficiently by people at the corporate office, so those people now have access to firewalls and intrusion detection systems in the inner layers. There are active discussions going on right now about what is and is not acceptable. As security is added, whether layers or something else, one has to be very mindful of the vulnerabilities that each security control may introduce into the system.

A workshop participant asked whether an attack can happen while a security update is occurring. Is the attacker aware of when the security update is being done, or is the attacker simply trying to attack all the time? Babu replied that this happened in Mumbai when staff were updating their software to add antivirus capability. During the update, the security level was lowered, and an insider logged on at that particular time, between 11:00 and 11:30 pm. With this information, an external attack took place. The most dangerous case is one in which an insider collaborates with an external attacker, logs on, and then launches the attack. Such an attack is extremely difficult to trace.
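One simple monitoring step suggested by this account is to flag logons that occur while the security level is deliberately lowered, such as during an update window. The Python sketch below does this; the logon records are hypothetical, the 23:00 to 23:30 window is taken from the example above, and the function assumes the window does not cross midnight.

```python
from datetime import datetime, time


def logons_in_window(logons, start: time, end: time):
    """Return the (user, timestamp) logons whose time of day falls in
    [start, end). Assumes the window does not cross midnight."""
    return [(user, ts) for user, ts in logons if start <= ts.time() < end]


# Hypothetical logon records.
logons = [
    ("operator1", datetime(2012, 3, 1, 14, 5)),
    ("admin2", datetime(2012, 3, 1, 23, 10)),
    ("operator3", datetime(2012, 3, 1, 23, 45)),
]
print(logons_in_window(logons, time(23, 0), time(23, 30)))
```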

Glantz replied that one of the major issues arises when a good system is built, with firewalls in the right places and an appropriate rule set, but the operators do not invest in actually reviewing their firewall logs regularly. Perhaps they review them every 30 or 60 days, or give them only a superficial look. A cyber attack can be an ongoing, nonstop, 24/7, automated attack, just waiting for the security level to drop or for a vulnerability to appear that it can exploit. Such attacks are happening all the time. If attackers suspect a vulnerability, they will keep probing it until defenders detect them and act to close that threat vector.
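Regular log review can be partly automated. For example, a script can count denied connections per source address and surface sources that keep probing. The Python sketch below assumes a hypothetical log line format (real firewalls each have their own), uses documentation-range addresses, and uses a hypothetical threshold.

```python
import re
from collections import Counter

# Hypothetical log format: "<timestamp> <ALLOW|DENY> src=<addr> dst=<addr> dport=<port>"
DENY_RE = re.compile(r"\bDENY\b.*?\bsrc=(\S+)")


def persistent_probers(lines, threshold: int):
    """Return source addresses with at least `threshold` denied connections."""
    counts = Counter()
    for line in lines:
        match = DENY_RE.search(line)
        if match:
            counts[match.group(1)] += 1
    return sorted(src for src, n in counts.items() if n >= threshold)


log = [
    "2012-03-01T00:00:01 DENY src=203.0.113.5 dst=10.0.0.2 dport=502",
    "2012-03-01T00:05:01 DENY src=203.0.113.5 dst=10.0.0.2 dport=502",
    "2012-03-01T00:10:01 DENY src=203.0.113.5 dst=10.0.0.3 dport=502",
    "2012-03-01T00:11:00 DENY src=198.51.100.7 dst=10.0.0.2 dport=22",
    "2012-03-01T00:12:00 ALLOW src=203.0.113.9 dst=10.0.0.4 dport=443",
]
print(persistent_probers(log, threshold=3))  # ['203.0.113.5']
```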

A participant picked up on the other aspect of that question: how well can one authenticate the updates themselves? It seems the updates could be an unchecked pathway into the system. Babu replied that updates normally come with digital signatures, so there are ways to authenticate that they come from the right source. That could be a vulnerability point, but there are methods to address it.
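The idea of accepting an update only if it authenticates can be sketched with Python's standard library. Note the substitution: the standard library has no public-key digital signatures, so this sketch uses an HMAC with a shared secret instead. An HMAC shows that the package came from a holder of the key and was not altered. Unlike a digital signature, however, it requires the plant to hold the same secret as the vendor. The key and package contents are hypothetical.

```python
import hashlib
import hmac


def tag_update(key: bytes, package: bytes) -> bytes:
    """Vendor side: compute an HMAC-SHA256 tag over the update package."""
    return hmac.new(key, package, hashlib.sha256).digest()


def verify_update(key: bytes, package: bytes, tag: bytes) -> bool:
    """Plant side: accept the package only if its tag verifies."""
    return hmac.compare_digest(tag_update(key, package), tag)


key = b"hypothetical shared secret"
package = b"antivirus definitions 2012-03-01"
tag = tag_update(key, package)

print(verify_update(key, package, tag))                 # True
print(verify_update(key, package + b" tampered", tag))  # False
```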
