Improving Data Collection Capabilities and Information Resources

Simply collecting data in disasters often presents its own set of barriers. Sometimes the data just do not exist, or tools to collect information have to be recreated or are too clunky for mobile research studies, or curated databases are not made available to open audiences. To address some of these barriers, data collection could often be legitimately framed as disaster epidemiology, with an expected impact on public health practice, which may remove some of the administrative challenges often encountered. In this section, participants explored new data collection tools, strategies, and infrastructure needs across many sectors to enable effective and accessible data sharing and field implementation.

To open the discussion on data collection, Steven Phillips, associate director of NLM, shared several examples of information field tools developed by the NLM Disaster Information Management Research Center and in use in disasters. He described a few quick tools they have been tracking that would assist research responders, sometimes in austere environments, in data collection. One is a digital pen that writes like a regular pen but also stores the information that is written (e.g., on a triage form) and sends the information to wherever it needs to be (e.g., the hospital). Another example is a “lost person finder” that simply uses a smart phone camera as a tool to upload photos to an online bulletin board so family and friends can locate each other. Radiofrequency identification tags are used to track patients, equipment, and tools. Responder guidance tools and disaster-related apps are also available to assist first responders, such as the Wireless Information System for Emergency Responders, Radiation Emergency Medical Management, and Chemical Hazards Emergency Medical Management.1

NIH DISASTER RESEARCH RESPONSE PROJECT

Aubrey Miller, senior medical advisor at NIEHS, expanded on the DR2 project discussed previously by Birnbaum. He reiterated that the project was initiated by NIEHS and NLM to help address the slow deployment of research that leads to the loss of perishable data. Creating a central repository of standardized tools and developing easy-to-use preset protocols for researchers to use during disasters are two main priorities as this project continues to develop. This standardization of tools and protocols will assist both the research teams conducting the study and the communities where the research is being performed, so they do not have to adapt to new technologies and systems each time a new team wants to collect data.

The first part of the DR2 project is the creation of a central repository of publicly available data collection tools that could be used to help establish early baselines and cohorts for research. Such tools could include surveys, questionnaires, implementation guidance, forms (e.g., clinical testing, consent), and research protocols. Following a comprehensive search, more than 400 of the research tools identified were chosen for evaluation, Miller said, and about 200 were selected for initial inclusion in the database. The repository, housed on the NLM Disaster Research Responder website, is intended to be an easy-to-use, interactive site where researchers can find tools to support research response. The searchable repository will also include metadata about the tools (e.g., ease of use, duration, number of questions, languages the tool is available in, history of use, points of contact for the tool, references). Ultimately, there could be “drag and drop” type functionality to create new tools specific to a researcher’s needs and to have those tools validated. Miller also noted the intent to have preapproval by the NIEHS IRB and the Office of Management and Budget (OMB), to the extent possible, for use of some of the low-impact research tools (e.g., tools to measure respiratory impacts or eye, ear, nose, or throat irritation).

Another aspect of the project is developing the capacity for rapid data collection. NIEHS is working on how to deploy intramural clinical program assets, with support from contracting capabilities, to collect baseline and early data (e.g., questionnaire information, biospecimens, perhaps environmental samples). Part of this rapid data collection capability is the development of a “plug and play” research protocol, Miller explained, that would have preexisting IRB approvals and that could then be sent back through the IRB for a quick approval.

_______________

1For more information on all of these tools, see http://disaster.nlm.nih.gov/dimrc/toolsnlmdimrc.html (accessed November 3, 2014).

One of the next steps in the DR2 project is engaging a broad array of NIH intramural and extramural researchers, stakeholders, and consortia in an environmental health science “research response network.” This national network would help develop and prioritize tools and training materials, help to evaluate and improve research response concepts, and foster wider participation among the environmental health community (including citizen scientists). Other ongoing activities include training and exercising the tools and implementation plans (e.g., the tabletop exercise conducted in Los Angeles).

DISASTER EPIDEMIOLOGY: APPLIED PUBLIC HEALTH INVESTIGATION AND RESEARCH DURING DISASTERS

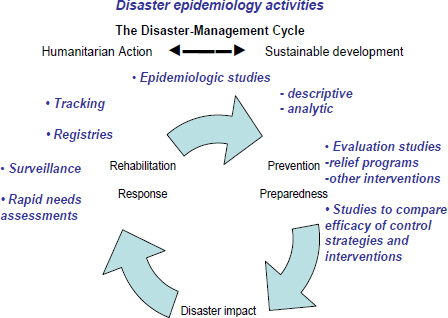

Michael Heumann of Heumann Health Consulting and a consultant for the Council of State and Territorial Epidemiologists (CSTE) provided an overview of disaster epidemiology and shared examples of how a variety of standard epidemiological methods are being applied in the public health response to disasters and disaster research (see Figure 6-1).

Heumann defined disaster epidemiology as applying the tools of epidemiology to assess short- and long-term health effects of disasters, and to predict the consequences for future disasters. It is an applied public health practice that is closely linked to disaster research. The goals of disaster epidemiology are to prevent or reduce deaths, illness, and injuries caused by disasters and to provide timely and accurate health information for decision makers. Heumann suggested that disaster epidemiologists should be research partners and providers of some of the baseline data that disaster researchers seek. Aligning public health practice and research will be important as disaster epidemiology becomes more common, and stronger partnerships can help both medical and public health responders and researchers achieve their goals. Disaster epidemiology also seeks to improve prevention and mitigation strategies for future disasters by gaining information for response preparation.

FIGURE 6-1 Disaster epidemiology conceptual framework describing the applications of epidemiology in the disaster management cycle developed by the Council of State and Territorial Epidemiologists in collaboration with the National Center for Environmental Health at the Centers for Disease Control and Prevention.

SOURCE: Heumann presentation, June 13, 2014.

Disaster epidemiologists face a variety of challenges during a disaster, many of which are related to collecting data. There is often an absence of baseline information, denominator data are difficult to obtain, electronic health data might be limited, and medical or death records might not indicate disaster-relatedness. Infrastructure damage (e.g., power, phone, and Internet outages), logistical constraints (e.g., environmental hazards, blocked roads, fuel shortages), and competing priorities also impact disaster epidemiology.

Heumann stressed the importance of integrating disaster epidemiology and accompanying research across disciplines (e.g., acute diseases, chronic diseases, communicable diseases, environmental and occupational health, injury assessment) and collaborating with partners in public health, hospitals, academic partners, industrial hygiene and safety professionals, emergency managers, responders, volunteer organizations, and the community.

Disaster Epidemiology Resources

As a partner discipline, disaster epidemiology has a variety of resources to offer. Heumann gave an example from a recent disaster for each type of resource:

- Rapid needs assessments (e.g., assessment of community public health needs after a massive Texas wildfire).

- Shelter surveillance (e.g., for shelters set up after Hurricane Sandy, monitored for outbreaks, illnesses, exacerbations of chronic conditions).

- Morbidity and mortality surveillance (e.g., tracking in Moore, Oklahoma, after 2013 tornadoes).

- Responder health and safety surveillance (e.g., roster and track >55,000 workers during Gulf oil spill and response).

- Descriptive and analytic studies (e.g., description of injuries and fatalities resulting from fertilizer plant explosion in West, Texas).

- Evaluation and impact studies (e.g., evaluation of long-term community recovery after Hurricane Andrew).

- Registries (e.g., post-9/11 WTC registry created to monitor health effects of survivors).

Heumann highlighted three specific examples of disaster epidemiology resources, all of which were developed by CDC and could be mobilized quickly after a disaster if needed. CASPER2 is an epidemiological technique designed to provide rapid and low-cost, household-based information about community needs in a simple format for decision makers. The CASPER methodology involves a two-stage probability sample using 30 clusters based on Census blocks and randomly choosing seven households per block to participate in a household-level interview. Data are then weighted to adjust for nonrandom sampling, and statistically relevant population-based estimates are obtained. Heumann noted that reports are generated within about 72 hours of data collection and shared with partners (including the community where the data were obtained).

_______________

2Also discussed by Lakey in the panel discussion on Partnering with the Community to Enable Access and Baseline Data.
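The two-stage design described above can be illustrated with a short sketch. It is not the CDC implementation: the sampling frame is synthetic, the function names are hypothetical, and two details are assumptions that the summary does not state, namely that clusters are drawn with probability proportional to their household count and that each interview is weighted by the total households in the frame divided by the product of interviews completed in the cluster and clusters drawn.

```python
import random


def casper_sample(frame, n_clusters=30, n_households=7, seed=0):
    """Draw a two-stage cluster sample.

    frame maps a cluster (block) id to the list of household ids in it.
    Returns (sample, total_households), where sample is a list of
    (cluster_id, household_id, weight) tuples.
    """
    rng = random.Random(seed)
    cluster_ids = list(frame)
    sizes = [len(frame[c]) for c in cluster_ids]
    total_households = sum(sizes)

    # Stage 1 (assumption): draw clusters with probability proportional to
    # household count, with replacement, so a large block may be drawn twice.
    drawn = rng.choices(cluster_ids, weights=sizes, k=n_clusters)

    sample = []
    for cluster in drawn:
        # Stage 2: simple random sample of households within the cluster.
        k = min(n_households, len(frame[cluster]))
        households = rng.sample(frame[cluster], k)
        # Assumed weight: total households / (interviews in cluster * clusters drawn).
        weight = total_households / (len(households) * n_clusters)
        sample.extend((cluster, h, weight) for h in households)
    return sample, total_households


def weighted_proportion(sample, has_need):
    """Weighted share of sampled households for which has_need(household) is true."""
    total_weight = sum(w for _, _, w in sample)
    need_weight = sum(w for _, h, w in sample if has_need(h))
    return need_weight / total_weight


# Synthetic frame: 300 blocks with 20 to 69 households each (illustrative only).
frame = {
    f"block-{i}": [f"block-{i}-hh-{j}" for j in range(20 + i % 50)]
    for i in range(300)
}
sample, total_households = casper_sample(frame)
```

With 30 clusters and seven interviews in each, the sketch yields 210 interviews whose weights sum to the number of households in the frame, which is what allows a weighted proportion to be read as a population-based estimate.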

ERHMS3 is a health monitoring and surveillance framework for protecting responders through all phases of a response. Heumann noted that the work done in the predeployment phase is similar to the work already being done by fire departments, hazmat teams, and EMS units in terms of training and credentialing, although the documentation is different. ERHMS data are entered into a larger database that is part of the deployment database. Thus, the ERHMS system can track and roster responders during the deployment phase and conduct surveillance and monitoring for exposures and health effects. All of this information is analyzed in the postdeployment phase, which also includes follow-up with responders about their experiences, referral for additional follow-up as needed, or enrollment into medium- or long-term surveillance for delayed adverse effects.

The Assessment of Chemical Exposures program, developed by the Agency for Toxic Substances and Disease Registry, provides training in disaster epidemiological investigation of a chemical incident, technical assistance, and a toolkit of resources to guide local authorities in response. The toolkit includes standardized materials, such as modifiable survey forms, consent forms, a medical chart abstraction form, an interviewer training manual, and databases.4

Disaster epidemiology is now widely practiced, Heumann said, and most local and state health departments carry out some form of disaster epidemiology. CDC deploys field teams, on request from local and state health departments, to provide technical assistance or to lead a response activity in major incidents. The need now is to develop stronger linkages and alignment between public health practice and research. A critical aspect of disaster epidemiology response and research is partnerships, Heumann said. In particular, public health has to be part of the ICS. Disaster epidemiology is a core tool that public health brings to the table, he said. There must also be integration of local, state, and federal engagement. Other key partnerships are between health departments and academia, between applied public health (including epidemiology) and research, and between disaster epidemiology and social science research. Heumann noted that disaster epidemiology data collection does not require IRB approval, and a key factor in making the data useful for researchers is deidentification and anonymization.

_______________

3Discussed by Howard in the plenary session (see Chapter 2).

4See http://www.atsdr.cdc.gov/ntsip/ace.html (accessed September 30, 2014).

THE NATIONAL EMERGENCY MEDICAL SERVICES INFORMATION SYSTEM

Gamunu Wijetunge and Ellen Schenk of the Office of Emergency Medical Services at the National Highway Traffic Safety Administration (NHTSA) provided a brief overview of the federal role in supporting the development of EMS and described NEMSIS and its role in supporting preparedness planning and research.

NEMSIS

NEMSIS is a system for standardizing EMS patient care data collection across the United States by promoting the use of standard definitions, data formats, and data reporting, Wijetunge explained. NEMSIS currently includes standard definitions and formats for more than 400 data elements (version 2). When emergency medical technicians (EMTs) and paramedics treat a patient at the scene of an incident, information about the incident and the care provided is documented on an electronic patient care report (EPCR). The data elements in that EPCR come from the standardized NEMSIS dataset. There are 83 specific national elements within the larger national dataset that are reported to the NEMSIS Technical Assistance Center, housed at the University of Utah, to populate the National EMS Database. In addition, states and localities select additional elements, based on their data needs, that are collected and retained at the state and local level. Wijetunge noted that 47 states and territories currently submit NEMSIS-compliant data describing EMS events to the National EMS Database, which now contains more than 30 million patient care records.

Using NEMSIS in Research

Schenk presented several examples of how NEMSIS could be used to conduct research and obtain information during disasters. EMS dispatch data in NEMSIS could be used to monitor requests for emergency services in real time during a disaster (e.g., during major flooding) and to prepare the emergency response for similar disasters in other areas. During the 2009 H1N1 pandemic, NEMSIS data showed the number of people in Florida reporting that they were sick to an EMS dispatcher. A statistically significant temporal pattern was observed, Schenk said, and Florida public health officials could watch this information in real time and respond to the event. Other geographic areas with similar demographics to Florida (particularly with a large percentage of the population at high risk for flu) could learn from the trends in Florida to prepare for responses to future flu pandemics.

In a more detailed example, Schenk and colleagues used data from the NEMSIS National EMS Database to characterize and estimate the frequency of mass casualty incidents (MCIs) occurring in the United States in 2010, as reported by EMS personnel (Schenk et al., 2014). Of the roughly 9.8 million EMS responses in the 2010 database, 14,504 (0.15 percent) were recorded as MCIs. Among these entries, it was estimated that there were nearly 10,000 unique incidents and 14,000 unique patients, which translated to an observed rate of 13 MCIs per 100,000 population and 1.7 MCIs per 1,000 calls to 911. Overall, Schenk said, the study found that MCIs are smaller in scale and more frequent in nature than expected and that response delays were reported to be more common for MCI EMS responses than for non-MCI responses. Schenk is conducting further research on factors that could explain the response delay, as well as looking into perceptions of EMS personnel regarding a response being an MCI. She noted that the study also shows some of the limitations of the current infrastructure of NEMSIS for providing information on disasters. Not all patients involved in a particular MCI may be documented, because some state and local EMS systems have protocols that permit EMS personnel to reduce the time devoted to patient documentation and tracking during larger, more complex incidents. Thus, NEMSIS currently provides fairly accurate information on trends and percentages as well as the count of events, but not the absolute count of patients. Another limitation is that NEMSIS is not currently a population-based, or nationally representative, sample, but this improves every day as more states and more agencies submit their data to the national level, Schenk said. Also, the subjectivity of the NEMSIS definition of MCI provides both opportunities and challenges for conducting research.
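As a check on the arithmetic, the share of EMS responses recorded as MCIs can be reproduced from the two counts given above. The helper name is hypothetical, and the denominator uses the rounded figure of 9.8 million quoted in the text rather than the exact database count. The per-population and per-911-call rates are not recomputed here because they rest on unique incidents and on denominators that this summary does not report.

```python
def rate_per(events, denominator, per):
    """Return events per `per` units of the denominator."""
    return events / denominator * per


mci_records = 14_504        # responses recorded as MCIs (from the text)
ems_responses = 9_800_000   # "roughly 9.8 million" responses (rounded in the text)

share_percent = rate_per(mci_records, ems_responses, 100)
print(f"MCI share of EMS responses: {share_percent:.2f} percent")
```

The result rounds to the 0.15 percent reported in the text.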

In closing, Schenk encouraged participants to consider EMS as a valuable source of data for conducting research and obtaining information during and after disasters and to explore NEMSIS for utility in their preparedness planning and research efforts.5 NEMSIS can mitigate some of the common challenges of conducting disaster research by providing baseline information, the capacity to conduct longitudinal health assessments for high-risk groups, and deidentified data.

DISASTER RESEARCH AND RESPONSE: APPLIED PUBLIC HEALTH PERSPECTIVE

CDC’s National Center for Environmental Health (NCEH) is the lead CDC center in responses to natural, chemical, and radiological disasters. The Office of Public Health Preparedness and Response provides overall coordination and support of CDC activities and funds state and local capacity building efforts, and the Center for Surveillance, Epidemiology, and Laboratory Services houses the BioSense public health surveillance system.6

The Health Studies Branch of NCEH focuses on building public health capacity at the state and local levels, providing assistance and emergency response during events, and conducting research on risk and protective factors, said Lauren Lewis, chief of the Health Studies Branch at NCEH. The center obtains data in the course of providing technical assistance to its partners, including state and local public health offices and nongovernmental organizations (NGOs), for the primary purpose of public health decision making. NCEH publishes much of the data that are collected in the course of providing a service to facilitate additional research.

Lewis provided several examples of how data collected by NCEH inform public health. For example, NCEH conducted mental health assessments following the Deepwater Horizon oil spill and identified a need for increased mental health services in the region. Using those data, impacted states were then able to obtain funding from BP to increase mental health services. Following the tsunami in American Samoa, NCEH assisted with clinical surveillance and was able to identify injuries as a priority. In addition, surveillance did not detect outbreaks of infectious diseases, and this information was used to combat rumors, Lewis said.

_______________

5See http://nemsis.org (accessed June 12, 2014).

6See http://www.cdc.gov/biosense (accessed June 12, 2014).

Coordinating Across Organizations for Surveillance

NCEH works closely with the American Red Cross and has developed standardized morbidity and mortality data collection tools for the Red Cross volunteers to use in the course of their activities. Data collected are sent to CDC for analysis and are reported back to the Red Cross in the form of information they can use. For example, NCEH worked with the American Red Cross during the Alabama tornadoes in 2011. Workers collected standardized information when they provided services to families of decedents, and NCEH analyzed the circumstances surrounding the fatalities. Women and the elderly were identified as the highest risk groups, and most people died in single-family homes, unexpected findings that have generated much discussion, Lewis noted.

NCEH also partners with the American Association of Poison Control Centers and conducts surveillance of the National Poison Data System. NCEH monitors calls to all 57 U.S. poison centers and receives alerts when there are anomalies in the data that might indicate an event of public health significance. This tool is used in nearly every disaster, Lewis said. For example, NCEH tracks carbon monoxide poisonings during disasters that result in power outages. The system is also used to monitor public concerns and push information out to the public. During the Fukushima meltdown, there was a rise in calls related to iodine poisonings, and NCEH realized that people were taking iodine out of fear. In response, CDC issued communication to educate the public.

Future Directions

Response data make very good data for evaluating programs, Lewis said. Response data are useful for comparison in future studies (e.g., initial versus 1-year follow-up) and for generating hypotheses and designing research studies (e.g., high-risk groups that should be tracked). Data collected for public health practice, such as FEMA claims data, do have identifiable information, Lewis noted, and potentially could be used to identify participants for future research. Lewis concurred with some of the challenges of disaster research mentioned by others, including issues related to the IRBs, OMB, and funding for response versus research.

NCEH is now focusing on improving the data collected during a response, not only for immediate public health actions, but also for research. There is interest in an electronic death registry system and how to link death records to an event for disaster mortality surveillance. There is also interest in using social media to track disaster-related deaths (e.g., online memorial sites, blogs), although the information posted is generally not very specific. NCEH is also working with emergency managers on institutionalizing the use of data from research, particularly social vulnerability tools and information.

A key issue highlighted in the panel discussions is the need for common infrastructure of terminology and definitions, data collecting, reporting of information, and broad dissemination, Steven Phillips reported in his summary of the session (see Box 6-1). The need for a central repository of data was also discussed, as well as a centralized list (website) of data collection and reporting tools.

A participant raised the issue of actually collecting disaster epidemiology data. While the guidance, tools, and standardized templates are helpful, many rural counties have very small health departments and simply do not have the staff to be in the field collecting data. Heumann noted that sometimes the state has expectations of what localities can provide, and there needs to be dialogue or negotiation about how localities can meet those expectations with the resources they have. There is training available in Disaster Epidemiology through the Health Studies Branch of CDC,7 including in-person, long-distance, webinar, and self-study modules. Miller added that in the face of shrinking resources, the federal government can only do so much, and it is time to engage a much broader community to participate in gathering information (e.g., academia, NGOs, community groups).

A participant suggested selecting some sites where disasters are predicted to happen as test areas to validate collecting baseline data before an event (e.g., floods in the Midwest, hurricanes in the East, wildfires in Southern California or Texas). Heumann concurred, adding that much of the data of interest probably already exist, for example, in electronic health records and other systems; the task is to figure out how to harness those data. Lewis noted that the Environmental Health Tracking Network does have the potential to provide baseline data.

_______________

7See http://www.cdc.gov/nceh/hsb/disaster/training.htm (accessed November 10, 2014).

BOX 6-1

Improving Data Collection Capabilities and Information Resourcesa

Challenges and Issues

- Common terminology, definitions, and collection and reporting systems

- Central repository for data (bidirectional)

- Research goals include helping victim outcomes

- Creating partnership between disparate agencies/groups with a common mission; integrate existing networks

Opportunities for Improvement

- List (website) of collection and reporting tools

- Determine “what is out there” that works or might work

- Collect and provide predisaster health information on responders and victims (a universal electronic health record)

Critical Partnerships and Collaborations

- Local, state, regional, and national agencies, with Congress, to enact consensus legislation

- Communities, clubs, nongovernmental organizations, and agencies

- Who pays? (Centers for Medicare & Medicaid Services, private insurers, other partners?)

______________

aThe challenges, opportunities, and partnerships listed were identified by one or more individual participants in this breakout panel discussion. This summary was prepared by the panel facilitator and presented in the subsequent plenary session. This list is not meant to reflect a consensus among workshop participants.

SOURCE: Plenary session summary of breakout panel discussion as reported by panel facilitator Steven Phillips.

Another common theme throughout the presentations was partnerships, and panelists gave more details on their own experiences. One of the challenges, Heumann said, is that partners may feel they have proprietary ownership of their information, and they have concerns about how others might use it. Developing relationships and trust is the key to understanding and to developing cooperation, he said. Shared goals and objectives, and respect for each other’s boundaries, have to be established and agreed to, sometimes in writing. Steven Phillips said that standards in terminology, data collection, and data reporting could help to eliminate the silos and the territorial boundaries and make partnerships easier to develop, like what is being done through NEMSIS. Miller reiterated the importance of laying the groundwork and connecting with people, helping people to understand that the government is there to help. A partnership has to be mutually beneficial, Lewis said. Often, the research we want to do is not aligned with the missions and priorities of the partners that we need to help collect the data (e.g., clinicians, state and local public health). Clinicians can ask a few extra questions during care to collect data, but how can that also help them in their mission? Wijetunge reiterated the importance of personal relationships with staff at other agencies. Schenk added that the first step is sharing information and getting people to talk to each other. A few involved participants also highlighted that the goal of collecting data is to improve victim outcomes. This involves engaging the affected populations to understand their needs and helping them to understand why collecting this information is important. A question raised by participants was who ultimately pays for improving data collection infrastructure (e.g., CMS, private insurers, other partners), a topic that will be discussed at greater length in the next chapter.
