Optimization and Control Methods for a Robust and Resilient Power Grid

The third session of the workshop, chaired by Jeffery Dagle (Pacific Northwest National Laboratory), concerned issues related to optimization and control methods for a robust and resilient power grid. Presentations were made by Pravin Varaiya (University of California, Berkeley), Sean Meyn (University of Florida), and Robert Bixby (Gurobi Optimization).

DURATION-DIFFERENTIATED ELECTRICAL SERVICE FOR INTEGRATING RENEWABLE POWER

Pravin Varaiya, University of California, Berkeley

Pravin Varaiya began by saying that there is a crisis facing utilities over the next decade. Currently, consumer demand for electricity is met mostly through base generation (by large, efficient generators that cannot be switched online or offline quickly), to which is added a small fluctuating supply of renewable energy supplemented with reserve generation that can be altered quickly. Typically, he explained, utilities make a profit selling electricity from the base load but incur losses when they have to draw electricity from the reserve. This status quo looks to change considerably by 2020, when both the demand and the proportion of renewables will likely be higher. Varaiya believes the utilities may need to raise electricity prices by that time because the greater use of renewables will lead to less use of profitable base generation, yet expensive reserve generating capability will be needed because of the intermittent nature of renewable sources. Raising prices can affect the electricity market, Varaiya cautioned, because as prices increase, consumers are more likely to turn to distributed electricity generation sources (such as rooftop photovoltaic panels) to lower prices, thereby increasing the renewable fluctuation in the market and furthering the dependence on reserve electricity to fill the gaps.

One alternative to this approach is to attempt to tailor the demand to match the renewable supply, Varaiya commented. The ideal outcome would be to modulate the demand to align closely with the renewables available and minimize the reserve electricity used. He said this would result in lower overall CO2 emissions while enabling utilities to continue to sell a large portion of electricity from their more efficient base units. The flexible demand necessary to make this alternative a reality requires identifying tasks whose power demand can be shifted over time, modulated, or curtailed.

Varaiya believes all consumers have this flexibility, but there are challenging questions: How can the utility ascertain and quantify this demand flexibility? How can the utility extract the flexibility so demand matches the random renewable supply? He proposed that the key is to incentivize demand through product differentiation.

Varaiya noted that electricity service is currently a homogeneous product, but differentiated services can capture different kinds of demand flexibility:

- Priority pricing (Chao and Wilson, 1987), where higher-priority tasks pay more;

- Interruptible electrical power (Tan and Varaiya, 1991), where more reliable service costs more;

- Demand response (FERC, 2011a), where demand reduction below a baseline is incentivized;

- Price-responsive demand (Chao, 2010), where real-time price equals marginal cost;

- Deadline-differentiated service (Bitar and Low, 2012), where deferrable tasks pay less; and

- Duration-differentiated energy service, with fixed power, flexible time, and different durations, where, for example, a consumer can purchase 1 kilowatt for any number of hours within a preset window.

Varaiya stressed that providing consumers with duration-differentiated energy service to meet time-flexible demand requires the use of mathematical models. He discussed a simple illustration of such a model as follows: time is partitioned into slots (usually hours), and energy is partitioned into discrete units. Each task needs, at most, one unit of energy in one slot but can span multiple (sometimes noncontiguous) slots. The consumer is indifferent to which time slots the task is assigned. The algorithm then ranks the tasks by the number of slots each requires to complete; this ranking makes up the demand profile. A renewable profile is defined, and the model checks whether the renewable profile is adequate to meet the energy demand needed to complete the tasks. If it is, the model determines how the electricity should be allocated among the tasks. If it is not, the minimum additional energy needed to make the supply adequate is determined. The model needs to characterize the many potential supply profiles that could meet the required demand.

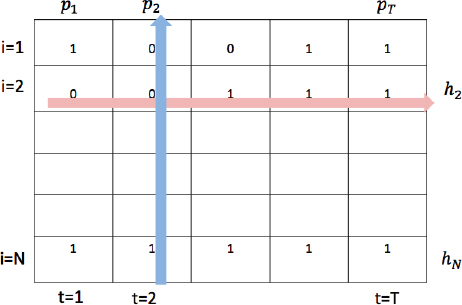

Mathematically, Varaiya said, this can be represented as an “allocation matrix” (shown in Figure 4.1), with the number of columns equal to the number of time slots and the number of rows equal to the number of tasks. Each matrix element is set to either 1 or 0, denoting, respectively, that a task either will or will not receive power during that time slot. Each column sum must be less than or equal to the power available for that slot, and each row sum is equal to the total power that a task requires.

Suppliers, he said, need to determine if the given supply is adequate for the given demand. If it is, they need to determine how the supply should be allotted to consumers; if not, they need to determine how much additional supply is needed to serve the demand. Varaiya explained that this is done by the “longest leftover duration first rule,” where the task with the longest duration left to completion is served first, and the tasks are reordered with each new time slot. An optimization

FIGURE 4.1 Allocation matrix, given the renewable energy supply profile p = (p1, p2, . . . , pT ), consumer demand profile h = (h1, . . . ,hN), and tasks t = (1, 2, . . . , T) for N tasks. SOURCE: Pravin Varaiya, University of California, Berkeley, presentation to the workshop.

problem can determine the minimum additional energy needed to complete all necessary tasks.
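The allocation rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the model presented at the workshop: the function names `allocate_lldf` and `is_adequate` are hypothetical, supply is assumed to be given in whole units per slot, and the reported shortfall is simply what this rule leaves unserved, which is not claimed to equal the solution of the optimization problem mentioned in the text.

```python
def allocate_lldf(supply, durations):
    """Longest-leftover-duration-first allocation (illustrative sketch).

    supply[t]    -- whole units of power available in slot t
    durations[i] -- number of slots task i must be served (one unit per slot)
    Returns (A, leftover): A is the 0/1 allocation matrix (rows = tasks,
    columns = slots) and leftover[i] is the number of unserved slots of task i.
    """
    n_tasks, n_slots = len(durations), len(supply)
    leftover = list(durations)
    A = [[0] * n_slots for _ in range(n_tasks)]
    for t in range(n_slots):
        # Re-rank the tasks at every slot, longest leftover duration first.
        order = sorted(range(n_tasks), key=lambda i: leftover[i], reverse=True)
        # Serve at most supply[t] tasks, skipping tasks that are already done.
        for i in order[:supply[t]]:
            if leftover[i] > 0:
                A[i][t] = 1
                leftover[i] -= 1
    return A, leftover


def is_adequate(supply, durations):
    """True if the rule above completes every task with the given supply."""
    _, leftover = allocate_lldf(supply, durations)
    return sum(leftover) == 0


# Three slots, three tasks: column sums stay within supply, row sums match durations.
A, leftover = allocate_lldf([2, 1, 2], [3, 1, 1])

# Enough total energy, but concentrated in one slot: a 2-slot task cannot finish.
front_loaded_ok = is_adequate([3, 0, 0], [2, 1])
```

The second example shows why adequacy depends on the shape of the supply profile and not only on its total: three units in a single slot cannot serve a task that needs power in two different slots.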

Another issue discussed is determining how many units of energy each consumer should receive. Varaiya said there should be an incentive mechanism to reveal how much flexibility each consumer has. The market also has to reach equilibrium on the transaction price for the service provided while also maximizing social welfare.

Social welfare maximization, Varaiya explained, could be described as a convex utility function where consumer satisfaction increases with the number of time slots available. The challenge is getting the market to indicate when consumers’ needs are sufficiently met. He explained that duration-based prices could be used, in which consumers pay based on the number of time slots requested. This allows consumers and suppliers to make purchase and production decisions, respectively, based on prices. The market is in competitive equilibrium, he said, if consumers and suppliers maximize their individual utility and revenue (profit) given particular prices. The equilibrium is called efficient if the allocation maximizes social welfare. Not all utility functions are convex, however, and Varaiya said that determining equilibrium solutions is not straightforward. Research on these solutions is ongoing. Varaiya listed other areas of research, which include considering heterogeneous consumers, examining rate-constrained energy products where total energy and consumption rate are fixed but flexible in time, and factoring in uncertainty.

Varaiya concluded by summarizing that variable supply requires moving beyond the current practice of tailoring supply to load. He said that new types of energy services can be used to tailor demand to variable renewable supply, and services that incorporate demand flexibility can provide the right incentives for consumers. He believes duration-differentiated services can be a valuable tool in the future.

Sean Meyn, University of Florida

Sean Meyn focused on demand-side flexibility for reliable ancillary services in a smart grid, specifically eliminating risk to consumers and to the grid. He also addressed some of the challenges utility and generation companies are facing, such as large sunk costs, engineering uncertainty, policy uncertainty, and volatility.

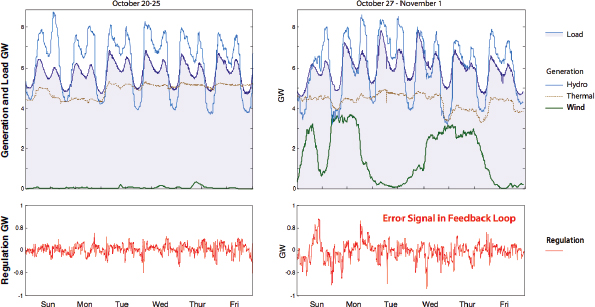

Volatility impacts many areas of grid analysis, Meyn explained, but it is especially important when addressing renewable energy integration. Meyn suggested using a control perspective to manage this volatility, giving the example of electricity generation and load shown in Figure 4.2, which includes the fluctuation of wind

FIGURE 4.2 Generation and load variability over a two-week period (top) and the associated regulation signal (bottom). SOURCE: Sean Meyn, University of Florida, presentation to the workshop.

generation during a 2-week period in a region of the Pacific Northwest. At its peak, nearly 4 gigawatts (GW) of wind energy was generated, but there were long periods with nearly no wind generation. Figure 4.2 also shows the regulation signal used to tell generators to ramp up or ramp down so as to complement the availability of the wind energy. Note that when wind generation ramped up to 4 GW, it caused large oscillations in the regulatory signal. Meyn said that resources are needed to respond to this regulatory signal, and they are called ancillary services. Figure 4.2 reflects that region’s use of hydro-generators as an ancillary service, but the same service could be supplied through other means, such as flexible loads.

Meyn provided the analogy of airplane flight control, which has an automated system and human pilots. He believes that the electricity industry needs to have aileron-like capabilities that can accurately ramp up and ramp down to smooth the variability from renewables such as wind generation. The balancing authority in this case is the autopilot and human pilots; ancillary services (generators that need to ramp up and down) are the ailerons on the wing that help stabilize the plane when it is rolling or banking; and the grid is the mechanical system on the plane that has to coordinate the instructions from the control system with the output of components such as the jet engines.

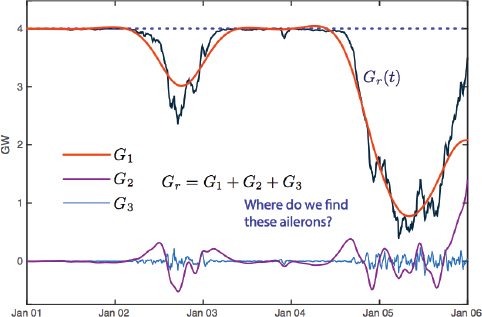

FIGURE 4.3 Example electricity load needed to supplement fluctuating wind energy (black). SOURCE: Sean Meyn, University of Florida, presentation to the workshop.

He provided an example to show how generation from wind could be supplemented to supply a load of precisely 4 GW over 24 hours. The residual generation is divided into the sum of three components shown in Figure 4.3, distinguished by frequency content. He said the lowest-frequency component could be supplied by forecasting supplementary energy needs and smoothing this function to something that a baseload generator (such as coal) could handle. This is denoted as the red G1 curve in the figure. The smaller but more dynamic electricity needs—the purple G2 line—could then be met with a quick-to-respond supplier, such as hydro. With all of these loads taken into account, there is still a high-frequency oscillation—the blue G3 line—that needs to be addressed. Meyn commented that this high-frequency load is difficult to match exactly with a gas generator, for example, but could easily be met with a nickel-metal hydride battery.
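A decomposition of this kind can be sketched with two nested smoothing filters. The sketch below is only an illustration under stated assumptions: the moving-average filter, the window lengths, and the synthetic wind profile are all hypothetical choices and are not taken from the presentation. The only property it guarantees is that the three components sum back to the residual.

```python
import math


def moving_average(x, window):
    """Centered moving average; the window is truncated at the edges."""
    half = window // 2
    out = []
    for k in range(len(x)):
        lo, hi = max(0, k - half), min(len(x), k + half + 1)
        out.append(sum(x[lo:hi]) / (hi - lo))
    return out


def decompose(residual, slow_window, fast_window):
    """Split a residual into slow (G1), medium (G2), and fast (G3) parts.

    By construction G1 + G2 + G3 equals the residual at every sample.
    """
    g1 = moving_average(residual, slow_window)       # slowest trend
    smooth = moving_average(residual, fast_window)   # trend plus medium band
    g2 = [s - a for s, a in zip(smooth, g1)]         # medium band only
    g3 = [r - s for r, s in zip(residual, smooth)]   # what is left: fast band
    return g1, g2, g3


# Synthetic example (assumed data): a 4 GW load minus a fluctuating wind profile.
load_gw = 4.0
wind_gw = [2.0 + math.sin(0.05 * k) + 0.3 * math.sin(0.9 * k) for k in range(200)]
residual = [load_gw - w for w in wind_gw]
g1, g2, g3 = decompose(residual, slow_window=61, fast_window=7)
```

In practice the band edges would be chosen to match the ramping capability of each resource class; the windows here are placeholders.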

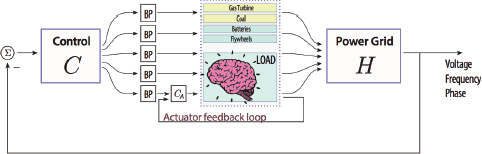

Alternatively, Meyn said, the two higher-frequency, zero-energy signals could be supplied through flexible loads. For example, G2 might be obtained from loads such as irrigation, refrigeration, or district hot water, while G3 could be obtained from modulating fans in commercial buildings (many gigawatts of capacity are available in the United States). Regardless of the resources used, Meyn noted that the control architecture illustrated in Figure 4.4 is one in which all resources work independently to regulate the grid on every timescale.

Meyn emphasized that several considerations must be taken into account to make this acceptable to consumers and to the grid:

- The ancillary services must be of high quality. Does the deviation in power consumption accurately track the desired deviation target? Regulation service from generators is not perfect (Kirby, 2004). For example, coal-fired generators do not follow regulation signals precisely.

- The ancillary services must be reliable. Will they be available each day? While this may vary with time, the capacity must be predictable.

- The ancillary services must be cost-effective. How can installation, communication, maintenance, and environmental costs be managed?

- Consumer incentives for reliability must be effective. Will consumer incentives be consistent and transparent? For example, if a consumer receives a $50 payment for one month and only a $1 payment the next month, will there be an explanation that is clear and acceptable to the consumer?

- Consumer quality-of-service needs must be met. Will the consumer’s experience and service be sufficient and satisfactory?

If done correctly, Meyn said, demand response could satisfy all of these constraints. This could be approached in two ways: like a regulator or like a control engineer. He said a regulator such as the Federal Energy Regulatory Commission (FERC) would partition the needed load for this example by applying traditional generation to G1, FERC Order 745 (134 FERC ¶ 61,187) to G2, and FERC Order 755 (137 FERC ¶ 61,064) to G3. FERC Order 745 was issued in March 2011 to provide demand response compensation in organized wholesale energy markets, and it was concluded that paying the locational marginal price could address the identified barriers to potential demand response providers. However, Meyn commented that FERC Order 745 was short-lived because of state jurisdiction issues. It was also plagued with controversy over “double payments,” where a consumer was effectively getting paid twice not to use electricity: once by not paying for electricity that was not used, and again by receiving a payment for not using it. Meyn claimed that FERC Order 745 had to end because its goals of shaving peaks, resolving contingencies in seconds, and smoothing marginal cost are in contradiction with the market’s need to profit. He said there is no business incentive to make investments (and incur sunk costs) if prices are low, as would be the case with effective demand response.

FIGURE 4.4 Illustration of control architecture with frequency decomposition for demand dispatch. SOURCE: Sean Meyn, University of Florida, presentation to the workshop.

FERC Order 755, Meyn explained, is about regulation services such as batteries and flywheels. In this case, independent system operators (ISOs) and regional transmission organizations are required to pay regulation resources based on the actual amount of regulation service provided. In addition, he said, a pay-for-performance approach acknowledges speed and accuracy. This specifies that there be a uniform price for frequency regulation capacity and a performance payment for the provision of frequency-regulation service, reflecting a resource’s accuracy of performance. However, the definition of performance is not clear. It is currently being interpreted as mileage (the arc-length of the generation curve), but this does not reflect the cost or value of service. Meyn observed that an unanswerable question underlies this discussion: What is the cost and value of regulation? Regardless, so far it appears that this order has been extremely successful at spurring industry to create new products for higher-frequency regulation services.
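For a sampled signal, one simple discrete reading of mileage is the total absolute movement between consecutive samples. The short sketch below uses that reading as an assumption (it is not a definition taken from the order or from the presentation) to show why mileage alone says little about the kind of service delivered: a steady ramp and a rapid oscillation can have identical mileage.

```python
def mileage(signal):
    """Total absolute movement of a sampled signal: sum of |x[k+1] - x[k]|."""
    return sum(abs(b - a) for a, b in zip(signal, signal[1:]))


steady_ramp = [0, 1, 2, 3, 4]   # moves up once, slowly
oscillation = [0, 1, 0, 1, 0]   # reverses direction at every sample

ramp_miles = mileage(steady_ramp)
osc_miles = mileage(oscillation)
```

Both signals travel the same total distance, yet they demand very different things from the resource that has to follow them.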

Meyn presented the test case of a building at the University of Florida that is effectively being used as a “virtual battery” by allowing heating, ventilation, and air conditioning (HVAC) flexibility to provide additional ancillary service (signals similar to G3 in Figure 4.3). He stated that buildings consume 70 percent of electricity in the United States and that HVAC accounts for 40 percent of this consumption. Buildings have large thermal capacity, and modern buildings have fast-responding equipment (e.g., variable-frequency drives) that Meyn said allows them to be ramped up or down rapidly. In this Florida experiment, he explained that the airflow rate in the building was modulated by 10 percent up or down. This modulation, for a building of 40,000 square feet with three air handler fans each operating on a 25-kW motor, achieved a regulation reserve of more than 10 kW at the “cost” of a temperature deviation of less than 0.2 percent.

This example, Meyn explained, is illustrative of the tens of gigawatts of reserve available from commercial buildings in the United States. He said these buildings are well suited to balancing reserves and other high-frequency regulation resources, and this reserve source functions better than any generator. Meyn noted that computing baselines is no longer important because a utility or aggregator is responsible for the equipment and compensates the building owner accordingly.

Swimming pools in Florida provide another opportunity for the electrical industry, according to Meyn. The average pool filtration system circulates and cleans for 6 to 12 hours every day and uses approximately 1.3 kW per week. This filtration is usually invisible to owners unless the pool is not getting clean enough, so Meyn believes this load could, in principle, be cycled on and off to balance overall system loads. He said a randomized control strategy could be developed to turn pool pumps on or off according to a probability that depends on each pool’s internal state and the utility’s regulation signal. A mean-field model is used for this analysis, and the input-output system is stable and passive as desired.
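To make the idea of a randomized strategy concrete, the following sketch simulates individual pumps that each decide locally whether to switch. Everything specific here is an assumption made for illustration: the exponential form of the switching probability, the parameters `base_rate` and `gain`, and the sign convention for the broadcast signal `zeta` are hypothetical and are not taken from Meyn's model. It is an agent-level simulation, not the mean-field analysis mentioned above.

```python
import math
import random


def switch_probability(hours_in_state, zeta, on, base_rate=0.1, gain=1.0):
    """Hypothetical probability that a pump changes state in the next hour.

    hours_in_state -- time the pump has spent in its current state
    zeta           -- broadcast signal; positive values ask for more load
    on             -- True if the pump is currently running
    """
    # The longer a pump has been in its state, the more willing it is to switch.
    nominal = 1.0 - math.exp(-base_rate * hours_in_state)
    # A positive signal discourages switching off and encourages switching on.
    tilt = math.exp(-gain * zeta) if on else math.exp(gain * zeta)
    return min(1.0, nominal * tilt)


def step(pumps, zeta, rng):
    """Advance every pump by one hour; each pump is a list [on, hours_in_state].

    Returns the number of pumps running after the step.
    """
    for pump in pumps:
        on, hours = pump
        if rng.random() < switch_probability(hours + 1, zeta, on):
            pump[0], pump[1] = (not on), 0   # switch and reset the timer
        else:
            pump[1] = hours + 1              # stay and keep counting
    return sum(1 for on, _ in pumps if on)


# Same starting population and random draws, opposite signals.
asked_up = [[False, 5] for _ in range(1000)]
asked_down = [[False, 5] for _ in range(1000)]
running_up = step(asked_up, zeta=2.0, rng=random.Random(0))
running_down = step(asked_down, zeta=-2.0, rng=random.Random(0))
```

Because each pump only needs its own state and the common signal, no per-pump command from the utility is required in this sketch.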

Meyn concluded his talk by providing some supplementary references relating to these topics: Brooks et al. (2010); Callaway and Hiskens (2011); Hao et al. (2012); Hao et al. (2014); Meyn (2007); Meyn et al. (2014); Meyn and Tweedie (1993); Schweppe et al. (1980); and Parashar et al. (2004).

In a later breakout session discussion, a participant noted that there would not be much need for ancillary services if all the distributed resources were utilized. Specifically, the participant commented that two key questions need to be considered: How are benefits monetized to consumers? How is synchronization mathematically posed and solved? The participant believes the answer may lie in the successful FERC Order 755 (137 FERC ¶ 61,064) two-part payment scheme, or contracts for services currently adopted by EnerNOC and New Jersey Power and Light.

ADVANCES IN MIXED-INTEGER PROGRAMMING AND THE IMPACT ON MANAGING ELECTRIC POWER GRIDS

Robert Bixby, Gurobi Optimization

Robert Bixby discussed advances in mixed-integer programming (MIP) and the impact on managing electric power grids. He began by giving an early history of linear programming (LP). In 1947, George Dantzig invented the simplex algorithm, which gave a global view of solutions and paved the way for four Nobel Prizes in economics. In 1951, the first computer code for LP was developed using the simplex method, and by 1960 LP had become commercially viable and was being used extensively in the oil industry for refinery blending models. Around 1970, MIP, where some or all of the variables are forced to take only integral values, became commercially viable, although it took several more years for it to become widespread.

Interest in optimization expanded during the 1970s, Bixby explained, and numerous new applications of mixed-integer problems were identified. However, significant difficulties emerged. Building applications was expensive and risky, often requiring 3- to 4-year development cycles. The technology was not ready; linear programs were hard to solve, and MIP was even more difficult. In the mid-1980s, Bixby stated, there was a perception that LP software had progressed as far as it could go. He noted, however, that there were several key developments that pushed the field forward, such as desktop computing (dating from the introduction of the IBM PC in 1981) and Karmarkar’s 1984 proof that interior-point methods could run in polynomial time. In the 1990s, he said, LP took off and MIP was demonstrated to work on some difficult, real problems such as airline scheduling and supply-chain scheduling.

Today, practitioners consider LP to be a solved problem, said Bixby. Large models, with millions of variables and constraints, can now be solved robustly and quickly. Challenges are still associated with MIP, Bixby commented, owing to the requirement for integer values, but this approach allows practitioners to model decision problems in many more application areas.1 Bixby first successfully applied MIP to the electrical power industry in 1999 using the California 7-day Model.

The current MIP solution framework is a branch-and-bound approach, Bixby explained, where the integer restrictions are initially relaxed and the problem solved using LP. A variable that takes a non-integer value in that solution is then examined, and the problem is branched into two subproblems, one rounding the value down and one rounding it up to the nearest integer. He elaborated that the problem is then re-solved with these restrictions, and the next non-integer solution value is examined. This will ultimately result in several integer-feasible solutions to the problem, and the minimizing solution is selected.
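The branch-and-bound idea can be illustrated on a small 0/1 knapsack problem, chosen here because its LP relaxation can be solved by a greedy fill and so needs no solver library. This is a teaching sketch under several assumptions and not how a commercial MIP code works: the problem is a maximization (the text speaks of minimization), branching follows a fixed item order and does not select the fractional variable, and the function name `knapsack_branch_and_bound` is hypothetical.

```python
def knapsack_branch_and_bound(values, weights, capacity):
    """Best total value of items chosen 0/1 within capacity (weights must be > 0)."""
    # Sorting by value density lets the LP relaxation be solved greedily.
    items = sorted(zip(values, weights), key=lambda vw: vw[0] / vw[1], reverse=True)
    best = 0

    def relaxation_bound(k, value, room):
        """Value of the LP relaxation over items k onward (fractions allowed)."""
        for v, w in items[k:]:
            if w <= room:
                value, room = value + v, room - w
            else:
                return value + v * room / w  # take a fraction of this item
        return value

    def branch(k, value, room):
        nonlocal best
        if k == len(items):
            best = max(best, value)          # all variables fixed: a full solution
            return
        if relaxation_bound(k, value, room) <= best:
            return                           # bound: this subtree cannot improve
        v, w = items[k]
        if w <= room:
            branch(k + 1, value + v, room - w)   # branch "up": item k taken
        branch(k + 1, value, room)               # branch "down": item k skipped

    branch(0, 0, capacity)
    return best


best_value = knapsack_branch_and_bound([60, 100, 120], [10, 20, 30], 50)
```

The pruning test is what keeps the search from enumerating every combination: a subproblem is abandoned as soon as its relaxed optimum cannot beat the best integer solution found so far.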

Bixby also outlined the computational history for integer programming. Dantzig, Fulkerson, and Johnson (1954) solved a 42-city traveling salesman problem to optimality using LP. Gomory (1958) introduced cutting-plane algorithms. Land and Doig (1960) and Dakin (1965) introduced branch-and-bound algorithms. The first commercial application solved with MIP was in 1969. In the 1970s, two cutting-edge commercially viable codes were introduced for the IBM 360 computer, MPSX/370 in 1974 and SCICONIC in 1976, that implemented an LP-based branch-and-bound. From 1975 to 1998, Bixby said, good branch-and-bound techniques remained the state of the art in commercial codes, in spite of many new advances in combinatorial optimization, including polyhedral combinatorics (Edmonds, 1965a,b, 1970), cutting planes (Padberg, 1973), revisited Gomory (Chvátal, 1973), disjunctive programming (Balas, 1974), PIPX and pure 0/1 MIP (Crowder et al., 1983), MPSARX and mixed 0/1 MIP (Van Roy and Wolsey, 1987), and the traveling salesman problem (Grötschel and Padberg, 1985; Padberg and Grötschel, 1985).

In 1998, Bixby said, a new generation of MIP codes came into existence. The biggest two advances for MIP were presolve and cutting planes. Presolve examined user input for logical reduction opportunities in order to reduce the size of

____________________

1 The list of application areas is long and includes accounting, advertising, agriculture, airlines, ATM provisioning, compilers, defense, electrical power, energy, finance, food service, forestry, gas distribution, government, Internet applications, logistics/supply chain, medical, mining, national research laboratories, online dating, portfolio management, railways, recycling, revenue management, semiconductors, shipping, social networking, sourcing, sports betting, sports scheduling, statistics, steel manufacturing, telecommunications, transportation, utilities, and workforce management.

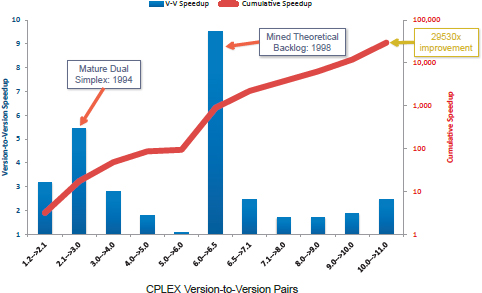

FIGURE 4.5 MIP computing time advancements. SOURCE: Robert Bixby, Gurobi, presentation to the workshop.

the problem passed to the requested optimizer, thereby reducing total run time. Cutting-plane methods refined the objective function using linear inequalities. Other advancements include improvements in LP, variable/node selection, primal heuristics, and node presolve. Figure 4.5 illustrates this rapid speedup.

Bixby recounted some major uses of optimization in ISOs and the value that MIP has provided. Optimization plays a central role in ISO operations, addressing, among others, the following three problems:

- Day-ahead problem. This is the unit commitment problem for the next day. For the New York ISO, for example, markets close at 5 a.m. and commitments must be posted by 11 a.m. Windows of only about 30 minutes are available to compute commitments.

- Real-time power dispatch problem. This is same-day unit commitment. These problems are solved every 5 minutes at the New York ISO.

- Real-time dispatch problem. These problems determine generator clearing prices, and they are likewise solved every 5 minutes at the New York ISO, using an LP. They are comparatively simpler than the first two problems.
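To show why unit commitment is naturally a mixed-integer problem, a deliberately simplified textbook-style formulation is given below. It is an illustrative assumption and not any ISO's actual model: all symbols are introduced here for the example, and real formulations add startup costs, ramp limits, minimum up and down times, reserves, and network constraints.

$$
\begin{aligned}
\min_{u,\,p}\quad & \sum_{t=1}^{T}\sum_{g=1}^{G}\bigl(c_g\,p_{g,t} + f_g\,u_{g,t}\bigr) \\
\text{s.t.}\quad & \sum_{g=1}^{G} p_{g,t} = d_t, && t = 1,\dots,T, \\
& P_g^{\min}\,u_{g,t} \le p_{g,t} \le P_g^{\max}\,u_{g,t}, && g = 1,\dots,G,\ t = 1,\dots,T, \\
& u_{g,t} \in \{0,1\}, && g = 1,\dots,G,\ t = 1,\dots,T.
\end{aligned}
$$

Here $u_{g,t}$ indicates whether generator $g$ is committed in period $t$, $p_{g,t}$ is its output, $d_t$ is the demand, $c_g$ and $f_g$ are assumed marginal and fixed running costs, and $P_g^{\min}$, $P_g^{\max}$ are output limits. Once the binary commitments $u_{g,t}$ are fixed, what remains is an LP in the outputs $p_{g,t}$, which is consistent with the dispatch problem being the simplest of the three.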

Bixby commented that Lagrangian relaxation, which has been in use since the early 1980s, has greatly facilitated these optimizations. Because the technique appeared to produce high-quality solutions with good solution times, there was a considerable and understandable reluctance to move away from it. However, in 1999, MIP was demonstrated to be a viable alternative, thus pressuring users to switch methods. For example, Bixby said that PJM Interconnection implemented MIP in its day-ahead market in 2004 and estimated its annual production cost savings at $60 million. Two years later, PJM implemented MIP in its real-time market look-ahead, and tests showed $100 million in annual savings. In April 2009, CAISO implemented MIP as part of its Market Redesign and Technology Update with an estimated savings of $52 million. In 2009, the Southwest Power Pool introduced MIP enhancements to its day-ahead market with an estimated $103 million in annual benefits (FERC, 2011b).

Bixby concluded by explaining that MIP is the preferred approach for three reasons:

- Maintainability. The alternative Lagrangian relaxation codes were approximately 100,000 lines of Fortran code and were understood by only one or two people within an organization.

- Transparency. MIP formulation has a much simpler representation with separate model and algorithm components, which are easy to read, interpret, and maintain. The solutions are also much easier to defend against legal challenges.

- Extensibility. It is extremely difficult to add constraints to Lagrangian relaxation codes, while they can be easily added to MIP formulations.