3

Producing High-Quality Economic Evidence to Inform Investments in Children, Youth, and Families

Three of the major economic evaluation methods that can be applied to interventions1 serving children, youth, and families identified in Chapter 2 are cost analysis (CA), cost-effectiveness analysis (CEA), and benefit-cost analysis (BCA). These methods can be used to address a number of important questions relevant to decisions about intervention investments. For example, What does it cost to fully implement a given intervention? If an investment is made, what can be expected to be gained in return (e.g., outcomes, dollars, or overall better quality of life)? Is the investment a justifiable use of scarce resources relative to other investments?

Economic evidence generated by these methods can inform investment decisions, but barriers to using this evidence exist. As noted in Chapter 2, some of these barriers relate to the quality of the economic evidence produced. High-quality economic evidence can be difficult to derive because economic evaluation methods are complex and entail many assumptions (Crowley et al., 2014; Lee and Aos, 2011; Vining and Weimer, 2009a). Moreover, methods are often applied inconsistently in different studies, making results difficult to compare and use appropriately in policy and investment contexts (Drummond and Sculpher, 2005; Foster et al., 2007; Institute of Medicine and National Research Council, 2014; Karoly, 2012; Weinstein et al., 1997). Results also may be communicated in a way that obscures important findings, is not suited for nonresearch audiences, or is not deemed reliable or compelling by decision makers (National Research Council and Institute of Medicine, 2009; Oliver et al., 2014; Pew-MacArthur Results First Initiative, 2013). Shortcomings in these areas may not only limit decision makers’ use of economic evidence but also reduce their demand for such evidence, as well as other types of evidence, in the future.

___________________

1 As noted in Chapter 1, the term intervention is used to represent the broad scope of programs, practices, and policies that are relevant to children, youth, and families.

The primary aim of this chapter is to examine issues associated with the quality of economic evidence, and thus to address the first of this study’s two guiding principles, as described in Chapter 1: quality counts. As noted in Chapter 2, the quality of economic evidence is essential to its utility and ongoing use. Thus, a major goal of this chapter is to help current and would-be producers of economic evidence understand when interventions are ready for economic evaluation and what it takes to produce and report high-quality economic evidence. In several instances, the chapter identifies emerging issues—such as the importance of incorporating the impact of intervention investments on participants’ quality of life—that merit further investigation to determine their applicability to economic evaluation of investments in children, youth, and families.2

In focusing on the quality of economic evidence, the committee drew on the literature and the expertise of its members to identify best practices that can both support high-quality economic evaluation and potentially lead to greater standardization of evaluation methods. Standardization is particularly important because decisions to invest in interventions for children, youth, and families typically involve weighing alternatives in the face of limited budgets; other constraints; and, perhaps, competing values. The use of differing methods to estimate the costs and benefits of alternative investments impedes understanding the economic trade-offs involved and limits the utility of the evidence. At the same time, it is important to recognize the potential disconnect between ideal practice and the real-world analytic issues and constraints that producers of economic evidence encounter. Where possible, this chapter provides strategies for addressing such practical limitations. In addition, the best practices for producing high-quality evidence recommended at the end of the chapter are divided into those that can be viewed as “core” and readily implemented in most circumstances and those the committee characterizes as “advancing,” to be pursued when feasible.

The focus of this chapter extends to highlighting best practices for reporting the results of economic evaluations in a consistent and transparent manner. Findings need to be communicated in ways that facilitate understanding, acknowledge limitations, and support their appropriate use in investment decisions. Achieving such transparency and utility is not a small task given the complexity, multiple assumptions, and various sources of uncertainty entailed in the use of economic evaluation methods. Nonetheless, the chapter offers guidelines that in the committee’s view can enhance the utility and use of economic evidence while maintaining scientific rigor.

___________________

2 In this chapter, the committee discusses at some length both the strengths and limitations of economic evaluations and the economic evidence produced. The committee recognizes that, based on the current state of the field, there is no perfect solution for every issue that is discussed herein. Although economic evidence has its limitations, the hope is that stakeholders, to the extent possible, follow good practices, are transparent about these practices, and understand, whether they are producers or consumers, what can and cannot be derived from economic evaluations.

It should be noted that a well-established literature on best practices in the conduct and reporting of CAs provided a solid foundation for the CA-related conclusions and recommendations offered in this chapter. Best practices in CEA in health and medicine, initially established in 1996 (Gold et al., 1996), are currently under review by the 2nd Panel on Cost-Effectiveness Analysis in Health and Medicine.3 In addition to the best practices pertinent to CEA identified in this chapter, interested readers are encouraged to turn to this panel’s recommendations when they are available. Best practices in the application of BCA to investments in children, youth, and families have just begun to appear in the literature, so the committee’s conclusions and recommendations on this method are based on the consensus view of the committee members, incorporating perspectives from the available literature and papers and panels sponsored for this study.

Finally, although much of this chapter is directed at producers of economic evidence, its content should also be of interest to consumers of the evidence. Consumers can benefit from understanding the analytic issues associated with planning for and conducting economic evaluations, the best practices for the production and reporting of economic evidence, and the limitations of economic evaluation methods. Similarly, producers of the evidence would benefit from understanding the issues raised in Chapter 4, which deals with how consumers use the economic evidence they receive, even if it is of the highest quality, and the context in which investment decisions are made.

The first two sections of this chapter outline issues pertinent to all types of economic evaluation: determining whether an intervention is ready for economic evaluation and defining the scope of the evaluation. Next is a discussion of issues specific to evaluating intervention cost (relevant to CA and, by extension, to CEA and BCA), determining intervention impacts (relevant to CEA and BCA), and valuing outcomes (relevant particularly to BCA and related methods). Sections then follow on the development and reporting of summary measures for the results of CA, CEA, and BCA; how the uncertainty intrinsic to economic evaluations can be handled; and how equity considerations can be addressed. The chapter closes with the committee’s recommendations regarding best practices for producing and reporting high-quality economic evidence.

___________________

3 For more information on this effort, see http://2ndcep.hsrc.ucsd.edu/list.html [March 2016].

DETERMINING WHETHER AN INTERVENTION IS READY FOR ECONOMIC EVALUATION

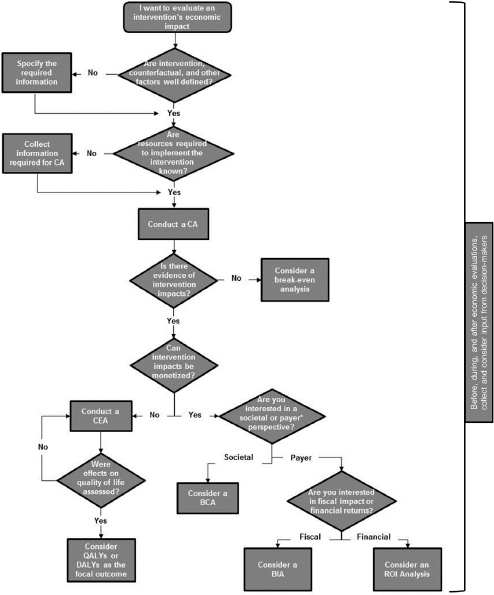

As discussed in Chapter 2, economic evaluation encompasses an array of methods used to answer questions about the economic value of the resources required to implement an intervention, alone or with reference to the intervention’s impact, measured in terms of the outcomes affected or the economic value of those outcomes. Determining whether an intervention is ready for economic evaluation and if so, which evaluation method to use, depends on the question(s) of interest and the information available. This section highlights the requirements for undertaking a high-quality economic evaluation, beginning with the most general requirements and then focusing on those that are specific to different economic evaluation methods. Figure 3-1 provides a decision tree used to guide the discussion.

Intervention Specificity, Counterfactual, and Other Contextual Features

For all types of economic evaluation, whether ex post or ex ante, two essential requirements are that the intervention be clearly defined and the counterfactual condition be well specified (Figure 3-1).

Intervention specificity means that the intervention’s specific purpose, intended recipients, approach to implementation, causal mechanisms, and intended impact can be described in sufficient detail. For an ex post analysis, this specificity means that others can replicate the intervention or apply it in new settings or with new populations (Calculating the Costs of Child Welfare Services Workgroup, 2013; Foster et al., 2007; Gottfredson et al., 2015). For an ex ante analysis, it means that consumers of the analysis understand the nature of the intervention being analyzed.

In the context of an ex post analysis, a logic model describing the intervention’s theory of change, or mechanisms by which its impact is achieved, is useful in establishing specificity, as are written curricula, manuals, detailed policy plans, and other documents outlining how the intervention is to be implemented and how staff implementing it are to be trained and supported in carrying it out effectively. Many interventions meet this requirement and have published manuals and logic models or explicit theories of change (Gill et al., 2014; Hawkins et al., 2014; Hibbs et al., 1997; Smith et al., 2006). Guidelines for developing logic models where they do not exist are also readily available (Centers for Disease Control and Prevention, 2010; W.K. Kellogg Foundation, 2004).

NOTES: This decision tree highlights the major types of economic evaluation based on how the estimate will inform the intervention investment and the available information. BCA = benefit-cost analysis, BIA = budgetary impact analysis, CA = cost analysis, CEA = cost-effectiveness analysis, DALY = disability-adjusted life year, QALY = quality-adjusted life year, ROI = return on investment.

*Payers may include employers, government, the health care system, or recipients of the intervention.

The second key requirement is defining the counterfactual, the alternative with which the intervention is being compared in the economic evaluation, whether the evaluation is an ex post or ex ante CA, CEA, or BCA. In the context of program evaluation, this is usually referred to as the control, status quo or baseline, or comparison condition. The counterfactual condition may be no intervention, the status quo, or business as usual (e.g., an existing intervention), or it may be a less intensive version of the intervention of interest. For example, a school-based teen pregnancy prevention intervention might be evaluated in a community where there was no current intervention, where there was an existing intervention (school- or community-based), or where there was an intervention that provided information materials only but no other services. Defining the counterfactual is key, as a CA will be based on measuring the resources used to implement the intervention relative to the counterfactual condition. If a CEA or BCA is to be performed, intervention impacts should be measured relative to the same counterfactual condition as that used for the CA.

Clarifying other aspects of the context in which the intervention has been or will be carried out is necessary for interpreting the results of economic evaluation. Additional contextual details—such as the sociodemographic characteristics of the population targeted and served; the time, place, and scale of implementation; and other elements detailed in Consolidated Standards of Reporting Trials (CONSORT) guidelines (Schulz et al., 2010)—can also aid interpretation, help consumers understand the circumstances under which economic evidence is likely to apply, and guide appropriate use of the evidence in decision making. Without a clear understanding of the base case, the counterfactual, and other contextual factors—“what is delivered for whom, under what conditions, and relative to what alternative”—interpretation of the results of economic evaluation will be muddy.

Other Requirements for Economic Evaluation

Provided that an intervention is well defined and the counterfactual and other contextual factors can be specified, a CA can be performed to understand the economic cost of the resources required for implementation or to provide the foundation for a CEA or BCA. As discussed later in this chapter, conducting a CA, CEA, or BCA requires estimates of the resources used in intervention implementation and the economic values to attach to those resources (Figure 3-1). Later in the chapter, in the discussion of CA as a stand-alone analysis or as a component of CEA or BCA, best practices for measuring the resources used and their values are reviewed.

When investors have more complex questions than cost, such as which interventions are expected to yield the greatest impact for a given investment or which investments are likely to generate positive returns, evidence of intervention impact also is needed so that CEA or BCA can be performed (Figure 3-1) (Jamison et al., 2006; Lee and Aos, 2011; Levin and McEwan, 2001). Issues related to the nature of the evidence of impact are considered later in this chapter. When CA is possible but evidence of intervention impact is not available, Figure 3-1 shows that a break-even analysis can be performed to determine how large impacts would need to be for an intervention to be deemed cost-effective or cost-beneficial, provided that potential intervention impacts can be monetized. When intervention impacts are available and the impacts can be monetized, Figure 3-1 indicates that a BCA can be conducted; otherwise, a CEA is a feasible alternative.
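The sequence of checks described above can be sketched in a few lines of Python. This is an illustrative reading of the text only, not a reproduction of Figure 3-1; the function names, argument names, and returned labels are assumptions introduced for the example.

```python
def choose_method(intervention_defined, counterfactual_specified,
                  resource_data, impact_evidence, impacts_monetizable):
    """Suggest an evaluation method from the readiness checks in the text.

    All argument names and return labels are hypothetical; they only mirror
    the order of the conditions discussed in this section.
    """
    # General requirements that apply to every type of economic evaluation.
    if not (intervention_defined and counterfactual_specified):
        return "not ready"
    # Any CA, CEA, or BCA needs estimates of the resources used.
    if not resource_data:
        return "not ready"
    # Without impact evidence, only cost-based analyses are possible.
    if not impact_evidence:
        return "CA with break-even analysis" if impacts_monetizable else "CA"
    # With impact evidence, monetizable impacts allow a BCA; otherwise a CEA.
    return "BCA" if impacts_monetizable else "CEA"


def break_even_impact(cost_per_participant, value_per_unit_outcome):
    """Impact per participant at which monetized benefits just equal costs."""
    return cost_per_participant / value_per_unit_outcome


# Hypothetical figures: a $5,000 intervention and an outcome valued at $20,000
# per unit would need to change the outcome by 0.25 units per participant.
print(choose_method(True, True, True, False, True))
print(break_even_impact(5000.0, 20000.0))
```

The break-even function assumes a single monetized outcome; an intervention with several outcomes would require a value for each.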

CONCLUSION: Key requirements for all types of economic evaluation are that the intervention can be clearly defined, the counterfactual well specified, and other contextual features delineated. To conduct cost analysis, cost-effectiveness analysis (CEA), and benefit-cost analysis (BCA), information on the resources used to implement the intervention is required. For CEA or BCA, credible evidence of impact also is needed.

DEFINING THE SCOPE OF THE ECONOMIC EVALUATION

Once it has been determined that an economic evaluation is feasible, an essential next step is to define key elements of the evaluation’s scope. These include the perspective for the analysis, the time horizon and discount rate, and several other analytic features.

Perspective

The perspective for an economic evaluation is determined by the question(s) to be answered and the audience(s) for the analysis (Figure 3-1). The broadest perspective is the societal perspective, which captures the public and private sectors and includes individuals who may be the focus of the intervention, as well as those who may be affected only indirectly. CA, CEA, and BCA all can be conducted from a societal perspective, with all costs being captured regardless of who bears them, and the economic values associated with all outcomes accounting for all who gain or lose. An economic evaluation conducted from the societal perspective can be disaggregated to consider the results from the perspective of specific stakeholder groups: the individuals who are targeted or served by the intervention; other individuals in society who are not targeted or served by the intervention; and the public sector at all levels of government combined or further disaggregated to consider the federal, state, and local levels separately or even different agencies at a given level. The public sector can also be viewed as representing the costs and benefits borne by individuals as taxpayers. Providing this detail is particularly useful in showing how costs and benefits of an intervention are distributed to various interested parties. For example, an intervention with a small but positive net benefit could mask losses to participants that were offset by public sector savings. Though a favorable investment overall, an intervention with such a distribution of costs and benefits may not be appealing to investors valuing gains to participants over government savings. Further discussion of perspectives is included later in this chapter in the section on best practices for conducting cost analyses.

For some economic evaluations, the primary focus may reflect mainly or solely a government perspective, which is just one component of the societal perspective. As noted in Chapter 2, cost-savings analysis is a BCA from the government perspective (Figure 3-1). The government perspective may be even more narrowly focused, such as for a specific government agency or level of government (e.g., federal, state, or local). Economic evaluations also can be conducted from the private perspective of a specific stakeholder, such as a business, philanthropy, or private investor. When an analysis is conducted for a specific stakeholder, its conclusions will reflect that stakeholder’s perspective but may fail to capture the full range of costs and benefits of the intervention being analyzed, and the excluded costs and benefits may be substantial. For example, focusing on the government perspective may fail to provide important information about how an intervention impacts the participants involved, in terms of costs borne or gains received from participating in the intervention. In contrast, the advantage of the societal perspective is that in the ideal, it provides a comprehensive accounting of all costs and benefits.

At the same time, when the societal perspective is adopted, it is important to disaggregate societal costs and benefits into those that accrue to the private sector (e.g., to intervention participants and other members of society) and those that accrue to the public sector (e.g., to the government as a whole or subdivisions of the public sector). The justification for public-sector investments in children, youth, and families is strongest when there are positive net benefits to the public sector and the rest of society, in addition to any private returns to the individual participants. Private individuals may underinvest in those areas (e.g., health, education) where the private returns are less than the social returns (i.e., there are also returns to the public sector or other members of society). Conversely, if the only returns to an investment are private, there is little justification for a public-sector investment.4
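A minimal sketch of such a disaggregation follows. The stakeholder groups and dollar amounts are hypothetical and chosen only to show how a positive societal net benefit can coexist with a net loss to participants.

```python
# Hypothetical present-value costs and benefits per participant, by stakeholder.
flows = {
    "participants": {"costs": 500.0, "benefits": 300.0},
    "government": {"costs": 2000.0, "benefits": 2600.0},
    "rest of society": {"costs": 0.0, "benefits": 100.0},
}


def net_benefits_by_stakeholder(flows):
    """Net benefit (benefits minus costs) for each stakeholder group."""
    return {group: v["benefits"] - v["costs"] for group, v in flows.items()}


def societal_net_benefit(flows):
    """Societal net benefit as the sum over all stakeholder groups."""
    return sum(net_benefits_by_stakeholder(flows).values())


for group, nb in net_benefits_by_stakeholder(flows).items():
    print(f"{group}: {nb:+.0f}")
print(f"society: {societal_net_benefit(flows):+.0f}")
```

In this illustration the societal total is positive even though participants bear a net loss, which is the kind of distributional detail an aggregate figure would hide.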

___________________

4 Disaggregating can show how costs, benefits, and net benefits are distributed to different stakeholders, both in total as well as over the time period of interest (e.g., annual costs and benefits to each stakeholder from intervention’s start through year 5). Stakeholders included in the analysis should be meaningful to the program and/or policy question. For example, costs and benefits to participants and to taxpayers who may finance and also benefit from the intervention are often included in stakeholder analysis, and there may be additional groups to incorporate as well. It is important to align the stakeholder groups on the cost and benefit sides of the analysis. That is, both the costs and benefits to each stakeholder group of interest should be estimated.

Time Horizon and Discounting

One feature of investments in children, youth, and families is that an intervention may take place over multiple years, and its impact may extend over long periods of time, sometimes covering the entire life course and even affecting future generations. For this reason, it is important to define the time horizon that will be applied to the economic evaluation and whether the stream of future values associated with the resources used to implement the intervention and the outcomes that result will be discounted.

Establishing the time horizon is relatively straightforward. At a minimum, the time horizon will typically include the period over which the intervention is implemented. For discrete interventions, such as an early childhood or youth development program, implementation will occur over a fixed number of years. Depending on the length of the follow-up period, outcomes may be observed only during the intervention period, or they may extend further into the future if participants are followed after the intervention ends. There may also be interest in projecting outcomes beyond the period when outcomes were last measured. Such projections may extend over an individual’s lifetime or even to future generations. As discussed later in the chapter, such projections introduce additional uncertainty into the results of an economic evaluation.

The issue of discounting arises because economists often assume that individuals and society place a higher value on costs and outcomes occurring in the present than on those that will occur in the future. Two common arguments to justify this assumption are (1) that money and other resources available today can be invested or used in some way to enjoy more benefits later on than would be realized if the same resources were available only in the future, and (2) that having those resources today eliminates any uncertainty of having them in the future (Miller and Hendrie, 2013).

Discounting is a technique used in economic evaluations to adjust costs and outcomes to account for this premium placed on benefits accrued closer to the present. For economic evaluations focused on children, youth, and families, the social discount rate is appropriate (Boardman and Greenberg, 1998). The standard approach to discounting is analogous to the process of compounding interest: a stream of costs or outcomes is reduced to its present value by applying a compounded discount rate to future streams.

Because higher discount rates lead to higher valuation of outcomes occurring in the present relative to those occurring in the future, the discount rate is a key choice in economic evaluation, especially for interventions with significant impacts over long periods of time. The discount rate used in studies reflects the value of a dollar today versus that of a dollar tomorrow at a particular margin, determined largely by what return is required to attract the last dollar of saving. A discount rate also may vary by whether one uses a risky or riskless return. Discount rates used in economic evaluation have varied widely, although recommendations in recent years appear to be settling in the range of 3-7 percent (Drummond et al., 2005; Gold et al., 1996; Haddix et al., 2003; Hunink et al., 2001; Office of Management and Budget, 2003; Washington State Institute for Public Policy, 2015). A later section of this chapter describes the practice of using a base discount rate and then assessing the sensitivity of the results of the economic evaluation using a range of alternative discount rates.

Although there is little disagreement on the validity of discounting intervention costs, more controversy is associated with the issue of whether other outcomes, health in particular, should be discounted, and at what rate. One argument in favor of discounting health outcomes focuses on uncertainty: individuals would prefer to postpone illness because (1) they may not even be alive in the future, and (2) future medical progress could reduce the negative effects of the same illness occurring today (Miller and Hendrie, 2013). Some argue, moreover, that health outcomes should be discounted at the same rate as costs to avoid a paradox that arises when health is discounted at a lower rate than costs: the economic performance of an intervention may sometimes be improved by delaying its implementation, since the same health benefits could be achieved at a lower (discounted) cost simply by waiting (Gold et al., 1996; Keeler and Cretin, 1983; Weinstein and Stason, 1977). As noted by Drummond and colleagues (2005, p. 111), most guidelines for economic evaluation of health interventions, including those of the U.S. Panel on Cost-Effectiveness in Health and Medicine and the World Health Organization, recommend discounting of both costs and health outcomes using the same rate. For example, if an obesity prevention intervention is conducted when children are 10 years old and is expected to reduce the probability of cardiovascular disease (CVD) in the 50th future year, then the benefits of the intervention (either in natural units in a CEA, such as cases of CVD avoided, or in monetary benefits in a BCA) are valued at benefit/(1 + discount rate)^50. At a 10 percent discount rate, a $1,000 benefit realized in that 50th year would have a present value of about $9; at a 3 percent rate, about $228. Note that future health costs and outcome benefits are discounted at the same rate as the cost saving and outcome benefit. For obesity prevention, for example, costs and benefits will be the same for people of different ages.
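The arithmetic in the obesity prevention example can be checked with a short Python sketch. The function names are introduced here for illustration, and the 7 percent rate is included only because it is the upper end of the range of recommended rates mentioned earlier in this section.

```python
def present_value(amount, rate, years):
    """Present value of a single amount received `years` from now."""
    return amount / (1.0 + rate) ** years


def present_value_of_stream(amounts, rate):
    """Present value of a stream; amounts[t] occurs t years from now (t = 0 is undiscounted)."""
    return sum(a / (1.0 + rate) ** t for t, a in enumerate(amounts))


# A $1,000 benefit realized 50 years from now, at several discount rates.
for rate in (0.03, 0.07, 0.10):
    print(f"{rate:.0%}: ${present_value(1000.0, rate, 50):,.0f}")
```

The same stream function can be applied to annual costs and to annual benefits so that both are expressed in present-value terms at a common rate.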

With investments in health interventions for children, of course, most benefits accrue over time, as in better educational and then work outcomes due to better health. It is the accumulation of all those benefits over time that is typically to be compared with current costs. For many studies, the greater danger is that future benefits simply are not estimated or cannot easily be estimated, rather than that long-term benefits are discounted too heavily.

A related and more complex ethical issue arises from discounting any intervention with impacts that affect future generations, or comparing benefits for a younger generation with costs to an older one. Miller and Hendrie (2013, pp. 356-357) give the example of a hypothetical environmental regulation targeting global climate change, which could affect outcomes of today’s children and of children centuries into the future. Even using a low discount rate, outcomes just a century away would have present values so low that an economic evaluation would likely favor investments that would avoid even small sacrifices in the present, at the cost of potentially significant harm for future generations.

Part of the complication here is that discount rates assume investments apply at the margin, so that an extra benefit (valued in dollar terms) may be worth less to a future generation, expected to be richer, than to the current one. But if comparisons are made with respect to the value of a life today versus a life tomorrow, then the implicit assumption that the calculation applies at the margin no longer obtains. Put another way, there is no case for valuing a life tomorrow less than a life today, even if an extra lifetime dollar is worth more to the older of two generations.

Solutions suggested for avoiding this problem include starting the “discounting clock” when those affected are born, using a zero discount rate, and eliminating the need for discounting by assuming an explicit social utility function (Cowen and Parfit, 1992; Miller and Hendrie, 2013; Schelling, 1995). A broader suggestion, acknowledging that there is no satisfactory solution to this issue, is to consider moral obligations to future generations separately from the question of discounting practice (Institute of Medicine, 2006).

Finally, Karoly (2012) highlights an issue especially relevant to early childhood. Early programs can start at various child ages, from before birth up to age 5. If discounting originates at the age a program starts, some studies will discount to birth, while others will discount to as late as age 4. In such cases, present-value estimates will not be comparable across studies. The same concern applies in comparing interventions at other stages of development. Unless interventions are discounted to the same age, present-value estimates will not be comparable.5
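One way to place estimates on a common footing is to re-express each present value at the same reference age. The sketch below assumes a constant annual discount rate and a hypothetical function name; it illustrates the arithmetic and is not a procedure prescribed by the studies cited.

```python
def rebase_present_value(pv, from_age, to_age, rate):
    """Re-express a present value computed at `from_age` as of `to_age`.

    Moving the reference point earlier (to_age < from_age) shrinks the value;
    moving it later enlarges it. A constant discount rate is assumed.
    """
    return pv * (1.0 + rate) ** (to_age - from_age)


# Hypothetical example: a $10,000 net benefit discounted to age 4,
# re-expressed as of birth at a 3 percent rate.
print(round(rebase_present_value(10000.0, from_age=4, to_age=0, rate=0.03), 2))
```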

Other Analytic Features

Two other analytic features to determine at the outset of an economic evaluation are (1) the monetary unit and year in which all economic values will be denominated, and (2) whether to account for the deadweight cost of taxation.6 For the United States, economic evaluations typically use dollars as the currency measure, but any currency is feasible provided resources used and the value of intervention outcomes can be denominated in that currency. To adjust for changes in prices over time, economic evaluations measure the opportunity cost of resources and the economic value of outcomes in inflation-free monetary units, using a base year as reference. Thus, prices of resources used or outcome values before the base year are inflated using changes in relevant price indices (e.g., the consumer price index or employment cost index in the United States), and prices of resources used or outcome values in the future are held constant at the base year levels. The year of measurement may be specific to the point in time at which costs and outcomes were measured, or monetary values may be inflated to a more recent year so that findings can be expressed in current monetary values. As discussed later in this chapter in the section on reporting, the year for which monetary units are valued—whether intervention costs or the value of intervention outcomes—needs to be clearly stated.
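The base-year adjustment described above amounts to scaling a dollar amount by the ratio of two price index values. In the sketch below, the index values are hypothetical placeholders rather than published consumer price index figures, and the function name is an assumption for illustration.

```python
# Hypothetical price index values by year (not actual published index data).
price_index = {2005: 80.0, 2010: 90.0, 2015: 100.0}


def to_base_year_dollars(amount, year_measured, base_year, index=price_index):
    """Express an amount measured in `year_measured` dollars in `base_year` dollars."""
    return amount * index[base_year] / index[year_measured]


# A cost of $1,000 measured in 2005 dollars, expressed in 2015 dollars.
print(to_base_year_dollars(1000.0, 2005, 2015))
```

Whichever index is used, the report of results should state the base year so that readers can compare estimates across studies.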

___________________

5 Maynard and Hoffman (2008) highlight another approach in their analysis of teen pregnancy prevention: assuming that an intervention had been fully implemented (from birth to adulthood for everyone) and then providing a steady-state analysis.

6 Every dollar of government revenue raised through taxes typically costs society more than one dollar in resources because taxes induce changes in behavior (e.g., reduced work effort) that represent an opportunity cost to society and because of the administrative costs of tax collection. The deadweight loss (also known as excess burden) measures those costs and is usually expressed as a percentage of the revenue raised. Although the costs of administering government-transfer payment programs conceptually can be viewed as a deadweight loss, such changes in administrative costs are best handled as costs or benefits in the cost-outcome equation.

When interventions for children, youth, and families involve new taxes for financing the intervention or produce impacts that affect taxes (e.g., an increase in taxes because of higher earnings or a reduction in welfare payments because of reduced welfare participation), there is a corresponding change in the deadweight cost associated with the distortionary effects of taxes on economic behavior and the costs associated with administering the tax, that is, the dollars of welfare loss per tax dollar (Vining and Weimer, 2010). Producers of economic evidence may account for this deadweight cost of taxation as an additional cost when taxes are increased to pay for an intervention or when taxes rise as a result of an intervention. Conversely, the deadweight loss is reduced when the intervention produces a reduction in taxes. While economic evaluations often assume no deadweight loss, a few recent evaluations have produced results assuming different levels of deadweight loss as part of a sensitivity analysis (e.g., as in Heckman et al. [2010] and Washington State Institute for Public Policy [2015], in which deadweight costs are assumed to be 0 percent, 50 percent, and 100 percent).
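A sensitivity analysis of this kind can be sketched as follows. The treatment here is a deliberate simplification that follows the description above: the net change in tax dollars is multiplied by an assumed deadweight rate and counted as an additional cost (or as a cost reduction when taxes fall). The function name and dollar amounts are hypothetical.

```python
def net_benefit_with_deadweight(benefits, costs, net_tax_change, dwl_rate):
    """Net benefit after adding deadweight cost on the net change in taxes.

    net_tax_change > 0 means more tax dollars are raised; a negative value
    means taxes fall and the deadweight loss is reduced. dwl_rate is the
    assumed welfare loss per tax dollar (e.g., 0.5 for 50 percent).
    """
    return benefits - costs - dwl_rate * net_tax_change


# Hypothetical intervention: $3,000 in benefits, $2,000 in costs,
# with the full $2,000 financed through additional taxes.
for dwl_rate in (0.0, 0.5, 1.0):
    nb = net_benefit_with_deadweight(3000.0, 2000.0, 2000.0, dwl_rate)
    print(f"deadweight {dwl_rate:.0%}: net benefit {nb:+.0f}")
```

In this illustration the sign of the net benefit changes across the three assumed rates, which is why reporting results under alternative deadweight assumptions can matter.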

CONCLUSION: Once an intervention has been determined to be ready for an economic evaluation, an essential next step entails establishing the perspective; the time horizon for capturing costs (all types of analyses) and outcomes (cost-effectiveness analysis and benefit-cost analysis); the baseline discount rate; the monetary unit and reference year; and the assumed magnitude of the deadweight loss parameter, if deadweight loss will be evaluated.

EVALUATING INTERVENTION COST

A systematic CA gives stakeholders important insight into the operation of interventions that impact children, youth, and families, including the overall cost of implementing and sustaining an intervention, costs for specific intervention activities, and costs per intervention participant (Crowley et al., 2012; Foster et al., 2007; Haddix et al., 2003). Beyond assessing actual costs, a CA may facilitate planning, help maximize the efficiency of resource use, and support the replication, dissemination, and implementation of efficacious and effective interventions. Chapter 2 describes the place of CA within evaluation and economic evaluation frameworks. CA relies on information about an intervention’s implementation, such as the specific programmatic activities, the types and quantities of resources used in delivering intervention services, the number and characteristics of providers delivering and individuals or families receiving services, and the intensity or dosage of services provided. This information on intervention inputs is also the focus of process evaluation, which answers the questions of “what is done,” “when,” “by whom,” and “to whom.”

In addition, as discussed in Chapter 2, CA establishes the foundation for other types of economic evaluation, such as CEA and BCA. As detailed later in this chapter, CEA examines the relationship between an intervention’s costs and a relevant unit of intervention effectiveness, while BCA quantifies intervention benefits in monetary terms and assesses whether they exceed intervention costs. The precision of these analyses depends, in part, on accurate analysis of intervention costs.

When a consistent and accurate approach is used to collect and analyze cost data, CA also can support comparisons of costs across services, interventions, and agencies. Increasingly, federal agencies require that evaluations of the interventions they fund include cost analyses. For example, a number of program announcements of the Administration for Children and Families require that applicants propose a reasonable cost evaluation design that (1) allows for analyses of personnel and nonpersonnel resources among cost categories and program activities, (2) allows for analyses of direct services and of management and administrative activities, (3) includes both case-level and aggregate data that can reasonably be obtained and tracked, and (4) identifies anticipated and potential strategies for addressing these issues.

Similarly, at the Department of Education, Office of Innovation and Improvement, applicants for Investing in Innovation funding are required to provide detailed information about how they will evaluate whether their proposed projects are cost-effective when implemented.7 This evaluation may include assessing the cost of comparable or alternative approaches. To receive competitive preference points, applicants addressing this priority must provide a detailed budget, an examination of different types of costs, and a plan for monitoring and evaluating cost savings, all of which are essential to improving productivity.

Best Practices for Conducting Cost Analyses

The goal of a CA is to quantify the full economic value of the resources required to implement an intervention relative to the status quo or control condition. The characteristics of a high-quality CA necessarily include (1) defining the purpose and scope of the analysis, (2) defining the intervention, (3) providing comprehensive and valid cost estimates, (4) applying widely accepted best practices in the field, and (5) acknowledging the limitations of the analysis. The discussion of best practices in this section draws on a review and synthesis of guidelines for conducting CA in the literature. In so doing, it provides additional support for practices discussed earlier in the chapter that are relevant to economic evaluation methods in general, such as defining the purpose and scope of the analysis and the intervention to be analyzed. This section addresses these issues specifically in the context of CA and the best practices identified in the literature.

___________________

7 Notice 80 FR 32229. For additional information, see: https://federalregister.gov/a/2015-13673 [March 2016].

Defining the Purpose and Scope of a Cost Analysis

According to the U.S. Children’s Bureau guide for assessing the costs of child welfare programs (Calculating the Costs of Child Welfare Services Workgroup, 2013), internal and external stakeholders should be engaged prior to the CA to (1) clarify the goals and audience for the analysis, (2) clearly define the intervention to be analyzed, and (3) specify the time period to be covered. The goals of the study help define who needs the CA (audience) and the intended uses of its results. This information in turn determines the perspective for the analysis, dictating which cost categories to consider. The perspective selected for the study guides all subsequent decisions around how best to estimate intervention costs. Many guidelines in the existing literature do not offer recommendations for a specific study perspective, but rather state that it should arise from the interests of the stakeholders or audience for the analysis and/or the research question (Detsky and Naglie, 1990; Drummond and Jefferson, 1996; European Commission, 2008; Graf von der Schulenburg and Hoffmann, 2000; Hjelmgren et al., 2001; Honeycutt et al., 2006; Task Force on Community Preventive Services, 2005; Vincent et al., 2000). If a study perspective is recommended, it is most commonly the societal perspective (Barnett, 2009; Capri et al., 2001; Haddix et al., 2003; Honeycutt et al., 2006; Graf von der Schulenburg and Hoffmann, 2000; Hjelmgren et al., 2001; Laupacis et al., 1992; Luce et al., 1996; Ontario Ministry of Health and Long-Term Care, 1994; Pritchard and Sculpher, 2000; Suter, 2010; Task Force on Community Preventive Services, 2005; World Health Organization, 2012).
Further, guidelines state that economists prefer the societal perspective (Chatterji et al., 2001; Drummond and Jefferson, 1996; Gray et al., 2010), and almost always recommend this perspective for BCAs (Calculating the Costs of Child Welfare Services Workgroup, 2013; Commonwealth of Australia, 2006; European Regional Development Fund, 2013; Treasury Board of Canada Secretariat, 2007; World Health Organization, 2006).

When the societal perspective is used to guide the CA, additional information is often gained by disaggregating overall costs into subperspectives showing how costs are borne by various stakeholders. Subperspectives may reflect the potential investors in an intervention (agencies, private organizations, taxpayers) or those impacted by the intervention (e.g., participants, potential victims). For CEAs of interventions provided by the health care sector, for example, several guidelines additionally recommend a health system or payer perspective (Academy of Managed Care Pharmacy, 2012; Graf von der Schulenburg and Hoffmann, 2000; Haute Autorité de Santé, 2012; Hjelmgren et al., 2001; Institute for Quality and Efficiency in Health Care, 2009; Marshall and Hux, 2009; National Institute for Health and Care Excellence, 2013; Walker, 2001). If the full societal costs of an intervention are not estimated, however, the subperspective may provide only a partial picture of the value of all resources required to implement an intervention. Indeed, multiple perspectives for an analysis are often preferred and expected. From a provider perspective, for example, the costs of an intervention may equate to actual monetary expenditures. From a societal perspective, however, the value of all resources required to implement an intervention is included in the analysis regardless of to whom they accrue, so that, for instance, costs would include in-kind donations in addition to monetary expenditures. They might also include the cost to participants of spending their time on program activities instead of alternatives, such as work or leisure.

Defining the Intervention

Defining the intervention to be delivered is another critical step in the analysis that needs to include stakeholders who know the intervention model well. Many options exist for analyzing intervention costs as part of broader evaluation efforts (Yates, 2009), and the collection and analysis of cost data are more likely to be successful if included in evaluation planning from the outset. Logic models are a convenient evaluation tool that can help delineate intervention inputs with a bearing on the CA.

Specifying the time period over which cost data will be collected is also important (Brodowski and Filene, 2009). CAs may cover a time horizon of several years to provide information on how costs vary over time, or they may focus on a single year that is considered to be representative of the intervention’s typical operating state. Evaluators also need to specify the intervention’s stage of implementation during the CA because costs are likely to differ between a startup or planning period (preimplementation) and a period of steady-state implementation, when the intervention is operating at or near full capacity (Miller and Hendrie, 2015). The potential existence of economies of scale implies that differences in output level need to be taken into account in comparing operating efficiency across intervention sites, and cost projections may be inaccurate if they fail to take into account the decrease in average cost that occurs as output expands (Mansley et al., 2002).
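The effect of output level on average cost can be illustrated with a short Python sketch. It assumes a simple cost structure, a fixed cost plus a constant variable cost per participant, and every figure and site label in it is hypothetical.

```python
def average_cost(fixed_cost, variable_cost_per_participant, participants):
    """Average cost per participant when fixed costs are spread over enrollment."""
    if participants <= 0:
        raise ValueError("participants must be positive")
    return fixed_cost / participants + variable_cost_per_participant


# Hypothetical sites that share the same cost structure but differ in scale.
sites = {"small site": 25, "medium site": 100, "large site": 400}
avg = {name: average_cost(50_000, 300, n) for name, n in sites.items()}
for name, cost in avg.items():
    print(f"{name}: average cost per participant = {cost:,.0f}")
```

Under these assumptions the three sites are equally efficient, yet their average costs differ severalfold, which is why comparisons across sites and projections to new settings need to account for the number served.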

CONCLUSION: The societal perspective is the most commonly recommended perspective for researchers conducting cost analysis (CA). Subperspectives can be used to tailor cost estimates to specific audiences, but do not necessarily provide a comprehensive estimate of costs and may be inadequate for supporting intervention replication. In addition, CA requires carefully defining the intervention and identifying any of its activities that consume its resources. Best practice further requires that the time horizon for the CA be clearly defined.

Providing Comprehensive and Valid Cost Estimates

Developing accurate estimates of the cost of an intervention for children, youth, and families requires carefully quantifying and valuing the resource needs to replicate intervention effects. There are a number of methods for costing an intervention (Barnett, 2009; Calculating the Costs of Child Welfare Services Workgroup, 2013; Gray et al., 2010; Haddix et al., 2003; Honeycutt et al., 2006; Luce et al., 1996; Muenning and Khan, 2002; Yates, 1996). These methods represent one of two general approaches. The first is a macro, top-down approach that uses total public spending (or individual site budget or expenditure) data to provide gross average estimates of intervention costs.8 The other is a bottom-up approach known as micro costing that relies on identifying all resources required to implement an intervention and then valuing those resources in monetary units to estimate intervention costs. The methods used for micro costing—ingredients- and activity-based allocation—are generally considered the methods of choice because, relative to the macro approach, they are more accurate and provide investors with greater detail about intervention costs so that resource needs for success can be projected. This detail includes robust estimates of the marginal and steady-state (average) costs of the intervention (see the section later in this chapter on “Getting to Results” for additional information on summary measures for CA), which allow for estimation of the intervention’s per-unit cost (e.g., per family or child served). However, micro costing can be more difficult and time-consuming to implement than other costing methods (Levin and Belfield, 2013), requiring that an infrastructure be in place with which to collect data on resource use at the unit level.9

To fully understand resource needs and to ensure that all stages of implementation are covered, combining logic models with the micro costing approach is a good solution to avoid “hidden” costs (e.g., for adoption, development, training, technical assistance, and sustainability). Hidden

___________________

8 Budgetary information can be a useful data source for conducting cost analyses, but estimating the economic costs of interventions requires more than a simple accounting of budgetary expenditures. Specifically, while budgets can be used to estimate the quantity of some resources consumed to implement an intervention, it should not be assumed that they reflect all the resources needed to adopt, implement, and sustain an intervention. Further, the price information that can be extracted from a budget may be representative only of local market prices. Adjustments may be needed to estimate intervention costs in new settings or for national dissemination.

9 Many resources are available that can provide comprehensive and field-specific listings of types of costs. For instance, the new Costing-Out tool from Columbia’s Center for Benefit-Cost Analyses of Education can be used for interventions delivered in educational settings; the Drug Abuse Treatment Cost Analysis Program (DATCAP) can be used for interventions for children, youth, and families delivered in social service and clinical settings; and the Children’s Bureau offers a free Guide for Child Welfare Researchers and Service Providers (Cost Analysis in Program Evaluation).

costs of an intervention also may include resources required beyond the intervention to ensure full implementation. A CA conducted from the societal perspective, for example, may need to include the value of systems-level resources required for implementation, beyond those resources required only at the local level. Considering implementation costs is especially important when comparing differing approaches to intervention. For example, implementation costs are quite different for passing an underage drinking law and issuing regulations to implement it and for adopting a school-based alcohol education program.

Cost Categories Typical cost categories for consideration in micro costing are personnel, space, materials, and supplies. The categorization of costs may be strengthened by consideration of these major cost categories within specified activities associated with an intervention. It may be helpful, for example, to consider an intervention’s costs within the broad categories of the preimplementation and implementation phases of intervention delivery, or startup versus ongoing maintenance costs. It may also be useful to consider direct versus indirect costs. Direct costs may refer to those resources required to provide services directly to participants, such as classroom time for a bullying prevention curriculum or home visits to prevent child maltreatment. When estimating costs at the level of the unit of the participant, a CA may need to allocate more resources to the estimation of direct personnel time (Yates, 1996). Indirect costs typically denote overhead costs related to administrative functions of an intervention or to services not provided directly to but on behalf of the participants. Often these costs are shared by more than one intervention or used to create more than one output, or may be defined as expenses that directly benefit the agency (American Humane Association, 2009; Calculating the Costs of Child Welfare Services Workgroup, 2013; Capri et al., 2001; Chatterji et al., 2001; Cisler et al., 1998; Derzon et al., 2005; European Regional Development Fund, 2013; Federal Accounting Standards Advisory Board, 2014; Foster et al., 2003; Graf von der Schulenburg and Hoffmann, 2000; Haute Autorité de Santé, 2012; Institute for Quality and Efficiency in Health Care, 2009; Leonard, 2009; National Center for Environmental Economics, 2010; Pritchard and Sculpher, 2000; Suter, 2010; Task Force on Community Preventive Services, 2005; Treasury Board of Canada Secretariat, 2007).

The consensus in the literature is that analysts should include program and administrative or overhead costs for programmatic CAs (Barnett, 2009; Calculating the Costs of Child Welfare Services Workgroup, 2013; Greenberg and Appenzeller, 1998; Her Majesty’s Treasury, 2003; Office of Management and Budget, 2004a, 2004b; World Health Organization, 2012). Indirect costs can be distributed using the proportion of time spent in direct delivery of each service. Once the fraction of time devoted by each staff member to various activities is known, this information can readily be monetized by multiplying the fractions by the staff members’ compensation (salaries and other benefits) over an appropriate time period, such as 1 year (Greenberg and Appenzeller, 1998). Alternatively, the total annual expenditures on each indirect cost (e.g., support staff salaries, supervisors’ salaries, computers, rental space, telephone, electricity, water, maintenance) can be multiplied by the fraction of the organization’s total staff costs devoted to each activity (Greenberg and Appenzeller, 1998; World Health Organization, 2012). However, it is important not to assume that all management tasks are indirect costs (Calculating the Costs of Child Welfare Services Workgroup, 2013); some are directly related to an intervention, and managers may be able to estimate the amount of time they spend on such tasks.
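The two allocation approaches described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the staff roles, activities, compensation figures, time shares, and indirect cost total are hypothetical, and the function names are introduced here only for the example.

```python
# Hypothetical annual compensation (salary plus benefits) per staff member.
compensation = {"home_visitor": 50_000, "supervisor": 70_000}

# Hypothetical fraction of each staff member's time devoted to each activity.
time_shares = {
    "home_visitor": {"home_visits": 0.8, "parent_groups": 0.2},
    "supervisor": {"home_visits": 0.5, "parent_groups": 0.5},
}


def staff_cost_by_activity(compensation, time_shares):
    """Monetize staff time: time fraction multiplied by annual compensation."""
    costs = {}
    for person, shares in time_shares.items():
        for activity, share in shares.items():
            costs[activity] = costs.get(activity, 0.0) + share * compensation[person]
    return costs


def allocate_indirect(indirect_total, staff_costs):
    """Distribute indirect costs by each activity's share of total staff costs."""
    total_staff = sum(staff_costs.values())
    return {a: indirect_total * c / total_staff for a, c in staff_costs.items()}


staff_costs = staff_cost_by_activity(compensation, time_shares)
indirect = allocate_indirect(24_000, staff_costs)  # hypothetical overhead total
for activity in staff_costs:
    print(activity, round(staff_costs[activity]), round(indirect[activity]))
```

Management time that is directly related to an intervention would be entered in the time shares rather than in the indirect total.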

Another important consideration in CA is fixed versus variable costs, particularly when the evaluator is interested in an intervention’s marginal and steady-state (average) costs. Fixed costs are the value of those resources required only occasionally for the intervention, which do not vary with the number of participants served. Typical fixed costs—such as costs to train providers and to buy furniture—occur in the preimplementation phase of an intervention. By annualizing costs of capital equipment over their useful life, it is possible to allocate a fair portion of those costs to each person served. Variable costs are the value of those resources required for each person served by the intervention. Table 3-1 (Ritzwoller et al., 2009) shows a typical valuation of fixed and variable costs in a CA.
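One common way to annualize a capital cost is the standard annuity (capital recovery) formula, sketched below in Python. The choice of formula is an assumption made for illustration, since no specific annualization method is prescribed here; the purchase price, useful life, discount rate, and number served are hypothetical, and resale value is ignored.

```python
def annualized_cost(purchase_price, useful_life_years, discount_rate):
    """Equivalent annual cost of a capital item over its useful life.

    Uses the annuity factor (1 - (1 + r) ** -n) / r; resale value is ignored.
    """
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    if discount_rate == 0:
        # With no discounting, the cost is spread evenly across years.
        return purchase_price / useful_life_years
    annuity_factor = (1 - (1 + discount_rate) ** -useful_life_years) / discount_rate
    return purchase_price / annuity_factor


# Hypothetical equipment: $10,000, 5-year useful life, 3 percent discount rate.
annual_equipment = annualized_cost(10_000, 5, 0.03)
fixed_share_per_person = annual_equipment / 200  # hypothetical 200 served per year
print(f"annualized cost: {annual_equipment:,.2f}")
print(f"share per person served: {fixed_share_per_person:,.2f}")
```

Because the discount rate is positive, the annualized amount exceeds a simple straight-line share of the purchase price.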

Unit Prices The most important determinant of the comprehensiveness of a CA is how well the resources required to implement an intervention are inventoried and then valued. That is, the costing of an intervention is really a function of resources (Q) and their prices (P). But what unit prices should be used? Budget sheets that show intervention expenditures for a given fiscal year include similar resource categories and are often a convenient, but perhaps incomplete, way to value the resources. Stakeholders also may play a role in determining the appropriate unit prices to use, based on the audience for the analysis. If a national intervention is being valued, for example, unit prices may need to reflect national averages for such costs as wage rates, space rental, and supply purchases. Local interventions may need to rely on local unit prices. Either way, transparency of unit prices is critical for replicability of a CA across sites. Moreover, within any analysis, the use of a consistent set of prices (e.g., state, local, federal) and a common reference year is important.

Nearly all recommendations for conducting CAs suggest that resources be valued by their opportunity cost. Often, the market price for a resource is a good approximation for its opportunity cost. However, when a market price does not exist or is suspected not to reflect the opportunity cost, one

TABLE 3-1 Illustrative Valuation of Fixed and Variable Cost in Cost Analysis

| Cost Element | Variable ($) | Fixed ($) | Total ($) |
|---|---|---|---|
| Recruitment | | | |
| Project staff | | | |
| Mailings | 1,908 | | 1,908 |
| | 3,990 | | 3,990 |
| Overhead (a) | | 24,912 | 24,912 |
| Subject identification | | 1,470 | 1,470 |
| Telephone interviewers | | | |
| Training | | 3,046 | 3,046 |
| Enrollment/eligibility calls | 8,104 | | 8,104 |
| Supplies | 776 | | 776 |
| Total Recruitment | 14,778 | 29,428 | 44,206 |
| Intervention Components | | | |
| Tailored newsletters | 10,102 | | 10,102 |
| Interviewers training and supervision | | 23,865 | 23,865 |
| Phone counseling/data management | 11,872 | | 11,872 |
| Project meetings and e-mail | | 5,667 | 5,667 |
| Equipment and materials | | 2,890 | 2,890 |
| Personnel management | | 9,643 | 9,643 |
| Overhead (a) | | 4,603 | 4,603 |
| 3-Month Intervention | 21,974 | 46,668 | 68,642 |
| Total Recruitment plus 3-Month Intervention | | | 112,848 |

(a) Overhead includes office tasks, such as printing, copy making, unscheduled staff meetings, phone conversations, intervention preparation time, commute to the intervention site where calls are made and newsletters are produced, etc.

SOURCE: Example from Ritzwoller et al. (2009), reprinted with permission.

method for valuing the resource is to use a shadow price (Commonwealth of Australia, 2006; European Commission, 2008; Gray et al., 2010; Joint United Nations Programme on HIV/AIDS, 2000; The World Bank, 2010; Treasury Board of Canada Secretariat, 2007; Walter and Zehetmayr, 2006; World Health Organization, 2006, 2012). Examples of the use of shadow prices are the shadow wage rate for adjusting labor prices to account for distortions in the labor market and the shadow price of capital, which is used to adjust the valuation of costs for the effects of government projects on resource allocation in the private sector (European Commission, 2008; National Center for Environmental Economics, 2010; Office of Management and Budget, 1992; World Health Organization, 2006). Examples of the shadow price of wages include the value of the time friends or family spend providing unpaid care (Gray et al., 2010) and the value of volunteer

time, which are based on the wage rate for someone carrying out similar work (World Health Organization, 2006). An example of the shadow price of capital is the use of the price of comparable private-sector land for the price of government-owned land (Commonwealth of Australia, 2006).

Sensitivity Analyses A recent and notable addition to the list of steps for conducting a cost evaluation (whether CA or some other method), from the Children’s Bureau10 and others (Haddix et al., 2003; Yates, 2009), is to conduct sensitivity analysis and examine cost variation (Corso et al., 2013; Crowley et al., 2012). The consensus in the literature is that sensitivity analysis should be performed whenever estimates, data, or outcomes are uncertain. It is accepted that providing the results of sensitivity analysis when reporting the results of cost analysis is best practice, both internationally and domestically (Benefit-Cost Analysis Center;11 Hjelmgren et al., 2001; Levin and McEwan, 2001; Luce et al., 1996; Marshall and Hux, 2009; Messonnier and Meltzer, 2003; Office of Management and Budget, 1992; Pharmaceutical Benefits Board, 2003; Ramsey et al., 2005; Siegel et al., 1996; Walker, 2001; World Health Organization, 2000). Recommendations on sensitivity analyses are usually generic and often are centered on the discussion of discount rates. In some instances, however, especially in international contexts, particular methods are specified (Hjelmgren et al., 2001; Marshall and Hux, 2009; Walker, 2001); Canada, for example, encourages the use of Monte Carlo simulations (Walker, 2001). Further discussion of sensitivity analysis is provided later in this chapter.
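As a rough illustration of the Monte Carlo approach mentioned above, the following Python sketch draws uncertain quantities and prices from assumed distributions and summarizes the resulting spread in total cost. The distributions, their ranges, the seed, and the function name are all hypothetical choices made for the example; an actual analysis would justify each from data.

```python
import random
import statistics


def simulate_total_cost(n_draws=10_000, seed=0):
    """Draw uncertain inputs and return the simulated total costs."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    totals = []
    for _ in range(n_draws):
        hours = rng.triangular(1_500, 2_500, 2_000)  # low, high, mode (assumed)
        wage = rng.uniform(22.0, 30.0)               # hourly compensation (assumed)
        supplies = rng.uniform(4_000, 6_000)         # annual supplies (assumed)
        totals.append(hours * wage + supplies)
    return totals


totals = sorted(simulate_total_cost())
mean_cost = statistics.mean(totals)
# Approximate 95 percent interval taken from the sorted simulated totals.
lower = totals[int(0.025 * len(totals))]
upper = totals[int(0.975 * len(totals)) - 1]
print(f"mean total cost: {mean_cost:,.0f}")
print(f"approximate 95% interval: {lower:,.0f} to {upper:,.0f}")
```

Reporting the interval alongside the point estimate conveys how much the cost estimate depends on the uncertain inputs.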

CONCLUSION: According to best practices, after establishing the perspective, defining the intervention, and specifying the base year and time period over which the intervention will be assessed, cost analysis includes the following steps:

- inventorying the resources, in specific units (which may vary across different resources), required for all activities entailed in the intervention;

- calculating the real (adjusted for inflation) cost per unit of each resource used, including fringe benefits associated with wages (P);

- counting the number of units of each resource used (Q) in the specified time period for the number of children, youth, or families served;

___________________

10 For more information, see http://www.acf.hhs.gov/programs/cb/resource/cost-workgroup [March 2016].

11 Available: http://evans.uw.edu/sites/default/files/public/Federal_Agency_BCA_PS_Social_Programs.pdf [March 2016].

- calculating the total costs of the intervention by multiplying all resources used by their unit costs (sum of all P × Q);

- calculating the expected cost per child, youth, or family served (i.e., average costs) by dividing total costs (the sum of all P × Q) by the number served during the specified time period of the intervention;

- calculating the expected cost per one more child, youth, or family served—that is, marginal costs—by differentiating between fixed and variable costs; and

- conducting sensitivity analysis to test the uncertainty of assumptions made about quantity and price.
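The steps listed above can be sketched in Python as follows. This is a minimal illustration rather than a prescribed implementation: the resource inventory, prices, quantities, and number served are hypothetical, and marginal cost is approximated as variable cost per person served, which assumes variable costs scale linearly with enrollment.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Resource:
    name: str
    unit_price: float  # P: real (inflation-adjusted) cost per unit
    quantity: float    # Q: units used in the specified time period
    fixed: bool        # True if the cost does not vary with the number served


def summarize_costs(resources, number_served):
    """Total cost (sum of P x Q), average cost, and approximate marginal cost."""
    total = sum(r.unit_price * r.quantity for r in resources)
    variable = sum(r.unit_price * r.quantity for r in resources if not r.fixed)
    return {
        "total": total,
        "average": total / number_served,
        "marginal": variable / number_served,  # assumes linear variable costs
    }


def vary_price(resources, name, change):
    """One-way sensitivity: scale one resource's unit price by (1 + change)."""
    return [
        replace(r, unit_price=r.unit_price * (1 + change)) if r.name == name else r
        for r in resources
    ]


# Hypothetical inventory of resources for one year of operation.
resources = [
    Resource("facilitator hours", 40.0, 500, fixed=False),
    Resource("participant materials", 25.0, 100, fixed=False),
    Resource("provider training", 5_000.0, 1, fixed=True),
    Resource("space rental (months)", 1_000.0, 12, fixed=True),
]

base = summarize_costs(resources, number_served=100)
low = summarize_costs(vary_price(resources, "facilitator hours", -0.20), 100)
high = summarize_costs(vary_price(resources, "facilitator hours", 0.20), 100)
print(base)
print(low["total"], high["total"])
```

Keeping the inventory as explicit records of price, quantity, and cost type also supports transparent reporting, because each assumption can be inspected and varied.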

Reporting the Results of Cost Analyses

Acknowledging the limitations of a CA requires transparency as to the methods used and the assumptions made. An overall goal is to achieve enough transparency that another community can implement the same intervention with complete understanding of all resources required (even if some resources are donated). As noted earlier, therefore, all resources need to be inventoried, even if all cannot be valued. For example, if one is conducting a cross-site evaluation of the costs to deliver a home visiting intervention and training costs are not available across all sites, these costs may be excluded for purposes of comparability across sites. However, the CA still needs to note that these costs are an important resource required to implement the intervention, even if they are not explicitly included in the CA results.

Because CAs generate such a wide array of estimates that the level of information can overwhelm even the most discerning reader and obscure useful information, reporting transparent and generalizable results is essential to ensure that the results of the analysis can be translated into effective policy. It is important in reporting, then, to balance detail with useful information. Also important is acknowledging that some unit cost estimates are more robust than others. Specifying where data are limited sets the stage for sensitivity analysis of cost estimates based on those variables and creates a research agenda for those implementing the intervention in the future.

DETERMINING INTERVENTION IMPACTS

Investors in interventions for children, youth, and families may want to know more than an intervention’s cost; they may also desire economic analysis of the return on investment in the intervention, which can be measured in various ways. As described briefly in Chapter 2, CEA compares resource investments with intervention impacts measured in their natural units, while

BCA compares investments with impacts that have been monetized. Cost-utility analysis is a form of CEA that compares investments with impacts expressed in quality-adjusted life years (QALYs) or disability-adjusted life years (DALYs).12 The common thread in these approaches is that they all rest on evidence that the intervention caused one or more favorable outcomes to occur. Evidence of impact may be drawn from a completed program evaluation, as in an ex post economic evaluation, or it may be presumed, as in the case of an ex ante economic evaluation, conducted, for example, for planning purposes.

Without valid evidence of causal impact, there is no reliable return on investment to capture in economic evaluation (Karoly, 2008; Washington State Institute for Public Policy, 2015). Causality, the main topic of this section, is one of several major impact-related issues that producers of economic evaluations need to consider in preparation for estimating cost-effectiveness or the return on intervention investment. Other issues common in economic evaluations of interventions serving children, youth, and families include how to combine evidence when multiple evaluations of a given intervention exist, which effects to include in a CEA or BCA, and how to handle uncertainty in intervention effect sizes—all addressed in subsequent sections of this chapter.

Research Designs and Evidence of Intervention Impact

An underlying premise of CEA and BCA is that the outcomes being subjected to economic evaluation were caused by the intervention.13 Logic models can help articulate an intervention’s putative causal mechanisms, but evidence that the intervention caused an outcome comes from certain research designs used in program evaluation. Some research designs can increase confidence that an observed difference was caused by an intervention, such as using many repeated measures over time and space; making comparisons with jurisdictions, population groups, or outcomes that should not be affected; replicating; and establishing that a

___________________

12 A QALY is defined as a measure of quality of life where 1 is a year lived in perfect health and 0 is death. In some circumstances, values less than 0 (fates worse than death) are allowed. Absent equity weights, a QALY is 1 minus a DALY. Quality-of-life measurement is anchored in part in functional capacity, which gives it some objectivity (Wilson and Cleary, 1995).

13 Causal inference is one of many factors that are relevant to the validity of a study or a set of studies for any given decision. It can be challenging to address certain factors beyond causal inference because they are often dependent upon concerns that the researcher cannot reasonably foresee or control (e.g., generalizability of study context).

dose-response relationship14 existed (Shadish et al., 2002; Wagenaar and Komro, 2013).15

Many, if not most, methodologists believe that the clearest unbiased evidence of causal impact comes from well-conducted experimental designs or randomized controlled trials because these designs minimize threats to internal validity, or the chance that something other than the intervention caused the observed differences in outcomes between intervention participants and nonparticipants (Cook and Campbell, 1979; Fisher et al., 2002; Gottfredson et al., 2015; Jones and Rice, 2011). Although research has shown that “real-world” randomized controlled trials of complex programs and policies are more feasible than originally thought (Cook and Payne, 2002; Donaldson et al., 2008; Gerber et al., 2013), relevant concerns have been raised regarding their limitations. These potential limitations include issues related to external validity (e.g., artificial circumstances defined by eligibility criteria, participants failing to represent particular populations, trials by definition only including volunteers who agree to participate for treatment); issues related to the number of treatment and control groups used;16 high costs; potential ethical concerns; and trade-offs with respect to generalizability and statistical power (Wagenaar and Komro, 2013).

Given the limitations of randomized controlled trials, certain methodologists have recommended the use of other research designs. For example, researchers have studied the extent to which quasi-experimental designs can provide unbiased causal evidence (Bloom et al., 2005; Cook et al., 2008; Shadish et al., 2008). These researchers present convincing examples in which well-designed prospective evaluations of laws and regulations using comparison time series designs—where randomization was not a possibility—provided strong causal evidence through careful attention to other design elements.

In addition, regression discontinuity designs (where individuals on one side of an arbitrary cut-off point, who receive an intervention, are compared with those on the other side, who do not) have also been shown to provide unbiased causal estimates (Gottfredson et al., 2015).17 For instance, children who miss eligibility for preschool by 1 or 2 months (based on birthdate cut-offs) can be compared with children whose birthdays are 1 or 2 months on the other side of the cut-off. Designs other than randomized controlled trials, such as these, have been used to evaluate the effectiveness of universal pre-K programs in Boston, Tulsa, and the state of Georgia (Yoshikawa et al., in press). Additionally, two other such designs, difference-in-difference and fixed effects, also have been shown to be effective and have been used to examine the efficacy of preschool (e.g., Bassok et al., 2014; Magnuson et al., 2007).

Propensity matching, propensity scoring, and instrumental variable designs also are popular alternatives, but at times are misapplied (Austin, 2009; Basu et al., 2007) or yield questionable results. Too often, propensity matching studies match to an intervention serving nonequivalent people (e.g., those who declined the intervention), and the instrumental variables chosen violate essential independence requirements. Quasi-experimental studies are intended to serve a useful purpose; however, the literature has several examples of such studies that are poorly designed, and many grapple with the same issues as those encountered with randomized controlled trials.

Ultimately, analysts conducting economic evaluations need to assess and describe the overall quality of the impact evaluation evidence that forms the basis for the economic evaluation, whether that evidence comes from experimental or quasi-experimental designs or both. For some interventions, strong evidence will come from experimental designs. For others, a randomized controlled trial is not feasible, but other strong quasi-experimental designs can contribute credible evidence.18 Often, there may be multiple evaluations and analysts can use the overall body of evidence as part of the economic evaluation. For example, researchers often pool data from a variety of interventions derived from randomized controlled trials as well as other useful research designs (see blueprintsprograms.com for examples). When multiple impact studies exist, systematic reviews and/or meta-analyses may be necessary to draw valid conclusions about impacts. For a discussion of major issues involved in systematic reviews and meta-analyses of interventions for children, youth, and families, see the paper by Valentine and Konstantopoulos (2015) commissioned for this study.

___________________

14 The term “dose-response relationship” refers to the change in an outcome resulting from different degrees of exposure to an intervention.

15 Increasingly, these types of alternative designs are used in both implementation and effectiveness studies. The ways in which these designs impact CAs, CEAs, and BCAs, although noteworthy, are not a primary focus of this chapter.

16 This limitation makes it difficult to ascertain what aspect(s) of the treatment are responsible for the observed effect. The ideal trial design would have multiple different treatment groups, with the potential for multiple control groups; however, these efforts are often a challenge to implement because of cost and logistical issues.

17 As is noted throughout this report, describing the research design and sample on which an economic evaluation is based aids in accurate interpretation of the evaluation results. For regression discontinuity designs, this is particularly important as impacts apply to participants who are at or near the eligibility threshold, or cut-off score, for receiving an intervention. Reporting of information about the entire group served by the intervention, the cut-off value, and the portion of the group to which the impact estimates apply is encouraged to add transparency to economic evaluation findings that are based on impacts from these designs.

18 As an example, the research examining the intended and unintended effects of the Earned Income Tax Credit (EITC) on employment and other outcomes has relied on several quasi-experimental methods because an experimental design for evaluating this federal program has not been an option. Analysts have used natural experiments, such as the expanded eligibility for the program in the 1990s, the adoption of state EITC add-ons, and the calendar timing of receipt of the lump sum tax credit (Bitler and Karoly, 2015).

Issues in Practice

As research on quasi-experimental designs suggests, standards for evidence evolve over time. Best research design practices and methods also may differ across disciplines, for which different concerns may apply. In the real world, moreover, the only available evidence of intervention impact may be from research designs that are not optimal, and impact estimates may indeed be biased. In such cases, should the economic evaluation go forward? Sometimes the answer is no. For example, if the evidence was produced with no comparison group, if measurement was very weak, or if the evaluation is judged to be of poor quality for other reasons, proceeding with a BCA or CEA is probably unwise. In other situations, such as when several quasi-experimental designs consistently suggest positive impact, it may make sense to proceed.19 In such cases, however, conducting sensitivity analyses with varying effect sizes to test the robustness of conclusions about impact is important. Another approach that can be taken in the absence of causal or unbiased evidence is to estimate how strong intervention impacts would have to be to produce economically favorable results and then judge whether such effect sizes appear feasible. If only a small effect size is required, it may be relatively easy to conclude that the investments in the intervention are economically sound.
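The two approaches just described (varying the effect size, and solving for the effect size needed to break even) can be illustrated with a minimal Python sketch. It rests on a simplifying assumption that is not drawn from this report: benefits per participant scale linearly with the effect size. The function names and all dollar figures are hypothetical and chosen only for illustration.

```python
def net_benefit(effect_size, benefit_per_unit_effect, cost_per_participant):
    """Net benefit per participant, ASSUMING benefits scale linearly with effect size."""
    return effect_size * benefit_per_unit_effect - cost_per_participant


def break_even_effect_size(benefit_per_unit_effect, cost_per_participant):
    """Smallest effect size at which benefits equal costs (same linear assumption)."""
    if benefit_per_unit_effect <= 0:
        raise ValueError("benefit_per_unit_effect must be positive")
    return cost_per_participant / benefit_per_unit_effect


# Hypothetical inputs: $20,000 of benefit per 1.0 standard deviation (SD) of impact,
# and an intervention cost of $3,000 per participant.
BENEFIT_PER_SD = 20_000.0
COST = 3_000.0

threshold = break_even_effect_size(BENEFIT_PER_SD, COST)

# Sensitivity analysis: recompute net benefit across a range of plausible effect sizes.
candidate_effect_sizes = (0.05, 0.10, 0.15, 0.20, 0.30)
sensitivity = {
    es: net_benefit(es, BENEFIT_PER_SD, COST) for es in candidate_effect_sizes
}

print(f"Break-even effect size: {threshold:.2f} SD")
for es, nb in sensitivity.items():
    print(f"effect size {es:.2f} SD -> net benefit per participant ${nb:,.0f}")
```

If the break-even value is small relative to effects observed for comparable interventions, the conclusion is less sensitive to bias in the impact estimate; if it is large, the economic case depends heavily on evidence that may not be reliable.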

Placing the goal of using only the highest-quality, unbiased impact estimates in BCA and CEA in the context of real-world constraints and imperfections leads to several practical suggestions for economic analysts. First, seek impact evaluations conducted in accordance with the strongest research designs and best practices within a given field or discipline. Second, critically evaluate all design elements and methods used to estimate impact. Third, when available evidence is based on nonoptimal designs or impact estimates are likely to be biased, it may be possible to proceed with caution, including acknowledging possible bias and conducting sensitivity analyses on economic findings. Finally, if economic evaluations appear too speculative to be valid, the next best step may be to attempt a more rigorous efficacy analysis that produces higher-quality evidence of impact.

___________________

19 See the paper by Valentine and Konstantopoulos (2015) commissioned for this study, which emphasizes the importance of identifying all evidence about an intervention’s impact (published and not; positive, negative, and null) before conducting an economic evaluation to avoid publication bias.

CONCLUSION: The credibility of cost-effectiveness analyses, benefit-cost analyses, and related methods is enhanced when estimates of intervention impact are based on research designs shown to produce unbiased causal estimates. Meta-analysis may be used when impact estimates are available from multiple studies of the same or similar interventions. When evidence of impact comes from designs that may be biased because of selectivity or other methodological weaknesses, it is important for researchers to conduct sensitivity analyses to test the robustness of their findings to variation in effect size and to acknowledge the limitations of the underlying evidence base in their reports.
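As one illustration of how impact estimates from multiple studies might be combined, the sketch below pools effect sizes using inverse-variance (fixed-effect) weighting. This report does not prescribe a pooling method; fixed-effect weighting is simply a common choice used here for brevity, and a random-effects model may be more appropriate when the studies differ in population or implementation. The effect sizes and standard errors are hypothetical.

```python
import math


def pooled_effect(effects, standard_errors):
    """Inverse-variance weighted (fixed-effect) pooled estimate and its standard error."""
    if not effects or len(effects) != len(standard_errors):
        raise ValueError("effects and standard_errors must be non-empty and equal length")
    # Each study is weighted by the inverse of its sampling variance.
    weights = [1.0 / se ** 2 for se in standard_errors]
    total_weight = sum(weights)
    estimate = sum(w * e for w, e in zip(weights, effects)) / total_weight
    return estimate, math.sqrt(1.0 / total_weight)


# Hypothetical effect sizes (in standard deviation units) from three studies.
study_effects = [0.10, 0.25, 0.18]
study_ses = [0.05, 0.10, 0.08]

pooled, pooled_se = pooled_effect(study_effects, study_ses)

# Approximate 95 percent interval; its endpoints can serve as the low and high
# effect sizes in a sensitivity analysis of the economic findings.
low, high = pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se
print(f"pooled effect {pooled:.3f} (SE {pooled_se:.3f}), range {low:.3f} to {high:.3f}")
```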

VALUING OUTCOMES

To assess return on investment in an intervention, producers of economic evidence may choose CEA, BCA, or the related methods outlined in Chapter 2. In CEA, results are expressed as the cost per unit of a single outcome, such as dollars per life saved, dollars per incarceration prevented, or dollars per additional college graduate. Costs come from a CA and outcomes from an impact evaluation. As discussed above, the ideal is for outcomes to be linked causally to the intervention being subjected to CEA; when this is not the case, it is important to disclose the fact and to interpret results of the analysis with caution.
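The cost-per-unit calculation can be sketched as follows. The program names, costs, and outcome counts are hypothetical, and the sketch assumes that the outcome counts are causal impact estimates (that is, graduates beyond what would have occurred without the intervention).

```python
def cost_per_outcome(total_cost, outcome_units):
    """Cost-effectiveness ratio: dollars spent per unit of a single outcome."""
    if outcome_units <= 0:
        raise ValueError("outcome_units must be positive to form a ratio")
    return total_cost / outcome_units


# Hypothetical interventions that target the same outcome.
programs = {
    "Program A": {"total_cost": 1_200_000.0, "additional_graduates": 40},
    "Program B": {"total_cost": 900_000.0, "additional_graduates": 25},
}

ratios = {
    name: cost_per_outcome(p["total_cost"], p["additional_graduates"])
    for name, p in programs.items()
}

# List programs from lowest to highest cost per additional college graduate.
for name, ratio in sorted(ratios.items(), key=lambda item: item[1]):
    print(f"{name}: ${ratio:,.0f} per additional college graduate")
```

Because the ratio is defined for a single outcome, it supports comparison only among interventions measured on that same outcome.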

In contrast to CEA, BCA compares the economic value of an intervention’s outcomes, expressed in the selected monetary unit, with the costs of the intervention as determined by a CA. When the value of the outcomes exceeds the intervention costs (after both have been adjusted as necessary for inflation and discounting), an intervention can be said to be “cost-beneficial.” For example, a BCA of an obesity prevention intervention would involve estimating the economic gains, or benefits, from reducing obesity and determining whether they exceeded intervention investments (after both had been adjusted for inflation and discounting). As discussed earlier, comprehensive BCAs take a societal perspective, but some BCAs are conducted from a narrower perspective, such as that of the government or an agency. These narrower BCAs may under- or overstate both costs and benefits, a limitation that needs to be disclosed when results are communicated.
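The comparison of discounted benefits with discounted costs can be illustrated with a short sketch. It assumes that all amounts are already expressed in constant (inflation-adjusted) dollars and that year 0 is the year of the investment and is not discounted; both conventions, the 3 percent discount rate, and the dollar figures are assumptions made for illustration only.

```python
def present_value(stream, discount_rate):
    """Discount a yearly stream to the present; stream[y] is the amount in year y.

    Year 0 is treated as the present and is not discounted (an assumed convention).
    """
    return sum(amount / (1.0 + discount_rate) ** y for y, amount in enumerate(stream))


# Hypothetical per-participant streams in constant dollars.
costs = [5_000.0, 0.0, 0.0, 0.0]            # intervention delivered in year 0
benefits = [0.0, 2_000.0, 2_000.0, 2_000.0]  # benefits realized in years 1 to 3
DISCOUNT_RATE = 0.03

pv_costs = present_value(costs, DISCOUNT_RATE)
pv_benefits = present_value(benefits, DISCOUNT_RATE)

net_present_value = pv_benefits - pv_costs
benefit_cost_ratio = pv_benefits / pv_costs

print(f"PV of costs:        ${pv_costs:,.2f}")
print(f"PV of benefits:     ${pv_benefits:,.2f}")
print(f"Net present value:  ${net_present_value:,.2f}")
print(f"Benefit-cost ratio: {benefit_cost_ratio:.2f}")
```

Under these assumptions, the intervention would be described as cost-beneficial when the net present value is positive or, equivalently, when the benefit-cost ratio exceeds 1.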

BCA is an attractive method for interventions impacting multiple outcomes, as is common in interventions for children, youth, and families, because the dollar benefits of each outcome can be summed to produce an estimated total economic impact. Yet while BCA is a powerful tool that increasingly has become part of evidence-based decision making (White and VanLandingham, 2015), monetizing outcomes can be a complex and time-consuming process. Many organizations lack the capacity to undertake these analyses without consulting experts in the method. Moreover, the valuation of some outcomes (for example, a human life) can be controversial and lead to skepticism regarding the findings and conclusions of the analysis. Haddix and colleagues (2003) suggest this is why CEA became the dominant analytic method for economic evaluation in health care after the 1980s. In other fields concerned with positive development for children and youth, however, interest in BCA is growing.

Consistent with the overall goal of this chapter, this section addresses issues related to valuing intervention outcomes as part of a high-quality BCA (Boardman et al., 2011; Crowley et al., 2014; Karoly, 2012; Vining and Weimer, 2010). As the discussion proceeds, the economic value of the outcomes from an intervention is referred to as the resulting “benefits.” In practice, however, an intervention may generate some favorable outcomes that result in higher costs (e.g., an intervention that increases educational attainment adds education costs as an outcome), or an intervention may generate some unanticipated unfavorable outcomes that translate into higher costs (e.g., an increased use of special education rather than the expected decrease).

Typically, benefit streams are estimated over time and then discounted back to the present to reflect monetary time preferences according to the following formula:

$$\text{Present-value benefit} = \sum_{y} \frac{Q_y \times P_y}{(1 + d)^{y}}$$

The size of economic impact in each year ($Q_y$) and the price per unit of economic impact in each year ($P_y$) both need to be estimated and a discount rate ($d$) selected. The total present-value benefit is the sum of all intervention-related discounted benefit streams. Producing high-quality estimates requires careful attention to each component: quantity, price, changes in each over time, and discount rate. It also involves assessing the implications of uncertainty for the estimates and summary measures derived from the evaluation. This section describes issues involved in estimating economic impacts and their prices over time. The basic rationale for discounting was addressed earlier in the chapter, while uncertainty and summary measures are discussed in subsequent sections.
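A minimal sketch of this calculation, summing discounted quantity-times-price streams across several outcomes, is given below. The outcome names, quantities, prices, and discount rate are hypothetical, and the indexing convention (year 0 is the present and is not discounted) is an assumption rather than something specified in this report.

```python
def pv_benefit_stream(quantities, prices, discount_rate):
    """Present value of one benefit stream: sum over years of Q_y * P_y / (1 + d)^y.

    quantities[y] and prices[y] refer to year y; year 0 is not discounted
    (an assumed convention).
    """
    if len(quantities) != len(prices):
        raise ValueError("quantities and prices must cover the same years")
    return sum(
        q * p / (1.0 + discount_rate) ** y
        for y, (q, p) in enumerate(zip(quantities, prices))
    )


# Hypothetical per-participant streams for two outcomes over four years.
# Quantities are impacts per participant; prices are dollars per unit of impact.
outcome_streams = {
    "grade_repetition_avoided": {
        "quantities": [0.00, 0.02, 0.02, 0.01],
        "prices": [9_000.0, 9_000.0, 9_000.0, 9_000.0],
    },
    "additional_earnings_hours": {
        "quantities": [0.0, 0.0, 50.0, 80.0],
        "prices": [15.0, 15.0, 15.5, 16.0],  # price per unit may change over time
    },
}
DISCOUNT_RATE = 0.03

pv_by_outcome = {
    name: pv_benefit_stream(s["quantities"], s["prices"], DISCOUNT_RATE)
    for name, s in outcome_streams.items()
}

# Total present-value benefit is the sum across all discounted benefit streams.
total_pv_benefit = sum(pv_by_outcome.values())

for name, value in pv_by_outcome.items():
    print(f"{name}: ${value:,.2f}")
print(f"Total present-value benefit: ${total_pv_benefit:,.2f}")
```

Keeping quantity and price as separate yearly series makes it straightforward to vary either one, or the discount rate, when assessing uncertainty.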

Quantifying Outcomes for BCA

Undertaking a BCA requires several decisions with respect to the outcomes that result from the intervention being analyzed. As discussed below, these decisions include which outcomes to include in the analysis; whether the outcomes can be valued directly or linked to other outcomes with economic value; and what the appropriate time horizon for the analysis is, including whether outcomes can be projected beyond the point of last observation to capture expected future outcomes.

Which Impacts to Include in the Analysis

In conducting a BCA, the analyst needs to identify all outcomes impacted by the intervention and determine which ones to include in the analysis. BCAs typically incorporate a subset of outcomes because not all outcomes can be monetized. For example, interventions may impact the quality of parent-child relationships, but the economic consequences of these gains have not been studied. Other reasons for exclusion of outcomes relate to methodological shortcomings, as there may not be a satisfactory approach to or precedent for monetizing them.20 For instance, social and emotional learning outcomes, which may be instrumental in healthy development, generally are not included in BCAs, although research in this area is progressing (Belfield et al., 2015). There also may be too much uncertainty in how the outcomes are estimated, such as effects on populations not directly targeted by the intervention (Institute of Medicine and National Research Council, 2014; Karoly, 2012; Vining and Weimer, 2009c). Finally, another common factor in the choice of outcomes is convenience: analysts may focus on the outcomes that are easiest to work with or on those that are more relevant to their field of study or to the intended audience for the analysis (Institute of Medicine, 2006). An important consequence of the exclusion of outcomes for any of these reasons is potential underestimation of the economic benefits of an intervention (or overestimation, if excluded outcomes indicated harms from the intervention). BCA reports need to include information about which intervention outcomes were not included and why.21