1

Introduction

The United States is formally represented around the world by approximately 14,000 Foreign Service officers and other personnel in the U.S. Department of State. Roughly one-third of them are required to be proficient in the local languages of the countries to which they are posted. To achieve this language proficiency for its staff, the State Department’s Foreign Service Institute (FSI) provides intensive language instruction and assesses the proficiency of personnel before they are posted to a foreign country. The requirement for language proficiency is established in law and is incorporated in personnel decisions related to job placement, promotion, retention, and pay. FSI also tests the language proficiency of Foreign Service personnel from other U.S. government agencies and, as a point of information, of the spouses of Foreign Service officers.

BACKGROUND

Given recent developments in language assessment, FSI asked the National Academies of Sciences, Engineering, and Medicine to review the strengths and weaknesses of key assessment¹ approaches for assessing language proficiency² that would be relevant for its language test. In response, the National Academies formed the Committee on Foreign Language Assessment for the U.S. Foreign Service Institute to conduct the review; Box 1-1 contains the committee’s statement of task.

___________________

¹ Although in the testing field “assessment” generally suggests a broader range of approaches than “test,” in the FSI context both terms are applicable, and they are used interchangeably throughout this report.

FSI’s request was motivated by several considerations. First, although FSI’s assessment has been incrementally revised since it was developed in the 1950s, significant innovations in language assessment since that time go well beyond these revisions. Examples include the use of more complex or authentic assessment tasks, different applications of technology, and the collection of portfolios of language performances over different school or work settings. Second, in the FSI environment, questions have arisen about limitations or potential biases associated with the current testing program. Third, the nature of diplomacy and thus the work of Foreign Service officers have changed significantly in recent decades. These changes mean that the language skills required in embassy and consulate postings are different from those needed when the FSI test was developed. For example, transactions that once took place in person are now often conducted over email or by text, and television and the Internet are increasingly prominent sources of information. For these reasons, FSI wanted to take a fresh look at language testing options that are now available and that could be relevant to testing the language proficiency of Foreign Service officers.

THE COMMITTEE’S APPROACH

The committee’s charge includes questions about specific approaches to language assessment and their psychometric characteristics. In addressing this charge, the committee began from the fundamental position that choices of assessment methods and task types have to be understood and justified in the context of the ways that test scores are interpreted and used, not in the abstract. Similarly, concerns about fairness, reliability, and other psychometric characteristics should be addressed by evaluating the validity of an assessment for its intended use rather than in isolation from that use.

Thus, the committee began its deliberations by considering relatively new approaches for designing and developing language assessments that have been growing in use in the measurement field. These approaches are referred to as “principled” because they are grounded in the principles of evidentiary reasoning (see Mislevy and Haertel, 2006; National Research Council, 2001b, 2014). They begin with the context and intended use of scores for decision making and then assemble evidence and build an argument to show that the assessment provides reliable and valid information to make those decisions. The committee judged that these approaches would be useful for informing potential changes to the FSI test.

___________________

² This report uses the term “language proficiency” to refer specifically to second and foreign language proficiency, which is sometimes referred to in the research literature as “SFL” or “L2” proficiency. The report does not address the assessment of language proficiency of native speakers (e.g., as in an assessment of the reading or writing proficiency of U.S. high school students in English) except in the case of native speakers of languages other than English who need to certify their language proficiency in FSI’s testing program.

Likewise, the committee focused on FSI’s intended uses of the test to shape its review of the literature on assessment methods. Rather than starting with specific methods or task types—such as assessments that use task-based or performance-based methods, online test administration, or portfolios—the committee focused on the types of information that different methods might yield and how well that information aligned with intended uses. The committee’s analyses are in no way exhaustive because the charge did not include redesigning FSI’s assessment system. Instead, the committee identified some potential goals for strengthening the test and then considered the changes that might help achieve those goals.

FSI’s intended use specifically relates to a set of job-related tasks that are done by Foreign Service officers. To better understand the kinds of assessment methods that might be most relevant to this use, the committee sought information about the language tasks that officers perform on the job and the nature of the decisions that need to be made about test takers based on their language proficiency. Thus, one of the most important aspects of the committee’s information gathering was a series of discussions with FSI representatives about the current context and practice of language assessment in the agency. These discussions provided an analytical lens for the committee’s literature review. In addition, FSI provided an opportunity for many of the committee members to take the current test themselves.

In its review of the literature, the committee was also strongly influenced by the evolution of the understanding of language use and the implications of that understanding for the design of assessments. This evolution has heightened the appreciation of several common features of second language use, including the need to evaluate how examinees use language to communicate meanings, the integrated use of language across modalities, and the prevalence of situations in which multiple languages are used.

A PRINCIPLED APPROACH TO ASSESSMENT DESIGN AND VALIDATION

In this section, the committee provides a framework for thinking about how to develop, implement, and administer an assessment, and then monitor and evaluate the validity of its results; in FSI’s case, the assessment is one of language proficiency for Foreign Service officers. This framework is built on a “principled approach” to assessment design and validation. The section begins with a review of important measurement properties, followed by a more detailed explanation of what a principled approach involves.

Fundamental Measurement Properties

Assessment, at its core, is a process of reasoning from evidence. The evidence comes from a test taker’s performance on a set of assessment tasks. This performance serves as the basis for making inferences about the test taker’s proficiency in relation to the broader set of knowledge, skills, abilities, and other characteristics that are needed to perform the job and are the focus of the assessment. The process of collecting evidence to make inferences about what test takers know and can do is fundamental to all assessments. A test design and evaluation process seeks to ensure the quality of those inferences.

Validity is the paramount measurement property. Validity refers to “the degree to which evidence and theory support the interpretations of test scores entailed by proposed uses of tests” (American Educational Research Association et al., 2014, p. 11). Validation is the process of accumulating evidence to provide a sound basis for proposed interpretations and uses of the scores.

Reliability refers to the precision of the test scores. Reliability reflects the extent to which the scores remain consistent across different replications of the same testing procedure. Reliability is often evaluated empirically using the test data to calculate quantitative indices. There are several types of indices, and each provides a different kind of information about the precision of the scores, such as the extent to which scores remain consistent across independent testing sessions, across different assessment tasks, or across raters or examiners. These indices estimate reliability in relation to different factors, one factor at a time. Other approaches for looking at the consistency of test scores—referred to as “generalizability analyses”—can estimate the combined effect of these different factors (see Mislevy, 2018; Shavelson and Webb, 1991).
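As a rough illustration of the single-factor indices described above, the following Python sketch computes three commonly used ones: a test-retest correlation across two sessions, Cronbach's alpha across tasks, and Cohen's kappa across two raters. All scores are invented for illustration and are not FSI data, and the function and variable names are hypothetical. The sketch does not represent the committee's or FSI's actual analysis, and it does not attempt a generalizability analysis.

```python
from statistics import mean, pvariance


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def cronbach_alpha(scores):
    """Consistency across tasks; scores[i][j] is examinee i on task j."""
    k = len(scores[0])
    task_vars = [pvariance([row[j] for row in scores]) for j in range(k)]
    total_var = pvariance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(task_vars) / total_var)


def cohen_kappa(a, b):
    """Chance-corrected agreement between two raters' categorical ratings."""
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    levels = set(a) | set(b)
    expected = sum((a.count(v) / n) * (b.count(v) / n) for v in levels)
    return (observed - expected) / (1 - expected)


# Hypothetical data: six examinees tested in two independent sessions.
session_1 = [2, 3, 3, 4, 1, 2]
session_2 = [2, 3, 4, 4, 1, 3]

# Hypothetical data: four examinees scored on three tasks.
task_scores = [[2, 2, 3], [3, 3, 3], [1, 2, 1], [4, 3, 4]]

# Hypothetical data: two raters assigning levels to the same six performances.
rater_a = [2, 3, 3, 4, 1, 2]
rater_b = [2, 3, 4, 4, 1, 3]

print("test-retest r:", round(pearson_r(session_1, session_2), 3))
print("Cronbach's alpha:", round(cronbach_alpha(task_scores), 3))
print("Cohen's kappa:", round(cohen_kappa(rater_a, rater_b), 3))
```

Each function isolates one source of inconsistency (sessions, tasks, or raters), which is why an approach that estimates their combined effect requires a different kind of analysis.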

Fairness in the context of assessment covers a wide range of issues. The committee addresses it under the broad umbrella of validity, focusing on the validity of intended score interpretations and uses for individuals from different population groups. Ideally, scores obtained under the specified test administration procedures should have the same meaning for all test takers in the intended testing population.

The Concept of a Principled Approach to Test Development

Recent decades have seen an increasing use of approaches in general assessment that have become known as “principled” (Ferrara et al., 2017). A principled approach relies on evidentiary reasoning to connect the various pieces of assessment design and use. That is, it explicitly connects the design for assessment tasks to the performances that those tasks are intended to elicit from a test taker, to the scores derived from those performances, and to the meaning of those scores that informs their use. A principled approach specifically focuses on “validity arguments that support intended score interpretations and uses and development of empirical evidence to support those score interpretations and uses throughout the design, development, and implementation process” (Ferrara et al., 2016, p. 42).

The use of a principled approach to test development is intended to improve the design of assessments and their use so that the inferences based on test scores are valid, reliable, and fair. The foundations behind the use of a principled approach are detailed in Knowing What Students Know (National Research Council, 2001b, Ch. 5). Most notably, the use of a principled approach intertwines models of cognition and learning with models of measurement, which govern the design of assessments.

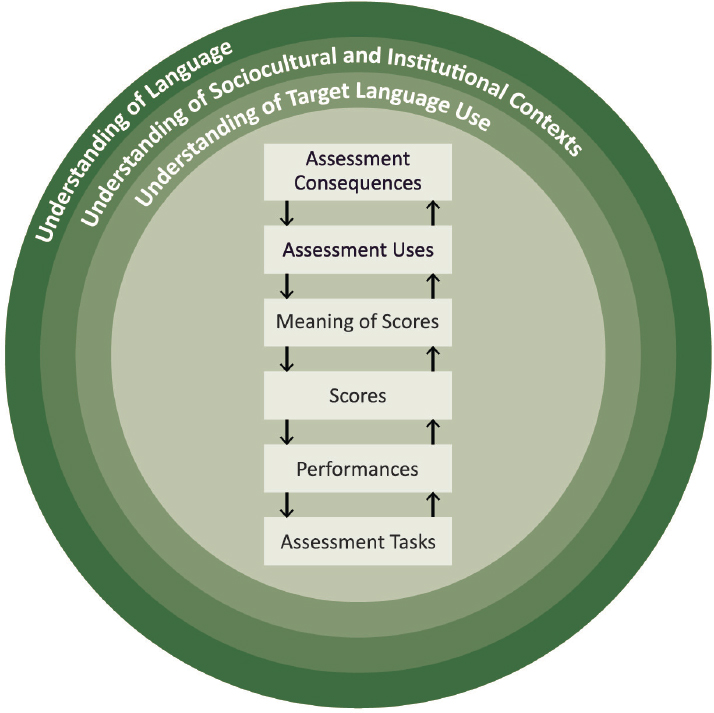

Figure 1-1, developed by the committee using ideas discussed by Bachman (2005), Bachman and Palmer (2010), and Kelly et al. (2018), depicts a set of key considerations involved in language assessment design that are emphasized by a principled approach. The assessment and its use are in the center of the figure, with the boxes and arrows illustrating the logic of test development and validation. Although the arrows suggest a rough ordering of the processes, they are inevitably iterative as ideas are tried, tested, and revised. Surrounding the test and its use, the figure shows the foundational considerations that guide language test development in three rings. Specifically, these rings reflect the test developer’s understanding of language, the sociocultural and institutional contexts for the assessment, and the target language use domain that is the focus of the assessment.

Developed in light of these foundational considerations, the assessment contains tasks that elicit performances, which are evaluated to produce scores, which are interpreted as indicators of proficiency, which are then used in various ways. The decision to use these scores for any given purpose carries consequences for the test takers and many others. This chain of relationships is fundamental to understanding the design of an assessment and the validity of interpreting its results as a reflection of test takers’ language proficiency. The validation process collects evidence about these various relationships.

Figure 1-1 is used to structure the report. Chapter 2 describes the FSI context and current test, which reflects all aspects of the figure. Chapter 3 addresses a set of relevant concepts and techniques for understanding the three rings surrounding the test. Chapter 4 addresses possible changes to the tasks, performances, and scoring of the current FSI test. Chapter 5 addresses considerations related to the meaning of the test scores and the way they map onto uses and consequences. Chapter 6 then discusses the validity arguments that concern the relationships of the elements in the figure. Finally, the report closes by considering how to balance limited time and resources between evaluation to understand how the current test is doing and the implementation of new approaches.

FSI’s request to the National Academies was framed in the context of FSI’s testing needs, and the field’s principled approaches specifically direct attention toward the context and intended use for an assessment. As a result, many of the details of the report are necessarily geared toward the context of FSI’s language assessment needs. Despite this focus, however, the committee hopes the report will be useful to other organizations with language testing programs, both in government language testing and in the larger community. The lessons related to the need for building from a clear argument within the context of test use to assessment design are applicable to all testing programs, even if the specifics of the discussion in this report relate primarily to FSI. In addition, the range of possible design choices for an assessment program is similar across programs, even if the specific contexts of different programs will affect which of those choices may be most appropriate.