6

Workshop Three, Part Two

HAVE TRANSFORMATIONAL THINKING AND ARCHITECTURES USED SPEED AS AN OBJECTIVE?

Mr. John Stenbit, Viasat board of directors and former Assistant Secretary of Defense for Networks and Information Integration/Department of Defense Chief Information Officer, underscored that the government is too risk-averse and backward-looking. Resistance and a desire for consensus impact time, potentially delaying the deployment of available technologies.

He explained that when scarcity turns into abundance, it is crucial to move that transformation into the future quickly instead of watching and waiting. He suggested that this approach be considered specifically for artificial intelligence (AI). Making predictions is difficult with an “unknown” technology such as AI; however, a mechanism to balance risk, reward, and rapidity would be beneficial. He emphasized the value of gaining first-maneuver advantage because it enables faster learning and leads to the formation of a group of people that can exchange ideas. Access to information is the key to success, he continued, for which time is a vital component.

Mr. Stenbit presented two 2 x 2 matrices to describe several “dearth-to-abundance” changes that occurred during his career and highlighted the value of taking advantage of these transitions when they arise. The first example that he provided related to the concept of the synchronization of time and space—it is possible to be synchronous in both time and space, synchronous in time but asynchronous in space, synchronous in space but asynchronous in time, or asynchronous in both time and space. When he worked at the Pentagon, the Department of Defense (DoD) was synchronous in both time and space, and the dominant informational model was the telephone. The second matrix that he presented included “smart versus dumb” and “push versus pull,” concepts that relate to the organizing principle of how information is shared. Using the telephone for communication was expensive in terms of processing, memory, and bandwidth. The “smart push” related to whether the information was interesting, being filtered appropriately, and being shared with the right people. That process was synchronous in both time and space. He described the experience of Lloyd Bucher, Commander of the U.S.S. Pueblo, whose ship was attacked and captured (along with classified documents and equipment) in North Korea in 1968. The information that he shared about the pending attack was intercepted within only 30 hours by the North Koreans, while it took DoD 4 days to determine that they could have sent F-4s to intervene. Mr. Stenbit described this as a time of strategic chaos.

A new system for communication, the broadcast system, eventually emerged, which represented both a smart push and a smart pull. Military broadcasting could take advantage of Moore’s Law, which has made both processing and memory inexpensive (although bandwidth remains expensive). Messages were sent once and multiple people could be listening, he explained, which created an information integration system. The National Reconnaissance Office and the U.S. Air Force (USAF) engaged the Tactical Exploitation of National Capabilities and began to obtain real-time information, which was the epitome of a smart push: Important information was relayed to the National Security Agency or the National Geospatial-Intelligence Agency to be processed and sent forward. This created a flexible way to change an organizational structure as long as someone knew to which channel to listen and had enough processing to assimilate it. This process was synchronous in time but asynchronous in space because people had to be listening at the right time, as a message was only sent once.

The first time that this system was used in command and control (C2) was during a 1975 evacuation from Lebanon. An ultrahigh frequency satellite broadcast (i.e., push to talk) connected the Pentagon with the U.S. Navy in Europe. During the evacuation communications, the system became overwhelmed owing to a conversation about one person who was refusing to evacuate without her pet, which was of little interest to those at the Pentagon. It became clear that transitioning to this new system required additional work to make things run more consistently. Mr. Stenbit stressed that strategy enables a movement from scarcity to abundance in order to gain speed; it is insufficient merely to complete the wiring and the programming. He highlighted a fundamental change for the military that resulted from this new communication system. When telephones were the main source of communication, the person who found the target had to be the person to shoot it because no other means existed to coordinate an attack. However, when satellite broadcast technology was adopted during the first Gulf War, anyone who saw a target broadcast it, and anyone who was in the geographic region listening to the broadcast could shoot the target.

Mr. Stenbit described the challenge of dealing with the bureaucratic issues surrounding control of a country’s covert assets. Secretary Rumsfeld deployed approximately one dozen forward air controllers with the Northern Alliance in Afghanistan. The Global Positioning System (GPS) was crucial to hit targets because everything was interconnected by the broadcast system. This is an example of a successful situation that was asynchronous in space but synchronous in time. When this approach was used in the first Gulf War, enough information had been shared such that all of the Iraqi tanks were attacked safely from behind, a point at which they could not return fire. He emphasized that this type of real information integration only happens when there is a mechanism to be synchronous in time and asynchronous in space. He cautioned that this technique may not be successful when too many people are involved in the decision loop: just because information is obtained does not mean that it will be useful, which is an important consideration for AI.

In the 1980s and 1990s, Mr. Stenbit continued, bandwidth became less expensive and lasers and fibers became prominent. Because lasers have short ranges, the use of this technology cannot be asynchronous in space. Thus, even though bandwidth was inexpensive and could be wasted, the flexibility of space was lost. He explained that streaming video is the modern equivalent of fiber technology. Streaming video creates a situation that is asynchronous in time. Mr. Stenbit tried to produce a network using inexpensive and relevant bandwidth in the early 2000s. The objective was for everyone to be attached to a backbone, which could create a situation that would be asynchronous in both time and space. The ground network worked well for DoD and the intelligence community; however, connectivity to those in theater was unsuccessful.

With low-cost processing and memory, Mr. Stenbit remarked that data centers could be used to dynamically allocate processing. Prior to 9/11, the General Counsel under President Clinton decided that the intelligence community and law enforcement/military could not share information so as to protect the “chain of evidence” and control methods and techniques. After 9/11, this rule was revoked and information sharing occurred more frequently. He described a program in Las Vegas that used sophisticated facial recognition to track casino winners, which has since been used for the Non-Obvious Relationship Analysis. Having all 350 million people in the United States in this system would require the collection of approximately 1.5 billion actual identities until they started to converge. He reiterated the power of data centers in “wasting” what is inexpensive (e.g., bandwidth) to move from scarcity to abundance. He explained that fiber is not the only way to cheapen bandwidth: Reusing frequency and satellites makes it possible to transmit data at high rates. Frequency reuse in space is enabled by the application of Moore’s Law to the radiofrequency (RF) components of a satellite communication system. Engaging in frequency reuse in space, Viasat’s first satellite was launched in 2011. It had 150 Gb/sec in capacity, which cost $1 billion. Inmarsat simultaneously launched its system of three satellites, which cost approximately $2.5 billion for 30 Gb/sec. Viasat reused the frequencies more than Inmarsat did and now plans to launch three additional satellites with worldwide coverage and 1,000 Gb each within the next 18 months. This will collect 3 Tb of data worldwide for $2 billion. He noted that the U.S. Navy may need more high-speed communications over the Pacific Ocean, perhaps via Viasat satellite. He noted that someone has to have spent the capital to take something that used to be very expensive and create what is in effect an overabundance, which presents a challenge when the Pentagon is involved. Making a comparison to the government’s decision to purchase the production of COVID-19 vaccines prior to U.S. Food and Drug Administration approval, Mr. Stenbit said that people spend money to create technologies in the information business that other people buy, using a mentality of waste.
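The capital-cost comparison between the Viasat and Inmarsat systems quoted above can be made explicit with a little arithmetic. The sketch below is illustrative only: the function name is hypothetical, and it assumes that the planned satellites' "1,000 Gb each" refers to capacity in Gb/sec, which the text does not state outright.

```python
def cost_per_gbps(cost_usd: float, capacity_gbps: float) -> float:
    """Return capital cost in dollars per Gb/sec of capacity."""
    return cost_usd / capacity_gbps

# Figures as quoted in the workshop summary.
viasat_first = cost_per_gbps(1.0e9, 150)    # $1 billion for 150 Gb/sec
inmarsat = cost_per_gbps(2.5e9, 30)         # $2.5 billion for 30 Gb/sec
# Assumption: three planned satellites at 1,000 Gb/sec each for $2 billion total.
viasat_planned = cost_per_gbps(2.0e9, 3 * 1000)

print(f"Viasat (2011):    ${viasat_first / 1e6:.1f} million per Gb/sec")
print(f"Inmarsat:         ${inmarsat / 1e6:.1f} million per Gb/sec")
print(f"Viasat (planned): ${viasat_planned / 1e6:.2f} million per Gb/sec")
print(f"Inmarsat / Viasat (2011) cost ratio: {inmarsat / viasat_first:.1f}x")
```

Under these assumptions, the quoted figures imply roughly an order-of-magnitude difference in cost per unit of capacity between the two systems, and a further order-of-magnitude drop for the planned satellites.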

He then discussed DoD’s use of the Defense Satellite Communications System (DSCS) I, DSCS II, and DSCS III. DSCS III had a lens antenna instead of two large satellite dishes—the large dishes were capable of wider bandwidth and better anti-jamming, but the lens offered more flexibility in terms of reuse and reallocation (which was especially important given the shortage of DSCS IIIs). The Wideband Gapfiller program, the replacement for DSCS III, was a version of an Earth coverage satellite in which the terminals communicated. It was channelized but did not reuse frequencies; instead, frequencies were allocated within the satellite to various paths. Mr. Stenbit described an important near-term opportunity: The USAF is interested in Link 16, which he described as the bridge between the satellites and the mobile forces that cannot communicate directly. It has 100 Mb of frequency, but one only gets 100 kb because it is a time division multiple access network; if more data are needed, more slots are obtained. He added that the USAF would benefit from assigning one person to pull all of the information systems together but not necessarily control them.
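The relationship between the shared channel and what a single user receives on a time division multiple access (TDMA) network can be sketched as simple proportional sharing. This is a minimal illustration, not a model of Link 16 itself: the frame size of 1,000 slots is a hypothetical value chosen only so that one slot reproduces the 100 Mb to 100 kb ratio quoted above, and the quoted figures are read here as rates per second.

```python
def tdma_user_rate(channel_rate_bps: float, slots_per_frame: int,
                   slots_assigned: int) -> float:
    """Rate available to one user who holds some of the slots in each frame."""
    if not 0 <= slots_assigned <= slots_per_frame:
        raise ValueError("slots_assigned must be between 0 and slots_per_frame")
    return channel_rate_bps * slots_assigned / slots_per_frame

CHANNEL_RATE = 100e6      # "100 Mb", read as 100 Mb/sec (assumption)
SLOTS_PER_FRAME = 1000    # hypothetical frame size, not Link 16's actual structure

one_slot = tdma_user_rate(CHANNEL_RATE, SLOTS_PER_FRAME, 1)
four_slots = tdma_user_rate(CHANNEL_RATE, SLOTS_PER_FRAME, 4)

print(f"1 slot:  {one_slot / 1e3:.0f} kb/sec")
print(f"4 slots: {four_slots / 1e3:.0f} kb/sec")
```

The point of the sketch is the one made in the text: a user who needs more data obtains more slots, and the rate scales with the share of the frame that user holds.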

In closing, Mr. Stenbit said that it is difficult to do coherent acquisition when two groups are buying program elements that do not match (e.g., the USAF buying terminals and the Space Command buying satellites). He emphasized the value of planning for the future. Workshop Series chair and Workshop Three chair Ms. Deborah Westphal, chairman of the board, Toffler Associates, asked about some of the issues that have emerged as the USAF and DoD try to use advanced technology (e.g., AI and machine learning) to push information to the edges, especially amidst organizational stovepipes. Mr. Stenbit explained that if everyone posts everything to the Internet, and everyone changes their searches based on the current mission, a good browser helps to handle the problem. Singapore has the best system, he continued. He noted that people using browsers need help to find what they are looking for in the United States. He suggested that a free, automatic thesaurus for searches be added to browsers. He mentioned how frustrating it was that DoD and the intelligence community would not use the same time standard or geography standard. Problems do not arise owing to amount of bandwidth or size of data centers; they arise when people are not willing to cede control. If DoD adopts modern information technology, Mr. Stenbit continued, the current role of mid-level managers would drastically change. He emphasized that information is the area in which time gives the most advantage.

Dr. Michael Yarymovych, president, Sarasota Space Associates, pointed out that broadcast (i.e., synchronous) satellites create a several-second response delay. He commented that if the objective is to achieve instant communications or shorter time cycles, it may be necessary to work with low-altitude satellites, a network of which can reach the whole world almost instantly. Mr. Stenbit cautioned that satellites run into each other and noted that the cost of capital of a low-flying constellation is five times that of a high-flying constellation. He explained that low-flying satellites are best for reconnaissance and information gathering, which can be done instantaneously. He added that everyone knows how to defeat the current broadcast technology; Level 3 of the Internet of Satellites interoperability is the next step. He advocated for the full use of IP, in which people post the information that they have and others determine whether it is useful. Gen. Gregory “Speedy” Martin (USAF, ret.), GS Martin Consulting, Inc., said that when people start thinking of platforms as nodes, the primacy of the electromagnetic environment (the critical domain for warfighting) becomes a different mindset (the driver instead of the enabler) that the organizations are not set up to handle. Mr. Stenbit stressed that an IP-based system would allow independence in how the information is obtained. A distributed system with IP standards, time standards, and space standards would also be beneficial. To save time, he continued, DoD could rent GoogleSat¹ instead of using the Transformational Satellite Communications System. A clear rationale and clear standards can enable a focus on AI; the goal is to minimize maximum regret instead of optimizing the outcome.

___________________

¹ Google sold its Terra Bella and SkySat satellite constellation to Planet Labs in 2017. “As part of the sale, Google acquired an equity stake in Planet and entered into a multi-year agreement to purchase SkySat imaging data” (Wikipedia, 2021, Planet Labs, https://en.wikipedia.org/wiki/Planet_Labs, accessed January 27, 2021).
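The criterion of minimizing maximum regret, mentioned at the close of Mr. Stenbit's discussion, can be illustrated with a small decision table. The sketch below is a generic textbook form of the minimax-regret rule, not a method attributed to the speaker; the actions, scenarios, and payoff numbers are entirely hypothetical.

```python
def minimax_regret(payoffs: dict[str, list[float]]) -> tuple[str, dict[str, float]]:
    """Pick the action whose worst-case regret is smallest.

    payoffs maps each action to its payoff under each scenario (same order).
    Regret in a scenario = best achievable payoff there - this action's payoff.
    """
    n_scenarios = len(next(iter(payoffs.values())))
    best = [max(p[s] for p in payoffs.values()) for s in range(n_scenarios)]
    max_regret = {
        action: max(best[s] - p[s] for s in range(n_scenarios))
        for action, p in payoffs.items()
    }
    choice = min(max_regret, key=max_regret.get)
    return choice, max_regret

# Hypothetical payoffs under two scenarios: (technology matures, technology stalls).
payoffs = {
    "wait": [2, 5],
    "pilot": [6, 4],
    "full_deploy": [10, -3],
}

choice, regrets = minimax_regret(payoffs)
print(choice, regrets)
```

In this made-up table, a rule that chases the best possible outcome would pick `full_deploy`, whereas the minimax-regret rule picks `pilot` because its worst-case shortfall relative to hindsight is the smallest.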

WHY QUANTUM MATTER WILL MATTER, OR TIME IN A BOTTLE

Mr. Bo Ewald, president and chief executive officer of ColdQuanta, Inc., described the three waves of the Information Age: Electronics, Photonics, and Quantum. Starting in 1900, it was possible to harness electrons. This enabled the digital age, which began in the 1940s with the invention of the transistor. Prior to that, relays and vacuum tubes were the dominant technology. Starting in 1960, it became possible to harness photons and distribute them via fiber optics. This era ushered in a new wave of technologies including gyroscopes, instruments for eye surgery, and compact discs. In 2000, it became possible to control and use quantum mechanical properties, building on electronics and photonics waves.

With the Quantum Wave under way, there is tremendous promise for quantum technologies, including many applications beyond quantum computing (which was first discussed in 1983)—for example, communications, networks, imaging, medical imaging, sensing, timekeeping, positioning, gravimetry, magnetometry, and signal processing. Quantum technology could be used for drug discovery; unhackable time and location information; RF communications and radar; and AI and machine learning. He explained that quantum technology could solve high-value problems that conventional technology cannot. It may enable doing things better, faster, and cheaper as well as help to measure time more accurately and rapidly than is possible today.

While much hype surrounds quantum computing, Mr. Ewald continued, it is only the tip of the quantum-information iceberg. For example, although quantum computing could be helpful for DoD-specific problems in the future, quantum sensing may be more important in the near term. It may be possible to create better clocks and navigation devices that are portable, do not rely on satellites, and can survive GPS-denied environments. With RF sensing, it may be possible to listen to signals that are 100–1,000 times fainter than can be heard today and identify their location. Quantum communication may offer stealthy, secure communication paths. However, the fusion of various sensors may not come to fruition in this lifetime.

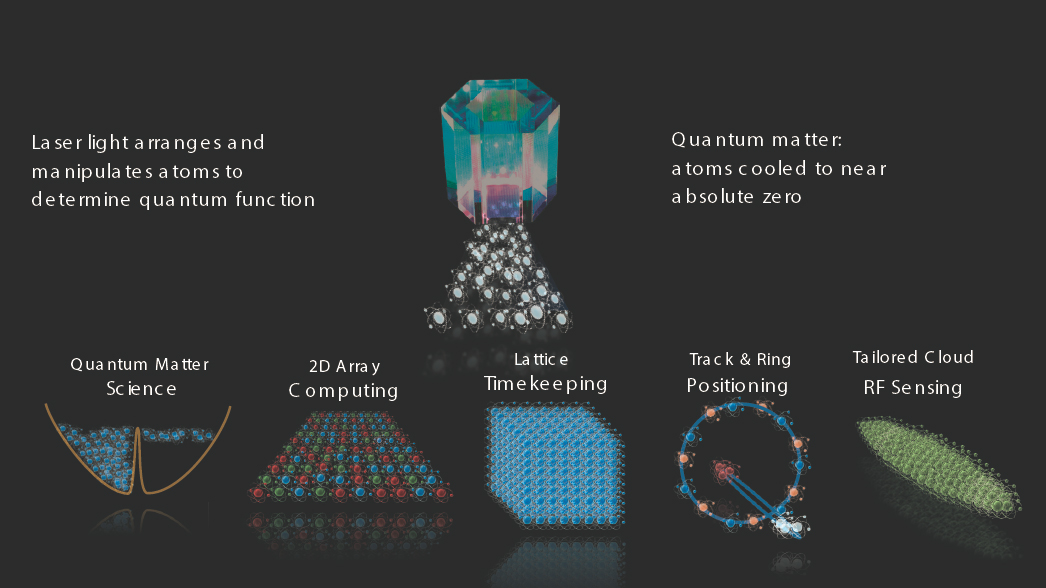

Mr. Ewald indicated that ColdQuanta is accelerating its work in quantum sensing and is focusing on harnessing atoms, just as previous waves of the information age harnessed electrons and photons. ColdQuanta applies Cold Atom Technology to build quantum devices. Cold Atom Technology relies on the Bose-Einstein condensate, predicted in the 1920s: if the motion of atoms can be nearly stopped, they become very cold and create a new form of matter, quantum matter. ColdQuanta starts with a small glass cell (1” × 1” × 2”); one end is a high vacuum pump, and the other end is a source of atoms. The cell is evacuated and atoms of a particular species are placed in the cell. Lasers cool these atoms down to temperatures less than 1 billionth of a degree above absolute zero with no refrigeration equipment, and another set of lasers is used to manipulate them to create different products. Lasers provide precise control over the quantum properties of atoms, enabling everything from atomic timekeeping to quantum logic. One core technology can lead to multiple product families, such as two-dimensional (2D) array computing, lattice timekeeping in atomic clocks, track and ring positioning devices, and tailored cloud RF sensing detectors (see Figure 6.1).

Gen. Martin asked if this process is scalable to larger objects. Mr. Ewald said that within one glass cell, individual atoms can be manipulated by high-quality lasers that are typically bought close to off-the-shelf. It is a photonics issue, not a manufacturing issue: Mother Nature makes all of the atoms, and they are all identical. Gen. Martin wondered whether the atoms stay in that state, or if they require the aimed lasers to keep them in the state and to manipulate them to do something of value. Mr. Ewald replied that some work has to be done to prevent the atoms from falling due to gravity: Once the atoms are put in that state, they have to be supported, kept cold, and manipulated.

Two devices have been launched to the International Space Station (ISS) via National Aeronautics and Space Administration (NASA)/Jet Propulsion Laboratory programs. ColdQuanta created the Bose-Einstein condensate machine; NASA packaged it; and it was space hardened, launched, and has been in operation on the ISS for approximately 2 years, making it the first quantum experiment performed in space. It ran for 18 months, controlled both by people on the ISS and from Earth via the cloud. The second system was launched in December 2019 and is the first quantum sensor in space, a quantum gravimeter. The purpose of putting a quantum sensor in space was to determine the possibility of more accurately measuring gravity while circling Earth on the ISS, and the technology has been successful.

Mr. Ewald explained that GPS has become a global distributor of time that permeates every aspect of modern life. It is used not only for mapping but also by and for banks, timekeeping, stock exchanges, autonomous vehicles, power grids, shopping, point of sale, and transport. However, GPS is highly vulnerable to denial-of-service, jamming, and spoofing, creating high-priority challenges for both military and commercial applications. Quantum Positioning Systems (QPS) could eliminate these vulnerabilities and enhance the accuracy of certain applications. For QPS, one would create an inertial navigation system with a clock and an inertial measurement unit, the latter of which contains an accelerometer and a gyroscope. He commented that cold atom technology could be used to build these and, within a few years, timekeeping and positioning could be at least as accurate as today’s GPS-distributed time and positioning.
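How a clock, an accelerometer, and a gyroscope combine into an inertial navigation system can be sketched with a simple two-dimensional dead-reckoning loop. This is a generic illustration under stated assumptions (flat 2D motion, a fixed clock tick `dt`, idealized sensor readings); it is not ColdQuanta's design, and the function and variable names are hypothetical.

```python
import math

def dead_reckon(samples, dt, x=0.0, y=0.0, vx=0.0, vy=0.0, heading=0.0):
    """Integrate IMU samples into a position estimate without any satellite fix.

    samples: iterable of (forward_accel_m_s2, yaw_rate_rad_s) readings,
             one per clock tick of length dt seconds.
    """
    for forward_accel, yaw_rate in samples:
        heading += yaw_rate * dt                   # gyroscope: update heading
        ax = forward_accel * math.cos(heading)     # accelerometer: rotate into
        ay = forward_accel * math.sin(heading)     # the fixed navigation frame
        vx += ax * dt                              # clock: integrate to velocity
        vy += ay * dt
        x += vx * dt                               # and again to position
        y += vy * dt
    return x, y, heading

# Straight-line run: 1 m/s^2 forward for 10 one-second ticks, no turning.
x_true, y_true, _ = dead_reckon([(1.0, 0.0)] * 10, dt=1.0)

# A stationary platform whose accelerometer reads a small constant bias.
x_drift, _, _ = dead_reckon([(0.01, 0.0)] * 100, dt=1.0)

print(x_true, y_true)   # position after the straight-line run
print(x_drift)          # position error accumulated from bias alone
```

Because the readings are integrated twice, even a small sensor bias grows into a large position error over time, which is one reason the accuracy of the clock and inertial sensors matters so much for navigation that does not rely on GPS.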

He discussed a project funded by the Air Force Research Laboratory on portable and ruggedized instant-on atomic clocks for GPS-denied environments. A prototype is expected to be functional by summer 2021. A second prototype could have the same accuracy but with better size, weight, and power. A third prototype (which would come out of a program not yet awarded) could have two orders of magnitude better accuracy. ColdQuanta is developing microwave clocks (i.e., instant-on clocks for GPS-denied environments) and optical clocks (i.e., always-on, accurate clocks for mobile applications) with prototypes forthcoming.

Another potential important DoD and civilian application is quantum signal processing. Mr. Ewald noted that this technology would improve upon digital signal processing because it would be able to pick up fainter signals, determine their origin accurately, and cover a broader spectrum without having hundreds of antennas. Military applications could include the ability to detect multiple frequencies of a target and of a moving target over time. Civilian applications could include the ability to field a spacecraft to listen to the universe without cryogenic coolers in space.

The quantum information chain is another application that could emerge in the future. In the current modern information chain—for example, in the case of interactive smartphones—information is sensed and signal processing takes place, but if a computer is not powerful enough or local decisions are not being made on what is sensed, the communication may be sent to the cloud for an answer. While that works sufficiently for some consumer devices, he continued, it is not useful for many other applications. The quantum information chain includes quantum signals, quantum sensors, and quantum signal processing. Those processed signals would be sent to a regular computer or a quantum computer. An autonomous vehicle has billions of inputs, so it is not possible to wait to send them to a computer for analysis. He stated that QPS eliminates reliance on satellite GPS and is “spoof proof”; quantum computing via the cloud provides route optimization; and QPS-aided AI gathers data from nearby vehicles and the environment to recognize impending dangers and determine the immediate best course of action. Similar scenarios apply to military and commercial vehicles in the air and sea. He emphasized that, with the example of autonomous vehicles, it is incredibly difficult to collect all of the information within a scene to be able to make real-time decisions (e.g., a pedestrian between two cars will not be seen), so there is much more work to be done to improve these technologies.

In response to a question from Dr. Brendan Godfrey, visiting senior research scientist, University of Maryland, about whether quantum radar exists, Mr. Ewald explained that quantum technology can detect RF signals that can be tracked as they move. If a device is emanating signals, it may be possible to track the incoming device. However, “radar” is not the right word to describe that process. Dr. Rama Chellappa, Bloomberg Distinguished Professor, Departments of Electrical and Computer Engineering and Biomedical Engineering, Johns Hopkins University, asked about how lessons learned from previous cycles of technology development (e.g., optical computing, molecular computing) inform today’s work. He also inquired about the market for a specialized quantum computer. Mr. Ewald detailed one of the most important lessons learned: If an architecture cannot run user applications, it is worthless. Customers always ask the same three questions: Can you run my application, or can I run my application on your computer? How fast? What does it cost? Over the past 10 years, people have also begun to ask how much energy the process consumes. He noted that optimization problems are the easiest for quantum computers to solve. There is also an application for the optimization-like protocol underlying many search and machine learning applications. He pointed out that the area with greatest potential is material science—the ability to model individual and industrial-strength molecules and design new ones from scratch.

Dr. George Coyle, senior program officer, National Academies of Sciences, Engineering, and Medicine, observed that quantum technology could fit in many areas of the Advanced Battle Management System (e.g., from 5G to the communication network, sensors, and compute to process large volumes of data). DoD and the intelligence community are interested in this because quantum computers can easily break conventional encryption keys. He wondered if it is possible to make keys that would be difficult to break, not only from conventional computers but also from other quantum computers. Mr. Ewald responded that, in terms of code breaking, the ability to run Shor’s algorithm on a quantum computer remains in the distant future. The machines need far more qubits as well as the capability for error correction. He suggested that, in the future, it could be possible to create a new encryption technique that is not key-based, could be used repeatedly, and is unbreakable by digital computers. He indicated that quantum machines are probabilistic, not deterministic, so if one were trying to decrypt codes, one might come up with a probability. He emphasized that there is certainly more work to be done in this area.

Ms. Westphal wondered what questions investors ask about quantum technology. Mr. Ewald replied that they do not ask about the physics or about quantum mechanics. They ask more business-focused questions such as why ColdQuanta works on several technologies instead of only one (i.e., quantum computing). He remarked that an appreciation does not yet exist for the breadth of the quantum sensing market. Quantum sensing will likely be more quickly profitable to businesses than quantum computing. Lt. Gen. Ted Bowlds (USAF, ret.), chief technology officer, IAI North America, asked when a useful machine or application could be available, and Mr. Ewald stated that a practical application of computing may be available within the next 5 years. He added that a range of hundreds of qubits is needed before having the ability to attack small, real-world problems.

SPEED AND DISASTER MANAGEMENT: ACHIEVING OUTCOMES IN TIME TO MAKE A DIFFERENCE

Mr. David Kaufman, vice president for safety and security, CNA Corporation, explained that disaster management in the United States is a distributed networked enterprise, operating at all levels of government and across all sectors of society (e.g., public, private, and civic). It falls under police powers reserved to the states and the people, and the federal government is always in a support role. The playing field has many autonomous actors (none of whom report to anyone) trying to achieve unity of effect without unity of command. A lack of capacity and information often hinders federal disaster response. He indicated that time matters in disaster management, and the bigger and more complex the event, the more important it becomes to stabilize quickly (e.g., within 72 hours). He examined the responses to five major disasters over the past decade to illuminate how to achieve outcomes in time to make a difference.

In the Deepwater Horizon Oil Spill in 2010, the technical capabilities needed to respond to the disaster were not available from the public sector. There were insufficient quantities of oil containment resources owned by the government in aggregate to deal with the event, so the public sector had to rely on and engage containment capabilities of private industry as well. There was also a lack of technical expertise in any government agency that was needed to cap the well, which continued to gush at the bottom of the Gulf of Mexico for 87 days. Industry had to be engaged to address this problem. Mr. Kaufman explained that the government was navigating a complex set of dynamics in working with the same industry that caused the disaster to address its consequences.

During Hurricane Sandy in 2012, more than 100 million Americans suffered extreme weather. Critical fuel shortages spanned the Northeast, 25 million people were without power for up to 2 weeks, and many New York City roads and subway tunnels were flooded. Mr. Kaufman stated that the Federal Emergency Management Agency (FEMA) assigned the Defense Logistics Agency to truck fuel in to refuel first responders’ vehicles in Manhattan. That mission quickly diverted to an attempt to provide fuel to the public writ large. Over 2 weeks of operating at full capacity, the federal government was only able to provide a very small fraction of daily demand for gas in New York City, so it was not able to address the problem. He noted that the government lacked an understanding of the fuel system as a whole in the Northeast, and it lacked a rapid diagnostic capability to identify the root cause of the fuel shortages. It spent a significant amount of time (2 weeks) chasing the problem, with both operational and political ramifications. Capabilities deployed through direct action (e.g., fuel push) were grossly insufficient to meet the need, he continued. In contrast, to address the sustained power outages, the government did not try to solve the power problem directly; instead, it looked for ways to act as an accelerant of capacity that existed in other places. This approach was effective: approximately 500 crews from utility companies across the country were transported to the Northeast.

Hurricane Harvey in 2017 brought an unprecedented 50–60 inches of rainfall across the Houston-Galveston region, which made this a life-saving mission. Transportation routes were severed, islanding populations and preventing the inflow of additional response assets. Mr. Kaufman mentioned that this event was not just about the volume of water in the region; it was about the demographic change in the region that occurred prior to the disaster. Harris County (where Houston is located), the fourth largest county in the United States, grew by 35 percent between 2000 and 2017, and many people lived in floodplain areas because there was virtually no code or standard for building. There were simply not enough rescue capabilities to deal with the number of people trapped and whose lives were at risk from rising floodwaters. Harris County Judge Ed Emmett called on citizens with boats to help with the rescue missions, which enabled thousands more lives to be saved. Despite the serious liability issues associated with these actions, Mr. Kaufman commented, this is an example of successfully reaching beyond traditional resource channels to expand capacity to achieve the desired outcome.

Hurricane Maria made landfall in Puerto Rico the following month, only a few weeks after Hurricane Irma had knocked the power offline for approximately 1 million people. Requests for food filtered in, and this became the largest food mission in U.S. history, Mr. Kaufman explained. The goal was to deliver 6 million meals per day to the island, which had a population of 3.4 million people. Over 6 months, FEMA delivered 60 million meals (only about 5 percent of the need), most of which were concentrated in the densely populated San Juan and metro areas because this is where many people work and is the easiest location logistically to deliver mass quantities of food. Food deserts corresponded with the highest concentrations of vulnerable populations; aid was not sent to the greatest areas of need. Forty percent of the population in Puerto Rico is on Supplemental Nutrition Assistance Program (SNAP) benefits, which could not be provided amidst the power outages.
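The "about 5 percent" figure for the Puerto Rico food mission can be checked directly from the numbers quoted above. The short calculation below is a sketch that approximates 6 months as 180 days, which is an assumption; the function name is hypothetical.

```python
def share_of_need(meals_delivered: int, meals_per_day_goal: int, days: int) -> float:
    """Fraction of the total meal requirement that was actually delivered."""
    total_need = meals_per_day_goal * days
    return meals_delivered / total_need

MEALS_PER_DAY_GOAL = 6_000_000   # stated goal
MEALS_DELIVERED = 60_000_000     # delivered by FEMA over 6 months
DAYS = 6 * 30                    # assumption: 6 months taken as 180 days

total_need = MEALS_PER_DAY_GOAL * DAYS
share = share_of_need(MEALS_DELIVERED, MEALS_PER_DAY_GOAL, DAYS)

print(f"Total need: {total_need:,} meals")
print(f"Delivered:  {share:.1%} of need")
```

Under that approximation, the total requirement is a little over 1 billion meals, and the 60 million delivered come to between 5 and 6 percent of it, consistent with the figure Mr. Kaufman cited.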

Long distance transport from the mainland challenged distribution, he continued, and there was an assumption of total failure of grocery/food supply chains on the island. However, the private sector displayed surprising resilience in the food and grocery industry. Most grocery stores were open within 3 to 5 days and began placing orders, receiving shipments, and conducting transactions without power or telecommunications. The lack of power and payment systems hindered but did not stop commodity flow: Because of Puerto Rico’s unreliable power grid, many people and organizations have generators, which provided sporadic power. People paid with cash, or stores wrote lines of credit for the customers. Grocery stores also started acting as de facto banks for their regular customers. Mr. Kaufman described this as another example of the aspects of social capital and their relevance in achieving outcomes for people. He observed that, in this case, the private sector supply networks and the public sector relief channel operated as two mostly separate flows—there was no ability to see, monitor, and understand these parallel systems as a whole. All of the situational awareness, information, understanding of need, and operational understanding of truth on the ground was coming up through levels of the government because that is the way the system is designed. When all of the reporting sources are inside the government sector, it makes sense that the public sector would be unaware of what industry was doing in real time. The government was continuing to maximize its ability to push food for months after the disaster because it had a distorted view of the problem, even though it is now apparent that food was not the most important need. What was truly in short supply was telecommunications equipment. Public sector relief channels could not operate at the scale necessary to solve the perceived problem. 
The public sector did not fully appreciate or understand key dependencies in Puerto Rico such as the criticality of SNAP card processing or the manufacturing and export of saline. FEMA was thus trying to ensure outflow of a commodity from the impact zone as much as it was trying to get product into the impact zone, he explained. This short-term saline shortage created a serious disruption in hospitals across the country. The public sector did not fully understand where to assist, concentrating aid in urban areas at the expense of the most vulnerable areas and competing for scarce logistics capacity (e.g., containers, trucking).

When COVID-19 hit in 2020, Mr. Kaufman commented, there were widespread and persistent personal protective equipment (PPE) and medical goods shortages, and state governments were competing for supplies without the aid of the federal government. FEMA launched Project Air Bridge in March 2020 to accelerate the transport of commercially owned PPE. From March 29 through June 18, Project Air Bridge delivered nearly 1.5 million N-95 respirators, more than 2.5 million face shields, 937 million gloves, more than 2.4 million thermometers, 113.4 million surgical masks, 1.4 million coveralls, 50.9 million gowns, and 109,000 stethoscopes.2 They kept half of these supplies for strategic national purposes and pushed half into the private market. However, this did not address the core problem: limited production capacity for PPE and medical goods. FEMA was only procuring and delivering commodities, he explained. While the government used the Defense Production Act (DPA) to facilitate industry efforts to manufacture ventilators, no similar effort was made to manufacture PPE. COVID-19 is the first disaster in which the government has confronted critical global shortfalls in the commodities needed to respond to an event. There was no preexisting ability to assemble a real-time picture and understanding of aggregate demand for PPE/medical supplies during the event. He added that there was no preexisting ability to prioritize competing demands for scarce resources, both among states and among public and private entities within states. Solution sets appear to have involved mostly supply-side strategies (i.e., direct action procurement via Project Air Bridge, for example, and delivery and direction of additional productive capacity using the DPA). He asserted that the United States has not seriously explored demand-side strategies, such as leveraging long-term purchasing commitments and market forces to stimulate expansion of production capacity.

In closing, Mr. Kaufman remarked that time matters most in combination with distance, density, and dependency. The nation’s greatest barriers to speed (velocity) have not been technical but mental (i.e., preconceived notions, incomplete observations, and failure to orient to context). The government has a bias to focus on its own direct action and is slower to set conditions that empower action by others, he explained. Opportunities exist for the U.S. government to act as a catalyst and accelerant to speed response and restoration efforts in industry and by the public, achieving outcomes at greater scale than it could accomplish through direct action alone.

___________________

2 Federal Emergency Management Agency, 2020, “FEMA Phasing Out Project Airbridge,” Press Release HQ-20-161, June 18, https://www.fema.gov/press-release/20210318/fema-phasing-out-project-airbridge.

During the question-and-answer session, Ms. Westphal shared a series of takeaways and questions inspired by Mr. Kaufman’s presentation that could apply to military operations:

- There is a lack of understanding of the system of systems. As the USAF tries to network and connect nodes, an understanding of dependencies (e.g., USAF, government, and commercial/private entities) will be crucial.

- If the USAF is going to use commercial technologies, they will be used by citizens too. Does the USAF understand the consequences, and has it built partnerships that can be relied upon in a time of war?

- Thinking about the preservation of initiative, what happens in warfare when manipulation is possible in real time? Operations become far more complex.

Dr. Chellappa wondered if the United States lacks skills in predictive modeling, because it seems as though the nation is never prepared, even for disasters that happen every year at the same time (e.g., forest fires and hurricanes). Mr. Kaufman commented that tremendous gains have been made in predictive capabilities for hurricane tracks but not for predicting the intensity level at landfall. The general understanding of hazard risk is out of touch with reality as climate change increases, because it is currently based on historical information. Responding to Ms. Westphal’s previous statements, Mr. Kaufman added that neither the government nor industry understands the “whole system”; they each only understand their piece. There is value to be delivered by government and industry investments in developing that broader picture. Gen. Martin asked about insights on centralized control, centralized operations versus decentralized operations, and centralized planning. Mr. Kaufman reiterated that constitutionally, emergency management is reserved to the states and the people. Hurricane Katrina revealed that FEMA did not have the ability to control its own successes and failures, and centralized control over operations was needed. Constitutionally, the system is decentralized, with tremendous power. In the past 5–7 years, FEMA has tried to embrace and manage those challenges, but it is difficult when states’ capacities differ. There is always a tendency to try to exert control, which is problematic. It is possible to be much more effective by setting conditions and enabling others to bring their capacities to bear, he continued. In the disaster management realm, that approach more closely resembles the cluster system that underpins humanitarian relief operations internationally than the National Incident Management System operated in the United States.

ARTIFICIAL INTELLIGENCE ADOPTION AND ITS IMPACT

Dr. Jill Crisman, principal director for AI, Office of the Under Secretary of Defense for Research and Engineering (USD(R&E)), is working across the services to make AI a reality for DoD. DoD is interested in AI because the United States is in an “AI arms race” with Russia and China, who have partnered to develop military applications of AI technologies and who claim leadership in AI by 2030. Whoever leads AI in 2030 may rule the world to 2100, she continued. As a result, DoD has adopted an AI Strategy to accelerate AI adoption.3 This strategy includes delivering AI-enabled capabilities to address key missions; scaling AI impact across DoD through a common foundation and data management approach that enables decentralized development and experimentation; cultivating a leading AI workforce; engaging with commercial, academic, and international allies and partners; and leading in military ethics and AI safety. She said that the acceleration of AI has to be a department-wide effort, but it will be synchronized and guided by the Joint Artificial Intelligence Center (JAIC). It was determined that science and technology (S&T) (“AI-Next”) would work on the foundational AI research and technology creation, pushing out cutting-edge AI subsystems and components for the mission systems to adopt. The mission systems (“AI-Now”) are integrating these into platforms that can be deployed and providing operational data and feedback. All of this happens through a common foundation for an AI/machine learning platform. AI-Next is funded through 6.3 money for advanced technology development and AI and comprises USD(R&E), the Defense Advanced Research Projects Agency (DARPA), and DoD research laboratories. AI-Now utilizes 6.4 through 6.7 funding and includes the Office of the Under Secretary of Defense for Acquisition and Sustainment, service program executive officers, combatant commands, rapid prototyping offices, chief information officers (CIOs), and DoD CIO/JAIC.

___________________

3 Department of Defense, 2019, Summary of the 2018 Department of Defense Artificial Intelligence Strategy: Harnessing AI to Advance Our Security and Prosperity, https://media.defense.gov/2019/Feb/12/2002088963/-1/-1/1/SUMMARY-OF-DOD-AI-STRATEGY.PDF.

Dr. Crisman shared John McCarthy’s definition of AI: “the science and engineering of making intelligent machines.”4 DoD’s AI Strategy explains that AI “refers to the ability of machines to perform tasks that normally require human intelligence.”5 She described several technologies including GPT-3, which scanned almost the entire Internet of documents, code, and other open source types of information and can now generate text; AlphaGo, which is a representation of a strategy game for wartime operations; detection of fake images and videos; and DARPA’s AlphaDogfight, which placed a skilled human pilot in a virtual, simulated world with AI agents. The goal of AI engineering is to transform AI-Next to AI-Now so that technologies can be deployed. USD(R&E) has supported AI research throughout its history: AI has been studied since almost the beginning of the era of electronic computing, and commercial AI software engineering has created commercial products from the research results that were partially funded by DoD. Although the United States is the current leader in AI research and commercial engineering, she cautioned that China is catching up quickly.

Dr. Crisman explained that autonomous robotic systems have always been in AI’s domain. What makes the autonomous systems unique is that they are implementing the observe-orient-decide-act (OODA) loop independently and communicating with people to determine and collaborate on tasks. Research areas include robotic navigation, swarm robots, humanoid robots, and human–robot teaming. The next level down from autonomous robotic systems comprises AI components, or AI microservices. These could include natural language processing, computer vision, sensory processing, and representation and reasoning, which are all part of building the OODA loop. The base level includes the platforms, such as machine learning, deep learning, and AutoML. Automated machine learning platforms make it possible for people to write software without writing code. Deep learning is AI research’s latest breakthrough: Deep learning builds computer models of brains that can then be trained by experiences through images, for example. It can learn how to solve a problem. She indicated that AI and machine learning would greatly benefit DoD; for instance, more human–robot interaction and more unmanned systems could better protect warfighters from harm.
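As a rough illustration of the observe-orient-decide-act cycle mentioned above, the following Python sketch runs one pass of the loop per sensor reading. The `Observation` type, the 5-meter threshold, and the action names are hypothetical and chosen only for illustration; they do not describe any system presented at the workshop.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    # Hypothetical sensor reading: distance to the nearest obstacle, in meters.
    range_m: float


def observe(readings: List[float], step: int) -> Observation:
    """Observe: pull the next raw reading from the (simulated) sensor."""
    return Observation(range_m=readings[step])


def orient(obs: Observation, safe_range_m: float) -> str:
    """Orient: interpret the raw reading in context (here, a fixed safety margin)."""
    return "obstacle_near" if obs.range_m < safe_range_m else "path_clear"


def decide(situation: str) -> str:
    """Decide: map the assessed situation to an action."""
    return "stop" if situation == "obstacle_near" else "advance"


def act(action: str, log: List[str]) -> None:
    """Act: carry out the action (here, just record it)."""
    log.append(action)


def run_ooda(readings: List[float], safe_range_m: float = 5.0) -> List[str]:
    """Run one observe-orient-decide-act pass per reading and return the actions taken."""
    log: List[str] = []
    for step in range(len(readings)):
        obs = observe(readings, step)
        situation = orient(obs, safe_range_m)
        action = decide(situation)
        act(action, log)
    return log


if __name__ == "__main__":
    print(run_ooda([10.0, 4.0, 6.0]))
```

Keeping each stage a separate function mirrors the idea that perception, reasoning, and action components can be built and improved independently.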

Building AI components, hardening them, and improving them over time is one of the pathways to autonomous systems, Dr. Crisman continued. Data discovery, situational awareness, and decision support are critical components for DoD. At the underlying level, DoD is starting to build up factories to create software by traditional coding as well as allow people to write code with data and eventually to write code by changing the rules that the machine can learn. She emphasized that DoD would benefit from the democratization of AI software creation, which happens through machine learning. Most cloud infrastructure vendors also offer machine learning development platforms for text and images. Users upload annotated text or image corpora and automatically build software from machine learning. Users who create their own software understand its capabilities and limitations through continuous data set development and model testing and accept AI/machine learning as a partner in their tasks. Analysts studying data at the forward edge can look for anomalies in the data for early indications that the adversary is changing tactics and build automatic detectors in their data. The models and annotated data can be shared digitally, she explained, enabling information discovery across the entire enterprise. This discovery also enables an alerting process: When an analyst develops an alert, that information can be passed up and then pooled to identify connections. More data about this new threat or indicator leads to the creation of better models for detection, which can be redeployed across the environment, she said. She added that perception for situational awareness is also very important, via access to massive amounts of operational data with labeled information. Data science will also play a key role because when the models are trained, the data sets will have to be curated to represent the problems of relevance. All of this could be enabled by deep supervised learning. AI and deep learning enable reasoning for strategic and tactical decision support. A virtual environment then makes it possible to represent the two-dimensional game board or three-dimensional environment, actors, moves, and goals. Digital engineering is used to create the models for these games, and deep reinforcement learning learns the shortcut strategy for determining the next best move in the current situation. She mentioned that unmanned vehicles could augment forces against an adversary with a much larger population if the integration of accurate and fast AI components could be trusted to carry out the OODA loop on its own: Human–machine teaming and a virtual environment for testing could enable this capability.

___________________

4 J. McCarthy, 2007, “What is Artificial Intelligence?,” November 12, http://jmc.stanford.edu/articles/whatisai/whatisai.pdf.

5 Department of Defense, 2019, Summary of the 2018 Department of Defense Artificial Intelligence Strategy: Harnessing AI to Advance Our Security and Prosperity, https://media.defense.gov/2019/Feb/12/2002088963/-1/-1/1/SUMMARY-OF-DOD-AI-STRATEGY.PDF.
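The analyst workflow described in this discussion, in which anomalies in data are turned into automatic detectors, can be sketched in a few lines of Python. This is a minimal example under stated assumptions: it uses a simple z-score rule rather than the deep learning methods the speaker discussed, and the function names, sample values, and threshold of 3.0 are hypothetical.

```python
import statistics
from typing import List, Tuple


def fit_baseline(samples: List[float]) -> Tuple[float, float]:
    """Summarize normal activity as (mean, sample standard deviation)."""
    return statistics.mean(samples), statistics.stdev(samples)


def is_anomaly(value: float, baseline: Tuple[float, float], threshold: float = 3.0) -> bool:
    """Flag a value whose distance from the baseline mean exceeds `threshold` standard deviations."""
    mean, std = baseline
    if std == 0:
        # With no variation in the baseline, any departure from the mean is unusual.
        return value != mean
    return abs(value - mean) / std > threshold


if __name__ == "__main__":
    # Hypothetical daily counts of some observed activity.
    baseline = fit_baseline([10, 11, 9, 10, 10])
    for reading in (10.5, 20):
        print(reading, is_anomaly(reading, baseline))
```

A baseline fitted by one analyst is just a pair of numbers, so it could in principle be shared and refitted as more labeled data arrive, which is the pooling idea described in the text.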

Dr. Crisman pointed out that there are several AI engineering challenges that are specific to DoD. DoD has to consider whether it wants to push machine learning because it allows people who do not know how to code to build software; if so, it would create platforms for the multiple skill levels that exist within DoD. With users all over the world needing to collaborate, she suggested that DoD build a federated system that would enable the sharing of information. Cost, security, and ethical constraints would also be considered, she continued. In terms of AI components, DoD has to work through the problem of transition between domains. It has to rebuild platforms for every program using limited data and address the problem of inadequate access to operational data and digital models. Performance, timing, cost, and ethical constraints are also worthy of consideration. If DoD is going to create autonomous robots and vehicles, she stressed that AI/machine learning components have to be highly accurate and fast.

Continuous improvement of AI/machine learning components is also necessary, she explained. To do this, intuitive human–machine teaming and ethical use of AI in the human–machine OODA loop are important. DoD compiled a list of five AI ethical constraints in February 2020 for all of the AI deployed across different levels of DoD:

- Responsible. DoD personnel are responsible for the development, deployment, and use of AI.

- Equitable. Unintended bias in AI is minimized.

- Traceable. AI engineers and AI/machine learning software developers possess an appropriate understanding of the AI technology, development processes, and operational methods.

- Reliable. AI will have explicit, well-defined uses that will be tested for safety, security, and effectiveness across their entire life cycle.

- Governable. AI systems will detect and avoid unintended consequences, and DoD personnel will have the ability to disengage or deactivate deployed AI systems that demonstrate unintended behavior.6

AI’s impact across DoD can be scaled through a common foundation and data management approach. A common foundation enables decentralized development and experimentation. Dr. Crisman described several efforts from the research and commercial communities to enable deep learning: (1) Distributed compute and storage, using graphics processing units (GPUs) and GPU clusters; (2) a community of AI/machine learning development ecosystems to share source repositories—model zoos are available for less expensive transfer learning from deep learning models; (3) open competitions, which provide the opportunity to automatically test AI/deep learning models and provide results; and (4) massive persistent indexed data, with archived data from mobile devices and automated governance policies, that are constantly available and growing to continuously improve and curate training and test data sets. She advocated for a structure that is federated in its development and in its operations: a layered approach from data discovery, to data indicators, to game play. The common foundation is DoD’s deep learning enabler. Not only does the common foundation serve as a platform for mission systems to build on but it also allows for the S&T to build on the same technology and potentially push new platforms, AI models, and new systems onto this common platform that has the ability to alter the key components of these systems. Once pushed out onto a common platform, it is easier to adopt in missions. She emphasized that not only does the common foundation accelerate the adoption of AI but it also accelerates DoD’s ability to move AI S&T rapidly into mission systems.

___________________

6 Department of Defense, 2020, “DOD Adopts Ethical Principles for Artificial Intelligence,” Press Release, February 24, https://www.defense.gov/Newsroom/Releases/Release/Article/2091996/dod-adopts-ethical-principles-for-artificial-intelligence.

Dr. Crisman noted that in the past, software and acquisition strategies used a waterfall approach. In the early 2000s, people developed the concept of agile software development, in which developers work on a periodic update cycle (e.g., every 2 weeks). The architecture was reimplemented to be n-tier, and virtual servers replaced physical servers. The new acquisition and sustainment strategy shifts away from this waterfall approach to a more agile method of monitoring the system. This may lead to the development of products that are closer to what is desired. She expressed excitement about the development, security, and operations (DevSecOps) process, a continuous cycle for building software, which is put through an automated test pipeline, a deployment pipeline, and a monitoring pipeline, with feedback for future developments. This is coupled with the concept of microservices, the purpose of which is to do one thing and do it well. Dr. Crisman explained that the software is broken into components that implement a single function. The microservices architecture is organized as per mission capabilities; focuses on software product (not process); utilizes decentralized governance and data management; and is elastic, resilient, composable, minimal, and complete. The DevSecOps platform is a common code base for everyone, with individual mission platforms (e.g., an intelligence software factory and a logistics software factory). With all of these software factories and services on the platform, it is easier to spin off several applications. Adding this common foundation of machine learning will help the DevSecOps software developers to develop components that go into the back end of these systems and then rapidly adapt the front-end user interfaces to utilize the best things that warfighters need right away in their handheld device or aircraft applications.
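The idea of single-function components that are composed into applications and gated by an automated test stage can be illustrated with a short Python sketch. Everything named here (`normalize`, `redact_digits`, `REGISTRY`, `compose`, `passes_tests`) is hypothetical and stands in for real services and pipeline tooling, which the presentation did not specify.

```python
from typing import Callable, Dict, List, Tuple

# A "service" here is any function from text to text that does exactly one thing.
Service = Callable[[str], str]


def normalize(text: str) -> str:
    """Lowercase the text and collapse runs of whitespace."""
    return " ".join(text.lower().split())


def redact_digits(text: str) -> str:
    """Replace every digit with '#'."""
    return "".join("#" if ch.isdigit() else ch for ch in text)


# Hypothetical shared registry, standing in for a common platform of services.
REGISTRY: Dict[str, Service] = {
    "normalize": normalize,
    "redact_digits": redact_digits,
}


def compose(names: List[str]) -> Service:
    """Build an application by chaining registered services in order."""
    def app(text: str) -> str:
        for name in names:
            text = REGISTRY[name](text)
        return text
    return app


def passes_tests(service: Service, cases: List[Tuple[str, str]]) -> bool:
    """Automated test gate: a service is deployable only if every case passes."""
    return all(service(given) == expected for given, expected in cases)


if __name__ == "__main__":
    app = compose(["normalize", "redact_digits"])
    if passes_tests(app, [("  Unit  42 READY ", "unit ## ready")]):
        print(app("Convoy 7  departs at 0600"))
```

Because each service is small and independently testable, a new application is mostly a new ordering of existing names, which is one way to read the claim that a shared platform makes applications easier to spin off.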

Dr. Crisman displayed her advanced AI adoption roadmap for the coming decade. Several AI technologies for S&T are mature and ready to be developed across the department. Other technologies could mature over time (e.g., with the right data sets) (see Table 6.1). Dr. Crisman reiterated that AI will be impactful for DoD at three levels: autonomous robots, AI components, and AI/machine learning platforms.

During the question-and-answer session, Dr. Godfrey asked if China will overtake the United States in the race for AI and whether U.S. progress is limited by a lack of talent. Dr. Crisman pointed out that the country that will “win” in machine learning and AI is the one with the data and the expertise to use them. She acknowledged that China has been rapidly collecting data, but she did not know if China has a strategy for how to use them effectively. She said that the United States has an advantage in its decentralization; whereas China and Russia will try to centralize decision making and analysis, DoD is trying to push down decision making and control (and software) to the lowest level possible and to understand commander’s intent instead of direct orders. This approach creates agility and flexibility. Recalling the Intelligence Advanced Research Projects Agency’s Janus project, Dr. Chellappa noted that the program has several best practices that could be taken forward by DoD in terms of training core, small data problems, and application programming interfaces. He asserted that DoD would benefit from the acquisition of data, not just software.

TABLE 6.1 Department of Defense Advanced Artificial Intelligence Adoption Roadmap

| | Integrate AI-Now < 2 years | Engineering 2-5 years | Applied Research 5-10 years | Basic Research > 10 years |
|---|---|---|---|---|
| Infrastructure | | | | |
| Data and AI/ML Platforms | | | | |
| AI Services | | | | |
| Applications | | | | |

NOTE: AI, artificial intelligence; ASICs, application-specific integrated circuits; DL, deep learning; DoD, Department of Defense; FOSS, free and open-source software; FPGA, field programmable gate array; GPR, ground penetrating radar; GPU, graphics processing unit; ML, machine learning; NLP, natural language processing. SOURCE: Dr. Jill Crisman, Office of the Under Secretary of Defense for Research and Engineering, presentation to the workshop, October 2, 2020.

Thinking of the efforts of the developers, Ms. Westphal asked if there is a parallel human effort to support the technology endeavor. For example, is there a human roadmap to accompany the technology roadmap? She also wondered how to train people to be good teammates with technology. She referenced games that could be used to teach humans about their own biases, although people have to be continually reminded to avoid reverting to their biases. Dr. Crisman commented that people use AI on smartphones without training; she did not think that people would need training to adopt the technology unless the system is more complicated. She noted that JAIC is considering how to train the workforce that will use AI, and she has been working on how to train the workforce to develop machine learning algorithms. She described the question about human–machine teaming as an important one—interfaces will likely vary by system, and DARPA is currently researching human–machine teaming. Research on bias is also ongoing, but she said that once bias is discovered in a data set, there are concrete steps to remove it. Dr. Chellappa explained that the training process for human–machine teaming is missing the incorporation of human models. He added that AI is much more than deep learning, and the two should not be interchanged. Deep learning provides good representation, but it is only one component of AI. Dr. Godfrey asked how to approach the acquisition issues associated with AI. Dr. Crisman replied that DoD aspires to buy microservices instead of monolithic systems. She is in discussion with the defense industry and various start-up companies about the model to charge for software. Once financial models exist, the acquisition strategies can be revisited, because the goal is to acquire easily and recruit developers rapidly.

OPERATIONS AND THE ECOLOGY OF TIME

Dr. Julie Ryan, chief executive officer, Wyndrose Technical Group, and Dr. Joseph “Jae” Engelbrecht, president and chief executive officer, Engelbrecht Associates, LLC, considered the dimensions of time relative to operations and how humans are going to interact with technology. Dr. Engelbrecht described a scenario to illustrate the dimensions of time:

Tensions between the West and the Red have been growing. Time is linear, time is passing, developments are increasing or decreasing over time, and the capability of both sides has been increasing. From a time perspective, there have been linear developments at different rates. There have been challenges and successes at integration, and synchronization with legacy systems remains a challenge. There have been some leadership, cultural, and organizational initiatives (hopefully the increase in trust and the building of relationships), and the needs for human talent have been clarified. All of this suggests notions of progress for the West. The Red side has recognized the value of progress and is pursuing it. Incidents lead to the West’s deployment to the region. It takes time to deploy—the time to deployment is linear. Previous practice and exercises tend to make deployments seem cyclic, but they are not cyclic in a causal sense. The West’s capabilities now seem exquisite, having surpassed joint all-domain command and control to connect platforms across domains, use multiple sensor modalities, and embrace features for speed and stealth. From a time perspective, this is linear progress. The rate of change seems accelerated, and knowledge has been accumulated over time, which is important. Machines have been added to help learn in near real time. The stakes are high. The salience of the situation, including time, depends on the context. Understanding the “battlespace” is critical; it takes time to understand the adversary, so preparation and perspective need to be in mind and/or built into operational plans. The West understands that it is challenged by complexity and overlapping systems. Complexity, others’ decisions, system effects, and uncertainty expand notions of time and space. The West’s decisions are contingent on others’ decisions and actions (and vice versa). 
Chaos, chance, and uncertainty are features of complexity; operations “in time” must deal with complexity and chaos.

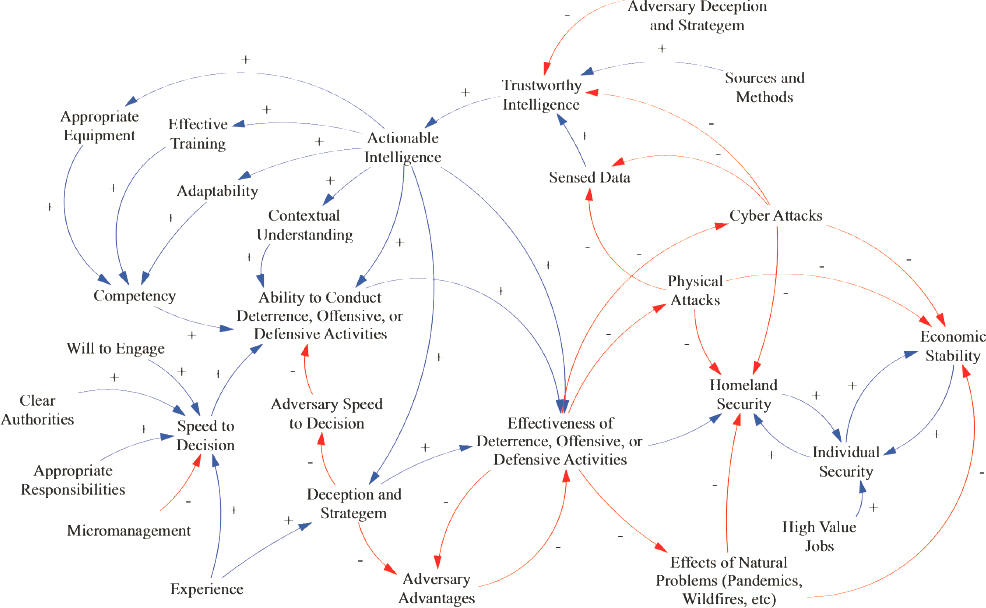

Dr. Ryan explained that a causal model of system complexity illustrates the challenge of chiefly focusing on the speed of time (see Figure 6.2).

There are time dependencies among all of these components. Each line in Figure 6.2 indicates a delay: getting trustworthy intelligence and turning it into actionable intelligence; identifying types of equipment that will be needed in the future battlespace; deciding how to do effective training; determining how to increase adaptability and in which dimensions; and developing the contextual understanding necessary for the competency to create an overall ability to conduct deterrence, offense, and defense. There is a speed to decision on whether to execute that ability, which is dependent on political will to engage, the existence of clear authorities, appropriate responsibilities, and experience. All of these things are affected by adversaries, she said. For example, adversary deception and stratagem undermine trustworthiness of intelligence. Cyber and physical attacks can subvert the sensors depended on for data, and all of this is related to homeland security, economic stability, and individual security. In addition to the time perspective, each line in Figure 6.2 indicates a flow of information in the knowledge supply chain, which includes several elements. One has to create knowledge to be able to act on it, but knowledge is also shared, integrated, routinized, and exploited. She noted that these are underscored by a technology base that is empowered by energy, and machines that are increasingly relied upon require external energy (e.g., alternative and localized energy production). This concept of the knowledge supply chain also implies a knowledge security aspect: Knowledge is available to be used, is accessible, and is sufficiently trustworthy to make decisions. For a human (or an AI) to build knowledge, she continued, it has to be learned, and learning takes a significant amount of time. For humans to learn, they have to be given time to reflect, contextualize, test hypotheses, and integrate into their worldview. 
This is formalized as training and education, which is used to create disciplines. As every discipline becomes more technologically focused, the language becomes more specialized, which creates friction in knowledge sharing between disciplines and creates more time lags. She stressed that overlaying this is national culture and discipline culture. The time lags associated with acquiring, manipulating, and using knowledge; sharing knowledge; and appreciating knowledge have to be thought of in terms of cultural aspects, as well as in terms of the tools developed for knowledge use. She emphasized that this can present an opportunity: If cultures appreciate technologies differently, there may be a seam that could be exploited to cause them to doubt the effectiveness of their technology, which would increase a time lag in their use of technologies.

Dr. Engelbrecht returned to the scenario initially presented between the West and the Red:

Imagine that Red implements a series of disaster procedures and “goes dark.” Sensing and orienting in time benefit from predictability and seeing anomalies. Gaining that time advantage can be complicated by a sensor overload or by a decrease in sensor input. Adversary behaviors with a strategic view of time can change the battlespace. Imagine having discovered micro enzymes in the oil storage, pipeline, and refineries. They clog up the whole system, and oil and gas prices are driven up. This leads to protests in the United States and around the world as well as calls for the U.S. strategic reserve to be used only for domestic use. Also troubling are infrequent anomalies in timing and navigation signals. From a time perspective, the adversary may be thinking about time before we were thinking about it. A strategic view of time expands relevant actions and consequences, changes what is salient in time, and can lead to a paradigm shift on which value guides actions. Operations are often asymmetric in place, time, and value (value in terms of what you wish to protect and value in terms of the results of an attack). The West reduces its activities to conserve fuel and remains vigilant. Even when conflict seems imminent, time plays a role in crisis stability, de-escalation, and escalation management. Thresholds in time and space matter. Questions emerge as to whether “joint all-domain operations” means that all domains must play or that asymmetry is selective. The world waits and watches: Others evaluate the global impacts of the conflict, but time is needed to deal with “future shock” and to understand an evolving global order and leadership.

In summary, he said that time in operations is more than speed. It can be linear, progressive, and cyclic. It occurs at different rates and can potentially be accelerated by technology and an enhanced human interface. It can be bounded or stretched by the context of the situation. Decisions and consequences, in time and over time, are affected by complexity, chaos, and uncertainty. Orienting is more important than sensing, and sensing can be distorted by overload, reduced input, feints, and misinformation. Adversaries have a strategic view of time, he continued, which is crucial to understand. The salience of time reflects values and can shift. Managing conflict and time means understanding thresholds, crisis management, de-escalation, escalation, asymmetry, and a clear focus on desired outcomes.

Dr. Engelbrecht proposed five questions and challenges:

- Do “time cycles” accurately reflect how to consider time in operations? Cycles normally reflect repeated activity and most often apply to machines. Where humans are involved, they are normally interchangeable cogs. What more accurately describes time in operations? “Time perspectives,” which are human and varied?

- “Control” is an industrial notion relevant to machines in closed systems. With an increased desire for decentralized operations, changing human–machine interfaces, and increased autonomy, does the term “control” describe operations? Would terms such as “command and conduct” be more accurate than “command and control”?

- How will the USAF develop and sustain the talent to conceive and operate a 21st century CONOPS?

- Timely, trusted data to the tactical edge emerges from good, clean energy, which is not sufficiently available.

- How will the USAF learn and use the adversary’s notion of time?

During the question-and-answer session, Dr. Yarymovych noticed the emphasis on Western society’s view of time as a linear value and wondered about the Chinese model of logic. Dr. Engelbrecht said that in Buddhism or Hinduism, for example, there is no expectation that the current life will improve; instead, life will improve in the next world. Although the notion of progress can be learned in Eastern cultures, the perspective remains different. Reflecting on the differences between Eastern cultures that practice mindfulness and Western cultures that privilege speed and 18-hour workdays, Ms. Westphal asked if there is a connection between operations and cultural differences. Dr. Engelbrecht stressed how much thought a commander puts into the circumstances before engaging forces; in Eastern cultures, there is a certain notion of mindfulness embedded: what is moral and what is right are one and the same. Dr. Yarymovych explained that the world is on the precipice of a new concept of global domination, with machines performing better than humans and the advent of facial recognition technologies. Dr. Chellappa added that a strength of Eastern culture is the ability to wait, with the long-term game in mind in terms of strategy. He noted that because wars are fought outside of the United States, an understanding of other cultures is imperative.

Dr. Richard Hallion, senior adviser, Science and Technology Policy Institute, described a 1945 study by Maj. Gen. J.F.C. Fuller that said that the weapon with the greatest reach would dominate conflict. He agreed with Dr. Yarymovych that a transformation is taking place now, with a new era of warfare emerging, and he supported Dr. Chellappa’s assertion that the perception of time and immediacy in time as well as the need to move rapidly in time differs greatly among cultures. Dr. Hallion wondered if humans are really “interchangeable cogs” when there is so much variation in the interpretation of events, for example. Military decision making often reflects the dissimilarity of people’s thinking; in fact, in conflict, the United States has taken advantage of systems that have tried to impose a way of thinking on its military members. Dr. Engelbrecht agreed and explained that the word “control” originated from the notion of how humans control machines. When that term is used and people are part of the process, there is an inappropriate assertion that those people are interchangeable. Thus, the phrase “command and control” may not best describe the variety of talent and all of the tasks that people who are so important to the enterprise are asked to do.

Ms. Westphal shared an anecdote about an innovation workshop during which USAF leadership completed Myers-Briggs evaluations. The results showed that there was very little diversity in the mindsets of the senior leaders. Given the rotation of senior leaders every 18 to 24 months, she wondered if they are being treated as “interchangeable cogs.” She expressed concern that as technology advances, an organization is at risk of becoming less human. Dr. Hallion agreed that leadership is a self-selection process for a certain kind of individual, and that template is used for the entire organization. Ms. Westphal added that DoD does not have an appreciation of diversity, especially for great thinkers. Gen. Martin pointed out that the USAF selects the people who are going to lead the USAF based on their previous fighting experience. The USAF is the only service that requires its colonels to fight and pull triggers. He suggested rethinking this “tribal” model, in which good aviators and conformists are promoted; the U.S. Space Force has already broken from this tradition. He advocated for the selection of “champions” with innovative ideas and advised care to avoid becoming so precise in language that the importance of the term “command and control” is lost. He underscored that “command” is the art of warfare (the person responsible for designing the way to employ forces and set up processes to ensure that they can be controlled), and “control” is the science of warfare. He asked how to bring about harmony in C2 networks, training, and structure, as well as synchronize the speed of the tasks.

Dr. William Powers, retired vice president of research, Ford Motor Company, explained that conduct is a behavior, command is an input, and control is an output. The command structure determines what should happen, and that structure is controlled to achieve the desired goal. Control implicitly has a goal built into it, but conduct does not; thus, the phrase “command and control” makes sense. Ms. Westphal said that the idea of “command and control” is outdated because it does not take into account the complexity of pushing out technologies. The fact that everyone is connected via social media and is in possession of data creates a complex problem, which adds chaos and chance. The military does not like, nor is it thinking about, the notion of chance. Dr. Godfrey added that although the world is chaotic, it is not random; it is important to recognize the tremendous amount of order in chaos. He suggested that workshop participants view a Public Broadcasting Service series Hacking Your Mind, which demonstrated that behavior is deterministic. People in hostile societies learn how to take advantage of the deterministic character of people’s thinking.

Gen. Martin noted that the world has both interconnectivity and a lack of control. He observed that even American sports (e.g., baseball, basketball, football) are about controlling the clock, controlling the ball, and using the hands; the rest of the world prefers sports, such as soccer, that emphasize finesse. This notion of control is part of the American psyche, he continued. When we lose control, we get violent, which is a cultural issue that is difficult to eliminate. What is also difficult is the notion that the United States is hierarchical by nature; as information is proliferating and organizations are flattening, no one knows how to cope. He wondered what it is about this particular environment that prevents a convergence of interests and a focus to move things forward.

Dr. Engelbrecht clarified that if young talent is going to build a new concept of operations, language is important. For example, the commercial world would never use the term “supremacy.” The commercial world instead uses comparative terms to indicate competitive advantage. He emphasized the value of adopting precise language to accurately reflect the circumstances.

Lt. Gen. Bowlds noted that the USAF organizes, controls, and promotes its people based on its comfort with the status quo. That gets disrupted when the “Challenger Effect” sets in (e.g., NASA did not reform until it had a major crisis). The challenge is to convince people to view the status quo as the crisis instead, he continued. If the same types of people continue to get promoted into senior positions, change will not occur. Gen. Martin reiterated that the USAF does not train its airmen to think; it trains them to execute, and procedures are then developed. The USAF allows process to determine how to accomplish a task, and it teaches people how to accomplish that task under different circumstances. This creates a safe, effective system, but it does not teach people how to think or encourage people to ask questions. Ms. Westphal emphasized that there has to be a balance moving forward between execution and thought in order to increase speed. Dr. Godfrey pointed out that all large organizations have the same problem: The USAF’s success may be what prevents it from adapting.