Systems Engineering: Opportunities for Health Care

Jennifer K. Ryan

Purdue University

Systems engineering involves the design, implementation, and control of interacting components or subsystems. A system consists of interacting, interrelated, or interdependent elements that form a complex whole: a set of interacting objects or people that behaves in ways that individuals acting alone would not. The overall goal of systems engineering is to produce a system that meets the needs of all users or participants within the constraints that govern the system’s operation. The objectives can generally be divided into two broad categories: service and cost. Service can be measured by a variety of criteria, such as availability, reliability, and quality. Cost objectives are usually expressed in terms of how much costs can be reduced or at least controlled.

A final objective of systems engineering is to gain a better understanding of system behavior and the problems associated with it. Models enable us to study the impact of alternative ways of running the system—alternative designs or controls and different configurations and management approaches. In short, systems engineering models enable us to experiment with systems in ways we cannot experiment with real systems.

Systems engineers generally prefer to work with analytical or mathematical models rather than conceptual models because mathematical models are more precisely specified, state their assumptions explicitly, and are easier to communicate, manipulate, and analyze. We begin with a graphical representation of the system, which often includes a diagram showing the flow of information and resources. We then create a mathematical description that includes objectives, interrelationships, and constraints. The components of the mathematical model can be divided into four categories: (1) decision variables, which represent our options; (2) parameters or givens, which are the inputs to the decision-making process; (3) the objective function, which is the goal, the function to be optimized; and (4) the constraints, which are the rules that govern operation of the system.
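To make the four components concrete, here is a minimal sketch of a toy nurse-staffing model. Everything in it is hypothetical: the four 6-hour periods of a cyclic day, the demand and cost figures, the assumption that each nurse works a 12-hour shift covering two consecutive periods, and the function names. Because the problem is tiny, the sketch simply enumerates every plan; a realistic model would be handed to an integer-programming solver instead.

```python
from itertools import product

# Parameters (givens); all values are hypothetical, for illustration only.
DEMAND = [3, 5, 6, 4]          # nurses required in each of four 6-hour periods
COST = [100, 110, 120, 130]    # cost of one 12-hour shift starting in each period
MAX_START = 6                  # search bound on nurses starting in any one period
N = len(DEMAND)

def coverage(x, period):
    """Nurses on duty in `period`: those who started now or in the previous
    period (the day is treated as cyclic, so period 0 follows period 3)."""
    return x[period] + x[(period - 1) % N]

def feasible(x):
    """Constraints: every period is staffed to at least its demand."""
    return all(coverage(x, p) >= DEMAND[p] for p in range(N))

def total_cost(x):
    """Objective function: total cost of the shifts started."""
    return sum(c * n for c, n in zip(COST, x))

def solve():
    """Enumerate all integer values of the decision variables x[i]
    (nurses starting a shift in period i) and keep the cheapest feasible plan."""
    best = None
    for x in product(range(MAX_START + 1), repeat=N):
        if feasible(x) and (best is None or total_cost(x) < total_cost(best)):
            best = x
    return best

plan = solve()
print(plan, total_cost(plan))  # (3, 2, 4, 0) 1000
```

With these numbers the cheapest feasible plan starts 3, 2, 4, and 0 nurses in the four periods, at a total cost of 1000. Changing a parameter, such as the demand in one period, and re-solving is a simple instance of experimenting with the model rather than the real system.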

When dealing with a large, complex system, we often decompose it into smaller subsystems that interact with one another to create the whole. The decision-making structure provides natural breaks in the system. We model and analyze the subsystems and then connect them in a way that recaptures the most important interdependencies among them.

Systems engineering requires a variety of quantitative and qualitative tools for analyzing and interpreting system models. We use tools from psychology, computer science, operations research, management and economics, and mathematics. The quantitative tools include optimization methods, control theory, stochastic modeling and simulation, statistics, utility theory, decision analysis, and economics. Using computerized algorithms, mathematical techniques can solve many large-scale, complex problems optimally.

Mathematical models clarify the overall structure of a system and reveal important relationships. They enable us to analyze the system even when data are sparse. Models, combined with analysis, reveal the most critical parameters and enable us to study the system as a whole. Sensitivity analysis, which examines how a model's outputs change as its inputs vary, helps identify those critical parameters and expose the trade-offs among them. Before we can convert a model solution into an implementable solution, we must test and validate the model to ensure that it actually predicts the behavior of the system.
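One common form of sensitivity analysis perturbs each parameter in turn, holding the others fixed, and records how the output moves. The sketch below applies this one-at-a-time approach to the classical economic-order-quantity (EOQ) cost model, chosen here only as a familiar logistics example; the parameter values and function names are hypothetical.

```python
import math

def eoq_annual_cost(demand, order_cost, holding_cost):
    """Annual ordering plus holding cost when ordering the economic order
    quantity Q* = sqrt(2*D*K/h); at Q* the cost simplifies to sqrt(2*D*K*h)."""
    return math.sqrt(2 * demand * order_cost * holding_cost)

# Hypothetical base case for a single supply item.
BASE = {"demand": 12000, "order_cost": 50.0, "holding_cost": 3.0}

def one_at_a_time(model, base, step=0.10):
    """Perturb each parameter by -step and +step, holding the others at
    their base values, and return the percentage change in the output."""
    y0 = model(**base)
    results = {}
    for name in base:
        changes = []
        for sign in (-1, 1):
            params = dict(base)
            params[name] = base[name] * (1 + sign * step)
            changes.append(100 * (model(**params) - y0) / y0)
        results[name] = tuple(changes)
    return results

for name, (down, up) in one_at_a_time(eoq_annual_cost, BASE).items():
    print(f"{name:>12}: -10% -> {down:+.2f}%, +10% -> {up:+.2f}%")
```

For this model every parameter moves the optimal cost by only about 5 percent for a 10 percent change (roughly -5.13 and +4.88 percent), because the cost grows with the square root of each input. A model this insensitive tolerates imprecise data; a model with steep sensitivities flags the parameters worth measuring carefully.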

A logistics system can be defined as a network of suppliers, manufacturing centers, warehouses, distribution centers, retail outlets, and end consumers. The system includes raw materials, work in process, inventory, finished products, all of the materials in the system, all of the information that flows within the system, and all of the resources in the system (e.g., people, equipment, etc.). Logistics-systems engineering can be defined as the planning, implementation, and control of the system to ensure the efficient, cost-effective flow and storage of all materials and information from point of origin to point of consumption for the purpose of meeting customer requirements. Our goal is to ensure that the right amount of materials or resources is in the right place at the right time at minimum cost.

We deliberately leave the definition of service (i.e., meeting customer requirements) somewhat vague so we can define the needs and requirements of different customers in different ways. Logistics-systems engineering involves the difficult problem of simultaneously improving customer service and quality, improving timeliness, reducing operating expenses, and, if possible, minimizing capital investment. We are also interested in answering strategic questions, such as where we can expand capacity or what types of collaboration with customers or suppliers would be most beneficial.

Systems engineering problems have some common characteristics. They tend to be interdisciplinary, involving both technical and nontechnical fields. They require multiple high-level, or strategic, metrics or performance measures, often including measures of nonquantitative factors (e.g., customer satisfaction). They involve many participants with different value systems and many decision makers; therefore, we have to find solutions that balance conflicting criteria. The systems and issues tend to be hierarchical and complex; the systems also evolve and change over time, and they generally involve significant uncertainties. Much of the current research in logistics is driven by the needs of public and private organizations, such as health care systems, that operate in environments characterized by intense competition, constant change, and a strong focus on customer needs.

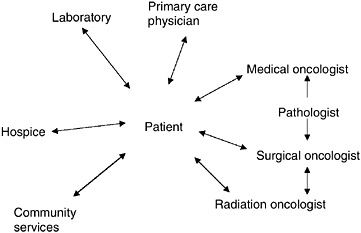

Health care delivery systems, for example, consist of a variety of health care organizations, caregivers, and patients. State and federal governments are involved, as well as a variety of other organizations. These complex systems also involve a large number of interconnections between the components and the system—multihospital systems and provider networks with linkages between hospitals, physician groups, insurers, and others. There are also many decision makers who often have conflicting criteria, and there are complex interactions between participants. The effective organization and management of a health care delivery system requires careful management of resources to ensure that the necessary staff and equipment are in the right place at the right time. The problem is complicated by uncertainties and system complexity.

Some aspects of the health care delivery system, such as government intervention, the level of uncertainty, and the nature of the demand, appear to be unique to health care. But similar problems can be found in other industries, such as the telecommunications and electricity industries, which also have to factor in government intervention. The nature of the uncertainties may be different, but they have similar effects on the system. Both the telecommunications and electricity industries have used logistics models to their advantage.

Systems engineering models can provide structured, quantitative methods of studying alternative control policies and system designs for almost any industry. The methods can be used to help coordinate information systems, operations, and capital investment; develop control policies; predict and evaluate outcomes; and evaluate the benefits and costs of a given program or system design.

The elements included in the model depend on the question or problem to be solved. For the output of the model to be useful, it must mimic the expected behavior of the real system. To control the behavior of one part of the system, the incentives driving that aspect of the system must be built into the model.

A good deal of literature is now available on research in this area. Operations research tools and systems engineering tools have been used to address a wide variety of problems, from the operation of a hospital to higher levels of complexity, such as incentives, efficiency, and payment schemes. Quantitative models can provide important input for making decisions that involve complex societal, ethical, and economic issues.

Supply-Chain Management and Health Care Delivery: Pursuing a System-Level Understanding

Reha Uzsoy

Purdue University

In recent years, effective supply-chain management has emerged as a significant competitive advantage for companies in very different industries (e.g., Chopra and Meindl, 2000). Several leading companies, such as WalMart and Dell Computer, are differentiated from their rivals more by the way they manage their supply chains than by the particular products or services they provide. A supply chain can be defined as the physical and informational resources required to deliver a good or service to the final consumer. In the broadest sense, a supply chain includes all activities related to manufacturing, the extraction of raw materials, processing, storing and warehousing, and transportation. Hence, for large multinational companies that manufacture complex products, such as automobiles, machines, or personal computers, supply chains are highly complex socioeconomic systems.

The ability of successful firms to make the effective management of supply chains a source of competitive advantage suggests that there may be useful knowledge that can provide a point of departure for the development of a similar level of understanding of certain aspects of health care delivery systems. Similar to the supply chains in manufacturing and other industries, the health care delivery system is so large and complex that it has become impossible for any individual, or even any single organization, to understand all of the details of its operations. Like industrial supply chains, the health care “supply chain” consists of multiple independent agents, such as insurance companies, hospitals, doctors, employers, and regulatory agencies, whose economic structures, and hence objectives, differ and in many cases conflict with each other. Both supply and demand for services are uncertain in different ways, making it very difficult to match supply to demand. This task is complicated because demand for services is determined by both available technology (i.e., available treatments) and financial considerations, such as whether or not certain treatments are covered by insurance. Decisions made by one party often affect the options available to other parties, as well as the costs of these options, in ways that are not well understood. However, almost all of these complicating factors are also present, to one degree or another, in industrial supply chains; the progress made in understanding these systems in the last several decades is a cause for hope that some insights and modeling tools developed in the industrial domain can be applied to at least some aspects of health care delivery systems.

In general, a centralized approach to controlling the entire system is clearly out of the question, although centralized decision models may be useful for coordinating the operations of segments of the larger system controlled by a single decision-making body. Designing decentralized models of operation that render the operation of the overall system as effective as possible is the main challenge for both health care delivery and industrial supply chains.

In the following section, I shall briefly discuss how the study of industrial systems has evolved from individual unit processes to considerations of complex interactions among many different components of an industrial supply chain. I shall then describe some examples of modeling approaches that have been applied to supply chains and close with some comments on how these tools might be adapted for the health care delivery environment.

FROM UNIT PROCESSES TO SUPPLY CHAINS

If we examine how industrial operations, particularly manufacturing operations, have evolved since the beginning of the nineteenth century, we can see that many efforts were motivated by a desire to understand and optimize individual unit processes (see, for example, Chandler, 1980). These efforts led to many innovations, among them the development of improved machine tools and fixtures, a significantly better understanding of the chemistry of processes (e.g., steel-making), and through the work of the early industrial engineers, such as Frederick Taylor and Frank and Lillian Gilbreth, the optimization of interactions between workers and their environment.

As the understanding of unit processes developed, engineers began to consider larger and larger groupings of unit processes, trying to understand interactions between them and optimize the performance of entire systems, sometimes to the detriment of individual components. Hence, from considering individual unit processes, we progressed to considering departments of factories that perform similar operations, entire manufacturing processes from raw materials to finished products, and eventually, the operations of entire firms, as well as their suppliers and customers. It has often been observed that most significant new opportunities, both for cost reduction and the generation of new products and services, have been based on an understanding of interactions between different subsystems, or different agents, operating in the supply chain.

Among today’s leading companies, examples abound. Many automotive companies, for instance, have developed joint ventures with transportation firms; the objective is to optimize the interface between the production and distribution functions and facilitate the just-in-time operation of automakers’ final assembly plants. The existence of software companies that provide supply-chain planning software for multilocation companies is another strong indicator of the advantages companies expect to gain from effectively managing the various elements of their supply chains. The strong trend in industry to outsource noncritical functions has increased the need for companies to effectively manage and clearly understand their relationships with other companies. As a final example, we can point to the collaborative forecasting, planning, and replenishment initiative in the retail sector; retailers work closely with major suppliers to develop demand forecasts for products through information-sharing and joint planning processes.

Clearly, the basic process of improving a system by a detailed understanding of the most fundamental unit processes, in other words the “atomic” elements of the system, and steadily extending that knowledge to interactions among larger and larger groupings of these elements is directly applicable to health care delivery systems. The individual unit processes in this case include the processing of a patient in an emergency room, the process by which a medical insurance claim is approved, and the scheduling of hospital operating rooms to optimize their performance. The need for a better understanding of how the operations of individual elements affect each other is apparent; these interactions can be quite complex because of long time lags between cause and effect. For example, the decision by a regulatory agency to disallow a certain kind of preventive procedure for infants may result in the emergence of an unexpectedly large number of children with special needs in the elementary school system several years later. The same kinds of problems are present to some degree in industrial supply chains, and a significant body of knowledge has been developed over the years to address them.

Based on the history of industrial enterprises, we know that the development of today’s enterprises required substantial organizational innovations, such as capital budgeting to allocate scarce capital between competing activities, cost accounting to develop an understanding of factors contributing to product costs, and the development of multidivisional corporations with complex structures of management incentives and coordination mechanisms. An important development in recent years has been the recognition of the need for a cross-functional view of supply-chain operations. All aspects of a firm’s operation, from the design of a product to the specific timing of marketing promotions, have a direct effect on the operation of the supply chain. Therefore, different functional specialties must actively collaborate to develop solutions to optimize the performance of the overall system. Similarly, in health care delivery a number of different constituencies, such as doctors, government agencies, insurance providers, and patient groups, are all involved in the operation of the health care delivery supply chain.

KNOWLEDGE OF SUPPLY-CHAIN MANAGEMENT

In the domain of industrial supply chains, it is probably safe to say that we have developed a fairly good understanding of the operation and economics of individual unit processes, including functions such as transportation, distribution, warehousing, and information processing. In particular, we have developed a substantial understanding of the often complex dynamics of capacity-constrained systems subject to variability in both demand and process (Hopp and Spearman, 2000). However, in general we are only beginning to learn how to integrate the solutions to these individual elements to reach a reasonable understanding of the operation of the overall supply chain.
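A standard illustration of these dynamics is the approximation for mean queue time at a single station that Hopp and Spearman (2000) present as the "VUT" equation (variability, utilization, time), originally due to Kingman. The sketch below evaluates it for a hypothetical station with a 20-minute mean process time; by default it assumes arrival and service variability equal to that of exponential distributions (squared coefficients of variation of 1). The function name is illustrative.

```python
def queue_time(util, te, ca2=1.0, ce2=1.0):
    """Approximate mean waiting time in queue at a single-server station
    (Kingman's G/G/1 approximation, the VUT equation in Hopp and Spearman):
        CTq = ((ca2 + ce2) / 2) * (u / (1 - u)) * te
    util: utilization u, with 0 <= u < 1
    te:   mean process time
    ca2, ce2: squared coefficients of variation of interarrival and process times."""
    if not 0 <= util < 1:
        raise ValueError("utilization must be in [0, 1)")
    return ((ca2 + ce2) / 2) * (util / (1 - util)) * te

# Hypothetical station with a 20-minute mean process time.
for u in (0.50, 0.70, 0.80, 0.90, 0.95):
    print(f"u = {u:.2f}: mean wait = {queue_time(u, te=20):6.1f} min")
```

With these inputs, raising utilization from 0.8 to 0.9 more than doubles the mean wait (80 to 180 minutes), and going to 0.95 roughly doubles it again. This sharp nonlinearity near full capacity is one reason capacity-constrained systems behave in ways that simple proportional reasoning does not anticipate.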

Integrated planning models based on linear and integer programming have been applied to the segments of the supply chain controlled by a single company for at least four decades (e.g., Johnson and Montgomery, 1974). Although these models have been successful in many instances, they have not been effective in addressing the needs of a supply chain that involves many different companies with potentially conflicting objectives. In recent years, considerable efforts have been made to use some of the tools of economics, such as contracts, as a mechanism for coordinating the operation of complex supply chains (Tayur et al., 1998). However, these models are generally subject to long-run, steady-state assumptions that must be carefully evaluated relative to market conditions.
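The coordination problem can be seen in a single-period newsvendor example, a standard textbook illustration rather than an example from the sources cited here: when a retailer buys at a wholesale price above the supplier's cost, it orders less than a single owner of the whole chain would. All prices, costs, and the uniform demand distribution below are hypothetical, and salvage value is assumed to be zero.

```python
# Hypothetical parameters: one selling season, no salvage value,
# demand uniformly distributed on [0, DEMAND_MAX].
PRICE, COST, WHOLESALE, DEMAND_MAX = 10.0, 4.0, 7.0, 200.0

def order_quantity(margin, price=PRICE, demand_max=DEMAND_MAX):
    """Newsvendor quantity: the critical-ratio (margin / price) fractile
    of uniform demand, valid when leftover units are worth nothing."""
    return (margin / price) * demand_max

def expected_sales(q, demand_max=DEMAND_MAX):
    """E[min(q, D)] for D ~ Uniform[0, demand_max] and 0 <= q <= demand_max."""
    return q - q * q / (2 * demand_max)

def channel_profit(q):
    """Expected profit of the whole chain (retailer plus supplier) for order q."""
    return PRICE * expected_sales(q) - COST * q

q_central = order_quantity(PRICE - COST)       # one decision maker owns the chain
q_retail = order_quantity(PRICE - WHOLESALE)   # independent retailer paying WHOLESALE
retailer = PRICE * expected_sales(q_retail) - WHOLESALE * q_retail
supplier = (WHOLESALE - COST) * q_retail

print(f"centralized:   q = {q_central:.0f}, chain profit = {channel_profit(q_central):.0f}")
print(f"decentralized: q = {q_retail:.0f}, chain profit = {channel_profit(q_retail):.0f} "
      f"(retailer {retailer:.0f}, supplier {supplier:.0f})")
```

With these numbers the integrated chain orders 120 units and earns an expected 360, while the independent retailer orders 60 and the chain earns only 270 (90 to the retailer, 180 to the supplier). Contract terms that change how margins and risks are shared between the parties are one way to close this gap.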

Conventional Monte Carlo simulation techniques (Law and Kelton, 1991) have proven extremely effective for systems in which the operational dynamics can be described at a high level of detail, such as segments of manufacturing processes or hospital operations. The difficulty with these models is that for large-scale systems the level of detail required to model system behavior accurately becomes prohibitive in terms of both data collection and computation time. Systems dynamics models of large systems establish input-output relationships for their components and simulate the system's operation through time using methods derived from the numerical solution of differential equations (Sterman, 2000). Although these techniques are capable of modeling large, complex systems, they usually do so by specifying aggregate input-output relationships for large subsystems, which must be validated and whose parameters must be estimated carefully. Nevertheless, these models can capture many critical aspects of supply-chain behavior, such as the “bullwhip effect,” in which variability in orders is amplified as it passes down the supply chain from the consumer towards the producers of raw materials (Forrester, 1962).
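The bullwhip effect can be reproduced with a very small discrete-time simulation. The sketch below is not Forrester's model: it assumes a serial chain in which each stage forecasts its incoming orders with a moving average and follows an order-up-to policy, with normally distributed consumer demand, a fixed lead time, and negative orders (free returns) permitted for simplicity. All parameter values and the function name are hypothetical.

```python
import random
import statistics

def simulate_bullwhip(stages=4, periods=2000, lead_time=2, window=5, seed=1):
    """Return the variance of consumer demand followed by the variance of
    orders placed by each stage of a serial supply chain.
    Each stage uses a moving-average forecast and an order-up-to policy:
        target_t = (lead_time + 1) * forecast_t
        order_t  = demand_t + (target_t - target_{t-1})
    where demand_t is the order the stage receives from the stage below it."""
    rng = random.Random(seed)
    demand = [rng.gauss(100, 10) for _ in range(periods)]  # i.i.d. consumer demand
    variances = [statistics.pvariance(demand)]
    incoming = demand
    for _ in range(stages):
        history, orders, prev_target = [], [], None
        for d in incoming:
            history.append(d)
            forecast = statistics.fmean(history[-window:])
            target = (lead_time + 1) * forecast
            if prev_target is None:          # first period: no adjustment yet
                prev_target = target
            orders.append(d + target - prev_target)
            prev_target = target
        # Skip the warm-up periods before the forecast window is full.
        variances.append(statistics.pvariance(orders[window:]))
        incoming = orders                    # this stage's orders feed the next stage
    return variances

for stage, v in enumerate(simulate_bullwhip()):
    label = "consumer demand" if stage == 0 else f"orders from stage {stage}"
    print(f"{label:>20}: variance = {v:10.1f}")
```

In this sketch the variance of orders grows at every stage even though consumer demand is stable; with a two-period lead time and a five-period moving average, the first stage alone amplifies variance by a factor of roughly three.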

RESEARCH NEEDS AND FUTURE DIRECTIONS

At the risk of overgeneralizing, it appears that most of the tools required for analysis of the individual unit processes in health care delivery, such as efficiency of hospital facilities, have been developed in the engineering literature and have, in fact, been applied intermittently to a variety of systems over the last several decades (e.g., Pierskalla and Brailer, 1994). However, if our experience with industrial supply chains is any guide, only limited improvements in health care delivery can be obtained by these means. Repeated experience has shown that far greater improvements can be obtained by a thorough understanding of the interactions between different elements of the system and restructuring them in a way that leaves all parties better off. This brings the modeling issues squarely into the region where current supply-chain research is weakest (the effective coordination of socioeconomic systems consisting of multiple, independent agents); but this is also the area that is developing most rapidly. The development of novel models at the intersection of conventional engineering and economics promises to provide a wide range of challenging research problems for many years to come.

To support this agenda, the most pressing research need is for techniques that can be used to model systems at the aggregate level, where one can accept some level of approximation to obtain computationally tractable models that achieve the correct qualitative behavior and provide useful insights into interactions between systems. This means that the aggregate models must capture the often nonlinear relationships between critical variables correctly, which has not always been the case in supply-chain modeling. The literature on systems dynamics may be a good starting point for this initiative, but it must be complemented by a variety of other techniques, such as economic models of competition and collaboration and agent-based techniques for modeling complex systems.

It is important to bear in mind that the purpose of these models is far more likely to be descriptive than prescriptive; that is, models are far more likely to be used, and arguably far more useful, to inform debate between the various parties involved in health care delivery than to deliver decisions to be executed. Hence, the development of large-scale computational simulations of different scenarios with different actors and interaction protocols between the actors appears to offer interesting research challenges. These tools would be extremely beneficial to decision makers in health care delivery.

REFERENCES

Chandler, A.D. 1980. The Visible Hand: The Managerial Revolution in American Business. Cambridge, Mass.: Belknap Press.

Chopra, S., and P. Meindl. 2000. Supply Chain Management: Strategy, Planning and Operations. Englewood Cliffs, N.J.: Prentice-Hall.

Forrester, J.W. 1962. Industrial Dynamics. Cambridge, Mass.: MIT Press.

Hopp, W., and M.L. Spearman. 2000. Factory Physics. 2nd edition. New York: McGraw-Hill/Irwin.

Johnson, L.A., and D.C. Montgomery. 1974. Operations Research in Production Planning, Scheduling and Inventory Control. New York: John Wiley & Sons.

Law, A., and W.D. Kelton. 1991. Simulation Modeling and Analysis, 2nd edition. New York: McGraw-Hill.

Pierskalla, W.P., and D.J. Brailer. 1994. Applications of Operations Research in Health Care Delivery. Vol. 6, pp. 469–505 in Handbooks in OR & MS, S.M. Pollock, M.H. Rothkopf, and A. Barnett, eds. Amsterdam, The Netherlands: Elsevier Science.

Sterman, J.D. 2000. Business Dynamics: Systems Thinking and Modeling for a Complex World. New York: McGraw-Hill.

Tayur, S., M. Magazine, and R. Ganeshan, eds. 1998. Quantitative Models for Supply Chain Management. Amsterdam, The Netherlands: Kluwer Academic Publishers.

The Human Factor in Health Care Systems Design

Kim J. Vicente

University of Toronto

The simplest way to think about the discipline of engineering is that engineers design things that are useful to society and satisfy important needs based on what we know about the physical world. When a bridge fails, we do not usually blame the bridge. We look to its design, trying to find a mismatch between what we know about the physical world and the outcome.

We should apply this same logic to people. But when a system is poorly designed, we often blame the person using it rather than the flaws in the system. For example, when we design a mechanical lathe, we must place the mechanical controls in a way that respects what we know about human bodies. But sometimes, if a lathe is poorly designed, we blame the user rather than the design.

Although we know a great deal about teamwork and about human behavior at the organizational and political levels, that knowledge is not always taken into account by designers of health care systems and devices. Clearly, improvements could be made, and not just in terms of safety. The lack of respect for human nature in the design of health care systems causes injuries and deaths, but it also costs money.

Contrast that with the field of aviation. Despite the September 11 attacks, 2001 was not a bad year for aviation safety. The average number of deadly crashes for the previous decade was 48 per year. In 2001, however, there were only 34 deadly crashes worldwide, not just in the United States. That was the lowest number since 1946, when there were far fewer flights.

One reason for the improvement is that aviation engineers pay attention to the human factor. A familiar example is the rather high rate of crashes in a certain type of aircraft that occurred because pilots tended to raise the landing gear as the plane was landing, causing the airplane to scrape along the runway. When Al Chapanis, an aviation engineer, studied the problem, he found that the controls for the landing gear and the wing flaps were right next to each other and that they looked and felt identical. He realized that pilots could easily grab the wrong control, but he also realized that he could not redesign the whole cockpit. He came up with an idea, now called shape coding. He did not move the controls, but he altered the feel of the landing gear control. The controls are still right next to each other, but the change eliminated the errors. It was as simple as that.

Can we apply the same type of thinking to health care systems? Patient-controlled analgesic devices, which allow patients to self-administer analgesics (usually morphine), are a case in point. A number of parameters are programmed into these devices by the nurse, the most important being drug concentration. These devices rely strictly on the programming and cannot independently verify either the concentration or even the type of analgesic in the syringe. Therefore, errors in programming can mean underdoses or overdoses; and errors have enduring effects, that is, the problem lasts until the programming is corrected.

For the particular device that we studied, programming errors were associated with five to eight reported patient deaths. Adverse drug events, and adverse events in general in medicine, are severely underreported: only about 1.2 to 7.7 percent are reported (Vicente et al., 2003). In other words, the actual number of adverse events may be roughly 13 to 83 times the number reported. We calculated that programming errors had lethal results for this particular device at least 65 times, and perhaps as many as 667 times, over a 12-year period. To put these numbers in context, the manufacturer reports that the device was used safely over 22 million times.
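The estimated range follows from dividing the reported count by the fraction of events that are reported. A back-of-envelope reconstruction of that arithmetic, using only the figures quoted above (our reading of how they combine, not code from the study):

```python
# Figures quoted in the text: 5 to 8 reported deaths, and 1.2 to 7.7 percent
# of adverse events reported (Vicente et al., 2003).
reported_low, reported_high = 5, 8
rate_high, rate_low = 0.077, 0.012   # highest and lowest reporting fractions

# Estimated actual count = reported count / fraction of events reported.
low_estimate = reported_low / rate_high    # fewest reports, best reporting
high_estimate = reported_high / rate_low   # most reports, worst reporting

print(f"multiplier: {1 / rate_high:.0f}x to {1 / rate_low:.0f}x")  # 13x to 83x
print(f"estimated deaths: {low_estimate:.0f} to {high_estimate:.0f}")  # 65 to 667
```

Pairing the fewest reported deaths with the highest reporting rate gives the lower bound, and the most reported deaths with the lowest reporting rate gives the upper bound.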

We then examined the existing design using traditional human-factor principles to see if there was room for improvement. We also talked to nurses, the users of this device. One serious problem we found was that the layout of the buttons on the interface was confusing and counterintuitive. So we came up with a new design by resegmenting the buttons and changing some of the labels. The new design offered the same functionality but changed the mode of interaction between the programmer and the pump. The system now provided more feedback and gave the user an overview of the programming sequence. The redesigned device told the programmer the drug concentration, what was coming up next, how to program the mode, and then showed the settings. In essence, the new programming sequence was much less convoluted.

We tested the redesigned interface in a laboratory setting with professional nurses who had more than five years of experience programming the commercial device. With the commercially available design, there were eight programming errors for drug concentration, three of which went undetected. With the new interface, drug-concentration errors were eliminated entirely.

Given the epidemiological data, the change was obviously important for safety reasons. But it was also important in terms of cultural attitudes. If the problem had originated with the person programming the device, then changing the interface should have made no difference in the error rate. In fact, changing the design did eliminate the errors. Therefore, we concluded that the problem was not with the people, or, at least, not only with people.

Surprisingly, we had a great deal of difficulty getting this research published. One journal refused it because the editor took for granted that what we had scientifically demonstrated was not true. We went through some pretty hard times, both in terms of getting the work published and dealing with the response from the public. One reviewer even suggested that a lawyer look at the research because of potential legal action by the manufacturer. We had chosen the particular device because it was relatively new, but soon after our research was completed, the media began to report some deaths as a result of errors in programming the device.

This example shows three important points. First, we know how to design technology that works for people because we know a lot about people at many levels—physical, psychological, team, organizational, and political. We do not always make the most of this knowledge when we design health care devices, but lack of understanding is not the problem. Second, not making the most of that knowledge results in a tremendous loss to society. Tens of thousands, perhaps even hundreds of thousands, of people are injured or die every year unnecessarily. Finally—the most difficult lesson—change is important and necessary, but there is a great deal of resistance that must be overcome before we can make progress.

REFERENCE

Vicente, K.J., K. Kada-Bekhaled, G. Hillel, A. Cassano, and B.A. Orser. 2003. Programming errors contribute to death from patient-controlled analgesia: case report and estimate of probability. Canadian Journal of Anesthesia 50(4): 328–332.

Changing Health Care Delivery Enterprises

Seth Bonder

The Bonder Group

The health care delivery (HCD) system in the United States is in crisis. Access is limited, costs are high and increasing at an unacceptable rate, and concerns are growing about the quality of service. Many, including the Institute of Medicine, believe the system should be changed significantly in two ways: (1) HCD enterprises should be reengineered to make them more productive, efficient, and effective; and (2) substantially more effort should be devoted to a strategy of prevention and management of chronic diseases instead of the current heavy reliance on the treatment of diseases. Although operations research can make substantial contributions to both areas, this paper focuses on (1) reengineering HCD enterprises, particularly areas in which operations research can provide valuable support to senior health care managers; and (2) enterprise-level HCD simulation models to determine the reengineering initiatives with the biggest payoffs before implementation.

HCD enterprises are very large, complex operational systems composed of large numbers of people and machine elements. Tens of thousands of people are involved as providers, patients, support staff, and managers organized into specialties, departments, laboratories, and other organizations that are considered independent service units (“stovepipes”). Machines include durable medical equipment, information technologies, communications equipment, expendable supplies, rehabilitation equipment, and so on. These elements are affected by many clinical and administrative processes (e.g., arrivals, testing, diagnosis, treatment, scheduling, purchasing, billing, recruiting, etc.), most of which are probabilistic (i.e., uncertain) and change significantly over time.

Perhaps most important, these processes involve large numbers of interactions within units, among units, and across processes. Decisions by enterprise managers regarding one unit may have second-, third-, and fourth-order effects, which may be more significant than the first-order effect. HCD enterprises are driven by endogenous and exogenous human decisions made by providers, patients, insurers, administrators, politicians, government employees, and others. Demand and supply issues have complex feedback effects. A great many resources are required for the development and operation of an HCD enterprise. For example, the University of Michigan’s budget for its HCD enterprise is more than $1 billion, and the Henry Ford Health System’s budget is $2.5 billion; yet these are relatively small HCD enterprises. Billions of dollars have been spent on cost containment initiatives over the past 15 years by the Agency for Healthcare Research and Quality (formerly the Agency for Health Care Policy and Research), the U.S. Department of Defense, the Veterans Administration, the National Institutes of Health, foundations, universities, and others to reengineer the HCD system. Nevertheless, costs continue to rise at double-digit rates.

We need better ways of analyzing systems of this magnitude. The operations research community has been involved with HCD enterprises for more than 40 years, working on a wide range of problems, such as inventory for perishables; management of intensive care units; laboratory and radiology scheduling; relieving congestion in outpatient clinics; nurse staffing, scheduling, and assignments; and layouts for operating and emergency rooms. These efforts have focused on the small, stovepipe units, referred to by Don Berwick as clinical and support “microsystems,” and have produced some useful information for unit managers, but they have not addressed enterprise-level reengineering and planning issues (the so-called “macrosystem”). Macrosystem issues have interactive effects across the enterprise and have large cost, access, and effectiveness impacts. Some of these interrelated issues are listed below:

- the mix of health services necessary to support a given population
- the staff required (e.g., specialties, numbers, locations) to provide necessary services
- the impacts of changing demands (e.g., aging populations, effects of preventive measures)
- the impacts of new HCD models (e.g., home health care, task performance substitution)
- the effects of centralized radiology services
- the impacts of primary care outreach
- facility capacity for the next 20 years and the best way to provide it
- operational changes to adapt to regulatory changes (e.g., Medicare)

These and other macrosystem issues can be addressed quantitatively using enterprise-level simulation models that represent all of the elements, units, and processes in the enterprise as well as the interactions among them. Because analyses of these issues are necessarily prospective, the models must be structural rather than statistical. Statistical models, which are usually used in economics and the social sciences, use existing system data to develop aggregated statistical relationships between system inputs and outputs (i.e., the model). Statistical models are used primarily retrospectively, that is, for making inferences and evaluations. In contrast, structural models are usually developed in the engineering and physical sciences by modeling the detailed physics of each process and activity. Structural models are used prospectively, that is, for predictions and planning. Statistical models are less appropriate to prospective analyses of future systems because the data used to develop statistical models are intrinsically tied to the existing system.
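The contrast between the two model types can be made concrete with a toy example. The sketch below is a hypothetical illustration, not part of HCM: the “structural” model is the textbook M/M/c queue (Erlang C formula), which represents the mechanics of arrivals and servers, while the “statistical” model is a least-squares line fitted to historical waits from a clinic that always ran three servers. The service rate, arrival rates, and server counts are assumed values chosen only for illustration.

```python
import math

def erlang_c_wait(arrival_rate, service_rate, servers):
    """Structural model: mean queue wait Wq for an M/M/c queue (Erlang C formula)."""
    a = arrival_rate / service_rate            # offered load in erlangs
    rho = a / servers                          # per-server utilization
    if rho >= 1:
        return math.inf                        # unstable: the queue grows without bound
    tail = a**servers / math.factorial(servers) / (1 - rho)
    p_wait = tail / (sum(a**k / math.factorial(k) for k in range(servers)) + tail)
    return p_wait / (servers * service_rate - arrival_rate)

def fit_line(xs, ys):
    """Statistical model: ordinary least-squares line y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return slope, my - slope * mx

# Hypothetical "historical" data: the clinic always ran 3 servers, so the server
# count never varies in the data and cannot enter the fitted relationship.
SERVICE_RATE, CURRENT_SERVERS = 2.5, 3         # patients/hour per server (assumed)
arrivals = [4.0, 5.0, 6.0, 6.5]                # patients/hour (assumed)
observed = [erlang_c_wait(lam, SERVICE_RATE, CURRENT_SERVERS) for lam in arrivals]
slope, intercept = fit_line(arrivals, observed)

# Prospective question: what happens if a 4th server is added at 6.5 arrivals/hour?
statistical = slope * 6.5 + intercept          # blind to the change: same answer as today
structural = erlang_c_wait(6.5, SERVICE_RATE, 4)
print(f"statistical: {statistical:.3f} h, structural: {structural:.3f} h")
```

The fitted line has no term for the number of servers because that number never changed in the data, which is the sense in which statistical models are “intrinsically tied to the existing system.”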

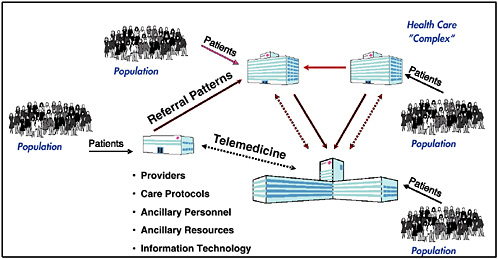

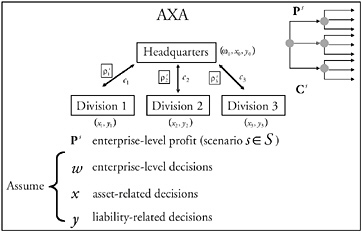

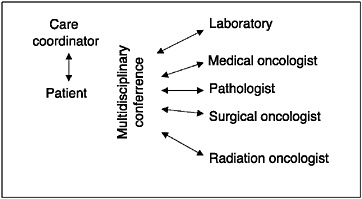

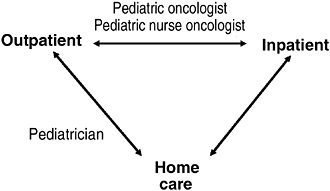

Figure 1 provides an overview of a particular enterprise-level HCD simulation model. The figure shows the elements in the Healthcare Complex Model (HCM), which was developed seven years ago and has been continually updated in a prototyping process by Vector Research Incorporated (now the Altarum Institute). HCM simulates individual patient episodes in a network of facilities for a population of patients. The network of facilities, with its entities and processes, is referred to as a “complex” (synonymous with an enterprise). Complexes usually have one or two major medical centers (where much of the tertiary care is provided), five to ten hospitals, and many clinics. The model can be adapted to represent specific features of any HCD enterprise.

Inputs to the model include demographics of the population that receives care. A model preprocessor converts the demographics into a stream of patients entering the complex; each patient’s condition is described by an International Classification of Diseases, Ninth Revision (ICD-9) code. Patients can enter the enterprise at a clinic, a hospital, or a medical center. They can be referred, physically or via telemedicine consults, from clinics to hospitals or to a medical center. Providers of various types are located at each facility in the complex. The care protocols, which represent practice guidelines and patient pathways, define what service patients receive next, where they receive it, and the type of personnel who will provide it. The model keeps track of the resources used and estimates costs using related cost models. Each protocol is a tree with many probabilistic branches, reflecting the fact that different providers may give patients who have the same condition different medical services. The care protocols may be tailored for simulations of specific enterprises and facilities. The model represents various ancillary personnel (e.g., nurses, nurse assistants, medical technicians) and various ancillary resources (e.g., laboratories, pharmacies, beds, CAT scanners, MRI machines, and durable medical equipment). Finally, the model represents various clinical (e.g., computerized patient record system) and administrative (e.g., billing, scheduling) information technologies and communications systems.
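A probabilistic protocol tree of this kind can be sketched in a few lines. The example below is a hypothetical sketch, not HCM’s actual data structure: each node records the service, the facility type, the provider type, and an assumed cost, and one simulated episode walks from the root to a leaf by sampling a branch at each step. The protocol, probabilities, and costs are placeholders chosen only for illustration.

```python
import random
from dataclasses import dataclass, field

@dataclass
class ProtocolNode:
    service: str                 # service the patient receives at this step
    location: str                # facility type: clinic, hospital, or medical center
    provider: str                # type of personnel who provides the service
    cost: float                  # hypothetical cost of the step
    branches: list = field(default_factory=list)  # (probability, ProtocolNode) pairs

def simulate_episode(root, rng):
    """Walk one patient episode through the protocol tree, sampling a branch at each step."""
    node, path, total_cost = root, [], 0.0
    while True:
        path.append((node.service, node.location, node.provider))
        total_cost += node.cost
        if not node.branches:    # leaf: the episode ends here
            return path, total_cost
        weights = [p for p, _ in node.branches]
        children = [child for _, child in node.branches]
        node = rng.choices(children, weights=weights, k=1)[0]

# Hypothetical protocol for one condition (e.g., low back pain); the probabilities
# and costs are placeholders, not HCM values.
surgery = ProtocolNode("spinal surgery", "medical center", "orthopedic surgeon", 20000.0)
therapy = ProtocolNode("physical therapy", "clinic", "physical therapist", 600.0)
mri = ProtocolNode("MRI", "hospital", "radiology technician", 1200.0,
                   [(0.8, therapy), (0.2, surgery)])
root = ProtocolNode("primary care visit", "clinic", "primary care physician", 150.0,
                    [(0.7, therapy), (0.3, mri)])

rng = random.Random(42)          # fixed seed so runs are reproducible
episodes = [simulate_episode(root, rng) for _ in range(10_000)]
mean_cost = sum(cost for _, cost in episodes) / len(episodes)
print(f"mean cost per episode: ${mean_cost:,.0f}")
```

Because each episode records where the service was delivered and by whom, the same loop could also tally resource use per facility and provider type, which is how the text describes the model tracking resources alongside costs.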

Because the HCM explicitly simulates all of the entities, processes, and activities in the system, any one or combination of them can be changed, and the impact on various output costs and access metrics can be observed. For example, HCM can determine how a change affects the cost of running the enterprise, a hospital, or a particular unit in a

FIGURE 1 Overview of the Healthcare Complex Model.

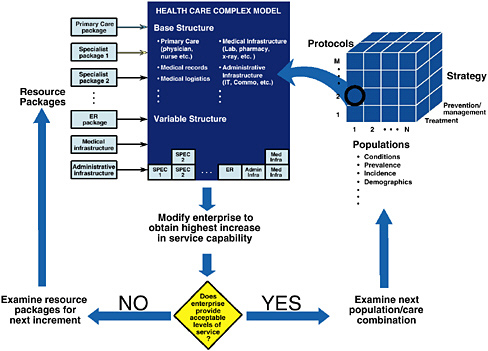

FIGURE 2 Zero-based HCD enterprise design.

hospital. It can calculate the impact on access metrics for the enterprise, a hospital, or a unit in a hospital. Because the model is being enhanced continually via a prototyping process, consideration has been given to simulating false positive and false negative statistical errors and their effects. Although these are not outcomes, they would provide useful quality information about the simulated HCD enterprise.

HCM has reasonable fidelity at this stage in its development. It contains more than 1,200 ICD-9 code conditions (e.g., acute appendicitis, asthma, cellulitis, open chest wound, viral hepatitis, low back pain) and more than 1,500 clinical tasks/procedures (e.g., preoperative anesthesia, computed tomography for staging/radiation, EEG, interpretation of angiogram, administration of antibiotics). The model simulates 60 different kinds of health care providers, 17 types of ancillary resources (e.g., x-ray, ultrasound, pathology, dialysis unit), 6 types of inpatient beds, and 23 combinations of telemedicine equipment. And its fidelity improves with every study.

The model was tested on one of the smaller regional HCD enterprises in the military health system (MHS). The enterprise has one major medical center, two hospitals, two clinics, and a managed care support contractor that provides additional capacity for the region. Together they handled about 1.6 million outpatient visits in fiscal year 1999. The model was adapted to represent the facilities, workforce, ancillary resources, information technologies, and clinical protocols used by the regional complex. Using population demographics provided by the government, regional operations for the year 1999 were simulated a number of times (because of the probabilistic nature of the protocols) to develop stable average outputs. These were compared to the historical values from the enterprise’s 1999 operations with encouraging results. Total outpatient visits differed by 0.11 percent, same-day surgeries by 1.02 percent, inpatient admissions by 2.99 percent, emergency room visits by 6.04 percent, and average length of stay by 0.94 percent. More detailed comparisons of outpatient visits by individual facility and individual specialty all differed by less than 4 percent. Although this was not a true validation study (which would require implementing model-suggested changes and comparing predicted impacts with actual results after the changes), it did show that simulation models can represent the complex dynamics of health care enterprise operations and can generate useful information and insights for enterprise managers.
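The two numerical steps described here, replicating a stochastic simulation until its average output is stable and then expressing the gap to history as a percentage, can be sketched as follows. This is a hypothetical sketch: the stopping rule (a normal-approximation 95 percent confidence half-width within 1 percent of the mean) is one common choice, not necessarily the rule used in the study, and `run_once`, its parameters, and the “historical” value are invented stand-ins rather than data from the MHS test.

```python
import random
import statistics

def percent_difference(simulated, historical):
    """Absolute difference between simulated and historical values, as a percent of historical."""
    return abs(simulated - historical) / historical * 100.0

def replicate_until_stable(run_once, rel_precision=0.01, min_reps=10, max_reps=1000, z=1.96):
    """Repeat a stochastic simulation until the ~95% confidence half-width of the
    mean is within rel_precision of the mean, or max_reps is reached."""
    outputs = [run_once() for _ in range(min_reps)]   # min_reps >= 2 so stdev is defined
    while True:
        mean = statistics.mean(outputs)
        half_width = z * statistics.stdev(outputs) / len(outputs) ** 0.5
        if half_width <= rel_precision * abs(mean) or len(outputs) >= max_reps:
            return mean, len(outputs)
        outputs.append(run_once())

rng = random.Random(2024)

def run_once():
    # Toy stand-in for one full simulation run of annual outpatient visits (hypothetical).
    return rng.gauss(100_000, 5_000)

simulated_mean, reps = replicate_until_stable(run_once)
historical = 101_000  # hypothetical historical value, not data from the study
print(f"{reps} replications, mean {simulated_mean:,.0f}, "
      f"difference {percent_difference(simulated_mean, historical):.2f}%")
```

A tighter `rel_precision` buys a more stable average at the cost of more replications; with an enterprise-scale model each replication is expensive, so this trade-off matters in practice.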

HCM has been used in a number of other studies, including the geographic distribution of primary care providers for a large, dispersed enterprise; telemedicine needs for an MHS regional complex; centralization of radiologists to service a 20-facility enterprise; and determining return on investment for information technologies. HCM is currently being used to determine capacity requirements for an enterprise that would experience increased demand following a bioterrorist attack.

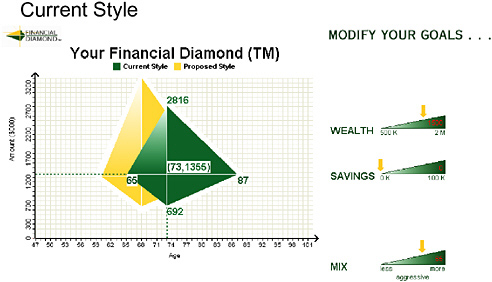

Enterprise-level simulation models like HCM can be used to address a broad range of issues facing enterprise executives. Here is one challenge that could be posed: Given a population of patients, how can operations research determine an efficient set of resources to provide an acceptable level of service to that population? Assuming the HCD enterprise is a shell with no existing medical services, models like HCM can be used to address difficult issues, such as designing a system from scratch to serve a given population (sometimes referred to as “zero-based” design). A schematic drawing of the analysis process is shown in Figure 2. For purposes of this discussion, we assume that an acceptable level of service can be defined in terms of some access/quality metrics, cost of enterprise operations, and cost of the resources.

The resources required to service the specified population depend not only on characteristics of the population (e.g., conditions, prevalence, incidence), but also on the protocols, as well as the degree to which the enterprise strategy for servicing the population focuses on treatment or on prevention/management of medical conditions. The three-dimensional structure shown on the right side of Figure 2 allows the analysis team to select a population, a protocol set, and a mixed treatment/prevention strategy as input to the analysis process. (The protocols are obviously related to the strategy and designed to reflect it.) Figure 2 shows that input (1, 2, T), representing population 1, protocol set 2, and a treatment-focused strategy, is used to begin the analysis.

Regardless of the input set, the enterprise will need a “base structure” consisting of a primary care package, medical records, medical logistics, a medical infrastructure package, and other base resources, as shown in the figure. Enterprise operations with the base-level resources, protocol set 2, and strategy T can then be simulated for a period of time to see if they provide an acceptable level of service to the selected population (#1). If the answer is no (as shown by the decision diamond), the analysis can then try adding individual resource packages to see which provides the most improvement in service capability to the population. Resource packages are designed by the user team (e.g., a pediatrician/internist/obstetrician/ENT package, which can be substituted for a primary care package; a gastroenterologist/orthopedist package; an oncologist/urologist package; a cardiologist/thoracic surgeon package; an emergency room package; and other resource groupings). Enterprise operations are simulated for each package to determine the improvement in service capability above the base level. The resource package with the most improvement on the margin is added to the enterprise (as shown under the variable structure).

This process is repeated, and resource packages with the most marginal improvement to the enterprise are added until an acceptable level of service is reached. (Mathematical programming techniques would likely make this iterative search process more efficient.) When this process is complete, the sum of the base and variable resources constitutes an efficient set of resources that provide an acceptable level of service (measured by access/quality and cost metrics) to the designated population using the specified protocols. The effect of different protocols on the resource requirements, as well as resource requirements for other populations, can be determined in a similar way. This process could be used to design a “versatile” set of resources that would provide a capability to serve multiple populations using different protocols.
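The add-the-best-package-on-the-margin loop described above is a greedy search, and it can be sketched as follows. This is a minimal sketch under loud assumptions: `simulate_service` is a hypothetical closed-form stand-in for a full enterprise simulation run, the “coverage” value assigned to each package is invented, and service capability is collapsed into a single score, whereas the text measures it with access/quality and cost metrics. The package names are taken from the text; the substitution of a pediatrician/internist/obstetrician/ENT package for the primary care package is not modeled.

```python
import math

# Hypothetical stand-in for an enterprise simulation run: each package is given an
# assumed share of the remaining unmet need it covers, so returns diminish.
BASE_COVERAGE = 0.5              # assumed service score of the base structure alone
PACKAGES = {
    "emergency room": 0.30,
    "cardiologist/thoracic surgeon": 0.20,
    "gastroenterologist/orthopedist": 0.15,
    "oncologist/urologist": 0.10,
}

def simulate_service(packages):
    """Return a service-capability score in [0, 1] for the base structure plus packages."""
    unmet = (1 - BASE_COVERAGE) * math.prod(1 - PACKAGES[p] for p in packages)
    return 1 - unmet

def greedy_design(acceptable, candidates):
    """Add the package with the largest marginal improvement until service is acceptable."""
    chosen = []
    score = simulate_service(chosen)
    remaining = list(candidates)
    while score < acceptable and remaining:
        # "Simulate" each remaining package on top of the current design; keep the best.
        best = max(remaining, key=lambda p: simulate_service(chosen + [p]))
        chosen.append(best)
        remaining.remove(best)
        score = simulate_service(chosen)
    return chosen, score, score >= acceptable

chosen, score, reached = greedy_design(0.75, PACKAGES)
print(chosen, round(score, 3), reached)
```

Greedy selection needs only one simulation per candidate per step, but it does not guarantee the cheapest or smallest package set; that gap is where the mathematical programming techniques mentioned in the text could help.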

Operations research could address some of the important enterprise-level issues, but doing so would require cultural changes on the part of enterprise management, as well as the operations research community. Enterprise management would have to encourage centralized planning for enterprise design and resource allocation issues while maintaining decentralized operations. Higher-order (and usually large) effects of interactions across stovepipes can only be identified at this level. Enterprise management would have to encourage a culture of prospective analyses to identify necessary changes that would be useful and would provide a high return on investment. (Retrospective analysis is an expensive trial-and-error process for learning what doesn’t work.) Enterprise management would have to establish a “requirements-pull” process for equipment and IT decisions, rather than the existing “technology-push” process, which is based on what is available from industry rather than what is needed. Management would also have to require that processes be reengineered when implementing new technologies (technology changes overlaid on existing processes produce zero value).

The health operations research community would also have to make important cultural changes. It needs to begin addressing enterprise-level issues, which should not remain in the purview of health econometricians who have failed to solve the cost, access, and quality problems that have beleaguered health enterprises and the nation. The operations research community would have to start working with enterprise-level structural models and begin using them for prospective analyses. Health operations research practitioners must become integral partners with senior enterprise managers in their business planning. They should use their 40 years of tactical-level support as an entrée and then demonstrate (and market!) the value of enterprise-level analyses to enterprise managers.

Transforming Current Hospital Design: Engineering Concepts Applied to the Patient Care Team and Hospital Design

Ann Hendrich

Ascension Health National Clinical Excellence Operations Office

Health insurance premiums and the cost of hospital services and care have risen significantly over the past few years. Public and private data recently analyzed by PricewaterhouseCoopers (2003) for the American Hospital Association and the Federation of American Hospitals confirmed that, from 1997 to 2001, spending on hospital care increased by $83.6 billion. Increased volume, the most important reason for this increase, accounted for 55.4 percent; 33.4 percent was attributed to increased use and 21.0 percent to population growth. Since 1996, adjusted admissions have increased at least 3 percent every year except 1998, when the increase was 2 percent. Other factors included an aging population; lack of effective care management and patient education; less restrictive benefit plans; and new, more expensive technologies.

Spending on hospital services increased 61 percent over the last 10 years and is still the largest component of rising national expenditures on health care (31.7 percent in 2001). Increased compensation is the most significant driver of the rising cost of goods and services purchased by hospitals. Nearly three-fifths of hospital expense goes to the wages and benefits of caregivers and others. Furthermore, labor costs accounted for 38.8 percent of the increase in spending on hospital care between 1997 and 2001. The study also determined that improved hospital efficiency accounted for $15 billion in savings between 1997 and 2001. These initiatives resulted in shorter hospital stays, less inpatient capacity, higher productivity, and consolidations.

Labor costs (related to the nursing shortage) are anticipated to account for the largest share of the current increase in spending on hospital services. Between 1995 and 2000, increases in hospital wages exceeded increases paid in private industry, and, as a result, financial margins eroded. In addition to wages, hospitals have absorbed other expenses to retain or recruit nurses, such as tuition reimbursement, sign-on or referral bonuses, loan repayments, and financing of child care centers. This has put great financial pressure on hospitals to be more efficient, which in turn has put significant stress on the workforce. The lack of significant, sustained efforts at improvement, coupled with efforts to reduce labor costs, has led to caregivers spending less time with patients and to lower job satisfaction. These statistics suggest that we have an enormous opportunity to improve efficiency, safety, and environmental designs to counteract increases in labor costs and inflation.

My presentation is divided into three sections: (1) a study of how health care workers spend their time; (2) a study of current and future hospital designs, with a focus on the patient room (about 400 new hospitals are currently being built from the ground up, many of them designed the same way hospitals have been designed for 100 years or more, raising concerns about their sustainability); and (3) the results of changes in design.

BACKGROUND

At Methodist Hospital, a large time-and-motion video study of patient care processes and the patient care team, with Ann Hendrich as the principal investigator, was conducted to determine how improvements could be made (Hendrich and Lee, 2003a). Four video cameras were installed on a hospital patient unit: one in the nursing unit hallway, one on each side of the nursing station, and one in a patient room. (This was an informed-consent study approved by an Institutional Review Board.) The four cameras fed video data into a quad screen for review and analysis. About 1,000 hours of continuous work were studied on a nursing unit very similar to units in most hospitals in this country. Almost 4,000 events in the patient room and thousands more at the nursing station and in the nursing hallway were tracked and “trended” to measure how health care workers spend their time.

We found that in this typical unit a nurse executive budgets for about five and a half to six direct nursing hours per patient day. But patients received less than 10 percent of that time (about 20 to 40 minutes) as direct care in their rooms. Nursing-acuity systems cannot account for the waste and inefficiency we were able to measure in design, distance, transfers, and differences among units. We concluded that the built environment (new or transformed), enabled by technology, is a nearly untapped opportunity for improving the cost of, quality of, and access to hospital care. A main reason nurses are unhappy in their professional roles is that most of their time is spent doing things other than professional nursing. For the most part, their time is not spent with patients on healing, intervention, care, or teaching. It is spent instead on what I call “hunting and gathering”: hunting and gathering paper, supplies, medical records, equipment, trays, carts, linen, and so on. Thus, hour by hour, much more time is spent in the nursing unit hallway and at the nursing station than in patients’ rooms.

In addition, many patients are moved two to five times during short hospital stays, which adds to waste, inefficiency, and the workload index. Patients are moved from unit to unit for two reasons: (1) the head wall and technology; and (2) nursing skills. Admittedly, these are very important reasons, but if hospitals address these issues, a whole new level of care and efficiency could be provided.

In a separate patient-transport study, patient-placement data (the chance of transfers, waits, and delays) were entered into a simulation model to show actual patient flow (Hendrich and Lee, 2003b). This study affirmed the need for changes in the current hospital design to reduce waste and inefficiency, improve safety, increase meaningful work for caregivers, and align facilities with future needs. The need for flexible, acuity-adaptable rooms for current and future hospital designs is imperative. The need for comprehensive care and progressive-level care will continue to increase with anticipated changes in demographics and technology. The model clearly demonstrated the high cost and inefficiency of running hospitals the way they are run now and the potential improvements of doing things differently. The model suggested that we have a multimillion dollar opportunity to reduce waste for both patients and caregivers.

A NEW DESIGN

Based on the internal and external trends revealed in these studies, a demonstration unit was established at Methodist Hospital, shortly after it was consolidated with University Hospital and Riley Hospital for Children. Additional bed space was needed for the cardiovascular consolidation, but we chose not to replicate the familiar nursing unit design. A coronary critical-care unit was combined with a coronary medical unit into a future-state patient room. The head wall was acuity adaptable, and patients were admitted and discharged from the same room. The unit was called the comprehensive coronary critical care unit (Hendrich et al., 2003).

The simple change in the head wall required minimal investment (approximately $100 per room) to provide the pressure (in pounds per square inch) necessary to handle multiple gases (oxygen and suction) up through a multilevel tower. Other monitoring technologies would cost more and could be added when needed. Private rooms with acuity-adaptable head walls, adequate space for family, and lighting and temperature controlled by the patient could help reduce infection rates and bed placement times. This design offers maximum flexibility for hospitals of the future.

Hospital patient flow also requires a major transformation. The demonstration unit showed the value of not moving patients from unit to unit. When patients are moved, not only do we lose their dentures, but we also make serious clinical errors because of communication gaps. Every time a patient is moved from one nursing unit to another, the patient comes into contact with another 25 or so caregivers.

The new room design balanced privacy with high observation and created a healing environment for the patient. The windows facing the interior hallway were electronically charged. With the flip of a switch located on the wall, the window in front of the decentralized nursing station could become clear or opaque. (The same effect could be provided with an inexpensive blind.) The nurses used an infrared tracking system to reduce hunting and gathering time to find each other on the unit. The phone was modem capable for family or patient use, and blood analysis modules were in each patient room, so routine blood tests could be done quickly, at the point of care, to reduce lead time for physicians and caregivers.

As electronic medical records become more prevalent, hospitals should think about changing how they use the space of a centralized nursing station. This centralized space could become a business/care center for interdisciplinary practice (nurses and physicians), which would in turn make physician office and department practices more efficient. The nursing stations could be decentralized to reduce travel time and workload index and increase direct-care time. Problems relating to cultural change and human factors (nurses are most familiar with centralized stations) can be resolved with concerted effort. The data are clear—decentralized stations reduce the waste and inefficiency of typical work patterns of hospital nurses (see Figures 1 and 2).

When we consolidated the two units (coronary critical care and the coronary medical unit), we had a definite moment in time for comparison because patients from both units were moved to the new unit on the same day. We were able to compile true pre-change baseline data, and, with this case-control comparison, we were able to measure the impact of change on a variety of levels (clinical, cost, satisfaction). The case mix was unchanged in the new unit. We measured sentinel events, length of stay, cost of care, medication errors, nursing turnover, and patient falls. The decrease in errors and adverse events was a direct result of the changes in design and care model. Patient dissatisfaction decreased greatly and more rapidly in this unit than in any other unit in the hospital. Nursing hours returned to 1997 levels; patient-care time was increased, not decreased. Direct-care contact was increased, and hunting and gathering time was decreased.

Previously, these two units had transported 200 patients a month back and forth between them; afterward, the number dropped to fewer than 20. Remember that in most acute-care facilities the average time for a transport ranges from 25 minutes to 48 hours. We had predicted on theoretical grounds that acuity-adaptable rooms would be more efficient and that there would be less need to move patients; the outcome data bore this out. Although the total number of beds was reduced by seven, dozens more patient days were handled on the smaller number of beds. When the data were entered into the simulation model, the results showed millions of dollars in efficiency improvements. This suggests that smaller, more efficient facilities would bring some relief from workforce shortages and growing demand in the future.
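A back-of-envelope calculation shows the scale of the transport reduction. As a rough sketch, assuming each avoided transport takes only the low end of the quoted range (25 minutes) and treating “fewer than 20” as exactly 20, the monthly transport time saved is at least:

$$
\Delta T \;\ge\; (200 - 20)\ \tfrac{\text{transports}}{\text{month}} \times 25\ \tfrac{\text{min}}{\text{transport}} \;=\; 4{,}500\ \tfrac{\text{min}}{\text{month}} \;=\; 75\ \tfrac{\text{h}}{\text{month}}
$$

This floor counts elapsed transport time only; it ignores how many staff accompany each transport and the longer transports at the upper end of the range, both of which would raise the figure.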

At the heart of the hospital capacity and flow problem (or the cause and effect) is the tension between medical and surgical care specialties and critical care. Many patients don’t require critical care, but because progressive beds are usually full, they are often assigned to a critical care bed. Emergency departments and operating room recovery areas are often backlogged with patients waiting for the “right” bed. Thus, patients who are between the critical care and medical–surgical care levels (“tweeners”) create a bottleneck in hospital flow. Physicians and nurses tend to err on the side of safety and “hold them” until critical care beds become available. This bottleneck phenomenon tells us something about future demands for care and the necessity of migrating the middle section of care to the “next generation” of care delivery (Hendrich and Lee, 2003c).

The built environment, enabled by technology, provides an enormous untapped opportunity for reducing waste and improving care when non-value-added analysis is used to improve caregiver work spaces. The development of new care-delivery models to match new hospital environments will be an imperative for the future. This demonstration unit, which provided a healing, patient-centered design to support the patient and caregivers, improved both clinical and fiscal outcomes.

REFERENCES

Hendrich, A.L., J. Fay, and A.K. Sorrells. 2003. Cardiac comprehensive critical care: the impact of acuity adaptable patient rooms on current patient flow bottlenecks and future care delivery. American Journal of Critical Care. Accepted for publication.

Hendrich, A., and N. Lee. 2003a. A Time and Motion Study of Hospital Health Care Workers: Tribes of Hunters and Gatherers. Manuscript in progress.

Hendrich, A., and N. Lee. 2003b. The Cost of Inter-Unit Hospital Patient Transfers. Manuscript in progress.

Hendrich, A., and N. Lee. 2003c. The Cost of Current Hospital Patient Flow: A Simulation Model. Manuscript in progress.

PricewaterhouseCoopers. 2003. Cost of Caring: Key Drivers of Growth in Spending on Hospital Care. Washington, D.C.: American Hospital Association and Federation of American Hospitals.

Discrete-Event Simulation Modeling of the Content, Processes, and Structures of Health Care

Robert S. Dittus, M.D., M.P.H.

Vanderbilt University and Veterans Administration Tennessee Valley Healthcare System

The Institute of Medicine (IOM) report, Crossing the Quality Chasm, challenged health care providers to deliver care that is safe, timely, effective, equitable, patient-centered, and efficient (IOM, 2001). To meet these challenges, health care providers must redesign, implement, and continually improve current health care systems, including: (1) the content of care (what is being delivered); (2) the processes of care (how care is delivered—the microsystems of care); and (3) the structures of care (how delivery systems are organized and financed—the macrosystems of care). Although biomedical and clinical researchers will continue to identify potentially modifiable risk factors for disease and improve methods for diagnosis and treatment through observational and experimental studies, such advances alone cannot address the IOM challenges.

CONTENT OF CARE

The content of care will be shaped largely by advances in biomedical and clinical research. In colorectal cancer, for example, new chemotherapeutic agents have recently been developed that can prolong life for patients with advanced colorectal cancer (Rothenberg, 2004). In addition, new diagnostic modalities have been developed, such as radiographic “virtual colonoscopy” and a fecal DNA test, to detect early colorectal cancer (Winawer et al., 2003).

Traditional clinical research designs can address the efficacy and effectiveness of treatment and the sensitivity and specificity of diagnostic tests, but cannot easily address many important clinical management questions. Clinical research cannot readily examine the cost-effectiveness of screening colonoscopy at different ages, the most cost-effective time for surveillance colonoscopy among patients who have had a polypectomy, or the combination of age and morbidity at which colorectal cancer screening should be stopped. The myriad possible solutions to these questions preclude comparing alternatives using traditional research designs, and the size of a clinical study with adequate power would be prohibitive. In addition, the time required to gather study results would be measured in decades because of the slow growth of adenomatous polyps, the precursor of colorectal cancer. However, simulation modeling is a study design that could effectively address these questions (Banks et al., 2004; Law and Kelton, 2000).

PROCESSES OF CARE

Biomedical research can contribute little to improvements in the processes of care. Clinical observational and experimental studies on the processes of care could be helpful, but little work has been done to date in this area. In the past decade, management science methods have been introduced into clinical medicine more formally and extensively than in the past. A set of such methods, often referred to as continuous quality improvement, has been used worldwide to reduce variations in care delivery. Because health care is generally operating far from the efficiency frontier, these reductions in variation are often accompanied by improvements in quality and reductions in cost. However, the “plan-do-study-act” incremental approach to improvement is not always applicable because external forces, such as governmental or professional regulations, may require significant sudden change. Simulation modeling can be used to explore the implications and consequences of alternative processes of care. Simulation modeling can also generate new insights into underlying systems of care and identify new approaches that might not otherwise be apparent.

STRUCTURES OF CARE

The structures of care will also require substantial modifications. For example, financing systems are not designed to align incentives to improve the quality and efficiency of care delivery. Even though care delivery systems have changed over the past decades, they are still based on the same general structures used a century ago; the relationships and division of tasks among health care workers, for instance, have changed very little. In the past two decades, questions have been raised about how the long hours worked by residents (usually more than 80 hours per week) affect the quality and safety of care. In response to these concerns, the Accreditation Council for Graduate Medical Education recently established work-hour restrictions for residents. However, it is difficult for residency programs and hospitals to make small, incremental changes to their residency programs: changes are generally made once a year, and implementing a poor system can affect a program’s reputation and subsequent resident recruitment. In this situation, simulation modeling can again be an effective way to examine the potential impact of alternative resident scheduling systems on both residents and the quality of care.

TWO SIMULATION MODELING PROJECTS

In this paper, I will describe two simulation modeling projects that highlight the benefits of this systems approach to improving health care. Both projects have been previously published. The first project is a disease-based simulation model that examines the content of care for colon cancer; the project also demonstrates how the model can affect the structure of care. The second project is a hospital-based scheduling simulation that examines the structure of care; the results of this simulation led to improvements in both the structure and processes of care.

Disease-Based Simulation Model

Colorectal cancer is currently the second leading cause of death from cancer in the United States (Jemal et al., 2003). It causes more than 50,000 deaths per year in this country, predominantly among the elderly, and mortality rates rise steeply with age. There is no cure for unresectable disease, although disease discovered at an early stage is curable through resection. Several different screening tests are available for early detection; studies have shown that screening decreases mortality by 15 to 30 percent and that removing adenomatous colorectal polyps (e.g., during colonoscopy) decreases the incidence of cancer by 70 to 90 percent (Winawer et al., 2003). Based on these data, a single screening colonoscopy at a suitable age might be an effective diagnostic and therapeutic strategy. Our objective was to develop a decision model and examine the cost-effectiveness of one-time colonoscopic screening for elderly patients (Ness et al., 2000).

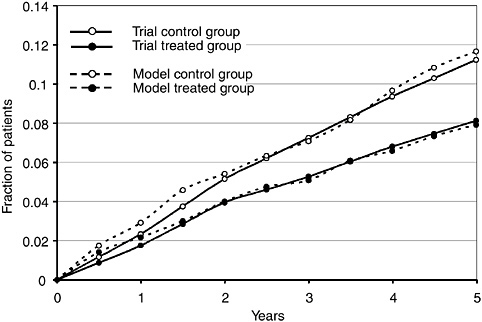

A discrete-event network simulation model was used as the platform. The model included the biology of the disease, risk factors for incidence and prognosis, and the health care system that screens for and treats the disease. Input parameters were represented as probability distributions whose characteristics, including distribution shape, were fit to the data. To measure the cost-effectiveness of alternative screening strategies for colorectal cancer, the outcomes of colorectal cancer had to be described, and to measure quality-adjusted life years (QALYs), a standard metric for cost-effectiveness analyses, utilities (as morbidity weights) had to be measured for each outcome (Gold et al., 1996). Two clinical studies were conducted to define these outcomes, develop a utility instrument, and measure the utilities associated with the outcome states (Ness et al., 1998, 1999). Next, a comprehensive review of the literature (more than 2,500 citations) was conducted. Cost information for diagnosis and treatment was derived from a variety of sources. Once the process was conceptualized and the model formally constructed, verification and validation tests were conducted (Ness et al., 2000).
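For reference, the two quantities this analysis relies on can be written compactly. The notation below is introduced only for illustration and omits discounting: $u_i$ is the utility (morbidity weight) of outcome state $i$, $t_i$ is the time in years spent in that state, and $C$ and $E$ are a strategy's expected cost and expected QALYs.

```latex
\begin{aligned}
\text{QALY} &= \sum_{i} u_i \, t_i, \qquad 0 \le u_i \le 1, \\
\text{ICER}_{A \text{ vs. } B} &= \frac{C_A - C_B}{E_A - E_B}.
\end{aligned}
```

The incremental cost-effectiveness ratio (ICER) is the marginal cost per QALY gained by strategy $A$ over strategy $B$. A strategy that is both less costly and more effective than its comparator dominates it, so no ratio needs to be computed.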

In constructing the model, an attempt was made to match polyp prevalence data from autopsy series with cancer incidence data from cancer registries, under the assumption that all adenomas progress to cancer. However, matching the adenoma prevalence rate and the cancer incidence rate required a dichotomous population of “slow-growing” and “fast-growing” polyps, with mean transition times from adenoma to carcinoma of 52 years and 26 years, respectively. This result suggested that adenomas progress to cancer at substantially different rates and that some, perhaps many, adenomas regress without treatment. Subsequent data have also suggested that adenomas may regress. As this experience shows, modeling can not only yield insights into the effectiveness and efficiency of alternative strategies of care but also inform the basic biomedical sciences and generate hypotheses about the pathophysiology of disease.

The main study results revealed that, among men who had not previously been screened for colorectal cancer (unfortunately, a significant percentage of the population), one-time screening colonoscopy between the ages of 55 and 59 not only reduces the incidence of colorectal cancer but is also less costly overall than no screening (Ness et al., 2000). In a hypothetical cohort of 100,000 40-year-old men, a screening colonoscopy between the ages of 55 and 59 reduced the overall incidence of colorectal cancer from 5,672 to 2,060 cases and reduced deaths from colorectal cancer from 2,177 to 654. In this model, one-time screening colonoscopy thus reduced the incidence and mortality of colorectal cancer by roughly two-thirds (about 64 percent for incidence and 70 percent for mortality). At the same time, the cost of care (colorectal cancer screening, follow-up, and treatment) for these 100,000 men fell by roughly 15 percent, from $75 million to $63 million. If screening was done five years earlier, between the ages of 50 and 54, incidence and mortality were reduced even more, but at a slightly higher cost. The marginal cost per QALY was less than $4,000, which is generally considered a very favorable cost-effectiveness ratio. Similar findings were demonstrated for women. The results of this study thus informed changes in the “content” of health care, that is, the specific care recommended.
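The relative reductions quoted above follow directly from the cohort figures. The short Python sketch below recomputes them; the dictionary names are illustrative, and because the dollar amounts are rounded to the nearest million, the cost percentage it produces is only approximate.

```python
# Cohort outcomes quoted in the text for 100,000 40-year-old men.
NO_SCREENING = {"incidence": 5672, "deaths": 2177, "cost_musd": 75}
SCREEN_55_59 = {"incidence": 2060, "deaths": 654, "cost_musd": 63}


def relative_reduction(before: float, after: float) -> float:
    """Fractional reduction from `before` to `after` (0.25 means 25 percent)."""
    return (before - after) / before


# Relative reduction for each outcome, keyed like the input dictionaries.
reductions = {
    key: relative_reduction(NO_SCREENING[key], SCREEN_55_59[key])
    for key in NO_SCREENING
}

for key, frac in reductions.items():
    print(f"{key}: {frac:.1%} reduction")
```

Working from the rounded figures gives about 64 percent for incidence, 70 percent for deaths, and 16 percent for cost.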

A clinical trial comparing the costs and effectiveness of screening different age groups would be prohibitively expensive and take a very long time. A simulation model is feasible and can also examine other features of these strategies of care, such as differential risk patterns among subgroups for the formation of adenomas or for the speed of transformation from adenoma to cancer. The impact of differences in the sensitivity and specificity of diagnostic tests, and of new diagnostic modalities, can be examined quickly. The model can also be used to examine the timing of a repeat “surveillance” colonoscopy after a polyp has been identified and removed. The frequency of surveillance colonoscopies can have a significant impact on the effectiveness and costs of a screening strategy, and given the current lack of capacity in this country to meet the need for colonoscopy under current recommendations, any strategy that reduces demand (such as lengthening the interval for surveillance colonoscopy) can be important. The simulation model can also examine how compliance with specific elements of each strategy affects the overall effectiveness and cost-effectiveness of care.

Clinical trials, observational studies, and decision analyses, such as the one described above, have since been used to inform Medicare payment policy. Prior to 2001, Medicare did not reimburse for screening colonoscopies. When cost-effectiveness models demonstrated the overall impact and potential cost savings of screening compared to not screening, this policy was changed. With potential reductions of 70 percent in deaths from colorectal cancer and simultaneous reductions in costs, the “structure” of health care was improved significantly, in this case by a financing change.

Workforce-Scheduling Simulation Model

Outside of health care, simulation modeling has been used most commonly to address facility design, inventory management, scheduling, and workforce deployment. Simulation modeling has also been used in a variety of settings to examine and design new structures and processes of health care (Klein et al., 1993). The second project described in this paper addressed issues related to workforce scheduling.

As a result of a variety of pressures to improve patient safety and reduce resident fatigue, many residency programs began in the 1980s to review house staff work schedules and implement changes. The initial focus was on the frequency of in-hospital call and the amount of sleep residents obtained. In the 1970s, first-year residents in internal medicine in some programs were on call either 5 nights out of 7 or every other night, the norm being every third night, and work hours regularly exceeded 100 per week. Over time, the frequency of call has been reduced, and recently professional training regulations have limited the work week to 80 hours. The number of continuous work hours and the quantity of work, such as patient volume, have also been regulated. As a result, residency programs have been forced to redesign resident work hours, and hospitals have had to redesign their workforces to make up for the reduction in resident work. Resident work scheduling remains an ongoing problem for academic health centers.

In 1989, simulation modeling was used to examine resident scheduling in a county hospital affiliated with an academic medical center (Dittus et al., 1996). One goal of the project was to determine whether a discrete-event simulation model of an internal medicine service, constructed from easily obtainable information, could make valid predictions of residents’ experiences; the major focus was the amount of sleep residents obtained while on call. A two-stage study was conducted. First, a network simulation model of the internal medicine service of the teaching county hospital was constructed, parameterized, verified, and validated using readily available hospital data and physician surveys. Second, the model was used prospectively to predict the effects of changes in the resident work schedule, which were implemented the year after the model was built.

The setting for the study was a 450-bed municipal teaching hospital with an average daily census of 90 patients on the internal medicine service (78 ward patients and 12 intensive care unit [ICU] patients). Each week, approximately 91 new patients were admitted. The service averaged eight admissions per night, one-third of which went to the ICU. To care for these patients, the medicine service had six teams; each team included a faculty member, a second- or third-year resident (resident), two first-year residents (interns), a senior student, and several junior medical students. In the baseline call schedule, two of the six teams were on call each night: one ward resident with his or her two interns and senior student, plus a consulting resident and two interns from another team. Interns were on call every third night and residents every sixth night. Interns averaged 97 hours per week in the hospital.

To model the service, a discrete-event network simulation model was constructed using the INSIGHT simulation language (Roberts, 1983). The model characterized hospital schedules, such as the on-call schedule, the nighttime cross-coverage plan, clinic and conference schedules, and weekday versus weekend work schedules. It described patient arrivals as both scheduled and emergency admissions to either the ward or the ICU, and it characterized 38 house staff (resident and intern) activities, including routine patient care, patient-initiated requests for care, and other activities. A decision-priority list established the order in which the house staff would address tasks following completion of any task. Twenty preemption levels set the priority of new tasks added to the work list and determined when a task in progress would be interrupted before completion because a more urgent request had arrived. Because tasks were time sensitive, their preemption levels could change over time. The baseline model was constructed and then validated against observational data not used in parameterizing the model.
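The INSIGHT model itself is not reproduced here. As a minimal sketch of how a decision-priority list and preemption levels interact, the Python below simulates a single house officer working through a work list. The task names, durations, and levels are hypothetical; ties are broken by earliest arrival (an assumption); and the time-dependent escalation of preemption levels described above is deliberately omitted to keep the example short.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    name: str
    arrival: float   # minute at which the request joins the work list
    duration: float  # total minutes of work the task requires
    level: int       # preemption level: lower number = more urgent
    remaining: float = field(init=False)

    def __post_init__(self) -> None:
        self.remaining = self.duration


def simulate(tasks: list[Task]) -> list[tuple[float, str, str]]:
    """Run one house officer through `tasks`; return (minute, event, task name) records."""
    arrivals = sorted(tasks, key=lambda t: t.arrival)
    work_list: list[Task] = []
    log: list[tuple[float, str, str]] = []
    clock = 0.0
    current: Optional[Task] = None
    i = 0

    while i < len(arrivals) or work_list or current is not None:
        next_arrival = arrivals[i].arrival if i < len(arrivals) else float("inf")
        finish = clock + current.remaining if current is not None else float("inf")

        if finish <= next_arrival:
            # The task in progress completes before the next request arrives.
            clock = finish
            current.remaining = 0.0
            log.append((clock, "finish", current.name))
            current = None
        else:
            # A new request arrives; charge the elapsed time to the task in progress.
            if current is not None:
                current.remaining -= next_arrival - clock
            clock = next_arrival
            new = arrivals[i]
            i += 1
            if current is not None and new.level < current.level:
                # Preemption: the more urgent request interrupts the current task,
                # which returns to the work list with its remaining work.
                log.append((clock, "interrupt", current.name))
                work_list.append(current)
                current = new
                log.append((clock, "start", current.name))
            else:
                work_list.append(new)

        if current is None and work_list:
            # Decision-priority rule: most urgent level first, then oldest request.
            work_list.sort(key=lambda t: (t.level, t.arrival))
            current = work_list.pop(0)
            log.append((clock, "start", current.name))

    return log


# Hypothetical evening: a new ICU admission interrupts routine documentation.
example_log = simulate([
    Task("progress notes", arrival=0.0, duration=60.0, level=5),
    Task("new ICU admission", arrival=20.0, duration=30.0, level=1),
    Task("family call", arrival=25.0, duration=10.0, level=4),
])
for record in example_log:
    print(record)
```

In this run the ICU admission preempts the progress notes at minute 20; the family call waits because it is less urgent than the admission, and the interrupted notes resume only after both higher-priority tasks are finished.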

In contrast to other types of decision analyses, a discrete-event network simulation model is flexible enough to accommodate such representations. The model also allowed complete flexibility in describing the input parameters: a flexible distribution system was used to characterize and parameterize input data elements by mean, variance, skewness, and kurtosis.
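The text does not name the distribution system used; four-moment families such as the Pearson system are one plausible kind. Whatever the family, fitting starts from the four sample moments, which the Python sketch below computes. It uses population (1/n) moments and reports non-excess kurtosis (3 for a normal distribution); both conventions are assumptions, and the task-duration data in the usage line are hypothetical.

```python
def four_moments(data: list[float]) -> tuple[float, float, float, float]:
    """Return (mean, variance, skewness, kurtosis) from population (1/n) moments.

    Kurtosis is the non-excess form m4 / m2**2, which equals 3 for a normal distribution.
    """
    n = len(data)
    if n == 0:
        raise ValueError("need at least one observation")
    mean = sum(data) / n
    m2 = sum((x - mean) ** 2 for x in data) / n
    if m2 == 0:
        raise ValueError("skewness and kurtosis are undefined for constant data")
    m3 = sum((x - mean) ** 3 for x in data) / n
    m4 = sum((x - mean) ** 4 for x in data) / n
    skewness = m3 / m2 ** 1.5
    kurtosis = m4 / m2 ** 2
    return mean, m2, skewness, kurtosis


# Hypothetical admission work-up times in minutes (right-skewed by one long case).
print(four_moments([35.0, 40.0, 45.0, 50.0, 90.0]))
```

Matching all four moments, rather than only the mean and variance, lets a skewed task-time distribution like this one keep its long right tail in the simulation.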

The model was used to inform a change in the call system from four interns on call every third night to three interns on call every fourth night. To test the model’s predictive validity following this change, a second phase of the project, a prospective work-measurement study, was conducted. Senior medical students were assigned to track the house staff and record the start and end times of each task. In a pilot study, we measured interobserver variability among the medical students; after clarifications were made, agreement exceeded 96 percent. The predictive validation study covered 18 house staff days and 6 house staff nights, during which house staff were followed and their tasks recorded. We then programmed the simulation model to reflect the change in call schedules and replicated the timing and number of admissions to the hospital to reflect the actual workload managed during the observed periods.
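The two call schedules are consistent with the staffing described earlier: six teams with two interns each give 12 interns. The Python sketch below checks the arithmetic, assuming that call rotates evenly among all 12 interns; the 12-week comparison period is an arbitrary illustrative choice.

```python
from fractions import Fraction

# From the service description: six teams with two interns each.
TOTAL_INTERNS = 6 * 2


def call_interval(total_interns: int, on_call_per_night: int) -> Fraction:
    """Nights between calls for each intern, assuming call is shared evenly."""
    return Fraction(total_interns, on_call_per_night)


def nights_on_call(period_nights: int, interval: Fraction) -> Fraction:
    """Nights each intern spends on call over a period of `period_nights`."""
    return period_nights / interval


baseline = call_interval(TOTAL_INTERNS, 4)  # four interns on call each night
revised = call_interval(TOTAL_INTERNS, 3)   # three interns on call each night

print(f"baseline: on call every {baseline} nights")
print(f"revised: on call every {revised} nights")
print("on-call nights per intern over 12 weeks:",
      nights_on_call(84, baseline), "->", nights_on_call(84, revised))
```

Under these assumptions, each intern's on-call nights over 12 weeks fall from 28 to 21, a one-quarter reduction in overnight call.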