1

Introduction and Background

Nuclear science is entering a new era of discovery, both in understanding how nature works at the most basic level and in applying that knowledge in useful ways.1 This advance is largely the result of technological breakthroughs in the development of equipment for nuclear physics experiments. Until recently, nuclear structure scientists had to be content with experiments that used only stable nuclei, of which there are about 300, as beams and targets. In the past decade, however, they have learned how to build high-beam-power facilities that produce useful beams of short-lived, radioactive nuclei. With these new beams of unstable nuclei, they can make and study many thousands of exotic nuclear species, most of which have never been produced in the laboratory and exist in nature, if at all, only fleetingly in the hot interiors of stars. Such experiments will help improve understanding of both the structure of exotic nuclei (see Box 1.1) and the conditions responsible for their synthesis in stars. Rare-isotope beams also offer many opportunities for new medical research and for applications in other areas of research and industry. New, third-generation facilities are now planned or under construction at a number of laboratories around the world; they will enable scientists to continue to exploit these developments in the coming decades.

More than a decade ago, the U.S. nuclear structure and nuclear astrophysics

|

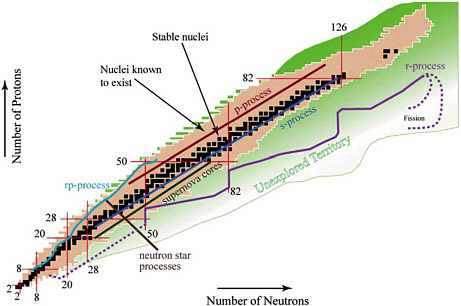

BOX 1.1 Exotic Nuclei, Rare Isotopes, and Radioactive Nuclear Beams The terms exotic nuclei, rare isotopes, and radioactive nuclear beams all refer to essentially the same sector of study, an area to which this report refers as rare-isotope science. The field of rare-isotope science can be characterized in the following way. Atoms that make up everyday matter on Earth are predominantly stable; that is, they retain their identity in terms of their elemental nature (the numbers of protons and neutrons remain constant over time). The nuclei located at the center of each atom comprise over 99.9 percent of the mass of the visible universe. However, in the broader cosmos, many other nuclei exist and play an important role in the evolution of the universe. These nuclei are exotic (they occur only rarely on Earth) and, in terms of chemistry, are isotopes of the stable atoms on Earth. By vast majority, these rare isotopes are radioactively unstable, meaning that, when left alone on the shelf, they undergo spontaneous decay and transform into different nuclei. Figure 1.1.1 depicts the standard organization of knowledge of rare isotopes. Nuclear physics is the general study of the principles that govern phenomena of the nucleus, and rare-isotope science is the study of the behavior and interactions of those nuclei that are unstable, exotic, and rare. By studying physical processes that transform nuclei into other nuclei (with the emission of residual particles and energy), scientists learn not only how to control and predict these phenomena, but they also learn about the origins of the chemical elements in the universe. 
In particular, the study of rare isotopes allows scientists to expand the basic understanding of nuclear physics in two general ways: (1) rare isotopes present “extremes” to physicists and thereby offer leverage on testing the basic understanding of nuclear physics, and (2) rare isotopes themselves play an important role in physical environments that are hot, dense, or highly interacting, such as those within neutron stars, stellar fusion cycles, nuclear reactions in reactor fuel cycles, and so on. |

communities proposed that such a new rare-isotope accelerator be built in the United States. Such a facility would produce a wide variety of high-quality beams of unstable isotopes at unprecedented intensities. Over the years, studies by the joint Department of Energy/National Science Foundation (DOE/NSF) Nuclear Science Advisory Committee supported the need for such a facility. In a landmark report published in 1999,2 a formal concept was envisioned for achieving these capabilities: it was termed the Rare Isotope Accelerator (RIA). To obtain an inde-

pendent scientific assessment of the scientific agenda for such a facility, the DOE and the NSF agreed to support a study committee convened by the National Research Council. The Rare-Isotope Science Assessment Committee (RISAC) was charged to define the science agenda for a next-generation rare-isotope beam facility. Soon after RISAC was formed, the DOE announced that the budget of what was then understood as RIA should be reduced by about half and that construction would not start until 2011. This report, therefore, identifies a compelling scientific agenda for a future facility termed the U.S. Facility for Rare-Isotope Beams (U.S. FRIB), the construction-cost envelope for which is roughly half that of RIA and at which the first experiments might not begin until 2016 or so (5 years after the start of construction).

HISTORICAL CONTEXT

Nuclear physics is the study of the tiny, massive cores of atoms. Nearly all the mass in the visible universe is locked away in atomic nuclei, as is nearly all the energy. Nuclear physics has realized the ancient dream of alchemy, the transmutation of the elements, and seeks to explain how the variety of elements on Earth was formed in the alchemical cauldrons of exploding stars. Nuclear reactions power our star, the Sun, producing energy that reaches Earth as sunlight, which in turn drives the wind. The Sun is also ultimately responsible for the energy that has been locked away for millions of years in coal and oil. The forced disintegration of a few very special nuclei, themselves forged in supernovae, generates power in nuclear reactors and is essential for nuclear weapons. It is now known that atomic nuclei are composed of protons and neutrons, and these, in turn, are made of smaller, simpler particles known as quarks. How do the varied and complex properties of nuclei emerge from the simple laws obeyed by quarks? Or, going the other way, can the study of nuclei lead us to new forces and new symmetries, new insights into the world of quarks? How do nuclear reactions power quiescent stars like the Sun and lead to stellar catastrophes like supernovae? How are complex nuclei made in stars? How can we understand the behavior of nuclei well enough to control nuclear power, limit nuclear proliferation, and manage nuclear waste? These are some of the questions that drive modern nuclear physics.

The history of the 20th century is inextricably intertwined with the emergence of nuclear physics. Certainly a culture that does not understand the major implications of nuclear science will not be prepared to face the challenges of science, energy, and politics in the 21st century.

The first, faint murmur of what was to become nuclear physics came at the end of the 19th century, with Henri Becquerel’s discovery that uranium salts emit mysterious forms of radiation. Pierre and Marie Curie isolated other radioactive elements, including radium and polonium, in the first years of the 20th century, and led international efforts to characterize and explain the origins of radioactivity. These efforts sorted radiation into alpha rays (heavy, highly ionizing, and easily stopped), beta rays (light, moderately penetrating, and moderately ionizing), and gamma rays (highly penetrating and very weakly ionizing). In the Curies’ day, little was known about the internal structure of atoms. The prevailing model, proposed by J.J. Thomson, held that the atom was a blob of positive electric charge in which electrons, already known as the carriers of electricity, were embedded as “raisins in a plum pudding.”

This picture was abruptly shown to be incorrect and modern nuclear physics was born when Ernest Rutherford showed that almost all the mass of the atom is concentrated in a small nucleus at its center. The nucleus, it is now known, is a scant 10⁻¹² centimeters across. The atom, 10,000 times larger, is mostly empty space, filled with a faint haze of orbiting electrons, each 1/2000th the mass of the lightest nucleus.

These early discoveries in nuclear physics jump-started the development of quantum mechanics. Niels Bohr modeled the atom as a nuclear core surrounded by electrons in quantized orbits. Later, nuclear radioactivity came to be seen as a fundamental example of a nondeterministic quantum process: an unstable nucleus has a calculable average lifetime, but exactly when any particular nucleus will decay is fundamentally unknowable. The new quantum theory took shape in the 1920s, spurred largely by the need to explain the properties of atoms, especially the spectra of light emitted by excited atoms. Nuclear physics progressed rather slowly, awaiting the development of more powerful theoretical tools and some fundamental experimental discoveries. By 1920, Ernest Marsden, working with Rutherford, had shown that the nucleus of the hydrogen atom, the proton, was a constituent of heavier nuclei. As beta radiation appeared to be electrons emitted from the core of unstable nuclei, it was natural to suppose that protons and “nuclear electrons” were the constituents of nuclei. This led only to confusion and paradox until James Chadwick, in 1932, discovered the missing building block of nuclei: the neutron, nearly identical to the proton in mass but with no electric charge. Once nuclei were recognized as bound systems of protons and neutrons, progress through the application of the new quantum theory and new experimental methods was both swift and inevitable.
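The statement that an unstable nucleus has a calculable average lifetime while its individual decay time is unpredictable can be illustrated with a small Monte Carlo sketch. It assumes the standard exponential decay law (each nucleus independently has a constant probability per unit time of decaying); the lifetime `tau`, the sample size, the seed, and the function names are illustrative choices, not anything taken from this report.

```python
import math
import random


def sample_decay_times(n: int, mean_lifetime: float, seed: int = 1) -> list[float]:
    """Draw one decay time per nucleus from an exponential distribution with mean `mean_lifetime`."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    return [rng.expovariate(1.0 / mean_lifetime) for _ in range(n)]


def surviving_fraction(times: list[float], t: float) -> float:
    """Fraction of the sampled nuclei that have not yet decayed at time t."""
    return sum(1 for x in times if x > t) / len(times)


if __name__ == "__main__":
    tau = 10.0  # hypothetical mean lifetime, arbitrary time units
    times = sample_decay_times(100_000, tau)
    # Individual decay times scatter widely and cannot be predicted one by one...
    print("first five decay times:", [round(x, 2) for x in times[:5]])
    # ...but the ensemble average converges to tau.
    print("sample mean lifetime:", round(sum(times) / len(times), 2))
    # After one mean lifetime, a fraction of about 1/e should survive.
    print("surviving after tau:", round(surviving_fraction(times, tau), 3),
          "vs 1/e =", round(math.exp(-1), 3))
```

The half-life usually quoted for radioactive nuclei is related to this mean lifetime by t½ = τ ln 2.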

In the 1930s, the basic constituents of the nucleus were identified and the basics of certain radioactive decays were first deduced. Isotopes were understood as nuclei with the same number of protons—and therefore the same chemical properties—but different numbers of neutrons. The Chart of the Nuclides, the analog of the Periodic Table of the Elements, began to fill up as nuclear physicists and chemists created, isolated, and identified heretofore unknown and often unstable nuclei by bombarding stable nuclei with protons, neutrons, and alpha particles (now understood to be the nuclei of helium—two protons and two neutrons bound tightly together). Alpha-particle emission from heavy nuclei such as radium provided dramatic confirmation of the bizarre phenomenon of tunneling predicted by quantum mechanics. The first models of the nucleus, Niels Bohr and John Wheeler’s “liquid-drop” or “compound nucleus” model and Eugene Wigner’s “supermultiplet” model of light nuclei, began to apply new quantum ideas to nuclear structure. Enrico Fermi wrote his famous paper proposing a theory to explain beta decay, an early step on the path to the discovery of the Standard Model of fundamental physics.

Two early discoveries by nuclear physicists in the 1930s had a profound impact, one on society and the other on the appreciation of the role of nuclear physics in shaping the universe. The first was the 1938 discovery by Otto Hahn and Fritz Strassmann of nuclear fission and its theoretical interpretation by Lise Meitner and Otto Frisch. Nuclear fission and the subsequent construction of the first nuclear weapons brought nuclear physics out of the esoteric world of universities and research laboratories and forced politicians and citizens to confront moral questions at the boundary where great science and the potential for great destruction meet.

The second was Hans Bethe’s discovery in 1939 that nuclear fusion powers stars. Recently, nuclear physicists have directly confirmed his theory of the Sun’s energy source by a quantitative measurement of the flux of neutrinos from the Sun. Bethe’s work not only led to an understanding of the energy sources that power the universe but also initiated the field of nuclear astrophysics, which now includes the study of supernovae where heavy nuclei are created and of degenerate collapsed stars such as neutron stars, which are, in essence, gigantic nuclei of stellar proportions.

After World War II, scientists started to consider peaceful uses of nuclear energy. The first nuclear power plant produced electricity in 1951. Despite its checkered history—great initial promise and rapid growth followed by misgivings over safety, waste management, and weapons proliferation—energy from nuclear fission will play an important part worldwide in any smooth transition away from a carbon-based energy economy to a more sustainable energy future.

The world of fundamental particles has never again seemed as simple as it did in 1945: the “elementary particles” required to describe nature were very few—the proton, neutron, and electron (the neutrino and muon—unnecessary for ordinary matter—lurked in the shadows, with the neutrino somehow needed in radioactive decay). The rules were relatively simple and the possibilities immense. If the forces among protons and neutrons could be understood, then all of nuclear and atomic physics might be understood, and with it all of everyday phenomena and much of astrophysics.

However, already during the golden era of the 1930s, Hideki Yukawa, working in Japan, had made a proposal that led eventually in a different direction. Yukawa proposed that an as-yet-undiscovered particle, the “mesotron,” now the pi meson, was the carrier of the nuclear force. After a false start, which turned out to be the muon, and after the war had intervened, the pi meson was discovered in 1947. On the one hand, it awakened the hope that nuclear forces and interactions could be described by some simple underlying dynamics. On the other hand, it marked the beginning of elementary particle physics.

In the 1950s, discoveries of new “elementary particles,” on the same footing as the proton, neutron, and pi meson, proliferated. In the same decade, Robert Hofstadter and coworkers discovered that the proton is not a point particle; instead, it has the extended structure typical of a composite particle. The effort to explain the forces that bind protons and neutrons into nuclei in terms of these newly discovered particles did not succeed. By the end of the 1950s the stage was set for nuclear and elementary particle physics to part ways: particle physicists set off to figure out the next level of structure beneath protons, neutrons, pi mesons, and their brethren; nuclear physicists meanwhile continued to explore the wealth of quantum phenomena that are displayed in nuclei, to use nuclei as laboratories to test new concepts and look for new regularities and symmetries in nature, and to seek understanding of the nuclear astrophysical processes that make the stuff of the universe.

A large and vibrant community continued the study of nuclear physics after the birth of elementary particle physics. There was much to understand about nuclear structure, nuclear reactions, and other nuclear phenomena. By 1950 it was known that the forces between nucleons (protons and neutrons) are very short range (about 10⁻¹³ cm) and complex. They are moderately attractive at 10⁻¹³ cm and beyond, but strongly repulsive at separations less than 0.5 × 10⁻¹³ cm. Because of this, the nuclear force “saturates.” A nucleon in a nucleus experiences a net attraction to nearby nucleons, but because of the short-range repulsion, the system does not collapse. The nuclear force was found to be roughly the same for neutrons and protons. However, the fact that a proton and neutron bind to form the smallest nucleus, the deuteron, while two neutrons do not bind, showed that the nuclear force between a neutron and proton can be slightly stronger than that between two neutrons or, indeed, two protons.
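Yukawa’s idea, described earlier in this section, links the range of a force to the mass of the particle that carries it: exchanging a particle of rest energy mc² gives a force that falls off over a distance of roughly ħ/(mc). The sketch below applies this to the range of about 10⁻¹³ cm (1 fm) quoted above. It assumes ħc ≈ 197.327 MeV·fm and a charged-pion rest energy of about 139.57 MeV; the function names are hypothetical, and a single-meson estimate says nothing about the short-range repulsion.

```python
HBAR_C_MEV_FM = 197.327  # hbar * c in MeV * fm
PION_MASS_MEV = 139.57   # charged-pion rest energy in MeV


def yukawa_range_fm(mass_mev: float) -> float:
    """Range R = hbar / (m c) of a force carried by a particle with rest energy m c^2."""
    return HBAR_C_MEV_FM / mass_mev


def carrier_mass_mev(range_fm: float) -> float:
    """Inverse estimate: carrier rest energy implied by a force of the given range."""
    return HBAR_C_MEV_FM / range_fm


if __name__ == "__main__":
    print(f"pion-exchange range ~ {yukawa_range_fm(PION_MASS_MEV):.2f} fm")
    print(f"carrier mass for a 1 fm range ~ {carrier_mass_mev(1.0):.0f} MeV")
```

The two estimates come out near 1.4 fm and 200 MeV, consistent with the pion’s role as the longest-range carrier of the nuclear force.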

The nucleus is a system with two different species of strongly interacting particles, neutrons and protons, which makes it quite different from atoms, in which usually only the electrons participate in atomic excitations. Because the nuclear force saturates, so does the binding energy of nuclei containing many neutrons and protons: the nuclear contribution to the binding energy grows approximately linearly with the total number of nucleons (A). If it were not for the electromagnetic repulsion between protons, nuclei with very large (and roughly equal) numbers of neutrons and protons would be stable. Eventually, however, nuclei are destabilized by the electromagnetic (Coulomb) repulsion, which grows in proportion to the square of the number of protons (Z). The binding energy per nucleon reaches a maximum of about 8.8 MeV in the vicinity of ⁵⁶Fe (⁶²Ni actually has the largest value).
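The trends just described (a volume term growing linearly with A because the force saturates, a Coulomb penalty growing roughly as Z², and a resulting peak in binding per nucleon near iron) are captured by the semi-empirical, or Bethe–Weizsäcker, mass formula, which the text itself does not present. The following is a minimal sketch using one commonly quoted set of coefficients; those values are assumptions for illustration, since published fits differ by a few percent.

```python
import math

# One commonly quoted set of semi-empirical mass formula coefficients (MeV).
# These are assumptions for illustration; published fits differ slightly.
A_VOLUME = 15.8
A_SURFACE = 18.3
A_COULOMB = 0.714
A_ASYMMETRY = 23.2
A_PAIRING = 12.0


def binding_energy(Z: int, A: int) -> float:
    """Approximate total binding energy (MeV) of a nucleus with Z protons and A nucleons."""
    N = A - Z
    volume = A_VOLUME * A                             # saturation: grows linearly with A
    surface = A_SURFACE * A ** (2 / 3)                # surface nucleons have fewer neighbors
    coulomb = A_COULOMB * Z * (Z - 1) / A ** (1 / 3)  # proton-proton repulsion, roughly Z^2
    asymmetry = A_ASYMMETRY * (N - Z) ** 2 / A        # penalty for unequal N and Z
    if Z % 2 == 0 and N % 2 == 0:
        pairing = A_PAIRING / math.sqrt(A)            # even-even nuclei are extra bound
    elif Z % 2 == 1 and N % 2 == 1:
        pairing = -A_PAIRING / math.sqrt(A)           # odd-odd nuclei are less bound
    else:
        pairing = 0.0
    return volume - surface - coulomb - asymmetry + pairing


def binding_per_nucleon(Z: int, A: int) -> float:
    """Average binding energy per nucleon (MeV)."""
    return binding_energy(Z, A) / A


if __name__ == "__main__":
    for name, Z, A in [("O-16", 8, 16), ("Fe-56", 26, 56), ("Ni-62", 28, 62), ("U-238", 92, 238)]:
        print(f"{name:6s} B/A = {binding_per_nucleon(Z, A):.2f} MeV")
```

With these coefficients the formula gives roughly 8.8 MeV per nucleon near ⁵⁶Fe and ⁶²Ni and about 7.6 MeV for ²³⁸U, close to measured values; it is noticeably less accurate for light nuclei such as ¹⁶O.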

For nuclei with mass number A greater than about 56, the effects of Coulomb repulsion reduce the binding energy per nucleon. Eventually the attractive nuclear force is overcome, with the result that nuclei with Z > 92 are not found in nature. When some heavy nuclei decay (or fission) into two lighter, more tightly bound fragments, kinetic energy on the order of 200 MeV is released, more than 20 million times the energy released in a typical chemical reaction. Gravity is astoundingly weak compared with either the nuclear force or electromagnetism: it is roughly a factor of 10⁴⁰ weaker than the nuclear force. But like electromagnetic forces, gravitational forces do not saturate. Instead, the universally attractive force of gravity grows like the square of the total number of nucleons and eventually overwhelms all other forces for very large numbers of nucleons. When A > 10⁵⁷, gravitational binding of a giant “nucleus” is responsible for the creation and subsequent evolution of neutron stars, massive objects formed by the collapse of ordinary stars, with interior densities at or above that of normal nuclear matter.
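Two of the energy scales in the paragraph above can be checked with back-of-the-envelope arithmetic. This sketch assumes representative binding energies per nucleon of about 7.6 MeV for ²³⁵U and about 8.4 MeV for mid-mass fission fragments, takes the combustion of carbon (about 393.5 kJ/mol, roughly 4 eV per reaction) as a “typical” chemical reaction, and models a large ball of neutrons as a uniform sphere at nuclear density (radius 1.2 fm × A^(1/3)) held together only by Newtonian gravity. The number of gravitating pairs grows like A², so at fixed density the gravitational binding per nucleon grows like A^(2/3), while the saturated nuclear binding per nucleon stays near 8 MeV. All names are hypothetical.

```python
# Physical constants (SI units unless noted).
G = 6.674e-11                # Newton's gravitational constant, m^3 kg^-1 s^-2
NEUTRON_MASS_KG = 1.675e-27  # neutron mass, kg
MEV_TO_J = 1.602176634e-13   # joules per MeV
KJ_PER_MOL_PER_EV = 96.485   # 1 eV per reaction corresponds to about 96.485 kJ/mol


def fission_energy_mev(a_parent: int, bpn_parent_mev: float, bpn_fragments_mev: float) -> float:
    """Energy released when A nucleons move from a less bound to a more bound configuration.

    Q ~ A * (B/A of fragments - B/A of parent); emitted neutrons and other details are ignored.
    """
    return a_parent * (bpn_fragments_mev - bpn_parent_mev)


def chemical_energy_ev(kj_per_mol: float) -> float:
    """Convert a molar reaction enthalpy (kJ/mol) into energy per reaction (eV)."""
    return kj_per_mol / KJ_PER_MOL_PER_EV


def gravity_crossover_a(bpn_mev: float = 8.0, r0_m: float = 1.2e-15) -> float:
    """Nucleon number at which Newtonian gravitational binding per nucleon reaches bpn_mev.

    Model: A neutrons in a uniform sphere of radius r0 * A**(1/3). The binding
    (3/5) G M^2 / R with M = A * m_n gives, per nucleon, (3/5) G m_n^2 A**(2/3) / r0.
    Setting that equal to bpn_mev and solving for A gives the expression below.
    """
    b_joules = bpn_mev * MEV_TO_J
    return (5 * b_joules * r0_m / (3 * G * NEUTRON_MASS_KG ** 2)) ** 1.5


if __name__ == "__main__":
    q = fission_energy_mev(235, 7.59, 8.4)  # U-235 splitting into mid-mass fragments
    e_chem = chemical_energy_ev(393.5)      # C + O2 -> CO2, about 4 eV per reaction
    print(f"fission energy ~ {q:.0f} MeV")
    print(f"ratio to a chemical reaction ~ {q * 1e6 / e_chem:.1e}")
    print(f"gravity matches nuclear binding near A ~ {gravity_crossover_a():.1e}")
```

The fission estimate lands near 200 MeV, tens of millions of times the chemical energy. The gravity crossover comes out of order 10⁵⁵ to 10⁵⁶ nucleons, below the 10⁵⁷ quoted in the text for actual neutron stars; only the scaling should be taken from this crude Newtonian model, since a realistic treatment needs the equation of state of neutron matter and general relativity.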

By the early 1950s, two powerful models for describing nuclear spectra and simple reaction rates had emerged and had become the subject of extensive experimental study and further theoretical elaboration. The creators of these models subsequently won a Nobel Prize in physics: J. Hans D. Jensen and Maria Goeppert-Mayer in 1963 for the nuclear shell model; Aage Bohr, Ben Mottelson, and James Rainwater in 1975 for the so-called unified model.

The nuclear shell model pictures the nucleus as a collection of nucleons moving in orbits under the influence of a common spherical potential, which is generated by the average interactions of all the nucleons. As with electrons in the atom, successive nucleons of the same type must be placed in successively higher orbitals because the Pauli exclusion principle forbids identical nucleons from occupying the same state. The participation of two types of nucleons, protons and neutrons, enriches shell phenomena in nuclei compared with atoms. One of the most striking successes of the shell model was the prediction of anomalously stable “closed shell” nuclei, with proton or neutron numbers 2, 8, 20, 28, 50, 82, or 126 (the so-called magic numbers), analogous to the noble gases of the Periodic Table of the Elements. The ability to predict the quantum numbers of nuclei with only a few protons or neutrons added to (or subtracted from) a closed shell bolstered belief in the shell model. The shell model in its original formulation, however, had little success describing the spectra of nuclei far from closed shells or in regions of N and Z where the overall nuclear shape deforms away from spherical symmetry.
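The Pauli-exclusion filling described above can be sketched in a few lines: each orbital with total angular momentum j holds at most 2j + 1 identical nucleons, and summing these capacities over complete major shells reproduces the closed-shell numbers. The orbital order and grouping below are taken as input from the standard shell-model level scheme with spin-orbit splitting (the order within a shell varies somewhat from nucleus to nucleus); the code does not derive where the energy gaps fall, and the labels and function names are illustrative.

```python
# Single-particle orbitals, labeled n-l-j (n counts from 0), grouped into major shells.
# Assumption: order and grouping are copied from the standard spin-orbit level scheme;
# the exact order inside a shell varies between nuclei and is not derived here.
SHELLS = [
    ["0s1/2"],
    ["0p3/2", "0p1/2"],
    ["0d5/2", "1s1/2", "0d3/2"],
    ["0f7/2"],
    ["1p3/2", "0f5/2", "1p1/2", "0g9/2"],
    ["0g7/2", "1d5/2", "1d3/2", "2s1/2", "0h11/2"],
    ["0h9/2", "1f7/2", "1f5/2", "2p3/2", "2p1/2", "0i13/2"],
]


def capacity(orbital: str) -> int:
    """Pauli exclusion: an orbital of total angular momentum j holds 2j + 1 identical nucleons."""
    two_j = int(orbital.split("/")[0][2:])  # "0f7/2" -> "0f7" -> 7, i.e. 2j = 7
    return two_j + 1


def magic_numbers() -> list[int]:
    """Running nucleon count at each completely filled major shell."""
    closures, total = [], 0
    for shell in SHELLS:
        total += sum(capacity(orb) for orb in shell)
        closures.append(total)
    return closures


def filling(n: int) -> list[tuple[str, int]]:
    """Place n identical nucleons into successively higher orbitals, at most 2j + 1 per orbital."""
    occupied, remaining = [], n
    for shell in SHELLS:
        for orb in shell:
            if remaining == 0:
                return occupied
            k = min(remaining, capacity(orb))
            occupied.append((orb, k))
            remaining -= k
    if remaining > 0:
        raise ValueError("more nucleons than this truncated level scheme can hold")
    return occupied


if __name__ == "__main__":
    print("closed shells:", magic_numbers())
    print("28 nucleons:", filling(28)[-2:])
    print("29 nucleons:", filling(29)[-2:])
```

With 28 nucleons the last occupied orbital is a full 0f7/2, the closed shell at 28, while a 29th nucleon must start the next major shell in 1p3/2. Some texts count the radial label n from 1 rather than 0.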

The unified model combined the early picture of the nucleus as a deformable, rotating, and vibrating object—a picture that had grown out of Bohr and Wheeler’s liquid-drop model—with the shell model. The unified model couples individual particle states to the collective motion of the other nucleons. The most extreme example of collective motion is a nucleus with an equilibrium deformation that rotates as if it were a rigid body. Possible collective nuclear excitations also include vibrations. Clear evidence was found in nuclear spectra for both rotational and vibrational behavior. One of the important successes of the unified model was its ability to account for the much-faster-than-expected electric quadrupole transitions between low-lying nuclear excitations.
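The rotational and vibrational patterns mentioned above have simple idealized signatures, which the text does not spell out. An ideal rigid rotor has level energies E_J = ħ²J(J+1)/(2𝓘), so E(4⁺)/E(2⁺) = 10/3, whereas an ideal harmonic quadrupole vibrator places the 4⁺ state in its two-phonon multiplet at twice the energy of the first 2⁺ state. The sketch below computes both limits exactly with rational arithmetic; real nuclei fall between and around these textbook limits, and the function names are illustrative.

```python
from fractions import Fraction


def rotor_level(spin: int, e2: Fraction) -> Fraction:
    """Ideal rigid rotor: E_J = hbar^2 J(J+1) / (2I), written relative to E(2+) = 6 hbar^2 / (2I)."""
    return e2 * spin * (spin + 1) / 6


def vibrator_level(phonons: int, e2: Fraction) -> Fraction:
    """Ideal harmonic quadrupole vibrator: each phonon adds E(2+); the 4+ state has two phonons."""
    return e2 * phonons


if __name__ == "__main__":
    e2 = Fraction(1)  # measure energies in units of the first 2+ excitation
    print("rotor band J = 0..8:", [str(rotor_level(j, e2)) for j in range(0, 10, 2)])
    print("rotor     E(4+)/E(2+) =", rotor_level(4, e2) / rotor_level(2, e2))
    print("vibrator  E(4+)/E(2+) =", vibrator_level(2, e2) / vibrator_level(1, e2))
```

Because the ratio is independent of the moment of inertia, it is a convenient single number for classifying a measured spectrum as rotor-like or vibrator-like.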

During the 1960s, experimental research in nuclear physics was carried out at a large number of research facilities (more than 25) at universities and national laboratories located throughout the United States. In addition, many similar facilities were constructed in Europe and parts of Asia. The research focused almost exclusively on studies of nuclear structure and on those nuclear reactions that could quantitatively illuminate nuclear structure. Since the nuclear shell and unified models could not be expected to describe nuclear spectra perfectly, much of the experimental data collected during the 1960s, while confirming the general concepts of the models, also revealed their limitations. Theorists looked to the fundamental interactions between nucleons both for the origins of the two models and for insight into how to improve them. The full complexity of the interaction between nucleons was impossible to handle with the limited computing power available at that time. Simpler effective interactions were employed, and even then the mathematical complexity of finite many-body systems limited the utility of the nuclear shell model to light nuclei (typically A < 40), apart from a few nuclei in the near vicinity of closed shells. While clear examples of rotational and vibrational behavior could readily be identified in nuclear spectra, they occurred only in particular regions of the chart of the nuclides, and it became clear that such behavior was far from universal. A significant quantitative advance was made when S.G. Nilsson and his collaborators in Copenhagen and in Lund, Sweden, developed a relatively simple and physically intuitive model for characterizing nucleonic orbits in deformed potentials (see Figure 1.1). Much experimental evidence was found to support such a description. This deformed-shell model implemented important principles implicit in the unified model by coupling independent-particle motion to the collective description.

Significant progress was made during this period in nuclear reaction theory, and the ability to interpret the results of nuclear reactions quantitatively added much to the knowledge of nuclear structure. The so-called direct reaction model was particularly successful in dealing with the reactions involving light projectiles such as protons, neutrons, deuterons, and 4He nuclei. For example, a reaction in which the incoming state consists of a deuteron and the nucleus and the outgoing state consists of a proton and the nucleus can probe the excited states of the nucleus that result when a neutron with a particular value of angular momentum is transferred to the target nucleus. While analysis of these reactions and of electron scattering experiments confirmed much of the underlying physics of the nuclear shell model, they also demonstrated that for a considerable fraction of the time, the nucleons were not in the assumed shell-model orbits but were instead promoted to higher-lying orbits as a result of the very strong, short-range nucleon-nucleon interaction. Refined as a result of intense and thorough studies of nuclear reactions, nuclear models during the 1960s and early 1970s were capable of reproducing most aspects of nuclear structure, although they required a sizable input of

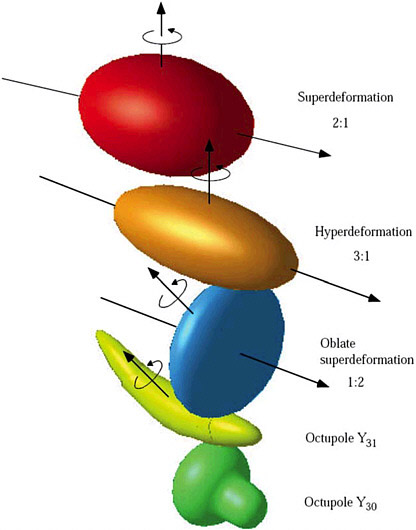

FIGURE 1.1 Various shapes of nuclei either observed or expected. Exotic orbitals that appear in regions far from the stability line may provide some new types of deformation. The superdeformation and octupole pear-shaped deformation (bottom) have been observed experimentally. The oblate superdeformation has been predicted but not observed—less-deformed oblate shapes are, however, quite common. The hyperdeformation has been seen in certain nuclei. The octupole banana-type deformation has not been observed in such extreme form, but vibrations of this kind are well known. Reprinted with permission from Report of the Study Group on Radioactive Nuclear Beams to the OECD Megascience Forum Working Group on Nuclear Physics, copyright 1998 OECD.

experimental data to tune their predictions. It was uncertain how well these models could be extrapolated into regions with a very large neutron excess for which little or, more often, no experimental information existed.

In the 1960s, heavy-ion reactions were beginning to be used to extend the understanding of nuclear spectra and nuclear reactions. Collisions between heavy nuclei, say between ¹²C and ²⁴Mg [¹²C(²⁴Mg, nX)³⁶⁻ⁿY], proved difficult to interpret quantitatively. However, they very effectively brought huge amounts of angular momentum into the nuclei that were created. Highly efficient detector arrays with energy resolution on the order of a few keV made possible detailed study of the subsequent cascades of gamma rays emitted as these high-angular-momentum states radiated away their angular momentum and energy. These decay chains revealed a great deal about the underlying structure of the nuclei in which they were observed. Subsequent research of a similar nature (in the 1980s) revealed that superdeformed nuclear states can carry large amounts of angular momentum with less energy than normally deformed nuclei. In superdeformed nuclei, the long axis may be as much as twice the length of the short axis. The ability of some nuclei to lower their energy, and therefore gain stability, by assuming a nonspherical shape also accounts for the existence, and subsequent discovery, of many elements heavier than those found in nature. Currently the observation of nuclei with Z up to 112 has been confirmed, and there is the interesting prospect that it may be possible to make long-lived superheavy nuclei.

By the middle of the 1960s, there was growing awareness that a more robust understanding of the global properties of nuclear matter was needed. Although they would not directly elucidate nuclear spectra, these global properties would describe the features of nuclear matter common to all nuclei. Most of the spectroscopic properties of nuclei described by the nuclear shell and unified models are determined by the interactions of the least-bound nucleons in the nucleus, the analog of the valence electrons in an atom or of the particles at or near the Fermi surface in a degenerate Fermi liquid. Thus, neighboring nuclei would often exhibit quite different spectra and reveal very different behavior in low-energy nuclear reactions, even though their binding energy per nucleon and their density were virtually identical. How should the bulk properties of nuclear matter be characterized? It was thought that the interaction between nucleons resulted from the exchange of mesons (indeed, these virtual mesons also play an important role in the nucleon’s response to external fields) and that the detailed differences in short-distance behavior gave rise to the differences in average bulk properties. To investigate nucleon-nucleon dynamics at short distances (<1.5 femtometers), quantum mechanics requires that the probe have a momentum of several hundred MeV/c and transfer a sizable fraction of this momentum in the collision. This required building higher-energy accelerators (>400 MeV) than had previously been employed in nuclear research (the largest up to that time were <50 MeV).
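The momentum requirement in the paragraph above follows from the de Broglie relation: resolving structure of size d requires a wavelength λ = h/p no larger than d, that is, p ≥ 2πħc/d in MeV/c. The sketch converts this into the kinetic energy of a proton beam. It assumes ħc ≈ 197.327 MeV·fm and a proton rest energy of 938.272 MeV; using ħ/p instead of h/p as the criterion would lower the momenta by a factor of 2π, so only the order of magnitude is meaningful. The function names are hypothetical.

```python
import math

HBAR_C_MEV_FM = 197.327    # hbar * c in MeV * fm
PROTON_MASS_MEV = 938.272  # proton rest energy in MeV


def probe_momentum_mev(distance_fm: float) -> float:
    """Momentum (MeV/c) whose de Broglie wavelength h/p equals distance_fm."""
    return 2 * math.pi * HBAR_C_MEV_FM / distance_fm


def proton_kinetic_energy_mev(momentum_mev: float) -> float:
    """Relativistic kinetic energy T = sqrt(p^2 + m^2) - m of a proton (natural units, c = 1)."""
    return math.hypot(momentum_mev, PROTON_MASS_MEV) - PROTON_MASS_MEV


if __name__ == "__main__":
    for d in (1.5, 1.0, 0.5):
        p = probe_momentum_mev(d)
        print(f"d = {d} fm: p ~ {p:.0f} MeV/c, proton T ~ {proton_kinetic_energy_mev(p):.0f} MeV")
```

For d = 1.5 fm this gives roughly 830 MeV/c, or about 310 MeV of proton kinetic energy, and shorter distances push the requirement higher, in line with the move to accelerators above 400 MeV.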

The much greater cost (over $100 million) of these higher-energy facilities dictated that there would be fewer (probably a single facility in the United States) and that they would operate in a user mode.3 Several smaller accelerator facilities operated in-house at universities were closed, and university researchers initiated new research programs at the new user facilities. There was a resulting decline in emphasis on detailed nuclear spectroscopy, but it still remained an important element in the nuclear physics research program.

Worldwide, three higher-energy user facilities were built: one each in Canada, Switzerland, and the United States. The largest of these was the Los Alamos Meson Physics Facility, with an 800 MeV proton beam and a beam power approaching 1 MW; its user community consisted of nearly a thousand physicists. This facility became operational in 1972, producing intense secondary beams of neutrons, pions, muons, and neutrinos. The worldwide activity in this field produced an extensive body of data on the nucleon-nucleon and pion-nucleon interactions, mounted sensitive tests of the Standard Model, and was essential to the development of a relativistic nucleon-nucleus potential based on a mesonic description of the nucleon-nucleon interaction. This model provided a natural explanation for the strong nuclear spin-orbit force required to account for the observed nuclear shell structure; the origins of the spin-orbit force to that point in time had been obscure.

Even before the heavy-ion and medium-energy research cited above, experiments in the 1950s using beams of electrons at Stanford University, Saclay (France), and the Massachusetts Institute of Technology mapped out the distribution of the charge and magnetization in nuclei and, at Stanford, with the higher energies available, in the nucleon. In the 1960s and 1970s, these facilities and others also provided data on the momentum distribution of the nucleons in nuclei, probed deeply bound shell-model orbits, and investigated charged meson exchange currents in nuclei. Scattering processes involving the relatively weaker electromagnetic force were shown to be easier to treat theoretically. Thus, the desire for a dedicated, world-class electron accelerator emerged in the nuclear community.

At about the same time, a revolution was taking place in the paradigm characterizing strong interactions; it was driven by observations of highly inelastic scattering of high-energy electrons from nucleons. In these experiments the electron transfers a large fraction of its energy and momentum to the target nucleon. Surprisingly large cross sections were observed at the largest energy and momentum transfers, indicating that the electrons were scattering from point-like charged particles inside the nucleon. Further observations confirmed that these particles had a spin of 1/2 and electric charges that are only a fraction of the electron’s charge. These were the properties of the hypothetical quarks that had been proposed to explain the spectrum of the strongly interacting particles (hadrons).

The initial observations were made at the Stanford Linear Accelerator Center and then further elaborated at high-energy accelerators around the world. By the early 1970s, a theory of the strong interactions—quantum chromodynamics (QCD)—was rapidly being established as the underlying description of all strongly interacting particles. QCD described the properties and interactions of baryons and mesons in terms of the interactions of colored, fractionally charged, point-like particles called quarks. Quarks interact by the coupling of their color charges to eight massless, colored “gluons,” a subtle generalization of the electromagnetic interactions. Baryons are viewed as consisting of three constituent quarks, while mesons are formed from a constituent quark and antiquark.
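The quark description of hadrons can be checked against their electric charges: a baryon’s charge is the sum of its three quarks’ charges, and a meson’s is the quark’s charge plus the (opposite) charge of the antiquark. The sketch uses the standard fractional charges (+2/3 for u, c, and t; −1/3 for d, s, and b, in units of the proton charge). The flavor labels and the `'bar'` suffix used to mark antiquarks are conventions chosen for this example only, and color is not modeled.

```python
from fractions import Fraction

# Standard quark electric charges in units of the proton charge.
QUARK_CHARGE = {
    "u": Fraction(2, 3), "c": Fraction(2, 3), "t": Fraction(2, 3),
    "d": Fraction(-1, 3), "s": Fraction(-1, 3), "b": Fraction(-1, 3),
}


def hadron_charge(constituents: list[str]) -> Fraction:
    """Sum constituent charges; an antiquark is written with a 'bar' suffix, e.g. 'dbar'."""
    total = Fraction(0)
    for q in constituents:
        if q.endswith("bar"):
            total -= QUARK_CHARGE[q[: -len("bar")]]  # antiquark carries the opposite charge
        else:
            total += QUARK_CHARGE[q]
    return total


if __name__ == "__main__":
    examples = {
        "proton (uud)": ["u", "u", "d"],
        "neutron (udd)": ["u", "d", "d"],
        "pi+ (u dbar)": ["u", "dbar"],
        "pi- (d ubar)": ["d", "ubar"],
    }
    for name, quarks in examples.items():
        print(f"{name:15s} charge = {hadron_charge(quarks)}")
```

Exact rational arithmetic is used so that the fractional charges sum to integers without floating-point rounding.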

The development of the quark model and its evolution into the theory of strong interactions, QCD, had a large influence within the nuclear physics community. Even though it was soon recognized that QCD would be extremely difficult to implement on the scale of hadrons and even more so on the scale of nuclei, the emergence of a fundamental underlying theory changed the way that nuclear physicists thought about nuclei and changed the criteria for an “explanation” of nuclear phenomena. Ideally, one would like to be able to trace the properties of nuclei back to the fundamental structure of QCD. The selection of 4 GeV as the initial energy for the Thomas Jefferson National Accelerator Facility was clearly influenced by the desire to connect the hadronic and quark descriptions of hadrons and nuclei. The eventual design for the accelerator at the Jefferson Lab employed superconducting radio-frequency (RF) resonant cavities in a mode that produced polarized and unpolarized electron beams of unprecedented intensity, quality, and duty factor. The facility produced the first beam for research in 1995.

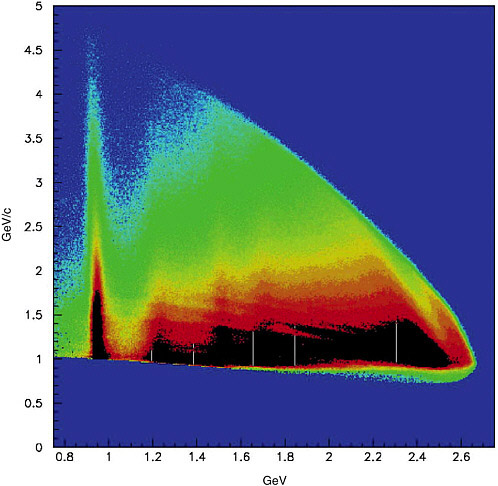

The Jefferson Lab now has some 900 users and has carried out more than 100 experiments. Among the research highlights have been the demonstration of a large difference in the distribution of the proton’s charge and magnetization, measurement of the strange-quark contribution to the nucleon’s charge and magnetization distribution, and direct evidence that the hadronic description of the nucleon-nucleon interaction works to shorter distances than expected. Figure 1.2 shows the energy and momentum of energetic electrons scattered from a hydrogen target.

FIGURE 1.2 The response of the proton as revealed by experiments using the CEBAF Large Acceptance Spectrometer detector at the Thomas Jefferson National Accelerator Facility. The experiments measured how the proton absorbs both energy (horizontal axis) and momentum (vertical axis) from an incident electron. The features in the plot reveal certain resonances that the proton is excited to, confirming that it behaves as a complex system of quarks and gluons. Courtesy of the Thomas Jefferson National Accelerator Facility.

The QCD paradigm changed the way that nuclear physicists think about nuclear matter produced at very high temperature or density. Confinement of quarks and gluons within hadrons is regarded as a (relatively) low-energy phenomenon. At extreme pressure, hadrons overlap, the distinction between individual hadrons disappears, and a “condensed matter” of QCD is expected to be formed. At high temperatures, the identities of individual hadrons are also lost, leading to the formation of a new state of matter with very high energy and entropy density in which quarks and gluons are the relevant degrees of freedom.

A large community of experimental and theoretical nuclear physicists has launched an ambitious program to explore this very dense, hot, strongly interacting form of matter, often referred to as the quark-gluon plasma (QGP). It is certain that in the early universe, some microseconds after the “big bang,” strongly interacting matter went through such a phase, consisting of quarks and gluons; as the universe expanded and cooled, these combined into protons and neutrons and subsequently into deuterons and alpha particles. Indeed, the relative abundances of these light nuclides produced in the early universe are part of the evidence supporting the big bang hypothesis.

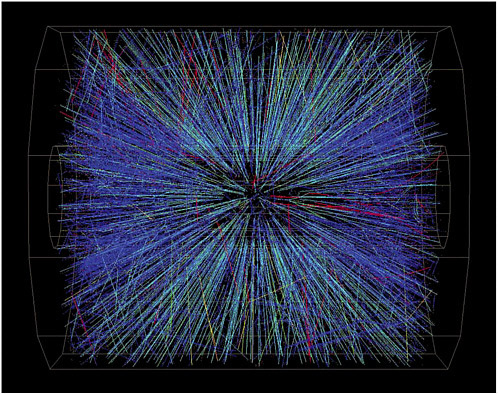

Conditions similar to those of the early universe can be re-created in the laboratory by colliding heavy nuclei at extremely high energies. Collisions between oppositely directed beams are much more efficient at reaching high energy than are collisions of one beam on a stationary target, because in a fixed-target collision much of the beam energy goes into the forward motion of the collision products rather than into the collision itself; oppositely directed beams of energetic nuclei are therefore typically required in studies of the QGP. Early experiments at the Brookhaven National Laboratory Alternating Gradient Synchrotron (AGS) were followed by higher-energy experiments at the European Organization for Nuclear Research (CERN). The results from the experiments at CERN provided tantalizing although not fully conclusive evidence for the formation of a new state of matter in such collisions. The U.S. quest in this area began in earnest in 2001 with the completion and operation of the Relativistic Heavy Ion Collider (RHIC) at the Brookhaven National Laboratory. In a head-on collision of two gold nuclei, each carrying 100 GeV per nucleon, over 10,000 particles may emerge. Figure 1.3 shows the particles emerging from such a collision. The total energy in such collisions, 40 TeV, is the highest energy achieved to date in any human-made particle collision.
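The efficiency argument for colliding beams, and the 40 TeV total quoted for gold-gold collisions, can be checked with standard relativistic kinematics. The Python sketch below is an illustration rather than a calculation taken from this report. It assumes that "100 GeV per nucleon" refers to total energy per nucleon, uses an approximate nucleon rest energy of 0.938 GeV, and ignores nuclear binding and Fermi motion; all function names are hypothetical.

```python
import math

# Approximate nucleon rest energy in GeV (assumption: binding and Fermi motion ignored).
NUCLEON_MASS_GEV = 0.938
GOLD_MASS_NUMBER = 197  # gold-197


def sqrt_s_collider(e_per_nucleon_gev: float) -> float:
    """Center-of-mass energy per nucleon pair for two equal, head-on beams.

    The two momenta cancel, so the full energy 2E is available: sqrt(s) = 2E.
    """
    return 2.0 * e_per_nucleon_gev


def sqrt_s_fixed_target(e_per_nucleon_gev: float, m: float = NUCLEON_MASS_GEV) -> float:
    """Center-of-mass energy per nucleon pair when a beam nucleon (total energy E) hits one at rest.

    For equal masses s = 2 m^2 + 2 E m, so sqrt(s) grows only like sqrt(E).
    """
    return math.sqrt(2.0 * m * m + 2.0 * e_per_nucleon_gev * m)


def fixed_target_energy_needed(sqrt_s_gev: float, m: float = NUCLEON_MASS_GEV) -> float:
    """Beam energy per nucleon a fixed-target experiment would need to reach a given sqrt(s)."""
    return (sqrt_s_gev ** 2 - 2.0 * m * m) / (2.0 * m)


def total_collision_energy_tev(e_per_nucleon_gev: float, mass_number: int = GOLD_MASS_NUMBER) -> float:
    """Summed energy (TeV) of two identical nuclei, each carrying e_per_nucleon_gev per nucleon."""
    return 2.0 * mass_number * e_per_nucleon_gev / 1000.0


if __name__ == "__main__":
    e = 100.0  # GeV per nucleon, the RHIC gold-beam energy quoted in the text
    print(f"collider sqrt(s_NN):      {sqrt_s_collider(e):.1f} GeV")
    print(f"fixed-target sqrt(s_NN):  {sqrt_s_fixed_target(e):.1f} GeV")
    print(f"fixed-target beam needed: {fixed_target_energy_needed(sqrt_s_collider(e)) / 1000:.1f} TeV per nucleon")
    print(f"total Au+Au energy:       {total_collision_energy_tev(e):.1f} TeV")
```

Under these assumptions the head-on collision makes about 200 GeV available per nucleon pair, whereas a 100 GeV per nucleon beam striking a stationary target yields only about 14 GeV; matching the collider with a fixed target would require a beam of roughly 21 TeV per nucleon. The summed energy of about 39 TeV is consistent with the rounded 40 TeV figure.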

How should this quark-gluon phase manifest itself if it is formed? The earliest conjectures were that it would be a plasma of locally free quarks and gluons whose interactions would be weak enough that its properties could be extracted relatively easily from experiment and calculated with some reliability from QCD. Results from the experiments at RHIC pointed in a different direction. A wealth of high-quality data on a variety of phenomena has been gathered and analyzed from collisions of different nuclei at various energies. The most recent experiments suggest that the material formed in the first instant of these collisions is best characterized as a strongly interacting quark-gluon liquid. Indeed, it has been termed a perfect liquid, because the hot, dense material flows with very little viscosity and the mean free path between collisions of its constituents is extremely short. This is quite different from earlier theoretical expectations; further study of this matter is expected to explain much about QCD and the dynamics of the very early universe.

FIGURE 1.3 An example of the outgoing particles from a collision of two gold nuclei at the Relativistic Heavy Ion Collider at the Brookhaven National Laboratory. Courtesy of the Brookhaven National Laboratory, Solenoidal Tracker at RHIC Collaboration.

The connection between nuclear reactions and astrophysics goes back to Bethe’s pioneering work on the energy source of stars. Later, careful measurements of the neutrinos produced by nuclear reactions in the Sun showed a flux lower than predicted; this “solar neutrino problem” helped establish neutrino physics as a new discipline of nuclear physics and astrophysics. The past few decades have seen an explosion in the quality and quantity of astrophysical data. Satellite- and ground-based telescopes operating over a wide range of photon energies have revealed much about the behavior of ordinary stars, white dwarfs, neutron stars, black holes, galaxies, dark matter, and dark energy. There is every reason to believe that this flow of data will continue and, indeed, increase.

Initially, stellar evolution through hydrogen and helium burning is driven by proton- and alpha-capture reaction sequences on stable nuclei. The subsequent late-evolution phases, from carbon burning to silicon burning, are characterized by more complex reaction processes triggered by heavy-ion fusion or photodisintegration near the line of stability. Many of the most interesting, powerful, and important stellar phenomena, such as the supernova explosions and gamma-ray bursts that occur at the end of a star’s life, continue to challenge understanding. These explosive phenomena are important because they create many of the chemical elements heavier than iron (Fe) and often lead to the formation of neutron stars or black holes. In these explosive events, an enormous flux of neutrons is created, and the neutrons are captured by nuclei on a timescale short compared with nuclear beta-decay lifetimes. This is known as the rapid neutron capture process, or r-process. The nuclei along the r-process path are therefore heavy and extremely neutron-rich. Little is known about such nuclei, and experimental data on their properties are essentially nonexistent.

In addition to the relevance of nuclear structure to astrophysics, there is widespread interest in the nuclear physics community in investigating the many phenomena encountered in nuclei with large neutron excesses and in nearly unbound systems. Until the 1990s it was not clear that a viable experimental program to investigate these issues could be created. However, a number of technical advances have made such a program possible and attractive: high-charge-state ion sources, superconducting acceleration structures, fast and efficient collection of radioactive ions, and large-acceptance detectors. Proposals for the construction of facilities incorporating these advances are now under consideration, with some already in development or operation. Significant advances have also made nuclear structure theory steadily more quantitative and reliable. Particularly notable among these are increases in available computing power and the accompanying formalisms and algorithms that exploit this increased capability. Building on these achievements, theoretical and experimental nuclear physicists, working in conjunction with astrophysicists, observational astronomers, and large-scale modelers, have the opportunity to greatly advance the understanding of stellar processes that map out a significant and critical portion of the history of the universe.

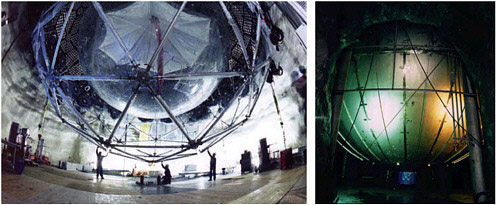

Over the period covered in this brief history of nuclear physics, many important discoveries were made without the use of any accelerator at all. Far and away the most significant has been the study of neutrinos from the Sun. This research, originally suggested by the Italian physicist Bruno Pontecorvo and undertaken in the United States by Raymond Davis, Jr., was viewed as a unique way to investigate the nuclear processes that occur at the center of the Sun and hence are responsible for its energy generation. Because neutrinos interact so weakly, they readily escape from a stellar interior, allowing direct observation of the nuclear processes there. Early on in this research, Davis noted that fewer neutrinos than expected had been detected. Subsequent research in Japan and Canada (see Figure 1.4) has confirmed this deficit, showing that it is due to neutrino oscillations and that the characterization of the nuclear reactions driving the Sun is correct. The study of neutrino oscillations has since become an important element of nuclear and particle physics, with active worldwide participation. Ray Davis shared the 2002 Nobel Prize in physics with Masatoshi Koshiba for their work on neutrinos. Davis was recognized for his observation of solar neutrinos; his work to confirm Bethe’s theory of solar-energy generation proved to be an unexpected window on a new area of fundamental physics.

FIGURE 1.4 Photographic view from underneath the Sudbury Neutrino Observatory experiment in Canada showing the large acrylic vessel and its phototubes; image courtesy of the Sudbury Neutrino Observatory. Photographic view of the Kamioka Liquid-scintillator Anti-Neutrino Detector (KamLAND) experiment in Japan; image courtesy of Hamamatsu Photonics K.K.

Nuclear physics has also played a leading role in discoveries of fundamental symmetry violations. When the idea was first proposed that parity (symmetry under spatial inversion) could be violated in weak interactions, few people took it seriously until the dramatic observation of this effect in the beta decay of spin-polarized ⁶⁰Co by C.S. Wu, E. Ambler, R.W. Hayward, D.D. Hoppes, and R.P. Hudson. This discovery launched the experimental field of fundamental symmetry tests, leading to the eventual discovery of time-reversal symmetry violation and to a series of ever-more-precise tests of several symmetries whose violations have not yet been detected.

A variety of other measurements carried out by nuclear and particle physicists have set strong limits on processes that would require new physics beyond the Standard Model of electroweak interactions. Examples include the limits on electric dipole moments, on the existence of second-class currents, and on lepton-flavor-changing decays of the muon. These measurements have also provided positive evidence for such particle physics landmarks as the conserved vector current, the unitarity of the Cabibbo-Kobayashi-Maskawa matrix that describes the mixing of quark flavors in weak interactions, and parity conservation by the strong interactions.

The past decade has witnessed significant developments in experimental studies of nuclei and nuclear astrophysics, driven largely by qualitative advances in technology. These include high-resolution particle separators; large arrays of gamma-ray or particle detectors; a variety of traps, storage rings, and laser spectroscopy techniques; and especially first- and second-generation facilities for the production and use of nuclei far from stability. These technical developments have boosted experimental sensitivities by many orders of magnitude and have led to results that challenge long-held beliefs on many topics. Examples include the robustness of shell structure (e.g., magic numbers), nuclear geometries and density regimes in weakly bound systems (e.g., in halo nuclei), and evidence for new collective modes and many-body symmetries. These developments have also enabled the creation of new superheavy nuclei. In nuclear astrophysics, experimental results from radioactive-beam facilities have improved knowledge of the ignition conditions for novae and x-ray bursts. These experiments have also explored the reaction paths and process timescales of far-from-stability reactions in explosive nucleosynthesis scenarios such as the r-process and the rapid proton capture process (rp-process). These first results also showed that the theoretical basis of existing nucleosynthesis simulations for such processes is far from satisfactory and that the predictive power of these simulations is therefore limited. Improvements in computational capabilities permit new theoretical approaches, giving rise to more realistic calculations for nearly all nuclei.

Thus, nuclear physics has expanded its scope considerably beyond its origins in nuclear structure and radioactivity (see Figure 1.5). It now investigates the properties of strongly interacting matter at a deeper level and contributes to knowledge of objects as diverse as the Sun and neutrinos. On the applied side, nuclear physics plays a significant role in energy, defense, and medicine, and its instruments are spread throughout modern technology. Nuclear physics is now deeply involved in many areas at the frontiers of human knowledge and development.

Looking into the future from today’s perspective, there appear to be several clear avenues for world-class research in nuclear physics. One direction probes the consequences of QCD for hot and cold strongly interacting matter at length scales ranging from the subhadronic level to that of neutron stars. Another uses electro-

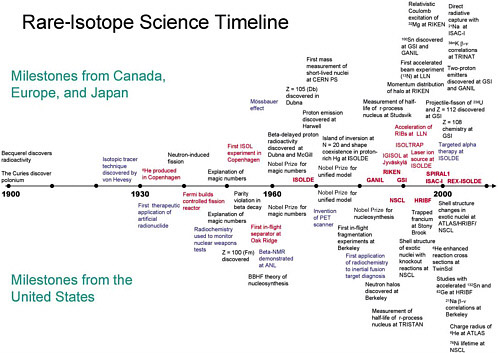

FIGURE 1.5 A chronicle of some of the major events (by no means all-inclusive!) in the history of rare-isotope science (RIS). Scientific milestones in the studies of nuclei, nuclear astrophysics, and physics of fundamental interactions appear in black; technological advances and facilities appear in red; and applications are shown in blue. In order to illustrate the worldwide context, the upper portion displays the milest ones from Canada, Europe, and Japan, while the U.S. milestones are shown below the timeline axis. This display of many leading examples of RIS in a single graph allows one to view couplings between basic science, technology, and applications as well as the steady increase in the activity in RIS and the high degree of competitiveness in the field. The only dedicated radioactive ion beam facilities in the United States are the National Superconducting Cyclotron Laboratory (NSCL) at Michigan State University (1989; in-flight separation) and the Holifield Radioactive Ion Beam Facility (HRIBF) at the Oak Ridge National Laboratory (first Isotope Separator On-Line [ISOL] beam in 1997). The figure is based on input solicited from a number of leading scientists representing the worldwide RIS effort.

NOTE: ANL—Argonne National Laboratory; ATLAS—Argonne Tandem Linear Accelerator System; BBHF—Burbidge Burbidge Hoyle Fowler, referring to a team of scientists who wrote a landmark paper on nucleosynthesis; CERN—European Organization for Nuclear Research; GANIL—Grand Accélérateur National d’Ions Lourds (Great Heavy-Ions National Accelerator); GSI—Gesellschaft für Schwerionenforschung mbH; HRIBF—Holifield Radioactive Ion Beam Facility; IGISOL—Ion Guide Isotope Separator On-Line; ISAC—Isotope Separator and Accelerator; ISOL—Isotope Separator On-Line; ISOLDE—On-Line Isotope Mass Separator, a facility at CERN; ISOLTRAP—Tandem Penning trap mass spectrometer at ISOLDE; LLN— Laboratoire Louis Néel; NMR—nuclear magnetic resonance; NSCL—National Superconducting Cyclotron Laboratory; PET—positron emission tomography; PS—proton synchrotron; REX-ISOLDE—Radioactive Beam Experiment at ISOLDE; RIBs—rare-isotope beams; SPIRAL—Système de Production d’Ions Radioactifs en Ligne; TRINAT—TRIUMF Neutral Atom Trap; TRISTAN—Terrific Reactor Isotope Separator to Analyze Nuclides; TRIUMF—Tri-University Meson Facility.

magnetic and weak processes to probe more delicately inside hadrons and nuclei to see how quarks and gluons give rise to nuclear phenomena and to test the Standard Model of particle physics. Many of these tests of the Standard Model will employ nonaccelerator sources ranging from astronomical objects to radioactive nuclei. A third direction, the one central to the concept of a rare-isotope facility, seeks to investigate nuclear structure at the extreme limits of particle stability, which is crucial for investigating new nuclear phenomena and for achieving a better understanding of the evolution of stars and the creation of the chemical elements.

TECHNOLOGICAL CONTEXT

Frequently there is a synergy between a new scientific direction and recent technological developments that enable groundbreaking research. Rare-isotope science is in a position to exploit recent technical developments that promise much more intense, high-quality beams of short-lived isotopes.4 However, even with the promised increase of many orders of magnitude, the intensities of a next-generation FRIB will still be low compared with what is traditionally available at a stable-beam nuclear physics facility. Fortunately, there has also been significant progress in the development of new and more efficient detector systems, which, combined with the new accelerator developments, significantly expand the reach of new experiments.

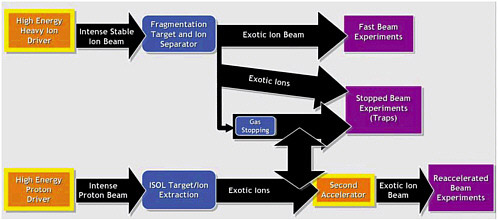

The experimental study of exotic nuclei involves three separate stages: production of the rare isotopes, their preparation for research, and observation of the final products with end-station instrumentation. Broadly speaking, there are two basic approaches to producing radioactive beams for nuclear physics experiments: the in-flight technique and reacceleration. The techniques are complementary, and each has an important role in the study of exotic nuclei. Figure 1.6 shows the various stages of production, preparation, and experimental utilization of exotic nuclei.

In the in-flight technique, a production target is bombarded with a beam of heavy stable nuclei. On interacting in the production target, an incident nucleus is fragmented into a variety of lighter exotic nuclei that travel at approximately the velocity of the incident beam. These exotic nuclei are then directed onto the experimental target. This preparation technique is fast (less than 10⁻⁶ s), direct, and independent of chemistry. Beams prepared in this way have rather high energies (typically 50 to 500 MeV per nucleon), so they can be used on thick secondary targets, giving the highest yields for the most exotic nuclei farthest from stability. These in-flight beams can also be injected into devices called storage rings, where they circulate continuously for mass measurements or pass repeatedly through a thin target to enhance reaction yields. It is, however, very difficult to produce high-quality lower-energy beams by slowing down the fragments of the initial beam; thus, fragmentation is not suitable for many classes of experiments.

FIGURE 1.6 The different techniques for creating and utilizing beams of rare isotopes. The purple boxes represent the final stage, in which the nuclei are ready for use in experiments.
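The speed of the in-flight method follows from the fragment velocities alone. The sketch below converts kinetic energy per nucleon into velocity with the relativistic relation γ = 1 + T/(m_u c²), approximating the rest energy per nucleon by the atomic mass unit, and then computes the transit time over a flight path. The 50 m path length is a hypothetical round number chosen for illustration, not the length of any particular separator.

```python
import math

ATOMIC_MASS_UNIT_MEV = 931.494  # rest energy per nucleon, approximated by the atomic mass unit
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def beta_from_kinetic_energy(t_mev_per_nucleon: float) -> float:
    """Velocity as a fraction of c for a nucleus with kinetic energy T per nucleon."""
    gamma = 1.0 + t_mev_per_nucleon / ATOMIC_MASS_UNIT_MEV
    return math.sqrt(1.0 - 1.0 / gamma ** 2)


def flight_time_s(t_mev_per_nucleon: float, path_length_m: float) -> float:
    """Time in seconds to traverse path_length_m at the speed corresponding to T per nucleon."""
    return path_length_m / (beta_from_kinetic_energy(t_mev_per_nucleon) * SPEED_OF_LIGHT_M_PER_S)


if __name__ == "__main__":
    HYPOTHETICAL_PATH_M = 50.0  # illustrative separator length (assumption)
    for t in (50.0, 500.0):  # the energy range quoted in the text, MeV per nucleon
        beta = beta_from_kinetic_energy(t)
        tof_us = flight_time_s(t, HYPOTHETICAL_PATH_M) * 1e6
        print(f"{t:5.0f} MeV/u: beta = {beta:.3f}, flight time over 50 m = {tof_us:.2f} us")
```

With these assumptions the fragments travel at roughly 30 to 75 percent of the speed of light and cross the 50 m path in a few tenths of a microsecond, consistent with the sub-microsecond preparation time cited for the in-flight technique.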

The second approach, reacceleration, takes the exotic nuclei formed in the production target and prepares a beam by bringing them to rest and then injecting them into a second accelerator. This method produces high-quality reaccelerated beams at the lower energies traditionally used for nuclear structure and nuclear astrophysics experiments, so that well-tested and well-understood experimental techniques can be exploited to study the beams’ interactions with the target. There are two versions of this method of exotic-beam preparation: the “gas catcher” technique and the Isotope Separator On-Line (ISOL) technique.

The gas catcher approach uses the same fragmentation process as the in-flight method, but in this case the exotic nuclei produced in the target are slowed in an absorber and then stopped in a gas catcher (typically filled with He gas). The fragments remain ionized because the electrons in He atoms are very tightly bound: since no element has a higher first ionization energy than helium, a singly charged ion cannot be neutralized by capturing an electron from a He atom. These ions are then fed into the second accelerator. This technique is also chemistry-independent, works for essentially all elements, and is fast. Its applicability to the most intense beams of exotic nuclei is still under investigation.

In the ISOL technique, a beam of light projectile nuclei bombards a thick target of a heavy element. The exotic nuclei are produced by a process called spallation, in which the target nucleus is broken into pieces, many of which are exotic. These exotic nuclei stop in the hot, thick target and diffuse out of it into an ion source, where they are prepared for injection into the second accelerator and reaccelerated. This technique can often produce the highest intensities of certain isotopes and has a long history of technological development. However, the extraction process depends on the atomic chemistry and surface properties of the target; it is generally not useful for refractory elements (those with low vapor pressure even at high temperatures), and it is often too slow for short-lived isotopes to survive. Typically, considerable research and development is required to establish a useful beam of a new element for the first time.
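Why slow extraction rules out short-lived isotopes can be made concrete with the exponential decay law: a nucleus with half-life T½ that spends a time t between production and use survives with probability 2^(−t/T½). The sketch below compares a roughly 1 µs in-flight delay with two ISOL release delays for a hypothetical isotope with a 10 ms half-life. The delay values and the half-life are illustrative assumptions, and treating ISOL release as a single fixed delay is a simplification: real release from a thick target is spread out in time by diffusion and effusion, so the numbers indicate only the trend.

```python
import math


def surviving_fraction(delay_s: float, half_life_s: float) -> float:
    """Fraction of nuclei left after delay_s, from N/N0 = exp(-ln 2 * t / T_half)."""
    return math.exp(-math.log(2.0) * delay_s / half_life_s)


if __name__ == "__main__":
    HALF_LIFE_S = 0.010  # hypothetical short-lived isotope, 10 ms
    # Illustrative delays (assumptions): ~1 us in flight, 0.1 s and 1 s for ISOL release.
    delays = {"in-flight (1 us)": 1e-6, "ISOL (0.1 s)": 0.1, "ISOL (1 s)": 1.0}
    for label, delay in delays.items():
        print(f"{label:18s} surviving fraction = {surviving_fraction(delay, HALF_LIFE_S):.6g}")
```

For this hypothetical isotope, essentially all nuclei survive the in-flight delay, about one in a thousand survives a 0.1 s release, and only about one in 10³⁰ survives a 1 s release, which is why the in-flight and gas-catcher methods are favored for the shortest-lived species.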

In all three techniques, the exotic nuclei can be stopped to study their radioactive decay or injected into traps for fundamental studies or measurements of their properties such as their mass or charge radius.

Significant technical advances have been made in the development of superconducting radio-frequency linear accelerators. Improvements in cavity design and material preparation have led to higher field gradients and hence more efficient acceleration. Independent tuning and phasing of the individual RF modules allow ions to be accelerated over a wide range of velocities and charge-to-mass ratios. Continuing ion source development has led to the production of large quantities of highly charged heavy ions, ideal for energetic heavy-ion drivers. All of this technology is also applicable to the reacceleration phase of an exotic-beam facility, in which collection efficiency and beam quality are more important than high energy or beam power. Appropriate proton drivers have been available for some time, and the ISOL technique is now well developed.

An equally essential development for the study of exotic nuclei is progress in experimental instrumentation, which now allows measurements with beams as weak as a few hundred particles per second or, in special cases, as low as 1 particle per day. Traditionally, nuclear structure and astrophysics experiments have been carried out with beams of about 10⁸ to 10¹³ particles per second.

Thus, it appears that the technological advances are now available to allow the construction of rare-isotope facilities of enhanced capability that would permit the execution of experiments unimaginable a decade ago.