6

Generating and Applying Knowledge in Real Time

In 2008, Ann Morrison received two all-metal hip replacements at the age of 50. Soon after the procedure, she experienced intense rashes, pain, and inflammation at the sites of her surgery. The injurious devices were replaced in 2010, just 2 years after she received her initial hip replacements; hip replacements typically last 15 years or more. Today, as a result of extensive tissue damage caused by metal debris shed from the original replacements, Ann requires a brace to walk, and she still has not been able to return to her work as a physical therapist. With the proper digital infrastructure (electronic health records, the use of clinical data to compare the effectiveness and efficiency of different interventions, and registries to track side effects and safety), Ann’s experience could have been avoided. As it is, the U.S. health care system lacks the data, monitoring, and analysis capabilities necessary to effectively evaluate, disseminate, and implement the ever-increasing amount of health information and technologies (Meier and Roberts, 2011).

Although an unprecedented amount of information is available in journals, guidelines, and other sources, patients and clinicians often lack access to information they can feel confident is relevant, timely, and useful for the circumstances at hand. Moreover, the current system for disseminating knowledge is strained by the quantity of information now available, which means that new evidence often is not applied to care.

After explaining the need for a new approach to generating clinical and biomedical knowledge, this chapter describes emerging capacities, methods, and approaches that hold promise for helping to meet this need. It then examines what is necessary to create the data utility that will be essential to a continuously learning and improving health care system. Next, the critical issue of building a learning bridge from knowledge to practice is explored. This is followed by a discussion of the crucial role of people, patients, and consumers as active stakeholders in the learning enterprise. The chapter concludes with recommendations for achieving the vision of a health care system that generates and applies knowledge in real time.

NEED FOR A NEW APPROACH TO KNOWLEDGE GENERATION

The current approach to generating new medical knowledge falls short in delivering the evidence needed to support the delivery of quality care. The evidence base is inadequate, and methods for generating medical knowledge have notable limitations.

Inadequacy of the Evidence Base

Clinical and biomedical research emerges at a remarkable rate, with almost 2,100 scientific publications, 75 clinical trials, and 11 systematic reviews being produced every day (Bastian et al., 2010).1 Although clinicians need not review every study to provide high-quality care, the ever-increasing volume of evidence makes it difficult to maintain a working knowledge of new clinical information.

Even so, the availability of high-quality evidence is not keeping pace with the ever-increasing demand for clinical information that can help guide decisions on different diagnostics, interventions and therapies, and care delivery approaches (see Box 6-1 for an example of this information paradox). Indeed, the gap between the evidence possible and the evidence produced continues to grow, and studies indicate that the share of guideline statements backed by strong evidence falls short of what should be expected. In some cases, 40 to 50 percent of the recommendations made in guidelines are based on expert opinion, case studies, or standards of care rather than on multiple clinical trials or meta-analyses (Chauhan et al., 2006; IOM, 2008, 2011b; Tricoci et al., 2009). A study of the strength of the current recommendations of the Infectious Diseases Society of America, for example, found that only 14 percent were based on more than one randomized controlled trial, and more than half were based on expert opinion alone (Lee and Vielemeyer, 2011). Another study, examining the joint cardiovascular clinical practice guidelines of the American College of Cardiology and the American Heart Association, found that the current guidelines were based largely on lower levels of evidence or expert opinion (Tricoci et al., 2009).

___________________________________________________

1The number of journal publications was determined from searches on PubMed for 2010 (National Library of Medicine: http://www.ncbi.nlm.nih.gov/pubmed/) using the methodology described in Chapter 2.

BOX 6-1

The Information Paradox

The treatment of breast cancer is one example of the information paradox in clinical medicine. Relative to years past, a vast array of information about breast cancer is available. Five decades ago, breast cancer was detected by physical examination, no biopsy was performed, and mastectomy was the recommended treatment for all detected breast cancers (Harrison, 1962). Today, multiple imaging technologies exist for the detection and diagnosis of the disease, including standard x-ray mammography, computed tomography (CT), ultrasound, positron emission tomography (PET), and magnetic resonance imaging (MRI) (IOM, 2001b, 2005). Similarly, traditional biopsies required surgical excision of the area of interest, whereas newer, less invasive methods, such as fine needle aspiration biopsy and core needle biopsy, can be performed under imaging guidance (Bevers et al., 2009). Once diagnosed, the cancer can be further characterized by its genetic features (such as BRCA1, BRCA2, HER-2, and now multigene tests), in addition to its estrogen and progesterone receptor status. Treatments have developed at a similarly fast pace, with a number of surgical, radiological, chemotherapy, and endocrine therapies now available, along with targeted therapies such as monoclonal antibodies (Kasper and Harrison, 2005; National Comprehensive Cancer Network, 2012). Yet while progress in breast cancer diagnosis and treatment has been swift, the comparative efficacy and safety of these diagnostic technologies and treatments have not been adequately evaluated, so many of these innovations are administered without an adequate evidence base. Likewise, the efficacy of many treatments or the accuracy of many diagnostic technologies is unknown for a given patient with a given condition (IOM, 2008). The results include widespread variation in patient care, confusion among patients and providers about the best methods for treating a specific disease or condition, and waste due to delivering services that are ineffective or even harmful for the patient.

The inadequacy of the evidence base for clinical guidelines carries over to the care that is delivered. Estimates vary on the proportion of clinical decisions in the United States that are adequately informed by formal evidence gained from clinical research, with some studies suggesting a figure of just 10 to 20 percent (Darst et al., 2010; IOM, 1985). These results suggest substantial opportunities to ensure that the knowledge generated by the clinical research enterprise meets the demands of evidence-based care.

Even after identifying relevant information for a given condition, clinicians still must ensure that the information is of high quality: that the risk of contradiction by later studies is minimal, that the information is uncolored by bias or conflicts of interest, and that it applies to a particular patient’s clinical circumstances. Several recent publications have observed a substantial rate of medical reversals, with one recent paper finding that 13 percent of articles about medical practice in a high-profile journal contradicted the evidence for existing practices (Ioannidis, 2005b; Prasad et al., 2011). Another concern is managing conflicts of interest, which can arise in the research, education, and practice domains. As noted in the 2009 Institute of Medicine (IOM) report Conflict of Interest in Medical Research, Education, and Practice, patients can benefit when clinicians and researchers collaborate with the life science industry to develop new products, yet there are concerns that financial ties could unduly influence professional judgments. These tensions must be balanced to ensure that conflicts of interest do not undermine the integrity of the scientific research process, the objectivity of health professionals’ training and education, or the public’s trust in health care. Approaches exist for managing conflicts of interest, especially financial relationships, without stifling important collaborations and innovations (IOM, 2009b).

Concerns exist as well about whether the current evidence base applies to the circumstances of particular patients. A study of clinical practice guidelines for nine of the most common chronic conditions, for example, found that fewer than half included guidance for the treatment of older patients with multiple comorbid conditions (Boyd et al., 2005). For patients and their health care providers, this lack of knowledge limits the ability to choose the most effective treatment for a condition. Furthermore, health care payers may not have the evidence they need to make coverage decisions for the patients enrolled in their plans. One analysis of Medicare payment policies for cardiovascular devices, for example, found that participants in the trials that provided evidence for coverage decisions differed from the Medicare population. Participants in the trials often were younger and healthier and had a different prevalence of comorbid conditions (Dhruva and Redberg, 2008).

Further, without greater capacity, the challenges to evidence production will only continue to grow. This is particularly true given the projected proliferation of new medical technologies; the increased complexity of managing chronic diseases; and the growing use of genomics, proteomics, and other biological information to personalize treatments and diagnostics (Califf, 2004). As noted in Chapter 2, in one 3-year period, genome-wide scans were able to identify more than 100 genetic variants associated with nearly 40 diseases and traits; this growth in genetic understanding led to the availability in 2008 of more than 1,200 genetic tests for clinical conditions (Genetics and Public Policy Center, 2008; Manolio, 2010; Pearson and Manolio, 2008).

Even as clinical research strains to keep pace with the rapid evolution of medical interventions and care delivery methods, improving and increasing the supply of knowledge with which to answer health care questions is a core aim of a learning health care system. The current research knowledge base provides limited support for answering important types of clinical questions, including those related to comparative effectiveness and long-term patient outcomes (British Medical Journal, 2011; Gill et al., 1996; IOM, 1985; Lee et al., 2005a; Tunis et al., 2003). This lack of knowledge is demonstrated by the fact that many technologies are not adequately evaluated before they see widespread clinical use. For example, cardiac computed tomography angiography (CTA) has been adopted widely throughout the medical community despite limited data on its effectiveness compared with alternative interventions, the risks of its use, and its substantial cost (Redberg, 2007). New opportunities in technology and research design can mitigate these limitations and offer a dynamic view of evidence and outcomes; leveraging these opportunities can bridge the gap between research and practice to accelerate the use of research in routine care.

Limitations of Current Methods

At present, support for clinical research often focuses on the randomized controlled trial as the gold standard for testing the effectiveness of diagnostics and therapeutics. The randomized controlled trial has gained this status because of its ability to control for many confounding factors and to provide direct evidence on the efficacy of different treatments, interventions, and care delivery methods (Hennekens et al., 1987). Yet, while the randomized controlled trial has a highly successful track record in generating new clinical knowledge, it has, like most research methods available today, several limitations: such trials are not practical or feasible in all situations, are expensive and time-consuming, and can answer only the questions they were designed to address.

A study of head-to-head randomized controlled trials for comparative effectiveness research purposes found that their costs ranged from $400,000 to $125 million, with larger studies averaging $15-$20 million (Holve and Pittman, 2009, 2011). Randomized controlled trials also are slow to answer the research questions they set out to address. Half of all trials are delayed, 80 to 90 percent of these because of a shortage of willing trial participants (Grove, 2011). As currently designed and operated, moreover, randomized controlled trials do not address all clinically relevant populations, which may limit a trial’s generalizability to regular clinical practice and many patient populations (Frangakis, 2009; Greenhouse et al., 2008; Stewart et al., 2007; Weisberg et al., 2009). At a time when many patients have multiple chronic conditions (Alecxih et al., 2010; Tinetti and Studenski, 2011), for example, patients with comorbidities are routinely excluded from most randomized controlled trials (Dhruva and Redberg, 2008; Van Spall et al., 2007). In addition, many current trials collect data only for a limited period of time, so they may not capture long-term effects or low-probability side effects; nor do they necessarily reflect the practice conditions of many health care providers.

Other research methods have limitations as well. For instance, the strength of observational studies is that they capture health practices in real-world situations, which makes their results easier to generalize to a broad range of medical practices. This research design can provide data throughout a product’s life cycle and can take advantage of natural experiments arising from variations in care. However, it is difficult for observational studies to minimize bias and to ensure that their results are due to the intervention under consideration. For this reason, as demonstrated by the use of hormone replacement therapy (see Box 6-2) and vitamin E to reduce the risk of coronary heart disease, results of observational studies do not always accord with those of randomized controlled trials (Lee et al., 2005b; Rossouw et al., 2002), although some studies have shown concordance between the results derived from the two methods (Concato et al., 2000).

BOX 6-2

Considerations for Producing Evidence: The Story of Hormone Replacement Therapy Trials

Research on the impact of hormone replacement therapy on coronary heart disease provides a cautionary note for less traditional research methods (Manson, 2010). Initial observational studies of women taking hormone replacement therapy suggested a reduction in the risk of heart disease in the range of 30 to 50 percent (Grady et al., 1992; Grodstein et al., 2000). However, later randomized trials, especially the Women’s Health Initiative, found no effect or even an elevated risk (Ioannidis, 2005a; Manson et al., 2003). Several factors may have led to these divergent results, including traditional confounding, the limited ability of the observational studies to assess short-term or acute outcomes, and the predominance of follow-up data from long-term hormone therapy users. This example demonstrates that observational studies need to capture both short- and long-term outcomes (Grodstein et al., 2003). In addition, these types of studies need to consider differential effects in clinically relevant subgroups; in this case, hormone therapy may have different effects depending on whether it is started before or after the onset of menopause (Grodstein et al., 2006; IOM, 2008). The experience of hormone replacement therapy research highlights several areas for improvement in observational research design.

TABLE 6-1 Examples of Research Methods and Questions Addressed by Each

| Research Design | Questions Addressed |
|---|---|
| Traditional randomized controlled trial | Efficacy, therapeutic efficacy |
| Active comparator randomized controlled trials, matched-pair studies | Comparative effectiveness |
| Surveillance studies | Safety, side effects, indications |
| Cohort studies, retrospective audit studies, prospective case series | Effectiveness (generalizability to regular clinical practice and larger patient populations) |

SOURCE: Data derived from Walach et al., 2006.

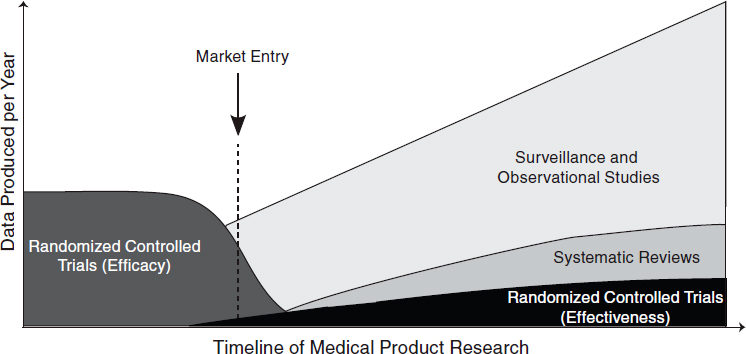

The challenge, therefore, is not determining which research method is the best for a particular condition but rather which provides the information most appropriate to a particular clinical need. Table 6-1 summarizes different research designs and the questions most appropriately addressed by each. In the case of examining biomedical treatments and diagnostic technologies, different types of studies will be more appropriate for different stages of a product’s life cycle. Early studies will need to focus on safety and efficacy, which will require randomized controlled trials, while later studies will need to focus on comparative effectiveness and surveillance of unexpected effects, requiring a mix of observational studies and randomized controlled trials. (See Figure 6-1 for a depiction of the change in appropriate research methods over time.) As this report was being written, the methodology committee of the Patient-Centered Outcomes Research Institute (PCORI) had developed a translation table to aid in determining the research methods most appropriate for addressing certain comparative clinical effectiveness research questions (PCORI, 2012). Each study must be tailored to provide useful, practical, and reliable results for the condition at hand.

Conclusion 6-1: Despite the accelerating pace of scientific discovery, the current clinical research enterprise does not sufficiently address pressing clinical questions. The result is decisions by both patients and clinicians that are inadequately informed by evidence.

Related findings:

- Clinical and biomedical research studies are being produced at an increasing rate. As noted in the findings supporting Conclusion 2-1, approximately 75 clinical trials and 11 systematic reviews are published each day (see Chapter 2).

- The evidence basis for clinical guidelines and recommendations needs to be strengthened. In some cases, 40 to 50 percent of the recommendations made in guidelines are based on expert opinion, case studies, or standards of care rather than on multiple clinical trials or meta-analyses.

- Even at the current pace of production, the knowledge base provides limited support for answering many of the most important types of clinical questions. A study of clinical practice guidelines for nine of the most common chronic conditions found that fewer than half included guidance for the treatment of older patients with multiple comorbid conditions.

- New methods are needed to address current limitations in clinical research. Head-to-head randomized controlled trials for comparative effectiveness research average $15-$20 million for larger studies, and some cost much more, yet such studies often do not reflect the practice conditions of many health care providers.

FIGURE 6-1 Different types of research are needed at different stages of a medical product’s life cycle. Early trials will need to focus on therapeutic efficacy, while later research will need to focus on comparative effectiveness and surveillance.

SOURCE: Adapted from IOM, 2010a.

EMERGING CAPACITIES, METHODS, AND APPROACHES

As discussed above, there is a clear need for new approaches to knowledge generation, management, and application to guide clinical care, quality improvement, and delivery system organization. The current clinical research enterprise requires substantial resources and takes significant time to address individual research questions. Moreover, the results provided by these studies do not always generate the information needed by patients and their clinicians and may not always be generalizable to a larger population. New research methods are needed that address these serious limitations. Developments in information technology and research infrastructure have the potential to expand the ability of the research system to meet this need. For example, the anticipated growth in the adoption of digital records presents an unprecedented opportunity to expand the supply of data available for learning, generating insights from the regular delivery of care (see the discussion of the data utility in the next section for further detail on these opportunities). These new developments can increase the output derived from the substantial clinical research investments of agencies and foundations, including the Agency for Healthcare Research and Quality (AHRQ), the National Institutes of Health (NIH), and PCORI.

New tools are extending research methods and overcoming many of the limitations highlighted in the previous section (IOM, 2010a). The scientific community has recognized the need for change. High-profile efforts, including NIH’s Clinical and Translational Science Awards and the U.S. Food and Drug Administration’s Clinical Trials Transformation Initiative, have been undertaken to improve the quality, efficiency, and applicability of clinical trials, and new translational research paradigms have been developed (Lauer and Skarlatos, 2010; Luce et al., 2009; Woolf, 2008; Zerhouni, 2005). Building on these efforts and the work of academic research leaders, researchers have developed new experimental designs, including pragmatic clinical trials, delayed design trials, and cluster randomized controlled trials2 (Campbell et al., 2007; Eldridge et al., 2008; Tunis et al., 2003, 2010). Other new methods have been devised to develop knowledge from data produced during the regular course of care, and initial results derived with these methods have shown promise (see Box 6-3 for a description of one new method). Advanced statistical methods, including Bayesian analysis, allow for adaptive research designs that can learn as a study advances, making studies more flexible (Chow and Chang, 2008). Taken together, these new methods are designed to reduce the expense and effort of conducting research, improve the applicability of results to clinical decisions, improve the ability to identify smaller effects, and extend research to situations in which traditional methods cannot be used.

___________________________________________________

2In pragmatic clinical trials, the questions faced by decision makers dictate the study design (Tunis et al., 2003b). In delayed design trials, participants are randomized to either receive the intervention or have it withheld for a period of time, with both groups receiving the intervention by the end of the study (Tunis et al., 2010). In cluster randomized controlled trials, groups of subjects, rather than individual subjects, are randomized (Campbell et al., 2007).

BOX 6-3

New Methods for Randomized Clinical Trials: Point-of-Care Clinical Trial

One new method for conducting experimental research is the point-of-care clinical trial. These trials currently are being conducted at the Boston Veterans Affairs Health Care System, with similar trials being proposed or conducted at other locations (Vickers and Scardino, 2009). The method uses an electronic health record system to conduct randomized controlled trials by automatically flagging patients who face a choice between competing treatments. If patients do not express a preference, they are asked whether they would be willing to participate in a trial and, if so, are randomly assigned to one of the treatment protocols. The electronic health record system records outcome data and automatically calculates the effectiveness of the treatment protocols. Disadvantages of such trials are that they do not allow for a placebo control group and can be used only for treatments that are already approved for standard care. This type of trial has begun to be used to compare competing methods of insulin administration (a sliding scale versus a weight-based regimen) for blood sugar control (Fiore et al., 2011).
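The workflow of the point-of-care trial described in Box 6-3 (flag an eligible patient, check for a preference and consent, randomize, record outcomes, compare arms) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the VA system’s implementation: the class and method names (`PointOfCareTrial`, `flag`, `record_outcome`, `success_rates`), the arm labels, and the binary success outcome are all hypothetical, and simple unrestricted randomization stands in for whatever allocation scheme an actual trial would use.

```python
import random
from dataclasses import dataclass, field
from typing import Dict, Optional

# Hypothetical identifiers for the two approved regimens being compared.
ARMS = ("sliding_scale", "weight_based")


@dataclass
class PointOfCareTrial:
    seed: int = 0
    assignments: Dict[str, str] = field(default_factory=dict)  # patient -> arm
    outcomes: Dict[str, int] = field(default_factory=dict)     # patient -> 1/0

    def __post_init__(self) -> None:
        # Seeded generator so the allocation sequence is reproducible.
        self._rng = random.Random(self.seed)

    def flag(self, patient_id: str, has_preference: bool,
             consents: bool) -> Optional[str]:
        """Called when the EHR detects a choice between the competing
        regimens. Returns the assigned arm, or None if not enrolled."""
        if has_preference or not consents:
            return None  # patient receives usual care outside the trial
        arm = self._rng.choice(ARMS)
        self.assignments[patient_id] = arm
        return arm

    def record_outcome(self, patient_id: str, success: bool) -> None:
        # Only enrolled patients contribute outcome data.
        if patient_id in self.assignments:
            self.outcomes[patient_id] = int(success)

    def success_rates(self) -> Dict[str, Optional[float]]:
        """Proportion of successful outcomes per arm (None if no data yet)."""
        rates: Dict[str, Optional[float]] = {}
        for arm in ARMS:
            results = [self.outcomes[p] for p, a in self.assignments.items()
                       if a == arm and p in self.outcomes]
            rates[arm] = sum(results) / len(results) if results else None
        return rates
```

In use, the EHR would call `flag` each time a clinician faces the choice, call `record_outcome` when the outcome is charted, and a report would read `success_rates`. A real trial would also need consent records, block or adaptive randomization to keep arms balanced, and a proper statistical test rather than raw proportions.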

In addition to new research methods, advances in statistical analysis, simulation, and modeling have supplemented traditional methods for conducting trials. Given that even the most tightly controlled trials show a distribution in patient responses to a given treatment or intervention, new statistical techniques can help segment results for different populations. Further, new Bayesian techniques for data analysis can separate out the effects of different clinical interventions on overall population health (Berry et al., 2006). With the growth in computational power, new models have been developed that can replicate physiological pathways and disease states (Eddy and Schlessinger, 2003; Stern et al., 2008). These models can then be used to simulate clinical trials and to individualize clinical guidelines to a patient’s particular situation and biology; this approach thus holds promise for improving health status while reducing costs (Eddy et al., 2011). As computing capacity continues to expand, the potential applications of these simulation and modeling tools will increase as well.

Despite the opportunities afforded by new research methods, several challenges must be addressed as these methods are improved. One such challenge for the clinical research enterprise is keeping pace with the introduction of new procedures, treatments, diagnostic technologies, and care delivery models. As currently structured, clinical trials often are not comparable, so a new trial must be conducted to compare the effectiveness of new treatments, diagnostics, or care delivery models with that of existing ones. One solution to this problem is to create standard comparators for a given disease or clinical condition, which would allow new innovations to be compared easily using existing data for current treatments or diagnostic technologies. In addition, as the research enterprise expands, more emphasis may be needed in fields that are underserved by the current clinical research paradigm, such as pediatrics (Cohen et al., 2007; IOM, 2009c; Simpson et al., 2010). Pediatric cancer care is a notable exception: virtually all of the treatment provided in pediatric oncology is recorded and applied to registries or active clinical trials, which then inform future care for children undergoing treatment (IOM, 2010b; Pawlson, 2010).
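One way to see why standard comparators help is the adjusted indirect comparison (often called the Bucher method), an established technique that the chapter itself does not name: if treatment A and treatment B were each tested against the same comparator C in separate randomized trials, A can be compared with B without a new head-to-head trial. The sketch below assumes each trial reports a log odds ratio against C with its standard error; the function names and the input numbers are hypothetical.

```python
import math
from typing import Tuple


def indirect_comparison(log_or_ac: float, se_ac: float,
                        log_or_bc: float, se_bc: float) -> Tuple[float, float]:
    """Adjusted indirect comparison of treatment A vs treatment B.

    Inputs are log odds ratios of A vs the common comparator C and of
    B vs C (each from its own randomized trial), with standard errors.
    Returns (log odds ratio of A vs B, its standard error).
    """
    log_or_ab = log_or_ac - log_or_bc
    # Independent trials, so the variances add.
    se_ab = math.sqrt(se_ac ** 2 + se_bc ** 2)
    return log_or_ab, se_ab


def odds_ratio_ci95(log_or: float, se: float) -> Tuple[float, float, float]:
    """Point estimate and 95% confidence interval on the odds-ratio scale."""
    z = 1.959963984540054  # 97.5th percentile of the standard normal
    return (math.exp(log_or),
            math.exp(log_or - z * se),
            math.exp(log_or + z * se))


# Hypothetical inputs: OR(A vs C) = 0.80, OR(B vs C) = 0.90.
est, se = indirect_comparison(math.log(0.80), 0.10, math.log(0.90), 0.12)
print(odds_ratio_ci95(est, se))
```

The estimate is only as trustworthy as the assumption that the two trials enrolled comparable patients under comparable conditions, which is the same concern raised earlier about trial populations that differ from the patients seen in practice. The confidence interval is also wider than either trial’s alone, because the uncertainty of both trials is combined.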

In considering how to take advantage of opportunities to create a more nimble, timely, and targeted clinical research enterprise, three basic questions should be considered: (1) What does the system need to know? (2) How will the information be captured and used? and (3) How will the resulting knowledge be organized and shared? These questions have important ramifications for the design and operation of the overall data system.

With respect to the first question, stakeholders in the health care system are interested in comparing the effectiveness of different treatments and interventions, monitoring the current safety of medical products through surveillance, undertaking quality improvement activities, and understanding the quality and performance of different providers and health care organizations. Achieving these goals will require capturing data on the care that is delivered to patients, such as processes and structures of care delivery, and the outcomes of that care, such as longitudinal health outcomes and other outcomes important to patients. With respect to how these data will be used to generate new health care knowledge, uses will include comparing the effects of different treatments, interventions, or care protocols; establishing guidelines and best practices; and searching for unexpected effects of treatments or interventions. Finally, the new knowledge generated will have little impact unless it is shared broadly with everyone involved in delivering care to a given patient or, in the case of research, with everyone involved in the research. Each of these three questions is explored in further detail below.

What Does the System Need to Know?

Data on how patients respond to diagnostic technologies, treatments, interventions, or care delivery methods are the raw material for generating new clinical knowledge. However, gathering this raw material currently requires significant effort through specialized research protocols. Substantial quantities of clinical data are generated every day in the regular process of care. Unfortunately, most of this information remains locked inside paper records, which are difficult to access, transfer, and query. As of 2011, only about 34-35 percent of office-based physicians were using a basic electronic health record (EHR) system (Decker et al., 2012; Hsiao et al., 2011), while only 18 percent of hospitals had a basic system (DesRoches et al., 2012).

The anticipated growth in the adoption of digital records presents an unprecedented opportunity to improve the supply of data available for learning, particularly as data sources are designed to capture information generated during the delivery of care. Examples of such sources include larger clinical and administrative databases, clinical registries, personal electronic devices (such as smartphones and mobile sensors), clinical trials for regulatory purposes (such as new drug applications), and advanced EHR systems. New sources for data capture are fueled in part by the infusion of capital provided by the Health Information Technology for Economic and Clinical Health (HITECH) Act,3 which included financial incentives for the meaningful use of EHR systems. Just as the information revolution has transformed many other fields, growing stores of data hold the same promise for improving clinical research, clinical practice, and clinical decision making.

Health care providers play a critical role in supplying clinical data for research and ensuring the quality of the data. To achieve strong provider participation in the learning enterprise, data capture must be seamlessly integrated into providers’ daily workflow and must not disrupt the clinical routine. In addition, professional and specialty societies might be engaged to increase the number of providers willing to participate in the clinical research enterprise. Finally, aligning financial incentives and reimbursement can encourage providers and health care organizations to gather, store, and manage clinical data. Currently, many individuals and organizations donate their time when collecting data for research, which limits the amount of effort they can expend on these initiatives. Specific incentives for generating clinical data could increase the supply of data available for research and the quality of the overall enterprise.

___________________________________________________

3Included in the American Recovery and Reinvestment Act, Public Law 111-5, 111th Congress (February 17, 2009).

How Will the Information Be Captured and Used?

New sources of health care data, combined with existing resources, offer unprecedented opportunities to learn from health care delivery and patient care. These sources include, for example, EHR systems; registries on diseases, treatments, or specific populations; claims databases from insurers and payers; and mobile devices and sensors that capture local data. In addition to the capacity these sources bring to the collection of clinical data, they also support clinical effectiveness research; surveillance for safety, public health, and other purposes; quality improvement initiatives; population health management; cost and quality reporting; and tools for patient education.

As noted above, EHR systems provide a substantial opportunity for learning by unlocking information currently stored in paper medical records. For example, one study found that real-time analysis of clinical data from EHRs could have identified the increased risk of heart attack associated with rosiglitazone, a diabetes drug, within 18 months of its introduction, as opposed to the 7-8 years that elapsed before concerns were raised publicly (Brownstein et al., 2010). In considering how to maximize the clinical knowledge gained from EHR systems, a tension exists between the data needs of research studies and the resources required to collect and store clinical data on care processes and patient outcomes. Given the range of health care research studies, it is likely infeasible for every system to capture the full amount of data needed to fulfill all potential research needs. A compromise solution to this tension is to identify those core pieces of information that are needed for many research questions and ensure that this limited set of information is captured faithfully by most digital health record systems. This method of identifying a core dataset that satisfies both research and clinical care needs has been used by several organizations. For example, the National Quality Forum’s (NQF’s) Quality Data Model defines a set of standardized clinical and administrative data that are needed to calculate quality measures using information from EHRs (National Quality Forum, 2010), while the HMO Research Network’s Virtual Data Warehouse (described in Box 6-5) maps data from the EHRs and medical claims of multiple health maintenance organization (HMO) plans into a standardized dataset.
Other efforts focus on population health; for example, popHealth software integrates with providers’ EHRs to automate and simplify the reporting and exchange of quality data on the providers’ patient populations, and the Query Health project is setting data standards to enable research on population health (Fridsma, 2011; popHealth, 2012). In addition to the research benefits, routine adoption of core datasets in EHRs can enhance the capacity for exchange of consistent health information across systems and organizations, thereby supporting improved coordination of health services.
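The following minimal Python sketch makes the idea of a standardized core dataset more concrete. It is not the Quality Data Model or the Virtual Data Warehouse. It shows how records from two sites with different local field names and codes could be mapped to one shared set of definitions, so that a single analysis program runs unchanged at both. The site names, field names, mapping tables, and choice of core fields are all hypothetical.

```python
# Hypothetical core dataset: the fields every participating site must supply.
STANDARD_FIELDS = ("patient_id", "sex", "dx_code")

# Hypothetical per-site mapping tables from local names/codes to the standard ones.
SITE_MAPPINGS = {
    "site_a": {
        "fields": {"mrn": "patient_id", "gender": "sex", "diagnosis": "dx_code"},
        "sex_codes": {"M": "male", "F": "female"},
    },
    "site_b": {
        "fields": {"member_no": "patient_id", "sex_cd": "sex", "icd": "dx_code"},
        "sex_codes": {"1": "male", "2": "female"},
    },
}


def to_standard(site, local_record):
    """Rename a site's local fields and recode values into the shared definitions."""
    mapping = SITE_MAPPINGS[site]
    std = {
        mapping["fields"][name]: value
        for name, value in local_record.items()
        if name in mapping["fields"]
    }
    # Translate the local sex code; anything unmapped becomes "unknown".
    std["sex"] = mapping["sex_codes"].get(std.get("sex"), "unknown")
    missing = [f for f in STANDARD_FIELDS if f not in std]
    if missing:
        raise ValueError(f"{site} record is missing core fields: {missing}")
    return std


def count_diagnosis(records, dx_code):
    """One analysis program that works at every site once data are standardized."""
    return sum(1 for r in records if r["dx_code"] == dx_code)


a = to_standard("site_a", {"mrn": "123", "gender": "F", "diagnosis": "E11"})
b = to_standard("site_b", {"member_no": "987", "sex_cd": "1", "icd": "E11"})
print(a)  # {'patient_id': '123', 'sex': 'female', 'dx_code': 'E11'}
print(count_diagnosis([a, b], "E11"))  # 2
```

The mapping tables do the site-specific work. The analysis function needs to know only the shared definitions, and that separation is what lets one program serve many systems.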

As EHR systems become more widespread, it will be necessary to provide flexibility to address new and unforeseen research questions. The sheer scale and complexity of the digital utility, its use by a variety of individuals with conflicting needs, and its constant evolution will require new ways to set standards, develop applications, and interact with the users of clinical data. One technological solution is to ensure that these digital systems are designed in the modular fashion popular in other industries, as with smartphone applications and computer software. This modular approach could also provide additional capacity for meeting new research needs without necessitating an overhaul of the central structure of the digital system.

Registries, which are distinguished by their focus on a specific disease, procedure, treatment, intervention, or resource use, are another important tool for developing new knowledge (Robert Wood Johnson Foundation, 2010) (see Box 6-4). A registry collects uniform clinical data using observational methods to evaluate specified outcomes for a specific population and for a specific purpose (AHRQ, 2010). By collecting detailed data not contained in other sources, registries have been able to determine the clinical effectiveness of a variety of health care interventions and treatments (Akhter et al., 2009; Grover et al., 2001; Meadows et al., 2009; Savage et al., 2003). Further, the clinical and financial payoffs of this method of aggregating and generating knowledge can be substantial.

In addition to EHRs and registries, mobile technologies for providers and patients will play an increasingly important role in capturing and storing health care data. These technologies include a wide range of patient-focused devices that monitor patient health, with the potential to support improved diagnosis or treatment. Provider-focused tools include applications that are built into existing personal digital assistants, smartphones, and tablet computers to store patient health information or access clinical databases. According to industry reports, global sales of these portable devices for health care uses reached $8.2 billion in 2009, and growth of up to 7 percent per year is projected for the next 5 years (Kalorama Information, 2010).
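The following rough illustration shows what that projection implies. It is simple arithmetic on the figures above, not a number reported by Kalorama Information. Compounding the upper-bound rate of 7 percent per year on the 2009 base for 5 years gives:

```latex
S_5 = S_0\,(1 + g)^5 = 8.2 \times (1.07)^5 \approx 8.2 \times 1.403 \approx 11.5 \ \text{(billion dollars)}
```

Here $S_0$ is 2009 sales in billions of dollars and $g$ is the annual growth rate. Any growth below the 7 percent ceiling would yield a smaller figure.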

Conclusion 6-2: Growing computational capabilities to generate, communicate, and apply new knowledge create the potential to build a clinical data infrastructure to support continuous learning and improvement in health care.

Related findings:

- The application of computing capacity and new analytic approaches enables the development of real-time research insights from existing patient populations. One study found that real-time analysis of clinical data from electronic health records could have identified the increased risk of heart attack associated with rosiglitazone, a diabetes drug, within 18 months of its introduction.

- Computational capabilities offer the prospect of speeding the delivery of important new insights from the care experience. For example, a comprehensive disease registry in Sweden has helped facilitate a 65 percent reduction in 30-day mortality and a 49 percent decrease in 1-year mortality for heart attack patients.

- Computational capabilities present promising, as yet unrealized, opportunities for care improvement. For example, mining data on patient outcomes and care processes at Intermountain’s LDS Hospital allows for continuous improvement of clinical practice guidelines. Implementation of an improved guideline for acute respiratory distress syndrome increased patient survival from 9.5 percent to 44 percent (see Chapter 9).

BOX 6-4

Registries: An Important Source for Developing Knowledge

Registries that are well designed and well managed can promote continuous learning and improvement. One leader in the development and implementation of disease registries is Sweden, which has nearly 90 government-supported registries and where almost 25 percent of the nation’s medical expenses are covered and monitored by disease-specific registries. In the case of acute myocardial infarction (AMI), the Register of Information and Knowledge about Swedish Heart Intensive-Care Admissions, first established in 1991, collects data from all 74 of the nation’s major hospitals and covers approximately 80 percent of patients in Sweden who suffer an AMI. In 2005, the Register created a publicly reported quality index that ranked hospitals on their adherence to clinical guidelines, and by 2009, the average hospital quality index score was growing at an annual rate of 22 percent, with the lowest-performing hospitals improving at a rate of 40 percent per year. By 2009, the Register had helped facilitate a 65 percent reduction in the average 30-day mortality rate for patients who had suffered an acute heart attack, as well as a 49 percent decrease in the 1-year mortality rate from heart attacks.

A recent study estimated the savings that could occur if the United States had a registry for hip replacement surgery comparable to Sweden’s. Such a registry could yield savings amounting to $2 billion by 2015 by decreasing the number of surgeries needed to replace or repair failing hip prostheses. Absent such a registry, the total costs for these surgeries are expected to amount to $24 billion by that time.

SOURCE: Larsson et al., 2011.

How Will Knowledge Be Organized and Shared?

Although each individual data source presents an opportunity for learning, the capacity for learning increases exponentially when the system can draw knowledge from multiple sources. Expanding the ability to share data requires developing technological solutions, building a data sharing culture, and addressing privacy and security concerns. Nevertheless, several organizations have successfully overcome these hurdles and implemented large-scale data sharing. Examples include large health care delivery organizations with extensive EHR capabilities, such as Kaiser Permanente and the Veterans Health Administration, and major initiatives in data sharing between different organizations, such as the Nationwide Health Information Network, the Care Connectivity Consortium, the Shared Health Research Information Network, and Informatics for Integrating Biology and the Bedside (i2b2) (Kuperman, 2011; Lohr, 2011; Murphy et al., 2010; Weber et al., 2009).

The technological aspects of sharing depend on the sources of the data. For EHR systems, sharing is complicated by the fact that there is a variety of EHR systems, each of which stores data using different methods and in different formats (Detmer, 2003). An additional complication is the inevitability of systems of different ages being in use, some that incorporate newer technologies and others that are legacy systems. Overcoming these barriers will require several technological solutions, such as interoperability strategies; methods for highlighting the quality of the data; and ways to identify the source, context, and provenance of the data (IOM, 2011c). The challenge to sharing between registries and EHRs is that many registries were developed before EHRs existed, so that in most cases, the two are not interoperable (Physician Consortium for Performance Improvement, 2011). However, improved sharing of data from EHRs may provide a new means of populating registries. One additional technological and policy hurdle is the difficulty of linking records for the same patient across multiple data sources, as different methods (from statistical linkages to unique patient identifiers) strike different balances between the desire for research accuracy and concerns about the privacy of health information (Detmer, 2003).
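The two ends of the linkage range mentioned above can be contrasted in a short Python sketch. Both functions are simplified illustrations under stated assumptions. `exact_link` assumes every source carries the same unique patient identifier (the hypothetical field `uid`). `statistical_link` assumes there is no shared identifier and instead scores agreement on demographic fields, using hypothetical weights and a hypothetical cutoff. Practical probabilistic linkage methods typically estimate such weights from the data rather than fixing them by hand.

```python
# Hypothetical agreement weights and match threshold, chosen for illustration only.
WEIGHTS = {"last_name": 4.0, "birth_date": 5.0, "zip_code": 2.0}
THRESHOLD = 8.0


def exact_link(rec_a, rec_b):
    """Deterministic linkage: match only when both records carry the same unique ID."""
    uid = rec_a.get("uid")
    return uid is not None and uid == rec_b.get("uid")


def agreement_score(rec_a, rec_b):
    """Sum the weights of the demographic fields on which the two records agree."""
    return sum(
        weight
        for field, weight in WEIGHTS.items()
        if rec_a.get(field) is not None and rec_a.get(field) == rec_b.get(field)
    )


def statistical_link(rec_a, rec_b):
    """Declare a match when the agreement score reaches the threshold."""
    return agreement_score(rec_a, rec_b) >= THRESHOLD


ehr = {"last_name": "smith", "birth_date": "1960-01-02", "zip_code": "02139"}
registry = {"last_name": "smith", "birth_date": "1960-01-02", "zip_code": "02140"}
print(exact_link(ehr, registry))        # False: neither record carries a uid
print(agreement_score(ehr, registry))   # 9.0
print(statistical_link(ehr, registry))  # True
```

The trade-off is visible here. The exact rule cannot link these records because no identifier is shared. The scored rule links them despite a mismatched ZIP code, which could reflect either a data-entry error or two different people.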

One method for sharing data securely and efficiently is through distributed data networks. In this design, each organization in the network stores its information locally, often in a common format. When a researcher seeks to answer a specific research question, the organizations execute identical computer programs that analyze the organizations’ own data, create a summary of the results for each site, and share those summaries with the entire network. The advantage of this approach is that the institutions share only deidentified summary data instead of patient records. (See Box 6-5 for a description of one distributed data network, the Virtual Data Warehouse of the HMO Research Network, alluded to earlier.) Other models that could be used to share data include centralized databases, whereby data are submitted to and accessed at one central source, and alternative distributed designs, whereby clinical data are shared directly between different institutions (Brown et al., 2010).
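The query pattern described above can be sketched in a few lines of Python. This is a minimal illustration, not the software of any network named in this chapter. The record layout (hypothetical `exposed` and `outcome` flags), the function names, and the choice of an event rate as the summary statistic are all assumptions. The key property is that `local_analysis` runs unchanged at every site and returns only counts, so the coordinator never sees patient records.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteSummary:
    """Aggregate result a site shares with the network (no patient-level rows)."""
    site: str
    exposed_patients: int
    events: int


def local_analysis(site, records):
    """Identical program executed by each site against its own local data."""
    exposed = [r for r in records if r["exposed"]]
    events = sum(1 for r in exposed if r["outcome"])
    return SiteSummary(site, len(exposed), events)


def pool(summaries):
    """Coordinator combines site summaries into a network-wide event rate."""
    patients = sum(s.exposed_patients for s in summaries)
    events = sum(s.events for s in summaries)
    return events / patients if patients else 0.0


# Each site's records stay local; only the SiteSummary objects are exchanged.
site_a = [
    {"exposed": True, "outcome": True},
    {"exposed": True, "outcome": False},
    {"exposed": False, "outcome": True},
]
site_b = [
    {"exposed": True, "outcome": False},
    {"exposed": True, "outcome": False},
]
summaries = [local_analysis("A", site_a), local_analysis("B", site_b)]
print(summaries)
print(pool(summaries))  # 0.25
```

Sharing counts rather than rows is what keeps the records at each institution. Some networks may layer further safeguards on top of this basic pattern, and this sketch omits them.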

BOX 6-5

An Example of a Distributed Data Network

One example of a distributed data network is the Virtual Data Warehouse of the HMO Research Network, formed in 1993, which links 16 integrated delivery systems. The participating health maintenance organizations (HMOs) collaborate to develop and implement common study designs and share standardized data (Vogt et al., 2004). Data from electronic health records (EHRs) and claims are mapped to a standardized set of definitions, names, and codes. The data for each local system are in a database format, structured so that the same computer program can be used at all sites for data analysis (Bocchino, 2011; Larson, 2007). Each site receives direction from the Virtual Data Warehouse Operational Committee, which provides implementation guidance, documentation, and quality control evaluation, and also manages the activities of cross-disciplinary workgroups on different data domains. The HMO Research Network has generated several collaborative projects, including the Cancer Research Network and Cardiovascular Research Network (Go et al., 2008; Hornbrook et al., 2005; Wagner et al., 2005).

Types of questions that have been considered by this distributed network include changes in women’s use of hormone replacement therapy after the Women’s Health Initiative (Wei et al., 2005), the risks of birth defects for cases in which a mother took two common heart medications (beta-blockers or calcium channel blockers) during pregnancy (Davis et al., 2011), and the frequency of potentially inappropriate prescriptions for elderly patients (Simon et al., 2005). Other examples of this approach include the National Bioterrorism Syndromic Surveillance Demonstration Program, which uses this distributed approach for surveillance of potential bioterrorism events and clusters of naturally occurring illness (Lazarus et al., 2006; Platt, 2010; Yih et al., 2004); the Shared Health Research Information Network, a federated query tool for three clinical data repositories created using the i2b2 open source software platform (Murphy et al., 2010; Weber et al., 2009); the Food and Drug Administration’s Mini-Sentinel network (Behrman et al., 2011; Lee et al., 2005a); and the Pediatric EHR Data Sharing Network (PedsNET).

While the above technical considerations are important, problems associated with data ownership may pose a greater challenge to the sharing and exchange of information (Blumenthal, 2006; Let data speak to data, 2005; Piwowar et al., 2008). Researchers have invested significant energy and resources in collecting data and thus may be hesitant to share the data freely with others. Clinical data may be viewed as a proprietary good that belongs to its owner, rather than a societal good that can benefit the population at large. Overcoming this barrier will require a shift toward research and organizational cultures that value open sharing of data. This culture change will in turn require efforts on the part of organizational and national leadership, recognition and rewards for data sharing, and education of researchers in the potential benefits of data sharing (Piwowar et al., 2008).

The experience with the privacy rule of the Health Insurance Portability and Accountability Act (HIPAA), whose misinterpretation has restricted the use of data to generate new insights, underscores the importance of patient and public engagement in, support for, and demand for the use of clinical data to produce new knowledge. Privacy is a highly important societal and personal value, but the current formulation and interpretation of this rule not only offer limited protection to patients, but also may impede the broader health research enterprise (IOM, 2009a). In a 2007 survey, 68 percent of researchers reported that the HIPAA privacy rule had made research more difficult (Ness, 2007). The impediments arise from both actual and perceived barriers to data sharing attributed to the law and its associated regulations. In surveys, approximately half of health researchers have reported that HIPAA regulations have decreased recruitment of research participants; 80-90 percent have indicated that the regulations have increased research costs; and 50-80 percent have said they have increased the time needed to conduct research and noted that different institutional interpretations of the law and its associated regulations have impeded collaboration (Association of Academic Health Centers, 2008; Goss et al., 2009; Greene et al., 2006; IOM, 2009a; Ness, 2007). As suggested in the IOM report Beyond the HIPAA Privacy Rule, solving these problems will likely require a reformulation of the rule, as well as improved guidance to limit disparities in its interpretation (IOM, 2009a).

Conclusion 6-3: Regulations governing the collection and use of clinical data often create unnecessary and unintended barriers to the effectiveness and improvement of care and the derivation of research insights.

Related findings:

- Implementation of current regulations promulgated to improve privacy offers limited protection to patients and may impede the broader health research enterprise. In a 2007 survey, 68 percent of researchers reported that the HIPAA privacy rule had made research more difficult.

- Current regulations have made it difficult to recruit research participants, increased the cost and time needed to conduct research, and impeded collaboration. In surveys of researchers, approximately half have indicated that HIPAA regulations have decreased recruitment of research participants; 80-90 percent have indicated that the regulations have increased research costs; and 50-80 percent have said they have increased the time needed to conduct research.

THE LEARNING BRIDGE: FROM KNOWLEDGE TO PRACTICE

Unless the products of the nation’s clinical data utility and research enterprise are disseminated and applied in practice, their results are meaningless. Current systems that generate and implement new clinical knowledge are largely disconnected and poorly coordinated. While clinical data contribute to the development of many effective, evidence-based practices, therapeutics, and interventions every year, only some of these become widely used. Many others are used only in limited ways, failing to realize their transformative potential to improve care (IOM, 2011a).

Historically, research discoveries in health care have been disseminated through the publication of study results, typically in medical journals. Clinicians are expected to set aside time to read these published results, consider how to integrate them into their practice, and change their behavior accordingly. As noted earlier in this chapter, the extraordinary number of journal articles exceeds any clinician’s ability to read and process them. Even if a clinician could read all of this information, the literature is growing too quickly for any person to integrate the full body of evidence when considering a specific clinical situation and a specific patient. As noted in Chapter 2, this growth in complexity can hamper a clinician’s ability to make decisions. Moreover, clinicians’ patterns for seeking out information have changed. Fully 86 percent of physicians now use the Internet to gather health, medical, or prescription drug information (Dolan, 2010). Of these physicians, 71 percent use a search engine to start their search for information. This change in information-seeking behavior has consequences for how medical information can be organized and publicized in a way that maximizes its chances of being implemented in clinical practice.

Unfortunately, evidence suggests that simply providing information, albeit more quickly, rarely changes clinical practice (Avorn and Fischer, 2010; Schectman et al., 2003). Multiple reasons may explain this situation. Sometimes, clinicians fail to change their behavior because they are unaware that new knowledge exists. Sometimes they may disagree that a research discovery would improve care for their patients. At other times, they do not perceive a great enough benefit to outweigh the burden of changing established practices (Cabana et al., 1999).

The challenge, therefore, is how to diffuse knowledge in ways that facilitate uptake by clinicians (McCannon and Perla, 2009). Many approaches currently are used to disseminate knowledge throughout the health care system, and these could be leveraged to increase the rate at which knowledge is disseminated. A further challenge is to disseminate knowledge that is useful for the clinical decisions faced by individual patients. To this end, traditional dissemination methods must be modified so that general research knowledge is adapted to the particular circumstances faced by each patient. While logistically demanding, this adaptation holds promise for improving the effectiveness and value of care while meeting the aim of improved patient-centeredness.

One technological tool for bringing research results into the clinical arena is clinical decision support. A clinical decision support system integrates information on a patient with a computerized database of clinical research findings and clinical guidelines. The system generates patient-specific recommendations that guide clinicians and patients in making clinical decisions (IOM, 2001a). One study, for example, found that digital decision support tools helped clinicians apply clinical guidelines, improving health outcomes for diabetics by 15 percent (Cebul et al., 2011). Tools under development may tailor the information to the individual patient, allowing the clinician to predict how an intervention would affect that patient. Further enhancing clinicians’ predictive capacities are advanced informatics and simulation systems that can use data to model likely outcomes for similar patients receiving various treatments or supportive services. Clinical decision support systems also can help address cognitive errors (as discussed in Chapter 2), such as attribution, availability bias, and anchoring,4 all of which may contribute to errors and wrong diagnoses (Blue Cross Blue Shield of Massachusetts Foundation, 2007). Greater adoption of clinical decision support could be achieved through advances in interoperability with EHR and computerized physician order entry (CPOE) systems from multiple vendors, allowing this technology to be embedded seamlessly in the standard clinical workflow (Sittig et al., 2008; Wright and Sittig, 2008).

___________________________________________________

4Attribution denotes a clinician’s use of social stereotypes or attributes to link certain diagnoses to certain patients (Blue Cross Blue Shield of Massachusetts Foundation, 2007). Availability bias occurs when memorable cases or frequent clinical phenomena influence a clinician’s diagnosis. Anchoring is a cognitive shortcut in which the first piece of clinical information heard by the clinician has an undue influence on the clinician’s thought process going forward.
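The following Python sketch shows the basic mechanism of clinical decision support described above. It matches a patient's data against a knowledge base of guideline rules to produce patient-specific recommendations. The rules, thresholds, and field names are hypothetical illustrations chosen for this example. They are not clinical guidance or the logic of any particular product.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Callable, List, Optional, Set


@dataclass
class Patient:
    """Minimal hypothetical patient record drawn from an EHR."""
    conditions: Set[str]
    last_hba1c: Optional[date] = None
    systolic_bp: Optional[int] = None


@dataclass
class Rule:
    """One knowledge-base entry: when it applies, and what to recommend."""
    name: str
    applies: Callable[[Patient, date], bool]
    advice: str


# Hypothetical rules with illustrative thresholds (not clinical guidance).
RULES: List[Rule] = [
    Rule(
        "a1c_overdue",
        lambda p, today: "diabetes" in p.conditions
        and (p.last_hba1c is None or today - p.last_hba1c > timedelta(days=180)),
        "No HbA1c result in the past 180 days; consider ordering one.",
    ),
    Rule(
        "bp_above_target",
        lambda p, today: "diabetes" in p.conditions
        and p.systolic_bp is not None
        and p.systolic_bp >= 140,
        "Systolic blood pressure at or above 140 mm Hg; review treatment.",
    ),
]


def recommend(patient, today, rules=RULES):
    """Return the advice from every rule that fires for this patient."""
    return [rule.advice for rule in rules if rule.applies(patient, today)]


patient = Patient({"diabetes"}, last_hba1c=date(2012, 1, 15), systolic_bp=150)
for line in recommend(patient, today=date(2012, 9, 1)):
    print(line)
```

The rules are kept as data, separate from the matching loop. This mirrors the separation between the patient record and the knowledge base described above, so rules can be updated as guidelines change without rewriting the engine.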

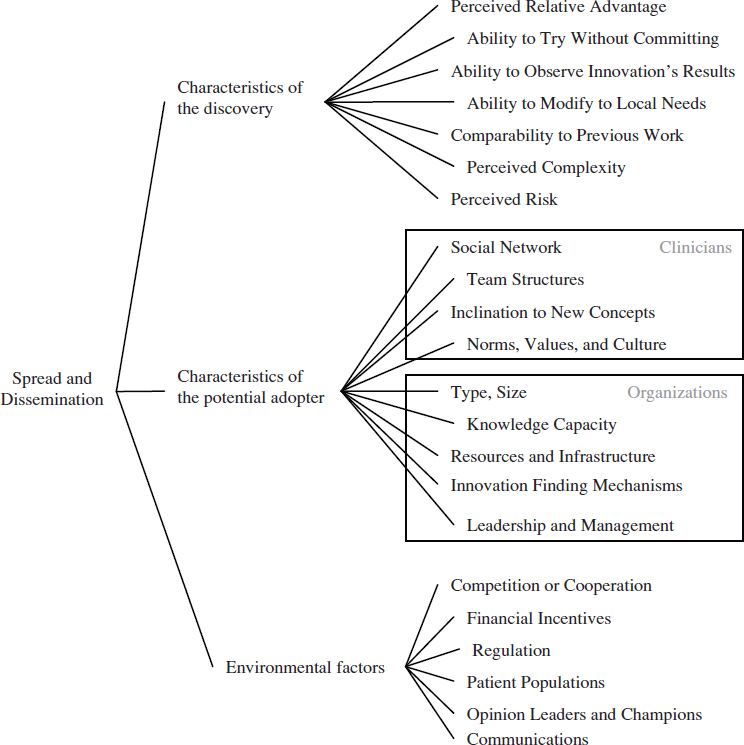

Regardless of the channels used to distribute new clinical knowledge, the clinical research system needs to account for the many factors that promote (or inhibit) the use of this knowledge. These factors will vary in their importance according to different types of clinicians, health care organizations, geographic locations, patient populations, and other factors. In general, the dissemination of a research discovery is dependent on three broad categories of factors: attributes of the discovery, characteristics of the potentially adopting clinician or health care organization, and environmental factors. Figure 6-2 illustrates these factors and their relationships. As depicted, the process of diffusion and scale-up is messy, organic, and dynamic. An individual or organization does not move linearly from research to development to implementation, but rather moves between these stages based on perceived needs and individual concerns (Greenhalgh et al., 2004).

FIGURE 6-2 Multiple factors affect whether new clinical knowledge is disseminated and implemented across the health care system.

The most obvious factor affecting the dissemination of a research discovery is its relative advantage over other competing interventions, therapeutics, or practices (Berwick, 2003; Cain and Mittman, 2002; Della Penna et al., 2009). Simply put, people are more likely to implement a new idea if they believe it can help them with a problem. In a health care context, this relative advantage could take multiple forms, from improved clinical effectiveness over existing treatments, to convenience in delivering the intervention, to reduced cost. While relative advantage is an important factor, other characteristics of a research discovery also have been found to be important, including whether the discovery’s results can be observed easily and quickly, whether a potential adopter can try it without committing to it, its perceived complexity, and its ability to be modified to fit local circumstances (Rogers, 2003; Shih and Berliner, 2008; Vos et al., 2010). Many of these factors are not objective measures, but are based on the perceptions of potential adopters. This means the factors change based on the setting, the potential adopter, and time (Berwick, 2003; Greenhalgh et al., 2004).

A related cluster of factors that affect the dissemination of a research discovery encompasses the characteristics of the potentially adopting clinician. Evidence from adult learning reveals that clinicians’ previous experiences and knowledge will affect their learning about new ideas and practices (Committee on Developments in the Science of Learning et al., 2000). In a related way, the dissemination of a research discovery will depend on individual clinicians’ values and culture, as well as their inclination to experiment with new ideas (Bate et al., 2004; Berwick, 2003; Green and Plsek, 2002). For instance, some individuals are more willing to try new ideas, while others favor traditional methods. Dissemination will also depend on the clinician’s social networks and those networks’ views of the knowledge, practice, or technology (Cain and Mittman, 2002; Dopson et al., 2002; McCullough, 2008; Shih and Berliner, 2008).

This cluster of factors changes when the potential adopter is an organization instead of a clinician. For potentially adopting hospitals and health care organizations, dissemination will vary based on the type of hospital and its resources, especially whether it has resources available for implementing new ideas (McCullough, 2008). Specific capabilities that promote the adoption of new ideas are the support of the organization’s leadership and management, the existence of robust channels for sharing knowledge, and the presence of structures that can discover potentially beneficial ideas from outside of the organization (Della Penna et al., 2009; Ferlie and Shortell, 2001; Green and Plsek, 2002; Nolan et al., 2005; Norton and Mittman, 2010; Pisano et al., 2001).

Finally, environmental factors that are distinct from the previous two clusters affect the dissemination of research discoveries. Financial incentives, reimbursement, the insurance environment, and regulations all impact whether an idea is adopted (Cutler et al., 1996; Mandel, 2010; McCullough, 2008; Robinson et al., 2009; Shih and Berliner, 2008). As with the previous clusters, these factors are not absolutes, but will vary depending on the specific discovery.

The strategy used to communicate a discovery is a particularly important environmental factor. Some successful strategies have involved using in-person educational methods, providing feedback on the process, employing opinion leaders or developing champions, or outlining an overall vision (Davis and Taylor-Vaisey, 1997; Flodgren et al., 2011; McCannon et al., 2006; O’Connor et al., 1996; Schectman et al., 2003; Soumerai et al., 1998). Another successful communication strategy is the creation of learning or improvement networks (Podolny and Page, 1998). Such networks provide a structure for the exchange of information and include those individuals necessary for the implementation of change on a larger scale (Carnegie Foundation for the Advancement of Teaching, 2010). This type of tool may be useful for managing the high degree of variation across the health care field, because information can be shared about how to customize guidelines, practice patterns, and other knowledge to fit local conditions (McCannon and Perla, 2009). Finally, reporting of data on performance and practice variation can spur the adoption of evidence-based practices (see Chapter 8 for a discussion of the use of reporting).

The complex interplay of the above factors is illustrated by a case study on disseminating a change across a large organization. In 2005, a large, integrated health care delivery system concluded a randomized controlled trial of several palliative care models, identifying the model that improved patient satisfaction and outcomes most successfully. The next year, after the organization’s national executive leadership had set the expectation that all its member hospitals would implement this care model within 1 year, the organization established a large-scale initiative to disseminate the model. Within a 2-year period, the model was in place at all 32 network hospitals, the number of palliative care consults had risen from 1,572 to 16,293, and the number of interdisciplinary palliative care teams had more than doubled. One of the more important factors responsible for this successful dissemination was the clear relative advantage of the palliative care model in terms of patient satisfaction, outcomes, and cost, as demonstrated by the randomized controlled trial. This initiative also was compatible with clinician values, which spurred an emotional pull to improve care during advanced illness. Additional reasons for the dissemination included the involvement of senior leadership and opinion leaders, existing communication channels throughout the organization, and broad social networks that shared information. While many positive factors encouraged dissemination, several impediments were faced as well, including resource constraints, the competition of preexisting palliative care models, and ambiguous accountability for implementation (Della Penna et al., 2009).

As demonstrated by this example, a considerable amount is known about the factors that contribute to successful dissemination and scale-up. For any individual case, however, it is unknown which factors will best yield widespread implementation; the success of any particular knowledge, practice, or technology is context specific and depends on local conditions and human factors (Davidoff, 2009) (see Chapter 9 for a discussion of the spread of ideas within an organization). Also unknown is how the factors that influence dissemination interact with one another to increase (or decrease) its likelihood.

A final element in understanding dissemination is customization to local conditions. As new technologies and procedures diffuse into clinical practice, health care professionals further modify and extend their application by discovering new populations, indications, and long-term effects. This observation highlights the importance of measuring the health and economic outcomes of clinical interventions in everyday practice (IOM, 2010a). The case of coronary artery bypass graft surgery offers an example of how the use of treatments changes over time: it is estimated that only 4 to 13 percent of patients who underwent this surgery a decade after its introduction would have met the eligibility criteria of the trials that determined its initial effectiveness (Hlatky et al., 1984). Similar results have been noted for other interventions; for example, slightly more than half of patients receiving the antiplatelet agent clopidogrel for vascular disease would have been eligible for the clinical trials that demonstrated its effectiveness (Choudhry et al., 2008). The ultimate use of a treatment or intervention may be very different from what its developers initially envisioned.

Conclusion 6-4: As the pace of knowledge generation accelerates, new approaches are needed to deliver the right information, in a clear and understandable format, to patients and clinicians as they partner to make clinical decisions.

Related findings:

- The slow pace of dissemination and implementation of new knowledge in health care is harmful to patients. For example, it took 13 years for most experts to recommend thrombolytic drugs for heart attack treatment after their first positive clinical trial (see Chapter 2).

- Available evidence often is unused in clinical decision making. One analysis of the use of implantable cardioverter-defibrillator (ICD) implants found that 22 percent were implanted in circumstances outside of professional society guidelines (see Chapter 3).

- Decision support tools, which can be broadly provided in electronic health records, hold promise for improving the application of evidence. One study found that digital decision support tools helped clinicians apply clinical guidelines, improving health outcomes for patients with diabetes by 15 percent.

PEOPLE, PATIENTS, AND CONSUMERS AS ACTIVE STAKEHOLDERS

Given the critical role of patients and consumers in the health care system, patients need to be more fully engaged in clinical research and the data utility. The success of both enterprises depends on patient support and investment in their aims. For clinical research, this means incorporating patient perspectives and greater public participation (see also Chapter 7) to ensure that the research enterprise addresses patient needs (IOM, 2011d). For the data utility, the public has an important role in motivating its expansion to improve care and build knowledge.

Currently, public awareness of and participation in the clinical research enterprise remain limited, as exemplified by a reduced willingness to participate in clinical trials during the past decade (Woolley and Propst, 2005). In addition, national surveys from 2005 and 2010 found that approximately two-thirds of respondents had concerns about the privacy and security of their health information (Holmes and Karp, 2005; Undem, 2010). Improving this situation will require building trust in the clinical research enterprise among patients, consumers, and the public. That effort must proceed on multiple fronts, including increasing confidence in the results of clinical research, being open and honest about the risks and benefits of this type of research, and ensuring that appropriate privacy and security safeguards are in place for health data.

Opportunities exist for improving patient engagement in clinical research. There is some evidence that patients with complex conditions, such as cancer, may be open to sharing data for research purposes, with one study finding that 60 to 70 percent of cancer patients agreed their deidentified clinical data should be shared to improve clinical knowledge (Beckjord et al., 2011). Similarly, a 2004 survey found that almost 70 percent of respondents would willingly share deidentified health information to improve health care services, and a similar percentage would share their deidentified data with researchers (Research!America, 2004). A recent national survey of consumers found that almost 90 percent of respondents strongly or somewhat agreed that their health data should be used “to help improve the care of future patients who might have the same or similar condition” (Alston and Paget, 2012).

Ideally, clinical decisions should balance the health benefits of a given intervention against potential harms, taking into consideration the patient’s preferences, needs, and values. Research that incorporates patient perspectives is potentially more useful for clinicians and patients making such decisions. One means of accomplishing this is to collect information from patients on outcomes related to their quality of life, such as their level of function or emotional state. Although such information is important, it can be difficult to design instruments that collect high-quality data reflecting a health concept of interest (Rothman et al., 2009). One promising initiative is the NIH Patient-Reported Outcomes Measurement Information System (PROMIS), which incorporates a series of items measuring different aspects of physical, mental, and social health (Cella et al., 2007, 2010). Continued improvements in the collection of this type of clinical data hold promise for improving the ability of research to help patients understand how therapies and interventions may affect their quality of life.

In addition, novel technologies offer new means of collecting health care data directly from patients. Advances in digital technologies and informatics now enable patients and consumers to collect and share data on their own conditions. This vision is being realized in biobanks operated by disease-specific organizations, as well as on social networking sites. Examples of social networking sites that aim to promote patient participation in research include PatientsLikeMe®, Love/Avon Army of Women, and Facebook health groups (see Box 6-6). These patient-initiated approaches face specific challenges, notably bias in self-reporting, data quality, and protection against discrimination, but their prevalence is expected to increase.

One major recent initiative that focuses attention on patients in clinical research is PCORI, which was established by the Patient Protection and Affordable Care Act of 2010. As noted in its mission statement, PCORI seeks to help consumers and patients make informed health care decisions by encouraging research guided by patients, caregivers, and the entire health care community (Washington and Lipstein, 2011). Because PCORI is relatively new, it is in the process of considering methods and standards for research focused on patient-centered outcomes, drafting national priorities, and developing a research agenda. This type of research holds promise for increasing the patient-centeredness of the entire clinical research enterprise.

BOX 6-6

Increased Patient Participation in Research

Patients with difficult-to-treat conditions increasingly are using websites to compare experiences and information. These patients sometimes experiment with treatments that do not yet have regulatory approval and post their data and results online. Researchers can potentially use these self-reported data to measure the effectiveness of drugs and treatments in development. While using these data has several statistical drawbacks—the selection is not blind, and self-reported data can leave room for error or fraud—a preliminary study showed the potential of this research method. Patients with amyotrophic lateral sclerosis (ALS) experimented with lithium carbonate after a small study in Italy suggested it might slow the progression of the disease, and reported their experiences on the website PatientsLikeMe. When researchers aggregated and studied these data, they determined that the lithium had no effect—the same conclusion resulting from a subsequent randomized controlled trial. This research method, even with its drawbacks, has several advantages, including speed of data collection, low cost, patient engagement, the availability of control participants, and ease of patient access (Wicks et al., 2011).

FRAMEWORK FOR ACHIEVING THE VISION5

Knowledge generation in the U.S. health care system presents a fundamental paradox. While the clinical research enterprise generates new insights at an ever-increasing rate, the demand for knowledge at the point of care remains unmet. The result is decisions by clinicians and patients that are inadequately informed by evidence. In addition, the data generated from every patient encounter hold tremendous promise to serve as a clinical data infrastructure that, through the use of new research techniques, can begin to meet the system’s need for real-time clinical knowledge.

5 Note that in Chapters 6-9, the committee’s recommendations are numbered according to their sequence in the taxonomy in Chapter 10.

Given advances in computing and other technologies, the potential exists to create a clinical data utility that provides a substantial opportunity for learning (President’s Information Technology Advisory Committee, 2001, 2004). The creation of this data utility will require action on the technological, clinical, research, and administrative fronts—from identifying the data that need to be captured, to encouraging broader sharing and communication of the data, to effecting the data’s widespread clinical use. Recommendation 1 details the steps necessary to develop a clinical data infrastructure that supports clinical care, improvement initiatives, and research.

Recommendation 1: The Digital Infrastructure

Improve the capacity to capture clinical, care delivery process, and financial data for better care, system improvement, and the generation of new knowledge. Data generated in the course of care delivery should be digitally collected, compiled, and protected as a reliable and accessible resource for care management, process improvement, public health, and the generation of new knowledge.

Strategies for progress toward this goal:

- Health care delivery organizations and clinicians should fully and effectively employ digital systems that capture patient care experiences reliably and consistently, and implement standards and practices that advance the interoperability of data systems.

- The National Coordinator for Health Information Technology, digital technology developers, and standards organizations should ensure that the digital infrastructure captures and delivers the core data elements and interoperability needed to support better care, system improvement, and the generation of new knowledge.

- Payers, health care delivery organizations, and medical product companies should contribute data to research and analytic consortia to support expanded use of care data to generate new insights.

- Patients should participate in the development of a robust data utility; use new clinical communication tools, such as personal portals, for self-management and care activities; and be involved in building new knowledge, such as through patient-reported outcomes and other knowledge processes.

- The Secretary of Health and Human Services should encourage the development of distributed data research networks and expand the availability of departmental health data resources for translation into accessible knowledge that can be used for improving care, lowering costs, and enhancing public health.

- Research funding agencies and organizations, such as the National Institutes of Health, the Agency for Healthcare Research and Quality, the Veterans Health Administration, the Department of Defense, and the Patient-Centered Outcomes Research Institute, should promote research designs and methods that draw naturally on existing care processes and that also support ongoing quality-improvement efforts.

Legal and regulatory restrictions can serve as a barrier to real-time learning and improvement. Results of previous surveys of health researchers suggest that the current formulation of the HIPAA privacy rule has increased the cost and time needed to conduct research, that different institutional interpretations of HIPAA and associated regulations have impeded collaboration, and that the rule has made it difficult to recruit subjects (Association of Academic Health Centers, 2008; Goss et al., 2009; Greene et al., 2006; IOM, 2009a; Ness, 2007). While privacy is an important societal and personal value, the current formulation of the privacy rule not only offers limited protection to patients but also may impede the broader health research enterprise (IOM, 2009a). Recommendation 2 outlines actions needed to address this challenge, drawing on the IOM report Beyond the HIPAA Privacy Rule (IOM, 2009a).

Recommendation 2: The Data Utility

Streamline and revise research regulations to improve care, promote the capture of clinical data, and generate knowledge. Regulatory agencies should clarify and improve regulations governing the collection and use of clinical data to ensure both patient privacy and the seamless use of clinical data for better care coordination and management, improved care, and knowledge enhancement.

Strategies for progress toward this goal:

- The Secretary of Health and Human Services should accelerate and expand the review of the Health Insurance Portability and Accountability Act (HIPAA) and institutional review board (IRB) policies with respect to actual or perceived regulatory impediments to the protected use of clinical data, and clarify regulations and their interpretation to support the use of clinical data as a resource for advancing science and care improvement.

- Patient and consumer groups, clinicians, professional specialty societies, health care delivery organizations, voluntary organizations, researchers, and grantmakers should develop strategies and outreach to improve understanding of the benefits and importance of accelerating the use of clinical data to improve care and health outcomes.

Further, new knowledge can be poorly integrated into regular clinical care, highlighting the need for new approaches to deliver the right information to the point of care. To ensure the availability of clinical knowledge when and where needed, Recommendation 3 outlines actions that can be taken to disseminate clinical knowledge broadly and ensure its widespread application.

Recommendation 3: Clinical Decision Support

Accelerate integration of the best clinical knowledge into care decisions. Decision support tools and knowledge management systems should be routine features of health care delivery to ensure that decisions made by clinicians and patients are informed by current best evidence.

Strategies for progress toward this goal:

- Clinicians and health care organizations should adopt tools that deliver reliable, current clinical knowledge to the point of care, and organizations should adopt incentives that encourage the use of these tools.

- Research organizations, advocacy organizations, professional specialty societies, and care delivery organizations should facilitate the development, accessibility, and use of evidence-based and harmonized clinical practice guidelines.

- Public and private payers should promote the adoption of decision support tools, knowledge management systems, and evidence-based clinical practice guidelines by structuring payment and contracting policies to reward effective, evidence-based care that improves patient health.

- Health professional education programs should teach new methods for accessing, managing, and applying evidence; engaging in lifelong learning; understanding human behavior and social science; and delivering safe care in an interdisciplinary environment.

- Research funding agencies and organizations should promote research into the barriers and systematic challenges to the dissemination and use of evidence at the point of care, and support research to develop strategies and methods that can improve the usefulness and accessibility of patient outcome data and scientific evidence for clinicians and patients.