This chapter focuses on the actions that health care organizations can take to design a work system that supports the diagnostic process and reduces diagnostic errors (see Figure 6-1). The term “health care organization” is meant to encompass all settings of care in which the diagnostic process occurs, such as integrated care delivery settings, hospitals, clinician practices, retail clinics, and long-term care settings, such as nursing and rehabilitation centers. To improve diagnostic performance, health care organizations need to engage in organizational change and participate in continuous learning. The committee recognizes that health care organizations may differ in the challenges they face related to diagnosis and in their capacity to improve diagnostic performance. They will need to tailor the committee’s recommendations to their resources and challenges with diagnosis.

The first section of this chapter describes how organizational learning principles can improve the diagnostic process by providing feedback to health care professionals about their diagnostic performance and by better characterizing the occurrence of and response to diagnostic errors. The second section highlights organizational characteristics—in particular, culture and leadership—that enable organizational change to improve the work system in which the diagnostic process occurs. The third section discusses actions that health care organizations can take to improve the work system and support the diagnostic process. For example, the physical environment (i.e., the design, layout, and ambient conditions) can affect diagnosis and is often under the control of health care organizations.

ORGANIZATIONAL LEARNING TO IMPROVE DIAGNOSIS

In any health care organization, prioritizing continuous learning is key to improving clinical practice (Davies and Nutley, 2000; IOM, 2013; WHO, 2006). The Institute of Medicine (IOM) report Best Care at Lower Cost concluded that health care organizations focused on continuous learning are able to more “consistently deliver reliable performance, and constantly improve, systematically and seamlessly, with each care experience and transition” than systems that do not practice continuing learning (IOM, 2013, p. 1). These learning health care organizations ensure that individual health care professionals and health care teams learn from their successes and mistakes and also use this information to support improved performance and patient outcomes (Davies and Nutley, 2000). Box 6-1 describes the characteristics of a continuously learning health care organization.

A focus on continuous learning in the diagnostic process has the potential to improve diagnosis and reduce diagnostic errors (Dixon-Woods et al., 2011; Gandhi, 2014; Grumbach et al., 2014; IOM, 2013; Trowbridge, 2014). To support continuous learning in the diagnostic process, health care organizations need to establish approaches to identify diagnostic errors and near misses and to implement feedback mechanisms on diagnostic performance. The challenges related to identifying and learning from diagnostic errors and near misses, as well as actions health care organizations and health care professional societies can take to achieve this goal, are discussed below.

BOX 6-1

Characteristics of a Continuously Learning Health Care Organization

Science and Informatics

Real-time access to knowledge—A learning health care organization continuously and reliably captures, curates, and delivers the best available evidence to guide, support, tailor, and improve clinical decision making and the safety and quality of care.

Digital capture of the care experience—A learning health care organization captures the care experience on digital platforms for the real-time generation and application of knowledge for care improvement.

Patient–Clinician Partnerships

Engaged, empowered patients—A learning health care organization is anchored on patient needs and perspectives and promotes the inclusion of patients, families, and other caregivers as vital members of the continuously learning care team.

Incentives

Incentives aligned for value—In a learning health care organization, incentives are actively aligned to encourage continuous improvement, identify and reduce waste, and reward high-value care.

Full transparency—A learning health care organization systematically monitors the safety, quality, processes, prices, costs, and outcomes of care and makes information available for care improvement and informed choices and decision making by clinicians, patients, and their families.

Culture

Leadership-instilled culture of learning—A learning health care organization is stewarded by leadership committed to a culture of teamwork, collaboration, and adaptability in support of continuous learning as a core aim.

Supportive system competencies—In a learning health care organization, complex care operations and processes are constantly refined through ongoing team training and skill building, systems analysis and information development, and the creation of feedback loops for continuous learning and system improvement.

SOURCE: IOM, 2013.

Identifying, Learning from, and Reducing Diagnostic Errors and Near Misses

Diagnostic errors have long been an understudied and underappreciated quality challenge in health care organizations (Graber, 2005; Shenvi and El-Kareh, 2015; Wachter, 2010). In a presentation to the committee, Paul Epner reported that the Society to Improve Diagnosis in Medicine “know[s] of no effort initiated in any health system to routinely and effectively assess diagnostic performance” (2014; see also Graber et al., 2014). The paucity of attention on diagnostic errors in clinical practice has been attributed to a number of factors. Two major contributors are the lack of effective measurement of diagnostic error and the difficulty in detecting these errors in clinical practice (Berenson et al., 2014; Graber et al., 2012b; Singh and Sittig, 2015). Additional factors may include a health care organization’s competing priorities in patient safety and quality improvement, the perception that diagnostic errors are inevitable or that they are too difficult to address, and the need for financial resources to address this problem (Croskerry, 2003, 2012; Graber et al., 2005; Schiff et al., 2005; Singh and Sittig, 2015). These challenges make it difficult to identify, analyze, and learn from diagnostic errors in clinical practice (Graber, 2005; Graber et al., 2014; Henriksen, 2014; Singh and Sittig, 2015).

Compared to diagnostic errors, other types of medical errors—including medication errors, surgical errors, and health care–acquired infections—have historically received more attention within health care organizations (Graber et al., 2014; Kanter, 2014; Singh, 2014; Trowbridge, 2014). This is partly attributable to the lack of focus on diagnostic errors within national patient safety and quality improvement efforts. For example, the Agency for Healthcare Research and Quality’s (AHRQ’s) Patient Safety Indicators and The Joint Commission’s list of specific sentinel events do not focus on diagnostic errors (AHRQ, 2015b; The Joint Commission, 2015a; Schiff et al., 2005). The National Quality Forum’s Serious Reportable Events list includes only one event closely tied to diagnostic error, which is “patient death or serious injury resulting from a failure to follow up or communicate laboratory, pathology, or radiology test results” (NQF, 2011). The neglect of diagnostic performance measures for accountability purposes means that hospitals today could meet standards for high-quality care and be rewarded through public reporting and pay-for-performance initiatives even if they have major challenges with diagnostic accuracy (Wachter, 2010).

While current research estimates indicate that diagnostic errors are a common occurrence, health care organizations “do not have the tools and strategies to measure diagnostic safety and most have not integrated diagnostic error into their existing patient safety programmes” (Singh and Sittig, 2015, p. 103). Identifying diagnostic errors within clinical practice is critical to improving diagnosis for patients, but measurement has become an “unavoidable obstacle to progress” (Singh, 2013, p. 789). The lack of comprehensive information on diagnostic errors within clinical practice perpetuates the belief that these errors are uncommon or unavoidable and impedes progress on reducing diagnostic errors. Improving diagnosis will likely require a concerted effort among all health care organizations and across all settings of care to better identify diagnostic errors and near misses, learn from them, and, ultimately, take steps to improve the diagnostic process. Thus, the committee recommends that health care organizations monitor the diagnostic process and identify, learn from, and reduce diagnostic errors and near misses as a component of their research, quality improvement, and patient safety programs. In addition to identifying near misses and errors, health care organizations can also benefit from evaluating factors that are contributing to improved diagnostic performance.

Given the nascent field of measurement of the diagnostic process, the committee concluded that bottom-up experimentation will be necessary to develop approaches for monitoring the diagnostic process and identifying diagnostic errors and near misses. It is unlikely that one specific method will be successful at identifying all diagnostic errors and near misses; some approaches may be more appropriate than others for specific organizational settings, types of diagnostic errors, or for identifying specific causes. It may be necessary for health care organizations to use a variety of methods in order to have a better sense of their diagnostic performance (Shojania, 2010). As further information is collected regarding the validity and feasibility of specific methods for monitoring the diagnostic process and identifying diagnostic errors and near misses, this information will need to be disseminated in order to inform efforts within other health care organizations. The dissemination of this information will be especially important for health care organizations that do not have the financial and human resources available to pilot-test some of the potential methods for the identification of diagnostic errors and near misses. In some cases, small group practices may find it useful to pool their resources as they explore alternative approaches to identify errors and near misses and monitor the diagnostic process.

As discussed in Chapter 3, there are a number of methods being employed by researchers to describe the incidence and nature of diagnostic errors, including postmortem examinations, medical record reviews, health insurance claims analysis, medical malpractice claims analysis, second reviews of diagnostic testing, and surveys of patients and clinicians. Some of these methods may be better suited than others for identifying diagnostic errors and near misses in clinical practice. Medical record reviews, medical malpractice claims analysis, health insurance claims analysis, and second reviews in diagnostic testing may be more pragmatic approaches for health care organizations because they leverage readily available data sources. Patient surveys may also be an important mechanism for health care organizations to consider. It is important to note that many of the methods described below are just beginning to be applied to diagnostic error detection in clinical practice; very few are validated or available for widespread use in clinical practice (Bhise and Singh, 2015; Graber, 2013; Singh and Sittig, 2015).

Medical record reviews can be a useful method to identify diagnostic errors and near misses because health care organizations can leverage their electronic health records (EHRs) for these analyses. The committee’s recommendation on health information technology (health IT) highlights the need for EHRs to include user-friendly platforms that enable health care organizations to measure diagnostic errors (see Chapter 5). Trigger tools, or algorithms that scan EHRs for potential diagnostic errors, can be used to identify patients who have a higher likelihood of experiencing a diagnostic error. For example, they can identify patients who return for inpatient hospitalization within 2 weeks of a primary care visit or patients who require follow-up after abnormal diagnostic testing results. Review of their EHRs can evaluate whether a diagnostic error occurred, using explicit or implicit criteria. For diagnostic errors, these tools have been piloted primarily in outpatient settings, but they are also being considered in the inpatient setting (Murphy et al., 2014; Shenvi and El-Kareh, 2015; Singh et al., 2012a). EHR surveillance, such as Kaiser Permanente’s SureNet System,1 is another opportunity to detect patients at risk of experiencing a diagnostic error (Danforth et al., 2014; Graber et al., 2014; HIMSS Analytics, 2015; Kanter, 2014). The SureNet System identifies patients who may have inadvertent lapses in care (such as a patient with iron deficiency anemia who has not had a colonoscopy to rule out colon cancer) and ensures that follow-up occurs by proactively reaching out to affected patients and members of their care team.
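
The first trigger described above (an inpatient hospitalization within 2 weeks of a primary care visit) can be illustrated with a minimal sketch. The record layout, the encounter labels, and the function name `flag_unplanned_returns` are hypothetical and are not drawn from any EHR product or from the cited studies; a flagged record is only a candidate for manual review, not a confirmed diagnostic error.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Encounter:
    patient_id: str
    kind: str   # hypothetical labels: "primary_care" or "inpatient_admission"
    day: date


def flag_unplanned_returns(encounters, window_days=14):
    """Return sorted IDs of patients admitted within window_days after a primary care visit."""
    window = timedelta(days=window_days)

    # Collect primary care visit dates per patient.
    visits = {}
    for e in encounters:
        if e.kind == "primary_care":
            visits.setdefault(e.patient_id, []).append(e.day)

    # Flag a patient if any admission falls inside the window after any visit.
    flagged = set()
    for e in encounters:
        if e.kind != "inpatient_admission":
            continue
        for visit_day in visits.get(e.patient_id, []):
            if timedelta(0) <= e.day - visit_day <= window:
                flagged.add(e.patient_id)
                break
    return sorted(flagged)


# Illustrative records only; not real patient data.
records = [
    Encounter("A", "primary_care", date(2015, 3, 2)),
    Encounter("A", "inpatient_admission", date(2015, 3, 10)),  # 8 days later
    Encounter("B", "primary_care", date(2015, 3, 2)),
    Encounter("B", "inpatient_admission", date(2015, 4, 20)),  # 49 days later
    Encounter("C", "inpatient_admission", date(2015, 3, 5)),   # no prior visit
]
print(flag_unplanned_returns(records))  # prints ['A']
```

In this sketch only patient A is flagged. The 14-day window is a parameter because an organization would likely need to tune it to its own setting and review capacity.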

______________

1 Kaiser Permanente’s SureNet System was previously known as the SafetyNet System.

Medical malpractice claims analysis is another approach to identifying diagnostic errors and near misses in clinical practice. Chapter 7 discusses the importance of leveraging the expertise of professional liability insurers in efforts to improve diagnosis and reduce diagnostic errors and near misses. Health care professionals and organizations can collaborate with professional liability insurers in efforts to identify diagnostic errors and near misses in clinical practice; because of the richness of the data source, this method could also be helpful in identifying the reasons why diagnostic errors occur. However, there are limitations with malpractice claims data because these claims may not be representative; few people who experience adverse events file claims, and the ones who do are more likely to have experienced serious harm.

Although there are few examples of using health insurance claims data to identify diagnostic errors and near misses, this may be a useful method, especially if it is combined with other approaches (e.g., if it is linked to medical records or diagnostic testing results). One of the advantages of this data source is that it makes it possible to assess the downstream clinical consequences and costs of errors. It also enables comparisons across different settings, types of clinicians, and days of the week (which can be important because there may be some days when staffing is low and the volume of patients unexpectedly high).

Second reviews of diagnostic testing results could also help health care organizations identify diagnostic errors and near misses related to the interpretive aspect of the diagnostic testing processes. A recent guideline recommended that health care organizations use second reviews in anatomic pathology to identify disagreements and potential interpretive errors (Nakhleh et al., 2015). The guideline notes that organizations will likely need to tailor the second review process that they employ and the number of reviews they conduct to their specific needs and resources (Nakhleh et al., 2015). Some organizations include anatomic pathology second reviews as part of their quality assurance and improvement efforts. The Veterans Health Administration requires that “[a]t least 10 percent of the cytotechnologist’s gynecologic cases that have been interpreted to be negative are routinely rescreened, and are diagnosed and documented as being negative by a qualified pathologist” (VHA, 2008, p. 32). Though the infrastructure for peer review in radiology is still evolving, there are now frameworks specific to radiology for identifying and learning from diagnostic errors (Allen and Thorwarth, 2014; Lee et al., 2013; Provenzale and Kranz, 2011). In addition to the use of peer review in identifying errors, there is an increasing emphasis on using peer review tools to promote peer learning and improve practice quality (Allen and Thorwarth, 2014; Brook et al., 2015; Fotenos and Nagy, 2012; Iyer et al., 2013; Kruskal et al., 2008). Organizations can participate in the American College of Radiology’s RADPEER™ program, which includes a second review process that can help identify diagnostic performance issues related to medical image interpretation (ACR, 2015).
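
A second-review program of the kind described above needs some rule for choosing which cases are reviewed again. The following minimal sketch assumes simple random selection of at least a fixed percentage of negative cases; the function name `select_for_rescreen`, the case identifiers, and the choice of random sampling are illustrative assumptions and do not describe the Veterans Health Administration's or any other organization's actual procedure.

```python
import random


def select_for_rescreen(negative_case_ids, percent=10, seed=None):
    """Randomly pick at least `percent` percent of the negative cases for a second review."""
    cases = list(negative_case_ids)
    if not cases:
        return []
    # Integer ceiling so that "at least 10 percent" always rounds up.
    n = (len(cases) * percent + 99) // 100
    rng = random.Random(seed)  # a seed makes the draw reproducible for audit
    return sorted(rng.sample(cases, n))


# Hypothetical case identifiers.
cases = [f"case-{i:03d}" for i in range(25)]
picked = select_for_rescreen(cases, seed=1)
print(len(picked))  # prints 3, since 10 percent of 25 rounds up to 3
```

Rounding up rather than down keeps small batches from falling below the target percentage; as the guideline cited above notes, organizations will likely need to tailor the number of reviews to their own needs and resources.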

Patient surveys represent another opportunity. The use of such surveys is in line with the committee’s recommendation to create environments in which patients and their families feel comfortable sharing their feedback and concerns about diagnostic errors and near misses (see Chapter 4). Eliciting this information via surveys may be helpful in identifying errors and near misses, and it can also provide useful feedback to the organization and health care professionals (see section below on feedback). For example, a recent patient-initiated voluntary survey of adverse events found that harm was commonly associated with reported diagnostic errors and the survey identified actions that patients believed could improve care (Southwick et al., 2015).

In addition to identifying diagnostic errors that have already occurred, some methods used to monitor the diagnostic process and identify diagnostic errors can be used for error recovery. Error recovery is the process of identifying failures early in the diagnostic process so that actions can be taken to reduce or avert negative effects resulting from the failure (IOM, 2000). Methods that identify failures in the diagnostic process or catch diagnostic errors before significant harm is incurred could make it possible to avoid diagnostic errors or to intervene early enough to avert significant harm. By scanning medical records to identify lapses in care, the SureNet System supports error recovery by identifying patients at risk of experiencing a diagnostic error (Danforth et al., 2014; HIMSS Analytics, 2015; Kanter, 2014) (see also section on a supportive work system).

Beyond identifying diagnostic errors and near misses, organizational learning aimed at improving diagnostic performance and reducing diagnostic errors will also require a focus on understanding where in the diagnostic process the failures occur, the work system factors that contribute to their occurrence, what the outcomes were, and how these failures may be prevented or mitigated (see Chapter 3). For example, the committee’s conceptual model of the diagnostic process describes the steps within the process that are vulnerable to failure: engagement, information gathering, integration, interpretation, establishing a diagnosis, and communication of the diagnosis. If a health care organization is evaluating where in the diagnostic testing process a failure occurs, the brain-to-brain loop model may be helpful in conducting these analyses, in particular by articulating the five phases of testing: pre-pre-analytical, pre-analytical, analytical, post-analytical, and post-post-analytical (Plebani and Lippi, 2011; Plebani et al., 2011).

It is also important to determine the work system factors that contribute to diagnostic errors and near misses. Some of the data sources and methods mentioned above, such as malpractice claims analyses and medical record reviews, can provide valuable insights into the causes and outcomes of diagnostic errors. Health care organizations can also employ formal error analysis and other risk assessment methods to understand the work system factors that contribute to diagnostic errors and near misses. Relevant analytical methods include root cause analysis, cognitive autopsies, and morbidity and mortality (M&M) conferences (Gandhi, 2014; Graber et al., 2014; Reilly et al., 2014). Root cause analysis is a problem-solving method that attempts to identify the factors that contributed to an error; these analyses take a systems approach by trying to identify all of the underlying factors rather than focusing exclusively on the health care professionals involved (AHRQ, 2014b). Maine Medical Center recently conducted a demonstration program to inform clinicians about the root causes of diagnostic errors. They created a novel fishbone root cause analysis procedure, which visually represents the multiple cause and effect relationships responsible for an error (Trowbridge, 2014). Organizations and individuals can also take advantage of continuing education opportunities focused on using root cause analysis to study diagnostic errors in order to improve their ability to identify and understand diagnostic errors (Reilly et al., 2015). The cognitive autopsy is a variation of a root cause analysis that involves a clinician reflecting on the reasoning process that led to the error in order to identify causally relevant shortcomings in reasoning or decision making (Croskerry, 2005). M&M conferences bring a diverse group of health care professionals together to learn from errors (AHRQ, 2008). These can be useful, especially if they are framed from a patient safety perspective rather than focusing on attributing blame. Other analytical methods used in human factors and ergonomics research could also be applied in health care organizational settings to further elucidate the work system components that contribute to diagnostic errors (see Chapter 3) (Bisantz and Roth, 2007; Carayon et al., 2014; Kirwan and Ainsworth, 1992; Rogers et al., 2012; Roth, 2008; Salas et al., 1995).

As health care organizations develop a better understanding of diagnostic errors within their organizations, they can begin to implement and evaluate interventions to prevent or mitigate these errors as part of their patient safety, research, and quality improvement efforts. To date, there have been relatively few studies that have evaluated the impact of interventions on improving diagnosis and reducing diagnostic errors and near misses; three recent systematic reviews summarized current interventions (Graber et al., 2012a; McDonald et al., 2013; Singh et al., 2012b). These reviews found that the measures used to evaluate the interventions were quite heterogeneous, and there were concerns about the generalizability of some of the findings to clinical practice. Health care organizations can take into consideration some of the methodological challenges identified in these reviews in order to ensure that their evaluations generate much-needed evidence to identify successful interventions.

The Medicare conditions of participation and accreditation organizations can be leveraged to ensure that health care organizations have appropriate programs in place to identify diagnostic errors and near misses, learn from them, and improve the diagnostic process. The Medicare conditions of participation are requirements that health care organizations must meet in order to receive payment (CMS, 2015a). State survey agencies and accreditation organizations (such as The Joint Commission, the Healthcare Facilities Accreditation Program, the Accreditation Commission for Health Care, the College of American Pathologists, and Det Norske Veritas-Germanischer Lloyd) determine whether organizations are in compliance with the Medicare conditions of participation through surveys and site visits. Some of these organizations accredit the broad range of health care organizations, while others confine their scope to a single type of health care organization. Other accreditation bodies, such as the National Committee for Quality Assurance (NCQA), provide administrative and clinical accreditation and certification of health plans and provider organizations. For example, NCQA offers accountable care organization (ACO) accreditation, which evaluates an organization’s capacity to provide the coordinated, high-quality care and performance-reporting that is required of ACOs (NCQA, 2013). Accreditation processes, federal oversight, and quality improvement efforts specific to diagnostic testing can also be used to ensure quality in the diagnostic process (see Chapter 2). By leveraging the Medicare conditions of participation requirements and accreditation processes, it may be possible to use the existing oversight programs that health care organizations have in place to monitor the diagnostic process and to ensure that the organizations are identifying diagnostic errors and near misses, learning from them, and making timely efforts to improve diagnosis. Thus, the committee recommends that accreditation organizations and the Medicare conditions of participation should require that health care organizations have programs in place to monitor the diagnostic process and identify, learn from, and reduce diagnostic errors and near misses in a timely fashion. As more is learned about successful program approaches, accreditation organizations and the Medicare conditions of participation should incorporate these proven approaches into updates of these requirements.

Postmortem Examinations

The committee recognized that many approaches to identifying diagnostic errors are important, but the committee thought that the postmortem examination (also referred to as an autopsy) warranted additional committee focus because of its role in understanding the epidemiology of diagnostic error. Postmortem examinations are typically performed to determine cause of death and can reveal discrepancies between premortem and postmortem clinical findings (see Chapter 3). However, the number of postmortem examinations performed in the United States has declined substantially since the 1960s (Hill and Anderson, 1988; Lundberg, 1998; MedPAC, 1999). One of the contributors to the decline is that in 1971 The Joint Commission eliminated the requirement that hospitals conduct these examinations on a certain percentage of deaths in their facility (20 percent in community hospitals and 25 percent in teaching facilities) in order to receive accreditation (Allen, 2011; CDC, 2001). Cost is another factor; according to a survey of medical institutions in eight states, researchers in 2006 estimated that the mean cost of performing a postmortem examination was $1,275 (Nemetz et al., 2006). Insurers do not directly pay for postmortem examinations, as they typically limit payment to procedures for living patients. Medicare bundles payment for postmortem examinations into its payment for quality improvement activities, which may also disincentivize their performance (Allen, 2011).

Given the steep decline in postmortem examinations, there is interest in increasing their use. For example, Hill and Anderson (1988) recommended that half of all deaths in hospitals, nursing homes, and other accredited medical facilities receive a postmortem examination. Lundberg (1998) recommended reinstating the mandate that a percentage of hospital deaths undergo postmortem examination, either to meet Medicare conditions of participation or accreditation standards. The Medicare Payment Advisory Commission proposed a number of recommendations designed to increase the postmortem examination rate and evaluate their potential for use in “quality improvement and error reduction initiatives” (MedPAC, 1999, p. xviii).

The committee concluded that a new approach to increasing the use of postmortem examinations is warranted. The committee weighed the relative merits of increasing the number of postmortem examinations conducted throughout the United States versus a more targeted approach. The requirements for postmortem examinations in the current Medicare conditions of participation state that postmortem examinations should be performed when there is an unusual death; in particular, these requirements state that “medical staff should attempt to secure an autopsy [postmortem examination] in all cases of unusual death and of medical–legal and educational interest” (CMS, 2015b, p. 210). In these circumstances, the committee concluded that health care organizations should continue to perform these postmortem examinations. In addition, the committee concluded that it is appropriate to have a limited number of highly qualified health care systems participate in conducting routine postmortem exams that produce research-quality information about the incidence and nature of diagnostic errors. Thus, the committee recommends that the Department of Health and Human Services (HHS) should provide funding for a designated subset of health care systems to conduct routine postmortem examinations on a representative sample of patient deaths. To accomplish this, these health care systems need to reflect a broad array of different settings of care and could receive funding to perform routine postmortem examinations in a representative sample of patient deaths. A competitive grant process could be used to identify these systems.

In recognition that not all patients’ next of kin will consent to the performance of a postmortem examination, these systems can characterize the frequency with which the request for a postmortem examination is refused and thus better describe the risk of response bias in results. This approach will likely provide better epidemiologic data and it represents an advance over current selection methods for performing postmortem examinations, because clinicians do not seem to be able to predict cases in which diagnostic errors will be found (Shojania et al., 2002, 2003). The data collected from health care systems that are highly qualified to conduct routine postmortem examinations may not be representative of all systems of care. However, the committee concluded that this is a more feasible approach, given the financial and workforce demands of conducting postmortem examinations.
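
Characterizing refusals can be as simple as tracking, for each setting of care, the share of postmortem requests that next of kin decline. The sketch below assumes each request is recorded as a (care setting, consented) pair; the function name `refusal_rates`, the setting labels, and the counts are hypothetical and are not data from any participating system.

```python
from collections import Counter


def refusal_rates(requests):
    """Return the fraction of postmortem requests refused, keyed by care setting.

    `requests` is an iterable of (care_setting, consented) pairs, one per request.
    """
    asked = Counter()
    refused = Counter()
    for setting, consented in requests:
        asked[setting] += 1
        if not consented:
            refused[setting] += 1
    return {setting: refused[setting] / asked[setting] for setting in asked}


# Illustrative requests only; not real data.
requests = [
    ("hospital", True),
    ("hospital", False),
    ("hospital", True),
    ("hospital", True),
    ("nursing_home", False),
    ("nursing_home", False),
]
print(refusal_rates(requests))  # prints {'hospital': 0.25, 'nursing_home': 1.0}
```

Large differences in refusal rates between settings, as in this made-up example, would suggest that the examined deaths may not represent all deaths in the sample, which is the response bias the paragraph above refers to.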

Findings from the health care systems that perform routine postmortem examinations can then be disseminated to the broader health care community. Participating health care systems could be required to produce annual reports on the epidemiology of diagnostic errors found by postmortem exams, the value of postmortem examinations as a tool for identifying and reducing such errors, and, if relevant, the role and value of postmortem examinations in quality improvement efforts.

These health care systems could also investigate how new, minimally invasive postmortem approaches compare with traditional full body postmortem examinations. Less invasive approaches include the use of medical imaging, laparoscopy, biopsy, histology, and cytology. Given the advances in molecular diagnostics and advanced imaging techniques, these new approaches could provide useful insights into the incidence of diagnostic error and may be more acceptable options for patients’ next of kin. For example, instead of conducting a full body postmortem exam, pathologists could biopsy tissue samples from an organ where disease is suspected and conduct molecular analysis (van der Linden et al., 2014). Some studies suggest that minimally invasive postmortem examinations (including a combination of medical imaging with other minimally invasive postmortem investigations) have been found to have accuracy similar to that of conventional postmortem examinations in fetuses, newborns, and infants (Lavanya et al., 2008; Pichereau et al., 2015; Ruegger et al., 2014; Thayyil et al., 2013; Weustink et al., 2009). Postmortem imaging in adults has shown less promise for replacing postmortem exams, but these techniques continue to be actively explored (O’Donnell and Woodford, 2008; Roberts et al., 2012). A concern with minimally invasive postmortem imaging is that it may be subject to similar limitations that affect imaging in living patients, and may not detect premortem and postmortem discrepancies. Further understanding the benefits and limitations of minimally invasive approaches may provide critical information moving forward. If successful approaches to minimally invasive postmortem examinations are found, they could play a role in reestablishing the practice of routine postmortem investigation in medicine (Saldiva, 2014).

Improving Feedback

Feedback is a critical mechanism that health care organizations can use to support continuous learning in the diagnostic process. The Best Care at Lower Cost report called for the creation of feedback loops that support continuous learning and system improvement (IOM, 2013). As it relates to diagnosis, feedback entails informing an individual, team, or organization about its diagnostic performance, including its successes, near misses, and diagnostic errors (Black, 2011; Croskerry, 2000; Gandhi, 2014; Gandhi et al., 2005; Schiff, 2008, 2014; Trowbridge, 2014). The committee received substantial input indicating that there are limited opportunities for feedback on diagnostic performance (Dhaliwal, 2014; Henriksen, 2014; Schiff, 2014; Singh, 2014; Trowbridge, 2014). Often there are no systems in place to tell clinicians whether they made an accurate, timely diagnosis or whether their patients experienced a diagnostic error. The failure to follow up with patients about their diagnosis and treatment, in both the near term and the long term, is a major gap in improving diagnosis.

The committee concluded that improving diagnostic performance requires feedback at all levels of health care. Feedback can help clinicians assess how well they are performing in the diagnostic process, correct overconfidence, identify when remediation efforts are needed, and reduce the likelihood of repeated mistakes (Berner and Graber, 2008; Croskerry and Norman, 2008). Feedback on diagnostic performance can also provide opportunities for health care organizational learning and improvements to the work system (Plaza et al., 2011). To improve the opportunities for feedback, the committee recommends that health care organizations should implement procedures and practices to provide systematic feedback on diagnostic performance to individual health care professionals, care teams, and clinical and organizational leaders.

Box 6-2 identifies some characteristics for effective feedback interventions (Hysong et al., 2006; Ivers et al., 2014). Feedback interventions in high-performing organizations have been found to share a number of characteristics, including being actionable, timely, individualized, and nonpunitive; a nonpunitive culture helps foster an environment in which mistakes can be viewed as opportunities for growth and improvement (Hysong et al., 2006). Other studies have found that feedback is likely to have the largest effect when baseline performance is low and feedback occurs regularly (Ivers et al., 2012; Lopez-Campos et al., 2014). Tailoring the feedback approach to the individual recipient and choosing an appropriate source of feedback (e.g., supervisor versus a peer as the provider of feedback) are important variables in determining how well recipients will respond (Ilgen et al., 1979).

BOX 6-2

Characteristics of Effective Feedback Interventions

Feedback

- Is nonpunitive

- Is actionable

- Is timely

- Is individualized

- Comes from the appropriate individual (i.e., a trusted source)

- Targets behavior that can be affected by feedback

- Is provided to recipients who are responsible for improvement

- Includes a description of the desired performance/behavior

SOURCES: Hysong et al., 2006; Ivers et al., 2014.

Health care organizations need to be aware of the factors that can impede the provision of feedback, such as the fragmentation of the health care system, clinicians’ resistance to critical feedback, and the lack of time for follow-up (Schiff, 2008). In addition, improving feedback will likely require health care organizations to invest additional time and resources in developing systematic feedback mechanisms.

There are many opportunities to provide feedback in clinical practice. Methods to monitor the diagnostic process and identify diagnostic errors and near misses can be leveraged as mechanisms to provide feedback. Feedback opportunities include disseminating postmortem examination results to clinicians who were involved in the patient’s care; sharing the results of patient surveys, medical record reviews, or information gained through follow-up with the health care professionals; using patient-actors or simulated care scenarios to assess and inform health care professionals’ diagnostic performance; and others (Schwartz and Weiner, 2014; Schwartz et al., 2012; Southwick et al., 2015; Weiner et al., 2010). As discussed in Chapter 4, patients and their families have unique insights into the diagnostic process and the occurrence of diagnostic error; therefore, following up with patients and their families about their experiences and outcomes will be an important source of feedback (Schiff, 2008). AHRQ recently proposed recommendations for the development of consumer and patient safety reporting systems, which organizations can use for feedback and learning purposes (AHRQ, 2011). M&M conferences, root cause analyses, departmental meetings, and leadership WalkRounds² provide additional opportunities to provide feedback to health care professionals, care teams, and leadership about diagnostic performance.

Peer review processes, including second reviews of anatomic pathology specimens and medical images, can also be used for feedback, and there is an increasing emphasis on using peer-review tools to promote peer learning and improve practice quality (Allen and Thorwarth, 2014; Brook et al., 2015; Fotenos and Nagy, 2012; Iyer et al., 2013; Kruskal et al., 2008). For example, RADPEER™ supports anonymous peer review of previous image interpretations by integrating previous images into the current workflow, which makes the review process nondisruptive. Summary statistics of image reviews are made available to participating groups and clinicians to improve performance (ACR, 2015). As of 2013, 16,450 clinicians in 1,127 groups were enrolled in the RADPEER™ program; 1,218 clinicians had used or were using the program as part of the American Board of Radiology’s Practice Quality Improvement project for maintenance of certification (ACR, 2013). Performance monitoring programs designed to satisfy the requirements of the Mammography Quality Standards Act have been used to improve feedback on mammography diagnostic performance to radiologists and medical imaging facilities (Allen and Thorwarth, 2014).

Leveraging Health Care Professional Societies’ Efforts to Improve Diagnosis

Health care organizations can leverage external input from health care professional societies to inform the organizations’ efforts to monitor and improve the diagnostic process. For example, health care professional societies and their members can help develop and prioritize approaches to improve diagnosis specific to their specialties. By engaging health care professional societies, efforts to improve diagnosis can build on professionalism and intrinsic motivation. Thus, the committee recommends that health care professional societies should identify opportunities to improve accurate and timely diagnoses and reduce diagnostic errors in their specialties.

______________

2 Leadership WalkRounds are a tool to connect leadership with frontline clinicians and health care professionals. They consist of leadership (senior executives, vice presidents, etc.) making announced or unannounced visits to different areas of the organization to engage with frontline employees (IHI, 2004).

Such an effort could be modeled on the Choosing Wisely initiative, which was initiated by the American Board of Internal Medicine Foundation to encourage patient and health care professional communication as a way to ensure high-quality, high-value care. The initiative invited health care professional societies to each develop a list of five services (i.e., tests, treatments, and procedures) that are commonly used in practice but may be unnecessary or not supported by the evidence as improving patient care. These lists were made publicly available as a way of encouraging discussions about appropriate care between patients and health care professionals. Choosing Wisely received national media attention and engaged more than 50 health care professional societies (Choosing Wisely, 2015). A major lesson from the Choosing Wisely initiative is the importance of beginning with a small group of founding organizations and then expanding membership. Engaging consumer groups as the program progressed was also an important component of the initiative. Another factor in the initiative’s success was that it allowed flexibility within limits; participating health care professional societies and boards were given flexibility in identifying their “Top 5” lists, but items on each list had to be evidence-based and within the purview of that particular society.

Early efforts on prioritization could focus on identifying the most common diagnostic errors and “don’t miss” health conditions, such as those that present the greatest likelihood for diagnostic errors and harm (Newman-Toker et al., 2013). For example, stroke, acute myocardial infarction, or pulmonary embolism may be important areas of focus in the emergency department setting while cancer is a frequently missed diagnosis in the ambulatory care setting (CRICO, 2014; Gandhi et al., 2006; Newman-Toker et al., 2013; Schiff et al., 2013). Efforts to improve diagnosis can include a focus on the quality and safety of diagnosis as well as increasing efficiency and value, such as identifying inappropriate diagnostic testing. Another approach may be for societies to identify “low-hanging fruit,” or targets that are easily remediable, as a high priority. Doing this may increase the likelihood of having early successes that can contribute to the long-term success of the effort (Kotter, 1995). Some groups may identify particular actions, tools, or approaches to reduce errors associated with a particular diagnosis within their specialties (such as checklists, second reviews, or decision support tools).

Each society could identify five high-priority areas to improve diagnosis. The groups would need to be given latitude in the identification of their targets, and, as was the case in Choosing Wisely, a primary constraint could be that there must be evidence indicating that adopting the recommendation would result in improving diagnosis or reducing diagnostic error. This could also be an opportunity for health care professional societies to collaborate, especially in cases of diagnoses that may be missed because of the inappropriate isolation of symptoms among specialties. For example, urologists, primary care clinicians, and neurologists could collaborate to make the diagnosis of normal pressure hydrocephalus (symptoms include frequent urination, a type of balance problem, and some memory loss) a “not to be missed” diagnosis (McDonald, 2014).

ORGANIZATIONAL CHARACTERISTICS FOR LEARNING AND IMPROVED DIAGNOSIS

Health care organizations influence the work system in which diagnosis occurs and also play a vital role in implementing changes to improve diagnosis and prevent diagnostic errors. The committee identified organizational culture and organizational leadership and management as key characteristics for ensuring continuous learning from and improvements to the diagnostic process. Health care organizations are responsible for developing a culture that promotes a safe place for all health care professionals to identify and learn from diagnostic errors. Organizational leaders and managers can facilitate this culture and set the priorities to achieve progress in improving diagnostic performance and reducing diagnostic errors. The committee drew on the broader quality and patient safety literature to inform this discussion; making connections to previous efforts to improve quality and safety is particularly important, given the limited focus on improving diagnosis in the patient safety and quality improvement literature. The committee concluded that many of the findings from the broader fields of quality improvement and patient safety have the potential to reduce diagnostic errors and improve diagnosis. However, this also represents a research need—further studies need to evaluate the generalizability of these findings to diagnosis (see Chapter 8).

Promoting a Culture for Improved Diagnosis

As discussed in Chapter 1, health care organizations can leverage four major cultural movements in health care (patient safety, professionalism, patient engagement, and collaboration) to create a local environment that supports continuous learning and improvement in diagnosis. Organizational culture refers to an organization’s norms of behavior and the shared basic assumptions and values that sustain those norms (Kotter, 2012; Schein, 2004). Though the cultures in most health care organizations exhibit common elements, they can differ considerably due to varying missions, values, and histories. Another factor that makes culture in health care organizations more complicated is the presence of subcultures (multiple distinct sets of norms and beliefs within a single organization) (Schein, 2004). Subcultures can reflect the individual attitudes of a nurse manager on a specific hospital floor or interprofessional differences that spring from the long history and social concerns of each health care profession (Hall, 2005). The existence of multiple cultures within a single health care organization may make it difficult to promote the shared values, goals, and approaches necessary for improving diagnosis.

Some aspects of culture may promote diagnostic accuracy, such as the intrinsic motivation of health care professionals to deliver high-quality care and the dedicated focus on quality and safety found in some health care organizations. Other aspects of culture may be detrimental to efforts to improve diagnosis, including the persistence of punitive, fault-based cultures; cultural taboos on providing peer feedback; hierarchical attitudes that are misaligned with team-based practice; and the acceptance of the inevitability of errors. Punitive cultures that emphasize discipline and punishment for those who make mistakes are not conducive to improved diagnostic performance; this type of culture thwarts the learning process because health care professionals fear the consequences of reporting errors (Hoffman and Kanzaria, 2014; Khatri et al., 2009; Larson, 2002; Schiff, 1994). Clinicians within these settings may also feel uneasy about providing feedback to colleagues about their diagnostic performance or the occurrence of diagnostic errors (Gallagher et al., 2013; Tucker and Edmondson, 2003).

There have been multiple calls for health care organizations to create nonpunitive cultures that encourage communication and learning (IOM, 2000, 2004, 2013). Despite these efforts, a punitive culture persists within some health care organizations (Chassin, 2013; Chassin and Loeb, 2013). For example, a recent survey found that less than half (44 percent) of health care professionals perceived that their organizations had a nonpunitive response to error (AHRQ, 2014a). The fault-based medical liability system and, in rare cases, clinicians who exhibit unprofessional or intimidating behavior also contribute to the persistence of punitive cultures (Chassin, 2013; Chassin and Loeb, 2013).

Cultures that continue to view diagnosis as a solitary clinician activity discount the important roles of teamwork and collaboration. A culture that validates the perspective that diagnostic errors are inevitable may also pose problems. When these cultural attitudes are pervasive within health care organizations, attempts to improve diagnosis are challenging (Berner and Graber, 2008).

Changing an organization’s culture is often difficult, and there are many opportunities throughout the change process where failure can occur (Kotter, 1995). Health care organizations may be hesitant to attempt culture change because of system inertia, concern that benefits due to the present culture could be lost, or uncertainty regarding which approaches to improving culture work best in a given organizational setting (Chassin, 2013; Coiera, 2011; Parmelli et al., 2011). Organizations may attempt to implement multiple change processes simultaneously, and this can lead to change fatigue, where employees experience burnout³ and apathy (Perlman, 2011). Other factors that can cause change efforts to fail include the failure to convey the urgent need for change; poor communication of the successes that have resulted from change; the inadequate identification, preparation, or removal of barriers to change; and insufficient involvement of leadership and management in the change initiative (Chassin, 2013; Hines et al., 2008; IOM, 2013; Kotter, 1995, 2012). Although the challenges to cultural change can be significant, the committee concluded that addressing organizational culture is central to improving diagnosis (Gandhi, 2014; Kanter, 2014; Thomas, 2014). Thus, the committee recommends that health care organizations should adopt policies and practices that promote a nonpunitive culture that values open discussion and feedback on diagnostic performance.

There are a variety of approaches that can be employed to improve culture (Davies et al., 2000; Etchegaray et al., 2012; Schein, 2004; Schiff, 2014; Williams et al., 2007). The measurement of an organization’s culture is often a first step in the improvement process because it facilitates the identification of cultural challenges and the evaluation of interventions (IOM, 2013). A number of measurement tools are available, including surveys to identify health care professionals’ perception of their organization’s culture (AHRQ, 2014c; Farley et al., 2009; Modak et al., 2007; Sexton et al., 2006; Watts et al., 2010).

Organizations can create a culture that supports learning and continual improvement by implementing a just culture, also referred to as a culture of safety (IOM, 2004; Kanter, 2014; Khatri et al., 2009; Larson, 2002; Marx, 2001; Milstead, 2005). A just culture balances competing priorities (learning from error and personal accountability) by understanding that health care is a complex activity involving imperfect individuals who will make mistakes, while not tolerating reckless behavior (AHRQ, 2015a). The just culture approach distinguishes between “human error” (an inadvertent act by a clinician, such as a slip or lapse), “at-risk behavior” (taking shortcuts, violating a safety rule without perceiving it as likely to cause harm), and “reckless behavior” (conscious choices by clinicians to engage in behavior they know poses a significant risk, such as ignoring required safety steps). The just culture model recommends “consoling the clinician” involved in human error, “coaching the clinician” who engages in at-risk behavior, and reserving discipline for clinicians whose behavior is truly reckless. Further refinements to this approach employ a “substitution test” (i.e., would three other clinicians with similar skills and knowledge do the same in similar circumstances?) to identify situations in which system flaws have developed that create predisposing conditions for the error in question to occur. Finally, whether or not the clinician has a history of repeatedly making the same or similar mistakes is considered in formulating an appropriate response to error.

______________

3 The term “burnout” is defined as occupational stress resulting from demanding and emotional relationships between health care professionals and patients that is marked by emotional exhaustion, a negative attitude toward one’s patients, and the belief that one is no longer effective at work with patients (Bakker et al., 2005).

Health care organizations can also look to high reliability organizations (HROs), which operate in high-stakes conditions but maintain high safety levels (such as those found in the nuclear power and aviation industries). Health care organizations can benefit from adapting the traits of HRO cultures, such as rejecting complacency and focusing on error reduction (Chassin and Loeb, 2011; Singh, 2014; Thomas, 2014; Weick and Sutcliffe, 2011). The involvement of supportive and committed leadership is another component of successful attempts to improve culture and is a key component of HRO success (Chassin, 2013; Hines et al., 2008; IOM, 2013; Kotter, 1995, 2012).

Health care organizations can espouse cultural values that support the open discussion of diagnostic performance and improvement (Davies and Nutley, 2000) (see Box 6-3). The culture needs to promote the discussion of error and offer psychological safety (Jeffe et al., 2004; Kachalia, 2013). Successes need to be celebrated, and mistakes need to be treated as opportunities to learn and improve. Complacency with regard to current diagnostic performance needs to be replaced with an enduring desire for continuing improvement. An emphasis on teamwork is critical, and it can be facilitated by a culture that values the development of trusting, mutually respectful relationships among health care professionals, patients and their family members, and organizational leadership.

Despite the difficulties one faces in implementing culture change, health care organizations have begun to make changes that can improve patient safety (Chassin and Loeb, 2013). For instance, changing culture was a critical factor in sustaining the reduction in intensive care unit–acquired central line bloodstream infections in Michigan state hospitals (Pronovost et al., 2006, 2008, 2010). Cincinnati Children’s Hospital has focused on better process design that leverages human factors expertise and on building a culture of reliability (Cincinnati Children’s Hospital, 2014). A number of health care organizations have undertaken the process of instituting a just culture by prioritizing learning and fairness and creating an atmosphere of transparency and psychological safety (Marx, 2001; Wyatt, 2013). For example, after two high-profile medical mistakes, the Dana-Farber Cancer Institute implemented a plan to develop a just culture in order to improve learning from error and care performance (Connor et al., 2007). Its plan centered on incorporating a set of principles into practice that promoted learning, the open discussion of error, individual accountability, and program evaluation; this plan was endorsed and supported by organizational leadership (Connor et al., 2007). Organizations can explore the strategies that are best suited to their needs and aims (e.g., specific strategies for small practices to improve culture) (Gandhi and Lee, 2010; Shostek, 2007).

BOX 6-3

Important Cultural Values for Continuously Learning Health Care Systems

- Celebration of success. If excellence is to be pursued with vigor and commitment, its attainment needs to be valued within the organizational culture.

- Absence of complacency. Learning organizations value innovation and change; they are searching constantly for new ways to improve their outcomes.

- Recognition of mistakes as opportunities to learn. Learning from failure is a prerequisite for achieving improvement. This requires a culture that accepts the positive spin-offs from errors, rather than seeking to blame. This does not imply a tolerance of routinely poor or mediocre performance from which no lessons are learned or of reckless disregard for safe practices.

- Belief in human potential. It is people who drive success in organizations, using their creativity, energy, and innovation. Therefore, the culture within a learning organization values people and fosters their professional and personal development.

- Recognition of tacit knowledge. Learning organizations recognize that those individuals closest to processes have the best and most intimate knowledge of their potential and flaws. Therefore, the learning culture values tacit knowledge and shows a belief in empowerment (the systematic enlargement of discretion, responsibility, and competence).

- Openness. Because learning organizations try to foster a systems view, sharing knowledge throughout the organization is one key to developing learning capacity. “Knowledge mobility” emphasizes informal channels and personal contacts over written reporting procedures. Cross-disciplinary and multifunction teams, staff rotations, on-site inspections, and experiential learning are essential components of this informal exchange.

- Trust. For individuals to give their best, take risks, and develop their competencies, they must trust that such activities will be appreciated and valued by colleagues and managers. In particular, they must be confident that should they err, they will be supported, not castigated. In turn, managers must be able to trust that subordinates will use wisely the time, space, and resources given to them through empowerment programs and not indulge in opportunistic behavior. Without trust, learning is a faltering process.

- Outward looking. Learning organizations are engaged with the world outside as a rich source of learning opportunities. They look to their competitors for insights into their own operations and are attuned to the experiences of other stakeholders, such as their suppliers. In particular, they are focused on obtaining a deep understanding of clients’ needs.

SOURCE: Davies and Nutley, 2000. Adapted by permission from BMJ Publishing Group Limited. Developing learning organizations in the new NHS, H. T. O. Davies and S. N. Nutley, BMJ 320, 998–1001, 2000.

Leadership and Management

Organizational leaders are responsible for setting the priorities and expectations that guide a health care organization and for determining the rules and policies necessary to achieve the organization’s goals. Organizational leaders can include the health care organization’s governing body, the chief executive officer and other senior managers, and clinical leaders; collaboration among these leaders is critical to achieving the organization’s quality goals. According to The Joint Commission (2009, p. 3), only “the leaders of a health care organization have the resources, influence, and control” to ensure that an organization has the right elements in place to meet quality and safety priorities, including a nonpunitive culture, the availability of appropriate resources (including human, financial, physical, and informational), a sufficient number of competent staff, and an ongoing evaluation of the quality and safety of care. In particular, health care organization governing boards have an obligation to ensure the quality and safety of care within their organizations (Arnwine, 2002; Callender et al., 2007; The Joint Commission, 2009).⁴ As part of their oversight function, governing boards routinely identify emerging quality of care trends and can help prioritize efforts to address these issues within an organization.

The involvement of organizational leaders and managers is crucial for successful change initiatives (Dixon-Woods et al., 2011; Firth-Cozens, 2004; Gandhi, 2014; Kotter, 1995; Larson, 2002; Moran and Brightman, 2000; Silow-Carroll et al., 2007). In many health care organizations, organizational leaders have not focused significant attention on improving diagnosis and reducing diagnostic errors (Gandhi, 2014; Graber, 2005; Graber et al., 2014; Henriksen, 2014; Wachter, 2010; Zwaan et al., 2013). However, facilitating change will require the support and involvement of these leaders. To start, health care governing boards can prioritize diagnosis and can support senior managers in implementing policies and practices that support continued learning and improved diagnostic performance. For example, potential policies and practices could focus on team-based care in diagnosis, the adoption of a continuously learning culture, opportunities to provide feedback to clinicians, and approaches to monitor the diagnostic process and identify diagnostic errors and near misses. All organizational leaders can raise awareness of the quality and safety challenges related to diagnostic error and dispel the myth that diagnostic errors are inevitable (Leape, 2010; Wachter, 2010). Importantly, organizational leaders can appeal to the intrinsic motivation of health care professionals to drive improvements in diagnosis.

______________

4 42 C.F.R. § 482.12(a)(5); Caremark International Inc. Derivative Litigation, 698 A. 2d 959 (Del. Ch. 1996).

Focusing on improving diagnosis and reducing diagnostic error is necessary to improve the quality and safety of care; in addition, it has the potential to reduce organizational costs (IOM, 2013). For example, a recent study identified a link between inpatient harm and negative financial consequences for hospitals (Adler et al., 2015). The downstream effects associated with diagnostic error, including patient harm, malpractice claims, and inappropriate use of resources, suggest that organizations that focus on improving diagnosis could extend benefits beyond patient outcomes to include reducing costs. Research that evaluates the economic impact of diagnostic errors may be helpful in building the business case for prioritizing diagnosis within health care organizations (see Chapter 8).

There are a variety of strategies that can be drawn upon as leaders chart a course toward improved diagnostic performance, including Six Sigma and lean management principles (Chassin and Loeb, 2013; James and Savitz, 2011; Jimmerson et al., 2005; Pronovost et al., 2006; Todnem By, 2005; Varkey et al., 2007; Vest and Gamm, 2009). Involving leadership in WalkRounds, M&M conferences, and departmental meetings can help increase leadership visibility as diagnostic performance improvements are implemented (Frankel et al., 2003, 2005; Thomas et al., 2005). It may also be beneficial for leaders to pursue improvement efforts that are person-centered and community-driven, contribute to shaping the desired culture, and leverage interdisciplinary relationships (Swensen et al., 2013). Insights from HROs and just culture may also be useful as leaders consider the opportunities to improve diagnosis (Pronovost et al., 2006). In addition, leaders could adapt the IOM’s CEO Checklist for High Value Health Care for improving diagnosis (see Box 6-4) (IOM, 2012). Because a majority of change initiatives fail, leaders need to be aware of the reasons for failure and take precautions to ensure that efforts to improve diagnosis are feasible and sustainable (Coiera, 2011; Etheridge et al., 2013; Henriksen and Dayton, 2006; Kotter, 1995). Ongoing evaluation of the change effort is also warranted.

BOX 6-4

A CEO Checklist for High-Value Health Care

Foundational Elements

- Governance priority—visible and determined leadership by chief executive officer (CEO) and board

- Culture of continuous improvement—commitment to ongoing, real-time learning

Infrastructure Fundamentals

- Information technology (IT) best practices—automated, reliable information to and from the point of care

- Evidence protocols—effective, efficient, and consistent care

- Resource utilization—optimized use of personnel, physical space, and other resources

Care Delivery Priorities

- Integrated care—right care, right setting, right clinicians, right teamwork

- Shared decision making—patient–clinician collaboration on care plans

- Targeted services—tailored community and clinic interventions for resource-intensive patients

Reliability and Feedback

- Embedded safeguards—supports and prompts to reduce injury and infection

- Internal transparency—visible progress in performance, outcomes, and costs

SOURCE: Adapted from IOM, 2012.

A SUPPORTIVE WORK SYSTEM TO FACILITATE DIAGNOSIS

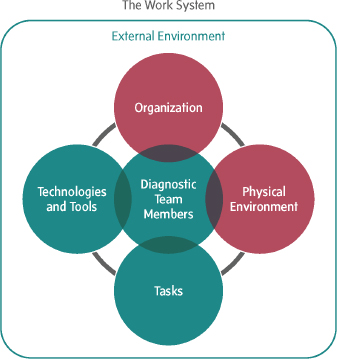

Many components of the work system are under the purview of health care organizations. Thus, organizations can implement changes that ensure a work system that supports the diagnostic process. The committee recommends that health care organizations should design the work system in which the diagnostic process occurs to support the work and activities of patients, their families, and health care professionals and to facilitate accurate and timely diagnoses. The previous section described how health care organizations can use organizational culture, leadership, and management to facilitate organizational change. This section considers additional actions that organizations can take, including a focus on error recovery, on results reporting and communication, and on ensuring that additional work system elements (i.e., diagnostic team members and their tasks, the tools and technologies they employ, and the physical and external environment) support the diagnostic process.

Error Recovery

One principle that health care organizations can apply to the design of the work system is error recovery (IOM, 2000). There are a variety of opportunities for health care organizations to improve error recovery and resiliency in the diagnostic process. For example, improved patient access to clinical notes and diagnostic testing results is a form of error recovery; this gives patients the opportunity to identify and correct errors in their medical records that could lead to diagnostic errors, potentially before any harm results (Bell et al., 2014) (see Chapter 4). Informal, real-time collaboration among professionals, including face-to-face and virtual communication, presents another opportunity for error detection and recovery. Wachter (2015) noted that before the computerization of medical imaging, treating health care professionals often collaborated with radiologists in reading rooms while reviewing films together, whereas today communication is primarily facilitated electronically, through the radiology report. Health care organizations can consider how to promote these types of opportunities for clinicians to discuss cases and to facilitate more collaborative working relationships during the diagnostic process. For example, some organizations are now situating medical imaging reading stations in clinical areas, such as the emergency department and the intensive care unit (Wachter, 2015). The thoughtful use of redundancies, such as second reviews of anatomic pathology specimens and medical images, consultations, and second opinions in challenging cases or complex care environments, is also a form of error recovery that health care organizations can consider (Durning, 2014; Nakhleh et al., 2015). For example, the tele-intensive care unit is a telemedicine process that helps support clinicians’ care for acutely ill patients by using off-site clinicians and software systems to provide a “second set of eyes” to remotely monitor intensive care unit patients (Berenson et al., 2009; Khunlertkit and Carayon, 2013).

Results Reporting and Communication

The Joint Commission has identified improved communication of critical test results as a key safety issue and urges organizations to “[r]eport critical results of tests and diagnostic procedures on a timely basis” (The Joint Commission, 2015b, p. 2). Input to the committee echoed this call and emphasized the importance of improving communication between treating health care professionals, pathologists, radiologists, and other diagnostic testing clinicians (Allen and Thorwarth, 2014; Epner, 2015; Gandhi, 2014; Myers, 2014). To facilitate timely collaboration among health care professionals in the diagnostic process, the committee recommends that health care organizations should develop and implement processes to ensure effective and timely communication between diagnostic testing health care professionals and treating health care professionals across all health care delivery settings. For example, closed loop reporting systems for diagnostic testing and referral can be implemented to ensure that test results or specialist findings are reported back to the treating health care professional in a timely manner (Gandhi, 2014; Gandhi et al., 2005; Myers, 2014; SHIEP, 2012). These systems can also help to ensure that relevant information is being communicated among the appropriate health care professionals. Recent efforts to improve closed loop reporting include the American Medical Association’s Closing the Referral Loop Project and the Office of the National Coordinator for Health Information Technology’s 360X Project, which aim to develop guidelines for closed loop referral system implementation (AMA, 2015; Williams, 2012). Early lessons from the 360X Project include the importance of seamless workflow integration, of tailoring the amount of information transmitted between clinicians and specialists, and of national standards for system interoperability (SHIEP, 2012). A task force composed of pathologists, radiologists, other clinicians, risk managers, patient safety specialists, and IT specialists recommended four actions to improve communication and follow-up related to clinically significant test results: (1) standardize policies and definitions across networked organizations, (2) identify the patient’s care team, (3) manage and track results, and (4) develop shared quality and reporting metrics (Roy et al., 2013).

Health care organizations can leverage health IT resources to improve communication and collaboration among pathologists, radiologists, and treating health care professionals (Allen and Thorwarth, 2014; Gandhi, 2014; Kroft, 2014; Schiff et al., 2003). HHS’s Test Results Reporting and Follow-Up SAFER Guide offers insight on how to use EHRs to safely facilitate communication and the reporting of results (HHS, 2015). Some closed loop reporting systems include an alert notification mechanism designed to inform ordering clinicians when critical diagnostic testing results are available (Lacson et al., 2014a,b; Singh et al., 2010). Dalal and colleagues (2014) identified an automated e-mail notification system as a “promising strategy for managing” the results of tests that were pending when the patient was discharged. There is some evidence that the use of alert notification mechanisms improves timely communication of results reports (Lacson et al., 2014a,b). However, closed loop reporting systems need to be carefully designed to support clinician cognition and workflow in the diagnostic process; if there is a high volume of alerts, a clinician may experience cognitive overload, which can limit the effectiveness of such alerts (Singh et al., 2009, 2010).
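The closed loop idea described above can be illustrated with a minimal sketch. It assumes a hypothetical record type (`ResultReport`) and hypothetical acknowledgement windows (`ACK_WINDOW`); neither comes from the cited systems, and real thresholds would be set by organizational policy. The function surfaces only results whose acknowledgement window has lapsed, and lists critical results first, which is one possible way to limit alert volume.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

# Hypothetical acknowledgement windows (assumption, not taken from any cited system).
ACK_WINDOW = {"critical": timedelta(hours=1), "routine": timedelta(days=3)}


@dataclass
class ResultReport:
    """One diagnostic test result sent to the ordering clinician (illustrative only)."""
    order_id: str
    ordering_clinician: str
    severity: str                      # "critical" or "routine"
    reported_at: datetime
    acknowledged_at: Optional[datetime] = None


def open_loops(results: List[ResultReport], now: datetime) -> List[ResultReport]:
    """Return unacknowledged results whose acknowledgement window has passed."""
    overdue = [
        r for r in results
        if r.acknowledged_at is None
        and now - r.reported_at > ACK_WINDOW[r.severity]
    ]
    # Critical results first, then oldest first, so that follow-up effort
    # goes to the highest-risk open loops rather than to every result.
    return sorted(overdue, key=lambda r: (r.severity != "critical", r.reported_at))
```

In this sketch a result that has been acknowledged, or that is still within its window, generates no follow-up at all; only lapsed loops are escalated.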

The use of standard formats may also improve the communication of test results. Studies have shown that structured radiology reports are more complete, have more relevant content, and have greater clarity than free-form reports (Marcovici and Taylor, 2014; Schwartz et al., 2011). Similar to a checklist, structured reports have a template with standardized headings and often use standardized language. Input to the committee suggests that similar standardized formats for anatomic and clinical pathology results reports are likely to improve communication (Gandhi, 2014; Myers, 2014). Encouraging the use of simpler and more transparent language in results reports may also improve communication between health care professionals.
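As a rough illustration of how a structured report resembles a checklist, the sketch below checks a report against a template of standardized headings. The heading names in `REQUIRED_SECTIONS` are hypothetical placeholders chosen for the example, not a standard drawn from the cited studies.

```python
from typing import Dict, List

# Hypothetical template headings (assumption); an organization would define its own.
REQUIRED_SECTIONS = ("indication", "technique", "findings", "impression")


def missing_sections(report: Dict[str, str]) -> List[str]:
    """Return the template headings that are absent or left blank in a report."""
    return [
        heading for heading in REQUIRED_SECTIONS
        if not report.get(heading, "").strip()
    ]
```

A report would be considered complete against this template when `missing_sections` returns an empty list.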

Additional Work System Elements

In addition to improving error recovery and results reporting and communication, health care organizations can focus more broadly on improving the work system in which the diagnostic process occurs. To ensure that their work systems are designed to support the diagnostic process, health care organizations need to consider all of the elements of the work system and recognize that these elements are interrelated and dynamically interact. For example, a new EHR system (tools and technology) will be most beneficial when an organization ensures that its health care professionals are trained on how to use the system (team members and tasks), when the system meets usability standards (external environment), and when the tool is placed in an appropriate location (physical environment). The following sections highlight some of the ways in which health care organizations can improve the design of work systems for improved diagnostic performance. The actions discussed are not meant to be exhaustive; rather, they are offered as examples of steps organizations can take. Chapters 4, 5, and 7 augment this discussion.

In addition to improving a specific work system, health care organizations need to recognize that patients may cross organizational boundaries when seeking a diagnosis. This fragmentation has the potential to contribute to diagnostic errors and to failures to learn from them. Though health care organizations are not solely responsible for this problem, they have a responsibility to ensure, to the best of their abilities, that the health care system as a whole supports the diagnostic process. Teamwork and health IT interoperability will help, but to meet this responsibility, organizations will need to take steps to improve communication with other organizations. One mechanism, discussed earlier, focuses on improving the communication of diagnostic testing results and referrals. Implementing systematic feedback mechanisms that track patient outcomes over time could also identify diagnostic errors that transcend health care organization boundaries. In addition, payment and care delivery reforms that incentivize accountability and collaboration may alleviate some of the challenges that the fragmented nature of the health care system presents for diagnosis (see Chapter 7).

Physical Environment

The design and characteristics of the physical environment can influence human performance and the quality and safety of health care (Carayon, 2012; Hogarth, 2010; Reiling et al., 2008). Elements of the physical environment include the layout and ambient conditions such as distractions, noise, temperature, and lighting. Researchers have focused primarily on the design of hospital environments and how these environments may influence patient safety, patient outcomes, and task performance. For example, a review of 600 articles on the impact of physical design found three studies that linked medication errors with factors in the hospital environment, including lighting, distractions, and interruptions (Ulrich et al., 2004). Another study found that operational failures occurring in two large hospitals were the result of insufficient workspace (29 percent), poor process design (23 percent), and a lack of integration in the internal supply chains (23 percent); only 14 percent of the failures could be attributed to training and human error (Tucker et al., 2013).

Although the impact of the physical environment on diagnostic error has not been well studied, there are indications that it may be an important contributor to diagnostic performance. For example, the emergency department has been described as a challenging environment in which to make diagnoses because of the presence of high-acuity illness, incomplete information, time constraints, and frequent interruptions and distractions (Croskerry and Sinclair, 2001). Cognitive performance is vulnerable to distractions and interruptions, which influence the likelihood of error (Chisholm et al., 2000). Other physical environment factors that could influence the diagnostic process include the location of health technologies designed to support the diagnostic process, adequate space for team members to complete their tasks related to the diagnostic process, and ambient conditions that can affect cognition, such as noise, lighting, odor, and temperature (Chellappa et al., 2011; Johnson, 2011; Mehta et al., 2012; Parsons, 2000; Ward, 2013). Poorly designed systems that require health care professionals to traverse long distances to perform their tasks may increase fatigue and reduce face-to-face time with patients (Ulrich et al., 2004).

To address the challenges associated with the physical environment, health care organizations can design workplaces that align with work patterns and support workflow, can locate health technology near the point of care, and can reduce ambient noise (Durning, 2014; Reiling et al., 2008; Ulrich et al., 2004). Other possible actions include using the appropriate lighting, providing adequate ventilation, and maintaining an appropriate temperature to ensure that the ambient conditions do not negatively affect diagnostic performance. Studies suggest that such changes may improve both patient outcomes and patient and family satisfaction with care provision (Reiling et al., 2008; Ulrich et al., 2004).

Diagnostic Team Members and Their Tasks

Health care organizations need to ensure that their clinicians have the needed competencies and support to perform their tasks in the diagnostic process. Health care professional certification and accreditation standards can be leveraged to ensure that health care professionals within an organization are well prepared to fulfill their roles in the diagnostic process. Health care organizations can also offer more opportunities for team-based training in diagnosis and can expand the use of integrated practice units, treatment planning conferences, and diagnostic management teams (see Chapter 4). Ensuring adequate supervision and support of health care professionals, especially the many health care professional trainees involved in the diagnostic process, is another way for health care organizations to improve the work system (ACGME, 2011; IOM, 2009). For example, many health care organizations have adopted policies to address patient safety risks caused by fatigue (including decision fatigue), sleep deprivation, and sleep debt for medical residents (Croskerry and Musson, 2009; IOM, 2009; Zwaan et al., 2009). Health care professionals who work in high-stress environments may also experience mental health difficulties and burnout, which can increase the chance of error (AHRQ, 2005; Bakker et al., 2005; Dyrbye and Shanafelt, 2011). Several studies have identified certain characteristics of the workplace and high patient care demands as causes of this stress and have suggested workforce and culture changes as potential solutions (AHRQ, 2005; Bakker et al., 2005; Dyrbye and Shanafelt, 2011). For example, work scheduling practices can ensure that a health care organization has the appropriate clinicians for facilitating the diagnostic process (both the number of clinicians and the appropriate areas of expertise).

Health care organizations can also make improvements to the work system to better involve patients and their families in the diagnostic pro-