3

Improving Response by Building Respondent Support

This chapter summarizes the workshop sessions titled “Communicating with Respondents: Materials and the Sequencing of Those Materials” and “The American Community Survey: Communicating the Importance to the American People.” The presenters addressed the Census Bureau’s strategies for ameliorating respondent concerns by improving survey materials and providing compelling and accessible public information about the rationale for asking questions—a key component of the Census Bureau’s research and implementation program to reduce respondent burden in the American Community Survey (ACS), as outlined in Agility in Action: A Snapshot of Enhancements to the American Community Survey (U.S. Census Bureau, 2015a). The Census Bureau communication strategy has mainly centered on improving the information on the ACS Internet instrument and on the ACS Website.

MAIL CONTACT STRATEGY AND RESEARCH

Elizabeth Poehler (Census Bureau) focused on the current ACS mailing strategy and recently conducted Census Bureau research on the topic.

Current ACS Strategy

In setting the stage for this discussion, Poehler outlined the sequence of contacts in the current ACS. The data for each monthly panel are collected over 3 months in a multimode sequential process. In the first month, she stated, the focus is on self-response, allowing either Internet or mail responses and continuing through the month. In the second month, the focus shifts to telephone interviews for those who have not responded. The third month focuses on in-person interviews with a sample of nonrespondents. Poehler discussed the self-response phase in her presentation.

The current ACS mail strategy begins with an initial package mailed to sampled addresses, inviting the potential respondents to respond via the Internet. In that package, the Census Bureau includes a letter, a Frequently Asked Questions (FAQ) brochure, an Internet instruction card, and a multilingual brochure. Approximately 7 days later, the respondents receive a reminder letter. About 14 days after that, addresses that have not yet responded receive a paper questionnaire package, which includes a letter, the FAQ brochure, and Internet instruction card, as well as the paper questionnaire, instruction guide, and a return envelope. (The Census Bureau plans to remove the instruction guide in spring 2016 from this mail package.) The process continues for nonrespondents. Four days after the paper questionnaire package, nonrespondents receive a reminder postcard. About 2 weeks after that, addresses for which the Census Bureau has a phone number receive a telephone call from the computer-assisted telephone interviewing (CATI) operation; those for whom no phone number has been identified receive an additional reminder postcard.
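The sequence described above can be laid out as day offsets from the initial mailing. The sketch below is illustrative only: the intervals are the approximate ones Poehler described (with "about 2 weeks" assumed to mean 14 days), and the names `CONTACT_STEPS` and `schedule` are hypothetical, not Census Bureau terminology or an operational schedule.

```python
from itertools import accumulate

# (contact, approximate days after the previous contact), per the description above.
# "About 2 weeks" is assumed to mean 14 days.
CONTACT_STEPS = [
    ("initial package (Internet invitation)", 0),
    ("reminder letter", 7),
    ("paper questionnaire package", 14),
    ("reminder postcard", 4),
    ("CATI call or additional reminder postcard", 14),
]

def schedule(steps):
    """Return (contact, days since the initial mailing) pairs."""
    offsets = accumulate(gap for _, gap in steps)
    return [(name, day) for (name, _), day in zip(steps, offsets)]

for name, day in schedule(CONTACT_STEPS):
    print(f"day {day:>2}: {name}")
```

Under these assumed intervals, the last self-response contact falls in the second month of the panel, which is consistent with the shift to telephone follow-up described earlier.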

The process is involved and is traced with controls and metrics in order to maximize self-response, explained Poehler. One metric is to gather and assess the calls and written correspondence from those receiving the materials in order to gain insight into respondents’ reactions to the mail materials. Though much of the correspondence asks questions to help understand the materials or clarify Internet access instructions, often the correspondence expresses concerns that tend to concentrate on (a) the legitimacy of the survey, (b) the intrusive nature of the questions, and (c) the perception of a negative tone of the materials, particularly questioning the mandatory language contained in many of the mail items sent to respondents. Poehler stated previous research has indicated that messaging about the mandatory nature of the ACS improves response rates, but some respondents bristle at the tone of the message. They express shock that the survey is required by law and feel threatened by the penalties and fines for failing to comply, she said.

The Census Bureau, in an attempt to address these respondent concerns while maintaining data quality at an efficient cost, has conducted research on ways to improve the mail materials and messaging to encourage self-response. This research has focused on addressing respondent burden, improving self-response rates through streamlined materials, and addressing respondent concerns about the prominent nature of mandatory messages on the mail materials. It has been based on laboratory testing with techniques such as focus groups and one-on-one interviews in order to solicit feedback on possible changes to the mail materials, such as better explaining the benefits of participating in the survey and modifying the look and feel of the materials. In connection with this research, expert feedback was also obtained.

Poehler stated that the research resulted in five high-level recommendations: (1) test visual design changes of the materials, (2) add deadline-related messaging on the envelopes, (3) eliminate the prenotice letter, (4) test additional mailings, and (5) tailor materials for non-English-speaking respondents.

Field Tests

Poehler described five field tests conducted in 2015: (1) Paper Questionnaire Package Test [March], (2) Mail Contact Strategy Modification Test [April], (3) Envelope Mandatory Messaging Test [May], (4) Summer Mandatory Messaging Test [September], and (5) “Why We Ask” Insert Test [November]. The first two tests were implemented to field test suggestions from the messaging and mail package assessment research with the goal of increasing self-response by streamlining the mail materials, reducing the number of mailings, and cutting back on materials sent in those mailings. The next two tests focused on ways to soften mandatory messages by changing the visual design of the materials and explaining the benefits of participation. Finally, the “Why We Ask” Insert Test provided respondents with more information about why the ACS asks the questions it does. Poehler provided a high-level overview of each of the tests and their results.

Paper Questionnaire Package Test

The goal of this test was to reduce the complexity of the paper questionnaire package. The experimental design looked at modifying the mail package by varying (1) whether or not an instruction guide was included, (2) whether or not an insert that explained to respondents that they could choose to respond via mail or online was included, and (3) whether or not softened language that indicated the Internet as the preferred mode of response was used, to see if it would increase Internet use. The test had four experimental treatments, each with 12,000 addresses, and a control group with 238,000 addresses. This test found no significant differences in return rates among the treatments, and no significant differences in item nonresponse rates, form completion rates, or response distribution. Costs were lower, but item nonresponse rates were nominally higher for treatments without the instruction guide. Based on this test, the Census Bureau recommended removing the instruction guide. There were smaller cost savings associated with removing the choice card and modifying the letter.
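To give a rough sense of what a design with 12,000 addresses per treatment and 238,000 control addresses can detect, the sketch below computes an approximate 95 percent margin of error for the difference between a treatment return rate and the control return rate. It assumes simple random sampling and a hypothetical return rate of 50 percent in both groups (the worst case for variance); neither assumption comes from the presentation, and the Census Bureau's own variance estimation for the ACS is more involved.

```python
import math

def margin_of_error_diff(p1, n1, p2, n2, z=1.96):
    """Approximate margin of error for p1 - p2 under simple random sampling."""
    se = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    return z * se

# Hypothetical 50 percent return rate in both groups; sample sizes from the test design.
moe = margin_of_error_diff(0.5, 12_000, 0.5, 238_000)
print(f"{100 * moe:.2f} percentage points")
```

Under these assumptions the margin is a little under one percentage point, so much smaller differences between a treatment and the control would be hard to separate from sampling noise.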

Mail Contact Strategy Modification Test

The goal of this test was to improve self-response rates by streamlining the mail materials. Streamlining consisted of three initiatives: (1) remove the prenotice letter and send the initial mailing earlier, (2) replace an initial reminder postcard with a letter that highlighted the respondent (user) identification, and (3) send the additional reminder postcard to additional addresses. The test design included a control group with 226,000 addresses and five treatment groups with a sample size of 12,000 each. The treatments varied three factors: whether or not a prenotice letter was included, whether the first reminder was a postcard or a letter, and to whom the additional postcard was sent. In the control version, the postcard was sent only to households without a phone number. In the experimental treatments, the card went to everyone, including households in the CATI operation. The results showed that eliminating the prenotice letter and sending the initial mailing earlier decreased total self-response return rates by 1.4 percentage points prior to sending the paper questionnaire mailing. However, prior to starting the CATI operation, there was no measurable difference in the self-response return rates. A reminder letter that highlighted the 10-digit user ID and included mandatory language increased total self-response return rates prior to starting CATI by 3.8 percentage points, while using a reminder letter but eliminating the prenotice letter and sending the initial mailing earlier increased total self-response return rates prior to CATI by a similar 3.5 percentage points. Finally, the tests found that sending an additional reminder postcard to addresses in CATI increased self-response return rates, but this did not translate into a noticeable change in the CATI response rates. Based on these findings, the Census Bureau eliminated the prenotice letter, sent the initial mailing earlier, and changed the initial reminder postcard to a letter, beginning in August 2015.

Envelope Mandatory Messaging Test

The goal of this test was to study the impact of removing mandatory messages from the envelopes. The envelopes in the initial mailing package and the paper questionnaire package contained mandatory messages. The control group received the envelopes with the messaging, and a test group received envelopes without the message. There were 24,000 addresses in each of the treatments. The results of this test pointed to the danger of removing the mandatory messaging. The test treatment's return rate was 5.4 percentage points lower prior to the start of the CATI operation and, after all modes of data collection were complete, its overall response rate was 0.7 percentage points lower. The costs of this action would be significant, Poehler said. Eliminating the mandatory messages from the envelopes alone is estimated to cost an additional $9.5 million if implemented, because response would be pushed into more expensive modes.

Summer Mandatory Messaging Test

The goal of this test was to study the impact of removing or modifying the mandatory messages from a broader set of mail materials. Five treatments tested softening or removing the messaging. Each had a sample size of 12,000 addresses (see Table 3-1). The first two treatments were based on the current look and feel of the mail materials: the control treatment had essentially no changes to the materials, and a “softened control” treatment removed the reference to the mandatory nature of the survey in some places. The final three treatments had visual design changes and an expanded presentation of the benefits and uses of the ACS based on the messaging and mail package assessment research and consultation with external experts. The first, the “revised design,” had a redesigned look and feel of the materials but included strong mandatory language. The “softened revised design” included the revised design but removed the mandatory messaging from postcards and envelopes and softened it in other places. The “minimal revised design” removed the mandatory messages in all of the materials except the initial letter. In the initial letter, the mandatory language was on the back of the letter in small font. Poehler showed an example of the envelope change. The control initial envelope (see Figure 3-1) has bold language saying the response is required by law.

TABLE 3-1 Summer Mandatory Messaging Test Treatments

| Test Treatment | Strategy |
|---|---|
| Control | Current look and feel; essentially no changes to the materials |
| Softened Control | Current look and feel; reference to the mandatory nature of the survey removed in some places |
| Revised Design | Redesigned look and feel; strong mandatory language retained |
| Softened Revised Design | Revised design; mandatory messaging removed from postcards and envelopes and softened elsewhere |
| Minimal Revised Design | Revised design; mandatory messages removed from all materials except the initial letter, where they appear on the back in small font |

SOURCE: Elizabeth Poehler presentation at the Workshop on Respondent Burden in the American Community Survey, March 8, 2016. Available: http://sites.nationalacademies.org/cs/groups/dbassesite/documents/webpage/dbasse_173178.pdf [September 2016].

The redesigned initial envelope (see Figure 3-2) added the Census Bureau logo and a message to open immediately on the right-hand side. The revised design states, “Your response is required by law.” In the softened revised design and the minimal revised design, that language was replaced with “your response is important to your community.”

Poehler also presented an example of the current and redesigned control initial letter. The revised design letter adds the Census Bureau logo, uses bulleted lists, enhances the Website address by putting a box around it, uses bold lettering, and adds text to appeal to the respondent’s sense of community. The softened revised design letter is nearly identical to the revised design except the sentence about the mandatory nature of the survey is not in boldface type. Another version removes the mandatory sentence. Overall, the results from this test indicated that reducing the frequency and visibility of mandatory messages reduces response rates.

“Why We Ask” Insert Test

The goal of this test was to study the impact of including a flyer in the paper questionnaire mailing package explaining why questions are asked in the ACS. The control treatment did not include the flyer. One experimental treatment included the flyer, and a second experimental treatment included the flyer and removed the instruction guide. Each of these treatments had 24,000 addresses. The results from this test were expected to be available in June 2016.

Future Research

Poehler reported on two research initiatives under consideration. First, the Census Bureau is looking into testing the use of targeted digital advertising to deliver video and static-image advertisements to sampled addresses. The advertisements would be intended to create positive associations with the Census Bureau’s work generally and the importance of completing a survey. They would not directly link to or mention the American Community Survey. A second initiative would explore findings from social and behavioral sciences to incorporate into the mail materials and the messages used to encourage people to self-respond.

IMPROVING RESPONSE TO THE AMERICAN COMMUNITY SURVEY

Donald Dillman (Washington State University) congratulated the Census Bureau on its experiments this year. However, he said, more could be done to improve ACS self-administered response.

As background, Dillman reported on a series of tests in the 1990s to assess 16 factors in an effort to improve mail-back response rates to decennial census forms (Dillman, 2000, pp. 298-313). He reported only five of them improved response: (1) respondent-friendly visual design, (2) prenotice letters, (3) postcard thank-you reminders, (4) replacement questionnaires, and (5) prominent disclosure on the envelope (“U.S. Census Form Enclosed: Your response is required by law”). The first four items have been shown to make a difference in all mail-back surveys, he noted, while the “required by law” effect was census specific (and came from business survey research). Moreover, other factors, including multiple contacts, produced an initial 58 percent response. The mandatory response notice added only modestly (9 percentage points) to this amount in noncensus year tests.

Dillman reported on a 1991 survey on nonresponse to the 1990 decennial census. People gave one of five reasons: (1) some did not remember receiving the form, (2) some received it but did not open it, (3) others opened it but did not start to fill it out, (4) still others started to fill it out but did not finish, and (5) a few completed the form but did not send it back. According to Dillman, these findings justify the need for multiple contacts, which are very powerful in boosting response by getting people to start and/or finish responding.

Dillman asserted Census Bureau survey sponsorship is a positive factor for response, “probably the most desirable sponsorship of any that one could have for getting response from the general public,” because of its credibility compared with other organizations. Despite this positive aspect, obtaining response in a Web-push methodology (where a Web response

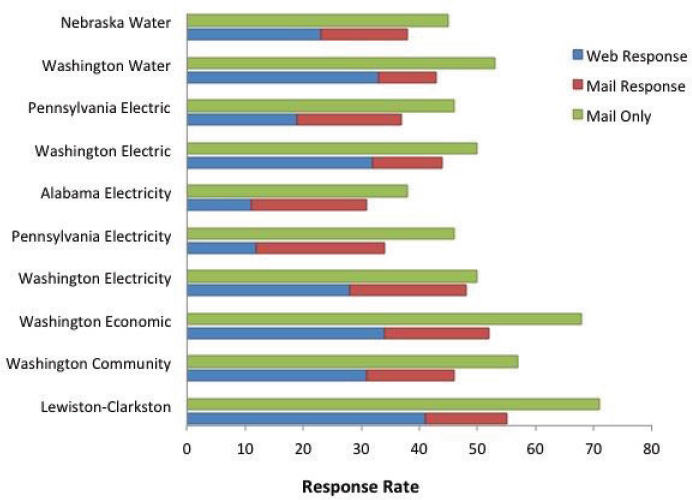

is requested with an offer of mail later in order to recruit different demographics) is much more difficult than getting responses to only a mail-back procedure. He summarized 10 university-sponsored tests in multiple states that produced mean response rates of 43 percent for a Web-push methodology versus 53 percent for a mail-out, mail-back approach (Dillman et al., 2014). He explained the reason for lower response is that switching from one medium of communication (mail contact) to Web response requires special effort on the part of the person who received the request.

He illustrated the effect of three methods with several of his experiments over the course of about 2007 to 2012 (see Figure 3-3). In every case, response rates were higher with mail only. Although he agreed that the Web-push method is needed, he noted that these data illustrate the difficulties of the task.

Dillman then discussed how to make a communication sequence effective. He concluded it is necessary to design all visible aspects of mail contacts—the outside appearance of the envelope or card (size, shape, print), the message (letter) requesting a response, enclosures, the Census form cover pages, and the actual questions—in mutually supportive ways.

SOURCE: Don Dillman presentation at the Workshop on Respondent Burden in the American Community Survey, March 8, 2016. Available: http://sites.nationalacademies.org/cs/groups/dbassesite/documents/webpage/dbasse_173168.pdf [September 2016].

He listed factors that work against individual effectiveness of contacts. These factors include keeping the same outside appearance on most mailings, repeating the same content so new information and appeals cannot be added, including enclosures not relevant to most people who will respond, failing to convey the importance of each household’s response, and failing to use new opportunities/places for effective persuasion in later contacts. In summary, he stated the goal is to avoid sameness of arguments and not to let each contact become unfocused with too many disparate or repetitive enclosures.

To implement these improvements, Dillman offered suggestions for modifying the five ACS contacts outlined in Poehler’s presentation so that the arguments vary from one contact to the next and no contact becomes unfocused. He pointed out that currently there are five contacts for obtaining responses to the Web version plus a mail option.

- First contact: In the first contact, he would remove elements that interfere with the focus and add wording about how a person’s response helps America. He would modify the envelope to refer to a “U.S. Census Form” rather than “The American Community Survey,” he said, because the U.S. Census form, unlike the ACS, is an established brand in most Americans’ minds. He would simplify the mailing by removing items. He said he would remove the very general “Frequently Asked Questions” document and place the content into the letter, perhaps on the back page. He would also take out the multilanguage brochure, which, in error, says the Census form will arrive in a few days. He said he would replace these items with the “Why We Ask” brochure, which gives concrete examples of why the ACS is important. Finally, he would change the cover letter so it no longer appears mass produced, taking out the salutation and the message from the director. He would date the letter, because all culturally targeted communications have dates on letters sent sequentially. Additionally, he suggested the letter explain why people are required to respond, inform them the response applies to everyone living at the address, and tie the letter to the “Why We Ask” brochure.

- Second contact: Dillman stated he was pleased with the Census Bureau’s changes in the second contact, with a new letter replacing a prenotice letter and reminder postcard. The reminder postcard was a carryover from the mail-only request. It could not provide the name of the survey and login information, he said, so a respondent was pushed back to the first mail-out, thus increasing the burden of figuring out how to respond online. He observed that this change illustrates how Web-push methods need different contacts than mail-only approaches. It resulted in an Internet response improvement of about 5 percent, with total Internet and mail response increasing by about 3.5 percent.

- Third contact: He suggested major changes in the third contact which, as detailed in Poehler’s presentation, consists of seven pieces of paper: outgoing envelope, frequently asked questions, 16-page instruction booklet, login card, message from the director, paper questionnaire, and return envelope. He concurred with the results of the Census experiment to remove the instruction book and the choice card, shifting the card content to the letter. He would again add the “Why We Ask” brochure. He suggested changes to the first page of the ACS questionnaire, which, he stated, does not convey to people the reasons they should respond. He would add the following across the top: “The American Community Survey—Producing quality-of-life statistics that communities in every state depend on to assess their past and plan for the future.” He would also insert material from the “Why We Ask” brochure to explain what the ACS is, with this content aimed at explaining the ACS to a second or third person in the household who may become involved at this stage of responding.

- Fourth and fifth contacts: Dillman said he would keep the fourth contact as a postcard but drop the emphasis that a response is required by law and that an enumerator will visit. He would change the fifth contact to a letter that focuses on why response is required and that states it is the last letter prior to a telephone call or an in-person visit.

ACS RESPONDENT MATERIALS AND SEQUENCING: APPLICATION OF A RESPONSIVE AND ADAPTIVE SURVEY DESIGN FRAMEWORK

Andy Peytchev (University of Michigan) introduced his remarks by stating that the relationship between survey design and survey burden is complex. On the one hand, response burden is not a well-defined concept. It may have different dimensions, it is difficult to measure, and it depends a lot on the circumstances of the respondent. On the other, design is important to the challenge of ameliorating burden in that it can affect burden by changing mode, asking fewer questions, asking the questions better, and using matrix sampling, among other things. Good survey design needs to consider simultaneously costs, burden, quality of the resulting estimates, and intended use for the estimates. Thus, the relationship is extremely complicated, he said, which means the best design may not be known and may never be achievable. Still, it is important to strive for a better design, and in the process develop solutions that may improve the survey and get closer to the survey objective. In the end, however, there may not be one specific design prescription, for multiple reasons.

Depending on the objectives and their relative importance, the best design will depend on several factors, Peytchev said. First, it is necessary to define how important burden is relative to the other factors, which may vary by question. The multiple objectives that should be optimized are straightforward—avoid undue burden, reduce cost, and increase response rates. The solutions are not clear, given the near-infinite pool of design options, combinations, and permutations.

In a survey like the ACS, design is an overwhelming challenge, he observed. Many features and constraints on the ACS limit what can be fixed within the design, including the multiple modes, the mode sequence, and the data collection periods. Those limits become even more evident when both Web and mail responses must be collected within the same 1-month period.

The reaction of respondents to the design varies and, like the perception of burden, may change over time. The burden of a mail questionnaire as perceived 10 years ago may be different now, especially with the Web-mode option. Thus, burden may need to be constantly reevaluated. Peytchev pointed out that perception of burden may also vary across sample members. What one person may see as burdensome, another person may see as motivating.

He concluded that the complex interrelationship between design and burden, and the added complexity of the ACS, challenge the usual approach to survey redesign. The usual approach is to try to identify possible main factors to change, package them into several changes at the same time, and mount a standalone experiment to test them. Sample size determines how many features can be disentangled and how many interactions can be identified within the experiment.

The ACS experiments in 2015, he observed, were a bold departure from the usual approach and definitely in the right direction. The 2015 approach should be part of a permanent design, he said. The key was the responsive design framework, developed by Groves and Heeringa (2006). He suggested modification of the framework for purposes of the ACS by inserting consideration of burden and ACS features into each of the framework’s four steps: (1) pre-identify a set of design features potentially affecting burden, costs, and errors of survey estimates; (2) identify a set of indicators of the burden, develop cost and error properties of those features, and monitor those indicators in initial phases of data collection; (3) alter the features of the survey in subsequent phases (and monthly sample releases) based on cost–error tradeoff decision rules; and (4) combine data from the separate design phases (monthly sample releases) into a single estimator. By stating the framework in this manner, it is possible to consider burden within the key objectives and the responsive design. While cost and survey errors may be prominent feature outcomes within this design, Peytchev suggested inserting burden into what to optimize for, including indicators for burden; altering features that may affect burden; and then combining the data from these multiple phases.
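The monitor, alter, and combine steps of this framework can be sketched as a toy loop over monthly sample releases. Everything in the sketch is hypothetical: the `Release` fields, the complaint rate used as a burden indicator, the thresholds in `next_design`, and the respondent-weighted pooling in `combined_estimate` are illustrative stand-ins, not rules proposed by Peytchev or used by the Census Bureau.

```python
from dataclasses import dataclass

@dataclass
class Release:
    """One monthly sample release (all fields are illustrative)."""
    design: str            # design feature in use, e.g. "mandatory" or "softened"
    sampled: int
    responded: int
    complaint_rate: float  # hypothetical burden indicator
    estimate: float        # estimate of some survey variable from this release

def next_design(release, min_response_rate=0.5, max_complaint_rate=0.02):
    """Step 3: a toy decision rule trading response against burden."""
    response_rate = release.responded / release.sampled
    if response_rate < min_response_rate:
        return "mandatory"   # protect response (and cost) first
    if release.complaint_rate > max_complaint_rate:
        return "softened"    # response is adequate, so try to reduce burden
    return release.design    # otherwise keep the current design

def combined_estimate(releases):
    """Step 4: pool releases, weighting each estimate by its respondents."""
    total = sum(r.responded for r in releases)
    return sum(r.estimate * r.responded for r in releases) / total

releases = [
    Release("mandatory", 1000, 600, 0.03, 10.0),
    Release("softened", 1000, 400, 0.01, 12.0),
]
print(next_design(releases[0]), next_design(releases[1]))
print(combined_estimate(releases))
```

A production design would replace the simple pooling with properly weighted estimation and would evaluate error properties alongside the burden and response indicators.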

The framework can be very relevant for the ACS, he said, in the following sequence: view the ACS as a 1- and 5-year survey, with multiple/monthly sample releases; learn from one sample release to the next; leverage the continuous use of tests to measure the impact of individual features; employ as a permanent feature of the survey; and ensure that survey errors are evaluated, in addition to cost, burden, and other outcomes. In this manner, over time, it is possible to converge to a more optimal design.

Another key feature of the responsive design framework is the focus on an evaluation of the survey errors, not just the burden. Evaluating the error properties of the survey estimates may result in a limited concern for nonresponse because, within subgroups, there may be greater concern for measurement error. In accord with the framework, Peytchev said that the 2015 Census Bureau studies referred to by Poehler actually evaluated the effect on survey estimates when they omitted the instructions.

Adaptive survey design is a related notion, Peytchev observed. A key feature of adaptive survey design is to acknowledge heterogeneity—people are different, and each person or group of people may need different treatment over time during the data collection period. In this environment, there may be variability in how they perceive burden and how they respond to different design features within the content materials. These variable responses would be considered in tailoring the designs at the sample address or the subgroup level. The materials, content, and sequence of these materials would be taken into account in the resulting design.

He challenged the Census Bureau to adopt the theoretical framework and a responsive and adaptive design approach and to implement the approach over time consistently by testing different factors. The theoretical framework would be based on Leverage-Salience Theory (Groves et al., 2000), which postulates that different people participate or do not participate for different reasons. This theory has relevance to burden on the ACS in that some motivating factors may reduce perceived burden.

Another standard is the Compliance Principles (Cialdini, 1988), which postulate several means of assuring compliance (response). These means include authority (e.g., different ways to invoke the government’s involvement and mandatory nature); reciprocation (e.g., “you have benefited from the services that result from the ACS”); consistency (e.g., “as a good resident in your community…”); social validation (e.g., “98% of selected households complete the ACS”); scarcity (e.g., “your address has been selected to represent many others in your community”); and liking (e.g., start the introduction with something positive).

In summary, Peytchev observed the ACS is already one of the most innovative survey designs among the major federal surveys in that it has production sample replicates for experimentation, a formal multiphase design with multiple modes, and double sampling of nonresponse. He stated these complexities add value to the survey, and they also make it more flexible. Considering a more formal adoption of the responsive design framework to continuously improve the survey may be of benefit, and, he said, the ACS is uniquely set up to leverage these capabilities and exploit the flexibility.
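Double sampling of nonresponse can be illustrated with the classical Hansen-Hurwitz estimator, in which the follow-up subsample stands in for all initial nonrespondents. This is a textbook sketch that assumes simple random sampling and full response in the follow-up subsample; it is not the ACS weighting methodology, and the function name and numbers are hypothetical.

```python
def double_sampling_mean(n_resp, mean_resp, n_nonresp, mean_followup):
    """Hansen-Hurwitz estimate of the population mean.

    n_resp        -- units that responded in the initial phases
    mean_resp     -- their mean on the survey variable
    n_nonresp     -- units that did not respond initially
    mean_followup -- mean among the nonrespondents subsampled for follow-up
    """
    n = n_resp + n_nonresp
    return (n_resp * mean_resp + n_nonresp * mean_followup) / n

# Hypothetical: 700 of 1,000 units respond on their own; a subsample of the
# remaining 300 is interviewed in person and represents all 300.
print(double_sampling_mean(700, 2.5, 300, 3.0))
```

The subsample mean is weighted by the full count of initial nonrespondents, which is what lets a smaller in-person operation represent everyone who did not self-respond or respond by telephone.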

COMMUNICATING WITH RESPONDENTS: MATERIAL AND SEQUENCING IN THE ACS

Nancy Mathiowetz (University of Wisconsin–Milwaukee [emerita]) focused on two questions related to the documents in the first mailing: to what extent is the information within and across each of these documents consistent, and is the most important information clearly conveyed.

Mathiowetz first discussed the mailing envelope used in the 2016 production. She questioned whether all seven distinct pieces of information on the envelope are needed. For example, both the U.S. Department of Commerce and the Census Bureau are identified. The envelope indicates the Census Bureau is an equal opportunity employer. She acknowledged eliminating pieces of information may be very difficult, but currently it is difficult to know which piece of information is the most important. She stated that the redesigned envelope has much better branding and better identification of the Census Bureau.

She then assessed the letter. At first glance, she said, the respondent sees fairly dense prose and off-putting language. The term “randomly” appears twice. While the first definition of the term in Webster’s dictionary is “proceeding, made, or occurring without definite aim, reason, or pattern,” the meaning of “randomly” for purposes of the ACS is that the person was selected with purpose by a scientific process, she noted. She urged the Census Bureau to consider how the lay population interprets terms like this.

The letter, she assessed, contains contradictory information. It portrays a sense of urgency when, in order to push people toward the Web, it says to complete the survey online as soon as possible. It also says the agency will send a paper questionnaire in a few weeks—mitigating the sense of urgency in the first part of the text and sending a mixed message. Another point of confusion is that the household is selected for the sample, but a person is mandated by law to respond. She asked how the Census Bureau could convey the idea of a mandate to respond when an individual is not the respondent.

She opined that the new letter is visually more appealing and brands the Census Bureau. One problem is that the return address on the letter—Washington, D.C.—does not match the return address on the revised envelope, which says Jeffersonville. Other improvements to the new letter include dropping the issue of timing and no longer pushing the respondent to the Web and then announcing a paper questionnaire in a few weeks.

Agreeing with Dillman, she pointed out an inconsistency in the current brochure. The first part says the person will receive an American Community Survey within a few days. This should not be included in a mailing that tries to push people toward a Web survey. In her view, it would be better to eliminate this brochure to have a consistent message.

COMMUNICATING THE AMERICAN COMMUNITY SURVEY’S VALUE TO RESPONDENTS

Andrew Reamer (George Washington University) presented several ways of raising the perception of the value of the ACS as part of a campaign to reduce burden. Along with previous presenters, he questioned the notion of burden, which he stated has both technical and political meanings. In political terms, burden suggests that the respondent is a victim. The term is used in a political sense by people who are unhappy with the ACS and believe it is intrusive. He encouraged alternative wording when possible.

By way of background, the final report of the congressionally established Commission on Federal Paperwork (Commission on Federal Paperwork, 1977) surfaced the notion of burden. The commission concluded that a wall of paperwork had been erected between the government and the people and that countless reporting and record-keeping requirements and other heavy-handed investigation and monitoring schemes had been instituted based on a faulty premise that people will not obey laws and rules unless they are checked, monitored, and rechecked. In this context, the commission defined different types of paperwork burdens. Economic burdens include the dollar cost of filling out a report as well as the costs of record-keeping systems. Psychological burdens include frustration, anger, and confusion.

Reamer offered ideas to raise the perception of value of the ACS. His suggestions included revising the tagline; including an overarching framework of uses; noting the ACS origins; emphasizing the notion of the community getting its “fair share”; indicating the community response rate; redesigning the “Why We Ask” material to broaden the scope and highlight examples; and testing use of the Census Partnership Program with the ACS.

To Reamer, the tagline, “How your responses help America,” is too general. It should focus on how responses help the community, state, and nation to give a sense that it is about the respondent’s community as well as the nation. Similarly, the framework of uses should reflect the ACS role in improving the economy, ensuring efficient and effective government, and sustaining democracy. He encouraged communicating these messages to the respondents.

With regard to efficient and effective government, Reamer suggested making clear that the idea of the ACS originated with James Madison. He proposed indicating that the ACS is the current iteration 226 years later of what Madison asked in 1790: to add questions to the decennial census so that Congress might legislate on the basis of the circumstances of the community. Reamer further emphasized the notion of a community getting its fair share of private sector goods and services, jobs, and government grants and government services. In line with the current emphasis on behavioral economics, he encouraged the Census Bureau to consider putting the response rate on communications to establish a social norm around response.

He critiqued the “Why We Ask” publication and urged its redesign. He said the document presents good examples but buries them in the text and makes no mention of the uses of the ACS for democracy (e.g., the ACS citizenship question is used to draw congressional district boundaries, and Section 203 of the Voting Rights Act mandates the use of the ACS language questions). He suggested highlighting the examples and adding an example about legislative boundaries.

Lastly, Reamer suggested a test in which the Census Bureau recreates its decennial census partnership in a few communities in the near future in order to let respondents know they can call someone in their neighborhood to affirm the legitimacy of the ACS and to explain the value of filling out the questionnaire. He observed that this would be a relatively low-cost initiative.

DISCUSSION

In response to an invitation from Linda Gage, cochair of the workshop steering committee, members of the audience offered questions and comments on the communications topic. One commenter noted the ACS letter now prominently features the fact that the ACS data determine the distribution of some $400 billion; however, given the current climate, some might think this is wasteful spending. Use of the figure might do more harm than good for some segments of the population. In response, Poehler clarified the figure appears only at the bottom of the experimental letter and in the letter currently in use. The language is still to be evaluated. Reamer further responded the money will be allocated one way or another and that participating in the ACS ensures a person’s own community gets its fair share.

Another participant stated the success of the stimuli to respond quickly and with the cheapest mode depends on the timing of these iterations of mailings. Research in the context of the census program has shown that timing matters.

A participant noted presenters discussed proposed measures to downplay the mandatory nature of the survey. The person questioned whether it is a disservice to avoid informing people that they may get a fine for not responding. Poehler said language in at least one place on all of the tested mail materials tells the respondent that the ACS is required by law and states the potential fine. The tests varied how much the Census Bureau emphasized or deemphasized that messaging, but it is required by law that a respondent is told if a survey is either mandatory or voluntary. Reamer added the amount of the potential fine (up to $5,000) is also a consideration. He stated that congressional opponents of the ACS always bring up the $5,000 fine, but the Census law passed in 1976, and still on the books, limits the fine to $100 for refusal to fill out the survey and $500 for false responses. The 1976 law was overwritten in the 1980s by comprehensive crime control legislation that pushed the fine up to $5,000. He contended that the $5,000 fine contributes to the perception of burden.

A participant asked about the relationship of respondent burden to the device on which respondents respond to the survey, inquiring if the ACS Internet instrument is optimized for completion on a mobile device. Lower-income individuals are more likely to access the Internet via a mobile device rather than through a desktop or laptop computer and a high-speed Internet connection at home. Whether or not the respondent can respond on the individual’s device should be taken into account in addressing burden, according to the participant. Poehler responded that the Census Bureau conducted studies about people using smaller devices to access the Internet about 6 months ago. The study found problems in that respondents were pinching and zooming multiple times for every screen. Based on these findings, the Census Bureau redesigned and optimized the ACS for smaller devices. That redesign has been implemented.

A participant stated part of the burden is invasiveness, of which the telephone and the in-person interviews are a big component. The participant asked if the Census Bureau knows whether the complaints are about these types of interviews and whether it would make sense to go straight to the computer-assisted personal interview or extend the mail collection. Are there other methods for self-response that seem less invasive? Poehler answered that the Census Bureau recently studied and addressed the burden associated with telephone calls and in-person visits, curtailed the number of attempts made by phone, and stopped visits when a threshold related to perceived burden has been reached. The Census Bureau has not thoroughly investigated whether to expand mailing beyond the current practice because of the telephone and visit burden.

Mathiowetz suggested one-size-fits-all is perhaps not the best approach. Consideration should be given to Census tracts and zip codes in an adaptive design framework. This would mean, for instance, that people with no Internet access would not be invited to respond on the Internet. It would call for modifying the sequencing, perhaps removing the telephone contact because it is seen as a fairly intrusive form of communication. She said that she is not aware of current research at the Census Bureau looking at these issues more microscopically. Dillman noted telephone contacts amount to about 7 percent of the total, which is not a very large amount. It may be possible to cut down on frustration by pushing the Web/mail a little more and going straight to in-person interviewing.

A participant asked for clarification about the size of the problem. How many people are calling and complaining? What percentage of respondents are complaining? What are the gains in burden reduction from some of the changes that have been introduced? A participant from the floor responded that the number of complaints is small; the problem is not the magnitude but the party doing the complaining. When the complaint comes from the congressional district of the chairperson of the budget committee, for example, the importance of the perception of burden is larger than the small numbers would suggest. Poehler added that, from a pure metrics perspective, because the volume is small, the effect is very difficult to measure. When the Census Bureau implemented the recent changes in treatment, it received about three more phone calls per month than in previous months. It was not possible to attribute this increase to the change.

Reamer commented on the tension between research findings that show an increased response rate when the mandatory response is emphasized and the program to test softening the mandatory message. He said the Census Bureau had to test softening the message for political reasons.

Another participant, a member of the Census Scientific Advisory Committee, emphasized the minuscule number of complaints. She further pointed out that her understanding is that no one has been fined for not responding to the ACS.

A participant who had been a respondent for the ACS expressed pleasure that the first postcard is now no longer a part of the mail-out because she found it confusing. Although glad to be able to respond online, she said she wished that she had more preparation for some of the questions. The nature of some of the questions—such as income in the past 12 months—cannot be answered from taxes from the previous year but only by aggregating paystubs. The need to obtain information to answer the questions meant leaving the computer. In her view, it would have been helpful to have the paper form to view the questions ahead of time.

COMMUNICATING THE IMPORTANCE OF THE ACS TO THE PUBLIC

In introducing this panel session, Nancy Mathiowetz stated that the workshop steering committee wanted to address any gaps in the Census Bureau’s research. In terms of how the ACS is communicated to the U.S. population, there are questions concerning what stakeholders and the public in general know about this survey, what steps the Census Bureau can take to increase its visibility, and how the bureau can develop a long-term incremental marketing strategy to brand the ACS. She noted the discussants in this session represented the commercial marketing sector and market research organizations.

BRANDING TECHNIQUES

Sandra Bauman (Bauman Research & Consulting) noted branding is most often associated with the commercial world and the roots of branding are associated with commercial goods. Other types of organizations have used branding and capitalized on its tenets to make their communications more effective. She said branding is germane to the ACS as a tool to ameliorate respondent burden.

Bauman cited several instances, including the presidential campaign of Ronald Reagan, when political candidates and parties have used branding. Nonprofits and causes have used branding and marketing as well. Personal branding, such as a person’s LinkedIn profile, is also employed. She emphasized a brand is much more than a logo or a graphic identity. She referred to advertising expert Walter Landor, who famously called a brand a promise in that it identifies and authenticates a product or service, and, in so doing, delivers a pledge of satisfaction and quality. Bauman defined a brand as, first, a promise that when delivered results in positive feelings of satisfaction and, second, a collection of perceptions in the mind of the consumer. It is different from a product or service in that it is intangible and is a psychological construct—the sum total of everything that the audience or customer knows about, thinks about, and feels about the brand.

Branding is related in several ways to the ACS, she said. A branded ACS can have greater impact than a nonbranded one. Branding the ACS is a vital part of spreading awareness about the survey. It can address barriers and obstacles to participation and serve as a bridge between the Census Bureau and the community.

She summarized five building blocks that make an effective brand:

- Develop and be able to articulate a positioning. A positioning considers the attributes of the product or service and the benefits that customers can get from it. For the ACS, this building block means finding a benefit important and personally relevant to respondents and determining how to leverage it. According to designer David Galullo (2013), the positioning may be about the connection the seller (in this case, the Census Bureau) has with its employees and customers. Both want to feel like they are part of something larger.

- Tell a story, bring it to life, and link it to personal value. It has to be believable, relevant, unique, and motivating. The story must persuade with reason but motivate with emotion.

- Elevate the benefits of the brand by going from the functional to the emotional.

- Deliver on the brand promise with every experience—authenticity and transparency build trust.

- Make every touch point consistent in tone, language, look, and feel.

Bauman discussed how ACS participation could be reframed to overcome obstacles in the current context that include government distrust, concerns about privacy, and lack of awareness of the survey. She recommended that the Census Bureau assess the branding campaign and communication strategy employed in the 2010 decennial census program. Also, she said, the bureau should gather and publicize people’s stories about how they and their communities benefited from the ACS, which can help to humanize the survey.

Bauman also emphasized the importance of building pride in being chosen for the survey and having participated. She suggested emphasizing exclusivity and how it is a privilege to be chosen to participate in the survey. She pointed out that people take pride in giving blood or voting and are motivated by stickers that announce their action to the world.

In summary, Bauman said, it is important to make respondents feel like they are part of something larger than themselves.

MESSAGING

George Terhanian (NPD Group) focused on the science of messaging and the progression in marketing from messaging about the features, to a focus on the benefits, to messages that evoke emotions, to messages that illustrate core values. He critiqued the current messaging found in materials provided to ACS respondents and, drawing lessons from consumer research and studies of businesses’ approaches to branding, commented on several statements in the materials.

Message: The ACS is sent monthly to a small percentage of the population, with approximately 3.5 million households per year being included in the survey.

Terhanian’s comment: The message should be respondents have been selected at random, but they are special and part of an exclusive group. The fact that respondents are in the survey by invitation only is a message. He likened a message stressing exclusivity to the message used by Google when it first recruited Gmail users and how Nielsen, via the Nielsen Ratings, positioned its service for decades. He stated that respondents should be made to feel as though they have won the lottery. Certainly, the challenge is to communicate the benefits of the ACS (and participation in it) in a way that resonates at a personal level. He suggested that reducing interview length to accommodate respondents reinforces the message that they count and shows respect for their time and effort.

Message: However, the entire country benefits from the wealth of information provided from this survey of over 11 billion estimates each year for more than 40 topics covering social, demographic, housing, and economic variables.

Terhanian’s comment: He challenged using the word “however.” He suggested the positive message is that the entire country benefits through the participation of the 3.5 million lottery winners, the lucky ones others trust, depend on, and even envy. Their individual participation leads to direct benefits for themselves, their communities, and their country.

Message: The data that the ACS collects are critical for communities nationwide—it is the only source of many of these topics for rural areas and small populations.

Terhanian’s comment: He suggested specifying the benefits to the individuals within the communities and, more specifically, to the 3.5 million respondents. The current messaging seems to suggest participation in the ACS is a necessary evil.

Message: . . . Target, JC Penney, Best Buy, General Motors, Google, and Walgreens use ACS data for everything from marketing to choosing franchise locations to deciding what products to put on store shelves. Because ACS data are available free of charge to the entire business community, the program helps lower barriers for new business and promotes economic growth.

Terhanian’s comment: He said this is a wrong message because respondents do not relate to its “great for businesses” tone. They are interested in benefits to individuals and whether the information creates more jobs for working people. The message should be how the businesses are using the data to benefit consumers. For example, do they use the information to ensure they have in stock the products people need and want, which can vary by region? The ACS should communicate individual (consumer) benefit.

Message: First responders and law enforcement agencies use ACS data.

Terhanian’s comment: Although this fact is very important and brings associations with family, safety, and peace of mind, Terhanian commented that disasters and emergencies are rare events. Instead, the message should focus on the close-in benefits to individuals.

Message: Your response to the survey is required by law.

Terhanian’s comment: The message should be softer without diluting the core message that is profoundly important.

He summarized by saying that the ACS’s main message should be that people count and that is why they should participate.

CONNECTING WITH PEOPLE AND COMMUNITIES

Betty Lo (Nielsen) gave examples of campaigns and the communications that Nielsen has leveraged to connect with consumers and communities through the company’s Community Alliances and Consumer Engagement team. The team creates relevant messaging that the company can use to connect with those consumers.

Nielsen focuses on multicultural communities mainly because it has been found—through various advertising and brand awareness studies—that these communities are less likely to be aware of Nielsen and to participate in a Nielsen survey or a study. The methodology is to create a message that resonates with all audiences, and then put a cultural nuance on the message to connect specifically with diverse communities. In this way, Lo said, Nielsen’s challenge is similar to the objective of changing the paradigm around how people think of the census and the ACS from perceiving a burden to understanding that responding helps them.

Nielsen started by developing a market-by-market strategy. This process was a challenge because Nielsen is prohibited from advertising on the media that the company measures. For example, the company cannot use television advertising, but with the approval of the Media Ratings Council, received a reprieve for an extended year to advertise on radio. Radio has been one of the most effective ways in which to connect with the community, Lo said.

As part of its decision-making process for selecting markets and communities, Nielsen annually conducts a brand awareness survey that measures how consumers perceive the Nielsen brand. The survey probes familiarity with the brand and the likelihood of participating on a panel if asked. The team also considers recruitment results, estimates of the universe from census data, and business partners. Lo speculated that, if adopted, this approach could bolster ACS communication and the ACS advertising campaign.

Having selected the communities and the approach, Nielsen seeks to leverage the community by engaging thought leaders and using earned media to get the message out into the community so that it resonates and connects and so that consumers take ownership. The goal of this approach, Lo explained, is to create a conversation where other people are talking about Nielsen rather than Nielsen having to talk about themselves in a typical direct marketing or advertising way.

The process has resulted in a diverse intelligence insight series for different communities—lesbian, gay, bisexual and transgender (LGBT), women, Asian American, African American, and Latino and Hispanic. In this way, Nielsen has developed culturally relevant messages that have moved away from stressing compliance to a message of empowerment through connection with people’s culture and heritage.

Nielsen’s messaging has three goals: (1) to build awareness and trust through the message and engagement with the community; (2) to develop culturally relevant messages of empowerment, rather than compliance; and (3) to own the message but empower others to share, what Nielsen terms “celebrating the conscious consumer.” In all of the communications, Nielsen stresses, in simple and succinct terms, its policy on data privacy.

Lo shared examples of the medium mix used in the messaging. Within the African American advertising campaign, for example, Nielsen found it effective to target high-traffic cinemas where African American consumers tend to watch movies and presented short, digital clips in cinema advertising. In contrast, for the Asian American community, which is oriented to ethnic print media, Nielsen created advertorials—essentially information that the community would want to know about what they are buying and how they are watching television. Nielsen senior leaders wrote some of the most effective advertorials. In other communities, more traditional out-of-home advertising media like billboards and bus wraps were effective in increasing exposure to the Nielsen brand.

Lo explained that external advisory councils were formed to help guide the campaigns within the communities. Nielsen found community-based nonprofit organizations that are focused on civic engagement or voter empowerment are ready to help, and suggested that the Census Bureau use them to get the ACS message out into their community.

In conclusion, Lo made three suggestions for a messaging campaign:

- Make sure consumers can embrace and own the message. It is important not only to create culturally relevant messages but also to focus on change management from the top of the organization to the field representatives.

- Stress the importance of response. Change management involves stressing why responses are important and providing sound bites to convince people to embrace the empowerment medium message.

- Use vignettes. Successful Nielsen vignettes include heritage month videos for each of the diverse communities showcasing the fact that Nielsen knows and is powered by the consumers.

DISCUSSION

In the question period after this panel, one participant asked about targeting brand communication to specific audiences in addition to the general public, such as ACS employees who should be messengers of the value of the ACS. The participant observed that, in his experience, ACS staff view their mission as production rather than sales. The participant asked the panel for comment on how essential it is for the staff in the ACS office to own the brand.

Lo answered from her perspective in change management. The Nielsen advertising and communications strategy addresses ensuring that changes are adopted, that people embrace the message, and that long-term ownership of the message is sustained. Some ways to make sure key stakeholders are part of the solution are to make sure that they are all involved in the process, from the champions who drive the message, to the stakeholders who are leveraging data in the different communities, to the field representatives who are knocking on doors. All stakeholders need to own part of the discussion, she said.

For example, she noted, the field representative guide instructs field representatives to say a simple thank-you and smile. Because people’s attention span is so short, Nielsen has incorporated a sound bite for its field reps to not only say thank-you but also indicate the importance of the data to provide insights that will be shared with clients. Bauman added the objective should be to embolden employees to feel like they are brand ambassadors.

The same participant asked about communication to Congress. It was noted that the Census Bureau has a wonderful innovative application programming interface (API) that allows each member of Congress to put Census Bureau information on his or her Website. The API is populated with ACS data by congressional district. The participant urged the Census Bureau to communicate its brand to members of Congress both for legislative purposes and for support of congressional district offices that respond to constituents with questions about the ACS.

A participant observed that Nielsen invests in building a relationship with people who are being asked to respond and that relationship-building has a parallel to the ACS. It implies the construction of a continuous relationship with groups over time. The participant suggested the Census Bureau, which is already going in this direction, should leverage the experience of Nielsen to move forward. Lo responded that the perception Nielsen wants to instill is that respondents have won the lottery, that they have been specially chosen and that they have a responsibility to respond, as consumers who will shape products and services created to serve their needs.

A participant asked if the focus of the messaging should be the ACS or the Census Bureau, observing the agency has huge campaigns associated with the census and the ACS is part of the census. Bauman responded that the Census Bureau has a brand that exists and touches every single household in the country once every 10 years, and the ACS should attach to that brand. She explained this is called brand architecture, where there is a parent (U.S. Census Bureau) and sub-brands. Lo and Terhanian concurred the ACS should capitalize on the efficacies, goodwill, familiarity, and awareness of the Census brand. Terhanian added that building brand awareness is incredibly expensive. The Census brand exists and is very strong, he said, noting he would cobrand the ACS, which is not as strong, with it.
