4

Methodological Considerations Related to Assessing Intake of Nutrients or Other Food Substances

One of the most important aspects of evaluating the certainty of the diet-chronic disease evidence is the validity and reliability of the food and nutrient or other food substance (NOFS) intake methodology used in each published study. Accuracy in the NOFS intake methodology is essential because the quantitative relationship between the nutrient and the chronic disease must be characterized with considerable certainty in order to establish a chronic disease Dietary Reference Intake (DRI). As Chapter 3 points out, characterizing dietary exposures is one type of challenge that is unique to nutrition research. Without careful attention, intake assessment may add major uncertainty to the judgments about NOFS-chronic disease relationships. Although the recent Options for Basing Dietary Reference Intakes (DRIs) on Chronic Disease Endpoints: Report from a Joint US-/Canadian-Sponsored Working Group (i.e., the Options Report) (Yetley et al., 2017) raised the issue of characterizing NOFS intakes as a challenge, it did not specify options for any particular methodology. Because NOFS intake methodology is a crucial topic, this committee has devoted this chapter to it.

Within the context of the DRI process (see Figure 1-2) and as described in Chapter 6, decisions about dietary intake methodologies are made mainly at two steps. During the first step, developing the protocol for the systematic review, the inclusion criteria for NOFS intake methods are decided. At this step, the inclusion criteria need not be restrictive, but can include relevant and commonly accepted self-reported methods and biomarker measurements. In the second step, conducting the evidence review, the DRI committee considers and evaluates the internal validity of individual studies (risk of bias), which would include evaluating the dietary intake methodology. This chapter provides information on dietary assessment methodology to help guide committees with evaluating its contribution to the risk of bias. Specifically, the chapter describes methods currently in use to assess NOFSs, describes novel methods for evaluating NOFS intake, provides guidance that will help DRI committees evaluate published literature, and offers suggestions for future research that may help fill gaps in this important area. Key terms used in this chapter are in Box 4-1.

METHODS TO ASSESS NUTRIENTS OR OTHER FOOD SUBSTANCES

Self-Reported Measures of Dietary Intake

Self-report of NOFSs has been the primary intake assessment method for most cohort studies and randomized controlled trials with chronic disease endpoints. Much has been written about various types of self-reports and the strengths and weaknesses of each in the research setting (Thompson et al., 2015). Briefly, four major types of self-report dietary assessment have been used in studies of diet and chronic disease outcomes: (1) multiple-day dietary records, (2) multiple-day 24-hour dietary recalls, (3) semi-quantitative food frequency questionnaires, and (4) brief instruments focused on specific foods or food groups. Each of these methods assesses short-term intake over a period of days to 1 year. Some strengths and weaknesses of these measures are given in Table 4-1.

Self-report of diet is often used in the research setting because it is relatively low cost and carries a relatively low participant burden. Self-report also may need to be considered because valid biomarkers are available only for certain NOFSs. Moreover, even when biomarkers are available they may sometimes usefully be combined with self-reported intake data in disease association analyses. Self-reported dietary assessment methods also provide data on food sources of NOFSs; such data are needed to translate evidence about NOFS-disease associations into food-based dietary guidance.

Standardized protocols exist for the collection of self-reported dietary data. However, even when collected by the best available measures of short- or long-term intake, dietary self-reports have a high level of uncertainty due to the (1) complexity of many foods, (2) limitations of self-report for accurately describing or recording specific foods consumed, (3) difficulty in accounting for day-to-day variability when estimating usual intakes (variability is highest for micronutrients and most or all other food substances, compared to macronutrients), and (4) limitations of food composition databases. Self-reported dietary intake data are well-known to contain random error, such as day-to-day variability, which adds “noise” in the data and can often lead to imprecise findings with very wide confidence intervals or even null results that obscure the ability to detect an association that is actually present (Beaton et al., 1979). Methods for correcting random error in dietary intake data exist (NCI, 2017; NRC, 1986). For example, in many contemporary cohort studies repeated measures of dietary intake have been collected periodically during the follow-up periods. The use of repeated and updated dietary assessments by validated food frequency questionnaires has been found to reduce measurement errors and represent long-term dietary habits, which are most relevant to chronic disease etiology and prevention. Some studies have analyzed changes in dietary exposures over time and subsequent risk of chronic diseases. This is a stronger observational design than a typical cohort study because it mimics an intervention study. Importantly, dietary self-report data are also subject to systematic error or bias that is dependent upon participant characteristics, such as age, sex, body mass index (BMI), and race/ethnicity (NCI, 2017; Neuhouser et al., 2008; Prentice et al., 2011; Tinker et al., 2011; Zheng et al., 2014). 
This type of bias is especially problematic because it may influence the size and direction of observed associations with chronic disease and it is also difficult to detect. Although adjustments for variables such as age, sex, and race/ethnicity are frequently done to minimize confounding in nutrition studies, no amount of such adjustment can remove the systematic error that stems from participant characteristics.
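The attenuation produced by random day-to-day variability, and the way averaging repeated assessments reduces it, can be illustrated with a small simulation. This is a minimal sketch, not an analysis from the report: the variance values, the slope, the sample size, and the function names `simulate_attenuation` and `expected_slope` are all assumptions chosen for illustration, and the sketch covers only random error. It does not address the systematic error discussed above.

```python
import numpy as np

def simulate_attenuation(n_people=20000, n_days=(1, 4, 16), var_between=1.0,
                         var_within=3.0, true_slope=0.5, seed=0):
    """Return {days averaged: estimated slope} under purely random day-to-day error.

    All numeric defaults are illustrative assumptions, not values from the report.
    """
    rng = np.random.default_rng(seed)
    usual = rng.normal(0.0, np.sqrt(var_between), n_people)         # true usual intake
    outcome = true_slope * usual + rng.normal(0.0, 1.0, n_people)   # continuous risk marker
    slopes = {}
    for k in n_days:
        # Mean of k daily reports = usual intake + averaged within-person noise.
        daily_noise = rng.normal(0.0, np.sqrt(var_within), (n_people, k))
        reported = usual + daily_noise.mean(axis=1)
        # Slope from a simple linear regression of outcome on reported intake.
        slopes[k] = np.polyfit(reported, outcome, 1)[0]
    return slopes

def expected_slope(k, var_between=1.0, var_within=3.0, true_slope=0.5):
    """Classical attenuation: the slope shrinks by var_b / (var_b + var_w / k)."""
    return true_slope * var_between / (var_between + var_within / k)

if __name__ == "__main__":
    for k, slope in simulate_attenuation().items():
        print(f"{k:>2} day(s): estimated slope {slope:.3f}, "
              f"expected {expected_slope(k):.3f}")
```

Under these assumed variances, the attenuation formula implies that a single day of reporting recovers about one quarter of the true slope, whereas the mean of 16 days recovers about 84 percent of it.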

Biomarkers of NOFS Intake

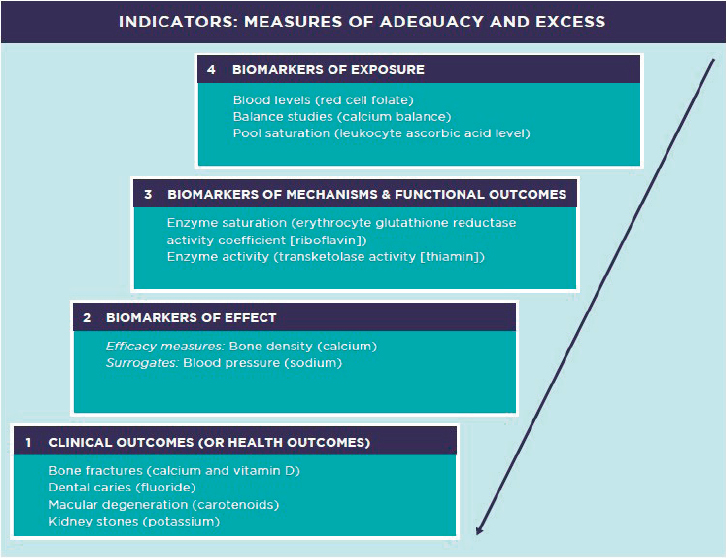

Exposure to NOFSs also can be assessed through biomarkers. Biomarkers are measurements obtained (usually sampled) from a biological system or organism, such as human blood, urine, feces, saliva, hair, skin, nail, and other tissues (e.g., adipose tissue, organ biopsy material). In the context of nutrition, biomarkers have a continuous typology based on the intended purpose, with quality possibly dependent on the intended application (see Figure 4-1).

Biomarkers of nutritional exposure fall into five general categories:

- Compounds or molecules that reflect exposure to nutrients with existing DRIs (e.g., certain vitamins, minerals, macronutrients);

- Bioactive compounds without a DRI (e.g., carotenoids, isoflavones);

- Integrative biomarkers that capture exposure to food substances plus metabolic processing (i.e., metabolomics);

- Functional nutritional biomarkers that may reflect enzyme saturation or functional measures of nutritional status; and

- Food contaminants (e.g., aflatoxin, polycyclic aromatic hydrocarbons [PAHs], nitrosamines, acrylamide, pesticides). These biomarkers are less directly related to nutrients, but may be relevant.

This chapter is concerned only with biomarkers of NOFS intake (i.e., biomarkers of exposure or level 4 in Figure 4-1; biomarkers of effect and clinical outcomes are discussed in Chapter 5). Biomarkers of intake may be subject to the same types of random error or bias as self-report, but suitable biomarkers should be less subject to systematic bias and, for this reason, may be viewed as more objective measures of NOFS intake. For an intake biomarker to be suitable, it should measure (perhaps following appropriate transformation) the intake of interest along with possible measurement error that is unrelated to the targeted intake or to other study participant characteristics. A biomarker that substantially meets this classical measurement model criterion will have the greatest utility when the biomarker measurement error variance is small relative to the variance of the targeted nutritional variable in the study population. However, it is important to note that some nutrition-related biomarkers, useful for other purposes, do not reflect intake but, rather, NOFS status. This is an important distinction in establishing quantitative intake-response relationships. For example, an individual can have zero vitamin D intake and have good vitamin D status.
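The classical measurement model criterion described above can be written compactly. The notation is introduced here only for illustration and does not appear in the report: $W$ is the biomarker value (after any transformation), $Z$ is the targeted intake, $V$ stands for other study participant characteristics, and $\varepsilon$ is the measurement error.

$$
W = Z + \varepsilon, \qquad \mathbb{E}[\varepsilon] = 0, \qquad \varepsilon \ \text{independent of}\ (Z, V)
$$

$$
\lambda = \frac{\sigma_Z^2}{\sigma_Z^2 + \sigma_\varepsilon^2}
$$

In this notation, the statement that the error variance should be small relative to the variance of the targeted nutritional variable corresponds to $\lambda$ being close to 1. A status marker such as serum 25(OH)D would not satisfy the first line as a measure of vitamin D intake, because its deviation from intake depends on characteristics other than intake.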

NOTE: Numbering and arrows reference hierarchical proximity to the clinical outcome of interest. Blood pressure is a surrogate for cardiovascular disease and bone density is a surrogate for fracture risk.

SOURCE: Adapted from Taylor, 2008.

TABLE 4-1 Examples of Uses, Strengths, and Limitations of Self-Report Measures of NOFS Intake

| Self-Reported Measure | What Is Collected? | How? | Feasibility | Strengths and Limitations |
|---|---|---|---|---|
| Dietary Records | All food and drink consumed is reported as it occurs; foods served and left over may be weighed to improve quantification. | May be done directly by respondents themselves on paper or into a computer program or may be done by an observer. | For self-report, requires educated and highly compliant respondents; the cost and logistics of observation are prohibitive for most large studies. | Strengths: Weighed intakes with highly compliant participants are accurate, but are not a part of most dietary record protocols. Limitations: High respondent burden usually leads to incomplete records and high drop-out rates. Even when records are accurate, evidence suggests that eating behavior changes while recording, so they may not represent usual intake (systematic error). |
| 24-Hour Dietary Recalls | All food and drink consumed is reported for the previous day. A multiple pass system may be used to minimize underestimation due to poor memory or lack of detail. | May be entered by the respondent into a guided computer program or may be conducted by a trained interviewer in person or over the telephone. | Feasible for large studies but requires multiple days to stabilize usual intake estimates of individuals. | Strengths: Most widely used method for estimating group mean intakes and the usual intake distribution, as used in national surveys (Beaton et al., 1979; Moshfegh et al., 2008). Limitations: High day-to-day variability limits extrapolation to usual intake of individuals. This random error leads to attenuation of associations with individual outcomes like chronic disease. Evidence suggests underreporting in general and bias in reporting, with biases larger among persons having higher body mass (systematic error). |
| Food Frequency Questionnaire | The usual frequency of intake is reported for a list of foods. Portion sizes may or may not be ascertained. | The questionnaire may be completed by the respondent or it may be interviewer administered. | Highly feasible, as a single application provides an estimate of usual intake over a period of time, usually the past year. Low-cost method. | Strengths: When used appropriately, may allow ranking of a wide range of nutrients and food substances, usually after adjusting for energy intake. Provides a moderate-term (e.g., 6 months-1 year) integrated measure of intake, appropriate for use with health outcomes (Willett et al., 1985). Limitations: It is semi-quantitative, based on grouped foods and recipes, so detail is less than in other methods. Validity depends on the food list, recipe assumptions, and cognitive challenge of integrating frequency of intake over 1 year. May not accurately represent dietary intake for groups whose dietary patterns differ from those of the population for which it was developed. This may introduce systematic bias associated with obesity, race/ethnicity, social class, or unusual dietary lifestyle. |
| Brief Instruments for specific nutrients or food groups, such as fat screeners | Limited questions about usual intake of major food sources of specific nutrients or food groups. | The questionnaire may be completed by the respondent or it may be interviewer administered. | Highly feasible, low respondent burden. | Strengths: Provides qualitative or general ranking data on specific foods or nutrients. Limitations: Does not allow for energy adjustment or consideration of these items within the full diet. Subject to systematic bias in estimation of NOFSs due to missing food contributors. |

Most nutritional biomarkers of intake (or exposure) are either recovery biomarkers or concentration biomarkers. Recovery biomarkers measure intake and output that can be “recovered” and measured quantitatively (usually in urine). The advantage of recovery biomarkers is that they can be used to assess absolute intake over a defined period of time (typically days or weeks). Current methods for assay are excellent and have high precision. Limitations, however, do exist. Importantly, only a few true recovery biomarkers are available, namely doubly labeled water, which estimates total energy expenditure and is used to approximate total energy intake in weight-stable individuals; urinary nitrogen (from 24-hour urine collections), from which protein intake can be computed; and urinary sodium (from 24-hour urine collections).1 Although potassium from 24-hour urine collections has been considered a suitable biomarker, it needs further study because data show variability based on race and other factors (Turban et al., 2008). Other limitations of current biomarker methods are that the protocols for specimen collection are burdensome to study participants and, therefore, specimens may not be collected completely. Also, the collection procedures and assays are expensive. In addition, the measures are short term, reflecting days or weeks of intake, whereas diet-related chronic disease risk develops over a period of many years or decades.

___________________

1 However, 24-hour urine collections can substantially underestimate intake in individuals who have heavy sweat losses (e.g., athletes or those working in hot conditions). This is a much greater problem for sodium (an extracellular cation lost in large amounts in sweat) than for potassium (an intracellular cation present in small amounts in sweat).

Concentration biomarkers assess concentrations or relative percentages of NOFSs in the blood, urine, or other tissues. Recently, serum phospholipid fatty acids that correlate strongly with intake have been identified as biomarkers of intake of some specific fatty acids, total saturated fatty acids, total trans fatty acids, and total carbohydrate in postmenopausal women (Song et al., 2017). Likewise, in the same population, serum biomarkers of certain carotenoids, folate, vitamin B12, and α-tocopherol have been identified (Lampe et al., 2017). Many nutritional biomarkers used in studies of chronic disease risk are these concentration-type biomarkers, such as erythrocyte and serum folate, serum carotenoids, plasma phospholipid fatty acids, and serum vitamin D [as 25(OH)D]. Most of these biomarkers are relatively short term, depending on the half-life of the NOFS as well as on the tissue from which it is collected. For example, erythrocyte folate may represent intake from the past 3 months, whereas serum folate may represent the past few weeks. Furthermore, for some NOFSs, erythrocyte measures could be viewed as markers of status and the serum measures as markers of intake. Concentration biomarkers have typically not been used in the same quantitative manner as recovery biomarkers, but for some (not all) NOFSs they can reflect intake following necessary rescaling or other transformations. Importantly, numerous participant characteristics, such as age, sex, race/ethnicity, and BMI, may strongly influence the serum concentration of a particular NOFS. Concentration biomarkers also may contain bias as a measure of intake due to the effects of other exposures, such as smoking, adiposity, medications, and related NOFS intakes. Furthermore, for many concentration biomarkers, metabolic and physiological factors will influence concentrations. For example, phospholipid fatty acids are influenced by both dietary intake and endogenous fatty acid synthesis. 
In addition, the time of day of a blood draw (and whether in the fasting state or not) will influence whether the fatty acid profile will reflect a state of beta oxidation, de novo synthesis or participation in various intracellular metabolic processes. More generally, for a concentration biomarker to be suitable for intake assessment, it should (following possible rescaling or other transformation) strongly correlate with the intake of interest along with possible measurement error that is unrelated to the targeted intake or to other study subject characteristics.

IMPROVING THE QUALITY OF THE DIETARY INTAKE DATA IN NUTRITION STUDIES

Like any measurement, estimates of exposure to NOFSs may carry biases and uncertainties related to the accuracy and precision of the assessment. Measuring dietary intake with minimal uncertainties, however, is key to establishing quantitative associations between NOFSs and diseases and is a critical criterion in being able to judge the quality of individual studies (part of the risk-of-bias assessment) and overall certainty in the evidence (see Chapter 6). Identifying potential sources of measurement error, evaluating methods used to correct for such errors, and considering which intake methods may provide estimates that are closest to the true exposure are among the most important tasks that future DRI committees will need to carry out when evaluating individual studies.

This section presents the committee’s guidance for best approaches related to minimizing uncertainty when measuring long-term NOFS intake with biomarkers of intake (objective measure) and self-reported measures (subjective measure).

Validity and Utility in Assessing Dietary Intake with Biomarkers of Intake

The measurement error associated with dietary self-report may be a significant impediment for DRI committees, whose task will be focused on establishing optimal NOFS intake values for chronic disease risk reduction. Self-report is subject to both random and systematic error, the latter being more troublesome and not resolved through sample size increases or statistical adjustments in chronic disease rate modeling. Although nutritional biomarkers are also subject to random error, they can be objective measures of diet and are, under the classical measurement model mentioned above, not subject to the same types of systematic error commonly found in dietary self-report. However, this does not mean that they are without bias. Biomarkers may not accurately reflect dietary intake due to differential factors affecting absorption, metabolism, and utilization, which must be considered when evaluating their use. For example, ultraviolet (UV) exposure could lead to high serum 25(OH)D even with no vitamin D intake. Calibrating a self-report method with a validated nutritional biomarker, if carefully conducted, may represent an important methodological advance over reliance on self-report alone to improve the accuracy of the NOFS intake data. It may also be possible to directly apply established nutritional biomarkers to a prospective study cohort in a nested case-control study, if they are based on stored specimens (i.e., obtained in both cases and controls before the chronic disease develops).

However, a biomarker of intake must first be validated. Specifically, the biomarker (typically log-transformed) needs to equal a targeted intake (e.g., log-transformed usual intake over a specified time period), plus random noise that does not depend on the targeted intake, or on other study participant characteristics that are pertinent to the disease under study (e.g., established disease risk factors, other dietary intakes, physical activity patterns). Well-designed and well-conducted human feeding studies can provide evidence of validation for intake biomarkers. A major criterion for biomarker evaluation is the magnitude of the correlation between the known intake and the biomarker values, with corresponding correlations for established biomarkers useful as benchmarks in a specific feeding study context. However, such a context, strictly speaking, can provide direct support for biomarker utility only for the typically short time period of the feeding study in question. Measures of dietary stability over time, along with periodic biomarker measurements over time, are typically needed to establish a biomarker of usual intake over a longer time period, such as the several years or more that may be pertinent to chronic disease risk. Regression modeling of known intake from a feeding study onto a biomarker that reflects intake, along with participant characteristics that may affect this relationship (e.g., age, sex, BMI), provides a natural framework for biomarker development and evaluation. Such a regression model can provide necessary relocation and rescaling of the biological measures, and the inclusion of relevant characteristics can enhance the resulting biomarker’s adherence to the classical measurement model described above.
A biomarker that plausibly adheres to this measurement model can “anchor” a chronic disease association analysis, either by providing a framework for correcting dietary self-report data or, if available, through use of biospecimens stored from members of a study cohort, by direct application in a cohort of interest in a case-control mode.
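The regression framework for biomarker development described above can be sketched in a few lines. This is a hedged illustration on simulated data, not the committee's or any cited study's procedure: the feeding study is hypothetical, every parameter value is made up, and the function names `fit_biomarker_equation` and `simulate_feeding_study` are assumptions. Known (log) intake is regressed on the (log) biological measure plus participant characteristics, and the correlation between the fitted values and known intake is the quantity that would be compared with benchmarks from established biomarkers.

```python
import numpy as np

def fit_biomarker_equation(log_intake, log_measure, covariates):
    """Regress known (log) intake on the (log) biological measure plus covariates.

    Returns (coefficients, predicted log intake, correlation with known intake).
    """
    X = np.column_stack([np.ones_like(log_measure), log_measure, covariates])
    coef, *_ = np.linalg.lstsq(X, log_intake, rcond=None)
    predicted = X @ coef                      # relocated and rescaled biomarker
    r = np.corrcoef(predicted, log_intake)[0, 1]
    return coef, predicted, r

def simulate_feeding_study(n=150, seed=1):
    """Hypothetical feeding study; all parameter values are illustrative assumptions."""
    rng = np.random.default_rng(seed)
    log_intake = rng.normal(4.0, 0.3, n)      # known intake provided by the study
    bmi = rng.normal(27.0, 4.0, n)
    age = rng.normal(65.0, 7.0, n)
    # The raw measure is shifted and scaled relative to intake and depends on BMI.
    log_measure = (1.0 + 0.6 * log_intake - 0.02 * (bmi - 27.0)
                   + rng.normal(0.0, 0.12, n))
    return log_intake, log_measure, np.column_stack([bmi, age])

if __name__ == "__main__":
    log_intake, log_measure, covariates = simulate_feeding_study()
    coef, predicted, r = fit_biomarker_equation(log_intake, log_measure, covariates)
    print(f"correlation between biomarker-based prediction and known intake: {r:.2f}")
```

Including BMI and age in the design matrix is what lets the fitted equation absorb characteristic-related shifts in the raw measure, which is the sense in which such covariates can improve adherence to the classical measurement model. As the text notes, a correlation obtained this way supports the biomarker only over the short period of the feeding study.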

Methodologic advances have been made in biomarker studies of moderate size (e.g., a few hundred persons). As described in the Options Report and elsewhere (Neuhouser et al., 2008; Prentice et al., 2011; Tinker et al., 2011; Zheng et al., 2014), the development of regression calibration equations that use objective measures (biomarkers) of dietary intake to calibrate self-report data has resulted in biologically plausible and clinically meaningful diet-disease associations that are not observed when only self-report is used. Suitable calibration equations may be developed by regressing established biomarker values on corresponding self-report values and other relevant participant characteristics that need to be included in the risk model for the chronic disease of interest, for confounding control. If the resulting equation explains a substantial fraction of the variation in the targeted dietary variable (this may require replicate biomarker measures to account for random error in the biomarker), then the equation may be used to obtain calibrated intake values for all participants with dietary self-report and associated data in the larger cohort from which the biomarker study derives. For example, the established intake biomarkers for energy (doubly labeled water) and protein (24-hour urinary nitrogen) had correlations of 0.71 and 0.61, respectively, with estimated actual intakes (Lampe et al., 2017). The committee concluded that biomarkers meeting an R ≥ 0.6 criterion relate to actual intake about as closely as those established biomarkers and may be used to obtain calibrated intake values and provide useful objective measures of intake in the population from which feeding study participants were drawn. These values may be used in disease risk models to obtain disease association analyses in large cohort settings. Such association analyses typically apply to intake over a relatively short time period, and may be limited if the self-report data alone provide only a weak signal for the dietary variable of interest. Correlation with longer term intake, over months or years, is needed for reliable nutritional epidemiology association studies, and may require repeat biomarker application at various times over the cohort follow-up period. The major limitation of the regression calibration approach, however, is the paucity of established biomarkers for the large number of nutritional variables that may be relevant to chronic disease risk.
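The regression calibration steps described above (fit the equation in the biomarker substudy, then apply it to everyone in the cohort with self-report data) might be sketched as follows. This is an illustrative simulation under stated assumptions rather than the method of any cited study: the cohort, the BMI-dependent under-reporting, the substudy size of 400, and the function names are all hypothetical, and true intake is available here only because the data are simulated.

```python
import numpy as np

def fit_calibration(biomarker, self_report, characteristics):
    """Least-squares fit of biomarker on self-report and characteristics (substudy only)."""
    X = np.column_stack([np.ones_like(self_report), self_report, characteristics])
    coef, *_ = np.linalg.lstsq(X, biomarker, rcond=None)
    return coef

def apply_calibration(coef, self_report, characteristics):
    """Calibrated intake for any participant with self-report and characteristics."""
    X = np.column_stack([np.ones_like(self_report), self_report, characteristics])
    return X @ coef

def simulate_cohort(n=5000, n_sub=400, seed=2):
    """Hypothetical cohort on the log scale; every parameter value is an assumption."""
    rng = np.random.default_rng(seed)
    bmi = rng.normal(27.0, 4.0, n)
    truth = 7.6 + 0.015 * (bmi - 27.0) + rng.normal(0.0, 0.15, n)
    # Self-report under-reports more at higher BMI (systematic) and is noisy (random).
    self_report = truth - 0.10 - 0.03 * (bmi - 27.0) + rng.normal(0.0, 0.25, n)
    in_substudy = np.zeros(n, dtype=bool)
    in_substudy[rng.choice(n, n_sub, replace=False)] = True
    # Biomarker follows the classical model (truth plus independent noise)
    # and is measured only in the substudy.
    biomarker = np.where(in_substudy, truth + rng.normal(0.0, 0.08, n), np.nan)
    return bmi, truth, self_report, biomarker, in_substudy

def rmse(estimate, truth):
    """Root mean squared error of an intake estimate against simulated truth."""
    return float(np.sqrt(np.mean((estimate - truth) ** 2)))

if __name__ == "__main__":
    bmi, truth, self_report, biomarker, sub = simulate_cohort()
    coef = fit_calibration(biomarker[sub], self_report[sub], bmi[sub])
    calibrated = apply_calibration(coef, self_report, bmi)   # whole cohort
    print(f"RMSE of self-report: {rmse(self_report, truth):.3f}")
    print(f"RMSE of calibrated:  {rmse(calibrated, truth):.3f}")
```

The calibrated values would then enter the disease risk model in place of raw self-report. The sketch omits steps a real analysis would need, such as replicate biomarker measures and variance estimation that accounts for uncertainty in the calibration coefficients.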

New Research on Biomarkers of NOFS Intake

Identification and validation of new biomarkers of NOFS intake are urgently needed. In this regard, the use of metabolomics to identify dietary biomarkers is a rapidly growing area of investigation and may hold promise for future nutritional epidemiology research (see Chapter 5). Because metabolomics can reflect both dietary intake and the influence of metabolic pathways, it may provide an important approach to the development of additional intake biomarkers (Guertin et al., 2014; Playdon et al., 2017). However, despite its promise, metabolomics has challenges. For example, some analytes generated from a metabolomics platform may link back to a particular NOFS; in other cases, groups of metabolites may reflect the intake of a single NOFS or class of NOFSs (Song et al., 2017).

GUIDANCE FOR FUTURE DRI COMMITTEES

As mentioned in Chapter 1, the committee framed its recommendations and guiding principles in the context of the process shown in Figure 1-2 in which a formal systematic review, including a synthesis of the evidence, of the relevant PICO (population, intervention, comparator, and outcome) questions is conducted by a systematic review team before the formation of the DRI committee and with guidance from a technical expert panel committee. The PICO process is a technique used in evidence-based practice to frame and answer a clinical or health care–related question and it is also used to develop literature search strategies. The DRI committee would assess such a systematic review, consider any additional evidence, and make decisions about setting chronic DRIs for each outcome.

Assessing NOFS exposures that are valid and relevant for chronic disease outcomes is a challenging task, and the existing systematic reviews that address the intake of NOFS and chronic diseases reveal the diversity in nature and quality of the nutrient intake ascertainment.

Although the committee does not question the important role of dietary self-report data in the field of nutrition (e.g., for nutrition policy purposes where they provide data on food sources of NOFS, allowing interpretation of evidence in the context of dietary guidance for chronic disease prevention), in the context of developing DRIs, random and systematic biases of self-reported methodologies need to be particularly minimized. Considered carefully and within context, studies relying on nutritional biomarkers for intake assessment may present important advantages that should be recognized. However, although methods for addressing some of these challenges are available or emerging, they are not yet reflected in most research studies exploring diet and chronic disease associations. In the near term, and until better dietary methodologies are applied in research, DRI committees will need to identify those studies that provide the maximum level of certainty in exposures data. Such information will then need to be integrated as part of the risk-of-bias evaluation (internal validity of individual studies), an element of the evidence review (see Chapters 6 and 7). In the long term, research agendas should include accelerated efforts to improve dietary exposure assessment for use in chronic disease studies (see Box 4-2).

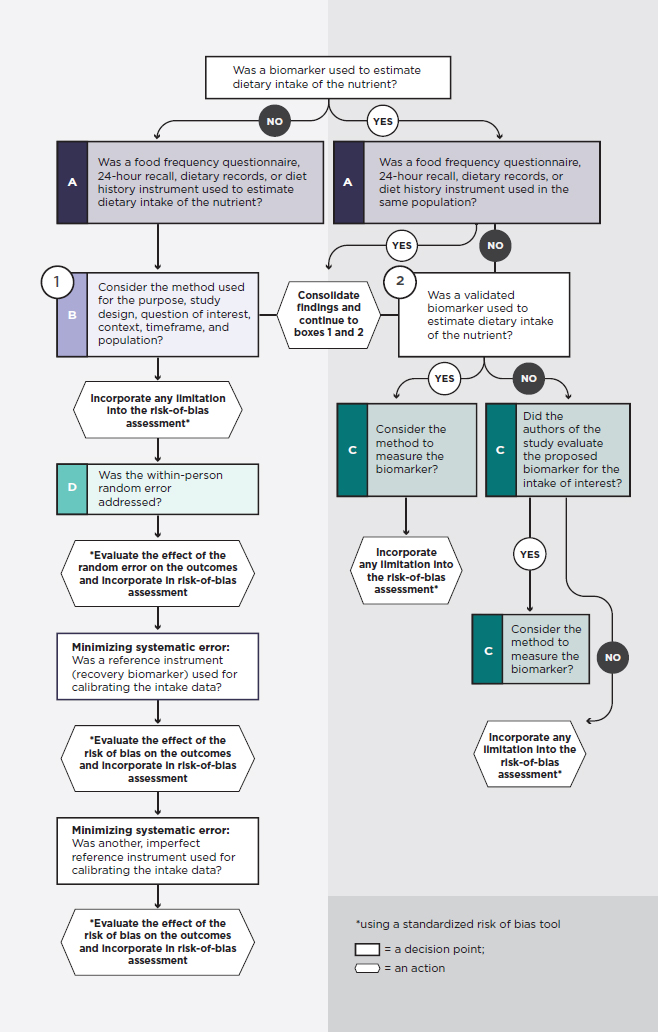

The committee concludes that no single satisfactory approach exists to accurately measure dietary intake, and that each study and methodology needs to be assessed based on its own merit, taking into consideration potential risk of bias. The committee developed a decision guide (see Figure 4-2) for use in considering dietary intake methodological issues in studies on diet and chronic disease and in incorporating questions in the risk-of-bias assessment. For example, to assess risk of bias2 related to dietary intake methods, the questions in Figure 4-2 could be used as guidance. In addition, as mentioned in Chapter 6, before conducting a risk of bias assessment, a priori hypotheses could be developed to explain potential heterogeneity in the relevant PICO categories (e.g., intervention). In this way, if an apparent effect modifier is found, for example due to the specific measure of dietary intake used, inferences would be substantially stronger.

___________________

2 As mentioned in Chapter 6, until a validated risk-of-bias tool is developed for the field of nutrition and chronic disease, the existing risk-of-bias tools could be expanded with questions relevant to nutrition, including questions related to dietary intake assessment.

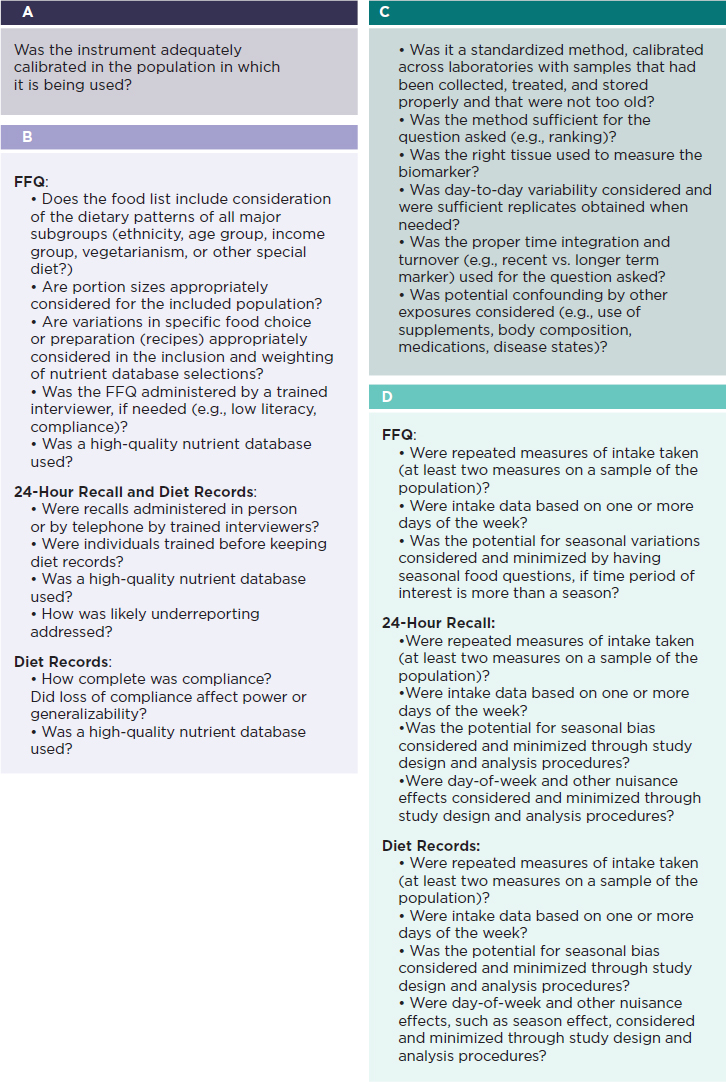

Specifically, DRI committees will first select, based on a systematic search of the scientific literature related to validation of dietary assessment methods, a priori criteria to define the most accurate dietary intake methods, such as those that use validation and calibration methods to minimize bias. Second, they will carefully review the methodologies in the individual studies to assess the seriousness of the risk of bias (see also Chapter 6), by asking questions about the method and its potential limitations (see Table 4-1), following the decision guide in Figure 4-2. The questions will vary depending on whether a self-report method, a biomarker, or both have been used. These questions are meant to give committees a sense of the potential biases and limitations in a study and of whether the biases are serious enough to warrant excluding it. For example, if a food frequency questionnaire was used in a study, questions in Sidebars A and B should provide information about the potential limitations of the methods. Furthermore, questions in Sidebar D will help ascertain whether approaches have been applied to address random error; any systematic error also should be questioned (see also Table 4-1).

In conclusion, accuracy in methods to measure the intake of NOFS is an essential feature in the evidence used as basis for establishing DRIs, particularly when characterizing quantitative relationships. Therefore, consideration of the potential biases in these methods is an essential task of DRI committees.

NOTE: FFQ = food frequency questionnaire; HR = hour. * Using a standardized risk-of-bias tool.

REFERENCES

Beaton, G. H., J. Milner, P. Corey, V. McGuire, M. Cousins, E. Stewart, M. de Ramos, D. Hewitt, P. V. Grambsch, N. Kassim, and J. A. Little. 1979. Sources of variance in 24-hour dietary recall data: Implications for nutrition study design and interpretation. Am J Clin Nutr 32(12):2546-2559.

Guertin, K. A., S. C. Moore, J. N. Sampson, W. Y. Huang, Q. Xiao, R. Z. Stolzenberg-Solomon, R. Sinha, and A. J. Cross. 2014. Metabolomics in nutritional epidemiology: Identifying metabolites associated with diet and quantifying their potential to uncover diet-disease relations in populations. Am J Clin Nutr 100(1):208-217.

Lampe, J. W., Y. Huang, M. L. Neuhouser, L. F. Tinker, X. Song, D. A. Schoeller, S. Kim, D. Raftery, C. Di, C. Zheng, Y. Schwarz, L. Van Horn, C. A. Thomson, Y. Mossavar-Rahmani, S. A. Beresford, and R. L. Prentice. 2017. Dietary biomarker evaluation in a controlled feeding study in women from the Women’s Health Initiative cohort. Am J Clin Nutr 105(2):466-475.

Moshfegh, A. J., D. G. Rhodes, D. J. Baer, T. Murayi, J. C. Clemens, W. V. Rumpler, D. R. Paul, R. S. Sebastian, K. J. Kuczynski, L. A. Ingwersen, R. C. Staples, and L. E. Cleveland. 2008. The US Department of Agriculture Automated Multiple-Pass Method reduces bias in the collection of energy intakes. Am J Clin Nutr 88(2):324-332.

NCI (National Cancer Institute). 2017. Dietary Assessment Primer. https://dietassessmentprimer.cancer.gov (accessed May 11, 2017).

Neuhouser, M. L., L. Tinker, P. A. Shaw, D. Schoeller, S. A. Bingham, L. V. Horn, S. A. Beresford, B. Caan, C. Thomson, S. Satterfield, L. Kuller, G. Heiss, E. Smit, G. Sarto, J. Ockene, M. L. Stefanick, A. Assaf, S. Runswick, and R. L. Prentice. 2008. Use of recovery biomarkers to calibrate nutrient consumption self-reports in the Women’s Health Initiative. Am J Epidemiol 167(10):1247-1259.

NRC (National Research Council). 1986. Nutrient adequacy: Assessment using food consumption surveys. Washington, DC: National Academy Press.

Playdon, M. C., S. C. Moore, A. Derkach, J. Reedy, A. F. Subar, J. N. Sampson, D. Albanes, F. Gu, J. Kontto, C. Lassale, L. M. Liao, S. Mannisto, A. M. Mondul, S. J. Weinstein, M. L. Irwin, S. T. Mayne, and R. Stolzenberg-Solomon. 2017. Identifying biomarkers of dietary patterns by using metabolomics. Am J Clin Nutr 105(2):450-465.

Prentice, R. L., Y. Mossavar-Rahmani, Y. Huang, L. Van Horn, S. A. Beresford, B. Caan, L. Tinker, D. Schoeller, S. Bingham, C. B. Eaton, C. Thomson, K. C. Johnson, J. Ockene, G. Sarto, G. Heiss, and M. L. Neuhouser. 2011. Evaluation and comparison of food records, recalls, and frequencies for energy and protein assessment by using recovery biomarkers. Am J Epidemiol 174(5):591-603.

Song, X., Y. Huang, M. L. Neuhouser, L. F. Tinker, M. Z. Vitolins, R. L. Prentice, and J. W. Lampe. 2017. Dietary long-chain fatty acids and carbohydrate biomarker evaluation in a controlled feeding study in participants from the Women’s Health Initiative cohort. Am J Clin Nutr.

Taylor, C. L. 2008. Framework for DRI development: Components “known” and components “to be explored.” Washington, DC.

Thompson, F. E., S. I. Kirkpatrick, A. F. Subar, J. Reedy, T. E. Schap, M. M. Wilson, and S. M. Krebs-Smith. 2015. The National Cancer Institute’s Dietary Assessment Primer: A resource for diet research. J Acad Nutr Diet 115(12):1986-1995.

Tinker, L. F., G. E. Sarto, B. V. Howard, Y. Huang, M. L. Neuhouser, Y. Mossavar-Rahmani, J. M. Beasley, K. L. Margolis, C. B. Eaton, L. S. Phillips, and R. L. Prentice. 2011. Biomarker-calibrated dietary energy and protein intake associations with diabetes risk among postmenopausal women from the Women’s Health Initiative. Am J Clin Nutr 94(6):1600-1606.

Turban, S., E. R. Miller, 3rd, B. Ange, and L. J. Appel. 2008. Racial differences in urinary potassium excretion. J Am Soc Nephrol 19(7):1396-1402.

Willett, W. C., L. Sampson, M. J. Stampfer, B. Rosner, C. Bain, J. Witschi, C. H. Hennekens, and F. E. Speizer. 1985. Reproducibility and validity of a semiquantitative food frequency questionnaire. Am J Epidemiol 122(1):51-65.

Yetley, E. A., A. J. MacFarlane, L. S. Greene-Finestone, C. Garza, J. D. Ard, S. A. Atkinson, D. M. Bier, A. L. Carriquiry, W. R. Harlan, D. Hattis, J. C. King, D. Krewski, D. L. O’Connor, R. L. Prentice, J. V. Rodricks, and G. A. Wells. 2017. Options for basing Dietary Reference Intakes (DRIs) on chronic disease endpoints: Report from a joint US-/Canadian-sponsored working group. Am J Clin Nutr 105(1):249S-285S.

Zheng, C., S. A. Beresford, L. Van Horn, L. F. Tinker, C. A. Thomson, M. L. Neuhouser, C. Di, J. E. Manson, Y. Mossavar-Rahmani, R. Seguin, T. Manini, A. Z. LaCroix, and R. L. Prentice. 2014. Simultaneous association of total energy consumption and activity-related energy expenditure with risks of cardiovascular disease, cancer, and diabetes among postmenopausal women. Am J Epidemiol 180(5):526-535.