Summary

Science, technology, engineering, and mathematics (STEM) professionals generate a stream of scientific discoveries and technological innovations that fuel job creation and national economic growth. Undergraduate STEM education prepares graduates for today’s STEM professions and those of tomorrow, while also helping all students develop knowledge and skills they can draw on in a variety of occupations and as citizens. However, many capable students intending to major in STEM switch to another field or drop out of higher education altogether, partly because of documented weaknesses in STEM teaching, learning, and student supports. More than 5 years ago, the President’s Council of Advisors on Science and Technology (PCAST) wrote that improving undergraduate STEM education to address these weaknesses is a national imperative.

Many initiatives are now under way to improve the quality of undergraduate STEM teaching and learning. Some focus on the national level, others involve multi-institution collaborations, and others take place on individual campuses. At present, however, policy makers and the public do not know whether these various initiatives are accomplishing their goals and leading to nationwide improvement in undergraduate STEM education. Recognizing this challenge, PCAST recommended that the National Academies of Sciences, Engineering, and Medicine develop metrics to evaluate undergraduate STEM education. In response, the National Science Foundation charged the National Academies to conduct a study of indicators that could be used to monitor the status and quality of undergraduate STEM education over time.

The committee was charged to outline a framework and a set of indicators to document the status and quality of undergraduate STEM education at the national level over multiple years (see Box 1-1 for the full study charge). In Phase I, the committee would identify objectives for improving undergraduate STEM education at both 2-year and 4-year institutions; review existing systems for monitoring undergraduate STEM education; and develop a conceptual framework for the indicator system. In Phase II, the committee would develop a set of indicators linked to the objectives identified in Phase I; identify existing and additional measures to track progress toward the objectives; discuss the feasibility of including such measures in existing data collection programs; identify additional research needed to fully develop the indicators; and make recommendations regarding the roles of various federal and state institutions in supporting the needed research and data collection.

In addressing its charge, the committee focused on national-level indicators. This focus was important because current undergraduate STEM education reform initiatives tend to gather detailed, local data that are useful and appropriate for local feedback and improvement at the individual, departmental, institutional, or system level. However, such detailed data are not adequate for providing a broad, national picture of STEM teaching and learning.

CONCEPTUAL FRAMEWORK FOR THE INDICATOR SYSTEM

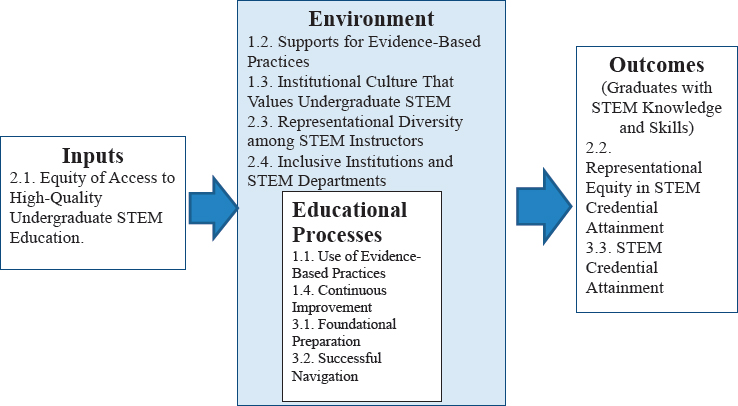

Drawing on organizational theory and research, the committee developed a basic model of higher education: see Figure S-1. The model represents undergraduate education as a complex system comprising four interrelated components: inputs (incoming students); processes (students’ educational experiences inside and outside the classroom); outcomes (including mastery of STEM concepts and skills and completion of STEM credentials); and environment (the structural and cultural features of academic departments and institutions). The environment surrounds and influences the processes and outcomes, and the inputs, processes, and environment all influence student outcomes.

With this model as a framework, the committee considered the current status of undergraduate STEM education and what would be required to improve it.

CONCLUSION 1 Improving the quality and impact of undergraduate science, technology, engineering, and mathematics (STEM) education will require progress toward three overarching goals:

- Goal 1: Increase students’ mastery of STEM concepts and skills by engaging them in evidence-based STEM educational practices and programs.

- Goal 2: Strive for equity, diversity, and inclusion of STEM students and instructors by providing equitable opportunities for access and success.

- Goal 3: Ensure adequate numbers of STEM professionals by increasing completion of STEM credentials as needed in the different disciplines.

As shown in Figure S-1, these goals are interconnected and mutually supportive. They target improvement in various components of the undergraduate education system and interactions among these components that together will enhance students’ success in STEM education, whether they are taking general education courses or pursuing a STEM credential. Some policy makers, parents, and students are particularly concerned about students’ outcomes, especially the employment outcomes included in the third goal. However, attaining this goal is not possible without first attending to the STEM educational processes and environment reflected in the first and second goals. Using evidence-based practices (Goal 1) and striving for equity, diversity, and inclusion (Goal 2) will help to ensure adequate numbers of STEM professionals and prepare all graduates with core STEM knowledge and skills (Goal 3).

NOTE: The conceptual model focuses on objectives for the institutional environment closest to students and instructors. Elements of the larger environment (e.g., parents, employers, state governments) are not included in the model for the purpose of parsimony.

The study was conducted in the context of calls for greater accountability in higher education and ongoing efforts to define and measure higher education quality, both generally and at individual colleges and universities. For example, student learning is a desired educational outcome. This outcome is reflected in the committee’s goal of increasing all students’ mastery of STEM concepts and skills, whether they are taking general education courses or pursuing a STEM credential. Some higher education and professional associations have developed common disciplinary (or general) learning goals, along with assessments of students’ progress toward these goals, to measure quality. However, establishing common learning goals is challenging because STEM is characterized by rapid discoveries, ongoing development of new knowledge and skills, and continual emergence of new subdisciplines and interdisciplinary fields, which result in ongoing changes to learning goals. In engineering, for example, most graduating students take the Fundamentals of Engineering Exam, which is offered in seven different subdisciplines. A common national assessment of core STEM concepts and skills does not exist. Therefore, the committee proposes to monitor progress in student learning through objectives and indicators of the adoption of teaching practices that have been shown by research to enhance student learning.

OBJECTIVES AND INDICATORS

The committee identified 11 objectives to advance the three overarching goals and 21 indicators to measure progress toward these objectives: see Table S-1. The proposed indicators reflect the complexity of the undergraduate education system, which includes a diverse array of student, instructor,¹ departmental, and institutional behaviors, practices, policies, and perceptions. They range from use of evidence-based STEM educational practices both in and outside of classrooms (Objective 1.1) to inclusive environments in institutions and STEM departments (Objective 2.4) to successful navigation into and through STEM programs (Objective 3.2).

Multiple indicators using multiple measures will be needed to fully capture national progress toward each objective. For the purpose of practical feasibility, however, the committee proposes no more than three indicators for each objective. The proposed set of 21 indicators is an important first step for monitoring trends over time in the quality of undergraduate STEM education. In the future, as STEM educational practices evolve, instructional and assessment technology advances, and measurement systems improve, additional indicators may be needed, and some of the proposed 21 indicators may no longer be needed.

___________________

¹ The committee uses the term “instructors” to refer to all individuals who instruct undergraduates, including tenured and tenure-track faculty, adjunct and part-time instructors, and graduate student instructors.

TABLE S-1 Goals, Objectives, and Indicators to Monitor Progress in Undergraduate Science, Technology, Engineering, and Mathematics (STEM) Education

| Conceptual Framework | Objective | Indicator |
|---|---|---|
| Goal 1: Increase Students’ Mastery of STEM Concepts and Skills by Engaging Them in Evidence-Based STEM Educational Practices and Programs | | |
| Process | 1.1 Use of evidence-based STEM educational practices both in and outside of classrooms | 1.1.1 Use of evidence-based STEM educational practices in course development and delivery |
| | | 1.1.2 Use of evidence-based STEM educational practices outside the classroom |
| Environment | 1.2 Existence and use of supports that help STEM instructors use evidence-based educational practices | 1.2.1 Extent of instructors’ involvement in professional development |
| | | 1.2.2 Availability of support or incentives for evidence-based course development or course redesign |
| Environment | 1.3 An institutional culture that values undergraduate STEM instruction | 1.3.1 Use of valid measures of teaching effectiveness |
| | | 1.3.2 Consideration of evidence-based teaching in personnel decisions by departments and institutions |
| Process | 1.4 Continuous improvement in STEM teaching and learning | No indicators: see “Challenges of Measuring Continuous Improvement” in Chapter 2 |
| Goal 2: Strive for Equity, Diversity, and Inclusion of STEM Students and Instructors by Providing Equitable Opportunities for Access and Success | | |
| Input | 2.1 Equity of access to high-quality undergraduate STEM educational programs and experiences | 2.1.1 Institutional structures, policies, and practices that strengthen STEM readiness for entering and enrolled college students |
| | | 2.1.2 Entrance to and persistence in STEM academic programs |
| | | 2.1.3 Equitable student participation in evidence-based STEM educational practices |
| Outcome | 2.2 Representational diversity among STEM credential earners | 2.2.1 Diversity of STEM degree and certificate earners in comparison with diversity of degree and certificate earners in all fields |
| | | 2.2.2 Diversity of students who transfer from 2- to 4-year STEM programs in comparison with diversity of students in 2-year STEM programs |
| | | 2.2.3 Time to degree for students in STEM academic programs |
| Environment | 2.3 Representational diversity among STEM instructors | 2.3.1 Diversity of STEM instructors in comparison with diversity of STEM graduate degree holders |
| | | 2.3.2 Diversity of STEM graduate student instructors in comparison with diversity of STEM graduate students |
| Environment | 2.4 Inclusive environments in institutions and STEM departments | 2.4.1 Students pursuing STEM credentials feel included and supported in their academic programs and departments |
| | | 2.4.2 Instructors teaching courses in STEM disciplines feel supported and included in their departments |
| | | 2.4.3 Institutional practices are culturally responsive, inclusive, and consistent across the institution |
| Goal 3: Ensure Adequate Numbers of STEM Professionals | | |
| Process | 3.1 Foundational preparation for STEM for all students | 3.1.1 Completion of foundational courses, including developmental education courses, to ensure STEM program readiness |
| Process | 3.2 Successful navigation into and through STEM programs of study | 3.2.1 Retention in STEM programs, course to course and year to year |
| | | 3.2.2 Transfers from 2- to 4-year STEM programs in comparison with transfers to all 4-year programs |
| Outcome | 3.3 STEM credential attainment | 3.3.1 Number of students who attain STEM credentials over time, disaggregated by institution type, transfer status, and demographic characteristics |
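Indicator 2.2.1 compares the diversity of STEM credential earners with the diversity of credential earners in all fields. As a rough illustration only, one way such a comparison might be expressed is a ratio of group shares. The function name, group labels, and counts below are hypothetical, and the ratio itself is an assumption made for this sketch rather than a measure defined by the committee.

```python
def representation_ratio(stem_counts, all_counts):
    """For each group, divide its share of STEM credentials by its
    share of credentials in all fields (hypothetical measure)."""
    stem_total = sum(stem_counts.values())
    all_total = sum(all_counts.values())
    if stem_total == 0 or all_total == 0:
        raise ValueError("both populations must be non-empty")
    ratios = {}
    for group, n_all in all_counts.items():
        if n_all == 0:
            continue  # share of all credentials is zero, so the ratio is undefined
        stem_share = stem_counts.get(group, 0) / stem_total
        all_share = n_all / all_total
        ratios[group] = stem_share / all_share
    return ratios


# Made-up counts, for illustration only.
stem = {"group_a": 300, "group_b": 100}
everyone = {"group_a": 600, "group_b": 400}
ratios = representation_ratio(stem, everyone)
```

Under this assumed measure, a ratio near 1 would suggest that a group earns STEM credentials roughly in proportion to its share of all credentials, and a ratio below 1 would suggest underrepresentation among STEM credential earners.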

The committee’s indicators are best viewed in concert with one another. Although an individual indicator can provide a discrete marker of the quality of undergraduate STEM teaching and learning, the conceptual framework tells a more complete story, demonstrating how relationships across indicators facilitate movement toward the identified objectives and goals.

In light of current pressures for institutional accountability, the committee stresses that the primary goal of the indicator system is to allow federal agencies and other stakeholders (e.g., higher education associations) to monitor the status and quality of undergraduate STEM education over time, at the national level. The committee envisions that those overseeing the system might make institutional data accessible to individual institutions, state higher education systems, or groups of institutions for the purpose of monitoring and improving their own programs. Although such accessibility might also allow these users to compare or rate institutions with the goal of holding them accountable, that is not the intended purpose of the committee’s proposed indicator system.

DATA FOR THE INDICATOR SYSTEM

The committee reviewed existing systems and data sources for monitoring undergraduate STEM education, considering whether they were representative of the national universe of students and institutions and could provide current data for the proposed indicators.

Federal Data Sources

The Integrated Postsecondary Education Data System (IPEDS) obtains data through regular annual surveys of 2-year and 4-year, public and private (both for-profit and nonprofit) institutions. The response rate is nearly universal because institutions must respond if they wish to remain eligible for student financial aid. These high-quality current data cover a range of topics related to the committee’s goals and objectives, including detailed annual data on completion of degrees and certificates in different fields of study. However, the IPEDS data focus only on first-time, full-time students who enter and graduate from a given institution. As a result, they provide limited capacity to track students’ increasingly complex pathways into and through STEM programs, including stopping out for a time, transferring across institutions, and enrolling part time.

CONCLUSION 2 To monitor the status and quality of undergraduate science, technology, engineering, and mathematics education, federal data systems will need additional data on full-time and part-time students’ trajectories across, as well as within, institutions.

Although they are conducted less frequently than IPEDS, federal longitudinal surveys of student cohorts, such as the 2004/09 Beginning Postsecondary Students Longitudinal Study conducted by the National Center for Education Statistics (NCES), provide useful data related to the committee’s objectives and indicators. The survey samples are carefully designed to be nationally representative, and multiple methods are used to obtain strong response rates. The resulting data can be used to track students’ trajectories across institutions and fields of study, including STEM fields. Another formerly useful source was NCES’s National Study of Postsecondary Faculty, which provided data on instructors’ disciplinary backgrounds, responsibilities, and attitudes, but it was discontinued in 2004.

CONCLUSION 3 To monitor the status and quality of undergraduate science, technology, engineering, and mathematics education, recurring longitudinal surveys of instructors and students are needed.

The committee found that IPEDS and other federal data sources generally allow data to be disaggregated by students’ race and ethnicity and gender. However, conceptions of diversity have broadened to include additional characteristics of students that may provide unique strengths in undergraduate STEM education and may also create unique challenges. To fully support the indicators, federal data systems will need to include additional student characteristics.

CONCLUSION 4 To monitor progress toward equity, diversity, and inclusion of science, technology, engineering, and mathematics students and instructors, national data systems will need to include demographic characteristics beyond gender and race and ethnicity, including at least disability status, first-generation student status, and socioeconomic status.

Proprietary Data Sources

Many proprietary data sources have emerged over the past two decades in response to growing accountability pressures in higher education. Although not always nationally representative of all 2- and 4-year public and private institutions, some of these sources include large samples of institutions and address the committee’s goals and objectives. They rely on different types of data, including administrative data (also referred to as student unit record data) and survey data. In addition, higher education reform consortia have developed new measures of student progress in undergraduate education that are related to the committee’s goals, objectives, and indicators. One of particular note is a measure of students’ program of study selection, which uses data on students’ first-year course enrollments to identify their intended major. Another measure, transfer rate, captures students who transfer from 2-year programs into longer duration programs at their initial or subsequent institution(s). Some of these measures overlap with the committee’s indicators.
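The program of study selection measure described above infers an intended major from first-year course enrollments. The consortia's actual rules are not given here, so the following is only a minimal sketch under an assumed rule: a student is assigned the field in which they took the most first-year courses, provided they took at least a hypothetical minimum number of courses in it. The function name, the `min_courses` threshold, and the sample enrollments are all illustrative assumptions.

```python
from collections import Counter


def infer_program_of_study(first_year_courses, min_courses=3):
    """Return the field with the most first-year enrollments, or None
    if no field reaches min_courses (an assumed, illustrative rule)."""
    counts = Counter(field for field, _course in first_year_courses)
    if not counts:
        return None
    field, n = counts.most_common(1)[0]
    return field if n >= min_courses else None


# Made-up enrollment records as (field, course) pairs.
enrollments = [
    ("biology", "BIO 101"),
    ("biology", "BIO 102"),
    ("chemistry", "CHEM 101"),
    ("biology", "BIO 110"),
    ("english", "ENG 101"),
]
intended = infer_program_of_study(enrollments)
undecided = infer_program_of_study(enrollments[:3])
```

A real measure would likely need to handle ties, credit hours, and course-to-program mappings, none of which are specified in this summary.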

Data for the Indicators

Based on its review of existing data sources, the committee considered research needs and the availability of data for each of the 21 indicators it proposes. For some indicators, further research is needed to develop clear definitions and measurement approaches, and, overall, the availability of data for the indicators is limited. For other indicators, nationally representative datasets are available, but when those data are disaggregated, first to focus on STEM students and then to focus on specific groups of STEM students, the sample sizes become too small for statistical significance. For other indicators, no data are available from either public or proprietary sources.
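The way sample sizes shrink under successive disaggregation can be shown with a small sketch. It counts records in each cell defined by a set of characteristics and reports the cells that fall below a minimum size. The function name, the record fields, the threshold of 30, and the counts are hypothetical and chosen only for illustration; they do not come from any of the surveys discussed.

```python
from collections import Counter


def small_cells(records, keys, min_n=30):
    """Count records per combination of `keys` and return the cells
    with fewer than min_n records (threshold is an assumption)."""
    counts = Counter(tuple(r[k] for k in keys) for r in records)
    return {cell: n for cell, n in counts.items() if n < min_n}


# A made-up sample of 1,000 students.
records = (
    [{"stem": True, "group": "x"}] * 20
    + [{"stem": True, "group": "y"}] * 180
    + [{"stem": False, "group": "x"}] * 300
    + [{"stem": False, "group": "y"}] * 500
)
by_stem = small_cells(records, ["stem"])
by_stem_and_group = small_cells(records, ["stem", "group"])
```

In this made-up sample, every cell is large enough when the data are split only by STEM status, but splitting again by group leaves one cell of 20 students, which illustrates why larger samples would be needed for finer disaggregation.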

CONCLUSION 5 The availability of data for the indicators is limited, and new data collection is needed for many of them:

- No data sources are currently available for most of the indicators of engaging students in evidence-based educational practices (Goal 1).

- Various data sources are available for most of the indicators of equity, diversity, and inclusion (Goal 2). However, these sources would need to include more institutions and students to be nationally representative, along with additional data elements on students’ fields of study.

- Federal data sources are available for some of the indicators of ensuring adequate numbers of science, technology, engineering, and mathematics professionals (Goal 3). However, federal surveys would need larger institutional and student samples to allow finer disaggregation of the data by field of study and demographic characteristics.

IMPLEMENTING THE INDICATOR SYSTEM

The indicator system’s potential to guide improvement in undergraduate STEM education at the national level can be realized only with new data collection by federal agencies or other organizations. The committee identified three options for obtaining the data needed to support the full suite of 21 indicators.

CONCLUSION 6 Three options would provide the data needed for the proposed national indicator system:

- Create a national student unit record data system, supplemented with expanded surveys of students and instructors (Option 1).

- Expand current federal institutional surveys, supplemented with expanded surveys of students and instructors (Option 2).

- Develop a nationally representative sample of student unit record data, supplemented with student and instructor data from proprietary survey organizations (Option 3).

For Option 1, there are bills pending in Congress to create a national student unit record data system. Such a system would support many of the proposed indicators of students’ progress in STEM programs. However, it would provide data neither for the indicators related to instructors, who play a central role in engaging students in evidence-based educational experiences, nor for those related to practices and perceptions concerning equity, diversity, and inclusion. Thus, supporting the complete set of indicators in this option (as well as in the other two options) would also require regular surveys of students and instructors.

Option 2 would take advantage of the well-developed system of institutional surveys currently used to obtain IPEDS data annually from the vast majority of 2-year and 4-year institutions across the nation. In this option, these surveys would be supplemented with new measures of student progress developed by various higher education reform consortia. The additional IPEDS measures would provide much of the student data needed for the indicator system, supplemented by data from regular surveys of students and instructors.

Option 3, which might be carried out by a federal agency or another entity (e.g., a higher education association), would take advantage of the rapid growth of data collection and analysis by institutions, state higher education systems, and education reform consortia across the country. Many institutions currently provide student unit record data, and new measures of student progress calculated from these data, to a state data warehouse and also to one or more education reform consortia databases. As in Options 1 and 2, additional data from surveys would be needed to support the indicators. In this case, the federal government or other entity would contract with one or more of the survey providers to revise their survey items as needed to align with the committee’s indicators and develop nationally representative samples of public and private 2-year and 4-year institutions and STEM students and instructors.

Many of the proposed indicators represent new conceptions of key elements of undergraduate STEM education to be monitored over time. Some indicators require research as the first step toward developing clear definitions, identifying the best measurement methods, and implementing the indicator system. Following this process, after the system has been implemented and the indicators are in use, it would be valuable to carry out an evaluation study to ensure that the indicators measure what they are intended to measure. At the same time, the structure of undergraduate education continues to evolve in response to changing student demographics, funding sources, the growth of new providers, and potentially disruptive technologies. In light of these changes, it will be important to regularly review, and revise as necessary, the proposed STEM indicators and the data and methods for measuring them.