Among the best practices discussed during the second panel session were guidelines and checklists (see Chapter 4). In this session, panelists delved further into the practical application and effectiveness of guidelines and checklists for enhancing transparent reporting of biomedical research (see Box 5-1 for corresponding workshop session objectives).

Sowmya Swaminathan, head of editorial policy and research integrity at Nature Research, and Malcolm Macleod discussed the impacts of several current checklists and provided an overview of the Minimum Standards Working Group’s development and pilot testing of the materials, design, analysis, and reporting (MDAR) framework and checklist. Shai Silberberg discussed the uptake and effectiveness of checklists and strategies for improving adherence. The session was moderated by Barry Coller, physician in chief, vice president for medical affairs, and David Rockefeller Professor at The Rockefeller University.

To open the panel session, Coller described an early example of the successful use of a checklist from The Checklist Manifesto by Atul Gawande (2009). In 1935, the U.S. Army held a competition to award a contract for production of a new long-range bomber. Boeing’s entry, the Model 299 (later designated the B-17), was superior to the other entries in key areas such as design, payload capacity, and performance. However, during an evaluation flight, the anticipated winner of the contract climbed, stalled, and crashed, killing the pilot and a crewmember. The investigation concluded that the crash was the result of “pilot error due to an unprecedented complexity” of the plane, Coller said. The experienced pilot had failed to release the lock on the elevator and rudder controls, and it was said at the time that the Model 299 was “too much plane for one man to fly,” Coller relayed. Boeing lost the contract and came close to bankruptcy.

Still interested in the technology, the Army purchased several Model 299s and worked with test pilots to improve safety. As the original test pilot was highly trained, it was concluded that additional training was not the answer. The solution they reached, Coller said, was to create a concise, step-by-step checklist for takeoff, landing, and taxiing that would fit on an index card. Ultimately, nearly 13,000 B-17 bombers were built, and pilots logged 1.8 million miles without any further accidents.

Coller listed several of the lessons learned about flying a B-17 safely in 1935 and adapted them to performing and reporting science in 2019. First, he said the alignment of incentives for flying the plane safely is absolute because not flying safely can result in death. For performing and reporting science, the alignment of incentives is “more nuanced and subtle.” Coller described the complexity of flying a plane safely in 1935 as analog (e.g., dials, binary switches), while science today exists in the digital world. Although pilots needed to make many decisions to fly the B-17 safely, the number of decisions was finite; however, he said the number of decisions involved in performing and reporting science today is “virtually infinite.” A B-17 pilot’s dependence on others involved a limited team, while performing and reporting science depends on a greatly expanded universe of others. Finally, Coller said, the dependence on “black boxes” by pilots in 1935 was finite and he noted they could actually “kick the tires.” In science, what happens in the black boxes can be vital, and it is increasingly difficult to know the quality (e.g., an error in one line of code in one algorithm can have far-reaching effects if that algorithm is used widely).1

___________________

1 A black box in the context of the sciences refers to part of a process or pathway between the inputs and the outputs for which the mechanisms are unknown or are not well understood by the user.

CHECKLIST IMPLEMENTATION BY LIFE SCIENCE JOURNALS: TOWARD MINIMUM REPORTING STANDARDS FOR RESEARCH

Sowmya Swaminathan, Head, Editorial Policy and Research Integrity, Nature Research

Malcolm Macleod, Professor of Neurology and Translational Neuroscience, University of Edinburgh

Swaminathan and Macleod described several examples of checklist initiatives leading up to the creation of the Minimum Standards Working Group, a group of journal editors and experts on reproducibility that has developed minimum standards for reporting in life sciences.2

As background, Macleod shared an example of how poor preclinical study quality can lead to bias in published animal studies, with serious implications for translation to clinical trials. The neuroprotective drug NXY-059 was shown to be efficacious in animal studies, but the drug was ineffective in a large clinical trial. A systematic review of the published animal studies revealed that, although the overall animal data supported the efficacy conclusion, the majority of the studies did not report randomization, blinded conduct of the experiment, or blinded outcome assessment. The few studies that were of high quality (randomized and blinded) reported significantly lower treatment efficacy (Macleod et al., 2008).

To understand the scale of the problem, Macleod and colleagues assessed the publications included in the Research Assessment Exercise, which evaluated the quality of research at five leading UK institutions. More than 1,000 publications involving animal research were assessed for their reporting of the four key items recommended by Landis and colleagues as the minimum necessary for transparent reporting: blinding, inclusion and exclusion criteria, randomization, and sample size calculation (Landis et al., 2012). Macleod found that less than 20 percent of the papers reported blinding, 10 percent reported inclusion and exclusion criteria, 15 percent reported randomization, and 2 percent reported power calculations. Overall, he said, 68 percent of the papers assessed reported none of these elements, and only one paper reported all of them. Examples such as these have led to a range of initiatives to improve research, including various guidelines and checklists.

___________________

2 The formation of the working group is described here: https://osf.io/preprints/metaarxiv/9sm4x (accessed December 14, 2019).

Checklists as a Solution

Nature Journals Reporting Checklist for Life Science Papers

In 2013, Nature Research announced the implementation of measures to improve the reporting of life science research published in its journals.3 A key component of this initiative, Swaminathan said, was the development of a reporting checklist that authors are now required to include with their manuscript submission.4 The checklist helps to facilitate more complete reporting of study details, establishes expectations for reporting of statistics, and provides journal policies on the sharing of data and code. The author’s completed checklist is provided to the peer reviewers and journal editors who monitor compliance.

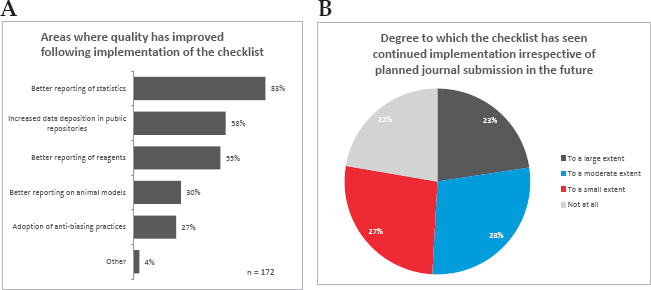

A 2017 survey of authors who had published in Nature journals found that 83 percent of respondents said “the checklist had significantly improved reporting of statistics within papers published in Nature journals,” Swaminathan said. Respondents also perceived improved reporting of reagents and animal models, and increased data deposition in public repositories (see Figure 5-1, panel A). Although the primary goal of implementing the checklist was to improve reporting quality in published papers, Swaminathan said that it was hoped that it might also raise awareness and impact research practice. In this regard, 78 percent of respondents said they continue to use the checklist to some extent in their own work, regardless of planned journal submission (see Figure 5-1, panel B).5

SOURCES: Swaminathan presentation, September 25, 2019, from the 2017 survey of published Nature journal authors (Nature Research, 2018, and footnote 5 below).

___________________

3 See https://www.nature.com/news/polopoly_fs/1.12852!/menu/main/topColumns/topLeftColumn/pdf/496398a.pdf (accessed November 20, 2019).

4 Available at https://media.nature.com/full/nature-assets/ncomms/authors/ncomms_lifesciences_checklist.pdf (accessed November 20, 2019).

5 For complete data and related materials, see https://figshare.com/articles/Nature_Reproducibility_survey_2017/6139937 (accessed November 20, 2019).

Summarizing the experience with the Nature Research journals checklist, Swaminathan said the use of checklists can improve reporting standards and impact research practice, but she emphasized that checklists need to be mandatory and compliance must be monitored. She acknowledged that mandates pose additional burdens for authors, and monitoring compliance is resource intensive. In addition, researchers must contend with a wide diversity of policies from their institutions, funders, and publishers. Journals are “at the end of the process,” she said, and achieving a broad shift in research practice will require initiatives targeting the beginning, within laboratories and academic institutions.

Nature Publishing Group Quality in Publication (NPQIP) Study

Another study, described by Macleod, assessed the impact of the Nature Research reporting checklist for life science papers. The study compared reporting quality in published papers that had been submitted after Nature implemented the policy requiring checklist completion with reporting quality in papers that had been submitted to Nature journals before policy implementation, and with similar papers published in other (non-Nature) journals. Macleod reported that there were substantial increases in reporting of all four of the items identified by Landis (blinding, reporting inclusions and exclusions, randomization, and sample size calculation) after the requirement for checklist submission was implemented by Nature Publishing Group (NPQIP Collaborative Group, 2019). NPQIP demonstrates that “a checklist, on its own, is not enough,” Macleod said.

Minimum Standards Working Group

The Minimum Standards Working Group includes editors and experts in reproducibility from Nature Research, the Public Library of Science, Science/American Association for the Advancement of Science, Cell Press, eLife, Wiley, the Center for Open Science, and the University of Edinburgh. The aim of the working group was to “improve transparency and reproducibility by defining minimum reporting standards in life sciences,” which Swaminathan said includes biological, biomedical, and preclinical research. She added that the working group, assembled in 2017, was inspired by the success of the International Committee of Medical Journal Editors in influencing clinical trial reporting and the impact of the Consolidated Standards of Reporting Trials (CONSORT) checklist.

The working group consulted with external experts and stakeholders and referenced existing journal checklists and policy frameworks (including the Nature Research checklist, Enhancing the QUAlity and Transparency Of health Research [EQUATOR] Network guidelines, and Transparency and Openness Promotion [TOP] guidelines mentioned in Appendix B, and others). The work was also informed by meta-research on the implementation of checklists and the National Academies consensus study reports Reproducibility and Replicability in Science (NASEM, 2019) and Open Science by Design (NASEM, 2018).

The working group issued the following three key outputs:

- A minimum standards framework, which establishes minimum expectations of transparency across the core areas of materials, design, analysis, and reporting;

- A minimum standards checklist, which is an implementation tool to facilitate compliance with the framework; and

- An elaboration document, which provides context for the minimum standards framework and guidance for using the checklist.

The three documents have been publicly released as the MDAR Framework, the MDAR Checklist for Authors, and the MDAR Framework and Checklist Elaboration Document, and Swaminathan encouraged participants to provide feedback.6 The target audiences for the deliverables are journals and publishing platforms, as well as research institutions, funders, and other stakeholders, she said. The framework and checklist are broadly applicable across the research life cycle, from study design and grant submission through to manuscript submission, peer review, and publication, and are also intended as a teaching tool.

MDAR Framework Elements

Swaminathan elaborated on the four reporting categories of the MDAR framework, listing the key elements that the working group identified for each:

- “Materials: biological reagents, lab animals, model organisms, animals in the field, unique specimens

- Design: study/experimental design, protocols, statistics, methodologies, dual-use research consent

- Analysis: data, code, statistics as relevant to analysis

- Reporting: discipline-specific guidelines and standards.”

The framework also discusses two levels of reporting, the “minimum” required level and a recommended “best practice” level, both of which include information on the accessibility and unambiguous identification of the elements reported. By adopting the MDAR framework, Swaminathan said, a stakeholder is committing to incorporate the minimum standards into their policies.

___________________

6 The three key outputs of the Minimum Standards Working Group are available at https://osf.io/xfpn4 (accessed November 20, 2019), https://osf.io/bj3mu (accessed November 20, 2019), and https://osf.io/xzy4s (accessed November 20, 2019), respectively.

MDAR Checklist Pilot Testing

Author and Editor Perceptions Survey

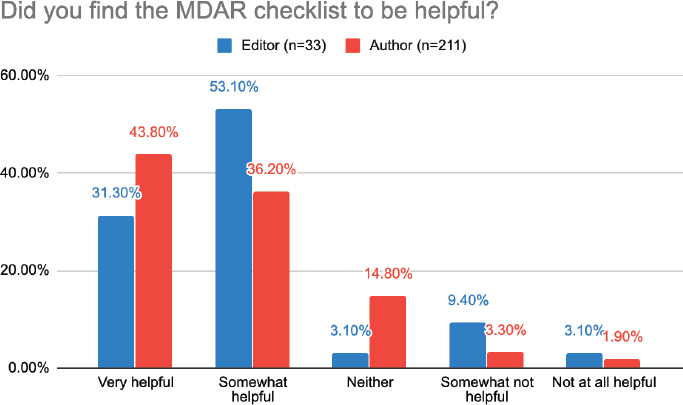

The first objectives of the MDAR pilot test were to collect authors’ and editors’ perceptions of the checklist (e.g., usefulness, accessibility, missing elements, impact on manuscript processing times). Surveys were done of editors from 13 journals,7 and of 211 authors completing checklists (see Figure 5-2). Swaminathan summarized that the majority of authors found the checklist tool to be helpful, with 44 percent of authors responding “very helpful” and 36 percent responding “somewhat helpful.”8 The

NOTE: MDAR = materials, design, analysis, and reporting.

SOURCES: Swaminathan presentation, September 25, 2019. This figure is taken from the presentation, “Summary results of author and editor responses. MDAR working group, September 2019,” available at https://osf.io/znq64 (accessed December 14, 2019).

___________________

7BMC Microbiology, Ecology & Evolution, eLife, EMBO journals, Epigenetics, F1000R, Molecular Cancer Therapeutics, Microbiology Open, PeerJ, PLOS Biology, PNAS, Science, Scientific Reports.

8 Complete data from the author and editor surveys are available at https://osf.io/gqsmp (accessed November 20, 2019).

majority of editors also found the MDAR checklist helpful, with 31 percent of editors responding “very helpful” and 53 percent responding “somewhat helpful.”

Evaluation of the MDAR Checklist Experience

Macleod described another evaluation of 289 manuscripts submitted to the same 13 journals. On average, editors spent 24 minutes assessing checklist performance. Only 15 of the 42 items on the checklist were relevant for more than 50 percent of the manuscripts. Macleod noted that this is not unexpected for an overarching guideline. For example, he said, a checklist item about plant-based research would not be relevant to studies that do not involve plants. Editors assessed the relevance of checklist items in the areas of materials, design, analysis, and reporting, and the extent to which they believed authors had complied. Macleod observed that there were many areas where assessors believed an item was highly relevant, but determined that few authors were reporting those items, and vice versa, where items were deemed to be irrelevant, but were highly reported.

Eighty-nine of the manuscripts were dual-evaluated by two independent assessors and agreement was determined using Kappa statistics (which Macleod explained subtracts chance agreement). Assessors agreed on the relevance of some checklist items, but for others “the agreement was not much better than [it] is by chance alone,” Macleod said. Similarly, for checklist items that both assessors agreed were relevant, there was not necessarily agreement on whether the manuscript had met the guideline criteria for reporting of the item. He noted that the confidence intervals for the Kappa scores were wide.
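To make the idea of subtracting chance agreement concrete, the following minimal sketch computes Cohen's kappa for two assessors judging whether a checklist item is relevant. It is an illustration only: the function name and the ratings are invented for this example and are not MDAR pilot data, and this summary does not specify which kappa variant the evaluation used.

```python
from collections import Counter


def cohen_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two raters: (observed - expected) / (1 - expected).

    'expected' is the agreement the two raters would reach by chance,
    given how often each of them uses each category.
    """
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("both raters must rate the same non-empty set of items")
    n = len(ratings_a)
    # Proportion of items on which the two raters gave the same rating.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    counts_a = Counter(ratings_a)
    counts_b = Counter(ratings_b)
    categories = counts_a.keys() | counts_b.keys()
    # Chance agreement: product of each rater's marginal rate, summed over categories.
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if expected == 1.0:
        # Both raters used a single identical category; kappa is undefined.
        return float("nan")
    return (observed - expected) / (1 - expected)


# Hypothetical ratings for ten manuscripts (not real pilot data).
assessor_1 = ["relevant"] * 6 + ["not relevant"] * 4
assessor_2 = ["relevant"] * 4 + ["not relevant"] * 4 + ["relevant"] * 2

kappa = cohen_kappa(assessor_1, assessor_2)
# Raw agreement is 0.60, chance agreement is 0.52, so kappa is about 0.17.
print(f"kappa = {kappa:.2f}")
```

In this invented case the assessors agree on 60 percent of manuscripts, yet kappa is only about 0.17 because 52 percent agreement would be expected by chance, which mirrors the pattern of agreement "not much better than" chance.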

MDAR Experience Summary and Next Steps

Macleod summarized that “authors and editors seem to like the checklist and find it useful,” and “the time taken to check performance is short.” He suggested that spending more time per checklist item might have resulted in greater agreement among the dual assessors. Not all checklist items are relevant at all times, and a “dynamic checklist” that offers fields relevant for a particular journal, for example, might be useful. Agreement between assessors was limited, and confidence intervals were wide, but the areas of disagreement should be highlighted as areas to focus on with regard to clarity of the checklist item and the information provided in the elaboration and explanation document.
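One way to picture the “dynamic checklist” idea is as a filter that shows only the items relevant to a given study. The sketch below is a hypothetical illustration: the item wording, the feature tags, and the `relevant_items` helper are all assumptions made for this example and are not taken from the MDAR checklist itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChecklistItem:
    section: str      # one of the four MDAR areas
    prompt: str       # hypothetical wording, not the real MDAR text
    applies_to: frozenset = frozenset()  # empty set means "always shown"


# Hypothetical items for illustration only.
ITEMS = [
    ChecklistItem("Materials", "Describe laboratory animals used", frozenset({"animals"})),
    ChecklistItem("Materials", "Describe plants used", frozenset({"plants"})),
    ChecklistItem("Design", "State whether randomization was used"),
    ChecklistItem("Analysis", "Describe the statistical tests used"),
]


def relevant_items(items, study_features):
    """Return items that are always shown or that match a declared study feature."""
    features = set(study_features)
    return [item for item in items if not item.applies_to or item.applies_to & features]


# A study that involves animals but no plants never sees the plant item.
for item in relevant_items(ITEMS, {"animals"}):
    print(f"[{item.section}] {item.prompt}")
```

The same filter could be keyed to a journal's scope rather than to study features; either way, assessors would spend their time only on items that apply.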

The next steps in the development of the MDAR checklist, Macleod and Swaminathan said, will be a consultation with key stakeholders and interested individuals, and revisions of the checklist and supporting materials per the feedback.

APPROACHES TO IMPROVE ADHERENCE TO CHECKLISTS AND GUIDELINES9

Shai Silberberg, Director of Research Quality, National Institute of Neurological Disorders and Stroke

By definition, Silberberg said, a guideline is “a general rule, principle, or piece of advice.” In other words, a guideline is a suggestion to be taken into consideration for the longer term, he said, and guidelines are not generally effective at changing behavior. A checklist, by definition, is “a list of items required, things to be done, or points to be considered, used as a reminder.” Checklists are for the specific task at hand, he said.

Less Is More

A systematic review published in 2012 compared the completeness of reporting of randomized controlled trials in journals that had endorsed CONSORT versus those that had not (Turner et al., 2012). The most significant difference found was for the checklist item allocation concealment, which was reported adequately in 45 percent of the trials in the journals that endorsed CONSORT versus 22 percent of the trials in non-endorsing journals. While a doubling of the reporting rate is an impressive improvement, Silberberg pointed out that more than 50 percent of the trials published in the CONSORT-endorsing journals did not follow the guidelines. He suggested that the length of the checklist is a contributing factor to this noncompliance. Allocation concealment is very important for reducing selection bias, but it is just one of 38 items on the 2010 CONSORT checklist. When faced with a long checklist, Silberberg said, important items can be overlooked.

“Less can be more,” Silberberg said, and he discussed the need to stage priorities. The CONSORT checklist was implemented more than two decades ago, and yet complete reporting of trial information is still lacking. He suggested a more concise checklist, “a minimum set of items that are the most crucial not to ignore.” After researchers are trained in the highest priority elements and have adopted the desired behaviors, they should then implement the next set of priority items, and so forth, continuing to stage introduction over the longer term, he suggested.

___________________

9 Silberberg stated that the opinions expressed in his presentations are his own and are not official opinions of the National Institutes of Health.

As recommended by Landis and colleagues, “At a minimum, studies should report on sample size estimation, whether and how animals were randomized, whether investigators were blind to the treatment, and the handling of data” (Landis et al., 2012, p. 187). Silberberg said there is a high risk of bias associated with these items and they form the foundation of a rigorous study. He emphasized that if the data are of poor quality, then all that follows (analysis, reporting) is of little value.

Shared Responsibility for Cultural Change

Silberberg noted that the Landis publication on transparent reporting was an output of a 2012 National Institute of Neurological Disorders and Stroke (NINDS) stakeholder workshop on improving the design and reporting of animal studies (Landis et al., 2012). Another conclusion of the 2012 NINDS workshop, he said, was that all stakeholders share responsibility. He observed that the discussions at this National Academies workshop have also emphasized the roles of all stakeholders, and he encouraged participants to ask themselves what they can contribute to creating change and promoting transparent reporting.

The need for change in the research culture has been raised throughout this National Academies workshop, Silberberg said, and changing the culture requires education and a change to the incentive structure. NINDS convened a workshop in 2018 to evaluate the extent to which scientists receive formal training in the design and conduct of research.10 A survey of 41 institutions with NINDS-funded training grants found that only 5 offered a full-length course on the principles of rigorous research, and Silberberg said that all of the elements of rigorous research cannot be covered in one course. Other institutions provided lectures (17) or mini-courses (2), but 12 provided no formal training (and 5 did not respond to the survey).

In considering why so few institutions provide formal training in research principles, Silberberg suggested that building an educational program “from scratch” is difficult and requires a significant investment of time, knowledge and expertise, motivation, and funding. He proposed the development of a free educational resource that institutions could use for their own training programs, eliminating the need to invest energy and resources in creating their own. The resource would be comprehensive, modular, adaptable, and upgradable.

___________________

10 See https://www.ninds.nih.gov/News-Events/Events-Proceedings/Events/Visionary-Resource-Instilling-Fundamental-Principles-Rigorous (accessed November 20, 2019).

Creating Communities of Champions

Silberberg summarized the recommendations from the 2018 NINDS workshop. First, “an effective educational platform should target all career stages.” Silberberg noted that the first panel of this National Academies workshop emphasized the importance of targeting trainees and early career scientists in particular. He added that many senior investigators also need training on the principles of rigorous research. Next, a culture change is needed at all levels of academic, publishing, and funding organizations. This includes a change to the incentive structure. Third, “academic institutions need to play a proactive role in changing the culture,” Silberberg said. He noted that institutions are often missing from the discussions of research culture, and tenure, promotion, and hiring committees within institutions play a central role in the research culture.

Attendees at the 2018 NINDS workshop suggested that to achieve these goals, a “grassroots effort” is needed, Silberberg said. They called for “the establishment of communities of champions within and across institutions to share resources, change culture, and support better training at all academic levels.”

Each stakeholder organization has champions for culture change, Silberberg said. To bring them together, NINDS has created a mechanism on its website for champions of rigorous research practices to self-identify and connect with others in their institution.11 NINDS is currently considering how best to support interactions of these communities of champions with others regionally, nationally, and even globally.

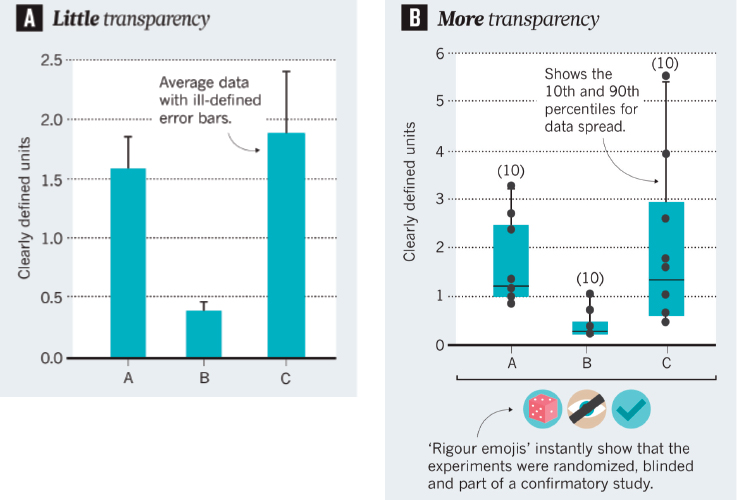

In closing, Silberberg shared an example of how communities of champions can foster improved transparency of presentations at scientific meetings (Silberberg et al., 2017). A short conference talk or poster does not generally allow for sufficient depth of information for attendees to have a sense of the rigor of the work. One approach to increasing transparency, Silberberg said, is to provide more detail in figures (e.g., individual data points, total number of samples). Another approach, he said, is to add symbols or “rigor emojis” to the figures to indicate that the study was, for example, randomized, blinded, or confirmatory (see Figure 5-3).

___________________

11 See https://www.ninds.nih.gov/Current-Research/Trans-Agency-Activities/Rigor-Transparency/RigorChampionsAndResources (accessed November 20, 2019).

SOURCES: Silberberg presentation, September 24, 2019; Silberberg et al., 2017.

DISCUSSION

Motivating Action: Champions for Culture Change

During the discussion, panelists expanded on the topic of the need for champions of culture change, including the need for grassroots efforts and what motivates stakeholders to take action.

Institutional Leadership

Coller observed that, although the focus of the workshop is transparent reporting, it has been noted throughout the discussions that reporting is the end of the process, and there should be more attention to improving the rigor of research from the start. He asked panelists what institutional leaders should be doing to promote rigorous science. He supported the concept of a community of champions, as discussed by Silberberg, and proposed the creation of a “research integrity advocate” that would be comparable to the research participant advocate position established at NIH-funded clinical research centers. The research integrity advocate could be the institutional champion tasked with promoting culture change, he said.

Macleod noted that many of the institutions that have established practices to promote a rigorous research culture have been highly motivated by the need to address an incident of research malpractice. He suggested that focusing efforts primarily on addressing misconduct and preventing falsification, fabrication, and plagiarism is the wrong approach, and institutions should instead emphasize improving research performance broadly for the benefit of all.

Silberberg countered the comment that reporting is the end of the process. Science is a continuous cycle, he said, and journals might be at the end of one round, but they are the beginning of the next round in that publications are then used to justify the next grant proposal or the next research plan. He agreed that journals are not responsible for enforcing rigorous science, but they have a role to play in increasing transparency. As an example, he mentioned the checklist required by Nature journals that allows peer reviewers to better assess the rigor of the study being reported. He suggested that major stakeholders are often hesitant to be the first to take action because of the potential financial ramifications. For example, university leadership will continue to push investigators to publish frequently in high-profile journals, potentially at the expense of rigor, because that is what is valued and rewarded by grant reviewers. He reiterated the need for champions and grassroots initiatives to push for change by all the major stakeholders.

Grassroots Stakeholder Efforts

As an example of a grassroots approach to championing rigorous science, Macleod mentioned the UK Reproducibility Network. The network includes self-organized local groups of early career researchers who connect for mentoring and journal clubs that promote openness and reproducibility; stakeholders (e.g., journals, funders); and academic institutions. Macleod added that academic institutions seeking to join must formally commit to promoting rigorous research and must appoint an Academic Lead for Research Improvement, a senior-level position. Joining the UK Reproducibility Network, he said, provides a mechanism for local communities of early career researchers and their institutions to commit to creating an improved research culture without waiting to be motivated by a public misconduct scandal.

Kelly Dunham, senior manager for strategic initiatives at the Patient-Centered Outcomes Research Institute (PCORI), described the Ensuring Value in Research Funders’ Forum as an example of a grassroots stakeholder effort.12 The forum, established in 2016, includes about 40 funding organizations and has issued a consensus statement and guiding principles intended to maximize the probability of impact of the research funded, she explained. The forum is working to “characterize what good practice looks like,” she said, and to develop minimum standards and recommendations for the larger biomedical research ecosystem. She said there is occasional pushback from some funders that a particular approach is not practicable, and she emphasized the importance of sharing examples of successes and learning from each other. Dunham said funders can play a role in ensuring the results of the studies they fund are made publicly available, and PCORI has taken on the responsibility of providing transparent and well-documented results to the public. PCORI has a process of peer review for all of its funded research and publicly releases lay summaries and clinical summaries of studies.

Cindy Sheffield, project manager of the Alzheimer’s Disease Preclinical Efficacy Database (AlzPED) for NIH, referred participants to AlzPED, which she said currently includes about 900 articles “that have been evaluated for 24 elements of experimental design.”13 A goal of AlzPED is to create awareness and work toward changing the culture. She said that although they cannot evaluate rigor and transparency quantitatively, they do check and record in the database whether the elements of design are reported or not.

The Role of the Investigator

Swaminathan observed that stakeholders are increasingly aware of the problem of irreproducibility and seem interested in taking action. She noted that a survey of researchers found that they believe researchers are responsible for addressing issues of reproducibility, but a supportive institutional infrastructure (e.g., training, mentoring, funding, publishing) is needed.

Silberberg said a senior scientist can have difficulty accepting that the work they have done over the past several decades might not have been of the highest quality. Coller added that having buy-in from senior investigators is essential to effect culture change, and senior investigators are needed as champions as well. Training grants are important, he said, but institutional culture is not defined by trainees and early career investigators. Arturo Casadevall called on the scientific elite to step up and require rigorous research. “Most scientists today want to do rigorous good science, and the problem is they are caught in a system in which they are not judged by it,” he said. As discussed, much of the effort to change the culture of research has been from editors and grassroots efforts, he said, and few leading scientists have taken a stand on this issue. He lamented that the most respected scientific leaders “are often the most silent,” and are hesitant to criticize the system through which they have come up.

___________________

12 See https://sites.google.com/view/evir-funders-forum/home (accessed November 20, 2019).

13 See https://alzped.nia.nih.gov (accessed November 20, 2019).

Cross-Sector Coordination

John Gardinier agreed with the emphasis on changing the research culture and noted in particular the need to address the impact of silos in research, including the potential for conflicting information being published by different scientific disciplines. Macleod said he had experience with different disease research communities each asserting that the others had issues with research rigor, but that their own community did not. He said he encourages them to do a systematic review of the quality of reporting in their field, and many come to the conclusion that they do, in fact, have a problem.

Checklists and Study Design

Swaminathan said there is still a lack of general consensus regarding what is a “good study.” She said she and others believe the four items recommended by Landis and colleagues “not only should be reported, but should be incorporated into study design.” Most studies, however, do not incorporate these elements, and if these elements are reported in a publication, it is generally due to an enforcement and compliance mechanism. Coller agreed and observed that there is often agreement on what constitutes “bad science,” and suggested that building consensus on what counts as bad research practice might be a place to start.

Veronique Kiermer said that the ultimate goal is conducting well-designed studies, and that addressing study design through the implementation of a reporting checklist is a “very convoluted” approach. However, she was impressed by Swaminathan’s data that showed researchers were continuing to use the reporting checklists in their ongoing work, suggesting that checklists do have an educational aspect. Macleod said institutions can assess how their research measures up against a checklist, then work to improve in areas that are deficient, and reward the investigators who contribute to that improvement with promotion and tenure. Steven Goodman said the checklists discussed are not user friendly. He supported the concept of prioritizing key items and proposed pilot testing checklists to determine what might be useful for end users.

Another problem, as illustrated by dual evaluation of the MDAR checklist discussed by Macleod, is the lack of agreement by checklist assessors on the compliance of a manuscript. Swaminathan emphasized the need for better coordination of concepts and language across the different stakeholders and different stages of the research process. She noted that a goal of the Minimum Standards Working Group was to establish a minimum standard that would be applicable across the research life cycle. Macleod noted that there have been efforts to coordinate the language between MDAR and the Animal Research: Reporting of In Vivo Experiments (ARRIVE) guidelines so that relevant sections are interoperable, and that tools are in development to automate assessment of checklists. He added that disagreement among assessors and peer reviewers on whether a paper meets a particular standard is often related more to the assessor’s lack of understanding of the concepts (e.g., the unit of assessment, biological versus technical replicates) than the language used in the checklist instrument. Swaminathan agreed and said in implementing the Nature Research checklist, for example, they found that authors conflated the experimental unit with the number of times an experiment had been replicated, presented aggregate data from multiple experiments as if from a single experiment, and confused technical and biological replicates. The extent to which this occurred varied by field, more so in fields “that are inherently qualitative and descriptive, but that as science has evolved, have been forced into a quantitative mold.”
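As an illustration only, disagreement between two checklist assessors of the kind described above can be quantified with a chance-corrected agreement statistic. The sketch below uses Cohen's kappa, which is an assumption made for this example and not a method attributed to the panelists; the function name and the ratings are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who scored the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Proportion of items on which the two raters gave the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        # Both raters used a single identical label for every item.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical data: did each of eight manuscripts meet one checklist item?
assessor_1 = ["yes", "yes", "no", "yes", "no", "no", "yes", "yes"]
assessor_2 = ["yes", "no", "no", "yes", "no", "yes", "yes", "yes"]

kappa = cohens_kappa(assessor_1, assessor_2)
print(f"Cohen's kappa: {kappa:.2f}")
```

A value near 1 indicates agreement well beyond chance, and a value near 0 indicates agreement no better than chance. A low value alone would not show whether the cause is the checklist wording or the assessors' understanding of the underlying concepts.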

Assessment and Accountability

Yarimar Carrasquillo observed that the discussions have focused on training for students and early career investigators and on checklists for reporting studies, and suggested that institutions and funding agencies need to also implement checkpoints between those stages. Just because training requirements have been met does not mean investigators continue to practice the principles. For example, institutions and funding agencies could assess and report whether trainees are actually conducting experiments that incorporate the four items recommended by Landis. Silberberg said the NIH peer-review process now requires investigators to discuss the rigor of the prior research they are citing as key support of their application. As researchers often cite their own prior work, this necessitates that they acknowledge the shortcomings. He reiterated that research is cyclical, and researchers will come to understand that it is to their advantage to conduct rigorous studies that they can then cite in their next grant application. Carrasquillo emphasized the need for accountability, and proposed a quantitative approach, with the results taken into account in funding renewal and promotion evaluations. For example, investigators could be required to report what percentage of a laboratory’s studies included blinding, inclusion–exclusion criteria, randomization, and sample size calculation, and could note the reason why an element might not have been done for a particular study. Silberberg noted the limitations of a quantitative approach; for example, not all experiments can or should be blinded, and the quantitative aspect is lost once investigators can offer an explanation for each study that does not comply. He added that different standards would need to apply to exploratory versus hypothesis-testing or confirmatory studies. Coller pointed to the importance of mentorship and the need to take into account “the subtle distinctions” that do not fit into a cell on a spreadsheet, but that are important in the evaluation process. Goodman proposed evaluating scientists and institutions at both ends of the performance spectrum, “not just on their best research, but by their worst.” In other words, he elaborated, it would be important to acknowledge when an investigator’s “worst” research is still of high quality. If there were some quantification, it would be understood that some research would likely fall at the bottom of the quality scale. However, a few high-impact studies would not balance an overall portfolio of “ignorable” work, he said.
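The tally Carrasquillo proposed, the percentage of a laboratory's studies that included each of the four elements along with any stated reason for an omission, can be sketched in a few lines. This is a minimal illustration under stated assumptions: the record layout, field names, and study data below are hypothetical and do not come from the workshop.

```python
# The four elements named in the discussion (field names are hypothetical).
ELEMENTS = (
    "blinding",
    "inclusion_exclusion_criteria",
    "randomization",
    "sample_size_calculation",
)


def element_rates(studies):
    """Percentage of studies that included each element."""
    if not studies:
        raise ValueError("at least one study is required")
    total = len(studies)
    return {
        element: 100.0 * sum(1 for s in studies if s.get(element, False)) / total
        for element in ELEMENTS
    }


def stated_reasons(studies):
    """List (study id, element, reason) for every omitted element."""
    rows = []
    for study in studies:
        for element in ELEMENTS:
            if not study.get(element, False):
                reason = study.get("reasons", {}).get(element, "no reason given")
                rows.append((study["id"], element, reason))
    return rows


# Hypothetical laboratory portfolio of four studies.
studies = [
    {"id": "study-1", "blinding": True, "inclusion_exclusion_criteria": True,
     "randomization": True, "sample_size_calculation": False,
     "reasons": {"sample_size_calculation": "exploratory pilot"}},
    {"id": "study-2", "blinding": False, "inclusion_exclusion_criteria": True,
     "randomization": True, "sample_size_calculation": False,
     "reasons": {"blinding": "treatment visibly alters the animals"}},
    {"id": "study-3", "blinding": True, "inclusion_exclusion_criteria": True,
     "randomization": False, "sample_size_calculation": True},
    {"id": "study-4", "blinding": True, "inclusion_exclusion_criteria": True,
     "randomization": True, "sample_size_calculation": True},
]

rates = element_rates(studies)
reasons = stated_reasons(studies)
for element, rate in rates.items():
    print(f"{element}: {rate:.0f}%")
```

Keeping the stated reasons alongside the percentages reflects Silberberg's caution that a bare number cannot distinguish a justified omission from an unjustified one.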

Macleod said the EQIPD project is developing a quality management system that will allow laboratories to self-evaluate, implement measures to improve performance (e.g., designate a quality improvement champion, develop a strategy), and self-assert that their performance is in compliance with the requirements of the scheme. A laboratory will then have a badge as evidence of their performance level, which can be used when applying for grants, submitting manuscripts, or recruiting, for example. The system will be open source and will link supporting resources, including templates.

Training in Systematic Review

Goodman suggested that trainees and young investigators need to be empowered with the skills to conduct methodologic meta-research. Journal clubs, he said, can be an opportunity to identify methods that could be systematically reviewed in depth. This would give students an opportunity to potentially publish a paper, but it also allows them to contribute to improving methodology in their own field, he said. Students become “sensitized to the weaknesses” in the literature in their field and are empowered and motivated to contribute to change. Macleod said the doctoral program in neuroscience at the University of Edinburgh is doing this by having small groups of students conduct a systematic review of the models they will use in their laboratory research. He shared an example of a student who had then applied this in her work.

A participant from the NIH Division of Biomedical Research Workforce said that changes are forthcoming in 2020 that are designed to ensure that NIH training grants include resources for training in rigor, reproducibility, and data science.

Reporting Metadata

Anne Plant, a fellow at the National Institute of Standards and Technology, emphasized the importance of recording and reporting metadata to help deal with the uncertainty around the data. “A measurement consists of two things,” she said, “a value … and the uncertainty around that value.” There is uncertainty in each step of the research process, and while steps can be taken to reduce uncertainty, it is never eliminated. Reporting the metadata as well as the meta-analyses, and actions taken to reduce the uncertainty in the data that are collected and reported, allows researchers to “know what is known,” and with what level of confidence. Coller pointed out that using an electronic notebook provides version control and audit trails and suggested that notebooks could be made available as supplementary material to a publication. Plant said researchers need a tool that would allow them to easily capture and collate all of the metadata around their protocol in real time, not after the fact.

Including Other Stakeholders

Coller prompted participants to consider other stakeholders that should be included in the discussions of transparency and reproducibility. He asked whether a checklist might help those who report on scientific advances to be “better informed about how they write about science,” or whether a checklist could help the general public better judge the quality and understand the uncertainties of the many studies in the news.

The Press

Macleod mentioned that the UK government inquiries into the practices of the British press included inquiries into press coverage of scientific issues. Reports about what causes or cures a disease one day often contradict what was reported the previous day. During the inquiries, Macleod said, it was found that the content of news articles was often taken directly from press releases issued by research institutions. While there are issues to be addressed regarding press coverage of scientific information, he said much of the responsibility for what is reported in the press lies directly with research institutions.

Biomedical Research Investors

Macleod suggested that another stakeholder group in need of quality information about biomedical research is investors. “Those that invest in our pharmaceutical industry are completely uninformed, unaware, and unconcerned about the quality of the biomedical research endeavor,” he said. Referring to his earlier example of NXY-059, which was effective in animal models but failed in a large clinical trial, he said that the manufacturer’s share price fell by 17 percent, a value of $9.6 billion, over the 2 days after publication of the study results, and it took 7 years to recover.

The Pharmaceutical Industry

Silberberg said another stakeholder is the pharmaceutical industry. He noted that the EQIPD project is a good example of how academia and industry can work together to share data, resources, and expertise to advance product development.

Public Health

Gardenier identified public health as another community with a stake in the quality of biomedical research. Caregivers, community hospital groups, nursing homes, and others in public health administer the benefits of biomedical research to the public.

Evaluating Quality Initiatives

Macleod stressed the importance of evaluating research quality initiatives to show that an intervention is achieving the intended outcome. Depending on the type of intervention, a manufacturing process control chart could be used to monitor change, or a randomized controlled trial might be needed to determine benefit. A challenge, he said, is that vocabulary and methodology do not yet exist for this type of “research on research.” He noted the need to proceed cautiously and in a scientific way when “demanding our colleagues and peers change their practice.” He suggested developing the science and methodology and collecting evidence of the impact of interventions to effect lasting change to research practice.
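One common form of the process control chart Macleod mentioned is a p-chart, which tracks a proportion over successive periods against three-sigma limits. The sketch below is illustrative only: the choice of a p-chart, the function name, and the monthly counts are assumptions for this example, and the limits are computed from all periods at once, whereas practice often derives them from a baseline period.

```python
import math


def p_chart(compliant, totals):
    """Center line and three-sigma control limits for a proportion."""
    if len(compliant) != len(totals) or not totals:
        raise ValueError("inputs must be non-empty and of equal length")
    # Center line: overall proportion across all periods.
    p_bar = sum(compliant) / sum(totals)
    points = []
    for count, n in zip(compliant, totals):
        sigma = math.sqrt(p_bar * (1.0 - p_bar) / n)
        lower = max(0.0, p_bar - 3.0 * sigma)
        upper = min(1.0, p_bar + 3.0 * sigma)
        p = count / n
        points.append({
            "p": p,
            "lower": lower,
            "upper": upper,
            "out_of_control": p < lower or p > upper,
        })
    return p_bar, points


# Hypothetical counts: manuscripts per month that reported randomization,
# out of 40 assessed each month; an intervention starts in the fifth month.
compliant = [10, 12, 11, 9, 30]
totals = [40, 40, 40, 40, 40]

p_bar, points = p_chart(compliant, totals)
flags = [pt["out_of_control"] for pt in points]
for month, pt in enumerate(points, start=1):
    marker = " <- outside limits" if pt["out_of_control"] else ""
    print(f"month {month}: p={pt['p']:.3f}{marker}")
```

A point outside the limits signals a change larger than routine variation would explain. It does not by itself establish that the intervention caused the change, which is where a randomized controlled trial might be needed.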