13

From Perception to Pleasure: Music and Its Neural Substrates

ROBERT J. ZATORRE*‡ AND VALORIE N. SALIMPOOR*†

Music has existed in human societies since prehistory, perhaps because it allows expression and regulation of emotion and evokes pleasure. In this review, we present findings from cognitive neuroscience that bear on the question of how we get from perception of sound patterns to pleasurable responses. First, we identify some of the auditory cortical circuits that are responsible for encoding and storing tonal patterns and discuss evidence that cortical loops between auditory and frontal cortices are important for maintaining musical information in working memory and for the recognition of structural regularities in musical patterns, which then lead to expectancies. Second, we review evidence concerning the mesolimbic striatal system and its involvement in reward, motivation, and pleasure in other domains. Recent data indicate that this dopaminergic system mediates pleasure associated with music; specifically, reward value for music can be coded by activity levels in the nucleus accumbens, whose functional connectivity with auditory and frontal areas increases as a function of increasing musical reward. We propose that pleasure in music arises from interactions between cortical loops that enable predictions and expectancies to emerge from sound patterns and subcortical systems responsible for reward and valuation.

_____________

*Montreal Neurological Institute, McGill University, Montreal, QC, Canada H3A 2B4; and †Rotman Research Institute, Baycrest Centre, University of Toronto, Toronto, ON, Canada M6A 2E1. ‡To whom correspondence should be addressed. E-mail: robert.zatorre@mcgill.ca.

Some 40,000 years ago, a person—a musician—picked up a vulture bone that had delicately and precisely incised holes along its length and blew upon it to play a tune. We know this thanks to recent remarkable archeological finds (Fig. 13.1) near the Danube, where several such flutes were uncovered (Conard et al., 2009). What bears reflection here is that, for an instrument to exist in the upper Paleolithic, music must have already existed in an advanced form for many thousands of years already; else it would have been impossible to construct something as technologically advanced as a flute that plays a particular scale. We may safely infer therefore that music is among the most ancient of human cognitive traits.

MUSICAL ORIGINS

Knowing that music has ancient origins is important in establishing it as part of our original “human mental machinery,” but it does not tell us why it may have developed. The answer to this question may always remain unknown, but for insight we may turn to Darwin. One of his most well-known comments about music, from The Descent of Man, is this one: “As neither the enjoyment nor the capacity of producing musical notes are faculties of the least direct use to man in reference to his ordinary habits of life, they must be ranked among the most mysterious with which he is endowed” (Darwin, 1871). Ten years later, in his autobiography, he reflected on and lamented his own musical anhedonia with these words: “if I had to live my life again, I would have made a rule to read some poetry and listen to some music at least once every week; for perhaps the parts of my brain now atrophied would thus have been kept active through use. The loss of these tastes is a loss of happiness, and may possibly be injurious to the intellect, and more probably to the moral character, by enfeebling the emotional part of our nature” (Darwin, 1887). This insightful remark contains a possible answer to the mystery alluded to in the earlier quote, for here Darwin articulates a thought that most people would intuitively agree with: that music can generate and enhance emotions, and that its loss results in reduced happiness. He even goes so far as to suggest that music might serve to prevent atrophy of neural circuits associated with emotion, an intriguing concept.

FIGURE 13.1 Ancient bone flute. The flute, made from the radius bone of a vulture, has five finger holes and a notch at the end where it was to be blown; fine lines are precisely incised near the finger holes, probably reflecting measurements used to indicate where the finger holes were to be carved. Radiocarbon dating indicates it comes from the Upper Paleolithic period, more than 35,000 years ago. Adapted from Conard et al. (2009) with permission from Macmillan Publishers, copyright 2009.

Enhancement, communication, and regulation of emotion no doubt constitute powerful reasons for the existence, and possibly for the evolution, of music, a topic that others have addressed more specifically than we will here (Wallin et al., 2000; Hauser and McDermott, 2003; Mithen, 2005). Such lines of inquiry will not tell us why music might have such properties, however. Music is the most abstract of arts: its aesthetic appeal has little to do with relating events or depicting people, places, or things, which are the province of the verbal and visual arts. A sequence of pitches—such as might have been produced by an ancient flute—concatenated in a certain way, cannot specifically denote anything, but can certainly result in emotions. Psychological models suggest a number of distinct mechanisms associated with the many different emotional responses that music can elicit (Juslin and Sloboda, 2001). However, in the present contribution, we focus specifically on a particular aspect of musically elicited affective response: pleasure. Because pleasure and reward are linked, and there is a vast literature concerning the neural basis for reward, studying musical pleasure gives us a set of hypotheses that serve as a framework for studying what might otherwise appear as an intractable question. To understand how we get from perception to pleasure, we therefore start with an overview of the perceptual analysis of musical sounds, and then move to the neurobiology of reward, before attempting a synthesis of the two.

NEUROBIOLOGY OF MUSICAL COGNITION

In thinking of how evolution may have specifically shaped the human auditory system, we should consider what is most characteristic of the way we use sound. One obvious feature that stands out is that humans use sound to communicate cognitive representations and internal states, including emotion. Both speech and music can be thought of in this way (Patel, 2008), and one could go so far as to say they constitute species-specific signals. However, unlike the call systems of other species, ours is generative and highly recursive; that is, complex structures are created out of a limited set of primitives in a combinatorial manner by the application of syntactic rules. An important property of both speech and music that is relevant to their innate nature is that they appear in the vast majority of members of the species fairly early in development, following a relatively fixed sequence, and taking as their input sounds from the immediate environment. A neural architecture must therefore exist such that it allows for these capacities to emerge.

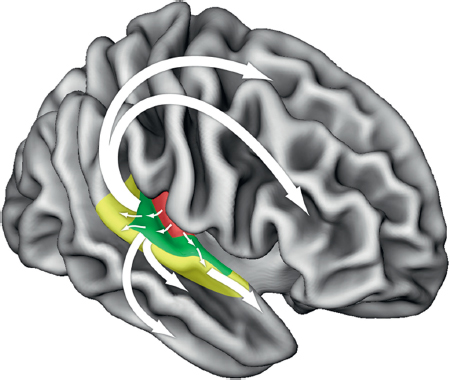

Such neural organization necessarily had to emerge from precursors, and it is therefore useful to consider some of the features of nonhuman primate auditory cortex to identify both homologies and unique properties (Rauschecker and Scott, 2009; Zatorre and Schönwiesner, 2011). Primate auditory cortex, like visual and somatosensory systems, can be thought of as organized in a hierarchical manner, such that core areas are surrounded by belt and parabelt regions within the superior portion of the temporal lobe, with corresponding patterns of feedforward and feedback projections (Rauschecker and Scott, 2009) (Fig. 13.2); both the cytoarchitecture and connectivity of the different subfields support this organization (Kaas et al., 1999). Another organizational feature present across species is the distinct pathways starting in the core areas and proceeding in two directions: one dorsally and posteriorly toward parietal areas, the other ventrally and anteriorly within the temporal lobe (Rauschecker and Scott, 2009); both pathways have eventual targets in separate areas of the frontal cortices and are best thought of as bidirectional. This architecture creates a series of functional loops that allow for integration of auditory information with other modalities; they also permit interactions between auditory and motor systems related to action, and to planning or organization of action, and to memory systems. These interactions with planning and memory functions result in the ability to make predictions based on past events, a topic we shall return to below.

FIGURE 13.2 Schematic of putative functional pathways for auditory information processing in the human brain. Pathways originating in core auditory areas project outward in a parallel but hierarchical fashion toward belt and parabelt cortices (colored areas). Subsequently, several distinct bidirectional functional streams may be identified: Ventrally, processing streams progress toward targets in superior and inferior temporal sulcus and gyrus, eventually terminating in the inferior frontal cortex. Dorsally, projections lead toward distinct targets in parietal, premotor, and dorsolateral frontal cortices. Adapted from Zatorre and Schönwiesner (2011). [NOTE: Figure can be viewed in color in the PDF version of this volume on the National Academies Press website, www.nap.edu/catalog.php?record_id=18573.]

Functional loops between frontal and temporal cortices also play a particularly important part in working memory. Unlike visual events, which can often be static (a scene, an object), auditory events are by their very nature evanescent, leaving no traces other than those that the nervous system can create. To be able to concatenate discrete auditory events such that meaning can be encoded or decoded thus requires a working memory system that can maintain information dynamically for further processing. Here may lie one important species difference: monkeys seem to have a very limited capacity to retain auditory events in working memory (Fritz et al., 2005; Scott et al., 2012) compared with their excellent visual working memory; this limitation may help explain their relative paucity of complex, combinatorial auditory communication ability. In contrast, humans have excellent ability to maintain auditory information as it comes in, which accounts for our ability to relate one sound to another that came many seconds or minutes earlier (consider a long spoken sentence whose meaning is not clear until the last word; or a long melody that only comes to a resolution at the end). Several neuroimaging studies have pointed to interactions between auditory cortices and inferior frontal regions, especially in the right hemisphere, in the processing of tonal information, in part due to working memory requirements for tonal tasks (Zatorre et al., 1994; Gaab et al., 2003). Indeed, congenital amusia, or tone deafness (Ayotte et al., 2002), may be caused by a disruption of this system (Hyde et al., 2007; Loui et al., 2009a).

The organization of frequency maps, which are similarly topographic across both monkeys (Kaas and Hackett, 2000; Petkov et al., 2006) and humans (Schönwiesner et al., 2002; Formisano et al., 2003), presents another relevant homology. However, a more relevant feature for our discussion is sensitivity to the perceptual quality of pitch. Pitch results from periodicity; such sounds have biological significance because in nature they are almost exclusively produced by vocal tracts of other animals, compared with aperiodic natural sounds (wind, water). The ability to track pitch would thus be a useful trait for an organism to develop in navigating an acoustic environment. Neurophysiological studies have identified pitch-sensitive neurons in marmosets that respond in an invariant manner to sounds that have the same pitch but vary in their harmonic composition (Bendor and Wang, 2005), thus allowing for pitch information to be processed despite irrelevant acoustical variation. Several lines of evidence converge to suggest that a similar neural specialization for pitch may exist in the human auditory cortex, in one or more regions located lateral to core areas (Zatorre, 1988; Patterson et al., 2002; Penagos et al., 2004).
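The invariance described above, in which tones of differing harmonic composition share one pitch, follows directly from periodicity: two complexes built from different harmonics of the same fundamental repeat with the same period. The following is a minimal illustrative sketch, assuming an autocorrelation-based period estimate (a textbook signal-processing technique, not the method of the studies cited here). The function names, sample rate, fundamental frequency, and harmonic sets are all hypothetical choices made for the example.

```python
import math

SAMPLE_RATE = 8000  # Hz; arbitrary value chosen for this illustration


def harmonic_tone(f0, harmonics, n_samples=800):
    """Sum of equal-amplitude sinusoids at the given harmonic numbers of f0."""
    return [
        sum(math.sin(2 * math.pi * h * f0 * t / SAMPLE_RATE) for h in harmonics)
        for t in range(n_samples)
    ]


def estimate_pitch(signal, fmin=80, fmax=500):
    """Estimate pitch as the lag (period) that maximizes the autocorrelation."""
    min_lag = SAMPLE_RATE // fmax  # shortest period considered
    max_lag = SAMPLE_RATE // fmin  # longest period considered
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        # Similarity between the signal and a copy of itself shifted by `lag`.
        score = sum(signal[i] * signal[i + lag] for i in range(len(signal) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return SAMPLE_RATE / best_lag


# Same 200-Hz fundamental, different harmonic composition. The second tone
# contains no energy at 200 Hz at all (a "missing fundamental").
low_harmonics = harmonic_tone(200, [1, 2, 3])
high_harmonics = harmonic_tone(200, [3, 4, 5])

print(estimate_pitch(low_harmonics), estimate_pitch(high_harmonics))
```

Both tones yield the same estimate because the estimate depends only on the repetition period of the waveform, not on which harmonics carry the energy.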

However, in humans, pitch also serves an important information-bearing function because it serves as a medium for encoding and transmitting information. Both speech and music make use of pitch variation; but its use in music seems to have some particular properties that distinguish it from its use in speech (Zatorre and Baum, 2012). Notably, pitch as used in music across many cultures tends to be organized as discrete elements, or scales (as opposed to in speech where pitch changes tend to be continuous), and these elements generally have fixed, specific frequency ratios associated with them. These properties are precisely what would be produced by an instrument such as our ancient flute, with its fixed finger holes producing discrete tones at specific pitches. Thus, music requires a nervous system able to encode and produce pitch variation with a great degree of accuracy. Substantial evidence implicates mechanisms in the right cerebral hemisphere, including pitch-specialized cortical areas, in this fine-grained, accurate pitch mechanism both in perception (Zatorre et al., 2002; Hyde et al., 2008; Zatorre and Gandour, 2008) and production (Ozdemir et al., 2006), as contrasted with the left auditory cortical system, which instead seems to be specialized for speech sounds that do not require as great accuracy in pitch tracking.
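The contrast between discrete scale elements with fixed frequency ratios and continuously varying pitch can be made concrete with a short sketch. It assumes, purely for illustration, a just-intonation major scale and a 264-Hz tonic; the text above does not commit to any particular tuning system, and the function names are hypothetical.

```python
import math
from fractions import Fraction

# Frequency ratios of a just-intonation major scale relative to the tonic.
# This tuning is an illustrative assumption; many other systems exist.
JUST_MAJOR_RATIOS = [
    Fraction(1), Fraction(9, 8), Fraction(5, 4), Fraction(4, 3),
    Fraction(3, 2), Fraction(5, 3), Fraction(15, 8), Fraction(2),
]


def scale_frequencies(tonic_hz, ratios=JUST_MAJOR_RATIOS):
    """Discrete pitches of the scale, each a fixed ratio above the tonic."""
    return [float(tonic_hz * r) for r in ratios]


def nearest_scale_step(freq_hz, tonic_hz, ratios=JUST_MAJOR_RATIOS):
    """Index of the scale step closest to freq_hz on a log-frequency axis."""
    steps = scale_frequencies(tonic_hz, ratios)
    return min(range(len(steps)), key=lambda i: abs(math.log2(freq_hz / steps[i])))


tonic = 264  # Hz; hypothetical tonic
print(scale_frequencies(tonic))

# A continuous glide (as in speech intonation) visits arbitrary frequencies;
# a fixed-hole instrument can only produce the discrete steps.
glide = [264 + 10 * k for k in range(0, 27, 3)]
print([nearest_scale_step(f, tonic) for f in glide])
```

The fifth scale step, for example, is always exactly 3/2 times the tonic frequency, which is the kind of fixed relationship that demands accurate pitch encoding.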

Melodies consist of combinations of individual pitches; so once separate tones are encoded by this early cortical system, combinations of pitches need to be processed. Tonal melodies can be structured in terms of the scales that they are constructed from, and the pitch contours. Both lesion (Zatorre, 1985; Stewart et al., 2006) and neuroimaging studies (Griffiths et al., 1998; Patterson et al., 2002) demonstrate that cortical areas beyond the pitch-related regions come into play as one goes from single sounds to patterns, and that these involve both the anteroventral and posterodorsal pathways, following a hierarchical organization. The global picture that emerges is that areas more distal from core and belt regions are likely involved in performing computations beyond pitch extraction, involving combinations of tonal elements, for example, related to analysis of musical interval size (Klein and Zatorre, 2011) and/or melodic contour (Lee et al., 2011). However, perhaps because of the feedback connectivity from distal regions back to core and belt areas, there is also evidence that auditory category information may sometimes be encoded in a more distributed fashion (Ley et al., 2012).

The perceptual processing steps just described only allude to the mechanisms involved in passively listening to a sequence of sounds. However, perception of something like a melody does not proceed in a simple sequential manner. It also involves an active component, such that expectancies are generated based upon a listener’s implicit knowledge about musical rules that have been acquired by previous exposure to music of that culture. Thus, hearing a particular set of tones leads one to expect certain specific continuations with greater probability than others (Krumhansl, 1990; Huron, 2006). This phenomenon is significant because it points to our highly adaptive ability to predict future events based on past regularities. There is good evidence that the relevant sequential contingencies are encoded based on a process of statistical learning (Schön and François, 2011), which emerges early in life for both speech and music (Saffran, 2003) and is also operative in adulthood (Loui et al., 2009b). This dependency on environmental exposure also means that different individuals will have different sets of perceptual templates to the extent that they have been exposed to different musical systems or cultures, a point we return to below.
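The idea that expectancies arise from statistical learning of sequential contingencies can be sketched with a toy model. The example below assumes a first-order transition model (each note predicted only from the preceding one), which is a deliberate simplification rather than the model used in the studies cited above; the three "melodies" and all function names are invented for illustration.

```python
import math
from collections import Counter, defaultdict


def learn_transitions(melodies):
    """Estimate P(next note | previous note) from exposure to melodies."""
    counts = defaultdict(Counter)
    for melody in melodies:
        for prev, nxt in zip(melody, melody[1:]):
            counts[prev][nxt] += 1
    return {
        prev: {nxt: c / sum(following.values()) for nxt, c in following.items()}
        for prev, following in counts.items()
    }


def surprisal(model, prev, nxt, floor=1e-6):
    """Unexpectedness (in bits) of hearing `nxt` after `prev`.

    Continuations never encountered during exposure get a small floor
    probability, so they come out as highly surprising rather than undefined.
    """
    p = model.get(prev, {}).get(nxt, floor)
    return -math.log2(p)


# Hypothetical listening history (note names only, no rhythm).
exposure = [list("CDEC"), list("CDEG"), list("EDEC")]
model = learn_transitions(exposure)

print(model["E"])                  # learned expectations after hearing E
print(surprisal(model, "E", "C"))  # frequent continuation: low surprisal
print(surprisal(model, "E", "A"))  # never heard: high surprisal
```

A listener exposed to a different corpus would learn different transition probabilities, and so would find different continuations expected or surprising, which mirrors the cultural dependence noted above.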

The neural substrates associated with musical expectancies and their violation have been measured using electrophysiological markers. These studies show that there is sensitivity to predictions based on a variety of features including contour (Tervaniemi et al., 2001) and interval size (Trainor et al., 2002), as well as harmonies (Koelsch et al., 2000; Leino et al., 2007). The localization of these processes is complex and not fully deciphered, but most likely involves interactions between belt/parabelt auditory cortices and inferior frontal cortices, using the anteroventral pathway described above (Opitz et al., 2002; Schönwiesner et al., 2007). In keeping with the concept of hierarchical organization, violations of more abstract features are associated with changes coming from frontal areas: for example, if a chord is introduced that is itself consonant but is unexpected in terms of the harmonic relationships established by earlier chords, there will be a response in the inferior frontal cortex, typically stronger on the right side (Maess et al., 2001; Tillmann et al., 2006).

Melodies of course contain temporal patterns as well as pitch patterns. Cognitive science has identified some relevant hierarchical organization in the way rhythms are processed (Large and Palmer, 2002; Patel, 2008) such that there are more local and more global levels. Meter, defined as repeating accents that structure temporal events, would be a key level of global organization; it gains importance in our context because it can be thought of as providing a temporal framework for expected events. That is, in metrically organized music, a listener develops predictions about when to expect sounds to occur (a parallel to how tonality provides the listener with a structure to make predictions about what pitches to expect). Neuroimaging studies have suggested that this metrical mechanism may depend on interactions between auditory cortices and the more dorsal pathways of the system, particularly with the premotor cortex and dorsolateral frontal regions [for a review, see Zatorre et al. (2007)], although subcortical basal ganglia structures also play an important role (Grahn and Rowe, 2009; Kung et al., 2013). The interaction with motor-related areas provides a possible explanation for the close link between temporal structure in music and movement. It is not far-fetched to suppose that the people listening to that ancient flute were also dancing.

The findings of these various lines of research point toward the conclusion that interactions between auditory and frontal cortices along both the ventral and dorsal streams generate representations of structural regularities of music, which are essential for creating expectancies of events as they unfold in time. This system no doubt plays a critical role in many aspects of perception. In fact, similar phenomena have been described for linguistic expectancies (Friederici et al., 2003; Patel, 2003). However, as we shall see below, these same systems may also hold part of the key to understanding why music can induce pleasure.

A final important phenomenon in considering the role of auditory cortex in complex perceptual processes is that it is also involved in imagery, that is, the phenomenological experience of perception in the absence of a stimulus. Musical imagery is a particularly salient form of this experience, as almost anyone can imagine a musical piece “in the mind’s ear.” Cognitive psychology has shown that imaginal experiences are psychologically real insofar as they can be quantified, and because they share features of real perception, including temporal accuracy and pitch acuity (Halpern, 1988; Janata, 2012). Several neuroimaging studies have shown the neural reality of this phenomenon because, even in the absence of sound, portions of belt or parabelt auditory cortex are consistently recruited when people perform specific imagery tasks (Zatorre and Halpern, 2005; Herholz et al., 2008). This imagery ability is relevant here because it shows that the auditory cortex must contain memory traces of past perceptual events, and that these traces are not merely semantic in nature, but rather reflect perceptual attributes of the originally experienced sound. In the case of music, we may say that these traces, accumulated over time, can also be thought of as templates, containing information about sound patterns that recur in musical structures. One might also ask how this information, if it is stored in these cortical areas, is accessed or retrieved. Although the mechanism is far from being understood, it appears that the frontotemporal loops mentioned above are also relevant for retrieval; this conclusion is supported by evidence that functional interactions between temporal and frontal cortices are enhanced during musical imagery (Herholz et al., 2012). Moreover, the degree of activity in this network is predictive of individual differences in subjective vividness of imagery, supporting a direct link between engagement of this frontotemporal system and ability to imagine music. This network, as we shall see, may also play an important role in musically mediated pleasure, and recruitment of the reward network, the topic to which we now turn.

NEUROBIOLOGY OF REWARD

A reward can be thought of as something that produces a hedonic sense of pleasure. Because this is a positive state, we tend to be reinforced to repeat the behavior that leads to this desirable outcome (Thorndike, 1911). A biological substrate for reinforcement was discovered in Montreal over half a century ago, when Olds and Milner (1954) reported that electrical stimulation of a specific part of a rat’s brain caused the animal to continuously return to the location where this stimulation had occurred. Subsequent studies demonstrated that, if rats are given a chance to stimulate these areas, they would forgo all other routine behaviors, such as grooming, eating, and sleeping (Routtenberg and Lindy, 1965; Wise, 1978). The electrical stimulation was targeting pathways leading to the mesolimbic striatum, and it has now been widely demonstrated that dopamine release in these regions can lead to reinforcement of behaviors (Schultz, 2007; Leyton, 2010; Glimcher, 2011).

In the animal kingdom, the phylogenetically ancient mesolimbic reward system serves to reinforce biologically significant behaviors, such as eating (Hernandez and Hoebel, 1988), sex (Pfaus et al., 1995), or caring for offspring (Hansen et al., 1993). In humans, dopamine release and hemodynamic activity in the mesolimbic areas have also been demonstrated to reinforce biologically adaptive behaviors, such as eating (Small et al., 2003) and behaviors related to love and sex (Aron et al., 2005; Komisaruk and Whipple, 2005). However, as animals become more complex, additional factors become important for successful survival. For example, among human societies, having a certain amount of money can predict successful survival. Not surprisingly, obtaining money is highly reinforcing, and has also been demonstrated to involve the mesolimbic striatal areas (Knutson et al., 2001). The reinforcing qualities of such secondary rewards suggest that humans are able to understand the conceptual value of an abstract item that does not contain inherent reward value. In line with this, many people obtain pleasure from other stimuli that are conceptually meaningful, with little direct relevance for survival, and listening to music is one example. As Darwin observed, music has no readily apparent functional consequence and no clear-cut adaptive function (Hauser and McDermott, 2003). However, listening to music has been ubiquitous throughout human societies since at least Paleolithic times. How does a seemingly abstract sequence of sounds produce such potent and reinforcing effects?

HOW DOES MUSIC CAUSE PLEASURE?

It is widely believed that the pleasure people experience in music is related to emotions induced by the music, as individuals often report that they listen to music to change or enhance their emotions (Juslin and Sloboda, 2001). To examine this link, we performed an experiment in which we asked listeners to select highly pleasurable music and, while listening to it, rate their experience of pleasure continuously as we assessed any changes in emotional arousal (Salimpoor et al., 2009). Increased sympathetic nervous system activity is implicated in “fight or flight” responses (Cacioppo et al., 2007) and thought to be automated; therefore it serves as a reliable measure of emotional arousal. We measured heart rate, respiration rate, skin conductance, body temperature, and blood volume pulse amplitude to track changes that correspond to increasing levels of self-reported pleasure. The results revealed a robust positive correlation between online ratings of pleasure and simultaneously measured increases in sympathetic nervous system activity, thus showing a link between objective indices of arousal and subjective feelings of pleasure.

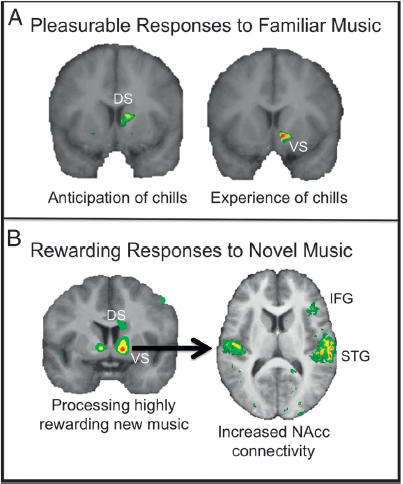

Next we turn to the mechanisms through which emotional arousal can become rewarding. If emotional responses to music target dopaminergic activity in the reinforcement circuits of the brain, there should be a mechanism through which these responses could be considered rewarding. To examine this question, our laboratory has performed two studies in which participants selected music that they find highly emotional and pleasurable (Blood and Zatorre, 2001; Salimpoor et al., 2011). To have an objective measure of peak emotional arousal, people brought in music that gives them “chills,” which are believed to be physical manifestations of peak emotional responses (Panksepp, 1995; Blood and Zatorre, 2001; Rickard, 2004), and related to increased sympathetic nervous system arousal (Salimpoor et al., 2009). In the first study, we demonstrated that the ventral striatum and other brain regions associated with emotion were recruited as a function of increasing intensity of the chills response (Blood and Zatorre, 2001). This finding thus importantly identified that the mesolimbic reward system could be recruited by an abstract aesthetic stimulus. Several other studies have shown consistent findings (Koelsch et al., 2006; Menon and Levitin, 2005); however, because all these studies measured hemodynamic responses, they did not address whether the dopaminergic system was involved. Therefore, we performed another study (Fig. 13.3A) with ligand-based positron emission tomography (PET) (Salimpoor et al., 2011), using raclopride, a radioligand that binds competitively with dopamine receptors. We compared dopamine release in response to pleasurable vs. neutral music and confirmed that strong emotional responses to music lead to dopamine release in the mesolimbic striatum, which can help explain why music is considered rewarding, and links music directly to the other, biologically rewarding stimuli outlined above.

FIGURE 13.3 Neural correlates of processing highly rewarding music. (A) Spatial conjunction analysis between [11C]raclopride positron emission tomography and fMRI while listeners heard their selected pleasurable music revealed increased hemodynamic activity in the ventral striatum (VS) during peak emotional moments (marked by “chills”), and the dorsal striatum (DS) preceding chills, in the same regions that showed dopamine release. Adapted from Salimpoor et al. (2011). (B) fMRI scanning showing that the best predictor of reward value of new music (as marked by monetary bids in an auction paradigm) was activity in the striatum, particularly the NAcc; the NAcc also showed increased functional connectivity with the superior temporal gyri (STG) and the right inferior frontal gyrus (IFG) as musical stimuli gained reward value. Adapted from Salimpoor et al. (2013). [NOTE: Figure can be viewed in color in the PDF version of this volume on the National Academies Press website, www.nap.edu/catalog.php?record_id=18573.]

If the pleasures associated with music are at least in part related to the dopaminergic systems that we share with numerous other vertebrates, why do they seem to be uniquely a part of human behavior? Can animals tell the difference between ancient flutes, Mahler, and Britney Spears? And if so, do they care? The closest phenomena to music in the animal kingdom are biologically significant vocalizations. However, these musical sounds are thought to be limited to an adaptive role toward territory defense and mate attraction, rather than for abstract enjoyment (Catchpole and Slater, 1995; Marler, 1999). When given a choice between listening to music versus silence, our close evolutionary relatives (tamarins and marmosets) generally prefer silence (McDermott and Hauser, 2007). Some animals may be capable of processing basic aspects of sound with relevance for music. For example, rhesus monkeys do demonstrate an ability to judge that two melodies are the same when they are transposed by one or two octaves (Wright et al., 2000). However, this ability is limited: the monkeys failed to perform this task if melodies were transposed by 0.5 or 1.5 octaves. There is also some evidence (Izumi, 2000; Fishman et al., 2001) that monkeys can distinguish between consonance and dissonance. However, they do not seem to consider consonant sounds more pleasurable, based on the finding (McDermott and Hauser, 2004) that cotton-top tamarins showed a clear preference for species-specific feeding chirps over distress calls, but no preference for consonant versus dissonant intervals. Although certain individuals of some species do demonstrate motor entrainment to externally generated rhythmic stimuli (Patel et al., 2009; Schachner et al., 2009), there is no evidence that primates do so; moreover, such behaviors have been observed in interactions with humans, and not in natural settings. Thus, overall, there is scant evidence that other species possess the mental machinery to decode music in the way humans do, or to derive enjoyment from it.

Why do certain combinations of sounds seem aesthetically pleasant to humans, but not to other animals, even primates? To better understand how we can obtain pleasure from musical sounds, it is important to realize that the mesolimbic systems do not work in isolation, and their influence will be largely dependent on their interaction with other regions of the brain. Mesolimbic striatal regions are found in many organisms, including early vertebrates (O’Connell and Hofmann, 2011); however, the anatomical connectivity of these regions with the rest of the brain varies across species depending on the complexity of the brain (Northcutt and Kaas, 1995). For example, the mesolimbic reward system becomes highly interconnected with the prefrontal cortices in mammals (Cardinal et al., 2002). Furthermore, as animals become more complex, the concept of reward can take on different forms. For example, we humans enjoy activities as diverse as attending concerts, reading fiction, visiting museums, or taking photographs, as well as less “high-brow” but still aesthetic pursuits such as decorating our vehicles, matching our wardrobes, or planting flowers. Aesthetic rewards are often highly abstract in nature and generally involve important cognitive components. In particular, they are highly culture dependent and therefore imply a critical role for learning and social influences. These features suggest that they may involve the “higher-order” and more complex regions of the brain that are more evolved in humans. Brain imaging studies of aesthetic reward processing lend support to this idea by demonstrating activity in the cerebral cortex, particularly the prefrontal cortex (Cela-Conde et al., 2004; Kawabata and Zeki, 2004; Vartanian and Goel, 2004), which is most evolved in humans (Haber and Knutson, 2010). The cerebral cortex contains stores of information accumulated throughout an organism’s existence. As such, cortical contributions to aesthetic stimulus processing are consistent with the idea that previous experiences may play a critical role in the way an individual may experience certain sounds as pleasurable or rewarding. Although evidence exists for some basic similarities in how people across cultures respond to certain cues (Fritz et al., 2009), the rewarding nature of aesthetic stimuli is not entirely universal, differing significantly across cultures, and between individuals within cultures. These responses are related to subjective interpretation of the stimulus, which is likely to be related to previous experiences with a particular stimulus or other similar stimuli. It has been proposed that all individuals have a “musical lexicon” (Peretz and Coltheart, 2003), which represents a storage system for musical information that they have been exposed to throughout their lives, including information about the relationships between sounds and syntactic rules of music structure specific to their prior experiences. This storage system may contain templates that can be applied to incoming sound information to help the individual better categorize and understand what he or she is hearing. As such, each time a sequence of sounds is heard, several templates may be activated to fit the incoming auditory information. This process will inevitably lead to a series of predictions that may be confirmed or violated, and ultimately determine its reward value to the individual.

To examine the neural substrates of predictions and reward associated with music, and how these may contribute to pleasurable responses, in a new study, we scanned people with functional MRI (fMRI) as they listened to music that they had not heard before and examined the neural activity associated with the reward value of music (Salimpoor et al., 2013). We assessed the reward value of each piece of music by giving individuals a chance to purchase it in an auction paradigm (Becker et al., 1964), such that higher monetary bids served as indicators of higher reward value. We were interested in examining the neural activity associated with hearing musical sequences for the first time, and examining the neural activity that can distinguish between musical sequences that become “rewarding” to an individual compared with those that they
do not care to hear again. The results (Fig. 13.3B) revealed that activity in the mesolimbic striatal areas, especially the nucleus accumbens (NAcc), was most associated with reward value of musical stimuli, as measured by the amount bid. The NAcc has been implicated in making predictions, anticipating, and reward prediction errors—that is, the calculated difference between what was expected and the actual outcome (McClure et al., 2003; O’Doherty, 2004; Pessiglione et al., 2006). A prediction may result in a positive, zero, or negative prediction error, depending on the organism’s expectations and the outcome (Montague et al., 1996; Schultz, 1998; Sutton and Barto, 1998), and a number of studies have demonstrated that prediction errors are related to dopamine neurons in the midbrain (Morris et al., 2004; Bayer and Glimcher, 2005) and may be measured in the NAcc (O’Doherty, 2004; Pessiglione et al., 2006). This result therefore provides evidence that temporal predictions play an important role in the way in which individuals obtain pleasure from musical stimuli. A second and perhaps more important finding was that auditory cortices in the superior temporal gyrus (STG), which were highly and equally active during processing of all musical stimuli, showed robustly increased functional interactions with the NAcc during processing of musical sequences with high, compared with low, reward value. As discussed above, auditory cortices are the site of processing not only of incoming auditory information, but also of more abstract computations related to perception, imagery, and temporal prediction. 
Increased functional connectivity between the NAcc and STG as reward value increases suggests that predictions were linked with information contained in the STG, which we think is related to templates of sound information gathered through an individual’s prior experiences with musical sounds (likely based in part on implicit knowledge, such as might arise via statistical learning). This functional interaction between subcortical reward circuits involved in prediction and highly individualized regions of the cerebral cortex can explain why different people like different music, and how this may be a function of their previous experiences with musical sounds. Moreover, consistent with the studies reviewed above linking the STG with the inferior frontal cortex and implicating this region with hierarchical expectations during music processing, we found increased connectivity also of frontal cortex with the NAcc during highly rewarding music processing. These corticostriatal interactions exemplify the cognitive nature of rewarding responses to music and help to explain why the complexities of the highly evolved human brain allow for the experience of pleasure to an abstract sequence of sound patterns.
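The notion of a reward prediction error invoked above, the difference between what was expected and what actually occurred, can be made concrete with a minimal sketch. The function names, the numerical values, and the learning rate are illustrative assumptions; this is a textbook-style delta rule, not the analysis used in the imaging studies discussed here.

```python
def prediction_error(expected: float, outcome: float) -> float:
    """Signed difference between the actual outcome and the expectation."""
    return outcome - expected


def update_expectation(expected: float, outcome: float,
                       learning_rate: float = 0.5) -> float:
    """Shift the expectation a fraction of the way toward the outcome.

    The learning rate is a hypothetical free parameter chosen for illustration.
    """
    return expected + learning_rate * prediction_error(expected, outcome)


# Three illustrative cases: better than expected, as expected, worse than expected.
for expected, outcome in [(0.5, 1.0), (1.0, 1.0), (1.0, 0.0)]:
    delta = prediction_error(expected, outcome)
    label = "positive" if delta > 0 else "negative" if delta < 0 else "zero"
    print(f"expected={expected}, outcome={outcome}: {label} error ({delta:+.1f})")
```

Under this simplified view, an outcome that exceeds the expectation yields a positive error, a fully predicted outcome yields zero, and an omitted or disappointing outcome yields a negative error.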

In the experiment described, we used new music to rule out veridical expectations (Bharucha, 1994), or explicit expectations of how musical
passages may unfold based on familiarity with the musical selections. However, explicit expectations can also lead to activity in the mesolimbic striatal regions. In the earlier study (Salimpoor et al., 2011), we found activity in the dorsal striatum (caudate nucleus) during the period immediately preceding the chills, that is, during a phase of anticipation (Fig. 13.3A). Indeed, this dorsal component of the mesolimbic striatum has previously been associated with anticipation (Boileau et al., 2006). The dorsal striatum has intricate anatomical connections with various parts of the prefrontal cortex (Monchi et al., 2006; Postuma and Dagher, 2006). The frontal lobes, particularly the prefrontal cortices, are involved in executive functions, such as temporal maintenance of information in working memory and relating information back to earlier events, temporal sequencing, planning ahead, creating expectations, anticipating outcomes, and planning actions to obtain rewards (Stuss and Knight, 2002; Petrides and Pandya, 2004). These cognitive processes are highly significant during musical processing, and it would be consistent that striatal circuits would provide a mechanism for the temporal nuances that give rise to feelings of anticipation and craving. Therefore, it is likely that the cerebral cortex and striatum work together to make predictions about potentially rewarding future events and assess the outcome of these predictions. Additional support implicating the caudate in anticipation comes from other studies that implicate the dorsal striatum in anticipating desirable stimuli, when the behavior is habitual and expected (Boileau et al., 2006; Belin and Everitt, 2008). In this way, the signals that predict the onset of a desirable event can become reinforcing per se. In the case of music, this prediction may include sound sequences that signal the onset of the highly desirable part of the music. 
Previously neutral stimuli may thus become conditioned to serve as cues signaling the onset of the rewarding sequence. Frontal cortices (Tillmann et al., 2003; Koelsch et al., 2008), and their interactions with the basal ganglia (Seger et al., 2013), have also been implicated in processing syntactically unexpected events during music, suggesting that they might be involved in keeping track of temporal unfolding of sound patterns and their structural relationships, further supporting the role of striatal connectivity with the most evolved regions of the human brain during music processing. It is important to note that the NAcc has also been demonstrated to play a role in anticipation with other types of stimuli, such as monetary rewards (Knutson and Cooper, 2005). The functional roles of these structures are therefore not simply attributable to any one dimension, but are dynamically altered as a function of a variety of factors, not all of which have yet been identified.
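The idea that a previously neutral cue can acquire value by reliably preceding a rewarding event can be illustrated with a small temporal-difference learning sketch, in the spirit of the reinforcement learning models cited earlier (Sutton and Barto, 1998). Everything here is an assumption made for illustration: the two-step trial structure, the parameter values, and the function name `run_trials` are hypothetical and are not taken from the studies reviewed.

```python
def run_trials(n_trials: int, alpha: float = 0.2, gamma: float = 1.0,
               reward: float = 1.0):
    """Simulate repeated trials in which a cue is followed by a reward.

    values[0] is the learned value of the cue; values[1] is the learned
    value of the moment just before the reward arrives.
    """
    values = [0.0, 0.0]
    reward_errors = []
    for _ in range(n_trials):
        # Cue -> pre-reward moment: no reward yet, so the error reflects
        # only the difference between successive value estimates.
        delta_cue = 0.0 + gamma * values[1] - values[0]
        values[0] += alpha * delta_cue
        # Pre-reward moment -> end of trial: the reward is delivered.
        delta_reward = reward + gamma * 0.0 - values[1]
        values[1] += alpha * delta_reward
        reward_errors.append(delta_reward)
    return values, reward_errors


values, reward_errors = run_trials(200)
print(f"cue value after learning: {values[0]:.3f}")
print(f"prediction error at reward, first trial: {reward_errors[0]:.3f}")
print(f"prediction error at reward, last trial: {reward_errors[-1]:.3f}")
```

In this toy model the error at the time of reward shrinks as the reward becomes predicted, while the cue itself comes to carry value, which is one simple way to think about how anticipatory passages in familiar music might become reinforcing in their own right.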

The NAcc played an important role with both familiar and novel music. In the case of familiar music, hemodynamic activity in the NAcc was associated
with increasing pleasure, and maximally expressed during the experience of chills, which represent the peak emotional response; these were the same regions that showed dopamine release. The NAcc is tightly connected with subcortical limbic areas of the brain, implicated in processing, detecting, and expressing emotions, including the amygdala and hippocampus. It is also connected to the hypothalamus, insula, and anterior cingulate cortex (Haber and Knutson, 2010), all of which are implicated in controlling the autonomic nervous system, and may be responsible for the psychophysiological phenomena associated with listening to music and emotional arousal. Finally, the NAcc is tightly integrated with cortical areas implicated in “high-level” processing of emotions that integrate information from various sources, including the orbital and ventromedial frontal lobe. These areas are largely implicated in assigning and maintaining reward value to stimuli (O’Doherty, 2004; Chib et al., 2009) and may be critical in evaluating the significance of abstract stimuli that we consider pleasurable.

PUTTING IT ALL TOGETHER

The studies we have reviewed begin to point the way to a neurobiological understanding of how patterns of otherwise meaningless sounds can result in highly rewarding, pleasurable experiences. The key concepts revolve around the idea of temporal expectancies, their associated predictions, and the reward value generated by these predictions. As we have seen, auditory cortical regions contain specializations for analysis and encoding of elementary sound attributes that are found in music, particularly pitch values and durations. These elements are processed in a hierarchical manner within auditory areas to represent patterns of sounds as opposed to individual sounds. The interactions between auditory areas and frontal cortices via the ventral and dorsal routes are critical in allowing working memory to knit together the separate sounds into more abstract representations, and in turn, in generating tonal and temporal expectancies based on structural regularities found in music. These expectancies are rooted in templates derived from an individual’s history of listening, which are likely stored in auditory cortices.

The reward system, phylogenetically old, may be most parsimoniously explained as a mechanism to promote certain adaptive behaviors, with dopaminergic circuits playing a critical role in establishing salience and reward value of relevant stimuli and the sensations generated by them. An important part of this system seems to be devoted to reward prediction; as indicated above, fulfillment of prediction leads to dopamine release in the striatum, with a greater response associated with better-than-expected reward. The findings of enhanced functional interactions between the auditory cortices, valuation-related cortices, and the striatum as a function of how much a new piece of music is liked provide a link between these two
major lines of research. We suggest that the interactions that we observed represent greater informational cross-talk between the systems responsible for pattern analysis and prediction (cortical) and the systems responsible for assigning reward value itself (subcortical). Thus, the highly evolved cortical system is able to decode tonal or rhythmic relationships, at both local and more global levels of organization, that are found in music, such that it can generate expectations about upcoming events based on past events. However, the emotional arousal associated with these predictions, we think, is generated by the interactions with the striatal dopaminergic system. This framework, and others like it (Kringelbach and Vuust, 2009), could also be thought of more broadly as applicable to other types of aesthetic rewards: for example, some authors have suggested that visual aesthetic experiences may arise from interactions across cortical regions involved in perception and memory (Biederman and Vessel, 2006); also, Cela-Conde et al., Chapter 16, this volume, emphasize synchronization across cortical fields as important for visual aesthetics.

Our ability to enjoy music can perhaps now be seen as a little less mysterious than Darwin thought, when viewed as the outcome of our human mental machinery, both its phylogenetically ancient, survival-oriented circuits and its more recently evolved cortical loops that allow us to represent information, imagine outcomes, make predictions, and act upon our stored knowledge. We have little doubt that the ancient musicians, armed with the same machinery as us, and able to coax patterns of tones from a vulture bone, experienced and communicated pleasure, beauty, and wonder, just as much as we do today.