Overview Framework for Complex Evaluations

Important Points Made by the Speaker

- Interventions can vary in complexity along different dimensions.

- Many methods are available to describe the implementation of an intervention, to acquire data about an intervention’s effects, and to assess the contribution to or attribution of impact.

- Many methods are complex and rapidly changing, creating a demand for guidance in their use.

In the opening session of the workshop, Simon Hearn, a research fellow at the Overseas Development Institute, set the stage for discussing the practice of designing and implementing mixed methods evaluations of large complex interventions and presented an overall framework for approaching these types of evaluations. He described the BetterEvaluation Initiative, a global collaboration of many organizations dedicated to improving evaluation practice and theory.1

Hearn identified three major challenges in evaluating complex interventions:

- Describing what is being implemented

- Getting data about impacts

- Attributing impacts to a particular program

1 More information is available at http://betterevaluation.org (accessed April 7, 2014).

Many different evaluation methods are available to take on these challenges, as are guides for how to do evaluations. But many methods are evolving rapidly, and new methods and data sources are becoming available, such as the use of mobile phones or social media. Also, options exist for understanding causality that do not involve experimental methods, though these are often not covered in guides. In such circumstances, evaluators often use the methods they have always used, said Hearn, which may or may not be suited to the problem at hand.

Hearn also discussed six facets of interventions that, when viewed together, can be used to gauge the complexity of an intervention:

- Objectives: Are they clear and defined ahead of time, or are they emergent and changing over time?

- Governance: Is it clear and defined ahead of time, or is it characterized by shifting responsibilities and networks of actors?

- Implementation: Is the implementation of the intervention consistent across places and across time, or will it shift and change over time?

- Necessariness: Is the intervention the only thing necessary for its intended impacts, or do other pathways lead to the impacts, and are these pathways knowable?

- Sufficiency: Is the intervention sufficient to achieve the impacts, or do other factors need to be in place for the impacts to be realized, and are these other factors predictable before the fact?

- Change trajectory: Is the change trajectory straightforward? Can the relationship between inputs and outcomes be defined, and will this relationship change over time?
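The six facets above can be recorded as a simple checklist. The sketch below is illustrative only and is not something Hearn presented: the class name, field names, and the example intervention are hypothetical, and reducing each facet to a yes/no flag is a simplifying assumption. Hearn described the facets as something to view together, not as a score.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ComplexityProfile:
    """One flag per facet; True means the facet sits on the complex side.

    Field names are hypothetical labels for the six facets listed above.
    """

    emergent_objectives: bool       # objectives emerge or change over time
    shifting_governance: bool       # responsibilities and actors shift
    variable_implementation: bool   # implementation differs by place or time
    alternative_pathways: bool      # necessariness: other routes lead to the impacts
    depends_on_other_factors: bool  # sufficiency: other factors must be in place
    changing_trajectory: bool       # input-outcome relationship changes over time

    def complex_facets(self) -> list[str]:
        """Return the names of facets flagged as complex, in declaration order."""
        return [f.name for f in fields(self) if getattr(self, f.name)]


# Hypothetical intervention, invented for illustration only.
example = ComplexityProfile(
    emergent_objectives=True,
    shifting_governance=False,
    variable_implementation=True,
    alternative_pathways=False,
    depends_on_other_factors=True,
    changing_trajectory=False,
)

if __name__ == "__main__":
    print("Facets flagged as complex:", ", ".join(example.complex_facets()))
```

Listing the flagged facets, instead of collapsing them into a single number, keeps the profile descriptive and leaves the overall judgment to the evaluator.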

THE BETTEREVALUATION INITIATIVE RAINBOW FRAMEWORK

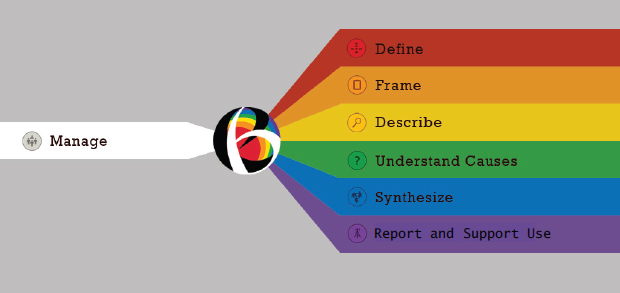

Hearn and his colleagues have developed the BetterEvaluation Rainbow Framework to help evaluators navigate the choices available at each stage of an evaluation (see Figure 2-1). The framework organizes clusters of tasks associated with each stage of the evaluation process, although the stages and tasks are not necessarily sequential, and each is as valuable as the others. Furthermore, all are subject to management, which acts as a sort of prism through which all the different stages of an overall evaluation process are viewed.

FIGURE 2-1 BetterEvaluation Initiative’s Rainbow Framework, as presented by Hearn, separates an evaluation into equally important tasks that each need to be managed.

SOURCE: BetterEvaluation, 2014.

The first task is to define what is to be evaluated. This involves developing an initial description of the initiative or program being evaluated (the evaluand), developing a program theory or logic model to describe how the program is intended to create change, and identifying potential unintended results. For example, a logic model may consist of a simple pipeline or results chain, a more sophisticated logical framework, more structured and free-flowing outcomes hierarchies, or realist matrices, though complex evaluations are much more likely to rely on the more sophisticated models. Prominent questions include whether an evaluation is looking at the effects on policy, the effects on populations, or both; whether multiple levels of activity are being evaluated; and who the stakeholders are. A clear description can engage and inform all of the stakeholders involved in the evaluation, Hearn said, which is particularly important in complex evaluations where many people are involved.

The second task is to frame what is to be evaluated. Framing an evaluation is necessary to design that evaluation. Framing involves identifying the primary intended users, deciding on the purposes of the evaluation, specifying key evaluation questions, and determining what “success” would look like—what standards or criteria will be used to make judgments about the program? Complex interventions are likely to have multiple contributors and users of results, and Hearn noted that the different purposes of these users can conflict. Users also may have different evaluation questions that need to be addressed, which means they might have different understandings of success.

The third task is to describe what happened. This task involves the use of samples, measures, or indicators; the collection and management of data; the combination of qualitative and quantitative data; the analysis of data; and the visualization and communication of data (see Chapter 6). For example, are data from one phase of a project being used to inform another phase? Are the data being gathered in parallel or in sequence? Many different options are available in this area, said Hearn.

The fourth task is to understand the causes of outcomes and impacts. What caused particular impacts, and did an intervention contribute to those outcomes? Do the results support causal attributions? How do the results compare with the counterfactual analysis? What alternative explanations are possible? Simply collecting information about what happened cannot answer questions about causes and effects, yet an evaluation must deal with causation in some way, Hearn observed. Evaluations often either oversimplify or overcomplicate this process. Oversimplification can come from an implicit assumption that if something is observed it can be understood as caused by the program or intervention, a “leap of faith” made without doing the analysis to verify the claim. Overcomplication can lead to overly elaborate experimental designs or to the analysis of very large datasets with overly sophisticated statistical techniques. Experimental designs can be powerful, but other options are also available, and causation can be measured even without control groups or experimentation. Theory-based designs, participatory designs, counterfactual analysis, regulatory frameworks, configurational frameworks, generative frameworks, realist evaluation, general elimination method, process tracing, contribution analysis, and qualitative comparative analysis are among the many techniques that can explore causation. Indeed, said Hearn, the BetterEvaluation website has 29 different options for understanding causes.

The fifth task is to synthesize data to make overall judgments about the worth of an intervention. Among the many questions that can be asked at this stage are: Was it good? Did it work? Was it effective? For whom did it work? In what ways did it work? Did it provide value for money? Was it cost-effective? Synthesis can occur at the micro level, the meso level, and the macro level. At the micro level, performance on particular dimensions is assessed. At the meso level, different individual assessments can be synthesized to answer evaluation questions. At the macro level, the merit or worth of an intervention can be assessed.

Synthesis can look at a single evaluation or at multiple evaluations, and it can generalize findings from, for example, a small population to a larger population. Synthesis can be difficult in cases where some positive and some negative impacts have been achieved, which requires weighing up the strengths and weaknesses of the interventions. But synthesis is essential for evaluations, said Hearn, even though it is often slighted or overlooked in textbooks and research designs.

The sixth task is to report results and support use. “We are in the business of evaluation because we want those evaluations to make a difference,” said Hearn. “We do not want them just to be published as a report and for the users of those reports to ignore them or to misuse them.” This task requires identifying reporting requirements for different stakeholders; developing reporting media, whether written reports, social media campaigns, or some other output; ensuring accessibility for those who can use the results; developing recommendations where appropriate; and helping users of evaluations to apply the findings.

Finally, Hearn discussed the management of these six tasks, which includes but is not limited to the following elements:

- Understand and engage with stakeholders.

- Establish decision-making processes.

- Decide who will conduct the evaluation.

- Determine and secure resources.

- Define ethical and quality evaluation standards.

- Document management processes and agreements.

- Develop an evaluation plan or framework.

- Review the evaluation.

- Develop evaluation capacity.

These management tasks are relevant throughout the entire process of the evaluation, applying to each of the previous tasks.

Hearn provided three tips to help evaluators navigate the framework, which is available on the BetterEvaluation website.2 The first tip is to look at the types of questions being asked, whether descriptive, causal, synthetic, or use oriented. For example, a descriptive question is whether the policy was implemented as planned; a causal question is whether a policy contributed to improved health outcomes; a synthetic question is whether the overall policy was a success; and a use-oriented question is what should be done. The question being asked will determine which part of the framework to access.
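As an illustration of the first tip, the four question types can be treated as a lookup into the framework. The following is a minimal sketch, not part of Hearn's presentation: the pairing of each question type with a task is an assumption inferred from the task descriptions earlier in this chapter, and all identifiers are hypothetical.

```python
from enum import Enum


class QuestionType(Enum):
    DESCRIPTIVE = "descriptive"
    CAUSAL = "causal"
    SYNTHETIC = "synthetic"
    USE_ORIENTED = "use-oriented"


# Assumed pairing of question types with framework tasks (an inference from
# the task descriptions in this chapter, not a mapping stated by Hearn).
TASK_FOR_QUESTION_TYPE = {
    QuestionType.DESCRIPTIVE: "Describe what happened",
    QuestionType.CAUSAL: "Understand the causes of outcomes and impacts",
    QuestionType.SYNTHETIC: "Synthesize data to make overall judgments",
    QuestionType.USE_ORIENTED: "Report results and support use",
}

# The example questions given in the text, tagged by type.
EXAMPLE_QUESTIONS = {
    "Was the policy implemented as planned?": QuestionType.DESCRIPTIVE,
    "Did the policy contribute to improved health outcomes?": QuestionType.CAUSAL,
    "Was the overall policy a success?": QuestionType.SYNTHETIC,
    "What should be done?": QuestionType.USE_ORIENTED,
}


def task_for(question: str) -> str:
    """Return the framework task to consult for a tagged example question."""
    return TASK_FOR_QUESTION_TYPE[EXAMPLE_QUESTIONS[question]]


if __name__ == "__main__":
    for question in EXAMPLE_QUESTIONS:
        print(f"{question} -> {task_for(question)}")
```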

The second tip is to compare the pros and cons of each possible method. The website provides methods and resources for each of the six tasks, and this information can be used to select the optimal method.

2 More information is available at http://betterevaluation.org (accessed April 7, 2014).

The third tip is to create a two-dimensional evaluation matrix that has the key evaluation questions along one side and the methods along the other. By filling out this matrix, a toolkit can be developed to match questions with the methods that will be used to answer those questions.
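The matrix described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a tool from the BetterEvaluation website: the questions echo the examples given earlier in this chapter, while the method names and the question-method pairings are hypothetical placeholders.

```python
# Hypothetical evaluation matrix: key evaluation questions (rows) against
# candidate methods (columns). All pairings are illustrative assumptions.
QUESTIONS = [
    "Was the policy implemented as planned?",
    "Did the policy contribute to improved health outcomes?",
    "Was the overall policy a success?",
]
METHODS = ["document review", "key informant interviews", "contribution analysis"]

# (question, method) pairs where the method is expected to help answer the question.
PAIRINGS = {
    (QUESTIONS[0], "document review"),
    (QUESTIONS[0], "key informant interviews"),
    (QUESTIONS[1], "key informant interviews"),
    (QUESTIONS[1], "contribution analysis"),
}


def build_matrix(questions, methods, pairings):
    """Return {question: {method: bool}} covering every question-method cell."""
    return {q: {m: (q, m) in pairings for m in methods} for q in questions}


def methods_for(matrix, question):
    """List the methods marked for a question, in column order."""
    return [m for m, used in matrix[question].items() if used]


def uncovered_questions(matrix):
    """List the questions that no method has been assigned to yet."""
    return [q for q, row in matrix.items() if not any(row.values())]


matrix = build_matrix(QUESTIONS, METHODS, PAIRINGS)

if __name__ == "__main__":
    for q in QUESTIONS:
        print(f"{q}: {', '.join(methods_for(matrix, q)) or 'no method yet'}")
```

Checking for questions with no assigned method is one practical use of such a matrix: an empty row signals a gap in the evaluation design before data collection begins.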

The website offers much more information on each of the elements of the framework, as well as other resources. The BetterEvaluation organization also runs clinics, workshops, and other events to help teams work through evaluation design, and it then feeds the information generated by such experiences into its website. In addition, it issues publications and other forms of guidance and information.

The vision of the BetterEvaluation initiative, Hearn concluded, is to foster collaborations to produce more information and more guidance on methods to improve evaluation. The topic and the structure of this workshop are aligned with the principles of the framework, Hearn observed, and the workshop is an opportunity to “push us further” to fill gaps and work together for a “better understanding of the richness and variety of methods” for evaluation.