4

Changes in Organ Systems Over the Lifespan

Moderated by Regina Tan, director, Office of Food Safety, Food and Nutrition Service, U.S. Department of Agriculture (USDA), and Sharon Ross, program director, Nutrition Science Research Group, Division of Cancer Prevention, National Cancer Institute, National Institutes of Health (NIH), Session 3 examined changes that occur with aging in the cardiovascular system, in the skeletal and muscular systems, in sensory and oral health, and in gastrointestinal health, as well as the role of nutrition in these changes.

First, focusing on the cardiovascular system, Tamara Harris, senior investigator and chief, Interdisciplinary Studies of Aging Section, Laboratory of Epidemiology and Population Sciences, National Institute on Aging, NIH, emphasized that separating cardiovascular disease from aging-related cardiovascular change is difficult, as both can lead to the same end-stage heart disease. She highlighted two age-associated cardiovascular changes in particular—atrial fibrillation and hypertension—both of which are common in old age and have unsettled clinical issues. Penny Kris-Etherton, distinguished professor of nutrition, Department of Nutrition Sciences, The Pennsylvania State University, then described recent trends in several atherosclerosis risk factors, and discussed how these and other risk factors in children relate to cardiovascular disease risk later in life.

Turning to skeletal and muscle systems, Connie Weaver, distinguished professor and head of the Department of Nutrition Science, Purdue University, discussed osteoporosis and other age-related changes in the skeletal system and emphasized the relationship between bone development during puberty and later risk of bone fracture. Roger Fielding, director and senior scientist, Nutrition, Exercise Physiology, and Sarcopenia Laboratory, Jean Mayer USDA Human Nutrition Research Center on Aging, Tufts University, then described the many problems that older adults have with mobility and that are related to age-associated loss in skeletal muscle mass and function (sarcopenia).

With respect to sensory and oral health changes associated with aging, Nancy Rawson, associate director and associate member, Monell Center, emphasized that although sensitivity to odors decreases substantially as one ages, there is considerable variation in this decline among individuals and odors, and she explained that this decline is not due to loss in the ability to detect odors. Athena Papas, distinguished Erling Johansen professor of dental research and head of the Division of Oral Medicine, Tufts University School of Dental Medicine, then discussed the loss of teeth with aging and evidence indicating a link between loss of even only a few teeth and lower nutrient quality of the diet, which in turn is associated with increased risk for adverse health outcomes.

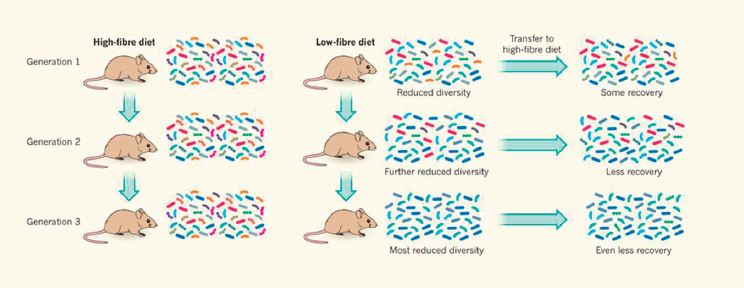

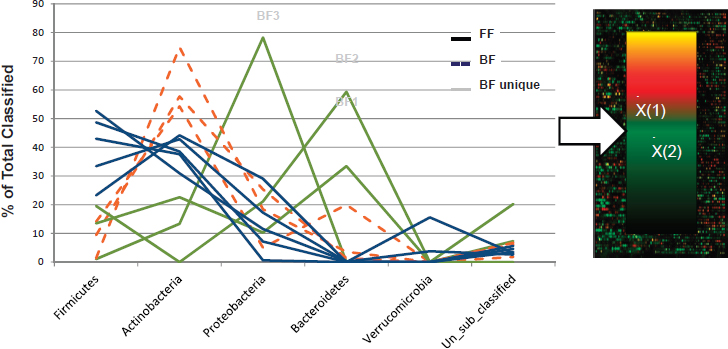

With respect to gastrointestinal health across the lifespan, Cindy Davis, director of grants and extramural activities, Office of Dietary Supplements, NIH, described evidence showing that the human gut microbiome can be modified through diet, and explained that gut bacteria can have both positive and negative health effects. Sharon Donovan, Melissa M. Noel endowed chair in nutrition and health, professor, and interim director, Illinois Transdisciplinary Obesity Prevention Program, University of Illinois at Urbana-Champaign, then discussed how breastmilk, in addition to providing all the nutrients necessary for normal growth and development, contains bioactive components that serve important non-nutritional roles, including stimulating development of the gut microbiota, which play a key role in “educating” the immune system.

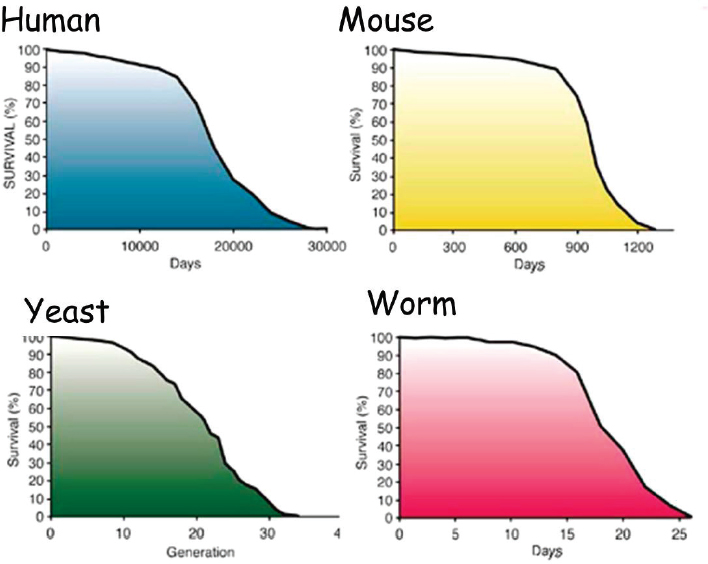

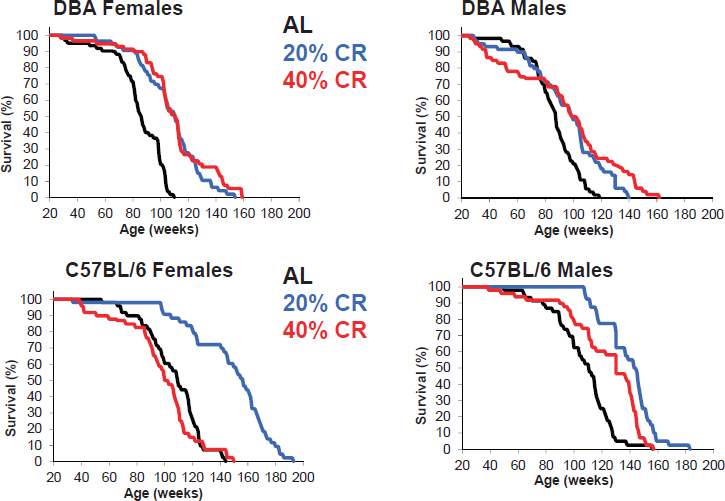

Finally, Rafael de Cabo, senior investigator, chief of the Translational Gerontology Branch, and chief of the Experimental Gerontology Section in the Aging, Metabolism, and Nutrition Unit, National Institute on Aging, NIH, described several studies in which scientists have been able to alter both the onset and the progression of aging in laboratory animals by restricting daily total caloric intake. He discussed how efforts to identify the underlying mechanisms have led to the identification of molecular targets that can be activated or deactivated by pharmacological compounds to produce the same effects, but without actual caloric restriction.

SELECTED AGE-ASSOCIATED CHANGES IN THE CARDIOVASCULAR SYSTEM¹

The greatest difficulty in studying the effects of aging on cardiovascular structure and function lies in separating the effects of aging itself from those of the inextricably entwined disease processes and life-style changes that accompany aging. —Jerome Fleg, 1986²

Harris began by highlighting three key messages: (1) it is difficult to separate cardiovascular aging from disease; (2) the changes in vasculature are greater than the changes in the heart; and (3) aging-related cardiovascular changes are common and costly, and they have unsettled clinical issues.

Harris noted that heart disease still leads cancer as the number one cause of death in the United States, with many other leading causes being connected to vasculature (e.g., stroke, kidney disease). Heart-related morbidity, cost, and comorbidity are also very important in old age, she observed, and many of the most prevalent chronic conditions among Centers for Medicare & Medicaid Services beneficiaries aged 65 and older—including hypertension, ischemic heart disease, hyperlipidemia, heart failure, and chronic kidney disease (Chen, 2016)—are cardiovascular-related. Even if one avoids developing vascular disease until old age, Harris reported, the remaining lifetime risk at age 70 remains high for any coronary heart disease, coronary artery disease in particular, hypertension (for which the remaining lifetime risk is particularly high), heart failure, stroke, atrial fibrillation, and obesity (Lakatta, 2015). But separating cardiovascular disease from aging-related cardiovascular change is difficult, she said, partly because both cardiovascular risk factors (e.g., dyslipidemia, hypertension, diabetes, smoking, obesity) and aging can lead to end-stage heart disease and other diseases related to heart disease (O’Rourke et al., 2010). Aging leads to end-stage heart disease through changes in the aorta, she noted, which in turn lead to aortic stiffening and isolated systolic hypertension.

Culling from the literature, Harris listed several cardiovascular changes that occur with healthy aging. These include modest left ventricular hypertrophy, where the heart muscle thickens by about 30 percent between the ages of 25 and 80; left ventricular stiffening, which leads to slower filling and leaves older persons more reliant on atrial contraction for blood pressure filling; left atrium thickening and dilation, which increases the risk of atrial fibrillation; calcification of the aortic valve; increased epicardial fat, which has recently been shown to be a risk factor for coronary artery disease; and changes related to fibrosis of the myocardium and myocyte hypertrophy that make the heart less responsive to stress (Keller and Howlett, 2016). Additionally, Harris noted, there are age-related declines in maximal exercise heart rate and aerobic exercise capacity (total work or maximal oxygen consumption), but physical activity can change these latter two declines markedly. She did not elaborate, but emphasized the very important role of physical activity. Among the several other changes that occur in cardiorespiratory reserve in healthy, community-dwelling persons during peak cycle exercise between the ages of 20 and 80, she highlighted decreases in peak oxygen consumption, cardiac index, and heart rate.

___________________

1 This section summarizes information presented by Dr. Harris.

2 Harris shared a portion of this quoted sentence during her presentation.

Among the many mechanisms that contribute to cardiac aging, Harris mentioned mitochondrial reactive oxygen species dysfunction, adverse extracellular matrix remodeling, and impaired calcium homeostasis. She emphasized the multifactorial nature of the underlying mechanism(s) (Chiao and Rabinovitch, 2015). The consequences of cardiac aging, she explained, are that cardiac output falls as people exercise, the ability to increase heart rate in response to stress declines, aortic volume and systolic blood pressure increase (but with no change in resting heart rate), orthostatic changes tend to increase, and falls increase.

Harris stressed that cardiac aging occurs in the context of other age-related problems. For example, slower gait speed has been associated with increased risk of operative mortality following cardiac surgery (Afilalo et al., 2016).

Before focusing in more depth and detail on two aging-related cardiovascular changes in particular (atrial fibrillation and hypertension), Harris cited several other age-related cardiovascular changes that she perceives as important but that she would not be addressing. First is that pulse wave velocity increases with age. Harris explained that in younger people, the aorta dilates with systole, but in older people, because the aorta is stiff, there is no dilatation. As a result, the pumping of blood from the heart ends in the end organs, such as the brain and kidneys, leading to damage over time. Second, Harris remarked that congestive heart failure with preserved ejection fraction is a very important disease of old age (Froman et al., 2013). In middle-aged adults, its prevalence is less than 0.1 percent, but in older adults, its prevalence increases to 6-18 percent. In younger adults, Harris noted, congestive heart failure affects predominantly men, whereas in older age, it affects predominantly women. Also in older adults, she continued, the etiology is primarily hypertensive, and left ventricular systolic function is normal, but left ventricular diastolic function is impaired. Additionally, subjects usually have multiple comorbidities. Third, Harris would not be talking about kidney disease related to vascular changes, but noted its importance in old age. Nor would she be talking about the role of exercise and diet in ameliorating, if not reversing, some of these changes; about atherosclerosis; or about inflammation.

Atrial Fibrillation

Harris defined atrial fibrillation as an irregular heart rhythm caused by a disturbance in the electrical system of the heart so that the atria and ventricles no longer beat in a coordinated way. She noted that while most symptoms of atrial fibrillation are related to how fast the heart is beating, increased risk of stroke is an important complication.

The leading risk factor for atrial fibrillation is increased age, Harris reported, which she said is believed to lead to fibrosis of the electrical conducting system in the heart. Other risk factors for atrial fibrillation include hypertension, diabetes, heart failure, valvular heart disease, and myocardial infarction. Not only does the prevalence of atrial fibrillation increase with aging, with rates of hospitalization for the condition also increasing markedly with old age, Harris observed, but older people with atrial fibrillation tend to have multiple comorbidities as well (Chen, 2016).

Harris emphasized atrial fibrillation for two reasons: first, because of its medication side effects and interactions and higher rates of hospitalization, and second, and more problematic in her opinion, because of questions around whether people who are at risk for falling or have other risk factors for bleeding should be prescribed anticoagulants. Anticoagulants, she explained, are recommended to protect people with atrial fibrillation, especially those in whom it is chronic, against the risk of stroke. But for older people, especially those who may be at risk for falling or have other risk factors for bleeding, the issue of whether to anticoagulate is what Harris described as a “major clinical quandary that has not yet been solved.”

Hypertension

The prevalence of hypertension increases markedly in old age and is even higher than that of atrial fibrillation, Harris continued. An estimated 76-79 percent of people aged 75 and older have hypertension (Buford, 2016). In some studies, Harris noted, the prevalence is even higher. She explained that in young, healthy blood vessels, the lumen (opening) is large, and the media (wall) is thin and responsive. In contrast, in older individuals, the opening is restricted, and the wall has undergone many fibrotic changes (Harvey et al., 2015). Additionally, Harris continued, in older individuals, the endothelium (the inner lining of the blood vessel) may be dysfunctional. Other potential changes include vascular remodeling, increased stiffening, vascular inflammation, and calcification. Harris reiterated the multifactorial etiology of hypertension, noting that in addition to aging, other prohypertensive factors arise as a result of lifestyle choices or other conditions.

One of the challenges of high blood pressure in older adults, Harris continued, is that it is primarily systolic blood pressure that is elevated, whereas diastolic blood pressure is usually normal or low. This makes high blood pressure difficult to control because lowering one lowers the other as well. This is in contrast to hypertension in middle age, she observed, which usually involves both systolic and diastolic blood pressure (Smulyan et al., 2016). Also in old age, Harris noted, hypertension affects multiple organ systems. As an example of the many studies on the relationship between hypertension and cognitive change, she cited Buford (2016), who found that people with hypertension showed much greater changes in cognitive functioning 20 years later (as measured by a variety of cognitive tests) relative to people with prehypertension. The author also found that uncontrolled hypertension was associated with greater incidence of physical disability risk.

Harris asked the workshop audience to think back to 1979, when the case for treatment of hypertension in adults under 65 was considered to be “firmly established,” in contrast to the “less firmly based” case for treatment in adults over 65 (Isaacs, 1979). The evidence for treating older adults was considered “conflicting and insufficient,” she noted (quoted phrases are from Isaacs [1979]). She further quoted from Isaacs (1979, p. 115):

The prescribing of potent antihypertensive drugs to every elderly person with a high blood pressure will benefit a few, will harm many, and will be wholly irrelevant to the medical needs of most, especially of those in whom the high pressure is an incidental finding and is not the cause of the symptoms for which the patient has sought medical help. We still do not know in which elderly patients hypertension is a disease and in which it is, like old age itself, an achievement. Doctors are advised to curtail antihypertensive therapy in the elderly until much more is known about its effects.

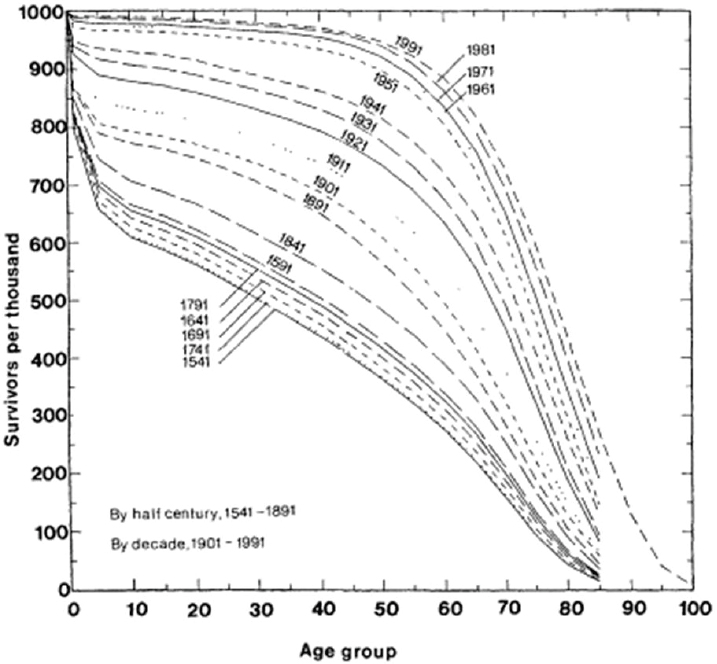

Because so many people who survived to old age had high blood pressure, Harris explained, it was believed that the condition was somehow linked to survivorship and that the brain needed it to function properly. She pointed out that these beliefs existed before any of the many major clinical trials conducted since then had begun (Benetos et al., 2015).

The current recommendation for older adults, Harris said, is to reduce systolic blood pressure to below 150 mm Hg. However, she noted, this recommendation was issued before findings from the Systolic Blood Pressure Intervention Trial (SPRINT) were published and before a recent meta-analysis showed that reducing blood pressure below current recommended levels further decreases the risk of heart disease, and that there is no threshold below which lowering blood pressure does not provide this benefit (Ettehad et al., 2016). Ettehad and colleagues (2016) also found consistency among studies in the effect of treatment, Harris reported, and concluded that drugs specific to outcomes worked, that is, that there is an ability to tailor treatments even within the general guidelines.

SPRINT was an important trial, in Harris’s opinion, because it recruited enough individuals over age 75 that the researchers were able to stratify participants by age (i.e., examine all participants versus participants aged 75 and over). In addition to cardiovascular outcomes, moreover, the researchers examined gait speed and frailty status, she noted. The trial was stopped early, she said, because it was so successful. For example, there were approximately 25 percent fewer cardiovascular disease primary outcome events among individuals receiving intensive treatment compared with those receiving standard treatment. The intensive treatment was more effective than the standard treatment regardless of frailty at baseline, Harris noted.

However, Harris said, “We have passed this way before.” She referred to a 2008 article in the New England Journal of Medicine as an example of a trial showing that treatment of blood pressure in older individuals can be successful (Beckett et al., 2008). It is not clear what the future will hold in terms of recommendations for older adults, she concluded.

THE ROLE OF NUTRITION IN CARDIOVASCULAR HEALTH AND DISEASE IN AGING³

Cardiovascular disease is the leading cause of death in the United States for both men and women, Kris-Etherton began, accounting for about 800,000 deaths yearly (Mozaffarian et al., 2016). She described atherosclerosis as a chronic, progressive condition that starts at birth and begins to manifest in the fourth decade of life (Pepine, 1998); 85-86 percent of adults aged 80 and 36-40 percent of those aged 40-59 have the condition. Some of the risk factors for cardiovascular disease are modifiable, she explained, including many nutrition-related factors, such as dyslipidemia, hypertension, diabetes, obesity, and thrombogenic factors, while others are nonmodifiable (e.g., sex, family history, age) (Wood et al., 1998). She emphasized the key role of nutrition in modifying the risk of coronary heart disease.

With respect to how these risk factors affect disease, much has been learned from the Framingham Heart Study, Kris-Etherton remarked. This seminal ongoing study, she reported, has demonstrated how changes in high-density lipoprotein (HDL) cholesterol, total cholesterol, systolic blood pressure, smoking, and diabetes among individuals aged 50-54 markedly affect the estimated 10-year risk of cardiovascular disease (Mozaffarian et al., 2016).

___________________

3 This section summarizes information presented by Dr. Kris-Etherton.

Major Risk Factors for Cardiovascular Disease in Children and Adults: Trends Over Time

Kris-Etherton went on to describe recent trends in several risk factors for cardiovascular disease based on data from the National Health and Nutrition Examination Survey (NHANES), beginning with total serum cholesterol (Mozaffarian et al., 2016). Among adults aged 20 and older, total serum cholesterol decreased from 1988 to 2012 across all ethnic groups, she reported. By 2012, however, as many as 36-50 percent of adults still had serum total cholesterol levels that exceeded recommended levels. The same trend has been observed among children, Kris-Etherton said, with decreases across all ethnic groups but again with about 10 percent of children aged 6-8 and 9-11, both boys and girls, still having elevated total cholesterol levels.

Also based on NHANES data (Mozaffarian et al., 2016), Kris-Etherton noted, about 80 percent of adults over age 75 have high blood pressure. She characterized this high prevalence as “amazing.” She pointed out that even in midlife, as many as 54-55 percent of adults aged 55-64 have high blood pressure. She described high blood pressure as a chronic condition that increases with age in the United States and other Western countries.

Another noteworthy trend, Kris-Etherton continued, reported by Mozaffarian and colleagues (2016), is the slow, steady increase in the prevalence of obesity, again among both adults and children. The latest data, she noted, indicate that 40 percent of women in the United States are now obese. During 2009-2012, 20 percent of children aged 12-19 and as many as 10 percent of children aged 2-5 years were obese, a finding she views as “quite alarming.”

The prevalence of diabetes in the U.S. population showed a sharp increase starting around 1997, Kris-Etherton observed, and about 6.8 percent of the population had the disease in 2014. Type 2 diabetes has increased in children and adolescents as well, she noted. In 2001, the prevalence per 1,000 youth aged 10-19 was 0.34, compared with 0.46 in 2009 (Dabelea et al., 2014). A prevalence of 0.46 per 1,000 corresponds to roughly 1 in every 2,200 youth aged 10-19 having type 2 diabetes. Data from the SEARCH for Diabetes in Youth study from 2001-2009 show this overall increase in diabetes in subpopulations as well: in both females and males; among those both 10-14 and 15-19; and across non-Hispanic whites, African Americans, and especially Hispanics (Hamman et al., 2014).
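As a rough illustration of the size of the change in the two prevalence figures quoted above, the short Python sketch below computes the crude relative increase between 2001 and 2009. The function name `relative_change` is hypothetical, and the result is simple arithmetic on the two quoted values, not an adjusted estimate from the cited study.

```python
def relative_change(before: float, after: float) -> float:
    """Return the relative change from `before` to `after` as a fraction."""
    if before <= 0:
        raise ValueError("baseline value must be positive")
    return (after - before) / before


# Type 2 diabetes prevalence per 1,000 youth aged 10-19, as quoted in the text.
prevalence_2001 = 0.34
prevalence_2009 = 0.46

# Crude (unadjusted) relative increase between the two survey years.
change = relative_change(prevalence_2001, prevalence_2009)
print(f"Crude relative increase, 2001 to 2009: {change:.1%}")
```

On these two figures alone, the crude increase is a little over one-third of the 2001 prevalence.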

Relationship Between Risk Factors for Cardiovascular Disease in Children and Cardiovascular Disease Risk Later in Life

Kris-Etherton described evidence from several studies showing how various risk factors for cardiovascular disease in children relate to cardiovascular risk later in life, beginning with findings from the Bogalusa Heart Study showing a relationship between childhood body mass index (BMI) and adult obesity (Freedman et al., 2007). In that study, she noted, only 5 percent of children aged 12-13 in the 0-49 BMI percentile became obese as adults, compared with 84 percent of those in the 95-98 BMI percentile and all of those in a BMI percentile above 99. Looking at this same relationship in a different way, she reported, Nathan and Moran (2008) showed a strong correlation between BMI at age 13 and at age 22, as well as a strong correlation between BMI at age 13 and insulin resistance.
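For readers less familiar with the measure, BMI is body weight in kilograms divided by the square of height in meters. The Python sketch below shows only that calculation; the function name and the example weight and height are hypothetical. Assigning a child to a BMI percentile, as in the studies above, additionally requires age- and sex-specific reference data that are not reproduced here.

```python
def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index: weight (kg) divided by height (m) squared."""
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight and height must be positive")
    return weight_kg / height_m ** 2


# Hypothetical example values, not taken from any study cited in the text.
example_bmi = bmi(weight_kg=50.0, height_m=1.60)
print(f"BMI: {example_bmi:.1f} kg/m^2")
```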

Kris-Etherton described a study that examined the relative risk of various risk factors for cardiovascular disease in adulthood among participants in four different studies of children aged 3-18 who were overweight or obese in childhood. Juonala and colleagues (2011), she reported, showed that obesity in childhood is related to several high-risk risk factors for cardiovascular disease, including type 2 diabetes, hypertension, high low-density lipoprotein (LDL) cholesterol, low HDL cholesterol, high triglycerides, and increased carotid artery intima-media thickness (IMT). She stressed that increases in these risk factors are largely the result of “obesity in and of itself in childhood.”

Results from the Bogalusa Heart Study have similarly shown a relationship between BMI in children at various ages and carotid IMT in adulthood, Kris-Etherton noted, with higher BMI in children being associated with an increase in carotid IMT (Freedman et al., 2004). Data from the Bogalusa study have also shown that elevated childhood lipoprotein levels are correlated with adult serum total and LDL cholesterol (15 years later) (Nicklas et al., 2002), and that both childhood and adult LDL cholesterol levels are predictive of IMT (Li et al., 2003). Kris-Etherton added that data from the Cardiovascular Risk in Young Finns Study show a similar trend, with LDL cholesterol, BMI, systolic blood pressure, and smoking in childhood (aged 12-18) all being associated with IMT in adulthood (21 years later) (Raitakari et al., 2003). Even with no risk factors in adulthood, she noted, these researchers showed that children aged 12-18 with two to four risk factors for cardiovascular disease had a higher IMT 21 years later than those with only one or no risk factors in childhood. Additionally, they found that having two to three risk factors in children aged 3-9 was associated with higher carotid artery IMT than having one or no risk factors in men, but not women, 21 years later. Kris-Etherton underscored the remarkable nature of these 21-year correlations. “Even in young kids,” she said, “these coronary risk factors take their toll later in life.”

Diet Quality and Cardiovascular Health in Children and Adults

With respect to the role of diet in the development of risk factors for cardiovascular disease, Kris-Etherton emphasized that poor dietary habits are one of three major lifestyle risk factors for cardiovascular disease, the other two being physical inactivity and smoking (Mozaffarian et al., 2008). She suggested that improving these lifestyle habits could significantly decrease morbidity and mortality due to cardiovascular disease. She pointed out that the Global Burden of Disease 2010 study showed that dietary risks are the number one cause of preventable mortality, causing more deaths even than smoking (Murray et al., 2013).

Among children, Kris-Etherton continued, only 0.05 percent of those aged 12-19 have an “ideal” healthy diet score based on NHANES 2011-2012 data (Mozaffarian et al., 2016). Healthy diet score is one of seven metrics used to estimate cardiovascular health, she explained, the others being current smoking, BMI, physical activity, total cholesterol, blood pressure, and fasting plasma glucose. She pointed out that of all these metrics, diet is the worst. Over time, she noted, children’s diets have improved slightly, from only 0.2 percent of children having an “ideal” diet score in 2003-2004. “But still,” she asserted, “we have a long, long, long way to go.” The same is true of adults, she observed. Although there has been some improvement over time in the number of adults aged 20 and older with “ideal” diets, from 0.7 percent in 2003-2004 to 1.5 percent in 2011-2012, she questioned whether that progress is good enough and argued that it should be much better.

Kris-Etherton remarked that having at least three or four of the seven cardiovascular health metrics “in check” markedly decreases the risk of cardiovascular disease. Today, she reported, at least 50 percent of children are not meeting at least five of the seven criteria for ideal cardiovascular health (Mozaffarian et al., 2016).

Looking more closely at the diets of children aged 5-11, Kris-Etherton observed that only about 50 percent of these children are meeting targets for sugar-sweetened beverages (450 or fewer kilocalories/week), and fewer than 10 percent are meeting targets for sodium, whole grains, fish, and fruits and vegetables (Ning et al., 2015). She concluded that, given the importance of controlling risk factors for cardiovascular disease in children to lower cardiovascular disease risk later in life, “we have a long way to go.” In her opinion, added sugar is one constituent of the diet on which “we can really have an impact.” She added that children aged 2-5 are consuming 100 calories of added sugar per day, while adolescent boys are consuming 429 (Vos et al., 2016).

THE CARDIOVASCULAR SYSTEM: DISCUSSION WITH THE AUDIENCE

Following Kris-Etherton’s presentation, she and Harris participated in an open discussion with the audience. An audience member remarked that infants and small children are not expected to have the same heart rate or tension in their blood vessels as an older person. It seems natural, she said, that as a person ages, just as the muscles and other tissues stiffen, the tension in the blood vessels would undergo parallel changes. If this natural progression could be controlled for, would the standards for older adults change? Would older adults who are now considered “at risk” no longer be considered as such? Harris responded that although it is usual for people to develop hypertension as they age, it is not normal. To support this point, she noted that there are populations in which people do not develop hypertension as they age. These include people who are, she said, “genetically blessed,” but also people who are institutionalized and people in rurally isolated populations. So hypertension is a relatively pathological process, she argued, and it is associated with an increased risk of cardiac outcomes. Kris-Etherton added that the current recommendations for blood pressure in older adults are highly controversial, and her understanding is that because of the SPRINT trial, they are being reviewed (SPRINT Research Group et al., 2015), and some new guidelines may be issued soon. Additionally, Harris noted that the first generation of individuals who have had their blood pressure treated are now entering old age. She said it will be interesting to see what happens to the incidence of cardiovascular disease as this population continues to age.

Janet King, workshop participant, thanked Kris-Etherton for challenging the field to begin addressing chronic disease problems in children. She then asked, given how difficult it is for a child to change many things at the same time, what would be the most important dietary change to target to reduce the risk of cardiovascular disease in children? Kris-Etherton replied, “I think that we could do a lot if we could just get kids to start eating more fruits and vegetables.” That in and of itself, in her opinion, would improve diet quality and, by providing potassium, help lower blood pressure.

Johanna Dwyer, workshop participant, asked what dietary recommendation Kris-Etherton would have for medicated hypertensives over age 75. Kris-Etherton replied that she would recommend what the American Heart Association recommends: to control sodium intake. It is clear, she said, that increasing sodium intake increases blood pressure, which in turn increases the risk of heart disease and stroke. In addition to focusing on sodium, she would recommend a diet that is low in saturated fat and meets all current food-based recommendations.

An audience member asked about the continuum from childhood through late adulthood with respect to the impact of the Child and Adult Care Food Program (CACFP) on older adults, given that the program is a common thread across this continuum. The questioner was aware of the impact of the program at the 0- to 5-year-old end of the continuum, but not at the other end. Kris-Etherton replied that all programs receiving federal funding should be obliged to follow the Dietary Guidelines for Americans (DGA). In her opinion, there is no harm in following the guidelines, and everyone benefits. Harris added that neither she nor Kris-Etherton had a “good” answer to this question.

The final question was whether the HDL/LDL ratio is also a good indicator of cardiovascular risk. Kris-Etherton responded that current treatment guidelines target LDL (“bad”) cholesterol, as well as non-HDL cholesterol (all of the “bad” cholesterol, including very low-density lipoprotein), regardless of the ratio.
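Non-HDL cholesterol, as mentioned in the response above, is conventionally obtained by subtracting HDL cholesterol from total cholesterol. The Python sketch below illustrates that subtraction only; the function name and the example values are hypothetical and are not treatment thresholds from any guideline.

```python
def non_hdl_cholesterol(total_mg_dl: float, hdl_mg_dl: float) -> float:
    """Non-HDL cholesterol (mg/dL) = total cholesterol minus HDL cholesterol."""
    if total_mg_dl < 0 or hdl_mg_dl < 0:
        raise ValueError("cholesterol values must be non-negative")
    if hdl_mg_dl > total_mg_dl:
        raise ValueError("HDL cholesterol cannot exceed total cholesterol")
    return total_mg_dl - hdl_mg_dl


# Hypothetical lipid panel values, for illustration only.
example_non_hdl = non_hdl_cholesterol(total_mg_dl=200.0, hdl_mg_dl=50.0)
print(f"Non-HDL cholesterol: {example_non_hdl:.0f} mg/dL")
```

Because the result captures everything except HDL, it includes LDL and very low-density lipoprotein cholesterol together, which is why it does not depend on the HDL/LDL ratio.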

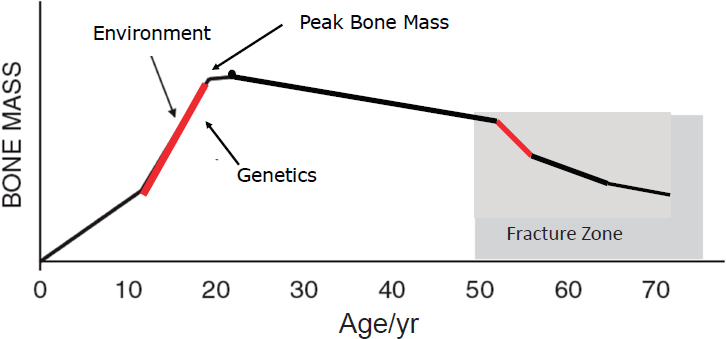

SKELETAL SYSTEMS⁴

Weaver began by observing that “bone is the tissue that has been thought of to be important throughout the lifespan longer than the other tissues.” Osteoporosis has been considered a pediatric disease for many years, while the notion of cardiovascular and other diseases as pediatric diseases is “just catching up,” she said. She explained that people acquire 40-50 percent of their adult bone mass and arrive at “peak bone mass” during the critical years of adolescence (Weaver et al., 2016b) (see Figure 4-1). An estimated 60-80 percent of peak bone mass is determined by genetic programming, with the remainder being influenced by lifestyle choices (e.g., diet, exercise). Weaver noted that sex differences in the formation of bone mass include men growing taller and developing larger skeletons than women, and that “cheating” the bone of its needed nutrients can lead to suboptimal formation of peak bone mass. Bone mass begins a slight decline following adolescence, she said; the decline accelerates during menopause among women and proceeds more gradually among men.

Peak bone mass is important, Weaver asserted, because of its relationship to the risk of osteoporotic fracture, with a 5-10 percent difference in peak bone mass resulting in a 25-50 percent difference in hip fracture risk later in life (Heaney et al., 2000). She pointed out that 30-50 percent of children experience at least one fracture by the end of the teenage years, so fracture in childhood is important; in adulthood, however, 50 percent of women and 20 percent of men over age 50 will experience an osteoporotic fracture. The estimated annual costs for all fractures in the United States exceed $18 billion (Office of the Surgeon General, 2004).

___________________

4 This section summarizes information presented by Dr. Weaver.

NOTE: The red lines indicate periods when bone is turning over most rapidly, suggesting life stages for interventions aimed at influencing bone mass acquisition or retention.

SOURCES: C. Weaver, September 13, 2016. Adapted from Heaney et al., 2000, and reprinted with permission of Springer.

Weaver briefly referred to the common use of bone mineral density (BMD) as an indicator of fracture risk. In adults, she said, the relationship between BMD and fracture risk is linear. She noted that bone mineral content and strength are better measures for monitoring bone health in children.

How Bones Grow

In childhood, Weaver explained, bones grow to become thicker and spongy with greater circumference, acquiring more volume, which contributes to strength. The long bones increase in both length and diameter; their increase in diameter, she said, confers greater strength relative to increases in their mass or density with age. This growth is partially driven, she continued, by sex steroid hormones, which increase prior to the launch of rapid bone growth that occurs around the time of onset of menarche in females. Upon enough estrogen release, epiphyseal closing of the long bones begins, and lengthening ends. According to Weaver, however, the greatest predictor of bone acquisition, based on work conducted in her lab and by other research groups, is insulin-like growth factor-1 (IGF-1). “It is really about growth, or the regulators of growth,” she said, with genes related to growth playing a prominent role rather than the calcium homeostatic regulators such as parathyroid hormone or vitamin D. IGF-1 is so dominant at predicting growth acquisition, she observed, that when it is included in a model it plays a central role and minimizes the role of the sex steroid hormones.

Weaver suggested that dietary and exercise interventions may be particularly effective during the periods of rapid bone mass turnover, that is, during puberty, but also during the first 3-5 years of menopause for women (when they may lose up to 15 percent of bone mass) (Hansen et al., 1991). Yet, she noted, no study has compared interventions at these different stages of the life cycle. She called for an analytical examination of this hypothesis, noting that it will probably require an animal model.

Peak Bone Mass Development and Lifestyle Factors

Weaver called attention to a 2016 position paper for the National Osteoporosis Foundation on predictors of peak bone mass, for which she was part of the writing team (Weaver et al., 2016b). Based on a systematic search of the literature since 2000, she and other members of the team identified two predictors of peak bone mass with an “A” grade for the strength of the evidence: calcium and physical activity. The team’s literature search amounted to what she characterized as 18 separate systematic reviews. They sought evidence for all macronutrients and micronutrients that might be relevant, as well as physical activity and other predictors. They searched all evidence reported since 2000, which was when the last National Osteoporosis Foundation position paper on peak bone mass had been issued. Weaver explained that the “A” grade for calcium was based on 16 randomized controlled trials (RCTs), 4 prospective studies, and 4 observational studies, while the “A” grade for physical activity was based on 38 RCTs and 19 prospective studies. She said she expects that in the future there will be more studies on physical activity and bone structure (i.e., in addition to studies on physical activity and bone mass), but noted that the tools with which to examine bone structure are relatively new. The writing team found few studies on food patterns in children.

Structural Strength Across the Lifespan

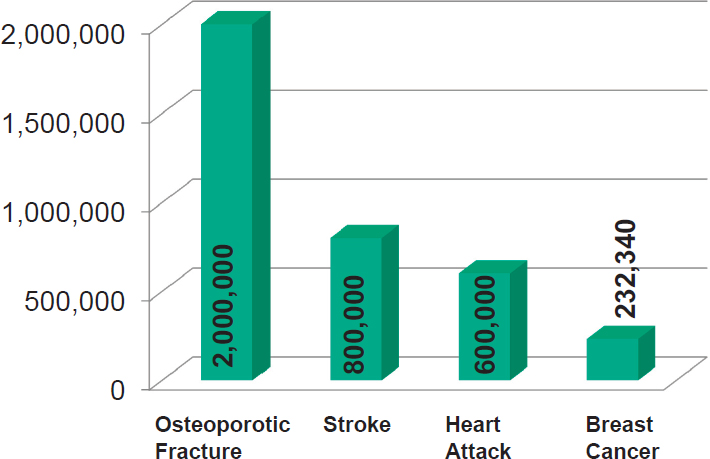

As people age, Weaver continued, their bones thin (i.e., osteoporosis), and the circumference of the bones widens. While the circumference widening helps to make up for the thinning to protect bones from fracturing, she said, it does not do enough to prevent osteoporotic fracture. “The incidence of fracture dwarfs all those other diseases you are talking about,” she asserted (see Figure 4-2). She observed that concerns about heart disease, stroke, and other diseases stem from their associations with mortality, but a hip fracture has a major impact on quality of life, making a person less sociable, causing a great deal of pain, and dramatically affecting one’s lifestyle. In Weaver’s opinion, osteoporotic fractures in adults over age 85 warrant more attention.

SOURCES: Presented by C. Weaver, September 13, 2016. Breastcancer.org, 2017; CDC, 2015, 2016; National Osteoporosis Foundation, 2017. Reprinted with permission.

Weaver defined osteoporosis as the thinning of bones that results from the chronic extraction of calcium and other nutrients needed to sustain homeostatic levels of these nutrients in the blood. The bones thin until the trabeculae are so thin that they snap when force is applied against them. Weaver noted that, according to the most recent statistics (from NHANES 2005-2010) (Wright et al., 2014), an estimated 10.2 million Americans have osteoporosis, and another 43.4 million have low bone mass. She added that the proportion of the U.S. population over age 80 is increasing rapidly and is projected to triple by 2050, which means the overall prevalence of osteoporosis and osteoporotic fractures will likewise increase.

Weaver emphasized the sex differential in osteoporosis, with 80 percent of hip fractures occurring in women and osteoporosis affecting one in three postmenopausal women. She added that the prevalence of osteoporosis also varies by race and ethnicity: 15.8 percent among white females and 3.9 percent among white males, 7.7 percent among African American females and 1.3 percent among African American males, and 20.4 percent among Mexican American females and 5.9 percent among Mexican American males (Wright et al., 2014). She noted that clinical risk factors for osteoporosis independent of bone mineral density include age over 65, low body weight, family history of fracture, and history of postmenopausal fracture (including vertebral fracture).

Current Treatment Options

There is no cure for osteoporosis, Weaver said. All that can be done is to attempt to reduce additional loss and stop the progression of disease. “That’s why prevention with diet and exercise is so important,” she explained.

Weaver remarked on country-level differences in the 10-year probability of hip fracture in women over age 65 (and with a prior fracture and with bone density test results indicating osteoporosis), reported by Kanis and colleagues (2011). Denmark was at one end of the range (i.e., having the greatest probability) and Turkey at the other. This finding has generated much speculation, Weaver said, regarding chosen lifestyles.

Hormone replacement therapy used to be the mainstay of osteoporosis treatment and prevention for all menopausal women, Weaver noted, until the Women’s Health Initiative showed the therapy to be associated with an increased risk of heart disease, stroke, and breast cancer (Rossouw et al., 2002). She explained that the recommended treatment then became bisphosphonates, which protect against additional bone loss but have some rare side effects that concern physicians and patients (e.g., atypical fractures [Russell et al., 2008] and osteonecrosis of the jaw [Arrain and Masud, 2008]).

In terms of diet and exercise, Weaver continued, calcium (a bone-building nutrient and the major mineral in bone), vitamin D (which helps increase absorption of calcium), and weight-bearing exercise are the most advocated choices for the treatment and prevention of osteoporosis. She observed that, given that 70 percent of calcium in the diet comes from dairy, dairy is an important food group with respect to osteoporosis, and it also is fortified with vitamin D.

Weaver explained that much of the confusion in the literature regarding calcium, dairy, and bone in adulthood stems partly from some of the common challenges related to running RCTs (e.g., unaccounted-for wide range of compliance among study subjects and lack of consideration of baseline status [e.g., a study participant may already have an adequate intake of calcium or vitamin D, in which case providing more will not help]). Additionally, she continued, methods for assessing intake are weak, and life stage, sex, and genetic factors often confound results. In her opinion, results from a Women’s Health Initiative RCT of calcium and vitamin D and their relationship to hip fracture “put the thumb on the pulse” of why some researchers report no relationship, while others report significant benefit (Prentice et al., 2013). She explained that among all 68,719 postmenopausal women participating in the study no significant relationship was seen between calcium and vitamin D and hip fracture, nor were there significant relationships between calcium and vitamin D and several other outcomes (myocardial infarction, cardiovascular disease, breast cancer, and death). However, Weaver noted, women in this study were not asked to stop taking their own supplements, and many were taking enough calcium and vitamin D supplements that their mean intakes were at about the recommended levels for these nutrients. Moreover, there was a wide range of compliance among subjects.

Weaver cited a reanalysis of the Women’s Health Initiative data by Prentice and colleagues (2013). These researchers found that only women who were at least 80 percent compliant and who were not taking their own calcium and vitamin D supplements at baseline showed a large and significant benefit of the study supplementation over a 5-year period with respect to reducing the incidence of hip fracture. But, Weaver noted, there was still no effect on heart disease. She then cited a meta-analysis of calcium, vitamin D, and hip fracture that included the Prentice et al. (2013) results, which found that calcium plus vitamin D reduces the risk of hip fracture by 30 percent (Weaver et al., 2016a).

In addition to and even stronger than the results of the Weaver et al. (2016a) meta-analysis, in Weaver’s opinion, is the fact that the basic structure-function of bone indicates that 36 percent of the mineral composition of bone is calcium. Again, she said, most of this calcium comes from dietary dairy products. Because milk provides not just calcium but other bone constituents as well (e.g., phosphorus, magnesium), a prudent recommendation, in her opinion, is the daily 3 cups of low-fat dairy product or equivalent (e.g., from a fortified food or supplement) recommended in the DGA. Other “bone-healthy” foods, according to Weaver, include fruits, vegetables, and whole grains.

MUSCULAR SYSTEMS5

Fielding remarked on the fact that there are more people on the planet over age 65 than ever before in human history. This is an “astounding” thing to think about, he said. He encouraged greater consideration of the health and behavioral needs of older adults, implicit in which is understanding the role of nutrition. Additionally, he noted, the fastest-growing segment of the older population is those over age 85. “So not only are we getting older,” he said, “but we are living to older and older ages.” He called for a greater understanding of the nutritional and other health care needs of this oldest old population in particular.

___________________

5 This section summarizes information presented by Dr. Fielding.

“One of the important things that I am going to try to impress on you is that skeletal muscles actually really matter,” Fielding said. He noted that skeletal muscle, which makes up 45 to 50 percent of total body mass, tends to be an understudied tissue in many respects. He explained that many of the problems older adults have climbing stairs, getting out of chairs, and otherwise moving around are related to skeletal muscle, which plays a fundamental role in locomotion, oxygen consumption, whole-body energy metabolism, and substrate turnover and storage. Additionally, there is what he described as “exciting, emerging” evidence that skeletal muscle is a secretory organ, secreting proteins termed “myokines.” He described “robust skeletal muscle” as a central factor in whole-body health and as being essential for maintaining energy homeostasis.

Motor proteins and motor functions are ubiquitous across all species, as is the association between mobility and lifespan, Fielding continued (Dickinson et al., 2000). In Drosophila (fruit flies), for example, Miller and colleagues (2008) showed that flying capacity declines with aging and that the loss of flying capacity is associated with mortality. This is true in humans as well, Fielding said, with walking speed or the ability to walk one-quarter of a mile being closely related to mortality.

Fielding continued by noting that mobility declines with aging (e.g., reduced walking speed, inability or difficulty in walking one-quarter of a mile) are associated not only with increased mortality but also with increased health care costs. Using Medicare beneficiary data, Hardy and colleagues (2011) showed that as self-reported ability to walk one-quarter of a mile declines (from “no difficulty” to “difficulty” to “unable”), both health care costs and rates of hospitalization increase. Fielding said he suspects that these trends would hold up in many other parts of the world as well.

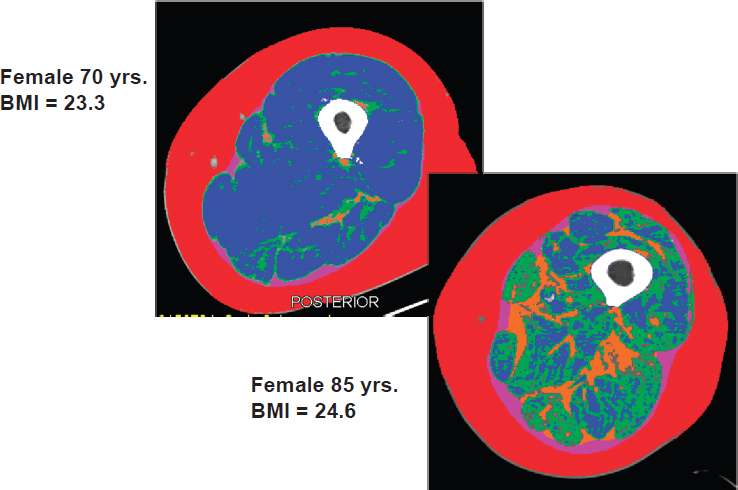

Sarcopenia

Fielding believes that many mobility changes associated with aging are related to changes in skeletal muscle known as sarcopenia, which he defined as age-associated loss in muscle mass and function (see also the discussion of sarcopenia in the summary of Gordon Jensen’s presentation in Chapter 3). He showed digitized images of cross-sectional computed tomography scans of the midthighs of a 70-year-old and an 85-year-old female, both with normal BMIs. The older female has much less total muscle, the majority of which is low-density muscle (i.e., more intramyocellular fat than normal-density muscle), and more intermuscular fat (see Figure 4-3).

NOTES: Red is subcutaneous fat, white is long bone (femur), blue is normal-density muscle, green is low-density muscle, and orange is intermuscular fat. BMI = body mass index.

SOURCE: Presented by R. Fielding, September 13, 2016.

Fielding described the images shown in Figure 4-3 as very characteristic of age-associated loss in muscle mass. Additionally, both images are of community-dwelling, ambulatory adults, not hospitalized or otherwise institutionalized patients.

Fielding explained that muscle size is important because it is related to strength, or maximal force-generating capacity. Data from his lab on about 100 older men and women show that nearly 70 percent of the variance in muscle strength (i.e., maximum force that can be generated by the knee extensor muscles) can be explained by variation in cross-sectional area (i.e., of the thigh). Fielding observed that this close association has implications for locomotion and other functional activities as people age. Over time, however, this relationship between muscle mass and strength becomes more complex. To illustrate, Fielding cited longitudinal data from the Baltimore Longitudinal Study of Aging showing that from about age 30 onward, muscle strength (or force-generating capacity) decreases at about a 2-3 percent annual rate, compared with about a 1-2 percent decline in muscle cross-sectional area (Moore et al., 2014). He noted that other studies have shown this same trend as well: muscle strength decreases more quickly than muscle area. He also pointed out that there is quite a bit of variability in these data, with some people declining more rapidly than others in both strength and cross-sectional area.
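To give a sense of how the gap between these two rates accumulates, the short Python sketch below compounds a constant annual percentage decline. It is illustrative arithmetic only: the constant-rate compounding model, the function name `remaining_fraction`, and the 20-year horizon are assumptions made for this example and are not taken from the presentation or from Moore et al. (2014).

```python
def remaining_fraction(annual_decline_pct, years):
    """Fraction of the starting value left after compounding a constant annual decline.

    Assumes the same percentage is lost every year, which is a simplification.
    """
    return (1.0 - annual_decline_pct / 100.0) ** years


# Rates quoted in the summary: strength about 2-3 percent per year,
# cross-sectional area about 1-2 percent per year.
# The 20-year horizon is a hypothetical choice for illustration.
scenarios = [
    ("strength, 2 percent per year", 2.0),
    ("strength, 3 percent per year", 3.0),
    ("area, 1 percent per year", 1.0),
    ("area, 2 percent per year", 2.0),
]

for label, rate in scenarios:
    print(f"{label}: {remaining_fraction(rate, 20):.0%} remaining after 20 years")
```

Under these assumptions, the faster rate for strength leaves a visibly smaller fraction than the slower rate for area over the same period, which is the pattern Fielding described.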

Fielding observed that, despite the high prevalence of sarcopenia among older adults (which varies from 0.5 to 13 percent, depending on the operational definition used) (Dam et al., 2014) and the mobility implications of age-associated loss in muscle mass and function, sarcopenia as a clinical syndrome still has no broadly accepted clinical definition, consensus diagnostic criteria, or treatment guidelines. He remarked that there have been renewed efforts in the past 10-12 years to define sarcopenia as a clinical syndrome, and that consensus panels have been organized primarily in the United States and Europe, but elsewhere as well, to develop diagnostic criteria and treatment guidelines for the condition. In his opinion, the most important development in the area of sarcopenia as a clinical syndrome is the October 2016 establishment of an ICD-10 code6 for sarcopenia.

Definition of Sarcopenia: From Consensus Definitions to Evidence-Based Criteria

Fielding reported that five consensus definitions of sarcopenia have been published, all of which include an objective measure of muscle or lean mass, and all of which incorporate muscle weakness or reduced functioning. However, he pointed out, all of these definitions are based on expert opinion, and only recently have there been evidence-based attempts to establish criteria for the definition of sarcopenia, largely as part of a Foundation for the National Institutes of Health (FNIH) project conducted and published in 2014 (Studenski et al., 2014). He explained that this project entailed a large, pooled meta-analysis of several cohort studies, mainly in the United States, that examined how sex-based muscle weakness (i.e., reduced grip strength) and appendicular lean body mass (ALM) cut points predicted incident mobility disability (i.e., gait speed of less than 0.8 meters/second). From this analysis, he said, two weakness cut points were developed: for grip strength (GSMAX), <26 kg for men and <16 kg for women; and for grip strength adjusted for BMI (GSMAXBMI), <1.0 for men and <0.56 for women. Additionally, two ALM cut points were developed: for ALM adjusted for BMI, <0.789 for men and <0.512 for women; and for absolute ALM, <19.75 kg for men and <15.02 kg for women. Fielding reiterated that these are the first evidence-based criteria for sarcopenia that are linked to a hard outcome (i.e., loss of mobility).

___________________

6 ICD-10 is the 10th revision of the World Health Organization’s International Statistical Classification of Diseases and Related Health Problems. An ICD-10 code signifies recognition of a distinctly reportable condition.
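The cut points quoted above can be expressed as a simple threshold check. The Python sketch below is illustrative only and is not a diagnostic tool: the names `CUT_POINTS`, `Measurement`, and `screen` are hypothetical, the thresholds are those quoted in this summary, and the sketch assumes that "adjusted for BMI" means the measured value divided by BMI and that a criterion is met when the value falls strictly below the cut point.

```python
from dataclasses import dataclass

# Cut points as quoted in this summary (Studenski et al., 2014).
# The dictionary keys are hypothetical names chosen for this sketch.
CUT_POINTS = {
    "male": {"grip_kg": 26.0, "grip_per_bmi": 1.0,
             "alm_kg": 19.75, "alm_per_bmi": 0.789},
    "female": {"grip_kg": 16.0, "grip_per_bmi": 0.56,
               "alm_kg": 15.02, "alm_per_bmi": 0.512},
}


@dataclass
class Measurement:
    sex: str        # "male" or "female"
    grip_kg: float  # maximum grip strength, kg
    alm_kg: float   # appendicular lean mass, kg
    bmi: float      # body mass index, kg/m^2


def screen(m):
    """Return which of the four cut points a measurement falls below.

    Assumption: BMI adjustment is a plain ratio (value / BMI).
    """
    cuts = CUT_POINTS[m.sex]
    return {
        "weak_grip": m.grip_kg < cuts["grip_kg"],
        "weak_grip_bmi_adjusted": m.grip_kg / m.bmi < cuts["grip_per_bmi"],
        "low_alm": m.alm_kg < cuts["alm_kg"],
        "low_alm_bmi_adjusted": m.alm_kg / m.bmi < cuts["alm_per_bmi"],
    }


# Hypothetical example values, not data from the study.
print(screen(Measurement(sex="female", grip_kg=15.0, alm_kg=14.0, bmi=25.0)))
```

Returning each criterion separately, rather than a single yes/no label, reflects the point made in the text that there is not yet one agreed definition combining these measures.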

In addition to gender differences in the distribution of sarcopenia, Fielding continued, most analyses have found that sarcopenia increases with advancing age, with prevalence being higher among individuals over age 80 than among those over age 60. Prevalence also depends on the population in which sarcopenia is measured, he remarked, including such factors as health status, dietary patterns, and comorbidities.

Importantly, in Fielding’s opinion, estimates of prevalence based on multiple criteria for sarcopenia are lower than estimates based on fewer criteria. He called for consensus around a universal, single definition of sarcopenia and noted that initiatives are under way to resolve the many existing definitions into one internationally accepted definition. He suggested that another future goal will be to link these objective cut points with other clinically valid, hard outcomes, such as falls, injurious fractures, and mortality.

Multifactorial Origins of Sarcopenia

As with many syndromes of old age, Fielding continued, multiple factors contribute to sarcopenia. He cited decreased physical activity; changes in neuromuscular activity (i.e., decreased number and increased irregularity of motor unit firing); decreased anabolic hormones (e.g., testosterone, dehydroepiandrosterone [DHEA], growth hormone, IGF-1); increased proinflammatory cytokine activity (which he said has been shown to activate muscle atrophy genes); and several factors related to nutrition, including total energy intake (Morley et al., 2001).

Dietary and Physical Activity Interventions

Fielding noted that the dietary interventions that have been studied most frequently include those related to protein nutrition and adequacy, either from food or dietary supplements. Additionally, he observed, there is some emerging interest in the role of vitamin D in the health and function of skeletal muscle. Finally, a small number of studies have examined the potential roles of other micronutrients (i.e., those with anti-inflammatory properties, antioxidants, and selected B vitamins).

Much is known about the role of physical activity and exercise interventions in the health and function of skeletal muscle, Fielding continued. These interventions, he explained, encompass both resistance or strength training exercise, which he characterized as probably the most “pro-muscle building” type of exercise, and aerobic exercise. Observational data indicate, he said, that maintaining aerobic exercise throughout the lifespan appears to protect from or slow the rate of muscle loss. Finally, he noted, some studies have looked at various dietary and physical activity interventions in combination. These, too, he said, have shown some potential benefits in slowing or reversing the rate of muscle loss.

In Fielding’s opinion, in addition to being potential targets for therapy, some of these interventions may serve as important modifiers of the pharmacological therapies currently being studied (most of the trials are in early to late phase II). He remarked on the emerging interest in muscle biology in the pharmaceutical industry and predicted that in the future, while diet and physical activity may be used as preventive therapies, pharmacological interventions may be needed for severe sarcopenia.

Protein metabolism plays a central role in sarcopenia, Fielding explained, because skeletal muscle mass is regulated by the balance of protein synthesis and degradation. He mentioned that some evidence suggests that protein intake is inadequate in some older adults, while other evidence suggests that protein intake is related to change in muscle mass with aging. He described observational evidence from the Dynamics of Health, Aging, and Body Composition (HEALTH ABC) study indicating that even within the normal range of protein intake (0.7-1.1 g/kg/day), lower protein intake is associated with increased loss of ALM (Houston et al., 2008). He acknowledged the controversy around “appropriate” protein intake in older adults, noting that the methods for assessing this are challenging (see also the summary of Mary Ann Johnson’s presentation in Chapter 2). Nonetheless, he argued, it appears that maintaining adequate protein intake is one way to minimize the loss of lean muscle mass.

Fielding mentioned that vitamin D is probably important as well, based on a preliminary study that he and colleagues conducted (Ceglia et al., 2013). He explained that this study involved supplementing the diets of postmenopausal women who were insufficient for vitamin D with 2,000 international units per day for 4 months. A significant increase was seen in the cross-sectional area of these women’s muscle fibers, particularly the type II fibers, which Fielding described as the fast, glycolytic, high-power, high force–generating fibers that people tend to lose as they age. While this was only a preliminary study, he described it as exciting with respect to its implications for the role of vitamin D in muscle health.

Fielding concluded by summarizing his key points: that sarcopenia is age-related loss in muscle mass and function, that several nutritional factors related to protein intake and specific micronutrients affect its progression, and that nutritional interventions may play a role in its prevention and treatment. An important focus for future research, in his opinion, will be understanding how anti-inflammatory dietary patterns and specific anti-inflammatory nutrients influence muscle in old age.

SKELETAL AND MUSCULAR SYSTEMS: DISCUSSION WITH THE AUDIENCE

Following Fielding’s presentation, he and Weaver participated in a brief open discussion with the audience. Athena Papas, workshop participant, commented on the concern about bisphosphonates (which Weaver had mentioned as a recommended treatment for osteoporosis), noting that the concern focuses on intravenous bisphosphonates. Yet she said she has had many patients who have come into her office “petrified” and have stopped their medication because of worries about necrosis of the jaw, and she usually recommends drug holidays for these patients. But when she conducted a follow-up study of people she had treated for bisphosphonate necrosis, the majority had died from breast cancer or another form of cancer. Based on her experience, she asserted, people should not shy away from bisphosphonates because of the risk of necrosis of the jaw. Weaver agreed that the side effects of bisphosphonates are rare, and suggested that patients, dentists, and general physicians are all overreacting. She said there is no evidence on the effectiveness of drug holidays or on when or for how long they should be recommended.

Fielding was asked about the role of calories in maintaining muscle mass, especially relative to the role of protein. He responded that a state of energy deficit (e.g., if someone is in the hospital and not consuming enough calories) can lead to accelerated loss of muscle. But a small study that he and colleagues conducted showed that even when energy balance is maintained, a reduction in protein intake still leads to significant muscle loss. Weaver added that the combination of energy deficit and reduced protein intake affects bone as well.

Johanna Dwyer, workshop participant, expressed concern that dietitians and nutritionists are not paying enough attention to sarcopenia. She was also curious about the role of drugs in treating sarcopenia. Fielding reiterated that most of the drugs being developed to target muscle are in early to late phase II trials. He noted that the main class in development is the selective androgen receptor modulators, which act on the androgen receptor but are specific for skeletal muscle, so they have fewer of the off-target effects of testosterone and typically a more favorable safety profile. The other class of drugs in development consists of those that target the inhibition or trapping of the myostatin protein, a negative regulator of muscle growth. Blocking myostatin action causes muscle hypertrophy, Fielding explained. These drugs are also being investigated for use in treating other diseases for which muscle loss is a secondary outcome, such as chronic obstructive pulmonary disease and heart failure. In his opinion, it will be important to understand how these medicines interact with nutrients, energy intake, and physical activity. Again, he imagined a future scenario in which treatment decisions begin with lifestyle changes, but when people have such low muscle mass that they cannot walk, for example, pharmaceutical intervention may be appropriate.

Fielding was also asked about a comment he made during his presentation on the need for future research on anti-inflammatories and sarcopenia. He replied that some small studies have shown that omega-3 fatty acids can increase protein synthesis and lean mass accumulation in skeletal muscle in older adults. This is just one example of what is known, he noted. It is also known that a Mediterranean diet can reduce inflammatory burden, he said, but it is not known whether that benefit is enough to have effects on skeletal muscle. Nonetheless, he added, observational studies, many of them Ferrucci’s work, have clearly shown that higher levels of inflammation are associated with reduced walking speed and increased mobility disability. “We haven’t connected all those dots,” he said, “but I think we should try to get there.”

Weaver was asked whether there are any longitudinal data showing a relationship between intakes of calcium and vitamin D during adolescence and later fracture risk. Weaver replied that better data on this question come from animal studies, in which diet can be controlled for a longer period of time relative to studies in humans. These data, she said, suggest some ability to catch up later from an earlier deficit, but the catch-up is not complete.

AGE-ASSOCIATED CHANGES IN TASTE AND SMELL FUNCTION7

In her presentation on how nutrition and nutritional status influence what and how much and how often people eat, Rawson began by describing how the brain receives both external, sensory inputs (“hedonic value”) and internal, metabolic inputs (“nutritional value”), and how this information is filtered through experience, health, and age. Sensory experiences are not absolutes, she explained. The sensory experience of bitter foods, for example, is a very different experience for different people, depending on multiple, interacting factors. Moreover, she explained, people’s metabolic state can influence their sensory experience. For example, she said, insulin status influences the turnover of taste cells, and hunger increases sensitivity to food-related odors.

Rawson emphasized that food preference and enjoyment represent a multisensory experience, influenced not only by taste (e.g., sweet, salty, sour) and smell (e.g., fruity, smoky, toasty, floral), but also by a chemically stimulated sense known as chemesthesis (e.g., stinging, burning, tingling), the texture of food (e.g., crispy, juicy, dry), vision, and hearing. She noted, for example, that even a wine expert can be fooled by a change in the color of a wine, such that experts describing a white wine that has been colored red use descriptors typically used to describe a red wine. Similarly, a person’s response to an odor such as valeric acid is different if the person is told that the smell is from sweaty socks versus a very expensive cheese.

___________________

7 This section summarizes information presented by Dr. Rawson.

Although flavor is multisensory, Rawson continued, aroma, or odor, is arguably the most informative component. There are thousands of different flavors, she explained, and without the sense of smell, it is impossible to differentiate between wintergreen and spearmint, for example, or between a mango and a peach. Food becomes “very uninteresting,” she said, without smell.

Decline and Variation in Odor Sensitivity with Age

Rawson explained that the flavor of food depends on both orthonasal (from the front of the nose) and retronasal (from the back of the throat and nose) olfaction, with the duration of time in the mouth determining how much of a food’s aroma actually reaches the olfactory receptors (which are located in the upper part of the nose). By the age of 60, odor sensitivity decreases by 2.5-3 orders of magnitude, with females experiencing the decline slightly later than males and also having a slightly better odor identification ability. Rawson speculated on whether this sex difference is due to differences in attention or experience or in biology. She emphasized, however, that individuals vary greatly in how quickly odor detection declines, observing that there are people even in their mid-80s whose odor sensitivity is comparable to that of people in their 20s. She noted that both age-related decline in odor sensitivity and gender and individual variability in this decline are supported by a history of consistent evidence.

In addition to these gender and individual differences, Rawson continued, recent data show that loss of odor sensitivity in older adults varies among odors, with sensitivity to odors of high molecular weight declining to a greater degree than sensitivity to odors of low molecular weight (Sinding et al., 2014). She explained that heavy odors tend to be deposited more anteriorly in the nose, whereas lighter odors, which are more volatile, travel farther into the nasal cavity. It may be, she observed, that the olfactory epithelium in the anterior region of the nose is exposed to more irritants, pollutants, and potentially infectious agents traveling up into the nose; indeed, anatomical studies have shown more patchiness and more infiltration of respiratory epithelium in the anterior regions. In other words, Rawson said, the receptors may simply no longer be present in that part of the nose in older adults.

Perceived Decline in Smell Versus Actual Dysfunction in Olfactory Sense Identification

Rawson went on to describe a recent analysis of NHANES 2011-2014 olfactory data on 1,281 participants aged 40 and older. In this study, researchers used both an eight-item, forced-choice odor identification task and self-reports of smell alterations. Rawson explained that the self-report data showed it was between the ages of 60 and 69 when most people really noticed that their sense of smell was beginning to decline (Hoffman et al., 2016). When the researchers actually measured dysfunction, however, the largest deficit was measured in the age groups 70-79 and 80 and older. Among the latter individuals, Rawson reported, nearly 40 percent experienced significant dysfunction in olfactory sense identification. When the researchers examined the type of smell dysfunction by age—specifically, whether individuals were experiencing hyposmia (impaired sense of smell) or anosmia/severe hyposmia (no ability to smell)—they found that both hyposmia and anosmia increased with age. Rawson noted that the prevalence of hyposmia was less than 5 percent in those aged 40-49, but more than 25 percent in those aged 80 and older; likewise very little anosmia was seen in those aged 40-49, whereas the prevalence was nearly 15 percent in those aged 80 and older.

Why Does Odor Sensitivity Decline with Age?

Hoffman and colleagues (2016) went on to identify several factors associated with smell dysfunction. Specifically, subjects with a measured smell dysfunction were more likely to be older, male, or Mexican American; to have a lower income-to-poverty ratio; to be in poorer general health; not to exercise regularly; and to be heavy drinkers (four to five drinks per day). Additionally, they were more likely to have had two or more sinus infections and to have had their wisdom teeth or tonsils removed or ear tubes inserted. Of these factors, Rawson said, she found the association between smell dysfunction and lack of regular exercise to be particularly interesting, as well as the association with having had ear tubes inserted.

With regard to the biological underpinnings of olfactory dysfunction, Rawson emphasized the importance of hydration in maintaining the right composition of the olfactory mucus, which plays a key role in protecting and transporting odors across the olfactory epithelium. If the olfactory mucus is not properly hydrated, she explained, odors cannot reach the olfactory receptors. Again, she emphasized individual variation in olfactory receptor expression. Another important feature of the olfactory epithelium, she pointed out, is that it is regenerative and continues to regenerate even in old age. That the olfactory epithelium regenerates in old age is “good news,” she said, but she cautioned that it is not able to respond to injury or other traumatic effects as well as it does in younger individuals.

In Rawson’s opinion, gaining a better understanding of the early development of the olfactory epithelium could help to identify therapeutic approaches to repair of the olfactory epithelium or ways of preventing olfactory loss. There are ways, she said, to culture human olfactory epithelial cells and study their functional characteristics in vitro. One of the more interesting recent studies on olfactory epithelium regeneration, in her opinion, demonstrated in a mouse model that telomere shortening impaired regeneration from injury, but did not affect recovery of cells under normal homeostatic conditions (Watabe-Rudolph et al., 2011). She identified this as an area for further exploration.

As another example of efforts to understand regeneration of olfactory epithelium, Rawson described some of her own research. Knowing that retinoic acid plays an important role in early development, including promotion of olfactory sensory neuron differentiation (Rawson and LaMantia, 2007), Yee and Rawson (2005) found that administering retinoic acid in a mouse dramatically reduced the time required for an olfactory sensory nerve that had been cut to regenerate.

Rawson described what she referred to as “olfactory training” as another potential opportunity for therapeutic improvement in olfaction. The expression “use it or lose it” applied to the brain or muscle, she said, applies as well to olfaction. Among both mouse and human olfactory neurons, she explained, the cells that are activated by odor exposure have longer lifespans. She noted that olfactory training is currently being tested in human subjects who have anosmia as a result of trauma or chronic sinus infections.

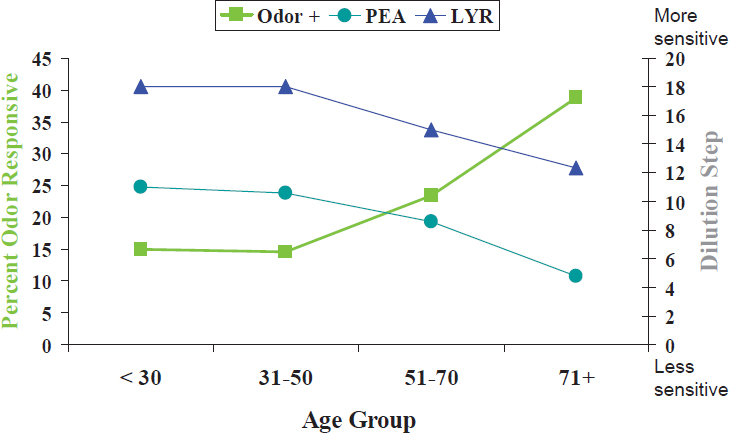

In their work on olfactory neuron function, Rawson and her team biopsy olfactory neurons from a part of the nose known as the superior aspect of the middle turbinate. She cited one study in which they collected more than 600 neurons from 440 subjects aged 18-88 and individually tested the reaction of each neuron to odor stimuli (Rawson et al., 2012). In so doing, she said, they found something “quite unexpected”: from age 50 onward, sensitivities to two different specific odors declined with age, as expected; however, the opposite occurred when they measured the number of olfactory neurons that responded to any odor stimulation, such that as age increased, a higher percentage of cells responded (see Figure 4-4). Upon closer examination, she and her team found that the olfactory cells still active in older adults are those with more broadly tuned odor sensitivities. Specifically, they found that all olfactory cells from individuals aged 45 and younger responded to only one of two odor mixtures, in contrast to about 10 percent of cells from individuals aged 60 and older that responded to both odor mixtures. Given that olfactory neurons typically express only a single type of odor receptor, Rawson and her team have hypothesized that

NOTE: Odor is any odor; PEA and LYR are specific odors.

SOURCE: Presented by N. Rawson, September 13, 2016. Reprinted with permission.

the olfactory neurons that are more likely to be activated have more broadly tuned odor receptors and that these cells become more predominant as an individual ages. So loss of odor sensitivity with age, she explained, is not a result of olfactory cells not functioning but of a loss of selectivity. Thus, older adults are still able to smell, but they have a more difficult time differentiating among odors.

NUTRITION AND ORAL HEALTH IN AGING8

“Previously,” Papas began, “old age was ‘dentures.’ Not anymore.” She explained that fewer and fewer older adults have dentures. According to data from the U.S. Centers for Disease Control and Prevention (CDC), she reported, the proportion of adults who are edentulous (total tooth loss) has declined over the past decade from 31 percent to 25 percent among individuals aged 60 and older and from 9 percent to 5 percent among baby boomers (CDC, 2010). People do not want to give up their teeth, she said, but unfortunately, the teeth they are keeping are unhealthy, with root decay being more common than diabetes, heart disease, mental illness, arthritis, and hypertension in adults aged 45-64. In the mouth, Papas explained, it is the root that begins to decay first. The hardest tissue in the mouth is enamel, followed by dentin, then bone, she noted. With regard to dental spending, those aged 65 and older outspend every other age group (Wall et al., 2013), and among members of this older age group with autoimmune disease, dental spending is three times that of their peer-control group because of a lack of saliva.

___________________

8 This section summarizes information presented by Dr. Papas.

Loss of Teeth, Periodontal Disease, and Nutrition

Papas referred to two studies conducted in the 1980s. The first, the Nutritional Status Survey (NSS) by the Human Nutrition Center on Aging, was focused on developing dietary recommendations. In the second, the Nutrition and Oral Health Study (NOHS), Papas and colleagues followed volunteers from the NSS, along with additional recruits from 30 inner-city Boston sites, for 3 additional years. This second study, Papas said, was more representative than the NSS of the Boston population, and included more African Americans and people with less education. Across both studies, loss of teeth was associated with decreased consumption of about 20 micronutrients, including calcium and vitamin D. Papas cited another bone density study, in which Krall and colleagues (1994) showed that bone loss was associated with loss of teeth. She explained that as calcium and vitamin D decline, an increase occurs in alveolar bone loss, which in turn has been associated with increased periodontal disease (Inagaki et al., 2001). “Across the board,” she said, the nutrient quality of the diet significantly declines with loss of teeth. Without teeth, or even after having lost only a few teeth, she noted, older adults find it difficult to meet recommendations to increase consumption of green vegetables or fiber, for example. Additionally, NSS and NOHS data show that people who wear dentures tend to eat more baked desserts, chips and crackers, and refined carbohydrates. Denture wearers also have lower weights and skinfold-test results and lower levels of plasma albumin, carotenoids, and vitamin B12. In sum, Papas said, “The nutrient quality of the diet significantly goes down as you lose teeth,” and this, in turn, increases the risks for stroke, coronary heart disease, and diabetes (Lara et al., 2014). She added that denture wearers may also be at increased risk for cancer, osteoporosis, and other diseases that are already common in elderly populations.

As part of the NOHS, Papas and colleagues examined periodontal disease in addition to loss of teeth and found that people with periodontal disease consumed less fiber. She interpreted this to mean that diet is affected even before tooth loss. She noted that periodontal disease has also been associated with decreased vitamin C intake, and although she has seen only one case of scurvy in her life, she commented on the horrible gum disease with which it is associated.

Papas continued by observing that loss of teeth itself has been associated with increased risks for high blood pressure, high waist circumference, and metabolic syndrome (Zhu and Hollis, 2015). Compared with study participants with full dentition, she reported, the odds of metabolic syndrome were 79 percent higher in edentulous participants.

Papas emphasized that the link between oral disease and nutrition goes both ways. Periodontal disease and loss of teeth affect nutrition, she said, and nutrition affects periodontal disease and loss of teeth.

Medication-Induced Xerostomia (Dry Mouth), Saliva, and Nutrition

According to CDC data, Papas reported, 88 percent of people aged 60 and older are taking medications. In a study of medication-induced xerostomia (dry mouth), she and colleagues compared older adults in inner-city Boston (N = 1,058) taking versus not taking medications and found that the former had fewer teeth and that those taking multiple medications had more root decay. Using the Block food frequency questionnaire, with the addition of the 100 most frequently eaten carbohydrates, they found that, compared with healthy individuals, people who were taking psychiatric medications ate more cakes, cookies, and ice cream (because the sugar made them feel better, Papas explained) and more candies as well (to alleviate medication-induced xerostomia). Psychiatric medications, previous caries, and sugar all were identified as significant predictors of caries incidence. In this same study, Papas and colleagues also found that periodontal disease was highest among adults with diabetes or metabolic syndrome. Among the medicated individuals (N = 980), those who took a multivitamin or supplemented their diet with calcium experienced less severe increases in periodontal disease, and intake of both too little and too much vitamin A was detrimental. Papas said she suspected that high vitamin A intake was detrimental because of its effect on bone density.

Generally, Papas continued, reduced salivary flow results in increased infection (e.g., candidiasis), loss of remineralization (which results in dental caries), and decreased lubrication (which can cause trouble swallowing). She and her research team have been studying Sjögren’s disease, an autoimmune disease that leads to the loss of saliva and can cause the salivary glands to swell. It affects women nine times more frequently than men, and up to 4 million American women are living with the disease. Papas explained that with the loss of saliva, what is supposed to be water in the mouth turns into mucus, decay increases, and acid reflux increases to the point where pulp becomes exposed. The Sjögren’s population consumes a great deal of sugar, she noted, again because it makes them feel better.

Papas described some of what she and her research team have learned about the oral microbiome in individuals with Sjögren’s. Compared with healthy individuals and those with periodontitis, she explained, people with Sjögren’s have fewer microbial organisms in their mouths. Individuals with periodontitis have the greatest number of microbial organisms. They have what Papas described as the “red complex,” a group of bacteria that have been associated with heart disease. Sjögren’s individuals, in contrast, have no red complex bacteria. These individuals get “long in the tooth,” Papas said, not because of deep pockets but because of recession and inflammation. She and her team found two potential oral microbiome biomarkers for Sjögren’s. One of these, Veillonella parvula, she explained, is not a pathogen itself; rather, it augments the actions of accompanying pathogens.